Virtual Structural Analysis of Jokisivu Open Pit Using ‘Structure-from-Motion’ Unmanned Aerial Vehicles (UAV) Photogrammetry: Implications for Structurally-Controlled Gold Deposits in Southwest Finland

Abstract

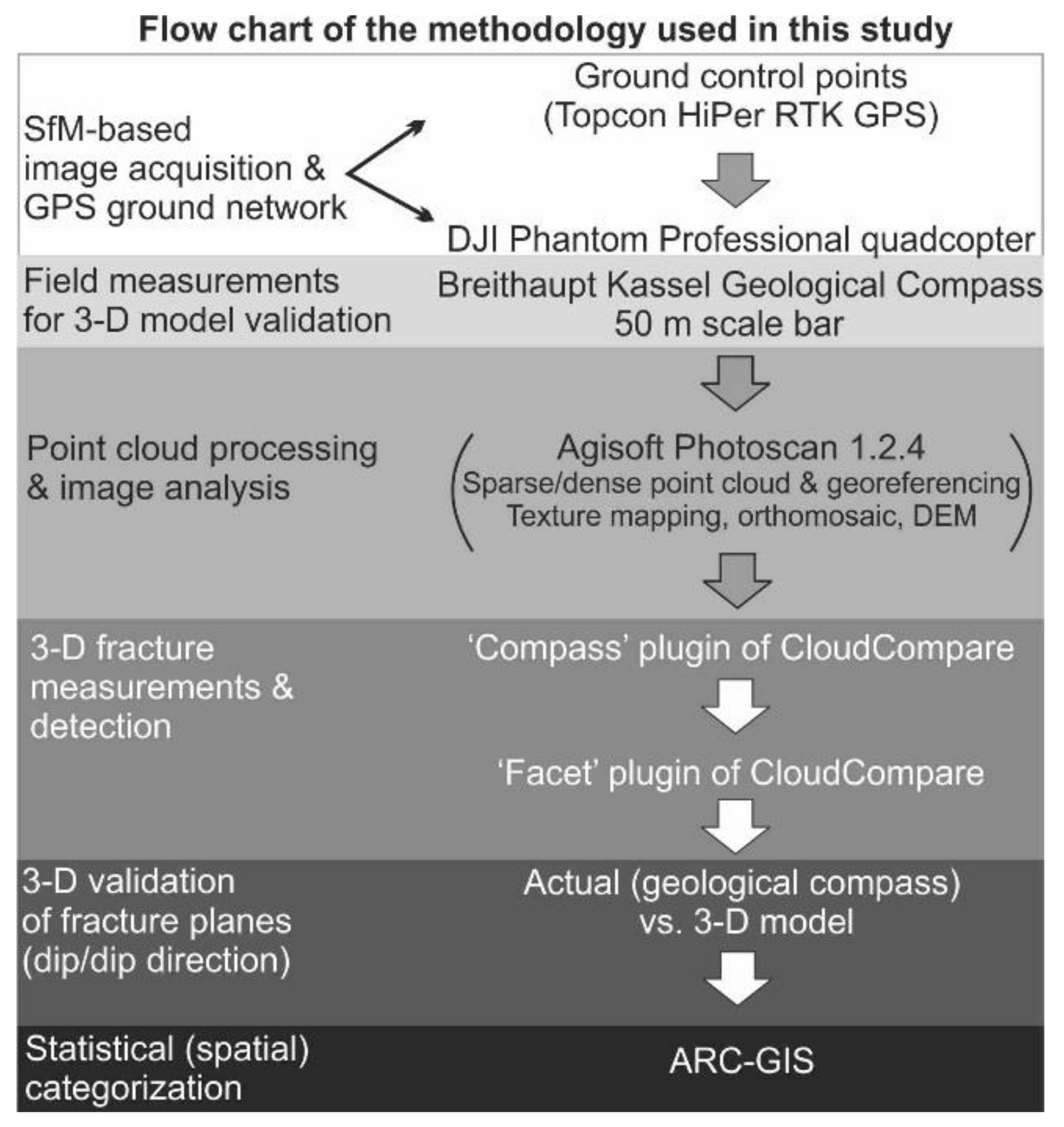

1. Introduction

2. Unmanned Aerial Vehicle (UAV) Photogrammetry

2.1. Background

2.2. P3P Aerial Platform

2.3. Image Acquisition and GCPs

3. Jokisivu Gold Mine Case Study

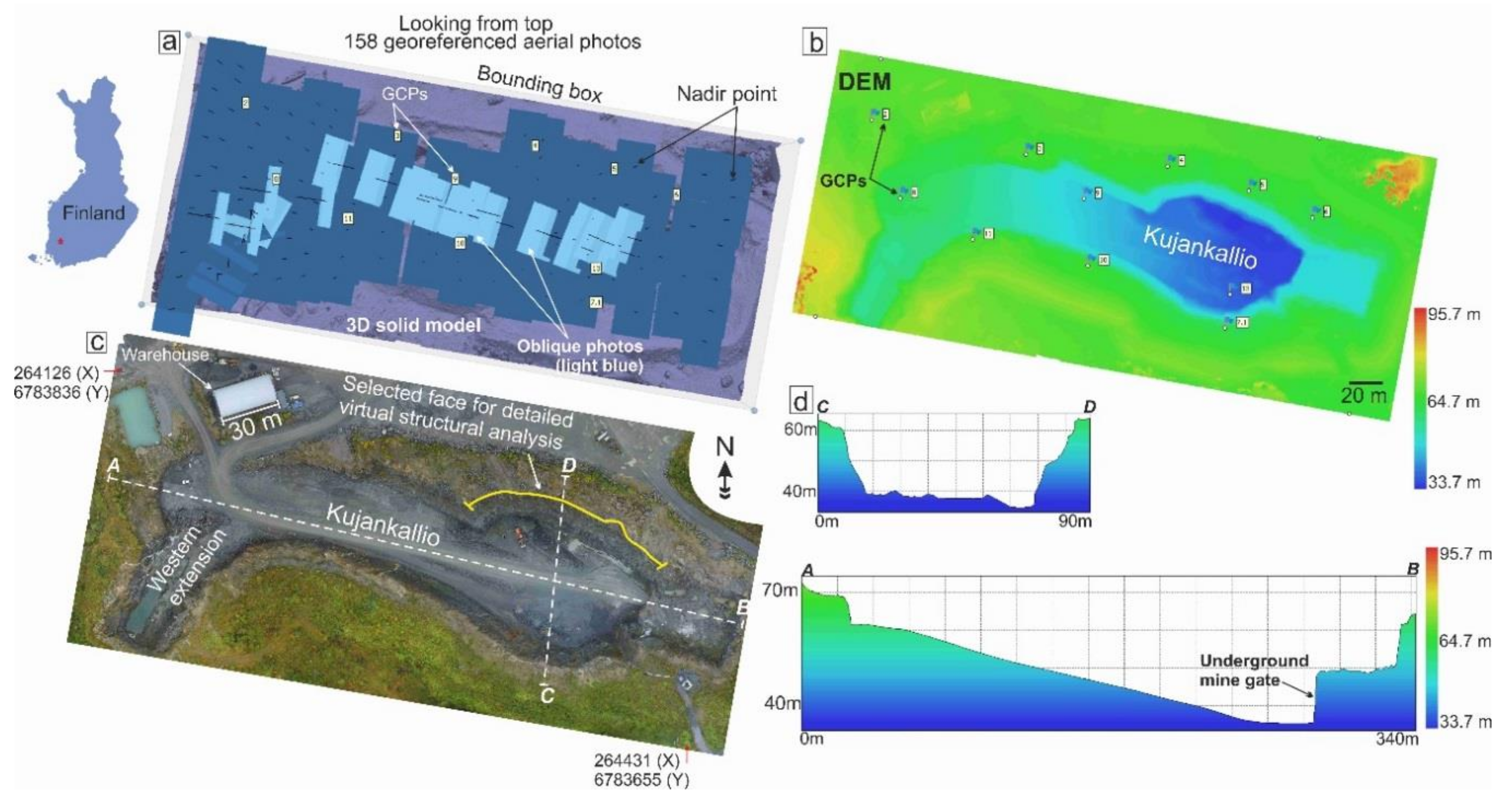

3.1. Kujankallio Open-Pit UAV Photogrammetry

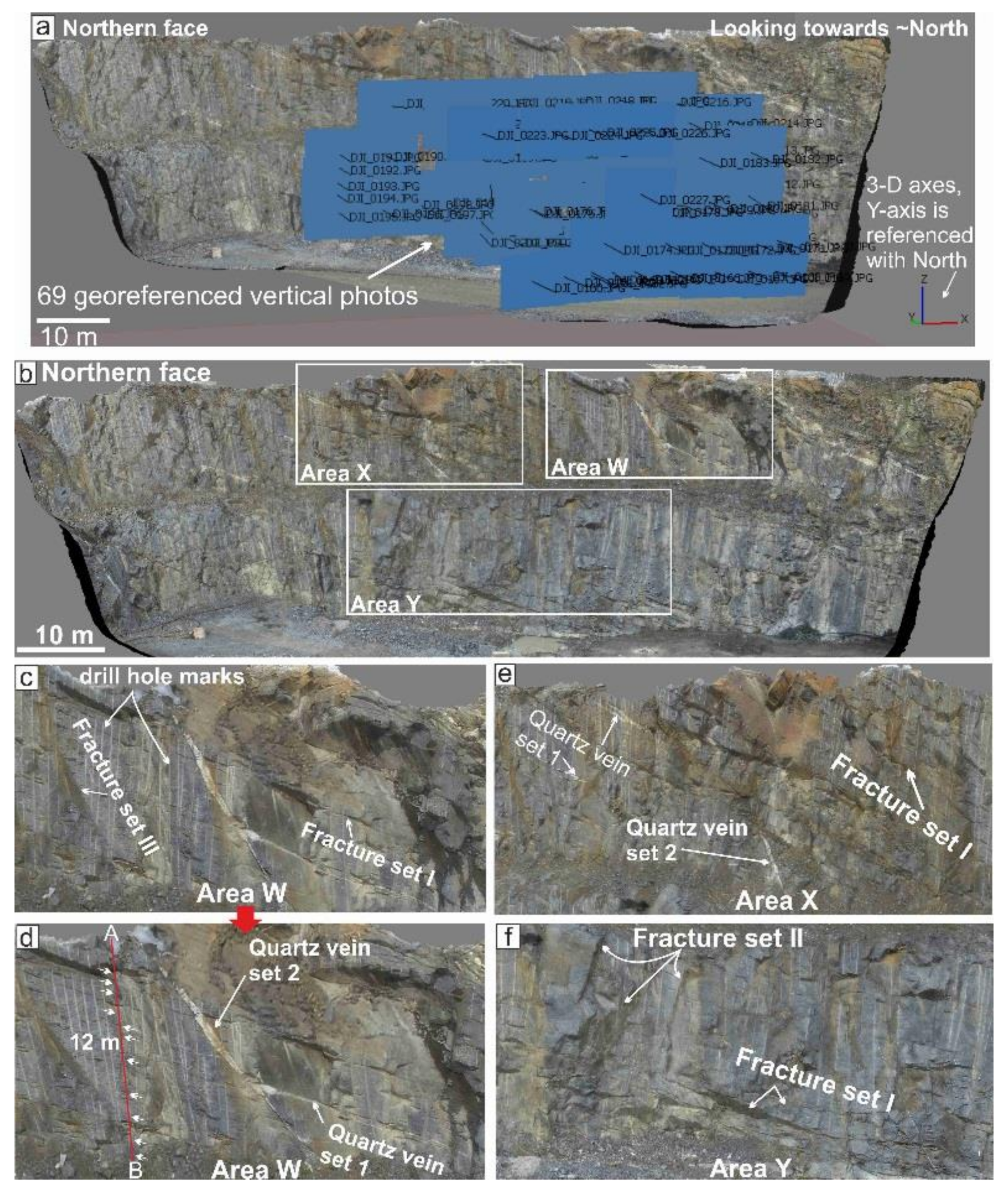

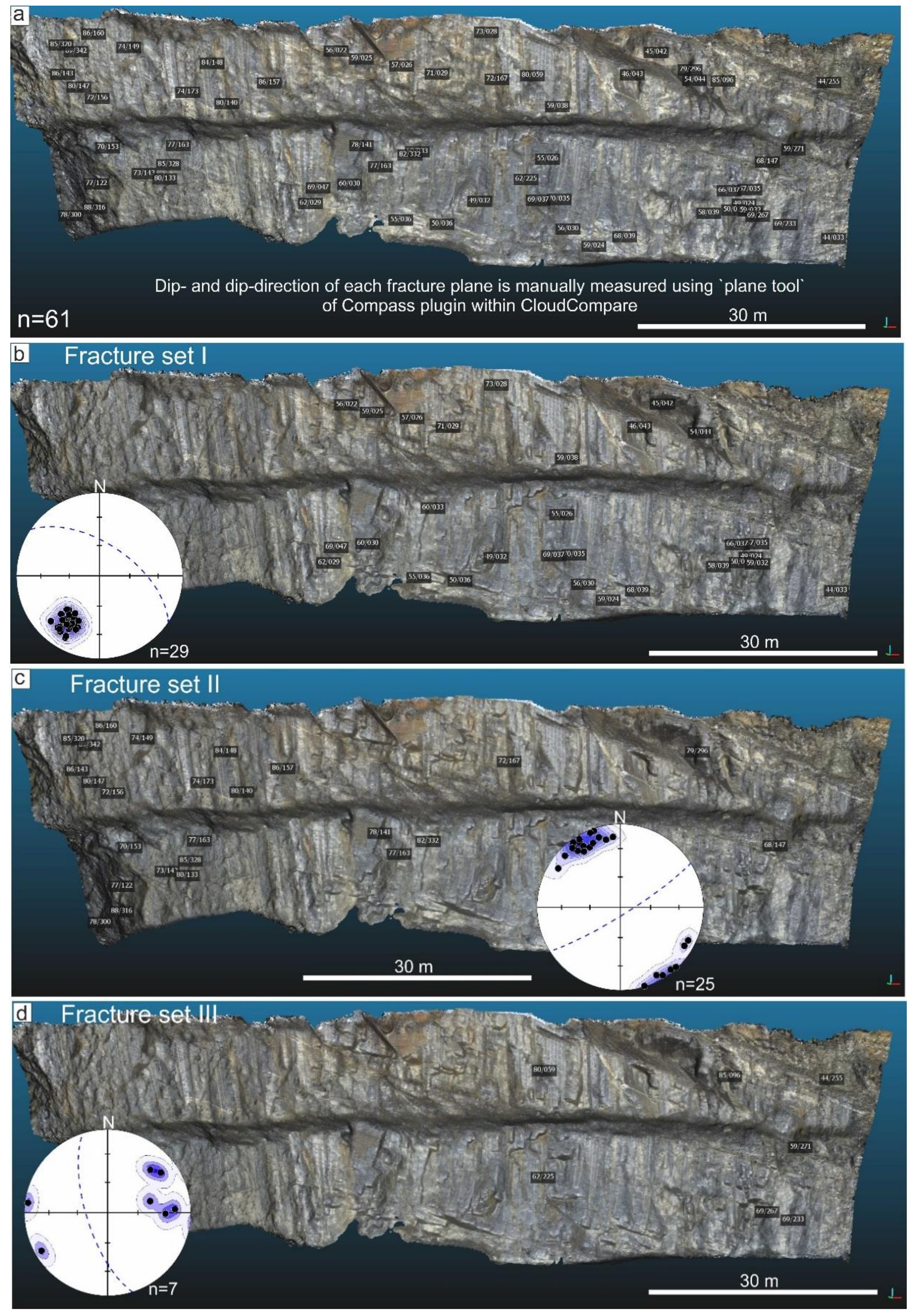

3.2. Northern Face Wall UAV Photogrammetry and Structural Analyses

3.3. Comparison of ‘Compass’ Tool and Field Measurements

3.4. ‘Facet’ Analysis

3.5. ArcGIS Analysis

4. UAV Data and Structural Interpretations

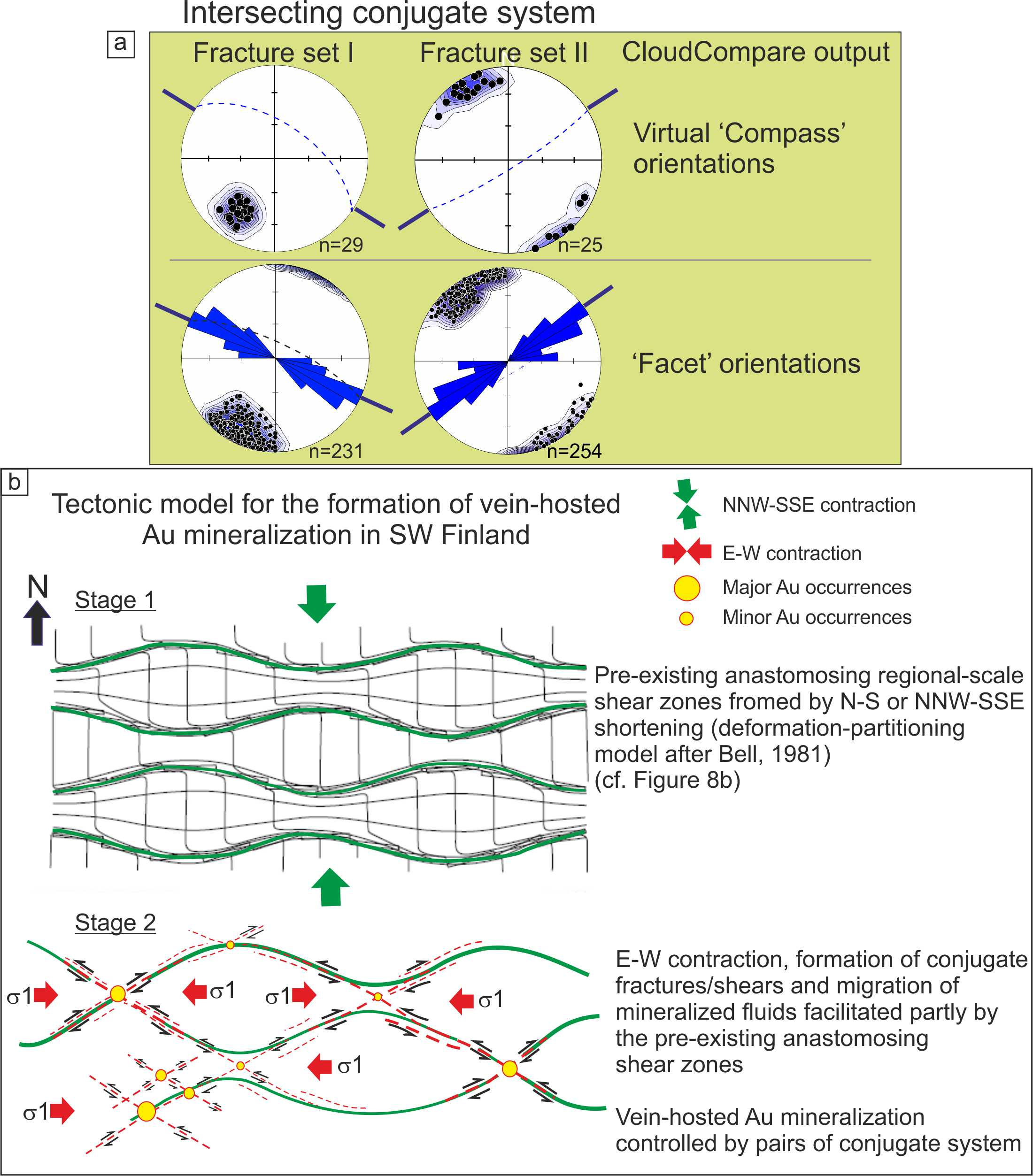

5. Discussion

5.1. Advantages of SfM-MVS Based UAV Data Acquisition in Open-Pit Mines

- (a) The only ground-based requirement for UAV-based surveys is an appropriate set of GCPs, whose configuration can be chosen so as to avoid active mining operations and restricted areas and to comply with safety regulations. In contrast, data gathering with ground-based instruments is much more limited by these factors, and the cost of airborne LiDAR is comparatively high [43].

- (b) UAVs allow the acquisition of multi-scale (m to mm/pixel) and multi-temporal (time-series) images of exposed rock surfaces [44]. Digital photogrammetry retains an advantage over laser scanning methods in terms of cost effectiveness, backpack portability, high-resolution photorealistic texturing, and color.

- (c) UAV quadcopters, in particular, have the advantage of being able to fly at low speed both horizontally and vertically while the camera can be pointed in any direction between 0 and 90° with respect to Earth’s surface (Figure 1). This freedom of movement and observation allows them to take multi-resolution close-range images of nearly vertical rock faces, as shown herein for the Kujankallio open pit. Quadcopters also require less space for take-off and landing than fixed-wing UAVs.

- (d) Because of the relative simplicity and rapidity of installing GCPs, UAV helicopters can be effectively used for incremental mapping of successive excavation phases of open-pit mines from surface to bottom. The 3-D point cloud data obtained during each step can be compared and integrated to generate spatial side- and depth-overlays in high-resolution four-dimensional (4-D) models of the open-pit mine evolution that can be fused with drill core data.

5.2. Tectonic Implications

6. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

- Eisenbeiß, H. UAV Photogrammetry. Ph.D. Thesis, ETH Zürich, Zürich, Switzerland, 2009.

- Carrivick, J.; Smith, M.; Quincey, D. Structure from Motion in the Geosciences; Wiley-Blackwell: Hoboken, NJ, USA, 2016; ISBN 9781118895849.

- Westoby, M.J.; Brasington, J.; Glasser, N.F.; Hambrey, M.J.; Reynolds, J.M. “Structure-from-Motion” photogrammetry: A low-cost, effective tool for geoscience applications. Geomorphology 2012, 179, 300–314.

- Bemis, S.P.; Micklethwaite, S.; Turner, D.; James, M.R.; Akciz, S.; Thiele, S.T.; Bangash, H.A. Ground-based and UAV-Based photogrammetry: A multi-scale, high-resolution mapping tool for structural geology and paleoseismology. J. Struct. Geol. 2014, 69, 163–178.

- Tavani, S.; Granado, P.; Corradetti, A.; Girundo, M.; Iannace, A.; Arbués, P.; Muñoz, J.A.; Mazzoli, S. Building a virtual outcrop, extracting geological information from it, and sharing the results in Google Earth via OpenPlot and Photoscan: An example from the Khaviz Anticline (Iran). Comput. Geosci. 2014, 63, 44–53.

- Tong, X.; Liu, X.; Chen, P.; Liu, S.; Luan, K.; Li, L.; Liu, S.; Liu, X.; Xie, H.; Jin, Y.; et al. Integration of UAV-based photogrammetry and terrestrial laser scanning for the three-dimensional mapping and monitoring of open-pit mine areas. Remote Sens. 2015, 7, 6635–6662.

- Vollgger, S.A.; Cruden, A.R. Mapping folds and fractures in basement and cover rocks using UAV photogrammetry, Cape Liptrap and Cape Paterson, Victoria, Australia. J. Struct. Geol. 2016, 85, 168–187.

- Riquelme, A.J.; Abellán, A.; Tomás, R.; Jaboyedoff, M. A new approach for semi-automatic rock mass joints recognition from 3D point clouds. Comput. Geosci. 2014, 68, 38–52.

- Riquelme, A.J.; Tomás, R.; Abellán, A. Characterization of rock slopes through slope mass rating using 3D point clouds. Int. J. Rock Mech. Min. Sci. 2016, 84, 165–176.

- Cawood, A.J.; Bond, C.E.; Howell, J.A.; Butler, R.W.H.; Totake, Y. LiDAR, UAV or compass-clinometer? Accuracy, coverage and the effects on structural models. J. Struct. Geol. 2017, 98, 67–82.

- Ojala, J. Gold in the Central Lapland Greenstone Belt; Special Pa; Geological Survey of Finland: Espoo, Finland, 2007; ISBN 9789522170200.

- Eilu, P. Mineral Deposits and Metallogeny of Fennoscandia, 2nd ed.; Geological Survey of Finland: Espoo, Finland, 2012; ISBN 9789522171740.

- Sayab, M.; Suuronen, J.-P.; Molnár, F.; Villanova, J.; Kallonen, A.; O’Brien, H.; Lahtinen, R.; Lehtonen, M. Three-dimensional textural and quantitative analyses of orogenic gold at the nanoscale. Geology 2016, 44.

- Saalmann, K.; Mänttäri, I.; Peltonen, P.; Whitehouse, M.J.; Grönholm, P.; Talikka, M. Geochronology and structural relationships of mesothermal gold mineralization in the Palaeoproterozoic Jokisivu prospect, southern Finland. Geol. Mag. 2010, 147, 551–569.

- Ullman, S. The Interpretation of Structure from Motion. Proc. R. Soc. Lond. B Biol. Sci. 1979, 203, 405–426.

- Lowe, D.G. Distinctive image features from scale-invariant keypoints. Int. J. Comput. Vis. 2004, 60, 91–110.

- Luhmann, T.; Robson, S.; Kyle, S.; Boehm, J. Close-Range Photogrammetry and 3D Imaging, 2nd ed.; De Gruyter: Berlin, Germany, 2014; ISBN 978-3-11-030278-3.

- Verhoeven, G. Taking computer vision aloft—Archaeological three-dimensional reconstructions from aerial photographs with photoscan. Archaeol. Prospect. 2011, 18, 67–73.

- Seitz, S.M.; Curless, B.; Diebel, J.; Scharstein, D.; Szeliski, R. A Comparison and Evaluation of Multi-View Stereo Reconstruction Algorithms. In Proceedings of the 2006 IEEE Computer Society Conference on Computer Vision and Pattern Recognition (CVPR’06), New York, NY, USA, 17–22 June 2006; Volume 1, pp. 519–528.

- Bradley, D.; Boubekeur, T.; Heidrich, W. Accurate multi-view reconstruction using robust binocular stereo and surface meshing. In Proceedings of the 2008 IEEE Conference on Computer Vision and Pattern Recognition, Anchorage, AK, USA, 23–28 June 2008; pp. 1–8.

- Snavely, N.; Seitz, S.M.; Szeliski, R. Modeling the world from Internet photo collections. Int. J. Comput. Vis. 2008, 80, 189–210.

- Triggs, B.; McLauchlan, P.F.; Hartley, R.I.; Fitzgibbon, A.W. Bundle Adjustment—A Modern Synthesis; Springer: Berlin/Heidelberg, Germany, 2000; pp. 298–372.

- Turner, D.; Lucieer, A.; Watson, C. An automated technique for generating georectified mosaics from ultra-high resolution Unmanned Aerial Vehicle (UAV) imagery, based on Structure from Motion (SFM) point clouds. Remote Sens. 2012, 4, 1392–1410.

- Turner, D.; Lucieer, A.; Wallace, L. Direct georeferencing of ultrahigh-resolution UAV imagery. IEEE Trans. Geosci. Remote Sens. 2014, 52, 2738–2745.

- James, M.R.; Robson, S. Mitigating systematic error in topographic models derived from UAV and ground-based image networks. Earth Surf. Process. Landf. 2014, 39, 1413–1420.

- Gonçalves, J.A.; Henriques, R. UAV photogrammetry for topographic monitoring of coastal areas. ISPRS J. Photogramm. Remote Sens. 2015, 104, 101–111.

- Luukkonen, A. Main Geological Features, Metallogeny and Hydrothermal Alteration Phenomena of Certain Gold and Gold-Tin-Tungsten Prospects in Southern Finland; University of Helsinki: Helsinki, Finland, 1994.

- Thiele, S.T.; Grose, L.; Samsu, A.; Micklethwaite, S.; Vollgger, S.A.; Cruden, A.R. Rapid, semi-automatic fracture and contact mapping for point clouds, images and geophysical data. Solid Earth 2017, 8, 1241–1253.

- Dewez, T.J.B.; Girardeau-Montaut, D.; Allanic, C.; Rohmer, J. Facets: A cloudcompare plugin to extract geological planes from unstructured 3D point clouds. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. ISPRS Arch. 2016, 41, 799–804.

- Korsman, K.; Koistinen, T.; Kohonen, J.; Wennerstrom, M.; Ekdahl, E.; Honkamo, M.; Idman, H.; Pekkala, Y. Bedrock Map of Finland 1:1,000,000 Scale; Geological Survey of Finland: Espoo, Finland, 1997.

- Sayab, M.; Suuronen, J.P.; Hölttä, P.; Aerden, D.; Lahtinen, R.; Kallonen, A.P. High-resolution X-ray computed microtomography: A holistic approach to metamorphic fabric analyses. Geology 2015, 43, 55–58.

- Skyttä, P.; Väisänen, M.; Mänttäri, I. Preservation of Palaeoproterozoic early Svecofennian structures in the Orijärvi area, SW Finland-Evidence for polyphase strain partitioning. Precambrian Res. 2006, 150, 153–172.

- Lahtinen, R.; Sayab, M.; Karell, F. Near-orthogonal deformation successions in the poly-deformed Paleoproterozoic Martimo belt: Implications for the tectonic evolution of Northern Fennoscandia. Precambrian Res. 2015, 270, 22–38.

- Bell, T.H. Foliation development—The contribution, geometry and significance of progressive, bulk, inhomogeneous shortening. Tectonophysics 1981, 75, 273–296.

- Sayab, M.; Khan, M.A. Temporal evolution of surface rupture deduced from coseismic multi-mode secondary fractures: Insights from the October 8, 2005 (Mw 7.6) Kashmir earthquake, NW Himalaya. Tectonophysics 2010, 493, 58–73.

- Hessami, K.; Koyi, H.A.; Talbot, C.J. The significance of strike slip faulting in basement Zagros fold and thrust belt. J. Pet. Geol. 2001, 24, 5–28.

- Bishop, M.P.; Shroder, J.F. Remote sensing and geomorphometric assessment of topographic complexity and erosion dynamics in the Nanga Parbat massif. Geol. Soc. Lond. Spec. Publ. 2000, 170, 181–200.

- Sayab, M. Tectonic significance of structural successions preserved within low-strain pods: Implications for thin- to thick-skinned tectonics vs. multiple near-orthogonal folding events in the Palaeo-Mesoproterozoic Mount Isa Inlier (NE Australia). Precambrian Res. 2009, 175, 169–186.

- Malehmir, A.; Tryggvason, A.; Lickorish, H.; Weihed, P. Regional structural profiles in the western part of the Palaeoproterozoic Skellefte Ore District, northern Sweden. Precambrian Res. 2007, 159, 1–18.

- Sayab, M.; Miettinen, A.; Aerden, D.; Karell, F. Orthogonal switching of AMS axes during type-2 fold interference: Insights from integrated X-ray computed tomography, AMS and 3D petrography. J. Struct. Geol. 2017, 103.

- Peterson, D.L.; Brass, J.A.; Smith, W.H.; Langford, G.; Wegener, S.; Dunagan, S.; Hammer, P.; Snook, K. Platform options of free-flying satellites, UAVs or the International Space Station for remote sensing assessment of the littoral zone. Int. J. Remote Sens. 2003.

- Read, J.; Stacey, P. Guidelines for Open Pit Slope Design; CSIRO Publishing: Clayton, Australia, 2009; ISBN 9780643094697.

- Chen, J.; Li, K.; Chang, K.J.; Sofia, G.; Tarolli, P. Open-pit mining geomorphic feature characterisation. Int. J. Appl. Earth Obs. Geoinf. 2015, 42, 76–86.

- Lucieer, A.; de Jong, S.M.; Turner, D. Mapping landslide displacements using Structure from Motion (SfM) and image correlation of multi-temporal UAV photography. Prog. Phys. Geogr. 2014, 38, 97–116.

- Groves, D.I.; Goldfarb, R.J.; Knox-Robinson, C.M.; Ojala, J.; Gardoll, S.; Yun, G.Y.; Holyland, P. Late-kinematic timing of orogenic gold deposits and significance for computer-based exploration techniques with emphasis on the Yilgarn Block, Western Australia. Ore Geol. Rev. 2000, 17, 1–38.

- Goldfarb, R.J.; Baker, T.; Dubé, B.; Groves, D.I.; Hart, C.J.R.; Gosselin, P. Distribution, Character, and Genesis of Gold Deposits in Metamorphic Terranes. Soc. Econ. Geol. 2005, 100, 407–450.

| Control Points | X Error (cm) | Y Error (cm) | Z Error (cm) |
|---|---|---|---|
| 3 | −1.6 | 1.3 | −2.4 |
| 4 | −0.8 | 0.1 | 9.2 |
| 6 | 3.2 | −0.8 | −7.3 |
| 7.1 | 0.5 | 0.9 | −0.2 |
| 13 | −1.1 | −1.2 | 0.7 |
| 2 | 2.3 | −1.7 | −3.7 |
| 9 | −2.6 | 1.5 | 3.5 |
| Average | 2.0 | 1.2 | 5.0 |

| Check Points | X Error (cm) | Y Error (cm) | Z Error (cm) |
|---|---|---|---|
| 5 | 1.0 | 2.3 | −6.5 |
| 10 | 0.3 | −0.3 | −9.2 |
| 11 | −3.5 | 3.1 | −2.6 |
| 8 | 0.3 | 2.7 | −4.1 |
| Average | 1.8 | 2.4 | 6.1 |

| | X Error (cm) | Y Error (cm) | Z Error (cm) |
|---|---|---|---|
| Control points (7) | 1.9 | 1.1 | 4.9 |
| Check points (4) | 1.8 | 2.3 | 6.1 |
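The tables do not state which statistic the "Average" and summary rows report. As an assumption, the sketch below computes the per-axis root-mean-square error (RMSE) from the individual residuals listed above. The results (roughly 1.96, 1.18, and 4.93 cm for the control points and 1.83, 2.36, and 6.13 cm for the check points) are close to the tabulated values, though not identical in the last digit. The variable and function names are hypothetical.

```python
import math
from typing import Dict, Tuple

Residual = Tuple[float, float, float]  # (X, Y, Z) error in cm

# Residuals copied from the control-point and check-point tables (point ID -> errors).
control_points: Dict[str, Residual] = {
    "3": (-1.6, 1.3, -2.4),
    "4": (-0.8, 0.1, 9.2),
    "6": (3.2, -0.8, -7.3),
    "7.1": (0.5, 0.9, -0.2),
    "13": (-1.1, -1.2, 0.7),
    "2": (2.3, -1.7, -3.7),
    "9": (-2.6, 1.5, 3.5),
}
check_points: Dict[str, Residual] = {
    "5": (1.0, 2.3, -6.5),
    "10": (0.3, -0.3, -9.2),
    "11": (-3.5, 3.1, -2.6),
    "8": (0.3, 2.7, -4.1),
}


def rmse_per_axis(residuals: Dict[str, Residual]) -> Tuple[float, float, float]:
    """Root-mean-square error along X, Y and Z for a set of point residuals."""
    n = len(residuals)
    x, y, z = (
        math.sqrt(sum(r[axis] ** 2 for r in residuals.values()) / n)
        for axis in range(3)
    )
    return x, y, z


control_rmse = rmse_per_axis(control_points)
check_rmse = rmse_per_axis(check_points)

for label, values in (("Control points", control_rmse), ("Check points", check_rmse)):
    print(label, [round(v, 2) for v in values])
```

If the tabulated rows were instead intended as mean absolute errors, the same residuals would give noticeably smaller values (for example about 1.7 cm rather than 2.0 cm for the control-point X error), which is why RMSE is the statistic assumed here.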

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Sayab, M.; Aerden, D.; Paananen, M.; Saarela, P. Virtual Structural Analysis of Jokisivu Open Pit Using ‘Structure-from-Motion’ Unmanned Aerial Vehicles (UAV) Photogrammetry: Implications for Structurally-Controlled Gold Deposits in Southwest Finland. Remote Sens. 2018, 10, 1296. https://doi.org/10.3390/rs10081296
