Performance Evaluation of Single-Label and Multi-Label Remote Sensing Image Retrieval Using a Dense Labeling Dataset

Abstract

1. Introduction


- We construct DLRSD, a dense labeling remote sensing dataset for multi-label RSIR. DLRSD is publicly available and, in contrast to the existing single-label and multi-label RSIR datasets, is densely labeled.


- We provide a brief review of the state-of-the-art methods for single-label and multi-label RSIR.


- We compare single-label and multi-label retrieval methods on DLRSD, using both traditional handcrafted features and deep learning features. The comparison shows the advantages of multi-label over single-label retrieval for complex remote sensing applications such as RSIR, and it provides baseline results for future research on multi-label RSIR.
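The practical difference between the two settings is how a retrieved image is judged relevant to a query. The sketch below illustrates this under an assumption: in the multi-label case an image is treated as relevant if it shares at least one label with the query, and a Jaccard overlap is shown as a graded alternative. Neither criterion is taken from this paper's stated protocol.

```python
"""Sketch: judging relevance in single-label vs. multi-label RSIR.

Assumption: in the multi-label setting a retrieved image counts as relevant
if it shares at least one label with the query; this is illustrative only.
"""
from typing import Set


def single_label_relevant(query_class: str, retrieved_class: str) -> bool:
    # Single-label RSIR: relevant only when both images carry the same class.
    return query_class == retrieved_class


def multi_label_relevant(query_labels: Set[str], retrieved_labels: Set[str]) -> bool:
    # Multi-label RSIR (assumed criterion): at least one shared label.
    return len(query_labels & retrieved_labels) > 0


def label_overlap(query_labels: Set[str], retrieved_labels: Set[str]) -> float:
    # Graded alternative: Jaccard similarity of the two label sets.
    union = query_labels | retrieved_labels
    return len(query_labels & retrieved_labels) / len(union) if union else 0.0
```

For example, two scenes with different (hypothetical) single class names such as "harbor" and "beach" are irrelevant to each other in the single-label setting, yet their label sets {"sea", "sand"} and {"sea", "ship", "dock"} share "sea", giving a Jaccard overlap of 0.25.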

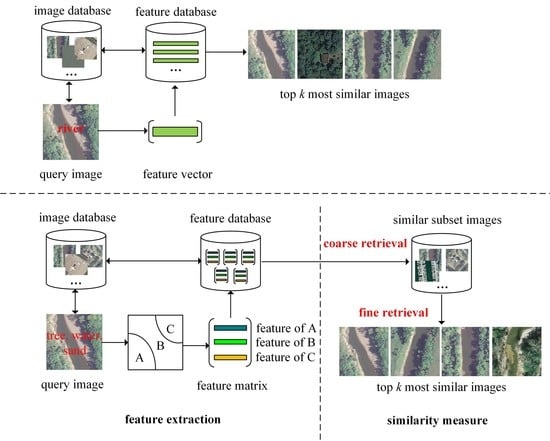

2. Remote Sensing Image Retrieval Methods

2.1. Single-Label RSIR

2.2. Multi-Label RSIR

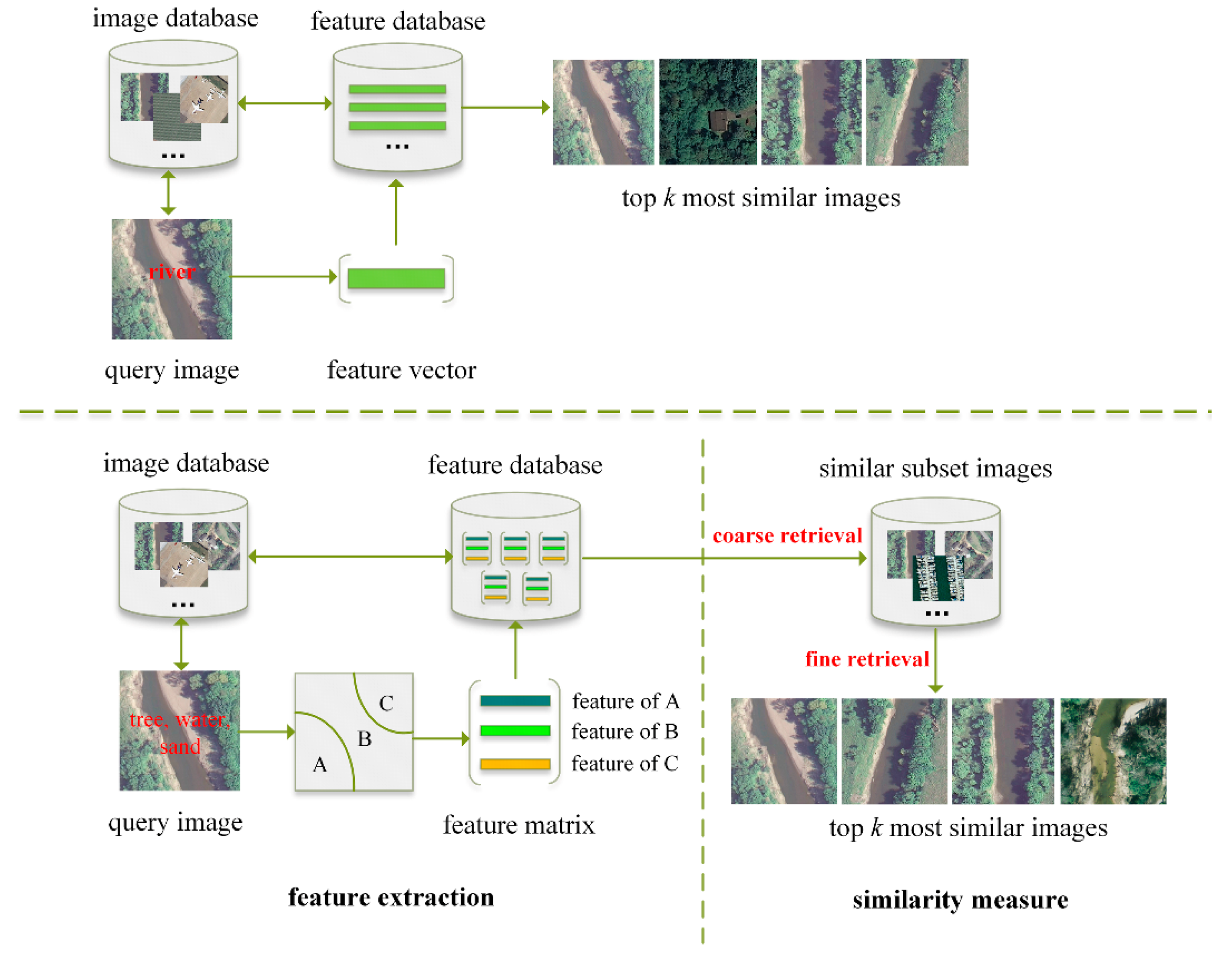

3. DLRSD: A Dense Labeling Dataset for Multi-Label RSIR

3.1. Description of DLRSD

3.2. Multi-Label RSIR Based on Handcrafted and CNN Features

3.2.1. Multi-Label RSIR Based on Handcrafted Features
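The color histogram is one of the handcrafted features compared in the results. As an illustration only, a minimal color-histogram descriptor can be sketched as below, assuming a joint RGB histogram with 8 bins per channel and an L1 distance for matching; the bin count and distance are illustrative choices, not the configuration used in this work.

```python
import numpy as np


def color_histogram(image: np.ndarray, bins: int = 8) -> np.ndarray:
    """Joint RGB histogram, L1-normalised.

    Assumes `image` is an H x W x 3 uint8 array; `bins` per channel is an
    illustrative choice, not a value taken from the paper.
    """
    # Quantise each 0..255 channel value into `bins` levels.
    q = (image.astype(np.int64) * bins) // 256
    # Combine the three quantised channels into one joint bin index.
    idx = (q[..., 0] * bins + q[..., 1]) * bins + q[..., 2]
    hist = np.bincount(idx.ravel(), minlength=bins ** 3).astype(np.float64)
    return hist / hist.sum()


def l1_distance(a: np.ndarray, b: np.ndarray) -> float:
    # Smaller distance means more similar color distributions.
    return float(np.abs(a - b).sum())
```

Retrieval with such a feature ranks the database by `l1_distance` to the query histogram; other handcrafted features (texture, gradient, or global descriptors) slot into the same ranking step.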

3.2.2. Multi-Label RSIR Based on CNN Features
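The results distinguish CNN features labeled (SL) and (ML), presumably from networks fine-tuned with single-label and multi-label supervision. A common way to adapt a CNN to multiple labels is to replace the softmax cross-entropy loss with an independent sigmoid cross-entropy per label. The sketch below contrasts the two losses; it assumes this kind of change and is not a statement of the exact objective used here. Averaging the sigmoid loss over labels is also an illustrative normalisation choice.

```python
import numpy as np


def softmax_cross_entropy(logits: np.ndarray, class_index: int) -> float:
    # Single-label: one softmax over all classes, exactly one target class.
    z = logits - logits.max()                 # shift for numerical stability
    log_probs = z - np.log(np.exp(z).sum())
    return float(-log_probs[class_index])


def sigmoid_cross_entropy(logits: np.ndarray, targets: np.ndarray) -> float:
    # Multi-label: an independent binary decision per label, so any number
    # of targets may be 1. Numerically stable form per label:
    #   max(x, 0) - x * t + log(1 + exp(-|x|)), then averaged over labels.
    x, t = logits, targets.astype(np.float64)
    loss = np.maximum(x, 0) - x * t + np.log1p(np.exp(-np.abs(x)))
    return float(loss.mean())
```

With the sigmoid form, the target is a multi-hot vector (for example, 1 for both "sea" and "ship"), which a softmax over mutually exclusive classes cannot express.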

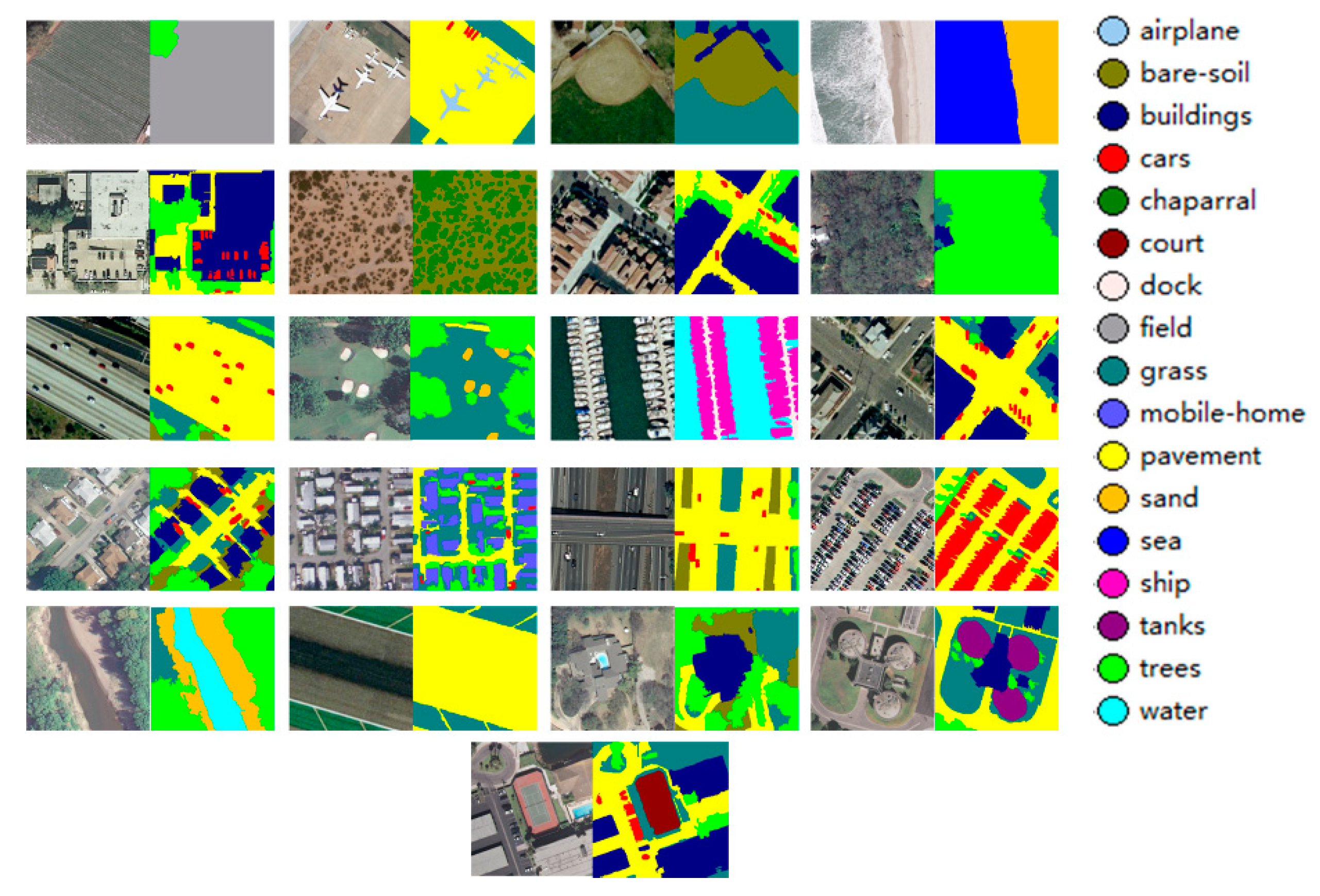

4. Experiments and Results

4.1. Experimental Setup

4.2. Experimental Results

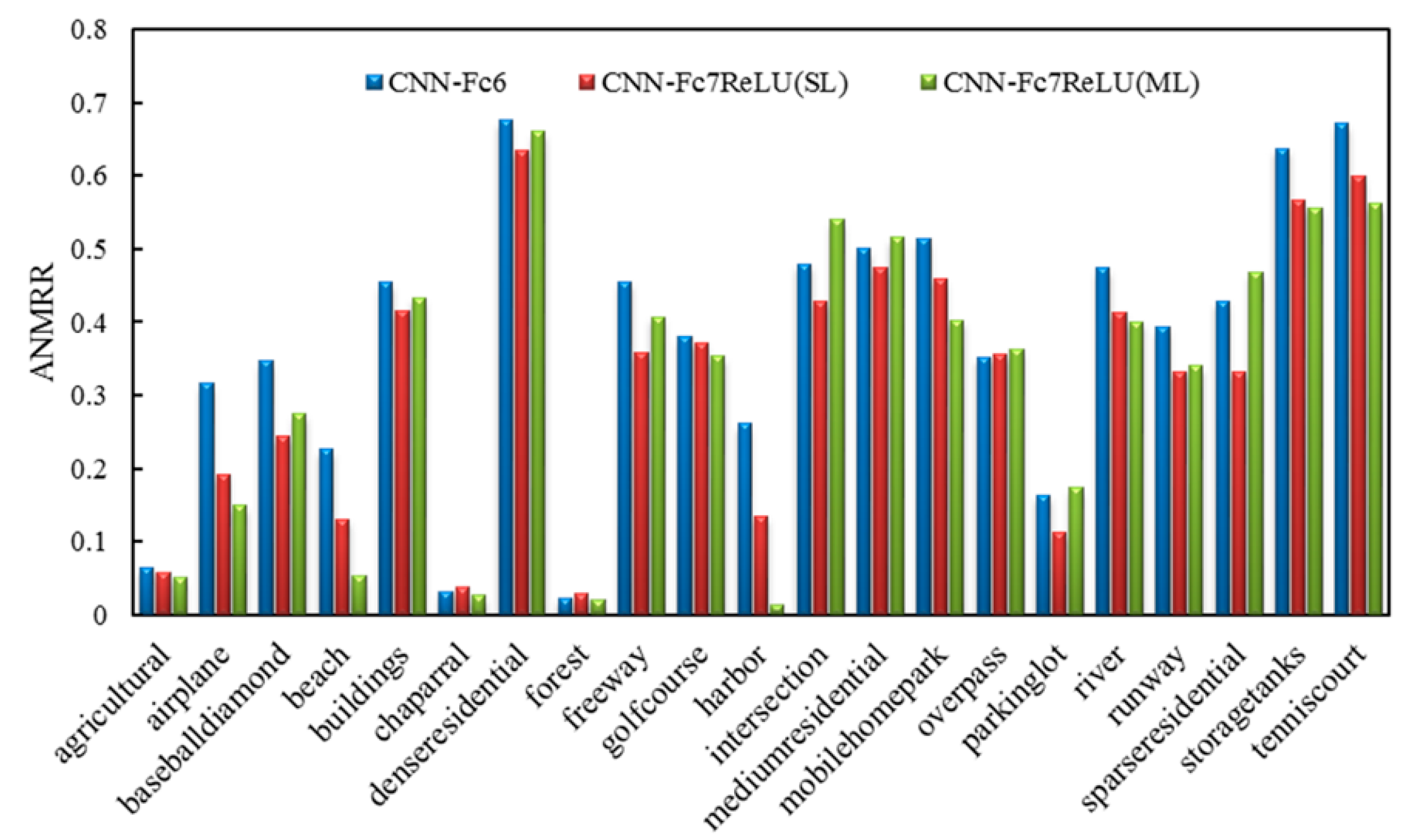

4.2.1. Results of Single-Label and Multi-Label RSIR

4.2.2. Comparisons of the Multi-Label RSIR Methods

5. Conclusions

Author Contributions

Acknowledgments

Conflicts of Interest

References


| Class Label | Number of Images |
|---|---|
| airplane | 100 |
| bare soil | 754 |
| buildings | 713 |
| cars | 897 |
| chaparral | 116 |
| court | 105 |
| dock | 100 |
| field | 103 |
| grass | 977 |
| mobile home | 102 |
| pavement | 1331 |
| sand | 291 |
| sea | 101 |
| ship | 103 |
| tanks | 100 |
| trees | 1021 |
| water | 208 |
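Because an image can carry several of these 17 labels, the counts above are label occurrences: an image contributes to every class it contains, and the column sums to 7,122. A minimal sketch of the usual multi-hot encoding that produces such counts follows; the function names are hypothetical and the label order simply follows the table.

```python
import numpy as np

# The 17 DLRSD class labels, in the order of the class-count table.
CLASSES = ["airplane", "bare soil", "buildings", "cars", "chaparral", "court",
           "dock", "field", "grass", "mobile home", "pavement", "sand", "sea",
           "ship", "tanks", "trees", "water"]


def multi_hot(labels, classes=CLASSES) -> np.ndarray:
    # One vector per image: 1 where the label is present, else 0.
    index = {c: i for i, c in enumerate(classes)}
    v = np.zeros(len(classes), dtype=np.int64)
    for lab in labels:
        v[index[lab]] = 1
    return v


def images_per_class(label_sets) -> dict:
    # Column sums of the multi-hot matrix; an image with k labels
    # contributes to k different counts.
    m = np.stack([multi_hot(s) for s in label_sets])
    return dict(zip(CLASSES, m.sum(axis=0).tolist()))
```

For three toy images labeled {"sea", "ship"}, {"sea", "sand"} and {"trees"}, the counts are sea 2, ship 1, sand 1, trees 1: five label occurrences from three images.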

| Parameter | Single-Label CNN | Multi-Label CNN |
|---|---|---|
| base learning rate | 0.0001 | 0.0001 |
| momentum | 0.9 | 0.9 |
| weight decay | 0.0005 | 0.0005 |
| max iterations | 3000 | 4000 |
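The training parameters above correspond to the standard fields of an SGD solver with momentum and L2 weight decay (in Caffe's `solver.prototxt`, for instance, `base_lr`, `momentum`, `weight_decay` and `max_iter`). The sketch below shows one update step in the usual Caffe-style form, with the listed values as defaults. The learning-rate schedule is not given in the table, so a fixed rate is assumed, and the quadratic toy objective is purely illustrative.

```python
import numpy as np


def sgd_momentum_step(w, grad, velocity, lr=1e-4, momentum=0.9, weight_decay=5e-4):
    """One SGD update with momentum and L2 weight decay.

    Caffe-style form: the decay term is added to the gradient, then
    V <- momentum * V + lr * g and W <- W - V.
    A fixed learning rate is assumed (no schedule is stated).
    """
    g = grad + weight_decay * w          # L2 regularisation folded into the gradient
    velocity = momentum * velocity + lr * g
    return w - velocity, velocity


# Toy run: minimise f(w) = 0.5 * w**2 (gradient = w) for the single-label
# "max iterations" value of 3000.
w_toy, v_toy = np.array([1.0]), np.zeros(1)
for _ in range(3000):
    w_toy, v_toy = sgd_momentum_step(w_toy, grad=w_toy, velocity=v_toy)
```

With a base learning rate of 0.0001 and momentum 0.9, the effective step size once momentum has built up is roughly ten times the base rate (about 0.001), which is why a few thousand iterations suffice in the toy run to shrink `w_toy` well below its starting value.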

| Handcrafted Features | ANMRR | mAP | P@5 | P@10 | P@50 | P@100 | P@1000 |
|---|---|---|---|---|---|---|---|
| Simple Statistics | 0.8580 | 0.1027 | 0.2843 | 0.1912 | 0.1138 | 0.0994 | 0.0646 |
| Color Histogram | 0.7460 | 0.1918 | 0.6489 | 0.5076 | 0.2694 | 0.1964 | 0.0680 |
| Gabor Texture | 0.7070 | 0.2232 | 0.6771 | 0.5508 | 0.3085 | 0.2286 | 0.0680 |
| HOG | 0.8600 | 0.1233 | 0.3415 | 0.2449 | 0.1322 | 0.1037 | 0.0564 |
| PHOG | 0.7990 | 0.1557 | 0.4976 | 0.3840 | 0.2105 | 0.1538 | 0.0581 |
| GIST | 0.7760 | 0.1859 | 0.5755 | 0.4540 | 0.2447 | 0.1756 | 0.0616 |
| GLCM | 0.7700 | 0.1545 | 0.4730 | 0.3744 | 0.2220 | 0.1727 | 0.0683 |
| LBP | 0.7710 | 0.1648 | 0.6158 | 0.4845 | 0.2589 | 0.1810 | 0.0580 |
| MLIR | 0.7460 | 0.2029 | 0.5364 | 0.4267 | 0.2558 | 0.1985 | 0.0703 |
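ANMRR (average normalized modified retrieval rank) is lower-is-better: 0 means every relevant image is ranked first and 1 means none is found within the evaluation window. A minimal sketch of the MPEG-7 style definition follows. The window K(q) differs between papers; K(q) = 2·NG(q), where NG(q) is the number of relevant images for query q, is assumed here. The relevance flags can come from either the single-label or a multi-label relevance criterion.

```python
import numpy as np


def nmrr(ranked_relevance, num_ground_truth, k=None):
    """Normalised modified retrieval rank for one query (MPEG-7 style).

    ranked_relevance: 1/0 flags for the retrieved list in rank order.
    num_ground_truth: NG(q), the number of relevant images in the database.
    k: window K(q); assumed to be 2 * NG(q) when not given.
    """
    ng = num_ground_truth
    k = 2 * ng if k is None else k
    ranks = [i + 1 for i, rel in enumerate(ranked_relevance) if rel]
    # Relevant images within the first k ranks keep their rank; those ranked
    # beyond k, or never retrieved, receive the penalty rank 1.25 * k.
    penalised = [r if r <= k else 1.25 * k for r in ranks[:ng]]
    penalised += [1.25 * k] * (ng - len(penalised))
    avr = sum(penalised) / ng                     # average rank
    mrr = avr - 0.5 - ng / 2                      # modified retrieval rank
    return mrr / (1.25 * k - 0.5 - ng / 2)        # normalise to [0, 1]


def anmrr(per_query):
    # Average NMRR over all queries; per_query yields (ranked_relevance, NG) pairs.
    return float(np.mean([nmrr(rel, ng) for rel, ng in per_query]))
```

For a query with two relevant images, ranking them at positions 2 and 6 gives ranks 2 and 1.25·4 = 5 after the penalty, an average rank of 3.5, and an NMRR of 2/3.5 ≈ 0.571.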

| CNN Features | ANMRR | mAP | P@5 | P@10 | P@50 | P@100 |
|---|---|---|---|---|---|---|
| CNN-Fc6 | 0.3740 | 0.5760 | 0.7895 | 0.6624 | 0.2902 | 0.1758 |
| CNN-Fc6ReLU | 0.4050 | 0.5456 | 0.7629 | 0.6352 | 0.2781 | 0.1681 |
| CNN-Fc7 | 0.3830 | 0.5619 | 0.7643 | 0.6417 | 0.2880 | 0.1714 |
| CNN-Fc7ReLU | 0.3740 | 0.5693 | 0.7814 | 0.6543 | 0.2906 | 0.1735 |
| CNN-Fc6(SL) | 0.3640 | 0.5862 | 0.7905 | 0.6714 | 0.2960 | 0.1780 |
| CNN-Fc6ReLU(SL) | 0.3680 | 0.5829 | 0.7900 | 0.6710 | 0.2936 | 0.1748 |
| CNN-Fc7(SL) | 0.3350 | 0.6123 | 0.8005 | 0.6979 | 0.3064 | 0.1794 |
| CNN-Fc7ReLU(SL) | 0.3180 | 0.6277 | 0.8233 | 0.7076 | 0.3113 | 0.1808 |
| CNN-Fc6(ML) | 0.3620 | 0.5870 | 0.7943 | 0.6767 | 0.2949 | 0.1773 |
| CNN-Fc6ReLU(ML) | 0.3700 | 0.5824 | 0.7810 | 0.6636 | 0.2899 | 0.1715 |
| CNN-Fc7(ML) | 0.3410 | 0.6074 | 0.7924 | 0.6879 | 0.3005 | 0.1745 |
| CNN-Fc7ReLU(ML) | 0.3220 | 0.6273 | 0.8076 | 0.7100 | 0.3080 | 0.1777 |
| CNN-HM | 0.4270 | 0.5188 | 0.6052 | 0.5676 | 0.2713 | 0.1617 |
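The CNN-feature results follow the usual retrieval pipeline: each image is represented by a feature vector (such as the Fc6 or Fc7 activations listed above), the database is ranked by distance to the query, and P@k and mAP are computed from the ranked relevance flags. The sketch below assumes Euclidean distance for ranking; the distance measure is an illustrative choice. Average precision here divides by the number of relevant images retrieved, which equals the total number of relevant images when the whole database is ranked; mAP is the mean of this value over all queries.

```python
import numpy as np


def rank_by_distance(query_feat: np.ndarray, db_feats: np.ndarray) -> np.ndarray:
    # Indices of database images, nearest first (Euclidean distance).
    d = np.linalg.norm(db_feats - query_feat, axis=1)
    return np.argsort(d, kind="stable")


def precision_at_k(rel, k: int) -> float:
    # Fraction of the top-k results that are relevant.
    return float(np.sum(rel[:k])) / k


def average_precision(rel) -> float:
    # Mean of P@i over the ranks i at which a relevant image appears.
    rel = np.asarray(rel, dtype=np.float64)
    hits = np.cumsum(rel)
    if hits[-1] == 0:
        return 0.0
    prec = hits / np.arange(1, len(rel) + 1)
    return float((prec * rel).sum() / hits[-1])
```

For the relevance pattern [1, 0, 1, 0], P@2 is 0.5 and the average precision is (1 + 2/3) / 2 ≈ 0.833.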

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Shao, Z.; Yang, K.; Zhou, W. Performance Evaluation of Single-Label and Multi-Label Remote Sensing Image Retrieval Using a Dense Labeling Dataset. Remote Sens. 2018, 10, 964. https://doi.org/10.3390/rs10060964
