Defining Organizational Context for Corporate Sustainability Assessment: Cross-Disciplinary Approach

Abstract

1. Introduction

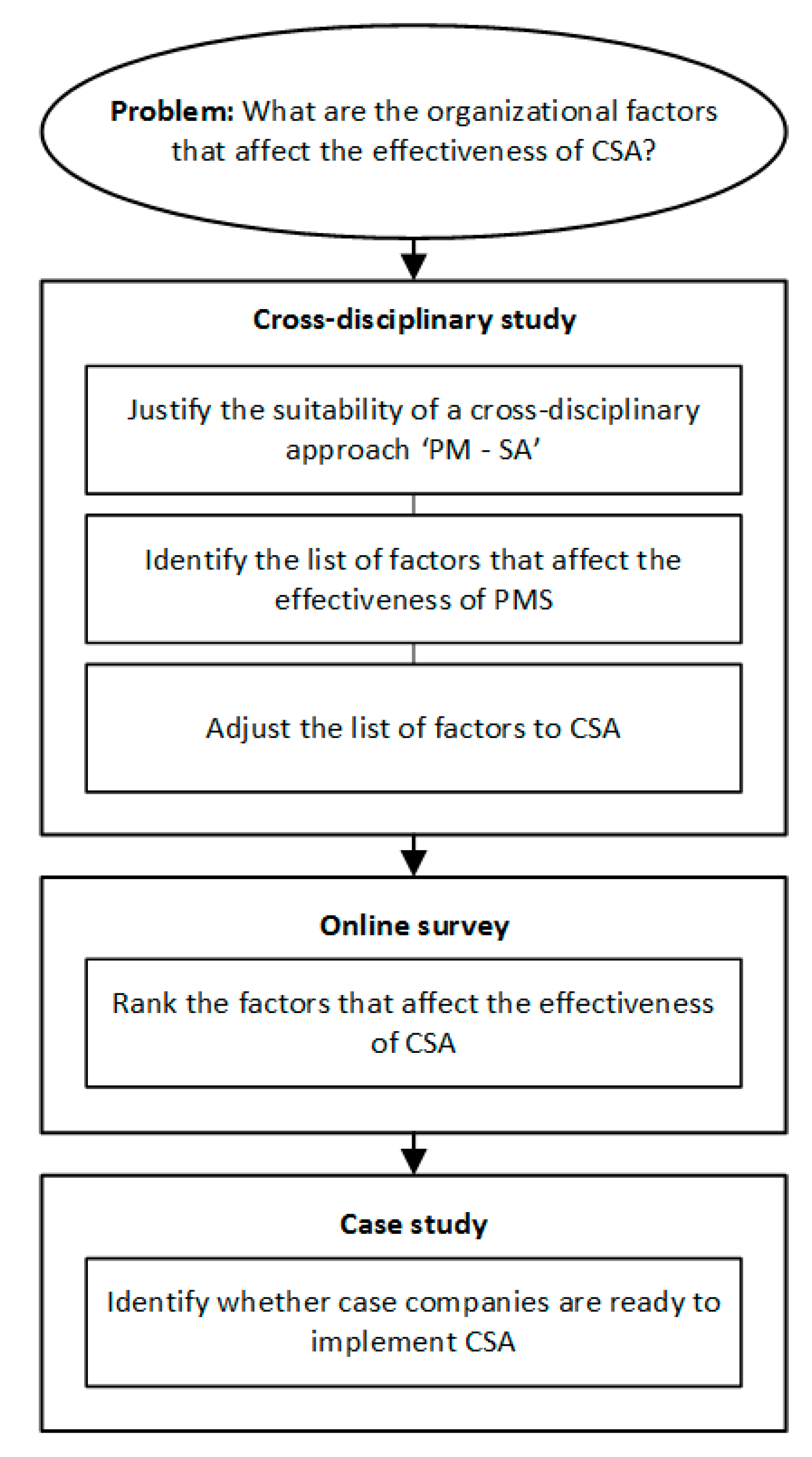

2. Methodology

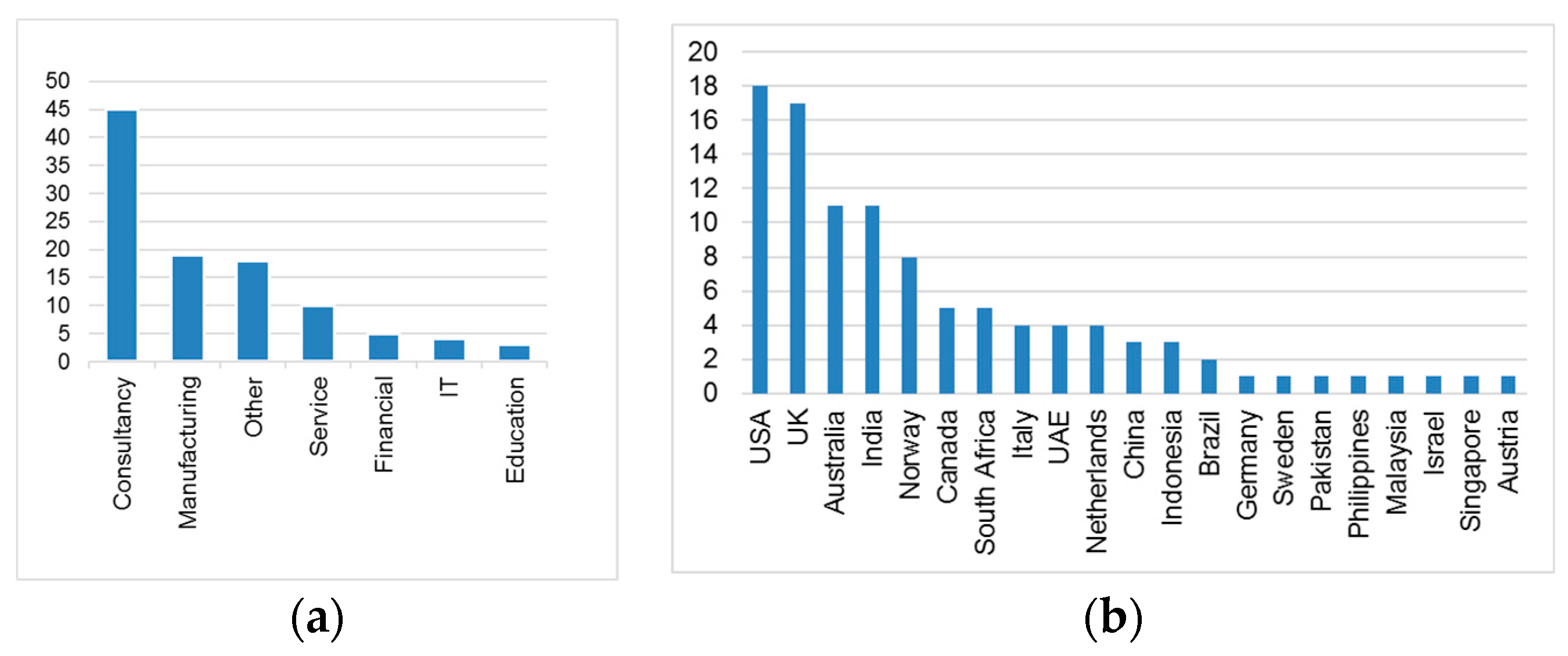

2.1. Survey

2.2. Case Study

3. Why a Cross-Disciplinary Approach

3.1. Comparing the Purpose of PMS and CSA

3.2. Comparing Development of PM and SA Disciplines

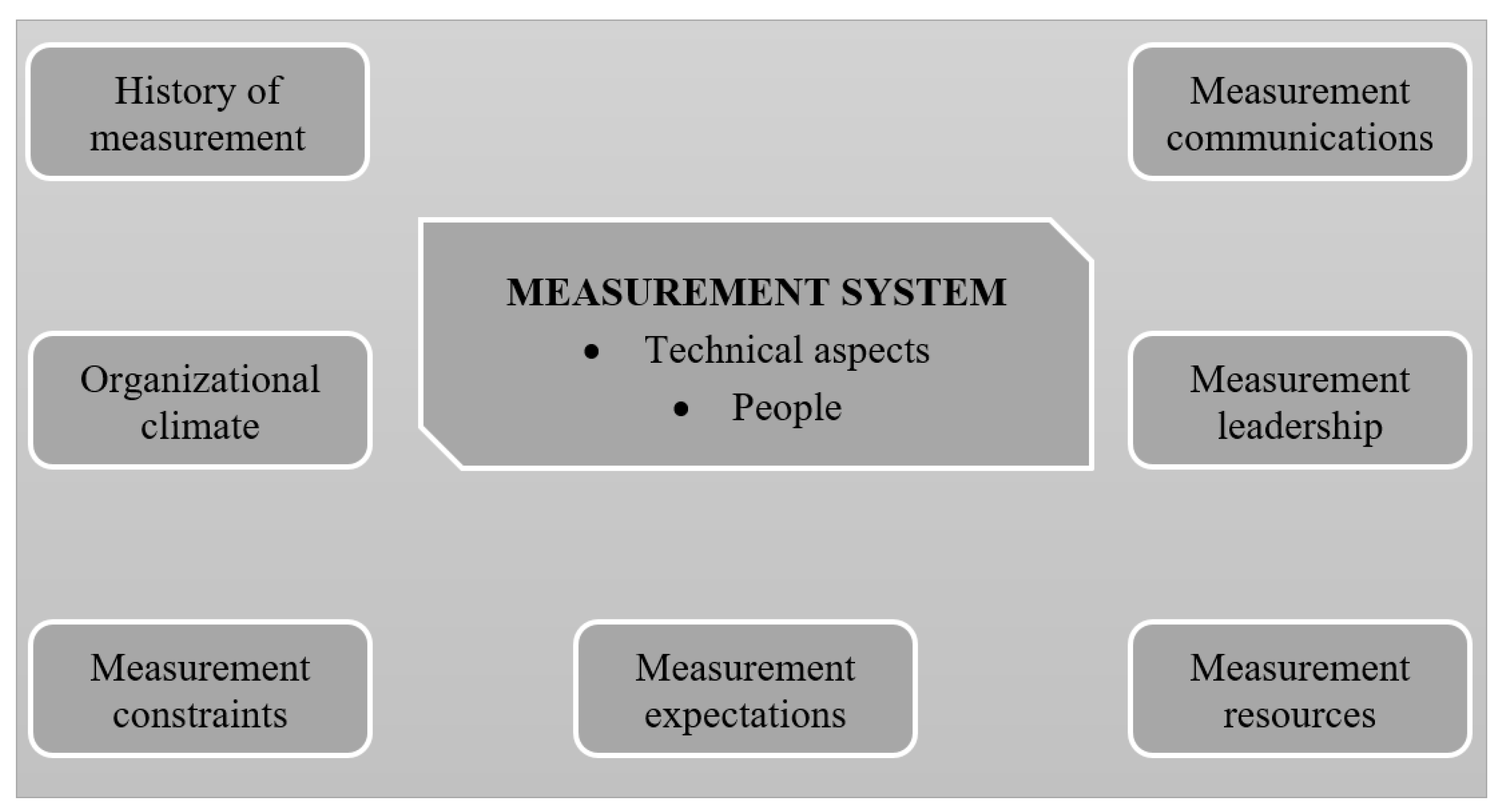

4. Organizational Context for PMS

5. Organizational Context for CSA

5.1. Defining Organizational Context by Using a Cross-Disciplinary Approach

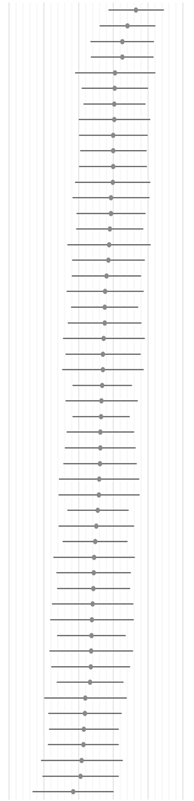

5.2. Validating Organizational Context for CSA Using Online Survey

6. Case Study

6.1. Case Company A

6.1.1. Climate

6.1.2. Resources

6.1.3. Leadership

6.1.4. History

6.1.5. Expectations

6.2. Case Company B

6.2.1. Climate

6.2.2. Resources

6.2.3. Leadership

6.2.4. History

6.2.5. Expectations

6.3. Case Company C

6.3.1. Climate

6.3.2. Resources

6.3.3. Leadership

6.3.4. History

6.3.5. Expectations

6.4. Case Company D

6.4.1. Climate

6.4.2. Resources

6.4.3. Leadership

6.4.4. History

6.4.5. Expectations

6.5. Readiness of the Case Companies to Implement CSA

7. Conclusions and Future Work

Acknowledgments

Conflicts of Interest

References

- Veleva, V.; Ellenbecker, M. Indicators of sustainable production: Framework and methodology. J. Clean. Prod. 2001, 9, 519–549.

- Krajnc, D.; Glavič, P. A model for integrated assessment of sustainable development. Resour. Conserv. Recycl. 2005, 43, 189–208.

- Moneim, A.F.A.; Galal, N.M.; Shakwy, M.E. Sustainable Manufacturing Indicators. In Proceedings of the Global Climate Change: Biodiversity and Sustainability: An International Conference focused on the Arab Mena region and Euromed, Alexandria, Egypt, 15–19 April 2013.

- Lu, T.; Gupta, A.; Jayal, A.D.; Badurdeen, F.; Feng, S.C.; Dillon, O.W., Jr.; Jawahir, I.S. A Framework of Product and Process Metrics for Sustainable Manufacturing. In Advances in Sustainable Manufacturing; Seliger, G., Khraisheh, M.M.K., Jawahir, I.S., Eds.; Springer: Berlin/Heidelberg, Germany, 2011; pp. 333–338.

- The National Institute of Standards and Technology. Sustainable Manufacturing Indicators Repository. 2011. Available online: http://www.mel.nist.gov/msid/SMIR/Indicator_Repository.html (accessed on 9 October 2014).

- Joung, C.B.; Carrell, J.; Sarkar, P.; Feng, S.C. Categorization of indicators for sustainable manufacturing. Ecol. Indic. 2013, 24, 148–157.

- Singh, S.; Olugu, E.; Fallahpour, A. Fuzzy-based sustainable manufacturing assessment model for SMEs. Clean Technol. Environ. Policy 2014, 16, 847–860.

- Romaniw, Y.; Bras, B.; Guldberg, T. Sustainable manufacturing analysis using an activity based object oriented method. SAE Int. J. Aerosp. 2010, 2, 214–224.

- Rajeev Kumar, K. Evaluating sustainability, environmental assessment and toxic emissions during manufacturing process of RFID based systems. In Proceedings of the IEEE International Symposium on Dependable, Autonomic and Secure Computing, Sydney, Australia, 12–14 December 2011.

- Tan, X.; Liu, F.; Dacheng, L.; Li, Z.; Wang, H.; Zhang, Y. Improved Methods for Process Routing in Enterprise Production Processes in Terms of Sustainable Development II. Tsinghua Sci. Technol. 2006, 11, 693–700.

- Jiang, Z.; Zhang, H.; Sutherland, J. Development of an environmental performance assessment method for manufacturing process plans. Int. J. Adv. Manuf. Technol. 2012, 58, 783–790.

- Nidhi, M.B.; Chandran, A.R.; Pillai, V.M. Sustainability assessment of blood bag supply chain: A case study. In Proceedings of the Global Humanitarian Technology Conference, Trivandrum, India, 23–24 August 2013.

- Mota-López, D.R.; Sánchez-Ramírez, C.; González-Huerta, M.Á.; Jiménez-Nieto, Y.A.; Rodríguez-Parada, A. A systemic conceptual model to assess the sustainability of industrial ecosystems. In New Perspectives on Applied Industrial Tools and Techniques; Springer: Cham, Switzerland, 2018; pp. 451–475.

- Harik, R.; El Hachem, W.; Medini, K.; Bernard, A. Towards a holistic sustainability index for measuring sustainability of manufacturing companies. Int. J. Prod. Res. 2015, 53, 4117–4139.

- Chen, D.; Thiede, S.; Schudeleit, T.; Herrmann, C. A holistic and rapid sustainability assessment tool for manufacturing SMEs. CIRP Ann. Manuf. Technol. 2014, 63, 437–440.

- Bina, O. Context and Systems: Thinking more broadly about effectiveness in strategic environmental assessment in China. Environ. Manag. 2008, 42, 717–733.

- Runhaar, H.; Driessen, P.P.J. What makes strategic environmental assessment successful environmental assessment? The role of context in the contribution of SEA to decision-making. Impact Assess. Proj. Apprais. 2007, 25, 2–14.

- Guijt, I.; Moiseev, A. Resource Kit for Sustainability Assessment. Part B: Facilitators’ Materials; IUCN-The World Conservation Union: Gland, Switzerland; Cambridge, UK, 2001; p. 172.

- Robinson, J. Squaring the circle? Some thoughts on the idea of sustainable development. Ecol. Econ. 2004, 48, 369–384.

- Waas, T.; Hugé, J.; Verbruggen, A.; Wright, T. Sustainable development: A bird’s eye view. Sustainability 2011, 3, 1637–1661.

- Davidson, K. A typology to categorize the ideologies of actors in the sustainable development debate. Sustain. Dev. 2014, 22, 1–14.

- Ramsey, J.L. On not defining sustainability. J. Agric. Environ. Ethics 2015, 28, 1075–1087.

- Porter, A.L.; Chubin, D.E. An indicator of cross-disciplinary research. Scientometrics 1985, 8, 161–176.

- Bordons, M.; Morillo, F.; Gómez, I. Analysis of cross-disciplinary research through bibliometric tools. Handb. Quant. Sci. Technol. Res. 2004, 437–456.

- Bailey, K.D. Towards unifying science: Applying concepts across disciplinary boundaries. Syst. Res. Behav. Sci. 2001, 18, 41–62.

- Matell, M.S.; Jacoby, J. Is there an optimal number of alternatives for Likert scale items? Study I: Reliability and Validity. Educ. Psychol. Meas. 1971, 31, 657–674.

- Yin, R.K. Case Study Research: Design and Methods; SAGE Publications: Thousand Oaks, CA, USA, 2013.

- Ackerson, L.G. Literature Search Strategies for Interdisciplinary Research: A Sourcebook for Scientists and Engineers; Scarecrow Press: Lanham, MD, USA, 2006.

- Garengo, P.; Biazzo, S.; Bititci, U.S. Performance measurement systems in SMEs: A review for a research agenda. Int. J. Manag. Rev. 2005, 7, 25–47.

- Neely, A.; Gregory, M.; Platts, K. Performance measurement system design: A literature review and research agenda. Int. J. Oper. Prod. Manag. 1995, 15, 80–116.

- Grafton, J.; Lillis, A.M.; Widener, S.K. The role of performance measurement and evaluation in building organizational capabilities and performance. Account. Org. Soc. 2010, 35, 689–706.

- Chenhall, R.H. Integrative strategic performance measurement systems, strategic alignment of manufacturing, learning and strategic outcomes: An exploratory study. Account. Org. Soc. 2005, 30, 395–422.

- Bond, A.; Morrison-Saunders, A. Challenges in Determining the Effectiveness of Sustainability Assessment; Routledge, Taylor & Francis Group: Oxford, UK, 2013.

- Taisch, M.; Sadr, V.; May, G.; Stahl, B. Sustainability assessment tools—state of research and gap analysis. In Advances in Production Management Systems. Sustainable Production and Service Supply Chains; Prabhu, V., Taisch, M., Kiritsis, D., Eds.; Springer: Berlin/Heidelberg, Germany, 2013; pp. 426–434.

- Ness, B.; Urbel-Piirsalu, E.; Anderberg, S.; Olsson, L. Categorising tools for sustainability assessment. Ecol. Econ. 2007, 60, 498–508.

- Pope, J.; Annandale, D.; Morrison-Saunders, A. Conceptualising sustainability assessment. Environ. Impact Assess. Rev. 2004, 24, 595–616.

- Waas, T.; Hugé, J.; Block, T.; Wright, T.; Benitez-Capistros, F.; Verbruggen, A. Sustainability assessment and indicators: Tools in a decision-making strategy for sustainable development. Sustainability 2014, 6, 5512–5534.

- Moldavska, A.; Welo, T. Development of manufacturing sustainability assessment using systems thinking. Sustainability 2016, 8, 5.

- Schneider, A.; Meins, E. The unrecognized future dimension of corporate sustainability assessment. In Proceedings of the 4th CORE Conference 2009: The Potential of Corporate Social Responsibility (CSR) to Support the Integration of Core EU Strategies, Berlin, Germany, 15–16 June 2009.

- Hon, K.K.B. Performance and evaluation of manufacturing systems. CIRP Ann. Manuf. Technol. 2005, 54, 139–154.

- Tung, A.; Baird, K.; Schoch, H.P. Factors influencing the effectiveness of performance measurement systems. Int. J. Oper. Prod. Manag. 2011, 31, 1287–1310.

- Sheate, W.R. The evolving nature of environmental assessment and management: Linking tools to help deliver sustainability. In Tools, Techniques & Approaches for Sustainability Collected Writings in Environmental Assessment Policy and Management; World Scientific: London, UK, 2010; pp. 1–29.

- Morrison-Saunders, A.; Pope, J.; Gunn, J.A.; Bond, A.; Retief, F. Strengthening impact assessment: A call for integration and focus. Impact Assess. Proj. Apprais. 2014, 32, 2–8.

- Franco, M.; Bourne, M. Factors that play a role in “managing through measures”. Manag. Decis. 2003, 41, 698–710.

- Ahmad, K.; Zabri, S.M.; Omar, S.S. Factors affecting the adoption of performance measurement system among Malaysian SMEs. Adv. Sci. Lett. 2015, 21, 1430–1434.

- Keathley, H.; van Aken, E.; Letens, G. Performance measurement system implementation: Systematic review of success factors. In Proceedings of the International Annual Conference of the American Society for Engineering Management, Virginia Beach, VA, USA, 15–18 October 2014.

- Keathley, H.; Van Aken, E. Systematic literature review on the factors that affect performance measurement system implementation. In Proceedings of the Institute of Industrial Engineers (IIE) Annual Conference, San Juan, Puerto Rico, 18–22 May 2013.

- Morrison-Saunders, A.; Fischer, T.B. What is wrong with EIA and SEA anyway? A sceptic’s perspective on sustainability assessment. J. Environ. Assess. Policy Manag. 2006, 8, 19–39.

- Bond, A.; Morrison-Saunders, A.; Pope, J. Sustainability assessment: The state of the art. Impact Assess. Proj. Apprais. 2012, 30, 53–62.

- Sala, S.; Ciuffo, B.; Nijkamp, P. A systemic framework for sustainability assessment. Ecol. Econ. 2015, 119, 314–325.

- Alrøe, H.F.; Noe, E. Sustainability assessment and complementarity. Ecol. Soc. 2016, 21, 30.

- Hugé, J.; Waas, T.; Dahdouh-Guebas, F.; Koedam, N.; Block, T. A discourse-analytical perspective on sustainability assessment: Interpreting sustainable development in practice. Sustain. Sci. 2013, 8, 187–198.

- Gibson, R.B. Beyond the pillars: Sustainability assessment as a framework for effective integration of social, economic and ecological considerations in significant decision-making. J. Environ. Assess. Policy Manag. 2006, 8, 259–280.

- Pintér, L.; Hardi, P.; Martinuzzi, A.; Hall, J. Bellagio STAMP: Principles for sustainability assessment and measurement. Ecol. Indic. 2012, 17, 20–28.

- Bond, A.; Morrison-Saunders, A.; Howitt, R. Framework for comparing and evaluating sustainability assessment practice. In Sustainability Assessment: Pluralism, Practice and Progress; Routledge: London, UK, 2013; pp. 117–131.

- Bond, A.; Pope, J.; Morrison-Saunders, A. Introducing the roots, evolution and effectiveness of sustainability assessment. In Handbook of Sustainability Assessment; Morrison-Saunders, A., Pope, J., Bond, A., Eds.; Edward Elgar: Cheltenham, UK, 2015; pp. 3–19.

- Grace, W.; Pope, J. A systems approach to sustainability assessment. In Handbook of Sustainability Assessment; Morrison-Saunders, A., Pope, J., Bond, A., Eds.; Edward Elgar: Cheltenham, UK, 2015; pp. 285–320.

- Gibson, R.B. Sustainability assessment: Basic components of a practical approach. Impact Assess. Proj. Apprais. 2006, 24, 170–182.

- Cashmore, M.A.; Kørnøv, L. The changing theory of impact assessment. In Sustainability Assessment: Pluralism, Practice and Progress; Bond, A., Morrison-Saunders, A., Howitt, R., Eds.; Routledge: London, UK, 2013; pp. 18–33.

- Gibson, R.B.; Hassan, S. Sustainability Assessment: Criteria and Processes; Earthscan: London, UK; Sterling, VA, USA, 2005; p. 254.

- Dalal-Clayton, B.; Sadler, B. Sustainability Appraisal: A Sourcebook and Reference Guide to International Experience; Taylor & Francis: London, UK, 2014.

- Spitzer, D.R. Transforming Performance Measurement: Rethinking the Way We Measure and Drive Organizational Success; American Management Association: New York, NY, USA, 2007.

- Bititci, U.S.; Mendibil, K.; Nudurupati, S.; Garengo, P.; Turner, T. Dynamics of performance measurement and organisational culture. Int. J. Oper. Prod. Manag. 2006, 26, 1325–1350.

- Taylor, A.; Taylor, M. Factors influencing effective implementation of performance measurement systems in small and medium-sized enterprises and large firms: A perspective from Contingency Theory. Int. J. Prod. Res. 2014, 52, 847–866.

| Company | Number of Employees | Turnover (Million Euro) | Type of Industry | Interviewed Functions |
|---|---|---|---|---|
| Company A | 40 | 7 | Production of plastic products | 8 managers, covering functions: Production, Maintenance, R&D, Industrialization, Improvement, Logistics, Purchasing, Economy, HMS, Quality, CEO |
| Company B | 169 | 45 | Production of automobile parts | 11 managers, covering functions: Tooling, CEO, R&D, Industrialization, Production, Maintenance, HMS, Quality, Logistics, Purchasing, Prototyping |
| Company C | 47 | 12 | Production of equipment for fishery | 8 managers, covering functions: CEO, Supply chain, HR, Marketing, R&D, Purchasing, Service, Sales, Production |
| Company D | 77 | 27 | Production of parts for the public utility industry | 8 managers, covering functions: CEO, Marketing, Sales, Maintenance, Finance, Production, R&D, Logistics, Quality, Purchasing |

| Time Frame | Prevailing Research Focus |
|---|---|
| 1970s | PM was first established as an independent discipline |
| 1990s | Focus on multi-dimensional PMS (sustainability is one of the dimensions) |
| The mid-1990s | Focus on the design of PMS; what to measure? How to develop PMS? |
| The late 1990s | Distinguishing design, implementation, use and review of PMS |
| The late 1990s–Early 2000s | Implementation of PMS and use; how to implement and use? |
| 2000s | Factors of the success of PMS & Context for the PMS; what makes PMS a successful PMS? |
| The mid-2000s | PMS as a dynamic, holistic system |

| Time Frame | Prevailing Research Focus |
|---|---|
| 1970 | The introduction of environmental impact assessment |
| Early 1990s | Development of SEA |
| The mid-1990s | ‘Sustainability Assessment’ became evident as the third generation of impact assessment (integration of economic, environmental and social aspects) |
| 2000s–2010s | Focus on the purposes, principles and design of SA, indicators development |
| 2000s–2010s | Development of generic frameworks for SA and case-based tools for different levels (product, process, system, organization, industrial sector, etc.), including CSA (organizational level) |
| The mid-2010s | “the integration problem” and “the implementation problem” are discussed |
| 2010s | SA as a holistic and complexity-based tool, focus on the technical side |

| Desired Outcomes of the PMS Implementation Phase Include [63] | Desired Outcomes of the CSA Implementation Phase Include |
|---|---|
| | |
| Desired Outcomes of the PMS Use Phase Include [41] | Desired Outcomes of the CSA Use Phase Include |
| | |

1. Organizational Climate
2. Resources for SA
3. Leadership
4. History of Measurement/Assessment
5. Expectations from SA
6. Communication

| Factors | I Do Not Know, % | Average (Scale 1–6) |
|---|---|---|
| Leadership commitment to/support of assessment | 5.8 | 4.65 |
| Organization has a sustainability strategy | 2.9 | 4.41 |
| Availability of informational/data collection capabilities | 1.9 | 4.25 |
| Understanding of purposes and benefits of assessment | 1.9 | 4.25 |
| Focus on ‘continuous improvement’ in an organization | 6.7 | 4.05 |
| Communication of expectations/strategy | 4.8 | 4.04 |
| The perceived benefits of assessment | 3.8 | 4.03 |
| Availability of knowledge/skills in SA | 1 | 4.03 |
| Availability of time | 1 | 4.00 |
| Culture that encourages discussion around assessment | 3.8 | 4.00 |
| Visible use of the system and results | 3.8 | 3.99 |
| Dissemination of knowledge and results | 4.8 | 3.98 |
| Availability of human resources | 0 | 3.93 |
| Active accountability/ownership—part of culture | 3.8 | 3.93 |
| A quality management culture | 5.8 | 3.90 |
| Joined-up thinking at all levels of the organization | 6.7 | 3.88 |
| Organization has customer/stakeholder focus | 4.8 | 3.86 |
| Relationship of assessment to daily responsibilities | 2.9 | 3.80 |
| Organizational maturity | 1.9 | 3.76 |
| Managerial capacity | 3.8 | 3.75 |
| Leadership skills/style | 8.7 | 3.75 |
| Legislative mandates | 1.9 | 3.73 |
| Internal interest groups | 5.8 | 3.70 |
| Management style | 6.7 | 3.70 |
| Expectations about the result of assessment | 2.9 | 3.68 |
| Assessment is an initiative pushed by a parent company | 14.4 | 3.66 |
| Expectations from the use of assessment | 4.8 | 3.65 |
| Previous experience with measurement/assessment | 1.9 | 3.63 |
| Organization is oriented at ‘organizational learning’ | 8.7 | 3.62 |
| Action-orientation of the organization | 4.8 | 3.62 |
| Employee involvement in assessment | 3.8 | 3.59 |
| Incremental goal setting | 6.7 | 3.59 |
| Level of process formalization in an organization | 13.5 | 3.56 |
| Employee perception of assessment | 5.8 | 3.51 |
| State of previous metrics/indicators | 6.7 | 3.47 |
| Common language in an organization | 3.8 | 3.45 |
| Availability of technical skills | 0 | 3.43 |
| Employee empowerment | 3.8 | 3.43 |
| Availability of capital resources | 11 | 3.41 |
| Resistance to measurement/policy resistance | 4.8 | 3.38 |
| Use of early warning system/monitoring & evaluation | 8.7 | 3.37 |
| Resistance to measurement/assessment | 1.9 | 3.36 |
| Domination of reactive approach in an organization | 8.7 | 3.35 |
| Misconception of performance measurement | 15.4 | 3.33 |
| Incentive programs in organization | 2.9 | 3.20 |
| Priority/abandonment of new initiatives | 14.4 | 3.18 |
| Unsatisfactory results from assessment | 12.5 | 3.15 |
| Information systems support (IT support) | 1 | 3.14 |
| Culture that does not punish people’s errors | 3.8 | 3.09 |
| Individual behavioural characteristics | 9.6 | 3.05 |
| Employees have a fear of consequences | 9.6 | 2.84 |
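The two statistics reported per factor in the survey table (the share of "I do not know" answers and the average rating) can be illustrated with a minimal Python sketch. It rests on assumptions not stated in the source: that ratings are integers on a 1–6 scale, that "I do not know" answers are excluded from the average, and that such answers are encoded as `None`. The function name `summarize_factor` and the sample answers are hypothetical and are not taken from the survey data.

```python
from statistics import mean

DONT_KNOW = None  # hypothetical marker for an "I do not know" answer


def summarize_factor(responses):
    """Return (percent of "I do not know" answers, mean of the 1-6 ratings)."""
    if not responses:
        raise ValueError("no responses")
    # Assumption: "I do not know" answers do not count toward the average.
    ratings = [r for r in responses if r is not DONT_KNOW]
    for r in ratings:
        if r not in range(1, 7):
            raise ValueError(f"rating out of range: {r}")
    dont_know_pct = 100 * (len(responses) - len(ratings)) / len(responses)
    average = mean(ratings) if ratings else float("nan")
    return round(dont_know_pct, 1), round(average, 2)


# Invented answers from ten respondents for a single factor.
example = [5, 6, 4, None, 5, 3, 6, 4, 5, 4]
print(summarize_factor(example))  # (10.0, 4.67)
```

If the original analysis instead treated "I do not know" as a zero or imputed a value, the averages would differ, so the sketch should be read only as one plausible reading of the table columns.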

| Category | Company A | Company B | Company C | Company D |
|---|---|---|---|---|
| Climate | Strong focus on continuous improvement; see value in negative PM results as input for improvements. Strong focus on customers. Look for new ideas; innovation-oriented, proactive approach. Good cooperation between departments; see the value of interdisciplinary cooperation. Positive attitude toward measurements. Do not see a need for a new measurement system. Difficulties with new software. Resistance to measurements that they cannot influence. | Strong focus on continuous improvement; use data to identify improvements. Lack resources for improvement projects. Traditional view of customers, i.e., satisfy the minimum needs to make a profit. Value innovation but lack time. Weak cooperation between departments. Weak link between strategy, goals and measurements. Some measurements are seen as meaningless. See a strong need for a new measurement system. Resistance to measurements that they cannot influence. | Great focus on continuous improvement. Strong focus on customers. No experience with formal measurements. A proactive approach to product development. Close communication within the company. Mostly positive attitude toward measurements. | Focus on continuous improvement but lack time for improvement initiatives. Prevailing reactive approach. Balance customer satisfaction against the parent company's interests. Challenges in cross-department joined-up thinking. Generally positive attitude toward measurements but partly negative toward reporting to the parent company. |
| Resources | Possibly limited data collection capabilities. Lack of time. | Possibly limited data collection capabilities. Lack of time. | Lack of time. Limited data collection capabilities. | Potentially good data collection capabilities. |
| Leadership | ISO 14001 certified. Positive attitude toward sustainable development. Have basic knowledge about sustainable development. No sustainability strategy. Leadership is sustainability-aware. | ISO 14001 certified. See a value in sustainable development. Have some associations with sustainable development but prioritize economy. No sustainability strategy. | No ISO 14001 certification. No sustainability strategy or any formal strategy. Very limited knowledge about sustainable development. | No sustainability strategy. ISO 14001 certified. Positive attitude toward sustainable development, but mainly because of its economic benefits. |
| History | Distributed responsibility for performance measurements. Do not have an overview of all measurements in the company. | Distributed responsibility for performance measurements. Do not have an overview of all measurements in the company. No systematic work with performance measurements. | No formal measurement system. No measurement culture. | Distributed responsibility for performance measurements. Do not see value in an integrated measurement system. |
| Expectations | Expressed clear expectations for a possible assessment system. | Expressed clear expectations for a possible assessment system. | Almost no expectations of an assessment system. | Some expectations of a possible assessment system. Partly do not see a need for a new system, or are strongly against it. |

© 2017 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Moldavska, A. Defining Organizational Context for Corporate Sustainability Assessment: Cross-Disciplinary Approach. Sustainability 2017, 9, 2365. https://doi.org/10.3390/su9122365
