Abstract

The core of the digital transition is the representation of all kinds of real-world entities and processes and an increasing number of cognitive processes by digital information and algorithms on computers. These allow for seemingly unlimited storage, operation, retrieval, and transmission capacities that make digital tools economically available for all domains of society and empower human action, particularly combined with real-world interfaces such as displays, robots, sensors, 3D printers, etc. Digital technologies are general-purpose technologies providing unprecedented potential benefits for sustainability. However, they will bring about a multitude of potential unintended side effects, and this demands a transdisciplinary discussion on unwanted societal changes as well as a shift in science from analog to digital modeling and structure. Although social discourse has begun, the topical scope and regional coverage have been limited. Here, we report on an expert roundtable on digital transition held in February 2017 in Tokyo, Japan. Drawing on a variety of disciplinary backgrounds, our discussions highlight the importance of cultural contexts and the need to bridge local and global conversations. Although Japanese experts did mention side effects, their focus was on how to ensure that AI and robots could coexist with humans. Such a perspective is not well appreciated everywhere outside Japan. Stakeholder dialogues have already begun in Japan, but greater efforts are needed to engage a broader collection of experts in addition to stakeholders to broaden the social debate.

1. Introduction and Motivations for the Roundtable

Digital transition is all the rage, and the trend is accelerating. Even in the last few years, we have seen significant developments. Computers have surpassed human capabilities to recognize images. The world’s Go champion was defeated by an artificial intelligence program developed by Google DeepMind. The auto industry is now fixated on producing self-driving cars. Drones are increasingly used for shooting videos, and robots are being used not only for manufacturing automation but also in customer service for phone calls. This trend, conspicuous in developed countries, is also occurring in developing economies as smartphones have become ubiquitous worldwide. Recognizing the potential fruits of digital transition, governments across the globe are implementing policies to promote innovation, with Germany’s Industrie 4.0 being perhaps the most prominent (see Appendix A for discussions on key terms such as digital transition and digital technologies).

Japan is no exception. The Council on Science, Technology, and Innovation, a cabinet-level decision-making body on science policy issues, crafted the Fifth Science and Technology Basic Plan [], which was subsequently adopted by the Cabinet of the Government of Japan in January 2016. The plan coined the term “Society 5.0” to emphasize the expected widespread diffusion of various smart technologies (information and communication technologies) and their associated socioeconomic gains. (The term Society 5.0 implies that, after the digital transition, we will see a fifth stage of social development. The previous stages are the hunter–gatherer society, agricultural society, industrial society, and information society [] (p. 13).) The plan laid out a basic policy designed to accelerate innovation and capture the economic benefits, and private industry is also investing heavily in technology.

As with any engineering domain, the new suite of technologies has various sustainability, legal, social, and political implications. Researchers, thought leaders, and governments across the globe are paying increasing attention to both positive and negative aspects of the technologies [,,,,,], and some have begun formulating principles to guide the development of technologies [,,,].

In Japan too, experts (both within and outside the government) and stakeholders have begun discussing issues associated with the digital transition (Table 1). For instance, in February 2017, the Japanese Society for Artificial Intelligence (JSAI) formally adopted the ethical principles [] developed by its Ethics Committee (AE, the third author, is a member of the committee) []. JSAI is now collaborating with the IEEE Global Initiative and the Future of Life Institute to further extend societal debate []. An expert committee at the Ministry of Internal Affairs and Communications (MIC) developed guidelines for research on a network system of AI after international consultation (AE and HS, the sixth author, were committee members) []. See also a review of such policy-relevant documents (in the domain of artificial intelligence) [].

Table 1.

Initiatives on societal implications of digital transition in Japan. Many of them treat AI as a central issue but inevitably touch on robotics, big data, etc. The list is not exhaustive.

These initiatives are laudable, but they suffer from two limitations: (1) the issues discussed have been relatively narrow, excluding potentially important areas; and (2) attempts thus far have been led by experts, mostly in Western societies, despite the aforementioned Japanese initiatives. These two issues are interconnected.

First, the side effects of the digital transition will not be contained in a small number of social domains since digital technologies are general-purpose technologies, necessitating interdisciplinary research and transdisciplinary discourse. In fact, this is one of the essential points raised by the Society 5.0 concept, as mentioned above. However, relatively few issues such as potential AI-induced unemployment, compromised privacy, and super-intelligent machines overtaking the world have received disproportionate levels of interest (see, e.g., []). The role of digital technologies has rarely been discussed in the context of the sustainable development goals (SDGs) of the United Nations. For instance, SDG8 (promoting productive and decent work for all) could come under significant pressure if AI and robots begin replacing many manufacturing and service-sector jobs. There are a few exceptions, such as a study on ASEAN [], but the treatment is often limited. A governmental report refers to SDGs only briefly in a footnote []. Another issue is the interaction between digital technologies and other technology domains. Combining digital technology with nanotechnology and biotechnology could have significant impacts on the world’s biosphere or on human genetics. These are only a few examples, but they highlight the need for greater attention to this topic.

Second, a number of initiatives have been started, but they have been led mostly by experts and have originated predominantly from Western societies, a point already acknowledged by an IEEE report []. Voices from non-Western societies and developing economies are notably absent, for example, in the previously mentioned debate on AI-induced unemployment. In both sustainability science and science and technology studies, there has recently been a strong emphasis on engagement with the lay public and stakeholders. In particular, scholars have been calling for “responsible innovation” [,], but because of the rapid pace of digital transition, more work is needed on this front. For instance, a policy paper on responsible innovation by the European Commission in 2013 does not include a single word about artificial intelligence []. A prior report does mention artificial intelligence but only twice, and it does not place a priority on this aspect at all [].

Clearly, emerging technologies must be viewed in conjunction with their social, cultural, and economic contexts. What kinds of implications does digital transition have for a country such as Japan, a high-income democracy situated in East Asia? Japan is unique when it comes to AI and robots; traditionally, the nation has been at the cutting edge of cultures that fuse technology with various objects and traditions. Examples include AIBO, a robotic pet dog developed by Sony (Tokyo, Japan); Akihabara, an electronic town; and a host of animated characters including Doraemon (a robotic cat from the 22nd century). There are even hotels with robot receptionists [].

Obviously, digital technology could give a great boost to the achievement of several sustainable development goals. For example, ride-sharing and autonomous vehicles could enable a substantial reduction in emissions of greenhouse gases, thereby decreasing the cost of climate change mitigation. Nevertheless, we argue that unintended side effects have not been thoroughly explored yet, and we focus on side effects in this article.

Against this background, we convened a workshop on 19 February 2017 at the University of Tokyo to discuss unintended side effects of digital transition. The workshop started from the hypothesis that—compared to digital technology development—previous studies have been restricted to a small set of unintended side effects (unsee(ns)) that have the potential to endanger systems and structures that are conceived as valuable and that contribute to the resilience of sociotechnological systems.

The participants of the roundtable were asked to present several thought-provoking ideas that could result in propositions on major statements and concerns and thereby help direct research and research funding on sustainable digital environments. Thus, the identification of major overarching changes, impacts, and concerns was the starting point. In addition, the roundtable was designed to help answer the following questions:

- What are the major present or future unintended side effects that call for specific attention and understanding?

- How might interdisciplinary collaborations across different disciplines contribute to solving the problems caused by unintended side effects?

- What partnerships of industry, business, government, non-governmental organizations (NGOs), or the public at large would be interested in co-designing transdisciplinary processes in which science and practice work together to learn about the sustainable use of digital technologies?

In this paper, we—all the participants of the roundtable—summarize the discussions and exchanges during and after the roundtable, identifying possible research agendas on digital transition in the context of Japan. Section 2 describes the procedure of the roundtable. Section 3 presents the phenomena associated with digital transition we identified. Section 4 discusses the unintended side effects of such social changes. Discussions on the results and the way forward are presented in Section 5.

2. Procedure

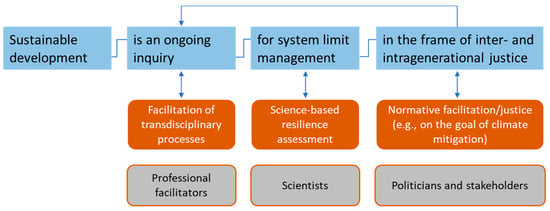

Definitions of the key concepts presented, such as sustainability and transdisciplinarity, were communicated in a workbook before the expert roundtable. Although there are multiple definitions of sustainability and related concepts such as sustainable development [], they share several essential elements. In this article, we apply the definition developed by a study that included 21 leaders of the Alliance for Global Sustainability projects of Chalmers University, ETH Zurich, MIT, and the University of Tokyo on technology innovation []. Sustainable development is seen as an (i) ongoing inquiry on (ii) system-limit management in the frame of (iii) inter- and intragenerational justice []. We argue that the impacts of change can be assessed using the Sustainable Development Goals []; however, the roundtable focuses on science-based resilience assessment (see Figure 1). By transdisciplinarity, we refer to the integration of knowledge from science and practice in projects that are (ideally) co-led by a legitimized decision maker and a scientist [,].

Figure 1.

Definition of sustainability (figure taken from []).

Each participant was asked to elaborate and circulate his/her inputs before the roundtable and to present the key ideas and propositions on a few slides. All verbal communication during the presentations and the discussion was transcribed. All slides and the transcriptions of the presentations and discussions were carefully analyzed by RS (last author) and MS (first author). The resulting structured summary was sent to all participants for corrections, revisions, and additions. We distinguished between: (i) changes in digital transitioning; and (ii) concerns, unintended side effects, and needed human actions. A first list of identified issues was sent to the participants for corrections and additions.

Following the expert roundtable, in a Delphi-like procedure, each participant was asked to formulate three propositions on digital transition based on the roundtable discussions and to comment on them in up to 150 words. These propositions are presented in Appendix B and served as input for a European and a planned North American expert roundtable. Several of the propositions are presented in the discussion.

3. Phenomena: Toward an Understanding of the Relationship between Digital Technologies and Social Systems

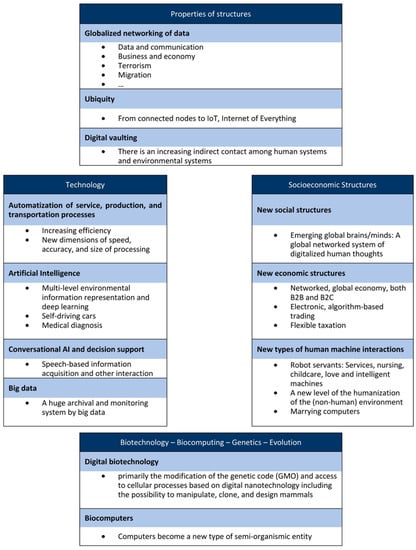

In our first step, we present the changes that have been addressed in the seven presentations. All talks addressed the society–technology relationship, which is presented in the two boxes in the middle of Figure 2. (Note that Figure 2 is mostly based on presentations and discussions during the roundtable, and less so on the propositions in Appendix B. Wherever applicable, we refer to Appendix B.)

Figure 2.

Identified domains of digital changes.

All contributions discussed fundamental changes and the globalization of system structures (see Globalized networking at the top of Figure 2). These refer to the networking of data and communication, business and economics, and terrorism and migration. Computers and sensors are increasingly ubiquitous and accompanied by the increasing indirectness of human communication with the environment, which we call digital vaulting or a digital curtain. This indicates that the individual and other human systems are becoming engulfed by digitalized information and communication media.

On a macro, phenomenological level, the automatization of industrial production is marked by increasing efficiency, speed, and accuracy. These are enabled by artificial-intelligence-based machines and computers that can perform certain tasks better than humans, such as playing board games [], voice recognition [], and image recognition [].

The greatest progress in technology in recent years has been achieved in AI. The performance of the connectionist neural network approach attained a new level by “deep learning” [], which is characterized by multilevel representation, economic correction/rectifying algorithms, and the encoding of large networks. Machine language processing and image understanding have improved fundamentally with many commercial applications in conversational AI and decision support. These innovations are based on the tremendous growth of computational capacity, in accordance with Moore’s law [], and the speed of retrieval, processing, and communication of big data [].

If we look at fundamental innovation in regard to socioeconomic structures, the cultural and media imperialism of globalized radio and TV starting in the 1960s from continental and language clusters was later supplemented by global social media []. However, there are also new dimensions and types of human–machine interactions. For instance, an AI algorithm has taken a seat on a Hong Kong company’s board [], and a French woman has designed a 3D printed robot that she wants to marry because she is sexually attracted to it []. Thus, both communication among humans and the interaction between machines and human systems are in a state of rapid change.

For most of the Japanese participants of the roundtable, AI and digital technologies have had, since their origin, a strong physical and electronic engineering background and, as basic concepts of cybernetics, roots in biology and technology. The presentation of the (European) initiator of the roundtable pointed out that DNA, as the basis of genetics, is deeply rooted in digital (quaternary) numbers and is a genuine digital construct. There is significant ongoing progress in genetic engineering and biosynthetics, which is poised to open the door to a new stage of evolution. Biocomputing could overcome some of the obstacles to the further advance of conventional computing, including storage []. These developments have the potential to raise new questions in many domains, which we illuminate below.
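The remark that DNA is a quaternary digital construct can be illustrated with a minimal sketch. The assignment of the four nucleotides to the digits 0 to 3 below is an arbitrary assumption made for illustration (any fixed one-to-one assignment would serve), and the function names are hypothetical; the sketch only shows that a nucleotide sequence can be read as a base-4 number and recovered from it.

```python
# Illustrative assumption: an arbitrary but fixed mapping of nucleotides to base-4 digits.
NUCLEOTIDE_TO_DIGIT = {"A": 0, "C": 1, "G": 2, "T": 3}
DIGIT_TO_NUCLEOTIDE = {digit: letter for letter, digit in NUCLEOTIDE_TO_DIGIT.items()}


def dna_to_int(sequence: str) -> int:
    """Read a nucleotide sequence as a base-4 number (most significant digit first)."""
    value = 0
    for letter in sequence.upper():
        if letter not in NUCLEOTIDE_TO_DIGIT:
            raise ValueError(f"unknown nucleotide: {letter!r}")
        value = value * 4 + NUCLEOTIDE_TO_DIGIT[letter]
    return value


def int_to_dna(value: int, length: int) -> str:
    """Recover a sequence of the given length from its base-4 value."""
    if value < 0 or value >= 4 ** length:
        raise ValueError("value does not fit into the requested length")
    letters = []
    for _ in range(length):
        letters.append(DIGIT_TO_NUCLEOTIDE[value % 4])
        value //= 4
    return "".join(reversed(letters))


if __name__ == "__main__":
    code = dna_to_int("GATTACA")
    print(code, int_to_dna(code, 7))
```

Because each position carries one of four values, a nucleotide corresponds to two bits of information under this reading.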

The Japanese–Asian culture seems to be more open to the robotic vision in which certain services that have been traditionally provided by humans (servants, in particular) are replaced by AI-driven machines. This openness allows for exploring new types of human–environment interactions. Examples such as the Henn-na Hotel (robot hotel) [] may be considered real-world laboratories or transdisciplinarity labs [] in which people from science and practice experience, understand, conceptualize, and appraise new settings of human life. A major problem related to the development of an integrative view on the new relationships of social systems and human systems to science systems in this context is that only a small portion of funding for scientific research is targeted for the social sciences.

4. Unintended Side Effects and Concerns Related to Sustainability

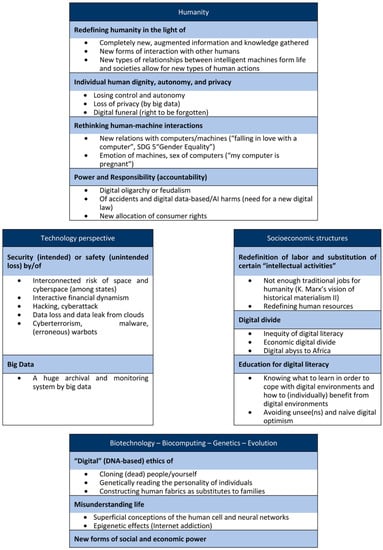

Next, we describe potential unintended side effects of digital transition discussed in the roundtable and in the propositions in Appendix B. A key statement of the roundtable has been that we have to redefine humanity in light of real-time, augmented, digitally vaulted, globally networked, and communicating humans by digital media and a new type of humanization of machines (see Figure 3). (Note that Figure 3 is mostly based on presentations and discussions during the roundtable, and less so on the propositions in Appendix B. Wherever applicable, we refer to Appendix B, which is an important data source.) This will be related to new dependencies of individuals, groups, economic and governmental actors, and countries on those who provide and offer global Internet services that are under limited national control. Redefining humanity relates to new moral, ethical, and legal rules about owning, operating, or being concerned about the new relations that may emerge with intelligent machines.

Figure 3.

Concerns about changes and unintended side effects.

Naturally, the use of AI, which induces (evolutionary) new relations of human and machine interaction, is a major issue that calls for reflection. At the Acceptable Intelligence with Responsibility Study Group (AIR), a group of young researchers revealed that Japanese stakeholder groups (such as policy makers, the public at large, the ICT community, and social science and humanities researchers) show highly significant differences in regard to how intelligent machines should and might be used in child nursing, elder care, health care, disaster prevention, military activities, and transportation []. The concerns about new dimensions of human and machine interactions on the level of the individual refer to moral, ethical, and emotional dimensions such as those related to having a machine as a wife or spouse, the gender of computers, and the idea that computers might one day take care of young children and older people (see Figure 3).

The discussion suggested that the concerns with respect to Internet oligarchy or digital feudalism [] (i.e., the phenomenon that private companies take control of a semipublic space of personal communication) are of a different nature and call for rethinking about law, responsibility, accountability (e.g., who is responsible for error-based impacts), and controllability (see also propositions of HD and AE in Appendix B). In these contexts, critical questions have also emerged in regard to what type of Internet-based digital culture is supporting or harming democracy (including consumer rights). In general, this is subsumed by the power and responsibility subheading in Figure 3.

Risk can be defined as an evaluation of the loss function of an activity, e.g., the use of digital technologies. Thus, risk is always related to a specific use. To better understand digital risks, a distinction has been suggested between safety, which is generally an unintended physical issue (and thus more related to machines), and security, which focuses on threats by ill-intentioned actors. Concerns related to the technology perspective (see Figure 3) cover many issues, including cyberwar by state actors, cyberattacks, terrorism by malware, and hacking by state and non-state actors, and are based on human factors that are difficult to control. However, the digital layer of technology dramatically increases the interconnectedness of risks, as power systems (see HS’s proposition, Appendix B); emergency systems (including health care); production, trade, and financial systems; administration and governmental systems; mobility systems; and defense and military systems are highly interwoven on a real-time level. Digital-technology safety management is more related to endogenous factors of the “dragon king type”, which are large or extreme in impacts and based on internal, endogenous (nonlinear, self-reinforcing, etc.) properties and dynamics []. By contrast, security management is of a “black swan type” [], i.e., it deals with improbable, outlier-like, unexpected phenomena that are difficult if not impossible to predict. It should be noted that here, only a few aspects of socioeconomic changes, such as AI-induced unemployment [], have been touched upon.
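As a minimal sketch of what "an evaluation of the loss function of an activity" can mean, the example below evaluates a discrete set of scenarios in two ways: by the probability-weighted (expected) loss and by the worst-case loss. Both the scenario list and the choice of these two evaluations are illustrative assumptions, not a method used in the roundtable; the numbers are hypothetical.

```python
from typing import List, Tuple

# Each scenario is (probability, loss). All values below are hypothetical.
Scenario = Tuple[float, float]


def expected_loss(scenarios: List[Scenario]) -> float:
    """Probability-weighted loss over all scenarios of one specific use."""
    total_probability = sum(p for p, _ in scenarios)
    if abs(total_probability - 1.0) > 1e-9:
        raise ValueError("scenario probabilities must sum to 1")
    return sum(p * loss for p, loss in scenarios)


def worst_case_loss(scenarios: List[Scenario]) -> float:
    """Largest loss among scenarios with non-zero probability."""
    return max(loss for p, loss in scenarios if p > 0)


if __name__ == "__main__":
    # Hypothetical use case: a networked service with a rare but severe failure.
    use_case = [(0.90, 0.0), (0.09, 10.0), (0.01, 1000.0)]
    print("expected loss:", expected_loss(use_case))
    print("worst-case loss:", worst_case_loss(use_case))
```

In this hypothetical case the rare severe scenario dominates the expected loss, which is one reason why extreme events receive separate attention in the discussion of risk types.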

The biotechnological and genetic engineering side (Figure 3) of the Digital Revolution poses fundamental questions about human identity, human–environment interactions, new forms of human power by intellectual properties of genetic codes, and many other questions, including what constitutes a (natural) living being (see RS’s proposition, Appendix B), which we discuss below.

First, the mastery of digital genetic engineering has an environmental dimension. Building on the current large-scale genetic manipulation of crops, the prospective genetic manipulation of animals and microorganisms is intended to increase the resilience of food production. However, directed evolution is also a potential means of causing vulnerabilities of ecosystems, as the long-term, bottom-up, niche-based evolutionary stress test is overruled by the large-scale spread of one mutated gene.

Moreover, from a human perspective, digital genetic engineering is a double-edged sword. Our increasing knowledge about the human genome allows for identifying genetic defects, but it is also a key for breeding people for certain purposes. Here, from a historical perspective, the most elementary code of heredity (and thus of privacy) is going to become public. This calls for a revision of legal systems and a redefinition of privacy. Likewise, biocomputers include cells whose information processing is based not on (human-programmed) algorithmic structures but on the nature, history, and specificities of cells. This certainly poses new questions about responsibility (e.g., if biocomputers cause unexpected severe harm, see Proposition 1 of RS, Appendix B).

5. Discussion

Our expert roundtable produced a rich set of viewpoints on the possible unintended side effects of the digital transition. Though some participants noted the importance of broadened engagement of stakeholders and the general public, more time during the roundtable was spent on side effects themselves. We therefore expand on this point in this section, connecting the identified unintended side effects and the need for transdisciplinary engagement and societal conversations.

In fact, we believe that the results can serve as a useful launchpad for broadening research discussions among various experts and societal conversations with the public and stakeholders. In particular, our discussions highlight how a Japanese perspective could contribute to the global debate, and how Japan should deal with societal debate on the digital transition within the country.

5.1. Japanese Perspectives in the Global Debate

Regarding the bridge between national and global debates, we have rediscovered that the cultural context matters, even in an expert discussion. Previous studies have demonstrated cultural factors for the variegated perceptions of emerging technologies (e.g., [] for genetically modified food; [] for nanotechnology; [] for geoengineering) as well as digital technologies, particularly for robots [,]. One study [] showed how, even among experts, religious views have an effect on the developmental pathways of robots and artificial intelligence in Japan and the United States, with the Japanese demonstrating a preference for humanoid robots over other forms of artificial intelligence (without a body).

Our expert roundtable confirms this general pattern. In contrast to views often expressed in North America and Europe, the experts in this workshop merely noted, and did not emphasize, the risk of a technological singularity and of artificial general intelligence that outcompetes humankind. This is, in fact, true of the general discussion in Japan: such opinions, while not nonexistent, are rather rare compared to the discussions in the US and Europe, a tendency of the Western debate that was noted by []. AE included a proposition (see Appendix B) that even suggests that Western values may become a winner in the digital transition.

Although not emphasized in the course of the expert roundtable, a review of recent reports published in Japan on AI and society indicates that there is a desire among experts to coexist with AI and robots. A governmental report [] and the Japanese AI society’s ethical guidelines [] stress this point (see also the transcript of a public discussion on JSAI’s ethical guidelines []). This desire is not limited to experts; it also shows up in the affinity of the lay public toward humanoids and, for example, the innovative Henn-na Hotel (robot hotel), as mentioned above.

Although Japan has unique perspectives on digital transition, these perspectives are not well communicated to the outside world. Although there are a multitude of ongoing activities in Japan, these seem to be detached from the global (or, more precisely, mostly Western) debate. The Japanese Society for Artificial Intelligence is reaching out to international bodies, and these efforts are bearing some fruit. Still, more efforts are needed to bridge the discussions in Japan and those elsewhere.

In fact, a global online debate entitled “Governing the Rise of AI” is already planned by the Future Society, the IEEE, the JSAI, and bluenove, and is expected to run from September 2017 through February 2018. With JSAI as a partner, Japan will play a key role []. Moreover, in recent years, Japan has taken part in many World Wide Views exercises []. These suggest that there is ample room for further deliberation on the unintended side effects of the digital transition.

5.2. Broadening Domestic Conversations

Because of a wide range of possible unintended side effects of the digital transition, it is crucial to broaden the societal debate on the issue in Japan and to involve experts from a more diverse set of backgrounds as well as the lay public and stakeholders. The activities explained in Table 1 encompass a multitude of disciplinary backgrounds, but the discussions thus far have been limited, as mentioned above. Expanding interdisciplinary discussions and research is of great necessity, for example, to explore the implications of the convergence of digital technology and biotechnology. With regard to societal conversations, a survey [] has included some members of the general public, but it is only a start. Distinguishing not only between “haves” and “have nots” but also between “wants” and “want nots” may be a specific question of interest here (see the propositions of AE in Appendix B).

Admittedly, even at the global stage, there is not enough public engagement. Scientists have begun applying concepts such as responsible innovation to issues like artificial intelligence and similar topics only recently []. However, there is growing consensus on the need for public engagement in emerging technological issues. A recent US opinion survey on gene editing demonstrated that, in spite of differentiated attitudes, there exists a near-unanimous call for public engagement []. Concerning geoengineering, the experts [] and the public [] are in agreement about public engagement as well. This is particularly crucial since the experts are keenly aware of the limited coverage of the discussions thus far [].

Japan has rich experience in all three categories of public engagement: communication, consultation, and participation []. In regard to participation, one of the events that received the most attention was the deliberative polling used for energy strategies under the previous Democratic Party of Japan administration []. Although the deliberative polling was conducted under the extraordinary conditions of dealing with the aftermath of the 2011 nuclear disaster at the Fukushima Daiichi power plant, it demonstrated the potential for connecting public deliberation with policy-making.

Here again, however, the cultural context matters, in particular for how public deliberation should be conducted []. In East Asia, where Confucian thinking is culturally embedded, a different mode of engagement might be necessary []. The double challenge of facing the new technology and embracing a new mode of engagement would be a key academic task in Japan.

5.3. Future Work

The present work has reported on the February 2017 roundtable conducted in Tokyo, and has identified many pathways for future research and societal conversations. In fact, fostering domestic conversations more broadly, and bridging the internal conversation with the global dialogue are two key tasks. In so doing, the researchers and practitioners must be mindful of the cultural background of Japan and how it differs from those of other countries and regions.

This paper suffers from many limitations. The roundtable itself was limited to a small number of experts from a limited number of disciplines. In fact, the roundtable was intended as the first of a series of expert roundtables that provide inputs on challenges of science and society with respect to sustainable digital environments. A second, European expert roundtable, whose results will be published as a follow-up paper, took place this September in Bonn, Germany. One should therefore interpret the present results only as indicative. To pursue responsible innovation of AI, we need to revisit the research agenda, along with stakeholders, as has been done in the context of a different emerging technology [].

Acknowledgments

This research was supported by The University of Tokyo.

Author Contributions

Masahiro Sugiyama and Roland W. Scholz conceived of the roundtable and organized it. They contributed equally to the paper. All authors contributed input material to the discussions during the roundtable. Masahiro Sugiyama and Roland W. Scholz wrote the first draft based on further written inputs from each author. All authors have been involved in the multistage editing and revisions of the paper.

Conflicts of Interest

The authors declare no conflicts of interest.

Appendix A. Note on Terminology

In this note, we use the phrase “digital transition” to refer broadly to the introduction and diffusion of digital technologies such as the Internet, artificial intelligence, the Internet of things, and robotics, and their associated changes in society. Although digital technologies encompass a wide range of technologies, we do not look at specific domains for our present purpose of dissecting unintended side effects, because it is not an individual technology but the entirety of combined technologies that affects society. Note that this perspective is not unique to us; for example, a recent book by the founding executive editor of Wired discussed the impacts of the whole of digital technologies [], and both the reports by Japanese governmental advisory committees [,] and a policy report by the United Kingdom Parliamentary Office of Science and Technology [] have emphasized the connectivity of artificial intelligence with robots and sensors.

Note that there are (at least) three similar phrases in the literature: digital revolution, digital transformation, and digital transition. These terms have somewhat different connotations, but we chose to use digital transition so that we could benefit from the “transition” literature.

Digitalization (used synonymously with digitization) describes the representation of a digital or analog object or process in digital form. A digit is an element of a place number system (also called a place value system or positional numeral system, i.e., one in which the position of a digit matters). A digit has a value with respect to a base (e.g., 2 in the case of binary numbers or 10 in the decimal system). Thus, digital structures are discrete, and they allow for computation. Digitalization means more than transferring to a discrete structure. The opposite of digital is analog, which considers continuous (real-number-based) changes of aspects of the environment.
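As a minimal illustration of the place-value idea described above, the following Python sketch decomposes a non-negative integer into its digits for a given base and then rebuilds the integer from those digits. The function names `to_digits` and `from_digits` are hypothetical and were chosen only for this illustration.

```python
from __future__ import annotations


def to_digits(n: int, base: int) -> list[int]:
    """Return the digits of a non-negative integer n in the given base,
    most significant digit first."""
    if n < 0 or base < 2:
        raise ValueError("n must be >= 0 and base must be >= 2")
    if n == 0:
        return [0]
    digits = []
    while n > 0:
        # The remainder is the digit at the current (lowest) position.
        n, remainder = divmod(n, base)
        digits.append(remainder)
    return digits[::-1]


def from_digits(digits: list[int], base: int) -> int:
    """Rebuild the integer from its digits. Each step shifts the value
    one position to the left, so a digit's position determines how
    much it contributes."""
    value = 0
    for digit in digits:
        value = value * base + digit
    return value
```

For example, `to_digits(13, 2)` yields `[1, 1, 0, 1]`, because 13 = 1·8 + 1·4 + 0·2 + 1·1, whereas the same number in base 10 is `[1, 3]`.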

Digital technologies are machines that master digital representation and computing. They also include the interfaces between the digital and analog worlds (e.g., sensors, D/A converters, actuators and robots, 3D printers, etc.). The transistor and many other technological inventions contributed to the development of digital computers. We may state that both knowledge about digitalization and about digital technologies induced the technological transition.

We can consider the year 2002 as the start of the digital age if we refer to the criterion that the majority of human-produced information has been stored digitally []. The digital transition began following World War II. The Digital Revolution can be considered a socioeconomic, cultural revolution (in the sense of Schumpeter’s Business Cycles []). The series of expert roundtables on structuring research for sustainable digital environments targets unintended side effects. Thus, we are, on the one hand, on the level of real-world phenomena such as automatization by AI-driven machines or digital devices that produce virtual environments. On the other hand, digitalization strongly changes the structures, functions, etc. of the science system itself. The French sociologist of science and technology Bruno Latour [] inferred, based on thorough investigations of laboratory work, that the ubiquitous presence of digital computers “in the material practices of the laboratory reflects a larger shift in the epistemological foundations of science from experiment to simulation” []. As the aspiration is “structuring research for SDE” (sustainable digital environments), this means that, in addition, the unintended side effects on the science system itself call for a critical view.

To describe sociotechnical changes, we consider (at least) three phrases: Digital Revolution, digital transformation, and digital transition. (Another phrase often used is the “information revolution”.) The first is often used with the intention of comparison with the Agricultural and Industrial Revolutions. The second is frequently invoked in the management literature. The third one, digital transition, is used less often. However, in the different context of sustainability science, there is a broad body of literature on “transition”, including transition management, energy transition, etc. In this paper, we use the phrase digital transition to convey that there are many lessons to be learned from such literature to discuss the issue at hand.

Appendix B. Propositions on the Future Perspectives on Digital Transition

Appendix B.1. Hiroshi Deguchi

Appendix B.1.1. Proposition 1: Platform Lock-In on the B2C Market

Comments on Proposition 1: The first Internet revolution caused platform lock-in on the B2C market. A platform business consists of three stakeholders: (1) the user, (2) the service provider, and (3) the platform provider. A user prefers a platform where there are many service providers. At the same time, a service provider selects the platform preferred by many users. These three stakeholder dynamics cause platform lock-in []. The oligopoly is not caused by a classic increasing-returns economy. Therefore, it is difficult to manage the new reality with traditional antitrust laws. To mitigate the concentration, we have to design new industry policies.
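The mutual reinforcement between users and service providers described above can be sketched with a toy model. The following Python example is an illustration under strong assumptions and is not taken from the roundtable material: it assumes two competing platforms, a hypothetical `attract` function in which each side favors the platform that is more popular with the other side, and an arbitrary `strength` parameter. It only illustrates the cross-side feedback, not the underlying economics.

```python
def attract(share: float, strength: float = 2.0) -> float:
    """Share of one side choosing platform A, given the other side's
    share on platform A. With strength > 1, popularity reinforces
    itself (assumed functional form, for illustration only)."""
    a = share ** strength
    b = (1.0 - share) ** strength
    return a / (a + b)


def simulate(initial_user_share: float, rounds: int = 20) -> float:
    """Alternate provider and user decisions and return the final
    share of users on platform A."""
    users = initial_user_share
    for _ in range(rounds):
        providers = attract(users)   # service providers follow users
        users = attract(providers)   # users follow service providers
    return users
```

In this sketch, a small initial lead such as `simulate(0.55)` grows until nearly all users are on one platform, while an exactly even split (`simulate(0.5)`) stays even. Which platform wins depends only on the starting advantage, which is the sense of lock-in illustrated here.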

Appendix B.1.2. Proposition 2: Top-Down Optimization and Low-Capability Business Components

Comments on Proposition 2: In the first quarter century of the new millennium, a new business model has developed, especially in the franchise chain business on the B2C market. The operation of a franchisee and its optimization are designed in a central component. However, there is little capability development in a franchisee component. This is not a traditional division of labor, as discussed by Adam Smith [] or Charles Babbage [], because there is no specialization of labor in a franchisee component.

The workers of the new labor market have been embedded in a newly constructed reality where there is no specialization or job capability development program [].

The new reality causes an unfair capability and income gap.

Appendix B.1.3. Proposition 3: IoT-Based Second Internet Revolution & Reality Shift

Comments on Proposition 3: The first Internet revolution caused a significant change in the B2C-based industrial structure and related life worlds. The second Internet revolution, characterized by IoT-based technology and management [], will change the B2B-based industrial structure and our life world, where we are embedded functionally and semantically through a division of labor. New distributed organizations and related work styles depend on new divisions of labor that will be created by the IoT-based management of loosely coupled tasks and projects. Traditional business management has focused on an organizational layer of business processes []; IoT-based management focuses on “task-” and “project-”based business processes, where tasks and their loosely coupled projects are designed, deployed, operated, and managed through an IoT-based open platform. The IoT-based management system will also change the related business processes to be more flexible and have a variety of systems.

This is one possible scenario of a reality shift. There is another reality shift scenario where the B2B platform will be locked in, and the labor market will be divided into a “high value-added & high-capability creative class” and a “low value-added and low-capability worker class”.

Appendix B.1.4. Proposition 4: Reality Reconstruction and Reality Shift

Comments on Proposition 4: We are living not in a stable, traditional world but in a fluid and continuously constructed and reconstructed world where we are confronted by a continuously shifting reality. The process of modernization is characterized by a disembedded personalizing process from traditional reality [,]. The modern world has been constructed both functionally and semantically. There exists a new embedded process in a new constructed reality. Nevertheless, there is little research on the new embedded and disembedded processes for a continuously constructed and reconstructed reality. How can we analyze the embedded and disembedded processes?

Natural sciences and some social sciences focus on universal laws, but it is impossible to identify a universal social law for a society because the reality of a society is a constructed reality. Some social sciences focus on the construction of a reality for a limited context. They do not focus on the technosocial system where both functional and semantic aspects are required. On the contrary, there exists a commensurability gap between functional and semantic aspects. We have to bridge the commensurability gap between the functional and semantic aspects and construct a new methodology and modeling method to manage the reality shift process.

Keywords: reality shift, reality reconstruction, embedded and disembedded processes, technosocial system.

Appendix B.2. Arisa Ema

Appendix B.2.1. Proposition 1

The widespread use of digital technologies would create digital divides between not only the haves and the have-nots but also between the wants and the want-nots. Society should ensure that the have-nots won’t be disadvantaged and that the rights of the want-nots will be respected.

Comments for Proposition 1: Engaging with digital technologies is a matter of choice, and there will be non-users even after technology becomes widespread. In the case of the Internet, a study by Wyatt [] classified non-users into four categories: resisters, rejecters, the excluded, and the expelled. Resisters and rejecters are called “want-nots” since they choose not to use the Internet, while those excluded and expelled are called “have-nots” because they are forced not to use it. Much attention has been devoted to have-nots, but we should also pay attention to want-nots.

Appendix B.2.2. Proposition 2

Since AI development is dominated by several companies, especially American companies in Silicon Valley, technological development will reflect these few companies at the helm. This situation has the potential to introduce significant biases in the technology that could put a huge population at risk on the global stage.

Comments for Proposition 2: AI provides multiple benefits, and it is creeping into our daily lives without conscious societal decisions. Because the current technology development is dominated by AI giants (i.e., Google, Apple, Facebook, and Amazon (GAFA)) and because they are all based in the United States, their products, optimized for their representative customers, might benefit Americans and Westerners and put those from other regions at risk or at least at a disadvantage. Such biases could be cultural, regional, or economic.

Appendix B.3. Atsuo Kishimoto (Propositions on the Perspective: Risk Management)

Appendix B.3.1. Risk Based Approach (Proposition 1)

Explicit adoption of a risk-based approach will be needed for the long list of unintended side effects caused by digital transition in order to be useful for evidence-based or evidence-informed policy making.

Comments for Proposition 1: Digital transition will have many unintended effects on society as well as various kinds of benefits. Our experience with risk-based approaches in other fields, such as food safety, engineering, and the mitigation of natural hazards, will contribute to a rational response to the potential unintended effects of digital transition, including the widespread use of AI. The concept of “risk” consists of two factors: likelihood and severity. The former, likelihood, is often ignored in dealing with hazards and threats. Based on risk analysis, societies worldwide will be able to take into account various potential trade-offs and their implications for each stakeholder when making decisions about digital technologies. A risk-based approach would enable societies to reflect on the choices involved and their potential impacts.
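To make the two-factor notion of risk concrete, the following Python sketch scores hypothetical hazards by combining likelihood and severity. The multiplicative combination (expected harm) is a common convention assumed here for illustration and is not prescribed by the proposition; the class `Hazard`, the function `rank_by_risk`, the hazard labels, and all numbers are hypothetical and do not represent empirical estimates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hazard:
    name: str          # hypothetical label, for illustration only
    likelihood: float  # probability of occurrence in a given period, 0..1
    severity: float    # magnitude of harm on an arbitrary illustrative scale

    def risk_score(self) -> float:
        # Assumption: the two factors are combined multiplicatively
        # (expected harm). Other aggregation rules are possible.
        if not 0.0 <= self.likelihood <= 1.0:
            raise ValueError("likelihood must lie between 0 and 1")
        return self.likelihood * self.severity


def rank_by_risk(hazards):
    """Sort hazards from the highest to the lowest risk score."""
    return sorted(hazards, key=lambda h: h.risk_score(), reverse=True)


# Purely illustrative numbers, not empirical estimates.
hazards = [
    Hazard("frequent but mild event", likelihood=0.5, severity=10.0),
    Hazard("rare but severe event", likelihood=0.01, severity=1000.0),
]
ranked = rank_by_risk(hazards)
```

With these illustrative values, the rare but severe event ranks first, even though it is far less likely. Looking at severity alone, or at likelihood alone, would give a different picture, which is why both factors need to be considered together.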

Appendix B.3.2. Specifying the Endpoints (Proposition 2)

The first step in implementing proposition 1 is to specify the endpoints, that is, what we want to protect against hazards and threats arising out of digital transformation including the use of AI, and determine the indicators of each endpoint.

Comments for Proposition 2: Unlike traditional risks, such as food safety and machine safety, whose endpoints relate to human health and safety, the endpoints we need to consider in addressing the risks of digital transformation, including the use of AI, will be the protection of privacy, freedom of choice, the right to know, the reduction of disparity, and so on. A measurement method for each endpoint needs to be developed in the first place. Since different constituencies have different perspectives on threats, the identification of endpoints would involve various kinds of discourse and deliberations and would require the involvement of experts from a multitude of disciplines as well as stakeholders and citizens.

Appendix B.3.3. Quantifying Likelihood and Severity (Proposition 3)

The second step in implementing proposition 1 is to quantify or semi-quantify the likelihood and severity of each potential hazard and threat arising out of digital transformation including the use of AI.

Comments for Proposition 3: There are many hurdles to overcome in order to achieve this goal. One is to develop a methodology to compare different kinds of endpoints, such as privacy [] vs. security. Another is to specify the severity of each hazard, since it inevitably involves a value judgment. Narrowing down the likelihood range also often constitutes a trans-scientific problem []. Concrete discussion will be needed for each case based on societal discourse.

Appendix B.4. Junichi Mori

Appendix B.4.1. Proposition 1

As IT systems, including recent artificial intelligence technologies, are rapidly advancing and gradually becoming embedded in our social systems, it is critical to revisit the concept of humanity. Education should provide an appropriate understanding of the recent digital technologies and their potential side effects.

Comments for Proposition 1: As artificial intelligence and its related digital technologies are becoming a part of the fundamental components that constitute our societies, we as human beings might be modulated by the technologies in regard to emotion, faith, or behavior. Moreover, the relationship between humans and machines is certainly changing. It is now quite important to revisit the concept of humanity and to rethink how we should design our own society with regard to human nature, dignity, and ethics instead of the current value system of human-centered principles. Therein, education should play an important role by providing people with an appropriate understanding of recent digital technologies and their potential side effects. Such knowledge, ideas, and resources should be widely available and commonly shared, regardless of one’s educational background and environment.

Appendix B.4.2. Proposition 2

Big data and cutting-edge AI technologies are currently dominated by several IT business entities. With the emerging movement toward open data and science, both governments and industries should promote making data and technologies more available to scientific and public use so that our society becomes decentralized and not dominated by one super-intelligence.

Comments for Proposition 2: A few IT giants currently dominate massive big data, computational power, and talented human resources, a situation that may lead to one super-intelligence and thereby decrease our diversity by centralizing our society. However, it is not feasible to determine the best “goal function” to achieve our sustainable development goals. By accepting the pursuit of the various different goals of a diversity of peoples, our complex and pluralistic society will be able to face and overcome the range of unexpected challenges in the future []. One way to keep our society diverse and decentralized is to commit to a system of open data and scientific strategies. Both governments and industries should promote making data and technologies more available for scientific and public use. And we, as a decentralized system with diversity, should take a more creative approach to solving our complex social issues by coping with the super-intelligence to come.

Appendix B.5. Roland W. Scholz (Propositions on the Perspective: ‘Biophysical, Genetic, and Epigenetic Level of the Digital Transformation’)

Appendix B.5.1. Proposition 1

Human evolution has reached a new stage as a result of (i) the digital, engineering-based manipulation of natural variation at the cellular level (i.e., directed evolution vs. random mutation) and (ii) biocomputers that include living cells.

Comments for Proposition 1: DNA is a genuine (quaternary) digital construct. Thus, genetic and synthetic biological engineering and the unintended side effects (unseens) resulting from GMOs are part of the Digital Revolution. The case of herbicide-resistant, genetically modified crops (e.g., those treated with Monsanto’s Roundup) showed that property rights on digitally genetically modified plants, animals, humans, etc. may induce large-scale economic and social side effects. Science should reflect on similar cases. A further task is to reflect on non-digital DNA-related properties, as genetic defects may be caused by the folding of DNA. Biocomputers [,] fundamentally change the nature of digital environments, as the “decisions” (i.e., the information processing) provided do not depend on the structure and design of the hardware and program but on the mind (i.e., organismic information processing) and state of the cell. Biocomputers call for new knowledge and theories of programming and logic, as well as an understanding of how to utilize these new types of hybrid beings.

Appendix B.5.2. Proposition 2

Environmental epigenetics and proper epidemiological studies should investigate the psychophysical impacts resulting particularly from the excessive use of digital information by sensitive individuals.

Comments for Proposition 2: A critical question related to various forms of excessive digital media use is whether the human biophysical system is phylogenetically prepared for the rapid spread of all forms of digital environments. There is increasing evidence for (i) Internet addiction [] and (ii) the effects of intense Internet use on brain morphology (in a way that is similar to the growth of London’s cab drivers’ hippocampi []). The state-of-the-art knowledge of environmental epigenetics [,] suggests that (iii) the epigenetic effects of intense or excessive exposure to highly demanding visual 3D effects cannot be discounted. In this context, (iv) an analysis of excessive exposure to destructive, aggressive, and sexual content in video gaming may become of particular interest if the neurophysiological processes linked to the interactive and enactive processes are considered.

Appendix B.5.3. Proposition 3

The reflective and reflexive capacity of science regarding the digital transition has to be strengthened.

Comments for Proposition 3: Propositions 1 and 2 identified impacts of digital systems on biophysical, genetic, and epigenetic layers of human systems. We think that the potential, the limits, and the unintended side effects (unseens) related to resilient human–environment systems have to be reflected upon with respect to the following (incomplete) list:

- The limits of a digital conception of DNA and the potential role of analog models (e.g., in the folding of DNA)

- The understanding of the “nature” of biocomputers

- Vulnerabilities of (agro-)ecosystems and other systems with respect to the digital manipulation (e.g., directed evolution) of DNA

- Individual exposure to digital information systems (e.g., Internet addiction, massive virtual information, etc.)

- The power of knowledge about an individual’s DNA and biotechnological engineering by owners of digital knowledge.

Appendix B.6. Hideaki Shiroyama (Propositions on the Perspective: Risk and Resilience Management)

Appendix B.6.1. Proposition 1

The interconnected and interactive nature of risks our society faces will be strengthened in the digital era.

Comments for Proposition 1: Digital technology dramatically increases the interconnectedness of risks, as different components of the infrastructure are highly interconnected in the digital era. In addition, digital technology amplifies the endogenous interactive impact of the intentions of actors, malicious or not, in the security field or in the field of marketing.

Appendix B.6.2. Proposition 2

A resilient governance framework needs to be developed to cope with the interconnected and interactive nature of risks in the digital era.

Comments for Proposition 2: It is impossible to prevent all of the possible risk scenarios in the digital era. However, it is necessary to explore the strategies and approaches for addressing such an interconnected and interactive nature of risks in a resilient manner that responds to the different types of dynamism of risks (the rapidly growing type, the slowly growing type, and the wave type). Strategies and approaches include utilizing redundancy, disaggregation, a flexible phase-based response, and so on.

Appendix B.6.3. Proposition 3

Different levels of necessity and tolerance for redundancy need to be coordinated in the digital era.

Comment on Proposition 3: One way to deal with the interconnected and interactive risk in the digital era is to put redundancy in place. But the level of redundancy required and tolerated differs among sectors. The financial sector in civil use and the military sector require and tolerate higher levels of redundancy, even if they result in high costs. But this is not true in other sectors. Furthermore, those different sectors with diverse levels of necessity for redundancy are connected in the digital era. Thus, the different levels of requirements and expectations need to be coordinated.

Appendix B.7. Masahiro Sugiyama (Propositions on the Perspective: Job Market and Biotech Risks)

Appendix B.7.1. Proposition 1

The effect of AI-induced unemployment will be felt across the globe, including in developing countries. This would create a fundamental problem for many developing countries, which have traditionally relied on manufacturing in the process of transitioning to economic development.

Comment on Proposition 1: The issue of unemployment has been discussed, and the focus has been on developed economies. But this would have global consequences. Full employment and decent work are part of the UN’s Sustainable Development Goal 8.

Appendix B.7.2. Proposition 2

The convergence of digital technology and biotechnology will produce societal challenges that would transcend conventional governance domains.

Comments on Proposition 2: With the rapid progress in biotechnology, scientists have proposed radical ideas, including gene drives, which would alter genes within existing ecosystems. The convergence of digital technology with biotechnology will further accelerate such trends, potentially creating unforeseen new challenges.

Appendix B.7.3. Proposition 3

The rapid development of digital technologies presents a fundamental challenge to the design of transdisciplinary processes, which were traditionally much slower than the pace of digital innovations.

Comments on Proposition 3: As with Moore’s law, a large amount of technological progress in the digital domain is happening at an exponential speed. That is, the speed itself keeps increasing. Deliberations and political processes are usually slow, and there is an inherent difficulty associated with engaging the lay public and stakeholders in discussions about the rapidly changing technological landscape. There is a great need for methodological development (perhaps with some help from digital technologies themselves) for fast—but still meaningful—societal dialogues.

References

- Government of Japan. The 5th Science and Technology Basic Plan; Government of Japan: Tokyo, Japan, 2016.

- Committee on Technology. Preparing for the Future of Artificial Intelligence; Executive Office of the President: Washington, DC, USA, 2016.

- Executive Office of the President. Artificial Intelligence, Automation, and the Economy; Executive Office of the President: Washington, DC, USA, 2016.

- World Economic Forum. The Global Risks Report 2017; World Economic Forum: Geneva, Switzerland, 2017.

- Harriss, L.; Ennis, J. Automation and the Workforce; Parliamentary Office of Science and Technology: London, UK, 2016.

- European Parliamentary Technology Assessment (EPTA). The Future of Labour in the Digital Era: Ubiquitous Computing, Virtual Platforms, and Real-Time Production; European Parliamentary Technology Assessment: Vienna, Austria, 2016.

- Stone, P.; Brooks, R.; Brynjolfsson, E.; Calo, R.; Etzioni, O.; Hager, G.; Hirschberg, J.; Kalyanakrishnan, S.; Kamar, E.; Kraus, S.; et al. Artificial Intelligence and Life in 2030. One Hundred Year Study on Artificial Intelligence: Report of the 2015–2016 Study Panel; Stanford University: Stanford, CA, USA, 2016.

- Tenets|Partnership on Artificial Intelligence to Benefit People and Society. Available online: https://www.partnershiponai.org/tenets/ (accessed on 23 September 2017).

- The IEEE Global Initiative for Ethical Considerations in Artificial Intelligence and Autonomous Systems. Ethically Aligned Design: A Vision for Prioritizing Human Wellbeing with Artificial Intelligence and Autonomous Systems; IEEE: New York, NY, USA, 2016.

- AI Principles—Future of Life Institute. Available online: https://futureoflife.org/ai-principles/ (accessed on 23 September 2017).

- The Conference toward AI Network Society. Draft AI R&D Guidelines for International Discussions; The Conference toward AI Network Society; Ministry of Internal Affairs and Communications: Tokyo, Japan, 2017.

- Japanese Society for Artificial Intelligence. The Japanese Society for Artificial Intelligence Ethical Guidelines; Japanese Society for Artificial Intelligence: Tokyo, Japan, 2017.

- Matsuo, Y. About the Japanese Society for Artificial Intelligence Ethical Guidelines. Available online: http://ai-elsi.org/archives/514 (accessed on 23 September 2017).

- Japanese Society for Artificial Intelligence Ethics Committee Summary Report—Open Discussion: The Japanese Society for Artificial Intelligence (2017/5/24). Available online: http://ai-elsi.org/archives/615 (accessed on 23 September 2017).

- Ema, A. Ethically Aligned Design Dialogue: A Case Practice of Responsible Research and Innovation. Jinko Chino (Artif. Intell.) 2017, 32, 694–700. (In Japanese)

- Advisory Board on Artificial Intelligence and Human Society. Report on Artificial Intelligence and Human Society; Advisory Board on Artificial Intelligence and Human Society: Tokyo, Japan, 2017.

- Acceptable Intelligence with Responsibility. Available online: http://sig-air.org/ (accessed on 23 September 2017).

- Future of Life Institute Benefits and Risks of Artificial Intelligence. Available online: https://futureoflife.org/background/benefits-risks-of-artificial-intelligence/ (accessed on 7 October 2017).

- Chang, J.-H.; Rynhart, G.; Huynh, P. ASEAN in Transformation: How Technology Is Changing Jobs and Enterprises; International Labour Organization: Genève, Switzerland, 2016.

- Owen, R.; Macnaghten, P.; Stilgoe, J. Responsible research and innovation: From science in society to science for society, with society. Sci. Public Policy 2012, 39, 751–760.

- Stilgoe, J.; Owen, R.; Macnaghten, P. Developing a framework for responsible innovation. Res. Policy 2013, 42, 1568–1580.

- European Commission Directorate-General for Research and Innovation. Options for Strengthening Responsible Research and Innovation: Report of the Expert Group on the State of Art in Europe on Responsible Research and Innovation; Publications Office of the European Union: Luxembourg, 2013.

- Von Schomberg, R. (Ed.) Towards Responsible Research and Innovation in the Information and Communication Technologies and Security Technologies Fields; Publications Office of the European Union: Luxembourg, 2011.

- Osawa, H.; Ema, A.; Hattori, H.; Akiya, N.; Kanzaki, N.; Kubo, A.; Koyama, T.; Ichise, R. What is Real Risk and Benefit on Work with Robots? In Proceedings of the Companion of the 2017 ACM/IEEE International Conference on Human-Robot Interaction, HRI ’17, Vienna, Austria, 6–9 March 2017; ACM Press: New York, NY, USA, 2017; pp. 241–242.

- Kajikawa, Y. Research core and framework of sustainability science. Sustain. Sci. 2008, 3, 215–239.

- Laws, D.; Scholz, R.W.; Shiroyama, H.; Susskind, L.; Suzuki, T.; Weber, O. Expert views on sustainability and technology implementation. Int. J. Sustain. Dev. World Ecol. 2004, 11, 247–261.

- Scholz, R. The Normative Dimension in Transdisciplinarity, Transition Management, and Transformation Sciences: New Roles of Science and Universities in Sustainable Transitioning. Sustainability 2017, 9, 991.

- The United Nations General Assembly. Resolution Adopted by the General Assembly on 25 September 2015--Transforming Our World: The 2030 Agenda for Sustainable Development; The United Nations General Assembly: New York, NY, USA, 2015.

- Scholz, R. Transdisciplinary transition processes for adaptation to climate change. In From Climate Change to Social Change: Perspectives on Science-Policy Interactions; Driessen, P.P.J., Leroy, P., Van Vierssen, W., Eds.; International Books: Utrecht, The Netherlands, 2010; pp. 69–94.

- Scholz, R.W.; Steiner, G. The real type and ideal type of transdisciplinary processes: Part I—Theoretical foundations. Sustain. Sci. 2015, 10, 527–544.

- Silver, D.; Huang, A.; Maddison, C.J.; Guez, A.; Sifre, L.; van den Driessche, G.; Schrittwieser, J.; Antonoglou, I.; Panneershelvam, V.; Lanctot, M.; et al. Mastering the game of Go with deep neural networks and tree search. Nature 2016, 529, 484–489.

- Huang, X.; Baker, J.; Reddy, R. A historical perspective of speech recognition. Commun. ACM 2014, 57, 94–103.

- Markoff, J. A Learning Advance in Artificial Intelligence Rivals Human Abilities. The New York Times, 10 December 2015; B3.

- LeCun, Y.; Bengio, Y.; Hinton, G. Deep learning. Nature 2015, 521, 436–444.

- Moore, G.E. Cramming more components onto integrated circuits. Electronics 1965, 38, 114.

- Mayer-Schönberger, V.; Cukier, K. Big Data: A Revolution that Will Transform How We Live, Work, and Think; Houghton Mifflin Harcourt: Boston, MA, USA, 2013; ISBN 0544227751.

- Crane, D. Theoretical models and emerging trends. In Global Culture: Media, Arts, Policy, and Globalization; Crane, D., Kawashima, N., Kawasaki, K., Eds.; Routledge: London, UK, 2002; pp. 1–28.

- Zolfagharifard, E. Would you Take Orders from a ROBOT? An Artificial Intelligence Becomes the World’s First Company Director. Available online: http://www.dailymail.co.uk/sciencetech/article-2632920/Would-orders-ROBOT-Artificial-intelligence-world-s-company-director-Japan.html#ixzz (accessed on 7 October 2017).

- Rahman, K. “We Don’t Hurt Anybody, We Are Just Happy”: Woman Reveals She Has Fallen in Love with a ROBOT and Wants to Marry it. Available online: http://www.dailymail.co.uk/femail/article-4060440/Woman-reveals-love-ROBOT-wants-marry-it.html (accessed on 7 October 2017).

- Extance, A. How DNA could store all the world’s data. Nature 2016, 537, 22–24. [Google Scholar] [CrossRef] [PubMed]

- Scholz, R.W.; Steiner, G. Transdisciplinarity at the crossroads. Sustain. Sci. 2015, 10, 521–526. [Google Scholar] [CrossRef]

- Ema, A.; Akiya, N.; Osawa, H.; Hattori, H.; Oie, S.; Ichise, R.; Kanzaki, N.; Kukita, M.; Saijo, R.; Takushi, O.; et al. Future Relations between Humans and Artificial Intelligence: A Stakeholder Opinion Survey in Japan. IEEE Technol. Soc. Mag. 2016, 35, 68–75. [Google Scholar] [CrossRef]

- Meinrath, S.D.; Losey, J.W.; Pickard, V.W. Digital feudalism: Enclosures and erasures from digital rights management to the digital divide. CommLaw Conspec. 2011, 19, 423–479. [Google Scholar]

- Sornette, D.; Ouillon, G. Dragon-kings: Mechanisms, statistical methods and empirical evidence. Eur. Phys. J. Spec. Top. 2012, 205, 1–26. [Google Scholar] [CrossRef]

- Taleb, N.N. The Black Swan: The Impact of the Highly Improbable; Random House: New York, NY, USA, 2007. [Google Scholar]

- Frey, C.B.; Osborne, M.A. The Future of Employment: How Susceptible Are Jobs to Computerization? Oxford Martin Programme on Technology and Employment: Oxford, UK, 2013.

- Finucane, M.L.; Holup, J.L. Psychosocial and cultural factors affecting the perceived risk of genetically modified food: An overview of the literature. Soc. Sci. Med. 2005, 60, 1603–1612.

- Liang, X.; Ho, S.S.; Brossard, D.; Xenos, M.A.; Scheufele, D.A.; Anderson, A.A.; Hao, X.; He, X. Value predispositions as perceptual filters: Comparing of public attitudes toward nanotechnology in the United States and Singapore. Public Underst. Sci. 2015, 24, 582–600.

- Visschers, V.H.M.; Shi, J.; Siegrist, M.; Arvai, J. Beliefs and values explain international differences in perception of solar radiation management: Insights from a cross-country survey. Clim. Chang. 2017, 142, 531–544.

- Fraune, M.; Kawakami, S.; Šabanović, S.; de Silva, R.; Okada, M. Three’s company, or a crowd: The effects of robot number and behavior on HRI in Japan and the USA. In Robotics: Science and Systems XI; Robotics Science and Systems Foundation: Rome, Italy, 2015.

- Lee, H.R.; Šabanović, S. Culturally variable preferences for robot design and use in South Korea, Turkey, and the United States. In Proceedings of the 2014 ACM/IEEE International Conference on Human-Robot Interaction, HRI ’14, Bielefeld, Germany, 3–6 March 2014; ACM Press: New York, NY, USA, 2014; pp. 17–24.

- Geraci, R.M. Spiritual robots: Religion and our scientific view of the natural world. Theol. Sci. 2006, 4, 229–246.

- Ema, A. Artificial Intelligence and Future Society: Projects and Prospect. In Proceedings of the 29th Annual Conference of the Japanese Society for Artificial Intelligence, Hakodate, Japan, 30 May–2 June 2015. (In Japanese).

- Report: Open Discussion: The Japanese Society for Artificial Intelligence (2017/5/24). Available online: http://ai-elsi.org/archives/628 (accessed on 7 October 2017).

- Civic Debate. Available online: http://ai-initiative.org/ai-consultation/ (accessed on 7 October 2017).

- Mikami, N. Public Participation in Decision-Making on Energy Policy: The Case of the “National Discussion” after the Fukushima Accident. In Lessons from Fukushima; Springer International Publishing: Cham, Switzerland, 2015; pp. 87–122.

- Brundage, M. Artificial Intelligence and Responsible Innovation. In Fundamental Issues of Artificial Intelligence; Müller, V., Ed.; Springer International Publishing: Cham, Switzerland, 2016; pp. 543–554.

- Scheufele, D.A.; Xenos, M.A.; Howell, E.L.; Rose, K.M.; Brossard, D.; Hardy, B.W. U.S. attitudes on human genome editing. Science 2017, 357, 553–554.

- Carr, W.; Preston, C.J.; Yung, L.; Szerszynski, B.; Keith, D.W.; Mercer, A.M. Public engagement on solar radiation management and why it needs to happen now. Clim. Chang. 2013, 121, 567–577.

- Sugiyama, M.; Kosugi, T.; Ishii, A.; Asayama, S. Public Attitudes to Climate Engineering Research and Field Experiments: Preliminary Results of a Web Survey on Students’ Perception in Six Asia-Pacific Countries. Available online: http://pari.u-tokyo.ac.jp/eng/publications/WP16_24.html (accessed on 7 October 2017).

- Rowe, G.; Frewer, L.J. A Typology of Public Engagement Mechanisms. Sci. Technol. Hum. Values 2005, 30, 251–290.

- Nishizawa, M. Citizen deliberations on science and technology and their social environments: Case study on the Japanese consensus conference on GM crops. Sci. Public Policy 2005, 32, 479–489.

- Sugiyama, M.; Asayama, S.; Ishii, A.; Kosugi, T.; Moore, J.C.; Lin, J.; Lefale, P.F.; Burns, W.; Fujiwara, M.; Ghosh, A.; et al. The Asia-Pacific’s role in the emerging solar geoengineering debate. Clim. Chang. 2017, 143, 1–12.

- Sugiyama, M.; Asayama, S.; Kosugi, T.; Ishii, A.; Emori, S.; Adachi, J.; Akimoto, K.; Fujiwara, M.; Hasegawa, T.; Hibi, Y.; et al. Transdisciplinary co-design of scientific research agendas: 40 research questions for socially relevant climate engineering research. Sustain. Sci. 2017, 12, 31–44.

- Kelly, K. The Inevitable: Understanding the 12 Technological Forces that Will Shape Our Future; Penguin: New York, NY, USA, 2016; ISBN 9780525428084.

- Hilbert, M.; Lopez, P. The World’s Technological Capacity to Store, Communicate, and Compute Information. Science 2011, 332, 60–65.

- Schumpeter, J. Business Cycles: A Theoretical, Historical, and Statistical Analysis of the Capitalist Process; McGraw-Hill: New York, NY, USA, 1939.

- Latour, B.; Woolgar, S. Laboratory Life: The Construction of Scientific Facts; Princeton University Press: Princeton, NJ, USA, 1979.

- Ensmenger, N. The Digital Construction of Technology: Rethinking the History of Computers in Society. Technol. Cult. 2012, 53, 753–776.

- Deguchi, H. Learning Dynamics in Platform Externality. In Economics as an Agent-Based Complex System; Springer: Tokyo, Japan, 2004; pp. 219–244.

- Smith, A. The Wealth of Nations: Books 1-3; Penguin Classics: London, UK, 1982.

- Babbage, C. On the Economy of Machinery and Manufactures; Nabu Press: Charleston, SC, USA, 2011.

- Gratton, L. The Shift: The Future of Work is Already Here; HarperCollins Business: London, UK, 2011.

- Rifkin, J. The Zero Marginal Cost Society: The Internet of Things, the Collaborative Commons, and the Eclipse of Capitalism; Griffin: New York, NY, USA, 2015.

- Deguchi, H. Toward Next Generation Social Systems Sciences—From Cross Cultural and Science of Artificial Points of View. In Proceedings of the XVIII ISA World Congress of Sociology, Yokohama, Japan, 13–19 July 2014; Tokyo Institute of Technology: Yokohama, Japan, 2014.

- Giddens, A. The Consequences of Modernity; Polity: Cambridge, UK, 1991.

- Bauman, Z. Liquid Modernity; Polity: Cambridge, UK, 2000.

- Wyatt, S. Non-Users Also Matter: The Construction of Users and Non-Users of the Internet. In How Users Matter: The Co-Construction of Users and Technologies; Oudshoorn, N., Pinch, T.J., Eds.; MIT Press: Cambridge, MA, USA, 2003; pp. 67–79.

- Wagner, I.; Boiten, E. Privacy Risk Assessment: From Art to Science, By Metrics. arXiv, 2017; arXiv:1709.03776.

- Weinberg, A.M. Science and trans-science. Minerva 1972, 10, 209–222.

- Helbing, D.; Frey, B.S.; Gigerenzer, G.; Hafen, E.; Hagner, M.; Hofstetter, Y.; van den Hoven, J.; Zicari, R.V.; Zwitter, A. Will Democracy Survive Big Data and Artificial Intelligence? Available online: https://www.scientificamerican.com/article/will-democracy-survive-big-data-and-artificial-intelligence/ (accessed on 7 October 2017).

- Esau, M.; Rozema, M.; Zhang, T.H.; Zeng, D.; Chiu, S.; Kwan, R.; Moorhouse, C.; Murray, C.; Tseng, N.-T.; Ridgway, D.; et al. Solving a Four-Destination Traveling Salesman Problem Using Escherichia coli Cells As Biocomputers. ACS Synth. Biol. 2014, 3, 972–975.

- Zhirnov, V.V.; Cavin, R.K. Future Microsystems for Information Processing: Limits and Lessons From the Living Systems. IEEE J. Electron Devices Soc. 2013, 1, 29–47.

- Montag, C.; Sindermann, C.; Becker, B.; Panksepp, J. An Affective Neuroscience Framework for the Molecular Study of Internet Addiction. Front. Psychol. 2016, 7, 1906.

- Woollett, K.; Maguire, E.A. Exploring anterograde associative memory in London taxi drivers. NeuroReport 2012, 23, 885–888.

- Bollati, V.; Baccarelli, A. Environmental epigenetics. Heredity 2010, 105, 105–112.

- Jirtle, R.L.; Tyson, F.L. (Eds.) Environmental Epigenomics in Health and Disease: Epigenetics and Disease Origins; Springer: Berlin/Heidelberg, Germany, 2013.

© 2017 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).