Air-Quality Prediction Based on the EMD–IPSO–LSTM Combination Model

Abstract

1. Introduction

2. Materials and Methods

2.1. Principle of EMD

An intrinsic mode function (IMF) must satisfy the following two conditions:

- (1)

- Over the entire time series, the number of extreme points and the number of zero-crossing points must be equal or differ by at most one.

- (2)

- At any point, the mean value of the upper envelope formed by the local maxima and the lower envelope formed by the local minima is zero.

The decomposition procedure is as follows:

- (1)

- Identify all local maxima and local minima of the sequence to be decomposed, $x(t)$, and connect the local maxima and the local minima (usually by cubic spline interpolation) to form the upper envelope $u(t)$ and the lower envelope $v(t)$, respectively.

- (2)

- Compute the mean of the upper and lower envelopes, $m_1(t) = \frac{u(t) + v(t)}{2}$, and subtract it from the sequence to be decomposed to obtain the component $h_1(t)$, i.e., $h_1(t) = x(t) - m_1(t)$.

- (3)

- Determine whether $h_1(t)$ satisfies the IMF conditions. If it does, $h_1(t)$ is the first IMF component. If it does not, $h_1(t)$ is treated as the new sequence to be decomposed and steps (1) and (2) are applied to it; each new component is checked and processed in the same way until the IMF conditions are met. The result is the first IMF component, denoted $c_1(t)$.

- (4)

- Take the remainder $r_1(t) = x(t) - c_1(t)$ as the new sequence to be decomposed and repeat the above steps to obtain $c_2(t), c_3(t), \ldots$, until the component $c_n(t)$ or the residual $r_n(t)$ is smaller than a predetermined value, or the residual becomes a monotonic function. The final result is

  $$x(t) = \sum_{i=1}^{n} c_i(t) + r_n(t)$$

  which completes the decomposition of the original sequence.
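The sifting procedure above can be sketched in a few lines of NumPy. This is a simplified illustration under stated assumptions, not the authors' implementation: the envelopes are piecewise-linear (`np.interp`) rather than the cubic splines of standard EMD, both end points are used as crude envelope knots, the "mean is zero" condition is relaxed to a hypothetical tolerance `mean_tol`, and the caps `max_imfs` and `max_sift` are hypothetical safeguards. All function names are illustrative.

```python
import numpy as np


def local_extrema(x):
    """Indices of interior local maxima and minima of a 1-D array."""
    d = np.diff(x)
    maxima = np.where((d[:-1] > 0) & (d[1:] < 0))[0] + 1
    minima = np.where((d[:-1] < 0) & (d[1:] > 0))[0] + 1
    return maxima, minima


def envelope_mean(x):
    """m(t) = (u(t) + v(t)) / 2 using piecewise-linear envelopes (simplification)."""
    t = np.arange(len(x))
    maxima, minima = local_extrema(x)
    ends = np.array([0, len(x) - 1])
    up = np.union1d(ends, maxima)   # knots of the upper envelope u(t)
    lo = np.union1d(ends, minima)   # knots of the lower envelope v(t)
    u = np.interp(t, up, x[up])
    v = np.interp(t, lo, x[lo])
    return (u + v) / 2.0


def is_imf(h, mean_tol=0.05):
    """Check the two IMF conditions; 'zero mean' is relaxed to a tolerance."""
    maxima, minima = local_extrema(h)
    n_extrema = len(maxima) + len(minima)
    n_zero = np.count_nonzero(h[:-1] * h[1:] < 0)   # strict sign changes
    cond1 = abs(n_extrema - n_zero) <= 1
    cond2 = np.max(np.abs(envelope_mean(h))) <= mean_tol * np.max(np.abs(h))
    return bool(cond1 and cond2)


def emd(x, max_imfs=10, max_sift=100):
    """Return (imfs, residual) such that x = sum(imfs) + residual."""
    x = np.asarray(x, dtype=float)
    imfs, r = [], x.copy()
    for _ in range(max_imfs):
        maxima, minima = local_extrema(r)
        if len(maxima) + len(minima) < 3:   # residual is (near) monotonic: stop
            break
        h = r - envelope_mean(r)            # steps (1)-(2): h = x - m
        for _ in range(max_sift):           # step (3): sift until h is an IMF
            if is_imf(h):
                break
            h = h - envelope_mean(h)
        imfs.append(h)                      # accepted IMF c_i(t)
        r = r - h                           # step (4): new sequence r_i(t)
    return imfs, r
```

Because each IMF is subtracted from the running residual, the components always add back to the input exactly (up to rounding), which is what allows the component-wise predictions to be summed later. A production pipeline would more likely use a dedicated EMD library with spline envelopes and proper end-effect handling.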

2.2. LSTM

- (1)

- The output $h_{t-1}$ of the previous time step and the current input $x_t$ are used as the inputs of the forget gate, whose output $f_t$ is obtained from Equation (1):

  $$f_t = \sigma\left(W_f \cdot [h_{t-1}, x_t] + b_f\right) \tag{1}$$

  where $W_f$ and $b_f$ are the parameters of the forget gate and $\sigma$ is the activation function, typically the sigmoid function, so that $f_t$ ranges between 0 and 1. After the forget gate, the state vector of the LSTM is $f_t \odot C_{t-1}$, where $\odot$ denotes element-wise multiplication.

- (2)

- The output $h_{t-1}$ and the current input $x_t$ are transformed nonlinearly to produce the candidate state vector $\tilde{C}_t$, and the input-gate output $i_t$ controls how much of it is admitted. The specific equations are Equations (2) and (3):

  $$i_t = \sigma\left(W_i \cdot [h_{t-1}, x_t] + b_i\right) \tag{2}$$

  $$\tilde{C}_t = \tanh\left(W_C \cdot [h_{t-1}, x_t] + b_C\right) \tag{3}$$

  where $W_i$ and $b_i$ are the parameters of the input gate, $W_C$ and $b_C$ are the parameters of the candidate state, $\sigma$ is the activation function, and $i_t$, which ranges between 0 and 1, determines how much of $\tilde{C}_t$ is accepted. After the input gate, the state vector of the LSTM is $i_t \odot \tilde{C}_t$.

- (3)

- Update the state vector according to Equation (4):

  $$C_t = f_t \odot C_{t-1} + i_t \odot \tilde{C}_t \tag{4}$$

  where $C_t$ is the new (current) state vector. Because $f_t$ and $i_t$ lie between 0 and 1, they weight how much of the previous state and of the candidate state is retained.

- (4)

- The output $h_{t-1}$ and the current input $x_t$ are used as the inputs of the output gate, whose output $o_t$ is given by Equation (5):

  $$o_t = \sigma\left(W_o \cdot [h_{t-1}, x_t] + b_o\right) \tag{5}$$

  where $W_o$ and $b_o$ are the parameters of the output gate and $\sigma$ is the activation function, typically the sigmoid function, so that $o_t$ ranges between 0 and 1.

- (5)

- Calculate the final output of the LSTM neuron according to Equation (6):

  $$h_t = o_t \odot \tanh\left(C_t\right) \tag{6}$$
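To make the gate computations concrete, the following NumPy sketch performs one forward time step of a single LSTM layer following the standard forget, input, candidate, update, output, and hidden-state equations listed in steps (1) to (5). The dictionary layout of the weights `W` and biases `b` (keys `"f"`, `"i"`, `"c"`, `"o"`) and the function names are hypothetical choices for illustration; frameworks such as Keras store these parameters differently.

```python
import numpy as np


def sigmoid(z):
    """Logistic function used for the gate activations."""
    return 1.0 / (1.0 + np.exp(-z))


def lstm_step(x_t, h_prev, c_prev, W, b):
    """One LSTM time step.

    W[g] has shape (hidden, hidden + inputs) and multiplies [h_{t-1}, x_t];
    b[g] has shape (hidden,), for g in {"f", "i", "c", "o"}.
    """
    z = np.concatenate([h_prev, x_t])      # [h_{t-1}, x_t]
    f_t = sigmoid(W["f"] @ z + b["f"])     # forget gate
    i_t = sigmoid(W["i"] @ z + b["i"])     # input gate
    c_hat = np.tanh(W["c"] @ z + b["c"])   # candidate state
    c_t = f_t * c_prev + i_t * c_hat       # state update
    o_t = sigmoid(W["o"] @ z + b["o"])     # output gate
    h_t = o_t * np.tanh(c_t)               # output of the cell
    return h_t, c_t
```

Feeding a window of four hourly values one step at a time (for example, the past 4 h used in Section 3.2) and keeping the last `h_t` gives the representation a dense output layer would map to the next-hour prediction.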

2.3. IPSO

- (1)

- Improvement of the inertia weight

- (2)

- Improvement of the learning factors
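The two improvements can be illustrated with a small particle swarm optimizer whose inertia weight decreases nonlinearly over the iterations and whose learning factors change with that weight. The exact IPSO formulas are not reproduced here, so the schedules below are hypothetical stand-ins: $w(k) = w_{\min} + (w_{\max} - w_{\min})\,(1 - (k/K)^2)$, a cognitive factor $c_1$ that shrinks as $w$ shrinks, and a social factor $c_2 = c_{\max} + c_{\min} - c_1$ that grows. The parameter names and default bounds are assumptions; only the population size (50) and iteration count (100) come from Section 3.2.

```python
import numpy as np


def ipso_minimize(fitness, lower, upper, n_particles=50, n_iter=100,
                  w_max=0.9, w_min=0.4, c_min=0.5, c_max=2.5, seed=0):
    """Minimize `fitness` over a box with a PSO variant (hypothetical schedules)."""
    rng = np.random.default_rng(seed)
    lower, upper = np.asarray(lower, float), np.asarray(upper, float)
    dim = lower.size
    pos = rng.uniform(lower, upper, size=(n_particles, dim))
    vel = np.zeros_like(pos)
    v_max = 0.2 * (upper - lower)                  # velocity clamp
    pbest = pos.copy()
    pbest_val = np.array([fitness(p) for p in pos])
    gbest = pbest[np.argmin(pbest_val)].copy()
    gbest_val = pbest_val.min()
    for k in range(n_iter):
        # Nonlinearly decreasing inertia weight: w_max at k = 0, close to w_min at the end.
        w = w_min + (w_max - w_min) * (1.0 - (k / n_iter) ** 2)
        # Learning factors tied to w: exploration early, exploitation late.
        ratio = (w - w_min) / (w_max - w_min)
        c1 = c_min + (c_max - c_min) * ratio
        c2 = c_max + c_min - c1
        r1, r2 = rng.random((2, n_particles, dim))
        vel = w * vel + c1 * r1 * (pbest - pos) + c2 * r2 * (gbest - pos)
        vel = np.clip(vel, -v_max, v_max)
        pos = np.clip(pos + vel, lower, upper)
        vals = np.array([fitness(p) for p in pos])
        better = vals < pbest_val                  # update personal bests
        pbest[better] = pos[better]
        pbest_val[better] = vals[better]
        if pbest_val.min() < gbest_val:            # update global best
            gbest_val = pbest_val.min()
            gbest = pbest[np.argmin(pbest_val)].copy()
    return gbest, gbest_val
```

For the neuron-count search described in Section 3.2, `fitness` would round each particle to integers, train the EMD–LSTM model with that many neurons per layer, and return the validation MAE; that training routine is not shown and would dominate the run time.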

2.4. Model Evaluation Metrics
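The comparison tables report MAE, RMSE, MAPE, and R². A minimal sketch of these metrics under their usual definitions is given below; it assumes MAPE is expressed in percent and that no observed AQI value is zero (otherwise MAPE is undefined). The function name `evaluate` is illustrative.

```python
import numpy as np


def evaluate(y_true, y_pred):
    """Standard regression metrics: MAE, RMSE, MAPE (%), and R^2."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    err = y_true - y_pred
    mae = np.mean(np.abs(err))
    rmse = np.sqrt(np.mean(err ** 2))
    mape = 100.0 * np.mean(np.abs(err / y_true))   # assumes y_true has no zeros
    ss_res = np.sum(err ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    r2 = 1.0 - ss_res / ss_tot
    return {"MAE": mae, "RMSE": rmse, "MAPE": mape, "R2": r2}
```

For example, observations `[50, 60, 70, 80]` against predictions `[52, 58, 71, 79]` give an MAE of 1.5 and an R² of 0.98. MAE is also the quantity used as the IPSO fitness in Section 3.2.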

3. Experiments

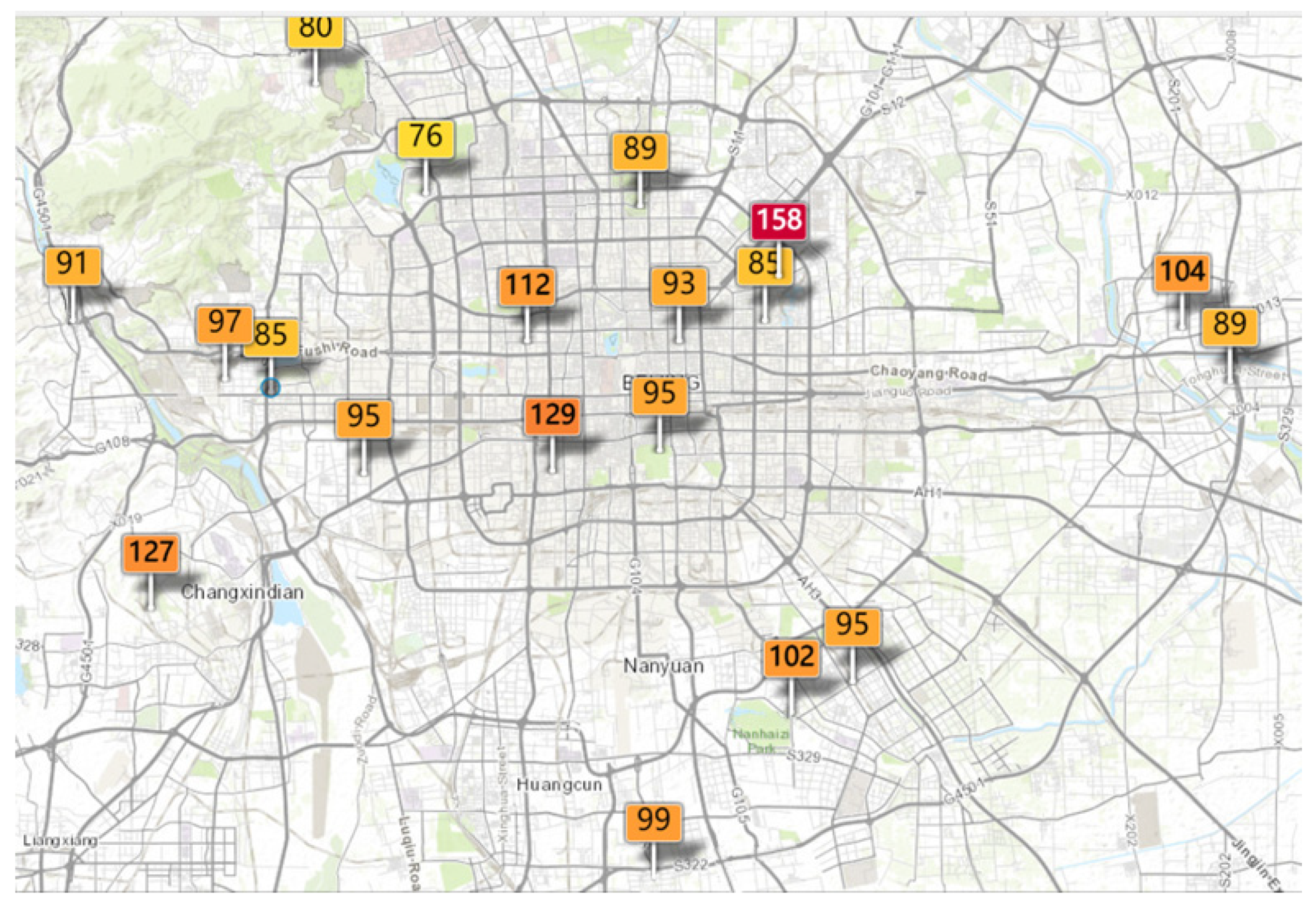

3.1. Data Sources and Preprocessing

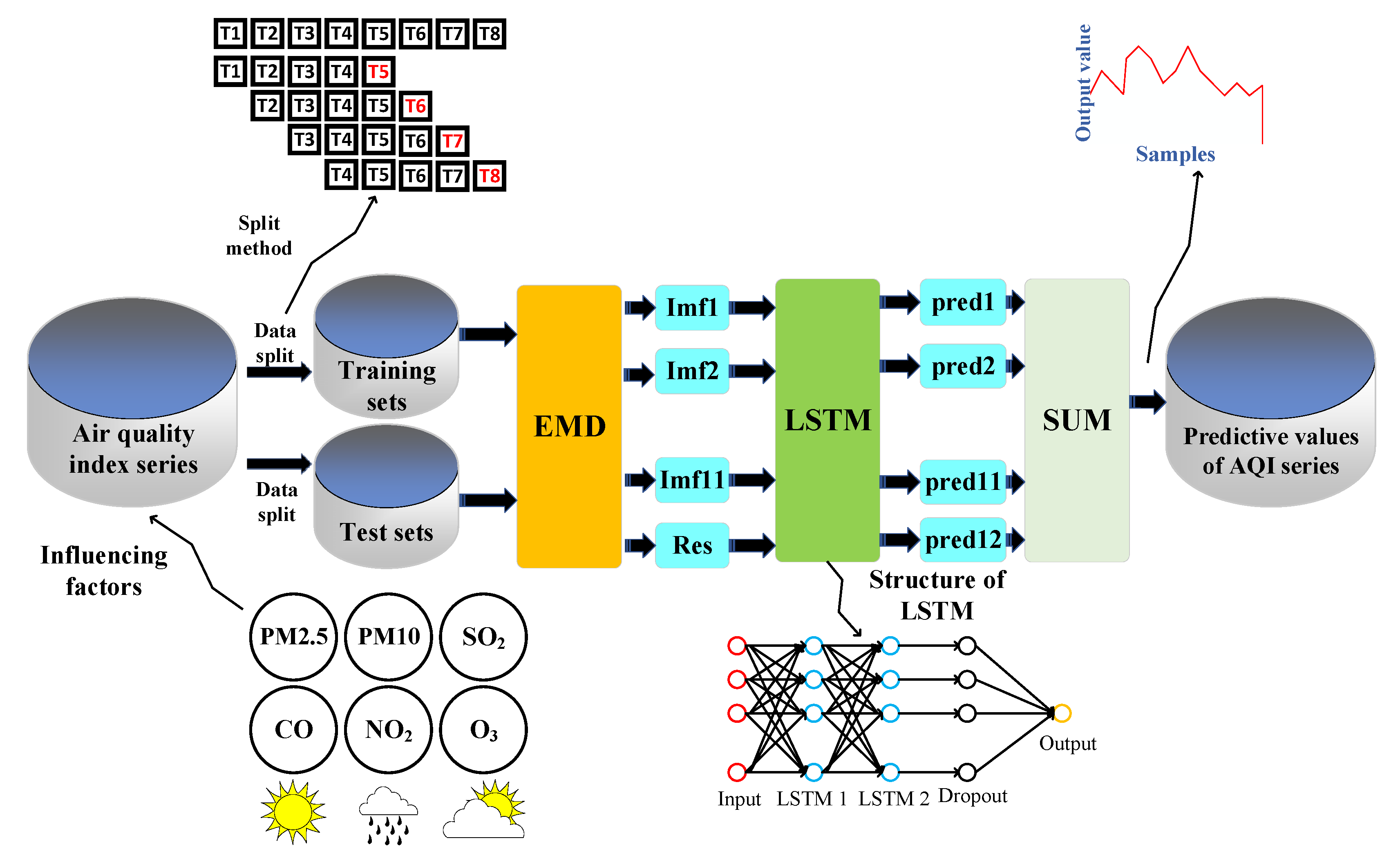

3.2. Predictive Modeling

- (1)

- Normalize the AQI sequence and apply EMD to it to obtain multiple IMF components and a residual (RES) component. Then, 95% of the samples were used as the training set and 5% as the test set, and the data were transformed into a supervised-learning format in which the AQI values of the past 4 h are used to predict the AQI of the next 1 h.

- (2)

- After normalization, the data were converted into the input format required by the LSTM, and the LSTM neural network was built. Because LSTM networks take a long time to train and multi-layer networks are inefficient, a two-layer LSTM was used in this experiment, as it achieved good results with the shortest training time. Table 3 lists the main parameters of the LSTM. The IMF components and the RES component obtained by EMD were then input into the LSTM neural network.

- (3)

- The parameters of the LSTM network were trained over multiple iterations on the training set. After training, the model was used to predict the test set, yielding the predicted values of the IMF component.

- (4)

- Steps (2) and (3) were repeated to obtain the predictions of the remaining IMF components and the RES component.

- (5)

- The predicted values of all IMF components and the RES component were summed, and inverse normalization was applied to obtain the final prediction results.

- (6)

- For IPSO initialization, the population size was set to 50 and the maximum number of iterations to 100. The numbers of neurons in the two hidden layers of the LSTM were taken as the optimization variables, each searched within a preset range. The MAE of the EMD–LSTM network was used as the objective function, i.e., the fitness function of the IPSO algorithm. The IPSO algorithm yielded optimal neuron numbers of L1 = 24 and L2 = 16 for the two LSTM layers. When these hidden-layer sizes were used in EMD–LSTM, the model achieved higher prediction accuracy.
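The data handling in steps (1) and (5) can be sketched as follows: min-max normalization, conversion to supervised pairs with a 4 h look-back and a 1 h horizon, a 95%/5% split, and recombination of the per-component predictions followed by inverse normalization. Min-max scaling and a chronological (unshuffled) split are assumptions, since the normalization method and split order are not specified; the helper names are hypothetical, and the per-component LSTM training is omitted.

```python
import numpy as np


def minmax_scale(x):
    """Scale to [0, 1]; return the scaled series and the bounds needed to invert it."""
    x = np.asarray(x, dtype=float)
    lo, hi = float(x.min()), float(x.max())
    return (x - lo) / (hi - lo), lo, hi


def make_windows(series, lookback=4, horizon=1):
    """X[k] = series[k:k+lookback]; y[k] = value `horizon` steps after the window."""
    n = len(series) - lookback - horizon + 1
    X = np.stack([series[k:k + lookback] for k in range(n)])
    y = np.array([series[k + lookback + horizon - 1] for k in range(n)])
    return X, y


def split(X, y, train_frac=0.95):
    """Chronological split: first 95% for training, last 5% for testing (assumed)."""
    cut = int(len(X) * train_frac)
    return (X[:cut], y[:cut]), (X[cut:], y[cut:])


def recombine(component_preds, lo, hi):
    """Step (5): sum the IMF and RES predictions, then undo min-max scaling."""
    total = np.sum(np.asarray(component_preds, dtype=float), axis=0)
    return total * (hi - lo) + lo
```

In use, the normalized AQI series would be decomposed with EMD, `make_windows` and `split` would be applied to each IMF and to the RES component, one LSTM would be trained per component, and `recombine` would map the summed test predictions back to AQI units. Summing before inverse normalization is valid because EMD components add back to the normalized series.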

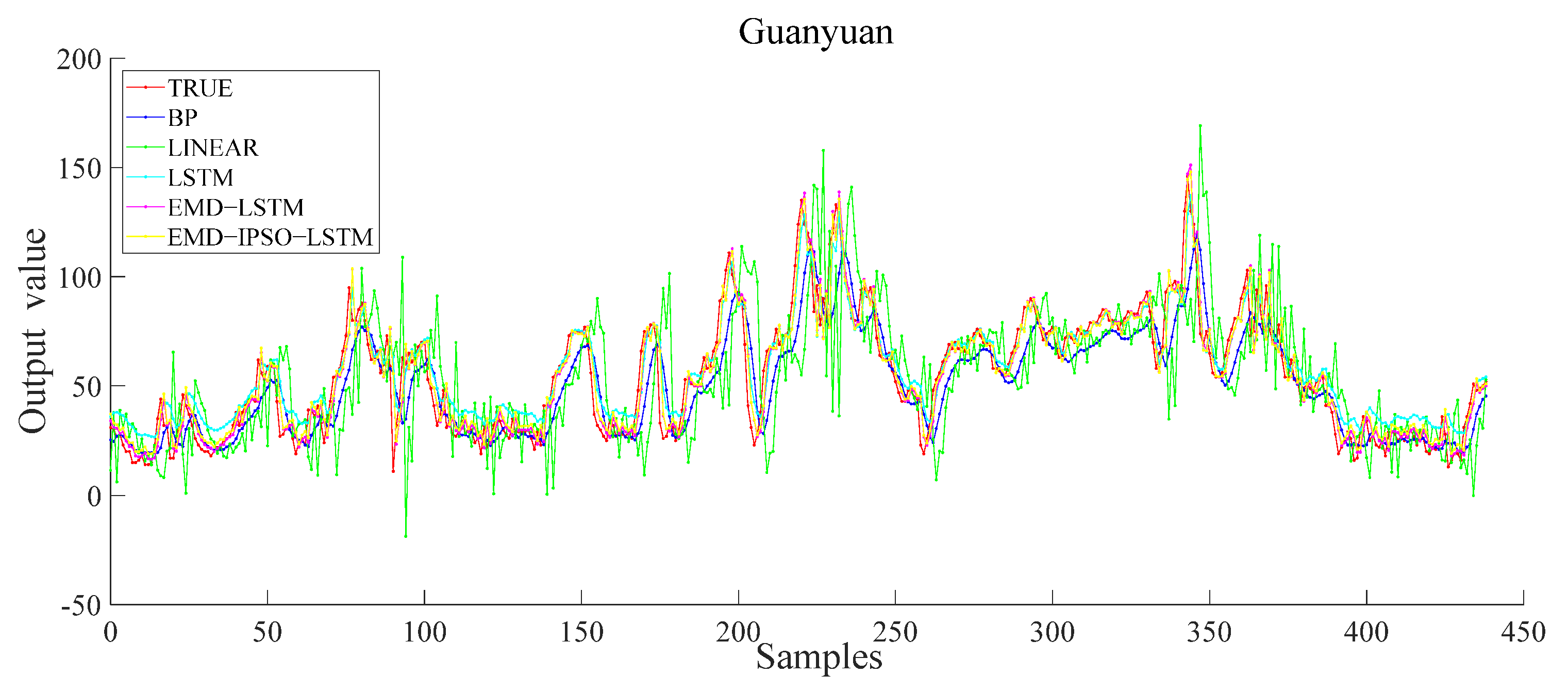

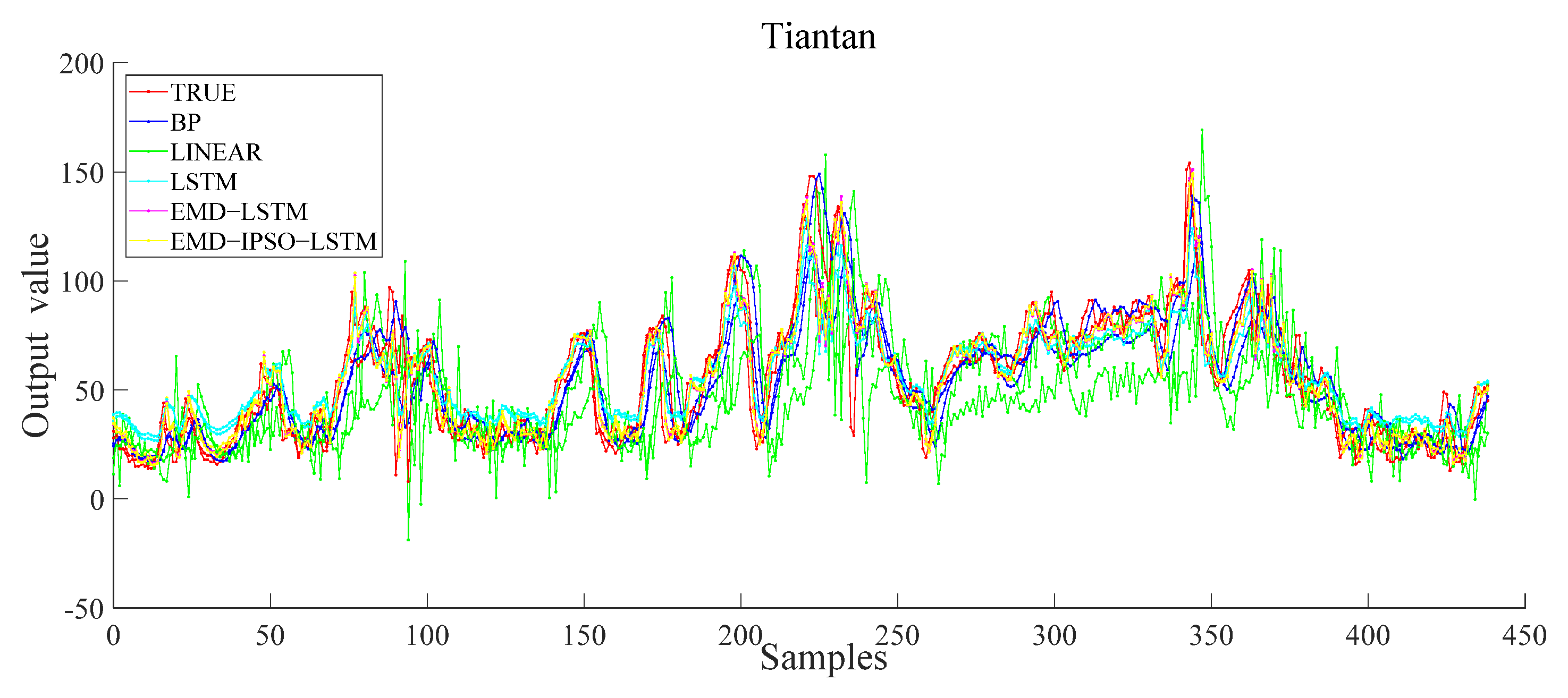

4. Results and Discussion

5. Conclusions

- (1)

- Decomposing the data into multiple components of different frequencies with EMD and feeding them into the LSTM model effectively improved the accuracy of AQI prediction.

- (2)

- The number of neurons in the LSTM hidden layers is often determined empirically. Here, the PSO algorithm (in its improved form, IPSO) was used to optimize it, and the optimal number of neurons in each layer was obtained.

- (3)

- Because standard PSO converges slowly and easily becomes trapped in local optima, a nonlinearly decreasing inertia weight and learning factors that vary with the inertia weight were proposed. These changes reduced the optimization time and accelerated convergence toward the global optimum.

- (4)

- Comparative experiments showed that the proposed EMD–IPSO–LSTM hybrid model had the best prediction performance at all three sites, with the lowest MAE (4.02 to 4.42) and the highest R² (0.96 to 0.97), and its predicted values closely fitted the true values. These findings indicate that the proposed hybrid method is effective for AQI prediction and has practical application value.

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| AQI | Air Quality Level | Representative Color |
|---|---|---|
| 0~50 | Excellent | Green |
| 51~100 | Good | Yellow |
| 101~150 | Light pollution | Orange |
| 151~200 | Moderate pollution | Red |
| 201~300 | Severe pollution | Purple |
| 301~500 | Serious pollution | Maroon |
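The AQI bands in the table above map directly onto a lookup, which is how a predicted AQI value would be turned into an air-quality level. The sketch treats each listed upper bound as inclusive and rejects values outside 0 to 500; the names `AQI_BANDS` and `aqi_level` are hypothetical.

```python
import bisect
from typing import Tuple

# (inclusive upper bound, level, color) for each band in the AQI table.
AQI_BANDS = [
    (50, "Excellent", "Green"),
    (100, "Good", "Yellow"),
    (150, "Light pollution", "Orange"),
    (200, "Moderate pollution", "Red"),
    (300, "Severe pollution", "Purple"),
    (500, "Serious pollution", "Maroon"),
]
_UPPER = [upper for upper, _, _ in AQI_BANDS]


def aqi_level(aqi: float) -> Tuple[str, str]:
    """Return (level, color) for an AQI value in [0, 500]."""
    if not 0 <= aqi <= 500:
        raise ValueError("AQI outside the 0-500 range covered by the table")
    i = bisect.bisect_left(_UPPER, aqi)   # first band whose upper bound >= aqi
    _, level, color = AQI_BANDS[i]
    return level, color
```

For instance, the first hourly AQI of 58 in the sample data falls in the "Good" (yellow) band.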

| Date | Hour | AQI | PM2.5 | PM10 | SO2 | NO2 | O3 | CO |
|---|---|---|---|---|---|---|---|---|
| 1 January 2020 | 0 | 58 | 37 | 66 | 6 | 62 | 2 | 0.9 |
| 1 January 2020 | 1 | 52 | 34 | 53 | 3 | 55 | 2 | 0.9 |
| 1 January 2020 | 2 | 41 | 28 | 41 | 3 | 51 | 2 | 0.7 |
| … | … | … | … | … | … | … | … | … |
| … | … | … | … | … | … | … | … | … |
| … | … | … | … | … | … | … | … | … |
| 31 December 2020 | 21 | 51 | 24 | 51 | 3 | 56 | 4 | 0.4 |
| 31 December 2020 | 22 | 47 | 22 | 47 | 3 | 48 | 9 | 0.4 |
| 31 December 2020 | 23 | 46 | 21 | 46 | 3 | 55 | 4 | 0.4 |

| Parameter | Interpretation | Value |
|---|---|---|
| Batch_size | Number of samples per training batch | 32 |
| Lr | Learning rate | 0.01 |
| Optimizer | Optimization algorithm | Adam |
| Epochs | Number of training epochs | 50 |
| Loss | Loss function | MSE |
| Activation | Activation function | Tanh |

| Site | Model | MAE | RMSE | MAPE (%) | R² |
|---|---|---|---|---|---|
| DONGSI | BP | 11.02 | 14.15 | 22.64 | 0.71 |
| | LR | 17.11 | 23.22 | 32.37 | 0.32 |
| | LSTM | 7.62 | 10.21 | 22.87 | 0.85 |
| | EMD–LSTM | 6.04 | 7.46 | 14.13 | 0.89 |
| | EMD–IPSO–LSTM | 4.02 | 7.11 | 8.07 | 0.97 |
| GUANYUAN | BP | 10.11 | 13.25 | 21.02 | 0.73 |
| | LR | 19.35 | 26.25 | 42.11 | 0.13 |
| | LSTM | 7.65 | 10.25 | 22.85 | 0.85 |
| | EMD–LSTM | 6.05 | 8.32 | 14.21 | 0.89 |
| | EMD–IPSO–LSTM | 4.05 | 6.25 | 8.05 | 0.97 |
| TIANTAN | BP | 8.65 | 13.02 | 20.53 | 0.78 |
| | LR | 20.95 | 27.90 | 37.62 | 0.11 |
| | LSTM | 8.87 | 11.21 | 25.12 | 0.81 |
| | EMD–LSTM | 6.05 | 9.43 | 14.25 | 0.89 |
| | EMD–IPSO–LSTM | 4.42 | 9.12 | 10.05 | 0.96 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Huang, Y.; Yu, J.; Dai, X.; Huang, Z.; Li, Y. Air-Quality Prediction Based on the EMD–IPSO–LSTM Combination Model. Sustainability 2022, 14, 4889. https://doi.org/10.3390/su14094889