Motivating Users to Manage Privacy Concerns in Cyber-Physical Settings—A Design Science Approach Considering Self-Determination Theory

Abstract

1. Introduction

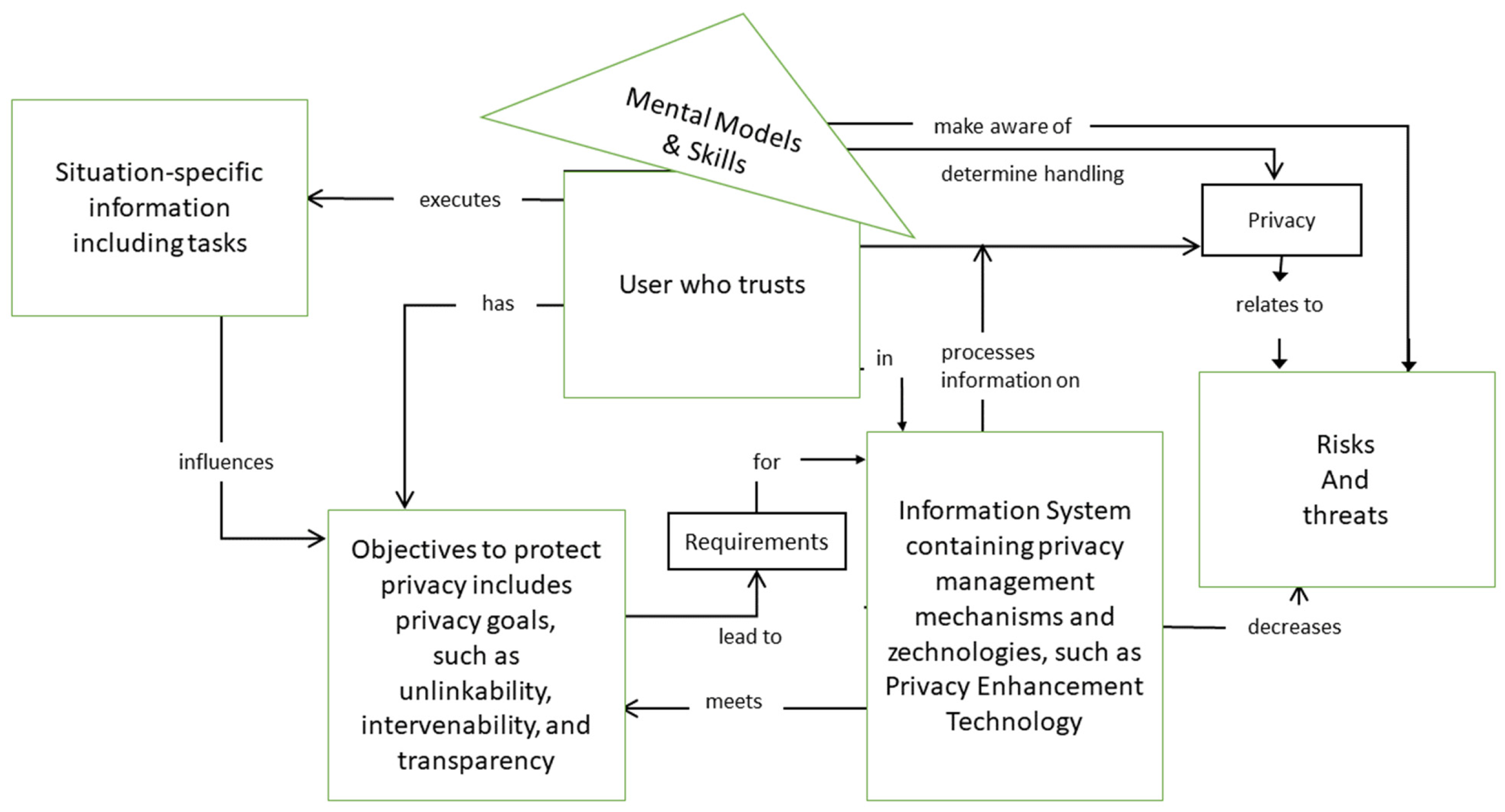

2. Towards User-Centric Privacy Management

2.1. The Shift from Techno- to User-Centric Privacy Awareness

- Encryption: Data are encrypted between the communicating parties, e.g., the user as sender and an IoT device provider as receiver, assuming the receiver is a trusted party.

- Dummy Request: This mechanism adds some effort to communication on the user side: some fake requests are sent in addition to the actual ones to mislead parties aiming to intrude on the user’s privacy.

- Obfuscation: In this case, some noise is added to data or other changes are made, such as complementing information or decomposing messages, in order to conceal the location within a certain area and hinder its recognition through data changes.

- Cooperation: This technique hides a single request by merging it with a group of other requests that are sent by other users from a certain region, without a need to communicate with the receiver. When all requests are sent at once, the identity can be hidden within the group of cooperating users.

- Trusted Third Parties (TTP): An intermediate component, e.g., server system, is used to hide the identity of users when communicating further; again, assuming this intermediate component can be trusted with respect to preserving privacy.

- Private Information Retrieval (PIR): This technique hides the actual request in a large amount of information. Much more information is requested than originally required by a sender.

- Location awareness data enable tracking and thus disclose the location of a person or of a personal component to others.

- Identity information is collected when data refer to their owner, so any malicious party could intercept it.

- Profile information about individuals is compiled to infer interests by correlation with other user profiles and exchanged data.

- Linkage occurs in the background, when a provider or architecture component puts different system components and user activities into mutual context.

- Exchanged data concern data exchanged between IoT components due to their connectivity. As the data can be assigned to persons due to the roles of ‘sender’ and ‘receiver’, they can be attained and shared. Privacy-relevant information refers to these data.
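Two of the mechanisms listed above, obfuscation and dummy requests, can be illustrated with a minimal Python sketch. It is not taken from the article: the function names, the uniform noise model, the request strings, and the coordinates are assumptions chosen only for illustration, and a real deployment would need a carefully analyzed noise distribution and dummy-generation strategy.

```python
import random


def obfuscate_location(lat, lon, radius_deg, rng):
    """Obfuscation (illustrative): shift each coordinate by uniform noise
    of at most radius_deg, so the true position is concealed within an area."""
    return (
        lat + rng.uniform(-radius_deg, radius_deg),
        lon + rng.uniform(-radius_deg, radius_deg),
    )


def with_dummy_requests(real_request, dummy_pool, n_dummies, rng):
    """Dummy requests (illustrative): mix the real request with n_dummies
    fake ones drawn from dummy_pool and shuffle the resulting batch."""
    batch = [real_request] + rng.sample(dummy_pool, n_dummies)
    rng.shuffle(batch)
    return batch


# Hypothetical example values; none of them come from the article.
rng = random.Random(42)
true_position = (48.30, 14.29)
noisy_position = obfuscate_location(*true_position, radius_deg=0.01, rng=rng)

real = "weather?city=A"
pool = ["weather?city=B", "weather?city=C", "weather?city=D"]
batch = with_dummy_requests(real, pool, n_dummies=2, rng=rng)
```

In this sketch the receiver sees three requests and a blurred position, which shows the additional communication effort on the user side that the dummy-request mechanism implies.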

2.2. User Engagement and Self-Determination

- Unlinkability, in order to ensure that personal data cannot be elicited nor processed, nor used for purposes other than those explicitly specified.

- Intervenability, in order to enable all concerned people to have control through system access, and thus to enforce their legal rights accordingly.

- System transparency, concerning the processing of personally identifiable information, in a verifiable and assessable way.

2.3. Facilitating User Engagement

- Transparency on data level and inferences for users to achieve their informed consent;

- Preference specification on privacy as inherent utility function of IoT applications;

- Availability of context information to support decision-making on (i) and (ii);

- Control in terms of privacy options setting and monitoring throughout runtime.
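As a rough illustration of how preference specification, runtime control, and monitoring from the list above could fit together, consider the following Python sketch. The class `PrivacyPreferences`, its methods, the category names, and the deny-by-default rule are hypothetical and are not part of the article; they only make the requirements concrete.

```python
from dataclasses import dataclass, field


@dataclass
class PrivacyPreferences:
    """Hypothetical per-user record of which data categories may be shared."""

    allowed: dict = field(default_factory=dict)  # category -> bool
    log: list = field(default_factory=list)      # trail of sharing decisions

    def set_option(self, category, allow):
        """Control: the user changes a privacy option at runtime."""
        self.allowed[category] = allow

    def may_share(self, category, recipient):
        """Decide on a sharing request and record the decision so that
        the user can monitor it later. Unknown categories are denied
        (an assumption of this sketch, not a claim of the article)."""
        decision = self.allowed.get(category, False)
        self.log.append((category, recipient, decision))
        return decision


prefs = PrivacyPreferences()
prefs.set_option("location", True)
first = prefs.may_share("location", "provider-a")    # allowed by the user
prefs.set_option("location", False)                  # user revokes at runtime
second = prefs.may_share("location", "provider-a")   # now denied
third = prefs.may_share("heart_rate", "provider-b")  # never specified: denied
```

The `log` list stands in for the transparency and monitoring requirements: every decision remains inspectable by the user after the fact.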

3. Self-Determination Theory

3.1. Intrinsic Versus Extrinsic Motivation and the Question of Self-Determination

“The term extrinsic motivation refers to the performance of an activity in order to attain some separable outcome, and thus, contrasts with intrinsic motivation” [10].

- External Regulation is the least autonomous form of extrinsic motivation and is therefore placed directly after amotivation. This subtype represents the behavior people perform to receive a reward or to avoid negative consequences such as punishments [11]. Hence, external regulation corresponds to “the type of motivation focused on by operant theorists” [10].

- Introjection represents the second non-autonomous subtype of extrinsic motivation, although the underlying values have been “partially internalized” [11]. The “behavior is regulated by the internal reward of self-esteem for success and by avoidance of anxiety, shame or guilt for failure” [11]. Thus, people experience an internal pressure to act without identifying with the underlying value, nor do they “accept it as his or her own” [36].

- Identification is a more self-regulated or autonomous form of extrinsic motivation [11]. “Here, the person has identified with the personal importance of a behavior and has thus accepted its regulation as his or her own” [31]. Hence, people have internalized the underlying values and perceive the behavior as somewhat self-determined [11].

- Integration describes the most autonomous or self-regulated subtype of extrinsic motivation [11]. In contrast to identification, a “person not only recognizes or identifies with the value of the activity, but also finds it to be congruent with other core interests and values” [11]. Such autonomous extrinsic motivation shares with intrinsic motivation perceived self-determination [11] and high-quality performance [31].

3.2. Basic Needs

“[…] in no way does the idea of self-governance imply, either logically or practically, that people’s behavior is determined independently of influences from the social environment […]. We know of no real-world circumstances in which people’s behavior is totally independent of external influences, but, even if there were, that is not the critical issue in whether the people’s behavior is autonomous. Autonomy concerns the extent to which people authentically or genuinely concur with the forces that do influence their behavior” [40].

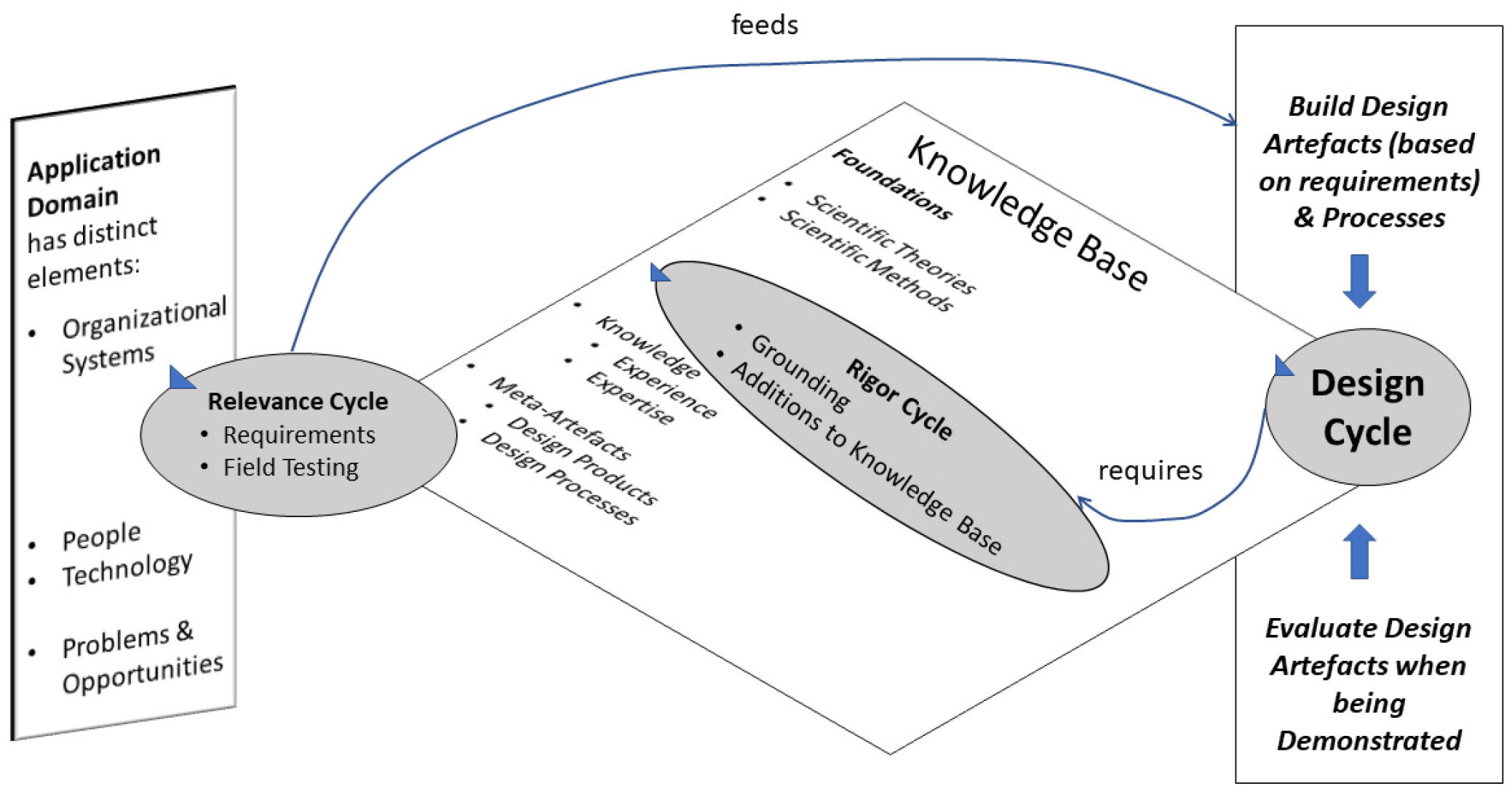

4. Towards Self-Determined Privacy Management

4.1. Framing SDT-Based Development

4.2. SDT-Informed Development Steps

4.3. Appropriation of SDT-Instruments in Development Context

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Banafa, A. Three Major Challenges Facing IoT, IEEE IoT Newsletter. 2017. Available online: https://iot.ieee.org/newsletter/march2017/three-major-challenges-facing-iot.html (accessed on 26 October 2021).

- Dhotre, P.S.; Olesen, H.; Khajuria, S. User privacy and empowerment: Trends, challenges, and opportunities. In Intelligent Computing and Information and Communication; Advances in Intelligent Systems and Computing; Bhalla, S., Bhateja, V., Chandavale, A., Hiwale, A., Satapathy, S., Eds.; Springer: Singapore, 2018; Volume 673, pp. 291–304.

- Laurent, M.; Leneutre, J.; Chabridon, S.; Laaouane, I. Authenticated and privacy-preserving consent management in the Internet of Things. Proc. Comput. Sci. 2019, 151, 256–263.

- Hengstschläger, J.; Leeb, D. Grundrechte; Manz Publishing House: Vienna, Austria, 2012.

- Friedewald, M. A new concept for privacy in the light of emerging sciences and technologies. TATuP-Z. Tech. Theor. Prax. 2010, 19, 71–74.

- Poletti, D. IoT and Privacy. In Privacy and Data Protection in Software Services; Senigaglia, R., Orti, C., Bernes, A., Eds.; Springer: Singapore, 2022; pp. 185–275.

- Flinn, S.; Lumsden, J. User Perceptions of Privacy and Security on the Web, 2005, In PST. Available online: https://citeseerx.ist.psu.edu/viewdoc/download?doi=10.1.1.60.9160&rep=rep1&type=pdf (accessed on 26 October 2021).

- Janssen, H.; Cobbe, J.; Singh, J. Personal Information Management Systems: A User-Centric Privacy Utopia? Internet Policy Rev. 2020, 9, 1–25.

- Princi, E.; Krämer, N.C. Out of control–privacy calculus and the effect of perceived control and moral considerations on the usage of IoT healthcare devices. Front. Psychol. 2020, 11, 582054.

- Ryan, R.M.; Deci, E.L. Self-determination theory and the facilitation of intrinsic motivation, social development, and well-being. Am. Psychol. 2000, 55, 68–78.

- Ryan, R.M.; Deci, E.L. Intrinsic and extrinsic motivation from a self-determination theory perspective: Definitions, theory, practices, and future directions. Contemp. Educ. Psychol. 2020, 61, 101860.

- Li, Y.; Chang, K.C.; Wang, J. Self-determination and perceived information control in cloud storage service. J. Comput. Inf. Syst. 2020, 60, 113–123.

- Hevner, A. A Three Cycle View of Design Science Research. Scand. J. Inf. Syst. 2007, 19, 87–92.

- Almutairi, M.M.; Abi Sen, A.A.; Yamin, M. Survey of PIR Approach and its Techniques for Preserving Privacy in IoT. In Proceedings of the 2021 8th International Conference on Computing for Sustainable Global Development (INDIACom), New Delhi, India, 17–19 March 2021; pp. 417–421.

- Ziegeldorf, J.H.; Morchon, O.G.; Wehrle, K. Privacy in the Internet of Things: Threats and challenges. Secur. Commun. Netw. 2014, 7, 2728–2742.

- Skarmeta, A.; Hernández-Ramos, J.L.; Martinez, J.A. User-centric privacy. In Internet of Things Security and Data Protection; Springer: Cham, Switzerland, 2019; pp. 191–209.

- Kounoudes, A.D.; Kapitsaki, G.M. A mapping of IoT user-centric privacy preserving approaches to the GDPR. Internet Things 2020, 11, 100179.

- Wachter, S. Normative challenges of identification in the Internet of Things: Privacy, profiling, discrimination, and the GDPR. Comput. Law Secur. Rev. 2018, 34, 436–449.

- Ayed, G.B. Architecting User-Centric Privacy-as-a-Set-of-Services: Digital Identity-Related Privacy Framework; Springer Theses; Springer: Cham, Switzerland, 2014.

- Tesfay, W.B.; Nastouli, D.; Stamatiou, Y.C.; Serna, J.M. pQUANT: A User-Centered Privacy Risk Analysis Framework. In International Conference on Risks and Security of Internet and Systems; Springer: Cham, Switzerland, 2019; pp. 3–16.

- Feth, D.; Maier, A.; Polst, S. A user-centered model for usable security and privacy. In International Conference on Human Aspects of Information Security, Privacy, and Trust; Springer: Cham, Switzerland, 2017; pp. 74–89.

- Marky, K.; Voit, A.; Stöver, A.; Kunze, K.; Schröder, S.; Mühlhäuser, M. I Don’t Know How to Protect Myself: Understanding Privacy Perceptions Resulting from the Presence of Bystanders in Smart Environments. In NordiCHI ′20: Proceedings of the 11th Nordic Conference on Human-Computer Interaction: Shaping Experiences, Shaping Society, Tallinn, Estonia, 25–29 October 2020; Association for Computing Machinery: New York, NY, USA, 2020; pp. 1–11.

- Knijnenburg, B.P.; Kobsa, A. Taking control of household IoT device privacy. In CCC Sociotechnical Cybersecurity Workshop; 2016; Available online: https://www.ics.uci.edu/~kobsa/papers/2016-CCC-Kobsa.pdf (accessed on 26 October 2021).

- Bracamonte, V.; Tesfay, W.B.; Kiyomoto, S. Towards Exploring User Perception of a Privacy Sensitive Information Detection Tool. In Proceedings of the 7th International Conference on Information Systems Security and Privacy (ICISSP 2021), Online, 11–13 February 2021; Scitepress: Setúbal, Portugal, 2021; pp. 628–634.

- Pape, S.; Harborth, D.; Kröger, J.L. Privacy Concerns Go Hand in Hand With Lack of Knowledge: The Case of the German Corona-Warn-App. Proceedings of IFIP International Conference on ICT Systems Security and Privacy Protection, SEC 2021, Oslo, Norway, 22–24 June 2021; Jøsang, A., Futcher, L., Hagen, J., Eds.; Springer: Cham, Switzerland, 2021.

- Padyab, A.; Kävrestad, J. Perceived Privacy Problems within Digital Contact Tracing: A Study Among Swedish Citizens. In ICT Systems Security and Privacy Protection, Proceedings of IFIP International Conference on ICT Systems Security and Privacy Protection, SEC 2021, Oslo, Norway, 22–24 June 2021; Jøsang, A., Futcher, L., Hagen, J., Eds.; Springer: Cham, Switzerland, 2021; Volume 625, pp. 270–283.

- Shadbolt, N.; O’Hara, K.; De Roure, D.; Hall, W. Privacy, trust and ethical issues. In The Theory and Practice of Social Machines. Lecture Notes in Social Networks; Springer International Publishing: Cham, Switzerland, 2019; pp. 149–200.

- Michael, J.; Koschmider, A.; Mannhardt, F. Process Mining System Design for IoT, CAiSE Forum; LNBIP 350; Baracaldo, N., Rum, B., Cappiello, C., Ruiz, M., Eds.; Springer: Cham, Switzerland, 2019; pp. 194–206.

- Stöver, A.; Kretschmer, F.; Cornel, C.; Marky, K. Work in progress: How I met my privacy assistant—A user-centric workshop. In Mensch und Computer 2020—Workshopband; Hansen, C., Nürnberger, A., Preim, B., Eds.; Gesellschaft für Informatik e.V.: Bonn, Germany, 2020.

- Vansteenkiste, M.; Niemiec, C.P.; Soenens, B. The development of the five mini-theories of self-determination theory: An historical overview, emerging trends, and future directions. In The Decade Ahead: Theoretical Perspectives on Motivation and Achievement; Emerald Group Publishing Limited: Bingley, UK, 2010.

- Ryan, R.M.; Deci, E.L. Intrinsic and Extrinsic Motivations: Classic Definitions and New Directions. Contemp. Educ. Psychol. 2000, 25, 54–67.

- Deci, E.L.; Ryan, R. Intrinsic Motivation and Self-Determination in Human Behavior; Plenum: New York, NY, USA, 1985.

- Ryan, R.M.; Deci, E.L. Self-Determination Theory: Basic Psychological Needs in Motivation, Development, and Wellness; Guilford Publications: New York, NY, USA, 2017.

- Taylor, G.; Jungert, T.; Mageau, G.A.; Schattke, K.; Dedic, H.; Rosenfield, S.; Koestner, R. A self-determination theory approach to predicting school achievement over time: The unique role of intrinsic motivation. Contemp. Educ. Psychol. 2014, 39, 342–358.

- Deci, E.L.; Ryan, R.M. The support of autonomy and the control of behavior. J. Personal. Soc. Psychol. 1987, 53, 1024.

- Deci, E.L.; Eghrari, H.; Patrick, B.C.; Leone, D.R. Facilitating internalization: The self-determination theory perspective. J. Personal. 1994, 62, 119–142.

- Moller, A.C.; Deci, E.L.; Ryan, R.M. Choice and ego-depletion: The moderating role of autonomy. Personal. Soc. Psychol. Bull. 2006, 32, 1024–1036.

- Chen, B.; Vansteenkiste, M.; Beyers, W.; Boone, L.; Deci, E.L.; Van der Kaap-Deeder, J.; Duriez, B.; Lens, W.; Matos, L.; Mouratidis, A. Basic psychological need satisfaction, need frustration, and need strength across four cultures. Motiv. Emot. 2015, 39, 216–236.

- Deci, E.L.; Ryan, R.M. Overview of self-determination theory: An organismic dialectical perspective. Handb. Self-Determ. Res. 2002, 2, 3–33.

- Ryan, R.M.; Deci, E.L. The darker and brighter sides of human existence: Basic psychological needs as a unifying concept. Psychol. Inq. 2000, 11, 319–338.

- Ryan, R.M.; Mims, V.; Koestner, R. Relation of reward contingency and interpersonal context to intrinsic motivation: A review and test using cognitive evaluation theory. J. Personal. Soc. Psychol. 1983, 45, 736.

- Liu, Y. From data flows to privacy issues: A user-centric semantic model for representing and discovering privacy issues. In Proceedings of the 53rd Hawaii International Conference on System Sciences, Honolulu, HI, USA, 7–10 January 2020; University of Hawaii: Honolulu, HI, USA. Available online: http://hdl.handle.net/10125/64541 (accessed on 1 November 2021).

- Steinfeld, N. “I agree to the terms and conditions”: (How) do users read privacy policies online? An eye-tracking experiment. Comput. Hum. Behav. 2016, 55, 992–1000.

- Mohanty, S.P. Healthcare cyber-physical system is more important than before. IEEE Consum. Electron. Mag. 2020, 9, 6–7.

- Peffers, K.; Tuunanen, T.; Rothenberger, M.A.; Chatterjee, S. A Design Science Research Methodology for Information Systems Research. J. Manag. Inf. Syst. 2007, 24, 45–77.

- Rashid, U.; Schmidtke, H.; Woo, W. Managing disclosure of personal health information in smart home healthcare. In International Conference on Universal Access in Human-Computer Interaction; Springer: Berlin/Heidelberg, Germany, 2007; pp. 188–197.

- Masur, P.K. How online privacy literacy supports self-data protection and self-determination in the age of information. Media Commun. 2020, 8, 258–269.

- Stary, C.; Kaar, C. Design-Integrated IoT Capacity Building using Tangible Building Blocks. In Proceedings of the 2020 IEEE 20th International Conference on Advanced Learning Technologies (ICALT), Tartu, Estonia, 6–9 July 2020; pp. 185–187.

- Stary, C.; Kaar, C.; Jahn, M. Featuring dual learning experiences in tangible CPS education: A synchronized internet-of-things–digital-twin system. In Companion of the 2021 ACM SIGCHI Symposium on Engineering Interactive Computing Systems; ACM: New York, NY, USA, 2021; pp. 56–62.

- Asikis, T.; Pournaras, E. Optimization of privacy-utility trade-offs under informational self-determination. Future Gener. Comput. Syst. 2020, 109, 488–499.

- Ruff, C.; Horch, A.; Benthien, B.; Loh, W.; Orlowski, A. DAMA–A transparent meta-assistant for data self-determination in smart environments. In Open Identity Summit 2021, Lecture Notes in Informatics (LNI); Roßnagel, H., Schunck, C.H., Mödersheim, S., Eds.; Gesellschaft für Informatik: Bonn, Germany, 2021; pp. 119–130.

| Privacy Management Requirement (rows) / SDT-Addressed User Needs (columns) | Competence | Autonomy | Relatedness |
|---|---|---|---|
| Transparency on data level and inferences to provide informed consent | Through intelligible access options, users are able to recognize which privacy-relevant data are collected and processed, and to acknowledge sharing those data. Users are able to articulate a need for (additional) capacity building to provide informed consent. | It is transparent to each user which entity generates and processes privacy-relevant data and how generation and processing can be influenced; therefore, each user has the choice to intervene in providing consent. | Users perceive respect when having access to this information for providing informed consent. |
| Preference specification features on privacy | Users feel qualified to express their privacy preferences to influence system behavior. | Users have the access rights to edit their preferences on sharing privacy-relevant information. | Users perceive recognition of their needs when having the opportunity to provide their privacy preferences for system adaptation. |
| Context information for informed decision-making | The provided context information puts users in a position to make informed decisions. | Users can decide whether to utilize context information for informed decision-making. | Users can share context information with others for informed decision-making. |
| Privacy options setting and monitoring features throughout runtime | Users have the ability to control system behavior by setting privacy parameters at runtime and monitoring the system behavior. | Users decide when and how to monitor the implementation of their individual privacy requirements, and when and how to modify it. | Users perceive that their privacy demands are taken seriously, because they can set privacy options dynamically and monitor their implementation. |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Oppl, S.; Stary, C. Motivating Users to Manage Privacy Concerns in Cyber-Physical Settings—A Design Science Approach Considering Self-Determination Theory. Sustainability 2022, 14, 900. https://doi.org/10.3390/su14020900
