A Systematic Review of Machine-Translation-Assisted Language Learning for Sustainable Education

Abstract

1. Introduction

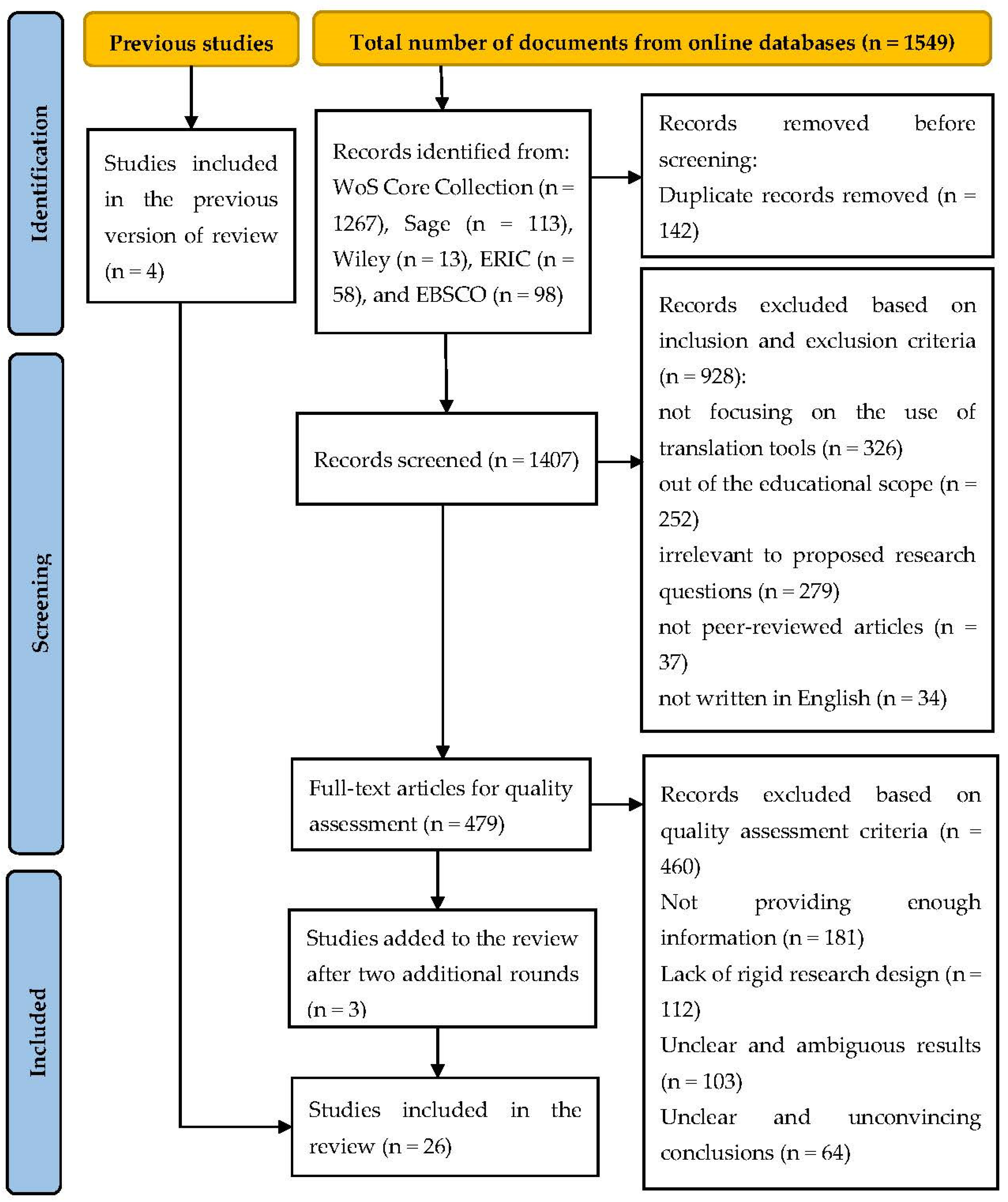

2. Literature Review

3. Research Methods

3.1. Literature Search

3.2. Inclusion and Exclusion Criteria

3.3. Quality Assessment

- (a) The study provided enough information for this review study. The possible answers were “Yes (2)”, “Limited (1)”, and “No (0)”.

- (b) The study was rigorously designed, and the research design was clearly described. The possible answers were “Yes (2)”, “Limited (1)”, and “No (0)”.

- (c) The presentation of the results was clear and unambiguous. The possible answers were “Yes (2)”, “Limited (1)”, and “No (0)”.

- (d) The study arrived at clear and convincing conclusions. The possible answers were “Yes (2)”, “Limited (1)”, and “No (0)”.
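The scoring scheme above can be illustrated with a minimal sketch. It assumes that the total score of a study is the plain sum of the points for criteria (a) to (d), which is consistent with the "Total" column in Appendix A; the function and variable names are hypothetical and not part of the original review protocol.

```python
# Point values for the three possible answers, as stated in the criteria.
POINTS = {"Yes": 2, "Limited": 1, "No": 0}
CRITERIA = ("a", "b", "c", "d")


def total_quality_score(answers):
    """Sum the points for criteria (a)-(d).

    `answers` maps each criterion letter to "Yes", "Limited", or "No".
    Assumption: the total is an unweighted sum, so it ranges from 0 to 8.
    """
    missing = [c for c in CRITERIA if c not in answers]
    if missing:
        raise ValueError(f"missing answers for criteria: {missing}")
    return sum(POINTS[answers[c]] for c in CRITERIA)


# Ratings pattern of a study scored 1, 2, 2, 2 in Appendix A.
example = {"a": "Limited", "b": "Yes", "c": "Yes", "d": "Yes"}
print(total_quality_score(example))  # 7
```

Under this reading, a study rated "Yes" on all four criteria reaches the maximum of 8 points.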

4. Results

4.1. RQ1. Who Are the Main Users of MT Tools?

| N. | Main Users | Included Studies | Total Number |

|---|---|---|---|

| 1 | Elementary school students | [1,35] | 2 |

| 2 | Secondary school students | [6,36] | 2 |

| 3 | Preuniversity students | [37] | 1 |

| 4 | Undergraduate and graduate students | [4,5,7,27,34,38,39,40,41,42,43,44,45,46,47,48,49] | 17 |

| 5 | Elementary school teachers | [1] | 1 |

| 6 | University educators | [34,50] | 2 |

| 7 | Preservice teachers | [29] | 1 |

| 8 | Not available | [32,33] | 2 |
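As a quick consistency check on the table above, the following sketch recomputes the "Total Number" column from the listed reference numbers and counts the distinct studies. The dictionary simply transcribes the table; the variable names are hypothetical.

```python
# Reference numbers per user group, transcribed from the table.
main_users = {
    "Elementary school students": [1, 35],
    "Secondary school students": [6, 36],
    "Preuniversity students": [37],
    "Undergraduate and graduate students": [
        4, 5, 7, 27, 34, 38, 39, 40, 41, 42, 43, 44, 45, 46, 47, 48, 49,
    ],
    "Elementary school teachers": [1],
    "University educators": [34, 50],
    "Preservice teachers": [29],
    "Not available": [32, 33],
}

# Number of included studies per group (the "Total Number" column).
counts = {group: len(studies) for group, studies in main_users.items()}

# A study can appear in more than one group, so count distinct references too.
distinct_studies = set().union(*main_users.values())

for group, n in counts.items():
    print(f"{group}: {n}")
print("Distinct studies:", len(distinct_studies))  # 26
```

The group totals add up to 28 because studies [1] and [34] each appear in two groups; the 26 distinct studies match the 26 rows listed in Appendix A.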

4.2. RQ2. What Are the Frequently Used Theoretical Frameworks Adopted in MTALL Research?

4.3. RQ3. What Are the Users’ Attitudes towards Machine Translation Tools?

4.3.1. Students’ Attitudes

4.3.2. Teachers’ Attitudes

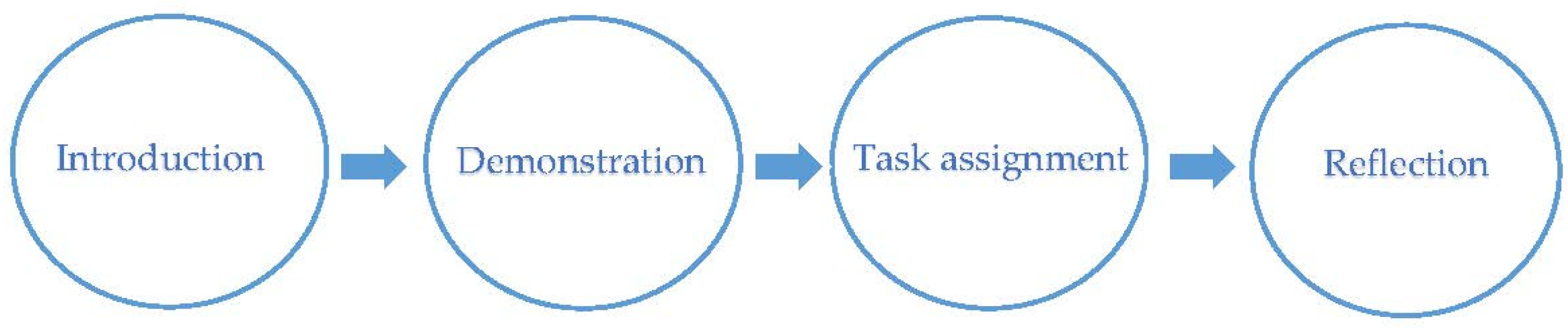

4.4. RQ4. How Are MT Tools Integrated with Language Teaching and Learning?

5. Discussion

6. Conclusions

6.1. Major Findings

6.2. Limitations

6.3. Implications for Future Research

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Appendix A

| N. | Study | Theoretical Framework | Research Instruments | Applications or Platforms | Research Foci | Quality Assessment (a) | Quality Assessment (b) | Quality Assessment (c) | Quality Assessment (d) | Quality Assessment Total |

|---|---|---|---|---|---|---|---|---|---|---|

| 1 | [37] | The taxonomy of error types | Students’ essays | Google Translate | The linguistic accuracy of English translation product | 1 | 2 | 2 | 2 | 7 |

| 2 | [32] | Not available | Tasks and students’ oral reflections | Not available | Students’ attitudes towards MT | 1 | 2 | 2 | 2 | 7 |

| 3 | [4] | Not available | Tasks and questionnaires | Google Translate, Baidu Translate, and Sogou Translate | Students’ attitudes towards MT | 1 | 2 | 2 | 2 | 7 |

| 4 | [50] | Not available | Questionnaires | Not available | Translation educators’ attitudes towards MT | 1 | 2 | 2 | 2 | 7 |

| 5 | [27] | Not available | Questionnaires and students’ reflections | SmartMATE | Students’ perceptions of MT syllabus and self-evaluation of learning outcomes | 1 | 2 | 2 | 2 | 7 |

| 6 | [29] | The TPACK framework | Teachers’ reflections | Speak & Translate | Teachers’ attitudes towards MT | 2 | 2 | 2 | 2 | 8 |

| 7 | [38] | Not available | Interviews | Google Translate | Learners’ perceived affordances of the application and experiences with it | 1 | 2 | 2 | 2 | 7 |

| 8 | [39] | Not available | Interviews | Google Translate | Learners’ behaviors and attitudes towards MT use | 1 | 2 | 2 | 2 | 7 |

| 9 | [40] | Not available | Questionnaires | Google Translate and Naver Translate | Students’ use of MT and attitudes towards it | 1 | 2 | 2 | 2 | 7 |

| 10 | [41] | Technology acceptance model | Questionnaires | Google Translate, Bing Translate, and Baidu Translate | Students’ responses to postediting of MT tools | 2 | 2 | 2 | 2 | 8 |

| 11 | [42] | Not available | Tests | Wordfast Anywhere | The effect of the translation tool on students’ translation skills | 1 | 2 | 2 | 2 | 7 |

| 12 | [5] | Not available | Writing tasks, interviews, and reflection papers | Google Translate and Papago | The effect of translation tools on students’ English writing and students’ attitudes towards translation tools | 2 | 2 | 2 | 2 | 8 |

| 13 | [35] | Not available | Observations | Google Translate | The ways in which students use the translation tool | 1 | 2 | 2 | 2 | 7 |

| 14 | [43] | Not available | Questionnaires | Apertium, Systran, DeepL, Google, Translate 2018, MemSource, and MateCat | Students’ attitudes towards the use of translation tools | 1 | 2 | 2 | 2 | 7 |

| 15 | [1] | Translanguaging | Pupil focus groups and teachers’ interviews | Not available | Students’ and teachers’ attitudes towards MT | 2 | 2 | 2 | 2 | 8 |

| 16 | [7] | Not available | Tests | Microsoft Translator | The effect of machine translation on students’ translation quality | 1 | 2 | 2 | 2 | 7 |

| 17 | [44] | Technology acceptance model | Questionnaires and structural equation analysis | Not available | Students’ behavioural learning patterns in MT use | 2 | 2 | 2 | 2 | 8 |

| 18 | [45] | Technology acceptance model | Questionnaires | Not available | Students’ intention to use MT by considering experience and motivation | 2 | 2 | 2 | 2 | 8 |

| 19 | [6] | Not available | Writing tasks | Google Translate | The effects of the translation tool on writing quality | 1 | 2 | 2 | 2 | 7 |

| 20 | [36] | Not available | Posts and responses from Student Room forum | Google Translate | Students’ attitudes towards the translation tool use | 1 | 2 | 2 | 2 | 7 |

| 21 | [46] | Not available | Evaluators’ scores and reflections | Google Translate | The comparison between students’ translation and machine translation and factors influencing machine translation quality | 1 | 2 | 2 | 2 | 7 |

| 22 | [47] | Not available | Writing tasks and questionnaires | Google Translate | The effect of the translation tool on English writing and students’ attitudes towards Google Translate | 1 | 2 | 2 | 2 | 7 |

| 23 | [48] | CALF measures | Writing tasks and questionnaires | Google Translate | The effect of the translation tool on linguistic features and students’ attitudes toward Google Translate | 2 | 2 | 2 | 2 | 8 |

| 24 | [49] | Not available | Writing tasks and questionnaires | Google Translate | The comparison between students’ translation and machine translation and students’ attitudes towards Google Translate | 2 | 2 | 2 | 2 | 8 |

| 25 | [34] | Ecological theoretical framework | Questionnaires | Not available | Foreign language instructors’ attitudes towards MT | 2 | 2 | 2 | 2 | 8 |

| 26 | [33] | The ADAPT approach | Questionnaires | Google Translate | Students’ attitudes towards the translation tool | 2 | 2 | 2 | 2 | 8 |

References

1. Kelly, R.; Hou, H. Empowering learners of English as an additional language: Translanguaging with machine translation. Lang. Educ. 2021, 1–16.

2. Kaspere, R.; Horbacauskiene, J.; Motiejuniene, J.; Liubiniene, V.; Patasiene, I.; Patasius, M. Towards sustainable use of machine translation: Usability and perceived quality from the end-user perspective. Sustainability 2021, 13, 13430.

3. Stapleton, P.; Kin, B.L.K. Assessing the accuracy and teachers’ impressions of Google Translate: A study of primary L2 writers in Hong Kong. Engl. Specif. Purp. 2019, 56, 18–34.

4. Xu, J. Machine translation for editing compositions in a Chinese language class: Task design and student beliefs. J. Technol. Chin. Lang. Teach. 2020, 11, 1–18. Available online: http://www.tclt.us/journal/2020v11n1/xu.pdf (accessed on 10 May 2022).

5. Lee, S.M. The impact of using machine translation on EFL students’ writing. Comput. Assist. Lang. Learn. 2020, 33, 157–175.

6. Cancino, M.; Panes, J. The impact of Google Translate on L2 writing quality measures: Evidence from Chilean EFL high school learners. System 2021, 98, 102464.

7. Olkhovska, A.; Frolova, I. Using machine translation engines in the classroom: A survey of translation students’ performance. Adv. Educ. 2020, 15, 47–55.

8. Alhaisoni, E.; Alhaysony, M. An investigation of Saudi EFL university students’ attitudes towards the use of Google Translate. Int. J. Eng. Lang. Educ. 2017, 5, 72–82.

9. Lee, S.M. The effectiveness of machine translation in foreign language education: A systematic review and meta-analysis. Comput. Assist. Lang. Learn. 2021, 1–23.

10. Kanglang, L.; Afzaal, M. Artificial intelligence (AI) and translation teaching: A critical perspective on the transformation of education. Int. J. Educ. Sci. 2021, 33, 64–73.

11. Zhen, Y.; Wu, Y.; Yu, G.; Zheng, C. A review study of the application of machine translation in education from 2011 to 2020. In Proceedings of the 29th International Conference on Computers in Education (ICCE), Electron Network, Bangkok, Thailand, 22–26 November 2021; pp. 17–24.

12. Suarez, L.M.C.; Nunez-Valdes, K.; Alpera, S.Q.Y. A systemic perspective for understanding digital transformation in higher education: Overview and subregional context in Latin America as evidence. Sustainability 2021, 13, 12956.

13. Kirov, V.; Malamin, B. Are translators afraid of artificial intelligence? Societies 2022, 12, 70.

14. Huang, X.Y.; Zou, D.; Cheng, G.; Xie, H.R. A systematic review of AR and VR enhanced language learning. Sustainability 2021, 13, 4639.

15. Yang, H.; Kim, H.; Lee, J.H.; Shin, D. Implementation of an AI chatbot as an English conversation partner in EFL speaking classes. ReCALL 2022, 1–17.

16. Cancedda, N.; Dymetman, M.; Foster, G.; Goutte, C. A statistical machine learning primer. In Learning Machine Translation; Goutte, C., Cancedda, N., Dymetman, M., Eds.; MIT Press: Cambridge, MA, USA, 2009; pp. 1–38.

17. Omar, A.; Gomaa, Y.A. The machine translation of literature: Implications for translation pedagogy. Int. J. Emerg. Technol. Learn. 2020, 15, 228–235.

18. Anazawa, R.; Ishikawa, H.; Takahiro, K. Evaluation of online machine translation by nursing users. Comput. Inform. Nurs. 2013, 31, 382–387.

19. Archila, P.A.; de Mejia, A.-M. Bilingual teaching practices in university science courses: How do biology and microbiology students perceive them? J. Lang. Identity Educ. 2020, 19, 163–178.

20. Han, C.; Lu, X. Can automated machine translation evaluation metrics be used to assess students’ interpretation in the language learning classroom? Comput. Assist. Lang. Learn. 2021, 1–24.

21. Musk, N. Using online translation tools in computer-assisted collaborative EFL writing. Classr. Discourse 2022, 13, 1–27.

22. Bowker, L. Chinese speakers’ use of machine translation as an aid for scholarly writing in English: A review of the literature and a report on a pilot workshop on machine translation literacy. Asia Pac. Transl. Intercult. Stud. 2020, 7, 288–298.

23. Guerberof-Arenas, A.; Toral, A. The impact of post-editing and machine translation on creativity and reading experience. Transl. Spaces 2020, 9, 255–282.

24. Cementina, S. Language teachers’ digital mindsets: Links between everyday use and professional use of technology. TESL Can. J. 2019, 36, 31–54.

25. Sun, P.P.; Mei, B. Modeling preservice Chinese-as-a-second/foreign-language teachers’ adoption of educational technology: A technology acceptance perspective. Comput. Assist. Lang. Learn. 2020, 35, 816–839.

26. Pan, X. Technology acceptance, technological self-efficacy, and attitude toward technology-based self-directed learning: Learning motivation as a mediator. Front. Psychol. 2020, 11, 564294.

27. Doherty, S.; Kenny, D. The design and evaluation of a statistical machine translation syllabus for translation students. Interpret. Transl. Train. 2014, 8, 295–315.

28. Tian, Y. Error tolerance of machine translation: Findings from failed teaching design. J. Technol. Chin. Lang. Teach. 2020, 11, 19–35.

29. Ross, R.K.; Lake, V.E.; Beisly, A.H. Preservice teachers’ use of a translation app with dual language learners. J. Dig. Learn. Teach. Educ. 2021, 37, 86–98.

30. Crawford, C.; Boyd, C.; Jain, S.; Khorsan, R.; Jonas, W. Rapid evidence assessment of the literature (REAL): Streamlining the systematic review process and creating utility for evidence-based health care. BMC Res. Notes 2015, 8, 631.

31. Moule, P.; Pontin, D.; Gilchrist, M.; Ingram, R. Critical Appraisal Framework. 2003. Available online: http://hsc.uwe.ac.uk/dataanalysis/critFrame.asp© (accessed on 9 May 2022).

32. Nino, A. Exploring the use of online machine translation for independent language learning. Res. Learn. Technol. 2020, 28, 2402.

33. Knowles, C.L. Using an ADAPT approach to integrate Google Translate into the second language classroom. L2 J. 2022, 14, 195–236.

34. Hellmich, E.; Vinall, K. FL instructor beliefs about machine translation: Ecological insights to guide research and practice. Int. J. Comput. Assist. Lang. Learn. Teach. 2021, 11, 1–18.

35. Rowe, L.W. Google Translate and biliterate composing: Second graders’ use of digital translation tools to support bilingual writing. TESOL Q. 2022, 1–24.

36. Organ, A. Attitudes to the use of Google Translate for L2 production: Analysis of chatroom discussions among UK secondary school students. Lang. Learn. J. 2022, 1–16.

37. Groves, M.; Mundt, K. Friend or foe? Google Translate in language for academic purposes. Engl. Specif. Purp. 2015, 37, 112–121.

38. Bin Dahmash, N. “I can’t live without Google Translate”: A close look at the use of Google Translate app by second language learners in Saudi Arabia. Arab. World Engl. J. 2020, 11, 226–240.

39. Chompurach, W. “Please let me use Google Translate”: Thai EFL students’ behavior and attitudes toward Google Translate use in English writing. Engl. Lang. Teach. 2021, 14, 23–35.

40. Briggs, N. Neural machine translation tools in the language learning classroom: Students’ use, perceptions, and analyses. JALT CALL J. 2018, 14, 2–24.

41. Yang, Z.; Mustafa, H.R. On postediting of machine translation and workflow for undergraduate translation program in China. Hum. Behav. Emerg. Technol. 2022, 2022, 5793054.

42. El-Garawany, M.S.M. Using Wordfast Anywhere computer-assisted translation (CAT) tool to develop English majors’ EFL translation skills. J. Educ. Sohag Univ. 2021, 84, 36–71.

43. Pastor, D.G. Introducing machine translation in the translation classroom: A survey on students’ attitudes and perceptions. Rev. Tradumatica 2021, 19, 47–65.

44. Tsai, P.S.; Liao, H.C. Students’ progressive behavioral learning patterns in using machine translation systems—A structural equation modelling analysis. System 2021, 101, 102594.

45. Yang, Y.X.; Wang, X.L. Modeling the intention to use machine translation for student translators: An extension of Technology Acceptance Model. Comput. Educ. 2019, 133, 116–126.

46. Lee, S.M. An investigation of machine translation output quality and the influencing factors of source texts. ReCALL 2022, 34, 81–94.

47. Tsai, S.C. Chinese students’ perceptions of using Google Translate as a translingual CALL tool in EFL writing. Comput. Assist. Lang. Learn. 2020, 1–23.

48. Chung, E.S.; Ahn, S. The effect of using machine translation on linguistic features in L2 writing across proficiency levels and text genres. Comput. Assist. Lang. Learn. 2021, 1–26.

49. Tsai, S.C. Using Google Translate in EFL drafts: A preliminary investigation. Comput. Assist. Lang. Learn. 2019, 32, 510–526.

50. Rico, C.; Pastor, D.G. The role of machine translation in translation education: A thematic analysis of translator educators’ beliefs. Transl. Interpret. 2022, 14, 177–197.

51. Ferris, D.; Liu, H.; Sinha, A.; Senna, M. Written corrective feedback for individual L2 writers. J. Second. Lang. Writ. 2013, 22, 307–329.

52. Yang, W.; Kim, Y. The effect of topic familiarity on the complexity, accuracy, and fluency of second language writing. Appl. Linguist. Rev. 2020, 11, 79–108.

53. Wang, W.; Curdt-Christiansen, X.L. Translanguaging in a Chinese-English bilingual education programme: A university-classroom ethnography. Int. J. Biling. Educ. Biling. 2019, 22, 322–337.

54. García, O.; Li, W. Language, bilingualism, and education. In Translanguaging: Language, Bilingualism and Education; Palgrave Pivot: London, UK, 2014; pp. 46–62.

55. Blin, F. Towards an ‘ecological’ CALL theory: Theoretical perspectives and their instantiation in CALL research and practice. In The Routledge Handbook of Language Learning and Technology; Farr, F., Murray, L., Eds.; Routledge: New York, NY, USA, 2016; pp. 39–54.

56. Fishbein, M.; Ajzen, I. Belief, Attitude, Intention, and Behavior: An Introduction to Theory and Research; Addison-Wesley: New York, NY, USA, 1975; 578p.

57. Davis, F.D. Perceived usefulness, perceived ease of use, and user acceptance of information technology. MIS Q. 1989, 13, 319–340.

58. Shulman, L.S. Those who understand: Knowledge growth in teaching. Educ. Res. 1986, 15, 4–14.

59. Mishra, P.; Koehler, M.J. Technological pedagogical content knowledge: A framework for teacher knowledge. Teach. Coll. Rec. 2006, 108, 1017–1054.

60. O’Brien, S.; Simard, M.; Goulet, M.-J. Machine translation and self-post-editing for academic writing support: Quality explorations. In Translation Quality Assessment: From Principles to Practice; Moorkens, J., Castilho, S., Gaspari, F., Doherty, S., Eds.; Springer: Cham, Switzerland, 2018; pp. 237–262.

61. Druce, P.M. Attitude to the use of L1 and translation in second language teaching and learning. J. Second. Lang. Teach. Res. 2012, 2, 60–86.

62. Yu, Z.; Yu, L.; Xu, Q.; Xu, W.; Wu, P. Effects of mobile learning technologies and social media tools on students engagement and learning outcomes of English learning. Technol. Pedagog. Educ. 2022, 1–18.

63. Yu, Z. Sustaining student roles, digital literacy, learning achievements, and motivation in online learning environments during the COVID-19 pandemic. Sustainability 2022, 14, 4388.

64. Li, M.; Yu, Z. Teachers’ satisfaction, role, and digital literacy during the COVID-19 pandemic. Sustainability 2022, 14, 1121.

65. Yu, Z.; Deng, X. A meta-analysis of gender differences in e-learners’ self-efficacy, satisfaction, motivation, attitude, and performance across the world. Front. Psychol. 2022, 13, 897327.

| N. | Study | Databases | Quality Assessment | Main Users | Theoretical Frameworks | Users’ Perceptions | The Ways of MT Integration |

|---|---|---|---|---|---|---|---|

| 1 | [9] | Cambridge Core, Science Direct, JSTOR, ProQuest, EBSCO, Google Scholar, and six journals | × | × | × | √ | × |

| 2 | [10] | Not available | × | × | × | × | × |

| 3 | [11] | Eight journals indexed by SSCI and CSSCI | × | × | × | × | × |

| 4 | This study | WoS Core Collection, Sage, Wiley, ERIC, and EBSCO | √ | √ | √ | √ | √ |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Deng, X.; Yu, Z. A Systematic Review of Machine-Translation-Assisted Language Learning for Sustainable Education. Sustainability 2022, 14, 7598. https://doi.org/10.3390/su14137598
