Immersive Robotic Telepresence for Remote Educational Scenarios

Abstract

1. Introduction

1.1. Problem Description

1.2. Challenges

1.3. Research Question(s)

- RQ1—Applicability. How can we use immersive technologies, such as VR and AR, to promote engagement in remote educational scenarios involving robots?

- RQ2—Sustainability. How do IRT solutions fare in light of sustainability considerations?

  - RQ2.1 (explanatory). What is the cumulative energy consumption?

  - RQ2.2 (exploratory). What are the effects of different immersive technology types on robot performance?

  - RQ2.3 (exploratory). What are the deployment costs of each system?

2. Materials and Methods

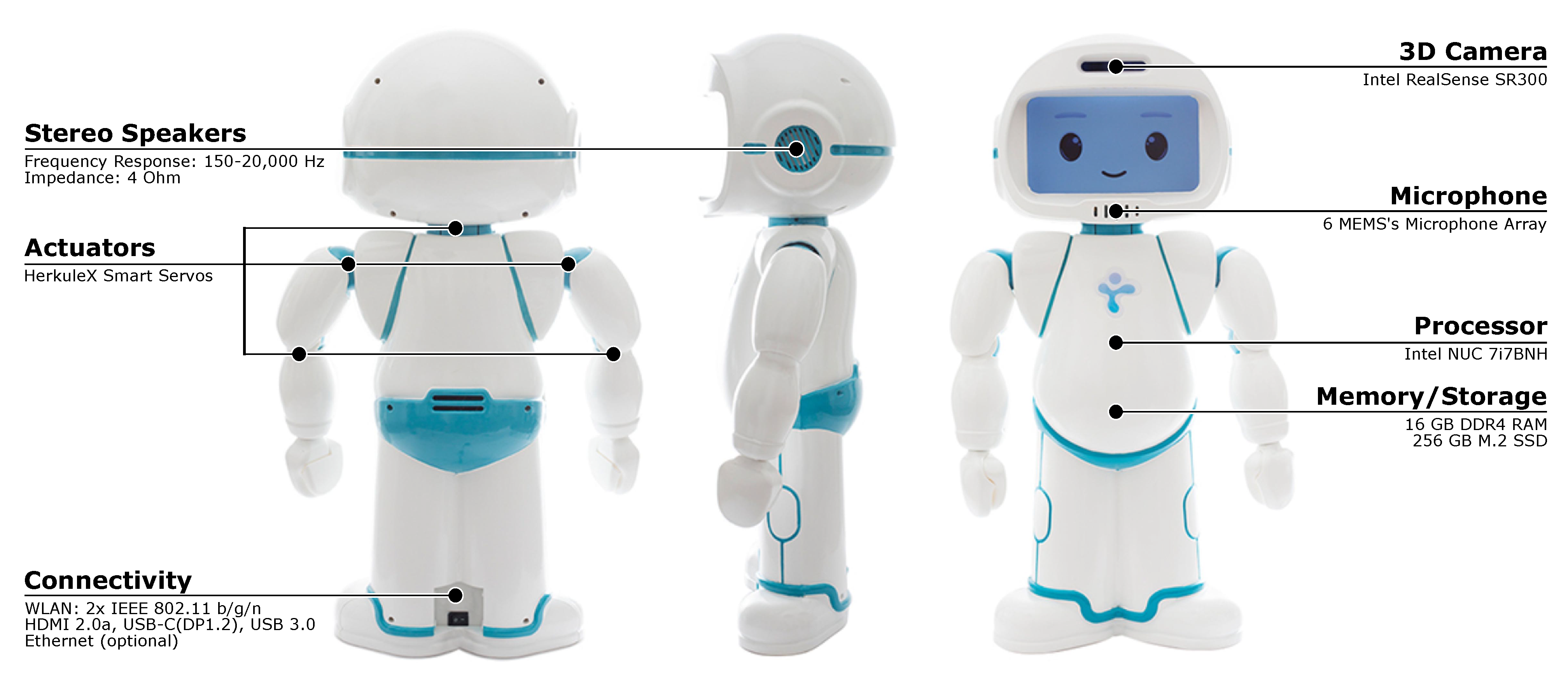

2.1. Robot

2.2. Robot Power Model

2.2.1. Hardware Perspective

- Sensors. Every robot has a set of sensors that measure various physical properties associated with the robot and its environment. Again, we follow Mei et al. [31], who suggested a linear function to model the power consumption of sensors:

  $$p_s(f_s) = c_{s0} + c_{s1} f_s$$

  The model connects the sensing power ($p_s$) to two physical constants associated with the device ($c_{s0}$ and $c_{s1}$) and to the sensing frequency ($f_s$).

- Actuators. This study simplifies the actuators into motors, which convert electrical energy into mechanical energy. The motor power consumption is associated with the mechanical engine and the transforming loss related to friction or resistance, such as those associated with grasping and manipulating objects or the surface where the robot is moving. Once more, Mei et al. [31] proposed a possible model to be applied in this case:

  $$p_m(m, v, a) = p_l + m(a + g\mu)v$$

  Here, $m$ is the mass, $v$ the velocity, and $a$ the acceleration. $p_l$ is the transforming loss and $m(a + g\mu)v$ is the mechanical power, where $g$ is the gravitational constant and $\mu$ is the friction constant of the surface.

- Main Controller Unit. This element is responsible for managing the robot and parts of the controllers with their associated devices. It comprises the central processing unit (CPU) and can sequence and trigger robot behaviors using the different hardware elements under its control. This study simplifies the model in that all components are evaluated together, and it does not distinguish between directly measurable devices (hard drive and fans) and indirectly measurable ones (network ports).

- Others. Further devices also need to be considered, such as controllers, routers, external fans, batteries, displays, or speakers. Each one is modeled on its own, and product specifications define their values.
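As a rough illustration of the sensor and actuator models attributed to Mei et al. [31], the following sketch assumes the linear sensing form p_s = c_s0 + c_s1 · f_s and the motion form p_m = p_l + m(a + gμ)v. The function names, the friction constant `mu`, and all numeric values are assumptions made for this example only; they are not measurements from the QTrobot.

```python
G = 9.81  # gravitational acceleration in m/s^2


def sensing_power(c_s0: float, c_s1: float, f_s: float) -> float:
    """Linear sensor model: constant term plus a term proportional to the sensing frequency."""
    return c_s0 + c_s1 * f_s


def motion_power(p_l: float, m: float, v: float, a: float, mu: float) -> float:
    """Motor model: transforming loss p_l plus mechanical power m * (a + g * mu) * v."""
    return p_l + m * (a + G * mu) * v


# Hypothetical constants, chosen only to show the arithmetic
sensor_w = sensing_power(c_s0=0.5, c_s1=0.01, f_s=30.0)
motor_w = motion_power(p_l=2.0, m=1.0, v=0.5, a=0.0, mu=0.1)

print(f"sensor: {sensor_w:.3f} W, motor: {motor_w:.3f} W")
```

In practice, the constants would have to be fitted from measurements or taken from product specifications, as the text notes for the remaining devices.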

2.2.2. Software Perspective

- CPU Model. The power consumed by a specific process given a set of constants and the percentage of CPU use over a period of time.

- Memory Model. The power that a process needs when triggering one of the four states of the random access memory (RAM): read, write, activate, and precharge.

- Disk Usage Model. The power consumption associated with read/write processes of a given application when the disk is in active mode.
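The three software-side models can be sketched along the following lines. This is a simplified illustration, not the formulation used in the study: it assumes a linear relation between CPU utilization and power, a fixed energy per RAM operation for each of the four states, and a fixed energy per byte for disk reads and writes. All function names and constants are hypothetical.

```python
RAM_STATES = ("read", "write", "activate", "precharge")


def cpu_energy_j(cpu_percent_samples, dt_s, c0_w, c1_w_per_percent):
    """Integrate an assumed linear CPU power model over equally spaced utilization samples."""
    return sum((c0_w + c1_w_per_percent * u) * dt_s for u in cpu_percent_samples)


def memory_energy_j(op_counts, energy_per_op_j):
    """Sum the energy of all operations across the four RAM states."""
    return sum(op_counts[s] * energy_per_op_j[s] for s in RAM_STATES)


def disk_energy_j(bytes_read, bytes_written, e_read_j_per_byte, e_write_j_per_byte):
    """Energy of read/write activity while the disk is in active mode."""
    return bytes_read * e_read_j_per_byte + bytes_written * e_write_j_per_byte


# Hypothetical inputs: three one-second CPU samples and a handful of RAM operations
cpu_j = cpu_energy_j([10.0, 20.0, 30.0], dt_s=1.0, c0_w=0.0, c1_w_per_percent=0.1)
mem_j = memory_energy_j(
    {"read": 2, "write": 1, "activate": 1, "precharge": 1},
    {"read": 1.0, "write": 1.0, "activate": 1.0, "precharge": 1.0},
)
disk_j = disk_energy_j(1000, 500, e_read_j_per_byte=1e-6, e_write_j_per_byte=2e-6)
```

Dividing each energy by the observation period gives the average power attributable to the process under these assumptions.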

2.3. QTrobot Power Model

2.3.1. Hardware Perspective

- NUC: The robot integrates an Intel NUC i7 computer with 16 GB of RAM running the Ubuntu 16.04 LTS operating system. The NUC kits are well known for their bounded consumption [33].

- Camera: The QTrobot is equipped with an Intel RealSense D435 depth camera. According to the Intel documentation [34], it draws a supply current of 0.7 A when operating.

- Motors: The robot has eight motors rendering eight DoFs for the robot’s neck and two arms. The neck’s two motors provide pitch and yaw, while each arm contains two motors in the shoulders and one in the elbow. It is out of this work’s scope to evaluate the motor efficiency, so we generalize the power consumption without explicitly dealing with copper, iron, mechanical, and stray losses.

- Display: QTrobot features an LCD panel that is active from the moment that the robot is switched on. This eight-inch multicolor graphic TFT LCD with 800 × 480 pixels mainly shows direct animations of facial expressions. The current version does not allow changes to the backlight brightness, so it is assumed to work under the same operating voltage and current as the robot. It is not possible to measure or extract more information about its consumption without disassembling the display.

- Speaker: The robot has a 2.8 W stereo-class audio amplifier with a frequency range of 800–7000 Hz.

- Other: Any regulators, network devices, or other control mechanisms beyond our knowledge that somehow drain power.

2.3.2. Software Perspective

- Robot Operating System (ROS) [35]: ROS is considered the de facto standard for robotics middleware. It provides a set of libraries and tools for building and running robot applications.

- NuiTrack™: A 3D tracking system developed by 3DiVi Inc. [36] that provides a framework for skeleton and gesture tracking. It offers capabilities for realizing natural user interfaces.

- QTrobot Interface [37]: The set of ROS interfaces for robot interaction provided by QTrobot’s manufacturer, LuxAI. Following ROS’s publish/subscribe paradigm, it is possible to find an interface for implementing different robot behaviors, such as changing the robot’s emotional and facial expressions, generating robot gestures, or playing audio files.

- Telepresence Components: The set of software components for connecting to the QTrobot ROS interfaces. These components have two views: the QTrobot side, where the components manage robot interfaces, and the operator side, which comprises the components running outside of the robot to control and present robot information in VR-based IRT or app-based IRT. Additionally, there is always a link between both sides provided by a communication channel, but its consumption footprint is not evaluated in this study.

- Object Recognition: There is a component for offering object recognition in the robot. Such components are notorious in the robotics community for having a higher CPU consumption than other software components deployed in the robot. Specifically, find_object_2d [38] is used, which is a webcam-based feature extractor employed to detect objects. Upon detection, the component publishes the object ID and its position on a dedicated ROS topic.
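To make the object recognition output more concrete, the sketch below decodes a detection message in plain Python. It assumes the flat layout documented for the find_object_2d `objects` topic, where each detection occupies twelve floats (object ID, width, height, and a 3×3 homography whose h31 and h32 entries carry the translation). The `Detection` type and `parse_objects` helper are hypothetical names for this example, and the layout should be verified against the package version deployed on the robot.

```python
from typing import List, NamedTuple

VALUES_PER_OBJECT = 12  # id, width, height, and 9 homography entries (assumed layout)


class Detection(NamedTuple):
    object_id: int
    width: float
    height: float
    dx: float  # h31 of the homography
    dy: float  # h32 of the homography


def parse_objects(data: List[float]) -> List[Detection]:
    """Split a flat float array into one Detection per recognized object."""
    if len(data) % VALUES_PER_OBJECT != 0:
        raise ValueError("array length is not a multiple of 12")
    detections = []
    for i in range(0, len(data), VALUES_PER_OBJECT):
        chunk = data[i:i + VALUES_PER_OBJECT]
        # chunk[3:12] holds h11..h33 in row order; indices 9 and 10 are h31 and h32
        detections.append(
            Detection(int(chunk[0]), chunk[1], chunk[2], chunk[9], chunk[10])
        )
    return detections


# Hypothetical message payload with a single detection
sample = [7.0, 100.0, 50.0, 1.0, 0.0, 0.0, 0.0, 1.0, 0.0, 12.5, 30.0, 1.0]
print(parse_objects(sample))
```

On the robot, such a function would typically be called from a ROS subscriber callback with the `data` field of the received message.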

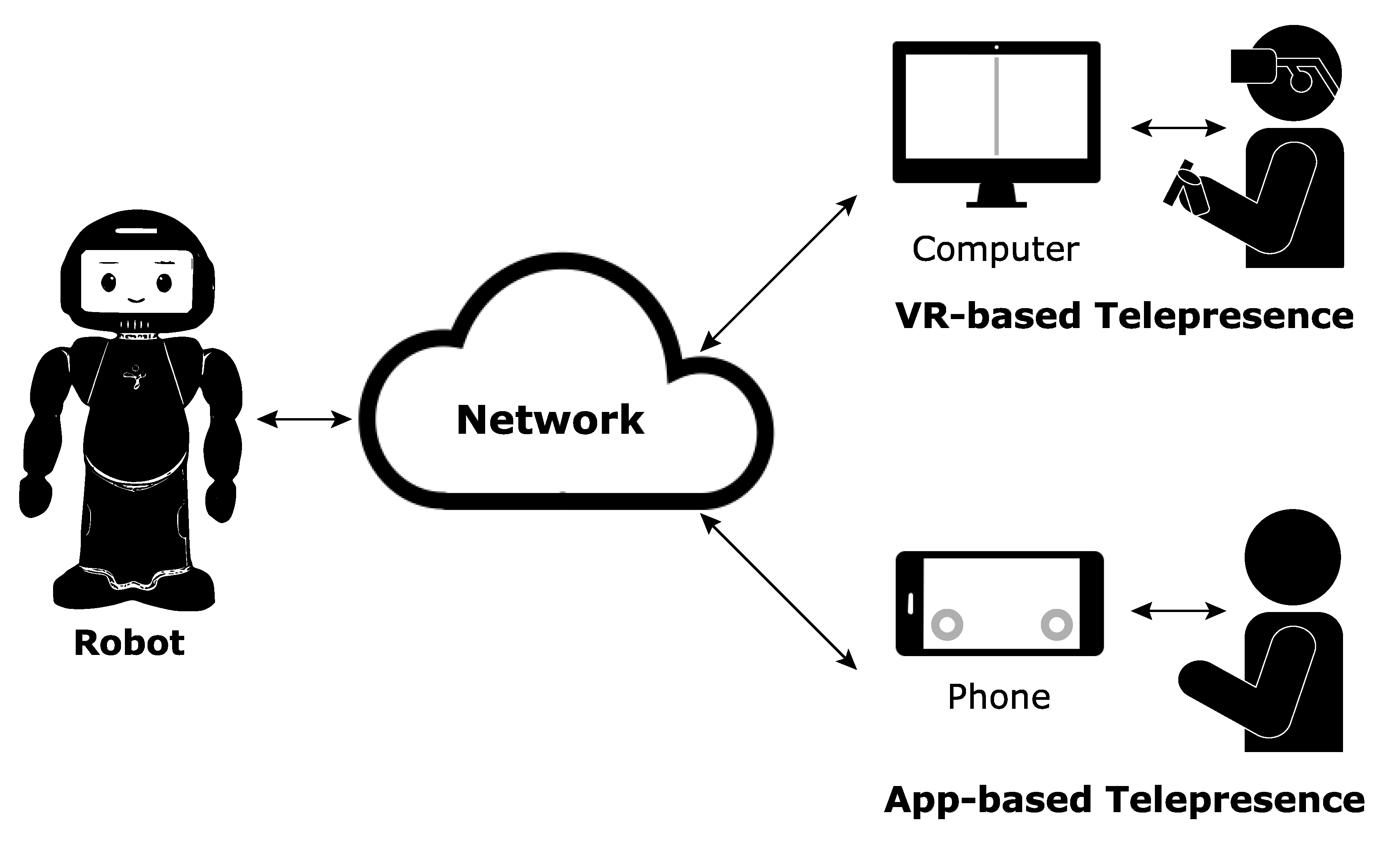

2.4. Telepresence

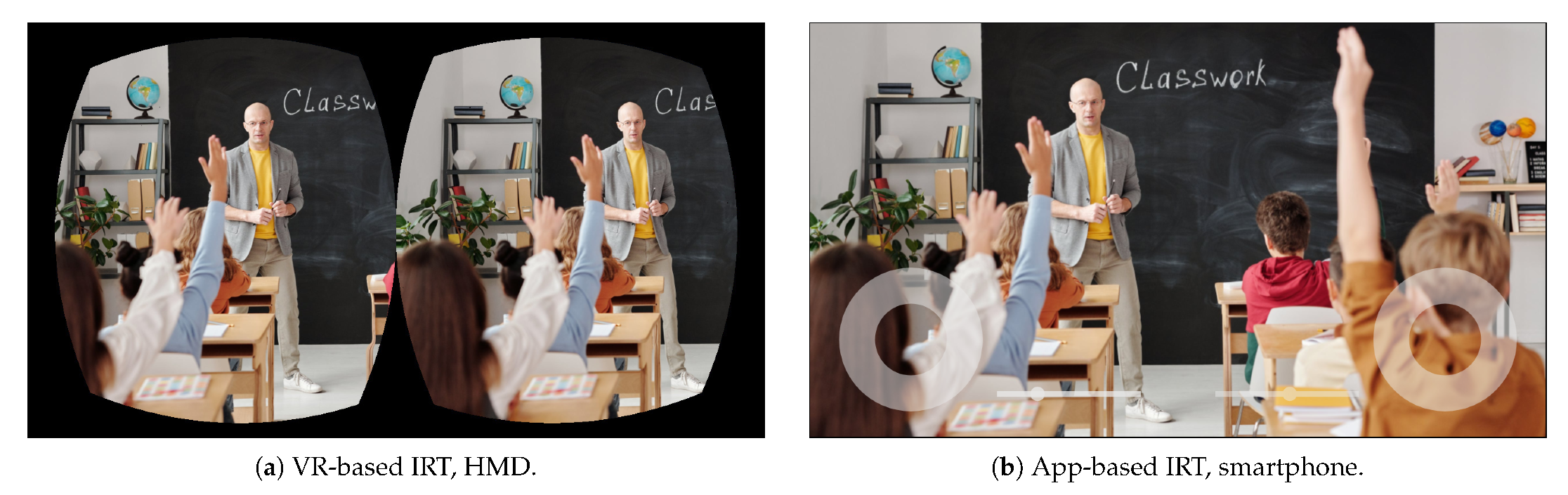

2.4.1. VR-Based IRT

2.4.2. App-Based IRT

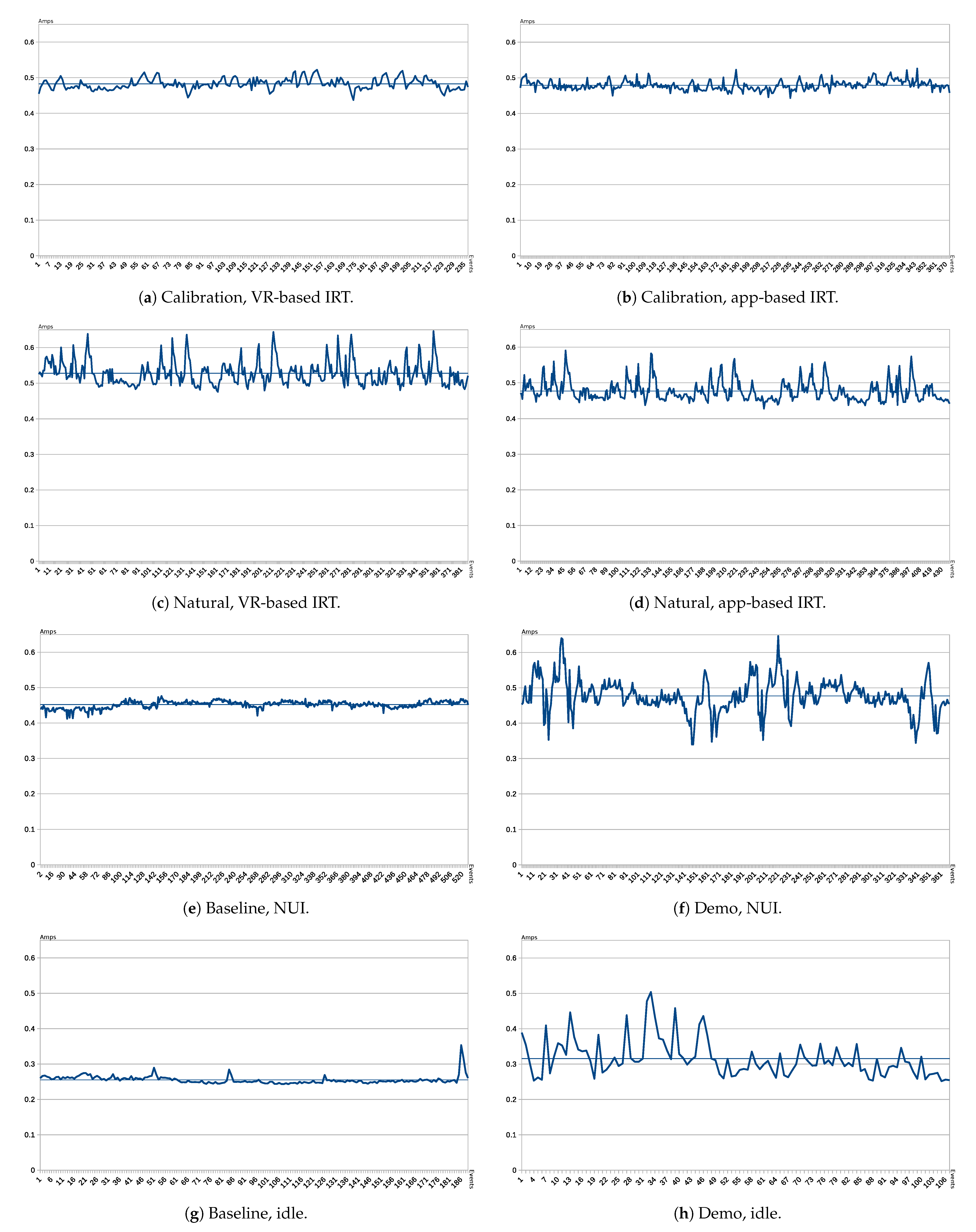

2.5. Experiment Modes and Measurements

- Test/Calibration: Robot behavior associated with various motions and gestures to check the motor status and perform calibration tasks.

- Natural: Classic HRI-related robot behavior comprising motions and gestures such as greetings, head tilts, or hand rubbing.

2.5.1. Baseline

2.5.2. Realistic

3. Results

3.1. Hardware Perspective

3.2. Software Perspective

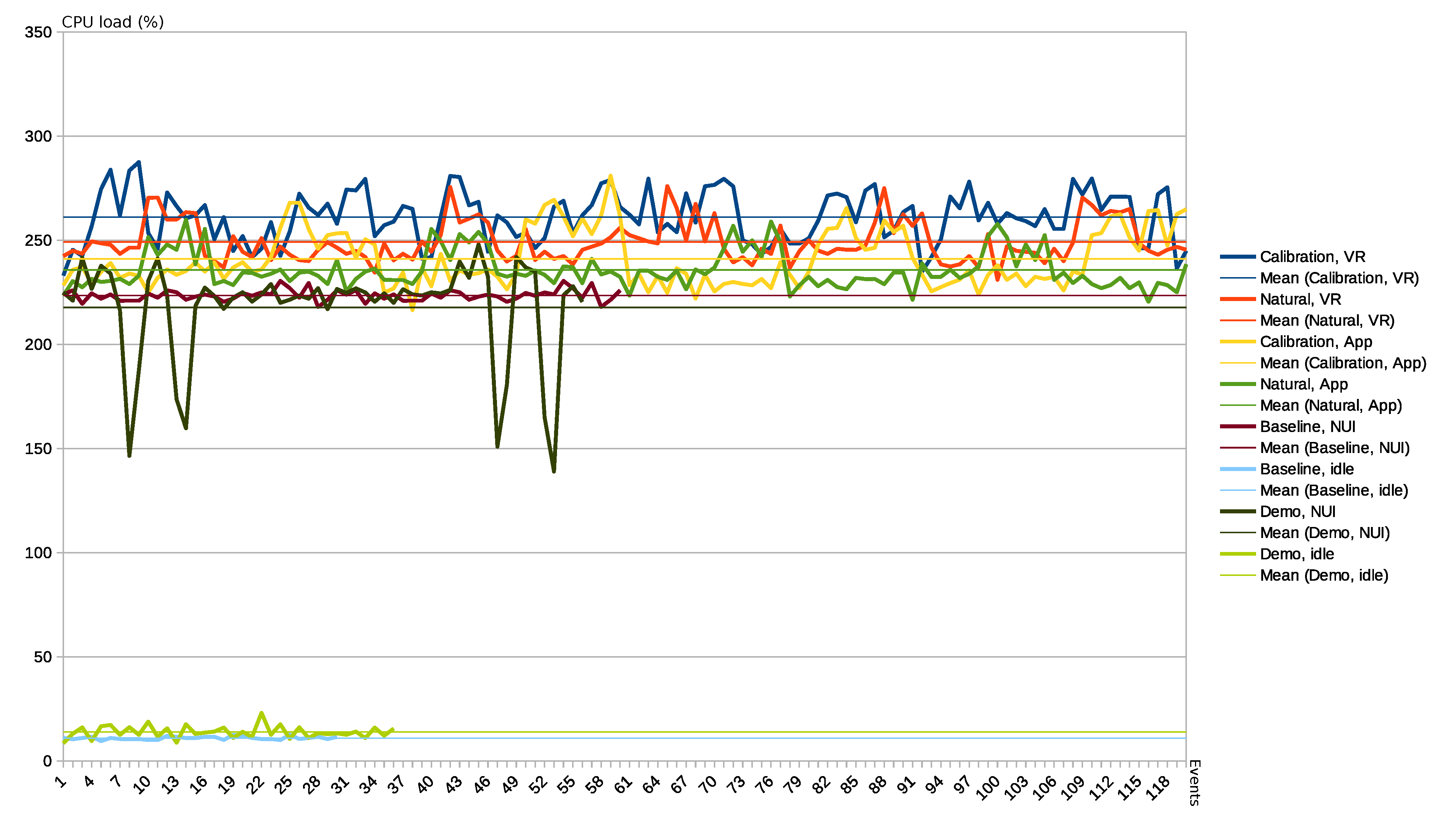

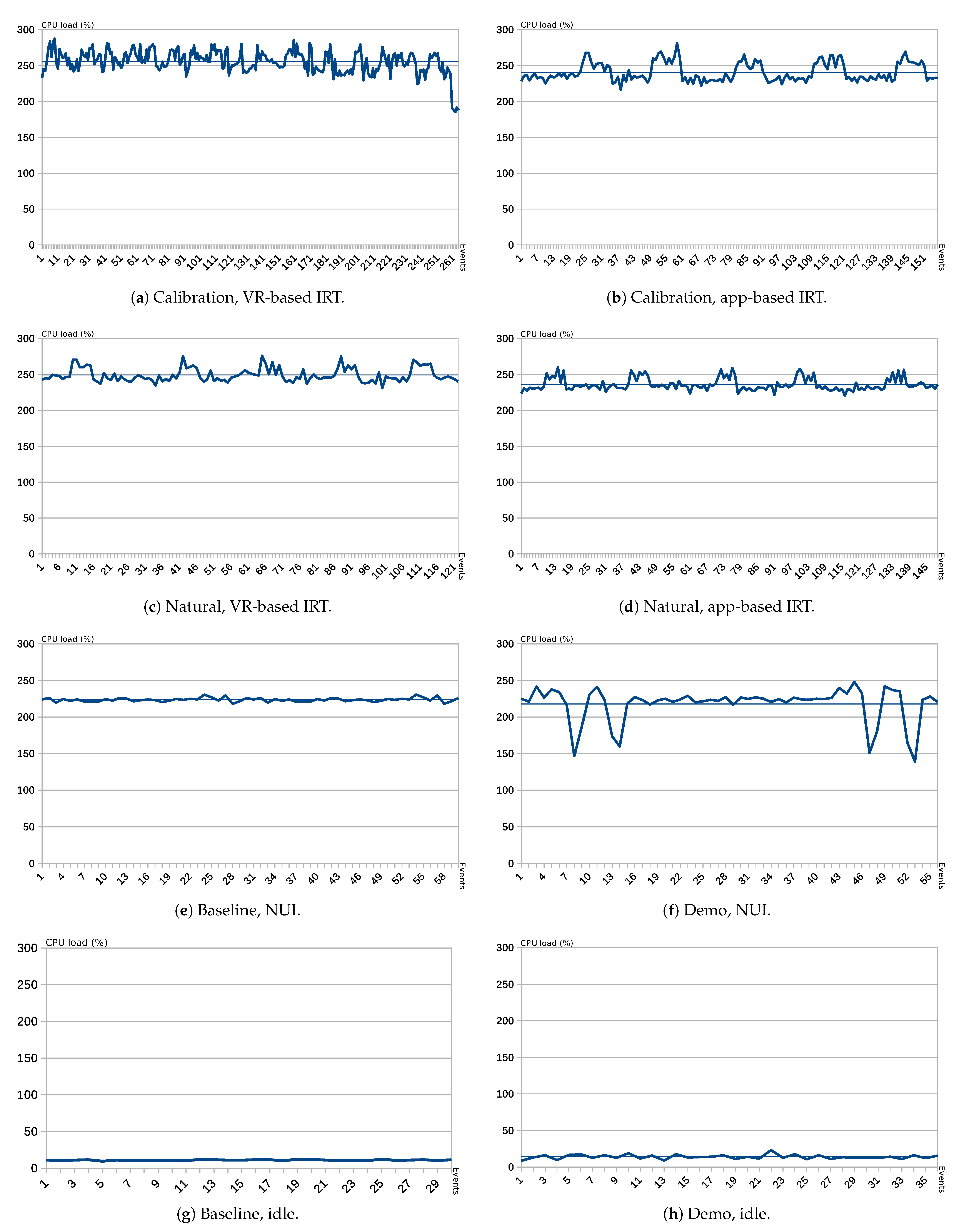

3.2.1. CPU Consumption

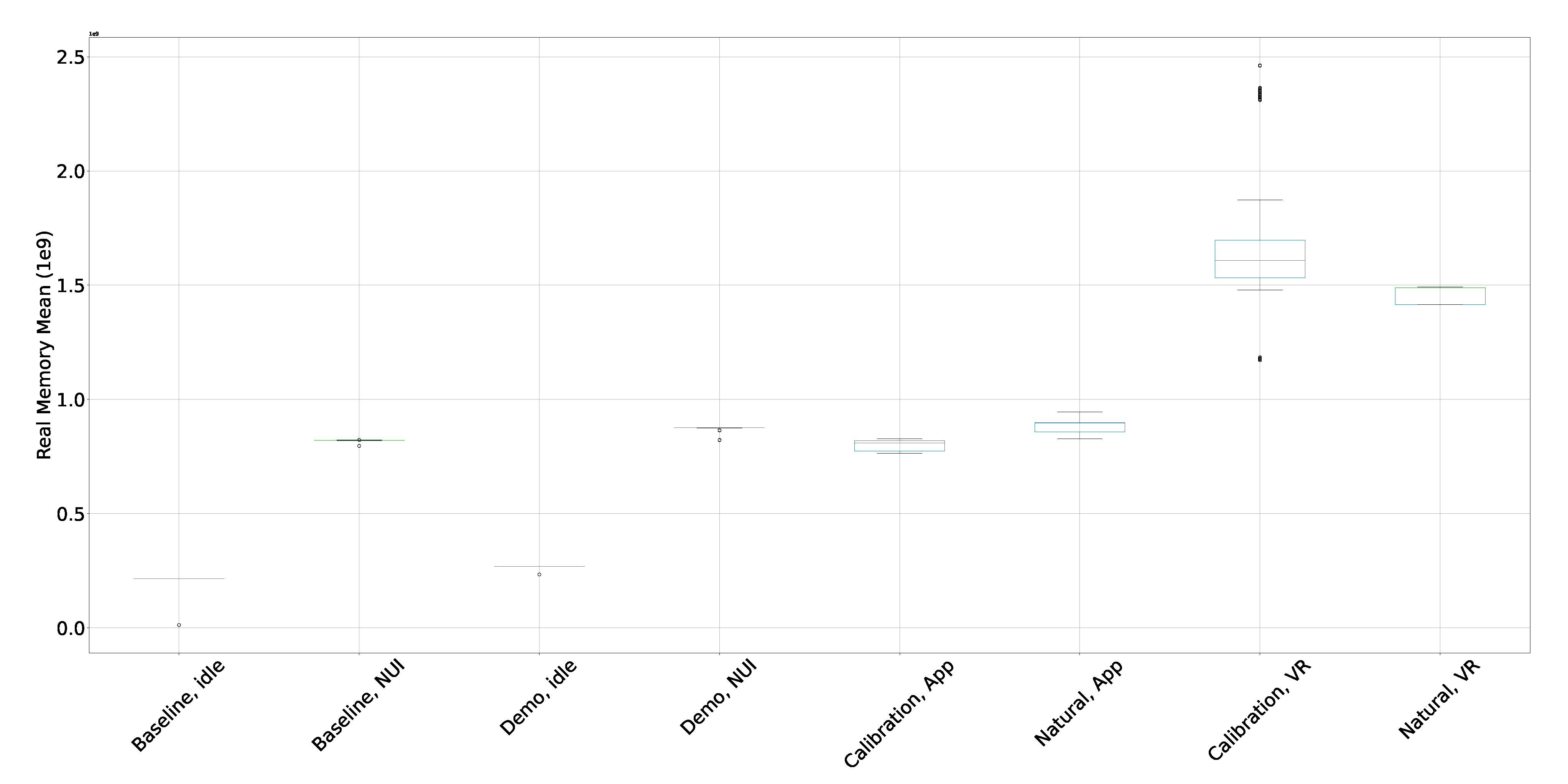

3.2.2. Memory Usage

3.3. Collateral Effects of the Telepresence Option

4. Discussion

4.1. Impact of Measurement Tools

4.2. Effect of Immersive Technologies on the Power Consumption Model

4.3. Developing Energy-Efficient Demos

4.4. Economic Efficiency

4.5. Social Efficiency

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Meaning |
|---|---|
| AAC | Amps Alternating Current |
| AMOLED | Active-Matrix Organic Light-Emitting Diode |
| API | Application Programming Interface |
| AR | Augmented Reality |
| CPU | Central Processing Unit |
| DDR | Double Data Rate |
| LCD | Liquid-Crystal Display |
| DoF | Degree(s) of Freedom |
| HMD | Head-Mounted Display |
| HRI | Human–Robot Interaction |
| IRT | Immersive Robotic Telepresence |
| JSON | JavaScript Object Notation |
| LTS | Long-Term Support |
| NUC | Next Unit of Computing |
| OLED | Organic Light-Emitting Diode |
| RAM | Random Access Memory |
| RiE | Robotics in Education |
| ROS | Robot Operating System |
| SDT | Self-Determination Theory |
| TD | Transactional Distance |
| TFT | Thin-Film Transistor |
| VR | Virtual Reality |

References

1. Belpaeme, T.; Ramachandran, A.; Scassellati, B.; Tanaka, F. Social Robots for Education: A Review. Sci. Robot. 2018, 3.
2. Clabaugh, C.; Matarić, M. Escaping Oz: Autonomy in Socially Assistive Robotics. Annu. Rev. Control Robot. Auton. Syst. 2019, 2, 33–61.
3. Belpaeme, T.; Baxter, P.; de Greeff, J.; Kennedy, J.; Read, R.; Looije, R.; Neerincx, M.; Baroni, I.; Zelati, M.C. Child-Robot Interaction: Perspectives and Challenges. In Proceedings of the 5th International Conference on Social Robotics (ICSR), Bristol, UK, 27–29 October 2013; pp. 452–459.
4. Toh, L.P.E.; Causo, A.; Tzuo, P.W.; Chen, I.M.; Yeo, S.H. A Review on the Use of Robots in Education and Young Children. J. Educ. Technol. Soc. 2016, 19, 148–163.
5. Miller, D.P.; Nourbakhsh, I. Robotics for Education. In Springer Handbook of Robotics; Springer: Cham, Switzerland, 2016; pp. 2115–2134.
6. Mubin, O.; Stevens, C.J.; Shahid, S.; Al Mahmud, A.; Dong, J. A Review of the Applicability of Robots in Education. Technol. Educ. Learn. 2013, 1, 1–7.
7. Jecker, J.D.; Maccoby, N.; Breitrose, H. Improving Accuracy in Interpreting Non-Verbal Cues of Comprehension. Psychol. Sch. 1965, 2, 239–244.
8. Okon, J.J. Role of Non-Verbal Communication in Education. Mediterr. J. Soc. Sci. 2011, 2, 35–40.
9. Crooks, T.J. The Impact of Classroom Evaluation Practices on Students. Rev. Educ. Res. 1988, 58, 438–481.
10. Botev, J.; Rodríguez Lera, F.J. Immersive Telepresence Framework for Remote Educational Scenarios. In Proceedings of the International Conference on Human-Computer Interaction, Copenhagen, Denmark, 19–24 July 2020; pp. 373–390.
11. Beam. Available online: https://suitabletech.com/products/beam (accessed on 15 March 2021).
12. Double. Available online: https://www.doublerobotics.com (accessed on 15 March 2021).
13. Ubbo. Available online: https://www.axyn.fr/en/ubbo-expert/ (accessed on 15 March 2021).
14. Zhang, M.; Duan, P.; Zhang, Z.; Esche, S. Development of Telepresence Teaching Robots With Social Capabilities. In Proceedings of the ASME 2018 International Mechanical Engineering Congress and Exposition (IMECE), Pittsburgh, PA, USA, 9–15 November 2018; pp. 1–11.
15. Cha, E.; Chen, S.; Matarić, M.J. Designing Telepresence Robots for K-12 Education. In Proceedings of the 26th IEEE International Symposium on Robot and Human Interactive Communication (RO-MAN), Lisbon, Portugal, 28 August–1 September 2017; pp. 683–688.
16. Gallon, L.; Abenia, A.; Dubergey, F.; Négui, M. Using a Telepresence Robot in an Educational Context. In Proceedings of the 10th International Conference on Frontiers in Education: Computer Science and Computer Engineering (FECS), Las Vegas, NV, USA, 29 July–1 August 2019; pp. 16–22.
17. Lei, M.L.; Clemente, I.M.; Hu, Y. Student in the Shell: The Robotic Body and Student Engagement. Comput. Educ. 2019, 130, 59–80.
18. Kwon, C. Verification of the Possibility and Effectiveness of Experiential Learning Using HMD-based Immersive VR Technologies. Virtual Real. 2019, 23, 101–118.
19. Du, J.; Do, H.M.; Sheng, W. Human-Robot Collaborative Control in a Virtual-Reality-Based Telepresence System. Int. J. Soc. Robot. 2020, 1–12.
20. Matsumoto, K.; Langbehn, E.; Narumi, T.; Steinicke, F. Detection Thresholds for Vertical Gains in VR and Drone-based Telepresence Systems. In Proceedings of the IEEE Conference on Virtual Reality and 3D User Interfaces (VR), Atlanta, GA, USA, 22–26 March 2020; pp. 101–107.
21. Kim, D.H.; Go, Y.G.; Choi, S.M. An Aerial Mixed-Reality Environment for First-Person-View Drone Flying. Appl. Sci. 2020, 10, 5436.
22. Kamińska, D.; Sapiński, T.; Wiak, S.; Tikk, T.; Haamer, R.E.; Avots, E.; Helmi, A.; Ozcinar, C.; Anbarjafari, G. Virtual Reality and its Applications in Education: Survey. Information 2019, 10, 318.
23. Allcoat, D.; von Mühlenen, A. Learning in Virtual Reality: Effects on Performance, Emotion and Engagement. Res. Learn. Technol. 2018, 26, 2140.
24. Kang, S.; Kang, S. The Study on The Application of Virtual Reality in Adapted Physical Education. Clust. Comput. 2019, 22, 2351–2355.
25. Scaradozzi, D.; Screpanti, L.; Cesaretti, L. Towards a Definition of Educational Robotics: A Classification of Tools, Experiences and Assessments. In Smart Learning with Educational Robotics: Using Robots to Scaffold Learning Outcomes; Daniela, L., Ed.; Springer International Publishing: Cham, Switzerland, 2019; pp. 63–92.
26. Fernández-Llamas, C.; Conde, M.Á.; Rodríguez-Sedano, F.J.; Rodríguez-Lera, F.J.; Matellán-Olivera, V. Analysing the Computational Competences Acquired by K-12 Students when Lectured by Robotic and Human Teachers. Int. J. Soc. Robot. 2017, 12, 1009–1019.
27. Daniela, L.; Lytras, M.D. Educational Robotics for Inclusive Education. Technol. Knowl. Learn. 2019, 24, 219–225.
28. Li, T.; John, L.K. Run-time Modeling and Estimation of Operating System Power Consumption. In Proceedings of the ACM International Conference on Measurement and Modeling of Computer Systems (SIGMETRICS), San Diego, CA, USA, 9–14 June 2003; pp. 160–171.
29. Abukhalil, T.; Almahafzah, H.; Alksasbeh, M.; Alqaralleh, B.A. Power Optimization in Mobile Robots Using a Real-Time Heuristic. J. Robot. 2020, 2020, 5972398.
30. QTrobot. Available online: https://luxai.com/qtrobot-for-research/ (accessed on 15 March 2021).
31. Mei, Y.; Lu, Y.H.; Hu, Y.C.; Lee, C.G. A Case Study of Mobile Robot’s Energy Consumption and Conservation Techniques. In Proceedings of the 12th International Conference on Advanced Robotics (ICAR), Seattle, WA, USA, 18–20 July 2005; pp. 492–497.
32. Acar, H.; Alptekin, G.; Gelas, J.P.; Ghodous, P. The Impact of Source Code in Software on Power Consumption. Int. J. Electron. Bus. Manag. 2016, 14, 42–52.
33. Ngo, A. Intel NUC Energy Management. 2018. Available online: https://www.notebookcheck.net/Intel-NUC-Kit-NUC8i7BEH-i7-8559U-Mini-PC-Review.360356.0.html#toc-energy-management (accessed on 15 March 2021).
34. Intel RealSense Datasheet. 2020. Available online: https://www.intelrealsense.com/wp-content/uploads/2020/06/Intel-RealSense-D400-Series-Datasheet-June-2020.pdf (accessed on 15 March 2021).
35. Quigley, M.; Conley, K.; Gerkey, B.; Faust, J.; Foote, T.; Leibs, J.; Wheeler, R.; Ng, A.Y. ROS: An Open-source Robot Operating System. In Proceedings of the ICRA Workshop on Open Source Software, Kobe, Japan, 12–17 May 2009; Volume 3, p. 5.
36. 3DiVi Inc. Nuitrack SDK. 2021. Available online: https://nuitrack.com (accessed on 12 March 2021).
37. LuxAI. QTrobot Interface. 2020. Available online: https://wiki.ros.org/Robots/qtrobot (accessed on 12 March 2021).
38. Labbe, M. find_object_2d. 2016. Available online: https://wiki.ros.org/find_object_2d (accessed on 12 March 2021).
39. Codd-Downey, R.; Forooshani, P.M.; Speers, A.; Wang, H.; Jenkin, M.R.M. From ROS to Unity: Leveraging Robot and Virtual Environment Middleware for Immersive Teleoperation. In Proceedings of the 2014 IEEE International Conference on Information and Automation (ICIA), Hailar, China, 28–30 July 2014; pp. 932–936.
40. Roldán, J.J.; Peña-Tapia, E.; Garzón-Ramos, D.; de León, J.; Garzón, M.; del Cerro, J.; Barrientos, A. Multi-robot Systems, Virtual Reality and ROS: Developing a New Generation of Operator Interfaces. In Robot Operating System (ROS): The Complete Reference (Volume 3); Koubaa, A., Ed.; Springer International Publishing: Cham, Switzerland, 2019; pp. 29–64.
41. O’Dea, S. Android: Global Smartphone OS Market Share 2011–2018, by Quarter. 2020. Available online: https://www.statista.com/statistics/236027/global-smartphone-os-market-share-of-android/ (accessed on 15 March 2021).
42. Kumar, A. ROS Profiler, GitHub Repository. 2020. Available online: https://github.com/arjunskumar/rosprofiler/blob/master/src/rosprofiler/profiler.py (accessed on 15 March 2021).
43. Cabibihan, J.J.; So, W.C.; Pramanik, S. Human-recognizable Robotic Gestures. IEEE Trans. Auton. Ment. Dev. 2012, 4, 305–314.
44. Cabibihan, J.J.; So, W.C.; Saj, S.; Zhang, Z. Telerobotic Pointing Gestures Shape Human Spatial Cognition. Int. J. Soc. Robot. 2012, 4, 263–272.
45. Buildcomputers. Power Consumption of PC Components in Watts. Available online: https://www.buildcomputers.net/power-consumption-of-pc-components.html (accessed on 15 March 2021).
46. Mace, J. Rosbridge Suite. 2017. Available online: http://wiki.ros.org/rosbridge_suite (accessed on 15 March 2021).
47. Eurostat, Statistics Explained. Electricity Price Statistics. Available online: https://ec.europa.eu/eurostat/statistics-explained/index.php/Electricity_price_statistics (accessed on 15 March 2021).
48. Energuide. How Much Power Does a Computer Use? And How Much CO2 Does That Represent? Available online: https://www.energuide.be/en/questions-answers/how-much-power-does-a-computer-use-and-how-much-co2-does-that-represent/54/ (accessed on 15 March 2021).
49. Labaree, D.F. Public Goods, Private Goods: The American Struggle over Educational Goals. Am. Educ. Res. J. 1997, 34, 39–81.

| Power [W] | Baseline, Idle | Baseline, Idle/NUI | Baseline, Demo | Baseline, Demo/NUI | App-Based IRT, Calibration | App-Based IRT, Natural | VR-Based IRT, Calibration | VR-Based IRT, Natural |
|---|---|---|---|---|---|---|---|---|
| Valid | 3 | 8 | 1 | 2 | 5 | 5 | 10 | 5 |
| Missing | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| Mean | 59.684 | 105.774 | 69.641 | 110.627 | 111.811 | 110.224 | 113.051 | 121.638 |
| Std. Deviation | 1.469 | 1.753 | NaN | 0.063 | 1.285 | 0.768 | 1.359 | 0.885 |
| Minimum | 58.660 | 102.174 | 69.641 | 110.582 | 110.051 | 109.260 | 110.705 | 120.701 |
| Maximum | 61.368 | 107.349 | 69.641 | 110.671 | 113.662 | 111.212 | 115.155 | 122.493 |

| Mean CPU Load [%] | Baseline, Idle | Baseline, Idle/NUI | Baseline, Demo | Baseline, Demo/NUI | App-Based IRT, Calibration | App-Based IRT, Natural | VR-Based IRT, Calibration | VR-Based IRT, Natural |
|---|---|---|---|---|---|---|---|---|
| Valid | 30 | 60 | 36 | 56 | 156 | 149 | 264 | 122 |
| Missing | 234 | 204 | 228 | 208 | 108 | 115 | 0 | 142 |
| Mean | 10.982 | 223.574 | 13.885 | 217.771 | 240.862 | 235.832 | 255.595 | 249.095 |
| Std. Deviation | 0.762 | 2.692 | 3.026 | 24.369 | 13.034 | 8.510 | 16.223 | 9.529 |
| Minimum | 9.530 | 218.030 | 8.541 | 138.965 | 216.535 | 220.540 | 185.175 | 231.063 |
| Maximum | 12.590 | 230.505 | 23.085 | 248.000 | 281.030 | 259.950 | 287.545 | 276.020 |

| Mean CPU Load [%] | /find_object_2d | /qt_emotion_app | /qt_nuitrack_app |
|---|---|---|---|
| Mean | 158.601 | 0.142 | 55.990 |
| Std. Deviation | 27.326 | 0.264 | 7.221 |
| Minimum | 79.995 | 0.000 | 46.990 |
| Maximum | 178.980 | 1.000 | 72.540 |

| Memory [MB] | Baseline, Idle | Baseline, Idle/NUI | Baseline, Demo | Baseline, Demo/NUI | App-Based IRT, Calibration | App-Based IRT, Natural | VR-Based IRT, Calibration | VR-Based IRT, Natural |
|---|---|---|---|---|---|---|---|---|
| Valid | 60 | 31 | 56 | 36 | 156 | 150 | 264 | 122 |
| Missing | 204 | 233 | 208 | 228 | 108 | 114 | 0 | 142 |
| Mean | 208.1 | 820.0 | 268.2 | 873.6 | 803.2 | 884.8 | 1658.2 | 1464.4 |
| Std. Deviation | 36.3 | 4.5 | 6.0 | 10.2 | 21.0 | 28.5 | 234.9 | 34.6 |
| Minimum | 12.0 | 796.2 | 233.3 | 821.6 | 764.5 | 828.4 | 1171.3 | 1414.7 |
| Maximum | 214.7 | 822.6 | 269.3 | 877.0 | 827.0 | 945.9 | 2462.6 | 1492.2 |

| | Mean CPU Load [%]: Calibration, App | Mean CPU Load [%]: Calibration, VR | Mean CPU Load [%]: Natural, App | Mean CPU Load [%]: Natural, VR | Memory [MB]: Calibration, App | Memory [MB]: Calibration, VR | Memory [MB]: Natural, App | Memory [MB]: Natural, VR |
|---|---|---|---|---|---|---|---|---|
| Valid | 156 | 164 | 150 | 121 | 156 | 278 | 150 | 121 |
| Missing | 0 | 114 | 0 | 0 | 0 | 0 | 0 | 0 |
| Mean | 5.398 | 10.735 | 4.939 | 13.361 | 9.728 × 10 | 4.017 × 10 | 1.608 × 10 | 8.442 × 10 |
| Mode | 6.005 | 11.000 | 4.995 | 13.505 | 1.011 × 10 | 8.041 × 10 | 1.538 × 10 | 8.371 × 10 |
| Std. Deviation | 0.587 | 0.938 | 0.583 | 1.027 | 6.400 × 10 | 4.603 × 10 | 1.527 × 10 | 512,456.718 |
| Minimum | 3.990 | 7.455 | 2.995 | 10.000 | 8.214 × 10 | 6.188 × 10 | 1.538 × 10 | 8.343 × 10 |
| Maximum | 7.015 | 12.030 | 6.015 | 15.010 | 1.013 × 10 | 1.226 × 10 | 2.162 × 10 | 8.507 × 10 |

| Power [W] | Baseline, Idle/NUI, RosBag | Baseline, Idle/NUI, No_RosBag | Baseline, Idle, RosBag | Baseline, Idle, No_RosBag |
|---|---|---|---|---|
| Valid | 6 | 4 | 3 | 1 |
| Missing | 0 | 0 | 0 | 0 |
| Mean | 106.736 | 106.758 | 63.345 | 58.660 |
| Std. Deviation | 3.430 | 0.355 | 5.577 | NaN |
| Minimum | 102.174 | 106.372 | 59.026 | 58.660 |
| Maximum | 110.671 | 107.205 | 69.641 | 58.660 |

| EUR/Year | Baseline, Idle | Baseline, Idle/NUI | Baseline, Demo | Baseline, Demo/NUI | Telepresence, VR-Based | Telepresence, App-Based |
|---|---|---|---|---|---|---|
| Valid | 3 | 8 | 1 | 2 | 15 | 10 |
| Mean | 27.749 | 49.178 | 32.379 | 51.434 | 53.892 | 51.616 |
| Minimum | 27.273 | 47.505 | 32.379 | 51.414 | 51.471 | 50.799 |
| Maximum | 28.532 | 49.911 | 32.379 | 51.455 | 56.951 | 52.846 |
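The relation between the measured mean power and the yearly cost can be checked with a few lines of Python. The sketch below takes the baseline mean power and mean EUR/year values from the tables and computes the cost per watt implied by each column, which comes out at roughly 0.465 EUR per watt and year in all four cases. The `annual_cost_eur` helper, the usage hours, and the tariff in the final example are hypothetical and are not the parameters used in the study.

```python
def annual_cost_eur(mean_power_w: float, hours_per_year: float, price_eur_per_kwh: float) -> float:
    """Annual electricity cost for a constant mean power draw."""
    energy_kwh = mean_power_w / 1000.0 * hours_per_year
    return energy_kwh * price_eur_per_kwh


# (mean power in W, mean cost in EUR/year) pairs copied from the baseline columns
BASELINE = {
    "Idle": (59.684, 27.749),
    "Idle/NUI": (105.774, 49.178),
    "Demo": (69.641, 32.379),
    "Demo/NUI": (110.627, 51.434),
}

# Cost per watt implied by each column of the tables
implied_eur_per_watt = {
    mode: cost / power for mode, (power, cost) in BASELINE.items()
}

for mode, factor in implied_eur_per_watt.items():
    print(f"{mode}: {factor:.4f} EUR per W and year")

# Hypothetical usage pattern and tariff, NOT taken from the article
example_cost = annual_cost_eur(mean_power_w=100.0, hours_per_year=2000.0, price_eur_per_kwh=0.20)
```

Because the implied factor is constant across columns, the cost figures scale linearly with mean power, so the product of yearly operating hours and electricity price is the only parameter separating the two tables.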

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Botev, J.; Rodríguez Lera, F.J. Immersive Robotic Telepresence for Remote Educational Scenarios. Sustainability 2021, 13, 4717. https://doi.org/10.3390/su13094717