1. Introduction

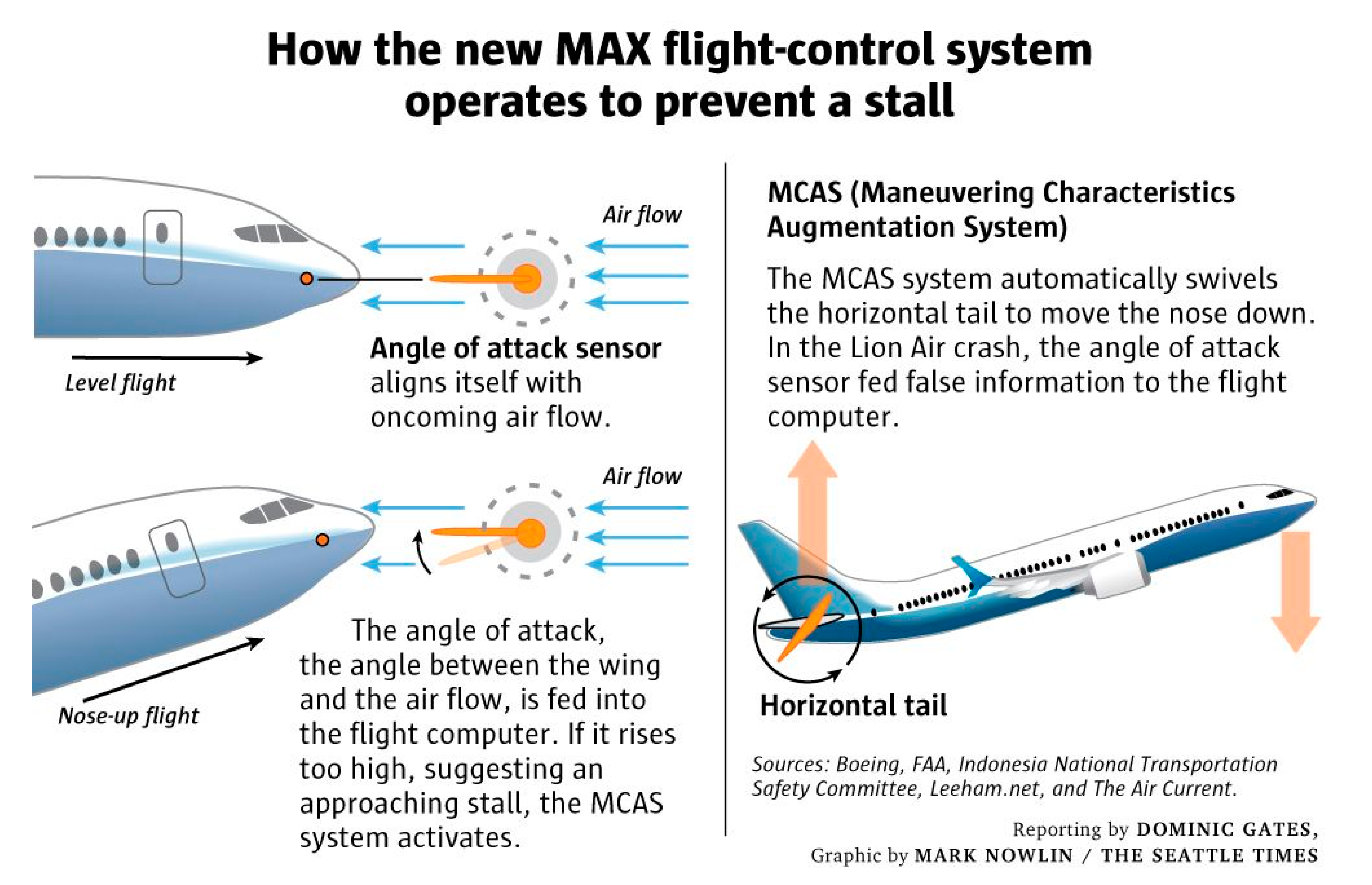

In October 2018, 189 people perished when Lion Air Flight 610 crashed a few minutes after taking off from Jakarta. Boeing implied that the crash was due to human error and embarked on a process meant to update the online training software administered to pilots. A few months later, in March 2019, Ethiopian Airlines Flight 302, a second airplane of the same type, the 737 MAX, crashed with 157 people on board. Investigations into both accidents are continuing, but the flight data recovered from the black boxes immediately indicated several similarities between the two accidents. One major issue seems to be that the system designed to prevent the plane from stalling appears to have malfunctioned, pushing the airplanes' noses down towards the ground instead. That system, known as MCAS (Maneuvering Characteristics Augmentation System; Figure 1), was a recent addition to the MAX. However, most pilots were neither aware of its existence nor trained in how to override it.

Back in 2011, Boeing planned to design a brand-new airplane to replace its aging 737 fleet. Instead, vying with its leading European rival, Airbus, for control of the 21st-century market to replace aging aircraft fleets [1], it decided to upgrade the existing 737. In order to expedite the FAA's authorization of the new airplane, Boeing maintained that the MAX model was simply a derivative of the traditional 737 aircraft. However, that was not the case. The aeronautical engineers made a major modification to the airplane's airframe that shifted its center of gravity. Had the FAA known that the manufacturer was making such a fundamental redesign, it would have required the company to conduct extensive test flights of a prototype to examine its systems as a condition for approving mass production of the aircraft [2].

The MAX was designed to be a single-aisle, 200-passenger jet that is more sustainable and fuel efficient than previous 737 models [3]. To accomplish these goals, the manufacturer equipped the MAX with larger engines and shifted their location forward, which disrupted the plane's aerodynamics and made it susceptible to stalling under certain flight scenarios. To address this problem, the manufacturer developed the MCAS system, which pushed the plane's nose down to stabilize the aircraft [4]. When needed, the system engaged automatically. Pilots could not deactivate it unless they had been properly trained to do so in a simulator, and even then, the system would reactivate by itself after a few seconds. Following the second accident, the FAA grounded the MAX model indefinitely, while engineers struggled to find a solution to the aircraft's engineering drawbacks [5].

The goal of this study is to investigate the antecedents of these airplane accidents involving the new 737 MAX through an organizational behavior lens. The chain of events that led to each accident is portrayed with an emphasis on the lack of organizational learning [6], which could have saved at least the passengers on the second flight had the MAX fleet been grounded immediately after the first crash. Instead, the manufacturer responded to the first crash by blaming flight crew error and appeased the FAA by issuing an Operations Manual Bulletin (OMB) reminding pilots how to respond if they encountered a similar emergency. When asked before both houses of Congress after the second crash whether he had any concerns about the system, Boeing's CEO, Dennis Muilenburg, euphemistically replied: “I think about that decision over and over again. If we knew everything back then that we know now, we would have made a different decision.” [7]

In its mission statement, Boeing emphasizes that one of its core values is its commitment to safety.

“We value human life and well-being above all else and take action accordingly. We are personally accountable for our own safety and collectively responsible for the safety of our teammates and workplaces, our products and services, and the customers who depend on them. When it comes to safety, there are no competing priorities.”

Schein [8] postulates that culture and leadership are intrinsically connected constructs. The Malcolm Baldrige National Quality Award supports this notion by positioning leadership as the first building block in its framework, which drives all elements of improvement [9]. Psychological safety is required in order to maintain the cultural traits mentioned above. Team psychological safety is defined as a shared belief that the organization constitutes a safe work environment for interpersonal risk taking, which fosters learning behavior, innovation, and growth [10]. Employees should be neither reprimanded for raising concerns in meetings about potential process defects that could delay a project, nor rebuked for discovering malfunctions that demand costly repairs or the redesign of an entire system. Instead, employees whose timely feedback prevents errors, preserving the company's brand and world-class safety reputation, should be rewarded with promotion. Psychological safety originates from mutual trust and respect between the lower and upper echelons of the organizational hierarchy. It stems from a confidence that peers will not embarrass, reject, or punish a fellow member for articulating thoughts implicitly or expressing opinions explicitly about team performance [11]. The analysis of this case study finds a lack of leadership commitment to safety culture throughout the echelons of the supply chain [12].

The rest of the study is organized as follows. In the next section, we discuss the theory of normal accidents [13], which sheds light on these accidents from an organizational behavior vantage point. We then use the case study method to glean data about the two accidents. We curated the amassed data using Cooper's [14] six safety culture dimensions to examine the antecedents of the accidents [15]. The results emphasize the importance of developing agile prototypes to test the interaction between innovative computer systems and human behavior, the importance of compulsory simulator training for those who will be using these systems, and the danger of improvising under time pressure and budget constraints to extend the life cycle of outdated engineering designs [16].

Our findings have industrial implications. Given that the manufacturer directly employs more than 160,000 people and is a major US exporter, the grounding of its airplanes creates a bullwhip effect on a large network of aviation parts suppliers. As Michael Feroli, J.P. Morgan's chief U.S. economist, noted to the bank's clients: “America’s GDP could fall by 0.6 percentage points if production of the airplane was halted temporarily. In 2019, sales of the 737 were projected to total about $35 billion, with about 90% accounted for by the MAX model, or about one-quarter of total domestic aircraft production.” [17].

2. Theoretical Background

2.1. The Normal Accidents Theory

The normal accidents theory pertains to the social side of technological risk [13]. It postulates that the conventional engineering approach to ensuring safety, building in more warnings and safeguards, fails because the complexity of systems makes failures inevitable. Perrow [13] asserts that systems that are both interactively complex and tightly coupled are prone to catastrophic failure. In such systems, errors in subsystems are bound to occur. Furthermore, these errors will spread and interact in ways that are difficult to foresee, understand, or mitigate. In certain cases, precautionary measures meant to enhance safety end up adding complexity, which defeats their purpose and can itself lead to accidents. In the case of airplanes, there are two main systems whose safety is of critical priority: engines and flight control. Errors in systems happen frequently; they are expected as part of normal operations, and the system is supposed to continue functioning properly despite their occurrence. It is the interdependency between systems that creates the hazard. As will be discussed, Boeing created such an interdependency between the propulsion and maneuvering systems by shifting the engines' location forward and installing a complex MCAS system to balance the airplane's flight control [4]. According to Perrow's theory [13], this tightly coupled link is prone to catastrophic failure, because its parts will interact in dissonant ways that are difficult to foresee, understand, or prevent. Indeed, the MCAS system exemplifies Perrow's theory [13], since it pushed the airplane's nose down in response to a false sensor reading instead of ensuring safety by preventing a stall [4]. Pilots had insufficient response time, and the complexity of MCAS kept them from intervening to reduce the risk by deactivating the system. High reliability organizations (HROs) are designed to cope with unexpected situations [18]. According to Weick [19], organizational culture is a source of high reliability. Weick illustrates HRO effectiveness in the cases of air traffic control, nuclear power generation, and naval carrier operations.

2.2. A Safety Culture

Culture is a three-dimensional creed that encompasses ingrained values, artifacts, and underlying assumptions [8]. Hofstede [20] defines it as the collective programming of the mind. A safety culture is a broad, organization-wide approach to safety management [21]. It is the end result of both individual and group efforts to promote the values, attitudes, and goals of an organization's health and safety program. Organizations with a safety culture show a commitment to employees' well-being that is reflected across all echelons of the organization. Leaders gather knowledge from all departments to enhance safety. One method for doing so is to appoint a safety champion at each location. This person is responsible for identifying workplace hazards and for leading employee training. The champion should also collect near misses that compromised safety and use them as learning experiences, a common technique in aviation for avoiding large-scale disasters.

In order to build a strong safety culture [22], several components are needed from the outset. A safety vision should be explicitly defined and publicly announced. Safety responsibilities for each echelon within the organization must be enumerated, including duty procedures and the chain of command. Next, everyone must be visibly held accountable for safety. Afterwards, safety should become a top priority built into the meticulous design of each service or product, not an optional add-on sold separately from the standard product. Agile prototypes should be tested to ensure safety [23]. Redundancy should be built in to cope with malfunctions. Finally, employees should be educated about the importance of reporting defects that could evolve into accidents.

3. Literature Review: Cooper’s Safety Culture Framework

Cooper's [24] seminal framework is widely regarded in the literature as a leading articulation of safety culture. The framework underscores that safety culture is the product of multiple goal-directed interactions between people (psychological), jobs (behavioral), and the organization (situational) (Cooper and Finley, 2013). Cooper's [25] recent review of the literature on safety culture documents a clear link between such a culture and actual safety performance. The data indicate that, to prevent incidents, companies seeking to change their organizational culture should focus at least 80% of their efforts on situational factors, such as safety management systems, and on behavioral factors. Cooper [14] enumerated six major dimensions of safety culture: (1) management/supervision, (2) safety systems, (3) risk, (4) work pressure, (5) competence, and (6) procedures and rules.

Management and supervision pertain to the commitment of leadership. Management is held accountable and responsible for safety [26]. It is the supervisor's obligation to monitor proxies for safety performance and to design mitigation procedures that are carried out through the managerial chain of command.

Safety systems refer to keeping an open channel of communication. Learning lessons from past incidents or near misses is an integral tool. Equipment needs to be designed so that each component conforms to standards.

Risk includes appraisal, evaluation, and control phases. For instance, a risk assessment matrix records, for each risk, the triggering event, the probability of occurrence, the likelihood of detection, the impact on the system, the mitigation strategy, and the contingency plan.
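
To make such a matrix concrete, the sketch below stores one row per triggering event and ranks rows by a risk priority number computed as probability × detection × impact. The 1 to 10 scales, the hypothetical `RiskEntry` class, and the multiplicative score are assumptions borrowed from failure mode and effects analysis (FMEA), not part of Cooper's framework, and the example scores are invented for illustration rather than taken from the case data.

```python
from dataclasses import dataclass


@dataclass
class RiskEntry:
    """One row of a hypothetical risk assessment matrix."""
    triggering_event: str
    probability: int  # likelihood of occurrence: 1 (rare) to 10 (almost certain)
    detection: int    # 1 (almost always detected in time) to 10 (undetectable before harm)
    impact: int       # impact on the system: 1 (negligible) to 10 (catastrophic)
    mitigation: str
    contingency: str

    def priority(self) -> int:
        """FMEA-style risk priority number; a higher value calls for earlier action."""
        return self.probability * self.detection * self.impact


def rank(entries: list[RiskEntry]) -> list[RiskEntry]:
    """Return the entries ordered from highest to lowest risk priority."""
    return sorted(entries, key=lambda e: e.priority(), reverse=True)


if __name__ == "__main__":
    # Illustrative scores only; they are not measurements from the case study.
    matrix = [
        RiskEntry("Cabin reading light fails", 5, 2, 1,
                  "Routine maintenance check", "Replace at next stop"),
        RiskEntry("Single sensor feeds a false reading to flight-control software", 3, 8, 10,
                  "Cross-check redundant sensors", "Crew procedure to disable the function"),
    ]
    for entry in rank(matrix):
        print(entry.priority(), entry.triggering_event)
```

Multiplying the scores lets a hazard that is rare but hard to detect and catastrophic outrank a frequent but trivial one, which is the intuition the matrix is meant to capture; weighted sums are a common alternative.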

Work pressure refers to the inclination to compromise safety under pressure for profitability, stemming from factors such as budget cuts, time constraints, and political interventions. Managers trying to satisfy the immediate demands of their jobs often overlook the fact that the costs of accidents tend to outweigh any advantage they might reap from prioritizing productivity over safety.

Competence refers to the training employees need to perform their work efficiently and effectively. A lack of competence can slow the response to a safety crisis; for example, employees might operate equipment incorrectly. As a safety precaution, employees should receive periodic mandatory training.

Procedures and rules pertain to the company's handbook, which constitutes its safety management edifice. Catastrophes and serious incidents often stem from the absence of a manual or from the workforce's lack of access to it. These deficiencies lead to non-compliance and circumvention of stringent institutional rules. Solutions to this problem include auditing.

4. Methodology

We could not conduct interviews for this case study, because the agencies involved are bound by confidentiality procedures while under investigation by law enforcement bodies. As an alternative approach, we collected data from reliable secondary sources, including documents posted on the official websites of government agencies such as the FAA and NASA and of other government bodies such as Senate committees and the White House.

In April 2019, the U.S. Secretary of Transportation commissioned the Special Committee to Review the FAA's certification process for the 737 MAX. The committee was given a mandate to institute compulsory amendments before the airplane could be deemed fit to fly again. The committee interviewed aviation and safety management consultants and subject matter experts from the FAA and discussed its findings with senior members of the Boeing Aviation Safety Oversight Office (BASOO). Information was gathered from a large panel of Boeing engineers, test pilots, and safety specialists involved in the certification of the MAX. The resulting report was a major source of insight into the inadequate design and certification process [2].

Our second source was the report of the Joint Authorities Technical Review [4]. The review was led by a former chair of the National Transportation Safety Board (NTSB) and comprised subject matter experts from the FAA, NASA, and international aviation authorities from nine jurisdictions (Australia, Brazil, China, Canada, the European Union, Indonesia, Japan, Singapore, and the United Arab Emirates). It conducted an exhaustive review of the automated flight control system on the 737 MAX, including how its design supports timely pilot interaction with the system, in order to assess its conformity with safety standards [4]. In addition, the FAA established a multi-agency Technical Advisory Board to review the manufacturer's proposed software update to the MCAS system on the grounded 737 MAX. The update needs to be rigorously examined (through microprocessor stress testing) to ensure that it does not create new compatibility problems with the limited 16-bit processing capacity of the aircraft's two outdated flight control computers. Importantly, to avoid bias, the board consisted of experts from the Volpe National Transportation Systems Center who were not involved in any aspect of the 737 MAX certification [27].

Additional information for our analysis came from the Indonesian and Ethiopian aviation authorities connected with the airlines involved in the crashes. For example, we used data collected after the crash by the Indonesian National Transportation Safety Committee (NTSC), as well as its interim report.

Another source of data was the Aviation Safety Reporting System (ASRS), the FAA's voluntary, confidential reporting system that allows pilots to report near misses or close calls without fear of losing their livelihood. NASA, which designed and operates ASRS, is regarded as a neutral third party because it has neither enforcement authority nor ties to airlines. Deming [28] emphasized driving fear out of the organization as an important cultural trait, a principle reiterated in the quality handbook [29]. A search of the ASRS database in 2020 revealed that the MAX anti-stall system was activated on at least two occasions [30].

Other sources included information from the French aviation agency tasked with decoding and interpreting the crashed airplanes' black boxes and cockpit voice recordings. We also collected information from numerous articles published in media outlets such as the Washington Post, New York Times, and Wall Street Journal about the pilots' reactions before and after the MAX's first accident. For example, Indonesian officials acknowledged that an off-duty pilot was present in the cockpit of the Lion Air plane the day before the crash. The crew encountered a similar problem that sent the plane into a nosedive, but the off-duty pilot seated in the cockpit jump seat correctly diagnosed the situation and helped the crew disable the flight-control system. The next day, a fresh crew operating the flight encountered the same danger, and the plane crashed into the Java Sea.

5. Findings Analyzed Based on Cooper’s Cultural Safety Framework

Cooper's [14] framework was employed to classify the data from the case study into six categories: (1) management/supervision, (2) safety systems, (3) risk, (4) work pressure, (5) competence, and (6) procedures and rules.

5.1. Work Pressure

The findings revealed that intense competition in the aviation industry between Boeing and its rival Airbus placed enormous pressure on the manufacturer to produce a new plane as quickly and inexpensively as possible. To meet these demands, the company decided to update an outmoded design without properly testing a prototype.

Since debuting the 737 aircraft in 1967, the manufacturer has delivered approximately 10,000 units on time, and the model became the backbone of leading airlines' fleets worldwide. However, the situation changed in 2010, when the company discovered that its main competitor, Airbus, had debuted the A320neo, an innovative fuel-efficient short-haul airplane. Boeing knew that one of its largest customers, American Airlines, was in negotiations with Airbus to replace its short-haul fleet with the A320neo. Losing that deal would have had a major negative effect on Boeing's sales. Therefore, the company was under pressure to create, inexpensively and within a short timeframe, a rival plane that was 15% more fuel efficient. When American Airlines asked Boeing to propose its own product, the latter quickly responded with the 737 MAX in a record time of nine months [31].

For the airlines, one of the attractions of the 737 MAX was that pilots were already familiar with the 737. Therefore, the manufacturer reasoned that pilots would be able to fly the MAX without costly and time-consuming simulator training. The manufacturer accumulated a backlog of over 5000 MAX orders, worth billions of dollars. A few months after entering commercial service in 2017, the MAX became a prime choice for airlines in the emerging 21st-century global airline industry, which needed to replace aging airplanes in order to cut maintenance costs. Before the two fatal accidents, the MAX fleet averaged 8600 flights per week [32].

The first antecedent of the MAX's design failure was the practice of subcontracting, in order to cut costs, integral work requiring high expertise to companies without a proven record in developing avionics systems. This trend gained momentum with the 787 Dreamliner, approximately 50% of whose parts came from over fifty third parties and strategic partners. The MAX software was developed while the manufacturer was laying off experienced aeronautical engineers. Increasingly, the company relied on an external part-time workforce to conduct software quality assurance. The responsiveness of temporary employees is low, because they lack a career path for promotion [33].

U.S. avionics companies subcontracted more than 30% of their software engineering tasks, compared with 10% for European-based firms. Bilateral sales agreements accelerated the trend, bringing the outsourced share to an estimated 35–50% of the MAX's supply chain components. Notably, this outsourcing extended to core tasks that the manufacturer had traditionally kept under its total control in order to validate the integration between delicate software and hardware electronic systems, transforming the prime manufacturer's role from innovation and new product development into that of an assembly plant. This arrangement had already proved hazardous in the development of the 787 Dreamliner, when the wing measurements did not fit the frame and a gap appeared between the flight deck and the fuselage, which extended the production schedule and delayed the delivery of orders [34].

5.2. Safety System

The second antecedent of the plane's problems was its susceptibility to stalling under certain flight conditions. Specifically, meeting the requirement for the 737 aircraft to be fuel efficient called for several technical changes. The manufacturer shifted the engines forward and extended the nose landing gear by eight inches. This necessitated the introduction of an anti-stalling mechanism known as MCAS (Maneuvering Characteristics Augmentation System), which relies on angle of attack (AOA) sensors feeding data to the plane's computer. By shifting the center of gravity through moving the engines forward to promote fuel economy, the manufacturer rendered the plane aerodynamically off-kilter. In theory, the newly designed MCAS was supposed to compensate for this problem, but such a system is not standard practice in commercial aircraft that adhere to FAA safety standards [4].

Adopting this approach risks the lives of hundreds of passengers on board. Specifically, the MCAS system goes beyond a mere navigation role to actually maintaining the flight angle, thereby introducing what engineers call the risk of pilot-induced oscillations, in which the pilot is unable to compensate for the aircraft's flight pattern. Furthermore, the angle of attack sensor is known in the aviation industry to be an unreliable device, which is why most airlines insist on having three of them installed in the nose of a commercial airplane to increase reliability. Surprisingly, the MAX had only two sensors and lacked a light on the pilot's panel indicating a mismatch between their readings, limiting the pilot's awareness of the flight's risk status. None of these facts were transparently communicated to the authorities during the certification process.
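
The reliability argument for a third sensor can be sketched in a few lines. With two sensors, a large disagreement reveals that one reading is wrong but not which one; with three, a mid-value (median) vote lets the two healthy sensors outvote a single faulty one. The function names, the 10-degree disagreement threshold, and the sample readings are illustrative assumptions, not Boeing's flight-control logic.

```python
from statistics import median
from typing import Optional


def two_sensor_estimate(left: float, right: float,
                        threshold_deg: float = 10.0) -> Optional[float]:
    """Average two angle-of-attack readings, or return None if they disagree.

    With only two sources the software can detect a fault but cannot
    decide which reading to trust, so the only safe output is 'unknown'.
    """
    if abs(left - right) > threshold_deg:
        return None
    return (left + right) / 2


def three_sensor_estimate(a: float, b: float, c: float) -> float:
    """Mid-value select: a single wild reading is outvoted by the other two."""
    return median([a, b, c])


if __name__ == "__main__":
    # Hypothetical readings in degrees: one vane reads about 20 degrees too high.
    print(two_sensor_estimate(25.0, 5.0))         # None: fault detected, culprit unknown
    print(three_sensor_estimate(25.0, 5.0, 5.2))  # 5.2: the faulty vane is ignored
```

The design choice illustrated here is fault masking: the median of three tolerates any one failed sensor, whereas two sensors can at best raise an alarm and hand the decision to the crew.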

A preliminary report from Indonesian investigators shows that Lion Air 610 crashed when a faulty sensor erroneously reported that the airplane was stalling. This reading triggered the MCAS, which pushed the aircraft's nose down so that it could gain enough speed to fly safely. This conclusion is corroborated by information recovered from the black box indicating that the plane was repeatedly pushed into a dive shortly after taking off. Investigators found similar angle of attack data in both accidents. Specifically, a part of the stabilizer recovered from the Ethiopian wreckage had its trim set in an unusual position, similar to that of the Lion Air plane.

5.3. Management and Supervision

The third antecedent of the plane disasters was that pilots' warnings were ignored. A search of the Aviation Safety Reporting System [30] reveals that the MAX anti-stall system was activated in at least two prior incidents, as the following reports describe:

“The aircraft accelerated normally and the Captain engaged the ‘A’ autopilot after reaching set speed,” reads one report dated November 2018. “Within two to three seconds, the aircraft pitched nose down bringing the vertical speed indicator to approximately 1200 to 1500 feet per minute. I called ‘descending’ just prior to the GPWS sounding ‘don’t sink, don’t sink’. The Captain immediately disconnected the autopilot and pitched into a climb. The remainder of the flight was uneventful. We discussed the departure at length and I reviewed in my mind our automation setup and flight profile but can’t think of any reason the aircraft would pitch nose down so aggressively.”

In a separate incident in the US, a pilot recalled how the plane started to nosedive momentarily after the autopilot was turned on: “As I was returning to my PFD (Primary Flight Display), PM (Pilot Monitoring) called ‘Descending’ followed by almost an immediate: ‘Don’t sink Don’t sink’”. The pilot turned off the autopilot and resumed the climb, but could not explain the cause of the sharp nosedive: “With the concerns with the MAX 8 nose down stuff, we both thought it appropriate to bring it to your attention.”

After the October Lion Air crash, the manufacturer issued guidance on how to deal with erroneous readings from the anti-stall sensor, but a pilot complained that it “did nothing” to address the system's issues. Pilots filed at least five complaints about the 737 MAX in the months leading up to the deadly Ethiopian Airlines crash. In an October report, a pilot complained that the MAX autothrottle system did not work properly. The problem was rectified after the pilot adjusted the thrust manually and continued to climb. “Shortly afterwards I heard about the (other carrier) accident and am wondering if any other crews have experienced similar incidents with the auto throttle system on the MAX?” the pilot wrote in the report. At least six pilots reported being caught off guard when the 737 MAX jet descended suddenly. One pilot called it “unconscionable that a manufacturer, the FAA, and the airlines would have pilots flying an airplane without adequately training, or even providing available resources and sufficient documentation to understand the highly complex systems that differentiate this aircraft from prior models.” Another pilot called the manual “inadequate and almost criminally insufficient. The fact that this airplane requires such jury rigging to fly is a red flag. Now we know the systems employed are error-prone—Even if the pilots aren’t sure what those systems are, what redundancies are in place and failure modes. I am left to wonder: what else don’t I know?” [35].

The captain's complaint was logged after the FAA released an emergency airworthiness directive [36] about the MAX in response to the crash of Lion Air Flight 610. The directive warned: “This condition, if not addressed, could cause the flight crew to have difficulty controlling the airplane, and lead to excessive nose-down attitude, significant altitude loss, and possible impact with terrain.” An FAA spokesperson acknowledged that the complaint was filed directly through NASA, which serves as a neutral third-party body.

5.4. Competence

The fourth antecedent of the accidents was the lack of training for pilots on the new modifications to the 737 MAX. The pilots of the Lion Air flight searched the manual to determine why the plane was nosediving. When the MAX jet was being designed, engineers concluded that pilots could fly the plane without new training, because it was an upgrade of the previous 737 model. The FAA officially decided that the 737's new features could be learned from an iPad orientation. This imprudent decision saved the manufacturer substantial expense and made the MAX cost competitive with the comparable Airbus airplane.

The manufacturer compromised safety by cutting the pilots' training to a minimum. In doing so, it ignored its stated values: “From the beginning, safety has been Boeing’s number-one priority, starting with the first Boeing Safety Council in 1917. Our enduring values of safety, quality, and integrity are integral to all we do as we design, build, and service the highest-quality, safest products.” [37].

According to the chairman of the safety committee of the Allied Pilots Association (APA), “We assumed they [major changes to the flight control system] were mostly cosmetic differences.” The manufacturer's working assumption was that the FAA would accelerate the certification process if the plane was introduced as a derivative rather than as a major change or an altogether new aircraft.

In a hearing lasting more than two hours, members of the Senate Commerce Committee reprimanded Boeing CEO Dennis Muilenburg for technical drawbacks withheld from manuals. Muilenburg expressed remorse about the problems. He conceded that until recently he had known nothing about email messages between a former Boeing pilot and the FAA in which the pilot disclosed information about misleading regulators into approving training materials and asked the FAA to delete references to a flight-control system involved in the accidents. In another recently released exchange from 2016, the former Boeing pilot told a colleague that, before the FAA certified the plane, the pilot had run into difficulties with the MCAS in a simulator.

5.5. Risk

The fifth antecedent of the crashes can be traced to risk, which the manufacturer did not bring to the airlines' attention. Both flights lacked safety features that the manufacturer offered for an additional price. Optional features usually encompass aesthetics or comfort, such as spacious seating, brighter lighting, or larger bathrooms. Safety features include communication and navigation systems that are necessary for controlling the plane. The manufacturer offered as extra-cost options two safety features that are of paramount importance given the level of risk posed by the MCAS system: an angle of attack indicator, which displays the angle of the aircraft relative to the oncoming airflow, and an angle of attack misalignment light, which turns on if there is a significant difference between the left and right sensor readings. These features are critical to increasing the pilot's situational awareness (perception of the environment). Under pressure to complete the design of the 737 MAX quickly, the manufacturer limited the role of its own pilots in the early stages of developing its flight-control system, departing from a tradition of seeking their thorough feedback. As a result, Boeing's test pilots involved in the MAX's design did not receive information about how quickly or steeply MCAS could push down a plane's nose. Nor were they told that MCAS relied on a single sensor rather than two to gauge the airplane's orientation.
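
The misalignment light described above amounts to a comparison between the left and right sensors. A minimal sketch, assuming one sample per second and a hypothetical rule that the light turns on only after the readings differ by more than 10 degrees for 10 consecutive samples; the persistence check keeps a momentary glitch from causing a false alarm. The thresholds and the function name `aoa_disagree_light` are assumptions, not a documented specification of the 737 MAX indicator.

```python
from typing import Sequence


def aoa_disagree_light(left: Sequence[float], right: Sequence[float],
                       threshold_deg: float = 10.0,
                       persist_samples: int = 10) -> bool:
    """Return True once the two angle-of-attack readings stay apart long enough.

    left, right: paired readings in degrees, one pair per sample period
    (assumed to be one second). Both the threshold and the persistence
    count are illustrative assumptions.
    """
    consecutive = 0
    for l_deg, r_deg in zip(left, right):
        if abs(l_deg - r_deg) > threshold_deg:
            consecutive += 1
            if consecutive >= persist_samples:
                return True
        else:
            consecutive = 0  # agreement resets the persistence counter
    return False


if __name__ == "__main__":
    stuck_vane = [25.0] * 12           # hypothetical left vane reading ~20 degrees high
    healthy_vane = [5.0] * 12
    glitch = [25.0] * 3 + [5.0] * 9    # brief spike that clears on its own
    print(aoa_disagree_light(stuck_vane, healthy_vane))  # True
    print(aoa_disagree_light(glitch, healthy_vane))      # False
```

Such a light only informs the crew; on its own it does not stop software that trusts a single sensor from acting on the bad value.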

As the head of Southwest's pilot union elaborated, pilots were “kept in the dark” [38] regarding this: “We do not like the fact that a new system was put on the aircraft and wasn’t disclosed to anyone or put in the manuals. Is there anything else on the MAX Boeing has not told the operators? If there is, we need to be informed.” The Allied Pilots Association, the pilots' union at American Airlines, voiced similar concerns.

Consequently, the Justice Department has opened a criminal investigation into how the manufacturer designed the MAX, and the Transportation Department’s inspector general is inquiring into how the FAA approved the aircraft. Among the issues under consideration are why MCAS was designed with a single point of failure and no contingency plan, and why the procedure for pilots to deactivate MCAS was not clearly documented. For example, the Lion Air flight crew had just 40 s to identify the problem and pull the plane out of its nosedive. It is also unclear why MCAS reactivated after the pilots had deactivated it, given that human commands are supposed to take precedence over the airplane’s computer systems.

Normally, the artificial intelligence (AI) installed in aviation autopilots and safety instruments receives signals from a multitude of sensors distributed around the aircraft, reporting vital parameters such as airspeed, engine settings, air temperature, and altitude. These algorithms combine this information to follow an optimal flight route, designed to navigate the aircraft to its destination at an appropriate speed and altitude based on international flight routes and traffic. However, the ability of such AI to cope with volatile situations remains uncertain.

Importantly, MCAS was supposed to act subtly when it took control of the aircraft. Engineers intended the horizontal stabilizers (the horizontal fins on the aircraft’s tail) to move in increments of no more than 0.6 degrees, but tests show that the stabilizers can move in much larger increments of up to 2.5 degrees.
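The gap between the intended 0.6-degree increment and the observed 2.5-degree increment compounds when the system activates repeatedly, as it did after the pilots’ interventions. The Python sketch below is a deliberately simplified model, assuming that each activation adds one full increment of nose-down stabilizer movement and ignoring any counter-trim by the pilots; the optional cumulative cap `total_limit_deg` is a hypothetical parameter, not a documented MCAS setting.

```python
from typing import Optional

INTENDED_INCREMENT_DEG = 0.6  # design intent cited in the text
OBSERVED_INCREMENT_DEG = 2.5  # increment reported in tests, per the text


def cumulative_nose_down(increment_deg: float, activations: int,
                         total_limit_deg: Optional[float] = None) -> float:
    """Total nose-down stabilizer movement after repeated activations.

    Simplified model: every activation adds a full increment and pilot
    counter-trim is ignored. total_limit_deg is a hypothetical cap on the
    cumulative authority of the system.
    """
    if activations < 0:
        raise ValueError("activations must be non-negative")
    total = increment_deg * activations
    if total_limit_deg is not None:
        total = min(total, total_limit_deg)
    return total


if __name__ == "__main__":
    for n in (1, 2, 4):
        intended = cumulative_nose_down(INTENDED_INCREMENT_DEG, n)
        observed = cumulative_nose_down(OBSERVED_INCREMENT_DEG, n)
        print(f"{n} activation(s): intended {intended:.1f} deg, observed {observed:.1f} deg")
```

Under this model, four activations at the observed increment move the stabilizer 10 degrees, more than four times the movement at the intended increment; a cumulative cap of the kind represented by `total_limit_deg` is one way a design could bound that growth.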

Black box data retrieved from the Lion Air crash site show that the pilots struggled to retain control and override the automated system, making 21 attempts before the aircraft was lost. “Automation is there to make us safer and more productive in the cockpit,” said the president of the Southwest Airlines Pilots Association, “but that never replaces the overriding commandment that the pilot has to fly the airplane and know what the aircraft is doing” [38].

5.6. Procedures and Rules

The sixth antecedent of the crashes was the accelerated certification that the FAA granted the 737 MAX without due rigor. In the ensuing battle over responsibility for the crashes, the manufacturer and the FAA pointed fingers at one another. For instance, Boeing CEO Dennis Muilenburg was summoned to a hearing of the House Committee on Transportation and Infrastructure, which examined the 737 MAX’s development and certification. In a tense hearing on Capitol Hill, senior Boeing executives publicly acknowledged to lawmakers that the company had made mistakes. The FAA stated that the manufacturer had withheld internal communications from it before the first accident, between the two accidents, and after the second accident. Representative Peter DeFazio, chair of the House Committee on Transportation and Infrastructure, called the instant messages “shocking, but disturbingly consistent with what we’ve seen so far in our ongoing investigation of the 737 MAX, especially with regard to production pressures and a lack of candor with regulators and customers.” He argued that the incident “is not about one employee; this is about a failure of a safety culture at Boeing in which undue pressure is placed on employees to meet deadlines and ensure profitability at the expense of safety.”

However, the Senate Commerce Committee also launched an investigation into the certification process, and its findings did not vindicate the FAA: it cited a litany of claims about improperly trained FAA inspectors working on the MAX. For years, the FAA has suffered budget cuts that led to the early retirement of qualified staff, and it has struggled to recruit employees with extensive knowledge of frontier fields such as artificial intelligence and business analytics. As a result, it delegated crucial responsibilities requiring subject-matter expertise to consultants affiliated with the companies it is charged with regulating, including Boeing. Surprisingly, former Boeing employees were partly responsible for certifying the MAX’s electronic components and new features as airworthy. Lawmakers are now scrutinizing this unwholesome relationship between the FAA and the companies it is supposed to inspect.

6. Discussion

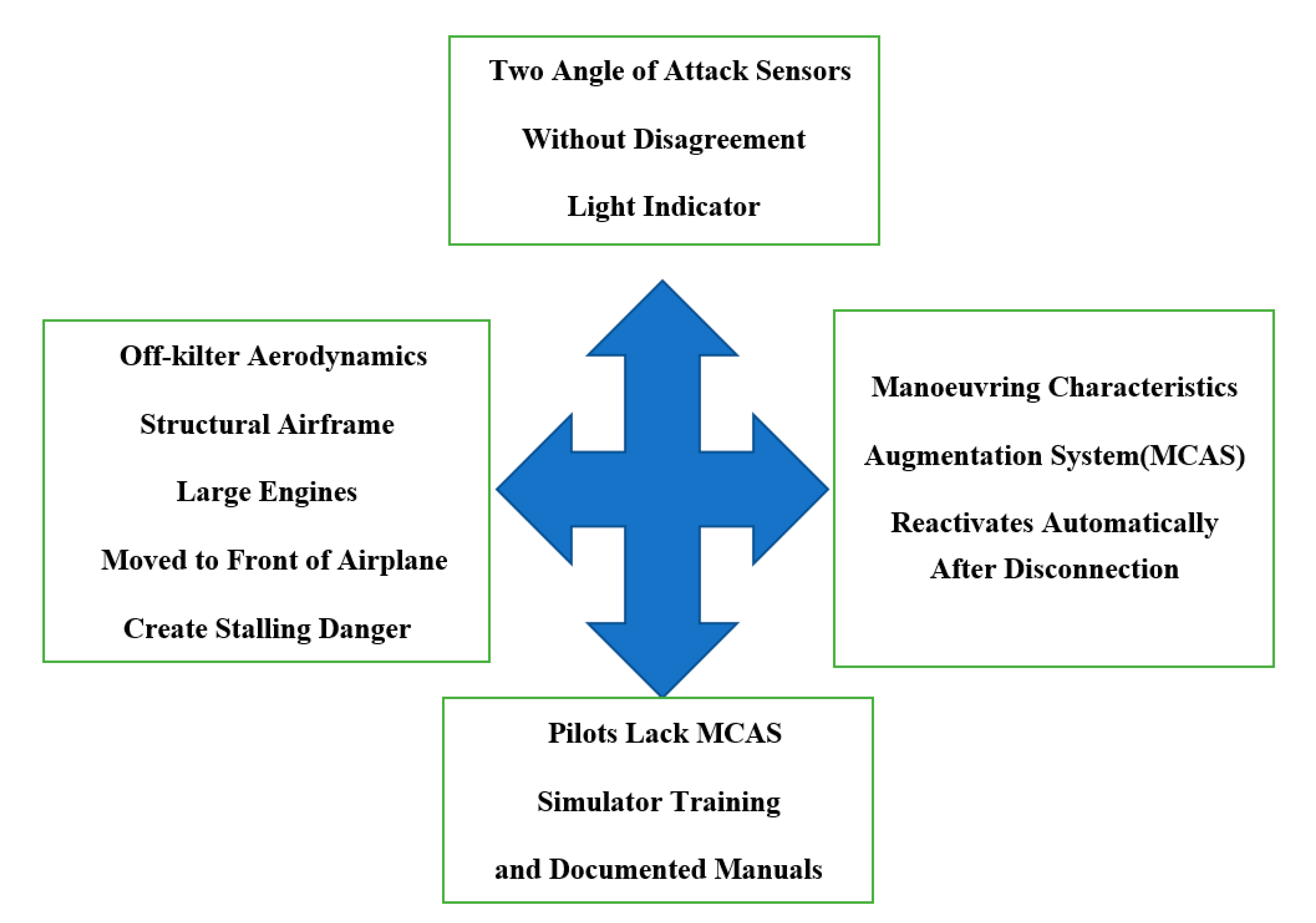

Figure 2 synthesizes the tightly coupled system of the MAX’s development through the lens of normal accident theory. In a similar vein, Table 1 delineates the antecedents of the accidents according to Cooper’s [14] six dimensions of a safety culture.

What are the outcomes of ignoring a safety culture? For Boeing, the economic effect has been substantial. The manufacturer has paused delivery of MAX airplanes to all customers, and the company has lost $28 billion in market value since the Ethiopian Airlines crash. Although it had a peak backlog of approximately 4000 MAX orders worldwide, several customers have since canceled: in March 2019, Indonesian airline Garuda canceled an order for 49 MAX; Brazilian GOL canceled 34; plane-leasing firm Avolon recently canceled an additional 75; General Electric’s aircraft-leasing division canceled 69; and in June 2020, Norwegian Air canceled 92. Altogether, these orders were worth over 20 billion dollars. As a precautionary measure, in December 2019, the manufacturer decided to halt production of new MAX airplanes at its major Seattle-area plant [39].

The FAA’s traditional role is to serve as a regulatory constraint [40] on the approval of new aircraft models in order to protect passengers’ wellbeing. The US Senate is set to convene a hearing on the FAA’s certification of 737 MAX jets to examine whether the FAA fulfilled its mandate. A report released by the independent special committee tasked with reviewing the certification process [2] revealed a need to restructure the FAA’s workforce and to change the way the agency evaluates new airplane designs. The committee issued a set of recommendations, emphasizing the need for the FAA to rejuvenate its workforce through a recruitment campaign meant to attract recent college graduates with rigorous training in data analytics and artificial intelligence. The field of materials science, including composite materials and new battery technology, deserves special attention from the FAA. The committee also identified gaps in the toolset that need to be addressed by the FAA’s Aircraft Certification Service (AIR). Its report expressed concern that external entities could exert unethical pressure on employees, preventing them from objectively assessing aircraft for renewal of certification.

Our study suggests that additional steps may be necessary to fill the gaps identified by the special committee and to improve the manufacturer’s safety and efficacy. First, the FAA should reduce its delegation of self-certification to manufacturers in order to prevent a repetition of the events involving the 737 MAX. Second, the Department of Transportation should keep its ties with industrial corporations strictly formal in order to safeguard the objectivity of its processes. We maintain that such a step is required to ensure staunch support for safety, because the interdependencies that entangled the FAA and Boeing may have precluded objective evaluations of the airworthiness of the latter’s products. As corroborating evidence for this argument, in 2012, an investigation by the Transportation Department’s Office of Inspector General found that FAA leadership had not consistently followed up on agency examiners’ requests to hold Boeing accountable. Similarly, a 2015 report by the inspector general’s office expressed concern about the certification process [41], stating: “During our review, industry representatives expressed concern that FAA’s focus was often on paperwork, not on safety-critical items.” The same year, a Boeing executive at a congressional hearing described the relationship as convoluted, because a large number of former Boeing employees were working at FAA headquarters in the nation’s capital.

Another lesson from this case study is the danger of over-reliance on artificial intelligence in the aviation industry. During flight, pilots must make many split-second decisions that cannot be delegated to machines. We maintain that the ultimate decisions on commercial flights carrying hundreds of passengers must be made by human beings, and that no machine should be allowed to repeatedly overrule them, as MCAS did. For instance, during the accident, the pilot could not deactivate the stick shaker, even though it gave an erroneous warning for six minutes and kept distracting the pilot. The stick shaker is an automatic stall-warning system that vibrates the control column forcefully in the pilot’s hands if the aircraft is pitched too high and slowing toward a stall. Before the MAX returns to service, the safety authority Transport Canada demands that the flight manual include specific instructions on how to pull circuit breakers to stop the stick shaker in an emergency.

Boeing’s actions to restore confidence in the safety of its products are the key to its future, and it has taken a number of steps in this direction. First, CEO Dennis Muilenburg stepped down in December 2019 and was replaced by Boeing’s chairman, David Calhoun, to restore customers’ confidence. Second, the company has responded to the issue of foreign object debris found in the wing fuel tanks of several parked airplanes [42]. Third, it has been working diligently on a risk mitigation plan that includes software updates to address technical problems discovered during ground testing, such as a trim-system warning light that stayed on unintentionally and glitches in the computer that handles runaway-stabilizer conditions, which could unintentionally deactivate the autopilot during landing. In a similar vein, the company has acted on FAA demands to rewire bundles in the aircraft’s tail that could overheat and short-circuit, leading to the pilot’s loss of directional control. Additionally, as a safeguard, MCAS cannot operate if the left and right angle of attack sensors disagree by more than 5.5 degrees during flight. Recently, the manufacturer has been working on a fix for a newly discovered issue: defects in engine coverings called fairing panels, which could lead to loss of power during flight because of vulnerability to lightning strikes. Finally, to provide an additional layer of redundancy, Boeing is reconfiguring how the 737’s two flight control computers operate. For decades, the two computers never cross-checked each other (the system alternates between them after each flight). The revised flight control architecture will compare signals from both computers to increase robustness during flight [43], switching to a fail-safe, two-channel redundancy mode [44].
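The two safeguards described above, the 5.5-degree inhibit and the cross-checking of the two flight control computers, can be sketched in Python as follows. This is a simplified illustration, not Boeing’s flight control software: only the 5.5-degree figure comes from the text, while the channel tolerance `CHANNEL_TOLERANCE_DEG`, the function names, the sample commands, and the choice to average agreeing commands are assumptions.

```python
from typing import Optional

AOA_INHIBIT_DEG = 5.5        # from the text: MCAS cannot operate above this sensor split
CHANNEL_TOLERANCE_DEG = 0.1  # hypothetical allowed mismatch between the two computers


def mcas_permitted(left_aoa_deg: float, right_aoa_deg: float) -> bool:
    """MCAS may act only if the left and right AOA sensors differ by at most 5.5 degrees."""
    return abs(left_aoa_deg - right_aoa_deg) <= AOA_INHIBIT_DEG


def cross_check(cmd_a_deg: float, cmd_b_deg: float,
                tolerance_deg: float = CHANNEL_TOLERANCE_DEG) -> Optional[float]:
    """Fail-safe two-channel comparison of the commands computed by both computers.

    Returns the averaged command when the channels agree, or None when they
    disagree, so the function disengages instead of trusting either channel.
    """
    if abs(cmd_a_deg - cmd_b_deg) <= tolerance_deg:
        return (cmd_a_deg + cmd_b_deg) / 2.0
    return None


def mcas_command(left_aoa_deg: float, right_aoa_deg: float,
                 cmd_a_deg: float, cmd_b_deg: float) -> float:
    """Stabilizer command in degrees; 0.0 means MCAS makes no input."""
    if not mcas_permitted(left_aoa_deg, right_aoa_deg):
        return 0.0
    agreed = cross_check(cmd_a_deg, cmd_b_deg)
    return 0.0 if agreed is None else agreed


if __name__ == "__main__":
    print(mcas_command(22.0, 4.0, 0.6, 0.6))    # sensors split by 18 degrees: inhibited
    print(mcas_command(15.0, 14.0, 0.6, 0.65))  # sensors and computers agree
    print(mcas_command(15.0, 14.0, 0.6, 1.2))   # computers disagree: fail-safe
```

Returning no command when the channels disagree reflects the fail-safe idea in the text: detecting a fault matters more than deciding which computer is right, since a two-channel system has no third opinion to break the tie.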

Future research can build on these case study results to investigate accidents involving autonomous cars as well. As major car manufacturers develop machine learning technologies for autonomous driving, reliance on artificial intelligence to navigate cars without a driver’s intervention has already led to accidents. For example, the National Transportation Safety Board (NTSB) found that Tesla’s semi-autonomous driving feature was partially responsible for a fatal 2018 car crash. The Autopilot system steered the Model X sport utility vehicle to the left into the neutral gore area without alerting the driver, because the processing software of the Autopilot vision system could not accurately keep the vehicle in the appropriate lane of travel. The car was subsequently involved in collisions with two other vehicles [45].

7. Conclusions

The failure to treat safety as the highest priority has taken a major toll on the manufacturer’s esteemed international reputation. It has also damaged international trust in the FAA’s certification authority, which could undermine the bilateral airworthiness agreements on which the regulator relies. For example, the European Union Aviation Safety Agency (EASA) demands an additional retrofit in the form of a third angle of attack sensor, expressing concern about the danger when the readings of two sensors disagree [46]. Boeing began working on an alternative to a third physical sensor: a so-called synthetic sensor, a system that provides an additional, indirect angle of attack (AOA) calculation based on a variety of data. For example, the 787 Dreamliner has a similar system called synthetic airspeed, which combines data from various inputs and compares them with sensor readings to assess the airplane’s altitude and velocity.
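The idea of a synthetic sensor, and EASA’s concern about what happens when two sensors disagree, can be illustrated with a short Python sketch. It estimates angle of attack indirectly from the standard kinematic relation for wings-level flight in still air, α ≈ θ − γ, where θ is the pitch angle and γ = arcsin(vertical speed / true airspeed) is the flight path angle, and then takes the median of the two physical sensors and the synthetic value. This is an assumption-laden illustration, not Boeing’s synthetic sensor: wind, bank, and sideslip are ignored, and the function names and sample values are hypothetical.

```python
import math
from statistics import median


def synthetic_aoa_deg(pitch_deg: float, vertical_speed_mps: float,
                      true_airspeed_mps: float) -> float:
    """Indirect AOA estimate: pitch angle minus flight path angle.

    Assumes wings-level flight in still air (no wind, bank, or sideslip).
    """
    if true_airspeed_mps <= 0:
        raise ValueError("true airspeed must be positive")
    # Clamp to [-1, 1] so noisy inputs cannot push asin out of its domain.
    ratio = max(-1.0, min(1.0, vertical_speed_mps / true_airspeed_mps))
    flight_path_deg = math.degrees(math.asin(ratio))
    return pitch_deg - flight_path_deg


def voted_aoa_deg(left_deg: float, right_deg: float, synthetic_deg: float) -> float:
    """Median of three sources: one wild reading cannot drive the result."""
    return median([left_deg, right_deg, synthetic_deg])


if __name__ == "__main__":
    synthetic = synthetic_aoa_deg(pitch_deg=5.0, vertical_speed_mps=0.0,
                                  true_airspeed_mps=120.0)
    # Hypothetical faulty left sensor reading far above the other two sources.
    print(voted_aoa_deg(left_deg=25.0, right_deg=4.8, synthetic_deg=synthetic))
```

In level flight the synthetic estimate equals the pitch angle, so the faulty 25-degree reading is outvoted and the script prints 5.0. With only two physical sensors, the same disagreement could be detected but not resolved, which is the gap a third source, physical or synthetic, is meant to fill.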

Meanwhile, the prolonged grounding of the 737 MAX incurs heavy costs, since the model accounted for 40% of the company’s profits. The anticipated cost of training pilots in simulators will further increase expenses. Airlines such as Southwest Airlines and American Airlines have canceled thousands of flights scheduled for the MAX over the last two years and are demanding compensation, while their newly acquired airplanes sit idle, parked at airports and in hangars around the United States.

Interestingly, the outbreak of the coronavirus and its development into a worldwide pandemic may turn the tide for Boeing. The decrease in air-travel volume caused by quarantine restrictions has given the manufacturer time to fix the MAX malfunctions under FAA guidance. The manufacturer aims to complete the recertification of the MAX at the beginning of 2021, almost two years after the accidents that led to the grounding of the fleet. This would benefit the national economy, since Boeing maintains its status as the largest U.S. exporter: it assembles most of its airplanes in the United States, employs over 160,000 people, and is indirectly responsible for 2.5 million jobs through its network of 17,000 suppliers. Ending on a positive note, the executive director of the European Union Aviation Safety Agency (EASA) declared that the agency expects to issue a draft airworthiness directive by the end of 2020, and after half a year without new orders, Polish charter airline Enter Air ordered two airplanes from Boeing [46].