Promoting Students’ Well-Being by Developing Their Readiness for the Artificial Intelligence Age

Abstract

1. Introduction

2. Literature Review

2.1. Student Readiness

2.2. Constructs for Measuring Student Readiness

2.2.1. AI Literacy

2.2.2. Confidence

2.2.3. Relevance

2.2.4. Anxiety

- Q1: Does the instrument designed to measure students’ perception of readiness to learn AI provide an interpretable factor structure?

- Q2: What are the structural relationships among students’ perception of readiness to learn AI, confidence, anxiety, AI literacy, and the relevance of AI learning?

- Q3: Do gender differences exist between male and female students’ perceptions of readiness for AI learning?

- Q4: What are the students’ sentiments toward AI as reflected by open-ended questions?

3. Method

3.1. Background

3.2. Participants

3.3. Instruments

3.4. Data Collection and Analysis

4. Results

4.1. Factor Analysis of the Survey

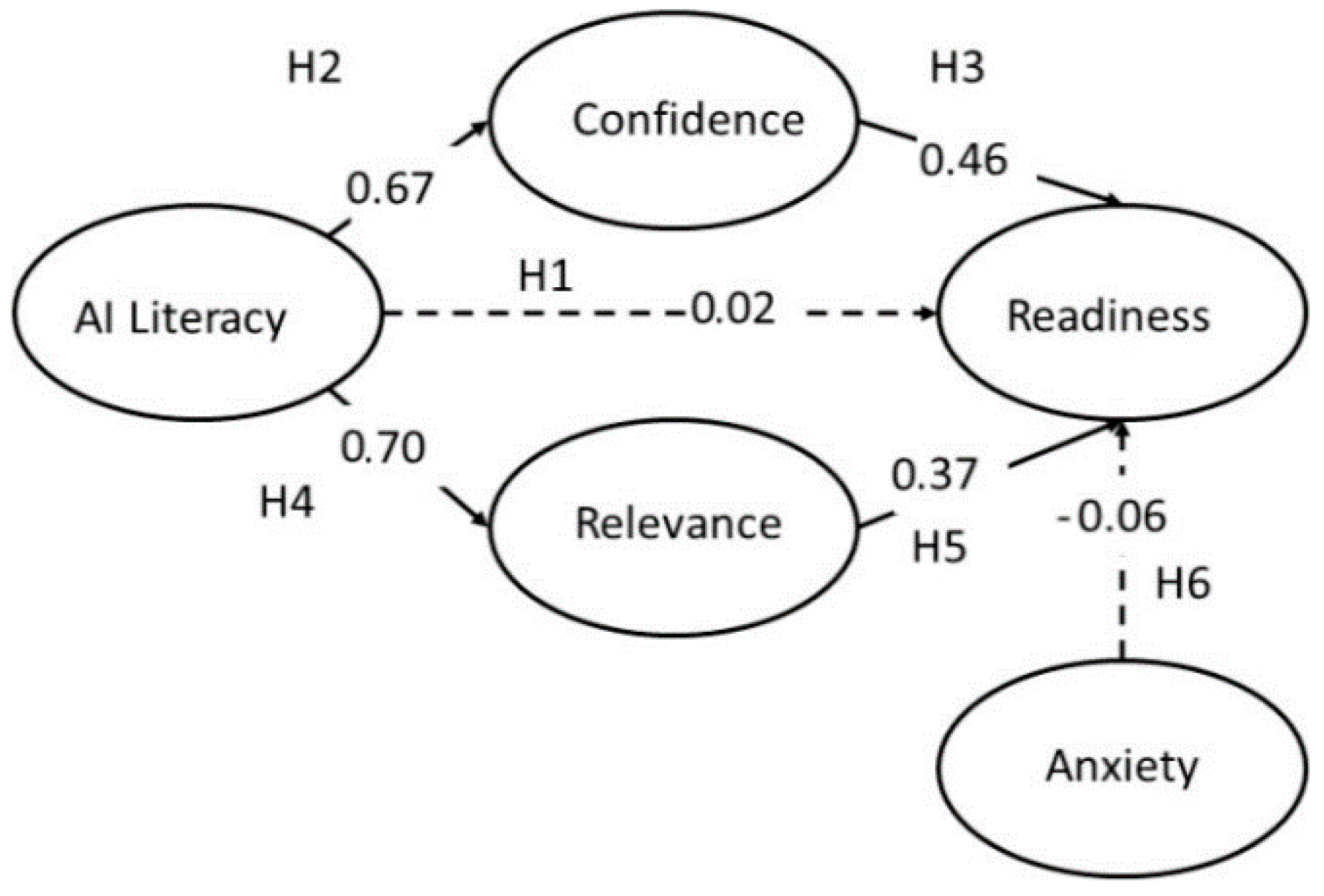

4.2. SEM of the Students’ AI Learning

4.3. Gender Differences

Latent Mean Analysis

4.4. Analyses of Students’ Open-Ended Responses

5. Discussion and Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest


| Items | Factor Loading |
|---|---|
| AI Readiness, M = 3.55, SD = 0.64, α = 0.92 | |
| RE2 | 0.87 |
| RE1 | 0.87 |
| RE3 | 0.77 |
| RE4 | 0.70 |
| RE6 | 0.61 |
| AI Anxiety, M = 2.27, SD = 1.12, α = 0.94 | |
| A1 | 0.94 |
| A3 | 0.91 |
| A2 | 0.90 |
| A5 | 0.84 |
| AI Literacy, M = 3.54, SD = 0.64, α = 0.90 | |
| AL1 | 0.81 |
| AL2 | 0.73 |
| AL3 | 0.72 |
| AL5 | 0.71 |
| Relevance of AI, M = 3.49, SD = 0.66, α = 0.91 | |
| R5 | 0.82 |
| R4 | 0.79 |
| R2 | 0.75 |
| R1 | 0.67 |
| R3 | 0.66 |
| Confidence in AI, M = 3.48, SD = 0.64, α = 0.89 | |
| C1 | 0.86 |
| C3 | 0.74 |
| C2 | 0.68 |
| C4 | 0.66 |

| | 1 | 2 | 3 | 4 | 5 |
|---|---|---|---|---|---|
| 1. Readiness | (0.77) | | | | |
| 2. Anxiety | −0.06 | (0.9) | | | |
| 3. AI literacy | 0.59 *** | −0.01 | (0.74) | | |
| 4. Relevance | 0.70 *** | −0.06 | 0.67 *** | (0.74) | |
| 5. Confidence | 0.69 *** | −0.07 | 0.62 *** | 0.68 *** | (0.73) |
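The parenthesized diagonal of the correlation table is consistent with the square root of the average variance extracted (AVE) for each construct, which is the usual way to report a Fornell-Larcker discriminant validity check. That reading is an assumption here, since the table itself does not label the diagonal. The sketch below recomputes the square root of AVE from the published factor loadings and compares it with the inter-construct correlations. The function and variable names are illustrative, and recomputed values can differ from the printed diagonal in the second decimal because the loadings are themselves rounded.

```python
from math import sqrt

# Standardized loadings copied from the factor-loading table.
loadings = {
    "Readiness": [0.87, 0.87, 0.77, 0.70, 0.61],
    "Anxiety": [0.94, 0.91, 0.90, 0.84],
    "AI literacy": [0.81, 0.73, 0.72, 0.71],
    "Relevance": [0.82, 0.79, 0.75, 0.67, 0.66],
    "Confidence": [0.86, 0.74, 0.68, 0.66],
}

# Off-diagonal correlations copied from the correlation table.
correlations = {
    ("Readiness", "Anxiety"): -0.06,
    ("Readiness", "AI literacy"): 0.59,
    ("Readiness", "Relevance"): 0.70,
    ("Readiness", "Confidence"): 0.69,
    ("Anxiety", "AI literacy"): -0.01,
    ("Anxiety", "Relevance"): -0.06,
    ("Anxiety", "Confidence"): -0.07,
    ("AI literacy", "Relevance"): 0.67,
    ("AI literacy", "Confidence"): 0.62,
    ("Relevance", "Confidence"): 0.68,
}


def average_variance_extracted(item_loadings):
    """AVE = mean of the squared standardized loadings of a construct."""
    return sum(l * l for l in item_loadings) / len(item_loadings)


# Square root of AVE per construct (assumed meaning of the table diagonal).
sqrt_ave = {name: sqrt(average_variance_extracted(ls))
            for name, ls in loadings.items()}


def passes_fornell_larcker(sqrt_ave, correlations):
    """True if every |correlation| is below both constructs' sqrt(AVE)."""
    return all(abs(r) < min(sqrt_ave[a], sqrt_ave[b])
               for (a, b), r in correlations.items())


if __name__ == "__main__":
    for name, value in sqrt_ave.items():
        print(f"{name}: sqrt(AVE) = {value:.2f}")
    print("Discriminant validity holds:",
          passes_fornell_larcker(sqrt_ave, correlations))
```

Under this reading, discriminant validity is supported when each construct's square root of AVE exceeds its correlations with every other construct.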

| Hypotheses | Path | Estimate | Standardized Weight | Critical Ratio | Hypotheses Supported? |
|---|---|---|---|---|---|
| H1 | AI Literacy → Readiness | 0.02 | −0.03 | −0.29 | No |
| H2 | AI Literacy → Confidence | 0.67 | 0.70 | 11.92 *** | Yes |
| H3 | Confidence → Readiness | 0.35 | 0.46 | 6.63 *** | Yes |
| H4 | AI Literacy → Relevance | 0.70 | 0.83 | 14.12 *** | Yes |
| H5 | Relevance → Readiness | 0.37 | 0.42 | 4.34 *** | Yes |
| H6 | Anxiety → Readiness | −0.02 | −0.06 | −1.45 | No |

| | χ2 | df | χ2/df | p-Value | CFI | ΔCFI | RMSEA |
|---|---|---|---|---|---|---|---|
| Configural invariance | 876.605 | 398 | 2.20 | | 0.931 | | 0.051 |
| Metric invariance | 908.730 | 415 | 2.19 | 0.015 | 0.929 | 0.002 | 0.051 |
| Scalar invariance | 954.292 | 437 | 2.18 | 0.000 | 0.926 | 0.003 | 0.051 |
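The derived columns of the invariance table can be checked with a few lines of arithmetic. The sketch below recomputes χ2/df and ΔCFI for each nested model from the reported χ2, df, and CFI values. The 0.01 cutoff for ΔCFI is a commonly used convention for judging invariance and is an assumption here, not a threshold stated in the table; the function name and dictionary keys are illustrative.

```python
# (model name, chi-square, degrees of freedom, CFI) copied from the table.
models = [
    ("Configural invariance", 876.605, 398, 0.931),
    ("Metric invariance", 908.730, 415, 0.929),
    ("Scalar invariance", 954.292, 437, 0.926),
]


def summarize_invariance(models, delta_cfi_cutoff=0.01):
    """Recompute chi2/df and the CFI drop relative to the previous model.

    delta_cfi_cutoff is an assumed conventional threshold: a drop in CFI
    no larger than the cutoff is read as support for invariance.
    """
    rows = []
    previous_cfi = None
    for name, chi2, df, cfi in models:
        delta_cfi = None if previous_cfi is None else round(previous_cfi - cfi, 3)
        rows.append({
            "model": name,
            "chi2_per_df": round(chi2 / df, 2),
            "delta_cfi": delta_cfi,
            "invariance_supported": delta_cfi is None or delta_cfi <= delta_cfi_cutoff,
        })
        previous_cfi = cfi
    return rows


if __name__ == "__main__":
    for row in summarize_invariance(models):
        print(row)
```

The baseline (configural) model has no ΔCFI because there is no less constrained model to compare it with.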

| | Differences of Latent Mean | C.R. |
|---|---|---|
| Relevance | −0.15 | −2.73 ** |
| Anxiety | −0.04 | −0.39 |
| AI literacy | −0.06 | −1.12 |
| Confidence | −0.22 | −3.51 *** |
| Readiness | −0.21 | −3.99 *** |
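The significance markers attached to the critical ratios can be reproduced if each C.R. is treated as a z statistic with a two-tailed test. The sketch below makes two assumptions that the table does not state: the normal approximation for C.R., and the conventional legend of * for p < 0.05, ** for p < 0.01, and *** for p < 0.001. The function names are illustrative.

```python
from math import erfc, sqrt

# Critical ratios copied from the latent mean table.
critical_ratios = {
    "Relevance": -2.73,
    "Anxiety": -0.39,
    "AI literacy": -1.12,
    "Confidence": -3.51,
    "Readiness": -3.99,
}


def two_tailed_p(z):
    """Two-tailed p-value of a standard normal statistic."""
    return erfc(abs(z) / sqrt(2))


def significance_stars(p):
    """Assumed conventional legend: * < 0.05, ** < 0.01, *** < 0.001."""
    if p < 0.001:
        return "***"
    if p < 0.01:
        return "**"
    if p < 0.05:
        return "*"
    return ""


if __name__ == "__main__":
    for construct, cr in critical_ratios.items():
        p = two_tailed_p(cr)
        print(f"{construct}: C.R. = {cr}, p = {p:.4f} {significance_stars(p)}")
```

With these assumptions, the computed markers match the ones printed in the table for all five constructs.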

| Categories | Codes | Meaning of Codes | Example of Students’ Written Responses | Frequency | Percentage |
|---|---|---|---|---|---|
| Not applicable | NIL | No comments | | 145 | 26.4% |
| Positive | Advanced | AI is advanced technology | “Artificial intelligence is powerful” | 25 | 4.6% |
| | Better | AI is getting better | “I think AI will be better in the future.” | 9 | 1.6% |
| | Intend | Intend to learn more | “AI is very interesting and I will continue to learn AI.” | 20 | 3.6% |
| | Social benefits | Use AI to promote social benefits | “Serve others better with artificial intelligence” | 9 | 1.6% |
| | Convenience | AI is convenient | “Good for us is convenience and simplicity” | 53 | 9.7% |
| | Useful | AI is useful | “AI can help us to solve difficulties encountered in life” | 112 | 20.4% |
| | Good | AI is good/great | “AI is really great” | 60 | 10.9% |
| | Interesting | AI is interesting | “Artificial intelligence is fun” | 34 | 6.2% |
| | Like | I like AI | “I love artificial intelligence” | 31 | 5.6% |
| Neutral | Mixed | Mixed responses (like and fear) | “Artificial intelligence can help humans, but in the future, artificial intelligence may dominate humans.” | 20 | 3.6% |
| | Change | AI is changing the world | “Artificial intelligence will change the world” | 5 | 0.9% |
| Negative | Difficult | Difficulty in understanding | “I think artificial intelligence is good, but hard to learn” | 6 | 1.1% |
| | Fear | Fear of AI | “Artificial intelligence will cause many people to lose their jobs and even human extinction which will be very bad” | 20 | 3.6% |
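The percentage column follows directly from the code frequencies, and the same counts can be rolled up to the category level. The sketch below is a minimal check that takes the frequencies from the table, assumes the denominator is the sum of all coded responses, and derives per-code and per-category percentages. The category totals are computed here for illustration and are not reported in the table.

```python
# (category, code, frequency) copied from the coding table.
codes = [
    ("Not applicable", "NIL", 145),
    ("Positive", "Advanced", 25),
    ("Positive", "Better", 9),
    ("Positive", "Intend", 20),
    ("Positive", "Social benefits", 9),
    ("Positive", "Convenience", 53),
    ("Positive", "Useful", 112),
    ("Positive", "Good", 60),
    ("Positive", "Interesting", 34),
    ("Positive", "Like", 31),
    ("Neutral", "Mixed", 20),
    ("Neutral", "Change", 5),
    ("Negative", "Difficult", 6),
    ("Negative", "Fear", 20),
]

# Assumed denominator: the sum of all coded responses in the table.
total = sum(freq for _, _, freq in codes)


def percentage(count, total):
    """Share of the total, in percent, rounded to one decimal place."""
    return round(100 * count / total, 1)


# Per-code percentages, keyed by code name.
code_pct = {code: percentage(freq, total) for _, code, freq in codes}

# Category-level totals (derived here; not part of the original table).
category_freq = {}
for category, _, freq in codes:
    category_freq[category] = category_freq.get(category, 0) + freq
category_pct = {c: percentage(f, total) for c, f in category_freq.items()}

if __name__ == "__main__":
    print("Total responses:", total)
    for category, pct in category_pct.items():
        print(f"{category}: {category_freq[category]} ({pct}%)")
```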

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Dai, Y.; Chai, C.-S.; Lin, P.-Y.; Jong, M.S.-Y.; Guo, Y.; Qin, J. Promoting Students’ Well-Being by Developing Their Readiness for the Artificial Intelligence Age. Sustainability 2020, 12, 6597. https://doi.org/10.3390/su12166597
