Intelligent Vehicle Moving Trajectory Prediction Based on Residual Attention Network

Abstract

1. Introduction

- (1) Existing methods follow probabilistic prediction models. Because the probability distributions of the predicted trajectory are dispersed, prediction accuracy is difficult to guarantee.

- (2) Other studies use time-series methods to account for the effect of vehicle interactions on the prediction results. However, they rarely address the fact that interfering vehicles in different positions and driving situations influence the self-vehicle to different degrees, which raises the question of how each vehicle should be weighted.

2. Related Work

2.1. Prediction Algorithm

2.2. Impact of Interaction on Prediction

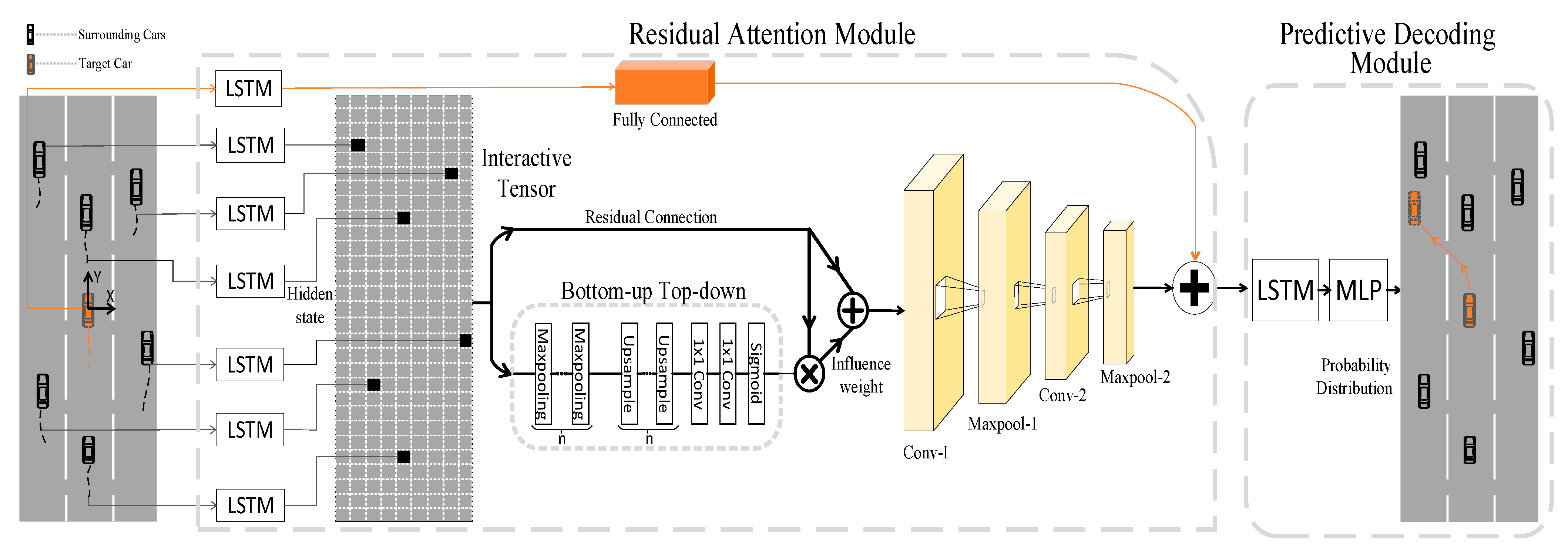

3. Methodology

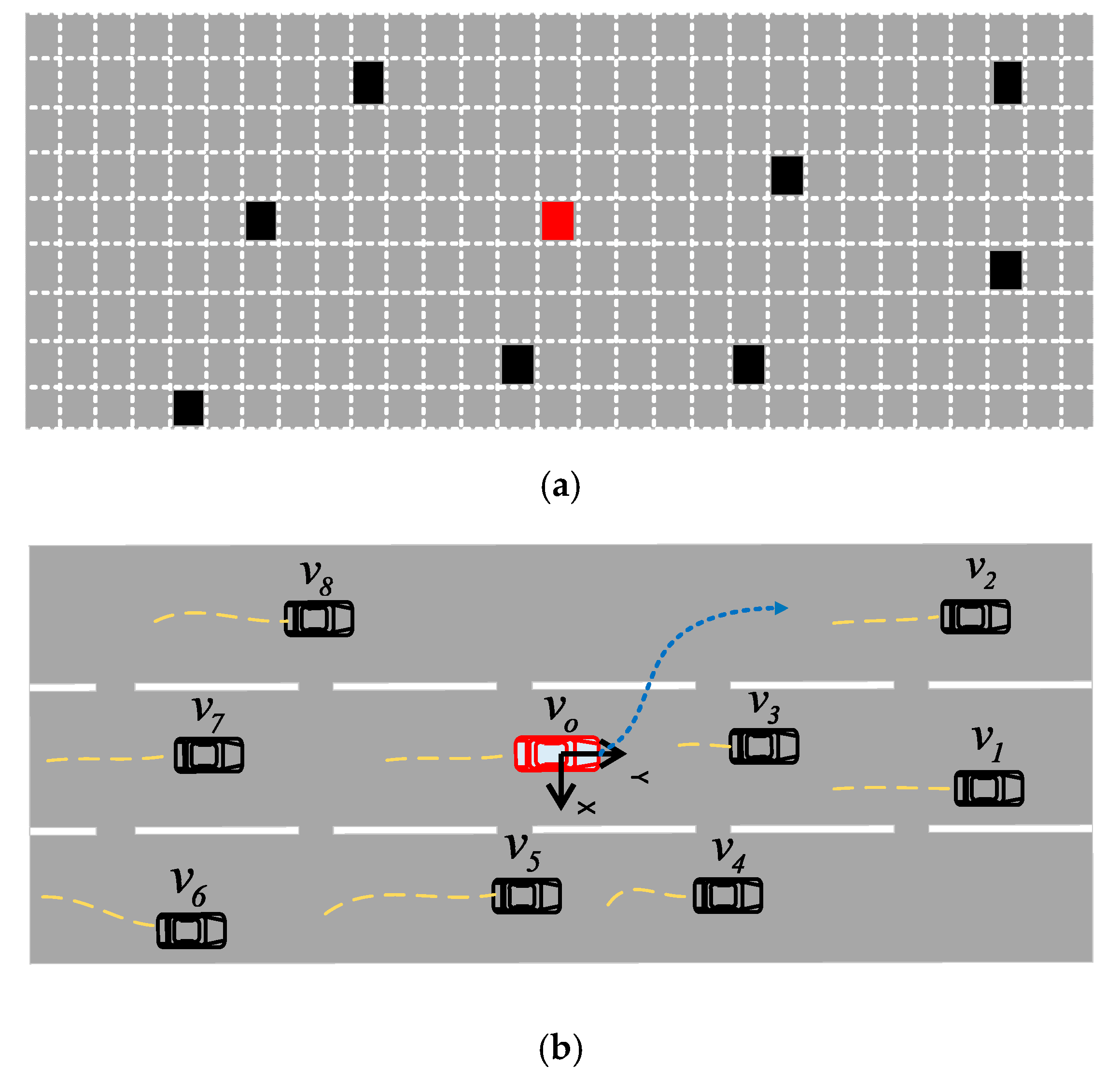

3.1. Research Scenario

3.2. Model Input and Output

3.3. Historical Trajectory Coding

3.4. Interaction Tensor Filling

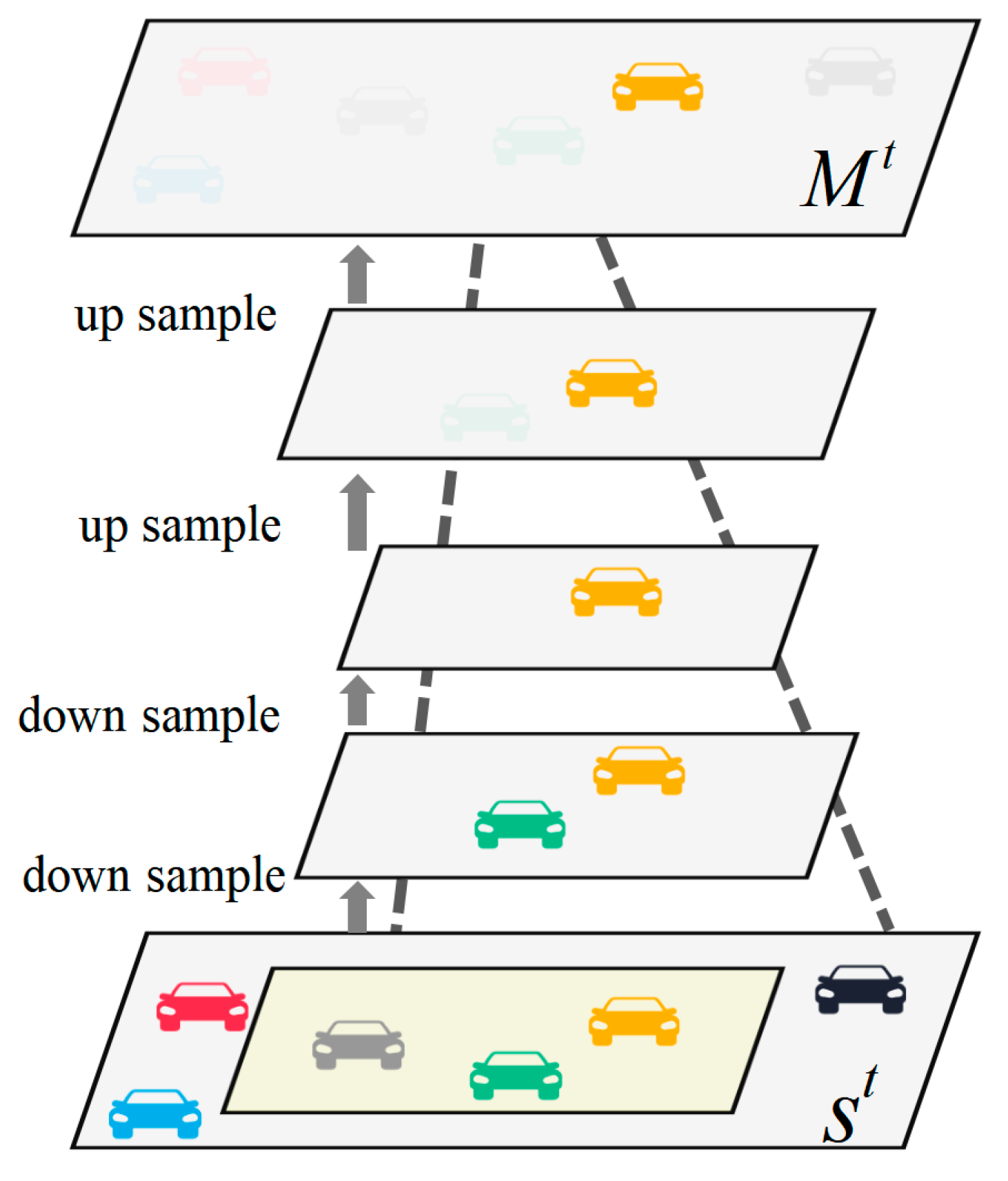

3.5. Vehicle Interaction Feature Extraction

3.6. Weight Coefficient of Multilayer Perceptron

3.7. Predictive Decoding Module

4. Experiment

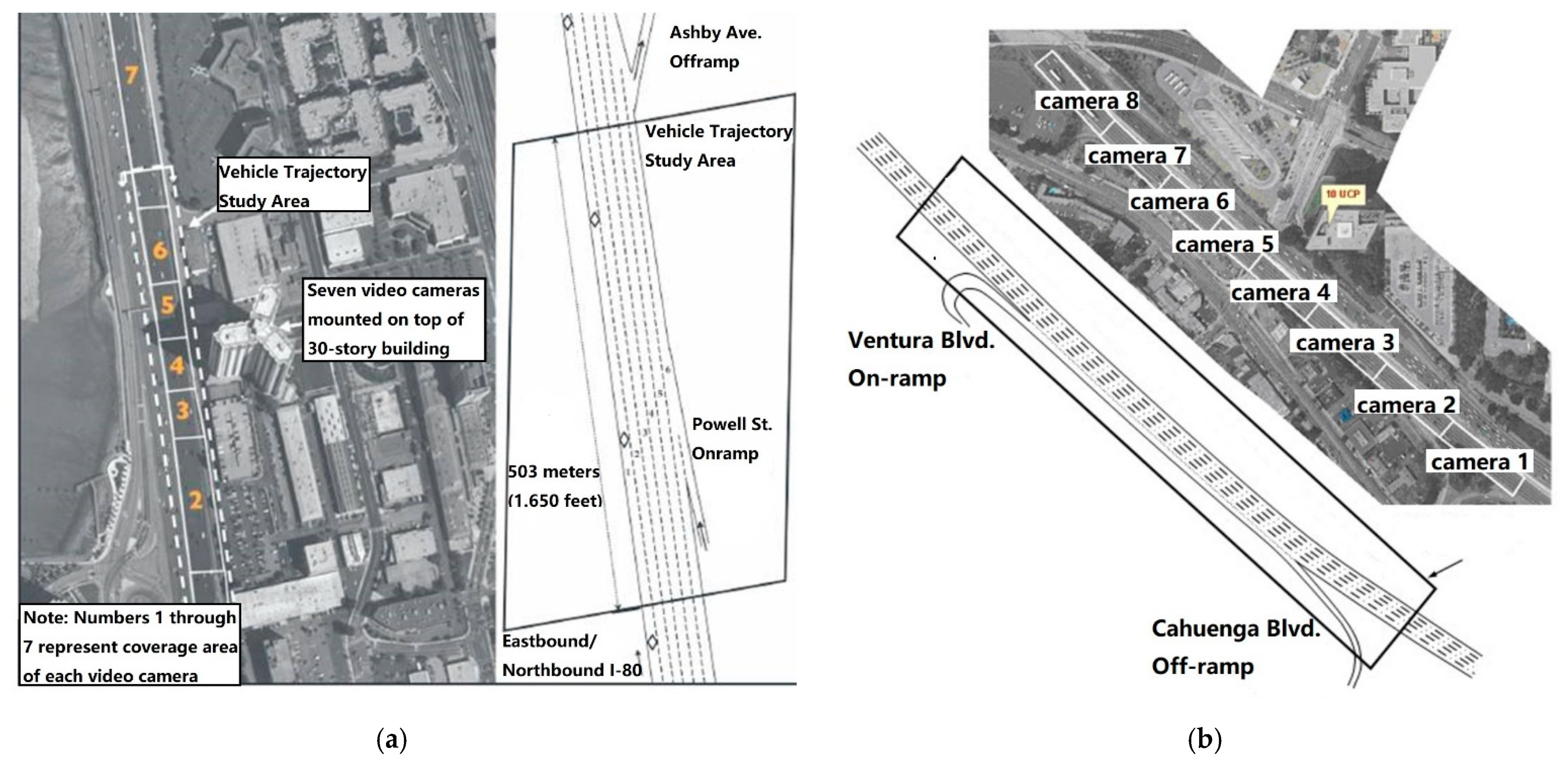

4.1. Data Pre-Processing

4.2. Model Training Details

4.3. Comparison and Analysis of Experimental Results

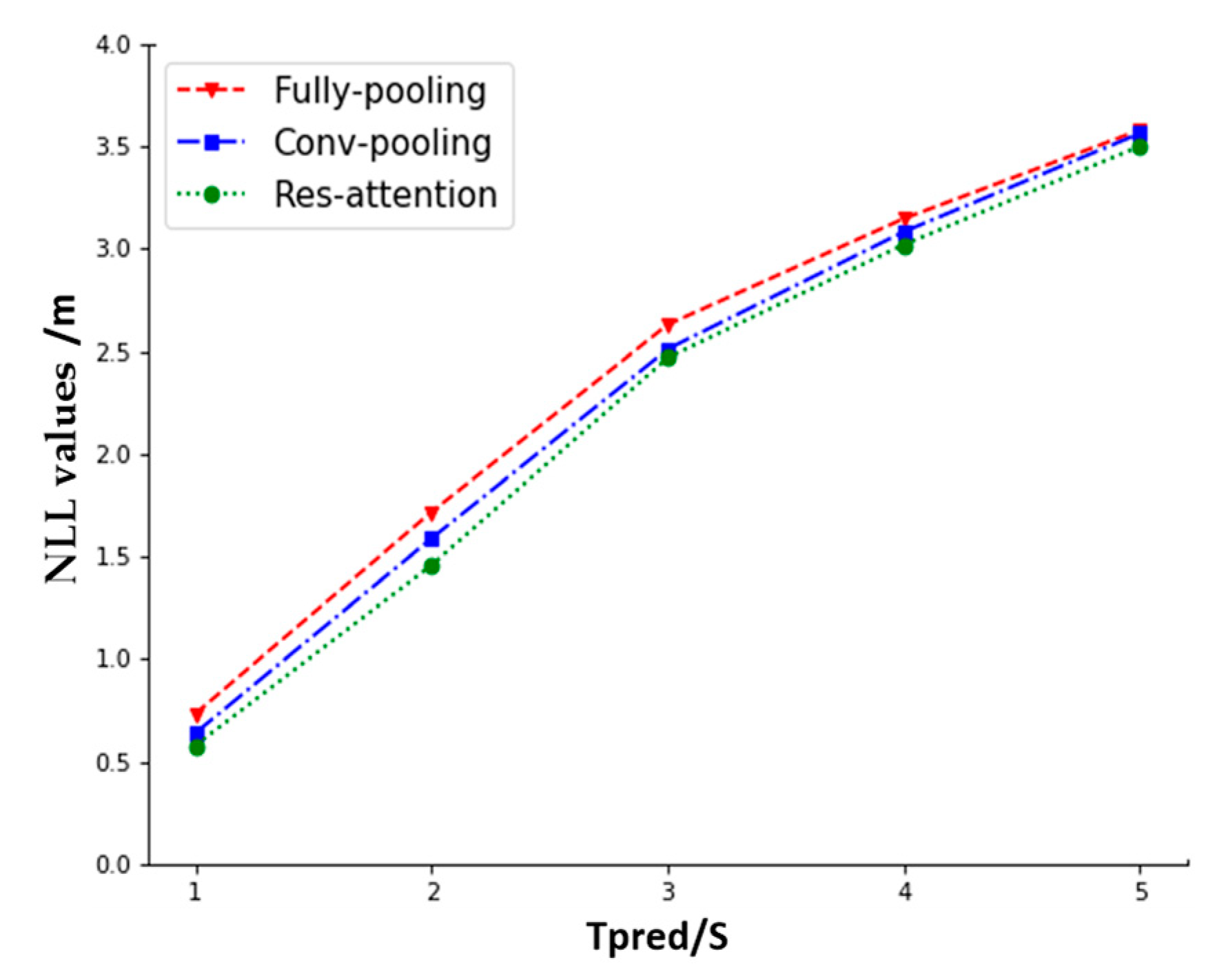

- (1) To verify the effectiveness of the improved model, its prediction errors over the next 5 s were compared with those of several classical models, using the same 3 s historical trajectory as input.

- ① S-LSTM: the social pooling LSTM proposed in [12], which uses a fully connected layer to extract the interaction between the target vehicle and surrounding vehicles from the original interaction tensor;

- ② CS-LSTM: the convolutional social pooling LSTM proposed in [14], which uses a convolutional pooling layer to extract the interaction between the self-vehicle and surrounding vehicles from the original interaction tensor;

- ③ RA-LSTM: the Res-attention LSTM proposed in this paper, which introduces a residual attention module to calculate the influence weights of all surrounding vehicles in the influence domain area A, improving the accuracy with which the interactive features of surrounding vehicles are extracted at each moment.

- (2)

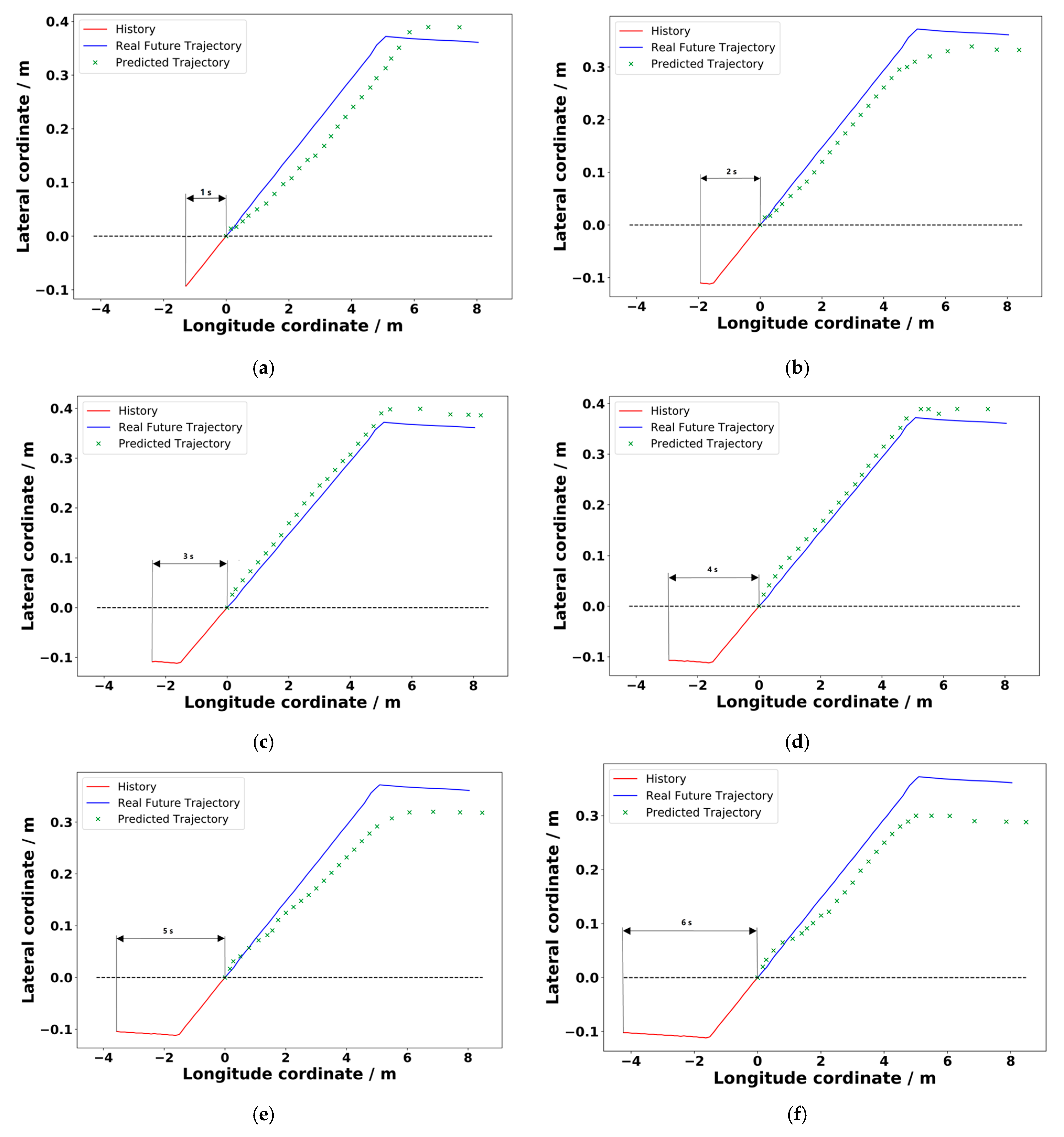

- The history domain length of the model input trajectory has a large impact on the accuracy of the future predicted trajectory; The interaction between the self-vehicle and the surrounding vehicles cannot be extracted from an overly short trajectory history, and an overly long trajectory history will lead to a computational stress disaster. In order to determine the optimal historical domain length of the model, the prediction deviations of this method under different domain lengths of historical trajectories were compared and the predicted trajectories were visualized.
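The history and prediction lengths discussed above can be made concrete with a minimal windowing sketch. It is not the authors' pre-processing code: the function name `make_windows`, the sampling rate `hz`, and the convention that the history ends just before the first predicted sample are all assumptions introduced for illustration. Only the 3 s history and 5 s horizon come from the text.

```python
from typing import List, Tuple

Point = Tuple[float, float]  # (x, y) position of one trajectory sample


def make_windows(
    track: List[Point],
    history_s: float = 3.0,  # history domain length used in the comparison
    horizon_s: float = 5.0,  # prediction horizon used in the comparison
    hz: float = 5.0,         # ASSUMPTION: sampling rate, not stated in the text
) -> List[Tuple[List[Point], List[Point]]]:
    """Cut one vehicle track into (history, future) pairs (hypothetical helper)."""
    n_hist = round(history_s * hz)
    n_fut = round(horizon_s * hz)
    pairs = []
    # t is the index of the first future sample; history is the n_hist samples before it.
    for t in range(n_hist, len(track) - n_fut + 1):
        pairs.append((track[t - n_hist:t], track[t:t + n_fut]))
    return pairs


# Toy track: 20 s of straight-line motion sampled at 5 Hz (100 samples).
track = [(0.0, 2.0 * i) for i in range(100)]
for history_s in (1.0, 3.0, 6.0):
    pairs = make_windows(track, history_s=history_s)
    history, future = pairs[0]
    print(f"history {history_s} s: {len(pairs)} pairs, "
          f"{len(history)} input samples, {len(future)} target samples")
```

A longer history increases the number of input samples per pair and reduces the number of pairs that fit into a track of fixed length, which is one simple way to see the cost side of the trade-off described in (2).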

4.3.1. Model Performance Comparison

4.3.2. Impact of Historical Duration on Forecasting Results

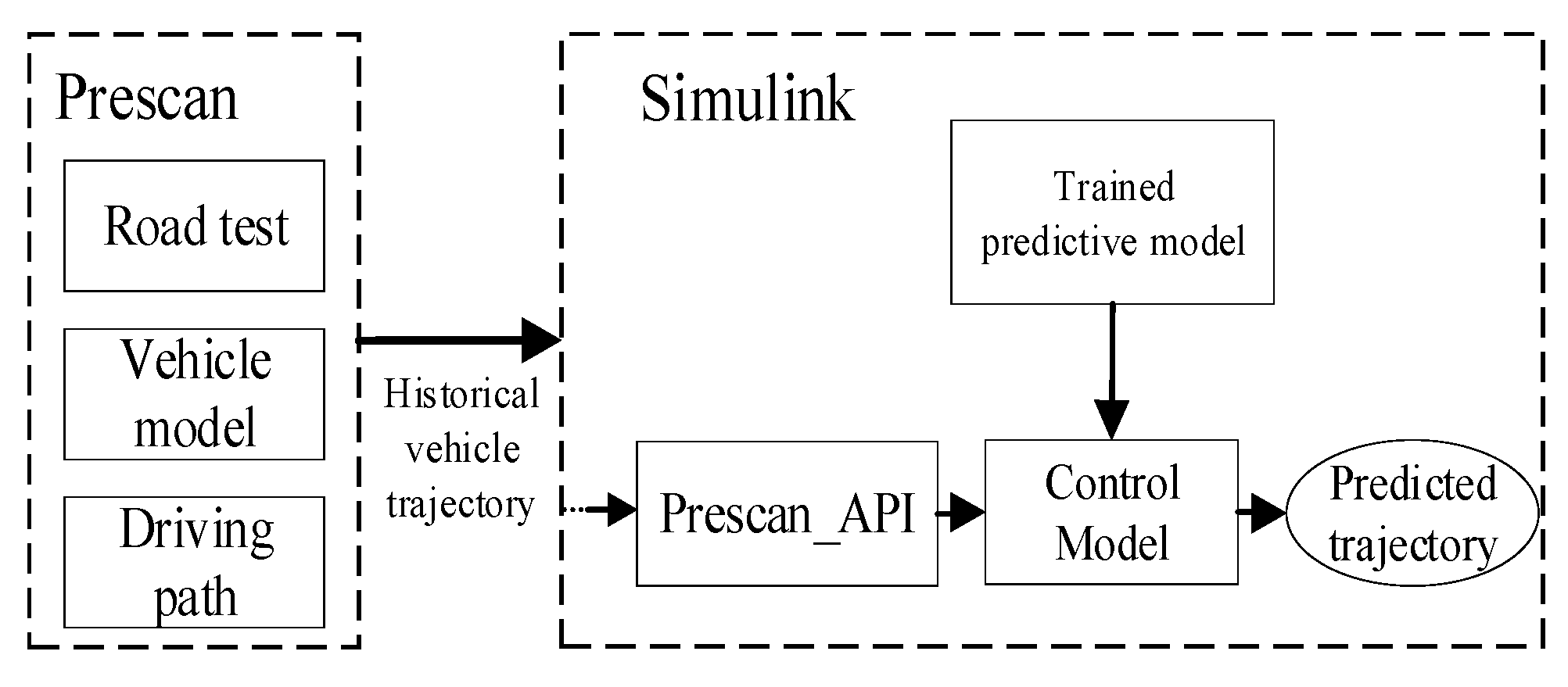

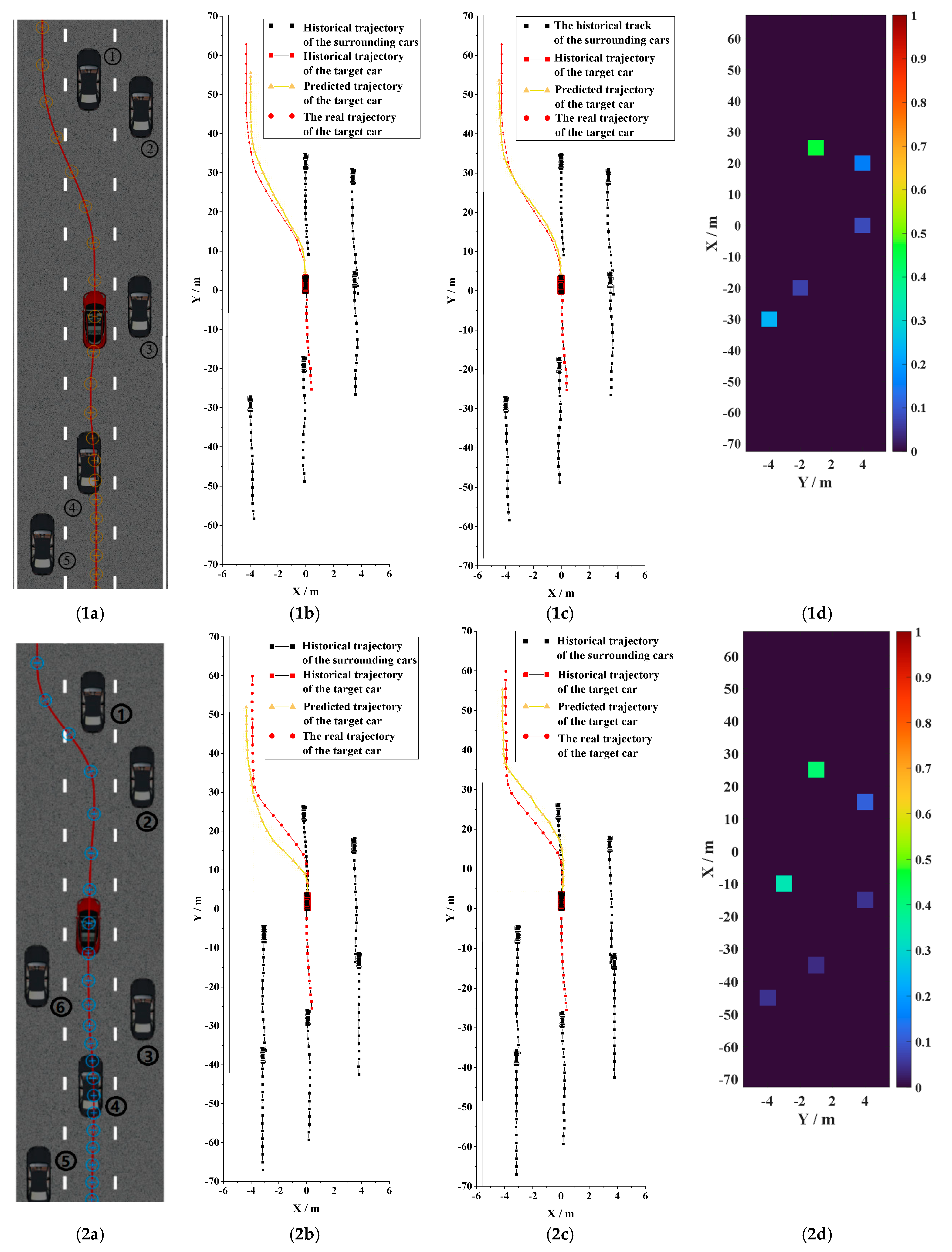

4.4. Scenario-Based Analysis

5. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

- Barth, A.; Franke, U. Where will the oncoming vehicle be the next second? In Proceedings of the 2008 IEEE Intelligent Vehicles Symposium, Eindhoven, The Netherlands, 4–6 June 2008.

- Ammoun, S.; Nashashibi, F. Real time trajectory prediction for collision risk estimation between vehicles. In Proceedings of the 2009 IEEE 5th International Conference Intelligent Computer Communication and Processing, Cluj-Napoca, Romania, 27–29 August 2009.

- Schubert, R.; Adam, C.; Obst, M. Empirical evaluation of vehicular models for ego motion estimation. In Proceedings of the 2011 IEEE Intelligent Vehicles Symposium, Baden-Baden, Germany, 5–9 June 2011.

- Tamke, A.; Dang, T.; Breuel, G. A flexible method for criticality assessment in driver assistance systems. In Proceedings of the 2011 IEEE Intelligent Vehicles Symposium, Baden-Baden, Germany, 5–9 June 2011.

- Zhang, R. Image Vehicle Motion Trajectory Prediction Method Under Complex Environment. J. Mech. Eng. 2011, 47, 16.

- Song, X.L.; Xiong, Q.W.; Cao, H.T. Research and Simulation on Cooperative Collision Warning Based on Trajectory Prediction. J. Hunan Univ. 2016, 43, 1–7.

- Hermes, C.; Wohler, C.; Schenk, K. Long-term Vehicle Motion Prediction. In Proceedings of the 2009 IEEE Intelligent Vehicles Symposium, Xi’an, China, 3–5 June 2009.

- Otto, C.; Leon, F.P. Long-term trajectory classification and prediction of commercial vehicles for the application in advanced driver assistance systems. In Proceedings of the 2012 American Control Conference, Montreal, QC, Canada, 27–29 June 2012.

- Houenou, A.; Bonnifait, P.; Cherfaoui, V. Vehicle Trajectory Prediction based on Motion Model and Maneuver Recognition. In Proceedings of the 2013 IEEE/RSJ International Conference on Intelligent Robots & Systems, Tokyo, Japan, 3–7 November 2013.

- Woo, H.; Ji, Y.; Tamura, Y. Trajectory Prediction of Surrounding Vehicles Considering Individual Driving Characteristics. Int. J. Automot. Eng. 2018, 9, 282–288.

- Xie, F.; Lou, J.; Zhao, K. A Research on Vehicle Trajectory Prediction Method Based on Behavior Recognition and Curvature Constraints. Automot. Eng. 2019, 41, 1036–1042.

- Xu, Y.; Xie, J.; Zhao, T. Learning Trajectory Prediction with Continuous Inverse Optimal Control via Langevin Sampling of Energy-Based Models. arXiv 2019, arXiv:1904.05453v.

- Mozaffari, S.; Al-Jarrah, O.Y.; Dianati, M. Deep Learning-based Vehicle Behaviour Prediction For Autonomous Driving Applications: A Review. IEEE Trans. Intell. Transp. Syst. 2019, 23, 33–47.

- Duan, Y.; Lv, Y.; Wang, F.Y. Travel time prediction with LSTM neural network. In Proceedings of the 2016 IEEE International Conference on Intelligent Transportation Systems, Rio de Janeiro, Brazil, 1–4 November 2016.

- Mikhailov, S.; Kashevnik, A. Car Tourist Trajectory Prediction Based on Bidirectional LSTM Neural Network. Electronics 2021, 10, 1390.

- Hou, L.; Xin, L.; Li, S.E. Interactive Trajectory Prediction of Surrounding Road Users for Autonomous Driving Using Structural-LSTM Network. IEEE Trans. Intell. Transp. Syst. 2019, 21, 4615–4625.

- Ji, X.W.; Fei, C.; He, X.K. Intention Recognition and Trajectory Prediction for Vehicles Using LSTM Network. China J. Highw. Transp. 2019, 32, 34–42.

- Hu, L. Research on Autonomous Vehicles Trajectory Prediction Method Based on LSTM; Guilin University of Electronic Technology: Guilin, China, 2021.

- Su, L.M. Research on Trajectory Prediction Method Based on Machine Learning; Beijing University of Posts and Telecommunications: Beijing, China, 2019.

- Khakzar, M.; Bond, A.; Rakotonirainy, A. Driver influence on vehicle trajectory prediction. Accid. Anal. Prev. 2021, 157, 106165.

- Qiao, Y.L.; Du, Y.P.; Zhao, D.Y. A Location Prediction Method of Markov Based on Gaussian Analysis. Comput. Technol. Dev. 2018, 28, 41–44, 50.

- Xin, L.; Wang, P.; Chan, C.Y. Intention-aware Long Horizon Trajectory Prediction of Surrounding Vehicles using Dual LSTM Networks. In Proceedings of the 2018 21st International Conference on Intelligent Transportation Systems (ITSC), Maui, HI, USA, 4–7 November 2018.

- Wen, H.Y.; Zhang, W.G.; Zhao, S. Vehicle lane change trajectory prediction model based on generative adversarial network. J. S. China Univ. Technol. 2020, 48, 32–40.

- Choi, D.; Lee, S. Comparison of Machine Learning Algorithms for Predicting Lane Changing Intent. Int. J. Automot. Technol. 2021, 22, 507–518.

- Kim, B.D.; Kang, C.M.; Lee, S.H. Probabilistic Vehicle Trajectory Prediction over Occupancy Grid Map via Recurrent Neural Network. In Proceedings of the 2017 IEEE 20th International Conference on Intelligent Transportation Systems (ITSC), Yokohama, Japan, 16–19 October 2017.

- Sadeghian, A.; Alahi, A.; Savarese, S. Tracking the Untrackable: Learning To Track Multiple Cues with Long-Term Dependencies. In Proceedings of the 2017 IEEE International Conference on Computer Vision, Venice, Italy, 22–29 October 2017.

- Deo, N.; Trivedi, M.M. Multi-Modal Trajectory Prediction of Surrounding Vehicles with Maneuver based LSTMs. In Proceedings of the 2018 IEEE Intelligent Vehicles Symposium (IV), Changshu, China, 26–30 June 2018; pp. 1179–1184.

- Chandra, R.; Bhattacharya, U.; Bera, A. TraPHic: Trajectory Prediction in Dense and Heterogeneous Traffic Using Weighted Interactions. In Proceedings of the 2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 15–20 June 2019.

- Amirian, J.; Hayet, J.B.; Pettre, J. Social Ways: Learning Multi-Modal Distributions of Pedestrian Trajectories with GANs. In Proceedings of the 2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) Workshops, Long Beach, CA, USA, 15–20 June 2019.

- Li, F.; Gui, Z.; Zhang, Z. A hierarchical temporal attention-based LSTM encoder-decoder model for individual mobility prediction. Neurocomputing 2020, 403, 153–166.

- Gao, H.; Su, H.; Cai, Y. Trajectory prediction of cyclist based on dynamic Bayesian network and long short-term memory model at unsignalized intersections. Sci. China Inf. Sci. 2021, 64, 172207.

- Liang, Y.; Zhao, Z. Vehicle Trajectory Prediction in City-scale Road Networks using a Direction-based Sequence-to-Sequence Model with Spatiotemporal Attention Mechanisms. arXiv 2021, arXiv:2106.11175.

- Khosroshahi, A.; Ohn-Bar, E.; Trivedi, M.M. Surround vehicles trajectory analysis with recurrent neural networks. In Proceedings of the 2016 IEEE International Conference on Intelligent Transportation Systems, Rio de Janeiro, Brazil, 1–4 November 2016.

- Phillips, D.J.; Wheeler, T.A.; Kochenderfer, M.J. Generalizable intention prediction of human drivers at intersections. In Proceedings of the 2017 IEEE Intelligent Vehicles Symposium, Los Angeles, CA, USA, 11–14 June 2017.

- Altche, F.; Fortelle, A. An LSTM network for highway trajectory prediction. In Proceedings of the 2017 IEEE 20th International Conference on Intelligent Transportation Systems, Yokohama, Japan, 16–19 October 2017.

- Park, S.H.; Kim, B.D.; Kang, C.M. Sequence-to-Sequence Prediction of Vehicle Trajectory via LSTM Encoder-Decoder Architecture. In Proceedings of the 2018 IEEE Intelligent Vehicles Symposium (IV), Changshu, China, 26–30 June 2018.

- Rebello, S.; Yu, H.; Ma, L. An integrated approach for system functional reliability assessment using Dynamic Bayesian Network and Hidden Markov Model. Reliab. Eng. Syst. Saf. 2018, 180, 124–135.

- Leibe, B.; Matas, J.; Sebe, N. Lecture Notes in Computer Science. In Computer Vision – ECCV 2016, Knowledge Transfer for Scene-Specific Motion Prediction; Springer: Cham, Switzerland, 2016; Chapter 42; Volume 9905, pp. 697–713.

- Sun, Y.; Wang, T. Target Vehicle Trajectory Prediction Algorithm based on Time Series. Automob. Appl. Technol. 2020, 6, 31–33.

- Alahi, A.; Goel, K.; Ramanathan, V. Social LSTM: Human Trajectory Prediction in Crowded Spaces. In Proceedings of the 2016 IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016.

- Deo, N.; Trivedi, M.M. Convolutional Social Pooling for Vehicle Trajectory Prediction. In Proceedings of the 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops, Salt Lake City, UT, USA, 18–22 June 2018.

- Liu, C.; Liang, J. Vehicle trajectory prediction based on attention mechanism. J. Zhejiang Univ. Eng. Sci. 2020, 54, 1156–1163.

- Ding, W.; Chen, J.; Shen, S. Predicting Vehicle Behaviors Over an Extended Horizon Using Behavior Interaction Network. In Proceedings of the 2019 International Conference on Robotics and Automation, Montreal, QC, Canada, 20–24 May 2019.

- Wang, Y.; Zhao, S.; Zhang, R. Multi-Vehicle Collaborative Learning for Trajectory Prediction with Spatio-Temporal Tensor Fusion. IEEE Trans. Intell. Transp. Syst. 2020, 23, 236–248.

| Contribution | Year | Datasets | Comparison with LSTM | Multi-Modal | Methods |
|---|---|---|---|---|---|
| [12] | 2016 | ETH, UCY | | | S-LSTM |
| [25] | 2017 | | | | LSTM |
| [26] | 2017 | | | | Two LSTMs |
| [14] | 2018 | NGSIM | YES | | CS-LSTM |
| [27] | 2018 | NGSIM | YES | | M-LSTM |
| [28] | 2019 | NGSIM | YES | YES | LSTM-CNN hybrid network |
| [29] | 2019 | ETH, UCY | YES | YES | LSTM + GAN |
| [30] | 2020 | | | | T-LSTM |
| [31] | 2021 | | YES | | LSTM + DBN |
| [32] | 2021 | | YES | | D-LSTM |
| [18] | 2021 | NGSIM | | | SG-LSTM |
| [19] | 2021 | GPS logs | | | Bi-LSTM |

| Prediction Time Domain | RMSE (S-LSTM) | RMSE (CS-LSTM) | RMSE (RA-LSTM) | NLL (S-LSTM) | NLL (CS-LSTM) | NLL (RA-LSTM) |
|---|---|---|---|---|---|---|
| 1 s | 1.105 | 1.099 | 1.099 | 0.733 | 0.639 | 0.422 |
| 2 s | 2.143 | 2.134 | 2.112 | 1.716 | 1.587 | 1.457 |
| 3 s | 3.311 | 3.292 | 3.198 | 2.628 | 2.509 | 2.467 |
| 4 s | 4.664 | 4.642 | 4.551 | 3.145 | 3.081 | 3.0179 |
| 5 s | 6.248 | 6.229 | 6.205 | 3.577 | 3.561 | 3.486 |
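To make the size of the differences in the table above easier to read, the following sketch computes the per-horizon gap between RA-LSTM and the two baselines. The numbers are copied from the table; the helper names `absolute_gap` and `relative_reduction` are hypothetical and introduced only for this illustration. NLL is reported as an absolute gap because a percentage of a log-likelihood value is harder to interpret.

```python
# Values copied from the RMSE/NLL comparison table (prediction horizons 1 s to 5 s).
HORIZONS_S = [1, 2, 3, 4, 5]
RMSE = {
    "S-LSTM": [1.105, 2.143, 3.311, 4.664, 6.248],
    "CS-LSTM": [1.099, 2.134, 3.292, 4.642, 6.229],
    "RA-LSTM": [1.099, 2.112, 3.198, 4.551, 6.205],
}
NLL = {
    "S-LSTM": [0.733, 1.716, 2.628, 3.145, 3.577],
    "CS-LSTM": [0.639, 1.587, 2.509, 3.081, 3.561],
    "RA-LSTM": [0.422, 1.457, 2.467, 3.0179, 3.486],
}


def absolute_gap(baseline, proposed):
    """Per-horizon difference baseline - proposed (positive: proposed is lower)."""
    return [b - p for b, p in zip(baseline, proposed)]


def relative_reduction(baseline, proposed):
    """Per-horizon reduction as a percentage of the baseline value."""
    return [100.0 * (b - p) / b for b, p in zip(baseline, proposed)]


for base in ("S-LSTM", "CS-LSTM"):
    rmse_pct = relative_reduction(RMSE[base], RMSE["RA-LSTM"])
    nll_gap = absolute_gap(NLL[base], NLL["RA-LSTM"])
    for h, pct, gap in zip(HORIZONS_S, rmse_pct, nll_gap):
        print(f"RA-LSTM vs {base} at {h} s: "
              f"RMSE reduction {pct:.2f}%, NLL gap {gap:.3f}")
```

On these values, the RMSE of RA-LSTM equals that of CS-LSTM at 1 s, and the relative reduction against CS-LSTM is largest at the 3 s horizon.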

| Loss Value (by History Input Track Length) | 1 s | 2 s | 3 s | 4 s | 5 s | 6 s |
|---|---|---|---|---|---|---|
| NLL | 3.593 | 3.557 | 3.498 | 3.497 | 3.581 | 3.614 |
| RMSE | 6.411 | 6.357 | 6.205 | 6.195 | 6.615 | 6.898 |
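The history-length values above can be scanned programmatically. The sketch below finds the length with the lowest loss and, separately, the shortest length whose loss lies within a small tolerance of that minimum. The tolerance rule and the 0.5% default are illustrative assumptions, not a selection criterion stated by the authors; the helper names are hypothetical.

```python
# Values copied from the history-length table (loss at the longest prediction horizon).
HISTORY_S = [1, 2, 3, 4, 5, 6]
NLL = [3.593, 3.557, 3.498, 3.497, 3.581, 3.614]
RMSE = [6.411, 6.357, 6.205, 6.195, 6.615, 6.898]


def best_history(lengths, losses):
    """History length with the lowest loss."""
    return min(zip(losses, lengths))[1]


def shortest_within(lengths, losses, tol=0.005):
    """Shortest history whose loss is within `tol` (relative) of the minimum.

    ASSUMPTION: illustrative rule for trading accuracy against input length.
    It expects positive losses and `lengths` sorted in ascending order.
    """
    limit = min(losses) * (1.0 + tol)
    return next(h for h, v in zip(lengths, losses) if v <= limit)


for name, losses in (("NLL", NLL), ("RMSE", RMSE)):
    print(f"{name}: lowest at {best_history(HISTORY_S, losses)} s, "
          f"shortest within 0.5% at {shortest_within(HISTORY_S, losses)} s")
```

With these values, both losses are lowest at a 4 s history, while 3 s is the shortest history within 0.5% of that minimum. Both losses rise again for 5 s and 6 s histories.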

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Yang, Z.; Gao, Z.; Gao, F.; Shi, C.; He, L.; Gu, S. Intelligent Vehicle Moving Trajectory Prediction Based on Residual Attention Network. World Electr. Veh. J. 2022, 13, 47. https://doi.org/10.3390/wevj13030047
