A Next-Generation Cyber-Range Framework for O-RAN and 6G Security Validation

Abstract

1. Introduction

- Comprehensive gap analysis for O-RAN and 6G security validation. We identify a significant gap between the capabilities of current 5G-focused cyber-ranges and the unique security challenges introduced by the Open Radio Access Network (O-RAN) architecture, specifically its disaggregation, numerous open interfaces, reliance on AI/ML-driven RAN Intelligent Controllers (RICs), and O-Cloud dependencies, as detailed in O-RAN Alliance specifications. We extend this analysis to anticipate the even greater complexities and novel threat vectors of 6G systems [1,2], which underscores the need for advanced cyber-range functionalities [5,6].

- Novel theoretical framework for next-generation cyber-ranges. We introduce a comprehensive and modular theoretical framework for cyber-ranges that is specifically designed to meet the complex security validation requirements of O-RAN and the upcoming 6G networks, as described in Section 5. The framework provides (i) high-fidelity emulation of all key O-RAN components (O-RU, O-DU, O-CU, RICs, SMO) and their defined open interfaces (e.g., Fronthaul, E2, A1, O1, O2); (ii) integrated AI/ML security testing functionalities aimed at identifying threats directed at O-RAN’s intelligent controllers (xApps/rApps) and the lifecycle of AI models [7]; (iii) native support for simulating and validating dynamic Zero Trust Architecture (ZTA) principles across the disaggregated O-RAN and future 6G landscape [8,9,10]; and (iv) a continuous risk evaluation feedback loop to enhance proactive security assessment.

- Systematic linkage of threats to requirements and practical exercise design. We map the O-RAN threat landscape (informed by O-RAN WG11 threat models) and projected 6G challenges to concrete cyber-range evolution requirements, as detailed in Section 4.2. We further demonstrate the practical utility of the framework by designing detailed cybersecurity exercise profiles tailored for O-RAN (presented in Section 5.5 and Appendix A), which illustrate how advanced emulation can validate defenses against realistic attack scenarios.

- Conceptualization of a Federated Cyber-Range (FCR) architecture. Recognizing the scalability and specialization challenges of emulating the entire O-RAN/6G ecosystem monolithically, we propose and justify an FCR architecture (conceptually discussed in Section 5.7). This FCR model, built upon our modular framework, offers an approach for comprehensive security testing across distributed and diverse network domains. Such testing is crucial for future multi-operator and multi-technology environments and is particularly relevant for distributed ZTA implementations [9] and AI-driven Zero Touch Networks [4].

- A novel exercise definition schema. We propose a comprehensive, machine-readable schema (defined in Section 5.6 with full details in Appendix B) specifically designed to define and orchestrate the complex, multi-stage, and dynamic security scenarios required for O-RAN and 6G. This schema serves as a foundational data model for enabling automation, adaptive exercises, and objective-based assessments.

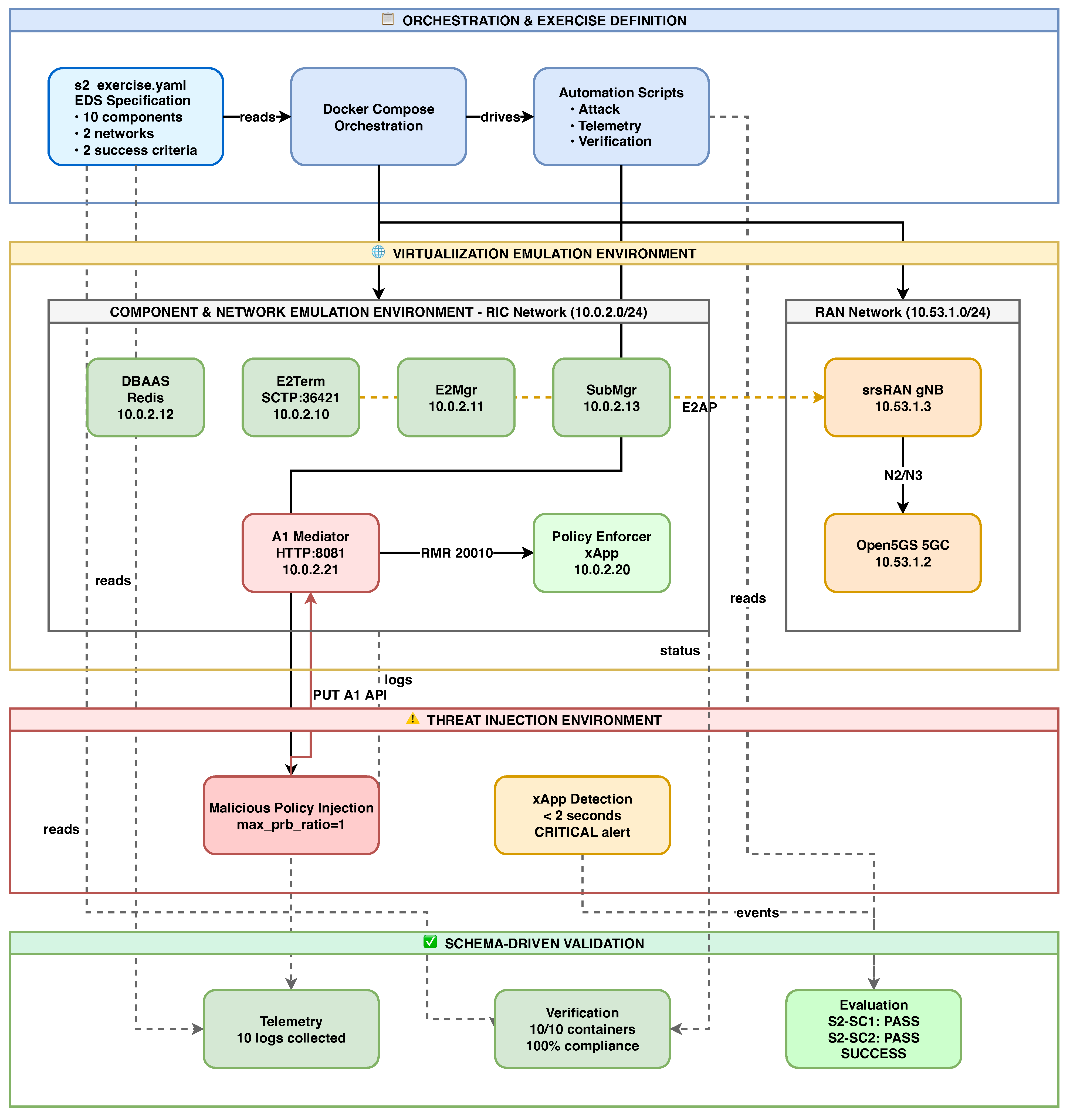

- Proof-of-concept validation of framework feasibility. We implemented Exercise S2 (Malicious A1 Policy Injection) by configuring and integrating existing open-source O-RAN software stacks to demonstrate a practical instantiation of the framework (Section 6). The implementation validates the Exercise Definition Schema (EDS) design, operational O-RAN interface integration (A1 and E2 with verified message exchanges), and end-to-end exercise execution with automated assessment.

- Identification of key implementation challenges and future research pathways. We provide a realistic assessment of the significant technical and operational hurdles in developing such advanced cyber-ranges. Furthermore, we outline key future research directions, including standardized federation APIs, scalable hybrid emulation techniques, and advanced AI security modeling, to guide subsequent efforts in this critical domain.

2. Cyber-Ranges: Foundations and Evolution for 5G

2.1. Fundamental Cyber-Range Concepts and Architecture

2.2. The Impact of 5G on Cyber-Range Requirements

2.3. Overview of Key 5G Cyber-Range Projects and Efforts

2.4. Gap Analysis: The Shortcomings for O-RAN and Future 6G

3. O-RAN and 6G Threat Landscape

3.1. O-RAN System Architecture and Threat Vectors

3.2. 6G-Specific Security Paradigms

4. Comprehensive Threat Analysis

4.1. Methodology

4.2. Threat Categories and Cyber-Range Requirements

4.3. A Classification of Cyber Security Exercises for Evolving Mobile Networks

5. Theoretical Framework for a Next-Generation Cyber-Range

5.1. Modular Architecture

5.2. Conceptual Topology for O-RAN Emulation

5.3. Functional Capabilities

5.4. Integration with Risk Evaluation

5.5. Illustrative Application: STRIDE-Based O-RAN Exercises on the Next-Gen Cyber-Range

5.5.1. Spoofing Threats and Exercises

5.5.2. Tampering and Repudiation Threats and Exercises

5.5.3. Information Disclosure Threats and Exercises

5.5.4. Denial of Service and Elevation of Privilege Threats and Exercises

5.6. A Schema-Driven Approach to Scenario Orchestration

- Core Metadata and Objectives: This domain defines the exercise’s identity, learning goals, and technical validation objectives, linking every scenario to a clear purpose and measurable success criteria.

- Emulated Environment and Topology: This is where the virtual world is built. It defines the O-RAN and 6G components (RICs, O-DUs, xApps), their software stacks, and any special hardware requirements (e.g., GPUs for AI, SDRs for RF). Crucially, it allows the injection of specific vulnerabilities (e.g., CVEs, weak credentials) into components and defines the properties of the interconnecting O-RAN interfaces.

- FCR Specifics: For multi-range exercises, this dedicated domain defines node affinity rules (e.g., ensuring an O-DU and O-RU run on the same physical node), inter-node link requirements, and trust policies between federated sites.

- Scenario Execution Logic: This is the dynamic heart of the schema. It defines the scenario’s phases, scripted events, and the attacker model (from simple scripts to adaptive AI adversaries). Most importantly, it contains the dynamic adaptation rules—the conditional ‘IF-THEN’ logic that allows the orchestrator to modify the scenario in real-time based on participant actions or telemetry events.

- Participant Roles, Scoring, and Visualization: Finally, the schema defines the roles of participants (Blue Team, Red Team), their permissions, the tools available to them, and the rules for automated scoring. It also specifies how telemetry data should be visualized on role-specific dashboards.
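To make the five schema domains more concrete, the following is a minimal Python sketch of how they might map onto a data model, including one dynamic adaptation rule. It is an illustration under stated assumptions, not the schema defined in Appendix B: every class name, field name, event type, and action string here is hypothetical.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List


@dataclass
class Component:
    """Emulated environment entry (hypothetical fields)."""
    name: str                                   # e.g. "near-rt-ric"
    kind: str                                   # e.g. "RIC", "O-DU", "xApp"
    vulnerabilities: List[str] = field(default_factory=list)  # injected weaknesses


@dataclass
class AdaptationRule:
    """One conditional IF-THEN rule evaluated against a telemetry event."""
    name: str
    condition: Callable[[Dict[str, Any]], bool]  # the IF part
    action: str                                  # the THEN part (orchestrator command)


@dataclass
class ExerciseDefinition:
    exercise_id: str                             # core metadata
    objectives: List[str]                        # learning / validation objectives
    components: List[Component]                  # emulated environment and topology
    node_affinity: Dict[str, str]                # FCR specifics: component -> range node
    phases: List[str]                            # scenario execution logic
    adaptation_rules: List[AdaptationRule]
    roles: Dict[str, List[str]]                  # role -> tools available to it


def triggered_actions(exercise: ExerciseDefinition, event: Dict[str, Any]) -> List[str]:
    """Return the actions of every adaptation rule whose condition matches the event."""
    return [r.action for r in exercise.adaptation_rules if r.condition(event)]


# Illustrative instance loosely modeled on Exercise S2 (all values are assumptions).
s2 = ExerciseDefinition(
    exercise_id="S2",
    objectives=["Detect A1 policies from spoofed Non-RT RIC sources"],
    components=[
        Component("near-rt-ric", "RIC"),
        Component("attacker-node", "Attacker Node", ["weak credentials"]),
    ],
    node_affinity={},
    phases=["reconnaissance", "injection", "verification", "cleanup"],
    adaptation_rules=[
        AdaptationRule(
            name="escalate-on-rejection",
            condition=lambda e: e.get("type") == "policy_rejected",
            action="start_phase:alternate_injection",
        )
    ],
    roles={"Blue Team": ["dashboard"], "Red Team": ["attack_scripts"]},
)

print(triggered_actions(s2, {"type": "policy_rejected"}))
```

Keeping the rule's condition separate from its action means an orchestrator could evaluate conditions on every telemetry event while treating actions as opaque commands to dispatch.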

5.7. Federated Cyber-Range Architecture for O-RAN/6G

5.7.1. Inter-Federation Interfaces

- (a) The federated orchestration plane. This plane manages the scenario lifecycle across all participating ranges. The challenge here is not just launching VMs; it is maintaining a synchronized state across disparate administrative domains. Current cloud orchestration tools lack the robust, cross-domain failure-handling and resource-negotiation protocols required for this task. A failure in one node must be managed gracefully without collapsing the entire federated exercise.

- (b) The federated control plane. This plane must route simulated O-RAN control traffic (e.g., E2, A1, O1) between components hosted on different physical ranges. The primary obstacle is a physical one: latency. Consider a Near-RT RIC control loop with a 10 ms budget: it cannot be met if the physical network latency between the federated RIC and the O-DU emulators is, for example, 50 ms. This plane therefore requires either dedicated low-latency interconnects or an advanced network emulation layer that precisely compensates for physical delay to present an accurate, emulated latency to the systems under test.

- (c) The federated data plane. Handling high-bandwidth traffic, particularly the multi-Gbps Open Fronthaul, is the clear bottleneck for any wide-area federation. As practitioners know, routing this traffic between geographically distant sites over a standard WAN is infeasible. This imposes a critical design constraint: data-plane-intensive components like the O-DU and its connected O-RUs must likely reside within a single federated node. Federation is therefore best suited for separating control elements (like the SMO) from the RAN components, not for splitting the RAN itself across a continent.

- (d) The federated telemetry plane. To create a coherent view, this plane must aggregate logs, metrics, and alerts from all nodes. The non-negotiable requirement is time synchronization. Without a common, trusted time source (likely the Precision Time Protocol, PTP, which can provide sub-microsecond accuracy), correlating a cause on Range A with an effect on Range B becomes guesswork rather than forensic analysis.
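The latency constraint on the federated control plane can be made concrete with a short calculation. The sketch below assumes a simplified model in which a control loop needs one round trip plus some processing time, and in which the emulation layer can only add delay, never remove it. The function names and the model itself are illustrative assumptions; the 10 ms and 50 ms figures are the ones used in the text.

```python
def emulated_delay_ms(target_latency_ms: float, physical_latency_ms: float) -> float:
    """Extra delay the emulation layer must add so that the systems under
    test observe the target latency. Delay can be added but not removed."""
    if physical_latency_ms > target_latency_ms:
        raise ValueError(
            "physical latency already exceeds the target; compensation is impossible"
        )
    return target_latency_ms - physical_latency_ms


def loop_budget_met(
    loop_budget_ms: float, one_way_latency_ms: float, processing_ms: float = 0.0
) -> bool:
    """Simplified check: one round trip plus processing must fit the loop budget."""
    return 2 * one_way_latency_ms + processing_ms <= loop_budget_ms


# A 10 ms loop cannot survive 50 ms of physical one-way latency between ranges.
print(loop_budget_met(10.0, 50.0))

# With a 2 ms physical link and a 5 ms target, the emulator adds the difference.
print(emulated_delay_ms(5.0, 2.0))
```

The `ValueError` branch captures why dedicated low-latency interconnects remain necessary: compensation only works when the physical link is faster than the latency being emulated.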

5.7.2. Illustrative FCR Exercises

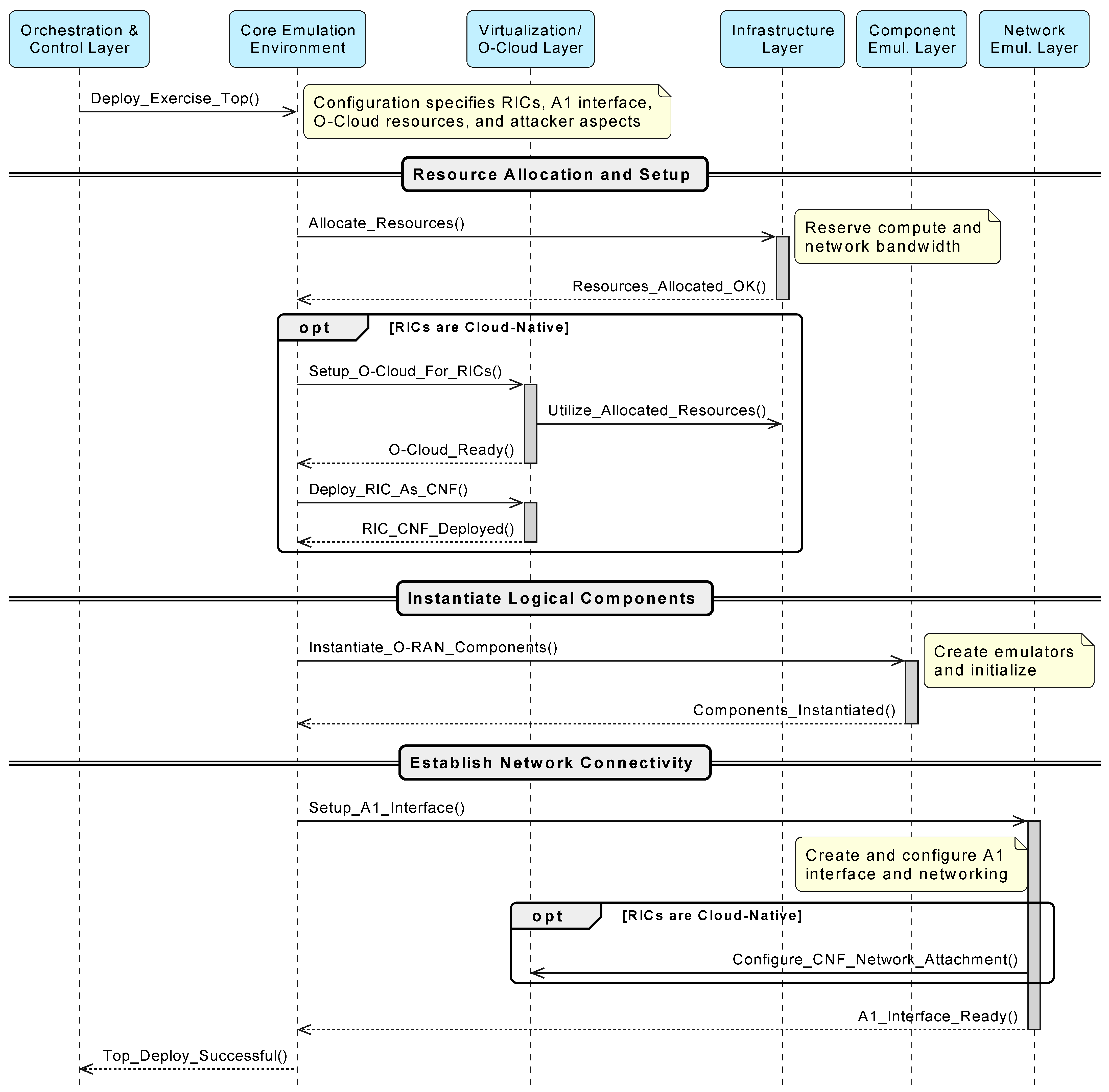

5.8. Operational Workflow of an Exercise Scenario

Internal Workflow for Exercise Topology Deployment

- Resource allocation. The process begins with active resource management. The CEE first queries the Infrastructure Layer (IL) to ensure sufficient capacity exists for the scenario’s resource profile as defined in Config. Upon confirmation, the CEE sends a formal Allocate_Resources request. The IL reserves the specific compute, storage, and network resources, returning a set of unique Allocate_Resource_Handles to the CEE. These handles, representing the finite-resource slice for the exercise, are then passed to subsequent commands to the VOL and CEL to enforce the allocation.

- O-Cloud environment setup. If Config specifies cloud-native RICs, the CEE instructs the VOL to Setup_O-Cloud_For_RICs using those resources. The VOL then deploys the RICs as Containerized Network Functions (Deploy_RIC_As_CNF) on the emulated O-Cloud.

- Logical component instantiation. The CEE directs the CEL to Instantiate_O-RAN_Components. The CEL creates emulators for scenario elements such as the target Near-RT RIC, an optional legitimate Non-RT RIC, and an Attacker Node for A1 spoofing. If RICs were deployed as CNFs earlier, the CEL links these logical emulators accordingly.

- Network connectivity establishment. The CEE tasks the NEL to Setup_A1_Interface. The NEL creates and configures the emulated A1 interface between components (e.g., Attacker Node and Near-RT RIC), assigns IP addresses, and sets up routing as per Config. For cloud-native RICs, the NEL collaborates with the VOL to ensure proper network attachment.
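The four deployment steps above can be sketched as a single ordered procedure. This is a minimal illustration, not the CEE's actual implementation: the `Config` fields, the `InfrastructureLayer` class, and the handle format are hypothetical, and the VOL, CEL, and NEL are reduced to command names recorded in a log.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Config:
    """Hypothetical scenario configuration."""
    cpu_cores: int               # simplified stand-in for the resource profile
    cloud_native_rics: bool      # whether RICs run as CNFs on the emulated O-Cloud
    components: List[str]        # logical emulators to instantiate


class InfrastructureLayer:
    """Toy Infrastructure Layer that tracks free CPU cores only."""

    def __init__(self, free_cores: int) -> None:
        self.free_cores = free_cores

    def has_capacity(self, cores: int) -> bool:
        return cores <= self.free_cores

    def allocate_resources(self, cores: int) -> List[str]:
        self.free_cores -= cores
        return [f"handle-{i}" for i in range(cores)]


def deploy_topology(config: Config, il: InfrastructureLayer) -> List[str]:
    """Return the ordered commands the CEE would issue for one exercise."""
    # Step 1: resource allocation (capacity query, then reservation).
    if not il.has_capacity(config.cpu_cores):
        raise RuntimeError("insufficient capacity for the scenario's resource profile")
    handles = il.allocate_resources(config.cpu_cores)
    log = [f"IL.Allocate_Resources -> {len(handles)} handles"]

    # Step 2: O-Cloud setup, only when the scenario uses cloud-native RICs.
    if config.cloud_native_rics:
        log.append("VOL.Setup_O-Cloud_For_RICs")
        log.append("VOL.Deploy_RIC_As_CNF")

    # Step 3: logical component instantiation.
    log.append("CEL.Instantiate_O-RAN_Components: " + ", ".join(config.components))

    # Step 4: network connectivity establishment.
    log.append("NEL.Setup_A1_Interface")
    return log


steps = deploy_topology(
    Config(cpu_cores=4, cloud_native_rics=True,
           components=["Near-RT RIC", "Attacker Node"]),
    InfrastructureLayer(free_cores=8),
)
for step in steps:
    print(step)
```

Failing fast on the capacity check mirrors the workflow's ordering: nothing is instantiated until the Infrastructure Layer has confirmed and reserved the resource slice.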

6. Proof-of-Concept

6.1. Proof-of-Concept Implementation and Validation

6.2. EDS Implementation

Listing 1. Exercise S2 topology specification excerpt from EDS YAML.

6.3. Exercise Telemetry

Listing 2. Schema-driven telemetry collection using EDS.

6.4. Topology Verification

Listing 3. Schema-driven network validation ensures that Docker networks match EDS specifications.

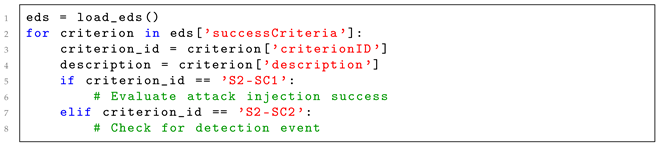

6.5. Evaluation Criteria

Listing 4. Automated success criteria evaluation from EDS.

6.6. Validation Results

7. Implementation Challenges and Future Work

Practical Implementation Road-Map

- Deploy container orchestration infrastructure on dedicated hardware or cloud platforms. POC implementation: Docker Compose with dual bridge networks (ric_network, ran_network) on a single host. Production alternatives: Kubernetes with CNI plugins (Calico, Flannel) for multi-host orchestration, OpenStack for NFV/VNF deployments, or VMware vSphere for enterprise virtualization supporting the Infrastructure and Virtualization Layers (Section 5.1).

- Integrate RAN emulation software (srsRAN or OpenAirInterface gNB implementations, Open5GS or free5GC core network) with O-RAN Software Community components and verify interface integration. POC implementation: srsRAN gNB with E2 agent, Open5GS 5GC, O-RAN SC Near-RT RIC (i-release), E2 Termination, E2 Manager, A1 Mediator with validated SCTP/HTTP connectivity. Alternatives: OpenAirInterface with the --build-e2 flag, free5GC, FlexRIC, oran-sc-ric (x release), or commercial RAN simulators (Keysight, Spirent) supporting the Component Emulation Layer.

- Build automation tooling for exercise lifecycle management, including topology deployment and configuration templating. POC implementation: Docker Compose templates with environment variable substitution, Jinja2-based configuration generation for routes.rtg and component configs. Alternatives: Ansible playbooks for declarative configuration, Terraform for infrastructure-as-code, Helm charts for Kubernetes-native deployments, or custom Python orchestrators with EDS YAML parsing supporting the Orchestration and Control Layer.

- Implement threat-injection mechanisms to inject vulnerabilities and attacks into the deployed architecture. POC implementation: Python attack scripts using the requests library for A1 REST API policy manipulation, with 4-phase attack execution (reconnaissance, injection, verification, cleanup). Alternatives: Metasploit modules for protocol fuzzing, CALDERA for automated adversary emulation, custom xApp-based attack modules via RMR messaging, tcpreplay for traffic injection, or Scapy for packet-level crafting supporting the Threat Injection Engine.

- Develop an orchestration layer to manage exercise execution, resource allocation, and multi-user access control. POC implementation: Manual Docker Compose execution, shell script-based deployment verification, Python-based automated success criteria evaluation from EDS YAML. Alternatives: Web-based UI with Flask/Django frameworks, RESTful APIs for exercise triggering, PostgreSQL for exercise state persistence, Redis for real-time caching, RBAC implementation with Keycloak/OAuth2, and automated scoring engines for the Orchestration and Control Layer.

- Implement the AI/ML Security Testing Layer using adversarial attack frameworks, model-poisoning scenarios, and federated learning testbeds. POC scope: Not implemented in Exercise S2. Implementation approaches: IBM Adversarial Robustness Toolbox (ART) for model evasion attacks, custom adversarial xApps targeting RIC AI models, TensorFlow Federated or PySyft for federated learning poisoning scenarios, or CleverHans library for gradient-based attacks supporting the AI/ML Security Testing Layer.

- Implement APIs that enable distributed exercise execution across federated environments. POC scope: Single-node deployment. Federation approaches: RESTful APIs secured with mutual TLS (mTLS), Apache Kafka or RabbitMQ for cross-domain telemetry streaming, federated identity with OAuth2/SAML, distributed tracing with OpenTelemetry, or blockchain-based trust management [75] for secure inter-CR orchestration supporting the Federated CR architecture (Section 5.7).

- Integrate external systems, including commercial simulation and monitoring tools. POC implementation: Docker logs for telemetry collection via Python scripts. Production alternatives: ELK Stack (Elasticsearch, Logstash, Kibana) for centralized logging and analysis, Prometheus + Grafana for real-time metrics visualization, Splunk for enterprise SIEM correlation, Jaeger for distributed tracing, or integration with commercial CR platforms (Cyberbit, Keysight) supporting the Telemetry and Monitoring Layer.
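The road-map mentions Python-based automated success criteria evaluation driven by the EDS YAML. The sketch below shows one way such an evaluator might look; it is not the POC's code. It assumes the criteria have already been parsed from YAML into dictionaries, and the field names (`id`, `metric`, `op`, `value`) and metric names are hypothetical.

```python
import operator
from typing import Any, Dict, List

# Comparison operators a criterion may reference by name.
_OPS = {
    "==": operator.eq,
    "!=": operator.ne,
    ">=": operator.ge,
    "<=": operator.le,
    ">": operator.gt,
    "<": operator.lt,
}


def evaluate_criteria(
    criteria: List[Dict[str, Any]], telemetry: Dict[str, Any]
) -> Dict[str, bool]:
    """Evaluate each criterion against collected telemetry.

    A metric that is missing from the telemetry counts as a failed criterion.
    """
    results: Dict[str, bool] = {}
    for criterion in criteria:
        observed = telemetry.get(criterion["metric"])
        compare = _OPS[criterion["op"]]
        results[criterion["id"]] = (
            observed is not None and compare(observed, criterion["value"])
        )
    return results


# Hypothetical criteria, as they might look after loading an EDS file.
criteria = [
    {"id": "e2_connected", "metric": "e2_setup_complete", "op": "==", "value": True},
    {"id": "policy_injected", "metric": "a1_policy_instances", "op": ">=", "value": 1},
    {"id": "alert_raised", "metric": "blue_team_alerts", "op": ">=", "value": 1},
]
telemetry = {"e2_setup_complete": True, "a1_policy_instances": 1}

results = evaluate_criteria(criteria, telemetry)
print(results)
print("exercise passed:", all(results.values()))
```

Treating a missing metric as a failure is a deliberate choice in this sketch: a criterion that cannot be observed should not silently pass.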

8. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Appendix A. Illustrative O-RAN STRIDE Exercise Profiles

Appendix A.1. Exercise Summary

| ID | Exercise Title | STRIDE | Key Components | Key Interfaces | Resource Profile | Additional Capabilities |
|---|---|---|---|---|---|---|
| S1 | Rogue O-RU with Fronthaul Replay | Spoofing | O-DU, O-RU (legit), O-RU (rogue) | Open FH [F1], O1 | High | [HR-FH], [TIMING], [ZTA] |
| S2 | Malicious A1 Policy Injection via Spoofed Non-RT RIC | Spoofing | Near-RT RIC, Non-RT RIC, SMO | A1 [A1] | Med. | [A1-POL], [POL-ENG] |
| S3 | E2 Node Identity Forgery and False Reporting | Spoofing | Near-RT RIC, E2 Node, xApp | E2 [E2] | Med. | [CORE] only |
| T1 | E2 Service Model Parameter Tampering | Tampering | Near-RT RIC, xApp, E2 Node, E2SM-KPM | E2 [E3] | Med. | [AI-ML], [E2SM-LIB], [STATE-MANIP] |
| T2 | xApp Configuration Tampering via SMO | Tampering | SMO, Near-RT RIC, xApp | O1 [O2], O2 | Med. | [CORE] only |
| R1 | Disputed O1 Configuration Change | Repudiation | SMO, O-DU, Logging System, PKI | O1 [O2] | Med. | [FORENSIC], [CRYPTO-LOG] |
| R2 | Attributing Malicious xApp Activity | Repudiation | Near-RT RIC, xApps, E2 Nodes | E2, A1, Internal RIC | Med. | [FORENSIC], [AI-ML] |
| R3 | Verifying rApp Policy Provenance | Repudiation | Non-RT RIC, rApp, Near-RT RIC, xApp, SMO | R1, A1 [A1], E2, O1 | High | [A1-POL], [FORENSIC], [AI-ML] |
| I1 | xApp Data Exfiltration via Y1 | Info Disc. | Near-RT RIC, xApp, Y1 framework | Y1 [Y1], E2 | Med. | [Y1-FMK], [DLP], [ZTA] |
| I2 | O-Cloud VNF Snapshot Exposure | Info Disc. | O-Cloud, O-DU/O-CU VNF | O2 [O3] | Med. | [VIRT-CLOUD] |
| D1 | E2 Interface Flood against Near-RT RIC | DoS | Near-RT RIC, E2 Nodes, xApps | E2 [E2] | High | [HR-E2], [RIC-RES] |
| D2 | O-RU Resource Exhaustion via Fronthaul | DoS | O-RU, O-DU | Open FH [F1] | High | [HR-FH], [TIMING] |
| D3 | xApp-Induced RIC Resource Starvation | DoS | Near-RT RIC, xApps | Internal RIC, O1 | High | [AI-ML], [RIC-RES] |
| E1 | xApp Sandbox Escape | EoP | Near-RT RIC platform, xApps | Internal RIC APIs | Med. | [AI-ML], [STATE-MANIP] |
| E2 | O-Cloud Container Escape | EoP | O-Cloud worker, O-DU/O-CU CNF | O-Cloud internal, O2 | High | [VIRT-CLOUD], [ZTA] |
| E3 | O1 Vulnerability Exploitation for SMO Access | EoP | SMO, O-CU/Near-RT RIC | O1 [O2], internal | Med. | [NET-SEG] |

| Exercise and Threat | Attack Scenario | Exercise Objectives |

|---|---|---|

| S1: Rogue O-RU with Fronthaul Replay [T-FRHAUL-01A] Weak Open Fronthaul M-Plane mutual authentication and absence of anti-replay mechanisms enable O-RU identity spoofing. | The attacker deploys a rogue O-RU that eavesdrops on legitimate Fronthaul traffic, then replays captured M-Plane setup sequences with spoofed credentials (MAC/IP/certificates) to connect to the legitimate O-DU. Once connected, it injects falsified CUS-Plane data (inaccurate CQI reports) to disrupt O-DU scheduling algorithms and degrade RAN performance. | Detection: Identify rogue O-RU connection attempts despite the use of valid replayed sequences. Validation: Validate the robustness of M-Plane authentication and its anti-replay mechanisms. Impact Assessment: Assess falsified CQI impact on scheduling decisions; test rogue O-RU isolation procedures. |

| S2: Malicious A1 Policy Injection via Spoofed Non-RT RIC [T-A1-01] Weak A1 interface authentication and lack of policy integrity protection enable Non-RT RIC identity spoofing. | Attacker compromises Non-RT RIC credentials or exploits A1 authentication weaknesses to spoof legitimate Non-RT RIC identity. Sends unauthorized/harmful A1 policy messages to Near-RT RIC, instructing suboptimal control decisions that cause targeted DoS for specific UEs/slices or degrade network performance through resource misallocation. | Detection: Detect A1 policies from untrusted/spoofed Non-RT RIC sources. Validation: Validate A1 mutual authentication, policy producer authorization, and message integrity protection. Response: Test Near-RT RIC’s malicious policy rejection capabilities and incident response procedures. |

| S3: E2 Node Identity Forgery and False Reporting [T-E2-01] Weak E2 Node authentication enables unauthorized entities to spoof trusted E2 Node identities. | Attacker deploys a rogue E2 Node emulator or compromises a legitimate E2 Node, then attempts to establish an E2 connection with a Near-RT RIC by forging the identity of a known, trusted E2 Node. If successful, sends falsified E2 RIC Indication messages (incorrect cell load, false UE measurements) to deceive xApps and disrupt RIC-driven RAN optimization. | Detection: Near-RT RIC must detect and reject an E2 Setup attempt from a forged identity. Validation: Validate mutual authentication and authorization mechanisms for E2 Node connections. Resilience: Assess xApp/RIC’s ability to detect and mitigate the impact of consistently false telemetry from a compromised E2 Node. |

| Exercise and Threat | Attack Scenario | Exercise Objectives |

|---|---|---|

| T1: E2 Service Model Parameter Tampering for xApp Deception [T-E2-02] Compromised E2 Nodes can tamper with E2 Service Model parameters or measurement reports without detection. | The attacker compromises the E2 Node (O-CU-UP) and then tampers with the active E2SM-KPM instance parameters associated with the xApp subscription. A compromised node systematically reports skewed KPM measurements to the Near-RT RIC, causing xApp to make suboptimal RAN optimization decisions based on falsified network state information. | Detection: Detect anomalies in E2SM KPM reports or E2 Node behavioral inconsistencies. Validation: Validate the integrity of the E2 message and the E2SM configuration protection mechanisms. Resilience: Assess xApp resilience to corrupted telemetry; trace tampering to the compromised E2 Node. |

| T2: xApp Configuration Tampering via Compromised SMO [O1 Security] Unauthorized modification of xApp configurations through compromised SMO or insecure O1/O2 interfaces. | An attacker gains unauthorized SMO access or intercepts/manipulates O1/O2 communication, then modifies critical xApp configuration parameters deployed on the Near-RT RIC. Altered configurations disable security features, change operational logic detrimentally, or cause excessive resource consumption. | Detection: Detect unauthorized or anomalous xApp configuration changes pushed via the SMO/O1 interface. Validation: Validate integrity and authorization mechanisms for xApp configuration management from SMO. Response: Test procedures for identifying tampered xApp configurations and restoring secure baseline. |

| Exercise and Threat | Attack Scenario | Exercise Objectives |

|---|---|---|

| R1: Disputed O1 Configuration Change to O-DU [O1 Auditability] Inability to definitively attribute critical configuration changes made via O1 to a specific authorized SMO user or process. | A critical O-DU configuration parameter (transmission power, cell barring) was altered via the O1 NETCONF command, allegedly by SMO, causing significant service disruption. The investigation uses secure audit logs, digital signatures on O1 messages (if implemented), and change management records to definitively prove or disprove the legitimate origin and authorization, ensuring non-repudiation. | Forensics: Forensically determine the authenticated source and verify the integrity of the disputed O1 configuration command. Validation: Evaluate the strength, completeness, and tamper-resistance of non-repudiation mechanisms (logging, digital signatures). Procedure Testing: Test incident response and forensic investigation playbooks for disputed configuration changes. |

| R2: Attributing Malicious xApp Activity in Near-RT RIC [xApp Accountability] Difficulty attributing detrimental RAN control actions to specific xApp instance due to insufficient logging, weak identity management, or lack of transactional integrity. | Harmful action (persistent unnecessary handovers, incorrect policy application causing resource starvation) observed in RAN, initially pointing to Near-RT RIC decision. Multiple xApps running with potentially causative capabilities. The exercise leverages fine-grained, cryptographically secured audit logs from the RIC platform, the xApp framework, and potentially signed xApp outputs to unambiguously attribute malicious/erroneous actions to specific xApp instances, versions, and triggering conditions. | Attribution: Definitively identify the specific xApp instance responsible for detrimental RAN control action using logs and telemetry. Log Sufficiency: Evaluate whether the RIC platform provides sufficiently detailed, secure, and tamper-evident logging to support non-repudiation. Forensic Readiness: Test the cyber-range’s forensic capabilities for collecting and analyzing data to trace xApp-initiated events. |

| R3: Verifying rApp Policy Provenance and Enforcement [Policy Traceability] Lack of a verifiable link between the rApp-defined policy in Non-RT RIC and its actual enforcement in Near-RT RIC/RAN, making attribution difficult. | rApp in Non-RT RIC defines network optimization policy (e.g., energy saving), translated into A1 directives for Near-RT RIC. Network KPIs later indicate that the policy was not enforced or enforced incorrectly. Exercise traces policy from rApp definition through A1 transformation/transmission, Near-RT RIC reception/interpretation, to attempted execution via xApps and E2. Uses logs, policy IDs, and interface traces to establish a clear chain of evidence determining if the rApp intent was correctly communicated and acted upon. | Traceability: Establish a verifiable audit trail for the rApp policy across the Non-RT RIC, A1, Near-RT RIC, xApps, and E2 actions. Integrity Verification: Confirm rApp policy intent not altered or misinterpreted during translation and transmission. Accountability: Determine the point of failure or misbehavior if the policy is not correctly enforced, ensuring correct attribution. |

| Exercise and Threat | Attack Scenario | Exercise Objectives |

|---|---|---|

| I1: xApp Data Exfiltration via Y1 Interface [T-Y1-01/02] xApp with excessive permissions or vulnerability accesses sensitive RAN analytics/UE data and exfiltrates via Y1 to unauthorized consumer. | Malicious/compromised xApp on Near-RT RIC leverages access to internal RIC data sources (UE measurements, cell statistics, RAI) to collect sensitive information. Attempts transmission over the Y1 interface to an external entity, either unauthorized to receive granular data or an attacker-controlled Y1 consumer, potentially bypassing Y1 service authorization checks or exploiting overly permissive data exposure policies. | Detection: Detect anomalous data flows or subscription patterns on the Y1 interface indicative of data exfiltration. Validation: Validate Near-RT RIC’s authorization and access control for Y1 service consumers and accessible data types. Prevention: Test Data Leakage Prevention (DLP) mechanisms or security policies for Y1 interface/xApp data access. |

| I2: O-Cloud VNF Snapshot Exposure [T-GEN-06, T-VM-C-03] Unauthorized access to VNF/CNF snapshots stored in O-Cloud, leading to leakage of sensitive configuration data, UE information, or cryptographic keys. | An attacker, through a compromised O-Cloud management account or by exploiting a storage infrastructure vulnerability, gains unauthorized access to VNF/CNF snapshots (O-DU/O-CU). Snapshots may contain sensitive information, including network configurations, security parameters, cached UE data, or private cryptographic keys embedded in VNF/CNF image or persistent storage volumes. Attacker exfiltrates snapshots or extracts sensitive data. | Detection: Detect unauthorized access attempts or anomalous activity related to VNF/CNF snapshot storage/management. Validation: Assess the security of data-at-rest encryption and access control for VNF/CNF snapshots in O-Cloud. Prevention/Hardening: Test procedures for secure VNF/CNF data sanitization before snapshotting and for secure lifecycle management. |

| Exercise and Threat | Attack Scenario | Exercise Objectives |

|---|---|---|

| D1: E2 Interface Flood against Near-RT RIC [E2 DoS] Attacker controlling compromised E2 Nodes or entity with E2 access floods Near-RT RIC with a high volume of E2 messages. | An attacker controlling one or more compromised E2 Nodes, or an entity with E2 interface access, generates a massive flood of legitimate or malformed E2 RIC Subscription Request or RIC Indication messages directed at the Near-RT RIC. The objective is to overwhelm RIC’s E2 interface processing capacity, consume CPU/memory resources, or fill message queues, thereby degrading performance or rendering it unresponsive to legitimate E2 traffic and xApp requests. | Detection: Detect E2 message floods and distinguish them from legitimate high-traffic conditions. Resilience: Evaluate the Near-RT RIC’s resilience to E2 overload, including resource management, rate limiting, and load shedding. Impact Assessment: Assess the impact of RIC DoS on xApp functionality and overall RAN control. |

| D2: O-RU Resource Exhaustion via Open Fronthaul [T-FRHAUL-03] Attacker sends malformed or excessive CUS-Plane/M-Plane traffic over Open Fronthaul to O-RU, aiming to disrupt operation or cause local DoS. | Attacker positioned to inject traffic onto Open Fronthaul link (via compromised O-DU or physical tap) sends high volume of malformed eCPRI packets (CUS-Plane) or excessive/invalid NETCONF messages (M-Plane) to target O-RU. The goal is to exhaust O-RU’s limited processing resources, corrupt the state, or trigger software faults, ultimately leading to O-RU becoming unresponsive or ceasing radio service for assigned cells. | Detection: Detect anomalous or malicious traffic on the Open Fronthaul interface targeting O-RU. Resilience: Evaluate O-RU’s robustness against malformed/high-volume Fronthaul traffic and ability to maintain stability/recover. Impact on O-DU: Assess O-DU’s ability to detect O-RU failure/misbehavior and initiate recovery or alarm procedures. |

| D3: xApp-Induced RIC Resource Starvation [T-xApp-02] Poorly designed, buggy, or malicious xApp consumes excessive computational or memory resources on Near-RT RIC, impacting other xApps or core RIC functions. | xApp deployed to Near-RT RIC with inefficient algorithms, memory leak, or intentionally malicious logic (e.g., fork bomb) starts consuming inordinate CPU cycles, memory, or shared RIC platform resources. Resource starvation leads to severe performance degradation or unresponsiveness of other legitimate xApps and critical RIC platform services, effectively causing DoS for RIC-driven RAN intelligence. | Detection: Detect xApp that causes resource exhaustion on the Near-RT RIC platform. Resource Management Validation: Evaluate the effectiveness of RIC’s resource management and sandboxing in isolating/constraining abusive xApps. Response/Mitigation: Test procedures for identifying, throttling, or terminating misbehaving xApp to restore stability. |

| Exercise and Threat | Attack Scenario | Exercise Objectives |

|---|---|---|

| E1: xApp Sandbox Escape for Lateral Movement within Near-RT RIC [T-xApp-03] Vulnerable xApp escapes the sandbox to gain unauthorized access to other xApps’ resources or core RIC platform functions. | The deployed xApp contains an exploitable vulnerability (in code, a shared library, or the xApp SDK/runtime). The attacker triggers a vulnerability, allowing xApp to break out of the designated sandbox/isolated execution environment. Once escaped, malicious xApp logic attempts to read/write memory of other xApps, interfere with core RIC services, or access RIC-internal APIs without authorization, effectively elevating privileges and potentially gaining control over broader RAN functions. | Detection: Detect xApp sandbox escape attempt and subsequent unauthorized internal RIC activity or API calls. Validation: Validate robustness and effectiveness of Near-RT RIC’s xApp sandboxing, process isolation, and permission mechanisms. Containment: Test the RIC platform’s ability to contain or terminate xApp exhibiting privilege-escalation attack behavior. |

| E2: O-Cloud Container Escape Affecting Hosted O-RAN NFs [T-VM-C-01/02] Attacker exploits container vulnerability in O-RAN CNF (e.g., O-DU) to escape to the underlying O-Cloud host node, gaining broader access. | The attacker exploits a known or zero-day vulnerability in the container runtime (Docker, CRI-O) or the guest OS kernel of an O-Cloud worker node hosting an O-RAN CNF (O-DU). An exploit allows an attacker’s code, initially running within O-DU CNF, to escape container isolation boundaries and gain privileged access (root) to the underlying O-Cloud worker node host OS. From compromised host, attacker attempts to access/manipulate other CNFs on same node or probe/attack O-Cloud infrastructure management components. | Detection: Detect container escape attempt from compromised O-RAN CNF and subsequent privileged activity on O-Cloud host. Validation: Validate the effectiveness of O-Cloud host hardening, container isolation technologies (seccomp, AppArmor, gVisor), and runtime IDS. Containment: Assess the ability to contain the breach to the compromised host and prevent lateral movement to other CNFs or O-Cloud services. |

| E3: O1 Interface Vulnerability Exploitation for SMO Admin Access [T-O-RAN-06] An entity with O1 access exploits a vulnerability in an O-RAN NF’s O1 interface implementation (e.g., NETCONF server), leading to privileged access on the NF or SMO. | The attacker, having gained initial (possibly low-privileged) O1 management network access, identifies and exploits a software vulnerability (buffer overflow, command injection) in the O1 interface implementation of an O-RAN NF (O-CU or Near-RT RIC). Successful exploitation allows arbitrary code execution on the targeted NF with elevated privileges. The attacker then attempts to leverage the compromised NF to pivot and gain administrative access to the SMO, potentially by stealing SMO credentials stored on the NF or using the NF as a trusted relay for malicious commands to the SMO. | Exploitation and Detection: Successfully exploit the simulated O1 vulnerability on the target NF and detect the exploitation through the NF or a network-based IDS/IPS. Privilege Escalation Detection: Detect the attacker’s attempts to escalate privileges on the compromised NF and pivot towards the SMO. Validation of Hardening: Evaluate the hardening of O-RAN NFs against O1 attacks and the segmentation between the O1 management network and other control planes. |

Appendix A.2. Detailed Exercise Profiles by STRIDE Category

Appendix A.3. O-RAN Threat Context Index

Appendix A.4. Resource Profile Definitions

- Low: Basic component emulation with minimal control plane traffic (2–5 containers, <1 Gbps).

- Medium: Partial O-RAN deployment with 1 RIC and a few E2 Nodes, moderate control plane traffic (5–10 containers, 1–5 Gbps).

- High: Multiple RICs, dozens of nodes, and/or significant data plane traffic generation (10–20 containers, >5 Gbps, specialized hardware may be needed).

- Federated: Requires instantiation across at least two distinct CR nodes with inter-node coordination.
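The tiers above can be read as a simple classification rule. The following is a minimal Python sketch, not part of the framework itself: the names `ResourceProfile` and `classify_profile` are hypothetical, and because the listed ranges touch at their edges (5 and 10 containers, 1 and 5 Gbps), the tie-breaking shown here is an assumption.

```python
from enum import Enum


class ResourceProfile(Enum):
    LOW = "Low"
    MEDIUM = "Medium"
    HIGH = "High"
    FEDERATED = "Federated"


def classify_profile(containers: int, peak_gbps: float, cr_nodes: int = 1) -> ResourceProfile:
    """Map an exercise footprint to one of the Appendix A.4 profiles.

    Assumptions (not stated in the text): the more demanding of the two
    dimensions decides the tier, and 5 or 10 containers fall into the
    lower of the two adjacent tiers.
    """
    if cr_nodes >= 2:
        # "at least two distinct CR nodes" makes the exercise Federated
        return ResourceProfile.FEDERATED
    if containers > 10 or peak_gbps > 5:
        return ResourceProfile.HIGH
    if containers > 5 or peak_gbps >= 1:
        return ResourceProfile.MEDIUM
    return ResourceProfile.LOW
```

For example, an exercise with four containers but 6 Gbps of generated traffic would be classified as High under this reading, since traffic volume alone exceeds the Medium tier.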

Appendix A.5. Required Next-Generation Cyber-Range Capabilities

Appendix A.5.1. Core Capabilities (Required by All Exercises)

- High-Fidelity O-RAN Emulation Layer: Accurate protocol implementation (E2AP, A1AP, O1 NETCONF/YANG, Open Fronthaul), realistic timing and sequence behavior, security mechanism emulation (authentication handshakes, TLS/IPsec).

- Component Emulation Layer: Near-RT RIC platform with xApp execution framework; Non-RT RIC with rApp support; E2 Node emulators (O-CU, O-DU, O-RU) with configurable behavior; SMO with policy management functions.

- Threat Injection Engine: Orchestrated attack sequence execution; interface-specific attack primitive library (spoofing, tampering, flooding); parameterized attack scenario definition.

- Telemetry and Monitoring Layer: Deep packet inspection for O-RAN interfaces; component-level logging (authentication events, policy decisions, message processing); security event correlation and analysis.

- Network Emulation Layer: Virtual network topology creation; link characteristics simulation (latency, bandwidth, loss); traffic capture and injection capabilities.

Appendix A.5.2. Exercise-Specific Capability Requirements

Appendix A.5.3. Mapping to Framework Architecture

- High-Fidelity O-RAN Emulation, [E2SM-LIB], [A1-POL], [Y1-FMK] → Component Emulation Layer

- Threat Injection Engine, [STATE-MANIP] → Orchestration and Control Layer + Threat Injection Module

- Telemetry and Monitoring, [FORENSIC], [CRYPTO-LOG], [DLP] → Telemetry Collection and Analysis Layer

- Network Emulation, [HR-FH], [HR-E2], [NET-SEG] → Network Emulation Layer

- [AI-ML], [POL-ENG] → AI/ML Security Testing Layer

- [ZTA] → Zero Trust Architecture Validation Module

- [VIRT-CLOUD], [RIC-RES] → Infrastructure Emulation Layer

- [TIMING] → Specialized Hardware/Timing Layer
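The tag-to-layer mapping above lends itself to a lookup table that reports which framework layers a given exercise exercises. The sketch below transcribes the bracketed tags from the list; the function name `layers_for` and the choice to reject unknown tags are illustrative assumptions.

```python
from typing import Iterable, List

# Capability tag -> framework layer, transcribed from the mapping list above.
LAYER_BY_CAPABILITY = {
    "E2SM-LIB": "Component Emulation Layer",
    "A1-POL": "Component Emulation Layer",
    "Y1-FMK": "Component Emulation Layer",
    "STATE-MANIP": "Orchestration and Control Layer + Threat Injection Module",
    "FORENSIC": "Telemetry Collection and Analysis Layer",
    "CRYPTO-LOG": "Telemetry Collection and Analysis Layer",
    "DLP": "Telemetry Collection and Analysis Layer",
    "HR-FH": "Network Emulation Layer",
    "HR-E2": "Network Emulation Layer",
    "NET-SEG": "Network Emulation Layer",
    "AI-ML": "AI/ML Security Testing Layer",
    "POL-ENG": "AI/ML Security Testing Layer",
    "ZTA": "Zero Trust Architecture Validation Module",
    "VIRT-CLOUD": "Infrastructure Emulation Layer",
    "RIC-RES": "Infrastructure Emulation Layer",
    "TIMING": "Specialized Hardware/Timing Layer",
}


def layers_for(capability_tags: Iterable[str]) -> List[str]:
    """Return the sorted, de-duplicated layers needed for the given tags."""
    tags = list(capability_tags)
    unknown = [t for t in tags if t not in LAYER_BY_CAPABILITY]
    if unknown:
        # Assumption: an unmapped tag is treated as a definition error.
        raise KeyError(f"unmapped capability tags: {unknown}")
    return sorted({LAYER_BY_CAPABILITY[t] for t in tags})
```

An exercise tagged `[ZTA]`, `[HR-E2]`, and `[NET-SEG]` would therefore resolve to two layers: the Network Emulation Layer and the Zero Trust Architecture Validation Module.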

Appendix B. Conceptual Exercise Definition Schema Details

Appendix B.1. Core Exercise Metadata

- exerciseID: (String, Unique) Globally unique identifier for the exercise.

- exerciseVersion: (String) Version number for tracking updates.

- title: (String) Human-readable name of the exercise.

- description: (Text) A short summary of the exercise’s purpose and scope.

- author: (String) Creator(s) of the exercise definition.

- keywords: (List of Strings) Tags for categorizing and searching (e.g., “O-RAN”, “6G”, “DoS”, “AI_Security”, “ZTA”, “Federated_FL”).

- difficultyLevel: (Enum: Novice, Intermediate, Advanced, Expert_6G).
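As an illustration only, the metadata fields above could be carried by a small typed record. The schema is conceptual and does not prescribe a serialization, so the dataclass below, the `register` helper, and the in-memory catalog are assumptions made for the sketch; only the field names and enum values come from the list.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, List


class DifficultyLevel(Enum):
    NOVICE = "Novice"
    INTERMEDIATE = "Intermediate"
    ADVANCED = "Advanced"
    EXPERT_6G = "Expert_6G"


@dataclass(frozen=True)
class ExerciseMetadata:
    exerciseID: str
    exerciseVersion: str
    title: str
    description: str
    author: str
    difficultyLevel: DifficultyLevel
    keywords: List[str] = field(default_factory=list)


def register(catalog: Dict[str, ExerciseMetadata], meta: ExerciseMetadata) -> None:
    """Add an exercise to a catalog, enforcing the uniqueness of exerciseID."""
    if meta.exerciseID in catalog:
        raise ValueError(f"duplicate exerciseID: {meta.exerciseID}")
    catalog[meta.exerciseID] = meta
```

Here uniqueness is enforced only within one catalog; a globally unique identifier, as the field description requires, would need a shared registry or an identifier scheme such as UUIDs.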

Appendix B.2. Learning and Validation Objectives

- primaryLearningObjectives: (List of Objects: {objectiveID, description, targetRoleIDs})

- technicalValidationObjectives: (List of Objects: {objectiveID, description, targetComponentIDs})

- successCriteria: (List of Objects: {criterionID, description, linkedObjectiveIDs})

- kpiDefinitions: (List of Objects: {kpiID, metricName, metricSource_TelemetryPath, targetValueOrCondition})
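To make the role of `kpiDefinitions` concrete, the sketch below evaluates one KPI against collected telemetry. The schema does not define the format of `metricSource_TelemetryPath` or `targetValueOrCondition`; the dotted path and the `(operator, threshold)` pair used here are assumptions chosen for the example, as are the function names.

```python
import operator
from typing import Any, Dict

# Assumed encoding of targetValueOrCondition: (comparison symbol, threshold).
_COMPARATORS = {
    "<": operator.lt,
    "<=": operator.le,
    ">": operator.gt,
    ">=": operator.ge,
    "==": operator.eq,
}


def resolve(telemetry: Dict[str, Any], path: str) -> Any:
    """Follow a dotted path (assumed format) through nested telemetry dicts."""
    node: Any = telemetry
    for key in path.split("."):
        node = node[key]
    return node


def kpi_met(kpi: Dict[str, Any], telemetry: Dict[str, Any]) -> bool:
    """Check whether the measured value satisfies the KPI's target condition."""
    symbol, threshold = kpi["targetValueOrCondition"]
    measured = resolve(telemetry, kpi["metricSource_TelemetryPath"])
    return _COMPARATORS[symbol](measured, threshold)
```

A success criterion could then be considered satisfied when every KPI attached to its linked objectives evaluates to true, although the schema leaves that aggregation rule open.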

Appendix B.3. Emulated Environment and Topology

- topologyID_Reference: (String, Optional) Link to a reusable base topology.

- components: (List of ComponentDefinition Objects)

- ComponentDefinition: {componentID, componentType, softwareStack, initialConfigurationData, vulnerabilityProfile, etc.}

- vulnerabilityProfile: (List of Strings) Specific CVEs or named weaknesses to enable (e.g., [“CVE-2023-1234”, “Weak_Default_Credentials”]). This field instructs the Component Emulation Layer to actively enable specific vulnerabilities in the emulated software stack, creating a predictable and testable attack surface.

- energyProfileModelID: (String, Optional, 6G) Reference to a model describing power consumption. This forward-looking field is essential for researching the trade-offs and interdependencies between energy-saving mechanisms and security posture in 6G.

- interconnections: (List of LinkDefinition Objects: {linkID, source, target, interfaceType, linkFlavor, securityProtocolsRequired})
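A topology definition of this shape is only usable if every link endpoint refers to a declared component. The following sketch shows one way such a consistency check might look; the dataclasses carry a subset of the listed fields, and `dangling_links` is a hypothetical helper rather than something the schema defines.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ComponentDefinition:
    componentID: str
    componentType: str
    softwareStack: str = ""
    # CVEs or named weaknesses the emulation layer should enable
    vulnerabilityProfile: List[str] = field(default_factory=list)


@dataclass
class LinkDefinition:
    linkID: str
    source: str
    target: str
    interfaceType: str
    securityProtocolsRequired: List[str] = field(default_factory=list)


def dangling_links(
    components: List[ComponentDefinition], links: List[LinkDefinition]
) -> List[str]:
    """Return the linkIDs whose source or target is not a declared component."""
    known = {c.componentID for c in components}
    return [
        link.linkID
        for link in links
        if link.source not in known or link.target not in known
    ]
```

Running such a check before instantiation would catch, for instance, an E2 link that points at a RIC which was removed from `components` during editing.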

Appendix B.4. Federated Cyber-Range Specifics

- federationMode: (Enum: No_Federation, Strict_Node_Assignment, Broker_Optimized_Placement).

- nodeAffinity: (List of Objects: {componentID_pattern, targetCRN_ID, type}) This is critical for ensuring technical feasibility, for instance by forcing a data-plane-intensive O-DU and its connected O-RUs to be co-located on the same high-performance node, avoiding latency constraints that cannot be met across nodes.

- interNodeLinkSpecs: (List of Objects: {linkID_ref, maxLatency_ms, minBandwidth_Mbps})
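The effect of `nodeAffinity` can be sketched as a placement pass followed by a check for links that end up spanning two cyber-range nodes. This is a minimal illustration under stated assumptions: `componentID_pattern` is interpreted as a shell-style glob, the `type` field is ignored because its semantics are not defined here, and the function names and node identifiers are hypothetical.

```python
from fnmatch import fnmatchcase
from typing import Dict, List, Tuple


def place_components(
    component_ids: List[str], node_affinity: List[dict], default_node: str
) -> Dict[str, str]:
    """Assign each component to a CR node; the first matching rule wins."""
    placement = {}
    for cid in component_ids:
        placement[cid] = default_node
        for rule in node_affinity:
            # Assumption: patterns are globs such as "o-ru-*".
            if fnmatchcase(cid, rule["componentID_pattern"]):
                placement[cid] = rule["targetCRN_ID"]
                break
    return placement


def cross_node_links(
    links: List[Tuple[str, str]], placement: Dict[str, str]
) -> List[Tuple[str, str]]:
    """Return (source, target) pairs whose endpoints sit on different nodes."""
    return [(s, t) for s, t in links if placement[s] != placement[t]]
```

Only the links returned by `cross_node_links` would need to be validated against `interNodeLinkSpecs`; co-located pairs, such as an O-DU and its O-RUs pinned to the same node, never traverse an inter-node link.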

Appendix B.5. Scenario Definition and Execution Logic

- storyline: (Text, Optional) Narrative for participants.

- scenarioPhases: (List of PhaseDefinition Objects: {phaseID, description, entryCondition, exitCondition})

- attackerModel: (Object: {attackerProfileID, attackerType, objectives, ttpsEmulated}) This allows exercises to be mapped directly to real-world threat actor profiles and known adversary tactics, techniques, and procedures (e.g., from MITRE ATT&CK).

- dynamicAdaptationRules: (List of Rule Objects: {ruleID, ifCondition, thenAction}) This defines the core logic for the exercise’s event-condition-action engine, allowing the Orchestrator to create adaptive scenarios that react to participant actions or telemetry events.
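The event-condition-action behaviour described for `dynamicAdaptationRules` can be illustrated with a very small rule evaluator. The schema does not fix how `ifCondition` and `thenAction` are expressed; in this sketch they are assumed to be a Python predicate and the name of an entry in an action registry, and the rule, event type, and action shown are invented for the example.

```python
from typing import Any, Callable, Dict, List

Event = Dict[str, Any]


def fire_rules(
    rules: List[Dict[str, Any]],
    event: Event,
    actions: Dict[str, Callable[[Event], None]],
) -> List[str]:
    """Run thenAction for every rule whose ifCondition holds for this event.

    Returns the ruleIDs that fired, in rule order.
    """
    fired = []
    for rule in rules:
        if rule["ifCondition"](event):
            actions[rule["thenAction"]](event)
            fired.append(rule["ruleID"])
    return fired


# Hypothetical rule: escalate the scenario once defenders block the xApp.
log: List[str] = []
rules = [
    {
        "ruleID": "R1",
        "ifCondition": lambda e: e["type"] == "xapp_blocked",
        "thenAction": "launch_second_wave",
    }
]
actions = {
    "launch_second_wave": lambda e: log.append("second wave after " + e["type"])
}
```

Keeping actions in a named registry, rather than embedding callables in the rule, is one way to let the rule list stay serializable while the Orchestrator owns the executable side.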

Appendix B.6. Participant Roles and Interaction

- roles: (List of RoleDefinition Objects: {roleID, description, permissions_CR, toolsAvailable_CR})

- teamStructure: (List of Team Objects: {teamID, roleIDs_in_team})

Appendix B.7. Scoring and Assessment

- overallScoringModel: (Enum: PointsBased, ObjectiveBased, Hybrid).

- scoringRubric: (List of MetricDefinition Objects: {metricID, description, dataSource, calculationLogic, weight})

- feedbackRules: (List of Objects: {condition, feedbackMessage, targetRoleID})
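One plausible reading of the `scoringRubric` fields is a weighted average of per-metric scores. The sketch below assumes that `calculationLogic` is a function mapping a raw measurement to a score in [0, 1] and that `dataSource` is a key into a dictionary of measurements; neither encoding is specified by the schema, and `weighted_score` is a hypothetical name.

```python
from typing import Any, Callable, Dict, List


def weighted_score(rubric: List[Dict[str, Any]], measurements: Dict[str, Any]) -> float:
    """Combine per-metric scores into one value in [0, 1] using rubric weights."""
    total_weight = sum(metric["weight"] for metric in rubric)
    if total_weight <= 0:
        raise ValueError("rubric weights must sum to a positive value")
    weighted_sum = 0.0
    for metric in rubric:
        raw_value = measurements[metric["dataSource"]]
        score: float = metric["calculationLogic"](raw_value)  # assumed in [0, 1]
        weighted_sum += metric["weight"] * score
    return weighted_sum / total_weight
```

With a detection metric of weight 3 that is fully met and a containment metric of weight 1 that is missed, the overall result under this reading would be 0.75.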

Appendix B.8. Gamification Elements

- leaderboardConfig: (Object: {enabled, type, anonymity})

- badges: (List of Badge Objects: {badgeID, name, description, unlockCriteria})

Appendix B.9. Visualization and Reporting

- defaultDashboardLayouts_byRole: (List of Objects: {roleID_ref, dashboardDefinitionID})

- afterActionReport_TemplateID: (String) Reference to an AAR template.

Appendix B.10. Resource Allocation Meta-Data

- exercisePriority: (Enum: Critical_RealTime, High_Research, Medium_Training, Low_DevTest).

- optimizationBias: (Enum: Minimize_Latency, Maximize_Fidelity, Minimize_Cost, Minimize_Energy_6G). This provides a hint to the Orchestrator on how to resolve resource contention, which is particularly important in a federated environment with heterogeneous capabilities and costs.

- preemptionPolicy: (Enum: Not_Preemptible, Preemptible_With_SaveState, Preemptible_Terminate).
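How `exercisePriority` and `preemptionPolicy` might interact during resource contention can be sketched as follows. The ranking of the priority values (from Low_DevTest up to Critical_RealTime) is inferred from their names and listing order, which is an assumption, and `preemption_candidates` is a hypothetical helper, not an Orchestrator API.

```python
from enum import IntEnum
from typing import Dict, List


class ExercisePriority(IntEnum):
    # Assumed ranking: a higher value means a more important exercise.
    Low_DevTest = 0
    Medium_Training = 1
    High_Research = 2
    Critical_RealTime = 3


def preemption_candidates(
    running: List[Dict[str, str]], incoming: ExercisePriority
) -> List[Dict[str, str]]:
    """List running exercises that may yield to an incoming one.

    Only exercises that are preemptible and strictly lower in priority
    qualify; the lowest-priority exercises are offered first.
    """
    eligible = [
        ex
        for ex in running
        if ex["preemptionPolicy"] != "Not_Preemptible"
        and ExercisePriority[ex["exercisePriority"]] < incoming
    ]
    return sorted(eligible, key=lambda ex: ExercisePriority[ex["exercisePriority"]])
```

An Orchestrator could then save state for candidates marked `Preemptible_With_SaveState` and simply stop those marked `Preemptible_Terminate`.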

Appendix B.11. Compliance and Legal

- rulesOfEngagement_Text: (Text)

- dataHandlingPolicyID: (String, Optional)

- loggingPolicy_Audit: (Enum: Anonymous, Pseudonymous, Full_Attribution).
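The three `loggingPolicy_Audit` values imply different treatments of the participant identity written to audit logs. The sketch below shows one possible interpretation using a keyed hash for the pseudonymous case; the function name, the `p-` prefix, the truncation length, and the use of HMAC-SHA-256 are all assumptions made for illustration.

```python
import hashlib
import hmac


def audit_actor(user_id: str, policy: str, secret: bytes) -> str:
    """Return the actor label to record in the audit log under a given policy."""
    if policy == "Anonymous":
        return "anonymous"
    if policy == "Pseudonymous":
        # Keyed hash: stable per user, not reversible without the secret.
        digest = hmac.new(secret, user_id.encode("utf-8"), hashlib.sha256).hexdigest()
        return "p-" + digest[:16]
    if policy == "Full_Attribution":
        return user_id
    raise ValueError(f"unknown loggingPolicy_Audit: {policy}")
```

A keyed hash keeps one participant's actions linkable across an exercise, which scoring and after-action review need, while re-identification requires access to the secret held by the range operator.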

References

- Hexa-X-II Project. Final Overall 6G System Design. 2025. Available online: https://hexa-x-ii.eu/results/ (accessed on 10 September 2025).

- 6G-IA Security Working Group. 6G Security: Position Paper; Technical report; 6G SNS Industry Association: Brussels, Belgium, 2025.

- O-RAN Alliance WG11. O-RAN Study on Zero Trust Architecture for O-RAN. 2025. Available online: https://specifications.o-ran.org/specifications (accessed on 11 September 2025).

- Yang, L.; Naser, S.; Shami, A.; Muhaidat, S.; Ong, L.; Debbah, M. Towards Zero Touch Networks: Cross-Layer Automated Security Solutions for 6G Wireless Networks. arXiv 2025, arXiv:2502.20627.

- Saeed, M.M.; Saeed, R.A.; Hasan, M.K.; Ali, E.S.; Mazha, T.; Shahzad, T.; Khan, S.; Hamam, H. A comprehensive survey on 6G-security: Physical connection and service layers. Discov. Internet Things 2025, 5, 28.

- European Union Agency for Cybersecurity. Threat Landscape for 5G Networks. 2022. Available online: https://www.enisa.europa.eu/publications/enisa-threat-landscape-report-for-5g-networks (accessed on 11 September 2025).

- Suomalainen, J.; Ahmad, I.; Shajan, A.; Savunen, T. Cybersecurity for tactical 6G networks: Threats, architecture, and intelligence. Future Gener. Comput. Syst. 2025, 162, 107500.

- Ericsson. Zero Trust Architecture for Evolving Radio Access Networks; White paper; Ericsson: Stockholm, Sweden, 2023.

- Chen, X.; Feng, W.; Ge, N.; Zhang, Y. Zero Trust Architecture for 6G Security. arXiv 2022, arXiv:2203.07716.

- Rose, S.; Borchert, O.; Mitchell, S.; Connelly, S. Zero Trust Architecture; Special Publication 800-207; National Institute of Standards and Technology: Gaithersburg, MD, USA, 2020.

- Yamin, M.M.; Katt, B.; Gkioulos, V. Cyber ranges and security testbeds: Scenarios, functions, tools and architecture. Comput. Secur. 2020, 88, 101636.

- Leitner, M.; Frank, M.; Hotwagner, W.; Langner, G.; Maurhart, O.; Pahi, T.; Reuter, L.; Skopik, F.; Smith, P.; Warum, M. AIT Cyber Range: Flexible Cyber Security Environment for Exercises, Training and Research. In Proceedings of the 2020 European Interdisciplinary Cybersecurity Conference, Rennes, France, 17–19 November 2020; pp. 1–6.

- Ukwandu, E.; Farah, M.; Hindy, H.; Brosset, D.; Kavallieros, D.; Tachtatzis, C.; Bures, M.; Andonovic, I.; Bellekens, X. A Review of Cyber-Ranges and Test-Beds: Current and Future Trends. Sensors 2020, 20, 7148.

- Čeleda, P.; Cegan, J.; Vykopal, J.; Tovarnák, D. KYPO—A Platform for Cyber Defence Exercises. 2015. Available online: https://api.semanticscholar.org/CorpusID:85440478 (accessed on 11 September 2025).

- Vykopal, J.; Ošlejšek, R.; Čeleda, P.; Vizváry, M.; Tovarňák, D. KYPO Cyber Range: Design and Use Cases. In Proceedings of the 12th International Conference on Software Technologies—ICSOFT, Madrid, Spain, 24–26 July 2017; SciTePress: Setúbal, Portugal, 2017; pp. 310–321.

- National Institute of Standards and Technology. The Cyber Range: A Guide. 2023. Available online: https://www.nist.gov/publications (accessed on 15 June 2025).

- CybExer Technologies. Cyber Ranges. 2025. Available online: https://cybexer.com/solutions/cyber-ranges (accessed on 19 March 2025).

- Airbus Defence and Space. Airbus CyberRange. 2025. Available online: https://cyber.airbus.com/en/products/cyberrange (accessed on 19 March 2025).

- European Telecommunications Standards Institute. Network Functions Virtualisation (NFV) Package Security Specification. 2023. Available online: https://www.etsi.org/deliver/etsi_gs/NFV-SEC/001_099/021/04.05.01_60/gs_NFV-SEC021v040501p.pdf (accessed on 25 March 2025).

- Khan, R.; Kumar, P.; Jayakody, D.N.K.; Liyanage, M. A Survey on Security and Privacy of 5G Technologies: Potential Solutions, Recent Advancements, and Future Directions. IEEE Commun. Surv. Tutor. 2020, 22, 196–248.

- Rost, P.; Banchs, A.; Berberana, I.; Breitbach, M.; Doll, M.; Droste, H.; Mannweiler, C.; Puente, M.A.; Samdanis, K.; Sayadi, B. Mobile network architecture evolution toward 5G. IEEE Commun. Mag. 2016, 54, 84–91.

- European Union Agency for Cybersecurity (ENISA). 5G Cybersecurity Standards. 2022. Available online: https://www.enisa.europa.eu/publications/5g-cybersecurity-standards (accessed on 9 May 2025).

- Liu, P.; Lee, K.; Cintrón, F.J.; Wuthier, S.; Savaliya, B.; Montgomery, D.; Rouil, R. Blueprint for Deploying 5G O-RAN Testbeds: A Guide to Using Diverse O-RAN Software Stacks; Technical Report NIST TN 2311; National Institute of Standards and Technology: Gaithersburg, MD, USA, 2024.

- SPIDER H2020 Project. The SPIDER concept: A Cyber Range as a Service platform. In Proceedings of the European Conference on Networks and Communications (EUCNC2020), Virtual, 8–11 June 2021.

- Rebecchi, F.; Pastor, A.; Mozo, A.; Lombardo, C.; Bruschi, R.; Aliferis, I.; Doriguzzi-Corin, R.; Gouvas, P.; Alvarez Romero, A.; Angelogianni, A.; et al. A Digital Twin for the 5G Era: The SPIDER Cyber Range. In Proceedings of the 2022 IEEE 23rd International Symposium on a World of Wireless, Mobile and Multimedia Networks (WoWMoM), Belfast, UK, 14–17 June 2022; pp. 567–572.

- SPIDER H2020 Project. Pilot Use Case Scenarios and Architecture of the SPIDER Project. 2021. Available online: https://k3ylabs.com/research-development/projects/h2020-spider/ (accessed on 5 January 2025).

- PAWR. Platforms for Advanced Wireless Research. 2025. Available online: https://advancedwireless.org/ (accessed on 9 May 2025).

- Bonati, L.; Polese, M.; D’Oro, S.; Basagni, S.; Melodia, T. OpenRAN Gym: AI/ML Development, Data Collection, and Testing for O-RAN on PAWR Platforms. Comput. Netw. 2023, 220, 109502.

- Polese, M.; Bonati, L.; D’Oro, S.; Johari, P.; Villa, D.; Velumani, S.; Gangula, R.; Tsampazi, M.; Robinson, C.P.; Gemmi, G.; et al. Colosseum: The Open RAN Digital Twin. arXiv 2024, arXiv:2404.17317.

- Upadhyaya, P.S.; Tripathi, N.; Gaeddert, J.; Reed, J.H. Open AI Cellular (OAIC): An Open Source 5G O-RAN Testbed for Design and Testing of AI-Based RAN Management Algorithms. IEEE Netw. 2023, 37, 7–15.

- Open5GS Project. Open Source 5G Core Network Implementation. 2025. Available online: https://open5gs.org/ (accessed on 9 May 2025).

- free5GC Project. Open-Source 5G Core Network. 2024. Available online: https://free5gc.org/ (accessed on 11 October 2025).

- Software Radio Systems (SRS). srsRAN Project—Open Source 4G/5G Software Radio Suite. Available online: https://www.srsran.com/ (accessed on 9 May 2025).

- 5G Security Test Bed. 5G Security Test Bed Validates Security of HTTP/2 Protocol for 5G. 2024. Available online: https://5gsecuritytestbed.com/reports/ (accessed on 19 June 2025).

- 5G Security Test Bed. Securing 5G: MTLS Security on 5G Network SBI Test Report. 2023. Available online: https://5gsecuritytestbed.com/reports/ (accessed on 19 June 2025).

- 5G Security Test Bed. Securing 5G: Network Slicing Phase 2 Test Report. 2024. Available online: https://5gsecuritytestbed.com/reports/ (accessed on 19 June 2025).

- 5G Security Test Bed. Securing 5G: CSRIC VII 5G Standalone Network Test Report. 2023. Available online: https://5gsecuritytestbed.com/reports/ (accessed on 19 June 2025).

- Keysight Technologies. Netsim. 2025. Available online: https://www.keysight.com/ (accessed on 19 June 2025).

- Spirent Communications. Open RAN Testing—Comprehensive Test and Assurance Solutions. 2025. Available online: https://www.spirent.com/products/open-ran-testing (accessed on 16 June 2025).

- ETSI Technical Committee CYBER. Critical Security Controls for Effective Cyber Defence in 5G Networks; TR 103 305-1; ETSI: Sophia Antipolis, France, 2021.

- European Union Agency for Cybersecurity. Security in 5G Specifications. 2023. Available online: https://www.enisa.europa.eu/publications/security-in-5g-specifications (accessed on 13 June 2025).

- O-RAN Alliance WG11. O-RAN Security Threat Modeling and Risk Assessment. 2025. Available online: https://specifications.o-ran.org/specifications (accessed on 2 June 2025).

- O-RAN Alliance WG1. O-RAN Architecture Description. 2025. Available online: https://specifications.o-ran.org/specifications (accessed on 2 June 2025).

- O-RAN Work Group 3. O-RAN Near-RT RIC Architecture. 2025. Available online: https://specifications.o-ran.org/specifications (accessed on 2 June 2025).

- O-RAN Open Fronthaul Work Group. O-RAN Control, User and Synchronization Plane Specification. 2025. Available online: https://specifications.o-ran.org/specifications (accessed on 2 June 2025).

- O-RAN Working Group 6. O-RAN O2 Interface General Aspects and Principles. 2025. Available online: https://specifications.o-ran.org/specifications (accessed on 2 June 2025).

- O-RAN Alliance WG11. O-RAN Security Protocols Specifications. 2025. Available online: https://specifications.o-ran.org/specifications (accessed on 2 June 2025).

- Mavenir Systems. Open Architecture and Supply Chain Diversity: Securing Telecoms into the Future. 2023. Available online: https://www.mavenir.com/ (accessed on 29 June 2025).

- Abdelrazek, L. Exploring the Evolution of RAN Security Management. 2024. Available online: https://www.ericsson.com/en/blog/2024/6/exploring-the-evolution-of-ran-security-management (accessed on 2 June 2025).

- Nokia Corporation. Open RAN Security White Paper. 2025. Available online: https://www.nokia.com/asset/214354/ (accessed on 12 June 2025).

- Polese, M.; Bonati, L.; D’Oro, S.; Basagni, S.; Melodia, T. Understanding O-RAN: Architecture, Interfaces, Algorithms, Security, and Research Challenges. IEEE Commun. Surv. Tutor. 2023, 25, 1376–1411.

- Sapavath, N.N.; Kim, B.; Chowdhury, K.; Shah, V.K. Experimental Study of Adversarial Attacks on ML-based xApps in O-RAN. In Proceedings of the 17th ACM Conference on Security and Privacy in Wireless and Mobile Networks (WiSec), Seoul, Republic of Korea, 27–29 May 2024.

- Tang, B.; Shah, V.K.; Marojevic, V.; Reed, J.H. AI Testing Framework for Next-G O-RAN Networks: Requirements, Design, and Research Opportunities. IEEE Wirel. Commun. 2023, 30, 70–77.

- O-RAN Security Work Group. O-RAN Study on Security for Artificial Intelligence and Machine Learning (AI/ML) in O-RAN. 2025. Available online: https://specifications.o-ran.org/specifications (accessed on 12 June 2025).

- O-RAN Security Work Group. O-RAN Study on Security for O-Cloud. 2024. Available online: https://specifications.o-ran.org/specifications (accessed on 12 June 2025).

- National Telecommunications and Information Administration. Open RAN Security Report; Technical report; U.S. Department of Commerce: Washington, DC, USA, 2023.

- O-RAN Security Work Group. O-RAN Alliance Security Update 2025. Available online: https://www.o-ran.org/blog/o-ran-alliance-security-update-2025 (accessed on 12 September 2025).

- Lacava, A.; Bonati, L.; Mohamadi, N.; Gangula, R.; Kaltenberger, F.; Johari, P.; D’Oro, S.; Cuomo, F.; Polese, M.; Melodia, T. dApps: Enabling Real-Time AI-Based Open RAN Control. arXiv 2025, arXiv:2501.16502.

- Next G Alliance (ATIS). Roadmap to 6G: Security. 2022. Available online: https://nextgalliance.org/white_papers/roadmap-to-6g/ (accessed on 12 September 2025).

- Maxenti, S.; Shirkhani, R.; Elkael, M.; Bonati, L.; D’Oro, S.; Melodia, T.; Polese, M. AutoRAN: Automated and Zero-Touch Open RAN Systems. arXiv 2025, arXiv:2504.11233.

- O-RAN Alliance WG2. O-RAN Non-RT RIC: Architecture. 2024. Available online: https://specifications.o-ran.org/specifications (accessed on 12 September 2025).

- O-RAN Security Work Group. O-RAN Study on Security for Fronthaul CUS-Plane. 2025. Available online: https://specifications.o-ran.org/specifications (accessed on 12 September 2025).

- O-RAN Work Group 1. O-RAN Decoupled SMO Architecture. 2024. Available online: https://specifications.o-ran.org/specifications (accessed on 12 September 2025).

- O-RAN Security Work Group. O-RAN Study on Security for Service Management and Orchestration. 2025. Available online: https://specifications.o-ran.org/specifications (accessed on 12 September 2025).

- National Security Agency. Open Radio Access Network Security Considerations. 2021. Available online: https://www.cisa.gov/sites/default/files/publications/open-radio-access-network-security-considerations_508.pdf (accessed on 12 September 2025).

- O-RAN Security Focus Group. O-RAN Study on Security for Non-RT RIC. 2022. Available online: https://specifications.o-ran.org/specifications (accessed on 12 September 2025).

- O-RAN Security Work Group. O-RAN Study on Security for Near Real Time RIC and xApps 5.0. 2024. Available online: https://specifications.o-ran.org/specifications (accessed on 12 September 2025).

- O-RAN Security Work Group 4. O-RAN Study on O-RU Centralized User Management. 2025. Available online: https://specifications.o-ran.org/specifications (accessed on 12 September 2025).

- Saad, W.; Bennis, M.; Chen, M. A vision of 6G wireless systems: Applications, trends, technologies, and open research problems. IEEE Netw. 2019, 34, 134–142.

- Strinati, E.C.; Barbarossa, S.; Gonzalez-Jimenez, J.L.; Ktenas, D.; Cassiau, N.; Maret, L.; Dehos, C. 6G: The Next Frontier: From Holographic Messaging to Artificial Intelligence Using Subterahertz and Visible Light Communication. IEEE Veh. Technol. Mag. 2019, 14, 42–50.

- Rugeland, P.; Ericson, M.; Wikström, G.; Zou, Z.; Castaneda Cisneros, J.; Jain, A.; Bui, D.T.; Kerboeuf, S.; Outtagarts, A.; Verchère, D.; et al. A Holistic Flagship Towards the 6G Network Platform and System, to Inspire Digital Transformation, for the World to Act Together in Meeting Needs in Society and Ecosystems with Novel 6G Services. Hexa-X-II Project Deliverable D1.2, Version 1.1, 2023. Available online: https://hexa-x-ii.eu/results/ (accessed on 28 September 2025).

- Fang, H.; Wang, X.; Hanzo, L. Learning-Aided Physical Layer Authentication as an Intelligent Process. IEEE Trans. Commun. 2019, 67, 2260–2273.

- Gao, N.; Liu, Y.; Zhang, Q.; Li, X.; Jin, S. Let RFF Do the Talking: Large Language Model Enabled Lightweight RFFI for 6G Edge Intelligence. Sci. China Inf. Sci. 2025, 68, 170308.

- Zheng, H.; Gao, N.; Cai, D.; Jin, S.; Matthaiou, M. UAV Individual Identification via Distilled RF Fingerprints-Based LLM in ISAC Networks. arXiv 2025, arXiv:2508.12597.

- Zuo, Y.; Guo, J.; Gao, N.; Zhu, Y.; Jin, S.; Li, X. A Survey of Blockchain and Artificial Intelligence for 6G Wireless Communications. IEEE Commun. Surv. Tutor. 2023, 25, 2494–2528.

- Pathak, V.; Pandya, R.J.; Bhatia, V.; Lopez, O.A. Qualitative Survey on Artificial Intelligence Integrated Blockchain Approach for 6G and Beyond. IEEE Access 2023, 11, 105935–105981.

- Kalla, A.; De Alwis, C.; Porambage, P.; Gür, G.; Liyanage, M. A Survey on the Use of Blockchain for Future 6G: Technical Aspects, Use Cases, Challenges and Research Directions. J. Ind. Inf. Integr. 2022, 30, 100404.

- Ferrag, M.A.; Friha, O.; Maglaras, L.; Janicke, H.; Shu, L. Federated Deep Learning for Cyber Security in the Internet of Things: Concepts, Applications, and Experimental Analysis. IEEE Access 2021, 9, 138509–138542.

- ISO/IEC 27005:2022; Information Security, Cybersecurity and Privacy Protection—Guidance on Managing Information Security Risks. International Organization for Standardization: Geneva, Switzerland, 2022. Available online: https://www.iso.org/standard/80585.html (accessed on 28 September 2025).

- U.S. National Institute of Standards and Technology. Guide for Conducting Risk Assessments. SP 800-30 Revision 1. 2012. Available online: https://nvlpubs.nist.gov/nistpubs/legacy/sp/nistspecialpublication800-30r1.pdf (accessed on 5 April 2025).

- O-RAN Working Group 6. O-RAN Cloud Architecture and Deployment Scenarios for O-RAN Virtualized RAN. 2025. Available online: https://specifications.o-ran.org/specifications (accessed on 5 April 2025).

- O-RAN Open X-Haul Transport Work Group. O-RAN Synchronization Architecture and Solution Specification. 2025. Available online: https://specifications.o-ran.org/specifications (accessed on 5 April 2025).

- Bonati, L.; Polese, M.; D’Oro, S.; del Prever, P.B.; Melodia, T. 5G-CT: Automated Deployment and Over-the-Air Testing of End-to-End Open Radio Access Networks. arXiv 2023, arXiv:2311.03206.

- Villa, D.; Khan, I.; Kaltenberger, F.; Hedberg, N.; Soares da Silva, R.; Maxenti, S.; Bonati, L.; Kelkar, A.; Dick, C.; Baena, E.; et al. X5G: An Open, Programmable, Multi-vendor, End-to-end, Private 5G O-RAN Testbed with NVIDIA ARC and OpenAirInterface. IEEE Trans. Mob. Comput. 2025, 24, 11305–11322.

- European Defence Agency. Cyber Ranges Federation Project Reaches New Milestone. 2018. Available online: https://eda.europa.eu/news-and-events/news/2018/09/13/cyber-ranges-federation-project-reaches-new-milestone (accessed on 5 February 2025).

- Sharkov, G.; Todorova, C.; Koykov, G.; Nikolov, I. Towards a Robust and Scalable Cyber Range Federation for Sectoral Cyber/Hybrid Exercising: The Red Ranger and ECHO Collaborative Experience. Inf. Secur. Int. J. 2022, 53, 287–302.

- Oikonomou, N.; Mengidis, N.; Spanopoulos-Karalexidis, M.; Voulgaridis, A.; Merialdo, M.; Raisr, I.; Hanson, K.; de La Vallée Poussin, P.; Tsikrika, T.; Vrochidis, S.; et al. ECHO federated cyber range: Towards next-generation scalable cyber ranges. In Proceedings of the 2021 IEEE International Conference on Cyber Security and Resilience (CSR), Rhodes, Greece, 26–28 July 2021; pp. 403–408.

- Anomali. What Are STIX/TAXII? Available online: https://www.cloudflare.com/learning/security/what-is-stix-and-taxii/ (accessed on 16 February 2025).

- Cybersecurity and Infrastructure Security Agency. Traffic Light Protocol (TLP) Definitions and Usage. 2022. Available online: https://www.cisa.gov/news-events/news/traffic-light-protocol-tlp-definitions-and-usage (accessed on 16 February 2025).

- 3rd Generation Partnership Project. Security Architecture and Procedures for 5G System; Technical Specification 33.501; European Telecommunications Standards Institute: Sophia Antipolis, France, 2020.

- European Telecommunications Standards Institute. MEC Security; Status of Standards Support and Future Evolutions. 2022. Available online: https://www.etsi.org/images/files/ETSIWhitePapers/ETSI-WP-46-2nd-Ed-MEC-security.pdf (accessed on 16 February 2025).

- Kairouz, P.; McMahan, H.B.; Avent, B.; Bellet, A.; Bennis, M.; Bhagoji, A.N.; Bonawitz, K.; Charles, Z.; Cormode, G.; Cummings, R.; et al. Advances and Open Problems in Federated Learning. Found. Trends® Mach. Learn. 2021, 14, 1–210.

- Groen, J.; D’Oro, S.; Demir, U.; Bonati, L.; Polese, M.; Melodia, T.; Chowdhury, K. Securing O-RAN Open Interfaces. IEEE Trans. Mob. Comput. 2024, 23, 11265–11277.

- Stouffer, K.; Pillitteri, V.; Lightman, S.; Abrams, M.; Hahn, A. Guide to Industrial Control Systems (ICS) Security; Special Publication 800-82 Rev. 3; National Institute of Standards and Technology: Gaithersburg, MD, USA, 2023.

- Urias, V.E.; Stout, W.M.S.; Leeuwen, B.V.; Lin, H. Cyber Range Infrastructure Limitations and Needs of Tomorrow: A Position Paper. In Proceedings of the 2018 International Carnahan Conference on Security Technology (ICCST), Montreal, QC, Canada, 22–25 October 2018; IEEE: Piscataway, NJ, USA, 2018.

| Capability | SPIDER | OpenRAN Gym/OAIC | 5GSTB | Keysight/Spirent | Proposed NG-CR |

|---|---|---|---|---|---|

| Primary Focus | 5G core security training | O-RAN AI/ML functional development | Commercial 5G validation | Equipment testing and assurance | O-RAN/6G security validation |

| O-RAN Component Scope | 5G core (limited RAN) | RIC platforms + selected RAN | 5G core focus | Testing environment | Full disaggregated stack |

| Interface Coverage | 5G SBA interfaces | E2, A1 (research focus) | N/A | All major (testing) | All O-RAN interfaces (emulated) |

| AI/ML Security Focus | None | Functional testing only | None | None | Adversarial threat testing |

| Zero Trust Validation | Limited | None | Partial (mTLS, core) | Limited | Native ZTA enforcement |

| Threat Model Alignment | Generic 5G threats | None explicit | Operator-driven | Vendor specs | O-RAN WG11 explicit mapping |

| Exercise Orchestration | Full training scenarios | Research scenarios | Validation tests | Test automation | EDS-based adaptive exercises |

| Complementary Role | Training delivery | AI/ML functional testing | Commercial validation | Equipment assurance | Training delivery and security validation |

| Group | Core Challenge Addressed | Key Cyber-Range Evolution Requirements |

|---|---|---|

| 1. Systemic O-RAN Threats | Baseline security of components and generic interactions | High-fidelity emulation of O-RAN nodes (O-RU/DU/CU/RIC/SMO per WG1 R004-v15.00, WG2, WG3 R004-v07.00), vulnerability/misconfiguration injection, basic hardening validation. |

| 2. O-Cloud and Virtualization Threats | Securing the cloud-native platform | Realistic O-Cloud stack emulation (K8s, etc. as per WG6 specs), testing VM/container security, O2/internal API security testing (informed by WG11 O-Cloud Security), ID/Time sync vulns. |

| 3. AI/ML and RIC Threats | Securing intelligent controllers | RIC platform emulation (per WG2/WG3 arch), xApp/rApp lifecycle simulation (per WG11 AppLCM), AI attack tool integration (poisoning, evasion, inference against threats in WG11 AI/ML Sec), malicious app deployment. |

| 4. Interface and Protocol Threats | Securing communication over open interfaces | High-fidelity emulation of specific O-RAN interfaces (FH per WG4, E2 per WG3 with E2SMs, A1 per WG2, O1 per WG1/WG10, O2 per WG6, etc.) and protocols (per WG11 Sec Protocols), protocol-aware attack injection/analysis. |

| 5. Multi-Tenancy and Shared Resource Threats | Securing shared infrastructure | Advanced multi-tenancy modeling, inter-tenant isolation testing, shared resource conflict simulation (informed by WG1 Use Cases, WG11 Shared O-RU Sec). |

| 6. Supply Chain | Securing software/hardware | SBOM integration, secure repository simulation, integrity validation testing (informed by WG11 AppLCM Sec), vulnerable dependency injection, decommissioning simulation. |

| 7. External, Physical and Radio Threats | Securing boundaries | Edge/perimeter simulation, basic RF/jamming simulation (or SDR integration), modeling physical attack consequences. |

| 8. 6G-Specific Concepts | AI-native security, pervasive ZTA, quantum and energy-aware security | ZTA policy simulation/testing engines for large-scale, multi-domain 6G RAN scenarios; Federated AI/Range simulation for FL and dApps security; Schema-driven emulation of AI-native security functions; Abstract interfaces for 6G RAN technology validation (RIS, ISAC, THz); Quantum-resistant cryptography evaluation; Energy-aware security modeling. |

| Classification | Focus and Characteristics | Indicative Exercise Scenarios | Key Technologies | CR Requirements |

|---|---|---|---|---|

| 1. Foundational Cyber Defense | Basic IT/network security principles, common vulnerabilities (e.g., malware, phishing), standard network protocols, and endpoint security. | Network Reconnaissance and Vulnerability Scanning; Phishing Campaign Simulation and Response; Basic Malware Analysis (static/dynamic); Firewall and IDS/IPS Configuration Drills | Standard IT (workstations, routers, etc.), Common OS (Windows, Linux), Basic security tools (Nmap, Wireshark). | Basic network emulation, VM hosting, Simple traffic generation, Log collection, User interface for exercise interaction. |

| 2. 5G Core and Transport Security (Non-O-RAN) | Security of 3GPP-defined 5G architecture elements: 5G Core (AMF, SMF, UPF, NRF, SEPP), Service-Based Architecture (SBA), NFV/SDN infrastructure supporting the core, basic network slicing, and initial MEC deployments. | DoS/DDoS against 5GC Network Functions; SBA Interface Fuzzing and API Security Testing; Network Slice Isolation and Resource Hijacking Scenarios; Securing NFVI/VIM for 5G NFs; Basic MEC Application Security Testing | 5G Core emulators (Open5GS, free5GC), NFV orchestrators (MANO), SDN controllers, Virtualization platforms (OpenStack, K8s), GTP/PFCP. | VNF/CNF emulation, 5G Core protocol simulation, Network slicing emulation, Basic MEC platform emulation, Integration with MANO components, and Monitoring of SBA interfaces. |

| 3. O-RAN Architectural Security | Security of disaggregated O-RAN components (O-CU, O-DU, O-RU, SMO), open interfaces (Fronthaul, F1, E1, O1, O2), and the O-Cloud platform specifically hosting RAN NFs. Multi-vendor interoperability security. | Open Fronthaul Interface Security Testing (M-Plane, CUS-Plane, S-Plane attacks); O-Cloud Security for RAN (O2 interface attacks, VNF/CNF isolation in O-Cloud); O1 Interface Security for O-RAN NFs; SMO Security (compromise, malicious orchestration commands) | O-CU/O-DU/O-RU emulators, SMO emulators, O-Cloud platform emulators (K8s-based), Open Fronthaul, E1, F1, O1, O2 interface simulators. | High-fidelity emulation of disaggregated O-RAN nodes and open interfaces, Realistic O-Cloud stack emulation, Multi-vendor component integration, Precise timing/synchronization emulation for Fronthaul. |

| 4. O-RAN Intelligent Control and AI/ML Security | Security of Near-RT RIC, Non-RT RIC, xApps/rApps, A1 and E2 interfaces, AI/ML model lifecycle security, robustness of AI-driven RAN control. | Malicious xApp/rApp Behavior Detection and Mitigation; A1 Policy Injection/Tampering Attacks; E2 Interface Attacks (DoS, data manipulation); AI Model Poisoning/Evasion Attacks against RIC models; Secure xApp/rApp Onboarding and Lifecycle Management | Near-RT RIC and Non-RT RIC platform emulators, xApp/rApp simulators, E2/A1 interface emulators, AI/ML model libraries, and attack tools. | RIC platform emulation (per WG2/WG3), xApp/rApp lifecycle simulation (per WG11), AI attack tool integration, E2 Service Model support, Real-time control loop simulation, AI model training/inference environment. |

| 5. Advanced Multi-Domain and Projected 6G Exercises | Zero Trust Architectures (ZTA) across federated domains, AI-native security mechanisms, security for extreme disaggregation (e.g., cell-free), security of novel 6G communication/sensing technologies (THz, RIS, ISAC), all managed and described through a comprehensive exercise definition schema enabling complex interactions and dynamic adaptations. | Dynamic ZTA Policy Validation in Multi-Vendor O-RAN/6G; Testing AI-Driven Autonomous Security Response in 6G; Security of Federated Learning across O-RAN RICs; Vulnerability Analysis of Simulated RIS/THz Links; Securing Distributed Ledgers for 6G Trust | Advanced ZTA policy engines, AI-native security function simulators, Federated learning frameworks, Simulators for 6G tech (RIS, THz), DLT emulators. | ZTA policy simulation/testing environments, Federated AI/Range simulation capabilities, a forward-looking research tool for modeling conceptual 6G technologies, Quantum-resistant crypto testing, a rich exercise definition language/schema, and a powerful schema-driven orchestrator. |

| Component/Metric | Result | Validation Method |

|---|---|---|

| Core Framework Validation | | |

| EDS YAML specification | Complete | Uses the elements defined in Section 5.6 |

| Schema-driven telemetry | Operational | Reads EDS, auto-collects 10 logs |

| Schema-driven verification | Operational | 100% deployment compliance |

| Success criteria evaluation | Operational | Automated from EDS criteria |

| O-RAN Deployment Metrics | | |

| Containers deployed | 10/10 | All RIC + RAN components running |

| Networks configured | 2/2 | RIC (10.0.2.0/24), RAN (10.53.1.0/24) |

| A1 interface validation | Operational | Policy CRUD via REST API |

| E2 interface validation | Operational | E2 Setup + E2SM-KPM active |

| Custom xApp integration | Operational | Policy Enforcer with detection |

| Exercise S2 Execution Results | | |

| Attack injection (S2-SC1) | PASS | Malicious policy accepted |

| Detection event (S2-SC2) | PASS | xApp logged alert (<2 s) |

| Overall outcome | SUCCESS | Both criteria met |

| Current Scope Limitations | | |

| Exercise coverage | 1/15 | Single exercise validated |

| Deployment automation | Partial | EDS validates deployments but does not generate them |

| Topology complexity | Basic | Single gNB, open5gs, oran-sc-ric (i-release), oran-A1-mediator |
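
The Exercise S2 rows above can be read as a small evaluation procedure: each success criterion is checked against collected telemetry, and the overall outcome is SUCCESS only when every criterion passes. The following Python sketch illustrates that logic under stated assumptions. The field names (`event`, `after`, `max_delay_s`), the event labels, and the timestamps are hypothetical and are not taken from the EDS schema of Section 5.6; only the criterion identifiers and the 2 s detection bound come from the table.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass
class Criterion:
    id: str                              # criterion identifier, e.g. "S2-SC1"
    event: str                           # hypothetical telemetry event that must be observed
    after: Optional[str] = None          # optional reference event that must come first
    max_delay_s: Optional[float] = None  # optional upper bound on delay after the reference


def evaluate(criteria: List[Criterion],
             events: Dict[str, float]) -> Tuple[Dict[str, str], str]:
    """Check each criterion against first-occurrence event timestamps (seconds)."""
    results = {}
    for c in criteria:
        ts = events.get(c.event)
        passed = ts is not None
        if passed and c.after is not None:
            ref = events.get(c.after)
            # The reference event must exist and must not follow the observed event.
            passed = ref is not None and ts >= ref
            if passed and c.max_delay_s is not None:
                passed = (ts - ref) < c.max_delay_s
        results[c.id] = "PASS" if passed else "FAIL"
    overall = "SUCCESS" if all(v == "PASS" for v in results.values()) else "FAILURE"
    return results, overall


# Criteria mirroring the two S2 rows: injection accepted, then detection within 2 s.
criteria = [
    Criterion("S2-SC1", event="policy_accepted"),
    Criterion("S2-SC2", event="xapp_alert", after="policy_accepted", max_delay_s=2.0),
]
events = {"policy_accepted": 10.0, "xapp_alert": 11.2}  # illustrative timestamps only
results, overall = evaluate(criteria, events)
print(results, overall)
```

Keeping the criteria as data rather than code is what would let an orchestrator derive the evaluation directly from an exercise definition, which is the property the "Automated from EDS criteria" entry describes.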

| Layer (Section 5.1) | Status | Implementation |

|---|---|---|

| Infrastructure (IL) | Basic | Docker on single host; no distributed mgmt |

| Virtualization (VOL) | Partial | 10 containers; lacks K8s, O2, multi-tenancy |

| Component (CEL) | Functional | O-RAN SC RIC + srsRAN + xApp; lacks O-RU/DU, Non-RT RIC |

| Network (NEL) | Basic | Dual Docker networks; no traffic shaping |

| Telemetry (TML) | Validated | Schema-driven collection; lacks dashboards |

| Threat Injection (TIE) | Basic | 4-phase script; lacks reusable framework |

| AI/ML Security | N/A | Not needed for Exercise S2 |

| Orchestration | Partial | Docker Compose + templates; lacks web UI |
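
The dual-network layout noted for the Network layer (RIC on 10.0.2.0/24 and RAN on 10.53.1.0/24, as listed in the deployment metrics) lends itself to a simple compliance check. As a minimal sketch, assuming container addresses have already been collected from the container runtime, the following Python snippet flags any container whose address falls outside its expected subnet. The container names and addresses are hypothetical; only the two subnets come from the reported deployment.

```python
import ipaddress

# Subnets reported for the prototype deployment.
NETWORKS = {
    "ric": ipaddress.ip_network("10.0.2.0/24"),
    "ran": ipaddress.ip_network("10.53.1.0/24"),
}


def check_placement(containers):
    """Return names of containers whose IP is outside their expected network.

    containers: iterable of (name, expected_network_key, ip_string) tuples.
    """
    violations = []
    for name, net_key, ip in containers:
        if ipaddress.ip_address(ip) not in NETWORKS[net_key]:
            violations.append(name)
    return violations


# Hypothetical observations; the third entry is deliberately misplaced.
observed = [
    ("e2term-example", "ric", "10.0.2.10"),
    ("gnb-example", "ran", "10.53.1.2"),
    ("misplaced-example", "ric", "10.53.1.9"),
]
violations = check_placement(observed)
print(violations)
```

A check of this kind only confirms address placement; it says nothing about traffic shaping or isolation, which the table lists as not yet implemented.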


© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Chaskos, E.; Kolokotronis, N.; Shiaeles, S. A Next-Generation Cyber-Range Framework for O-RAN and 6G Security Validation. Future Internet 2026, 18, 29. https://doi.org/10.3390/fi18010029
