The Cloud-to-Edge-to-IoT Continuum as an Enabler for Search and Rescue Operations

Abstract

1. Introduction

2. Related Work

2.1. Cloud and Edge Robotics for SAR

2.2. Cloud Continuum

2.3. Sensor Networks and IoT for SAR Operations

2.4. Situation Awareness and Perception with Mobile Robots

2.5. Simultaneous Localization and Mapping

2.6. ROS Applications

3. Challenges for Risk Assessment and Mission Control in SAR Operations in Post-Disaster Scenarios

- Dynamic multi-robot mapping and fleet management: the coordination, monitoring and optimization of the task allocation for mobile robots that work together in building a map of unknown environments;

- Computer vision for information extraction: AI and computer vision enable people detection, position detection and localization from image and video data;

- Smart data filtering/aggregation/compression: a large amount of data is collected from sensors, robots, and cameras in the intervention area for several services (e.g., map building, scene and action replay). Some of these data can be filtered out, and others can be downsampled or aggregated before being sent to the edge/cloud. Smart policies should be defined that also tackle the high degree of data heterogeneity;

- Device management: some application functionalities can be pre-deployed on the devices or at the edge. Device management should also enable bootstrapping and self-configuration, support hardware heterogeneity and guarantee the self-healing of software components;

- Orchestration of software components: given the SAR application graph, a dynamic placement of software components should be enabled based on service requirements and resource availability. This will require performance and resource monitoring at the various levels of the continuum and dynamic component redeployment;

- Low latency communication: communication networks to/from disaster areas towards the edge and cloud should guarantee low delays for a fast response in locating and rescuing people under mobility conditions and possible disconnections.
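As an illustration of the smart data filtering/aggregation/compression challenge, the following minimal Python sketch chains the three reduction steps on scalar sensor readings before an uplink. The `Reading` record, the plausibility range, the window length and all function names are hypothetical choices made for this example, not part of any SAR platform; JSON and zlib merely stand in for whatever serialization and compression a real deployment would use.

```python
import json
import zlib
from dataclasses import dataclass
from statistics import mean


@dataclass
class Reading:
    # Hypothetical sample format: sensor id, timestamp (s), scalar value.
    sensor_id: str
    t: float
    value: float


def filter_range(readings, lo, hi):
    """Filtering: drop samples outside the physically plausible range [lo, hi]."""
    return [r for r in readings if lo <= r.value <= hi]


def downsample(readings, every):
    """Downsampling: keep one sample out of every `every` (plain decimation)."""
    return readings[::every]


def aggregate(readings, window_s):
    """Aggregation: one mean value per sensor per non-overlapping time window."""
    buckets = {}
    for r in readings:
        key = (r.sensor_id, int(r.t // window_s))
        buckets.setdefault(key, []).append(r.value)
    return [
        {"sensor": s, "window_start": w * window_s, "mean": mean(v), "n": len(v)}
        for (s, w), v in sorted(buckets.items())
    ]


def pack(records):
    """Compression: JSON-encode and deflate before the uplink to edge/cloud."""
    return zlib.compress(json.dumps(records).encode("utf-8"))


# Usage: 20 gas readings at 2 Hz plus one faulty sample.
raw = [Reading("gas-1", 0.5 * i, 400.0 + i) for i in range(20)]
raw.append(Reading("gas-1", 3.0, -1.0))
clean = filter_range(raw, 0.0, 5000.0)
summary = aggregate(clean, window_s=5.0)
payload = pack(summary)
```

In practice, the appropriate step would likely differ per data type (for instance, aggregating slowly varying environmental readings while only downsampling camera frames), which is where the heterogeneity mentioned above comes into play.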

4. Nephele Project as Enabler for SAR Operations

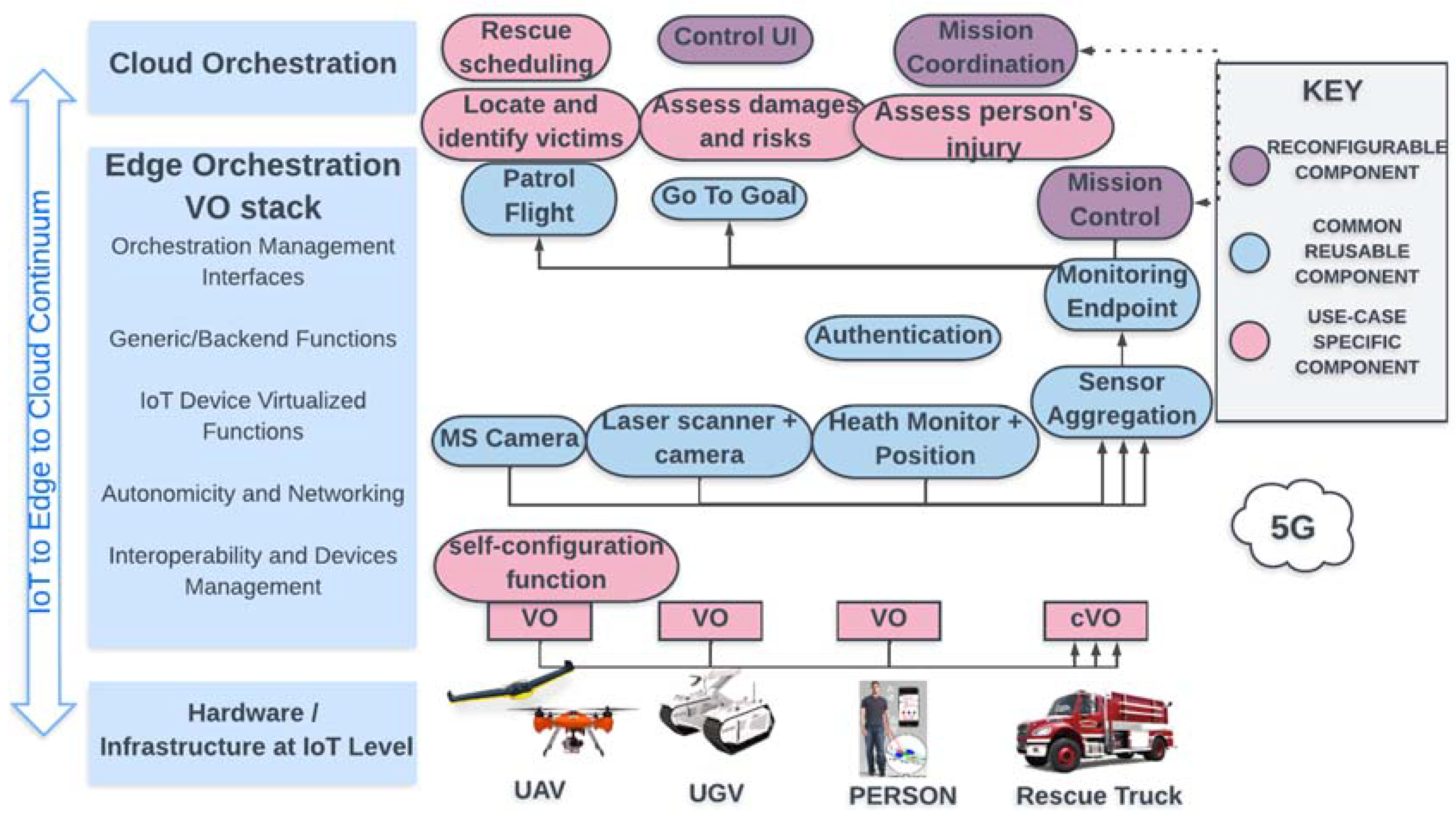

- An IoT and edge computing software stack that leverages the virtualization of IoT devices at the edge of the infrastructure and supports openness and interoperability in a device-independent way. Through this software stack, a wide range of IoT devices and platforms can be managed in a unified way without relying on middleware platforms, while edge computing functionalities can be offered on demand to efficiently support the operations of IoT applications. The concept of the virtual object (VO) is introduced, where the VO is considered the virtual counterpart of an IoT device. The VO significantly extends the notion of a digital twin: it provides a set of abstractions for managing any type of IoT device through a virtualized instance, and it augments the supported functionalities by hosting a multi-layer software stack called the virtual object stack (VOStack). The VOStack is specifically conceived to provide VOs with edge computing and IoT functions, including distributed data management and analysis based on machine learning (ML) and digital twinning techniques; authorization, security and trust based on security protocols and blockchain mechanisms; autonomic networking and time-triggered IoT functions that take advantage of ad hoc group management techniques; and service discovery and load balancing mechanisms. Furthermore, the VOStack will offer IoT functions similar to those usually supported by digital twins;

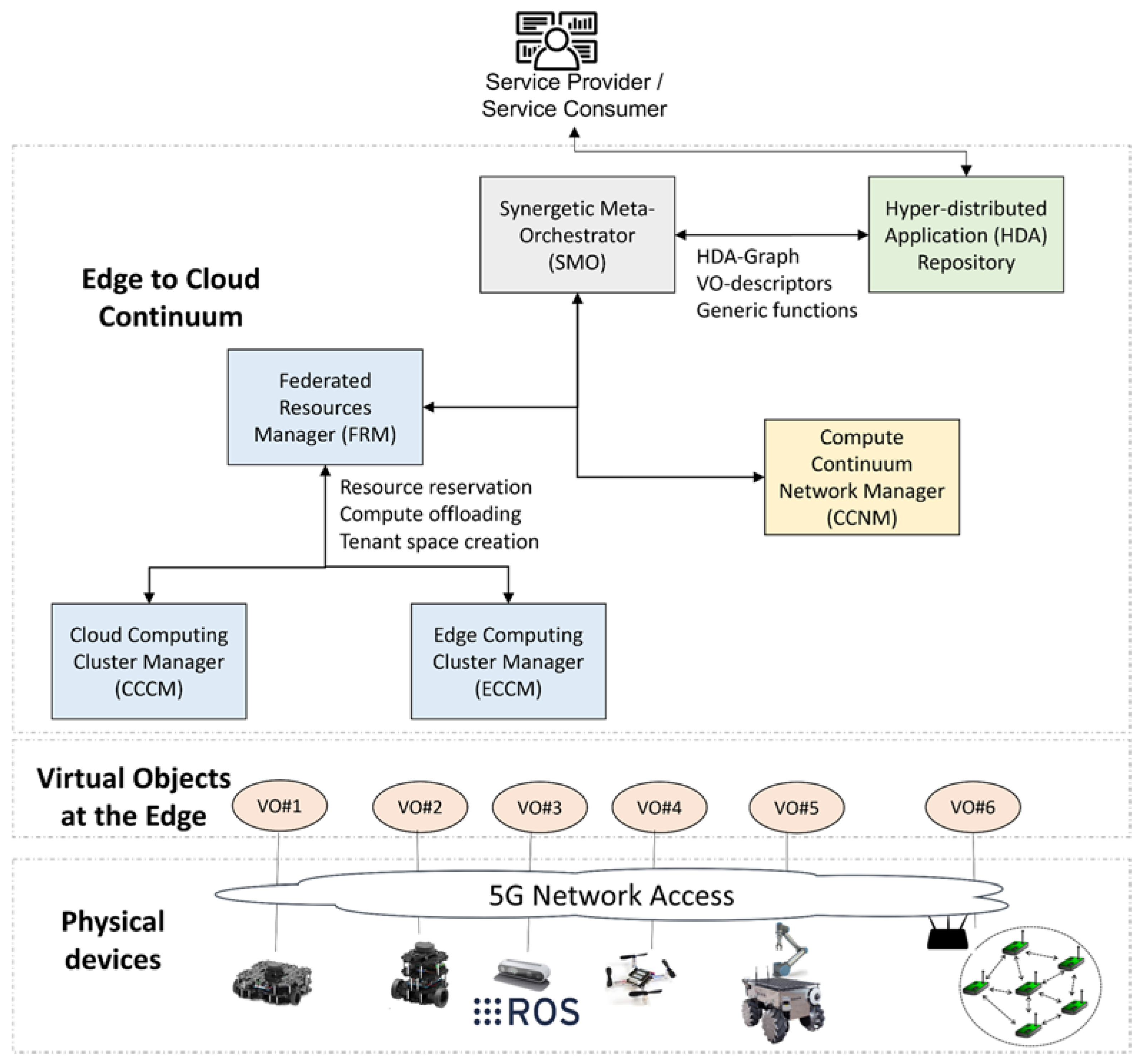

- A synergetic meta-orchestration framework for managing the coordination between cloud and edge computing orchestration platforms through high-level scheduling supervision and definition. Technological advances in 5G and beyond networks, AI and cybersecurity will be considered and integrated as additional pluggable systems in the proposed synergetic meta-orchestration framework. To support modularity, openness and interoperability with emerging orchestration platforms and IoT technologies, a microservices-based approach is adopted in which cloud-native applications are represented as an application graph. The application graph is composed of independently deployable application components that can be orchestrated. These include application components that can be deployed at the cloud or the edge part of the continuum, VOs, and IoT-specific virtualized functions offered by the VOs. Each component in the application graph is also accompanied by a sidecar, based on a service-mesh approach, that supports generic/supportive functions which can be activated on demand. The meta-orchestrator is responsible for activating the appropriate orchestration modules to efficiently manage the deployment of the application components across the continuum. It includes a set of modules for federated resources management, the control of cloud and edge computing cluster managers, end-to-end network management across the continuum and AI-assisted orchestration. The interplay between VOs and IoT devices will allow functions to be exploited even at the device level in a flexible and opportunistic fashion. The synergetic meta-orchestrator (SMO) interacts with further components for both computational resources management (the federated resources manager, FRM) and network management across the continuum, taking advantage of emerging network technologies.
The SMO makes use of the hyper-distributed applications (HDA) repository, where a set of application graphs, application components, virtualized IoT functions and VOs are made available to/by application developers (see Figure 2).
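To make the application-graph idea more concrete, the sketch below models an HDA graph as a directed acyclic graph of components (application components, VOs and IoT functions), each carrying an optional sidecar whose supportive functions can be activated on demand, and derives a deployment order with a topological sort. The class and field names are hypothetical and do not reflect the actual NEPHELE descriptor format; `graphlib` requires Python 3.9 or later.

```python
from dataclasses import dataclass, field
from graphlib import TopologicalSorter  # Python 3.9+


@dataclass
class Component:
    # Hypothetical descriptor; not the actual NEPHELE/HDA schema.
    name: str
    kind: str  # "app" (cloud/edge component), "vo" or "iot-function"
    sidecar: set = field(default_factory=set)  # supportive functions offered
    active: set = field(default_factory=set)   # functions currently switched on

    def activate(self, function):
        """Enable a sidecar function on demand."""
        if function not in self.sidecar:
            raise ValueError(f"{function!r} is not offered by {self.name}'s sidecar")
        self.active.add(function)


@dataclass
class AppGraph:
    components: dict = field(default_factory=dict)
    inputs: dict = field(default_factory=dict)  # component -> components it consumes

    def add(self, component, consumes=()):
        self.components[component.name] = component
        self.inputs[component.name] = set(consumes)

    def deployment_order(self):
        # TopologicalSorter expects node -> predecessors, so data producers
        # are listed before the components that consume their streams.
        return list(TopologicalSorter(self.inputs).static_order())


# Usage: two robot VOs feed map merging, which feeds a mission planner.
g = AppGraph()
g.add(Component("vo-robot-1", "vo", sidecar={"compression", "auth"}))
g.add(Component("vo-robot-2", "vo", sidecar={"compression", "auth"}))
g.add(Component("map-merging", "app"), consumes=["vo-robot-1", "vo-robot-2"])
g.add(Component("mission-planner", "app", sidecar={"monitoring"}), consumes=["map-merging"])
g.components["vo-robot-1"].activate("compression")
order = g.deployment_order()
```

Ordering producers before consumers only answers the question of when each node is deployed; a meta-orchestrator would additionally decide where each node runs in the continuum.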

4.1. The Search and Rescue Use Case in NEPHELE

- The synergetic meta-orchestrator (SMO) receives the HDA graph, the set of parameters for the specific instance of the SAR application, the VO descriptors needed for the application and a descriptor of the supportive functions to be deployed in the continuum. The supportive functions are provided by the VOStack and can include, for instance, risk assessment, mission control with task prioritization and optimized planning, health monitoring based on AI and computer vision, prediction of dangerous events, and victim localization and identification. The SMO will interact with the federated resources manager and the compute continuum network manager to deploy the networking, computing and storage resources over the continuum according to the requirements derived from the HDA graph, the VO descriptors and the input parameters given by the service consumer;

- The federated resources manager (FRM) orchestrator will ensure that the application components are deployed either at the edge or in the cloud, based on the computational and storage resources they need and the overall resource availability. For instance, large amounts of data used to replay robot actions, paired with depth images of the surroundings (e.g., using rosbags), can be stored in the remote cloud. On the other hand, maps to be navigated by the robot could be stored at the edge for further action planning. Similarly, computations for identifying imminent danger situations or planning a robotic arm movement can be performed at the edge so that low delay is guaranteed, whereas complex mission optimization and prioritization computations can be performed in the cloud, where the needed resources should be allocated. The FRM will produce a deployment plan that will be provided to the compute continuum network manager;

- The cloud computing cluster manager (CCCM) is responsible for the cloud deployments and for the interaction with the edge computing cluster manager (ECCM) (e.g., reserving resources, creating tenant spaces at the edge and handling compute offloading mechanisms);

- The edge computing cluster manager (ECCM) is responsible for the edge deployments, provides feedback on the status of application components and resources, and receives inputs for compute offloading. Moreover, the ECCM will orchestrate the VOs that are part of the HDA graph and synchronize device updates from IoT devices to edge nodes and vice versa;

- The compute continuum network manager (CCNM) will receive the deployment plan from the FRM and set up the network resources needed by the different application components to provide end-to-end network connectivity and meet the application's networking requirements across the compute continuum. Exploiting 5G technologies, the CCNM will output a network slice dimensioned on the bandwidth requirements of each robotic device. Each network slice must meet the QoS requirements and service level agreements of the given application;

- Once the VOs are deployed, a southbound interface for VO-to-IoT device interactions will be used to interoperate with the physical devices (i.e., robots, drones and sensor gateways). The VO will know how to communicate with the IoT devices, as this information will be stored in and available from the VO storage. We assume the IoT devices to be up and running with their basic services and connected to the network;

- Physical robots and sensor networks will communicate with each other through the corresponding VOs using a peer interface, whereas the application components that use the data streams from the VOs will use the northbound interface. Application components such as map merging, decision-making and health monitoring will interact with the VOs to exchange relevant information;

- The deployed VOs will use the northbound interface to interact with the orchestrator for monitoring and scaling requests, for instance when more robots are needed to cover a given area.
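The FRM-to-CCNM hand-off described above can be sketched as two steps: a placement function that produces a deployment plan, and a slicing function that sizes one slice per robot. This is a minimal illustration under stated assumptions: the greedy policy (place latency-critical components first, and put each component on the most remote site that still meets its latency bound, so that scarce edge capacity stays free), the site and requirement figures, and all names are hypothetical and are not the NEPHELE FRM or CCNM algorithms.

```python
from dataclasses import dataclass


@dataclass
class Req:
    # Hypothetical per-component requirements (not a NEPHELE descriptor).
    name: str
    cpu: float             # cores
    storage_gb: float
    max_latency_ms: float  # latency bound tolerated by the component


@dataclass
class Site:
    name: str
    cpu: float
    storage_gb: float
    latency_ms: float      # assumed latency from the intervention area


def place(components, sites):
    """Greedy FRM-style placement returning a plan {component: site}."""
    free = {s.name: [s.cpu, s.storage_gb] for s in sites}
    plan = {}
    # Most latency-sensitive components are placed first.
    for c in sorted(components, key=lambda c: c.max_latency_ms):
        # Try the most remote site first to keep scarce edge capacity free.
        for s in sorted(sites, key=lambda s: s.latency_ms, reverse=True):
            cpu, sto = free[s.name]
            if s.latency_ms <= c.max_latency_ms and cpu >= c.cpu and sto >= c.storage_gb:
                free[s.name] = [cpu - c.cpu, sto - c.storage_gb]
                plan[c.name] = s.name
                break
        else:
            raise RuntimeError(f"no feasible site for {c.name}")
    return plan


def slices(robot_bw_mbps, capacity_mbps):
    """CCNM-style step: one slice per robot sized to its bandwidth need."""
    total = sum(robot_bw_mbps.values())
    if total > capacity_mbps:
        raise ValueError(f"{total} Mbps requested, {capacity_mbps} Mbps available")
    return {robot: {"guaranteed_mbps": bw} for robot, bw in robot_bw_mbps.items()}


# Usage with illustrative figures only.
sites = [Site("edge-1", 8, 100, 5), Site("cloud", 256, 10_000, 60)]
comps = [
    Req("danger-detection", 4, 2, 20),
    Req("nav-map-store", 1, 20, 20),
    Req("mission-optimizer", 32, 10, 500),
    Req("rosbag-archive", 1, 2_000, 1_000),
]
plan = place(comps, sites)
net = slices({"ugv-1": 20, "uav-1": 50}, capacity_mbps=100)
```

With these figures, danger detection and the navigation map land on the edge, while the rosbag archive and mission optimization go to the cloud, mirroring the examples given for the FRM. A production orchestrator would more likely formulate placement as an optimization problem and monitor it continuously rather than run a one-shot greedy pass.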

4.2. NEPHELE’s Added Value

- Reduced delay in time-critical missions: by exploiting compute and storage resources at the edge of the network, with the possibility of dynamically adapting the deployment of application components over the continuum, lower delays are expected for computationally demanding tasks on large amounts of data. This is highly important, as it will strongly enhance first responders’ effectiveness and security in their operations;

- Efficient data management: the large amount of data collected by sensors, drones and robots will be filtered, compressed and analyzed by exploiting supportive functions made available by the VOStack in NEPHELE. Only a subset of the produced data will be stored for future reuse, based on data importance. This will reduce the bandwidth needed for communications from the incident area to the application layers that introduce intelligence into the application, thereby reducing the communication delay and the risk of starvation of networking resources;

- Robot fleet management and trajectory optimization: by exploiting the IoT-to-edge-to-cloud compute continuum, smart decisions will be taken and advanced algorithms will be provided for optimal robot and drone trajectory planning in multi-robot environments. Solutions will rely on AI techniques able to learn from what robot fleets see in their environment and enable semantic navigation with time-optimized trajectories;

- Rescue operations prioritization: AI techniques and optimization algorithms can process the large amount of data and information collected from the intervention area to support rescue teams in prioritizing intervention tasks. The compute continuum will enable computationally heavy and complex decisions in a dynamic environment, where risk prediction and assessment, victims’ health monitoring and victim identification may continuously produce new information that should trigger new decisions;

- HW-agnostic deployment: the introduction of the VO concept and the multilevel meta-orchestration enable device-independent deployment and bootstrapping using generic HW. The software components of an HDA can be deployed at any level of the IoT-to-edge-to-cloud continuum, which reduces the HW requirements (e.g., in computation and storage) at the IoT level for enabling a given application;

- AI for computer vision and image processing: advanced AI algorithms can be deployed as part of the supportive functions made available through NEPHELE’s VOStack innovation. These can then be enabled on demand and deployed over the compute continuum for image analysis and computer vision, to locate and identify victims and to perform risk assessments and predictions;

- End-to-end security: IoT devices and HDA users will benefit from the security and authentication, authorization and accounting (AAA) functionalities offered by the NEPHELE framework. These functions will be offered as supportive functions for the VOs representing the IoT devices and will help with access control to the services, authorization, policy enforcement and the identification of users and devices;

- Optimal network resource orchestration: based on the HDA requirements, an optimized network resource allocation policy will be enforced over the IoT-to-edge-to-cloud continuum. Here, experience in network slicing and software-defined networking (SDN) will be exploited to support time-critical applications such as the SAR operations presented in this paper.
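For the rescue operations prioritization point, one simple way to let continuously arriving information re-rank intervention tasks is a priority queue with lazy deletion: each update pushes a fresh entry, and outdated entries are skipped when popped. The sketch below assumes a hypothetical weighted score combining victim criticality and collapse risk; the weights, function names and zone labels are illustrative only and stand in for the AI-based assessment the text refers to.

```python
import heapq
import itertools


def priority(victim_criticality, collapse_risk, w=0.7):
    # Hypothetical weighted score; inputs in [0, 1], weights illustrative only.
    return w * victim_criticality + (1 - w) * collapse_risk


class TaskQueue:
    """Max-priority queue of intervention tasks with re-prioritization.

    Updates push a fresh heap entry; stale entries are discarded lazily on pop.
    """

    def __init__(self):
        self._heap = []
        self._latest = {}                # task -> most recent score
        self._order = itertools.count()  # tie-breaker: earlier updates first

    def update(self, task, score):
        self._latest[task] = score
        # heapq is a min-heap, so the score is negated to pop the highest first.
        heapq.heappush(self._heap, (-score, next(self._order), task))

    def pop(self):
        while self._heap:
            neg_score, _, task = heapq.heappop(self._heap)
            if self._latest.get(task) == -neg_score:
                del self._latest[task]
                return task, -neg_score
            # Otherwise the entry is stale (task re-scored or already served).
        raise KeyError("no pending intervention tasks")

    def __len__(self):
        return len(self._latest)


# Usage: new vital-sign data raises the priority of zone-A.
q = TaskQueue()
q.update("zone-A", priority(0.4, 0.2))
q.update("zone-B", priority(0.6, 0.5))
q.update("zone-A", priority(0.9, 0.8))
first, _ = q.pop()
second, _ = q.pop()
```

Lazy deletion keeps each update at logarithmic cost without searching the heap, at the price of temporarily storing stale entries; this seems a reasonable trade-off when updates arrive far more often than tasks are dispatched.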

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Militano, L.; Arteaga, A.; Toffetti, G.; Mitton, N. The Cloud-to-Edge-to-IoT Continuum as an Enabler for Search and Rescue Operations. Future Internet 2023, 15, 55. https://doi.org/10.3390/fi15020055
