1. Introduction

Understanding how student knowledge evolves over time is central to improving instructional design, adaptive learning systems, and personalized education at scale [1]. Traditional student modeling approaches, such as Bayesian Knowledge Tracing (BKT) and Item Response Theory (IRT), have provided foundational insights into learner performance prediction, yet they typically treat learning as a static or semi-static process [2]. These models assume that skills are independent, learning transitions follow fixed probabilistic rules, and that all learners exhibit relatively homogeneous behavior [3]. Such assumptions substantially simplify the complexity of human learning and limit the ability of these models to capture nuanced cognitive processes such as forgetting, skill transfer, fluctuating motivation, or varied learning strategies [4].

In contrast, real-world learning is dynamic, nonlinear, and inherently relational. Students interact with curricula composed of interdependent concepts where mastery of one skill often influences understanding of others [5]. Their knowledge states shift continuously as they encounter new material, revisit prior topics, and practice skills across multiple sessions [6]. Moreover, their learning behavior is shaped by contextual factors, such as the difficulty of instructional content, feedback quality, engagement patterns, and historical performance, all of which unfold over time [7]. As modern digital learning environments and intelligent tutoring systems generate high-frequency, longitudinal data, these rich interaction logs reveal complex temporal patterns that traditional methods struggle to model effectively [8].

Consequently, there is an increasing need for computational models capable of representing not only individual learning events but also the evolving dependencies between concepts, learners, and assessment items [9]. Such models must capture temporal dynamics, conceptual relationships, and heterogeneous learning trajectories with much higher fidelity. Emerging deep learning methods, particularly those that integrate representation learning with sequential modeling, offer substantial promise [10]. However, they often overlook the graph-structured nature of educational data, where concepts, students, items, and activities form interconnected networks that evolve over time [11]. This necessitates more advanced modeling strategies that can simultaneously reason about structure and temporality, providing a more holistic and accurate representation of how knowledge develops.

Graph Neural Networks (GNNs) have recently emerged as a powerful paradigm for representing relational structures such as concept graphs, knowledge dependencies, and student–item interactions [12]. Yet most existing GNN-based student models operate on static graphs, overlooking the continuous evolution of learners’ knowledge states [13]. This limitation is critical because learning is inherently temporal: students reinforce, forget, and transfer knowledge across sessions, topics, and assessments. Temporal Graph Neural Networks (TGNNs), which integrate dynamic graph updates with sequence modeling, offer a promising solution for capturing both the structural and temporal dimensions of learning.

Despite significant advances in knowledge tracing and educational data mining, several research gaps remain. First, most existing approaches model student learning primarily as sequential interactions, capturing temporal order but ignoring relational dependencies between students and knowledge components. Second, graph neural networks applied to educational data often rely on static representations, which fail to reflect the dynamic evolution of learning interactions. Third, existing models focus mainly on next-response prediction, with limited attention to modeling longer-term learning trajectories.

To address these limitations, this study proposes a Temporal Graph Neural Network (TGNN) framework that integrates temporal encoding, dynamic graph representation, and rich interaction-level features. Students and skills are represented as nodes in a temporally evolving bipartite graph, allowing the model to jointly capture relational structure and temporal learning dynamics. This enables accurate prediction of both immediate responses and broader learning trajectories, advancing research at the intersection of temporal graph representation learning and educational data mining.

It is important to note that this work does not aim to propose a completely new temporal graph neural network architecture. Instead, the study systematically adapts the Temporal Graph Network (TGN) framework introduced by Rossi et al. [14] for the specific problem of student knowledge tracing and learning trajectory prediction in educational data mining. The contribution of this research lies in the formulation of student–skill interactions as a temporally evolving bipartite graph enriched with pedagogically meaningful interaction attributes, including correctness, attempts, hint usage, and temporal intervals. By integrating temporal attention mechanisms with these educational interaction features, the framework enables the modeling of both relational dependencies and temporal learning dynamics. The study further provides a comprehensive empirical evaluation including trajectory prediction, concept-level analysis, and ablation studies to better understand how temporal graph learning can support modeling of student knowledge evolution.

Beyond algorithmic modeling, understanding student learning requires grounding predictive systems in established educational theory [15]. Learning is a progressive process in which students develop conceptual mastery through repeated practice and feedback [16]. Bloom’s taxonomy conceptualizes knowledge development as a hierarchical progression from foundational understanding toward higher cognitive mastery [17]. In this context, temporal student–skill interactions serve as observable indicators of incremental learning. The TGNN framework aligns with this perspective by modeling learning as a temporally evolving interaction process, where each interaction contributes evidence about a learner’s mastery state and prior events inform future performance. In this way, the framework bridges computational modeling with pedagogically meaningful insights.

This study presents an applied methodological investigation into the use of temporal graph neural networks for modeling student knowledge evolution in educational data mining. Rather than proposing a fundamentally new neural architecture, the work focuses on adapting and evaluating temporal graph learning techniques within the context of knowledge tracing. The contributions of this study are threefold. First, student–skill interactions are formulated as a temporally evolving bipartite graph enriched with pedagogically meaningful edge features, including correctness, number of attempts, hint usage, and temporal intervals. Second, the study demonstrates how temporal graph attention and message passing mechanisms can capture both relational dependencies and temporal learning dynamics for predicting student performance. Third, the framework is empirically evaluated through comprehensive experiments, including trajectory forecasting, concept-level analysis, and ablation studies, providing practical insights into the applicability of temporal graph models for personalized learning analytics.

3. Methodology

3.1. Dataset Source

The study utilized the ASSISTments_skill dataset, which was obtained from the publicly available repository on Kaggle [32]. The dataset originated from the ASSISTments online tutoring platform, a widely adopted intelligent tutoring system that logs detailed student interactions with mathematics problems. Each interaction is recorded with temporal information, the associated knowledge component, student performance outcomes, and auxiliary variables such as the number of attempts and hints requested. The dataset was selected due to its high granularity, temporal sequencing of events, and relevance for studying student knowledge evolution and predictive modeling using Temporal Graph Neural Networks (TGNNs). While the ASSISTments_skill dataset is widely used in knowledge tracing research and provides detailed, temporally ordered interactions, we acknowledge that its data date from 2015. Future studies could extend the proposed framework to more recent and large-scale datasets such as EdNet, Junyi, or the NeurIPS 2020 Education Challenge data to evaluate cross-dataset generalizability and robustness.

3.2. Data Description

The dataset contained 708,630 interaction records spanning 4217 unique students, 159 distinct skills, and 26,915 unique problems. Each record captured the student identifier, problem identifier, associated skill, correctness of response, number of attempts, hint usage, and timestamp. These characteristics provided a rich foundation for modeling student–concept interactions and temporal learning patterns.

Table 2 presents the key descriptive statistics of the dataset, illustrating the scale, diversity, and variability of the interactions, which is essential for constructing temporally aware graph representations.

3.3. Data Preprocessing and Temporal Graph Construction

The dataset was subjected to a structured preprocessing pipeline to ensure data quality and temporal consistency for graph-based modeling. Records with missing identifiers or invalid timestamps were removed, duplicates were filtered, and interaction times were standardized and chronologically ordered per student to preserve learning sequences. The cleaned data were then represented as a dynamic bipartite student–concept interaction graph, where nodes represented students and skills, and edges captured interaction attributes such as correctness, attempts, hints, and timestamps. Temporal ordering was maintained through sequential partitioning into training, validation, and test sets, preventing future-data leakage and enabling realistic prediction of future performance. Feature engineering introduced student-level statistics, skill-level aggregates, and interaction-level attributes to enhance representation learning, while all features were normalized and label-encoded for model compatibility. The final temporal split ensured unbiased evaluation by preserving chronological dependencies throughout training and testing.
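The cleaning and chronological split described above can be sketched in a few lines. This is a minimal illustration, not the authors' pipeline: the field names (`student_id`, `skill_id`, `timestamp`, `correct`), the ISO timestamp format, and the 70/15/15 split fractions are all assumptions, since the text does not state them.

```python
from datetime import datetime


def clean_and_sort(records):
    """Drop incomplete rows, remove duplicates, and sort chronologically."""
    seen, cleaned = set(), []
    for r in records:
        # Skip rows with missing identifiers.
        if r.get("student_id") is None or r.get("skill_id") is None:
            continue
        # Skip rows whose timestamp is absent or cannot be parsed.
        try:
            ts = datetime.fromisoformat(r["timestamp"])
        except (KeyError, TypeError, ValueError):
            continue
        key = (r["student_id"], r["skill_id"], ts, r.get("correct"))
        if key in seen:  # duplicate interaction
            continue
        seen.add(key)
        cleaned.append({**r, "timestamp": ts})
    # A global sort by time also preserves each student's own ordering.
    cleaned.sort(key=lambda r: r["timestamp"])
    return cleaned


def temporal_split(events, train_frac=0.7, val_frac=0.15):
    """Split time-sorted events so that later sets never precede earlier ones."""
    n = len(events)
    train_end = int(n * train_frac)
    val_end = train_end + int(n * val_frac)
    return events[:train_end], events[train_end:val_end], events[val_end:]
```

Because the split is taken on the sorted sequence rather than at random, every validation and test event occurs no earlier than the last training event, which is what prevents future-data leakage.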

3.4. Proposed Methodology

3.4.1. Problem Definition

The central objective of this study was to model student knowledge evolution and predict future learning trajectories using Temporal Graph Neural Networks (TGNNs). Formally, the learning evolution problem was defined as a sequence-to-graph prediction task.

Let $S = \{s_1, \dots, s_N\}$ denote the set of students.

Let $K = \{k_1, \dots, k_M\}$ denote the set of knowledge components or skills.

Each interaction $(s_i, k_j, t)$ represents a temporal edge between student $s_i$ and skill $k_j$ at timestamp $t$, annotated with features such as correctness, number of attempts, and hints used.

The primary task was to predict the probability that student $s_i$ would correctly answer a future problem associated with skill $k_j$, denoted as:

$$P\left(y_{ij}(t') = 1 \mid H_t\right)$$

where:

$y_{ij}(t')$ is the binary correctness label for student $s_i$ on skill $k_j$ at a future time $t' > t$.

$H_t$ represents the history of all interactions up to time $t$.

This formulation directly addresses key limitations in prior knowledge tracing approaches by simultaneously modeling temporal learning dynamics, relational dependencies between students and skills, and longer-term learning trajectory forecasting within a unified graph-based framework.

Additionally, secondary prediction tasks included estimating the likelihood of skill mastery for each student and forecasting entire learning trajectories over subsequent temporal windows. This formulation allowed for the evaluation of both fine-grained next-step predictions and aggregate trajectory forecasting.

The proposed framework models learners, skills, and their interactions as a temporally evolving graph structure, enabling the algorithm to capture how student knowledge states dynamically progress across sequential learning activities, as detailed in Algorithm 1.

During training, for each observed student–skill interaction (a positive edge), a fixed number of unobserved student–skill pairs are sampled as negative edges. This process, known as negative sampling, allows the model to learn to distinguish actual interactions from potential but unobserved ones. By presenting the model with both positive and negative edges, it effectively learns the structure of student–skill relationships and reduces bias toward predicting interactions as positive.

Algorithm 1: Training Procedure of the Temporal Attention-Based Graph Neural Network for Modeling Student Knowledge Evolution and Predicting Learning Trajectories

Input: Dataset $D$ of time-stamped student–skill interactions; initial learning rate η = 0.001; mini-batch size B = 1024; L2 regularization coefficient $\lambda$.

Output: Optimized model parameters $\theta^{*}$.

1. Initialize: node embeddings and model parameters $\theta$.
2. Pre-process: sort interactions chronologically.
3. For each epoch e = 1, …, E:
   1. Divide sorted interactions into sequential mini-batches of size B.
   2. For each mini-batch:
      1. Negative Sampling: sample unobserved student–skill pairs at time t as negative edges.
      2. Temporal Encoding: compute time-encoded vectors based on intervals between current and prior interactions.
      3. Message Passing:
         - Compute messages from node embeddings and edge features, using a 2-layer MLP.
         - Aggregate neighbors using temporal attention with 4 heads.
      4. Node Update: update node embeddings using a GRU to capture long-term dependencies.
      5. Prediction: compute the predicted probability of a correct response for each edge.
      6. Loss Calculation: compute binary cross-entropy loss $L$ and add the L2 regularization term.
      7. Parameter Update: update $\theta$ via Adam optimizer.
   3. Learning Rate Decay: apply cosine learning-rate decay schedule.
   4. Validation: evaluate AUC on validation set.
4. Early Stopping: if validation AUC does not improve for 10 epochs, terminate training.

Return: Best-performing model checkpoint $\theta^{*}$.

3.4.2. Graph Formulation

The temporal graph was constructed as a dynamic bipartite network $G_t = (V, E_t)$, where:

$V = S \cup K$ is the vertex set, including student nodes $S$ and skill nodes $K$.

$E_t$ is the set of time-stamped interactions (edges) at time $t$.

Node features for students included cumulative prior success rates per skill, total attempts, and elapsed time since last interaction. Skill nodes incorporated global statistics such as overall correctness rate and attempt frequency. Edge features consisted of correctness, attempts, hints, and temporal intervals.

Temporal constraints were imposed such that, at any time $t$, only edges with timestamps at or before $t$ were present in the graph, ensuring causally consistent learning sequences.

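The adjacency matrix $A_t$ was defined at each discrete time step $t$ to capture the evolving connectivity between students and skills:

$$A_t[i, j] = \begin{cases} 1, & \text{if student } i \text{ interacted with skill } j \text{ at time } t \\ 0, & \text{otherwise} \end{cases}$$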

Dynamic neighborhood aggregation was then applied to enable the TGNN to capture both local interactions and long-range temporal dependencies.
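As a rough illustration of this formulation, the sketch below stores interactions as time-stamped edges and answers two causal queries: which student–skill pairs are connected by a given time, and which past interactions a student has before a given time. The class and method names are hypothetical, and treating connectivity as cumulative (all edges with timestamps at or before the query time) is an assumption.

```python
class TemporalBipartiteGraph:
    """Student-skill interaction graph stored as a list of time-stamped edges."""

    def __init__(self):
        # Each edge is (timestamp, student, skill, edge_features).
        self.edges = []

    def add_interaction(self, t, student, skill, correct, attempts=1, hints=0):
        features = {"correct": correct, "attempts": attempts, "hints": hints}
        self.edges.append((t, student, skill, features))

    def adjacency_at(self, t):
        """Pairs (student, skill) linked by at least one edge at or before t."""
        return {(s, k) for ts, s, k, _ in self.edges if ts <= t}

    def history(self, student, t):
        """Edges of one student strictly before t, oldest first."""
        past = [(ts, k, f) for ts, s, k, f in self.edges if s == student and ts < t]
        return sorted(past, key=lambda e: e[0])
```

Querying `history(student, t)` with a strict inequality means the edge being predicted is never part of its own input, which mirrors the causal constraint described above.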

3.4.3. Temporal Graph Neural Network Architecture

As depicted in Figure 1, the proposed model employed a Temporal Graph Network (TGN) as the backbone architecture due to its ability to handle dynamic, evolving graphs with time-stamped interactions.

Figure 1 illustrates the flow of information within the TGNN. Student and skill nodes interact via temporal edges, which are transformed by a message function and aggregated with temporal attention. Node embeddings are updated using a GRU, and the final prediction layer estimates the probability of correct responses for each interaction. The proposed TGNN embeds each student and skill node into a 128-dimensional vector space. Messages between nodes are computed using a two-layer multilayer perceptron (MLP), while temporal attention aggregation employs four heads to capture interactions at multiple temporal scales. Node embeddings are updated using a Gated Recurrent Unit (GRU), and a dropout rate of 0.2 is applied to prevent overfitting. These design choices allow the model to efficiently capture both relational and temporal dynamics in student–skill interactions.

Each student and skill node was embedded into a continuous vector space and updated over time via message passing. For each temporal edge $(i, j, t)$, messages were computed as:

$$m_{ij}(t) = \phi\big(h_i(t),\, h_j(t),\, e_{ij}(t)\big)$$

where:

$h_i(t)$ is the embedding vector of node $i$ (student $s_i$) at time $t$.

$h_j(t)$ is the embedding vector of node $j$ (skill $k_j$) at time $t$.

$e_{ij}(t)$ is the feature vector for the edge at time $t$.

$\phi$ is a learnable edge function (e.g., a multilayer perceptron, MLP).

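The node update function incorporated temporal attention:

$$h_i(t^{+}) = \psi\Big(h_i(t),\ \operatorname{AGG}_{j \in \mathcal{N}_i(t)}\, m_{ij}(t)\Big)$$

where:

$\psi$ is a node update function (e.g., a Gated Recurrent Unit, GRU) to capture temporal dependencies, applied to the current embedding $h_i(t)$ and the aggregated incoming messages $m_{ij}(t)$.

The aggregation operation $\operatorname{AGG}$ applied an attention mechanism over the messages from the temporal neighborhood $\mathcal{N}_i(t)$.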

Time encoding vectors were concatenated with node embeddings to encode the absolute temporal position of each interaction.
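The text does not specify the form of the time encoding, so the following is only one plausible sketch: a cosine feature map over a time value, in the spirit of the encodings commonly used in TGN-style models. The function name, the default dimension, and the fixed geometric frequencies are assumptions; in practice the frequencies are usually learnable parameters.

```python
import math


def time_encoding(t, dim=8):
    """Map a time value (e.g., seconds) to `dim` cosine features."""
    # Frequencies fall geometrically from 1 down to 10**-(dim - 1), so that
    # both short and long time scales leave a distinguishable signature.
    freqs = [10.0 ** (-i) for i in range(dim)]
    return [math.cos(t * w) for w in freqs]
```

The resulting vector would then be concatenated with the node embedding, as the paragraph above describes.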

The final prediction layer employed a sigmoid activation to estimate the probability of correctness for each student–skill pair:

$$\hat{y}_{ij}(t) = \sigma\big(W_o\,(h_i(t) \odot h_j(t)) + b_o\big)$$

where:

$\hat{y}_{ij}(t)$ is the predicted probability of a correct response.

$W_o$ and $b_o$ are learnable parameters of the output layer.

$\sigma$ is the sigmoid activation function.

$\odot$ denotes the element-wise product.
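To make the output layer concrete, the sketch below evaluates a sigmoid over a weighted element-wise product of a student embedding and a skill embedding. It uses plain Python lists rather than tensors, and the function and argument names are illustrative rather than taken from the authors' implementation.

```python
import math


def sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def predict_correct(h_student, h_skill, w_out, b_out):
    """Probability of a correct response from two node embeddings."""
    # Element-wise (Hadamard) product of the two embeddings.
    interaction = [a * b for a, b in zip(h_student, h_skill)]
    # Linear output layer followed by the sigmoid.
    logit = sum(w * z for w, z in zip(w_out, interaction)) + b_out
    return sigmoid(logit)
```

For example, `predict_correct([0.0, 0.0], [1.0, 1.0], [1.0, 1.0], 0.0)` returns 0.5, since a zero logit maps to an even chance of a correct answer.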

3.5. Mathematical Formulation

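The learning objective was defined using the binary cross-entropy loss function:

$$L = -\frac{1}{|E|} \sum_{(i, j, t) \in E} \Big[\, y_{ij}(t) \log \hat{y}_{ij}(t) + \big(1 - y_{ij}(t)\big) \log\big(1 - \hat{y}_{ij}(t)\big) \Big]$$

where:

$E$ is the set of all observed temporal edges in the training data.

$y_{ij}(t)$ is the ground-truth binary correctness label.

$\hat{y}_{ij}(t)$ is the model’s predicted probability.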

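Regularization was applied to avoid overfitting:

$$L_{\text{total}} = L + \lambda \lVert \theta \rVert_2^2$$

where:

$\theta$ denotes all trainable parameters of the model.

$\lambda$ is the L2 regularization coefficient.

$\lVert \cdot \rVert_2$ is the L2 norm.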

Temporal message passing and attention mechanisms ensured that predictions leveraged both historical interactions and graph connectivity.
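The objective in this section can be written out directly. The sketch below assumes the cross-entropy is averaged over edges and that the penalty is the squared L2 norm of a flat parameter list; the clipping constant `eps` and the function names are illustrative additions, and no value for the regularization coefficient is implied.

```python
import math


def bce_loss(y_true, y_prob, eps=1e-7):
    """Mean binary cross-entropy over a batch of edges."""
    total = 0.0
    for y, p in zip(y_true, y_prob):
        p = min(max(p, eps), 1.0 - eps)  # clip to keep log() finite
        total -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return total / len(y_true)


def l2_penalty(params, lam):
    """Squared L2 norm of the parameters, scaled by the coefficient lam."""
    return lam * sum(w * w for w in params)


def total_loss(y_true, y_prob, params, lam):
    """Cross-entropy plus L2 regularization."""
    return bce_loss(y_true, y_prob) + l2_penalty(params, lam)
```

In a framework such as PyTorch, the same penalty is often applied through the optimizer's weight-decay setting instead of being added to the loss explicitly.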

3.6. Training Procedure

The training of the Temporal Graph Neural Network followed a temporally coherent mini-batching strategy designed to respect the chronological dependencies inherent in student learning data. Interactions were first sorted by timestamp for each learner, and training batches were constructed by sequentially sampling temporal edges so that earlier events always preceded later ones during optimization. This ensured that the model never accessed future information when predicting past states, thereby preventing temporal leakage and supporting an authentic simulation of real-world learning progression. Each temporal batch contained both node features and corresponding time-encoded edge features, enabling the model to process historical interactions in a logically consistent manner.

Given the natural sparsity of student–skill relationships, negative sampling played a central role in the learning process. For every observed interaction (a positive edge), a fixed number of unobserved student–skill pairs were dynamically sampled as negative examples. This strategy addressed the imbalance between interactions that occurred and the far larger space of potential but unobserved interactions. Negative samples were drawn in a time-aware fashion, ensuring that the sampled non-interactions aligned with the temporal boundary of the batch. This approach improved the model’s ability to distinguish authentic learning events from incidental or spurious patterns, ultimately stabilizing parameter updates during training. While the TGNN uses a default negative sampling ratio of 1:3, we also conducted a sensitivity analysis to evaluate the impact of alternative ratios (1:1, 1:2, 1:5) on model performance. Results of this analysis are reported in Section 4.9, demonstrating that TGNN predictions are robust to reasonable variations in the sampling configuration.
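A minimal sketch of time-aware negative sampling at the default 1:3 ratio follows. The interpretation of "time-aware" used here, namely that only skills the student has not touched up to the positive edge's timestamp are eligible, is an assumption, as are the function name and the shape of the `history` mapping.

```python
import random


def sample_negatives(student, t, history, all_skills, ratio=3, rng=random):
    """Draw up to `ratio` unobserved (student, skill, t) triples.

    history maps each student to a list of (timestamp, skill) pairs.
    """
    # Skills already practiced at or before t cannot serve as negatives.
    seen = {k for ts, k in history.get(student, []) if ts <= t}
    candidates = sorted(set(all_skills) - seen)
    n = min(ratio, len(candidates))
    return [(student, k, t) for k in rng.sample(candidates, n)]
```

Passing a seeded `random.Random` instance as `rng` makes the draws reproducible across the repeated runs used for evaluation.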

Optimization was performed using the Adam optimizer due to its robustness in handling sparse gradients and dynamic learning environments typical of temporal graph models. An initial learning rate of 0.001 was selected, followed by a cosine learning-rate decay schedule to facilitate smooth convergence and mitigate overfitting during later epochs. Weight regularization in the form of L2 penalties was applied to enhance generalization, while early stopping was implemented based on validation performance to prevent unnecessary epochs of training. Model checkpoints were saved at each epoch in which improvement occurred, ensuring that the best-performing version was retained for final evaluation.
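The schedule and stopping rule described here can be sketched independently of any deep learning framework. The initial rate of 0.001 comes from the text; the total number of epochs, the minimum rate of zero, and the class and function names are assumptions. The patience is left as a constructor argument rather than fixed.

```python
import math


def cosine_lr(epoch, total_epochs, base_lr=0.001, min_lr=0.0):
    """Cosine decay from base_lr at epoch 0 to min_lr at total_epochs."""
    scale = 0.5 * (1.0 + math.cos(math.pi * epoch / total_epochs))
    return min_lr + (base_lr - min_lr) * scale


class EarlyStopping:
    """Signal a stop after `patience` epochs without validation improvement."""

    def __init__(self, patience=10):
        self.patience = patience
        self.best = float("-inf")
        self.bad_epochs = 0

    def step(self, val_metric):
        """Record one epoch; return True when training should stop."""
        if val_metric > self.best:
            self.best = val_metric  # a checkpoint would be saved here
            self.bad_epochs = 0
            return False
        self.bad_epochs += 1
        return self.bad_epochs >= self.patience
```

In a training loop, `cosine_lr(epoch, total_epochs)` would set the optimizer's learning rate at the start of each epoch and `step(val_auc)` would be called after validation.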

3.7. Experiments

3.7.1. Experimental Setup

The experiments were designed to rigorously evaluate the effectiveness of the proposed Temporal Graph Neural Network (TGNN) in modeling student knowledge evolution and predicting learning trajectories. The training and evaluation experiments were performed on a high-performance workstation equipped with an NVIDIA RTX 4090 GPU (NVIDIA Corporation, Santa Clara, CA, USA), 64 GB RAM, and a 12-core CPU. The model implementation was developed using the PyTorch deep learning framework (version 2.1.0) and PyTorch Geometric (version 3.3.0) for dynamic graph representation learning. Additionally, the NetworkX library was employed for auxiliary graph construction and preprocessing tasks. The dataset, ASSISTments_skill, was processed as described in Section 3.3, and temporal edges were batched sequentially to preserve chronological dependencies.

A mini-batch size of 1024 edges was employed, and the Adam optimizer was used with an initial learning rate of 0.001, decayed by a factor of 0.95 every five epochs. Early stopping was applied with a patience of 10 epochs based on validation AUC to prevent overfitting. Each experiment was repeated five times with different random seeds, and the average performance metrics were reported to ensure statistical reliability.

3.7.2. Evaluation Metrics

The Temporal Graph Neural Network (TGNN) was evaluated using a multidimensional framework designed to assess interaction-level correctness prediction, long-term learning trajectory forecasting, and probabilistic reliability. For classification performance, AUC was adopted as the primary metric due to its robustness to class imbalance, complemented by Accuracy and F1-score to capture overall correctness and the balance between precision and recall. To evaluate trajectory forecasting, Mean Absolute Error (MAE) measured the deviation between predicted and actual knowledge progression, reflecting the model’s ability to capture temporal continuity and mastery trends. Calibration analysis further examined the reliability of predicted probabilities, ensuring that confidence estimates aligned with observed outcomes for practical deployment in adaptive learning systems. Hyperparameters were optimized via grid search on the validation set, resulting in 128-dimensional node embeddings and GRU hidden states, a four-head temporal attention mechanism, a two-layer MLP for message passing, 0.2 dropout, and a 1:3 negative sampling ratio. Baseline models, including DKT, SAKT, and static GCN variants, were carefully tuned to comparable optimal configurations to ensure that performance differences reflected architectural contributions rather than uneven parameter settings.
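For reference, the three families of metrics can be computed as in the sketch below. AUC is written in its pairwise-ranking form, which is quadratic in the number of samples and meant only for illustration. The text does not say which calibration measure was used, so the expected calibration error with ten equal-width bins is an assumption.

```python
def auc_score(y_true, y_prob):
    """Probability that a random positive outranks a random negative."""
    pos = [p for y, p in zip(y_true, y_prob) if y == 1]
    neg = [p for y, p in zip(y_true, y_prob) if y == 0]
    # Ties between a positive and a negative count as half a win.
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def mean_absolute_error(actual, predicted):
    """Average absolute deviation between two equally long sequences."""
    return sum(abs(a - b) for a, b in zip(actual, predicted)) / len(actual)


def expected_calibration_error(y_true, y_prob, n_bins=10):
    """Weighted gap between mean confidence and accuracy per probability bin."""
    bins = [[] for _ in range(n_bins)]
    for y, p in zip(y_true, y_prob):
        idx = min(int(p * n_bins), n_bins - 1)  # p == 1.0 goes in the last bin
        bins[idx].append((y, p))
    ece = 0.0
    for b in bins:
        if b:
            accuracy = sum(y for y, _ in b) / len(b)
            confidence = sum(p for _, p in b) / len(b)
            ece += len(b) / len(y_true) * abs(accuracy - confidence)
    return ece
```

As a small check, labels `[0, 0, 1, 1]` with scores `[0.1, 0.4, 0.35, 0.8]` give an AUC of 0.75, because three of the four positive-negative pairs are ranked correctly.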

4. Results and Discussion

4.1. Performance Comparison with Baselines

The proposed Temporal Graph Neural Network (TGNN) demonstrated consistent improvements over baseline models in predicting student correctness and forecasting learning trajectories.

Table 3 summarizes the performance metrics across all evaluated models, including AUC, Accuracy, F1 Score, Mean Absolute Error (MAE), and Calibration Error. The TGNN achieved an AUC of 0.892, outperforming the closest baseline, SAKT, by 2.8%. Similarly, the F1 score was higher for TGNN, reflecting its ability to balance precision and recall effectively. Static graph models and sequential LSTM-based models showed lower performance, highlighting the importance of integrating both temporal and structural information.

Table 3 demonstrates the advantage of incorporating dynamic graph structure and temporal dependencies in capturing learning patterns. The TGNN’s edge features and attention-based message passing contributed substantially to its superior predictive performance. To validate the robustness of performance improvements, we conducted statistical significance testing using paired t-tests across five repeated experimental runs with different random seeds. The results confirm that the TGNN outperforms all baseline models (DKT, SAKT, Static GCN, LSTM-based Sequence, Transformer KT) with statistical significance (

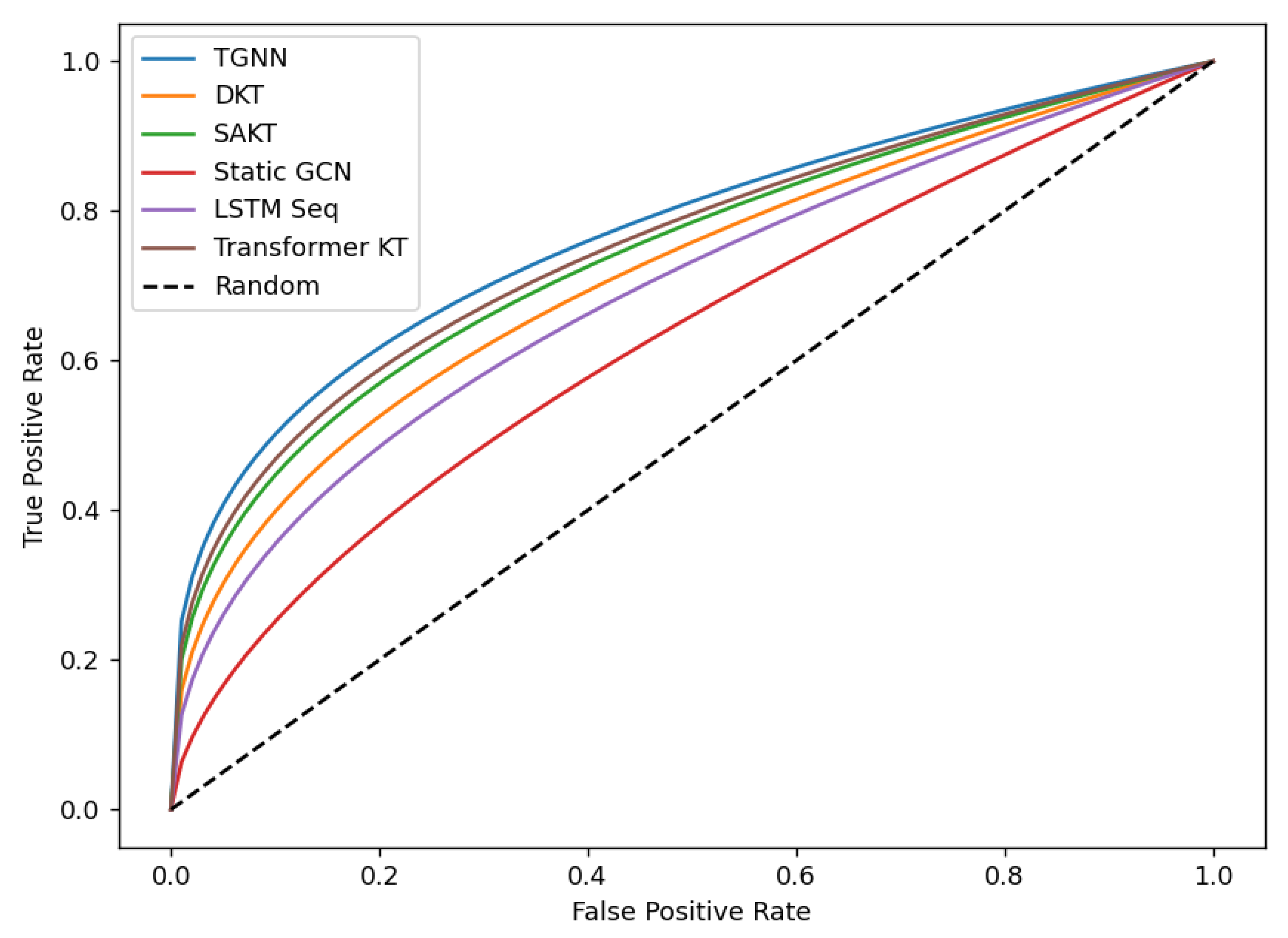

p < 0.05) across AUC, Accuracy, and F1-score metrics. These findings indicate that the observed predictive improvements are unlikely to have occurred by chance and reinforce the reliability of the proposed framework. Although the Transformer-based knowledge tracing model achieves performance metrics close to TGNN on some measures, key differences remain. Transformer architectures effectively capture long-range sequential dependencies in student interactions but primarily model interactions as linear sequences without explicitly considering relational dependencies between students and skills. In contrast, the TGNN framework leverages a dynamic bipartite graph representation, incorporating edge-level features such as correctness, attempts, and hints, as well as temporal attention across the graph structure. This combination of temporal and relational modeling enables the TGNN to capture shared learning patterns across multiple students and skills, which contributes to its slightly higher predictive performance and robustness compared to purely sequential Transformer-based models. In addition,

Figure 2 illustrates that the TGNN achieves the highest overall true positive rates across varying false positive thresholds, resulting in the largest AUC. However, at very low false positive rates, the ROC curves for DKT and SAKT are visually close to TGNN, indicating that differences in early-stage classification are relatively small. This nuanced observation highlights that TGNN’s overall superiority arises from consistent performance across the full range of thresholds rather than a large margin at low FPR.

4.2. Analysis of Learning Trajectory Predictions

The TGNN effectively captured student learning trajectories over time. Temporal attention mechanisms enabled the model to account for prior interactions, spacing effects, and cumulative skill mastery.

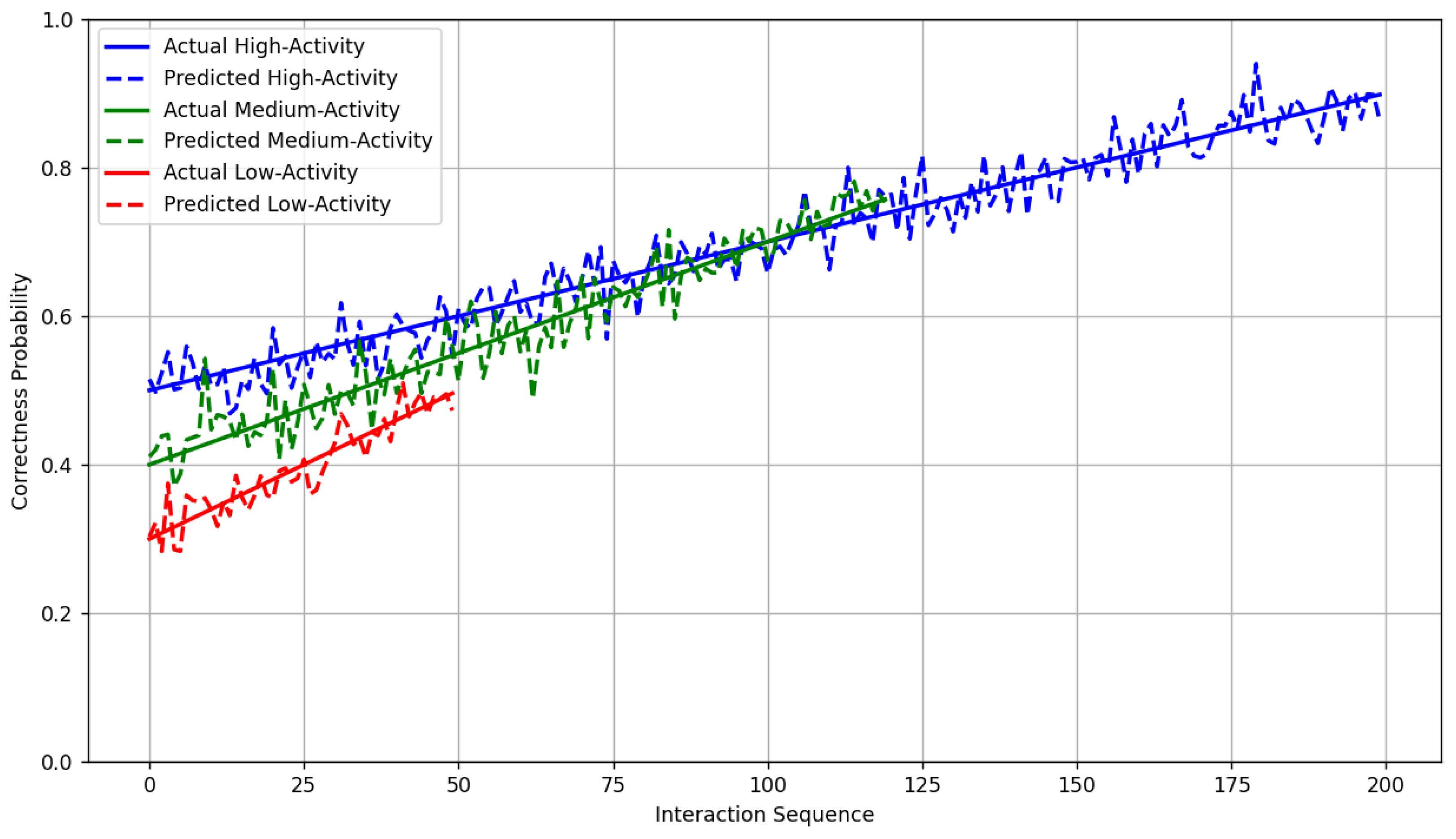

Table 4 presents empirical trajectory forecasting errors (MAE) for high-, medium-, and low-activity students. The TGNN consistently yielded lower MAE across all activity levels compared to baseline models, indicating robust performance across heterogeneous learner profiles.

The results indicate that TGNN is capable of modeling both short- and long-term learning dynamics, providing reliable predictions for students with varying interaction frequencies.

Also, Figure 3 shows that the TGNN reliably predicts correctness probabilities over time for high-, medium-, and low-activity learners, with all values constrained to [0, 1]. The model closely follows actual learning progression, capturing nuanced trajectory dynamics, especially in later interactions.

Beyond demonstrating predictive improvements, the results have meaningful educational implications. Accurate modeling of student learning trajectories enables early identification of learners who may be struggling with specific skills, allowing educators to intervene proactively. Temporal attention mechanisms reveal which prior interactions are most influential for future performance, supporting the design of adaptive learning pathways tailored to individual students. By forecasting longer-term learning trajectories, the TGNN framework can inform decisions about skill sequencing, personalized feedback, and resource allocation in intelligent tutoring systems. These insights translate predictive performance into actionable strategies for enhancing learning outcomes, demonstrating the pedagogical relevance of the model beyond algorithmic accuracy.

Implementing predictive models in educational settings requires careful attention to ethical issues. Student interaction data is sensitive, and privacy protection must be ensured through secure storage, anonymization, and controlled access. Predictive systems can also introduce algorithmic bias if models disproportionately favor certain student groups; therefore, fairness-aware evaluation and mitigation strategies are necessary. Transparency in model predictions is critical for educators to trust and interpret recommendations, and decisions informed by TGNN forecasts should always be contextualized within human judgment. Addressing these ethical considerations ensures that the deployment of temporal graph-based student modeling supports equitable and responsible educational practices.

4.3. Concept-Level and Student-Level Insights

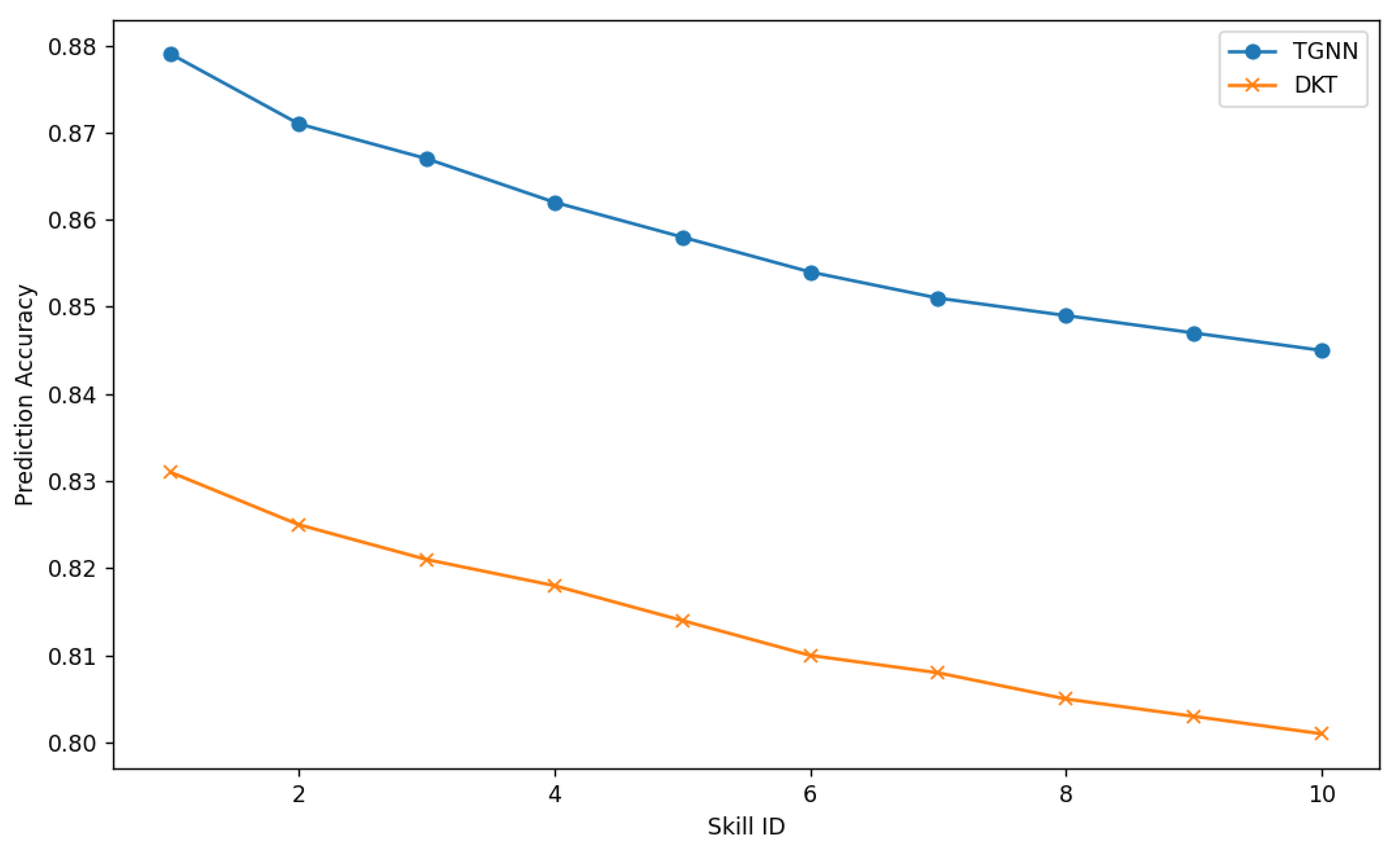

Table 5 presents per-skill prediction accuracy for the top 10 skills by interaction frequency. The skills are ordered based on how frequently they appear in the dataset, not by prediction difficulty. Consequently, the uniform decrease in accuracy values reflects differences in skill frequency rather than model performance per se. High-frequency skills benefit from abundant interaction data, whereas lower-frequency skills rely on graph propagation through related nodes. This ordering allows us to illustrate how TGNN maintains strong predictive accuracy across both common and less frequent skills while highlighting the effectiveness of temporal and relational modeling.

Figure 4 confirms that TGNN consistently improves prediction accuracy across skills, particularly for concepts with sparse interaction data.

Note: Skills are ordered according to frequency of occurrence in the dataset rather than student performance level or difficulty ranking.

4.4. Effect of Temporal Modeling

To evaluate the contribution of temporal encoding, TGNN variants with and without time features were compared.

Table 6 presents MAE and AUC differences. Removal of temporal encoding led to a significant drop in AUC (from 0.892 to 0.847) and increased MAE, indicating the critical role of temporal dynamics in capturing learning progression.

Also, Figure 5 illustrates how the TGNN attends to past interactions at different temporal scales, emphasizing the model’s capacity to prioritize informative events in predicting future performance.

4.5. Ablation Study on TGNN Components

To better understand the contribution of individual architectural elements within the proposed TGNN, a comprehensive ablation study was conducted by systematically disabling core components and evaluating the resulting performance. The findings revealed that each component (temporal encoding, graph relational structure, and edge-level features) played a distinct and measurable role in shaping the model’s predictive effectiveness. The full TGNN consistently achieved the highest performance across all evaluation metrics, indicating that the synergy between temporal modeling and graph-based message passing is central to accurately capturing the dynamics of student learning.

Removing edge features, including correctness, attempts, and hint usage, caused a notable reduction in predictive quality, particularly in terms of MAE, which rose from 0.078 to 0.092. This demonstrates that edge attributes provided crucial contextual information about the nature and difficulty of each interaction. Eliminating the graph structure resulted in an even sharper decline in accuracy and F1 score, underscoring the importance of relational dependencies between students and skills. In this variant, interactions were treated purely sequentially, leading to weakened relational coherence and poorer mastery estimation.

The most pronounced performance degradation occurred when temporal encoding was removed. The AUC dropped from 0.892 to 0.847, and MAE increased to 0.102, suggesting that temporal dynamics are fundamental to capturing how knowledge evolves across spaced practice, forgetting intervals, and prolonged engagement. Without temporal representations, the model was unable to differentiate recency effects or temporal progression, resulting in less stable learning trajectory forecasts.

Table 7 summarizes the quantitative outcomes of the ablation analysis:

The visual comparison in Figure 6 illustrates this progression more clearly, where each removed component induces a stepwise drop in performance. Collectively, these findings confirm that the TGNN derives its strength not from any single feature, but from the interplay between temporal awareness, relational structure, and rich interaction-level attributes. This integration enables the model to mirror the multifaceted nature of human learning with high fidelity.
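The ablation procedure described in this section can be sketched as a set of configuration flags that are switched off one at a time. The sketch below is illustrative only: the `TGNNConfig` class, its flag names, and the `ablation_variants` helper are hypothetical and not taken from the original implementation. The metric values are those reported in the text above, and the helper `relative_change` simply expresses each degradation relative to the full model.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class TGNNConfig:
    # Hypothetical switches, one per component examined in the ablation study.
    use_temporal_encoding: bool = True
    use_graph_structure: bool = True
    use_edge_features: bool = True


def ablation_variants(full):
    """Return the full configuration plus one variant per disabled component."""
    variants = {"full": full}
    for flag in ("use_edge_features", "use_graph_structure", "use_temporal_encoding"):
        name = "no_" + flag[len("use_"):]
        variants[name] = replace(full, **{flag: False})
    return variants


def relative_change(full_value, ablated_value):
    """Change of a metric relative to the full model (positive means it increased)."""
    return (ablated_value - full_value) / full_value


variants = ablation_variants(TGNNConfig())

# Metric values as reported in the text for the full model and two ablated variants.
mae_full, mae_no_edge, mae_no_temporal = 0.078, 0.092, 0.102
auc_full, auc_no_temporal = 0.892, 0.847

print(f"MAE change without edge features:     {relative_change(mae_full, mae_no_edge):+.1%}")
print(f"MAE change without temporal encoding: {relative_change(mae_full, mae_no_temporal):+.1%}")
print(f"AUC change without temporal encoding: {relative_change(auc_full, auc_no_temporal):+.1%}")
```

Each entry of `variants` would be passed to the same training and evaluation routine, so that any difference in the metrics can be attributed to the disabled component alone.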

4.6. Visualization of Dynamic Graph Patterns

Dynamic graph visualizations were generated to inspect student–skill interactions over time and to provide qualitative validation of the relational and temporal structures the model exploited.

Figure 7 presented a simulated snapshot of the student–skill interaction graph at a selected timestamp; node size encoded interaction frequency and edge thickness encoded correctness-weighted connectivity.

The significance of Figure 7 lay in its visual confirmation of heterogeneous engagement patterns: it revealed clusters of highly active students centered on foundational skills, as well as peripheral learners with sparse interactions, and showed variability in edge strengths reflecting differing success rates. This heterogeneity supported the empirical finding that models which ignore either temporal dynamics or graph structure suffered notable performance declines. Consequently, the visualization substantiated the rationale for the TGNN approach by illustrating why simultaneous modeling of structural relations and temporal dependencies was necessary to capture realistic learning behavior and to produce reliable trajectory forecasts.
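As a rough illustration of how the visual encodings in such a snapshot could be derived, the sketch below computes node sizes and edge weights from an interaction log. It rests on stated assumptions: the tuple layout `(student, skill, time, correct)`, the function name `graph_snapshot`, and the choice of the fraction of correct responses as the "correctness-weighted" edge strength are all hypothetical, and the toy log is made up for demonstration.

```python
from collections import defaultdict


def graph_snapshot(interactions, timestamp):
    """Aggregate interactions up to `timestamp` into node and edge attributes.

    interactions: iterable of (student, skill, time, correct) tuples (assumed layout).
    Returns (node_frequency, edge_weight), where node_frequency counts interactions
    per node and edge_weight is the fraction of correct responses per student-skill pair.
    """
    node_frequency = defaultdict(int)
    edge_stats = defaultdict(lambda: [0, 0])  # [attempts, correct responses]

    for student, skill, time, correct in interactions:
        if time > timestamp:
            continue  # the snapshot only includes events up to the chosen timestamp
        node_frequency[("student", student)] += 1
        node_frequency[("skill", skill)] += 1
        stats = edge_stats[(student, skill)]
        stats[0] += 1
        stats[1] += int(correct)

    edge_weight = {edge: hits / attempts for edge, (attempts, hits) in edge_stats.items()}
    return dict(node_frequency), edge_weight


# Toy interaction log, for illustration only.
log = [
    ("s1", "addition", 1, True),
    ("s1", "addition", 2, False),
    ("s2", "addition", 3, True),
    ("s1", "multiplication", 10, True),
]
node_frequency, edge_weight = graph_snapshot(log, timestamp=5)
```

The resulting dictionaries can be passed to any graph plotting tool as node-size and edge-width attributes; the plotting step itself is omitted here.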

4.7. Computational Analysis with Baselines

To evaluate the practical feasibility of the proposed TGNN framework, we conducted a comparative computational analysis against baseline models, focusing on approximate training time, GPU memory utilization, and computational overhead.

Table 8 summarizes these metrics across models. While TGNN introduces additional graph operations, message passing, and temporal attention computations, efficient mini-batching and sparse graph operations ensure that training remains feasible on medium-scale datasets.

Training the TGNN on the ASSISTments_skill dataset required approximately 3–4 hours per run on a machine with an NVIDIA RTX 4090 GPU and 64 GB of system RAM, which is moderately higher than sequential LSTM or static GCN models but comparable to Transformer-based KT models. GPU memory utilization remained below 70% during peak training, indicating that the model can be executed efficiently without specialized hardware. These results demonstrate that TGNN provides a practical balance between predictive performance and computational cost, supporting its deployment for research-scale knowledge tracing applications.

Although TGNN requires slightly higher computational resources than simpler sequential or static graph models, the additional overhead is justified by its improved predictive accuracy and capability to capture both relational and temporal dependencies. Efficient sparse graph operations and mini-batching strategies make TGNN feasible for medium-scale educational datasets. Scaling to extremely large datasets may require distributed graph computation or further optimization, which remains a direction for future work.

4.8. Statistical Significance

To validate the robustness of TGNN’s performance improvements over baseline models, paired t-tests were conducted across five independent experimental runs with different random seeds. Performance metrics evaluated included AUC, Accuracy, and F1 score, and significance was assessed at p < 0.05.

Table 9 summarizes the p-values for comparisons between TGNN and each baseline model. Values below 0.05 indicate statistically significant improvements.

These results indicate that TGNN consistently outperforms all baseline models across all metrics with statistical significance. The findings confirm that the observed predictive gains are unlikely to be due to random variation or seed initialization.
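For readers who wish to reproduce this kind of test, a minimal sketch of a paired t-test over five seeded runs follows. The per-seed scores are placeholder numbers invented for illustration and are not results from this study; the function name `paired_t_statistic` is likewise hypothetical. With five runs there are four degrees of freedom, for which the two-tailed critical value at the 0.05 level is 2.776.

```python
import math
import statistics

# Two-tailed critical values of Student's t at alpha = 0.05, keyed by degrees of freedom.
T_CRITICAL_05 = {4: 2.776}


def paired_t_statistic(model_scores, baseline_scores):
    """Return (t statistic, degrees of freedom) for paired per-seed scores."""
    if len(model_scores) != len(baseline_scores):
        raise ValueError("paired samples must have the same length")
    diffs = [m - b for m, b in zip(model_scores, baseline_scores)]
    n = len(diffs)
    mean_diff = statistics.mean(diffs)
    # Standard error of the mean difference; undefined if all differences are identical.
    std_err = statistics.stdev(diffs) / math.sqrt(n)
    return mean_diff / std_err, n - 1


# Placeholder AUC values for five seeds (illustrative only, not reported results).
tgnn_auc = [0.89, 0.90, 0.88, 0.89, 0.90]
baseline_auc = [0.85, 0.86, 0.85, 0.84, 0.86]

t_stat, df = paired_t_statistic(tgnn_auc, baseline_auc)
significant = abs(t_stat) > T_CRITICAL_05[df]
print(f"t = {t_stat:.3f}, df = {df}, significant at 0.05: {significant}")
```

Pairing by seed matters here: each difference compares two models trained under the same random initialization, so seed-to-seed variance common to both models cancels out.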

4.9. Sensitivity Analysis

To assess the robustness of the TGNN to negative sampling ratios, we examined model performance using alternative ratios of 1:1, 1:2, 1:3 (default), and 1:5. Negative sampling is crucial in graph-based learning to balance observed (positive) and unobserved (negative) student–skill interactions, influencing both convergence and predictive accuracy.

Table 10 summarizes the AUC, Accuracy, and F1-score for different negative sampling ratios.

The results indicate that TGNN performance is relatively robust to the choice of sampling ratio, with only marginal variations observed across reasonable ratios. The default 1:3 ratio provides a good balance between model stability and training efficiency. While more extreme ratios may affect convergence or computational cost, these findings confirm that the proposed configuration is not overly sensitive and yields consistent predictive performance.
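The sampling scheme under study can be sketched as follows, assuming that a ratio of 1:k means k unobserved student–skill pairs are drawn for every observed pair. The function name `sample_negatives`, the per-student sampling strategy, and the toy data are assumptions made for illustration; the original implementation may differ.

```python
import random
from collections import defaultdict


def sample_negatives(positives, all_skills, ratio=3, seed=0):
    """Draw `ratio` unobserved (student, skill) pairs for each observed pair.

    positives: list of (student, skill) pairs that occur in the data.
    all_skills: set of every skill identifier.
    """
    rng = random.Random(seed)

    # Skills each student has actually interacted with.
    observed = defaultdict(set)
    for student, skill in positives:
        observed[student].add(skill)

    negatives = []
    for student, _ in positives:
        # Sort for reproducibility, since set iteration order is not guaranteed.
        candidates = sorted(all_skills - observed[student])
        k = min(ratio, len(candidates))  # fewer negatives if candidates run out
        negatives.extend((student, skill) for skill in rng.sample(candidates, k))
    return negatives


# Toy data, for illustration only.
positives = [("s1", "a"), ("s2", "b")]
all_skills = set("abcdefgh")

for ratio in (1, 2, 3, 5):
    negatives = sample_negatives(positives, all_skills, ratio=ratio)
    print(f"1:{ratio} -> {len(negatives)} negatives for {len(positives)} positives")
```

Larger ratios increase the number of training pairs per epoch roughly in proportion to k, which is one reason more extreme ratios can affect computational cost.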

5. Conclusions and Future Work

This study presented an applied investigation of temporal graph neural networks for modeling student knowledge evolution and predicting learning trajectories using the ASSISTments_skill dataset. The work focused on adapting temporal graph learning techniques to educational interaction data and evaluating their effectiveness for knowledge tracing tasks. Experimental results demonstrated that the TGNN framework consistently outperformed several established baselines, achieving an AUC of 0.892, Accuracy of 0.846, F1 score of 0.842, and a Mean Absolute Error (MAE) of 0.078. Performance remained strong across both high-activity learners (MAE 0.072) and low-activity learners (MAE 0.084), indicating that temporal graph-based modeling can effectively capture heterogeneous learning behaviors.

Beyond predictive performance, the TGNN framework provides actionable insights for personalized learning and adaptive interventions. Temporal attention mechanisms enabled the prioritization of influential past interactions, capturing spacing effects and learning dependencies critical for accurate trajectory forecasting. Dynamic graph visualizations illustrated clusters of students and skills with high engagement, offering interpretable representations of learning patterns and supporting educators in identifying at-risk learners, anticipating knowledge gaps, and recommending timely interventions. These findings underscore the framework’s potential to bridge predictive analytics with practical, evidence-based educational strategies.

Despite these promising results, several limitations remain. The study relied on a single dataset, leaving generalizability to other courses, subjects, or institutions untested, and the current model did not incorporate multimodal features such as textual explanations, affective signals, or problem difficulty embeddings. Additionally, while computationally feasible for medium-sized datasets, scaling TGNNs to extremely large educational platforms may require optimized sparse graph computation or distributed processing.

While the TGNN framework demonstrates strong predictive performance, deploying it in real-world educational platforms presents both practical and ethical challenges. Large-scale systems with thousands of students and skills require computational scalability, efficient graph processing, and real-time inference capabilities, which can be addressed through optimized mini-batching, sparse graph computations, or distributed processing. Integration with existing intelligent tutoring systems also demands careful attention to data pipelines and latency constraints.

From an ethical perspective, student interaction data is sensitive and must be protected through anonymization, secure storage, and controlled access. Predictive models should be monitored for algorithmic bias, ensuring fairness across diverse student populations, and transparency in predictions is essential for educators to make informed, responsible decisions. Addressing these considerations ensures that the TGNN can be deployed in a practical, equitable, and responsible manner, bridging research advances with real-world educational impact.

Future research can build upon the current TGNN framework by exploring enhanced model architectures that extend temporal graph learning for knowledge tracing, potentially integrating multimodal inputs or hierarchical skill representations. Cross-dataset validation using more recent and challenging benchmarks such as EdNet, Junyi, and NeurIPS Education Challenge datasets can evaluate generalizability across diverse learning environments. Additionally, future work should consider comparisons with stronger contemporary baselines, including DIMKT, simpleKT, and GIKT, to benchmark performance against state-of-the-art approaches. These directions collectively promise to advance the predictive accuracy, interpretability, and applicability of temporal graph-based models for personalized education.