Optimization Algorithms: Comprehensive Classification, Principles, and Scientometric Trends

Abstract

1. Introduction

- A clear and structured synthesis of optimization methods, highlighting their strengths, limitations, and areas of application.

- An in-depth scientometric analysis that identifies research trends, maturity phases, and emerging paradigms in optimization.

- The identification of research gaps and opportunities, particularly at the interface of theoretical developments and practical applications, as well as in emerging hybrid approaches.

- A forward-looking discussion on the evolution of optimization paradigms, emphasizing potential directions for integration and hybridization of methods.

2. Overview of Optimization Methods

- Problem variables: What are the important parameters to be varied?

- Search space: Within which limits should these parameters be varied?

- Objective function: What are the objectives to be reached? How can we express them mathematically?

- Optimization method: Which method do we choose?
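The four questions above can be made concrete with a small sketch. Everything in it is an illustrative assumption rather than part of the study: the two variables `x` and `y`, their bounds, the quadratic objective, and the choice of uniform random search as the method.

```python
import random

# Problem variables: two hypothetical design parameters, x and y.
# Search space: box limits within which each variable may vary (assumed values).
bounds = {"x": (-5.0, 5.0), "y": (-5.0, 5.0)}


def objective(x: float, y: float) -> float:
    """Objective function to minimize: an illustrative bowl with its minimum at (1, -2)."""
    return (x - 1.0) ** 2 + (y + 2.0) ** 2


def random_search(n_samples: int = 20000, seed: int = 0):
    """Optimization method: uniform random sampling inside the search space."""
    rng = random.Random(seed)
    best_point, best_value = None, float("inf")
    for _ in range(n_samples):
        # Draw one candidate point within the limits of every variable.
        point = {name: rng.uniform(lo, hi) for name, (lo, hi) in bounds.items()}
        value = objective(**point)
        if value < best_value:
            best_point, best_value = point, value
    return best_point, best_value


best_point, best_value = random_search()
```

Random search is used here only because it is the simplest method that respects the bounds; any of the methods classified in Section 3 could take its place without changing the first three ingredients.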

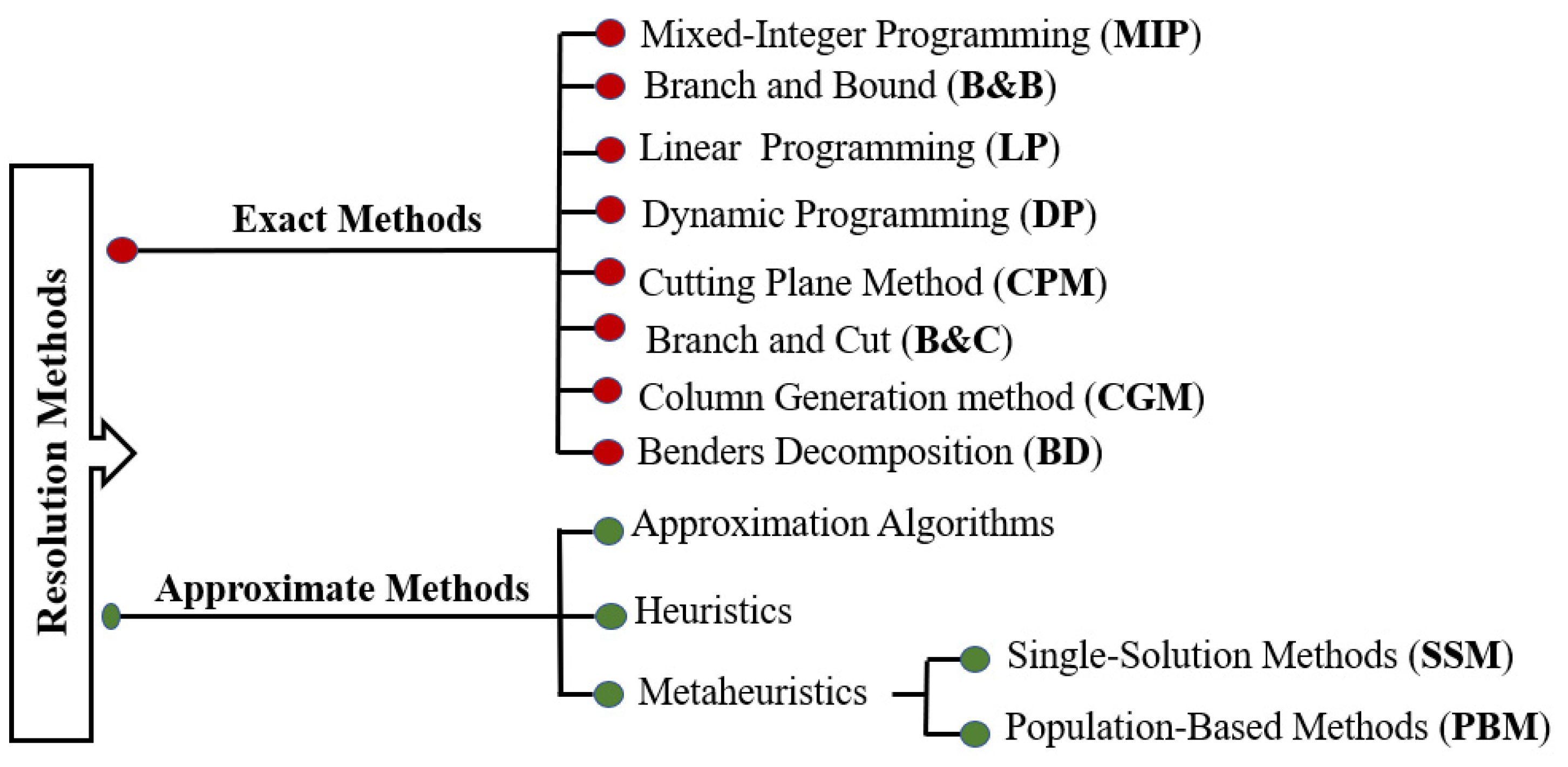

3. Optimization Methods Classification

3.1. Exact Optimization Methods

3.1.1. Mixed-Integer Programming (MIP)

3.1.2. Branch and Bound Algorithm (B&B)

- Separation: Separation divides the problem into sub-problems whose solution sets together cover that of the original problem. Solving all the sub-problems and keeping the best solution found therefore solves the initial problem. This is equivalent to building a tree that enumerates all the solutions. The nodes of the tree that may still contain an optimal solution and have not yet been divided form the set of active nodes.

- Evaluation: Evaluation reduces the search space by eliminating subsets that cannot contain the optimal solution. Its purpose is to assess whether a subset of the tree is worth exploring. For a minimum-cost problem, Branch and Bound stores the lowest-cost solution encountered so far and compares it with a lower bound on the cost of each node visited. If the bound of the node under consideration is not lower than the best cost found, exploration of that branch stops, because every solution in the branch necessarily costs at least as much as the best solution already found.

- Exploration strategy: The order in which the active nodes are visited.
  - Breadth-first: This strategy favors the nodes closest to the root, which result from the fewest separations of the initial problem. It is generally less efficient than the two other strategies presented here.
  - Depth-first: This strategy favors the nodes farthest from the root (of greatest depth), which result from the most separations of the initial problem. It quickly reaches a complete solution, which provides an early bound for pruning, and it uses little memory.
  - Best-first: This strategy explores the sub-problem with the best bound first. It also avoids exploring sub-problems whose evaluation is poor with respect to the optimal value.
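The separation, evaluation, and depth-first exploration steps described above can be sketched on a small assignment problem (assign each row of a cost matrix to a distinct column at minimum total cost). This is a minimal illustration under stated assumptions: the problem, the bound (cheapest free column per unassigned row), and the function names are chosen for the example and are not taken from the article.

```python
def lower_bound(cost, row, used, partial_cost):
    """Evaluation: partial cost plus the cheapest still-free column of each unassigned row."""
    bound = partial_cost
    for r in range(row, len(cost)):
        bound += min(c for j, c in enumerate(cost[r]) if j not in used)
    return bound


def branch_and_bound(cost):
    """Depth-first Branch and Bound for the minimum-cost assignment problem."""
    n = len(cost)
    best_cost, best_assignment = float("inf"), []
    # Active nodes: (next row to assign, columns chosen so far, partial cost).
    active = [(0, [], 0)]
    while active:
        row, cols, partial = active.pop()  # LIFO order gives depth-first exploration
        if row == n:
            # Leaf: a complete assignment; keep it if it beats the incumbent.
            if partial < best_cost:
                best_cost, best_assignment = partial, cols
            continue
        # Pruning: the branch cannot improve on the best solution found so far.
        if lower_bound(cost, row, cols, partial) >= best_cost:
            continue
        # Separation: one child sub-problem per free column for the current row.
        children = [
            (row + 1, cols + [j], partial + cost[row][j])
            for j in range(n)
            if j not in cols
        ]
        # Push the most expensive child first so the cheapest one is explored next.
        children.sort(key=lambda node: node[2], reverse=True)
        active.extend(children)
    return best_cost, best_assignment


# Hypothetical 4x4 cost matrix used only to exercise the sketch.
example_cost = [
    [9, 2, 7, 8],
    [6, 4, 3, 7],
    [5, 8, 1, 8],
    [7, 6, 9, 4],
]
best_cost, best_assignment = branch_and_bound(example_cost)
```

Replacing the stack with a queue would give breadth-first exploration, and replacing it with a priority queue keyed on the lower bound would give best-first exploration.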

3.1.3. Linear Programming (LP)

3.1.4. Dynamic Programming (DP)

3.1.5. Cutting Plane Method (CPM)

3.1.6. Branch and Cut Method (B&C)

3.1.7. Column Generation Method (CGM)

3.1.8. Benders Decomposition (BD)

3.2. Approximate Optimization Methods

3.2.1. Approximation Algorithms

3.2.2. Heuristics Concepts

3.2.3. Metaheuristics

- Single-solution metaheuristics: These methods maintain and improve a single candidate solution at each iteration in the search for a high-quality solution.

- Population-based metaheuristics: These methods maintain a population of candidate solutions at each iteration until a stopping criterion is met; neither family guarantees that the global optimum is reached.

- Single-solution metaheuristics

- Population-based metaheuristics
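The difference between the two families can be illustrated with a short sketch. It assumes a simple sphere test function, a stochastic local search as the single-solution method, and an elitist evolutionary loop as the population-based method; these choices and all parameter values are illustrative and are not methods or settings taken from the article.

```python
import random


def sphere(x):
    """Illustrative objective: sum of squares, minimized at the origin."""
    return sum(v * v for v in x)


def single_solution_search(objective, start, steps=5000, step_size=0.5, seed=0):
    """Single-solution search: perturb one current solution and keep improvements."""
    rng = random.Random(seed)
    current, current_value = list(start), objective(start)
    for _ in range(steps):
        candidate = [v + rng.gauss(0.0, step_size) for v in current]
        value = objective(candidate)
        if value < current_value:
            current, current_value = candidate, value
    return current, current_value


def population_search(objective, dim, pop_size=30, generations=200,
                      bounds=(-5.0, 5.0), sigma=0.3, seed=0):
    """Population-based search: keep the better half, refill with mutated copies."""
    rng = random.Random(seed)
    lo, hi = bounds
    population = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(pop_size)]
    for _ in range(generations):
        population.sort(key=objective)
        parents = population[: pop_size // 2]  # elitist selection
        children = [
            [v + rng.gauss(0.0, sigma) for v in rng.choice(parents)]
            for _ in range(pop_size - len(parents))
        ]
        population = parents + children
    best = min(population, key=objective)
    return best, objective(best)


single_best, single_value = single_solution_search(sphere, [4.0, 4.0, 4.0])
pop_best, pop_value = population_search(sphere, dim=3)
```

Both loops stop after a fixed budget rather than when the optimum is found, which is the usual situation for metaheuristics.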

3.2.4. Simulation-Based Optimization (SBO) and Machine Learning Metamodels

3.3. Discussion and Comparative Synthesis

4. Comparative Study and Scientometric Framework

- Optimality guarantees;

- Computational complexity;

- Scalability;

- Resource requirements;

- Implementation complexity.

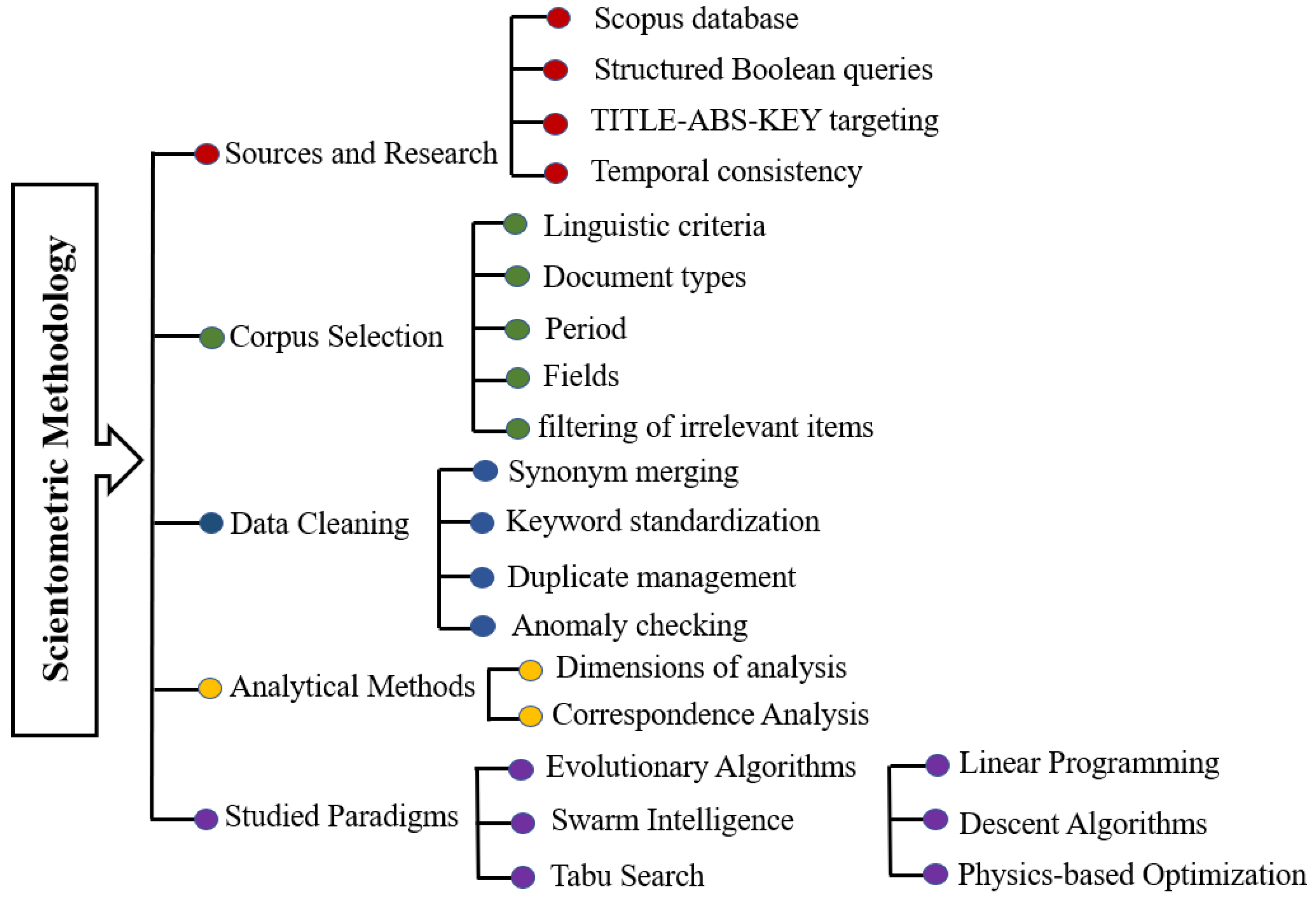

4.1. Scientometric Methodology

- Data Sources and Retrieval: Bibliographic data were extracted from Scopus, selected for its multidisciplinary coverage and standardized indexing. Although the use of a single database may influence absolute publication counts, the large corpus analyzed ensures that the relative structural patterns identified in this study remain statistically robust. Structured Boolean queries targeting the TITLE-ABS-KEY fields (title, abstract, and keywords) ensured comprehensive retrieval.

- Corpus Selection: Publications were filtered according to language (English), document type (journal articles and conference papers), and relevant scientific disciplines (computer science, engineering, mathematics, physics, energy, decision sciences). Screening was applied to exclude irrelevant records, such as purely biological uses of terms like “evolutionary algorithm.”

- Data Cleaning and Standardization: The dataset was refined through synonym merging, keyword standardization, duplicate removal, and anomaly verification, ensuring high-quality and consistent data.

- Analytical Methods: Analyses were performed along three complementary dimensions: publication volume, disciplinary distribution, and document type. In addition, a Correspondence Analysis (CA) was conducted to visualize structural associations between optimization paradigms and scientific disciplines in a reduced factorial space, enabling the identification of clusters, trends, and epistemological shifts.

- Paradigms Studied: The final dataset encompasses six major optimization paradigms: evolutionary algorithms, swarm intelligence, tabu search, linear programming, descent algorithms combined with statistics, and physics-based optimization (PINNs).
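The Correspondence Analysis step mentioned above can be sketched with NumPy through a singular value decomposition of the standardized residuals of a contingency table. The counts below are hypothetical placeholders, not data from the study, and the function name is an assumption made for illustration.

```python
import numpy as np


def correspondence_analysis(counts):
    """Return row coordinates, column coordinates, and inertia per factorial axis."""
    N = np.asarray(counts, dtype=float)
    P = N / N.sum()                      # correspondence matrix
    r = P.sum(axis=1)                    # row masses (paradigms)
    c = P.sum(axis=0)                    # column masses (disciplines)
    # Standardized residuals with respect to the independence model r c^T.
    S = (P - np.outer(r, c)) / np.sqrt(np.outer(r, c))
    U, sing, Vt = np.linalg.svd(S, full_matrices=False)
    row_coords = (U * sing) / np.sqrt(r)[:, None]     # principal row coordinates
    col_coords = (Vt.T * sing) / np.sqrt(c)[:, None]  # principal column coordinates
    inertia = sing ** 2                  # inertia carried by each axis
    return row_coords, col_coords, inertia


# HYPOTHETICAL counts: rows are paradigms, columns are disciplines.
paradigms = ["evolutionary algorithms", "swarm intelligence", "linear programming"]
disciplines = ["computer science", "engineering", "mathematics"]
counts = [
    [120, 90, 30],
    [100, 110, 20],
    [40, 50, 80],
]
row_coords, col_coords, inertia = correspondence_analysis(counts)
explained = inertia / inertia.sum()      # share of inertia per axis
```

Plotting the first two columns of `row_coords` and `col_coords` on the same axes gives the reduced factorial map in which paradigms and disciplines that lie close together are positively associated.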

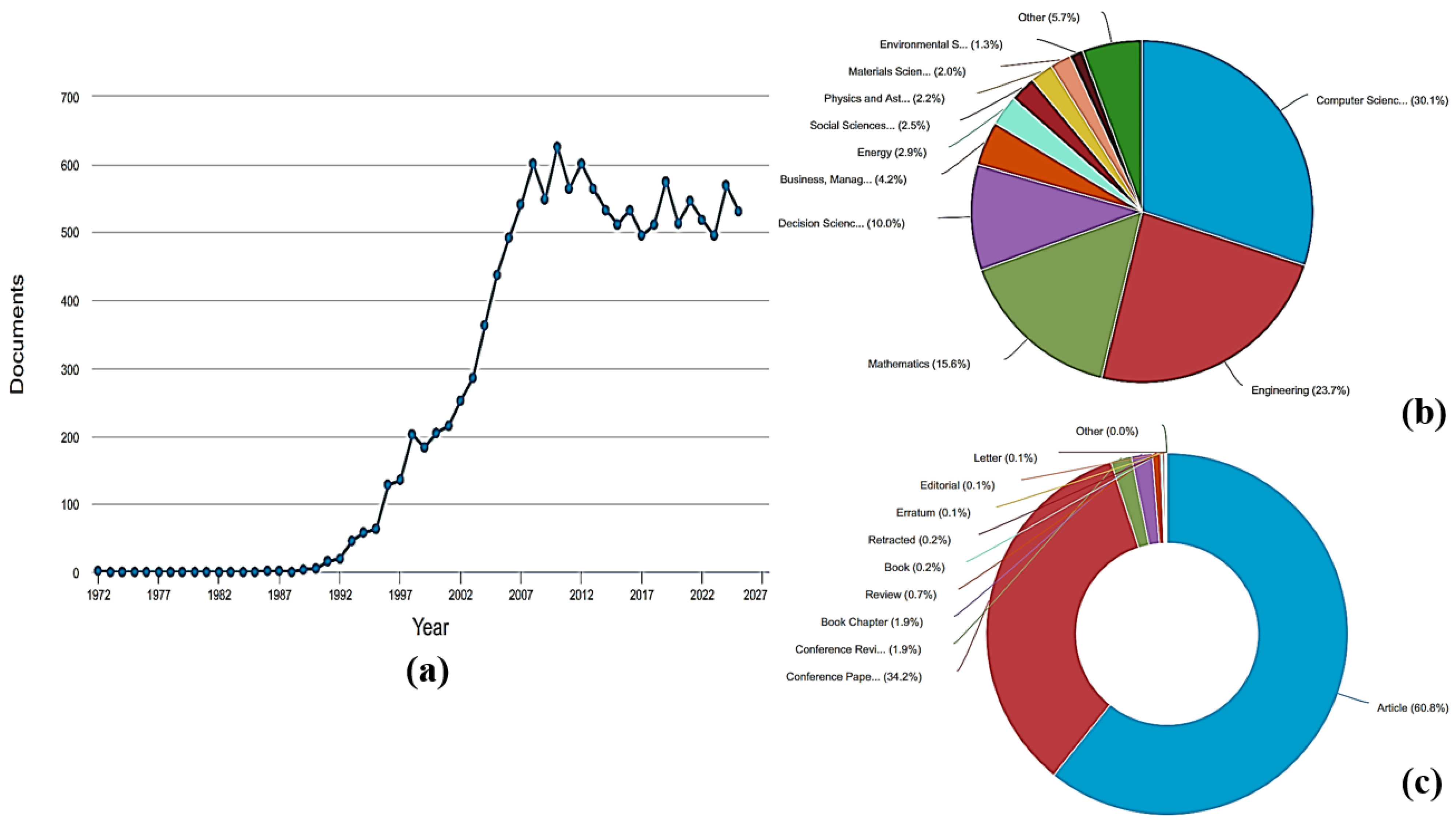

4.2. Comparative Scientometric Analysis of Optimization Paradigms

4.2.1. Scientometric Analysis of Evolutionary Algorithms

4.2.2. Scientometric Dynamics of Swarm Intelligence Algorithms

4.2.3. Scientometric Analysis of the Intersection Between Descent Algorithms and Statistics

4.2.4. Scientometric Dynamics of Tabu Search

4.2.5. Scientometric Dynamics of Linear Programming

- As an integral part of MIP solvers, LP has been an important subroutine;

- LP is increasingly becoming a part of AI and machine learning pipelines (convex relaxations, dual bounds, and model calibration);

- The advent of Big Data applications entails solving huge instances with millions of variables;

- Parallel computing and tailor-made architectures have been significantly developed.

4.2.6. Scientometric Dynamics of Physics-Based Algorithms

4.2.7. Comparative Synthesis of Scientometric Dynamics

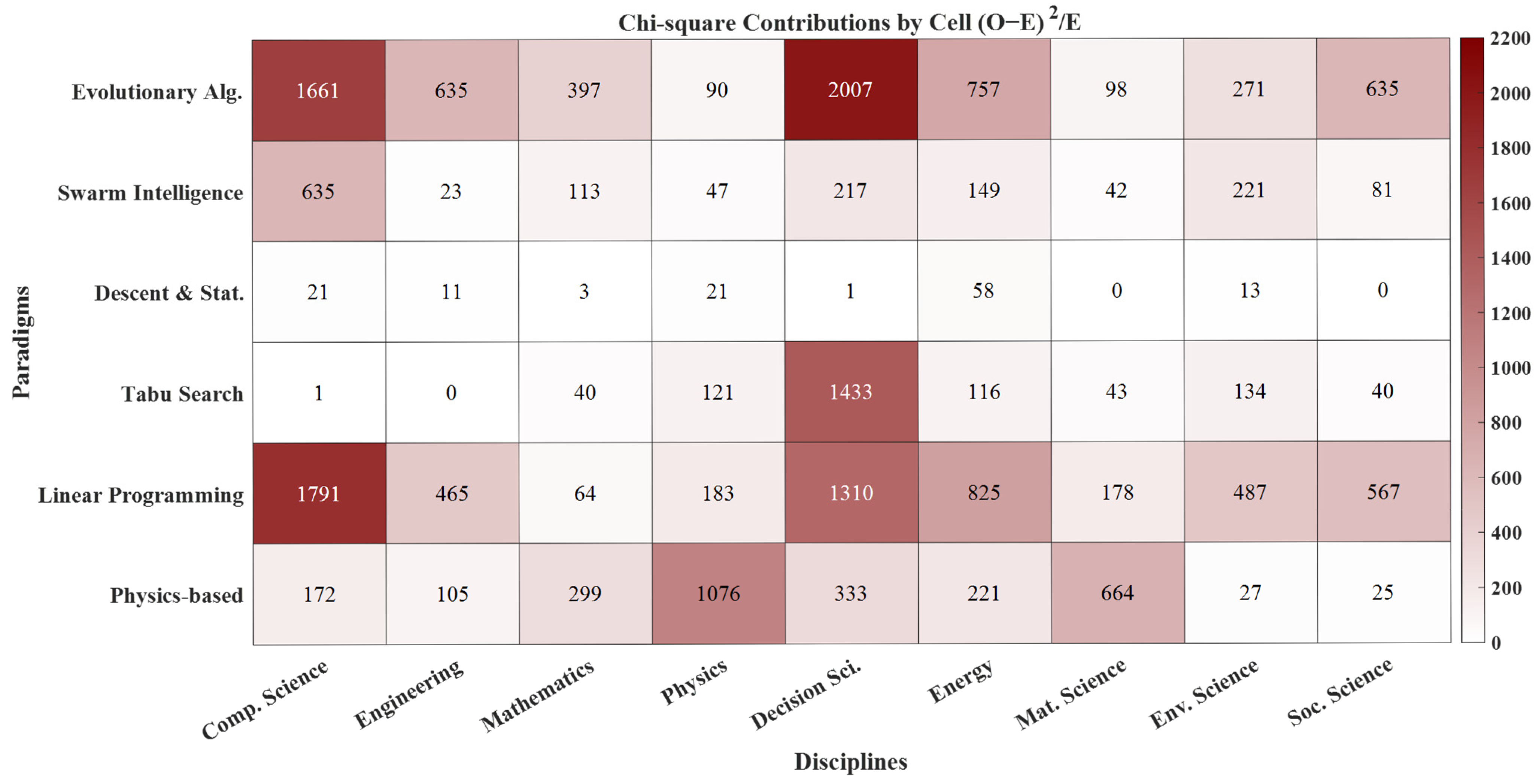

4.2.8. Multivariate Statistical Validation of Paradigm–Discipline Associations

5. Practical Decision Framework for Optimization Algorithm Selection

5.1. Problem Characterization and Algorithm Selection

- Variable type: Continuous, discrete/combinatorial, or mixed;

- Mathematical structure: Convex or non-convex, linear or nonlinear, smooth or non-differentiable;

- Problem scale: Small (tens of variables), medium (hundreds), or large-scale (thousands or more);

- Constraint complexity: Weakly constrained, strongly constrained, or equality-dominated;

- Objective structure: Single-objective or multi-objective;

- Uncertainty level: Deterministic or stochastic formulation;

- Computational requirements: Offline optimization or real-time/near-real-time execution;

- Evaluation cost: Analytical evaluation versus computationally expensive simulation or black-box model.
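The characterization criteria above can be captured in a small data structure. The sketch below is a hypothetical illustration: the field names, the scale thresholds (100 and 1000 variables), and the rule of thumb in `suggest_family` are assumptions made for the example and do not reproduce the decision map of Section 5.2.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProblemProfile:
    """One field per characterization criterion (names are illustrative)."""
    variable_type: str                    # "continuous", "discrete", or "mixed"
    linear: bool                          # linear objective and constraints
    convex: bool
    n_variables: int
    constraint_level: str = "weak"        # "weak", "strong", or "equality-dominated"
    multi_objective: bool = False
    stochastic: bool = False
    real_time: bool = False
    expensive_evaluation: bool = False    # simulation or black-box model


def scale(profile: ProblemProfile) -> str:
    """Map the variable count to a scale label (thresholds are assumptions)."""
    if profile.n_variables < 100:
        return "small"
    if profile.n_variables < 1000:
        return "medium"
    return "large"


def suggest_family(profile: ProblemProfile) -> str:
    """Assumed rule of thumb pointing to a method family from Section 3."""
    if profile.expensive_evaluation:
        return "simulation-based optimization with a metamodel"
    if profile.linear and not profile.multi_objective and not profile.stochastic:
        if profile.variable_type == "continuous":
            return "linear programming"
        if scale(profile) != "large":
            return "mixed-integer programming"
    return "metaheuristic"


lp_case = ProblemProfile("continuous", linear=True, convex=True, n_variables=50)
mip_case = ProblemProfile("mixed", linear=True, convex=False, n_variables=300)
black_box_case = ProblemProfile("continuous", linear=False, convex=False,
                                n_variables=20, expensive_evaluation=True)
```

A profile that matches none of the exact-method conditions falls through to the metaheuristic family, which mirrors the exact versus approximate split used in the classification.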

5.2. Operational Decision Map for Practitioners

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest


- Dehshiri, S.J.H.; Amiri, M.; Hajiaghaei-Keshteli, M.; Keshavarz-Ghorabaee, M.; Zavadskas, E.K.; Antuchevičienė, J. Designing a sustainable closed-loop supply chain using robust possibilistic-stochastic programming in pentagonal fuzzy numbers. Transport 2024, 39, 323–349. [Google Scholar] [CrossRef]

- Dick, H.; Dahm, T. Optimization of Ab-Initio Based Tight-Binding Models. Electron. Struct. 2025, 7, 047001. [Google Scholar] [CrossRef]

- Hao, H.; Fang, X.; Chen, Y. Heuristic-Enhanced ILP Process Discovery with Multidimensional Dependency Filtering | Request PDF. Concurr. Comput. Pract. Exp. 2025, 37, e70380. [Google Scholar] [CrossRef]

- Robandi, I.; Aji, A.A.S.; Wibowo, R.S.; Prakasa, M.A.; Prabowo; Putri, V.L.B.; Sutrisno, D.; Widiyawati, E.; Fauzi, M.A. Dynamic Economic Dispatch Using Mixed-Integer Linear Programming for Indonesian Electricity System Integrated with Cascaded Hydropower Plant Considering Take or Pay Contract. Int. J. Intell. Eng. Syst. 2025, 18, 467–481. [Google Scholar] [CrossRef]

- Su, K.; Yang, C.; Shao, Y.; Jiang, D.; Zhou, C.; Wang, L.; Liu, D.; Zhu, P.; Ding, Y.; Zheng, C.; et al. Accelerating multi-energy system online optimization via integer state variable prediction with operation strategy learning. Energy 2025, 341, 139337. [Google Scholar] [CrossRef]

- Ning, C.; Ma, A.; Dong, Z. Data-driven multi-stage distributionally robust scheduling for coupled electricity-hydrogen-refinery systems. Appl. Energy 2025, 401, 126620. [Google Scholar] [CrossRef]

- Haviv, I.; Rabinovich, D. A near-optimal kernel for a coloring problem. Discret. Appl. Math. 2025, 377, 66–73. [Google Scholar] [CrossRef]

- Ariyanto, A.Y.; Kurdhi, N.A. A whale optimization algorithm approach to the heterogeneous electric vehicle routing problem. AIP Conf. Proc. 2025, 3446, 020035. [Google Scholar]

- Ansari, M.; Khamooshi, M.; Toyserkani, E. Adaptive model-based optimization for fusion-based metal additive manufacturing (directed energy deposition). J. Manuf. Process. 2023, 108, 588–595. [Google Scholar] [CrossRef]

- Mirzaee, H.; Kamrava, S. Estimation of internal states in a Li-ion battery using BiLSTM with Bayesian hyperparameter optimization. J. Energy Storage 2023, 74, 109522. [Google Scholar] [CrossRef]

- Ding, X.; Wang, Y.; Guo, P.; Sun, W.; Harrison, G.P.; Lv, X.; Weng, Y. A novel physical and data-driven optimization methodology for designing a renewable energy, power to gas and solid oxide fuel cell system based on ensemble learning algorithm. Energy 2024, 313, 134002. [Google Scholar] [CrossRef]

- Kasterke, M.; Kaufmann, L.; Kateri, M.; Brands, T. An expectation—Maximization algorithm for spectral reconstruction under the spectral hard model. Chemom. Intell. Lab. Syst. 2025, 267, 105518. [Google Scholar] [CrossRef]

- Dey, B.; Zhao, D.; Andrews, B.H.; Newman, J.A.; Izbicki, R.; Lee, A.B. Towards Instance-Wise Calibration: Local Amortized Diagnostics and Reshaping of Conditional Densities (LADaR). arXiv 2025, arXiv:2205.14568. [Google Scholar] [CrossRef]

- Xue, Z.; Peng, W.; Zhang, J.; Chen, R. Two-echelon optimization framework for semi-autonomous truck platooning in container drayage. Comput. Oper. Res. 2026, 188, 107343. [Google Scholar] [CrossRef]

- Li, Z.; Zhang, S.; Yang, X. Evaluation and development of Nusselt number and friction factor correlations for airfoil-fin printed circuit heat exchangers. Int. J. Heat Mass Transf. 2025, 253, 127512. [Google Scholar] [CrossRef]

- Roudbari, N.; Firouzjah, K.G.; Ghasemi, J. Scenario-based sizing and siting of battery swapping stations for electric buses using realistic demand modeling on distribution network. Energy 2025, 341, 139378. [Google Scholar] [CrossRef]

- Sarker, P.; Choi, K.; Nahid, A.A.; Samad, M.A. CatBoost with physics-based metaheuristics for thyroid cancer recurrence prediction. BioData Min. 2025, 18, 84. [Google Scholar] [CrossRef]

- Rickett, C.; Sukumar, S.R.; West, K. Search and Query Framework for Workflows with HPC and AI Models. In Proceedings of the Cray User Group; ACM: New York, NY, USA, 2025; pp. 59–68. [Google Scholar] [CrossRef]

| ML Metamodel | Learning Paradigm | Strengths for SBO | Limitations | Applications |
|---|---|---|---|---|
| Gaussian Processes (Kriging) | Probabilistic/Bayesian | Provides predictive uncertainty; highly effective for small datasets | Computationally expensive for large datasets (O(n³)) | Aerospace design, expensive black-box tuning |
| Support Vector Regression (SVR) | Supervised Learning | Robust in high-dimensional spaces; guarantees global optimum for its loss function | Kernel selection and hyperparameter tuning can be complex | Electronics and telecommunications optimization |
| Random Forest (RF) | Ensemble (Bagging) | Handles nonlinearities and mixed variable types well; robust to outliers | Poor extrapolation outside the training data domain | Supply chain, logistics, combinatorial problems |
| Gradient Boosting (XGBoost/LightGBM) | Ensemble (Boosting) | Extremely high predictive accuracy; computationally efficient training | Risk of overfitting if hyperparameters are not carefully tuned | Industrial process optimization, energy systems |
| Deep Neural Networks (DNN) | Deep Learning | Unmatched capacity for modeling highly complex, nonlinear, large-scale systems | Requires large amounts of simulation data for effective training | Robotics, complex multi-physics simulations |
| Physics-Informed Neural Networks (PINNs) | Deep Learning + Physics | Embeds physical laws in the loss function, reducing data dependency | Complex to formulate and train; loss landscape optimization is challenging | Fluid dynamics, thermodynamics, structural mechanics |
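The Gaussian process row of the metamodel table above can be illustrated with a small sketch. It assumes a one-dimensional input, a squared-exponential kernel, and fixed hyperparameters; the names `gp_fit`, `gp_predict`, `length`, and `noise` are illustrative choices, not taken from the article. The Cholesky factorization is the step responsible for the O(n³) cost, and the returned standard deviation is the predictive uncertainty that makes Kriging attractive when few simulation runs are available.

```python
import math

def rbf(a, b, length=1.0):
    """Squared-exponential kernel between two scalars (assumed kernel choice)."""
    return math.exp(-0.5 * ((a - b) / length) ** 2)

def cholesky(A):
    """Lower-triangular Cholesky factor of a symmetric positive-definite matrix; O(n^3)."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                L[i][j] = math.sqrt(A[i][i] - s)
            else:
                L[i][j] = (A[i][j] - s) / L[j][j]
    return L

def solve_lower(L, b):
    """Forward substitution: solve L y = b."""
    y = []
    for i in range(len(b)):
        y.append((b[i] - sum(L[i][k] * y[k] for k in range(i))) / L[i][i])
    return y

def solve_lower_transposed(L, y):
    """Back substitution: solve L^T x = y."""
    n = len(y)
    x = [0.0] * n
    for i in reversed(range(n)):
        s = sum(L[k][i] * x[k] for k in range(i + 1, n))
        x[i] = (y[i] - s) / L[i][i]
    return x

def gp_fit(xs, ys, length=1.0, noise=1e-8):
    """Fit a zero-mean GP surrogate to simulation samples (xs, ys)."""
    K = [[rbf(a, b, length) + (noise if i == j else 0.0)
          for j, b in enumerate(xs)] for i, a in enumerate(xs)]
    L = cholesky(K)
    alpha = solve_lower_transposed(L, solve_lower(L, ys))
    return {"xs": list(xs), "L": L, "alpha": alpha, "length": length}

def gp_predict(model, x):
    """Return the predictive mean and standard deviation at x."""
    k = [rbf(x, xi, model["length"]) for xi in model["xs"]]
    mean = sum(ki * ai for ki, ai in zip(k, model["alpha"]))
    v = solve_lower(model["L"], k)
    var = max(rbf(x, x, model["length"]) - sum(vi * vi for vi in v), 0.0)
    return mean, math.sqrt(var)
```

Fitting the model on a handful of samples, for example `gp_fit([0.0, 1.0, 2.0, 3.0, 4.0], [math.sin(x) for x in (0.0, 1.0, 2.0, 3.0, 4.0)])`, and calling `gp_predict` near and far from the samples shows the standard deviation growing with distance from the data. A surrogate-based optimizer can use this signal to decide where to run the next expensive simulation.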

| Method | Best-Suited Problems | Strengths | Weaknesses | Typical Performance |
|---|---|---|---|---|
| GA | Combinatorial, scheduling, antenna design | Strong global exploration, flexible encoding | Slow convergence, parameter-sensitive | Good robustness, moderate speed |
| PSO | Continuous optimization, feature selection, production planning | Fast convergence, easy implementation | Premature convergence | High efficiency on smooth landscapes |
| ACO | Routing, multi-depot vehicle problems | Excellent for path construction | High computational cost on large graphs | High solution quality, slower runtime |
| DE | High-dimensional continuous optimization | Strong mutation-based exploration | Sensitive to control parameters | High accuracy, good scalability |
| SA | Maintenance optimization, traveling salesman | Can escape local minima, simple implementation | Slow for very large problems | Good for global search |
| TS | Scheduling, vehicle routing | Escapes local optima, flexible heuristics | Complex implementation | Good solution quality |
| Hybrid | Complex real-world systems, multi-objective optimization | Balanced exploration and exploitation | High complexity | Superior robustness |
| DA | Energy systems, WSN optimization | Good exploration-exploitation balance | Newer algorithm, parameter-sensitive | High solution quality |
| CS | Wireless sensor networks | Efficient search for global optimum | Sensitive to population size | Good convergence on multimodal problems |
| ABC | Wireless networks, adaptive search | Good exploration and adaptive mechanisms | Slower convergence in large-scale problems | Competitive accuracy |
| WOA | Electric vehicle routing | Global exploration, flexible application | Can converge prematurely | Effective for multi-depot VRP |
| HS | Healthcare system optimization | Simple, few parameters | Slow convergence for large-scale problems | Moderate efficiency |
| TLBO | Electronic engineering optimization | Simple implementation, no algorithm-specific parameters | Slower convergence for complex problems | Moderate solution quality |
| SSA | Multi-objective optimization | Good exploration-exploitation balance | Sensitive to parameters | Competitive performance |
| GWO | Attribute reduction | Effective multi-objective search | May converge prematurely | High-quality solutions |
| BBO | Engineering optimization | Strong global exploration | Parameter-sensitive | High solution quality |
| GSA | Optimization problems | Strong exploration capability | Parameter-sensitive | Competitive performance |
| BHA | Cloud workflow scheduling | Global search, simple mechanism | Can be trapped in local minima | Good solution quality |
| FGbSA | Distribution system reconfiguration | Efficient multi-objective search | New algorithm, parameter-sensitive | High-quality solutions |
| BSO | Large-scale constrained optimization | Cooperative co-evolution | Complex implementation | Good convergence |
| FPA | Engineering optimization | Improved convergence | Sensitive to parameters | Good performance |
| IWO | Aggregate production planning | Effective multi-objective optimization | Parameter-sensitive | High-quality solutions |
| SBO | Expensive simulations, high-dimensional problems | Reduces computational cost; enables rapid exploration; can be combined with metaheuristics | No guarantee of global optimality; surrogate selection is critical | Efficient for large-scale, computationally expensive problems; flexible for multi-objective and stochastic optimization |
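The SA row of the comparison table above notes that simulated annealing can escape local minima. The following minimal sketch shows the mechanism on a one-dimensional problem: a worse candidate is accepted with probability exp(-Δ/T), and the temperature T decreases geometrically. The function name, the geometric cooling schedule, and all default parameter values are assumptions chosen for illustration, not settings reported in the article.

```python
import math
import random

def simulated_annealing(f, x0, lo, hi, t0=1.0, cooling=0.995,
                        steps=5000, step_size=0.5, seed=0):
    """Minimize f on [lo, hi] starting from x0; returns (best_x, best_f)."""
    rng = random.Random(seed)          # seeded for reproducible runs
    x, fx = x0, f(x0)
    best_x, best_f = x, fx
    t = t0
    for _ in range(steps):
        # Propose a neighbor by a bounded random step, clipped to the search space.
        cand = min(hi, max(lo, x + rng.uniform(-step_size, step_size)))
        fc = f(cand)
        delta = fc - fx
        # Always accept improvements; accept deteriorations with probability exp(-delta / t).
        if delta <= 0 or rng.random() < math.exp(-delta / t):
            x, fx = cand, fc
            if fx < best_f:
                best_x, best_f = x, fx
        t *= cooling                   # geometric cooling (assumed schedule)
    return best_x, best_f

def multimodal(x):
    """Hypothetical test function with several local minima."""
    return x * x + 10.0 * math.sin(3.0 * x)
```

A call such as `simulated_annealing(multimodal, x0=4.0, lo=-5.0, hi=5.0)` starts in the basin of a local minimum. At high temperature, uphill moves are accepted often enough for the search to leave that basin, while the slow cooling explains the "slow for very large problems" entry in the table.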

| | Exact Optimization Methods | Approximate Optimization Methods | Simulation-Based Optimization |
|---|---|---|---|
| Principle | | | |
| Exhaustiveness | | | |
| Complexity | | | |
| Speed and applicability | | | |
| Performance | | | |
| Scalability | | | |
| Memory/Computational Resources | | | |
| Ease of Implementation | | | |
| Stopping Criteria | | | |
| Typical Solution Output | | | |
| Applications | | | |

| Research Domain | Publication Volume | Annual Growth | Dominant Disciplines | Journal Ratio | Epistemological Phase | Key Driver/Challenge |
|---|---|---|---|---|---|---|
| Evolutionary Algorithms | Massive (~140,000) | ≈−1.5% | CS 32.8% (≈4596), Eng 20.5% (≈2870), Math 18.2% (≈2548), Physics 3.6% (≈504), Biochemistry 3% (≈420) | High (52%) | Maturity | Standardization & Hybridization |
| Swarm Intelligence | Medium (~2600) | ≈−1.4% | CS 34.8% (≈904), Eng 24.1% (≈626), Math 15.3% (≈397), Physics 5% (≈130), Decision Sci 3% (≈78) | High (57%) | Consolidation | Protocol Unification & Large-Scale Benchmarking |
| Descent & Statistics | Low (~1000) | ≈−19% | CS 32.8% (≈342), Eng 18.8% (≈196), Math 17.4% (≈181), Physics 4.8% (≈50), Decision Sci 4.2% (≈44) | Very High (60%) | Theoretical Validation | Non-Convex Analysis & Hyperparameter Reduction |
| Tabu Search | High (~14,000) | ≈+8% | CS 30.1% (≈4064), Eng 23.7% (≈3200), Decision Sci 10% (≈1350), Business/Management 4.2% (≈567) | Very High (61%) | Integrated Component | Hybridization within High-Performance Solvers |
| Linear Programming | Massive (~193,000) | ≈+1.9% | CS 25.4% (≈49,100), Eng 24.9% (≈48,100), Math 16.3% (≈31,500), Energy 5% (≈9670) | Very High (63%) | Fundamental Bedrock | Big Data, MIP Solvers & AI Calibration |
| Physics-based (PINNs) | Emerging (~5500) | ≈+22% | Eng 26.2% (≈1437), CS 21.3% (≈1169), Math 9.5% (≈521), Physics/Astronomy 8.5% (≈467), Energy 6.5% (≈357) | Balanced (54%) | Paradigm Shift | Bridging “Black-Box” (Data) & “White-Box” (Laws) |

| Problem Characteristics | Recommended Approach | Rationale | Example Applications |
|---|---|---|---|
| Convex, continuous, well-structured | Linear programming or convex optimization | Global optimality guarantees and high efficiency | Portfolio optimization, production planning |
| Moderate-sized mixed problems | Mixed-integer programming | Exact methods suited to structured formulations | Supply chain and scheduling optimization |
| Large-scale combinatorial problems | Genetic Algorithms, PSO, ACO | Near-optimal solutions within reasonable computation time | Vehicle routing, task scheduling |
| Highly nonlinear non-convex problems | Metaheuristics or hybrid methods | Reduced risk of local minima trapping | Structural design optimization |
| Multi-objective optimization | Pareto-based algorithms (e.g., NSGA-II) | Explicit exploration of trade-offs | Energy system design, engineering design |
| Real-time constrained systems | Lightweight heuristics or local search | Low computational overhead | Robotics control, adaptive planning |
| Physics-governed systems | Physics-informed or hybrid approaches | Consistency with physical constraints | Fluid dynamics and structural simulations |
| Expensive simulator or black-box evaluation | Simulation-based optimization with surrogate models | Reduced number of costly simulations while preserving solution accuracy | Digital twins, aerospace design, energy systems optimization |
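The selection guide in the table above can also be read as a simple decision procedure. The sketch below encodes each row as a rule. The `Problem` fields, the `recommend` function, and especially the order in which the rules are checked are assumptions made for illustration, since the table itself does not rank its rows.

```python
from dataclasses import dataclass

@dataclass
class Problem:
    """Hypothetical problem descriptor; each flag mirrors one row of the table."""
    convex: bool = False
    mixed_integer: bool = False
    large_scale_combinatorial: bool = False
    multi_objective: bool = False
    real_time: bool = False
    physics_governed: bool = False
    expensive_evaluation: bool = False

def recommend(p: Problem) -> str:
    """Return the table's recommended approach for the first matching rule.

    The priority order (evaluation cost and time budget first, problem
    structure last) is an assumption, not part of the source table.
    """
    if p.expensive_evaluation:
        return "Simulation-based optimization with surrogate models"
    if p.real_time:
        return "Lightweight heuristics or local search"
    if p.multi_objective:
        return "Pareto-based algorithms (e.g., NSGA-II)"
    if p.physics_governed:
        return "Physics-informed or hybrid approaches"
    if p.large_scale_combinatorial:
        return "Genetic Algorithms, PSO, ACO"
    if p.mixed_integer:
        return "Mixed-integer programming"
    if p.convex:
        return "Linear programming or convex optimization"
    # Fallback row: highly nonlinear, non-convex problems.
    return "Metaheuristics or hybrid methods"
```

For instance, `recommend(Problem(convex=True))` returns the linear or convex programming row, while `recommend(Problem())` falls through to metaheuristics or hybrid methods. In practice several flags often hold at once, so a real selection would weigh the rows rather than stop at the first match.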

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Abouhssous, K.; Hasan, R.; Zugari, A.; Zakriti, A. Optimization Algorithms: Comprehensive Classification, Principles, and Scientometric Trends. Algorithms 2026, 19, 258. https://doi.org/10.3390/a19040258