Enhanced Facial Realism in Personalized Diffusion Models: A Memory-Optimized DreamBooth Implementation for Consumer Hardware

Abstract

1. Introduction

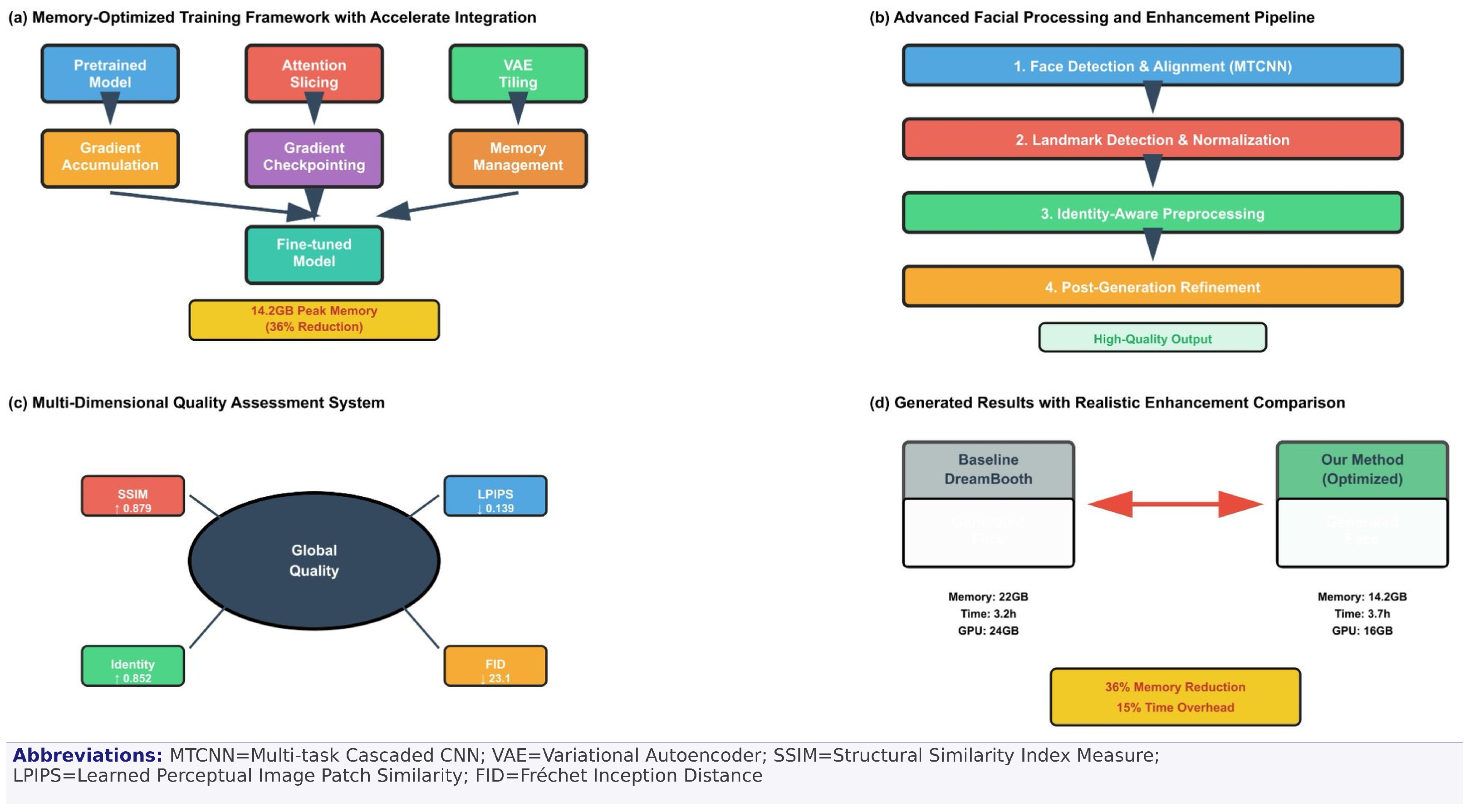

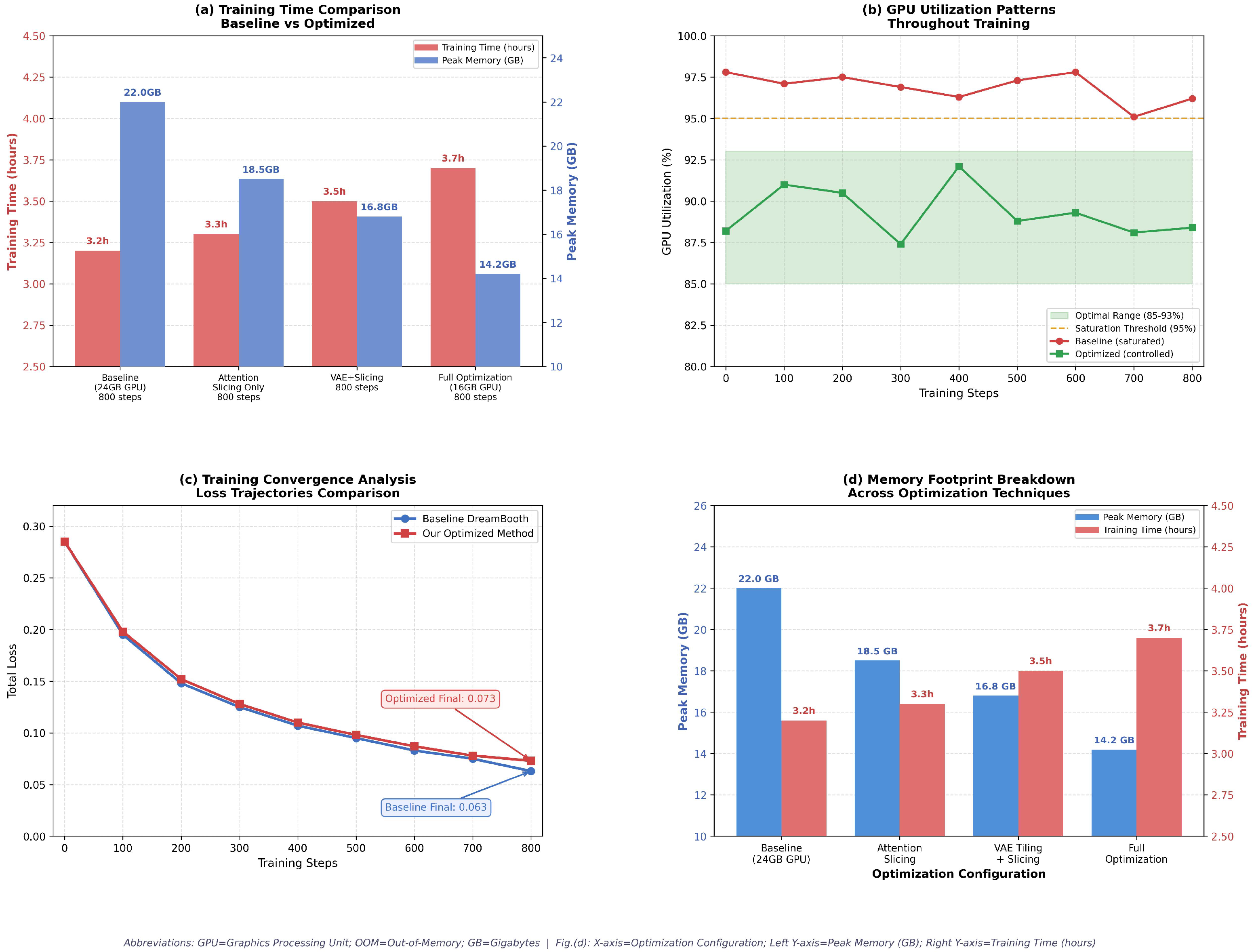

- Memory-Optimized Training Framework: A system integration that combines gradient accumulation, attention slicing, VAE tiling, and adaptive gradient checkpointing under hierarchical memory management, reducing peak GPU memory usage from 22 GB to 14.2 GB while maintaining training convergence. The novelty lies in the adaptive, co-optimized scheduling of these techniques within the Hugging Face Accelerate ecosystem, which has not previously been demonstrated systematically for personalized diffusion on consumer-grade hardware.

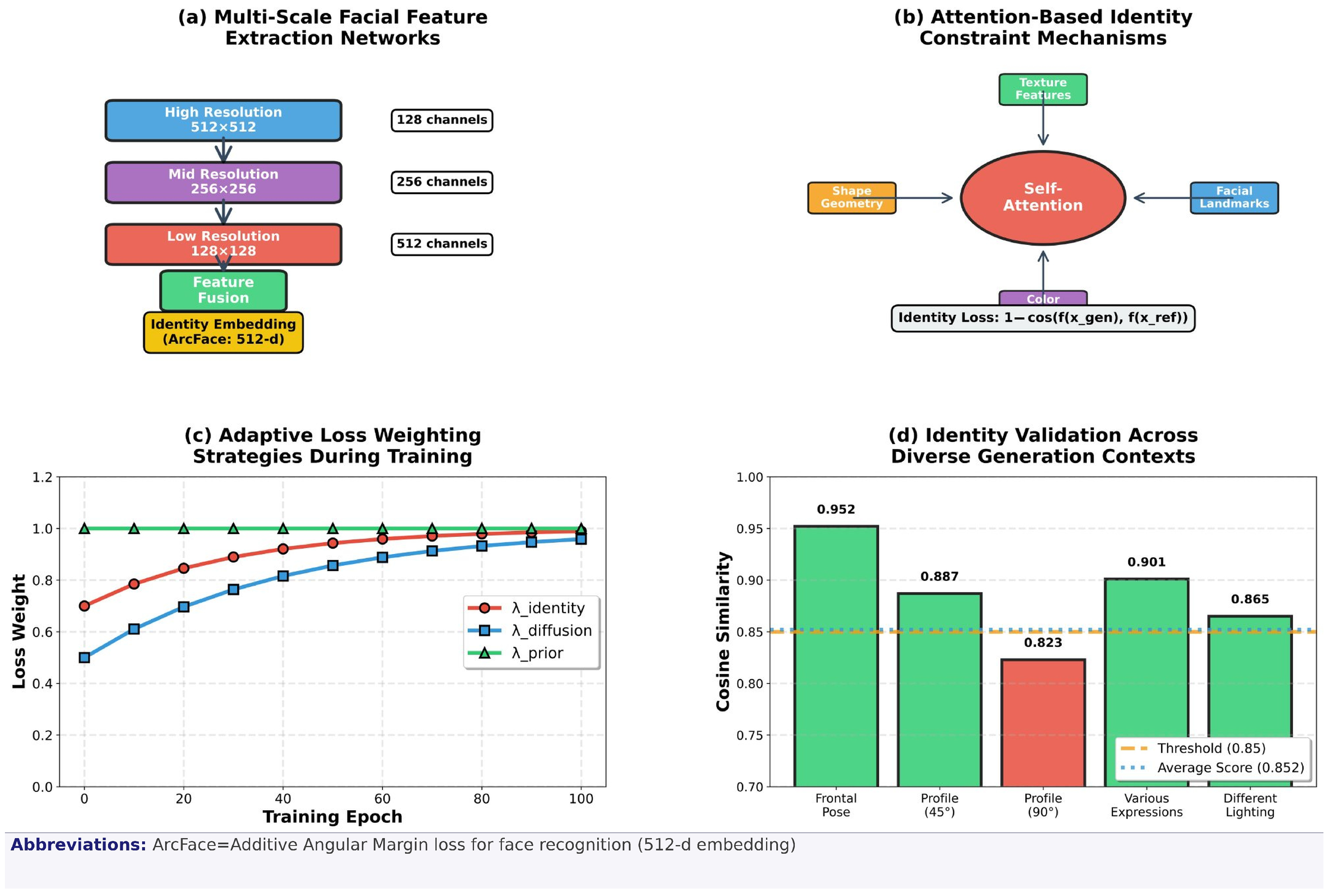

- Enhanced Identity Preservation: An advanced facial feature extraction and preservation mechanism that ensures consistent subject identity across diverse generation contexts through multi-scale facial encoding and constraint-based fine-tuning.

- Automated Quality Assessment System: A comprehensive multi-dimensional evaluation framework incorporating Learned Perceptual Image Patch Similarity (LPIPS), Structural Similarity Index Measure (SSIM), identity verification (cosine similarity), and photorealistic quality metrics for objective performance validation.

- Consumer Hardware Deployment: A complete end-to-end pipeline optimized for 16 GB consumer GPUs, demonstrating the practical feasibility of high-quality personalized generation on accessible hardware configurations.

- Ethical Framework: An explicit discussion of potential misuse scenarios, deepfake risks, and privacy implications, alongside proposed mitigation measures and an ethics statement for responsible deployment.
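Gradient accumulation, the first technique named in the memory-optimized training contribution, can be illustrated with a toy example. The sketch below is not the authors' training code: it uses a hypothetical one-parameter regression and hypothetical data to show that averaging gradients over equal-sized micro-batches gives the same gradient as one full batch, while only one micro-batch needs to be processed at a time.

```python
# Toy illustration (hypothetical model and data, not the paper's pipeline):
# gradient accumulation over equal-sized micro-batches reproduces the
# full-batch gradient.

def mse_grad(w, batch):
    """Gradient of the mean squared error of y ~ w * x over one batch."""
    return sum(2.0 * (w * x - y) * x for x, y in batch) / len(batch)

def accumulated_grad(w, data, micro_batch_size):
    """Accumulate micro-batch gradients, each scaled by 1 / number of micro-batches."""
    chunks = [data[i:i + micro_batch_size]
              for i in range(0, len(data), micro_batch_size)]
    total = 0.0
    for chunk in chunks:
        # Scaling each contribution plays the role of dividing the loss by
        # the number of accumulation steps before backpropagation.
        total += mse_grad(w, chunk) / len(chunks)
    return total

data = [(1.0, 2.0), (2.0, 4.1), (3.0, 5.9), (4.0, 8.2)]  # hypothetical (x, y) pairs
full_batch = mse_grad(0.5, data)
accumulated = accumulated_grad(0.5, data, micro_batch_size=1)
```

The equality is exact only when every micro-batch has the same size; with a ragged final micro-batch the contributions would need to be weighted by batch size.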

2. Related Work

2.1. Personalized Diffusion Models and Computational Optimization

2.2. Memory Optimization and Hardware Efficiency

2.3. Facial Feature Preservation and Enhancement

2.4. Quality Assessment and Evaluation Frameworks

3. Methodology

3.1. Memory-Optimized Training Architecture
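Attention slicing is one of the memory techniques listed among the contributions. As a minimal sketch of the general idea, assuming slicing along the query axis (an assumption made for illustration; libraries may slice along a different axis, such as attention heads), the code below computes scaled dot-product attention in chunks and produces the same result as the unsliced computation. All function names and inputs are hypothetical.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over plain Python lists."""
    scale = 1.0 / math.sqrt(len(keys[0]))
    outputs = []
    for q in queries:
        weights = softmax([scale * sum(a * b for a, b in zip(q, k)) for k in keys])
        outputs.append([sum(w * v[d] for w, v in zip(weights, values))
                        for d in range(len(values[0]))])
    return outputs

def sliced_attention(queries, keys, values, slice_size):
    """Process queries in chunks of slice_size.

    In a tensor library the unsliced version would hold a
    len(queries) x len(keys) score matrix at once; slicing bounds the
    live scores to slice_size x len(keys).
    """
    outputs = []
    for start in range(0, len(queries), slice_size):
        outputs.extend(attention(queries[start:start + slice_size], keys, values))
    return outputs

# Hypothetical toy inputs
queries = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, -0.5]]
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
full = attention(queries, keys, values)
sliced = sliced_attention(queries, keys, values, slice_size=2)
```

Because each query row attends independently, slicing trades some throughput for a lower peak footprint without changing the output.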

3.2. Quality Assessment Framework
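The contribution list names identity verification by cosine similarity as one component of the evaluation framework. A minimal sketch of that single component follows, assuming face embeddings have already been extracted by some face-recognition encoder; the embedding values and function names are hypothetical and are not taken from the authors' implementation.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)

def mean_identity_score(reference, generated):
    """Average similarity between one reference embedding and each generated one."""
    return sum(cosine_similarity(reference, g) for g in generated) / len(generated)

# Hypothetical 4-dimensional embeddings; real face encoders use far more dimensions.
reference_embedding = [0.2, 0.7, 0.1, 0.6]
generated_embeddings = [[0.25, 0.65, 0.05, 0.62], [0.1, 0.8, 0.2, 0.5]]
score = mean_identity_score(reference_embedding, generated_embeddings)
```

Scores closer to 1 indicate that generated faces lie near the reference identity in embedding space; any acceptance threshold would depend on the encoder and is not specified here.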

3.3. Training Pipeline Implementation

Algorithm 1 Memory-optimized DreamBooth training

Require: Pretrained model M, training images (reference images per subject), prompts

Ensure: Fine-tuned personalized model

Algorithm 2 Quality-enhanced inference pipeline

Require: Fine-tuned model, generation prompt P, reference image

Ensure: Enhanced generated image

4. Experimental Results

4.1. Hardware Performance Validation

4.2. Comparative Analysis with State-of-the-Art

5. Discussion

Limitations and Future Work

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

1. Ruiz, N.; Li, Y.; Jampani, V.; Pritch, Y.; Rubinstein, M.; Aberman, K. DreamBooth: Fine tuning text-to-image diffusion models for subject-driven generation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Vancouver, BC, Canada, 17–24 June 2023; pp. 22500–22510.
2. Gal, R.; Alaluf, Y.; Atzmon, Y.; Patashnik, O.; Bermano, A.H.; Chechik, G.; Cohen-Or, D. An image is worth one word: Personalizing text-to-image generation using textual inversion. In Proceedings of the International Conference on Learning Representations, Kigali, Rwanda, 1–5 May 2023.
3. Kumari, N.; Zhang, B.; Zhang, R.; Shechtman, E.; Zhu, J.Y. Multi-concept customization of text-to-image diffusion. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Vancouver, BC, Canada, 17–24 June 2023; pp. 1931–1941.
4. Ye, H.; Zhang, J.; Liu, S.; Han, X.; Yang, W. IP-Adapter: Text compatible image prompt adapter for text-to-image diffusion models. arXiv 2023, arXiv:2308.06721.
5. Cao, M.; Wang, X.; Qi, Z.; Shan, Y.; Qie, X.; Zheng, Y. MasaCtrl: Tuning-free mutual self-attention control for consistent image synthesis and editing. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Paris, France, 2–6 October 2023; pp. 22560–22570.
6. Rombach, R.; Blattmann, A.; Lorenz, D.; Esser, P.; Ommer, B. High-resolution image synthesis with latent diffusion models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, New Orleans, LA, USA, 19–24 June 2022; pp. 10684–10695.
7. Ramesh, A.; Dhariwal, P.; Nichol, A.; Chu, C.; Chen, M. Hierarchical text-conditional image generation with CLIP latents. arXiv 2022, arXiv:2204.06125.
8. Wei, Y.; Zhang, Y.; Ji, Z.; Bai, J.; Zhang, L.; Zuo, W. ELITE: Encoding visual concepts into textual embeddings for customized text-to-image generation. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Paris, France, 2–6 October 2023; pp. 15943–15953.
9. Wei, Y.; Ji, Z.; Bai, J.; Zhang, H.; Zhang, L.; Zuo, W. Masterweaver: Taming editability and face identity for personalized text-to-image generation. In European Conference on Computer Vision; Springer: Cham, Switzerland, 2024; pp. 252–271.
10. Lin, J.; Wu, Y.; Wang, Z.; Liu, X.; Guo, Y. Pair-ID: A Dual Modal Framework for Identity Preserving Image Generation. IEEE Signal Process. Lett. 2024, 31, 2715–2719.
11. Xu, Y.; Zhang, C.; Zhai, B.; Du, S. HP3: Tuning-Free Head-Preserving Portrait Personalization Via 3D-Controlled Diffusion Models. IEEE Signal Process. Lett. 2025, 32, 1226–1230.
12. Ma, J.; Liang, J.; Chen, C.; Lu, H. Subject-diffusion: Open domain personalized text-to-image generation without test-time fine-tuning. In Proceedings of the ACM SIGGRAPH 2024 Conference, Denver, CO, USA, 27 July–1 August 2024; pp. 1–12.
13. Zhang, X.; Li, X.; Wang, T.; Yin, L. Enhancing Face Recognition in Low-Quality Images Based on Restoration and 3D Multiview Generation. In Proceedings of the 2024 IEEE International Joint Conference on Biometrics (IJCB), Buffalo, NY, USA, 15–18 September 2024; pp. 1–10.
14. He, M.; Clausen, P.; Taşel, A.L.; Ma, L.; Pilarski, O.; Xian, W.; Rikker, L.; Yu, X.; Burgert, R.; Yu, N.; et al. DifFRelight: Diffusion-Based Facial Performance Relighting. In Proceedings of the SIGGRAPH Asia 2024 Conference, Tokyo, Japan, 3–6 December 2024; pp. 1–12.
15. Yang, H.; Xu, X.; Xu, C.; Zhang, H.; Qin, J.; Wang, Y.; Heng, P.A.; He, S. G2Face: High-Fidelity Reversible Face Anonymization via Generative and Geometric Priors. IEEE Trans. Inf. Forensics Secur. 2024, 19, 8773–8785.
16. Grosz, S.A.; Jain, A.K. Genpalm: Contactless palmprint generation with diffusion models. In Proceedings of the 2024 IEEE International Joint Conference on Biometrics (IJCB), Buffalo, NY, USA, 15–18 September 2024; pp. 1–9.
17. Alimisis, P.; Mademlis, I.; Radoglou-Grammatikis, P.; Sarigiannidis, P.; Papadopoulos, G.T. Advances in diffusion models for image data augmentation: A review of methods, models, evaluation metrics and future research directions. Artif. Intell. Rev. 2025, 58, 112.
18. Wang, W.; Mu, M.; Tian, Y.; Hu, Y.; Lu, X. ILSR-Diff: Joint face illumination normalization and super-resolution via diffusion models. Multimed. Syst. 2024, 30, 302.
19. Guerrero-Viu, J.; Hasan, M.; Roullier, A.; Harikumar, M.; Hu, Y.; Guerrero, P.; Gutierrez, D.; Masia, B.; Deschaintre, V. Texsliders: Diffusion-based texture editing in clip space. In Proceedings of the ACM SIGGRAPH 2024 Conference, Denver, CO, USA, 27 July–1 August 2024; pp. 1–11.
20. Zhao, G.; Xu, J.; Wang, X.; Yan, F.; Qiu, S. PSAIP: Prior Structure-Assisted Identity-Preserving Network for Face Animation. Electronics 2025, 14, 784.
21. Wang, H.; Jia, X.; Cao, X. EAT-Face: Emotion-Controllable Audio-Driven Talking Face Generation via Diffusion Model. In Proceedings of the 2024 IEEE 18th International Conference on Automatic Face and Gesture Recognition (FG), Istanbul, Turkey, 27–31 May 2024; pp. 1–10.
22. Baltsou, G.; Sarridis, I.; Koutlis, C.; Papadopoulos, S. Designing and Generating Diverse, Equitable Face Image Datasets for Face Verification Tasks. arXiv 2025, arXiv:2511.17393.
23. Liao, F.; Zou, X.; Wong, W. Appearance and pose-guided human generation: A survey. ACM Comput. Surv. 2024, 56, 129.
24. Melnik, A.; Miasayedzenkau, M.; Makaravets, D.; Pirshtuk, D.; Akbulut, E.; Holzmann, D.; Renusch, T.; Reichert, G.; Ritter, H. Face generation and editing with stylegan: A survey. IEEE Trans. Pattern Anal. Mach. Intell. 2024, 46, 3557–3576.
25. Xiu, Y.; Ye, Y.; Liu, Z.; Tzionas, D.; Black, M.J. Puzzleavatar: Assembling 3d avatars from personal albums. ACM Trans. Graph. 2024, 43, 283.
26. Zhu, X.; Zhou, J.; You, L.; Yang, X.; Chang, J.; Zhang, J.J.; Zeng, D. DFIE3D: 3D-aware disentangled face inversion and editing via facial-contrastive learning. IEEE Trans. Circuits Syst. Video Technol. 2024, 34, 8310–8326.
27. Sii, J.W.; Chan, C.S. Gorgeous: Creating narrative-driven makeup ideas via image prompts. Multimed. Tools Appl. 2025, 84, 43805–43826.
28. Xu, C.; Qian, Y.; Zhu, S.; Sun, B.; Zhao, J.; Liu, Y.; Li, X. UniFace++: Revisiting a Unified Framework for Face Reenactment and Swapping via 3D Priors. Int. J. Comput. Vis. 2025, 133, 4538–4554.
29. Chen, W.; Zhu, B.; Xu, K.; Dou, Y.; Feng, D. VoiceStyle: Voice-based Face Generation Via Cross-modal Prototype Contrastive Learning. ACM Trans. Multimed. Comput. Commun. Appl. 2024, 20, 279.
30. Xiong, L.; Cheng, X.; Tan, J.; Wu, X.; Li, X.; Zhu, L.; Ma, F.; Li, M.; Xu, H.; Hu, Z. SegTalker: Segmentation-based Talking Face Generation with Mask-guided Local Editing. In Proceedings of the 32nd ACM International Conference on Multimedia, Melbourne, Australia, 28 October–1 November 2024; pp. 3170–3179.
31. Asperti, A.; Colasuonno, G.; Guerra, A. Illumination and Shadows in Head Rotation: Experiments with Denoising Diffusion Models. Electronics 2024, 13, 3091.
32. Tai, Y.; Yang, K.; Peng, T.; Huang, Z.; Zhang, Z. Defect Image Sample Generation With Diffusion Prior for Steel Surface Defect Recognition. IEEE Trans. Autom. Sci. Eng. 2024, 22, 8239–8251.
33. Yan, W.; Shao, W.; Zhang, D.; Xiao, L. FaceGCN: Structured Priors Inspired Graph Convolutional Networks for Blind Face Restoration. IEEE Trans. Circuits Syst. Video Technol. 2025, 35, 6214–6230.
34. Xue, H.; Zhang, Z.; Li, M.; Dai, Z.; Wu, Z. Identity-Preserving Audio-Driven Holistic Human Motion Video Generation. In Proceedings of the ICASSP 2025 - 2025 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Hyderabad, India, 6–11 April 2025; pp. 1–5.
35. Dhanyalakshmi, R.; Stoian, G.; Danciulescu, D.; Hemanth, D.J. A Survey on Face-Swapping Methods for Identity Manipulation in Deepfake Applications. IET Image Process. 2025, 19, e70132.
36. Liu, T.; Chen, F.; Fan, S.; Du, C.; Chen, Q.; Chen, X.; Yu, K. Anitalker: Animate vivid and diverse talking faces through identity-decoupled facial motion encoding. In Proceedings of the 32nd ACM International Conference on Multimedia, Melbourne, Australia, 28 October–1 November 2024; pp. 6696–6705.
37. Yu, H.; Qu, Z.; Yu, Q.; Chen, J.; Jiang, Z.; Chen, Z.; Zhang, S.; Xu, J.; Wu, F.; Lv, C.; et al. Gaussiantalker: Speaker-specific talking head synthesis via 3d gaussian splatting. In Proceedings of the 32nd ACM International Conference on Multimedia, Melbourne, Australia, 28 October–1 November 2024; pp. 3548–3557.
38. Back, S.Y.; Son, G.; Jeong, D.; Park, E.; Woo, S.S. Preserving Old Memories in Vivid Detail: Human-Interactive Photo Restoration Framework. In Proceedings of the 33rd ACM International Conference on Information and Knowledge Management, Boise, ID, USA, 21–25 October 2024; pp. 5180–5184.
39. Xiao, G.; Yin, T.; Freeman, W.T.; Durand, F.; Han, S. Fastcomposer: Tuning-free multi-subject image generation with localized attention. Int. J. Comput. Vis. 2024, 133, 1175–1194.
40. Khan, W.; Topham, L.; Khayam, U.; Ortega-Martorell, S.; Heather, P.; Ansell, D.; Al-Jumeily, D.; Hussain, A. Person de-Identification: A Comprehensive Review of Methods, Datasets, Applications, and Ethical Aspects Along-With New Dimensions. IEEE Trans. Biom. Behav. Identity Sci. 2024, 7, 293–312.
41. Pham, K.T.; Chen, J.; Chen, Q. TALE: Training-free Cross-domain Image Composition via Adaptive Latent Manipulation and Energy-guided Optimization. In Proceedings of the 32nd ACM International Conference on Multimedia, Melbourne, Australia, 28 October–1 November 2024; pp. 3160–3169.

| Method | LPIPS ↓ | SSIM ↑ | Identity ↑ | FID ↓ | Peak Memory | GPU VRAM |
|---|---|---|---|---|---|---|
| DreamBooth [1] | 0.142 | 0.876 | 0.847 | 24.3 | 22 GB | 24 GB |
| MasterWeaver [9] | 0.138 | 0.882 | 0.856 | 22.8 | 20 GB | 24 GB |
| Subject-Diffusion [12] | 0.145 | 0.871 | 0.841 | 25.7 | 18 GB | 24 GB |
| HP3 [11] | 0.151 | 0.865 | 0.838 | 26.4 | 16 GB | 16 GB |
| FastComposer [39] | 0.148 | 0.863 | 0.831 | 27.2 | 16 GB | 16 GB |
| IP-Adapter [4] | 0.144 | 0.869 | 0.844 | 25.1 | 18 GB | 24 GB |
| Ours | 0.139 | 0.879 | 0.852 | 23.1 | 14.2 GB | 16 GB |

| Method | Training Time | Inference Time | Peak Memory | Convergence Steps |
|---|---|---|---|---|
| DreamBooth [1] | 3.2 h | 3.8 s | 22 GB | 800 |
| MasterWeaver [9] | 4.1 h | 4.2 s | 20 GB | 1000 |
| Subject-Diffusion [12] | 2.8 h | 3.5 s | 18 GB | 600 |
| HP3 [11] | N/A (test-time) | 8.2 s | 16 GB | N/A |
| Ours | 3.7 h | 4.2 s | 14.2 GB | 800 |
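The trade-off reported in the efficiency table can be made explicit with a short calculation. The sketch below copies the DreamBooth [1] and "Ours" rows from the table and computes the relative change in each quantity; the dictionary keys and helper name are chosen here for illustration only.

```python
# Values copied from the efficiency table above (DreamBooth [1] vs. Ours).
baseline = {"training_h": 3.2, "inference_s": 3.8, "peak_memory_gb": 22.0}
ours = {"training_h": 3.7, "inference_s": 4.2, "peak_memory_gb": 14.2}

def relative_change_percent(new, old):
    """Signed percentage change of new relative to old."""
    return 100.0 * (new - old) / old

changes = {
    key: round(relative_change_percent(ours[key], baseline[key]), 1)
    for key in baseline
}
for key, value in changes.items():
    print(f"{key}: {value:+.1f}%")
```

On these figures, peak memory falls by roughly 35% relative to the DreamBooth baseline, at the cost of about 16% longer training and about 11% slower inference, with the same number of convergence steps.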


© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Gupta, S.; Ray, K.; Kaiser, S.; Hossain, S.; Faubert, J. Enhanced Facial Realism in Personalized Diffusion Models: A Memory-Optimized DreamBooth Implementation for Consumer Hardware. Algorithms 2026, 19, 257. https://doi.org/10.3390/a19040257
