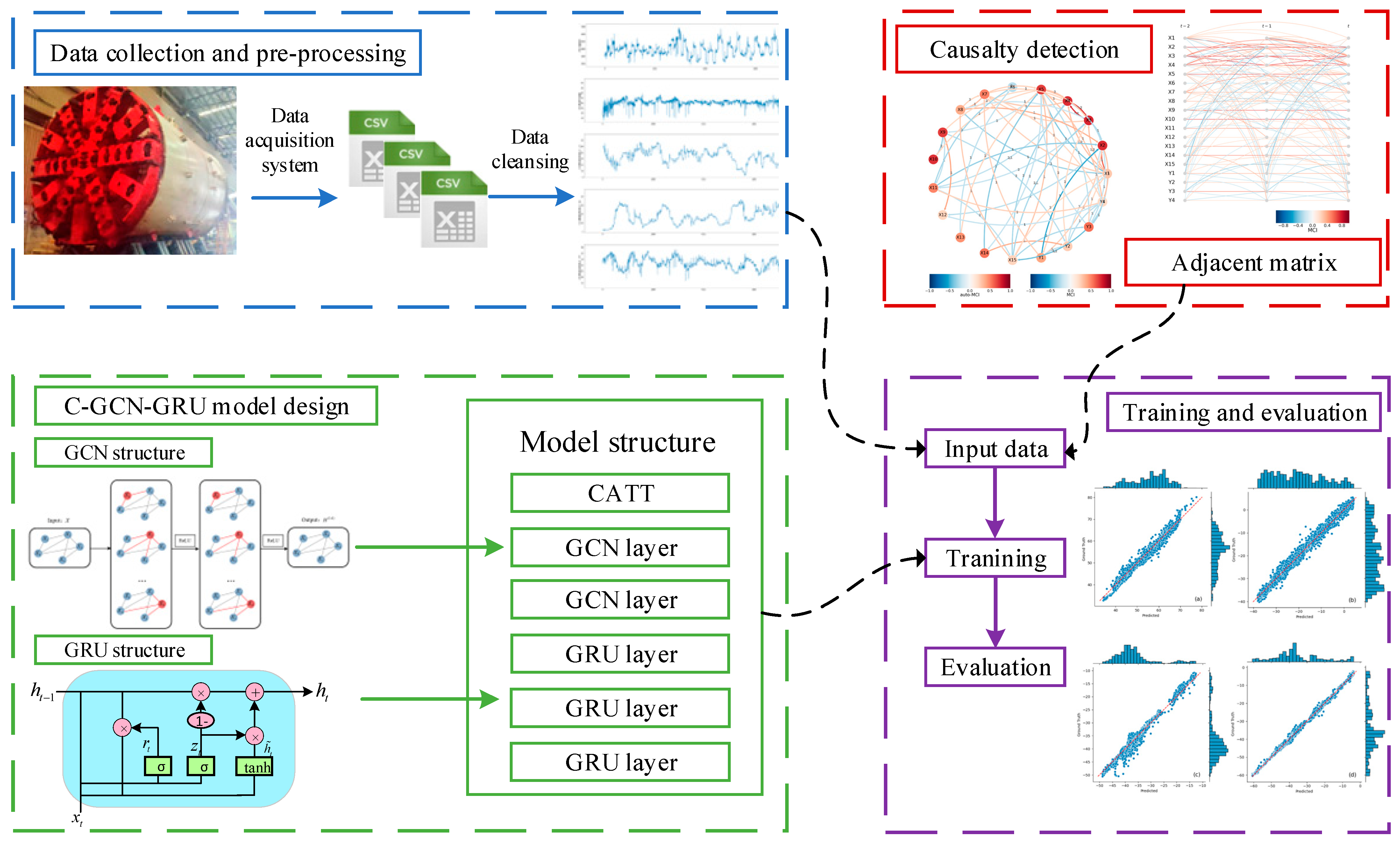

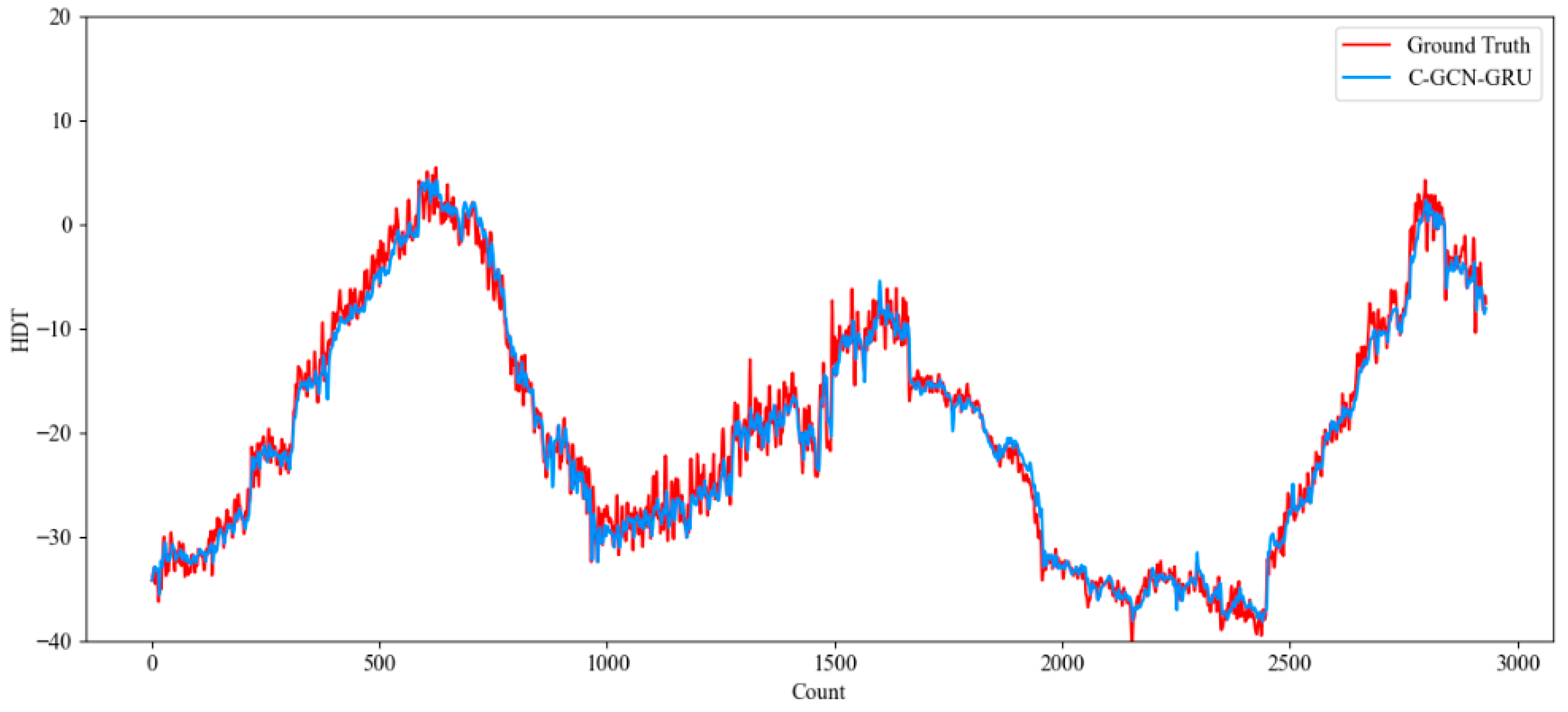

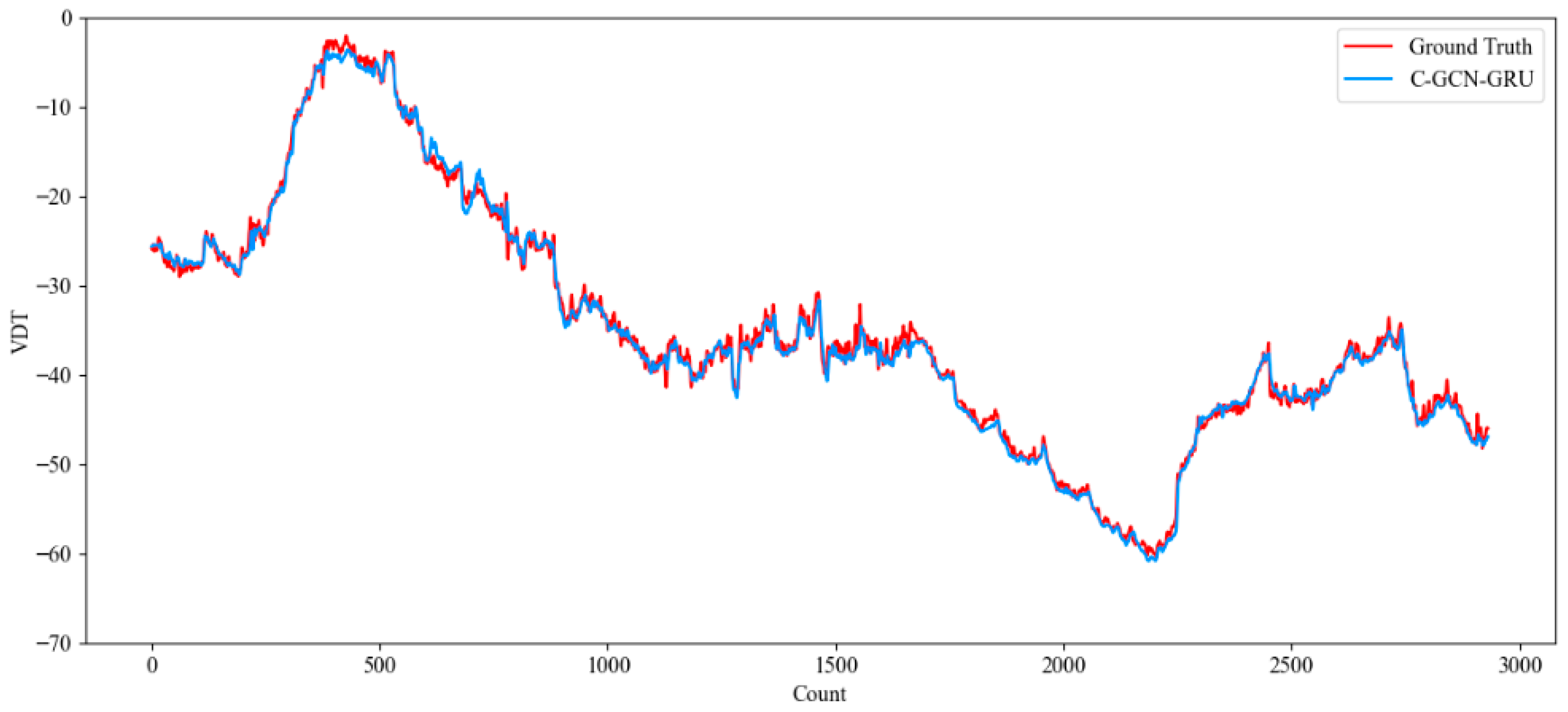

To optimize the operating parameters of the shield machine and control the trajectory deviation, a model is needed that captures the nonlinear relationship between the input parameters and the trajectory deviation. In this paper, we propose incorporating a causally extracted adjacency matrix into a GCN-GRU deep learning model with a multi-head causal attention mechanism. This section introduces the GCN and GRU structures, the multi-head causal attention mechanism, and the training and evaluation process of the model in detail.

3.2.1. GCN-GRU Mechanism

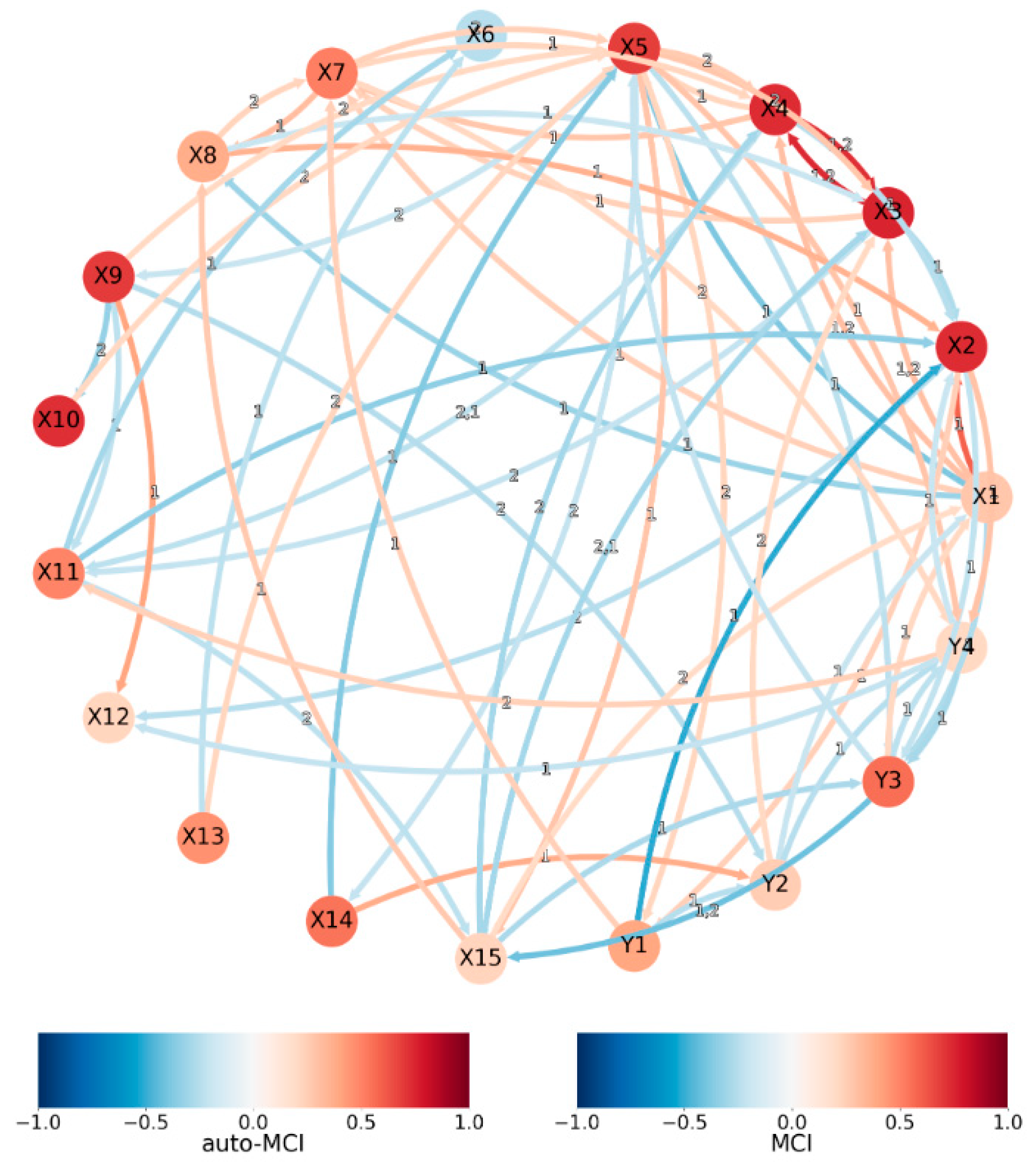

During the shield machine tunneling process, there are complex interactions between the attitude parameters, which contain not only dependencies in the time dimension but also spatial correlations between the parameters. Therefore, we propose to combine the adjacency matrix established by causality with GCN to specifically capture the spatial dependencies among shield machine parameters. Unlike traditional adjacency matrices constructed based on correlation coefficients or expert experience, our adjacency matrix is constructed by causal relationships identified from actual construction data through the PCMCI+ method, which can more accurately reflect the physical influence mechanisms among parameters.
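As a minimal sketch of how a list of directed causal links can be turned into an adjacency matrix, consider the snippet below. The parameter names, the link strengths, and the convention that row `i` collects the causes of parameter `i` are all assumptions made for illustration; the triples are hand-written toy values and are not PCMCI+ output from the project data.

```python
import numpy as np

# Hypothetical parameter names and link strengths, for illustration only.
params = ["thrust", "torque", "advance_rate", "pitch"]
# (cause, effect, strength) triples, written by hand for this sketch.
links = [
    ("thrust", "advance_rate", 0.6),
    ("torque", "advance_rate", 0.3),
    ("advance_rate", "pitch", 0.4),
]

def build_causal_adjacency(params, links, threshold=0.0):
    """Return A with A[i, j] = |strength| of the link cause j -> effect i."""
    index = {name: k for k, name in enumerate(params)}
    A = np.zeros((len(params), len(params)))
    for cause, effect, strength in links:
        if abs(strength) > threshold:
            A[index[effect], index[cause]] = abs(strength)
    return A

A = build_causal_adjacency(params, links)
```

Unlike a correlation-based matrix, the result is generally not symmetric, because a link from cause to effect does not imply the reverse link.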

The main idea of GCN is to extract features from graph data by aggregating the information of each node and its neighboring nodes through the connections between nodes in the graph structure. The hidden state of network layer $l+1$ can be expressed as in Equation (3). The schematic diagram of the GCN structure is shown in Figure 3.

$$H^{(l+1)} = f\left(H^{(l)}, A\right) \tag{3}$$

where $H^{(l)}$ is the input of the $l$-th layer ($H^{(0)} = X$ is the initial input data), and $A$ is the adjacency matrix. The models differ mainly in the choice of the propagation function $f$. A basic forward propagation rule is given in Equation (4).

$$f\left(H^{(l)}, A\right) = \sigma\left(A H^{(l)} W^{(l)}\right) \tag{4}$$

where $W^{(l)}$ is the trainable weight matrix of layer $l$, and $\sigma$ is a nonlinear activation function. Multiplication by $A$ in the above equation sums the feature vectors of all neighboring nodes of the target node but excludes the target node itself; adding the unit matrix to $A$ solves this problem. In addition, normalizing the adjacency matrix by the diagonal, positive definite degree matrix $\tilde{D}$ keeps the scale of the aggregated features stable and helps the algorithm proceed smoothly. Therefore, the forward propagation equation can be designed as shown in Equation (5).

$$H^{(l+1)} = \sigma\left(\tilde{D}^{-\frac{1}{2}} \tilde{A} \tilde{D}^{-\frac{1}{2}} H^{(l)} W^{(l)}\right) \tag{5}$$

where $\tilde{A} = A + I$, $I$ is the unit matrix, and $\tilde{D}$ is the degree matrix of $\tilde{A}$. The resulting node embeddings can be fed into any loss function, and the weight parameters are adjusted using stochastic gradient descent and backpropagation.
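The propagation rule referred to as Equation (5) can be sketched in a few lines of NumPy. The sketch assumes the standard symmetric normalization with self-loops and a ReLU activation; the toy graph, feature sizes, and random weights are illustrative assumptions rather than the settings used in this study.

```python
import numpy as np

def normalize_adjacency(A):
    """Compute D^{-1/2} (A + I) D^{-1/2}, with D the degree matrix of A + I."""
    A_tilde = A + np.eye(A.shape[0])     # add self-loops
    deg = A_tilde.sum(axis=1)            # node degrees (>= 1 for non-negative A)
    d_inv_sqrt = 1.0 / np.sqrt(deg)
    return A_tilde * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]

def gcn_layer(H, A_hat, W):
    """One propagation step: ReLU(A_hat @ H @ W)."""
    return np.maximum(A_hat @ H @ W, 0.0)

rng = np.random.default_rng(0)
A = np.array([[0, 1, 0],
              [1, 0, 1],
              [0, 1, 0]], dtype=float)   # toy 3-node chain graph
H0 = rng.normal(size=(3, 4))             # 3 nodes, 4 input features
W0 = rng.normal(size=(4, 2))             # maps 4 features to 2
A_hat = normalize_adjacency(A)
H1 = gcn_layer(H0, A_hat, W0)
```

Because of the self-loops, each row of `H1` mixes a node's own features with those of its neighbors, and the normalization keeps high-degree nodes from dominating the sum.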

The tunneling parameters of the shield machine show obvious time-series characteristics, and the historical trends of the parameters have an important influence on the future attitude. In our analysis of the test data from the Karnaphuli River Tunnel Project, we observed complex nonlinear time-series dependencies among the shield machine parameters, which varied with geological conditions. Therefore, we need a model structure that can effectively capture long-term time-series dependence and is computationally efficient.

Many studies have highlighted not only the causal relationship between TBM parameters but also their temporal dependency. In time-series prediction tasks, a commonly employed deep learning approach is the Recurrent Neural Network (RNN), which is particularly effective for handling sequential dependencies in data. GRU is a computationally efficient variant of LSTM that addresses the gradient vanishing problem of standard RNN through update and reset gating mechanisms, and has demonstrated strong performance in TBM parameter prediction tasks [25] (Gao et al. 2019). This study employs the GRU as the temporal modeling component, the structure of which is depicted in Figure 4.

The GRU regulates information flow through two gating mechanisms: the reset gate controls how much of the previous hidden state is combined with the current input, while the update gate determines how much prior memory is retained. Each time step produces a hidden state that is passed to subsequent steps. The specific formulas of the GRU are shown in Equations (6)–(10).

Here, the output hidden state of the GRU is used as the input, and a randomly initialized weight is generated. The attention weights for each modality are obtained by multiplying the hidden state by this weight, adding a bias, and applying a further operation to the sum.
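The gating behavior described above can be illustrated with a single GRU step written in NumPy. This is a sketch of the standard GRU cell, not necessarily the exact form of Equations (6)–(10); the parameter names, the toy sizes, and the convention that the update gate weights the previous hidden state are assumptions.

```python
import numpy as np

def sigmoid(a):
    return 1.0 / (1.0 + np.exp(-a))

def gru_step(x, h_prev, p):
    """One GRU step; p holds weight matrices W_*, U_* and biases b_*."""
    r = sigmoid(p["W_r"] @ x + p["U_r"] @ h_prev + p["b_r"])   # reset gate
    z = sigmoid(p["W_z"] @ x + p["U_z"] @ h_prev + p["b_z"])   # update gate
    # Candidate state: the reset gate scales the previous hidden state.
    h_cand = np.tanh(p["W_h"] @ x + p["U_h"] @ (r * h_prev) + p["b_h"])
    # Assumed convention: z is the share of prior memory that is retained.
    return z * h_prev + (1.0 - z) * h_cand

def init_params(input_size, hidden_size, rng):
    p = {}
    for g in ("r", "z", "h"):
        p[f"W_{g}"] = rng.normal(scale=0.1, size=(hidden_size, input_size))
        p[f"U_{g}"] = rng.normal(scale=0.1, size=(hidden_size, hidden_size))
        p[f"b_{g}"] = np.zeros(hidden_size)
    return p

rng = np.random.default_rng(0)
params = init_params(input_size=5, hidden_size=8, rng=rng)
sequence = rng.normal(size=(30, 5))   # 30 time steps, 5 features (toy sizes)
h = np.zeros(8)
for x_t in sequence:
    h = gru_step(x_t, h, params)      # hidden state is passed to the next step
```

Since each new state is a convex combination of the previous state and a `tanh` output, the hidden state stays bounded, which is one reason gated cells are less prone to unstable dynamics than a plain RNN.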

3.2.2. Multiple Causal Attention Mechanisms

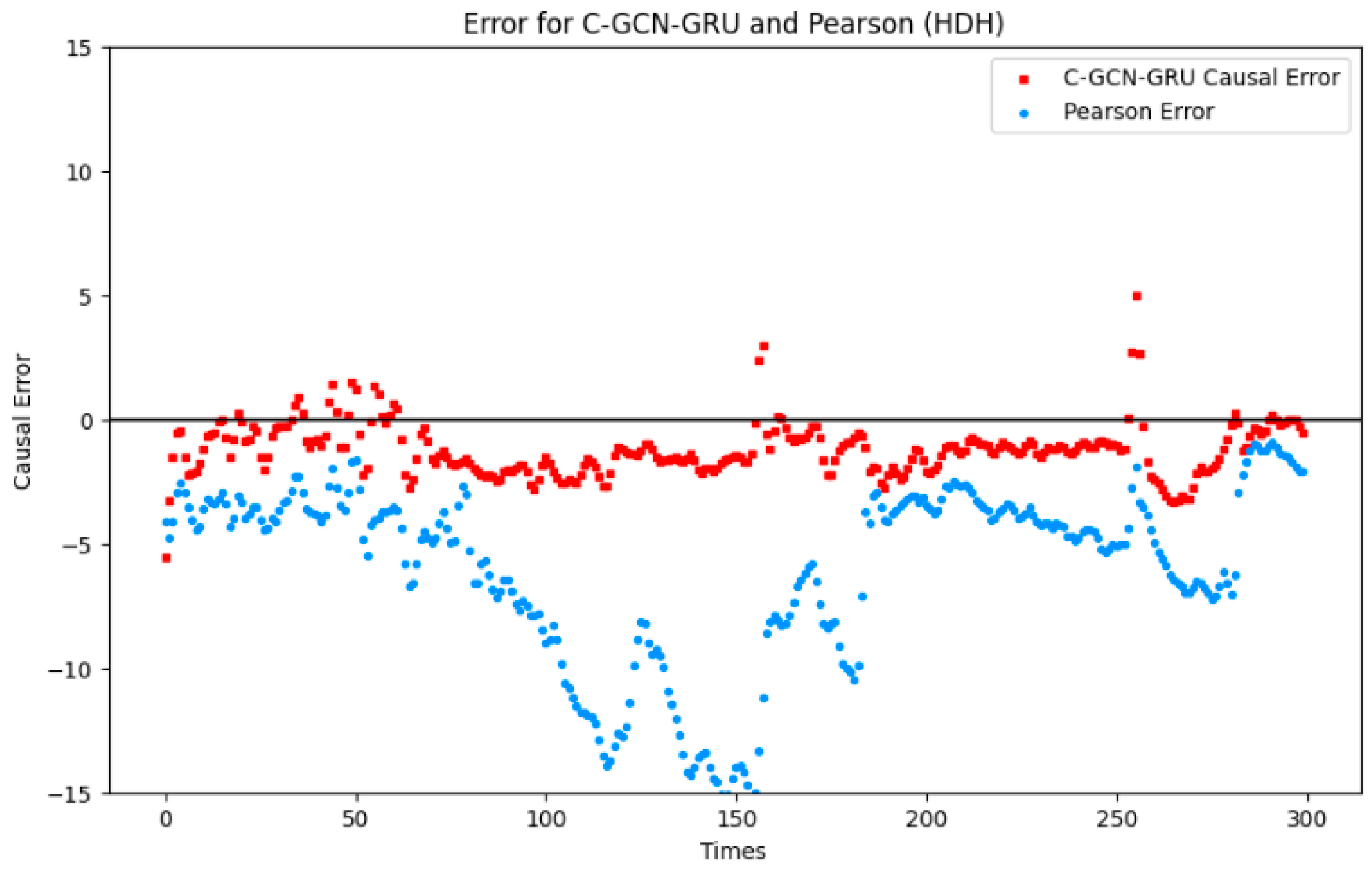

In the shield tunneling environment, the factors affecting the attitude of the shield machine are very complex, including changes in geological conditions, adjustments of operating parameters, and various unmeasured factors (e.g., groundwater and rock interfaces). These factors, as potential confounders, may cause the model to misidentify the relationship between certain parameters. For example, in our data analysis, we found that the correlation between cutter torque and thrust increased significantly when the shield traversed a water-bearing sand layer, but this correlation does not necessarily reflect a direct causal relationship between them; it may be due to the common influencing factor of groundwater pressure.

In the traditional self-attention mechanism, the attention weights are usually obtained by multiplying the query set (Query) with the key set (Key) and are then used to update the value set (Value). However, these weights are unsupervised, i.e., no weight labels are provided for the attention weights during training, which may lead to dataset bias. For example, if the training data contain many descriptions of “man riding a horse”, the self-attention mechanism will tend to associate “riding” with “man” and “horse”. In the testing stage, when encountering the scenario of “a person driving a horse and carriage”, the self-attention mechanism may again associate “person” with “horse” and infer “riding”, ignoring “carriage”.

The problem is essentially caused by confounding factors (a term from causal inference): a confounder can make X and Y statistically related even when there is no direct causal relationship between them. This can be explained by a causal structure diagram, as shown in Figure 5.

In the figure, X is the input picture, Y is the label, C denotes common knowledge (e.g., a person can ride a horse), which acts as the confounding factor, and M is the target in picture X. From the causal graph, we can see that there are two paths from X to Y: X→M→Y and X←C→M→Y (through the confounder). Therefore, no matter how large the dataset is, if we do not know the confounders, we can never identify the true causal effect by training the model with P(Y|X) alone.
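A small simulation makes the effect of a confounder concrete. The sketch below uses a simplified graph with only a common cause `c` driving both `x` and `y`; the variables, noise levels, and sample size are hypothetical. In the simulation `c` is observable, which is exactly what is not available in practice.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 5000
c = rng.normal(size=n)               # common cause (unobserved in practice)
x = c + 0.5 * rng.normal(size=n)     # driven by c only
y = c + 0.5 * rng.normal(size=n)     # driven by c only, no x -> y link

def residual(v, c):
    """Remove the least-squares linear effect of c from v."""
    vc, cc = v - v.mean(), c - c.mean()
    slope = np.dot(vc, cc) / np.dot(cc, cc)
    return vc - slope * cc

# Strong correlation although x has no causal effect on y.
raw_corr = np.corrcoef(x, y)[0, 1]
# Correlation after the influence of c is removed from both variables.
partial_corr = np.corrcoef(residual(x, c), residual(y, c))[0, 1]
```

Here `raw_corr` is large while `partial_corr` is close to zero, so a model trained only on the observed association between `x` and `y` would learn a dependency that disappears once the common cause is accounted for.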

To solve this problem, researchers at Nanyang Technological University and Monash University, Australia, jointly proposed Causal Attention (CATT), which uses the front-door criterion and does not require any assumed knowledge of the confounders [34]. In-sample attention (IS-ATT) and cross-sample attention (CS-ATT) are proposed to comply with the Q-K-V operation, and the parameters of the Q-K-V operation can also be shared between IS-ATT and CS-ATT to further improve the efficiency in certain architectures. The mathematical processes for in-sample attention, cross-sample attention, and the front-door criterion are shown in Equations (11)–(13).

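$$\hat{Z} = \sum_{z} P\left(Z = z \mid h(X)\right) \boldsymbol{z} \tag{11}$$

$$\hat{X} = \sum_{x} P\left(X = x \mid f(X)\right) \boldsymbol{x} \tag{12}$$

$$P\left(Y \mid do(X)\right) \approx \mathrm{Softmax}\left[g\left(\hat{Z}, \hat{X}\right)\right] \tag{13}$$

where $h(\cdot)$ and $f(\cdot)$ are both feature encoding functions, $g(\cdot)$ denotes the predictor, and $\boldsymbol{z}$ and $\boldsymbol{x}$ denote vectors.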

The structure of a single causal attention module, which comprises IS-ATT and CS-ATT, is shown in Figure 6. After calculating the IS-ATT output $\hat{Z}$ and the CS-ATT output $\hat{X}$, we can feed them into the predictor to make decisions or into further stacked attention layers for further embedding.

Correspondingly, the in-sample attention (IS-ATT) is computed as follows:

$$A_I = \mathrm{Softmax}\left(Q_I^{\top} K_I\right), \qquad \hat{Z} = V_I A_I$$

where $K_I$ and $V_I$ are both derived from the current input sample features, and $Q_I$ is derived from $h(X)$. In cross-modal attention, the query vectors represent the sentence context, whereas in the self-attention mechanism the query vectors also represent the input sample features. For $A_I$, each attention vector $a_I$ is an estimate of the IS-Sampling probability $P\left(Z = z \mid h(X)\right)$, and the output $\hat{Z}$ is the IS-Sampling evaluation vector.

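Similar to IS-ATT, the structure of cross-sample attention (CS-ATT) is shown in the red part of Figure 6, and it is computed as follows:

$$A_C = \mathrm{Softmax}\left(Q_C^{\top} K_C\right), \qquad \hat{X} = V_C A_C$$

where both $K_C$ and $V_C$ are derived from other samples in the training set, and $Q_C$ is derived from $f(X)$. Each attention vector $a_C$ of $A_C$ approximates $P\left(X = x \mid f(X)\right)$, and $\hat{X}$ is the CS-Sampling evaluation vector.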

Finally, each causal attention module produces one output from IS-ATT and one from CS-ATT, and these two outputs are concatenated to form the final value. In this study, the “other samples” in CS-ATT are drawn exclusively from within the same training mini-batch, ensuring that no validation or test information is accessible during the attention computation. Mini-batches are constructed solely from the training partition under the chronological data split, which prevents information leakage through the cross-sample attention mechanism.
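The combination of the two attention branches can be sketched as follows. This is an illustrative NumPy version under several assumptions: scaled dot-product attention is used, the key and value projections are shared between the two branches, the two query projections stand in for the encoding functions of the two branches, and all sizes and weights are toy values. The "other samples" are taken from the same mini-batch, as described above.

```python
import numpy as np

def softmax(a, axis=-1):
    a = a - a.max(axis=axis, keepdims=True)   # subtract max for stability
    e = np.exp(a)
    return e / e.sum(axis=axis, keepdims=True)

def attention(Q, K, V):
    """Scaled dot-product attention; each row of the weights sums to 1."""
    weights = softmax(Q @ K.T / np.sqrt(K.shape[-1]))
    return weights @ V

def causal_attention(sample, others, Wq_is, Wq_cs, Wk, Wv):
    """sample: (n, d) features of the current sample; others: (m, d) features
    taken from the other samples of the same training mini-batch."""
    z_hat = attention(sample @ Wq_is, sample @ Wk, sample @ Wv)   # IS-ATT
    x_hat = attention(sample @ Wq_cs, others @ Wk, others @ Wv)   # CS-ATT
    return np.concatenate([z_hat, x_hat], axis=-1)

rng = np.random.default_rng(0)
d = 16
batch = rng.normal(size=(4, 6, d))   # 4 samples, 6 nodes, d features (toy sizes)
Wq_is, Wq_cs, Wk, Wv = (rng.normal(scale=0.1, size=(d, d)) for _ in range(4))

i = 0   # index of the current sample within the mini-batch
others = np.concatenate([batch[j] for j in range(len(batch)) if j != i], axis=0)
out = causal_attention(batch[i], others, Wq_is, Wq_cs, Wk, Wv)
```

Because `others` is built only from the current mini-batch, the cross-sample branch can never see validation or test samples as long as the mini-batches themselves come from the training partition.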

The CATT module is incorporated primarily as a confounding-aware architectural component, whose design is theoretically inspired by the front-door criterion. While the front-door criterion provides the motivation for the intra-sample and cross-sample attention structure, the formal conditions required for front-door identification cannot be rigorously verified in the shield tunneling context. The module should therefore be understood as improving robustness to spurious correlations and distributional biases in the training data, rather than formally identifying causal effects in the do-calculus sense.

The C-GCN-GRU approach begins by using the PCMCI+ algorithm to extract and quantify causal relationships among shield machine parameters. These quantified causal connections are then processed through the CATT module to emphasize relevant causal features. Afterward, the data are fed into a deep learning model that integrates Graph Convolutional Networks (GCN) with Gated Recurrent Units (GRU) to further model the relationships and temporal dependencies.

Figure 7 illustrates the full structure of this deep learning model and shows how each component contributes to the model’s overall predictive capability. The input temporal graph data first passes through the input projection layer, which transforms the features into 128 dimensions. The Causal Self-Attention (CATT) module then processes the data while maintaining the temporal causal structure. The output of the attention module flows through two consecutive GCN layers, which use the adjacency matrix information for graph convolution while maintaining a 128-dimensional feature representation. Following the two GCN layers, the output tensor is transposed so that the sequence length dimension (30) serves directly as the input size for the first GRU layer. This transposition operation aligns the GCN spatial embeddings with the sequential input format required by the GRU temporal modeling component, without introducing additional learnable parameters. Three successive GRU layers with explicit hidden state layers then process the sequence information. Finally, the output of the GRU is passed through the fully connected layers to produce the final 6-dimensional output.
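The transposition step can be illustrated with a shape-only sketch. The batch size and the exact axis order of the GCN output are assumptions made for illustration; only the sequence length of 30 and the 128-dimensional features are taken from the description above.

```python
import numpy as np

batch_size, seq_len, feat_dim = 4, 30, 128             # batch_size is a toy value
gcn_out = np.zeros((batch_size, seq_len, feat_dim))    # assumed axis order

# Swap the last two axes. This is a pure re-indexing of the data and
# introduces no learnable parameters.
gru_in = np.transpose(gcn_out, (0, 2, 1))

# Each of the 128 positions now carries a length-30 vector, so a GRU that
# reads this tensor would have an input size of 30.
gru_input_size = gru_in.shape[-1]
```

Under these assumptions, the GRU iterates over the 128 feature positions and receives the 30 time steps of each position as its input vector.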