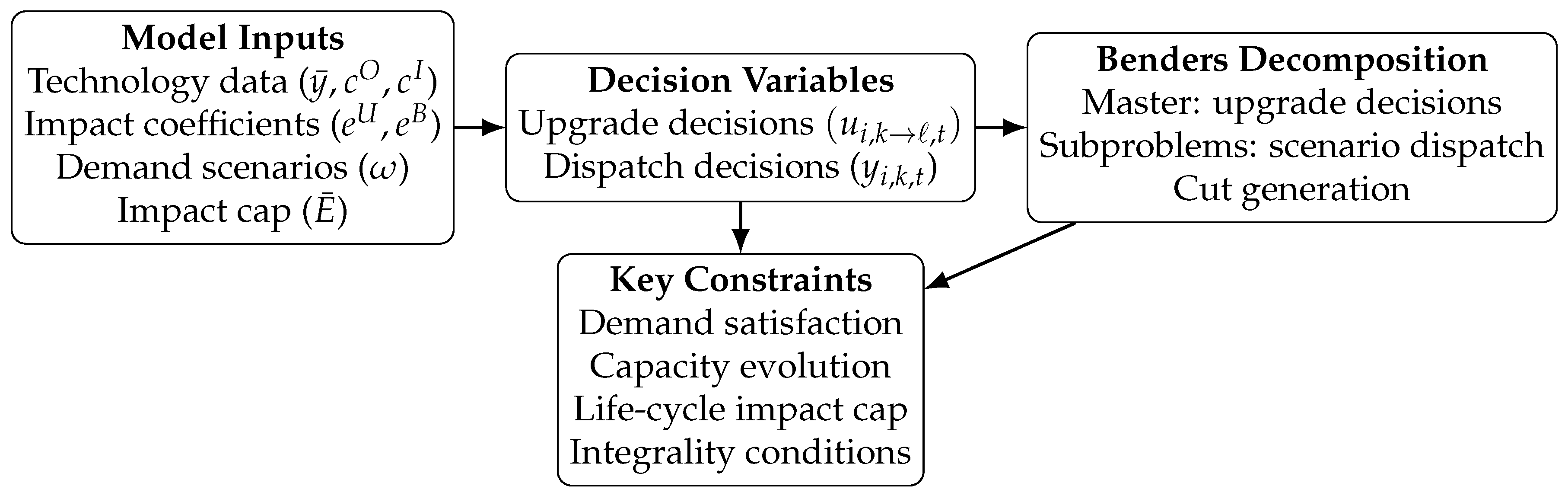

Figure 1.

Overview of the proposed life-cycle constrained planning model and solution approach.

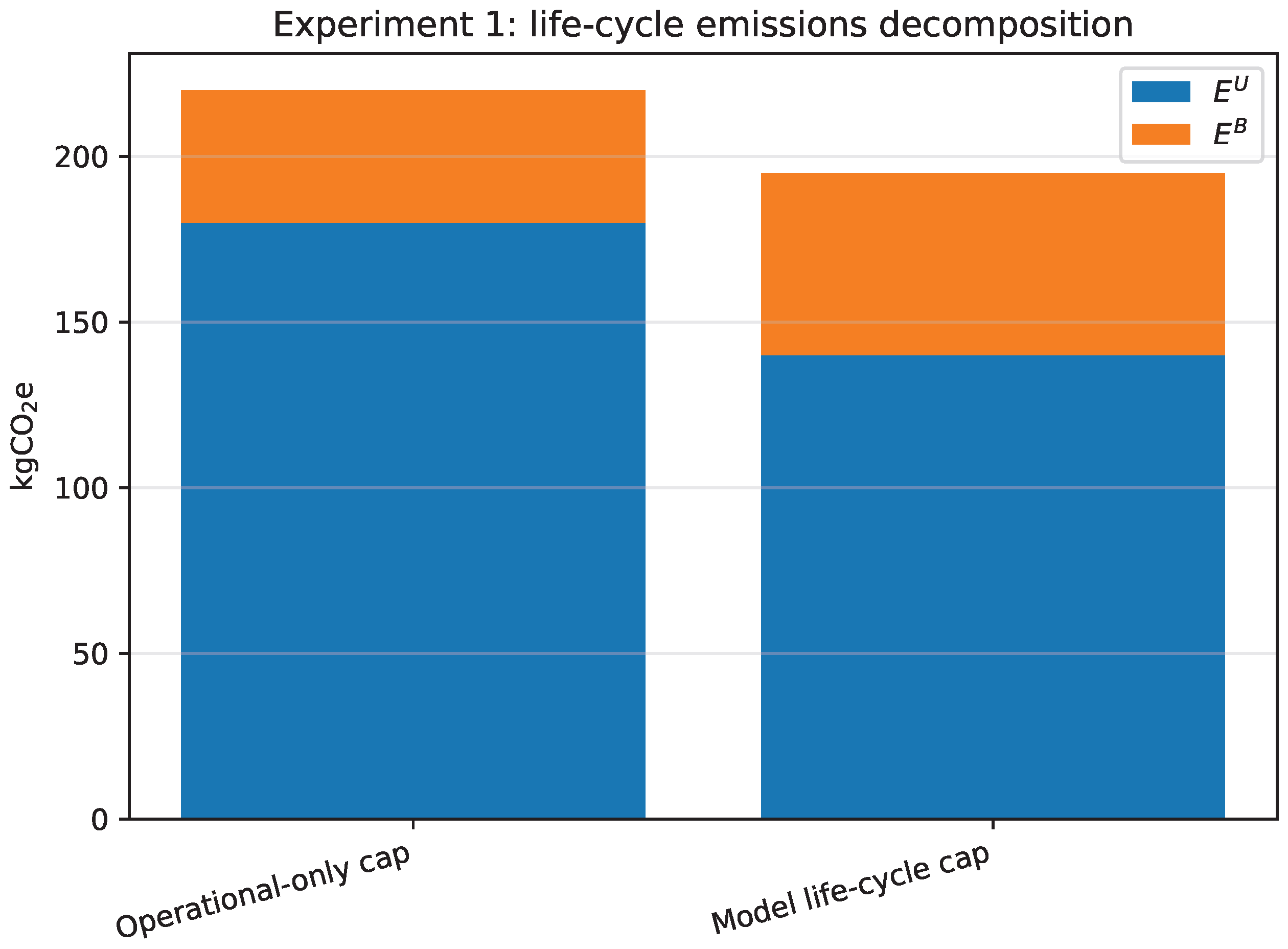

Figure 2.

Experiment 1: decomposition of the achieved time-weighted life-cycle impacts into use-phase and embodied components for the two compared formulations.

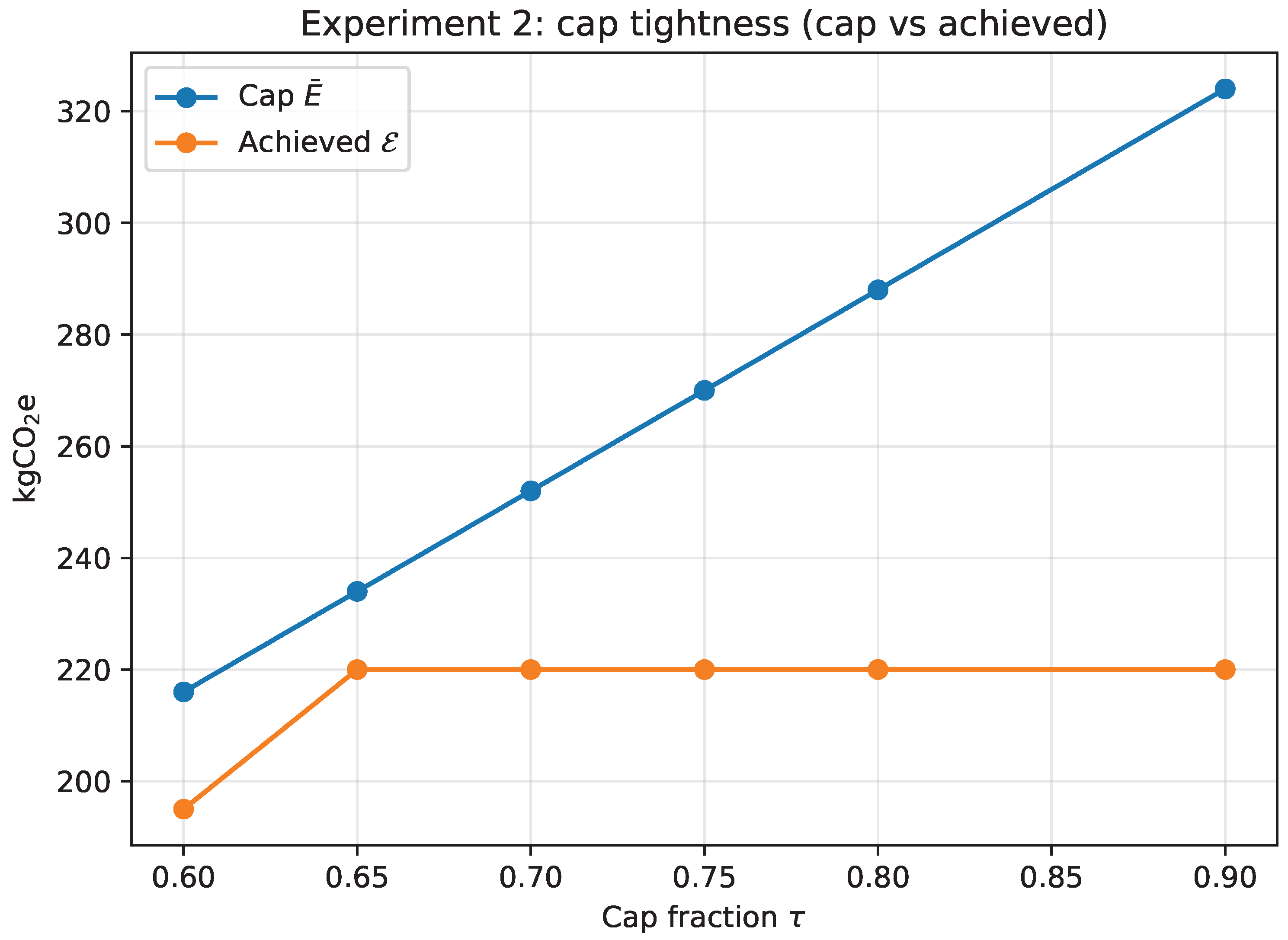

Figure 3.

Experiment 2: cap tightness as the cap fraction varies. The calibrated cap is compared to the achieved time-weighted life-cycle impact.

Figure 4.

Experiment 2: objective value (discounted cost) vs. cap fraction. The objective increases when the cap becomes binding and additional upgrades are required.

Figure 5.

Experiment 2: sensitivity to time-weighting. For each time-weighting value, the cap is recalibrated and compared to the achieved time-weighted life-cycle impact.

Figure 6.

Experiment 4 (Family A): runtime comparison between the monolithic MILP and the outer-loop Benders implementation on moderate instances.

Figure 7.

Experiment 4 (Family B): runtime comparison between the scenario-expanded monolithic MILP and the outer-loop Benders implementation.

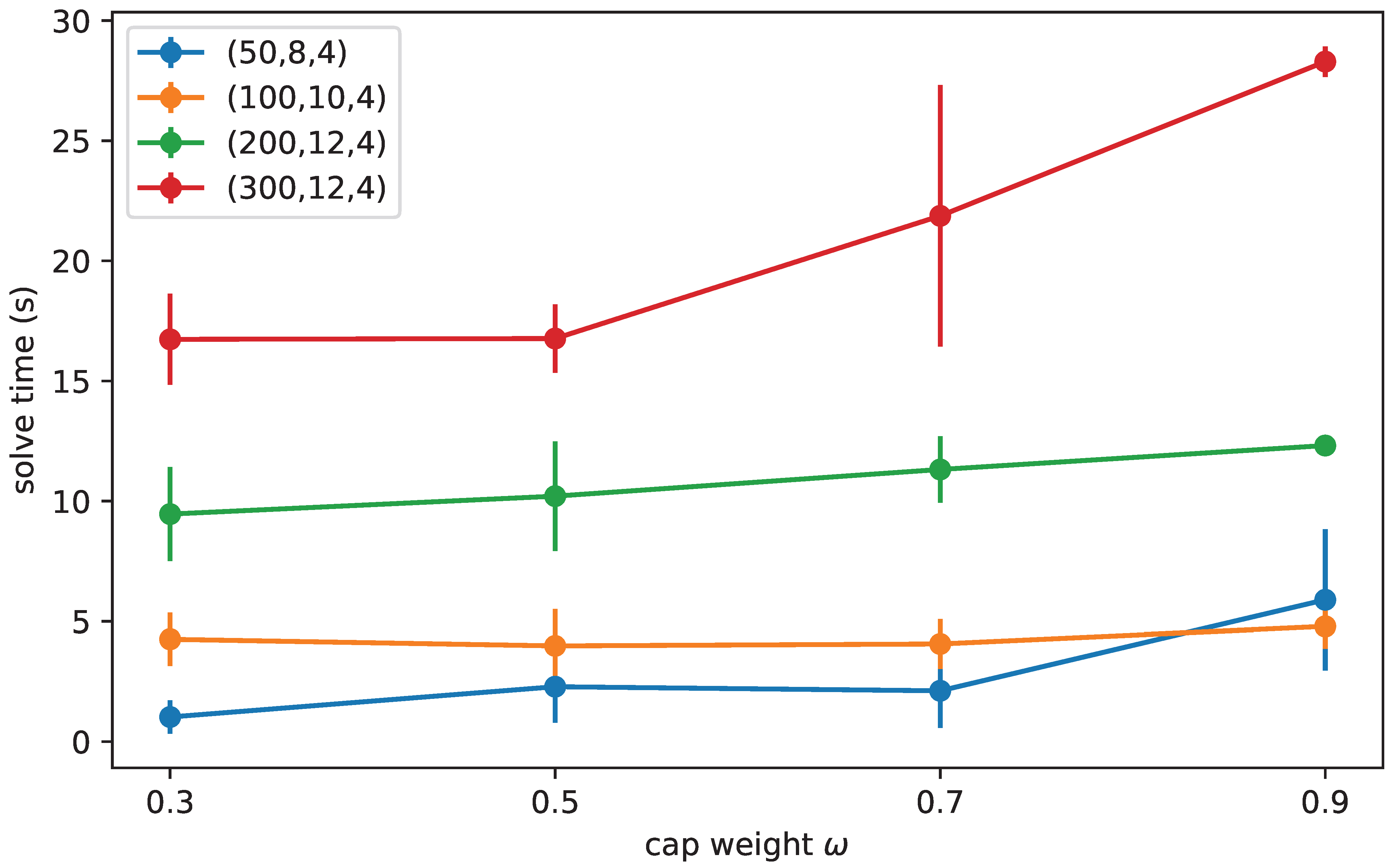

Figure 8.

Family A cap-weight sensitivity: solve time vs. cap calibration weight (mean ± sd over 5 seeds).

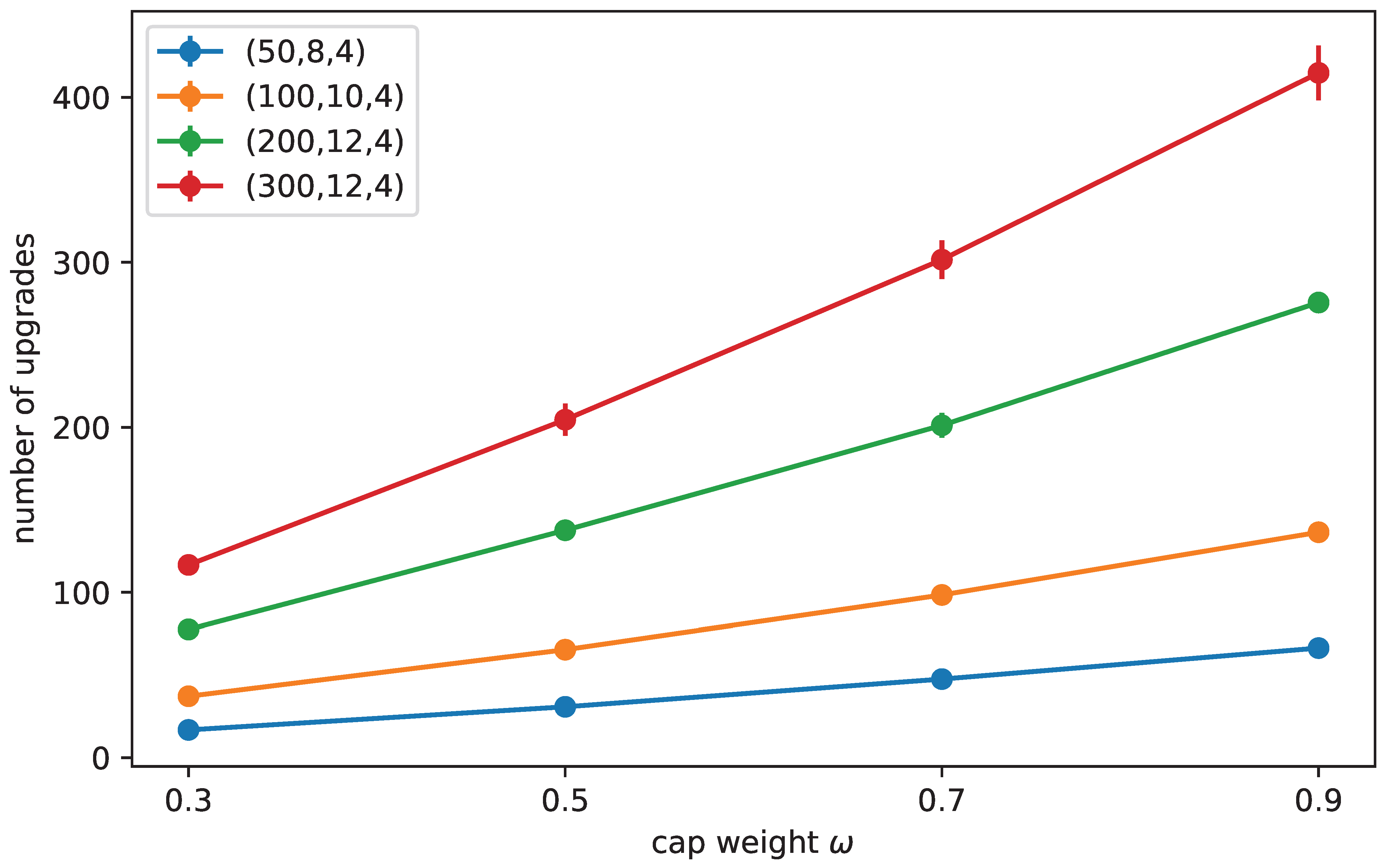

Figure 9.

Family A cap-weight sensitivity: number of upgrades vs. cap calibration weight (mean ± sd over 5 seeds).

Figure 10.

Family A utilization sweep: solve time and number of executed upgrades as a function of the demand fraction (means over 5 seeds).

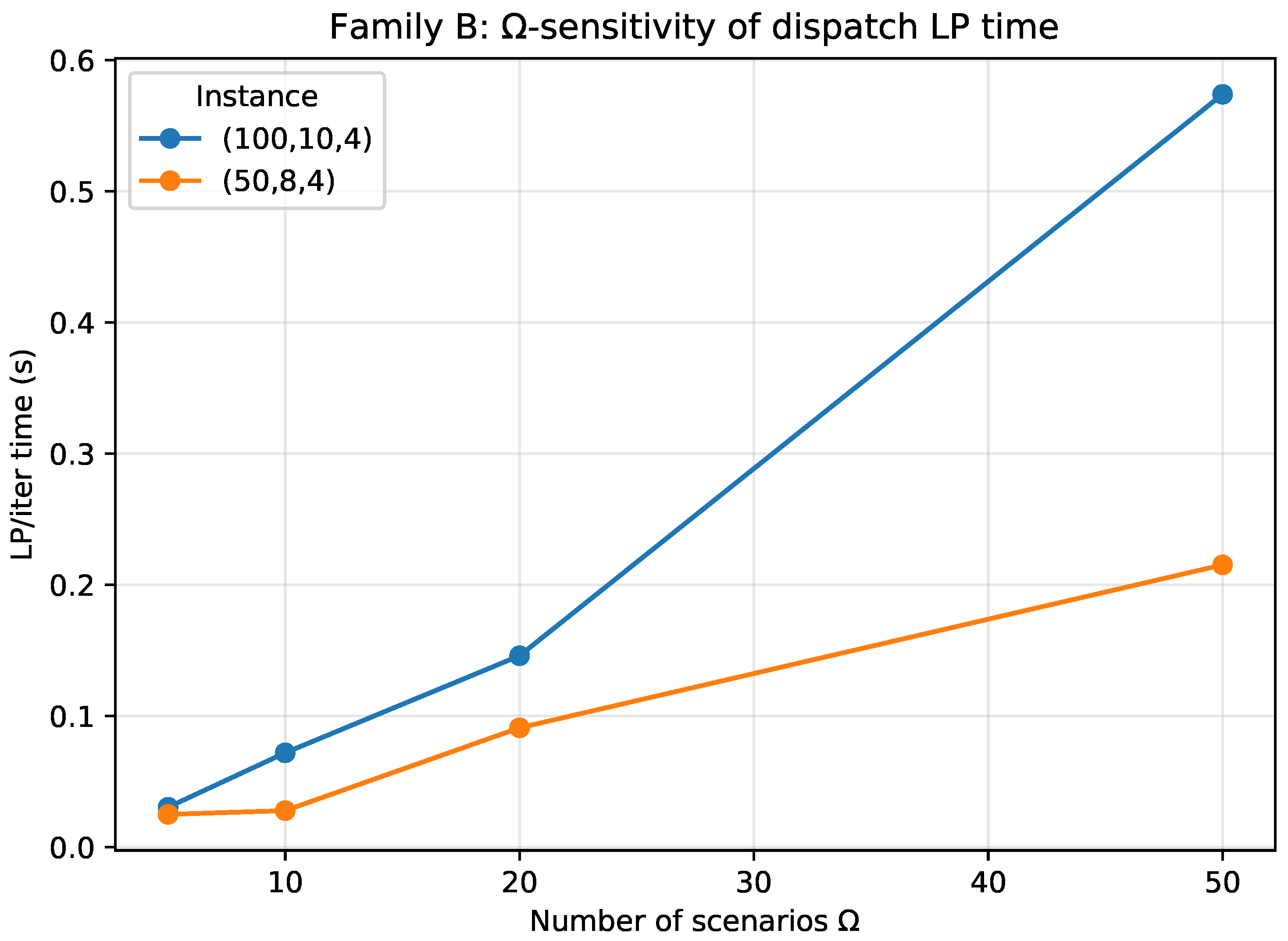

Figure 11.

Family B: dispatch LP time per Benders iteration (LP/iter, build + solve) vs. number of scenarios. The master problem is independent of the number of scenarios, so this curve captures the dominant scenario-dependent component of the decomposition runtime.

Table 1.

Dynamic LCA vs. time-value weighting (illustrative example).

| Profile | Emissions | Characterized | Unweighted | Weighted |
|---|---|---|---|---|
| A (early) | (100, 0) | (100, 0) | 100 | |
| B (late) | (0, 100) | (0, 100) | 100 | |

Table 2.

Positioning relative to representative literature streams using a fixed coding: LCA structure is No/Partial/Yes/System-level (explicit process-based integration at the system level rather than asset/transition level); Time-weighting is Yes if impacts are explicitly weighted by time in the model objective/constraints, otherwise No; Endogenous learning is Yes (cost-only) or Yes (cost + embodied) if learning endogenously updates coefficients via cumulative deployment, otherwise No.

| Paper(s) | LCA Structure | Time-Weighting | Endogenous Learning |
|---|---|---|---|
| [8,9,10,11] | Partial | No | No |
| [14,15,16,17,18] | Yes | No | No |
| [12,19,20,21] | Partial | No | No |
| [4,5,7,26,27,28] | System-level | Yes | Yes (cost-only) |
| This work | Yes | Yes | Yes (cost + embodied) |

Table 3.

Life-cycle accounting changes the recommended upgrade schedule under the same emissions cap (toy instance). “Operational-only cap” constrains use-phase impacts only; “Model life-cycle cap” constrains use-phase plus embodied impacts.

| Model | Schedule | Obj (€) | Use-phase (kgCO2e) | Embodied (kgCO2e) | Total (kgCO2e) |
|---|---|---|---|---|---|
| Operational-only cap | : none; : : 1 → 3, : 3 → 4 | 533.55 | 180.00 | 40.00 | 220.00 |
| Model life-cycle cap | : : 1 → 2; : : 1 → 3, : 3 → 4 | 534.93 | 140.00 | 55.00 | 195.00 |

Table 4.

Sensitivity to cap tightness on the toy instance. The cap is set as a fraction of the no-upgrade time-weighted life-cycle impact. “# Upgrades” is the number of executed upgrades.

| Cap fraction | Cap (kgCO2e) | Obj (€) | Achieved impact (kgCO2e) | # Upgrades |
|---|---|---|---|---|
| 0.90 | 324.00 | 533.55 | 220.00 | 2 |
| 0.80 | 288.00 | 533.55 | 220.00 | 2 |
| 0.75 | 270.00 | 533.55 | 220.00 | 2 |
| 0.70 | 252.00 | 533.55 | 220.00 | 2 |
| 0.65 | 234.00 | 533.55 | 220.00 | 2 |
| 0.60 | 216.00 | 534.93 | 195.00 | 3 |
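
The cap values in the table above are consistent with multiplying each cap fraction by a no-upgrade impact of 360 kgCO2e. The following minimal sketch assumes that value (it is inferred from the tabulated caps, not quoted from the caption) and uses hypothetical names. It reproduces the cap values and flags the fraction at which the two-upgrade schedule, with an achieved impact of 220 kgCO2e, no longer fits under the cap.

```python
# Assumption: the no-upgrade time-weighted impact is 360 kgCO2e. This value is
# inferred from the tabulated caps (e.g. 324.00 / 0.90), not quoted from the paper.
BASELINE_IMPACT = 360.0
TWO_UPGRADE_IMPACT = 220.0  # achieved impact of the two-upgrade schedule (from the table)


def calibrated_cap(fraction: float, baseline: float = BASELINE_IMPACT) -> float:
    """Return the cap as a fraction of the no-upgrade impact, rounded to 2 decimals."""
    return round(fraction * baseline, 2)


fractions = [0.90, 0.80, 0.75, 0.70, 0.65, 0.60]
caps = {f: calibrated_cap(f) for f in fractions}

# The cap becomes binding once the cheaper two-upgrade schedule exceeds it.
binding = [f for f in fractions if TWO_UPGRADE_IMPACT > caps[f]]

for f in fractions:
    status = "binding" if f in binding else "slack"
    print(f"fraction={f:.2f} cap={caps[f]:.2f} -> {status}")
```

Under these assumptions only the tightest fraction forces a change of schedule, which matches the jump from two to three upgrades in the last row.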

Table 5.

Effect of time-weighting on the optimal schedule for the toy instance at fixed relative cap tightness. “# Upgrades” is the number of executed upgrades.

| Time-weighting parameter | Cap (kgCO2e) | Obj (€) | Achieved impact (kgCO2e) | # Upgrades |
|---|---|---|---|---|
| 0.00 | 234.00 | 533.55 | 220.00 | 2 |
| 0.05 | 217.81 | 533.55 | 208.45 | 2 |
| 0.15 | 192.07 | 533.55 | 190.02 | 2 |
| 0.30 | 164.74 | 534.93 | 157.18 | 3 |
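
The recalibrated caps in the table above can be reproduced with a short sketch, shown here only as an illustration. It assumes four periods with a no-upgrade impact of 90 kgCO2e each, geometric weights of the form (1 + rho)^-(t - 1), and a relative cap tightness of 0.65. None of these is stated in the caption; they are inferred because together they match the tabulated caps to two decimals. The name `rho` is a hypothetical label for the time-weighting parameter.

```python
# Assumptions (inferred from the table, not stated in the caption):
#   - four periods, each with a no-upgrade impact of 90 kgCO2e
#   - geometric time weights w_t = (1 + rho) ** -(t - 1), t = 1..4
#   - relative cap tightness of 0.65
PER_PERIOD_IMPACT = [90.0, 90.0, 90.0, 90.0]
CAP_FRACTION = 0.65


def time_weights(rho: float, periods: int) -> list:
    """Weights that discount later periods more strongly as rho grows."""
    return [(1.0 + rho) ** -(t - 1) for t in range(1, periods + 1)]


def weighted_impact(impacts: list, rho: float) -> float:
    """Time-weighted sum of per-period impacts."""
    weights = time_weights(rho, len(impacts))
    return sum(w * e for w, e in zip(weights, impacts))


def recalibrated_cap(rho: float) -> float:
    """Cap as a fixed fraction of the time-weighted no-upgrade impact."""
    return round(CAP_FRACTION * weighted_impact(PER_PERIOD_IMPACT, rho), 2)


for rho in (0.00, 0.05, 0.15, 0.30):
    print(f"rho={rho:.2f} cap={recalibrated_cap(rho):.2f}")
```

Because the cap is recalibrated from the weighted baseline, stronger time-weighting lowers both the cap and the achieved impact, so the relative tightness stays fixed while absolute values shrink.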

Table 6.

Learning inputs used in Experiment 3.

| Item | Symbol | Value(s) |
|---|---|---|
| Learning families | | |
| Family mapping | | |
| Initial deployment | | 1 |
| Tier thresholds | | |
| Learning exponents | | |
| Cost multipliers | | |
| Embodied multipliers | | |

Table 7.

Learning-by-doing can shift upgrade timing and reduce discounted cost under the same life-cycle cap (toy instance). Obj is the total discounted economic cost (monetary units); the impact column reports the time-weighted life-cycle impact in kgCO2e. “# Upgrades” is the number of executed upgrades.

| Variant | Schedule | Obj (€) | Impact (kgCO2e) | # Upgrades |
|---|---|---|---|---|
| No learning | : : 1 → 3; : : 1 → 3 | 835.79 | 306.31 | 2 |
| Learning | : : 1 → 2, : 2 → 3; : : 1 → 3 | 718.51 | 295.91 | 3 |

Table 8.

Scalability of the learning-by-doing tiered approximation as the number of tiers S increases, on a representative instance. Learning uses one family with geometric tier thresholds, a big-M constant, and learning exponents. All runs use HiGHS (via scipy.optimize.milp) with 1 thread, a 30 s time limit, and a target relative MIP gap. “Added” counts refer to the learning extension only (relative to the core model).

| S | Added Binaries | Added Constraints | Total Constraints | Time (s) | MIP Gap |
|---|---|---|---|---|---|
| 2 | 2416 | 8448 | 13,307 | 17.4 | |
| 3 | 3624 | 12,064 | 16,923 | 30.0 | |
| 4 | 4832 | 15,680 | 20,539 | 30.0 | |
| 6 | 7248 | 22,912 | 27,771 | 30.0 | — |
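
The added model size in the table above grows linearly in S. The sketch below uses only the tabulated counts (the helper name `linear_fit` is hypothetical): it fits a line through the first two rows and checks that it also predicts the remaining rows.

```python
# Counts copied from the table, keyed by the number of tiers S.
added_binaries = {2: 2416, 3: 3624, 4: 4832, 6: 7248}
added_constraints = {2: 8448, 3: 12064, 4: 15680, 6: 22912}
total_constraints = {2: 13307, 3: 16923, 4: 20539, 6: 27771}


def linear_fit(points: dict) -> tuple:
    """Fit y = intercept + slope * x through the two smallest x values."""
    (x0, y0), (x1, y1) = sorted(points.items())[:2]
    slope = (y1 - y0) // (x1 - x0)
    return y0 - slope * x0, slope


bin_intercept, bin_slope = linear_fit(added_binaries)
con_intercept, con_slope = linear_fit(added_constraints)

# The fit from S = 2 and S = 3 should also match S = 4 and S = 6.
fits_all_rows = all(
    bin_intercept + bin_slope * s == added_binaries[s]
    and con_intercept + con_slope * s == added_constraints[s]
    for s in added_binaries
)

# Core-model constraint count implied by each row (total minus added).
core_constraints = {total_constraints[s] - added_constraints[s] for s in total_constraints}

print(f"binaries per tier: {bin_slope}")
print(f"constraints per tier: {con_slope} (plus {con_intercept} fixed)")
print(f"linear fit matches all rows: {fits_all_rows}")
print(f"implied core constraints: {sorted(core_constraints)}")
```

The linear growth in size contrasts with the solve times, which reach the 30 s limit from S = 3 onward.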

Table 9.

Results on large random instances (Family A; 5 seeds each). “Instance” reports the instance-size triple. Running times are in seconds (mean ± sd), and runs use a target relative MILP gap. “Opt.” is the number of runs (out of five) that reached the target gap within the time limit. “Nodes” is the branch-and-bound node count. “# Upgrades” is the number of executed upgrades.

| Instance | Opt. | Time (s) | Nodes | Max gap | # Upgrades | Total impact (kgCO2e) | Max slack |
|---|---|---|---|---|---|---|---|
| (50, 8, 4) | 5 | 1.04 ± 0.87 | 1 ± 0 | 1.4 | 22 ± 3 | 34,057.1 ± 1391.6 | 4.37 |
| (100, 10, 4) | 5 | 3.30 ± 2.19 | 3 ± 3 | 9.4 | 76 ± 5 | 81,181.4 ± 2765.0 | 1.31 |
| (200, 12, 4) | 5 | 7.45 ± 2.94 | 2 ± 1 | 6.7 | 175 ± 4 | 181,316.1 ± 3147.3 | 1.46 |
| (300, 12, 4) | 5 | 12.47 ± 3.44 | 1 ± 0 | 4.7 | 261 ± 7 | 272,570.6 ± 4043.0 | 3.49 |

Table 10.

Per-instance results for the large random benchmark (Family A). “# Upgrades” is the number of executed upgrades.

| Instance | Seed | Time (s) | Gap | Obj (€) | Use-phase (kgCO2e) | Embodied (kgCO2e) | Total (kgCO2e) | # Upgrades |
|---|---|---|---|---|---|---|---|---|
| (50, 8, 4) | 1 | 0.46 | 1.4 | 213,587.7 | 34,468.1 | 1189.8 | 35,657.9 | 24 |
| (50, 8, 4) | 2 | 2.42 | 0.0 | 203,179.8 | 33,692.1 | 1015.6 | 34,707.7 | 21 |
| (50, 8, 4) | 3 | 1.40 | 0.0 | 212,374.2 | 33,248.1 | 1320.8 | 34,568.9 | 26 |
| (50, 8, 4) | 4 | 0.44 | 0.0 | 201,554.3 | 31,109.6 | 982.4 | 32,092.1 | 20 |
| (50, 8, 4) | 5 | 0.49 | 0.0 | 209,756.4 | 32,280.3 | 978.5 | 33,258.9 | 21 |
| (100, 10, 4) | 1 | 1.50 | 0.0 | 504,698.5 | 74,745.0 | 3761.9 | 78,506.9 | 78 |
| (100, 10, 4) | 2 | 6.41 | 0.0 | 515,805.3 | 80,525.9 | 3804.8 | 84,330.7 | 76 |
| (100, 10, 4) | 3 | 2.66 | 0.0 | 509,479.0 | 77,106.1 | 3784.8 | 80,890.8 | 74 |
| (100, 10, 4) | 4 | 4.63 | 0.0 | 511,997.1 | 79,538.9 | 4139.6 | 83,678.5 | 84 |
| (100, 10, 4) | 5 | 1.28 | 9.4 | 498,366.2 | 74,879.3 | 3620.6 | 78,499.9 | 70 |
| (200, 12, 4) | 1 | 6.15 | 0.0 | 1,151,044.3 | 172,348.5 | 8511.6 | 180,860.0 | 169 |
| (200, 12, 4) | 2 | 12.42 | 6.7 | 1,112,159.4 | 170,625.5 | 8884.3 | 179,509.8 | 173 |
| (200, 12, 4) | 3 | 5.97 | 0.0 | 1,153,106.2 | 170,822.3 | 9134.9 | 179,957.2 | 176 |
| (200, 12, 4) | 4 | 7.74 | 5.5 | 1,106,676.4 | 177,782.6 | 9069.0 | 186,851.5 | 176 |
| (200, 12, 4) | 5 | 5.00 | 4.8 | 1,124,037.4 | 170,089.7 | 9312.2 | 179,401.9 | 179 |
| (300, 12, 4) | 1 | 18.09 | 0.0 | 1,698,561.5 | 259,840.5 | 13,647.5 | 273,488.1 | 271 |
| (300, 12, 4) | 2 | 13.21 | 0.0 | 1,722,169.6 | 263,895.2 | 13,383.8 | 277,279.0 | 264 |
| (300, 12, 4) | 3 | 10.07 | 4.7 | 1,632,953.5 | 254,248.7 | 13,053.9 | 267,302.6 | 258 |
| (300, 12, 4) | 4 | 9.63 | 2.5 | 1,715,825.5 | 256,275.9 | 13,418.0 | 269,693.9 | 261 |
| (300, 12, 4) | 5 | 11.37 | 0.0 | 1,660,864.1 | 262,245.7 | 12,843.8 | 275,089.5 | 253 |
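
The per-seed rows above can be aggregated into the mean ± sd summary reported for Family A. The sketch below does this for the (50, 8, 4) instance only. It assumes the summary uses the sample standard deviation (divisor n − 1), which is our reading because it matches the reported 1.04 ± 0.87 s; the helper name `mean_sd` is hypothetical.

```python
from statistics import mean, stdev

# Per-seed values for instance (50, 8, 4), copied from the table above.
times = [0.46, 2.42, 1.40, 0.44, 0.49]                        # seconds
gaps = [1.4, 0.0, 0.0, 0.0, 0.0]                              # reported gap per seed
upgrades = [24, 21, 26, 20, 21]                               # executed upgrades
total_impact = [35657.9, 34707.7, 34568.9, 32092.1, 33258.9]  # kgCO2e


def mean_sd(values):
    """Return (mean, sample standard deviation) of a list of numbers."""
    return mean(values), stdev(values)


time_mean, time_sd = mean_sd(times)
upg_mean, upg_sd = mean_sd(upgrades)
impact_mean, impact_sd = mean_sd(total_impact)
max_gap = max(gaps)

print(f"time: {time_mean:.2f} ± {time_sd:.2f} s")
print(f"upgrades: {upg_mean:.0f} ± {upg_sd:.0f}")
print(f"impact: {impact_mean:.1f} ± {impact_sd:.1f} kgCO2e")
print(f"max gap: {max_gap}")
```

The same aggregation applies to the other three instance sizes by swapping in their rows.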

Table 11.

Family A: monolithic MILP vs. Benders-like approach. “Iter.” is the number of Benders iterations (master solves) needed to reach the outer-loop relative bound-gap target. “LP/Iter” is the average dispatch LP time; it is typically small relative to the Master/Iter time.

| Instance | MILP Time (s) | Benders Time (s) | Iter. | Master/Iter (s) | LP/Iter (s) |
|---|---|---|---|---|---|
| (50, 8, 4) | 1.0 | 2.8 | 12 | 0.22 | 0.01 |
| (100, 10, 4) | 3.3 | 11.0 | 18 | 0.59 | 0.02 |
| (200, 12, 4) | 7.5 | 26.5 | 23 | 1.12 | 0.03 |
| (300, 12, 4) | 12.5 | 30.0 | 30 | 2.08 | 0.05 |

Table 12.

Family B: monolithic MILP vs. Benders-like approach. “MILP Gap” is the final reported MIP gap at termination. Benders uses an outer-loop bound-gap target and reports its achieved bound gap at termination.

| Instance | MILP Time (s) | MILP Gap | Benders Time (s) | Benders Gap | Iter. | LP/Iter (s) |
|---|---|---|---|---|---|---|
| (50, 8, 4) | 6.8 | 1.0 | 9.5 | 1.0 | 14 | 0.08 |
| (100, 10, 4) | 30.0 | 2.4 | 18.7 | 1.0 | 19 | 0.12 |
| (200, 12, 4) | 30.0 | 1.6 | 27.9 | 1.0 | 23 | 0.20 |
| (300, 12, 4) | 30.0 | 1.2 | 29.8 | 1.0 | 25 | 0.22 |

Table 13.

Sensitivity to the cap calibration weight on Family A instances (5 seeds each). The cap is set from the calibration weight, which takes the values 0.3, 0.5, 0.7, and 0.9. Reported values are mean ± sd over 5 seeds. “Opt.” counts runs (out of 5) reaching the target MIP gap within the time limit. “# Upgrades” is the number of executed upgrades.

| Instance | Cap weight | Opt. | Time (s) | Obj (€) | # Upgrades | Impact (kgCO2e) |
|---|---|---|---|---|---|---|
| (50, 8, 4) | 0.3 | 5 | 1.03 ± 0.69 | 39,469.8 ± 619.9 | 16.8 ± 1.3 | 23,769.7 ± 261.0 |
| (50, 8, 4) | 0.5 | 5 | 2.29 ± 1.50 | 41,834.2 ± 676.4 | 30.8 ± 2.3 | 21,804.8 ± 233.6 |
| (50, 8, 4) | 0.7 | 5 | 2.12 ± 1.55 | 45,047.4 ± 751.2 | 47.6 ± 2.4 | 19,839.9 ± 228.3 |
| (50, 8, 4) | 0.9 | 5 | 5.89 ± 2.93 | 49,546.5 ± 848.5 | 66.4 ± 3.6 | 17,875.0 ± 246.5 |
| (100, 10, 4) | 0.3 | 5 | 4.26 ± 1.10 | 88,267.4 ± 2665.5 | 37.2 ± 4.1 | 57,163.2 ± 2092.2 |
| (100, 10, 4) | 0.5 | 5 | 3.99 ± 1.52 | 92,603.8 ± 2715.9 | 65.4 ± 5.4 | 51,940.0 ± 1843.1 |
| (100, 10, 4) | 0.7 | 5 | 4.07 ± 1.03 | 98,615.2 ± 2568.1 | 98.4 ± 5.6 | 46,716.8 ± 1599.5 |
| (100, 10, 4) | 0.9 | 5 | 4.79 ± 0.92 | 107,699.5 ± 2995.5 | 136.4 ± 5.6 | 41,493.6 ± 1364.4 |
| (200, 12, 4) | 0.3 | 5 | 9.46 ± 1.95 | 193,930.1 ± 2263.8 | 77.6 ± 4.3 | 130,419.1 ± 898.4 |
| (200, 12, 4) | 0.5 | 5 | 10.21 ± 2.27 | 201,413.8 ± 1802.3 | 137.6 ± 6.0 | 117,872.1 ± 613.5 |
| (200, 12, 4) | 0.7 | 5 | 11.32 ± 1.38 | 212,039.3 ± 1260.0 | 201.2 ± 7.5 | 105,325.0 ± 475.1 |
| (200, 12, 4) | 0.9 | 5 | 12.31 ± 0.23 | 228,160.6 ± 1168.9 | 275.6 ± 6.4 | 92,778.0 ± 595.9 |
| (300, 12, 4) | 0.3 | 5 | 16.73 ± 1.89 | 292,361.2 ± 4700.9 | 116.6 ± 4.5 | 196,313.6 ± 4530.5 |
| (300, 12, 4) | 0.5 | 5 | 16.77 ± 1.42 | 303,491.0 ± 4775.3 | 204.6 ± 9.8 | 177,409.6 ± 3695.0 |
| (300, 12, 4) | 0.7 | 5 | 21.87 ± 5.44 | 319,489.7 ± 4848.0 | 301.6 ± 11.7 | 158,505.5 ± 2874.6 |
| (300, 12, 4) | 0.9 | 5 | 28.29 ± 0.63 | 343,117.6 ± 5355.9 | 414.8 ± 16.5 | 139,601.4 ± 2087.2 |

Table 14.

Sensitivity to the utilization parameter used to set demand in the random Family A generator. We report mean ± sd over 5 seeds. All runs use a 30 s limit (HiGHS, single thread, target relative gap). “# Upgrades” is the number of executed upgrades.

| Instance | Utilization | Opt. | Time (s) | Max gap | Obj (€) | # Upgrades | Impact (kgCO2e) | Max slack |
|---|---|---|---|---|---|---|---|---|
| (50, 8, 4) | 0.75 | 5 | 0.59 ± 0.76 | 0.0 | 50,459.0 ± 971.6 | 9.8 ± 1.1 | 14,316.8 ± 347.0 | −1.8 |
| (50, 8, 4) | 0.88 | 5 | 0.22 ± 0.16 | 1.8 | 61,525.8 ± 1053.6 | 13.8 ± 1.3 | 17,330.2 ± 356.6 | 1.1 |
| (50, 8, 4) | 0.95 | 5 | 0.57 ± 0.28 | 0.0 | 67,607.5 ± 1109.3 | 15.8 ± 1.3 | 18,953.3 ± 365.4 | 1.5 |
| (100, 10, 4) | 0.75 | 5 | 0.97 ± 0.51 | 0.0 | 118,830.3 ± 2373.9 | 21.6 ± 1.1 | 34,810.3 ± 760.8 | 3.6 |
| (100, 10, 4) | 0.88 | 5 | 1.67 ± 1.22 | 2.1 | 144,460.3 ± 2648.9 | 29.6 ± 1.1 | 42,145.7 ± 845.4 | 2.9 |
| (100, 10, 4) | 0.95 | 5 | 1.31 ± 0.83 | 0.0 | 158,505.8 ± 2802.7 | 33.8 ± 1.3 | 46,112.0 ± 885.6 | 2.2 |
| (200, 12, 4) | 0.75 | 5 | 2.60 ± 0.81 | 0.0 | 265,254.5 ± 4129.0 | 45.0 ± 2.9 | 80,758.8 ± 1099.5 | −1.5 |
| (200, 12, 4) | 0.88 | 5 | 1.97 ± 0.84 | 3.1 | 321,733.3 ± 4655.1 | 60.8 ± 2.9 | 97,772.2 ± 1215.2 | 3.1 |
| (200, 12, 4) | 0.95 | 5 | 4.54 ± 2.08 | 0.0 | 352,742.1 ± 4997.6 | 69.6 ± 3.0 | 106,934.8 ± 1305.6 | 5.8 |
| (300, 12, 4) | 0.75 | 5 | 4.96 ± 2.64 | 0.0 | 398,279.1 ± 5132.6 | 69.2 ± 4.5 | 121,072.8 ± 1889.3 | 1.5 |
| (300, 12, 4) | 0.88 | 5 | 6.31 ± 5.64 | 7.1 | 482,883.3 ± 5610.2 | 92.8 ± 4.1 | 146,500.1 ± 1853.9 | 3.2 |
| (300, 12, 4) | 0.95 | 5 | 5.79 ± 4.22 | 9.2 | 529,343.1 ± 5933.9 | 105.8 ± 4.2 | 160,213.6 ± 1861.2 | 2.0 |

Table 15.

Family B: sensitivity of the scenario dispatch layer to the number of scenarios. We report the size of the scenario-expanded dispatch LP solved in each Benders iteration and the corresponding solve time (LP/iter, build + solve) for 5, 10, 20, and 50 scenarios.

| Instance | Scenarios | LP per Iter (s) | LP Vars | LP Cons | LP Nnz | Seed |
|---|---|---|---|---|---|---|
| (50, 8, 4) | 5 | 0.0248 | 8000 | 8041 | 24,000 | 3 |
| (50, 8, 4) | 10 | 0.0277 | 16,000 | 16,081 | 48,000 | 3 |
| (50, 8, 4) | 20 | 0.0909 | 32,000 | 32,161 | 96,000 | 3 |
| (50, 8, 4) | 50 | 0.2151 | 80,000 | 80,401 | 240,000 | 3 |
| (100, 10, 4) | 5 | 0.0303 | 20,000 | 20,051 | 60,000 | 3 |
| (100, 10, 4) | 10 | 0.0718 | 40,000 | 40,101 | 120,000 | 3 |
| (100, 10, 4) | 20 | 0.1458 | 80,000 | 80,201 | 240,000 | 3 |
| (100, 10, 4) | 50 | 0.5738 | 200,000 | 200,501 | 600,000 | 3 |
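
The LP sizes above scale linearly with the number of scenarios. The sketch below checks an empirical pattern that fits every row: the variable count equals the scenario count times the product of the three instance parameters, the nonzero count is three times the variable count, and the constraint count adds the second instance parameter per scenario plus one. These formulas are our own fit to the tabulated numbers, not the authors' stated LP construction, and `predicted_size` is a hypothetical helper.

```python
# (instance, scenarios) -> (LP vars, LP cons, LP nnz), copied from the table.
observed = {
    ((50, 8, 4), 5): (8000, 8041, 24000),
    ((50, 8, 4), 10): (16000, 16081, 48000),
    ((50, 8, 4), 20): (32000, 32161, 96000),
    ((50, 8, 4), 50): (80000, 80401, 240000),
    ((100, 10, 4), 5): (20000, 20051, 60000),
    ((100, 10, 4), 10): (40000, 40101, 120000),
    ((100, 10, 4), 20): (80000, 80201, 240000),
    ((100, 10, 4), 50): (200000, 200501, 600000),
}


def predicted_size(instance, scenarios):
    """Empirical size pattern fitted to the table (an assumption, see lead-in)."""
    a, b, c = instance
    per_scenario_vars = a * b * c
    n_vars = scenarios * per_scenario_vars
    n_cons = scenarios * (per_scenario_vars + b) + 1
    n_nnz = 3 * n_vars
    return n_vars, n_cons, n_nnz


mismatches = [
    key for key, sizes in observed.items() if predicted_size(*key) != sizes
]
print("rows not matched by the fitted pattern:", mismatches)
```

Linear growth in LP size is consistent with the roughly proportional growth of the per-iteration LP time in the table.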

Table 18.

Fleet and cap setup for the case study.

| Item | Value |
|---|---|
| Fleet size | vehicles |
| Horizon | years |
| Annual demand | 200,000 km/year (all t) |
| Annual capacity (10 vehicles) | 15,000 km/year each |
| Annual capacity (10 vehicles) | 9000 km/year each |
| Initial state | all ICE at |
| Cap definition | |
| Baseline | tCO2e |
| Cap | tCO2e |

Table 19.

Operational-only cap vs. full life-cycle cap (case study). “Cap slack” is the cap minus total life-cycle emissions, so a negative value indicates a violation of the life-cycle cap. Emissions are in tCO2e over the 10-year horizon.

| Model | Upgrades Executed | Obj (€) | Use-phase (tCO2e) | Embodied (tCO2e) | Total (tCO2e) | Cap Slack (tCO2e) |
|---|---|---|---|---|---|---|
| Operational-only cap | 3 ICE → BEV, 6 ICE → HEV (high-mileage vehicles) | 412,000 | 284.3 | 74.4 | 358.7 | |
| Full life-cycle cap | 8 ICE → BEV (high-mileage vehicles) | 424,000 | 187.6 | 83.2 | 270.8 | 16.2 |
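
As a worked check of the slack column, the sketch below evaluates cap minus total life-cycle emissions for both rows. It assumes a life-cycle cap of 287.0 tCO2e, which is inferred from the full life-cycle row (270.8 + 16.2) rather than quoted from the source; the operational-only slack it prints is therefore a derived illustration, not a reported result.

```python
# Assumption: the life-cycle cap is 287.0 tCO2e, inferred as 270.8 + 16.2 from
# the full life-cycle row; the source's own cap expression is not reproduced here.
CAP = 287.0

# Use-phase and embodied emissions (tCO2e) copied from the table above.
rows = {
    "Operational-only cap": {"use": 284.3, "embodied": 74.4},
    "Full life-cycle cap": {"use": 187.6, "embodied": 83.2},
}


def cap_slack(use: float, embodied: float, cap: float = CAP) -> tuple:
    """Return (total life-cycle emissions, cap minus total), rounded to 0.1."""
    total = use + embodied
    return round(total, 1), round(cap - total, 1)


results = {name: cap_slack(**values) for name, values in rows.items()}

for name, (total, slack) in results.items():
    verdict = "violates" if slack < 0 else "meets"
    print(f"{name}: total={total} slack={slack} ({verdict} the life-cycle cap)")
```

Under this assumed cap, the schedule chosen with an operational-only constraint overshoots the life-cycle cap once embodied emissions are counted, which is the point the comparison is making.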

Table 20.

Fleet case-study sensitivity to cap stringency. The cap is a fraction of the all-ICE baseline use-phase emissions over 10 years. Emissions are life-cycle totals (tCO2e), and slack is the cap minus total emissions. “#” means “number of”.

| Cap fraction | Cap (tCO2e) | ICE → BEV (1) (#) | BEV (1) (#) | Use-phase (tCO2e) | Embodied (tCO2e) | Total (tCO2e) | Slack (tCO2e) | Obj (€) |
|---|---|---|---|---|---|---|---|---|
| 0.85 | 348.5 | 4 | 4 | 298.8 | 41.6 | 340.4 | 8.1 | 412,000 |
| 0.80 | 328.0 | 5 | 5 | 271.0 | 52.0 | 323.0 | 5.0 | 415,000 |
| 0.75 | 307.5 | 6 | 6 | 243.2 | 62.4 | 305.6 | 1.9 | 418,000 |
| 0.70 | 287.0 | 8 | 8 | 187.6 | 83.2 | 270.8 | 16.2 | 424,000 |
| 0.65 | 266.5 | 9 | 9 | 159.8 | 93.6 | 253.4 | 13.1 | 427,000 |
| 0.60 | 246.0 | 10 | 10 | 132.0 | 104.0 | 236.0 | 10.0 | 430,000 |
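
The rows above follow a simple per-vehicle pattern, which the following sketch makes explicit. All three coefficients are assumptions inferred from differences between table rows: a baseline of 410 tCO2e (e.g. 348.5 / 0.85), a use-phase saving of 27.8 tCO2e per ICE → BEV upgrade, and 10.4 tCO2e of embodied emissions per upgrade. The function is a hypothetical back-of-the-envelope check that considers only ICE → BEV upgrades; it is not the optimization model itself.

```python
import math

# Assumptions inferred from the table rows (not stated in the source):
BASELINE = 410.0    # all-ICE use-phase emissions over the horizon, tCO2e
USE_SAVING = 27.8   # use-phase tCO2e avoided per ICE -> BEV upgrade
EMBODIED = 10.4     # embodied tCO2e added per ICE -> BEV upgrade
MAX_UPGRADES = 10   # largest upgrade count appearing in the table


def min_upgrades(cap_fraction: float) -> tuple:
    """Smallest upgrade count whose life-cycle total fits under the cap.

    Returns (upgrades, cap, total, slack), with emissions rounded to 0.1 tCO2e.
    """
    cap = cap_fraction * BASELINE
    net_saving = USE_SAVING - EMBODIED  # life-cycle saving per upgrade
    # Small tolerance guards against floating-point noise at integer boundaries.
    n = max(0, math.ceil((BASELINE - cap) / net_saving - 1e-9))
    if n > MAX_UPGRADES:
        raise ValueError("cap not reachable within the tabulated upgrade range")
    total = BASELINE - net_saving * n
    return n, round(cap, 1), round(total, 1), round(cap - total, 1)


for fraction in (0.85, 0.80, 0.75, 0.70, 0.65, 0.60):
    print(fraction, min_upgrades(fraction))
```

Because the number of upgrades is an integer, the slack does not shrink monotonically as the cap tightens: moving from 0.75 to 0.70 requires two additional upgrades in this sketch, which leaves a larger margin under the cap.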

Table 21.

Dispatch allocation in year (kilometers served by technology).

| Model | ICE km | HEV km | BEV km |
|---|---|---|---|
| Operational-only cap | 65,000 | 90,000 | 45,000 |
| Full life-cycle cap | 80,000 | 0 | 120,000 |

Table 22.

Full life-cycle model trajectory (year-by-year).

| t | ICE | HEV | BEV | ICE → HEV | ICE → BEV | HEV → BEV | Use-phase (tCO2e) | Embodied (tCO2e) | Total (tCO2e) | Cum. (tCO2e) | Rem. Cap (tCO2e) |
|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | 12 | 0 | 8 | 0 | 8 | 0 | 18.76 | 83.20 | 101.96 | 101.96 | 185.04 |
| 2 | 12 | 0 | 8 | 0 | 0 | 0 | 18.76 | 0.00 | 18.76 | 120.72 | 166.28 |
| 3 | 12 | 0 | 8 | 0 | 0 | 0 | 18.76 | 0.00 | 18.76 | 139.48 | 147.52 |
| 4 | 12 | 0 | 8 | 0 | 0 | 0 | 18.76 | 0.00 | 18.76 | 158.24 | 128.76 |
| 5 | 12 | 0 | 8 | 0 | 0 | 0 | 18.76 | 0.00 | 18.76 | 177.00 | 110.00 |
| 6 | 12 | 0 | 8 | 0 | 0 | 0 | 18.76 | 0.00 | 18.76 | 195.76 | 91.24 |
| 7 | 12 | 0 | 8 | 0 | 0 | 0 | 18.76 | 0.00 | 18.76 | 214.52 | 72.48 |
| 8 | 12 | 0 | 8 | 0 | 0 | 0 | 18.76 | 0.00 | 18.76 | 233.28 | 53.72 |
| 9 | 12 | 0 | 8 | 0 | 0 | 0 | 18.76 | 0.00 | 18.76 | 252.04 | 34.96 |
| 10 | 12 | 0 | 8 | 0 | 0 | 0 | 18.76 | 0.00 | 18.76 | 270.80 | 16.20 |
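
The cumulative and remaining-cap columns above follow from a running sum of the yearly totals. A minimal sketch, assuming a cap of 287.0 tCO2e (inferred from the final row as 270.80 + 16.20) and taking the yearly use-phase and embodied values from the table:

```python
from itertools import accumulate

# Assumption: cap of 287.0 tCO2e, inferred from the last row (270.80 + 16.20).
CAP = 287.0
YEARS = 10

# Yearly emissions (tCO2e) from the table: constant use-phase emissions, with
# all embodied emissions incurred in year 1 when the upgrades are executed.
use_phase = [18.76] * YEARS
embodied = [83.20] + [0.0] * (YEARS - 1)

totals = [u + e for u, e in zip(use_phase, embodied)]
cumulative = [round(c, 2) for c in accumulate(totals)]
remaining = [round(CAP - c, 2) for c in cumulative]

for year, (total, cum, rem) in enumerate(zip(totals, cumulative, remaining), start=1):
    print(f"t={year:2d} total={total:7.2f} cum={cum:7.2f} remaining={rem:7.2f}")
```

Front-loading the embodied emissions consumes about a third of the assumed cap in the first year, after which the remaining budget declines by the constant use-phase amount.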