EvoDropX: Evolutionary Optimization of Feature Corruption Sequences for Faithful Explanations of Transformer Models

Abstract

1. Introduction

2. Related Work

2.1. Post Hoc xAI Methods for Transformer Models

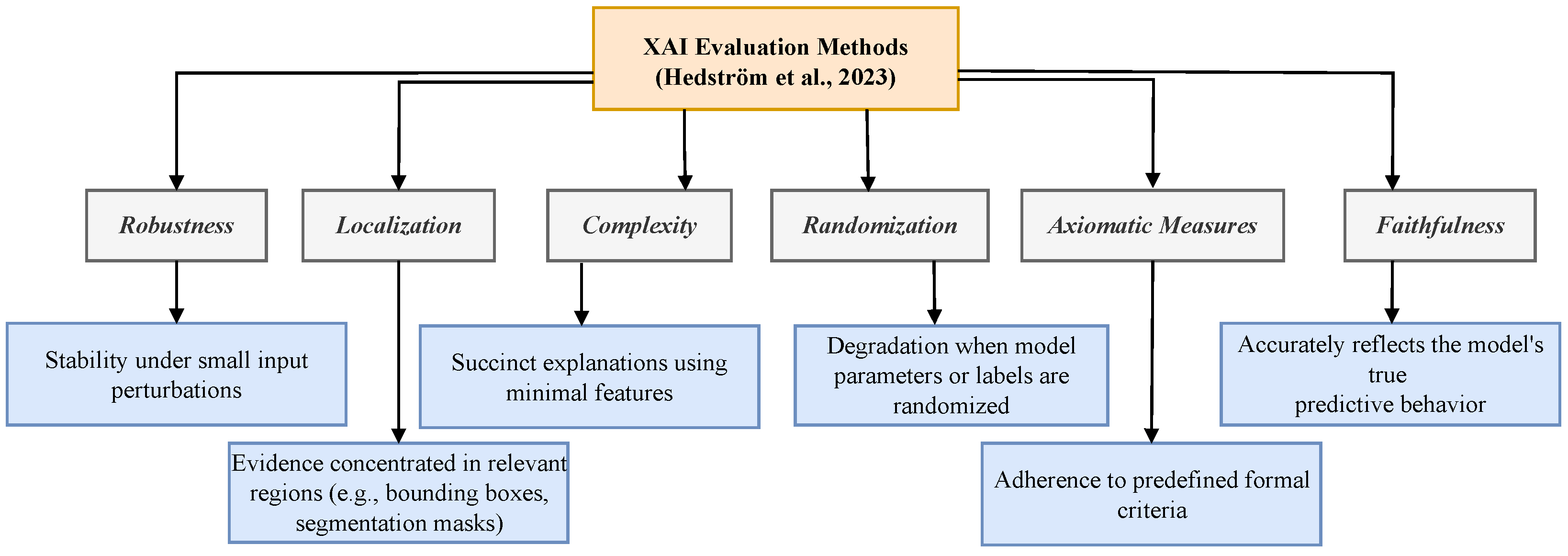

2.2. xAI Evaluation

2.3. Evolutionary Computation and Grammatical Evolution

A context-free grammar (CFG) is defined as the four-tuple $G = \{N, T, P, S\}$, where:

- N represents the set of non-terminals, which serve as intermediary structures in the mapping process.

- T represents the set of terminals, the elements that appear in the final program.

- P denotes the production rules that define how non-terminals can be expanded.

- S is the starting symbol from which the derivation begins.
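The four components above map naturally onto a small data structure. The sketch below is illustrative only. The `CFG` class, its field names and the `validate` checks are assumptions made for this example and are not taken from the EvoDropX code.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List


@dataclass(frozen=True)
class CFG:
    """Context-free grammar G = {N, T, P, S} (illustrative structure)."""

    non_terminals: FrozenSet[str]            # N: symbols that must be expanded
    terminals: FrozenSet[str]                # T: symbols that appear in the final program
    productions: Dict[str, List[List[str]]]  # P: non-terminal -> alternative right-hand sides
    start: str                               # S: symbol the derivation begins from

    def validate(self) -> None:
        """Raise ValueError if the four components do not form a well-formed CFG."""
        if self.non_terminals & self.terminals:
            raise ValueError("N and T must be disjoint")
        if self.start not in self.non_terminals:
            raise ValueError("the start symbol S must be a non-terminal")
        symbols = self.non_terminals | self.terminals
        for lhs, alternatives in self.productions.items():
            if lhs not in self.non_terminals:
                raise ValueError(f"rule defined for {lhs!r}, which is not in N")
            for rhs in alternatives:
                unknown = [s for s in rhs if s not in symbols]
                if unknown:
                    raise ValueError(f"unknown symbols {unknown} in a rule for {lhs!r}")
        missing = self.non_terminals - set(self.productions)
        if missing:
            raise ValueError(f"non-terminals without rules: {sorted(missing)}")


# Usage: a toy grammar whose programs are non-empty strings of "a" and "b".
toy = CFG(
    non_terminals=frozenset({"<seq>", "<sym>"}),
    terminals=frozenset({"a", "b"}),
    productions={
        "<seq>": [["<sym>"], ["<sym>", "<seq>"]],
        "<sym>": [["a"], ["b"]],
    },
    start="<seq>",
)
toy.validate()  # passes: every symbol is declared and every non-terminal has rules
```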

3. Methodology and Experiment

- Automated Grammar Generation: We introduce a method for automatically generating a CFG for GE based on the input text.

- GE-based Feature Corruption Sequence Generation: Using the generated grammar and the input text, we evolve a feature corruption sequence that maximises the symmetric relevance gain (SRG).

- Feature Attribution based on Generated Sequence: We compute feature importance using the generated sequence and the probability drop associated with the corruption sequence.
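One way to turn an evolved corruption sequence into per-token scores is sketched below. It assumes, purely for illustration, that a token's importance is the drop in the model's confidence at the step where that token is corrupted; the function name and argument layout are hypothetical and not the EvoDropX implementation.

```python
from typing import List, Sequence


def importance_from_sequence(order: Sequence[int], probs: Sequence[float]) -> List[float]:
    """Score each token by the confidence drop observed when it is corrupted.

    order: token indices in the order they are corrupted (a permutation of 0..n-1).
    probs: probs[0] is the confidence on the clean input, and probs[k] is the
           confidence after the first k tokens of `order` have been corrupted.
    """
    n = len(order)
    if sorted(order) != list(range(n)):
        raise ValueError("order must be a permutation of token indices")
    if len(probs) != n + 1:
        raise ValueError("probs needs one entry per step plus the clean input")
    importance = [0.0] * n
    for step, token in enumerate(order):
        # Marginal drop attributable to corrupting `token` at this step.
        importance[token] = probs[step] - probs[step + 1]
    return importance


scores = importance_from_sequence([2, 0, 1], [0.9, 0.4, 0.3, 0.25])
```

Here token 2 receives the largest score (about 0.5), followed by token 0 (about 0.1) and token 1 (about 0.05). Because the drops telescope, the scores always sum to the total confidence drop between the clean and the fully corrupted input.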

3.1. GE-Based Feature Attribution Generation

3.1.1. Automated Grammar Construction

- Feature Selection: The <token_choice> non-terminal specifies which token (e.g., index 0–6 for a seven-token text) is selected for reordering.

- Movement Direction: The <direction> rule determines whether the chosen token shifts forward (later in the sequence) or backward (earlier in the sequence).

- Step Size: The <steps> non-terminal defines how many positions the token moves (e.g., 0–6 steps).
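The three rules above can be assembled automatically for any input length. The sketch below builds such a grammar as a Python dict and renders it in BNF. Only the non-terminals `<token_choice>`, `<direction>` and `<steps>` come from the text; the `<seq>` and `<move>` rules, the `;` and `,` separators and the function names are hypothetical layout choices, since the exact grammar of Listing 1 is not reproduced here.

```python
from typing import Dict, List

Grammar = Dict[str, List[List[str]]]


def build_grammar(n_tokens: int) -> Grammar:
    """Build a hypothetical move grammar for an input of n_tokens tokens."""
    if n_tokens < 1:
        raise ValueError("the input must contain at least one token")
    return {
        # A phenotype is one or more moves separated by ";" (hypothetical layout).
        "<seq>": [["<move>"], ["<move>", ";", "<seq>"]],
        "<move>": [["<token_choice>", ",", "<direction>", ",", "<steps>"]],
        # Which token to move: one alternative per token index 0..n-1.
        "<token_choice>": [[str(i)] for i in range(n_tokens)],
        # forward = later in the sequence, backward = earlier.
        "<direction>": [["forward"], ["backward"]],
        # How many positions to move: 0..n-1.
        "<steps>": [[str(s)] for s in range(n_tokens)],
    }


def to_bnf(grammar: Grammar) -> str:
    """Render the grammar in BNF, one rule per line."""
    return "\n".join(
        f"{lhs} ::= " + " | ".join(" ".join(alt) for alt in alternatives)
        for lhs, alternatives in grammar.items()
    )


print(to_bnf(build_grammar(6)))
```

For `n_tokens=6` this prints five rules, with `<token_choice>` and `<steps>` each ranging over `0 | 1 | 2 | 3 | 4 | 5`.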

Listing 1. Sample CFG for input of token length 6.
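A decoded phenotype still has to be turned into a corruption order. The sketch below applies a list of (token, direction, steps) moves to an initial order. It assumes a hypothetical string format `token,direction,steps` with moves separated by `;`, a left-to-right starting order, and clamping at the ends of the sequence; none of these details are confirmed by the text.

```python
from typing import List


def apply_moves(phenotype: str, n_tokens: int) -> List[int]:
    """Turn a decoded move string into a corruption order (hypothetical semantics)."""
    order = list(range(n_tokens))  # assumed starting order: left to right
    for move in phenotype.split(";"):
        token_str, direction, steps_str = (part.strip() for part in move.split(","))
        token, steps = int(token_str), int(steps_str)
        if direction not in ("forward", "backward"):
            raise ValueError(f"unknown direction {direction!r}")
        pos = order.index(token)
        offset = steps if direction == "forward" else -steps
        # Clamp so a token cannot move past either end of the sequence.
        new_pos = min(max(pos + offset, 0), n_tokens - 1)
        order.pop(pos)
        order.insert(new_pos, token)
    return order


print(apply_moves("2,backward,2;0,forward,5", n_tokens=4))  # [2, 1, 3, 0]
```

Moving a token rather than swapping two tokens keeps every intermediate result a valid permutation, so any sequence of moves yields a usable corruption order.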

3.1.2. Genotype to Corruption Sequence Mapping

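| Algorithm 1 Genotype-to-Phenotype Mapping in Grammatical Evolution | |
|---|---|
| Require: | Genotype $C = (c_0, c_1, \ldots, c_{n-1})$, grammar $G = \{N, T, P, S\}$, maximum number of wraps $w_{\max}$ |
| Ensure: | Phenotype $\phi$ |
| 1: | Initialise derivation $D \leftarrow S$ |
| 2: | Initialise codon index $i \leftarrow 0$ |
| 3: | Initialise wrap count $w \leftarrow 0$ |
| 4: | while a non-terminal exists in $D$ and $w \le w_{\max}$ do |
| 5: | Select leftmost non-terminal $X$ in $D$ |
| 6: | Let $R_X$ be the production rules for $X$ |
| 7: | Compute rule index $r \leftarrow c_i \bmod \lvert R_X \rvert$ |
| 8: | Replace $X$ in $D$ with the rule $R_X[r]$ |
| 9: | $i \leftarrow i + 1$ |
| 10: | if $i \ge n$ then |
| 11: | $i \leftarrow 0$ |
| 12: | $w \leftarrow w + 1$ |
| 13: | end if |
| 14: | end while |
| 15: | if no non-terminals remain in $D$ then |
| 16: | $\phi \leftarrow D$ |
| 17: | else |
| 18: | $\phi \leftarrow$ invalid |
| 19: | end if |
| 20: | return $\phi$ |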
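Algorithm 1 can be sketched in Python as follows. This is a minimal sketch under stated assumptions: the grammar is a dict mapping each non-terminal to its alternatives (each a list of symbols), any symbol that is not a key of the dict is a terminal, an invalid individual is returned as `None`, and `max_wraps` is a hypothetical name for the wrap budget.

```python
from typing import Dict, List, Optional, Sequence

Grammar = Dict[str, List[List[str]]]  # non-terminal -> alternative right-hand sides


def leftmost_non_terminal(derivation: List[str], grammar: Grammar) -> Optional[int]:
    """Index of the leftmost symbol that still needs expanding, or None."""
    for k, symbol in enumerate(derivation):
        if symbol in grammar:
            return k
    return None


def map_genotype(genotype: Sequence[int], grammar: Grammar, start: str,
                 max_wraps: int = 2) -> Optional[List[str]]:
    """Genotype-to-phenotype mapping with wrapping (cf. Algorithm 1)."""
    if not genotype:
        raise ValueError("genotype must contain at least one codon")
    derivation = [start]  # D <- S
    i = 0                 # codon index
    wraps = 0             # wrap count
    pos = leftmost_non_terminal(derivation, grammar)
    while pos is not None and wraps <= max_wraps:
        rules = grammar[derivation[pos]]        # production rules for X
        rule = rules[genotype[i] % len(rules)]  # rule index = codon mod |rules|
        derivation[pos:pos + 1] = rule          # replace X with the chosen rule
        i += 1
        if i >= len(genotype):                  # genome exhausted: wrap around
            i = 0
            wraps += 1
        pos = leftmost_non_terminal(derivation, grammar)
    # Valid only if every non-terminal was expanded within the wrap budget.
    return derivation if pos is None else None


toy_grammar: Grammar = {
    "<seq>": [["<sym>"], ["<sym>", ";", "<seq>"]],
    "<sym>": [["a"], ["b"]],
}
print(map_genotype([1, 0, 0, 1], toy_grammar, "<seq>"))  # ['a', ';', 'b']
```

A genome such as `[1]` keeps choosing the recursive `<seq>` alternative, exhausts the wrap budget and is reported as invalid (`None`).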

3.1.3. Optimisation Objective

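- $k_{\mathrm{MIF}}$ and $k_{\mathrm{LIF}}$ denote the fractions of features corrupted in descending and ascending order of relevance, respectively.

- $p(k_{\mathrm{MIF}})$ and $p(k_{\mathrm{LIF}})$ represent the model's output probability after corrupting the first $k_{\mathrm{MIF}}$ and $k_{\mathrm{LIF}}$ of the features, respectively.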
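The objective can be illustrated with a small fitness function. The sketch assumes that the LIF order is simply the evolved order reversed and that each curve's regularised area is the mean confidence over the N corruption steps; both are assumptions. The reported results are consistent with SRG being the LIF area minus the MIF area (e.g., 0.95 − 0.19 = 0.76 for EvoDropX on IMDb with BERT). The `confidence` callable is a hypothetical stand-in for querying the transformer.

```python
from typing import Callable, FrozenSet, List, Sequence

# confidence(corrupted) -> model confidence in the originally predicted class
# when the tokens in `corrupted` are corrupted (hypothetical interface).
ConfidenceFn = Callable[[FrozenSet[int]], float]


def corruption_curve(order: Sequence[int], confidence: ConfidenceFn) -> List[float]:
    """Confidence after corrupting the first 1, 2, ..., N tokens of `order`."""
    curve: List[float] = []
    corrupted = set()
    for token in order:
        corrupted.add(token)
        curve.append(confidence(frozenset(corrupted)))
    return curve


def regularised_area(curve: Sequence[float]) -> float:
    """Area under a corruption curve, normalised by sentence length N."""
    return sum(curve) / len(curve)


def srg_fitness(order: Sequence[int], confidence: ConfidenceFn) -> float:
    """SRG-style fitness: LIF area minus MIF area (LIF order assumed = reversed order)."""
    mif = regularised_area(corruption_curve(order, confidence))
    lif = regularised_area(corruption_curve(list(reversed(order)), confidence))
    return lif - mif


# Toy additive "model": each token carries a fixed share of the confidence.
weights = [0.5, 0.3, 0.2]


def toy_confidence(corrupted: FrozenSet[int]) -> float:
    return 1.0 - sum(weights[i] for i in corrupted)


print(srg_fitness([0, 1, 2], toy_confidence))  # ≈ 0.2
print(srg_fitness([2, 1, 0], toy_confidence))  # ≈ -0.2
```

Corrupting the heaviest token first gives the larger fitness, which is the ordering GE is asked to discover.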

3.1.4. Feature Importance Calculation

3.1.5. Evaluation

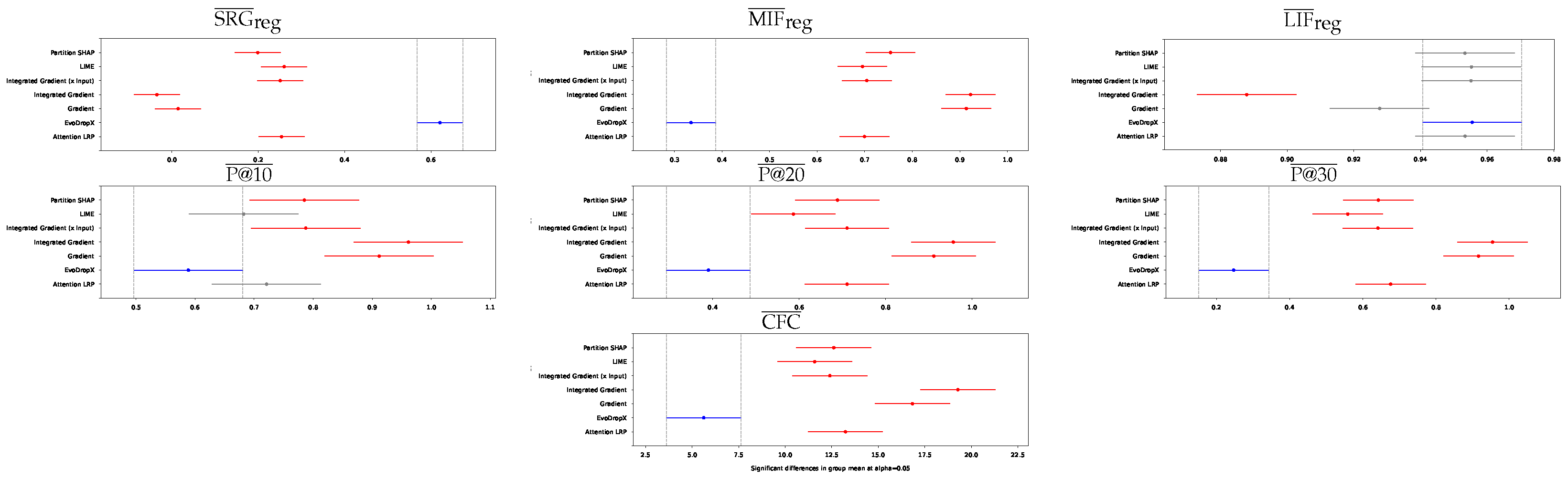

- Regularised SRG: This is the primary faithfulness metric used in our study. It quantifies the area between the MIF and LIF curves, normalised by sentence length. Higher values indicate that the explanation method effectively distinguishes between important and unimportant features.

- Regularised MIF: This metric measures the area under the MIF curve, normalised by sentence length, where the curve values denote the model's confidence in the originally predicted class as the most relevant features are progressively corrupted. Lower values indicate that corrupting the features ranked most important causes a rapid drop in confidence, i.e., the explanation identifies the features the prediction depends on.

- Regularised LIF: This metric computes the area under the LIF curve, normalised by sentence length. Higher values suggest that removing the least important features has minimal impact on model predictions.

- Performance at K% Token Corruption (p@K): We evaluate p@10, p@20, and p@30, which represent the model's confidence after corrupting a specified percentage of tokens ranked as most important by the explanation method. For a given input with $N$ tokens and corruption percentage $K$, we compute $p@K = f_c\big(\tilde{x}^{(K)}\big)$, where $\tilde{x}^{(K)}$ denotes the input after corrupting the top $K\%$ of the $N$ tokens according to the explanation method's importance ranking, and $f_c(\cdot)$ represents the model's confidence score for the originally predicted class $c$. Lower p@K values indicate better explanation quality.

- Counterfactual conciseness (CFC): This metric measures the average number of token corruptions required to flip the model’s prediction to a different class. Lower CFC values indicate more concise explanations, as they identify the minimal set of features whose perturbation can alter the model’s decision.
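The per-input computations behind p@K and CFC can be sketched as follows. The rounding of K·N/100 up to a whole token, the `None` result for inputs whose prediction never flips, and the `confidence`/`predict` callables are assumptions for illustration; the dataset-level CFC would be the mean of the per-input counts.

```python
from typing import Callable, FrozenSet, Optional, Sequence


def p_at_k(order: Sequence[int], confidence: Callable[[FrozenSet[int]], float],
           k_percent: int) -> float:
    """Confidence after corrupting the top K% of tokens in `order` (most important first)."""
    n = len(order)
    m = (k_percent * n + 99) // 100  # ceil(K * N / 100) in integer arithmetic (assumed rounding)
    return confidence(frozenset(order[:m]))


def counterfactual_conciseness(order: Sequence[int], predict: Callable[[FrozenSet[int]], int],
                               original_label: int) -> Optional[int]:
    """Number of corruptions, following `order`, until the predicted class changes."""
    corrupted = set()
    for count, token in enumerate(order, start=1):
        corrupted.add(token)
        if predict(frozenset(corrupted)) != original_label:
            return count
    return None  # the prediction never flipped


# Toy model: confidence falls by 0.1 per corrupted token; class 1 while confidence > 0.5.
def toy_confidence(corrupted: FrozenSet[int]) -> float:
    return 1.0 - len(corrupted) / 10


def toy_predict(corrupted: FrozenSet[int]) -> int:
    return int(toy_confidence(corrupted) > 0.5)


order = list(range(10))
print(p_at_k(order, toy_confidence, 30))               # ≈ 0.7
print(counterfactual_conciseness(order, toy_predict, 1))  # 5
```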

3.1.6. Extending EvoDropX

3.2. Experiment Details

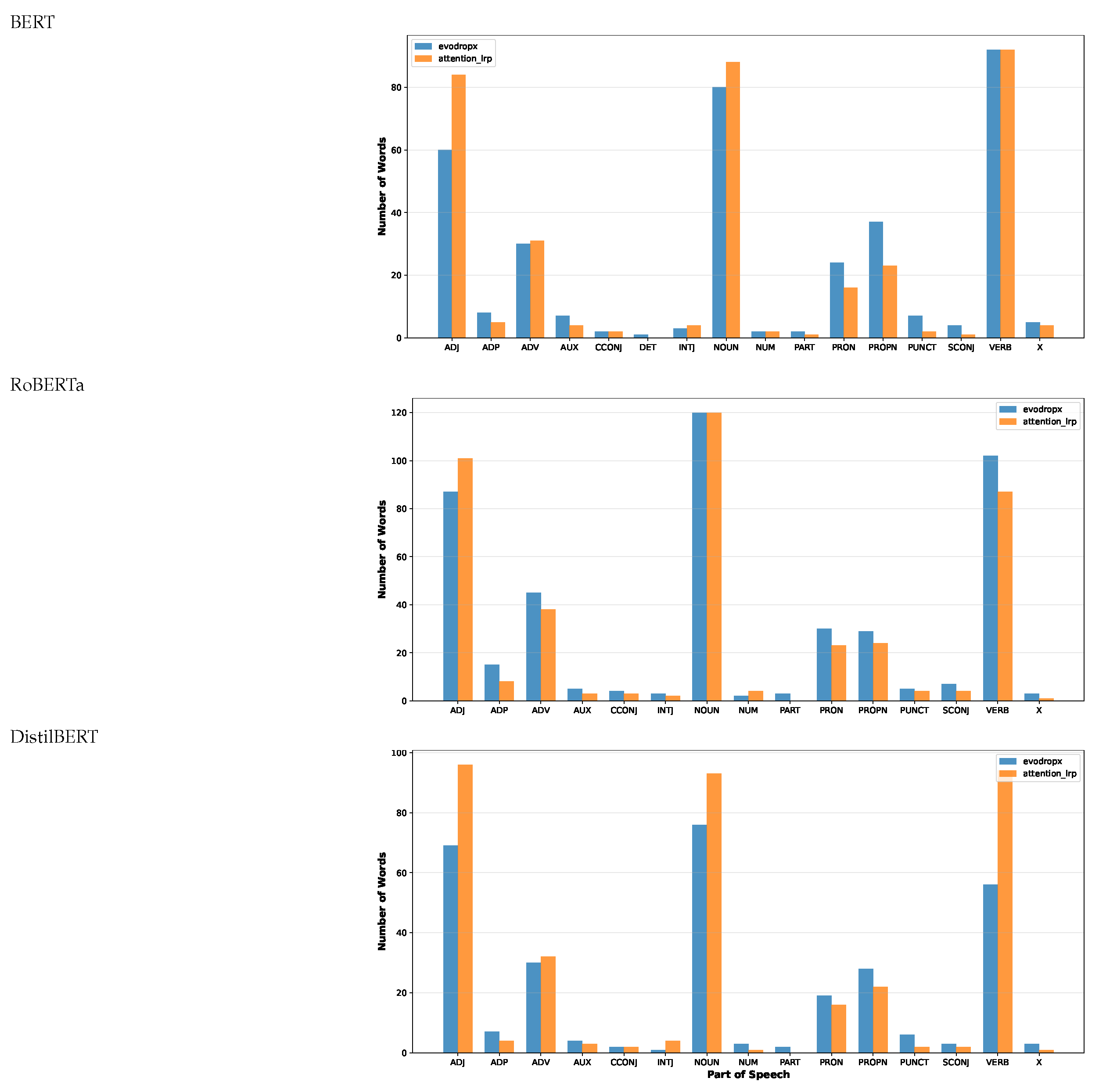

4. Results

5. Conclusions, Limitations and Future Scope

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Appendix A

Appendix A.1

Appendix A.2

References

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention is all you need. Adv. Neural Inf. Process. Syst. 2017, 30.

- Rudin, C. Stop explaining black box machine learning models for high stakes decisions and use interpretable models instead. Nat. Mach. Intell. 2019, 1, 206–215.

- Lipton, Z.C. The mythos of model interpretability: In machine learning, the concept of interpretability is both important and slippery. Queue 2018, 16, 31–57.

- Murdoch, W.J.; Singh, C.; Kumbier, K.; Abbasi-Asl, R.; Yu, B. Interpretable machine learning: Definitions, methods, and applications. arXiv 2019, arXiv:1901.04592.

- Lundberg, S.M.; Lee, S.I. A unified approach to interpreting model predictions. Adv. Neural Inf. Process. Syst. 2017, 30.

- Montavon, G.; Samek, W.; Müller, K.R. Methods for interpreting and understanding deep neural networks. Digit. Signal Process. 2018, 73, 1–15.

- Samek, W.; Montavon, G.; Vedaldi, A.; Hansen, L.K.; Müller, K.R. Explainable AI: Interpreting, Explaining and Visualizing Deep Learning; Springer Nature: Cham, Switzerland, 2019; Volume 11700.

- Covert, I.; Lundberg, S.; Lee, S.I. Explaining by removing: A unified framework for model explanation. J. Mach. Learn. Res. 2021, 22, 1–90.

- Samek, W.; Montavon, G.; Lapuschkin, S.; Anders, C.J.; Müller, K.R. Explaining deep neural networks and beyond: A review of methods and applications. Proc. IEEE 2021, 109, 247–278.

- EU Artificial Intelligence Act. The EU Artificial Intelligence Act, 2024. Available online: https://artificialintelligenceact.eu/ (accessed on 26 February 2026).

- Ribeiro, M.T.; Singh, S.; Guestrin, C. “Why should I trust you?” Explaining the predictions of any classifier. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, San Francisco, CA, USA, 13–17 August 2016; ACM: New York, NY, USA, 2016; pp. 1135–1144.

- Simonyan, K.; Vedaldi, A.; Zisserman, A. Deep inside convolutional networks: Visualising image classification models and saliency maps. arXiv 2013, arXiv:1312.6034.

- Bach, S.; Binder, A.; Montavon, G.; Klauschen, F.; Müller, K.R.; Samek, W. On pixel-wise explanations for non-linear classifier decisions by layer-wise relevance propagation. PLoS ONE 2015, 10, e0130140.

- Srinivas, S.; Fleuret, F. Rethinking the role of gradient-based attribution methods for model interpretability. arXiv 2020, arXiv:2006.09128.

- Ghorbani, A.; Abid, A.; Zou, J. Interpretation of neural networks is fragile. In Proceedings of the AAAI Conference on Artificial Intelligence, Honolulu, HI, USA, 27 January–1 February 2019; AAAI Press: Washington, DC, USA, 2019; Volume 33, pp. 3681–3688.

- Kumar, I.E.; Venkatasubramanian, S.; Scheidegger, C.; Friedler, S. Problems with Shapley-value-based explanations as feature importance measures. In Proceedings of the International Conference on Machine Learning, Virtual, 13–18 July 2020; JMLR: New York, NY, USA, 2020; pp. 5491–5500.

- Huang, X.; Marques-Silva, J. On the failings of Shapley values for explainability. Int. J. Approx. Reason. 2024, 171, 109112.

- Doshi-Velez, F.; Kim, B. Towards a rigorous science of interpretable machine learning. arXiv 2017, arXiv:1702.08608.

- Wilming, R.; Kieslich, L.; Clark, B.; Haufe, S. Theoretical behavior of XAI methods in the presence of suppressor variables. In Proceedings of the International Conference on Machine Learning, Honolulu, HI, USA, 23–29 July 2023; JMLR: New York, NY, USA, 2023; pp. 37091–37107.

- Molnar, C.; König, G.; Herbinger, J.; Freiesleben, T.; Dandl, S.; Scholbeck, C.A.; Casalicchio, G.; Grosse-Wentrup, M.; Bischl, B. General pitfalls of model-agnostic interpretation methods for machine learning models. In Proceedings of the International Workshop on Extending Explainable AI Beyond Deep Models and Classifiers, Vienna, Austria, 18 July 2020; Springer: Cham, Switzerland, 2020; pp. 39–68.

- Freiesleben, T.; König, G. Dear XAI community, we need to talk! Fundamental misconceptions in current XAI research. In Proceedings of the World Conference on Explainable Artificial Intelligence, Lisbon, Portugal, 26–28 July 2023; Springer: Cham, Switzerland, 2023; pp. 48–65.

- Krishna, S.; Han, T.; Gu, A.; Wu, S.; Jabbari, S.; Lakkaraju, H. The disagreement problem in explainable machine learning: A practitioner’s perspective. arXiv 2022, arXiv:2202.01602.

- Blücher, S.; Vielhaben, J.; Strodthoff, N. Decoupling pixel flipping and occlusion strategy for consistent XAI benchmarks. arXiv 2024, arXiv:2401.06654.

- Ryan, C.; Collins, J.J.; O’Neill, M. Grammatical evolution: Evolving programs for an arbitrary language. In Proceedings of the Genetic Programming: First European Workshop, EuroGP’98, Paris, France, 14–15 April 1998; Proceedings 1; Springer: Berlin/Heidelberg, Germany, 1998; pp. 83–96.

- Li, H.; Xu, Z.; Taylor, G.; Studer, C.; Goldstein, T. Visualizing the loss landscape of neural nets. Adv. Neural Inf. Process. Syst. 2018, 31.

- Fantozzi, P.; Naldi, M. The explainability of transformers: Current status and directions. Computers 2024, 13, 92.

- Nanda, N.; Chan, L.; Lieberum, T.; Smith, J.; Steinhardt, J. Progress measures for grokking via mechanistic interpretability. arXiv 2023, arXiv:2301.05217.

- Abnar, S.; Zuidema, W. Quantifying Attention Flow in Transformers. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, Online, 5–10 July 2020; Jurafsky, D., Chai, J., Schluter, N., Tetreault, J., Eds.; Association for Computational Linguistics: Stroudsburg, PA, USA, 2020; pp. 4190–4197.

- Jain, S.; Wallace, B.C. Attention is not explanation. arXiv 2019, arXiv:1902.10186.

- Bibal, A.; Cardon, R.; Alfter, D.; Wilkens, R.; Wang, X.; François, T.; Watrin, P. Is attention explanation? An introduction to the debate. In Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), Dublin, Ireland, 22–27 May 2022; Association for Computational Linguistics: Stroudsburg, PA, USA, 2022; pp. 3889–3900.

- Sundararajan, M.; Taly, A.; Yan, Q. Axiomatic attribution for deep networks. In Proceedings of the International Conference on Machine Learning, PMLR; JMLR: New York, NY, USA, 2017; pp. 3319–3328.

- Selvaraju, R.R.; Cogswell, M.; Das, A.; Vedantam, R.; Parikh, D.; Batra, D. Grad-cam: Visual explanations from deep networks via gradient-based localization. In Proceedings of the IEEE International Conference on Computer Vision, Venice, Italy, 22–29 October 2017; IEEE: Piscataway, NJ, USA, 2017; pp. 618–626.

- Nauta, M.; Trienes, J.; Pathak, S.; Nguyen, E.; Peters, M.; Schmitt, Y.; Schlötterer, J.; Van Keulen, M.; Seifert, C. From anecdotal evidence to quantitative evaluation methods: A systematic review on evaluating explainable ai. ACM Comput. Surv. 2023, 55, 1–42.

- Rieger, L.; Hansen, L.K. Irof: A low resource evaluation metric for explanation methods. arXiv 2020, arXiv:2003.08747.

- Gevaert, A.; Rousseau, A.J.; Becker, T.; Valkenborg, D.; De Bie, T.; Saeys, Y. Evaluating feature attribution methods in the image domain. Mach. Learn. 2024, 113, 6019–6064.

- Li, X.; Du, M.; Chen, J.; Chai, Y.; Lakkaraju, H.; Xiong, H. : A Unified XAI Benchmark for Faithfulness Evaluation of Feature Attribution Methods across Metrics, Modalities and Models. Adv. Neural Inf. Process. Syst. 2023, 36, 1630–1643.

- Hedström, A.; Weber, L.; Krakowczyk, D.; Bareeva, D.; Motzkus, F.; Samek, W.; Lapuschkin, S.; Höhne, M.M.C. Quantus: An explainable ai toolkit for responsible evaluation of neural network explanations and beyond. J. Mach. Learn. Res. 2023, 24, 1–11.

- Adebayo, J.; Gilmer, J.; Muelly, M.; Goodfellow, I.; Hardt, M.; Kim, B. Sanity checks for saliency maps. Adv. Neural Inf. Process. Syst. 2018, 31.

- Binder, A.; Weber, L.; Lapuschkin, S.; Montavon, G.; Müller, K.R.; Samek, W. Shortcomings of top-down randomization-based sanity checks for evaluations of deep neural network explanations. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Vancouver, BC, Canada, 17–24 June 2023; IEEE: Piscataway, NJ, USA, 2023; pp. 16143–16152.

- Kindermans, P.J.; Hooker, S.; Adebayo, J.; Alber, M.; Schütt, K.T.; Dähne, S.; Erhan, D.; Kim, B. The (un)reliability of saliency methods. In Explainable AI: Interpreting, Explaining and Visualizing Deep Learning; Springer Nature: Cham, Switzerland, 2019; pp. 267–280.

- Nguyen, A.P.; Martínez, M.R. On quantitative aspects of model interpretability. arXiv 2020, arXiv:2007.07584.

- Arras, L.; Osman, A.; Samek, W. CLEVR-XAI: A benchmark dataset for the ground truth evaluation of neural network explanations. Inf. Fusion 2022, 81, 14–40.

- Deyoung, J.; Jain, S.; Lehman, E.; Rajani, N.; Socher, R.; Wallace, B. A benchmark to evaluate rationalized nlp models. Comput. Lang. 2020.

- Budding, C.; Eitel, F.; Ritter, K.; Haufe, S. Evaluating saliency methods on artificial data with different background types. arXiv 2021, arXiv:2112.04882.

- Alvarez Melis, D.; Jaakkola, T. Towards robust interpretability with self-explaining neural networks. Adv. Neural Inf. Process. Syst. 2018, 31.

- Zhang, J.; Bargal, S.A.; Lin, Z.; Brandt, J.; Shen, X.; Sclaroff, S. Top-down neural attention by excitation backprop. Int. J. Comput. Vis. 2018, 126, 1084–1102.

- Bhatt, U.; Weller, A.; Moura, J.M. Evaluating and aggregating feature-based model explanations. arXiv 2020, arXiv:2005.00631.

- Sixt, L.; Granz, M.; Landgraf, T. When explanations lie: Why many modified bp attributions fail. In Proceedings of the International Conference on Machine Learning, Virtual, 13–18 July 2020; JMLR: New York, NY, USA, 2020; pp. 9046–9057.

- Arya, V.; Bellamy, R.K.; Chen, P.Y.; Dhurandhar, A.; Hind, M.; Hoffman, S.C.; Houde, S.; Liao, Q.V.; Luss, R.; Mojsilović, A.; et al. One explanation does not fit all: A toolkit and taxonomy of ai explainability techniques. arXiv 2019, arXiv:1909.03012.

- Samek, W.; Binder, A.; Montavon, G.; Lapuschkin, S.; Müller, K.R. Evaluating the visualization of what a deep neural network has learned. IEEE Trans. Neural Netw. Learn. Syst. 2016, 28, 2660–2673.

- Chang, C.H.; Creager, E.; Goldenberg, A.; Duvenaud, D. Explaining image classifiers by counterfactual generation. arXiv 2018, arXiv:1807.08024.

- Brocki, L.; Chung, N.C. Feature perturbation augmentation for reliable evaluation of importance estimators in neural networks. Pattern Recognit. Lett. 2023, 176, 131–139.

- Koza, J.R. On the Programming of Computers by Means of Natural Selection; MIT Press: Cambridge, MA, USA, 1993.

- Droste, S.; Jansen, T.; Wegener, I. On the analysis of the (1 + 1) evolutionary algorithm. Theor. Comput. Sci. 2002, 276, 51–81.

- Antipov, D.; Doerr, B.; Fang, J.; Hetet, T. A tight runtime analysis for the (μ + λ) EA. In Proceedings of the Genetic and Evolutionary Computation Conference, Kyoto, Japan, 15–19 July 2018; ACM: New York, NY, USA, 2018; pp. 1459–1466.

- O’Neill, M.; Ryan, C. Grammatical evolution. IEEE Trans. Evol. Comput. 2001, 5, 349–358.

- McKay, R.I.; Hoai, N.X.; Whigham, P.A.; Shan, Y.; O’Neill, M. Grammar-based genetic programming: A survey. Genet. Program. Evolvable Mach. 2010, 11, 365–396.

- Achtibat, R.; Hatefi, S.M.V.; Dreyer, M.; Jain, A.; Wiegand, T.; Lapuschkin, S.; Samek, W. Attnlrp: Attention-aware layer-wise relevance propagation for transformers. arXiv 2024, arXiv:2402.05602.

- Devlin, J.; Chang, M.W.; Lee, K.; Toutanova, K. BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long and Short Papers), Minneapolis, MN, USA, 2–7 June 2019; Burstein, J., Doran, C., Solorio, T., Eds.; Association for Computational Linguistics: Stroudsburg, PA, USA, 2019; pp. 4171–4186.

- Zhuang, L.; Wayne, L.; Ya, S.; Jun, Z. A Robustly Optimized BERT Pre-training Approach with Post-training. In Proceedings of the 20th Chinese National Conference on Computational Linguistics, Huhhot, China, 13–15 August 2021; Li, S., Sun, M., Liu, Y., Wu, H., Liu, K., Che, W., He, S., Rao, G., Eds.; Association for Computational Linguistics: Stroudsburg, PA, USA, 2021; pp. 1218–1227.

- Sanh, V.; Debut, L.; Chaumond, J.; Wolf, T. DistilBERT, a distilled version of BERT: Smaller, faster, cheaper and lighter. arXiv 2019, arXiv:1910.01108.

- Maas, A.; Daly, R.E.; Pham, P.T.; Huang, D.; Ng, A.Y.; Potts, C. Learning word vectors for sentiment analysis. In Proceedings of the 49th Annual Meeting of the Association for Computational Linguistics: Human Language Technologies, Portland, Oregon, 19–24 June 2011; Association for Computational Linguistics: Stroudsburg, PA, USA, 2011; pp. 142–150.

- Socher, R.; Perelygin, A.; Wu, J.; Chuang, J.; Manning, C.D.; Ng, A.; Potts, C. Recursive Deep Models for Semantic Compositionality Over a Sentiment Treebank. In Proceedings of the 2013 Conference on Empirical Methods in Natural Language Processing, Seattle, WA, USA, 18–21 October 2013; Association for Computational Linguistics: Stroudsburg, PA, USA, 2013; pp. 1631–1642.

- Muennighoff, N.; Tazi, N.; Magne, L.; Reimers, N. MTEB: Massive Text Embedding Benchmark. arXiv 2022, arXiv:2210.07316.

- McAuley, J.; Leskovec, J. Hidden factors and hidden topics: Understanding rating dimensions with review text. In RecSys ’13: Proceedings of the 7th ACM Conference on Recommender Systems, Hong Kong, China, 12–16 October 2013; ACM: New York, NY, USA, 2013.

- Enevoldsen, K.; Chung, I.; Kerboua, I.; Kardos, M.; Mathur, A.; Stap, D.; Gala, J.; Siblini, W.; Krzemiński, D.; Winata, G.I.; et al. MMTEB: Massive Multilingual Text Embedding Benchmark. arXiv 2025, arXiv:2502.13595.

- Hochberg, Y.; Tamhane, A.C. Multiple Comparison Procedures; John Wiley & Sons, Inc.: Hoboken, NJ, USA, 1987.

| Model | IMDb | Amazon Polarity | SST-2 |
|---|---|---|---|
| BERT | | | |
| RoBERTa | | | |
| DistilBERT | | | |

| Evolution Parameter | Value |
|---|---|
| Population size | 400 |
| Maximum initial tree depth | 35 |
| Minimum initial tree depth | 4 |
| Maximum number of generations | 200 |
| Probability of crossover | 0.8 |
| Probability of mutation | 0.01 |
| Elitism size | 1 |
| Hall of fame size | 1 |
| Maximum tree depth | 35 |
| Number of runs (different seeds) | 3 |
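The evolutionary parameters above can be read as the configuration of a generational loop. The sketch below is a generic genetic algorithm over integer codon genomes, not the authors' GE setup. Only the population size, number of generations, crossover and mutation probabilities and elitism size come from the table; tournament selection (size 3), one-point crossover, per-codon random-reset mutation, uniform random initialisation (the tree-depth-based initialisation is not modelled), the 0–255 codon range and the genome length are all assumptions.

```python
import random
from typing import Callable, List, Sequence, Tuple

Genome = List[int]


def tournament(population: Sequence[Genome], scores: Sequence[float],
               rng: random.Random, size: int) -> Genome:
    """Return a copy of the best of `size` randomly drawn individuals."""
    contenders = rng.sample(range(len(population)), size)
    return list(population[max(contenders, key=lambda i: scores[i])])


def evolve(fitness: Callable[[Genome], float], genome_length: int = 50,
           pop_size: int = 400, generations: int = 200, p_crossover: float = 0.8,
           p_mutation: float = 0.01, elite_size: int = 1, tournament_size: int = 3,
           codon_max: int = 255, seed: int = 0) -> Tuple[Genome, float]:
    """Generational GA (maximising `fitness`); genome_length must be at least 2."""
    rng = random.Random(seed)
    population = [[rng.randint(0, codon_max) for _ in range(genome_length)]
                  for _ in range(pop_size)]
    scores = [fitness(g) for g in population]
    for _ in range(generations):
        ranked = sorted(range(pop_size), key=lambda i: scores[i], reverse=True)
        offspring = [list(population[i]) for i in ranked[:elite_size]]  # elitism
        while len(offspring) < pop_size:
            a = tournament(population, scores, rng, tournament_size)
            b = tournament(population, scores, rng, tournament_size)
            if rng.random() < p_crossover:  # one-point crossover
                cut = rng.randint(1, genome_length - 1)
                a, b = a[:cut] + b[cut:], b[:cut] + a[cut:]
            for child in (a, b):
                for j in range(genome_length):  # per-codon random-reset mutation
                    if rng.random() < p_mutation:
                        child[j] = rng.randint(0, codon_max)
                if len(offspring) < pop_size:
                    offspring.append(child)
        population = offspring
        scores = [fitness(g) for g in population]
    best = max(range(pop_size), key=lambda i: scores[i])
    return population[best], scores[best]
```

In an EvoDropX-style setting, `fitness` would decode a genome through a GE mapper and score the resulting corruption order by SRG; with elitism the best score never decreases across generations, which plays the role of a hall of fame of size 1.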

| Dataset | Model | Metric | EvoDropX | AttnLRP | SHAP | LIME | Gradient | IG | IG × Input | p-Value |
|---|---|---|---|---|---|---|---|---|---|---|
| IMDb | BERT | SRG ↑ | 0.76 ± 0.14 | 0.39 ± 0.25 | 0.30 ± 0.25 | 0.44 ± 0.23 | 0.05 ± 0.14 | 0.01 ± 0.16 | 0.41 ± 0.24 | <1 × 10⁻³ |
| | | MIF ↓ | 0.19 ± 0.14 | 0.51 ± 0.25 | 0.58 ± 0.26 | 0.44 ± 0.23 | 0.82 ± 0.11 | 0.79 ± 0.15 | 0.50 ± 0.24 | <1 × 10⁻³ |
| | | LIF ↑ | 0.95 ± 0.01 | 0.90 ± 0.03 | 0.88 ± 0.05 | 0.88 ± 0.05 | 0.87 ± 0.12 | 0.80 ± 0.13 | 0.91 ± 0.03 | <1 × 10⁻³ |
| | | p@10 ↓ | 0.55 ± 0.47 | 0.77 ± 0.39 | 0.82 ± 0.37 | 0.72 ± 0.43 | 0.95 ± 0.19 | 0.97 ± 0.16 | 0.80 ± 0.37 | 7 × 10⁻² |
| | | p@20 ↓ | 0.28 ± 0.43 | 0.65 ± 0.44 | 0.70 ± 0.43 | 0.49 ± 0.46 | 0.87 ± 0.29 | 0.94 ± 0.21 | 0.61 ± 0.45 | 2 × 10⁻² |
| | | p@30 ↓ | 0.18 ± 0.34 | 0.58 ± 0.42 | 0.63 ± 0.45 | 0.36 ± 0.43 | 0.87 ± 0.24 | 0.91 ± 0.26 | 0.52 ± 0.44 | 17 × 10⁻² |
| | | CFC ↓ | 9.2 ± 7.0 | 20.3 ± 15.5 | 25.2 ± 18.7 | 14.4 ± 12.1 | 38.8 ± 20.0 | 39.6 ± 16.5 | 18.1 ± 14.4 | 37 × 10⁻² |
| | RoBERTa | SRG ↑ | 0.83 ± 0.12 | 0.54 ± 0.27 | 0.58 ± 0.24 | 0.68 ± 0.25 | 0.30 ± 0.22 | 0.04 ± 0.29 | 0.61 ± 0.25 | <1 × 10⁻³ |
| | | MIF ↓ | 0.14 ± 0.13 | 0.42 ± 0.27 | 0.37 ± 0.24 | 0.29 ± 0.25 | 0.62 ± 0.21 | 0.77 ± 0.24 | 0.36 ± 0.25 | <1 × 10⁻³ |
| | | LIF ↑ | 0.97 ± 0.00 | 0.97 ± 0.01 | 0.95 ± 0.05 | 0.96 ± 0.01 | 0.91 ± 0.12 | 0.81 ± 0.20 | 0.97 ± 0.01 | 99 × 10⁻² |
| | | p@10 ↓ | 0.51 ± 0.49 | 0.74 ± 0.40 | 0.80 ± 0.39 | 0.60 ± 0.48 | 0.90 ± 0.26 | 0.94 ± 0.21 | 0.79 ± 0.38 | 73 × 10⁻² |
| | | p@20 ↓ | 0.20 ± 0.38 | 0.60 ± 0.46 | 0.69 ± 0.45 | 0.49 ± 0.48 | 0.79 ± 0.37 | 0.92 ± 0.26 | 0.55 ± 0.46 | <1 × 10⁻³ |
| | | p@30 ↓ | 0.08 ± 0.26 | 0.45 ± 0.46 | 0.48 ± 0.47 | 0.34 ± 0.44 | 0.73 ± 0.41 | 0.84 ± 0.33 | 0.41 ± 0.44 | <1 × 10⁻³ |
| | | CFC ↓ | 7.7 ± 6.5 | 18.8 ± 15.9 | 17.1 ± 11.9 | 13.9 ± 12.8 | 24.8 ± 16.2 | 37.2 ± 18.5 | 16.2 ± 12.3 | 7 × 10⁻² |
| | DistilBERT | SRG ↑ | 0.68 ± 0.11 | 0.53 ± 0.16 | 0.53 ± 0.16 | 0.54 ± 0.14 | 0.32 ± 0.15 | 0.01 ± 0.19 | 0.55 ± 0.14 | <1 × 10⁻³ |
| | | MIF ↓ | 0.20 ± 0.11 | 0.31 ± 0.16 | 0.31 ± 0.16 | 0.29 ± 0.14 | 0.49 ± 0.14 | 0.62 ± 0.17 | 0.30 ± 0.14 | <1 × 10⁻³ |
| | | LIF ↑ | 0.88 ± 0.04 | 0.84 ± 0.06 | 0.84 ± 0.07 | 0.83 ± 0.06 | 0.82 ± 0.11 | 0.63 ± 0.18 | 0.85 ± 0.06 | 41 × 10⁻² |
| | | p@10 ↓ | 0.45 ± 0.38 | 0.60 ± 0.35 | 0.61 ± 0.37 | 0.55 ± 0.37 | 0.72 ± 0.29 | 0.89 ± 0.20 | 0.62 ± 0.36 | 44 × 10⁻² |
| | | p@20 ↓ | 0.18 ± 0.25 | 0.39 ± 0.33 | 0.35 ± 0.33 | 0.28 ± 0.31 | 0.53 ± 0.31 | 0.79 ± 0.29 | 0.39 ± 0.34 | 37 × 10⁻² |
| | | p@30 ↓ | 0.09 ± 0.13 | 0.29 ± 0.28 | 0.24 ± 0.26 | 0.20 ± 0.24 | 0.46 ± 0.28 | 0.72 ± 0.32 | 0.25 ± 0.27 | 9 × 10⁻² |
| | | CFC ↓ | 6.5 ± 4.4 | 10.3 ± 7.7 | 10.4 ± 8.0 | 8.7 ± 6.9 | 14.6 ± 13.1 | 29.0 ± 16.1 | 10.5 ± 7.4 | 79 × 10⁻² |
| SST-2 | BERT | SRG ↑ | 0.76 ± 0.12 | 0.27 ± 0.26 | 0.33 ± 0.23 | 0.39 ± 0.23 | 0.17 ± 0.18 | 0.02 ± 0.22 | 0.32 ± 0.23 | <1 × 10⁻³ |
| | | MIF ↓ | 0.19 ± 0.13 | 0.66 ± 0.25 | 0.59 ± 0.23 | 0.54 ± 0.23 | 0.75 ± 0.18 | 0.84 ± 0.17 | 0.61 ± 0.23 | <1 × 10⁻³ |
| | | LIF ↑ | 0.95 ± 0.01 | 0.93 ± 0.05 | 0.92 ± 0.05 | 0.93 ± 0.03 | 0.92 ± 0.07 | 0.85 ± 0.12 | 0.93 ± 0.04 | 22 × 10⁻² |
| | | p@10 ↓ | 0.47 ± 0.44 | 0.82 ± 0.33 | 0.83 ± 0.34 | 0.77 ± 0.37 | 0.92 ± 0.22 | 0.91 ± 0.24 | 0.81 ± 0.35 | <1 × 10⁻³ |
| | | p@20 ↓ | 0.26 ± 0.38 | 0.74 ± 0.35 | 0.69 ± 0.43 | 0.58 ± 0.44 | 0.83 ± 0.31 | 0.89 ± 0.25 | 0.71 ± 0.40 | <1 × 10⁻³ |
| | | p@30 ↓ | 0.13 ± 0.25 | 0.66 ± 0.40 | 0.56 ± 0.45 | 0.52 ± 0.43 | 0.76 ± 0.35 | 0.89 ± 0.25 | 0.64 ± 0.41 | <1 × 10⁻³ |
| | | CFC ↓ | 5.1 ± 3.4 | 16.4 ± 11.7 | 14.2 ± 10.1 | 11.7 ± 9.2 | 17.6 ± 11.2 | 24.1 ± 10.1 | 14.0 ± 9.8 | <1 × 10⁻³ |
| | RoBERTa | SRG ↑ | 0.81 ± 0.13 | 0.44 ± 0.29 | 0.37 ± 0.31 | 0.57 ± 0.27 | 0.10 ± 0.20 | 0.00 ± 0.21 | 0.53 ± 0.30 | <1 × 10⁻³ |
| | | MIF ↓ | 0.16 ± 0.13 | 0.53 ± 0.29 | 0.59 ± 0.31 | 0.39 ± 0.27 | 0.84 ± 0.17 | 0.88 ± 0.17 | 0.43 ± 0.31 | <1 × 10⁻³ |
| | | LIF ↑ | 0.97 ± 0.00 | 0.96 ± 0.02 | 0.96 ± 0.05 | 0.96 ± 0.05 | 0.94 ± 0.08 | 0.88 ± 0.19 | 0.96 ± 0.06 | 99 × 10⁻² |
| | | p@10 ↓ | 0.45 ± 0.47 | 0.79 ± 0.39 | 0.90 ± 0.28 | 0.69 ± 0.44 | 0.89 ± 0.29 | 0.95 ± 0.21 | 0.77 ± 0.40 | <1 × 10⁻³ |
| | | p@20 ↓ | 0.21 ± 0.38 | 0.55 ± 0.48 | 0.72 ± 0.42 | 0.46 ± 0.48 | 0.84 ± 0.35 | 0.95 ± 0.21 | 0.57 ± 0.47 | <1 × 10⁻³ |
| | | p@30 ↓ | 0.09 ± 0.26 | 0.49 ± 0.47 | 0.58 ± 0.47 | 0.40 ± 0.47 | 0.79 ± 0.39 | 0.95 ± 0.19 | 0.43 ± 0.48 | <1 × 10⁻³ |
| | | CFC ↓ | 5.3 ± 3.3 | 12.2 ± 9.9 | 15.7 ± 10.5 | 9.6 ± 7.8 | 19.9 ± 11.1 | 25.0 ± 8.9 | 11.4 ± 8.9 | <1 × 10⁻³ |
| | DistilBERT | SRG ↑ | 0.69 ± 0.08 | 0.26 ± 0.13 | 0.30 ± 0.14 | 0.26 ± 0.12 | 0.39 ± 0.16 | 0.00 ± 0.20 | 0.27 ± 0.14 | <1 × 10⁻³ |
| | | MIF ↓ | 0.07 ± 0.05 | 0.13 ± 0.08 | 0.13 ± 0.09 | 0.12 ± 0.09 | 0.15 ± 0.08 | 0.29 ± 0.13 | 0.15 ± 0.10 | 2 × 10⁻³ |
| | | LIF ↑ | 0.75 ± 0.08 | 0.39 ± 0.11 | 0.42 ± 0.09 | 0.38 ± 0.09 | 0.54 ± 0.17 | 0.29 ± 0.17 | 0.41 ± 0.11 | <1 × 10⁻³ |
| | | p@10 ↓ | 0.20 ± 0.34 | 0.54 ± 0.46 | 0.54 ± 0.46 | 0.49 ± 0.47 | 0.66 ± 0.43 | 0.88 ± 0.28 | 0.58 ± 0.47 | <1 × 10⁻³ |
| | | p@20 ↓ | 0.03 ± 0.12 | 0.23 ± 0.35 | 0.20 ± 0.34 | 0.21 ± 0.35 | 0.28 ± 0.38 | 0.73 ± 0.38 | 0.32 ± 0.42 | 2 × 10⁻² |
| | | p@30 ↓ | 0.01 ± 0.01 | 0.05 ± 0.15 | 0.06 ± 0.18 | 0.05 ± 0.14 | 0.07 ± 0.19 | 0.46 ± 0.43 | 0.09 ± 0.23 | 99 × 10⁻² |
| | | CFC ↓ | 3.1 ± 1.5 | 4.9 ± 2.7 | 4.8 ± 2.7 | 4.8 ± 2.9 | 5.4 ± 2.9 | 9.7 ± 4.8 | 5.5 ± 3.1 | 1 × 10⁻² |
| AP | BERT | SRG ↑ | 0.62 ± 0.22 | 0.25 ± 0.29 | 0.20 ± 0.23 | 0.26 ± 0.27 | 0.01 ± 0.12 | −0.03 ± 0.15 | 0.25 ± 0.26 | <1 × 10⁻³ |
| | | MIF ↓ | 0.33 ± 0.23 | 0.70 ± 0.29 | 0.75 ± 0.24 | 0.70 ± 0.27 | 0.91 ± 0.09 | 0.92 ± 0.08 | 0.70 ± 0.26 | <1 × 10⁻³ |
| | | LIF ↑ | 0.96 ± 0.01 | 0.95 ± 0.01 | 0.95 ± 0.01 | 0.96 ± 0.01 | 0.93 ± 0.09 | 0.89 ± 0.14 | 0.96 ± 0.01 | 99 × 10⁻² |
| | | p@10 ↓ | 0.59 ± 0.49 | 0.72 ± 0.44 | 0.78 ± 0.41 | 0.68 ± 0.46 | 0.91 ± 0.27 | 0.96 ± 0.19 | 0.79 ± 0.41 | 74 × 10⁻² |
| | | p@20 ↓ | 0.39 ± 0.49 | 0.71 ± 0.45 | 0.69 ± 0.46 | 0.59 ± 0.49 | 0.91 ± 0.27 | 0.96 ± 0.19 | 0.71 ± 0.45 | 4 × 10⁻² |
| | | p@30 ↓ | 0.25 ± 0.42 | 0.68 ± 0.46 | 0.64 ± 0.47 | 0.56 ± 0.49 | 0.92 ± 0.25 | 0.95 ± 0.19 | 0.64 ± 0.47 | <1 × 10⁻³ |
| | | CFC ↓ | 5.6 ± 4.8 | 13.2 ± 9.9 | 12.6 ± 9.6 | 11.6 ± 9.6 | 16.8 ± 8.7 | 19.3 ± 7.4 | 12.4 ± 9.1 | <1 × 10⁻³ |
| | RoBERTa | SRG ↑ | 0.66 ± 0.19 | 0.19 ± 0.23 | 0.25 ± 0.27 | 0.31 ± 0.25 | 0.05 ± 0.14 | −0.01 ± 0.16 | 0.27 ± 0.26 | <1 × 10⁻³ |
| | | MIF ↓ | 0.29 ± 0.19 | 0.76 ± 0.23 | 0.69 ± 0.27 | 0.64 ± 0.26 | 0.86 ± 0.12 | 0.88 ± 0.14 | 0.68 ± 0.26 | <1 × 10⁻³ |
| | | LIF ↑ | 0.95 ± 0.01 | 0.95 ± 0.02 | 0.94 ± 0.03 | 0.95 ± 0.03 | 0.91 ± 0.10 | 0.87 ± 0.13 | 0.95 ± 0.02 | 99 × 10⁻² |
| | | p@10 ↓ | 0.54 ± 0.49 | 0.84 ± 0.35 | 0.80 ± 0.39 | 0.69 ± 0.45 | 0.89 ± 0.30 | 0.94 ± 0.24 | 0.79 ± 0.40 | 17 × 10⁻² |
| | | p@20 ↓ | 0.21 ± 0.39 | 0.72 ± 0.42 | 0.72 ± 0.44 | 0.51 ± 0.49 | 0.82 ± 0.36 | 0.91 ± 0.28 | 0.60 ± 0.47 | 1 × 10⁻³ |
| | | p@30 ↓ | 0.04 ± 0.19 | 0.64 ± 0.44 | 0.59 ± 0.47 | 0.46 ± 0.48 | 0.79 ± 0.38 | 0.85 ± 0.33 | 0.55 ± 0.46 | <1 × 10⁻³ |
| | | CFC ↓ | 4.2 ± 3.2 | 13.2 ± 9.7 | 11.9 ± 8.7 | 9.4 ± 8.3 | 14.9 ± 8.9 | 17.4 ± 8.0 | 10.9 ± 8.6 | 1 × 10⁻³ |
| | DistilBERT | SRG ↑ | 0.42 ± 0.09 | 0.22 ± 0.11 | 0.22 ± 0.12 | 0.23 ± 0.12 | 0.16 ± 0.12 | −0.01 ± 0.14 | 0.23 ± 0.12 | <1 × 10⁻³ |
| | | MIF ↓ | 0.34 ± 0.10 | 0.44 ± 0.13 | 0.43 ± 0.13 | 0.42 ± 0.13 | 0.50 ± 0.08 | 0.57 ± 0.13 | 0.43 ± 0.13 | 3 × 10⁻³ |
| | | LIF ↑ | 0.76 ± 0.04 | 0.65 ± 0.07 | 0.65 ± 0.06 | 0.65 ± 0.06 | 0.66 ± 0.13 | 0.57 ± 0.11 | 0.66 ± 0.07 | <1 × 10⁻³ |
| | | p@10 ↓ | 0.56 ± 0.34 | 0.63 ± 0.31 | 0.62 ± 0.33 | 0.62 ± 0.31 | 0.72 ± 0.29 | 0.81 ± 0.27 | 0.63 ± 0.31 | 82 × 10⁻² |
| | | p@20 ↓ | 0.36 ± 0.25 | 0.49 ± 0.28 | 0.48 ± 0.29 | 0.45 ± 0.29 | 0.59 ± 0.24 | 0.76 ± 0.27 | 0.49 ± 0.29 | 36 × 10⁻² |
| | | p@30 ↓ | 0.29 ± 0.16 | 0.40 ± 0.22 | 0.39 ± 0.23 | 0.36 ± 0.21 | 0.50 ± 0.17 | 0.68 ± 0.25 | 0.39 ± 0.22 | 6 × 10⁻² |
| | | CFC ↓ | 4.3 ± 2.7 | 6.6 ± 4.8 | 6.5 ± 5.3 | 6.0 ± 5.0 | 7.7 ± 5.6 | 12.6 ± 7.0 | 6.5 ± 5.2 | 4 × 10⁻¹ |

| Method | Model Access | Complexity | Parameter Definition |
|---|---|---|---|
| White-Box (Model-Specific) | | | |
| Gradient (Saliency) | Required | | None |
| Gradient × Input | Required | | None |
| AttnLRP | Required | | None |
| Integrated Gradients (IG) | Required | | m: Integral approx. steps |
| Black-Box (Model-Agnostic) | | | |
| LIME | Agnostic | | N: Perturbation samples |
| Partition SHAP | Agnostic | | k: Feature coalitions |
| EvoDropX (Sequential) | Agnostic | | P: Pop., G: Gen., L: Tokens |
| EvoDropX (Parallel) | Agnostic | ≈ | : Batching Overhead |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Singh, D.K.; Ryan, C. EvoDropX: Evolutionary Optimization of Feature Corruption Sequences for Faithful Explanations of Transformer Models. Algorithms 2026, 19, 187. https://doi.org/10.3390/a19030187

Singh DK, Ryan C. EvoDropX: Evolutionary Optimization of Feature Corruption Sequences for Faithful Explanations of Transformer Models. Algorithms. 2026; 19(3):187. https://doi.org/10.3390/a19030187

Chicago/Turabian Style: Singh, Dhiraj Kumar, and Conor Ryan. 2026. "EvoDropX: Evolutionary Optimization of Feature Corruption Sequences for Faithful Explanations of Transformer Models" Algorithms 19, no. 3: 187. https://doi.org/10.3390/a19030187

APA Style: Singh, D. K., & Ryan, C. (2026). EvoDropX: Evolutionary Optimization of Feature Corruption Sequences for Faithful Explanations of Transformer Models. Algorithms, 19(3), 187. https://doi.org/10.3390/a19030187