1. Introduction

Urban sanitation networks constitute the critical infrastructure underpinning public health and the sustainability of modern cities, managing millions of cubic meters of wastewater and stormwater daily. However, rapid global urbanization, with over 55% of the world’s population now residing in urban environments [1], has sharply increased pressure on these systems, exposing their vulnerabilities in terms of maintenance, efficiency, and resilience. One persistent yet underestimated challenge in this context is pest control, particularly regarding the American cockroach (Periplaneta americana), which not only poses a direct public health risk as a vector of pathogens [2] but also serves as a biological indicator of structural and hygiene-related issues within sewer networks [3]. Proactive management of these systems is therefore a fundamental component of urban sustainability and climate resilience [4].

Traditionally, pest management in sanitation networks has relied on reactive, chemical-intensive approaches, such as periodic biocide application [5]. While these methods may offer temporary control, they entail significant limitations: high operational costs, adverse environmental impacts such as soil and water contamination, and accelerated development of pest resistance [6]. Moreover, reactive strategies fail to address the root causes of pest proliferation, such as the accumulation of organic matter in sewer systems, which creates ideal microhabitats for cockroach survival and reproduction [7]. In this context, the growing demand for sustainable urban infrastructure management has spurred the search for innovative alternatives that minimize environmental impact and optimize resource use [5,8]. Predictive modeling based on data mining algorithms has thus emerged as a key enabler. These techniques can uncover hidden patterns and extract actionable knowledge from the large volumes of environmental data collected via IoT sensors, thereby enabling precise parametrization of environmental bioindicators to predict public health vector problems [9,10]. This approach transforms management from reactive to proactive, converting raw data into actionable intelligence: processed, contextualized information that enables concrete, targeted interventions.

The scientific literature has established that temperature and humidity are key determinants of Periplaneta americana proliferation in sanitation networks [11]. However, this conventional perspective presents a critical gap by overlooking the potential of other indicators, such as carbon dioxide (CO2). While CO2 is generally recognized as an attractant for certain insects (e.g., mosquitoes), its specific relevance in the context of cockroaches and, more importantly, its causal link to the anaerobic decomposition of organic matter, which forms the trophic foundation for these pests, have been largely underestimated [12]. Emerging research suggests that CO2 could serve as a more precise and earlier bioindicator, not only of pest activity [13] but also of organic waste accumulation preceding infestations [14]. This ability to signal emerging problems before they reach critical levels enables preventive interventions [15,16,17], positioning CO2 as a primary predictor that is causally more informative than conventional environmental parameters.

The identified gap in the literature is not merely about variables but is fundamentally methodological. Although well-established predictive models based on temperature and humidity exist in agricultural contexts, capable of anticipating pest outbreaks through environmental modeling and machine learning techniques [18], these advances have not been effectively transferred to urban settings, particularly sewer networks. Most available studies on Periplaneta americana in underground environments remain limited to isolated correlational analyses, without evolving toward robust predictive systems that integrate multiple data sources [19]. This limitation is further exacerbated by the lack of models capable of adequately handling the pronounced class imbalance inherent in infestation data, where non-activity is the predominant condition, and by the difficulty of interpreting the relative contribution of each variable within complex data mining architectures [20]. Consequently, an unverified assumption persists: that temperature and humidity act as universal determinants, ignoring the possibility that their role may be secondary or context-dependent compared to more informative predictors like CO2, whose relevance in this domain has barely been explored [21]. Addressing this gap requires closing the full data mining cycle, integrating robust multivariate data acquisition in hostile environments with predictive models capable of discovering, weighting, and operationalizing patterns in real time. In this direction, recent developments in specialized IoT architectures and data mining algorithms are beginning to challenge the traditional paradigm by prioritizing CO2 as a key variable, establishing actionable quantitative thresholds for early detection and enabling localized, preventive interventions [22].

To address this knowledge gap, this article incorporates CO2 as the primary bioindicator for developing a robust, operational predictive model to support the sustainable management of urban sanitation networks. Beyond theoretical models, it offers a fully implemented IoT-to-decision process validated in a real-world urban sanitation network, demonstrating its applicability and economic viability for utility operators seeking to optimize resource allocation and reduce maintenance costs. We base our work on the central hypothesis that CO2 is the most important predictor for modeling P. americana activity and that a model derived from an appropriate data mining pipeline can deliver accurate predictions even in extremely imbalanced datasets, where the absence of events is the norm. To test this hypothesis, we conducted an exhaustive evaluation of a broad spectrum of predictive data mining algorithms, employing repeated cross-validation and performance metrics specifically designed for imbalanced settings, most notably the Macro-F1 Score and Balanced Accuracy. Finally, we employed the SHAP (SHapley Additive exPlanations) method as a model interpretability tool to analyze the relative importance of predictor variables within a robust explanatory framework. This approach not only identifies which variables contribute most significantly to predictions but also reveals how their influence varies across different levels of pest activity, thereby adding a layer of contextual understanding to the model’s behavior.

Based on the objectives of this study, the following hypotheses were formulated and subsequently evaluated:

H1. Data mining models can be effectively used to predict cockroach activity levels in urban sewer networks using environmental sensor data.

H2. Carbon dioxide (CO2) concentration has a stronger influence on predicted cockroach activity than other traditional environmental indicators such as temperature and relative humidity.

H3. Geospatial heat map-based visualizations improve the interpretability and operational usability of predictive results compared to traditional chart-based representations.

This work represents a significant step toward the development of smarter and more sustainable urban management systems, aligning with the United Nations Sustainable Development Goals (SDGs), specifically SDG 11 (Sustainable Cities and Communities) [23].

2. Materials and Methods

This study aimed to identify the optimal data mining model to predict the activity levels of Periplaneta americana in urban sanitation networks using environmental variables. The methodological approach focused on a rigorous process of modeling, cross-validation, and benchmarking of multiple algorithms.

2.1. Study Area and Sensor Deployment

The study was conducted in the urban sanitation network of the Parque Paraíso Arenal residential area, located in Córdoba, Andalusia, Spain (37.85° N, 4.75° W). Covering approximately 168,000 m², this area exhibits typical characteristics of Mediterranean urban environments, with hot summers and mild winters, conditions that favor the proliferation of Periplaneta americana in sewer systems [24]. The site was selected in collaboration with the municipal utility Saneamientos de Córdoba S.A. with the explicit goal of developing a predictive model that could be replicated in other urban zones with similar climatic conditions, where pest control remains a persistent public health and infrastructure management challenge.

To ensure representative and strategically distributed monitoring across the network, eight manholes (PC1–PC8) were selected for IoT sensor deployment, as shown in Figure 1. This selection was not random; it was guided by expert pest control technicians from the municipal company, following two key criteria: terrain topography and human activity intensity. First, manholes were chosen to span a range of elevations, from low-lying areas near a stream to progressively higher zones, to assess the influence of altitude on underground environmental conditions. Second, locations with higher wastewater discharge and potential organic matter accumulation, both key drivers of pest proliferation [25], were prioritized. Each manhole was precisely geolocated using GPS coordinates, enabling direct spatial correlation between collected environmental data and observed cockroach activity, and facilitating the generation of risk heatmaps.

2.2. IoT Architecture and Data Acquisition

Implementing a continuous, non-intrusive monitoring system in underground environments required a robust and energy-efficient IoT architecture (Figure 2), comprising three main components: IoT sensors, communication infrastructure, and a data management platform.

Initial signal propagation tests revealed that cast iron manhole covers attenuated LoRaWAN transmissions at 868 MHz by approximately 68 dB, disrupting communications when sensors were fully enclosed underground. To resolve this, flexible, adhesive-backed LoRa antennas (EM500-ANT, Milesight IoT, Xiamen, China) were mounted along the inner metallic rim of each cover. This modification created a controlled signal leakage path through the narrow gap between cover and rim, restoring reliable connectivity with packet delivery rates exceeding 98% across all eight monitoring points while preserving the integrity of the underground deployment.

The sensors deployed were Milesight EM500-CO2 units (https://resource.milesight.com/milesight/iot/document/em500-co2-datasheet-en.pdf, accessed on 30 December 2025) featuring LoRaWAN® communication technology and designed to monitor CO2 concentration, temperature, humidity, and barometric pressure in outdoor scenarios. With an estimated battery life of up to ten years (at 10-min intervals and 25 °C), these sensors transmitted data every 10 min via the LoRaWAN protocol, a widely adopted IoT standard due to its low power consumption and long-range transmission capabilities, even in signal-challenged environments such as underground sewer networks [26].

Data collection and transmission were supported by a 6 dBi antenna and a 4G-connected gateway. This gateway relayed sensor data through The Things Network (TTN), a global, open, and secure IoT network [27], to a data management platform built on the FIWARE architecture, an open-source framework widely used in smart city solutions [28]. This design ensured reliable, real-time data transmission from manholes to the cloud, overcoming the inherent challenges of limited physical access and extreme conditions in underground settings [29]. The platform integrated several key components: the FIWARE IoT Agent (for data ingestion from TTN), the Orion Context Broker (OCB) (for storing information in virtual entities), QuantumLeap (for processing and analyzing time-series data stored in CrateDB, a database optimized for such workloads), and Grafana (for intuitive, real-time environmental data visualization) [30]. This end-to-end integration enabled not only data collection and storage but also analysis and presentation in an operator-friendly format, transforming raw data into actionable visual outputs such as predictive heatmaps [31].
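As an illustration of the "virtual entities" held by the Orion Context Broker, the following minimal sketch builds one sensor reading in the NGSI v2 normalized format (each attribute carries a value and a type). The entity identifier scheme, the entity type, the attribute names, and the timestamp are hypothetical; the source does not describe the data model actually used.

```python
import json


def build_ngsi_entity(manhole_id, co2_ppm, temperature_c, humidity_pct, observed_at):
    """Build an NGSI v2 entity (normalized format) for one sensor reading."""
    return {
        "id": f"urn:ngsi-ld:Manhole:{manhole_id}",  # hypothetical identifier scheme
        "type": "Manhole",                           # hypothetical entity type
        "co2": {"value": co2_ppm, "type": "Number"},
        "temperature": {"value": temperature_c, "type": "Number"},
        "relativeHumidity": {"value": humidity_pct, "type": "Number"},
        "dateObserved": {"value": observed_at, "type": "DateTime"},
    }


# Example reading for monitoring point PC1; the values are the dataset means
# reported in the text, and the timestamp is made up for illustration.
entity = build_ngsi_entity("PC1", 898.1, 23.83, 82.85, "2022-03-01T08:00:00Z")
payload = json.dumps(entity)
```

A payload of this shape is what a context broker would store per manhole and what a time-series component would then persist attribute by attribute.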

2.3. Data Collection and Cleaning Protocol

Sampling was conducted daily over a 113-day period from March to June 2022, yielding an initial dataset of 904 samples. The sampling protocol was designed to simultaneously capture biological activity and environmental conditions. Each day, the lid of each of the eight selected manholes was opened, and the presence of P. americana was recorded using an operational categorical scale commonly employed by municipal sanitation services for practical and systematic infestation assessment. Within this study, the scale comprised three levels: 0 (None: no individuals observed), 1 (Low: countable individuals), and 2 (High: uncountable large numbers). This scale reflects standard observational criteria used in urban pest control campaigns, enabling rapid and consistent field assessments. Concurrently, permanently installed sensors automatically transmitted CO2, temperature, and humidity values, ensuring precise temporal alignment between environmental conditions and pest activity.

To guarantee the highest quality and operational validity of the model input data, a data cleaning and filtering protocol was designed a priori based on two exclusion criteria and rigorously applied in strict accordance with both the technical standards established in the scientific literature [32] and a formal consensus between municipal sanitation technicians (Saneamientos de Córdoba S.A.) and pest control experts from the Colegio Oficial de Biólogos de Andalucía. This protocol, documented before data collection began, defined conditions under which sensor readings and manual activity logs would not reflect stable ecological states, and therefore required exclusion during the final dataset curation phase. These criteria were:

1. Two-hour post-opening exclusion: Opening a manhole cover introduces transient microclimate perturbations (rapid CO2 dispersion, humidity shifts, and airflow-induced cockroach avoidance behavior) that invalidate both sensor readings and visual counts. Based on pilot tests showing CO2 stabilization after ~95 min (±15 ppm variation over 10 consecutive readings), a conservative 120-min exclusion window was applied uniformly to all eight monitoring points after each daily inspection.

2. Precipitation-related exclusion: Municipal experts confirmed that Periplaneta americana retreats toward deeper network sections during rainfall; consequently, absence observations during rain reflect transient displacement rather than genuine low activity. Following the expert protocol, all measurements recorded on calendar days with ≥0.2 mm accumulated rainfall (AEMET station #5402, Córdoba Aeropuerto) were excluded. This threshold corresponds to AEMET’s minimum detection limit and captured all three rainy days during the 113-day monitoring period. The calendar-day approach was preferred because displacement behavior begins before rainfall onset (in response to barometric pressure drops) and persists during runoff phases.

Both rules were applied consistently across all eight manholes (PC1–PC8). The two-hour rule operated at the individual inspection-event level (each manhole had its own independent 120-min window based on its inspection time), while the precipitation rule operated at the calendar-day level, affecting all manholes simultaneously on the three excluded days.
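The two exclusion rules can be sketched as a single filter function. This is a minimal illustration using only the thresholds stated in the protocol (120 min and 0.2 mm); the function name, argument layout, example dates, and the choice to keep a reading taken exactly 120 min after opening are assumptions.

```python
from datetime import date, datetime, timedelta

POST_OPENING_WINDOW = timedelta(minutes=120)  # two-hour rule from the protocol
RAIN_THRESHOLD_MM = 0.2                       # daily rainfall threshold from the protocol


def keep_reading(timestamp, last_opening, daily_rain_mm):
    """Return True if a sensor reading survives both exclusion rules.

    timestamp     -- datetime of the sensor reading
    last_opening  -- datetime of the latest lid opening at this manhole, or None
    daily_rain_mm -- dict mapping calendar date -> accumulated rainfall in mm
    """
    # Precipitation rule: drop the whole calendar day for all manholes.
    if daily_rain_mm.get(timestamp.date(), 0.0) >= RAIN_THRESHOLD_MM:
        return False
    # Post-opening rule: drop readings inside this manhole's own 120-min window.
    # Assumption: the window is half-open, so a reading at exactly +120 min is kept.
    if last_opening is not None:
        if last_opening <= timestamp < last_opening + POST_OPENING_WINDOW:
            return False
    return True


# Hypothetical example: lid opened at 09:00, rain recorded on the following day.
opening = datetime(2022, 4, 5, 9, 0)
rain = {date(2022, 4, 6): 1.4}
inside_window = keep_reading(datetime(2022, 4, 5, 10, 30), opening, rain)
after_window = keep_reading(datetime(2022, 4, 5, 11, 0), opening, rain)
rainy_day = keep_reading(datetime(2022, 4, 6, 15, 0), opening, rain)
```

Passing the opening time per manhole mirrors the inspection-event granularity of the first rule, while the rainfall dictionary is shared by all manholes, mirroring the calendar-day granularity of the second.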

Following data cleaning and preprocessing, the final dataset used for model training and evaluation comprised 904 observations. This sample strikes an appropriate balance between data integrity and statistical representativeness. The distribution of the dependent variable reveals pronounced class imbalance, with the “None” category (no activity) dominating at 77.2% (n = 699), compared to 18.7% classified as “Low” (n = 169) and only 4.0% as “High” (n = 36), confirming the highly imbalanced nature of the problem. Environmental variables exhibited substantial variability, with mean values of 23.83 °C for temperature, 82.85% for relative humidity, and 898.1 ppm for CO2 concentration, as summarized in Table 1.

2.4. Model Selection and Variable Importance

All baseline and classical machine learning models, including logistic regression, k-nearest neighbors, support vector machines, decision trees, and voting ensembles, were implemented using scikit-learn (version 1.5.1). Synthetic Minority Over-sampling Technique (SMOTE) and the Balanced Random Forest classifier were implemented using imbalanced-learn (version 0.12.3). Gradient boosting models were implemented using LightGBM (version 4.6.0), XGBoost (version 3.1.2), and CatBoost (version 1.2.8). All experiments were executed in Python (version 3.12.7).

To ensure a comprehensive evaluation, eleven distinct predictive configurations were considered, spanning both classical and state-of-the-art data mining algorithms: linear models, instance-based methods, tree-based learners, ensemble approaches, and gradient boosting techniques [33]. Special attention was given to strategies for handling class imbalance:

Logistic Regression (Softmax LR): Multinomial logistic regression was used as a baseline linear classifier, modeling class probabilities through a softmax function. Its simplicity and interpretability make it a useful reference model for evaluating the added value of more complex approaches.

Logistic Regression with Class Weighting (Balanced Softmax LR): A class-weighted variant of logistic regression was employed to mitigate class imbalance by penalizing misclassification of minority classes more heavily. This approach adjusts the loss function without altering the original data distribution.

SMOTE + Logistic Regression: To explicitly address data imbalance, Synthetic Minority Over-sampling Technique (SMOTE) was combined with logistic regression. SMOTE generates synthetic samples of the minority class in feature space, enabling the classifier to learn more robust decision boundaries.

k-Nearest Neighbors (KNN): KNN is an instance-based learning algorithm that assigns class labels based on the majority class among the k closest samples in feature space. While simple, it can capture local data structures and non-linear patterns, particularly in low-dimensional feature spaces.

Support Vector Machine with RBF Kernel (Balanced SVM): A Support Vector Machine with a radial basis function kernel was used to model non-linear class boundaries. Class weighting was applied to account for imbalance, allowing the model to maximize margin separation while emphasizing minority-class samples.

Decision Tree (Balanced Decision Tree): Decision trees recursively partition the feature space using threshold-based rules, producing an interpretable hierarchical structure. Class weighting was incorporated to reduce bias toward the majority class, although single trees may still be sensitive to data variability.

Balanced Random Forest (BalancedRF): Balanced Random Forest is an ensemble of decision trees trained on balanced bootstrap samples. By combining bagging with class-balanced sampling, this method improves robustness and reduces variance while maintaining sensitivity to minority classes.

LightGBM (Balanced): LightGBM is a gradient boosting framework based on decision trees, optimized for efficiency and scalability. It incorporates histogram-based splitting and leaf-wise tree growth, and class weighting was applied to address imbalance during training.

XGBoost with Balanced Sample Weights: XGBoost is a powerful gradient boosting algorithm that optimizes an additive ensemble of trees using second-order gradients. Since native multiclass class weighting is limited, balanced sample weights were applied during training to compensate for class imbalance.

CatBoost (Balanced): CatBoost is a gradient boosting algorithm designed to reduce prediction bias and improve convergence stability. It natively supports automatic class weighting and is particularly effective in handling complex, non-linear relationships.

Soft Voting Ensemble: A soft voting ensemble was constructed by combining the probabilistic outputs of several high-performing base models. By averaging predicted class probabilities, the ensemble aims to leverage complementary strengths of individual classifiers and improve overall generalization performance.
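Several of the configurations above rely on class weighting or balanced sample weights. The sketch below shows one common definition, the "balanced" heuristic as used in scikit-learn, where each class receives the weight n_samples / (n_classes × n_samples_in_class). That the study used exactly this heuristic for every weighted model is an assumption; the class counts are those reported for the final dataset.

```python
from collections import Counter


def balanced_class_weights(labels):
    """Weight each class by n_samples / (n_classes * n_samples_in_class)."""
    counts = Counter(labels)
    n_samples = len(labels)
    n_classes = len(counts)
    return {cls: n_samples / (n_classes * n) for cls, n in counts.items()}


def balanced_sample_weights(labels):
    """Expand the class weights to one weight per observation."""
    weights = balanced_class_weights(labels)
    return [weights[y] for y in labels]


# Class counts reported for the final dataset: None = 699, Low = 169, High = 36.
labels = ["None"] * 699 + ["Low"] * 169 + ["High"] * 36
class_weights = balanced_class_weights(labels)
sample_weights = balanced_sample_weights(labels)
```

Under this definition every class contributes the same total weight to the loss, so a misclassified "High" observation costs roughly nineteen times as much as a misclassified "None" observation. Per-observation weights of this kind are what a library without native multiclass class weighting would receive at fit time.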

A repeated 10 × 10 cross-validation (CV) scheme was employed to ensure result robustness and mitigate overfitting [34]. The validation scheme adopted is designed to evaluate whether the models learn universal environmental thresholds governing cockroach activity, and not to assess generalization to previously unseen manholes. Consequently, validation is performed at the observation level using a stratified 10 × 10 repeated cross-validation (10 folds, 10 repetitions), with a fixed random seed for full reproducibility. Splits are conducted at the row level, with stratification applied only to preserve the proportion of activity classes (“None”, “Low”, “High”). Critically, no spatial blocking (by manhole) nor temporal blocking (by day) was enforced: samples from the same manhole or from temporally adjacent days may appear in both training and test sets within a given fold.

Under this hypothesis, observations sharing equivalent environmental conditions (e.g., identical CO2, temperature, and humidity values) are considered valid ecological replicates—even when they originate from the same manhole or from consecutive days. This design aligns with the operational objective of detecting critical environmental states anywhere in the sewer network, independently of location-specific historical patterns. Therefore, the absence of manhole- or time-based blocking is not an oversight, but a deliberate methodological choice, grounded in our central hypothesis (H2) and the real-world deployment requirements defined by the sanitation operator.

Leakage is mitigated through our data-cleaning protocol (Section 2.3), which excludes all readings taken within two hours after manhole lid opening, eliminating transient microenvironmental perturbations, and ensures that retained observations reflect quasi-independent environmental states.
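The row-level, class-stratified splitting described in this section can be sketched with scikit-learn's RepeatedStratifiedKFold. The labels below are synthetic and only reproduce the reported class counts, the feature matrix is random placeholder data, and the seed value 42 is an assumption (the source states that a fixed seed was used but not its value).

```python
import numpy as np
from sklearn.model_selection import RepeatedStratifiedKFold

# Synthetic labels with the reported class counts (0 = None, 1 = Low, 2 = High).
y = np.array([0] * 699 + [1] * 169 + [2] * 36)
rng = np.random.default_rng(0)
X = rng.normal(size=(y.size, 3))  # placeholders for CO2, temperature, humidity

# 10 folds x 10 repetitions, stratified by class only:
# no grouping by manhole and no blocking by day is applied.
cv = RepeatedStratifiedKFold(n_splits=10, n_repeats=10, random_state=42)

n_splits = 0
high_per_test_fold = []
for train_idx, test_idx in cv.split(X, y):
    n_splits += 1
    # Count how many "High" observations land in each test fold.
    high_per_test_fold.append(int((y[test_idx] == 2).sum()))
```

With 36 "High" observations spread over 10 folds, each test fold holds only three or four of them, which is why per-fold minority-class metrics are noisy and why repeating the procedure helps stabilize the estimates.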

Given the pronounced class imbalance, where the “None” class dominates, the evaluation prioritized metrics that penalize poor performance on minority classes, including Macro-F1 Score, Balanced Accuracy, and Weighted-F1 Score, rather than conventional accuracy [35].
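To make the difference between these metrics concrete, the sketch below computes them from a confusion matrix using their standard definitions. The example matrix is hypothetical: it describes a degenerate classifier that always predicts the majority class and is not a result from this study.

```python
def per_class_scores(cm):
    """Return (precision, recall, f1) lists from a square confusion matrix.

    cm[i][j] is the number of samples of true class i predicted as class j.
    """
    k = len(cm)
    precision, recall, f1 = [], [], []
    for c in range(k):
        tp = cm[c][c]
        predicted = sum(cm[r][c] for r in range(k))  # column sum
        actual = sum(cm[c])                          # row sum
        p = tp / predicted if predicted else 0.0
        r = tp / actual if actual else 0.0
        precision.append(p)
        recall.append(r)
        f1.append(2 * p * r / (p + r) if (p + r) else 0.0)
    return precision, recall, f1


def accuracy(cm):
    total = sum(sum(row) for row in cm)
    return sum(cm[i][i] for i in range(len(cm))) / total


def balanced_accuracy(cm):
    """Unweighted mean of per-class recall."""
    return sum(per_class_scores(cm)[1]) / len(cm)


def macro_f1(cm):
    """Unweighted mean of per-class F1."""
    return sum(per_class_scores(cm)[2]) / len(cm)


def weighted_f1(cm):
    """Mean of per-class F1 weighted by class support."""
    f1 = per_class_scores(cm)[2]
    supports = [sum(row) for row in cm]
    return sum(f * s for f, s in zip(f1, supports)) / sum(supports)


# Hypothetical classifier that labels every sample as the majority class.
degenerate = [[90, 0, 0], [8, 0, 0], [2, 0, 0]]
```

For this matrix, accuracy is 0.90 while Balanced Accuracy is about 0.33 and Macro-F1 about 0.32, which shows how the macro-averaged metrics expose a model that ignores the minority classes.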

To uphold methodological transparency and enable full reproducibility, all data mining algorithms were implemented in Python, building on the scikit-learn library [36] and the complementary packages listed at the start of this section. The complete source code and all experimental scripts have been made publicly available in a GitHub repository, facilitating independent verification and replication. Furthermore, the real-world dataset used for model training and validation has been openly shared via ZENODO [37], thereby fulfilling the principles of open science and enabling future benchmarking and research advancements.

Model selection followed a rigorous statistical framework. First, the Friedman test [38] was applied to assess whether significant performance differences existed among the evaluated algorithms. To identify which specific models differed significantly from one another, Nemenyi post hoc diagrams [39] were used. These visualize the average rank of each model and the critical difference (CD) for each evaluation metric.
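The quantities behind these diagrams can be sketched as follows: average ranks per model, the Friedman chi-square statistic computed from those ranks, and the Nemenyi critical difference CD = q_α · sqrt(k(k + 1) / (6N)), where k is the number of models and N the number of evaluation blocks. The score table and the values of k, N, and q_α used in the example are illustrative assumptions, not figures taken from this study.

```python
import math


def average_ranks(scores):
    """Average rank of each model over N evaluation blocks (rank 1 = best).

    scores[i][j] is the score of model j on block i; higher is better.
    Tied models receive the mean of the ranks they span.
    """
    n_blocks, k = len(scores), len(scores[0])
    totals = [0.0] * k
    for row in scores:
        order = sorted(range(k), key=lambda j: -row[j])
        pos = 0
        while pos < k:
            end = pos
            while end + 1 < k and row[order[end + 1]] == row[order[pos]]:
                end += 1
            mean_rank = (pos + end) / 2 + 1
            for j in order[pos:end + 1]:
                totals[j] += mean_rank
            pos = end + 1
    return [t / n_blocks for t in totals]


def friedman_statistic(avg_ranks, n_blocks):
    """Friedman chi-square statistic computed from the average ranks."""
    k = len(avg_ranks)
    return 12 * n_blocks / (k * (k + 1)) * (
        sum(r * r for r in avg_ranks) - k * (k + 1) ** 2 / 4
    )


def nemenyi_cd(q_alpha, k, n_blocks):
    """Critical difference: q_alpha * sqrt(k * (k + 1) / (6 * N))."""
    return q_alpha * math.sqrt(k * (k + 1) / (6 * n_blocks))


# Hypothetical scores of three models on three blocks.
toy_scores = [[0.90, 0.80, 0.70], [0.92, 0.81, 0.75], [0.88, 0.83, 0.70]]
toy_ranks = average_ranks(toy_scores)
toy_chi2 = friedman_statistic(toy_ranks, n_blocks=len(toy_scores))

# Illustrative CD for ten models over ten blocks, with q_alpha = 3.164
# (the commonly tabulated value for k = 10 at alpha = 0.05).
example_cd = nemenyi_cd(3.164, k=10, n_blocks=10)
```

Two models are considered significantly different when their average ranks differ by more than the CD, so a larger number of evaluation blocks N shrinks the CD and makes the comparison more sensitive.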

Finally, to interpret predictor importance and test our central hypothesis regarding the role of CO2, we employed the SHAP (SHapley Additive exPlanations) method [40]. SHAP quantifies the contribution of each predictor (CO2, temperature, and humidity) to the model’s prediction for each specific class (“None,” “Low,” “High”), offering a contextual and granular understanding of how and why the model makes its decisions.
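The idea behind these attributions can be illustrated with an exact, brute-force Shapley computation over the three predictors. This sketch is not the SHAP library and not the tree-specific algorithm that would be applied to a gradient boosting model: it enumerates all feature coalitions, replaces absent features by a baseline value, and uses a hypothetical toy scoring function in place of the fitted model. The baseline uses the dataset means reported in the text; the instance is made up.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, x, baseline):
    """Exact Shapley values for one instance by enumerating feature coalitions.

    Features outside a coalition are replaced by their baseline value.
    """
    n = len(x)
    features = range(n)

    def value(coalition):
        mixed = [x[i] if i in coalition else baseline[i] for i in features]
        return predict(mixed)

    phi = [0.0] * n
    for i in features:
        others = [j for j in features if j != i]
        for size in range(n):
            # Shapley weight of a coalition of this size that excludes feature i.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


def toy_high_score(features):
    """Hypothetical score for the "High" class; NOT the fitted XGBoost model."""
    co2, temperature, humidity = features
    return (0.004 * co2 + 0.05 * temperature - 0.01 * humidity
            + 0.00002 * co2 * temperature)


baseline = [898.1, 23.83, 82.85]  # dataset means reported in the text
instance = [1500.0, 27.0, 75.0]   # hypothetical reading
phi = shapley_values(toy_high_score, instance, baseline)
```

The attributions sum to the difference between the score for the instance and the score for the baseline, which is the additivity property that SHAP relies on. A global importance measure is then obtained by averaging the absolute attribution of each feature over many instances.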

3. Results

The systematic evaluation of multiple data mining algorithms revealed a set of robust models capable of accurately predicting Periplaneta americana activity levels in urban sanitation networks using environmental variables collected by the IoT system.

3.1. Comparative Model Evaluation and Best Model Selection

For a more detailed comparison, Table 2 presents the performance metrics of the eleven evaluated models, ranked by their Macro-F1 Score. This metric was selected as the primary criterion for final model selection because it equally penalizes poor performance across all classes, a critical consideration in a highly imbalanced problem where the “High” class accounts for only 4% of the data.

As can be observed, gradient boosting-based models (XGBoost, LightGBM, CatBoost) and the soft voting ensemble (Ensemble_soft) lead the rankings, achieving Macro-F1 scores above 0.92. The XGBoost_balanced model achieved strong predictive performance (Macro-F1 = 0.924) on the Córdoba sewer network dataset under the environmental conditions and sampling protocol described in Section 2.

Figure 3a reinforces this finding by illustrating the precision–recall trade-off specifically for the minority “High” class (severe infestation). The top five models display curves closely approaching the upper-right corner, indicating high precision and recall for this critical class. Moreover, all top models achieve AUPRC values above 0.96, confirming their capacity to identify critical infestation events with high reliability while avoiding excessive false positives.

Figure 3b shows the Macro-F1 Score of each model as a function of its rank derived from repeated cross-validation. The results clearly place gradient boosting models (XGBoost, LightGBM) and the ensemble method at the top positions, with scores exceeding 0.92, underscoring their superior performance in this highly imbalanced multiclass classification task.

The initial statistical analysis confirmed the presence of significant performance differences among the evaluated algorithms. The Friedman test yielded p-values below 0.05 for all primary metrics (Accuracy, Balanced Accuracy, Macro-F1, and Weighted-F1), indicating that the models are not statistically equivalent. These differences were clearly visualized using Nemenyi post hoc diagrams (Figure 4).

The Nemenyi analysis was conducted across the four key metrics, using a critical difference (CD) of 4.2836—below which differences between models are not considered statistically significant. Overall, gradient boosting-based models (XGBoost, LightGBM, CatBoost) and the soft voting ensemble (Ensemble_soft) consistently emerged as the top-performing group across most metrics. Their rank intervals overlap substantially, indicating no statistically significant differences among them.

Notably, this group excelled in metrics most relevant for imbalanced classification tasks—particularly the Macro-F1 Score, which is essential given the extreme class skew (77.2% of instances labeled “None”), and Balanced Accuracy. In contrast, algorithms such as Balanced SVM, Balanced Softmax Logistic Regression, and Balanced Decision Tree consistently ranked in the lower performance groups, with statistically inferior results. Models like Balanced CatBoost and KNN exhibited intermediate behavior: while clearly separated from the top-performing group, they significantly outperformed the weakest models, occupying a distinct middle tier in the performance hierarchy.

3.2. Performance and Error Analysis of the Selected Model (XGBoost_Balanced)

To provide a rigorous assessment of model behavior under extreme class imbalance—where the operationally critical “High” infestation class represents only 4.0% of observations—we conducted a detailed per-class error analysis for the top-performing XGBoost_balanced model.

Table 3 reports the complete error decomposition for the minority class, including support counts (n = 36 true “High” instances), false negatives (FN = 3), and false positives (FP = 4). Critically, the model achieved a recall of 91.7% for severe infestations, with 95% bootstrap confidence intervals indicating stable performance across validation folds (88.5–94.7%). The associated precision of 91.5% (95% CI: 89.1–93.9%) confirms that alerts generated for high-risk zones carry high reliability, minimizing unnecessary interventions.

Figure 5 presents the row-normalized confusion matrix derived from aggregated out-of-fold predictions across the full 10 × 10 cross-validation procedure. The visualization reveals three operationally significant patterns: (i) exceptional separation between absence (“None”) and severe infestation (“High”), with zero instances of the latter misclassified as absence; (ii) conservative error behavior for the minority class, where the 8.3% of misclassified “High” instances were assigned exclusively to the adjacent “Low” category rather than to “None”; and (iii) a coherent ordinal structure in misclassifications, with errors concentrated between neighboring classes rather than across extremes. This pattern confirms that the model has learned ecologically meaningful decision boundaries that prioritize safety in operational deployment—never completely missing a severe infestation event while maintaining high precision for actionable alerts.

3.3. Variable Importance Interpretation (SHAP Analysis)

To validate our central hypothesis regarding the role of CO2, we conducted a variable importance analysis using the SHAP (SHapley Additive exPlanations) method. This analysis not only confirms that CO2 is the most influential predictor but also reveals how its impact varies across target classes. We applied SHAP to the XGBoost model trained on our dataset because it was among the best-performing algorithms, together with the ensemble methods.

Figure 6 summarizes the mean importance of each variable per class, quantified by the mean absolute SHAP value (mean(|SHAP value|)). Carbon dioxide (CO2) is clearly the most important predictor across all classes, with an average impact exceeding 6.5. This magnitude is nearly double that of humidity (approximately 3.5) and more than double that of temperature (approximately 2.5).

This finding directly challenges the conventional assumption in the literature, which has historically focused on temperature and humidity as the primary determinants of cockroach activity [11]. In our specific context, CO2 emerges as a robust and dominant bioindicator whose signal proves essential for the accurate prediction of pest activity levels in urban sewer networks.

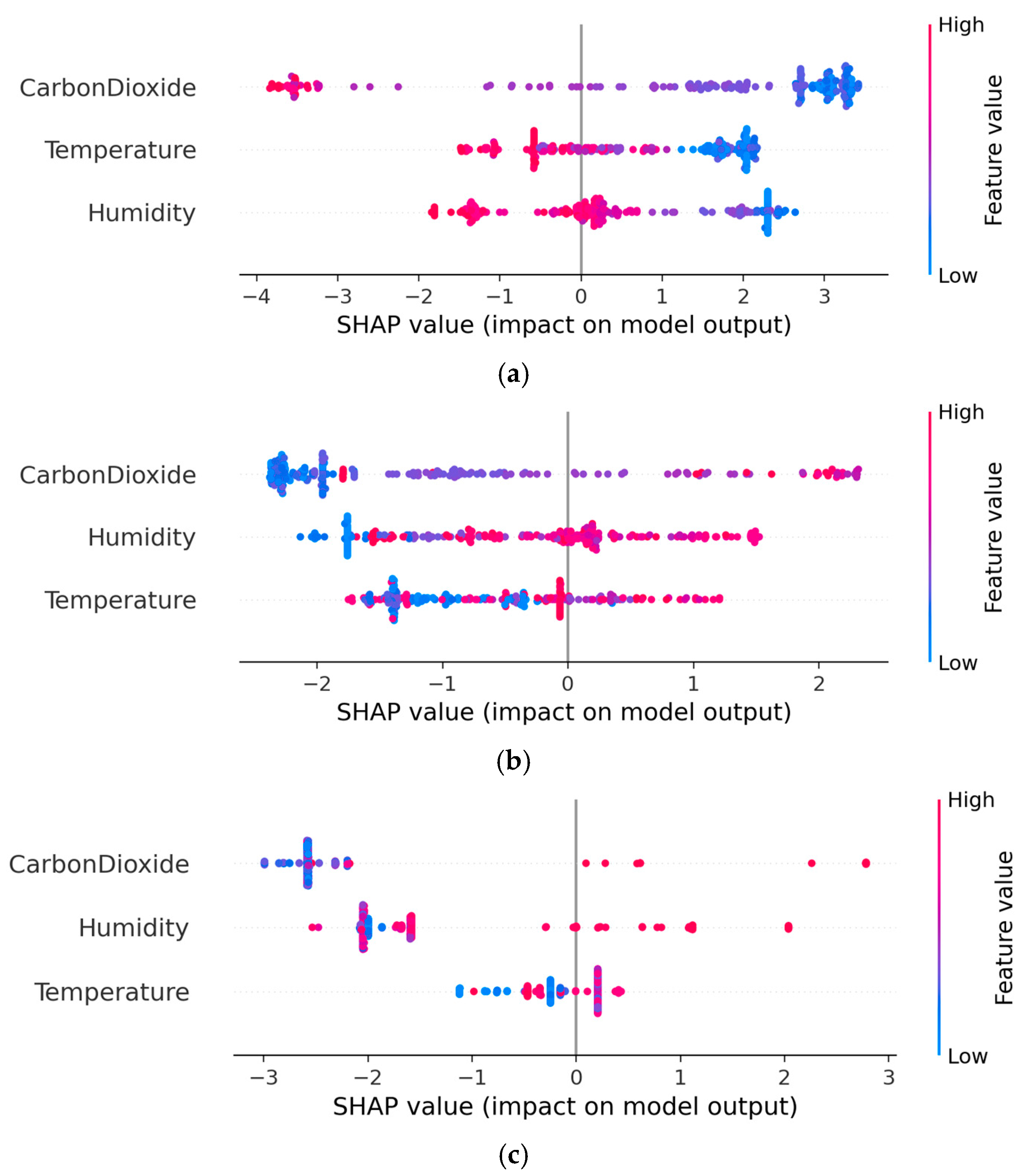

However, the analysis illustrated in the beeswarm plots (Figure 7) provides a deeper and more contextualized understanding by showing how each predictor (CO2, temperature, and humidity) influences the model’s prediction for each of the three Periplaneta americana activity classes. The results reveal the following nuanced patterns:

In the case of the “None” class (absence of pests) (Figure 7a), low CO2 values have a strongly positive impact on the prediction of this class. This means that when CO2 is low, the model is very confident that there is no cockroach activity. Temperature and humidity have a much weaker and less consistent impact.

For the “Low” class (Figure 7b), a more complex pattern is revealed. Intermediate CO2 values seem to favor the prediction of “Low”, while very high or very low values tend to decrease the probability of this class. In addition, high humidity has a positive impact on the prediction of “Low”, suggesting that, in humid conditions, even with medium CO2, cockroach proliferation may be limited.

Finally, for the “High” class (Severe Proliferation), the clearest and strongest relationship is observed (Figure 7c). High CO2 values have a strongly positive impact on the prediction of “High”. This confirms that high CO2 is the most robust indicator of severe cockroach proliferation. Temperature also contributes positively, but to a lesser extent, while high humidity tends to reduce the probability of predicting severe infestation (“High” class).

These results demonstrate that, although CO2 is the dominant predictor, temperature and humidity are not irrelevant. Their influence is contextual and secondary, varying depending on the activity level being predicted. The SHAP analysis enables a transparent understanding of the model’s internal logic, revealing non-linear and class-specific interactions that would remain hidden when relying solely on global feature importance metrics.
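The quantities discussed above (per-prediction Shapley attributions and their mean absolute value per feature) can be illustrated with a minimal, self-contained sketch. It computes exact Shapley values by enumerating feature coalitions for a toy three-feature scoring function. The function `toy_high_score`, the baseline, and the sample readings are hypothetical stand-ins chosen for illustration; they are not the XGBoost model or the data of this study, for which a SHAP tree explainer on the trained model would normally be used.

```python
from itertools import combinations
from math import factorial

FEATURES = ("co2", "temperature", "humidity")

def toy_high_score(x):
    """Hypothetical stand-in for a 'High'-class score (not the study's model)."""
    score = 0.004 * x["co2"] + 0.02 * x["temperature"] - 0.05 * x["humidity"]
    # Small interaction term so that attributions are not purely additive.
    score += 0.00002 * x["co2"] * x["temperature"]
    return score

def shapley_values(model, x, baseline):
    """Exact Shapley values; features outside a coalition take baseline values."""
    n = len(FEATURES)

    def value(coalition):
        mixed = {f: (x[f] if f in coalition else baseline[f]) for f in FEATURES}
        return model(mixed)

    phi = {}
    for f in FEATURES:
        others = [g for g in FEATURES if g != f]
        total = 0.0
        for size in range(n):
            # Shapley weight for coalitions of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                with_f = value(set(subset) | {f})
                without_f = value(set(subset))
                total += weight * (with_f - without_f)
        phi[f] = total
    return phi

def mean_abs_shap(model, samples, baseline):
    """Global importance: mean absolute Shapley value per feature."""
    sums = {f: 0.0 for f in FEATURES}
    for x in samples:
        for f, v in shapley_values(model, x, baseline).items():
            sums[f] += abs(v)
    return {f: s / len(samples) for f, s in sums.items()}

# Hypothetical baseline and readings (ppm, degrees Celsius, % relative humidity).
baseline = {"co2": 500.0, "temperature": 18.0, "humidity": 70.0}
samples = [
    {"co2": 450.0, "temperature": 17.0, "humidity": 75.0},
    {"co2": 800.0, "temperature": 21.0, "humidity": 65.0},
    {"co2": 1200.0, "temperature": 24.0, "humidity": 60.0},
]

phi = shapley_values(toy_high_score, samples[2], baseline)
importance = mean_abs_shap(toy_high_score, samples, baseline)
```

For any single reading, the attributions in `phi` sum to the difference between the score at that reading and the score at the baseline, which is the additivity property that makes SHAP explanations readable per prediction. Averaging their absolute values over many readings gives the kind of global ranking summarized in Figure 6.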

3.4. Pest Activity Visualization and Subjective Assessment

The integration of the final model into the real-time monitoring platform enabled the generation of heatmaps visualizing pest activity across the study area. These maps—derived from sensor-collected data processed by the predictive model—provide municipal operators with a powerful, intuitive decision-support tool for proactive infrastructure management.

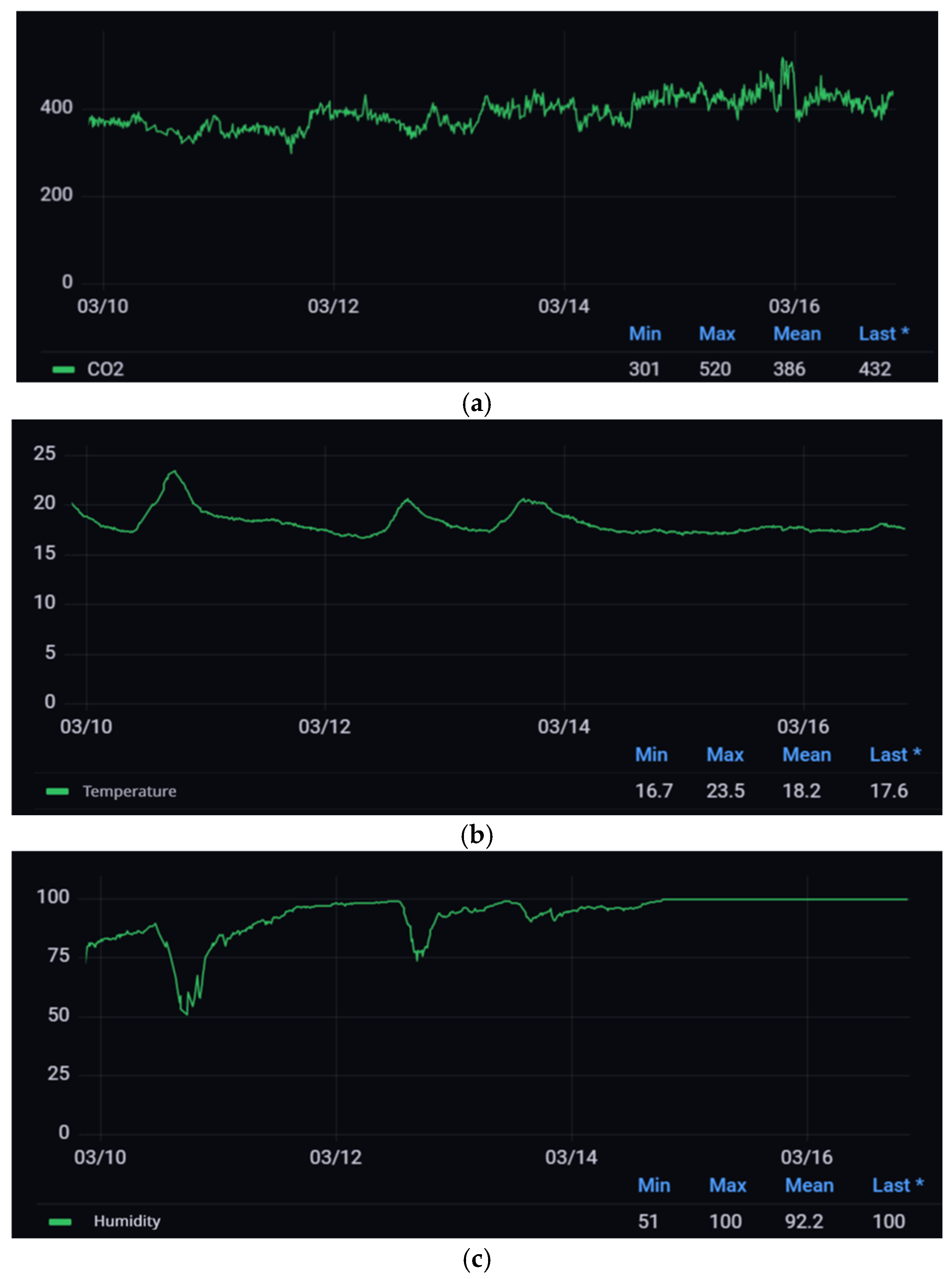

Figure 8 displays the heatmaps generated from the initial (a) and final data (b) of the study period, respectively, after the model had been fully trained and validated. As expected, the beginning of the study (early spring) coincided with minimal cockroach activity. In stark contrast, by mid-June, distinct hotspots of severe infestation emerged, concentrated primarily around Parque Paraíso Arenal and along Amalia Rodríguez and Sauce streets. This spatiotemporal evolution aligns with seasonal biological patterns and validates the model’s ability to capture and visualize the onset and intensification of pest proliferation in real-world urban sewer networks.

To evaluate the effectiveness of this visualization in a real operational setting, a usability assessment was conducted with 22 experts in urban sanitation management, including engineers, technical managers, and maintenance supervisors, each with more than five years of professional experience. Participants compared the heatmap-based interface overlaid on Google Maps against traditional graphical representations displayed in the Grafana dashboard (Figure 9) for interpreting predicted cockroach activity.

The evaluation followed human-centered design principles as outlined in the ISO 9241-210 standard [41] and employed a 5-point Likert scale [42] to assess five key statements regarding the usefulness and intuitiveness of each visualization approach. This methodology enabled a structured, user-informed comparison of how effectively each interface supports rapid comprehension, spatial awareness, and operational decision-making in the context of pest monitoring and intervention planning.

The results of this evaluation, summarized in Table 4, show a strong consensus among experts regarding the operational advantages of the heatmap. 91% of participants agreed or strongly agreed that the map allows for faster identification of critical intervention zones (S1), 86% considered it more intuitive for understanding infestation risk (S2), and 89% judged it more suitable for daily use in control centers (S3). Furthermore, 95% of the experts confirmed that the map is appropriate for daily operational use (S4).

However, as shown in Figure 10, statement S5 (that the heatmap provides more precise information than traditional graphs for detailed quantitative analysis) received a significantly lower level of agreement (64%). This finding indicates that, although the heatmap is superior for strategic decision-making and situational awareness, traditional graphs remain preferred for tasks requiring exact numerical precision.
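The agreement percentages reported in this subsection correspond to the share of respondents choosing 4 (agree) or 5 (strongly agree) on the 5-point scale. The following minimal sketch shows that calculation. The ratings list is hypothetical and is not the raw data of the study; the function name `agreement_rate` is likewise introduced here for illustration only.

```python
def agreement_rate(responses, threshold=4):
    """Share of Likert responses at or above `threshold` (4 = agree, 5 = strongly agree)."""
    if not responses:
        raise ValueError("no responses")
    return sum(r >= threshold for r in responses) / len(responses)

# Hypothetical ratings from 22 participants for a single statement.
ratings = [5] * 12 + [4] * 8 + [3] * 1 + [2] * 1

rate = agreement_rate(ratings)
print(f"{rate:.0%}")  # 20 of 22 respondents agree, printed as 91%
```

With a panel of 22, each respondent moves the agreement rate by roughly 4.5 percentage points, which is worth keeping in mind when comparing statements.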

4. Discussion

The results of this study provide a multidimensional analysis of the application of predictive data mining techniques—supported by IoT sensors—to address the complex, highly imbalanced operational problem of predicting cockroach infestation. The discussion is structured around four main interpretive axes emerging from the findings: the redefinition of the hierarchy among environmental predictors, the differential capacity of algorithms to handle extreme class imbalance, the critical role of interpretability and visualization in operational adoption, and the inherent limitations that outline the path for future research.

4.1. Algorithmic Robustness in Extreme Imbalance: Beyond Global Accuracy

The systematic comparative evaluation highlights a significant disparity in the ability of different data mining algorithms to manage the extremely skewed class distribution (77.2% “None”). The consistent statistical superiority of gradient boosting-based models (XGBoost, LightGBM, CatBoost) and the soft voting ensemble (Ensemble_soft), confirmed by the Friedman test and Nemenyi diagrams (Figure 4), goes beyond mere accuracy metrics.

Their exceptional performance in Macro-F1 Score (>0.92) and AUPRC for the “High” class (>0.97) (Table 2) indicates that these algorithms possess intrinsic mechanisms, such as adaptive loss functions or asymmetric tree growth, that enable them to learn robust signals from minority classes without being overwhelmed by the majority. In contrast, the significantly lower performance of classical algorithms such as KNN, SVM, or simple decision trees, even with class-balancing adjustments, demonstrates that in domains with extreme imbalance, algorithm selection is not a technical detail but a critical factor determining the very feasibility of a predictive solution. This finding has direct implications for the design of future operational systems, where effectiveness in detecting rare but critical events is paramount.
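Why global accuracy is a misleading yardstick under this class skew can be shown with a short, self-contained sketch. It scores a trivial classifier that always predicts “None” on synthetic labels mirroring the reported distribution (77.2% “None”, 4% “High”). The 18.8% share for “Low” is an assumption inferred from those two figures, and the labels are synthetic, not the study's data.

```python
CLASSES = ("None", "Low", "High")

def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def macro_f1(y_true, y_pred, classes=CLASSES):
    """Unweighted mean of per-class F1, so every class counts equally."""
    f1_scores = []
    for c in classes:
        tp = sum(t == c and p == c for t, p in zip(y_true, y_pred))
        fp = sum(t != c and p == c for t, p in zip(y_true, y_pred))
        fn = sum(t == c and p != c for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        # A class that is never predicted and never present scores 0 here.
        f1_scores.append(2 * tp / denom if denom else 0.0)
    return sum(f1_scores) / len(classes)

# Synthetic labels: 772 "None", 188 "Low" (assumed share), 40 "High".
y_true = ["None"] * 772 + ["Low"] * 188 + ["High"] * 40
majority_pred = ["None"] * len(y_true)

acc = accuracy(y_true, majority_pred)   # 0.772
mf1 = macro_f1(y_true, majority_pred)   # about 0.29
```

The majority-class baseline reaches 77.2% accuracy while never detecting a single “Low” or “High” case, and its Macro-F1 of about 0.29 exposes that failure. This is the reason Macro-F1 and per-class AUPRC are the more informative metrics here.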

4.2. The Supremacy of CO2: Reinterpreting Traditional Environmental Predictors

The most robust and consistently replicated finding across all metrics and interpretability methods is the identification of carbon dioxide (CO2) as the single most influential predictor for modeling P. americana activity. This result substantially challenges the prevailing consensus in the specialized literature, which for decades has positioned temperature and humidity as the primary environmental determinants [11,15]. The SHAP analysis not only quantifies this primacy, showing a mean impact nearly double that of humidity and more than double that of temperature (Figure 6), but also reveals its causal and contextual nature.

This dominance can be interpreted by recognizing CO2 as a proxy for ongoing biological processes within the sewer ecosystem. While temperature and humidity define a potential habitability envelope, CO2 concentrations constitute a direct, integrative chemical signature of active biological metabolism, particularly anaerobic decomposition of organic matter, which serves as the primary food substrate [13]. Thus, elevated CO2 levels (above the identified threshold of 794 ppm) do not merely indicate favourable conditions but signal the active presence and metabolic activity of colonies, functioning as a more immediate and specific bioindicator than thermohygrometric parameters.
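The threshold reading above can be expressed as a simple screening rule. The sketch below is an illustration under stated assumptions only: the sensor identifiers and readings are hypothetical, the function name `flag_co2` is introduced here, and a fixed cutoff is a simplification of the trained classifier, which also takes temperature and humidity into account.

```python
CO2_HIGH_THRESHOLD_PPM = 794.0  # threshold reported in the text

def flag_co2(readings_ppm, threshold=CO2_HIGH_THRESHOLD_PPM):
    """Return (sensor_id, ppm) pairs whose CO2 reading exceeds the threshold."""
    return [(sid, ppm) for sid, ppm in readings_ppm.items() if ppm > threshold]

# Hypothetical sensor readings in ppm.
readings = {"node-01": 512.0, "node-02": 794.0, "node-03": 1030.5}

flagged = flag_co2(readings)
```

A reading exactly at the cutoff is not flagged in this sketch, since the text describes levels above the threshold.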

The class-wise SHAP analysis (Figure 7) adds a crucial layer of understanding by showing that temperature and humidity are not irrelevant but rather modulatory and conditional. For instance, the positive role of high humidity for the “Low” class and its negative effect for the “High” class suggests the existence of complex thresholds and interactions where these traditional factors can either amplify or attenuate the effect of the dominant predictor (CO2), redefining their contribution as secondary and contextual rather than deterministic.

4.3. Interpretability and Visualization: From Black-Box Models to Actionable Intelligence

The ultimate utility of a predictive model in an operational setting depends not only on its accuracy but also on end users’ ability to understand and act upon its outputs. This study addressed this challenge at two complementary levels. First, the application of the SHAP method provided local and global explanations that go beyond traditional feature importance metrics. By decomposing how each variable contributes to each specific prediction (Figure 7), SHAP transforms the model into a diagnostic tool, enabling managers not only to trust an alert but also to understand the underlying environmental logic (for example, discerning whether a “High” risk alert is primarily driven by a CO2 spike or by a combination of factors).

Second, the usability evaluation with domain experts (Table 4, Figure 10) offers strong empirical validation of the operational value of geospatial visualization. The high agreement level (≥86%) that heatmaps improve rapid identification of critical zones, intuitiveness, and suitability for daily use confirms the hypothesis that this representation better aligns with real-time situational awareness and strategic decision-making needs. The nuanced result for statement S5, where only 64% found the heatmap superior for detailed quantitative analysis, is equally revealing. It suggests that geospatial visualizations and traditional analytics (e.g., Grafana dashboards) are not mutually exclusive but complementary, each optimal for a different phase of the management cycle: heatmaps for monitoring and prioritization, and dashboards for auditing and in-depth analysis.

4.4. Limitations and Practical Considerations

Despite the methodological rigor and positive outcomes, it is important to acknowledge this study’s limitations to contextualize its scope and guide future work. A primary limitation is the geographic and climatic specificity of the study area. The external validity of the model—particularly the identified CO2 thresholds—must be evaluated in sanitation networks across different climatic regions, topographies, and land-use patterns to ensure generalizability. Future work will focus on multi-city trials to assess transferability and determine whether CO2 thresholds and variable importance hierarchies require recalibration in distinct urban contexts.

Second, the current model relies on a limited set of three environmental variables. Incorporating additional predictors—such as concentrations of other gases (CH4, H2S), hydraulic parameters, or external data (precipitation, seasonality)—could significantly enrich the model, capturing more complex interactions and enhancing both predictive power and explanatory capacity.

A third consideration concerns the economic and operational feasibility of large-scale deployment. The initial cost of IoT infrastructure and ongoing maintenance may pose barriers to widespread adoption. However, the anticipated development of more affordable sensors and advances in communication technologies are likely to enable faster, more cost-effective scaling of these solutions.

5. Conclusions

This work is framed within an industrial special issue, prioritizing robustness, deployability, and decision-support value over theoretical algorithmic novelty. In this sense, this study has demonstrated the viability of an integrated predictive system for proactive pest management in sanitation networks, validating the three research hypotheses through a rigorous approach combining IoT sensing, advanced data mining, and geospatial visualization.

First, Hypothesis H1 is confirmed: data mining models—particularly those based on gradient boosting (XGBoost, LightGBM, CatBoost) and ensemble methods—can be used with high efficacy to predict Periplaneta americana activity levels from environmental data. Their exceptional performance (Macro-F1 > 0.92) on a markedly imbalanced dataset—where the class of greatest interest (“High”) represents only 4%—highlights their robustness and suitability for such applications. These algorithms not only significantly outperformed classical methods but also proved capable of extracting reliable predictive patterns from complex environmental signals, establishing a methodological precedent for data mining in critical infrastructure monitoring.

Second, the results strongly support Hypothesis H2: carbon dioxide (CO2) concentration emerges as the most influential environmental predictor, clearly surpassing temperature and relative humidity. The SHAP analysis quantified this primacy, revealing that CO2’s average impact on model output is approximately double that of humidity and more than double that of temperature. This finding redefines the relevant bioindicator framework for sewer pest management, shifting focus from traditional thermohygrometric parameters to a direct marker of biological activity and organic matter decomposition. The identification of a ~794 ppm threshold as a cutoff for severe proliferation further provides a quantitative, actionable criterion for early warning alerts.

Finally, Hypothesis H3 is validated: geospatial heatmap visualization of predictive outputs proved significantly more effective than traditional graphical representations for operational interpretation and decision-making. The usability evaluation with 22 experts revealed overwhelming consensus (≥86% agreement) that this interface enables faster identification of critical zones, is more intuitive, and is better suited for daily use in control centers. This result confirms that effectively translating model output into an intuitive visual tool is essential for bridging the gap between technical prediction and on-the-ground preventive action.

In summary, this work not only verifies the stated hypotheses but also delivers a complete, reproducible methodological framework integrating robust IoT data capture, imbalance-resilient predictive modeling, causal variable interpretation, and operational visualization. It demonstrates that urban pest management can be transformed from a reactive, biocide-dependent paradigm to a predictive, sustainable, and data-driven approach.

The findings not only offer a concrete solution but also chart a clear path for future development. For this predictive approach to mature and scale, several research directions are valuable. Notably, CO2 thresholds and variable importance were established in a specific network under a Mediterranean climate; the logical next step is to test the model’s adaptability in other contexts—such as sanitation networks in cities with different climates, more complex topographies, or higher urban density. This external validation is crucial to ensure the tool is robust and scalable, evolving from a successful case study into a widely applicable solution.

Simultaneously, the current predictive model has significant room to become richer and more precise—for example, by incorporating new data sources such as measurements of other decomposition-related gases (methane or hydrogen sulfide), in-pipe flow parameters, or real-time meteorological data. Such enhancements could reveal subtler environmental interactions and not only refine predictions but also deepen understanding of the complex ecology of the urban subsurface.

From a practical standpoint, the long-term economic and operational viability of the IoT infrastructure remains an open challenge. Research into active data mining strategies—for instance, algorithms that dynamically decide when and how frequently to measure based on estimated risk—could drastically optimize energy consumption and sensor lifespan, reducing maintenance costs and enhancing system sustainability.
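One simple form such a risk-driven measurement policy could take is sketched below. Everything in it is hypothetical: the function name, the linear mapping, and the 5-minute and 120-minute bounds are illustrative assumptions, not parameters from this study.

```python
def next_interval_minutes(risk, min_interval=5.0, max_interval=120.0):
    """Map an estimated risk in [0, 1] to a sampling interval in minutes.

    Higher risk gives a shorter interval; the mapping is a linear interpolation.
    """
    if not 0.0 <= risk <= 1.0:
        raise ValueError("risk must be in [0, 1]")
    return max_interval - risk * (max_interval - min_interval)

# Hypothetical risk estimates, e.g. the predicted probability of the "High" class.
schedule = {risk: next_interval_minutes(risk) for risk in (0.0, 0.5, 1.0)}
```

A sensor in a low-risk zone would then report every two hours, while one in a predicted hotspot would report every five minutes, concentrating battery use where the information matters most.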

True operational transformation, however, will come with full integration into municipal workflows. The ultimate goal is to automatically connect the predictive platform with existing asset management systems (CMMS) and digital mapping tools (GIS) already used by city authorities. In this vision, a high-risk alert would automatically generate a work order for maintenance teams, assign an optimized route, and log the completed intervention—closing the loop of intelligent management without manual intervention.

Finally, the versatility of the methodological framework developed here invites broader application. The same logic of sensorization, data mining, and visualization could be extended to predict the activity of other vectors—such as rodents or mosquitoes—or even to monitor other infrastructure issues, such as pipe corrosion or grease and sediment accumulation.

Advancing along these lines will decisively contribute to realizing the ideal of smart, resilient cities—shifting from a management model based on reaction and fixed schedules to one governed by data and anticipation, where infrastructure is not merely repaired but proactively and sustainably cared for in service of public health.