1. Introduction

Graphs are a fundamental abstraction for modeling relational data across domains such as data mining, network analysis, and information retrieval [1,2,3]. However, their pairwise structure inherently limits the ability to represent high-order interactions involving multiple entities [4,5,6,7,8]. Hypergraphs address this limitation by allowing a hyperedge to connect an arbitrary number of nodes, providing a natural representation for group-level or co-occurring interactions such as collaborative authorship or multi-item transactions.

Local clustering aims to identify a coherent, densely connected region around a seed node [9,10,11]. It enables scalable, seed-centric exploration without requiring global processing of the entire structure, making it valuable for large and complex datasets. While local clustering has been extensively studied on graphs, extending it to hypergraphs, particularly heterogeneous hypergraphs with multi-typed nodes and hyperedges, remains challenging. Existing hypergraph clustering techniques often treat all nodes and hyperedges uniformly, ignoring semantic types, interaction roles, or structural heterogeneity. This leads to clusters that are structurally connected but semantically incoherent, limiting the interpretability and utility of the results.

To overcome these limitations, this paper investigates the problem of local clustering in heterogeneous hypergraphs and introduces HHLC, a heuristic framework built on a type-aware conductance measure. This measure jointly captures high-order connectivity and semantic consistency, enabling clusters that are both structurally coherent and type-sensitive. By integrating heterogeneity into the clustering process, our approach addresses the shortcomings of traditional node-centric and uniform hypergraph methods.

1.1. Applications

Heterogeneous hypergraphs naturally arise in many real-world systems where interactions involve multiple entities of different types. Local clustering on such structures enables focused exploration centered on a given seed while preserving both structural and semantic signals [12,13,14].

Scholarly networks: A paper may be associated with authors, venues, topics, and affiliations. Identifying a local cluster around a seed paper or author helps reveal tightly related research themes or collaboration communities.

Knowledge graphs and multi-modal information retrieval: Queries often involve entities connected through high-order relations (e.g., “diseases-genes-drugs”, “events-locations-documents”). Heterogeneous local clustering enables retrieving semantically coherent contextual neighborhoods for downstream reasoning or search.

Social and interaction networks: Modern platforms include users, groups, posts, tags, and events. Local clustering around a user or interaction event helps uncover latent micro-communities or activity patterns that cannot be captured by pairwise graph models.

In all these scenarios, interactions are inherently high-order and multi-typed, making heterogeneous hypergraphs a natural representation. A type-aware local clustering algorithm can therefore uncover compact, semantically coherent neighborhoods that are difficult to detect using homogeneous or graph-based methods.

1.2. Motivation and Challenge

Hypergraphs naturally capture high-order interactions, yet most existing models assume homogeneous nodes and hyperedges, overlooking the semantic distinctions and structural asymmetries that arise in real heterogeneous data. This limitation becomes more pronounced in local clustering, where current methods typically treat all entity types uniformly and fail to distinguish between type-consistent and cross-type expansions. Moreover, classical graph-based conductance measures do not extend directly to heterogeneous hypergraphs, as they ignore partially overlapping high-order relations and type-specific boundaries. As a result, existing approaches often yield clusters that are structurally connected but semantically incoherent, mixing unrelated types and diminishing interpretability. These challenges call for a principled, type-aware formulation of local clustering that jointly preserves high-order connectivity and semantic consistency.

1.3. Contribution

To address these challenges, we propose a new framework for local clustering in heterogeneous hypergraphs that integrates type semantics directly into both the objective function and the cluster expansion process. Our method leverages the expressive power of hyperedges to capture high-order relationships while enforcing type-aware consistency to produce coherent, interpretable clusters centered around a given seed hyperedge.

Our main contributions are as follows:

We formalize the problem of type-aware local clustering in heterogeneous hypergraphs, introducing a principled setting where both high-order connectivity and semantic heterogeneity are simultaneously considered.

We propose a novel heterogeneity-aware conductance metric that jointly evaluates structural compactness and type consistency, enabling more faithful assessment of cluster quality in multi-typed hypergraphs.

We design an enhanced greedy expansion algorithm that selectively incorporates type-consistent hyperedges, penalizes cross-type interactions, and prunes low-contribution structures, leading to clusters that are both semantically coherent and structurally compact.

We conduct extensive experiments on multiple real-world heterogeneous datasets, demonstrating that our approach consistently outperforms strong baselines in terms of conductance, semantic purity, and overall clustering quality.

2. Related Works

Hypergraphs extend traditional graph structures by allowing edges, known as hyperedges, to connect more than two vertices, enabling a richer representation of complex relationships in data [15]. This generalization has proven particularly useful in domains such as computer vision, bioinformatics, and social network analysis, where interactions often involve groups rather than pairs. Recent research has focused on leveraging hypergraph spectral theory and partitioning techniques to improve clustering and classification tasks [16]. However, most classical hypergraph models assume homogeneity in vertex and edge types, which limits their expressiveness in scenarios involving multi-modal or multi-relational data [17].

Heterogeneous hypergraphs address this limitation by using different types of nodes and hyperedges. This allows for more detailed modeling of real-world systems such as academic networks, recommendation platforms, and knowledge graphs [18]. Local clustering focuses on finding tightly connected communities around a seed node or within a small region. It is popular because it scales well and supports personalized applications. Common methods adapt random walks, diffusion, or personalized PageRank to handle type-specific interactions and edge semantics [17]. Despite these advances, challenges remain in balancing computational efficiency with the preservation of structural and semantic heterogeneity [16].

Graph-based models provide a structured way to represent entities and their relationships. Traditional methods such as collaborative filtering and matrix factorization have been widely used. These approaches were later improved by graph neural networks (GNNs), which use neighborhood information to learn richer representations of users and items [19].

GNN-based methods capture more context than earlier techniques. However, they are usually limited to pairwise interactions between nodes. This restriction makes it hard to model complex relationships that often appear in real-world recommendation scenarios. For example, a user’s preferences may depend on multiple factors such as item categories, social connections, and temporal patterns. Capturing these higher-order interactions requires more advanced models beyond simple graphs.

To address this limitation, hypergraph-based models have gained attention for their ability to represent high-order relationships [20]. In a hypergraph, a hyperedge can connect multiple nodes simultaneously, enabling the modeling of group-level interactions such as co-purchases, shared attributes, or multi-user collaborations. Recent works have applied hypergraph neural networks (HGNNs) to recommendation tasks, demonstrating improved performance by capturing richer structural information [19].

Despite these advances, the study of local clustering in hypergraphs remains relatively underexplored. Local clustering is a fundamental task in graph analysis, aiming to identify densely connected subgraphs around a seed node. While extensively studied in traditional graphs, its extension to hypergraphs introduces new challenges due to the complexity of hyperedge connectivity and the lack of well-defined neighborhood structures [21].

Most hypergraph-based recommendation models treat nodes and hyperedges as homogeneous, which ignores the semantic diversity of real-world data. Heterogeneous hypergraphs address this issue by allowing multiple types of nodes and hyperedges, providing a more expressive framework for modeling diverse entities and relationships [18]. Recent studies have started exploring this direction by incorporating node and edge types into hypergraph learning [22]. However, applications to local clustering and recommendation remain limited [23], and more research is needed to fully leverage heterogeneity for better performance.

In the broader domain of hypergraph community detection, Kamiński et al. (2024) introduce h-Louvain, a scalable modularity-based method that effectively balances cluster coherence and size [24]. Meanwhile, Xiang et al. (2024) develop HGNE, which combines hypergraph convolution with contrastive node embedding to detect communities through higher-order relationships [25]. Although these methods achieve strong performance in homogeneous hypergraphs, they generally assume uniform node and edge types. Our work bridges this gap by adapting local clustering to heterogeneous hypergraphs, explicitly modeling node and hyperedge types to enhance clustering quality in complex systems.

Our work builds upon these foundations by proposing a novel algorithm for local clustering in heterogeneous hypergraphs. By leveraging the structural and semantic richness of heterogeneous hypergraphs, our method aims to uncover meaningful clusters that better capture complex relationships among entities. This approach extends existing clustering techniques by explicitly modeling heterogeneity, which is often overlooked in prior work.

3. Preliminaries

Definition 1 (Hypergraph). Let $H = (V, E)$ denote an unweighted hypergraph, where V is the set of n nodes and E is the set of m hyperedges. Each hyperedge $e \in E$ is a subset of V.

Definition 2 (Dual-Hypergraph). The dual hypergraph of a hypergraph $H = (V, E)$ is the hypergraph $H^{*}$ whose nodes correspond to the hyperedges E of the original hypergraph H and whose hyperedges correspond to the nodes V of the original hypergraph H.
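The node/hyperedge swap in Definition 2 can be illustrated with a minimal sketch. The dictionary representation (`{edge_id: set_of_nodes}`), the function name `dual_hypergraph`, and the toy data are assumptions made for illustration; they are not taken from the paper's implementation.

```python
from collections import defaultdict


def dual_hypergraph(hyperedges):
    """Return the dual of a hypergraph given as {edge_id: set_of_nodes}.

    Every original hyperedge becomes a node of the dual, and every original
    node becomes a dual hyperedge holding the hyperedges it belonged to.
    """
    dual = defaultdict(set)
    for edge_id, nodes in hyperedges.items():
        for node in nodes:
            dual[node].add(edge_id)
    return dict(dual)


# Hypothetical toy hypergraph: two hyperedges sharing node "b".
H = {"e1": {"a", "b"}, "e2": {"b", "c"}}
H_dual = dual_hypergraph(H)
```

In this toy case, applying the function twice returns the original dictionary, which matches the intuition that the dual is an alternative view of the same incidence structure.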

Definition 3 (Heterogeneous Hypergraph, HH). $HH = \{H_1, H_2, \dots, H_k\}$, where each $H_i$ is a hypergraph or dual-hypergraph, and for $i \neq j$, $H_i \neq H_j$.

Remark 1. A dual hypergraph provides an alternative representation of a hypergraph by swapping nodes and hyperedges. In an HH, each hypergraph captures a distinct type of information and is therefore unique. No two hypergraphs within the heterogeneous hypergraph represent the same structural or semantic relationships.

Definition 4 (Local Clustering). Given an unweighted hypergraph H and a seed node or seed hyperedge s, the goal of local clustering is to identify a cluster S within H that originates from s and satisfies the following two properties: (i) seed relevance, meaning that the nodes and hyperedges in S are strongly related to the given seed s; and (ii) locally optimal cluster quality, meaning that S achieves a high quality with respect to a chosen structural or semantic criterion within its local neighborhood, even if it is not globally optimal.

Definition 5 (Heterogeneous Clustering). Given a heterogeneous hypergraph, , a seeding node s, where , and a heterogeneous filter, , where , and , heterogeneous clustering, , is a sub heterogeneous hypergraph from the seed node containing the edges aligning the filter over heterogeneous aspects: where is all the possible subsets of .

Remark 2. Heterogeneous clustering expands from a seed node by including only the hyperedges that satisfy a predefined filter function. All nodes incident to these filtered hyperedges are added as the expansion proceeds. As a result, the cluster contains only hyperedges (and their associated nodes) matching specific edge types, such as authored-by or published-in, thereby ensuring type-consistent growth.
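The filter-driven growth described in Remark 2 can be sketched as follows. This is a simplified illustration, assuming the filter is a set of allowed hyperedge type labels; the function name `filtered_expansion`, the `max_steps` parameter, and the toy data are hypothetical.

```python
def filtered_expansion(hyperedges, edge_types, seed, allowed_types, max_steps=10):
    """Grow a cluster from `seed` using only hyperedges whose type is allowed.

    hyperedges: {edge_id: set_of_nodes}; edge_types: {edge_id: type_label}.
    Returns (cluster_nodes, cluster_edge_ids).
    """
    nodes, edges = {seed}, set()
    for _ in range(max_steps):
        # New hyperedges that touch the current cluster and pass the filter.
        new_edges = {
            e
            for e, members in hyperedges.items()
            if e not in edges
            and edge_types[e] in allowed_types
            and members & nodes
        }
        if not new_edges:
            break
        edges |= new_edges
        # All nodes incident to the accepted hyperedges join the cluster.
        for e in new_edges:
            nodes |= hyperedges[e]
    return nodes, edges


# Hypothetical toy data: papers linked by two hyperedge types.
H = {"e1": {"p1", "p2"}, "e2": {"p2", "p3"}, "e3": {"p3", "p4"}}
types = {"e1": "authored-by", "e2": "published-in", "e3": "authored-by"}
nodes, edges = filtered_expansion(H, types, "p1", {"authored-by"})
```

With only `authored-by` allowed, growth stops at `p2` because the sole bridge to `p3` is a `published-in` hyperedge; allowing both types lets the expansion reach every node.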

Definition 6 (Compatible Hypergraph). Given a hypergraph, , a compatible hypergraph of H, denoted as , , where .

Remark 3. Two compatible hypergraphs are either homogeneous hypergraphs or can be converted to homogeneous hypergraphs. This means that hypergraphs have nodes or edges of the same type in themselves or their dual hypergraphs.

Definition 7 (Heterogeneous Hypergraph Conductance). Given a heterogeneous hypergraph, , and a heterogeneous clustering, , the heterogeneous hypergraph conductance , where Each component measures the proportion of hyperedges connecting within the cluster, i.e., the sum of internal hyperedges , versus those connecting outside the cluster . A higher indicates stronger internal connectivity and a more well-separated cluster.

Remark 4. The heterogeneous hypergraph conductance is defined as a vector-valued measure to capture type-specific structural properties across different compatible hypergraphs. This vector formulation preserves fine-grained information about heterogeneity and is not optimized directly. In the proposed framework, the conductance vector serves as an intermediate representation, from which a scalar conductance objective is derived (Section 4.2) to guide greedy local expansion.

4. Our Approach

Building on the formal framework of heterogeneous hypergraphs and clustering defined in Section 3, we now describe our algorithmic approach for performing local clustering in such structures. Our method is centered around three core ideas: (i) type-consistent cluster expansion, (ii) cross-type penalty regularization, and (iii) low-contribution hyperedge pruning. These techniques are combined in a greedy clustering algorithm that iteratively expands from a given seed node.

4.1. Cluster Initialization and Type Filtering

Given a heterogeneous hypergraph $HH$ and a seed node $s$, we initialize a candidate cluster $S = \{s\}$. To maintain semantic coherence, we restrict candidate expansions to nodes of the same type as s or nodes connected through relevant edge types specified by the heterogeneous filter. These type constraints enforce consistency in local clusters, avoiding noisy or irrelevant node types.

At each step, the typed neighborhood of S consists of the nodes outside S that share a hyperedge with S and satisfy these type constraints.

4.2. Conductance-Guided Node Expansion

Although the heterogeneous conductance $\Phi$ is defined as a vector-valued measure, greedy optimization requires a scalar objective to compare candidate expansions. For each candidate node $v$, we evaluate the conductance of the new cluster $S \cup \{v\}$ using the heterogeneous conductance vector $\Phi$ defined in Equation (2). To guide greedy expansion, we derive a scalar conductance objective $\phi(S)$ by aggregating the vector components:

$$\phi(S) = \sum_{i=1}^{k} w_i \, \phi_i(S),$$

where k is the number of compatible hypergraphs, $\phi_i(S)$ denotes the conductance of the i-th compatible hypergraph, and $w_i$ represents its relative importance. In this work, we use uniform weights $w_i = 1/k$.

Given a candidate expansion by adding node v, the improvement in conductance is computed as

$$\Delta\phi(v) = \phi(S) - \phi(S \cup \{v\}).$$

A candidate is accepted only if $\Delta\phi(v) > 0$, ensuring monotonic improvement of cluster quality.
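The aggregation and acceptance rule above can be sketched in a few lines. The function names are hypothetical, and the sign convention is an assumption: the gain is taken as the decrease in the scalar value, following the statement in Section 4.3 that an accepted step lowers the scalar conductance.

```python
def scalar_conductance(components, weights=None):
    """Weighted sum of per-hypergraph conductance values.

    With weights=None, uniform weights 1/k are used, where k = len(components).
    """
    if weights is None:
        weights = [1.0 / len(components)] * len(components)
    return sum(w * c for w, c in zip(weights, components))


def conductance_gain(before, after, weights=None):
    """Gain of an expansion, taken here as the decrease in the scalar value
    (assumed sign convention)."""
    return scalar_conductance(before, weights) - scalar_conductance(after, weights)


def accept(before, after, weights=None):
    """Accept a candidate only when the gain is strictly positive."""
    return conductance_gain(before, after, weights) > 0


# Hypothetical component values for two compatible hypergraphs.
gain = conductance_gain([0.4, 0.6], [0.2, 0.4])
```

Non-uniform weights can be passed explicitly when some compatible hypergraphs should count more than others.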

4.3. Cross-Type Edge Penalty

To discourage structurally ambiguous or semantically noisy connections, we introduce a penalty factor $\lambda$ on hyperedges that involve cross-type connections. That is, if a hyperedge e connects nodes of multiple types, its contribution to the external volume is multiplied by $\lambda$. This reduces the likelihood of expanding into mixed-type regions and encourages type-consistent cluster growth.

The modified conductance becomes:

Effect of the penalty: By increasing the contribution of cross-type hyperedges to the external volume, the penalty explicitly amplifies the boundary cost of semantically inconsistent connections. As a result, candidate expansions that introduce cross-type noise are less likely to yield positive conductance gain.
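The effect of the penalty on the external volume can be made concrete with a short sketch. It assumes that each boundary hyperedge of the unweighted hypergraph contributes one unit, scaled by the penalty factor when the hyperedge mixes node types; the function name, the `penalty` parameter, and the toy data are hypothetical.

```python
def penalized_external_volume(hyperedges, node_types, cluster, penalty=1.5):
    """Sum boundary-hyperedge contributions, scaling cross-type ones by `penalty`.

    A hyperedge is on the boundary when it intersects the cluster without
    being fully contained in it. Unit contribution per hyperedge is an
    assumption made for this sketch.
    """
    total = 0.0
    for members in hyperedges.values():
        on_boundary = bool(members & cluster) and not members <= cluster
        if not on_boundary:
            continue
        is_cross_type = len({node_types[v] for v in members}) > 1
        total += penalty if is_cross_type else 1.0
    return total


# Hypothetical toy data: three users and one item.
H = {"e1": {"u1", "u2"}, "e2": {"u2", "u3"}, "e3": {"u2", "i1"}}
types = {"u1": "user", "u2": "user", "u3": "user", "i1": "item"}
volume = penalized_external_volume(H, types, {"u1", "u2"})
```

Here `e1` is internal, `e2` is a same-type boundary hyperedge, and `e3` is a cross-type boundary hyperedge, so the cross-type edge weighs more in the boundary cost than it would with a penalty of 1.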

Monotonicity and termination: Since $\lambda$ only affects the external term and never decreases the internal contribution, any accepted expansion step strictly decreases the scalar conductance defined in Section 4.2. Therefore, the greedy expansion process is monotonic and guarantees termination either when no candidate node yields a positive conductance gain or when the maximum number of iterations is reached.

Parameter choice: In practice, $\lambda$ controls the trade-off between semantic purity and structural diversity. Larger values of $\lambda$ enforce stronger type consistency but may lead to smaller clusters, while smaller values allow for more heterogeneous expansion. We analyze the sensitivity of HHLC to different $\lambda$ values in Section 5.6.

4.4. Low-Contribution Edge Pruning

Suppose no candidate node yields an improvement in conductance. In that case, we apply a refinement step: we remove the internal hyperedge in S with the lowest aggregate degree (i.e., total node degrees across the cluster). This encourages compactness and removes redundant or weakly informative structures. If pruning also fails to improve conductance, the algorithm terminates.
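The refinement step can be sketched as below. The text does not fix how node degrees are counted, so this sketch assumes a node's degree is the number of cluster hyperedges that contain it; the function name and toy data are hypothetical.

```python
def prune_weakest_hyperedge(hyperedges, cluster_edges):
    """Remove the cluster hyperedge with the lowest aggregate member degree.

    Assumption: a node's degree is the number of cluster hyperedges containing it.
    Returns (remaining_edge_ids, removed_edge_id).
    """
    if not cluster_edges:
        return set(), None
    degree = {}
    for e in cluster_edges:
        for v in hyperedges[e]:
            degree[v] = degree.get(v, 0) + 1

    def aggregate_degree(e):
        return sum(degree[v] for v in hyperedges[e])

    # Sorting first makes tie-breaking deterministic.
    weakest = min(sorted(cluster_edges), key=aggregate_degree)
    return set(cluster_edges) - {weakest}, weakest


# Hypothetical toy data: e3 hangs off the cluster through a single shared node.
H = {"e1": {"a", "b", "c"}, "e2": {"b", "c", "d"}, "e3": {"d", "x"}}
remaining, removed = prune_weakest_hyperedge(H, {"e1", "e2", "e3"})
```

In a full loop, the pruned cluster would be kept only if its conductance improves; otherwise the algorithm stops, as described above.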

4.5. Complexity Analysis

We analyze the time complexity and parallelization potential of the proposed greedy clustering algorithm (Algorithm 1) on heterogeneous hypergraphs.

Algorithm 1: Heterogeneous Hypergraph Conductance-Aware Local Clustering
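The greedy loop described in Sections 4.1 to 4.3 can be approximated by the sketch below. It is not a transcription of Algorithm 1: the scoring function `penalized_boundary_fraction` is an illustrative lower-is-better stand-in (an assumption, chosen to match the wording that accepted steps decrease conductance), the pruning step of Section 4.4 is omitted, and all names and toy data are hypothetical.

```python
def penalized_boundary_fraction(hyperedges, node_types, cluster, penalty=1.5):
    """Illustrative lower-is-better score (an assumption, not the paper's formula).

    Internal hyperedges count 1 each; boundary hyperedges count 1, or
    `penalty` when they mix node types. Returns external / (internal + external).
    """
    internal, external = 0.0, 0.0
    for members in hyperedges.values():
        if not members & cluster:
            continue  # hyperedge does not touch the cluster
        if members <= cluster:
            internal += 1.0
        else:
            mixed = len({node_types[v] for v in members}) > 1
            external += penalty if mixed else 1.0
    total = internal + external
    return external / total if total else 0.0


def greedy_local_cluster(hyperedges, node_types, seed, max_steps=10, penalty=1.5):
    """Grow a cluster from `seed`, adding one same-type node per step while the score drops."""
    cluster = {seed}
    current = penalized_boundary_fraction(hyperedges, node_types, cluster, penalty)
    for _ in range(max_steps):
        # Candidates: nodes of the seed's type that share a hyperedge with the cluster.
        candidates = {
            v
            for members in hyperedges.values()
            if members & cluster
            for v in members
            if v not in cluster and node_types[v] == node_types[seed]
        }
        best_node, best_score = None, current
        for v in sorted(candidates):  # sorted for deterministic tie-breaking
            s = penalized_boundary_fraction(hyperedges, node_types, cluster | {v}, penalty)
            if s < best_score:
                best_node, best_score = v, s
        if best_node is None:
            break  # no candidate strictly improves the score
        cluster.add(best_node)
        current = best_score
    return cluster


# Hypothetical toy data: four users and one item.
H = {"e1": {"u1", "u2"}, "e2": {"u1", "u2", "u3"}, "e3": {"u3", "i1"}, "e4": {"u3", "u4"}}
types = {"u1": "user", "u2": "user", "u3": "user", "u4": "user", "i1": "item"}
cluster = greedy_local_cluster(H, types, "u1")
```

On this toy input the loop adds `u2` and then stops: adding `u3` would pull two new boundary hyperedges into play, one of them cross-type, so the score would rise.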

4.5.1. Time Complexity

At each iteration, the algorithm evaluates all candidate nodes for possible inclusion. For each candidate, the conductance score is computed based on edge inclusion and penalty-weighted boundary cost. Let d be the average number of hyperedges per node, and let c be the number of candidates per iteration. Assuming hyperedge size is bounded, each conductance computation is $O(d)$, leading to $O(c \cdot d)$ per step. Over a maximum of T iterations, the overall time complexity is $O(T \cdot c \cdot d)$.

4.5.2. Parallelizability

The conductance evaluations for all candidate nodes in the typed neighborhood are independent and can be executed in parallel. This makes each iteration naturally parallelizable over candidates using multi-threaded or GPU-based computation frameworks. Additionally, if the underlying hypergraph data structure supports parallel neighbor and edge access, further speedup can be achieved during neighborhood computation and pruning steps.

Our algorithm is thus scalable to large hypergraphs and suitable for deployment in real-time or interactive applications where fast local clustering is required.
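Because candidate evaluations are independent, one iteration can be dispatched to a worker pool. The sketch below uses Python's standard `concurrent.futures.ThreadPoolExecutor`; the function name `best_candidate`, the lower-is-better `score` callable, and the toy score are assumptions for illustration. In CPython, threads mainly help when scoring releases the GIL (for example inside native array code), so a process pool or GPU batch may be preferable for pure-Python scoring.

```python
from concurrent.futures import ThreadPoolExecutor


def best_candidate(cluster, candidates, score, max_workers=4):
    """Score every candidate expansion concurrently and return the best one.

    `score` maps a node set to a number where lower is better (assumed).
    Returns (node, score), or (None, None) when there are no candidates.
    """
    ordered = sorted(candidates)
    if not ordered:
        return None, None
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Each evaluation only reads `cluster`, so the tasks are independent.
        scores = list(pool.map(lambda v: score(cluster | {v}), ordered))
    best = min(range(len(ordered)), key=scores.__getitem__)
    return ordered[best], scores[best]


# Hypothetical toy score: prefers clusters that contain node "b".
def toy_score(nodes):
    return 0 if "b" in nodes else 1


node, value = best_candidate({"a"}, {"b", "c"}, toy_score)
```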

4.6. Example Illustration

Consider a heterogeneous hypergraph with users (U), items (I), and tags (T). A hyperedge may connect nodes of different types, for example a user, an item, and a tag. Starting from a seed user node, our algorithm would expand only to other user nodes, choosing the one that reduces conductance the most, while penalizing hyperedges that involve non-user types. If conductance stagnates, weak user-only hyperedges may be pruned to refine the result.

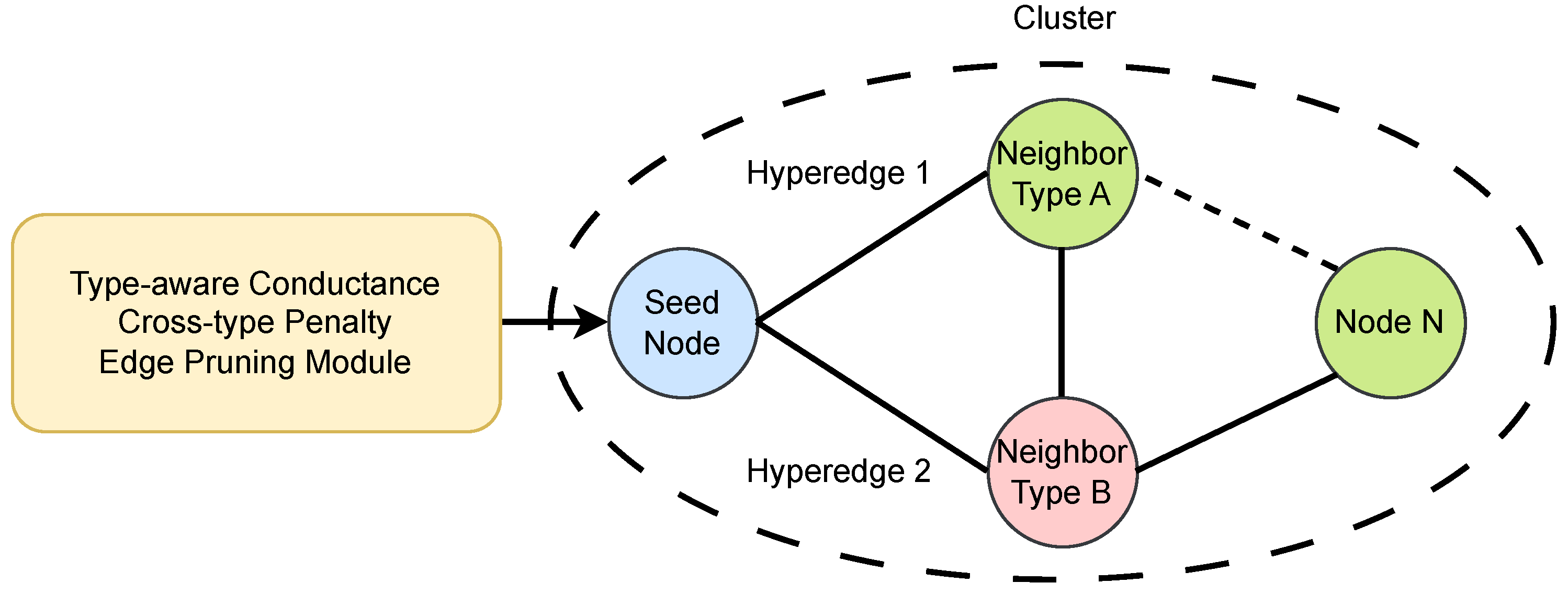

4.7. Diagram: Framework Overview

Figure 1 illustrates the proposed framework. The clustering starts from a seed node and expands via type-consistent neighbors, guided by type-aware conductance. Cross-type penalties and low-contribution edge pruning are integrated to produce high-quality, interpretable local clusters.

5. Experiments and Results

5.1. Datasets

For each dataset, we construct a dual hypergraph where

Each node represents a central entity (e.g., a paper or a movie).

Each hyperedge represents a typed relationship (e.g., author group, venue, keyword for DBLP).

We treat these typed hyperedges (e.g., “authored-by”, “published-in”) as heterogeneous hyperedges, enabling clustering based on edge-type consistency and semantics.

5.2. Experiment Setup

We randomly select 20 seed hyperedges from each dataset. Each method starts from the same set of seed hyperedges and performs clustering for up to 10 steps or until convergence. Our proposed algorithm optimizes a heterogeneity-aware conductance function, adding hyperedges that maximize the internal-to-external hyperedge ratio, while penalizing cross-type inconsistencies.

All experiments are repeated 5 times and averaged for robustness.

Parameter Settings:

Max clustering steps: 10.

Cross-type penalty factor $\lambda$: 1.5.

Node2Vec embedding dimension (for baseline): 128.

KMeans clusters: 20.

All methods are implemented in Python using PyTorch (v2.2.0) and PyTorch Geometric (v2.6.1), and executed on a Linux machine with an Apple M1 Pro chip with 3.22 GHz and 32 GB unified memory.

5.3. Baselines

The selected baselines represent different paradigms for local clustering, including graph-based diffusion, embedding-based clustering, and node-centric hypergraph methods. To evaluate the effectiveness of HHLC, we compare it against the following baseline methods:

- (1) Node-based Local Hypergraph Clustering (Node-LHC): Our previous version of local clustering, which operates directly on nodes and does not distinguish between edge types [15]. A node is added to the cluster if it improves the internal conductance ratio, computed without regard to hyperedge semantics.

- (2) Personalized PageRank (PPR): We compute PPR scores from the seed node over the original heterogeneous graph [26]. The top-k nodes with the highest scores are selected as the cluster. This approach represents classical graph-based local exploration but does not incorporate hyperedge structures or heterogeneity.

- (3) Node2Vec + KMeans: We generate node embeddings using Node2Vec over the projected heterogeneous graph [27,28]. A KMeans clustering is then performed in the embedding space, followed by selecting the cluster containing the seed. This method reflects topology-aware global clustering, ignoring hyperedge semantics.

The parameter settings of all baseline methods are summarized in Table 1 to facilitate reproducibility. For fairness, all baseline methods are evaluated on the same datasets. Graph-based baselines, however, operate on pairwise projections of the original hypergraph and therefore have no access to explicit hyperedge semantics or hyperedge types; this reflects differences in modeling assumptions rather than unfair experimental settings.
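To see why a pairwise projection discards hyperedge information, consider the sketch below. The text does not state which projection the baselines use; clique expansion (connecting every pair of nodes that share a hyperedge) is assumed here as a common choice, and the function name and toy data are hypothetical.

```python
from itertools import combinations


def clique_projection(hyperedges):
    """Project a hypergraph {edge_id: set_of_nodes} onto undirected node pairs.

    Every pair of nodes sharing a hyperedge becomes one graph edge, stored as a
    sorted tuple. Hyperedge identity and type are lost in the process.
    """
    edges = set()
    for members in hyperedges.values():
        for u, v in combinations(sorted(members), 2):
            edges.add((u, v))
    return edges


# Hypothetical toy hypergraph with one 3-node and one 2-node hyperedge.
H = {"e1": {"a", "b", "c"}, "e2": {"c", "d"}}
graph_edges = clique_projection(H)
```

After projection, the triangle on `a`, `b`, `c` no longer records that it came from a single hyperedge, nor what type that hyperedge had.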

5.4. Evaluation Metrics

We employ four widely recognized metrics to rigorously evaluate clustering performance:

5.4.1. Heterogeneous Hypergraph Conductance

This metric measures structural compactness by comparing the ratio of internal to external hyperedges within a cluster. Formally, it is defined as

$$\phi(C) = \frac{\sum_{e \in E} \mathbb{1}\{e \subseteq C\}}{\sum_{e \in E} \mathbb{1}\{e \cap C \neq \emptyset,\ e \not\subseteq C\}},$$

where the summation is over all hyperedges e, and $\mathbb{1}\{\cdot\}$ is the indicator function that equals 1 if the condition inside the braces holds and 0 otherwise. The numerator counts hyperedges fully contained within the cluster C. The denominator counts the boundary hyperedges, that is, those that intersect the cluster but are not fully contained in it.
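The ratio described above translates directly into code. This is a minimal sketch: the function name and toy data are hypothetical, and returning infinity for a cluster with no boundary hyperedges is an assumption, since the text does not specify that case.

```python
def hyperedge_conductance(hyperedges, cluster):
    """Ratio of fully internal hyperedges to boundary hyperedges of `cluster`.

    hyperedges: {edge_id: set_of_nodes}; cluster: set of nodes.
    Returns float('inf') when there is no boundary hyperedge (assumed convention).
    """
    internal = sum(1 for m in hyperedges.values() if m <= cluster)
    boundary = sum(
        1 for m in hyperedges.values() if m & cluster and not m <= cluster
    )
    return internal / boundary if boundary else float("inf")


# Hypothetical toy data: three internal hyperedges and one boundary hyperedge.
H = {"e1": {"a", "b"}, "e2": {"b", "c"}, "e3": {"c", "d"}, "e4": {"a", "b", "c"}}
value = hyperedge_conductance(H, {"a", "b", "c"})
```

Under this ratio, a larger value means more hyperedges are fully captured relative to those cut by the cluster boundary.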

5.4.2. Cluster Size

The total number of nodes or hyperedges included in the final cluster. This reflects the expansion behavior of each method.

5.4.3. Type Purity (TP)

TP quantifies the semantic consistency within a cluster by measuring the dominance of the most frequent type among all cluster elements [29]. Given a cluster C with elements of different semantic types T, the Type Purity is computed as

$$\mathrm{TP}(C) = \frac{\max_{t \in T} |C_t|}{|C|},$$

where $|C_t|$ counts the number of elements of type t in cluster C, and $|C|$ denotes the total number of elements in C. A higher TP indicates that the cluster is largely composed of a single dominant type, reflecting semantic coherence.

This metric is crucial because, in heterogeneous hypergraphs, meaningful clusters often correspond to groups dominated by specific entity or relation types (e.g., authors, venues, keywords). High Type Purity ensures that the clustering respects these semantic boundaries, avoiding mixed or noisy aggregations that may dilute interpretability.

5.4.4. Type Diversity (TD)

While TP measures homogeneity, TD captures the richness of type coverage within a cluster [30]:

$$\mathrm{TD}(C) = |T_C|,$$

where $|T_C|$ denotes the cardinality of the set of types present in C. A higher TD indicates that the cluster contains a broader variety of semantic types.
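Both type-based metrics can be computed from the list of type labels of a cluster's elements. The sketch below assumes TP is the share of the most frequent type and TD is the unnormalized count of distinct types present; the function names and the toy labels are hypothetical.

```python
from collections import Counter


def type_purity(element_types):
    """Fraction of cluster elements that belong to the most frequent type."""
    if not element_types:
        raise ValueError("cluster must contain at least one element")
    counts = Counter(element_types)
    return max(counts.values()) / len(element_types)


def type_diversity(element_types):
    """Number of distinct types present in the cluster (assumed unnormalized)."""
    return len(set(element_types))


# Hypothetical cluster: three author-typed elements and one venue-typed element.
labels = ["author", "author", "author", "venue"]
tp = type_purity(labels)
td = type_diversity(labels)
```

A single-type cluster reaches the maximum TP of 1 with a TD of 1, which illustrates why the two metrics are read together.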

Balancing TP and TD is essential in heterogeneous hypergraphs:

- Solely maximizing TP risks trivial clusters containing only one type, limiting the cluster’s semantic expressiveness.

- Emphasizing TD alone can lead to overly heterogeneous clusters lacking clear semantic focus.

Thus, our evaluation incorporates both metrics to ensure clusters are not only semantically coherent but also capture meaningful multi-type interactions inherent in the data. This balance reflects the core strength of our hyperedge-aware clustering approach.

5.5. Results

We evaluate our proposed HHLC algorithm against four baselines: Node-based Local Hypergraph Clustering (Node-LHC), Personalized PageRank (PPR), HHLC without the heterogeneity penalty, and Node2Vec + KMeans with sampled embeddings. Each method is run on the DBLP, Cora, and IMDB datasets with 5 random seed nodes. We report the following metrics: heterogeneous hypergraph conductance, cluster size, TP, and TD.

5.5.1. Quantitative Results

Table 2 and Table 3 summarize the conductance and cluster size results averaged over all seed hyperedges. HHLC consistently achieves the highest conductance across all datasets, indicating its effectiveness in identifying structurally compact local clusters with fewer boundary hyperedges. At the same time, HHLC maintains stable cluster sizes with low variance, suggesting that the proposed type-aware expansion strategy successfully balances between over-expansion and premature termination.

Comparing HHLC with its ablated variant, removing the heterogeneity penalty leads to a clear drop in conductance accompanied by larger and more variable cluster sizes. This observation highlights the importance of penalizing cross-type hyperedges, as ignoring heterogeneity introduces structural and semantic noise during cluster growth.

As shown in Table 3, Node-LHC produces noticeably larger clusters with lower conductance, demonstrating that type-agnostic expansion fails to preserve compact local neighborhoods. PPR consistently yields the smallest clusters and the lowest conductance values, which is expected since it operates on a pairwise graph projection and cannot exploit high-order hyperedge structures. Node2Vec + KMeans achieves moderate cluster sizes, but still underperforms HHLC in conductance, reflecting the loss of explicit hyperedge semantics in embedding-based approaches.

Table 4 and Table 5 further report TP and TD. HHLC consistently attains the highest TP while maintaining moderate TD, indicating that the discovered clusters are both semantically coherent and structurally diverse. In contrast, removing the heterogeneity penalty or relying on baseline methods results in lower purity and inflated diversity, confirming that explicit modeling of hyperedge types is crucial for identifying meaningful local structures in heterogeneous hypergraphs.

5.5.2. Analysis

Across all datasets, HHLC demonstrates strong advantages in both structural and semantic dimensions. The heterogeneity-aware conductance effectively balances internal connectivity and type consistency, enabling the algorithm to avoid cross-type drift while still capturing meaningful heterogeneous interactions. This is reflected by the consistently higher TP and conductance compared with the ablated variant and node-based baselines.

Under the ratio defined in Section 5.4.1, higher conductance indicates that a cluster has fewer boundary hyperedges relative to its internal connections. This typically correlates with higher type purity because clusters dominated by consistent edge types tend to form dense internal structures, while heterogeneous or noisy clusters introduce more external connections, lowering conductance.

Conceptually, Node-LHC operates on node-centric expansion without considering hyperedge semantics or type constraints. It treats all hyperedges uniformly, which can lead to clusters that are structurally large but semantically inconsistent. In contrast, HHLC incorporates edge-type awareness and penalizes cross-type expansions, ensuring that clusters remain both compact and semantically coherent. This fundamental difference explains why HHLC achieves better balance between conductance and type purity, beyond numerical performance.

Node-LHC and PPR both fail to utilize typed hyperedges, leading to either overly large and noisy clusters (Node-LHC) or overly small and incomplete ones (PPR). Node2Vec + KMeans captures global similarity patterns, but its embedding space dilutes high-order hyperedge semantics, resulting in weaker purity and structural compactness than HHLC.

5.5.3. Summary

The results collectively demonstrate that modeling high-order interactions together with type semantics is essential for high-quality local clustering in heterogeneous hypergraphs. HHLC achieves this balance and consistently outperforms both node-based and graph-based baselines, confirming the benefit of integrating hyperedge structure and heterogeneity-aware conductance.

5.6. Parameter Sensitivity Analysis

We analyze the sensitivity of HHLC to the cross-type penalty parameter $\lambda$ on the IMDB dataset. Specifically, we vary $\lambda$ while keeping all other parameters fixed, and evaluate the resulting clusters using heterogeneous hypergraph conductance, Type Purity (TP), and Type Diversity (TD).

Table 6 and Figure 2 report the variation in conductance, Type Purity (TP), and Type Diversity (TD) under different values of the cross-type penalty parameter $\lambda$. As $\lambda$ increases, the conductance consistently decreases, indicating that stronger penalization of cross-type hyperedges leads to more structurally compact clusters. Notably, the TP remains stable across different $\lambda$ values, suggesting that the proposed penalty effectively suppresses cross-type noise without sacrificing semantic purity. The TD also remains constant on IMDB, reflecting the inherently limited number of node types involved in local clusters on this dataset.

Overall, these results demonstrate that HHLC is robust to the choice of $\lambda$ and that $\lambda = 1.5$ provides a reasonable balance between structural compactness and semantic consistency. We therefore adopt $\lambda = 1.5$ as the default setting in the main experiments.

6. Conclusions

This study introduces HHLC, a heterogeneous hyperedge-based local clustering algorithm designed to uncover semantically coherent and structurally compact clusters in heterogeneous hypergraphs. By directly leveraging hyperedge semantics and a type-aware conductance measure, HHLC captures high-order connectivity and node-type consistency, addressing fundamental limitations of traditional node-centric and graph-based clustering approaches.

Our framework integrates three key innovations: (i) expansion with type-consistent neighbors to preserve semantic integrity, (ii) penalization of cross-type noise to enhance structural fidelity, and (iii) pruning low-contribution hyperedges for improved interpretability and compactness. Extensive experiments on three real-world datasets demonstrate that HHLC consistently outperforms strong baselines across metrics such as conductance, semantic purity, and type diversity. Ablation studies further confirm the critical role of heterogeneity-aware penalties in achieving robust clustering performance.

Beyond empirical gains, HHLC contributes to the broader algorithmic landscape by offering a principled approach for local clustering in complex, multi-relational environments, an area of growing importance in network science, data mining, and AI-driven applications. Future research will explore adapting HHLC to dynamic and attributed hypergraphs, scaling to large-scale heterogeneous networks, and incorporating learning-based strategies for penalty tuning. Overall, HHLC provides a versatile and semantically grounded framework that advances the state of the art in hypergraph algorithms and opens new directions for heterogeneous data analysis.