Dynamic Resource Games in the Wood Flooring Industry: A Bayesian Learning and Lyapunov Control Framework

Abstract

1. Introduction

- We formulate a Partially Observable Stochastic Dynamic Game (POSDG) model of the wood flooring industry that treats key intangible assets, such as brand reputation and customer base, as unobservable market states, and we characterize how these states evolve under firm investments, channel competition, and macroeconomic uncertainty.

- We design a hierarchical online learning and control algorithm, termed L-BAP, that integrates Bayesian filtering, Lyapunov approximate dynamic programming, and game theory, so that hidden-state estimation, long-term objective optimization, and prediction of inter-channel strategic behavior are handled within a single framework.

- We provide a rigorous theoretical performance analysis of the L-BAP algorithm. By constructing a Lyapunov function, we prove mean-square boundedness of the system queues and bound the gap between the reward achieved by the algorithm and that of the optimal policy under complete information, which makes the estimation–optimization trade-off explicit.

- We validate the proposed framework through high-fidelity simulations. Experimental results show that L-BAP outperforms several strong baselines, including myopic learning and decentralized reinforcement learning methods, on long-term profitability, inventory risk control, and customer service level. We further provide ablation and sensitivity analyses to isolate the contributions of Bayesian filtering, Lyapunov planning, and game-response prediction.
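For orientation, the queue-boundedness and performance-gap claims in the third contribution follow the general pattern of a Lyapunov drift-plus-penalty argument. The block below writes out that generic textbook pattern only; it is not the paper's own lemma or theorem. The symbols $Z_i(t)$ (virtual queues), $r(t)$ (per-period reward), $r^{*}$ (reward of the best complete-information policy), $V$ (trade-off weight), and $B$ (a finite constant) are assumed here for illustration, and the paper's discounted, partially observed setting would add estimation-error terms that are not reproduced.

```latex
\begin{align}
L(t) &= \tfrac{1}{2}\sum_{i} Z_i(t)^{2}, \\
\Delta(t) &= \mathbb{E}\!\left[\,L(t+1) - L(t) \,\middle|\, \mathbf{Z}(t)\right], \\
\Delta(t) - V\,\mathbb{E}\!\left[\,r(t) \,\middle|\, \mathbf{Z}(t)\right] &\le B - V r^{*}
\quad \text{for all } t \\
\Longrightarrow\quad
\frac{1}{T}\sum_{t=0}^{T-1}\mathbb{E}\!\left[r(t)\right]
&\ge r^{*} - \frac{B}{V} - \frac{\mathbb{E}\!\left[L(0)\right]}{V T}.
\end{align}
```

The last line follows by taking expectations, summing the drift inequality over $t = 0, \dots, T-1$, and using $L(T) \ge 0$. In this generic form a larger $V$ narrows the reward gap at rate $B/V$, while the queue bound typically grows linearly in $V$.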

2. Related Work

2.1. Traditional Resource Allocation Methods in Manufacturing

2.2. Game Theory Applications in Supply Chain and Channel Management

2.3. Stochastic Network Optimization and Lyapunov Theory

3. System Modeling and Problem Formulation

3.1. Model Notation and Definitions

Data Availability and State Estimability

3.2. Core State Variables and System Dynamics

3.3. State Evolution Equations

3.4. Demand, Sales, and Profit Functions

3.5. Problem Formulation: A Partially Observable Stochastic Dynamic Game

4. Online Learning and Control Algorithm Design

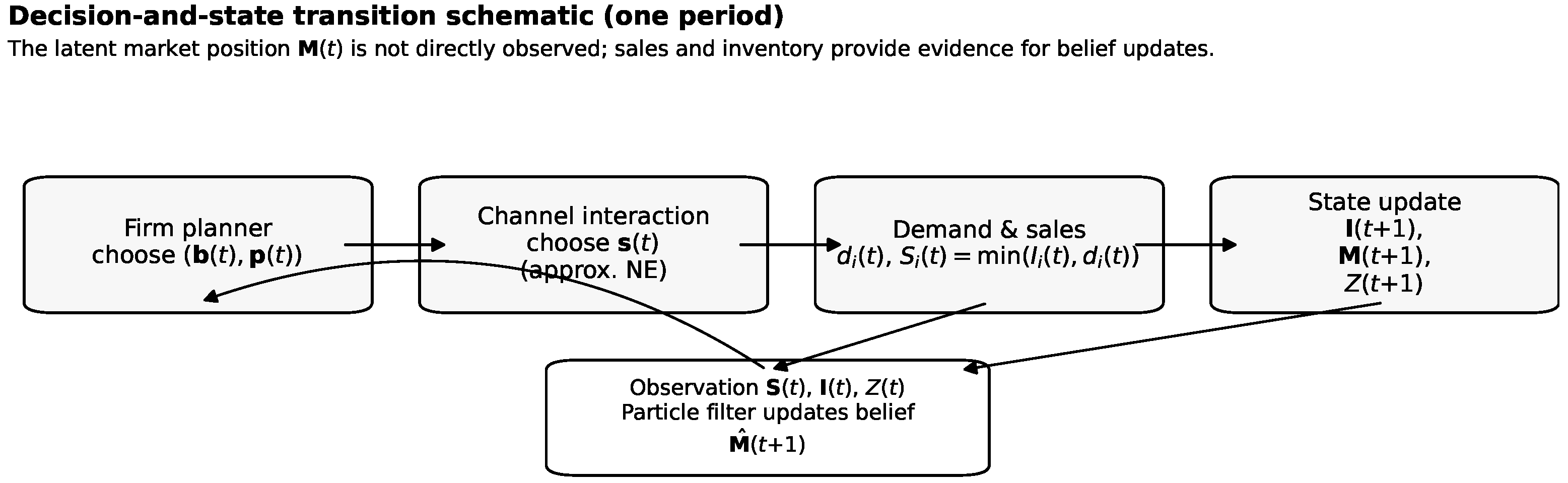

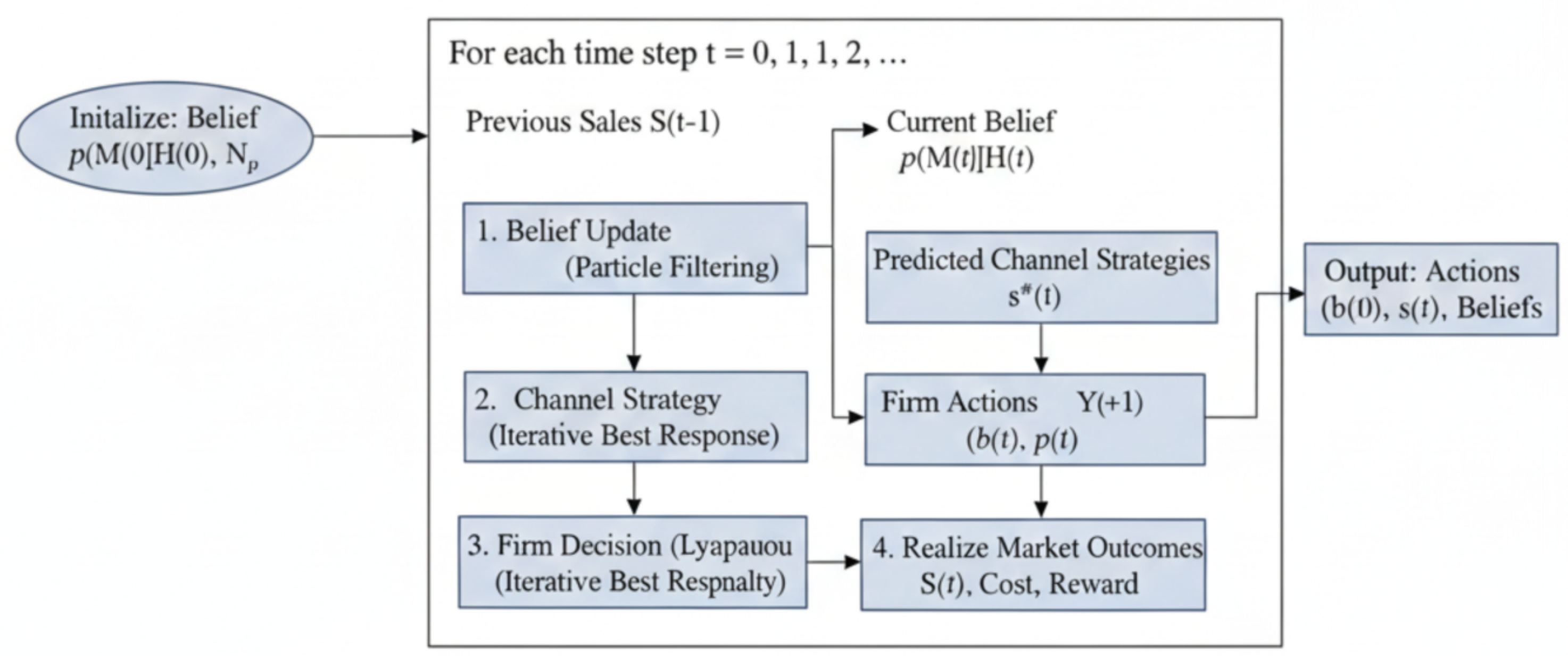

4.1. Algorithm Overview: Hierarchical Bayesian Approximate Dynamic Programming Framework

4.2. Bayesian Estimation of Market Position via Particle Filtering

Algorithm 1. Particle Filter Update for Market Position Vector
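Since the particle-filter update is only named here, the following is a minimal sketch of a generic bootstrap particle filter for a scalar latent market position, written under stated assumptions rather than as the authors' Algorithm 1. The linear decay-plus-budget transition, the Gaussian sales likelihood, and every constant and function name (`DECAY`, `GAIN`, `pf_step`, `run_demo`, and so on) are hypothetical choices made for illustration.

```python
import math
import random

# Hypothetical model constants (assumptions, not values from the paper).
DECAY, GAIN, PROC_SIGMA = 0.1, 0.05, 0.2   # latent market-position dynamics
DEMAND_SCALE, OBS_SIGMA = 10.0, 2.0        # sales observation model


def gaussian_pdf(x, mean, sigma):
    """Density of N(mean, sigma^2) evaluated at x."""
    z = (x - mean) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))


def systematic_resample(particles, weights, rng):
    """Draw len(particles) samples with probability proportional to weights."""
    n = len(particles)
    start = rng.random() / n
    out, cum, i = [], weights[0], 0
    for k in range(n):
        position = start + k / n
        while position > cum and i < n - 1:
            i += 1
            cum += weights[i]
        out.append(particles[i])
    return out


def pf_step(particles, weights, budget, observed_sales, rng):
    """One predict / reweight / estimate / resample cycle."""
    n = len(particles)
    # 1) Predict: push each particle through the assumed transition model.
    particles = [(1.0 - DECAY) * m + GAIN * budget + rng.gauss(0.0, PROC_SIGMA)
                 for m in particles]
    # 2) Reweight by the likelihood of the observed sales.
    weights = [w * gaussian_pdf(observed_sales, DEMAND_SCALE * m, OBS_SIGMA)
               for w, m in zip(weights, particles)]
    total = sum(weights)
    if total <= 0.0:
        weights = [1.0 / n] * n            # all likelihoods underflowed: reset
    else:
        weights = [w / total for w in weights]
    # 3) Point estimate: posterior mean of the latent position.
    estimate = sum(w * m for w, m in zip(weights, particles))
    # 4) Resample when the effective sample size drops below n / 2.
    ess = 1.0 / sum(w * w for w in weights)
    if ess < n / 2.0:
        particles = systematic_resample(particles, weights, rng)
        weights = [1.0 / n] * n
    return particles, weights, estimate


def run_demo(steps=40, n_particles=500, seed=1):
    """Simulate a latent position and track it from noisy sales."""
    rng = random.Random(seed)
    true_m, budget = 2.0, 4.0
    particles = [rng.uniform(0.0, 5.0) for _ in range(n_particles)]
    weights = [1.0 / n_particles] * n_particles
    estimate = None
    for _ in range(steps):
        true_m = (1.0 - DECAY) * true_m + GAIN * budget + rng.gauss(0.0, PROC_SIGMA)
        sales = DEMAND_SCALE * true_m + rng.gauss(0.0, OBS_SIGMA)
        particles, weights, estimate = pf_step(particles, weights, budget, sales, rng)
    return true_m, estimate, weights


if __name__ == "__main__":
    truth, est, _ = run_demo()
    print(f"true position {truth:.3f}, filtered estimate {est:.3f}")
```

Resampling only when the effective sample size falls below half the particle count is one common heuristic; a multi-channel position vector would need a vector-valued state and a joint likelihood, which this sketch does not attempt.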

4.3. Lyapunov Approximate Dynamic Programming Framework

4.4. Solving Hierarchical Decision Subproblems

Algorithm 2. Hierarchical Online Learning and Control Algorithm (Period t)
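To make the role of a virtual queue in a per-period decision concrete, here is a toy single-channel drift-plus-penalty loop. It is a sketch under strong simplifying assumptions, not the paper's Algorithm 2: there is no hidden state, no lead time, and no channel game, and the profit model, the time-average order cap, and all names (`choose_order`, `simulate`, `MARGIN`, `UNIT_COST`) are hypothetical.

```python
import random

MARGIN, UNIT_COST = 5.0, 2.0   # hypothetical unit revenue and unit production cost


def profit(q, demand):
    """Per-period profit when q units are ordered and demand units are wanted."""
    return MARGIN * min(q, demand) - UNIT_COST * q


def choose_order(z, demand, v):
    """Pick the order that maximizes V * profit - Z * (resource use)."""
    # Ordering above demand only adds cost, so candidates stop at demand.
    return max(range(demand + 1), key=lambda q: v * profit(q, demand) - z * q)


def simulate(periods=2000, v=20.0, avg_cap=6.0, max_demand=12, seed=0):
    """Run the loop under the constraint: time-average order <= avg_cap."""
    rng = random.Random(seed)
    z, z_max, total_q, total_profit = 0.0, 0.0, 0, 0.0
    for _ in range(periods):
        demand = rng.randint(0, max_demand)      # observed random state
        q = choose_order(z, demand, v)
        total_q += q
        total_profit += profit(q, demand)
        # Virtual queue: accumulates usage above the allowed average.
        z = max(z + q - avg_cap, 0.0)
        z_max = max(z_max, z)
    return {
        "avg_order": total_q / periods,
        "avg_profit": total_profit / periods,
        "final_queue": z,
        "max_queue": z_max,
    }


if __name__ == "__main__":
    for v in (5.0, 20.0, 80.0):
        print(v, simulate(v=v))
```

In this toy model the queue can never exceed `(MARGIN - UNIT_COST) * v + max_demand`, because once the backlog passes `(MARGIN - UNIT_COST) * v` every positive order scores below zero and the rule orders nothing. Raising `v` therefore trades a larger queue for a per-period profit closer to the constrained optimum.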

4.5. Theoretical Performance Analysis

4.5.1. Technical Assumptions for Analysis

4.5.2. Lyapunov Drift Upper Bound Lemma

4.5.3. Performance Analysis of POSDG

4.5.4. Queue Stability and Performance Bound Theorem

4.5.5. Convergence and Computational Complexity Analysis

5. Performance Evaluation and Experimental Analysis

5.1. Experimental Setup and Parameter Configuration

5.2. Baseline Algorithms for Comparison

5.3. Performance Evaluation Metrics

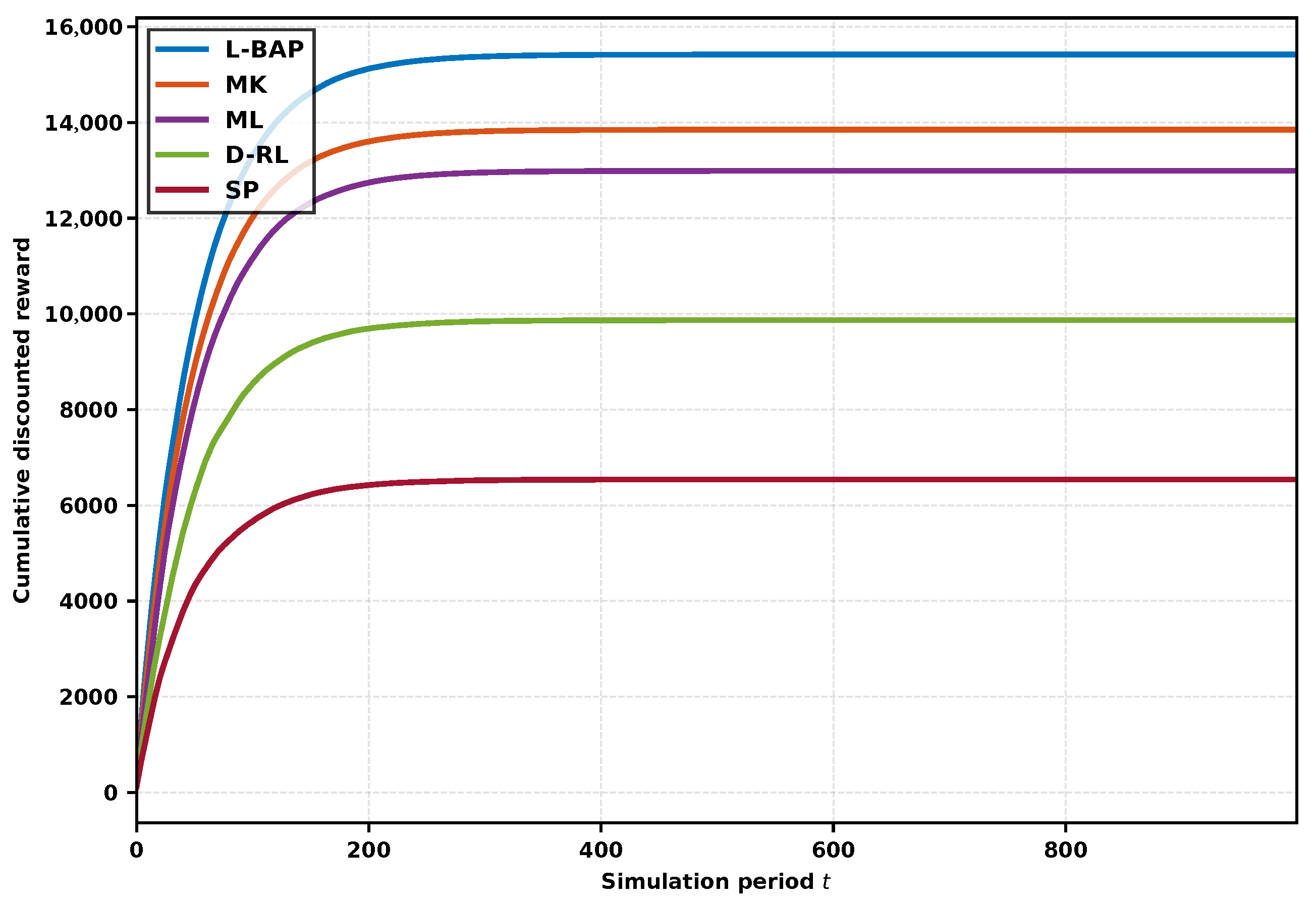

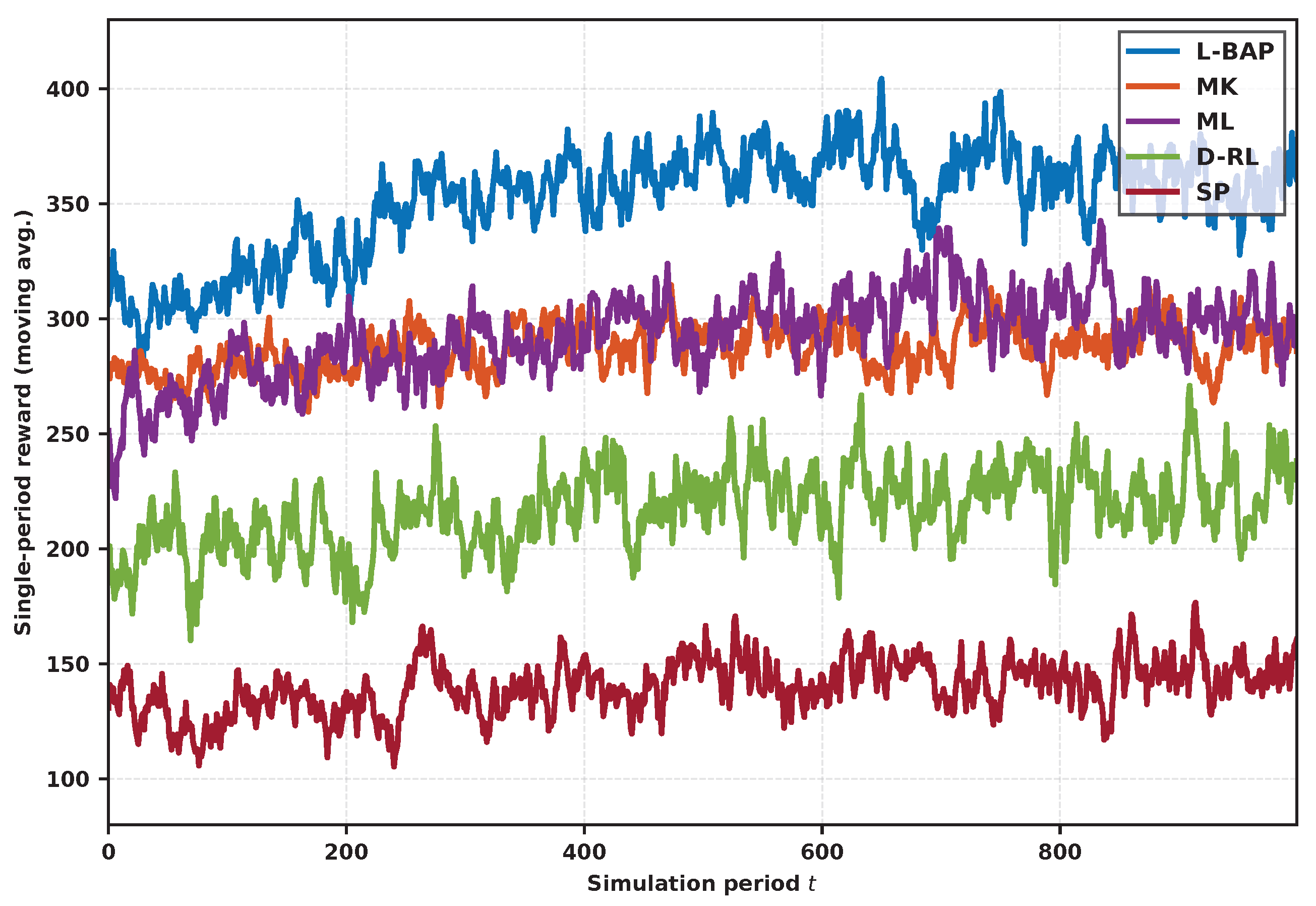

5.4. Experimental Results and Analysis

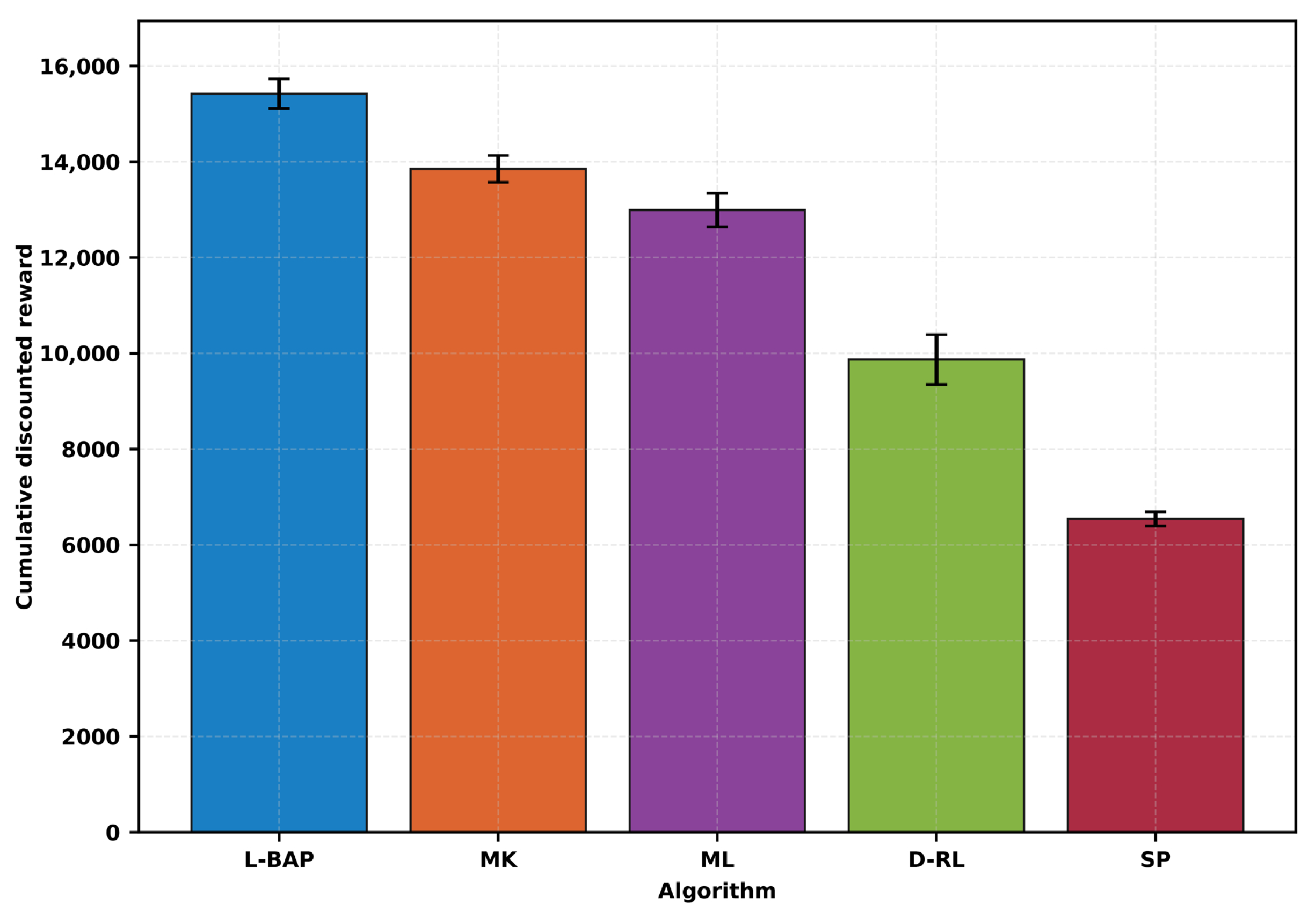

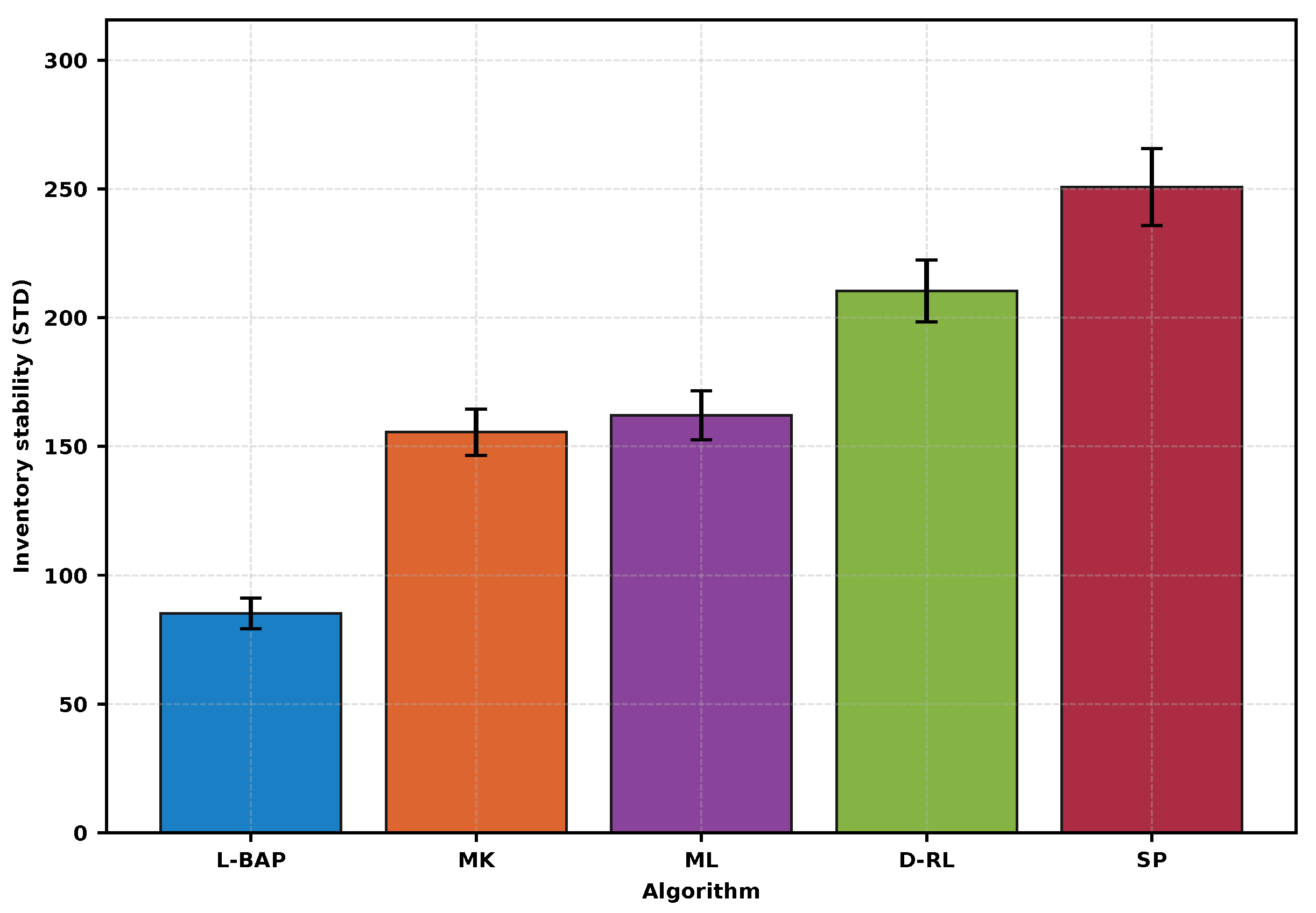

- Discussion. The bar summaries in Figure 7 and Figure 8 confirm the time-series trends: L-BAP achieves the highest cumulative discounted reward (15,420 ± 310) while holding inventory STD at 85.2 ± 6.0, well below the 155.6 to 250.8 range of the baselines. Importantly, MK, which has perfect information but is myopic, still trails L-BAP (13,850 ± 280 reward, 155.6 ± 9.0 inventory STD), which indicates that stability-aware planning has a first-order impact beyond state observability alone.

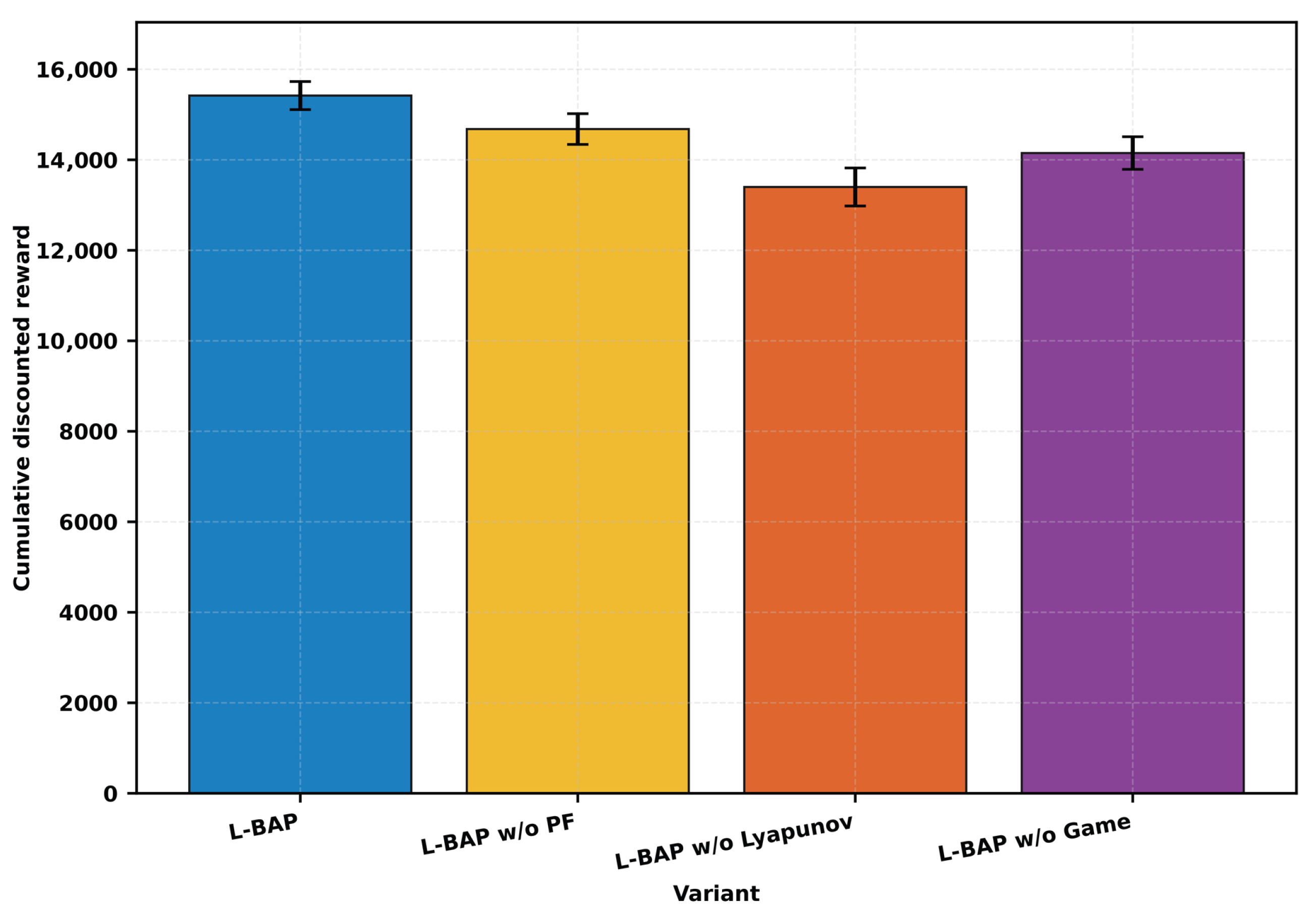

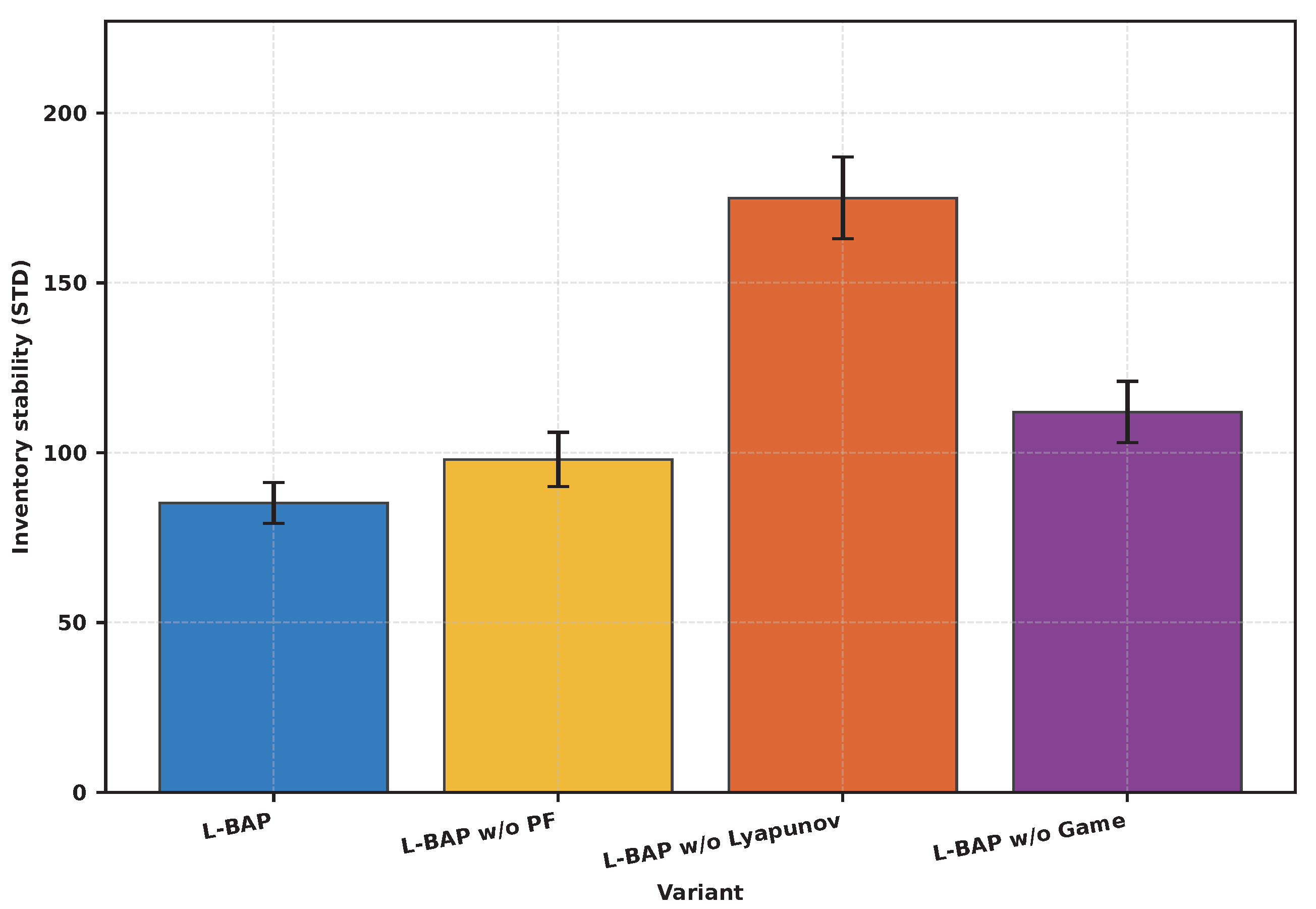

5.5. Ablation Study and Component Contribution

- Findings. Removing Lyapunov planning causes the largest degradation: inventory STD rises from 85.2 to 175.0 and reward falls from 15,420 to 13,400, indicating that the drift-plus-penalty structure is the main driver of robust long-term control under lead times. Removing particle filtering lowers reward to 14,680 because the planner cannot track latent market shifts and therefore misallocates budgets and production. Removing the game module lowers reward to 14,150 and raises inventory STD to 112.0, because the firm's decisions become systematically mismatched with strategic channel reactions. Overall, the ablation results support the modular design: each component contributes materially, and their combination yields the best reward–stability balance.
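The relative size of each ablation effect can be read off with a few lines of arithmetic. The sketch below uses only the mean values reported in the ablation table (the ± terms are ignored); the dictionary layout and the helper name `relative_change` are illustrative choices.

```python
# Mean values copied from the ablation table (uncertainty terms omitted).
FULL = {"reward": 15420.0, "inv_std": 85.2}
VARIANTS = {
    "L-BAP w/o PF": {"reward": 14680.0, "inv_std": 98.0},
    "L-BAP w/o Lyapunov": {"reward": 13400.0, "inv_std": 175.0},
    "L-BAP w/o Game": {"reward": 14150.0, "inv_std": 112.0},
}


def relative_change(value, baseline):
    """Percentage change of value relative to baseline."""
    return 100.0 * (value - baseline) / baseline


def ablation_summary():
    """Return {variant: (reward change %, inventory STD change %)}."""
    return {
        name: (
            relative_change(stats["reward"], FULL["reward"]),
            relative_change(stats["inv_std"], FULL["inv_std"]),
        )
        for name, stats in VARIANTS.items()
    }


if __name__ == "__main__":
    for name, (d_reward, d_std) in ablation_summary().items():
        print(f"{name}: reward {d_reward:+.1f}%, inventory STD {d_std:+.1f}%")
```

On these means, dropping the Lyapunov layer costs about 13% of reward and roughly doubles inventory STD, while dropping the filter or the game module costs about 5% and 8% of reward respectively.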

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Appendix A. Proofs for Theoretical Performance Analysis

Appendix A.1. Analytical Foundation: Restatement of Technical Assumptions

Appendix A.2. Detailed Derivation of Lyapunov Drift Upper Bound

Appendix A.3. Drift-Plus-Penalty Analysis and Performance Gap Derivation

Appendix A.4. Proof of Queue Stability and Performance Bound Theorems


| Notation | Definition |
|---|---|
| State Variables | |
| | Market position vector of channel i in period t (partially observable) |
| | Physical inventory level of finished goods for channel i at period t start (observable) |
| | Vector of in-process production orders for channel i in period t, |
| | Macroeconomic market state in period t (observable) |
| | Complete system state in period t |
| | Virtual debt queue used in Lyapunov planning (Section 4) |
| Decision/Action Variables | |
| | Marketing budget allocated to channel i by the firm in period t |
| | Production order quantity placed for channel i by the firm in period t |
| | Sales strategy intensity adopted by channel i in period t |
| Model Functions and Intermediate Variables | |
| | Market demand function for channel i in period t |
| | Actual sales volume for channel i in period t |
| | Operating profit generated by channel i in period t |
| | Inventory holding cost and production cost functions |
| | Observation likelihood of sales in particle filtering (Section 4) |
| Parameters | |
| | Production order lead time |
| | Discount factor for future long-term rewards |
| | Natural decay matrix for market position |
| | Cross-channel competitive influence matrix from channel j to i |
| | Unit sales marginal profit for channel i |
| | Total marketing budget and production-capacity constraints in period t |
| | History of all observable information up to period t |

| Parameter Category | Symbol | Value/Form |
|---|---|---|
| State Evolution | | |
| | | Off-diagonal matrix reflecting strong competition from channel 3 to 1 and 2 |
| Demand Function | | Heterogeneous parameter vectors set according to channel characteristics |
| Cost Function | | |
| Macro Environment | | |

| Algorithm | Cumulative Discounted Reward | Inventory STD | Service Level | RMSE |
|---|---|---|---|---|
| L-BAP | 15,420 ± 310 | 85.2 ± 6.0 | 96.5% ± 0.6% | 0.88 |
| MK | 13,850 ± 280 | 155.6 ± 9.0 | 91.2% ± 1.0% | N/A |
| ML | 12,990 ± 350 | 162.1 ± 9.5 | 92.1% ± 0.9% | 0.91 |
| D-RL | 9,870 ± 520 | 210.5 ± 12.0 | 85.6% ± 1.3% | N/A |
| SP | 6,540 ± 150 | 250.8 ± 15.0 | 78.2% ± 1.5% | N/A |

| Variant | Cumulative Discounted Reward | Inventory STD |
|---|---|---|
| L-BAP | 15,420 ± 310 | 85.2 ± 6.0 |
| L-BAP w/o PF | 14,680 ± 340 | 98.0 ± 8.0 |
| L-BAP w/o Lyapunov | 13,400 ± 420 | 175.0 ± 12.0 |
| L-BAP w/o Game | 14,150 ± 360 | 112.0 ± 9.0 |


© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Wang, Y.; Vasilakos, A.V. Dynamic Resource Games in the Wood Flooring Industry: A Bayesian Learning and Lyapunov Control Framework. Algorithms 2026, 19, 78. https://doi.org/10.3390/a19010078
