1. Introduction

The fatigue state of drivers significantly affects driving safety. With prolonged driving and irregular working hours, fatigued driving has become a major contributor to traffic accidents. Recent studies indicate that driver fatigue markedly degrades reaction time, judgment, and alertness, thereby increasing accident risk. Developing reliable driver fatigue detection systems has therefore become a crucial topic in traffic safety. Electroencephalography (EEG), a widely used electrophysiological recording method, is an important tool for detecting brain fatigue. Using electrodes placed on the scalp, EEG records changes in the postsynaptic potentials of large populations of synchronously firing neurons, which reflect a person's state of behavioral awareness. Compared with traditional fatigue detection methods such as subjective scales, facial-activity analysis, and external device monitoring, EEG signals are objective, can be monitored continuously over time, and are unaffected by ambient lighting. As a physiological signal, EEG can also provide early warning before visible signs such as eye closure or operational errors appear. This makes it especially valuable for high-reliability tasks such as in-flight operations, where it can provide real-time, dynamic, and objective assessments of an operator’s fatigue state. Today, EEG technology is extensively applied in the study of mental fatigue among drivers. Research on EEG-based fatigue classification focuses primarily on two areas: (1) feature extraction and (2) classifier selection.

In fatigue classification, traditional EEG features fall into three main categories: time-domain, frequency-domain, and time-frequency features [1,2]. Because most EEG devices record signals in the time domain, time-domain features are the most intuitive and accessible; common examples include the mean, standard deviation, variance, and differential means of various orders [3]. Atkinson et al. extracted statistical features, fractal dimensions, and other characteristics from EEG signals as inputs to a support vector machine, achieving an average accuracy of 73.10% in a binary emotion classification task on the DEAP dataset. Because frequency-domain analysis reveals the spectral content of a signal, feature extraction for fatigue classification often relies on frequency-domain methods: the Fourier transform or wavelet transform is typically used to convert time-domain EEG signals into the frequency domain for analysis and feature extraction. Common frequency-domain features include band energy, band power, power spectral density, and differential entropy [4,5,6]. For instance, Wang Lien et al. used the Welch method to calculate the power spectral density of the δ, θ, α, and β bands of filtered EEG signals as frequency features, which directly reflects how power varies with frequency. However, classical spectral estimators such as the Welch method are better suited to long sequences and have poor spectral resolution for short ones [1]. Liu et al. proposed a fatigue driving detection method using single-channel EEG signals and fuzzy entropy features based on Complete Ensemble Empirical Mode Decomposition with Adaptive Noise (CEEMDAN); the study employed a self-training semi-supervised learning method to convert unlabeled data into pseudo-labeled data, further improving detection accuracy [7]. Wang Nen et al. divided EEG signals into five frequency bands and used the power spectral density ratio, the ratio of focus to relaxation, and blink frequency as fatigue detection features, achieving a three-class accuracy of 88.57%, which falls short of the high reliability required for pilot fatigue classification [8]. However, because time-domain and frequency-domain features are each computed from a single domain, information that is jointly localized in time and frequency may be lost. Many researchers therefore use time-frequency features, commonly obtained with the short-time Fourier transform or the filter-based Hilbert transform [9]. For example, Chen Wan et al. proposed a real-time alertness estimation method based on differential entropy (DE), an improved moving average, and bidirectional two-dimensional principal component analysis (TD-2DPCA), using the short-time Fourier transform to obtain time-frequency features. Validation on the SEED-VIG dataset yielded a Pearson correlation coefficient of about 0.91 and an RMSE of about 0.09, outperforming existing alertness estimation methods [10].
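To make the distinction between time-domain and frequency-domain features concrete, the following NumPy sketch computes a few time-domain statistics and Welch band powers for a single channel. It is an illustrative implementation, not the code of any cited study: the Hann window, 256-sample segments with 50% overlap, and the helper names `time_domain_features`, `welch_psd`, and `band_powers` are assumptions, and the band edges follow the rhythm ranges used later in this paper.

```python
import numpy as np

# Band edges in Hz, following the rhythm ranges used in this paper;
# other studies use slightly different edges.
BANDS = {"delta": (0.5, 4.0), "theta": (4.0, 7.0), "alpha": (8.0, 13.0), "beta": (14.0, 30.0)}

def time_domain_features(x):
    """Simple time-domain statistics, including the mean absolute first difference."""
    x = np.asarray(x, dtype=float)
    return {"mean": float(x.mean()), "std": float(x.std()), "var": float(x.var()),
            "mean_abs_diff1": float(np.mean(np.abs(np.diff(x))))}

def welch_psd(x, fs, nperseg=256, overlap=0.5):
    """Welch PSD: average the Hann-windowed periodograms of overlapping segments."""
    x = np.asarray(x, dtype=float)
    step = int(nperseg * (1 - overlap))
    window = np.hanning(nperseg)
    scale = fs * np.sum(window ** 2)              # density scaling (power per Hz)
    psds = []
    for start in range(0, len(x) - nperseg + 1, step):
        seg = x[start:start + nperseg]
        seg = (seg - seg.mean()) * window         # remove segment mean, then taper
        spec = np.abs(np.fft.rfft(seg)) ** 2 / scale
        spec[1:-1] *= 2                           # fold negative frequencies (even nperseg)
        psds.append(spec)
    freqs = np.fft.rfftfreq(nperseg, d=1.0 / fs)
    return freqs, np.mean(psds, axis=0)

def band_powers(x, fs):
    """Integrate the Welch PSD over each band (rectangle rule)."""
    freqs, psd = welch_psd(x, fs)
    df = freqs[1] - freqs[0]
    return {name: float(psd[(freqs >= lo) & (freqs < hi)].sum() * df)
            for name, (lo, hi) in BANDS.items()}
```

For example, a unit-amplitude 10 Hz sine sampled at 200 Hz for 10 s concentrates nearly all of its power (about 0.5) in the α band. Averaging over segments is what trades frequency resolution for lower variance, which is why the Welch estimate degrades on short sequences.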

Classifiers can be broadly divided into machine learning-based and deep learning-based approaches. Common machine learning algorithms include the Support Vector Machine (SVM), K-Nearest Neighbors (K-NN), and Random Forest (RF) [11]. Zhou et al. used entropy methods for feature extraction and compared three classifiers (SVM, Random Forest, and a BP neural network), achieving maximum classification accuracies of 96.6%, 84.6%, and 83.6%, respectively. Zhang et al. proposed a method for recognizing drivers’ mental fatigue from EEG signals using multi-dimensional feature selection and fusion. The method combines complex-network features with frequency and spatial features and uses Principal Component Analysis (PCA) for feature fusion; a Gaussian-kernel SVM reaches a classification accuracy of 99.23% [12]. Chen et al. extracted frequency-domain and nonlinear features from EEG signals induced by steady-state visual evoked potentials, combining spectral analysis with sample entropy, and used an SVM classifier to achieve an accuracy above 90%. However, SVMs have several drawbacks: samples near the separating hyperplane are prone to misclassification, the kernel function is difficult to choose, performance is sensitive to the choice of tunable parameters, and selecting a limited set of high-quality training samples is challenging.

Traditional machine learning methods require manual feature design and extraction, a process that demands domain expertise and experience, and the choice of feature selection method can also affect model performance. In addition, traditional machine learning models scale poorly to large datasets because they must compute and process large numbers of feature vectors or eigenvalues.

Unlike traditional machine learning methods that rely on manually designed features, some deep learning models, such as end-to-end CNNs and Transformers, can learn hierarchical representations directly from raw EEG signals, reducing the reliance on handcrafted feature extraction [13,14]. However, because EEG signals are noisy, non-stationary, and of complex neurophysiological origin, many practical EEG classification systems still incorporate domain-informed preprocessing and feature engineering (e.g., time-frequency analysis, differential entropy) to enhance signal discriminability, improve model robustness, and facilitate interpretability. Therefore, hybrid approaches that combine expert-designed features with powerful classifiers remain widely adopted in tasks such as driver fatigue detection.

Moreover, deep learning models typically have greater expressive power than traditional machine learning models, allowing them to capture more complex relationships in the data. As a result, deep learning has been increasingly applied to EEG signal classification, and EEG classification techniques based on CNNs, Transformers, graph-based models, and self-supervised learning have been widely adopted in recent years.

For instance, Wang et al. proposed a driving fatigue detection method based on electrode-frequency distribution maps derived from EEG signals, combined with deep convolutional neural networks (CNNs) and deep transfer learning [15]. They applied the discrete Fourier transform to EEG signals from different channels, standardized the results to obtain electrode-frequency distribution maps, and used these maps in a CNN-based emotion recognition model, achieving a recognition accuracy of 90.59% on the SEED EEG dataset. Li et al. proposed a Channel-Weighted Spatial–Temporal Residual Network (CWSTR-Net) based on nonsmooth nonnegative matrix factorization (nsNMF) for EEG-based fatigue detection. The network combines CNNs and recurrent neural networks (RNNs) to extract spatiotemporal features from EEG signals using an unsupervised channel-weighting algorithm, achieving an average accuracy of 97.23% [16].

The Transformer, which first achieved great success in natural language processing (NLP), has been widely applied to EEG signal classification in recent years. For example, Ju et al. proposed a multi-scale convolutional Transformer for decoding EEG signals recorded during motor imagery, visual imagery, and verbal imagery tasks, learning neural representations across these modalities. The model applies multi-head attention in the spatial, spectral, and temporal domains, analyzes brain networks through EEG source localization, and performs well across multiple datasets, with accuracies of 0.62, 0.70, and 0.72, respectively [17]. Luo et al. proposed an end-to-end multi-branch fusion Transformer framework that uses self-attention to attend to and fuse the full-sequence spatiotemporal and spectral information of EEG signals and adaptively capture key elements. The model achieved average accuracies of 86.93% on the BCIC IV-2a dataset, 94.64% on the BCIC II and III datasets, and 93.52% on the MMIDB dataset, all of which set new state-of-the-art results, providing a well-suited architecture for MI-EEG decoding and helping to improve the performance of brain–computer interface systems [18]. Although this Transformer framework captures EEG features effectively, it reflects feature importance only indirectly, through the distribution of self-attention weights, and lacks an explicit mathematical account of its decision-making process. This makes it difficult to quantitatively explain *why a specific brain region’s signal plays a critical role in fatigue classification*, a limitation in applications such as driver fatigue detection that require highly trustworthy interpretability.

Graph Neural Networks (GNNs) have attracted widespread attention for their ability to model spatial relationships between EEG electrodes. For instance, Fujihashi et al. proposed a graph-based compression scheme to improve the transmission quality of EEG signals at a given bit rate. The scheme constructs graphs from EEG sensor locations and uses parameterized graph shift operators to obtain graph basis functions that decorrelate the EEG signals. By combining the graph Fourier transform with quantization and entropy coding, the scheme transmits high-quality EEG signals at lower bit rates and provides better signal quality than existing DCT- and DWT-based schemes at the same bit rate [19]. Li et al. proposed a Graph-based Multi-task Self-Supervised learning model (GMSS) for EEG emotion recognition. By integrating spatial and frequency jigsaw puzzles with contrastive learning tasks, the model learns more generalized feature representations, reducing the risk of overfitting and improving emotion recognition performance. Experiments on the SEED, SEED-IV, and MPED datasets show that GMSS has significant advantages in learning discriminative and generalized features of EEG emotion signals [20]. Although GMSS improves feature learning by modeling spatial relationships among electrodes, its complex graph structure increases computational cost and does not address the vanishing-gradient problem in high-order feature learning. Moreover, it does not establish a direct link between model parameters and the physiological significance of EEG, so its interpretability remains at the “structural level” rather than reaching the “mathematical and quantitative level”.

Self-supervised learning is a pre-training approach that does not require large amounts of labeled data and has shown great potential in EEG signal analysis in recent years. Li et al. proposed a multi-task collaborative network that combines supervised learning (SL) and self-supervised learning (SSL) to extract more generalized EEG features. Experiments on multiple datasets show significant performance advantages in rapid serial visual presentation tasks, demonstrating the method's effectiveness in learning more generalized features [21].

The prior work most closely related to this study is the Polynomial Gated Network (PGN) proposed by Huangfu et al. [22], which integrates polynomial expansion layers with an LSTM-style gating mechanism to model both long- and short-term dependencies in EEG time series. That work achieved a training accuracy of 97.20% and a testing accuracy of 96.50% on the public SEED-VIG dataset, confirming the effectiveness of combining polynomial nonlinear approximation with dynamic temporal gating for fatigue state recognition [22]. In contrast, the Residual Polynomial Network (RPN) proposed in this paper abandons complex gating structures and instead adopts polarity-aware residual connections together with high-order polynomial approximation. This not only simplifies the architecture and reduces computational cost but also, through an explicit mathematical formulation, improves the interpretability of the model in terms of neurophysiological mechanisms.

To summarize and compare more clearly the feature-extraction and classification methods commonly used in EEG-based fatigue detection, Table 1 provides a systematic overview in terms of method category, core idea, and applicable scenarios. These methods collectively form the foundation of current research, yet each has its own limitations. For example, traditional features rely heavily on expert knowledge, while deep learning models are often criticized as “black boxes” that lack intuitive interpretability.

As shown in Table 1, existing methods struggle to balance classification performance against interpretability, computational efficiency, and in-depth modeling of the temporal characteristics of EEG. Traditional polynomial networks (such as the multidimensional Taylor network, MTN) provide a clear mathematical structure through polynomial approximation; however, when dealing with complex time-series signals such as EEG, their ability to dynamically model long- and short-term dependencies is limited.

Deep learning methods typically apply models directly to EEG signals or brain topographic maps without accounting for the specific characteristics of EEG. In addition, current deep learning models generally suffer from poor interpretability and weak generalization, and the complex structure and high computational demands of deep classification algorithms may hinder timely fatigue assessment. This paper therefore proposes a classification method based on the Residual Polynomial Network (RPN). RPN locally approximates nonlinear functions as polynomials, giving the model an intuitive mathematical structure and clearly interpretable parameters, which enhances its interpretability. Furthermore, residual connections simplify the model structure, allowing fatigue classification results to be obtained more quickly. The main contributions of this paper are as follows:

RPN approximates nonlinear functions through polynomial networks whose polynomials are derived from Laplace-transformed differential equations. Because differential equations are the mathematical formulation of physical models, RPN has an intuitive mathematical structure and well-defined parameter meanings, which significantly enhances model interpretability. This property makes RPN suitable not only for driver fatigue classification but also a source of new insights for modeling other complex systems.

By incorporating skip connections, RPN simplifies the network architecture and substantially reduces computational complexity. Compared with traditional deep learning models, RPN relies solely on addition and multiplication operations, drastically lowering resource consumption and making it well suited to real-time applications.

Beyond its lightweight design, the skip connection ensures smooth information flow by transmitting the input directly to the output layer. This effectively mitigates problems common in high-order polynomial processing, such as overfitting and vanishing gradients. As a result, RPN is more robust when handling complex EEG signals and achieves better training efficiency and classification performance.

The remainder of this paper is organized as follows.

Section 2 introduces the SEED-VIG EEG dataset used in this study, including experimental design, data acquisition methods, and fatigue-state labeling procedures.

Section 3 details the EEG-based fatigue analysis framework, covering frequency band partitioning, Differential Entropy (DE) feature extraction, Linear Dynamical System (LDS) filtering, and the architecture and training process of the proposed Residual Polynomial Network (RPN).

Section 4 presents experimental results and comparative analyses, evaluating RPN’s classification performance and convergence speed, benchmarking it against mainstream classifiers, and analyzing feature importance with Sankey diagrams.

Section 5 concludes the paper by summarizing RPN’s advantages in driver fatigue detection and outlining future research directions.

3. Fatigue Analysis Based on EEG Signals

3.1. Feature Extraction of EEG Signals

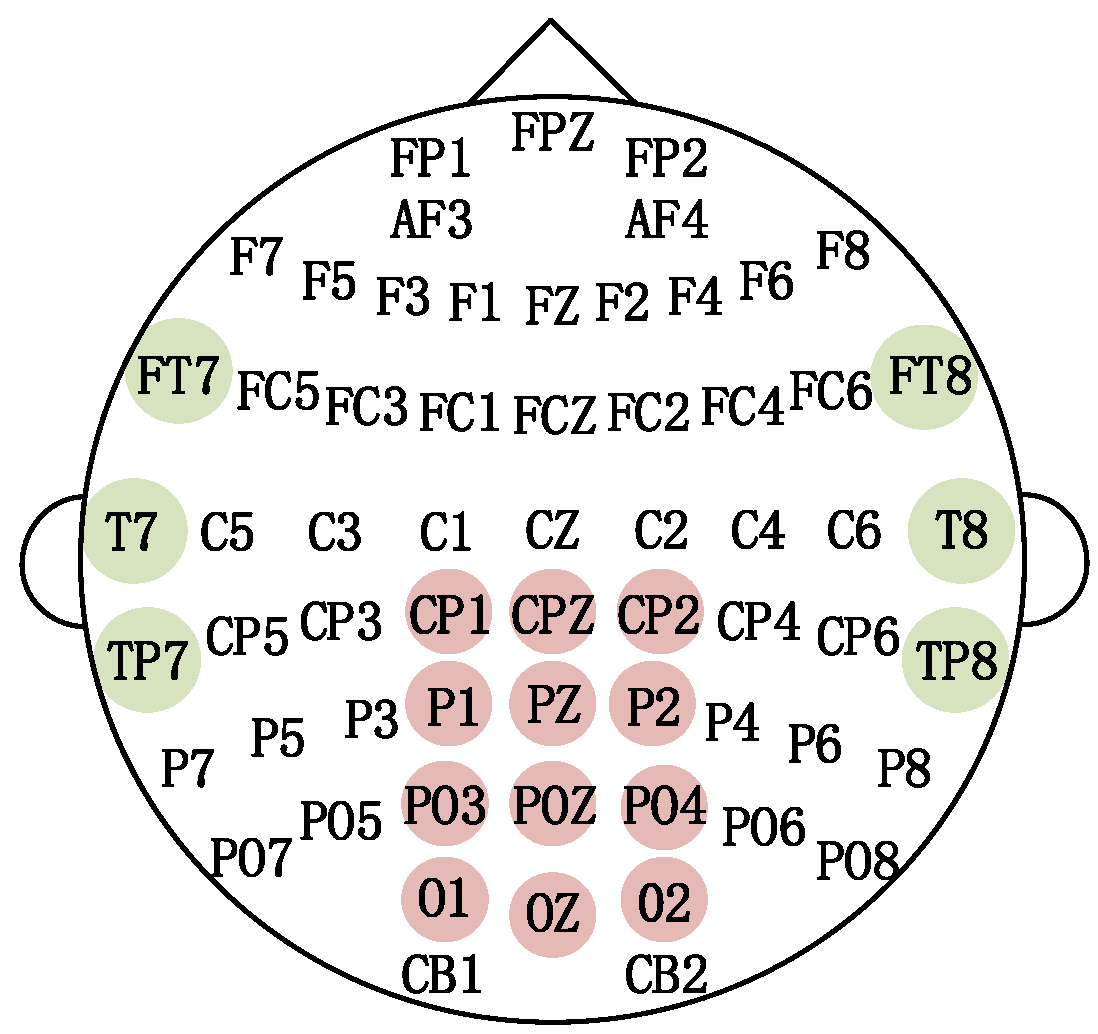

Current EEG-based research indicates that different brain regions are involved in various perceptual and cognitive activities. Specifically, the temporal lobe is associated with processing complex stimuli such as faces and scenes, as well as olfactory and auditory functions, while the occipital lobe is related to vision. Brain fatigue during flight tasks is primarily caused by visual and auditory demands, along with the repetitive confirmation of the surrounding environment. Therefore, EEG signals extracted from the temporal and occipital lobes are selected for analysis.

Research on the four typical EEG rhythms has shown that δ (0.5–4 Hz) reflects drowsiness; θ (4–7 Hz) indicates frustration or mental depression; α (8–13 Hz) corresponds to a calm, focused state; and β (14–30 Hz) is associated with tension, emotional arousal, or excitement [24,25]. Changes in these rhythms are closely related to the level of mental fatigue.

Table 2 below shows the relationship between different EEG frequency bands and corresponding activity states.

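Differential Entropy (DE) is the generalization of Shannon’s information entropy, $H(X) = -\sum_{i} p(x_i)\log p(x_i)$, to continuous variables. DE describes the complexity of a continuous random variable $X$ with probability density $f(x)$ and is calculated as

$$h(X) = -\int_{-\infty}^{\infty} f(x)\log f(x)\,dx .$$

For a sub-band EEG signal that approximately follows a Gaussian distribution $\mathcal{N}(\mu, \sigma^{2})$, this reduces to

$$h(X) = \frac{1}{2}\log\left(2\pi e\,\sigma^{2}\right).$$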

Research has shown that DE outperforms traditional power spectral density and other time-frequency features in estimating alertness levels. Therefore, this paper uses DE as the feature for alertness estimation.

In the dataset used in this paper, the spectrum of each channel is divided into equal sub-bands of 2 Hz bandwidth: the 0–50 Hz range is decomposed into 25 sub-bands, [0,2], [2,4], …, [48,50]. DE is extracted from each sub-band, yielding a feature matrix of size N × M for each sample, where N is the number of channels and M is the number of sub-bands. In the experimental dataset used here, the input features are arranged as channels × samples × frequency bands (17 × 885 × 25), i.e., 17 EEG channels, 885 samples, and 25 sub-bands. Along the first dimension, channels 1–6 correspond to the temporal lobe (T zone) and channels 7–17 to the occipital lobe (P zone). In addition, to filter out components unrelated to fatigue, the dataset applies a Linear Dynamical System (LDS) to filter the EEG features.
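The 2 Hz sub-band DE extraction can be sketched as follows for one multichannel window, assuming each band-limited signal is approximately Gaussian so that its DE is 0.5·log(2πeσ²). Band-limiting by zeroing FFT bins is a simplification chosen for brevity; the dataset obtained its features through an STFT over sliding windows, so this is not its exact pipeline. The function names, the small variance floor, and the 200 Hz sampling rate in the usage note are assumptions.

```python
import numpy as np

def band_limited(x, fs, lo, hi):
    """Zero all FFT bins outside [lo, hi) Hz and return the real band-limited signal."""
    spec = np.fft.rfft(x)
    freqs = np.fft.rfftfreq(len(x), d=1.0 / fs)
    spec[(freqs < lo) | (freqs >= hi)] = 0.0
    return np.fft.irfft(spec, n=len(x))

def gaussian_de(x, floor=1e-12):
    """DE of a Gaussian with the sample variance of x: 0.5 * log(2*pi*e*var)."""
    return 0.5 * np.log(2 * np.pi * np.e * (np.var(x) + floor))

def de_features(window, fs, band_width=2.0, f_max=50.0):
    """window: (channels, samples) EEG segment -> (channels, n_bands) DE matrix."""
    edges = np.arange(0.0, f_max + band_width, band_width)   # 0, 2, ..., 50 Hz
    feats = np.empty((window.shape[0], len(edges) - 1))      # 25 bands for 0-50 Hz
    for c, x in enumerate(window):
        for b, (lo, hi) in enumerate(zip(edges[:-1], edges[1:])):
            feats[c, b] = gaussian_de(band_limited(x, fs, lo, hi))
    return feats
```

Calling `de_features(window, fs=200.0)` on a 17-channel window returns a 17 × 25 matrix, i.e., one N × M slice of the feature tensor described above.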

To further reduce inter-subject variability and improve the model’s generalization capability, we applied Z-score normalization to the extracted DE features before feeding them into the RPN model. This preprocessing step makes the features comparable across subjects and channels. Let $x_{i,j}$ denote the DE feature extracted from the $i$-th EEG channel in the $j$-th time window, computed as

$$x_{i,j} = \frac{1}{2}\log\left(2\pi e\, v_{i,j}\right),$$

where $v_{i,j}$ is the variance of the corresponding band-limited signal. We then perform Z-score normalization on each feature to eliminate amplitude variations between subjects. The normalized feature is computed as

$$\tilde{x}_{i,j} = \frac{x_{i,j} - \mu_i}{\sigma_i},$$

where $\mu_i$ and $\sigma_i$ represent the mean and standard deviation of the DE features for the $i$-th channel across all time windows of a given subject. Finally, the normalized features $\tilde{x}_{i,j}$ are concatenated across all channels and time windows to form the final input sequence $\mathbf{X}$ of the RPN model:

$$\mathbf{X} = \left[\tilde{\mathbf{x}}_{1}, \tilde{\mathbf{x}}_{2}, \ldots, \tilde{\mathbf{x}}_{N}\right], \qquad \tilde{\mathbf{x}}_{i} = \left[\tilde{x}_{i,1}, \tilde{x}_{i,2}, \ldots, \tilde{x}_{i,J}\right],$$

where $J$ is the number of time windows and $N = 17$ denotes the total number of EEG channels used.
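A minimal sketch of the per-subject, per-channel Z-score step, assuming the DE features of one subject are stored as an array of shape (channels, windows, bands), matching the 17 × 885 × 25 layout above. Statistics are taken over time windows separately for each channel and band. The `eps` guard against zero variance, the row-per-window layout of the returned input matrix, and the function names are assumptions.

```python
import numpy as np

def zscore_per_channel(de, eps=1e-8):
    """de: (channels, windows, bands) DE features of ONE subject.
    Normalize each channel/band over its time windows: (x - mu_i) / sigma_i."""
    mu = de.mean(axis=1, keepdims=True)       # mean over time windows
    sigma = de.std(axis=1, keepdims=True)     # standard deviation over time windows
    return (de - mu) / (sigma + eps)

def build_input_matrix(z):
    """Concatenate channels (and bands) for each time window.
    Returns (windows, channels * bands); one row per window is an assumed layout."""
    return np.moveaxis(z, 1, 0).reshape(z.shape[1], -1)
```

Because the statistics are computed per subject, each subject's features end up with zero mean and unit variance per channel, which is what removes the subject-specific amplitude offsets.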

3.2. RPN Model Architecture

This paper proposes the RPN model for fatigue classification; its structure is shown in Figure 2. In the figure, $x(t)$ represents the input feature sequence; $y(t)$ represents the expansion of the MTN into a sum of polynomials of various orders; and $W_1$ and $W_2$ are weight matrices. The network uses two activation-function layers. $L(t)$ is the loss between the model’s output and the label, which drives the automatic updating of the model parameters. $\alpha_k$ represents the decayed learning rate, which helps the model converge toward an optimal solution. $\hat{y}(t)$ represents the final classification result, i.e., the output of the classification model.

In the figure, arrows indicate the direction of data flow. One arrow represents the residual connection, which passes the input directly to the output layer to alleviate vanishing gradients and improve training stability; another indicates the direction of gradient flow during backpropagation. Blue boxes represent the terms of different orders in the polynomial expansion layer, where the ellipsis (…) indicates that several intermediate polynomial terms are omitted; green boxes represent the activation-function layers; and the pink box represents the loss computation and parameter update module.

The theoretical foundation of RPN lies in the integration of nonlinear function approximation theory with the residual learning framework. According to the Weierstrass approximation theorem, any continuous function on a closed interval can be uniformly approximated by polynomials to arbitrary precision. This property makes polynomial-based models highly expressive for capturing complex nonlinear dynamics in EEG signals. However, directly applying high-order polynomials in deep networks often leads to training difficulties such as vanishing gradients, overfitting, and poor generalization.
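The Weierstrass argument can be illustrated numerically. The sketch below fits least-squares polynomials of increasing degree to a smooth nonlinearity on [−1, 1] with NumPy's `Polynomial.fit` and reports the maximum error on the sampling grid. The choice of `tanh` as the target function and the helper name `max_poly_error` are assumptions, and least squares is only a practical proxy for the best uniform approximation whose existence the theorem guarantees.

```python
import numpy as np

def max_poly_error(f, degree, a=-1.0, b=1.0, n_points=401):
    """Least-squares polynomial fit of f on [a, b]; return the max abs error on the grid."""
    x = np.linspace(a, b, n_points)
    p = np.polynomial.Polynomial.fit(x, f(x), deg=degree)   # domain is rescaled internally
    return float(np.max(np.abs(p(x) - f(x))))

for d in (1, 3, 5, 9):
    print(d, max_poly_error(np.tanh, d))   # error shrinks rapidly as the degree grows
```

The rapid shrinkage of the error also hints at the trade-off discussed next: each additional order adds terms and weights, so expressiveness comes at the price of more parameters.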

To address these challenges, RPN introduces a residual polynomial mapping mechanism. Specifically, the original input $x(t)$ is added directly to the output $y(t)$ of the polynomial transformation, forming a shortcut connection that facilitates gradient flow and stabilizes training. This design allows the model to focus on learning residual nonlinearities while preserving the linear components of the signal, improving both optimization efficiency and robustness.

The core component of RPN, the Multi-dimensional Taylor Network (MTN), consists of an input layer, an intermediate layer, and an output layer, i.e., it has a single intermediate feed-forward layer. Its structure is shown in Figure 3. In the figure, the input to the MTN is a time series with $n$ nodes, denoted $\mathbf{x} = [x_1, x_2, \ldots, x_n]$. In the intermediate layer, the $n$ variables are combined into power terms up to the highest order $m$; each power term is multiplied by its corresponding weight, and the products are summed. This yields the output $y$ of the output layer, thereby approximating the nonlinear relationship between input and output.

Let $P_j^i$ denote the set of power terms of order $j$ whose lowest-indexed variable is $x_i$, where $i$ is the starting index of the variables forming the term and $j$ is the power. For example, $P_2^1$ is the set of second-order terms starting from the variable $x_1$, composed of $x_1^2, x_1x_2, \ldots, x_1x_n$. The sets for the other power terms are obtained in the same way, and the collection of all $P_j^i$ ($1 \le i \le n$, $1 \le j \le m$) forms all power terms of the expansion up to the highest order $m$ in the intermediate layer. The expression for $P_j^i$ is

$$P_j^i = \left\{ \prod_{l=i}^{n} x_l^{\lambda_{q,l}} \;\middle|\; \lambda_{q,i} \ge 1,\ \sum_{l=i}^{n} \lambda_{q,l} = j \right\},$$

where $\lambda_{q,l}$ represents the power of the variable $x_l$ in the $q$-th product term of $P_j^i$. For the $w$-th input sequence $\mathbf{x}_w = [x_{w,1}, \ldots, x_{w,n}]$, the corresponding weight set is denoted $W_j^i = \{\omega_{j,q}^{i}\}$, where $i$ indicates the starting index of the power term and $j$ the power. The output $y_w$ can then be expressed as the sum of the products of each power term and its corresponding weight:

$$y_w = \sum_{j=1}^{m} \sum_{i=1}^{n} \sum_{q} \omega_{j,q}^{i} \prod_{l=i}^{n} x_{w,l}^{\lambda_{q,l}} .$$
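The power-term expansion described above can be sketched in a few lines. `itertools.combinations_with_replacement` enumerates every monomial of a given order exactly once, in lexicographic order, so the terms of each order come out grouped by their lowest-indexed variable (for order 2 with three inputs: x1², x1x2, x1x3, x2², x2x3, x3²), matching the grouping by starting index. The helper names, the absence of a constant term, and the single scalar output are assumptions for illustration.

```python
import itertools
import numpy as np

def monomial_exponents(n, m):
    """Exponent vectors of all monomials of total degree 1..m in n variables.
    Within each degree, terms are grouped by their lowest-indexed variable."""
    terms = []
    for j in range(1, m + 1):
        # each degree-j monomial appears exactly once as a sorted index tuple
        for combo in itertools.combinations_with_replacement(range(n), j):
            exps = np.zeros(n, dtype=int)
            for idx in combo:
                exps[idx] += 1
            terms.append(exps)
    return np.array(terms)

def mtn_output(x, exponents, weights):
    """Single-output MTN: y = sum_k w_k * prod_l x_l ** lambda_{k,l}."""
    powers = np.prod(np.power(x[None, :], exponents), axis=1)   # value of each power term
    return float(weights @ powers)
```

With `x = [2, 3, 5]`, `m = 2`, and all weights equal to 1, the nine terms sum to 10 (linear) + 69 (quadratic) = 79.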

RPN integrates the structure of residual learning units with the MTN, altering the original learning objective of the neural network. Direct connection pathways allow the original input information to bypass the polynomial layer and be transmitted directly to the output, as shown in Figure 2. The input $x(t)$ of the residual unit is summed directly with the output of the polynomial layer; the sum is then multiplied by the weight matrix $W_2$ and passed through the softmax layer to be converted into probabilities, yielding the output $\hat{y}(t)$. By introducing residual connections between polynomial layers, the RPN classification model allows information to bypass certain layers, ensuring smooth information flow and enhancing the network’s expressive power. This approach addresses the overfitting and vanishing-gradient problems commonly encountered by traditional neural networks when dealing with high-order polynomials.
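Putting these pieces together, the following is a hypothetical NumPy forward pass: polynomial layer, residual addition of the input, multiplication by W2, and softmax. It is a sketch under stated assumptions, not the authors' implementation: W1 is assumed to map the polynomial terms back to the input width so that the residual sum is well defined, the activation functions shown in Figure 2 other than softmax are omitted, and there are no bias terms.

```python
import itertools
import numpy as np

def poly_features(X, degree):
    """All monomials of total degree 1..degree of the columns of X (batch, n)."""
    n = X.shape[1]
    cols = []
    for j in range(1, degree + 1):
        for combo in itertools.combinations_with_replacement(range(n), j):
            cols.append(np.prod(X[:, list(combo)], axis=1))
    return np.stack(cols, axis=1)             # (batch, n_terms)

def softmax(z):
    z = z - z.max(axis=1, keepdims=True)      # subtract row max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)

def rpn_forward(X, W1, W2, degree):
    """Hypothetical RPN forward pass.
    X: (batch, n) inputs x(t); W1: (n_terms, n) polynomial-layer weights;
    W2: (n, n_classes) output weights. Returns class probabilities."""
    Y = poly_features(X, degree) @ W1         # polynomial layer output y(t), same width as x(t)
    R = X + Y                                 # residual connection: input bypasses the polynomial layer
    return softmax(R @ W2)                    # linear map by W2, then softmax

# Usage: 4 input features, degree 2 (14 polynomial terms), 2 classes
rng = np.random.default_rng(0)
W1 = 0.01 * rng.standard_normal((14, 4))
W2 = rng.standard_normal((4, 2))
probs = rpn_forward(rng.standard_normal((5, 4)), W1, W2, degree=2)
```

With W1 set to zero the model reduces to a softmax over W2 applied to the raw input, which makes the role of the shortcut explicit: the polynomial layer only has to learn a correction to the linear path.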

3.3. Training and Optimization Strategy

In training the RPN model, we combined the BackPropagation (BP) algorithm with the Adam optimization algorithm [26] to exploit its multi-layer network structure. BP was chosen because it computes precise gradient updates for every layer, which suits the layered structure of RPN, while Adam’s adaptive learning rate improves training efficiency and makes optimization and convergence in the complex parameter space more tractable. Specifically, BP is first used to compute the gradient of the error so that the parameter updates at each layer reduce the error; Adam then adapts the step size for each parameter, accelerating training and improving convergence stability. To do so, Adam uses the first-order and second-order moment estimates of the gradient to adjust the learning rate of each parameter. In the $k$-th iteration these are computed as

$$m_k = \beta_1 m_{k-1} + (1-\beta_1)\, g_k,$$

$$v_k = \beta_2 v_{k-1} + (1-\beta_2)\, g_k^{2},$$

where $m_k$ represents the first-order moment estimate (mean) of the gradient in the $k$-th iteration, $v_k$ represents the second-order moment estimate (uncentered variance) of the gradient in the $k$-th iteration, and $g_k$ represents the first-order gradient in the $k$-th iteration. $\beta_1$ and $\beta_2$ are manually set exponential decay rates of the first-order and second-order moment estimates, respectively, with $\beta_1, \beta_2 \in [0, 1)$. To prevent gradient descent from failing to converge to the global optimum or even diverging, a learning-rate decay coefficient is introduced into Adam: the step size is kept as large as is acceptable early in training and becomes progressively smaller as the number of training rounds increases, so that the parameters at least oscillate within a neighborhood of an optimum and may gradually approach it. The Adam update is therefore

$$w_k = w_{k-1} - \alpha_k \frac{\hat{m}_k}{\sqrt{\hat{v}_k} + \epsilon}, \qquad \hat{m}_k = \frac{m_k}{1-\beta_1^{k}}, \quad \hat{v}_k = \frac{v_k}{1-\beta_2^{k}},$$

where $\alpha_k$ represents the step size, which decreases as the number of training steps increases; $\hat{m}_k$ and $\hat{v}_k$ are the bias-corrected moment estimates; $\epsilon$ is a small constant for numerical stability; and $w_{k-1}$ and $w_k$ are the weight values of the $(k-1)$-th and $k$-th iterations, respectively.
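The update rule with a decaying step size can be sketched as a small NumPy optimizer. The inverse-time schedule α_k = α_0 / (1 + d·k) is an assumed example, since the text does not specify the decay form; the default β1 = 0.9, β2 = 0.999, and ε = 1e-8 are the commonly used Adam defaults rather than values taken from this paper, and `adam_minimize` is a hypothetical helper name.

```python
import numpy as np

def adam_minimize(grad, w0, alpha0=0.1, decay=0.01, beta1=0.9, beta2=0.999,
                  eps=1e-8, steps=2000):
    """Adam with bias correction and an (assumed) inverse-time learning-rate decay."""
    w = np.asarray(w0, dtype=float).copy()
    m = np.zeros_like(w)
    v = np.zeros_like(w)
    for k in range(1, steps + 1):
        g = grad(w)                              # gradient g_k (from backpropagation in RPN)
        m = beta1 * m + (1 - beta1) * g          # first-moment estimate m_k
        v = beta2 * v + (1 - beta2) * g ** 2     # second-moment estimate v_k
        m_hat = m / (1 - beta1 ** k)             # bias-corrected moments
        v_hat = v / (1 - beta2 ** k)
        alpha_k = alpha0 / (1 + decay * k)       # step size shrinks as k grows
        w = w - alpha_k * m_hat / (np.sqrt(v_hat) + eps)
    return w
```

For instance, minimizing the quadratic ‖w − [3, −2]‖² from w = 0 with `grad = lambda w: 2 * (w - target)` drives w to the target; the shrinking α_k damps the oscillation around the minimum that a fixed step size would leave.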

It is worth noting that EEG signals often exhibit significant inter-subject variability, mainly due to differences in brain structure, cognitive patterns, and fatigue development processes across individuals. To enhance the robustness of the proposed RPN model in cross-subject tasks, we applied Z-score normalization to the extracted DE features during preprocessing, aiming to reduce amplitude and distribution differences between subjects. Moreover, RPN incorporates residual connections and polynomial approximation mechanisms, allowing for smoother information flow across layers and improving the model’s adaptability to diverse feature patterns across individuals. Combined with the adaptive learning rate mechanism of the Adam optimizer, RPN dynamically adjusts parameter update steps, further enhancing its stability and generalization capability when handling EEG data from different subjects.

3.4. Driver Fatigue Classification Method Based on RPN

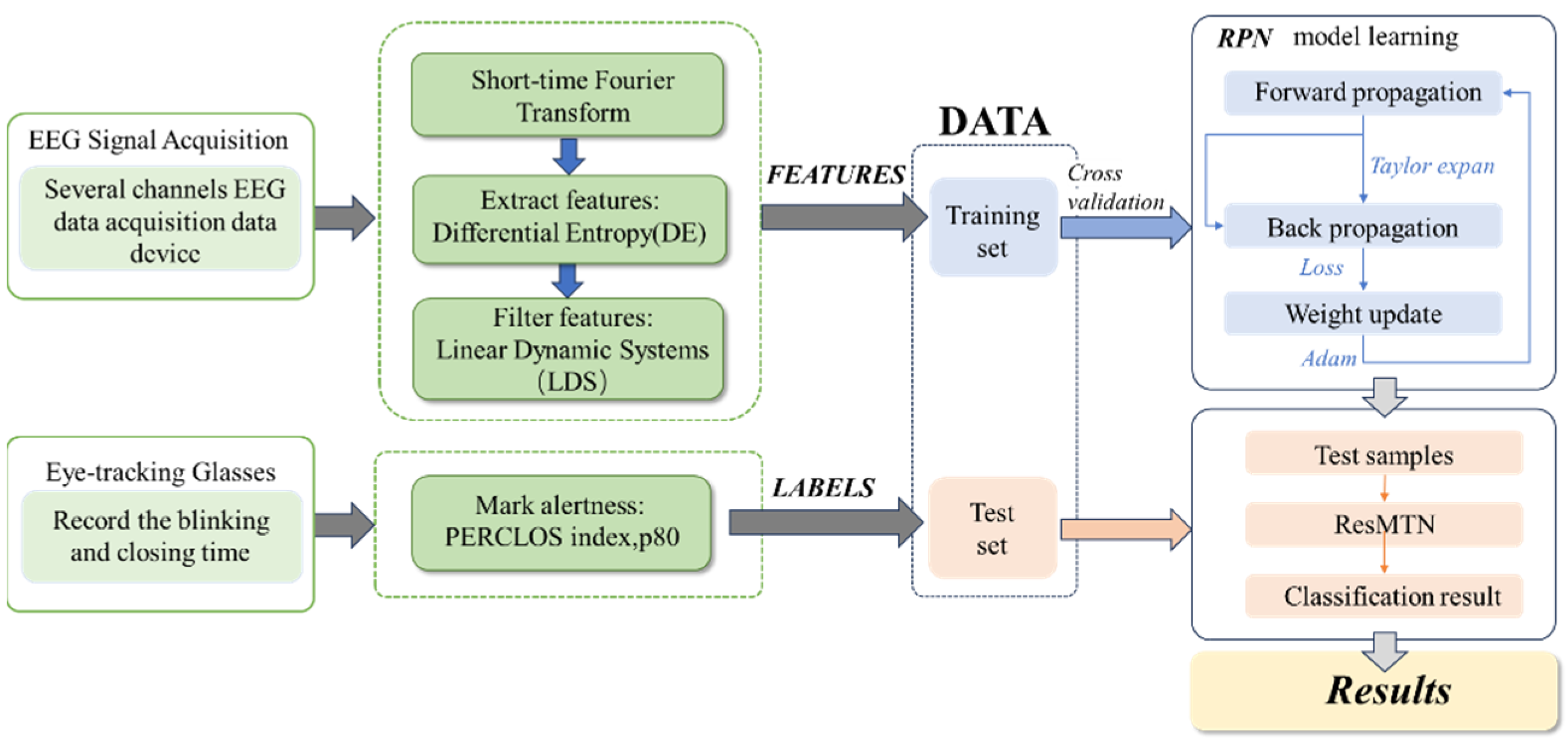

EEG signals are decomposed into 2 Hz bandwidth segments, and a Short-Time Fourier Transform (STFT) is applied to the signals within a sliding time window, as shown in Figure 4.

In the figure, thick arrows indicate the direction of data processing flow; thin arrows represent the path of model training and updating; light green boxes represent the data acquisition and preprocessing module; dark green boxes represent the feature extraction, filtering module, and labeling module; blue boxes indicate training set division and model training; pink boxes indicate test set division and model testing; yellow boxes represent the final result output.

The STFT yields the time-varying spectrum of the signals, from which differential entropy features are extracted. These features are then filtered with an LDS to obtain the final feature values, which serve as inputs to the RPN classification model, and the PERCLOS p80 standard is used to provide the labels. The RPN fatigue classification model is first trained on the training set and then evaluated on the test set.
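The LDS filtering step can be approximated by Kalman filtering followed by Rauch-Tung-Striebel smoothing under a simple random-walk state model, applied independently to the DE time series of each channel and sub-band. The state model, the noise variances `q` and `r`, and the function name are assumptions; the dataset's own LDS configuration may differ.

```python
import numpy as np

def lds_smooth(x, q=1e-3, r=1e-1):
    """Kalman filter + Rauch-Tung-Striebel smoother for a 1-D random-walk LDS:
    z_t = z_{t-1} + w_t, w_t ~ N(0, q);  x_t = z_t + v_t, v_t ~ N(0, r)."""
    x = np.asarray(x, dtype=float)
    T = len(x)
    z_f, p_f = np.empty(T), np.empty(T)          # filtered means / variances
    z_pred, p_pred = np.empty(T), np.empty(T)    # one-step predictions
    z, p = x[0], r                               # initialize at the first observation
    for t in range(T):
        if t > 0:
            p = p + q                            # predict (identity transition, mean unchanged)
        z_pred[t], p_pred[t] = z, p
        k = p / (p + r)                          # Kalman gain
        z = z + k * (x[t] - z)                   # correct with observation x_t
        p = (1 - k) * p
        z_f[t], p_f[t] = z, p
    z_s = z_f.copy()
    for t in range(T - 2, -1, -1):               # backward smoothing pass
        c = p_f[t] / p_pred[t + 1]
        z_s[t] = z_f[t] + c * (z_s[t + 1] - z_pred[t + 1])
    return z_s
```

The ratio q/r controls the trade-off: a small ratio suppresses fast fluctuations that are unlikely to reflect slowly evolving vigilance, while a large ratio lets the smoothed features follow the raw DE values more closely.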

4. Experimental Results

4.1. Sankey Diagram Analysis

In the Sankey diagram, features with wider flow bands contribute more to the model’s decisions. The diagram illustrates the relationships between the 17 input features and the two classification outcomes (0: alert, 1: fatigued), as shown in Figure 5.

As shown in Figure 5, Channel 1 (FT7) and Channel 12 (P2) exhibit wider feature flow paths, indicating their pivotal role in the model’s decision-making process. This observation is further supported by neurophysiological evidence: FT7 is located over the anterior left temporal lobe, a region responsible for auditory processing and spatial attention. Under fatigue, neuronal activity in this area tends to decrease, leading to more pronounced changes in differential entropy (DE) features, particularly in the δ band, which makes FT7 a highly discriminative indicator for fatigue detection. Similarly, P2 is situated over the posterior parietal cortex, adjacent to the occipital visual cortex, and is closely associated with fine-grained visual information processing. As fatigue impairs drivers’ visual focus and sustained attention, the α band (8–13 Hz), which reflects a state of relaxed alertness, shows a greater reduction in DE values at P2, thereby increasing its contribution to fatigue classification.

The dominance of these channels not only aligns with known brain-behavior relationships but also provides actionable insights for feature engineering and system optimization. Their strong and physiologically meaningful contributions suggest that targeted feature derivation, such as constructing fatigue-sensitive composite features from FT7 and P2, could further enhance model performance. Moreover, the Sankey diagram reveals that most features contribute slightly more to Class 1 (fatigued) than to Class 0 (alert), reflecting a consistent tendency of the model to prioritize fatigue-related patterns. This bias suggests greater sensitivity to fatigue states, which is crucial for real-world, safety-critical applications. Together, these findings underscore the translational potential of the proposed RPN framework: by identifying physiologically interpretable, high-impact channels, it enables the design of simplified, user-friendly EEG systems that maintain high accuracy while reducing hardware complexity and improving practical deployability.

4.2. Selection of Hyperparameters

In the proposed RPN model, the polynomial approximation layer is one of the core components, and its highest polynomial degree directly determines the model’s capacity to fit nonlinear functions. To set this critical parameter appropriately, we conducted a systematic theoretical analysis and experimental validation during the model design phase. In theory, higher-degree polynomials have stronger nonlinear representation capability, enabling more precise approximation of complex functional relationships. However, as the degree increases, the number of model parameters grows rapidly, which not only raises computational cost but can also cause overfitting. Furthermore, in practical applications, high-degree polynomials tend to be more sensitive to noise in the input features, which may compromise the model’s generalization performance. Therefore, the maximum polynomial degree must be constrained while preserving model expressiveness.
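To illustrate how quickly the number of terms can grow, the sketch below assumes (hypothetically) that the polynomial layer forms a full multivariate polynomial over all 17 DE input features. Under that assumption, the number of monomials of total degree at most m in n variables, constant term included, is C(n + m, m). The actual RPN layer may use fewer terms, and `num_monomials` is a hypothetical helper.

```python
from math import comb


def num_monomials(n_inputs: int, degree: int) -> int:
    """Monomials of total degree <= degree in n_inputs variables (constant included)."""
    return comb(n_inputs + degree, degree)


for m in range(2, 6):
    print(m, num_monomials(17, m))
# 2 171
# 3 1140
# 4 5985
# 5 26334
```

Under this assumption, each unit increase in degree multiplies the term count several times over, which is consistent with the rising training cost observed at higher degrees.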

To identify the optimal balance between representational power and computational efficiency, we designed a series of comparative experiments evaluating the classification performance of RPN models with varying polynomial degrees. All experiments followed identical training strategies and data partitioning protocols. The experimental results are presented in Table 2.

In this experiment, the proposed RPN classifier is compared with SVM, KNN, DT, and LSTM classification algorithms. A subject-wise 10-fold cross-validation was performed, where all subjects were first partitioned into 10 non-overlapping groups, and in each fold, one group was used as the test set (10% of total subjects) while the remaining nine groups were used for training. This ensures that no subject appears in both the training and testing sets, preventing data leakage and enabling a rigorous evaluation of cross-subject generalization. The classification results are shown in Table 3.
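A subject-wise split of this kind can be sketched as follows, assuming each sample carries a subject identifier. `subject_wise_folds` is a hypothetical helper; scikit-learn's `GroupKFold` provides comparable group-aware splitting.

```python
import numpy as np


def subject_wise_folds(subject_ids, n_folds=10, seed=0):
    """Yield (train_idx, test_idx) so that each subject falls entirely on one side."""
    subject_ids = np.asarray(subject_ids)
    subjects = np.unique(subject_ids)
    rng = np.random.default_rng(seed)
    rng.shuffle(subjects)
    # Partition the shuffled subjects into n_folds non-overlapping groups.
    for test_subjects in np.array_split(subjects, n_folds):
        test_mask = np.isin(subject_ids, test_subjects)
        yield np.flatnonzero(~test_mask), np.flatnonzero(test_mask)
```

Because whole subjects are assigned to folds, the test fold contains only unseen subjects, which is what makes the evaluation cross-subject.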

The experimental results demonstrate that when the polynomial degree increases from 2 to 3, the model’s classification accuracy improves by 4.32%, indicating limited representational capacity in lower-degree models. Further increasing the degree from 3 to 4 yields an additional 1.18% accuracy gain, reaching the optimal performance level. However, when extending to degree 5, while the accuracy shows only marginal degradation (−0.13%), the training time increases substantially (+8.85 s). This suggests emerging overfitting tendencies and significantly compromised training efficiency at excessive polynomial degrees.

Through comprehensive consideration of classification performance, training efficiency, and generalization capability, we ultimately set the maximum polynomial degree in RPN to 4. This configuration not only satisfies fundamental requirements of polynomial approximation theory but has also been empirically validated for effectiveness in driver fatigue classification tasks. The selected parameterization maintains model simplicity and real-time processing capability while ensuring high classification accuracy—a balanced solution well-suited for practical deployment scenarios.

To ensure reproducible results and suppress overfitting, after determining the polynomial order as m = 4, all hyperparameters were fixed as follows: the Adam optimizer was used with , and ; the initial learning rate was set to 0.001 and decayed using a cosine annealing strategy, with a maximum of 1000 training epochs and an early stopping patience of 8; the batch size was 32, and the L2 weight decay coefficient was ; a dropout rate of 0.2 was applied before .
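The learning-rate schedule and early-stopping rule can be sketched in plain Python. The following are assumptions, not details from the text: the cosine period `t_max` equals the 1000-epoch cap, the minimum learning rate `eta_min` is 0, and early stopping monitors a validation loss. The Adam coefficients and the weight-decay value are omitted here; all names are hypothetical.

```python
import math


def cosine_lr(epoch, base_lr=1e-3, t_max=1000, eta_min=0.0):
    """Cosine-annealed learning rate for a given epoch (0 <= epoch <= t_max)."""
    return eta_min + 0.5 * (base_lr - eta_min) * (1.0 + math.cos(math.pi * epoch / t_max))


class EarlyStopping:
    """Signal a stop after `patience` consecutive epochs without improvement."""

    def __init__(self, patience=8, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = math.inf
        self.bad_epochs = 0

    def step(self, val_loss):
        """Record one epoch's validation loss; return True when training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


def first_stop_epoch(val_losses, patience=8):
    """Index of the epoch at which early stopping triggers, or None."""
    stopper = EarlyStopping(patience)
    for epoch, loss in enumerate(val_losses):
        if stopper.step(loss):
            return epoch
    return None
```

With a patience of 8, training halts at the eighth consecutive epoch whose validation loss fails to beat the best value so far, typically long before the 1000-epoch cap.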

4.3. Classification Results of the RPN Model

The features from SEED-VIG are selected as the model input, and classification labels for fatigue levels are obtained using the PERCLOS labels calculated from eye-tracking data, based on the P80 criterion. A binary classification of fatigue is performed using 10-fold cross-validation, with the maximum polynomial degree set to 4 and an initial learning rate of 0.001. The experiment is repeated ten times, and the ten confusion matrices are shown in Figure 6. The final experimental results show an average accuracy of 97.65%, a sensitivity of 96.55%, and a specificity of 99.37%, with an average runtime of 27.04 s per experiment. The confusion matrices reveal balanced classification performance across both fatigued and alert samples, with no significant class bias observed. These results demonstrate that the proposed RPN classification model achieves high accuracy in fatigue classification.

In the figure, green cells represent True Positives (TP) and True Negatives (TN), that is, the number of truly fatigued samples predicted as fatigued and the number of truly alert samples predicted as alert. Pink cells represent False Positives (FP) and False Negatives (FN), that is, the number of truly alert samples misclassified as fatigued and the number of truly fatigued samples misclassified as alert. The green number in the gray cell at the bottom right is the accuracy. Each green and pink cell shows both the sample count and the corresponding proportion, allowing model performance to be assessed from multiple angles. From the confusion matrices in Figure 6, it can be observed that the model’s predictions for both the “Awake” (0) and “Fatigue” (1) classes are highly concentrated along the diagonal: the vast majority of samples are correctly classified into their true class, and very few samples fall in the off-diagonal cells.
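The reported metrics follow directly from the four confusion-matrix cells, with class 1 (fatigued) treated as positive. The sketch below assumes that run-level metrics are averaged across the repeated experiments; the counts used to exercise it are made up for illustration and are not the paper's results. Function names are hypothetical.

```python
def confusion_metrics(tp, fn, tn, fp):
    """Accuracy, sensitivity and specificity with fatigued (1) as the positive class."""
    accuracy = (tp + tn) / (tp + fn + tn + fp)
    sensitivity = tp / (tp + fn)  # share of truly fatigued samples detected
    specificity = tn / (tn + fp)  # share of truly alert samples recognised as alert
    return accuracy, sensitivity, specificity


def mean_over_runs(matrices):
    """Average each metric over repeated runs, given (tp, fn, tn, fp) tuples."""
    per_run = [confusion_metrics(*m) for m in matrices]
    return tuple(sum(values) / len(values) for values in zip(*per_run))
```

Because accuracy is a prevalence-weighted mix of sensitivity and specificity, it always lies between the two, as the reported 97.65% does between 96.55% and 99.37%.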

4.4. Comparison of Convergence Speed and Training Accuracy

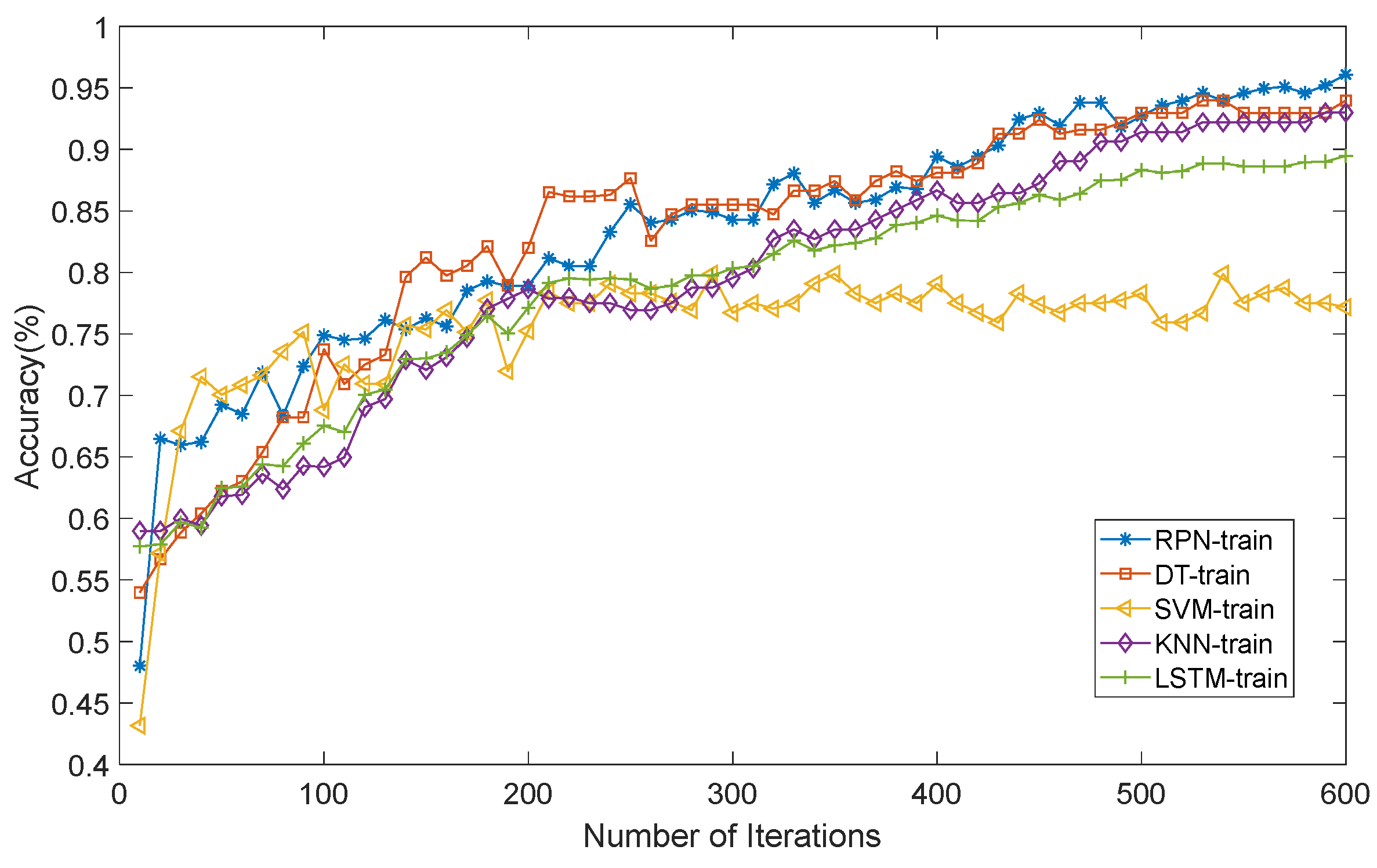

To verify the effectiveness of the proposed RPN method for determining fatigue levels from EEG signals, we conducted comparative experiments against baseline methods, namely DT (Decision Tree), SVM (Support Vector Machine), KNN (K-Nearest Neighbors), and LSTM (Long Short-Term Memory), focusing on the convergence speed and training accuracy of each method.

As shown in Figure 7, the experimental data from multiple runs indicate that, in terms of convergence speed, the RPN method reaches a stable state in a relatively short time. In contrast, the DT, SVM, and KNN methods lag in the convergence process and require more iterations to stabilize. Although LSTM has certain advantages as a deep learning method, its convergence is still slightly slower than that of RPN. We attribute this mainly to three factors: (1) RPN approximates nonlinear functions with polynomials, which provide an explicit mathematical expression and computation procedure and facilitate parameter adjustment; (2) the integration of ResNet ideas accelerates information propagation and parameter updates; and (3) the Adam optimizer is used to improve convergence speed.
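For reference, the standard Adam update (bias-corrected first and second moment estimates) is sketched below on a one-dimensional quadratic. The coefficients shown are the commonly used defaults (β1 = 0.9, β2 = 0.999, ε = 1e-8), not necessarily the values used for RPN, the step size of 0.1 suits this toy problem rather than the 0.001 used in training, and `adam_minimize` is a hypothetical helper.

```python
import math


def adam_minimize(grad, x0, lr=0.1, beta1=0.9, beta2=0.999, eps=1e-8, steps=500):
    """Minimise a scalar function with Adam, given its gradient function."""
    x, m, v = x0, 0.0, 0.0
    for t in range(1, steps + 1):
        g = grad(x)
        m = beta1 * m + (1 - beta1) * g          # first moment (momentum)
        v = beta2 * v + (1 - beta2) * g * g      # second moment (per-parameter scale)
        m_hat = m / (1 - beta1 ** t)             # bias correction for zero initialisation
        v_hat = v / (1 - beta2 ** t)
        x -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return x
```

For example, `adam_minimize(lambda x: 2 * (x - 3), 0.0)` minimises (x − 3)² starting from 0. The momentum term and the per-parameter step scaling are the properties usually credited with Adam's fast early progress.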

Secondly, as can be seen from Figure 7, the training accuracy of the RPN method is also significantly higher than that of the DT, SVM, KNN, and LSTM methods. Specifically, the DT method, owing to its simple decision rules, is prone to overfitting or underfitting when dealing with complex EEG signals, resulting in lower training accuracy. Although SVM can handle nonlinear problems to some extent, its capacity for processing large-scale data is limited. KNN depends on the selection of neighboring samples and is easily affected by noisy data. While LSTM can learn long-term dependencies in time-series data, its performance on high-dimensional, complex EEG signals still leaves room for improvement. In comparison, RPN integrates ResNet ideas into MTNs and uses polynomial networks to approximate nonlinear functions, better capturing complex features in EEG signals and thus achieving higher accuracy than the other methods.
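The general idea of combining a polynomial (Taylor-style) expansion with a ResNet-style identity shortcut can be illustrated as below. This is a hypothetical block written for illustration, not the RPN layer defined by the authors: it expands the input into all monomials up to a given degree and adds a linear map of those terms back onto the input, so that with zero weights the block reduces to the identity.

```python
import itertools

import numpy as np


def polynomial_features(x, degree):
    """All monomials of the entries of x with total degree 0..degree (constant first)."""
    x = np.asarray(x, dtype=float)
    feats = [1.0]
    for d in range(1, degree + 1):
        for idx in itertools.combinations_with_replacement(range(len(x)), d):
            feats.append(float(np.prod(x[list(idx)])))
    return np.array(feats)


def residual_polynomial_block(x, weights, degree):
    """Hypothetical block: identity shortcut plus a linear map of the polynomial terms.

    weights has shape (len(x), number_of_monomials).
    """
    x = np.asarray(x, dtype=float)
    return x + weights @ polynomial_features(x, degree)
```

The identity shortcut means the polynomial branch only has to learn a correction to its input, which is the usual argument for why residual connections ease optimisation; with 17 inputs and degree 4 the expansion has 5985 terms.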

4.5. Comparison Among Different Methods

In this experiment, the proposed RPN classifier is compared with SVM, KNN, DT, LSTM, Graph-based MI-MBFT [17] and Multiscale Convolutional Transformer [18] classification algorithms. A 10-fold cross-validation is performed, with 10% of the data selected as the test set. The classification results for SVM, KNN, DT, LSTM, Graph-based MI-MBFT and Multiscale Convolutional Transformer are shown in Table 4.

As evidenced in Table 4, the proposed RPN classifier consistently outperforms the conventional machine learning algorithms (SVM, KNN, and DT) across all key metrics: accuracy, sensitivity, and specificity. Compared with LSTM, RPN achieves higher accuracy and sensitivity. Notably, although LSTM achieves 100% specificity, this result primarily reflects class imbalance in the training data, in which fatigue-labeled samples were underrepresented.

Moreover, compared with the recent Transformer-based methods, RPN achieves 3.01% and 9.65% higher accuracy than MI-MBFT and the Multiscale Convolutional Transformer, respectively, while reducing training time by 40.2% and 35.6%, demonstrating the dual advantages of the polynomial-residual structure in both accuracy and efficiency.

To compare the models more intuitively, Figure 8 presents bar charts of the accuracy, sensitivity, and specificity of the RPN, SVM, KNN, DT, LSTM, Graph-based MI-MBFT and Multiscale Convolutional Transformer models. The results show that RPN delivers the best overall classification performance; the only exception is specificity, where LSTM reaches 100% for the class-imbalance reason noted above. In particular, RPN achieves the highest accuracy of all methods, indicating its advantage in overall prediction precision. Moreover, RPN’s high sensitivity and specificity indicate that it reliably detects positive (fatigued) samples and correctly rejects negative (alert) samples. These findings support the effectiveness of the RPN structure in improving classification performance.

4.6. Ablation Study on Feature Selection

To systematically evaluate the effectiveness of the DE features adopted in this study for driver fatigue classification and to verify their advantages over other commonly used EEG features, we compared different feature types under the same model architecture. Specifically, we extracted five typical EEG features: DE (the feature employed in this study), Power Spectral Density (PSD), Sample Entropy (SampEn), wavelet coefficients, and differential mean. All features were extracted from the δ, θ, α, and β frequency bands of the SEED-VIG dataset and fed into the same RPN classification model for training and testing. Ten-fold cross-validation was used to ensure stable and reproducible results. The experimental results are shown in Table 5.

As shown in Table 5, DE outperforms the other feature types across all metrics, with a classification accuracy 3.19% higher than that of the second-best feature, PSD. This experiment confirms both the applicability and the superiority of DE for this task.
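As a point of comparison with DE, a band-wise PSD feature can be sketched with a plain FFT periodogram. The estimator is an assumption for illustration, as is the choice of band edges for δ, θ, and β (the α band uses the 8–13 Hz range stated earlier); `band_psd_features` is a hypothetical helper.

```python
import numpy as np

# Band edges in Hz; the delta, theta and beta edges are assumed conventions.
BANDS = {"delta": (1.0, 4.0), "theta": (4.0, 8.0), "alpha": (8.0, 13.0), "beta": (13.0, 30.0)}


def band_psd_features(x, fs):
    """Mean periodogram PSD of a single-channel signal within each band."""
    x = np.asarray(x, dtype=float)
    x = x - x.mean()                                   # remove the DC offset
    psd = np.abs(np.fft.rfft(x)) ** 2 / (fs * len(x))  # periodogram at non-negative frequencies
    freqs = np.fft.rfftfreq(len(x), d=1.0 / fs)
    features = {}
    for name, (lo, hi) in BANDS.items():
        mask = (freqs >= lo) & (freqs < hi)
        features[name] = float(psd[mask].mean())
    return features
```

For a Gaussian band-limited signal, DE equals half the log of the band power plus a constant, so DE behaves like a log-compressed PSD; one plausible reading of the result above is that this compression makes the feature less sensitive to large power fluctuations.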

4.7. Statistical Significance Analysis of Performance Improvements

To further validate that the performance improvements of the proposed RPN model over baseline methods are statistically significant rather than due to random variation, we conducted a series of paired t-tests across all models using the 10-fold cross-validation results.

Specifically, we compared the classification accuracy of RPN with that of several baseline models (SVM, KNN, DT, LSTM, Transformer, and Graph-based methods) in each fold of the cross-validation. The mean difference, standard error, t-value, and corresponding p-value were calculated for each comparison. A significance level of α = 0.05 was used to determine whether the differences were statistically significant. The statistical results are summarized in Table 6.
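The per-comparison statistics listed above can be computed as sketched below, using SciPy's Student t distribution for the two-sided p-value; `scipy.stats.ttest_rel` performs the same paired test in a single call. `paired_t_test` is a hypothetical helper, and any fold accuracies passed to it in testing are invented, not the paper's data.

```python
import math
import statistics

from scipy import stats


def paired_t_test(acc_rpn, acc_baseline):
    """Paired t-test on per-fold accuracies of two models evaluated on the same folds."""
    diffs = [a - b for a, b in zip(acc_rpn, acc_baseline)]
    n = len(diffs)
    mean_diff = statistics.mean(diffs)
    std_err = statistics.stdev(diffs) / math.sqrt(n)    # sample std (n - 1) / sqrt(n)
    t_value = mean_diff / std_err
    p_value = 2.0 * stats.t.sf(abs(t_value), df=n - 1)  # two-sided p-value
    return mean_diff, std_err, t_value, p_value
```

With 10 folds the test has n − 1 = 9 degrees of freedom, and the pairing removes fold-to-fold variation shared by both models.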

As shown in Table 6, all p-values are below 0.001, indicating that the performance improvements of RPN over all baseline models are statistically significant at the 0.05 significance level. These results indicate that the superior classification performance of RPN is unlikely to be due to chance and reflects its robustness and effectiveness in capturing fatigue-related EEG patterns.

In summary, the RPN model proposed in this study offers a high-precision, interpretable, and efficient new paradigm for EEG-based driver fatigue detection. Although RPN achieved excellent performance on the SEED-VIG dataset (accuracy 97.65%) and demonstrated lightweight and energy-efficient characteristics that make it well suited to embedded, in-vehicle real-time fatigue monitoring systems, several challenges must be addressed before it can be widely applied in practice. First, the model has been validated primarily on a single public dataset. To establish its generalizability, future work must conduct rigorous cross-dataset validation and transfer learning studies on independent datasets involving diverse populations, driving scenarios, and recording equipment. Second, the model’s performance remains somewhat sensitive to hyperparameters such as the polynomial order. In practical deployment, this sensitivity could be turned into an advantage: the key channels identified in the Sankey diagram of this study (e.g., FT7 and P2) can guide the development of simplified, low-cost dedicated EEG headsets, with parameters optimized for specific hardware platforms and user groups. Furthermore, online fine-tuning or adaptive calibration with driver-specific data could be explored to improve personalized detection performance. Finally, the current offline analysis must evolve toward real-time online monitoring. This requires investigating online learning and efficient inference strategies for RPN under streaming data, strict latency constraints, and limited edge-computing resources, which is essential for extending its application to broader scenarios such as high-stakes medical decision-making (e.g., epilepsy prediction).