Abstract

A plethora of methods are used for solving equations in finite-dimensional Euclidean space. The local convergence order of these methods is usually established using higher-order derivatives, although these derivatives do not appear in the methods themselves. Moreover, neither error bounds nor uniqueness information for the solution is provided. Thus, the applicability of these methods is restricted to equations whose operators are sufficiently many times differentiable. These limitations motivate this paper. In particular, we present the more interesting semi-local convergence analysis, not given previously, for two sixth-order methods under the same set of conditions. The technique relies only on the first derivative, which is the only derivative appearing in the methods. In this way, the methods become applicable to a wider class of equations, in the more general setting of Banach space-valued operators. This extension of applicability is the novelty of the paper. The same technique can be used for other methods. Finally, examples are used to test the convergence of the methods.

Keywords:

Banach spaces; Fréchet derivative; convergence order; semi-local convergence; convergence ball

MSC:

65H10; 65G99; 47H99; 49M15

1. Introduction

Let F be a Fréchet differentiable operator defined on an open and convex subset of X with values in Y, where X, Y are Banach spaces. In computational sciences and other related fields, equations of the type

$$F(x) = 0 \tag{1}$$

are regularly used to address numerous complicated problems. Obtaining the solutions of these equations is a challenging problem, since solutions can be found analytically only in a limited number of cases. Therefore, iterative procedures are often developed to solve these equations. However, it is difficult to create an effective iterative strategy for dealing with Equation (1). The popular Newton's method is widely used to solve this equation. To increase the convergence order, modifications of methods such as Chebyshev's and Jarratt's have been developed.
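As a point of reference for the higher-order methods discussed below, the following is a minimal sketch of Newton's method, x_{k+1} = x_k - F'(x_k)^{-1} F(x_k), applied to a system of two equations. The test system, the helper names (`solve_2x2`, `newton_2d`, `F`, `J`), the tolerance, and the starting point are illustrative assumptions and are not taken from the paper.

```python
import math

def solve_2x2(a, b):
    """Solve the 2x2 linear system a * s = b by Cramer's rule."""
    det = a[0][0] * a[1][1] - a[0][1] * a[1][0]
    if det == 0.0:
        raise ZeroDivisionError("singular Jacobian")
    s0 = (b[0] * a[1][1] - a[0][1] * b[1]) / det
    s1 = (a[0][0] * b[1] - b[0] * a[1][0]) / det
    return [s0, s1]

def newton_2d(F, J, x0, tol=1e-12, max_iter=50):
    """Newton iteration; stops when the residual norm is at most tol."""
    x = list(x0)
    for k in range(max_iter):
        fx = F(x)
        if math.hypot(fx[0], fx[1]) <= tol:
            return x, k
        # Newton step: solve J(x) * step = -F(x), then update x.
        step = solve_2x2(J(x), [-fx[0], -fx[1]])
        x = [x[0] + step[0], x[1] + step[1]]
    return x, max_iter

# Hypothetical test system (an assumption): x^2 + y^2 = 4, x*y = 1.
def F(v):
    x, y = v
    return [x * x + y * y - 4.0, x * y - 1.0]

def J(v):
    x, y = v
    return [[2.0 * x, 2.0 * y], [y, x]]

root, iterations = newton_2d(F, J, [2.0, 0.5])
```

The starting point is deliberately chosen close to a solution. How close it has to be is exactly the question that a semi-local convergence analysis answers.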

Various higher-order iterative methods for computing a solution of (1) have been provided in [1,2,3]. These methods are based on Newton-like methods [2,3,4,5,6,7,8,9,10]. In [11], Cordero and Torregrosa designed two cubically convergent iterative procedures. Darvishi and Barati [5] presented another third-order method that requires two evaluations of F, one evaluation of its derivative, and one matrix inversion, and they also suggested methods of convergence order four. Sharma et al. [12] composed two weighted-Newton steps to generate an efficient fourth-order weighted-Newton method for nonlinear systems. In addition, Sharma and Arora [13] developed fourth- and sixth-order iterative algorithms for solving nonlinear systems.

The main objective of this article is to extend the applicability of the sixth-order methods selected from [13,14]. These methods are:

and

respectively. In the finite-dimensional Euclidean setting, methods (2) and (3) reduce to the methods designed in [12,14], respectively. The motivation for and the benefits of these methods are explained in [13,14]. Each iteration requires two derivative evaluations, one inverse, and two operator evaluations. The convergence analysis was given only in that finite-dimensional special case. There, local convergence was shown using Taylor expansions, which assume the existence of derivatives up to order seven, although these derivatives do not appear in the methods. This assumption restricts the applicability of the methods.

Motivation for writing this paper. To illustrate the point, consider the function

where and F is defined on . Then, the unboundedness of a higher-order derivative of F makes the earlier convergence results inapplicable to methods (2) and (3). Notice also that the results in [15,16] cannot be used to solve equations with operators that are not at least seven times differentiable, although the methods may still converge. Moreover, existing results provide little information about error bounds, the domain of convergence, or the location of the solution.

Novelty of the paper. The new approach addresses these concerns in the more general setting of Banach spaces. Moreover, we use only conditions on the derivative that appears in these methods. Furthermore, we carry out a detailed ball analysis of the iterative methods in order to determine convergence radii, estimate error bounds, and identify the region in which the solution is unique. Another benefit of this analysis is that it simplifies the very difficult task of selecting the starting point. Consequently, we are motivated to investigate and compare the semi-local convergence of (2) and (3) (not given in [15,16]) under an identical set of conditions. Additionally, error estimates, convergence radii, and convergence theorems are provided. Furthermore, the uniqueness of the solution within the convergence ball is discussed.

Future Work. The methods mentioned previously can also be extended with our technique along the same lines. These methods can be used to solve equations in the related works [15,16,17].

2. Majorizing Sequences

The real sequences defined in this section shall be shown to be majorizing for method (2) and method (3) in the next Section.

Let and for some and consider functions , , to be continuous and nondecreasing. Define the sequence for all

and

We use the same convergence notation for the second sequence

and

Next, the same convergence criteria are developed for these sequences.

Lemma 1.

Suppose that either sequence generated by Formula (5) or Formula (6) satisfies

and

for some parameter and all

Then, these sequences are bounded from above by , nondecreasing and convergent to the same .

Proof.

It follows from Formulas (5) and (6) and conditions (7) and (8) that the conclusions of Lemma 1 hold. In particular, the limit point is the unique least upper bound of these sequences.

Notice that and do not have to be the same for each sequence. □

If the function is strictly increasing, a possible choice is .

The semi-local convergence is discussed in the next Section.

3. Convergence

The following common set of conditions is sufficient for the convergence of these methods.

Suppose:

There exist a starting point and a parameter such that and

for all , where the function is continuous and nondecreasing.

Equation has a smallest positive solution .

Set and .

for all , where the function is continuous and nondecreasing.

and

.

Next, the semi-local convergence is given first for method (2).

Theorem 1.

Proof.

The following items shall be shown using mathematical induction on the number k:

Item (10) holds for , since by the condition , the definition of the method (2) and the sequence (5)

Notice also that the iterates and are well defined and . Then, for , conditions (7), and give

This estimate, together with the standard Banach lemma on invertible linear operators [2], implies that

Substituting the value of given by the first substep into the second substep of method (2), we have

In view of the definition of the sequence (5), condition , (13) (for ) and the identity (14), we obtain the estimate

where

and

The following estimates are also used

and similarly

Thus, the iterate .

Hence, the iterate and the item (12) hold.

On the other hand, we can write

and

Then, by the first substep of the method (2)

and it follows that item (10) holds with k + 1 replacing k. Thus, the induction for items (10)–(12) is complete.
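For reference, the Banach lemma on invertible operators invoked in the proof above can be stated as follows. This is the standard textbook form, not a reproduction of the paper's own estimate. The symbols A, u, and q are notation assumed here for illustration, and x_0 denotes the starting point.

```latex
% Banach lemma on invertible operators (standard statement).
% A is a bounded linear operator on a Banach space X; I is the identity on X.
\[
  \|I - A\| < 1
  \quad\Longrightarrow\quad
  A^{-1} \text{ exists and }\;
  \|A^{-1}\| \le \frac{1}{1 - \|I - A\|}.
\]
% Typical use in a semi-local analysis (assumed notation):
% take A = F'(x_0)^{-1} F'(u), so that
% I - A = F'(x_0)^{-1}\bigl(F'(x_0) - F'(u)\bigr) and A^{-1} = F'(u)^{-1} F'(x_0).
\[
  \bigl\|F'(x_0)^{-1}\bigl(F'(u) - F'(x_0)\bigr)\bigr\| \le q < 1
  \quad\Longrightarrow\quad
  \bigl\|F'(u)^{-1} F'(x_0)\bigr\| \le \frac{1}{1 - q}.
\]
```

Bounds of this type control the inverse of the derivative at each iterate using only a condition on the first derivative.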

Remark 1.

The parameter can replace the limit point in the condition or in the condition (8).

The next result discusses the location and the uniqueness of a solution for the equation .

Proposition 1.

Suppose: There exists a solution of the equation for some parameter .

The condition holds.

For

Set .

Then, the equation is uniquely solved by in the region .

Proof.

Let for some with . The application of the conditions , and (21) gives in turn that

concluding that the linear operator M is invertible and , since

□

Remark 2.

The uniqueness result given in Proposition 1 does not use all the conditions of Theorem 1. However, if all these conditions are used, then set .

4. Numerical Example

Example 1.

Consider the system of nonlinear equations with defined by

Here . The initial approximation is calculated by the formula , where s is a real number. The exact solution is . The iterative process is stopped if the condition holds

Table 1 and Table 2 show values of errors for different s and . Notice that the closer the initial approximation is to the exact solution, the faster the convergence.

Table 1.

The values at each iteration for .

Table 2.

The values at each iteration for .

Example 2.

Consider the boundary value problem

Denote , , where and . Using the approximation for the first and second-order derivatives

the following system of the nonlinear equations

with is obtained.
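The discretization step can be illustrated with a minimal sketch, assuming the standard second-order central differences on a uniform grid with step h. The paper's exact formulas may differ. The test function y(t) = sin(t), the grid size, and the helper names are hypothetical choices made only for this illustration.

```python
import math

def central_first(y, i, h):
    """Approximate y'(t_i) by (y[i+1] - y[i-1]) / (2h)."""
    return (y[i + 1] - y[i - 1]) / (2.0 * h)

def central_second(y, i, h):
    """Approximate y''(t_i) by (y[i-1] - 2*y[i] + y[i+1]) / h^2."""
    return (y[i - 1] - 2.0 * y[i] + y[i + 1]) / (h * h)

# Hypothetical check on y(t) = sin(t) over [0, 1] with n interior nodes.
n = 99
h = 1.0 / (n + 1)
t = [j * h for j in range(n + 2)]
y = [math.sin(tj) for tj in t]

i = 50  # interior node near t = 0.5
err_first = abs(central_first(y, i, h) - math.cos(t[i]))   # exact y' = cos(t)
err_second = abs(central_second(y, i, h) + math.sin(t[i]))  # exact y'' = -sin(t)
```

Replacing the derivatives in a boundary value problem by such quotients at every interior node produces one nonlinear equation per node, which is how a system of the form F(x) = 0 arises.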

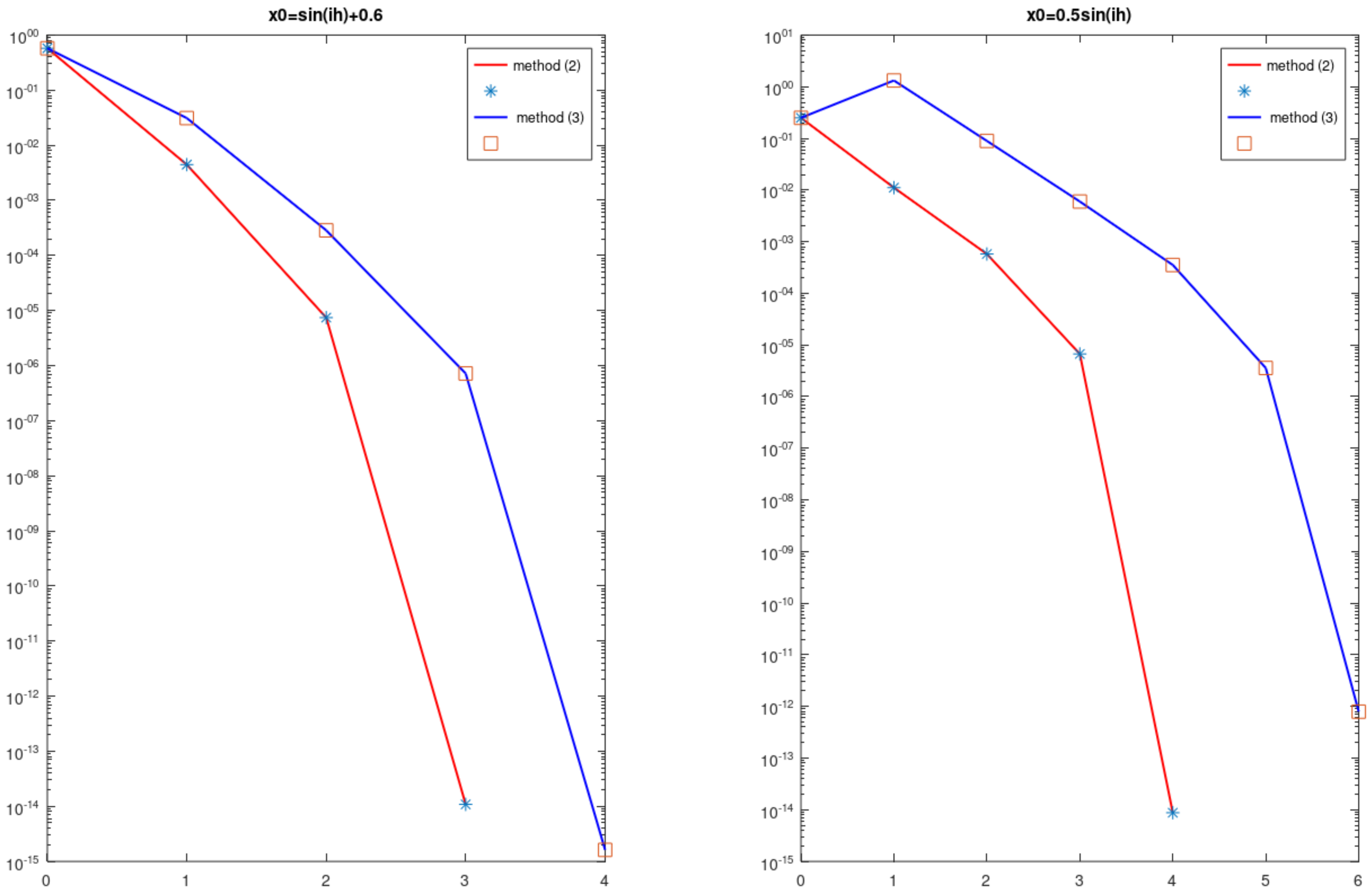

Figure 1 shows at each iteration. The results are obtained for and . The starting approximations were given by formulas (for the graphs on the left) and (for the graphs on the right). Notice that the method (2) convergences faster than (3) for both problems.

Figure 1.

Example 2: norm of residual at each iteration.

5. Conclusions

The local convergence analysis of methods (2) and (3) was previously given under hypotheses on the seventh derivative in the finite-dimensional Euclidean space. That analysis did not provide computable error bounds or uniqueness results for the solution. The other methods listed in the Introduction have the same limitations. We wrote this paper to address these problems and to extend the applicability of these methods. We demonstrated the approach on methods (2) and (3), but it applies equally to the other aforementioned methods. In particular, we considered the semi-local convergence analysis of these methods, which is more interesting and challenging than the local convergence analysis. Computable error estimates as well as uniqueness results for the solution were given in the more general setting of Banach spaces. Moreover, the convergence analysis is based only on the derivative appearing in the methods and on continuity-type conditions on it. The new approach will be applied to other iterative methods in the future.

Author Contributions

Conceptualization, I.K.A., S.S., S.R. and H.Y.; methodology, I.K.A., S.S., S.R. and H.Y.; software, I.K.A., S.S., S.R. and H.Y.; validation, I.K.A., S.S., S.R. and H.Y.; formal analysis, I.K.A., S.S., S.R., and H.Y.; investigation, I.K.A., S.S., S.R., and H.Y.; resources, I.K.A., S.S., S.R. and H.Y.; data curation, I.K.A., S.S., S.R. and H.Y.; writing—original draft preparation, I.K.A., S.S., S.R. and H.Y.; writing—review and editing, I.K.A., S.S., S.R. and H.Y.; visualization, I.K.A., S.S., S.R., and H.Y.; supervision, I.K.A., S.S., S.R. and H.Y.; project administration, I.K.A., S.S., S.R. and H.Y.; funding acquisition, I.K.A., S.S., S.R. and H.Y. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

Not applicable.

Conflicts of Interest

The authors declare no conflict of interest.

References

1. Argyros, I.K. The Theory and Applications of Iteration Methods, 2nd ed.; Engineering Series; CRC Press: Boca Raton, FL, USA, 2022.

2. Shakhno, S.M. Convergence of the two-step combined method and uniqueness of the solution of nonlinear operator equations. J. Comput. Appl. Math. 2014, 261, 378–386.

3. Shakhno, S.M. On an iterative algorithm with superquadratic convergence for solving nonlinear operator equations. J. Comput. Appl. Math. 2009, 231, 222–235.

4. Argyros, I.K.; Shakhno, S.; Yarmola, H. Two-step solver for nonlinear equation. Symmetry 2019, 11, 128.

5. Darvishi, M.T.; Barati, A. A fourth-order method from quadrature formulae to solve systems of nonlinear equations. Appl. Math. Comput. 2007, 188, 257–261.

6. Hueso, J.L.; Martínez, E.; Teruel, C. Convergence, efficiency and dynamics of new fourth and sixth order families of iterative methods for nonlinear systems. J. Comput. Appl. Math. 2015, 275, 412–420.

7. Jarratt, P. Some fourth order multipoint iterative methods for solving equations. Math. Comp. 1966, 20, 434–437.

8. Kou, J.; Li, Y. An improvement of the Jarratt method. Appl. Math. Comput. 2007, 189, 1816–1821.

9. Magrenán, Á.A. Different anomalies in a Jarratt family of iterative root-finding methods. Appl. Math. Comput. 2014, 233, 29–38.

10. Chun, C.; Neta, B. Developing high order methods for the solution of systems of nonlinear equations. Appl. Math. Comput. 2019, 342, 178–190.

11. Cordero, A.; Torregrosa, J.R. Variants of Newton's method using fifth-order quadrature formulas. Appl. Math. Comput. 2007, 190, 686–698.

12. Sharma, J.R.; Guha, R.K.; Sharma, R. An efficient fourth order weighted-Newton method for systems of nonlinear equations. Numer. Algor. 2013, 62, 307–323.

13. Sharma, J.R.; Arora, H. Efficient Jarratt-like methods for solving systems of nonlinear equations. Calcolo 2014, 51, 193–210.

14. Xiao, X.; Yin, H. A simple and efficient method with high order convergence for solving systems of nonlinear equations. Comput. Math. Appl. 2015, 69, 1220–1231.

15. Zhang, J.; Yang, G. Low-complexity tracking control of strict-feedback systems with unknown control directions. IEEE Trans. Autom. Control 2019, 64, 5175–5182.

16. Zhang, X.; Dai, L. Image enhancement based on rough set and fractional order differentiator. Fractal Fract. 2020, 6, 214.

17. Ding, W.; Wang, Q.; Zhang, J. Analysis and prediction of COVID-19 epidemic in South Africa. ISA Trans. 2022, 124, 182–190.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).