Abstract

Particle swarm optimization (PSO) algorithms are generally improved by adaptively adjusting the inertia weight or by combining PSO with other evolutionary algorithms. However, in most modified PSO algorithms, the random values are always generated by a uniform distribution in the range [0, 1]. In this study, random values generated by uniform distributions in the ranges [0, 1] and [−1, 1], and by a Gaussian distribution with mean 0 and variance 1 ($U[0, 1]$, $U[-1, 1]$, and $N(0, 1)$), are used in the standard PSO and linear decreasing inertia weight (LDIW) PSO algorithms. For comparison, the deterministic PSO algorithm, in which the random values are set to 0.5, is also investigated. Several benchmark functions and the pressure vessel design problem are selected to test these algorithms with different types of random values in three space dimensions (10, 30, and 100). The experimental results show that the standard PSO and LDIW-PSO algorithms with random values generated by $U[-1, 1]$ or $N(0, 1)$ are more likely to avoid falling into local optima and to obtain the global optima quickly. This is because large-scale random values expand the range of particle velocity, making the particles more likely to escape from local optima and reach the global optima. Although random values generated by $U[-1, 1]$ or $N(0, 1)$ improve the global searching ability, the local searching ability for a low-dimensional practical optimization problem may decrease owing to the finite number of particles.

1. Introduction

Inspired by the intelligent collective behaviors of animals such as fish schooling and bird flocking, the particle swarm optimization (PSO) algorithm was first introduced by Kennedy and Eberhart [1]. It is a stochastic, population-based heuristic global optimization technique with the advantages of simple implementation and rapid convergence [2,3,4]. Therefore, the PSO algorithm has been widely used in function optimization [5], neural network training [6,7,8,9], parameter optimization of fuzzy systems [10,11,12], and control systems [13,14,15,16,17].

However, the PSO algorithm is easily trapped in local optima when it is used to solve complex problems [18,19,20,21,22,23,24,25,26,27,28,29,30,31]. This disadvantage seriously limits its application. To deal with this issue, many modifications have been proposed to improve the performance of the PSO algorithm. Generally, these include changing the parameter values [19,20,21], tuning the inertia weight or population size [22,23,24,25], and combining PSO with other evolutionary algorithms [26,27,28,29,30,31]. In recent years, PSO algorithms for dynamic optimization problems have also been developed. Multi-swarm PSO algorithms, such as multi-swarm charged/quantum PSO [32], species-based PSO [33], clustering-based PSO [34], child and parent swarms PSO [35], multi-strategy ensemble PSO [36], chaos mutation-based PSO [37], distributed adaptive PSO [38], and stochastic diffusion search-aided PSO [39], have been developed to improve performance. Furthermore, some dynamic neighborhood topology-based PSO algorithms have been developed to deal with dynamic optimization problems [40,41]. These modifications have improved the global optimization ability of the PSO algorithm to some extent. However, they cannot effectively prevent stagnation and premature convergence. This is because the velocity of a particle becomes very small near a local optimum, which leaves the particle unable to escape from it. Therefore, an effective way to make particles jump out of local optima is needed.

In the PSO algorithm, the velocity of each particle is updated according to its previous velocity and the distances of its current position from its own best position and from the group's best position [20]. The coefficients of the previous velocity and of the two distances are the inertia weight and the random values (scaled by the acceleration constants), respectively. In previous research, a variety of inertia weight strategies were proposed to improve the performance of the PSO algorithm. However, in most modified PSO algorithms the random values are still generated by a uniform distribution in the range [0, 1]. Because the random values act as the weights of the two distances in the velocity update, a narrow range of random values means that these two distances have little influence on the new particle velocity, so the velocity cannot be effectively increased or redirected to escape from local optima. Therefore, to improve the global optimization ability of the PSO algorithm, it is necessary to expand the range of random values.

In this paper, random values generated by different probability distributions are used to investigate their effects on PSO algorithms. In addition, the deterministic PSO algorithm, in which the random values are set to 0.5, is investigated for comparison. The performances of PSO algorithms with different types of random values are tested and compared on benchmark functions in three space dimensions. The rest of the paper is organized as follows. Section 2 presents the standard PSO algorithm and its modification strategies. The different types of random values are described in Section 3. Section 4 presents the performances of PSO algorithms with different types of random values and analyzes the effects of random values on these algorithms. Finally, Section 5 concludes the paper.

2. Standard and Modified Particle Swarm Optimization Algorithms

2.1. Standard Particle Swarm Optimization Algorithm

In standard PSO algorithm, each particle represents a potential solution to the task within the search space. In the D-dimensional space, the position vector and velocity vector of the ith particle can be expressed as and , respectively. After the random initialization of particles, the velocity and position of the ith particle are updated as follow,

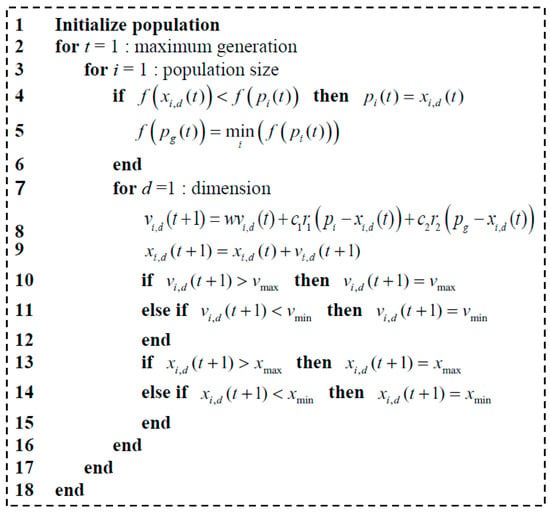

where, is the inertia weight and can be used to control the influence of previous velocity on the new one; the parameters and are two constants which determine the weights of and ; represents the best previous position of the ith individual and denotes the best previous position of all particles in current generation; and represent two separately generated random values which uniformly distribute in the range of [0, 1]. The pseudocode of standard particle swarm optimization is shown in Figure 1.

Figure 1.

Pseudocode of standard particle swarm optimization.
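The update rule can be sketched in Python as follows. This is a minimal illustration rather than the authors' implementation (their pseudocode is in Figure 1): the function names `standard_pso` and `sphere`, zero initial velocities, clamping positions to the search bounds, and updating the global best as soon as a particle improves are all assumptions of this sketch.

```python
import random


def standard_pso(f, dim, lower, upper, n_particles=30, n_iter=200,
                 w=0.7, c1=2.0, c2=2.0, seed=None):
    """Minimise f over [lower, upper]^dim with the standard PSO update.

    r1 and r2 are drawn independently from U[0, 1] for every particle and
    every dimension. Returns (best_position, best_value).
    """
    rng = random.Random(seed)
    # Random initial positions; zero initial velocities (an assumption).
    x = [[rng.uniform(lower, upper) for _ in range(dim)] for _ in range(n_particles)]
    v = [[0.0] * dim for _ in range(n_particles)]
    pbest = [xi[:] for xi in x]
    pbest_f = [f(xi) for xi in x]
    g = min(range(n_particles), key=lambda i: pbest_f[i])
    gbest, gbest_f = pbest[g][:], pbest_f[g]

    for _ in range(n_iter):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                v[i][d] = (w * v[i][d]
                           + c1 * r1 * (pbest[i][d] - x[i][d])
                           + c2 * r2 * (gbest[d] - x[i][d]))
                # Keep the particle inside the search range (an assumption).
                x[i][d] = min(max(x[i][d] + v[i][d], lower), upper)
            fx = f(x[i])
            if fx < pbest_f[i]:      # update the personal best
                pbest[i], pbest_f[i] = x[i][:], fx
                if fx < gbest_f:     # update the global best immediately
                    gbest, gbest_f = x[i][:], fx
    return gbest, gbest_f


def sphere(x):
    return sum(xi * xi for xi in x)


if __name__ == "__main__":
    best_x, best_f = standard_pso(sphere, dim=5, lower=-5.12, upper=5.12,
                                  c1=1.5, c2=1.5, seed=1)
    print(best_f)
```

The demo call passes $c_1 = c_2 = 1.5$ only so that a small 5-dimensional run settles quickly; this value is not from the paper, and the function defaults ($w = 0.7$, $c_1 = c_2 = 2$) follow the settings reported in Section 4.1.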

2.2. Modifications for Particle Swarm Optimization Algorithm

In the original studies of the PSO algorithm, the range of the inertia weight ($w$) attracted researchers' attention, and it was suggested that the PSO algorithm with an inertia weight in the range [0.9, 1.2] takes the least average number of iterations to find the global optimum [42]. In later research, inertia weight adaptation mechanisms were established to improve the global optimization ability of the PSO algorithm. Generally, the various inertia weighting strategies can be classified into three categories: (1) constant or random inertia weight strategies; (2) time-varying inertia weight strategies; (3) adaptive inertia weight strategies.

2.2.1. Constant or Random Inertia Weight Strategies

The value of the inertia weight is either constant during the search or determined randomly [20,43]. The impact of the inertia weight on the performance of the PSO algorithm was analyzed by Shi and Eberhart [20], who then proposed a random inertia weight strategy for tracking the optima in a dynamic environment, which can be expressed as

$$w = 0.5 + \frac{\mathrm{rand}()}{2}$$

where $\mathrm{rand}()$ is a random value in [0, 1]. Thus, $w$ is a uniform random variable in the range [0.5, 1].
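A minimal Python sketch of the random inertia weight $w = 0.5 + \mathrm{rand}()/2$ is shown below. It assumes one fresh draw per call; how often the weight is redrawn (per iteration or per particle) is not specified here, and the name `random_inertia_weight` is hypothetical.

```python
import random


def random_inertia_weight(rng=random):
    """Random inertia weight w = 0.5 + rand()/2, i.e. w ~ U[0.5, 1].

    rng is any object with a random() method returning values in [0, 1),
    such as the random module itself or a random.Random instance.
    """
    return 0.5 + rng.random() / 2.0


if __name__ == "__main__":
    rng = random.Random(0)
    print([round(random_inertia_weight(rng), 3) for _ in range(5)])
```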

2.2.2. Time Varying Inertia Weight Strategies

The inertia weight is defined as a function of time or iteration number [44,45,46]. In these strategies, the inertia weight can be updated in many ways. For example, the linearly decreasing inertia weight can be expressed as [35,36]

$$w(t) = w_{\max} - \frac{(w_{\max} - w_{\min})\,t}{t_{\max}}$$

where $t$ is the current iteration of the algorithm and $t_{\max}$ is the maximum number of iterations; $w_{\max}$ and $w_{\min}$ are the upper and lower bounds of the inertia weight, set to 0.9 and 0.4, respectively.
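The linear schedule $w(t) = w_{\max} - (w_{\max} - w_{\min})\,t / t_{\max}$ can be sketched as a small helper. The name `ldiw` is hypothetical; the default bounds mirror the values 0.9 and 0.4 given in the text.

```python
def ldiw(t, t_max, w_max=0.9, w_min=0.4):
    """Linearly decreasing inertia weight: w_max at t = 0, w_min at t = t_max."""
    return w_max - (w_max - w_min) * t / t_max


if __name__ == "__main__":
    # Inertia weight at a few points of a 100-iteration run.
    for t in (0, 25, 50, 75, 100):
        print(t, ldiw(t, 100))
```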

Based on the linearly decreasing inertia weight strategy, a nonlinear decreasing strategy for the inertia weight was proposed for the PSO algorithm [47], which can be expressed as

$$w(t) = \left(\frac{t_{\max} - t}{t_{\max}}\right)^{n} (w_{\max} - w_{\min}) + w_{\min}$$

where $n$ is the nonlinear modulation index. With $n = 1$, this strategy reduces to the linearly decreasing inertia weight strategy.
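The nonlinear schedule $w(t) = \left((t_{\max} - t)/t_{\max}\right)^{n}(w_{\max} - w_{\min}) + w_{\min}$ can be sketched as below; setting `n=1` reproduces the linear schedule term by term. The function name is hypothetical, and the default bounds are assumed to be the same 0.9 and 0.4 as for the linear case.

```python
def nonlinear_inertia_weight(t, t_max, n, w_max=0.9, w_min=0.4):
    """Nonlinear decreasing inertia weight with modulation index n.

    For n > 1 the weight drops below the linear schedule for 0 < t < t_max,
    for 0 < n < 1 it stays above it; both end points are w_max and w_min.
    """
    return ((t_max - t) / t_max) ** n * (w_max - w_min) + w_min


if __name__ == "__main__":
    for n in (0.5, 1, 2):
        print(n, [round(nonlinear_inertia_weight(t, 100, n), 3) for t in (0, 50, 100)])
```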

In addition, several similar methods that use linear or nonlinear decreasing inertia weights [48,49,50,51] have been proposed to improve the performance of the PSO algorithm.

2.2.3. Adaptive Inertia Weight Strategies

The inertia weight is adjusted by using one or more feedback parameters to balance the global and local searching abilities of the PSO algorithm [52,53,54,55]. These feedback parameters include the global best fitness, the local best fitness, the particle rank, and the distance to the global best position, etc.

In each iteration, the inertia weight determined by the ratio of the global best fitness to the average of the particles' local best fitness can be expressed as [17]

$$w = 1.1 - \frac{f(P_g)}{\frac{1}{N}\sum_{i=1}^{N} f(P_i)}$$

where $P_i$ and $P_g$ represent the best previous positions of the i-th individual and of all particles, respectively; $f(\cdot)$ is the fitness function; and N is the number of particles.
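As an illustration, the sketch below assumes a minimisation problem with strictly positive fitness values and the form $w = 1.1 - f(P_g) / \left(\frac{1}{N}\sum_{i=1}^{N} f(P_i)\right)$; the constant 1.1 follows common statements of this global-local best strategy and should be treated as an assumption. The function name is hypothetical.

```python
def global_local_best_weight(pbest_fitness):
    """w = 1.1 - f(P_g) / mean_i f(P_i) for a minimisation problem.

    pbest_fitness holds f(P_i) for all N particles; f(P_g) is their minimum.
    With strictly positive values the ratio lies in (0, 1], so w is in [0.1, 1.1).
    A zero mean (all fitness values 0) would raise ZeroDivisionError.
    """
    gbest_fitness = min(pbest_fitness)
    mean_pbest = sum(pbest_fitness) / len(pbest_fitness)
    return 1.1 - gbest_fitness / mean_pbest


if __name__ == "__main__":
    print(global_local_best_weight([1.0, 2.0, 3.0]))
```

When the personal bests are spread out (gbest much better than the average), the weight is large and favours exploration; as the swarm's personal bests converge, the ratio approaches 1 and the weight falls towards 0.1.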

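The inertia weight updated according to the particle rank can be expressed as [53]

$$w_i = w_{\min} + (w_{\max} - w_{\min})\,\frac{\mathrm{Rank}_i}{N}$$

where $w_i$ is the inertia weight of the i-th particle in the current iteration; $\mathrm{Rank}_i$ is the position of the i-th particle when the particles are ordered by their best fitness; and N is the number of particles.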
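A sketch of the rank-based rule $w_i = w_{\min} + (w_{\max} - w_{\min})\,\mathrm{Rank}_i / N$ follows. It assumes minimisation with rank 1 assigned to the best personal-best fitness, so the best particle receives the smallest weight; the default bounds 0.9 and 0.4 and the function name are assumptions.

```python
def rank_based_weights(pbest_fitness, w_max=0.9, w_min=0.4):
    """Per-particle inertia weights from the rank of each personal best.

    Rank 1 is the smallest (best) fitness, rank N the largest, so better
    particles get weights closer to w_min and worse ones closer to w_max.
    """
    n = len(pbest_fitness)
    order = sorted(range(n), key=lambda i: pbest_fitness[i])
    weights = [0.0] * n
    for rank, i in enumerate(order, start=1):
        weights[i] = w_min + (w_max - w_min) * rank / n
    return weights


if __name__ == "__main__":
    print(rank_based_weights([3.0, 1.0, 2.0]))
```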

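The inertia weight adjusted by the distance to the global best position can be expressed as [54]

$$w = w_0\left(1 - \frac{\mathrm{dist}_i}{\mathrm{max\_dist}}\right)$$

where $w_0$ is a random value in [0.5, 1]; $\mathrm{dist}_i$ is the current Euclidean distance of the i-th particle from the global best, which can be expressed as

$$\mathrm{dist}_i = \sqrt{\sum_{d=1}^{D}\left(p_{gd} - x_{id}\right)^2}$$

and $\mathrm{max\_dist}$ is the maximum distance of a particle from the global best in the previous generation.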
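The distance-based rule $w = w_0\left(1 - \mathrm{dist}_i / \mathrm{max\_dist}\right)$, with $w_0$ drawn from [0.5, 1] and $\mathrm{dist}_i$ the Euclidean distance to the global best, might be sketched as follows. Drawing a single $w_0$ shared by all particles in a generation is an assumption, as is the function name.

```python
import math
import random


def distance_based_weights(positions, gbest, prev_max_dist, rng=random):
    """w_i = w0 * (1 - dist_i / max_dist), with w0 ~ U[0.5, 1].

    positions: current particle positions; gbest: global best position;
    prev_max_dist: largest particle-to-gbest distance in the previous generation.
    Returns (weights, max_dist) so that max_dist can be reused next generation.
    """
    w0 = rng.uniform(0.5, 1.0)  # one draw shared by all particles (an assumption)
    dists = [math.sqrt(sum((g - xd) ** 2 for g, xd in zip(gbest, x)))
             for x in positions]
    # A particle farther away than prev_max_dist would get a negative weight.
    weights = [w0 * (1.0 - d / prev_max_dist) for d in dists]
    return weights, max(dists)


if __name__ == "__main__":
    print(distance_based_weights([[0.0, 0.0], [3.0, 4.0]], [0.0, 0.0], 5.0))
```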

In recent research, PSO algorithms with the inertia weight adjusted by the average absolute value of velocity or by the state of the swarm have been proposed to keep the balance between local and global search [56,57,58]. In addition, adaptive population size strategies are an effective way to improve the accuracy and efficiency of the PSO algorithm [59,60,61,62].

In summary, improvements of the PSO algorithm are generally implemented by adaptively adjusting the inertia weight or the population size. To some extent, these methods avoid falling into local optima by adaptively updating the particle velocity. However, the effect of the random values on the particle velocity has rarely been discussed. Therefore, PSO algorithms with different types of random values are studied in detail in Sections 3 and 4.

3. Particle Swarm Optimization Algorithm with Different Types of Random Values

3.1. Random Values with Uniform Distribution in the Range of [0, 1]

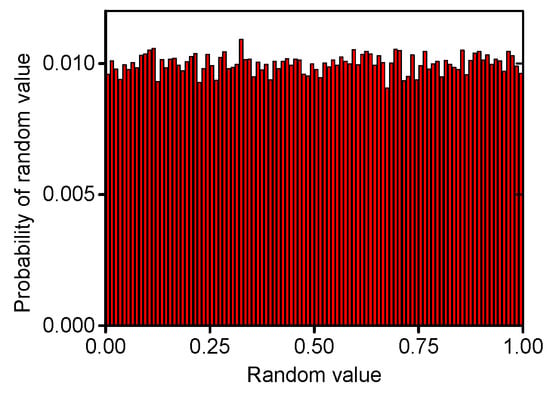

In the traditional PSO algorithm, the random values $r_1$ and $r_2$ are generated by a uniform distribution in the range [0, 1], denoted $U[0, 1]$. As shown in Figure 2, every value in this range is equally likely.

Figure 2.

Random values with uniform distribution in the range of [0, 1].

3.2. Random Values with Uniform Distribution in the Range of [−1, 1]

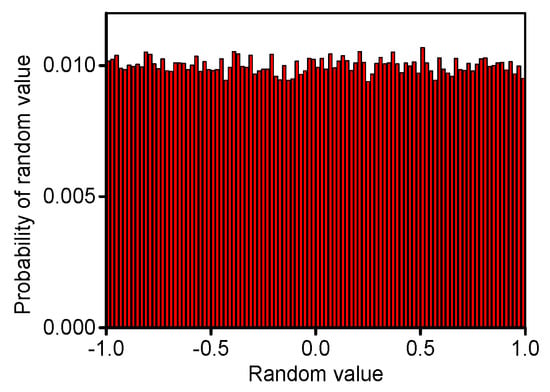

To expand the range of random values, $r_1$ and $r_2$ are generated by a uniform distribution in the range [−1, 1], denoted $U[-1, 1]$, for the PSO algorithm. As shown in Figure 3, every value in this range is equally likely, so negative values now occur with probability 0.5.

Figure 3.

Random values with uniform distribution in the range of [−1, 1].

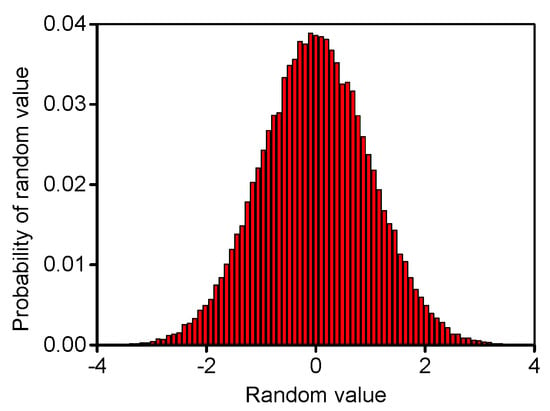

3.3. Random Values with Gauss Distribution

To expand the range of random values and change their probability distribution, $r_1$ and $r_2$ are generated by a Gaussian distribution with mean 0 and variance 1, denoted $N(0, 1)$, for the PSO algorithm. As shown in Figure 4, the probability density is not uniform but symmetric about $r = 0$, and it increases as the absolute value of the random number decreases. Unlike the two uniform distributions, $N(0, 1)$ is unbounded, although about 68% of its values fall within [−1, 1].

Figure 4.

Random values with Gauss distribution.
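The four ways of generating $r_1$ and $r_2$ compared in this paper ($U[0, 1]$, $U[-1, 1]$, $N(0, 1)$, and the deterministic r = 0.5) can be expressed as interchangeable samplers. The sketch below uses Python's standard `random` module; the function names `make_sampler` and `summarize` and the string labels are hypothetical. It reports empirical statistics that reflect Figures 2 to 4: only $U[-1, 1]$ and $N(0, 1)$ produce negative values, and only $N(0, 1)$ can exceed 1 in magnitude.

```python
import random
import statistics


def make_sampler(kind, rng):
    """Return a zero-argument function producing one random value r."""
    if kind == "U[0,1]":
        return rng.random                        # uniform on [0, 1)
    if kind == "U[-1,1]":
        return lambda: rng.uniform(-1.0, 1.0)    # uniform on [-1, 1]
    if kind == "N(0,1)":
        return lambda: rng.gauss(0.0, 1.0)       # mean 0, variance 1
    if kind == "r=0.5":
        return lambda: 0.5                       # deterministic PSO
    raise ValueError(f"unknown sampler: {kind}")


def summarize(kind, n=10_000, seed=0):
    """Empirical min, max, mean, standard deviation, and share of negative values."""
    draw = make_sampler(kind, random.Random(seed))
    values = [draw() for _ in range(n)]
    return {
        "min": min(values),
        "max": max(values),
        "mean": statistics.fmean(values),
        "stdev": statistics.pstdev(values),
        "negative": sum(v < 0 for v in values) / n,
    }


if __name__ == "__main__":
    for kind in ("U[0,1]", "U[-1,1]", "N(0,1)", "r=0.5"):
        print(kind, summarize(kind))
```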

4. Experiments and Analysis

4.1. Experimental Setup

To investigate the performances of PSO algorithms with different types of random values, several commonly used benchmark functions are adopted, as listed in Table 1. The dimensions of the search space are 10, 30, and 100 in this study. The standard PSO algorithm is selected to investigate the effects of random values. In addition, because the linear decreasing inertia weight (LDIW) PSO algorithm has a better global search ability in the starting phase, which helps the algorithm converge to a promising area quickly, and a stronger local search ability in the latter phase, which yields high-precision solutions, the LDIW-PSO algorithm is also used to study the effects of random values. Moreover, although the effects of parameter settings on the deterministic PSO algorithm have been studied [63], the deterministic PSO algorithm (r = 0.5) is included here for comparison with the standard PSO and LDIW-PSO algorithms.

Table 1.

Benchmark functions.
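The contents of Table 1 are not reproduced here, so the exact definitions, search ranges, and any shifts or rotations used by the authors are unknown. The sketch below uses the common textbook forms of five of the listed functions, each with a global minimum of 0 (at the origin, or at (1, ..., 1) for Rosenbrock); these forms are assumptions and may differ from Table 1, for example in how "Sphere1" and "Sphere2" are distinguished.

```python
import math


def sphere(x):
    return sum(xi ** 2 for xi in x)


def rastrigin(x):
    return sum(xi ** 2 - 10.0 * math.cos(2.0 * math.pi * xi) + 10.0 for xi in x)


def rosenbrock(x):
    return sum(100.0 * (x[i + 1] - x[i] ** 2) ** 2 + (1.0 - x[i]) ** 2
               for i in range(len(x) - 1))


def griewank(x):
    s = sum(xi ** 2 for xi in x) / 4000.0
    p = math.prod(math.cos(xi / math.sqrt(i + 1)) for i, xi in enumerate(x))
    return s - p + 1.0


def ackley(x):
    d = len(x)
    a = -20.0 * math.exp(-0.2 * math.sqrt(sum(xi ** 2 for xi in x) / d))
    b = -math.exp(sum(math.cos(2.0 * math.pi * xi) for xi in x) / d)
    return a + b + 20.0 + math.e


if __name__ == "__main__":
    zero = [0.0] * 10
    for f in (sphere, rastrigin, griewank, ackley):
        print(f.__name__, f(zero))
    print("rosenbrock", rosenbrock([1.0] * 10))
```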

For a fair comparison, all algorithms use the same parameter settings. In this study, the population size is 100, and the maximum number of function evaluations is 10,000. The acceleration constants $c_1$ and $c_2$ are both 2. For the standard PSO algorithm, the inertia weight is 0.7. For the LDIW-PSO algorithm, $w_{\max}$ and $w_{\min}$ are 0.9 and 0.4, respectively. To eliminate random discrepancies, the results of all experiments are averaged over 30 independent runs.
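The protocol above can be sketched as a small harness that runs PSO under a function-evaluation budget with each type of random value and averages the final best values over independent runs. With 100 particles, a budget of 10,000 evaluations corresponds to roughly 100 iterations (99 after the initial evaluation, if that evaluation is counted towards the budget as this sketch assumes). The names `SAMPLERS`, `run_pso`, and `compare`, zero initial velocities, position clamping, no velocity clamping, and a synchronous global-best update are all assumptions, not details taken from the paper.

```python
import random
import statistics

# The four ways of generating r1 and r2 compared in the paper.
SAMPLERS = {
    "U[0,1]": lambda rng: rng.random(),
    "U[-1,1]": lambda rng: rng.uniform(-1.0, 1.0),
    "N(0,1)": lambda rng: rng.gauss(0.0, 1.0),
    "r=0.5": lambda rng: 0.5,
}


def constant_inertia(t, t_max):
    return 0.7                        # standard PSO setting from the text


def ldiw(t, t_max, w_max=0.9, w_min=0.4):
    return w_max - (w_max - w_min) * t / t_max


def run_pso(f, dim, bounds, sampler, rng, pop_size=100, max_evals=10_000,
            c1=2.0, c2=2.0, inertia=constant_inertia):
    """One PSO run under a function-evaluation budget; returns the best value found."""
    lo, hi = bounds
    x = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(pop_size)]
    v = [[0.0] * dim for _ in range(pop_size)]
    pbest = [p[:] for p in x]
    pbest_f = [f(p) for p in x]
    evals = pop_size                  # the initial evaluation counts towards the budget
    gbest_f = min(pbest_f)
    gbest = pbest[pbest_f.index(gbest_f)][:]
    t_max = max_evals // pop_size - 1  # iterations available after initialisation
    t = 0
    while evals + pop_size <= max_evals:
        w = inertia(t, t_max)
        for i in range(pop_size):
            for d in range(dim):
                r1, r2 = sampler(rng), sampler(rng)
                v[i][d] = (w * v[i][d] + c1 * r1 * (pbest[i][d] - x[i][d])
                           + c2 * r2 * (gbest[d] - x[i][d]))
                x[i][d] = min(max(x[i][d] + v[i][d], lo), hi)
            fx = f(x[i])
            if fx < pbest_f[i]:
                pbest[i], pbest_f[i] = x[i][:], fx
        evals += pop_size
        best_now = min(pbest_f)       # synchronous global-best update
        if best_now < gbest_f:
            gbest_f = best_now
            gbest = pbest[pbest_f.index(best_now)][:]
        t += 1
    return gbest_f


def compare(f, dim, bounds, runs=30, seed=0, **kwargs):
    """Mean final best value over independent runs, for each sampler."""
    results = {}
    for name, sampler in SAMPLERS.items():
        rng = random.Random(seed)     # same seed per sampler for reproducibility
        finals = [run_pso(f, dim, bounds, sampler, rng, **kwargs) for _ in range(runs)]
        results[name] = statistics.mean(finals)
    return results
```

Given some objective such as a sphere function, a call like `compare(sphere, 10, (-100.0, 100.0), inertia=ldiw)` would approximate the LDIW-PSO setting in 10 dimensions; in pure Python the full 30-run budget is slow, so smaller `runs`, `pop_size`, and `max_evals` are convenient for quick checks.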

4.2. Experimental Results and Comparisons

For the benchmark functions, the comparisons of the standard PSO algorithm with different types of random values are shown in Table 2. Bold numbers indicate the best solutions for each test function in a given space dimension. The deterministic PSO algorithm (r = 0.5) performs worst on all benchmark functions, because deterministic random values reduce the diversity of the particles. With random values generated by $U[0, 1]$, the standard PSO algorithm obtains the optimal solutions only for Sphere1, Sphere2, Alpine, and Moved axis parallel hyper-ellipsoid when the space dimension is 10, and it fails on the other test functions and in higher space dimensions. However, with random values generated by $U[-1, 1]$ or $N(0, 1)$, the standard PSO algorithm obtains the optimal solutions of all test functions in every space dimension except Rosenbrock. In the low space dimension (10), the best solution of Rosenbrock is obtained by the standard PSO algorithm with random values distributed in . However, in the high space dimensions (30 or 100), its best solution is obtained by the standard PSO algorithm with random values generated by or . In addition, the best solutions of Levy and Montalvo 2 (30 dimensions) and Sinusoidal (100 dimensions) are also obtained by the standard PSO algorithm with random values distributed in . Clearly, the random values have an important effect on the performance of the standard PSO algorithm, and its performance is greatly improved when the range of random values is expanded. This implies that the standard PSO algorithm with large-scale random values can avoid falling into local optima and obtain the global optima.

Table 2.

Comparisons of standard particle swarm optimization (PSO) algorithm with different types of random values for benchmark functions.

The comparisons of the LDIW-PSO algorithm with different types of random values for the benchmark functions are shown in Table 3, where bold numbers again indicate the best solutions for each test function in a given space dimension. For all benchmark functions, the deterministic LDIW-PSO algorithm (r = 0.5) again performs worst. With $U[-1, 1]$ and $N(0, 1)$, the performance of the LDIW-PSO algorithm is similar to that of the standard PSO algorithm. However, with random values distributed in $U[0, 1]$, the performance of the LDIW-PSO algorithm is improved compared to the standard PSO algorithm, as also reported in several references [5,20,45,46]. It should be noted that the LDIW-PSO algorithm with random values generated by $U[0, 1]$ obtains the optimal solutions of all test functions in the low space dimensions (10 and 30) except Sphere2, Rotated Expanded Scaffer, and Schwefel. In addition, for Levy and Montalvo 2, Sinusoidal, and Alpine in 30 dimensions, the LDIW-PSO algorithm with random values generated by $U[0, 1]$ performs better than with random values generated by $U[-1, 1]$ or $N(0, 1)$. However, in the high space dimension (100), the LDIW-PSO algorithm with random values generated by $U[0, 1]$ cannot obtain the optimal solutions of these test functions, which implies that this configuration is ineffective for high-dimensional problems. Therefore, the performance of the improved (LDIW-PSO) algorithm is also influenced by the random values, especially for high-dimensional problems. Furthermore, a wide range of random values helps the LDIW-PSO algorithm escape from local optima and obtain the global optima.

Table 3.

Comparisons of LDIW-PSO algorithm with different types of random values for benchmark functions.

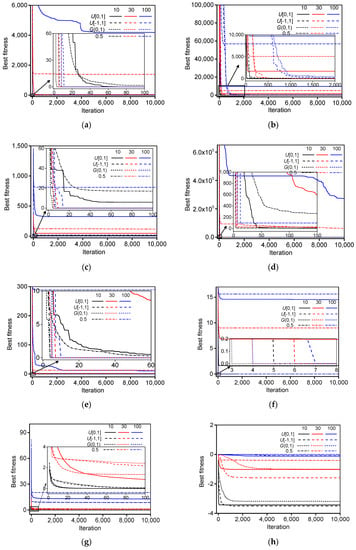

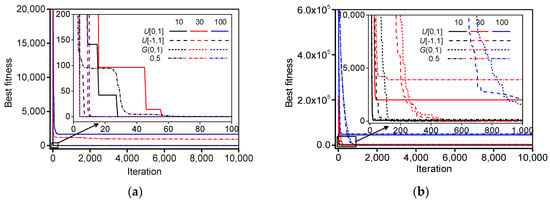

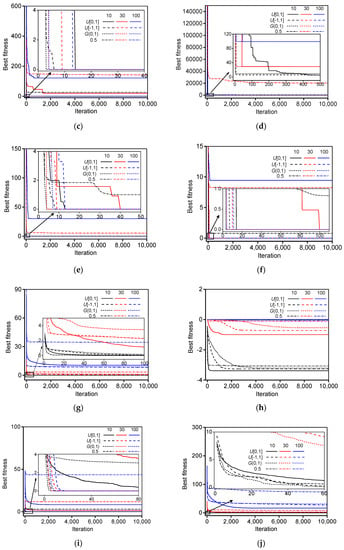

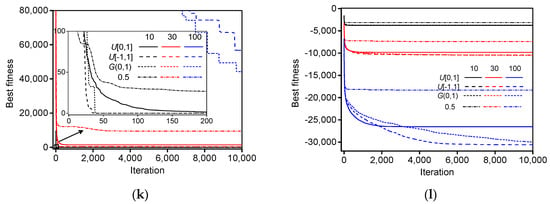

Figure 5 shows the mean best fitness of the standard PSO algorithm with different types of random values for the benchmark functions. For the same type of random values, both the final performance and the convergence speed of the standard PSO algorithm improve as the space dimension decreases. For the same space dimension of each test function, the deterministic PSO algorithm (r = 0.5) performs worst. However, the standard PSO algorithm with random values generated by $U[-1, 1]$ or $N(0, 1)$ performs best for most benchmark functions. In the low dimensions (10 and 30), the performances of standard PSO algorithm with random values generated by and are the best for Levy and Montalvo 2 and Sinusoidal, respectively. The performance of the standard PSO algorithm with random values distributed in is slightly worse than that of the standard PSO algorithm with random values distributed in . In addition, the global optima can be obtained within 50 iterations by the standard PSO algorithm with random values generated by $U[-1, 1]$ or $N(0, 1)$. This indicates that the standard PSO algorithm with large-scale random values obtains the global optima more quickly.

Figure 5.

The mean best fitness of standard PSO algorithm with different types of random values for benchmark functions: (a) Sphere1; (b) Sphere2; (c) Rastrigin; (d) Rosenbrock; (e) Griewank; (f) Ackley; (g) Levy and Montalvo 2; (h) Sinusoidal; (i) Rotated Expanded Scaffer; (j) Alpine; (k) Moved axis parallel hyper-ellipsoid; (l) Schwefel. (The solid, dash, short dash and short dash dot lines represent the random values generated by uniform distribution in the ranges of [0, 1] and [−1, 1], Gauss distribution, and 0.5, respectively; the black, red and blue lines represent the space dimensions 10, 30, and 100, respectively).

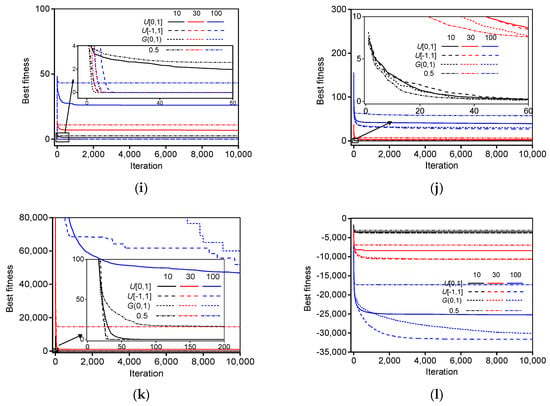

The mean best fitness of the LDIW-PSO algorithm with different types of random values for the benchmark functions is shown in Figure 6. Again, for the same random values and test function, both the performance and the convergence speed of the LDIW-PSO algorithm improve as the space dimension decreases. When the random values are generated by $U[0, 1]$, the LDIW-PSO algorithm performs better than the standard PSO algorithm. In the space dimensions 10 and 30, the global optima of some test functions can be obtained by the LDIW-PSO algorithm with random values generated by $U[0, 1]$, but its convergence is slower than that of the LDIW-PSO algorithm with random values generated by $U[-1, 1]$ or $N(0, 1)$. Moreover, in the low dimensions (10 and 30), the LDIW-PSO algorithm with random values generated by $U[0, 1]$ performs best for Levy and Montalvo 2, Sinusoidal, and Alpine. When the space dimension is 100, the global optima cannot be obtained by the LDIW-PSO algorithm with random values distributed in $U[0, 1]$, but they can be obtained with random values distributed in $U[-1, 1]$ or $N(0, 1)$. This implies that the LDIW-PSO algorithm with large-scale random values is more likely to obtain the global optima in fewer iterations.

Figure 6.

The mean best fitness of LDIW-PSO algorithm with different types of random values for benchmark functions: (a) Sphere1; (b) Sphere2; (c) Rastrigin; (d) Rosenbrock; (e) Griewank; (f) Ackley; (g) Levy and Montalvo 2; (h) Sinusoidal; (i) Rotated Expanded Scaffer; (j) Alpine; (k) Moved axis parallel hyper-ellipsoid; (l) Schwefel. (The solid, dash, short dash and short dash dot lines represent the random values generated by uniform distribution in the ranges of [0, 1] and [−1, 1], Gauss distribution, and 0.5, respectively; the black, red and blue lines represent the space dimensions 10, 30, and 100, respectively).

4.3. Application and Analysis

4.3.1. Application in Engineering Problem

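The pressure vessel design problem, initially introduced by Sandgren [64], is a real-world engineering problem. It involves four variables: the thickness of the shell (x1), the thickness of the head (x2), the inner radius (x3), and the length of the cylindrical section of the vessel (x4). This highly constrained problem can be expressed as

$$\min f(\mathbf{x}) = 0.6224\,x_1 x_3 x_4 + 1.7781\,x_2 x_3^2 + 3.1661\,x_1^2 x_4 + 19.84\,x_1^2 x_3$$

subject to

$$\begin{aligned} g_1(\mathbf{x}) &= -x_1 + 0.0193\,x_3 \le 0 \\ g_2(\mathbf{x}) &= -x_2 + 0.00954\,x_3 \le 0 \\ g_3(\mathbf{x}) &= -\pi x_3^2 x_4 - \tfrac{4}{3}\pi x_3^3 + 1296000 \le 0 \\ g_4(\mathbf{x}) &= x_4 - 240 \le 0 \end{aligned}$$

where x1 and x2 are integer multiples of 0.0625, and x3 and x4 are continuous variables in the ranges 40 ≤ x3 ≤ 80 and 20 ≤ x4 ≤ 60. In this study, the standard PSO and LDIW-PSO algorithms with different types of random values are used to solve this engineering problem.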
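How a PSO could be pointed at this constrained problem is sketched below, assuming the common formulation with cost $0.6224x_1x_3x_4 + 1.7781x_2x_3^2 + 3.1661x_1^2x_4 + 19.84x_1^2x_3$ and constraints $g_1$ to $g_4$ (with $g_3 = -\pi x_3^2 x_4 - \frac{4}{3}\pi x_3^3 + 1296000$). The constraint-handling method used by the authors is not stated, so the static penalty factor of $10^6$ and the rounding of x1 and x2 to the nearest positive multiple of 0.0625 are assumptions; the function names are hypothetical.

```python
import math

STEP = 0.0625  # x1 and x2 are integer multiples of this thickness step


def cost(x):
    """Pressure vessel objective f(x) for x = (x1, x2, x3, x4)."""
    x1, x2, x3, x4 = x
    return (0.6224 * x1 * x3 * x4 + 1.7781 * x2 * x3 ** 2
            + 3.1661 * x1 ** 2 * x4 + 19.84 * x1 ** 2 * x3)


def constraints(x):
    """Values of g1..g4; the design is feasible when every value is <= 0."""
    x1, x2, x3, x4 = x
    return [
        -x1 + 0.0193 * x3,
        -x2 + 0.00954 * x3,
        -math.pi * x3 ** 2 * x4 - (4.0 / 3.0) * math.pi * x3 ** 3 + 1_296_000.0,
        x4 - 240.0,
    ]


def snap(t):
    """Round a thickness to the nearest positive multiple of STEP."""
    return max(1, round(t / STEP)) * STEP


def penalized_cost(x, penalty=1e6):
    """Static-penalty objective that an unconstrained PSO could minimise directly."""
    xs = (snap(x[0]), snap(x[1]), x[2], x[3])
    violation = sum(max(0.0, g) for g in constraints(xs))
    return cost(xs) + penalty * violation


if __name__ == "__main__":
    x = (1.0, 0.5, 50.0, 50.0)
    print(cost(x), constraints(x), penalized_cost(x))
```

At the example point (1.0, 0.5, 50, 50), $g_3$ is positive, so the design is infeasible and the penalty dominates the objective.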

The parameters of the standard PSO and LDIW-PSO algorithms for the engineering problem are the same as those for the benchmark functions, and the results are again averaged over 30 independent runs to eliminate random discrepancies. The optimization results are shown in Table 4. The results of all algorithms are similar. However, the LDIW-PSO algorithm with random values generated by $U[-1, 1]$ and $N(0, 1)$ performs slightly worse than the other algorithms. This is because the pressure vessel design is a low-dimensional optimization problem: although random values generated by $U[-1, 1]$ or $N(0, 1)$ improve the diversity of the particles, the local searching ability may decrease owing to the finite number of particles.

Table 4.

Comparisons of standard PSO and LDIW-PSO algorithms with different types of random values for pressure vessel design.

4.3.2. Analysis

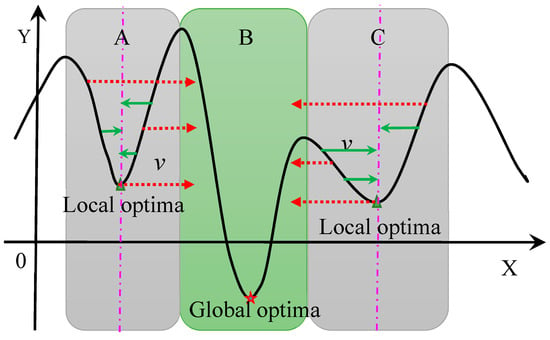

According to the experimental results and comparisons, the performances of both the standard PSO and LDIW-PSO algorithms are greatly improved by expanding the range of random values. This is because large-scale random values help increase the velocity of the particles, so the particles avoid falling into local optima. As shown in Figure 7, in the local optima areas (A or C), if the velocity cannot be increased or its direction cannot be changed, the particle will gradually fall into a local optimum and cannot jump out. However, if the velocity of the particle can be increased or changed with a certain probability, the global optimum will be obtained more easily and quickly.

Figure 7.

Schematic diagram of particles’ velocity.

For the standard PSO and LDIW-PSO algorithms, if the random values are set to 0.5 or generated by $U[0, 1]$, the diversity of the particles is decreased, and the variation of particle velocity is confined to a narrow band. Therefore, the probability of escaping local optima is very small, because the particle velocity gradually tends to 0 when the particle falls into a local optimum. In addition, the random value is always non-negative, so each attraction term keeps the sign of its distance term, which may lead to a monotonous variation of the particle velocity. This also decreases the possibility of escaping local optima. The PSO algorithms with large-scale random values (distributed in $U[-1, 1]$ or $N(0, 1)$) can overcome these problems to some extent. However, for a low-dimensional practical optimization problem, random values generated by $U[-1, 1]$ or $N(0, 1)$ improve the diversity of the particles, but the local searching ability may decrease owing to the finite number of particles. Since keeping the balance between local and global search is very important for the performance of these PSO algorithms, the PSO algorithm with random values distributed in $U[0, 1]$ and the deterministic PSO algorithm (r = 0.5) have better local searching ability for some low-dimensional optimization problems.
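The sign and range argument can be illustrated numerically. For a fixed positive distance $p_{id} - x_{id} = 1$ and an acceleration constant $c = 2$, the sketch below samples the increment $c\,r\,(p_{id} - x_{id})$ that one attraction term adds to the new velocity, for each way of generating $r$. The names `attraction_increments` and `DRAWS` are hypothetical, and the sketch isolates a single term of the velocity update; it does not reproduce the paper's experiments.

```python
import random


def attraction_increments(draw, c=2.0, distance=1.0, n=100_000, seed=0):
    """Sample c * r * distance, the contribution of one attraction term to the new velocity."""
    rng = random.Random(seed)
    terms = [c * draw(rng) * distance for _ in range(n)]
    return {
        "min": min(terms),
        "max": max(terms),
        # Share of increments that push the particle away from the attractor.
        "away": sum(t < 0 for t in terms) / n,
    }


DRAWS = {
    "r=0.5": lambda rng: 0.5,
    "U[0,1]": lambda rng: rng.random(),
    "U[-1,1]": lambda rng: rng.uniform(-1.0, 1.0),
    "N(0,1)": lambda rng: rng.gauss(0.0, 1.0),
}

if __name__ == "__main__":
    for name, draw in DRAWS.items():
        print(name, attraction_increments(draw))
```

With r = 0.5 or $U[0, 1]$, the increment never points away from the attractor and never exceeds $c$ times the distance; with $U[-1, 1]$ about half of the increments point away, and with $N(0, 1)$ the increment is, in addition, unbounded in magnitude.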

5. Conclusions

In this paper, the standard PSO algorithm and one of its modifications (the LDIW-PSO algorithm) are adopted to study and analyze the influence of random values generated by uniform distributions in the ranges [0, 1] and [−1, 1] and by a Gaussian distribution with mean 0 and variance 1 ($U[0, 1]$, $U[-1, 1]$, and $N(0, 1)$). In addition, the deterministic PSO algorithm, in which the random values are set to 0.5, is also investigated. Several benchmark functions and the pressure vessel design problem are used to test and compare the performances of the two PSO algorithms with different types of random values in three space dimensions (10, 30, and 100). The experimental results show that the deterministic PSO algorithms perform worst. Moreover, the two PSO algorithms with random values generated by $U[-1, 1]$ or $N(0, 1)$ perform much better than those with random values generated by $U[0, 1]$ for most benchmark functions. In addition, the algorithms with random values distributed in $U[-1, 1]$ or $N(0, 1)$ converge much faster than those with random values distributed in $U[0, 1]$. It is concluded that PSO algorithms with large-scale random values can effectively avoid falling into local optima and quickly obtain the global optima. However, for a low-dimensional practical optimization problem, random values generated by $U[-1, 1]$ or $N(0, 1)$ improve the global searching ability, but the local searching ability may decrease owing to the finite number of particles.

Acknowledgments

This work was supported by the National Key Research and Development Program of China (Grant No. 2017YFB0701700), Educational Commission of Hunan Province of China (Grant No. 16c1307) and Innovation Foundation for Postgraduate of Hunan Province of China (Grant No. CX2016B045).

Author Contributions

Hou-Ping Dai and Dong-Dong Chen conceived and designed the experiments; Hou-Ping Dai performed the experiments; Hou-Ping Dai and Dong-Dong Chen analyzed the data; Zhou-Shun Zheng contributed reagents/materials/analysis tools; Dong-Dong Chen wrote the paper. All authors have read and approved the final manuscript.

Conflicts of Interest

The authors declare no conflict of interest.

References

- Kennedy, J.; Eberhart, R.C. Particle swarm optimization. In Proceedings of the IEEE International Conference on Neuron Networks, Perth, WA, Australia, 27 November–1 December 1995; pp. 1942–1948. [Google Scholar]

- Wang, Y.; Li, B.; Weise, T.; Wang, J.Y.; Yuan, B.; Tian, Q.J. Self-adaptive learning based particle swarm optimization. Inf. Sci. 2011, 180, 4515–4538. [Google Scholar] [CrossRef]

- Liang, J.J.; Qin, A.K.; Suganthan, P.N.; Baskar, S. Comprehensive learning particleswarm optimizer for global optimization of multimodal functions. IEEE Trans. Evol. Comput. 2006, 10, 281–295. [Google Scholar] [CrossRef]

- Chen, D.B.; Zhao, C.X. Particle swarm optimization with adaptive population size and its application. Appl. Soft Comput. 2009, 9, 39–48. [Google Scholar] [CrossRef]

- Xu, G. An adaptive parameter tuning of particle swarm optimization algorithm. Appl. Math. Comput. 2013, 219, 4560–4569. [Google Scholar] [CrossRef]

- Mirjalili, S.A.; Hashim, S.Z.M.; Sardroudi, H.M. Training feedforward neural networks using hybrid particle swarm optimization and gravitational search algorithm. Appl. Math. Comput. 2012, 218, 11125–11137. [Google Scholar] [CrossRef]

- Ren, C.; An, N.; Wang, J.; Li, L.; Hu, B.; Shang, D. Optimal parameters selection for BP neural network based on particle swarm optimization: A case study of wind speed forecasting. Knowl. Based Syst. 2014, 56, 226–239. [Google Scholar] [CrossRef]

- Zhang, J.R.; Zhang, J.; Lok, T.M.; Lyu, M.R. A hybrid particle swarmoptimization–back-propagation algorithm for feedforward neural network training. Appl. Math. Comput. 2007, 185, 1026–1037. [Google Scholar]

- Das, G.; Pattnaik, P.K.; Padhy, S.K. Artificial Neural Network trained by Particle Swarm Optimization for non-linear channel equalization. Expert Syst. Appl. 2014, 41, 3491–3496. [Google Scholar] [CrossRef]

- Lin, C.J.; Chen, C.H.; Lin, C.T. A hybrid of cooperative particle swarm optimization and cultural algorithm for neural fuzzy networks and its prediction applications. IEEE Trans. Syst. Man Cybern. Part C Appl. Rev. 2009, 39, 55–68. [Google Scholar]

- Juang, C.F.; Hsiao, C.M.; Hsu, C.H. Hierarchical cluster-based multispecies particle-swarm optimization for fuzzy-system optimization. IEEE Trans. Fuzzy Syst. 2010, 18, 14–26. [Google Scholar] [CrossRef]

- Kuo, R.J.; Hong, S.Y.; Huang, Y.C. Integration of particle swarm optimization-based fuzzy neural network and artificial neural network for supplier selection. Appl. Math. Model. 2010, 34, 3976–3990. [Google Scholar] [CrossRef]

- Tang, Y.; Ju, P.; He, H.; Qin, C.; Wu, F. Optimized control of DFIG-based wind generation using sensitivity analysis and particle swarm optimization. IEEE Trans. Smart Grid 2013, 4, 509–520. [Google Scholar] [CrossRef]

- Sui, X.; Tang, Y.; He, H.; Wen, J. Energy-storage-based low-frequency oscillation damping control using particle swarm optimization and heuristic dynamic programming. IEEE Trans. Power Syst. 2014, 29, 2539–2548. [Google Scholar] [CrossRef]

- Jiang, H.; Kwong, C.K.; Chen, Z.; Ysim, Y.C. Chaos particle swarm optimization and T–S fuzzy modeling approaches to constrained predictive control. Expert Syst. Appl. 2012, 39, 194–201. [Google Scholar] [CrossRef]

- Moharam, A.; El-Hosseini, M.A.; Ali, H.A. Design of optimal PID controller using hybrid differential evolution and particle swarm optimization with an aging leader and challengers. Appl. Soft Comput. 2016, 38, 727–737. [Google Scholar] [CrossRef]

- Arumugam, M.S.; Rao, M.V.C. On the improved performances of the particle swarm optimization algorithms with adaptive parameters, cross-over operators and root mean square (RMS) variants for computing optimal control of a class of hybrid systems. Appl. Soft Comput. 2008, 8, 324–336. [Google Scholar] [CrossRef]

- Pehlivanoglu, Y.V. A new particle swarm optimization method enhanced with a periodic mutation strategy and neural networks. IEEE Trans. Evolut. Comput. 2013, 17, 436–452. [Google Scholar] [CrossRef]

- Ratnaweera, A.; Halgamuge, S.; Waston, H. Self-organizing hierarchical particle optimizer with time-varying acceleration coefficients. IEEE Trans. Evol. Comput. 2004, 8, 240–255. [Google Scholar] [CrossRef]

- Shi, Y.H.; Eberhart, R.C. A modified particle swarm optimizer. In Proceedings of the IEEE International Conference on Computational Intelligence, Anchorage, AK, USA, 4–9 May 1998; pp. 69–73.

- Xing, J.; Xiao, D. New Metropolis coefficients of particle swarm optimization. In Proceedings of the IEEE Chinese Control and Decision Conference, Yantai, China, 2–4 July 2008; pp. 3518–3521.

- Taherkhani, M.; Safabakhsh, R. A novel stability-based adaptive inertia weight for particle swarm optimization. Appl. Soft Comput. 2016, 38, 281–295.

- Nickabadi, A.; Ebadzadeh, M.M.; Safabakhsh, R. A novel particle swarm optimization algorithm with adaptive inertia weight. Appl. Soft Comput. 2011, 11, 3658–3670.

- Zhang, L.; Tang, Y.; Hua, C.; Guan, X. A new particle swarm optimization algorithm with adaptive inertia weight based on Bayesian techniques. Appl. Soft Comput. 2015, 28, 138–149.

- Hu, M.; Wu, T.; Weir, J.D. An adaptive particle swarm optimization with multiple adaptive methods. IEEE Trans. Evol. Comput. 2013, 17, 705–720.

- Shi, X.H.; Liang, Y.C.; Lee, H.P.; Lu, C.; Wang, L.M. An improved GA and a novel PSOGA-based hybrid algorithm. Inf. Process. Lett. 2005, 93, 255–261.

- Zhang, J.; Sanderson, A.C. JADE: Adaptive differential evolution with optional external archive. IEEE Trans. Evol. Comput. 2009, 13, 945–958.

- Mousa, A.A.; El-Shorbagy, M.A.; Abd-El-Wahed, W.F. Local search based hybrid particle swarm optimization algorithm for multiobjective optimization. Swarm Evol. Comput. 2012, 3, 1–14.

- Liu, Y.; Niu, B.; Luo, Y. Hybrid learning particle swarm optimizer with genetic disturbance. Neurocomputing 2015, 151, 1237–1247.

- Duan, H.B.; Luo, Q.A.; Shi, Y.H.; Ma, G.J. Hybrid particle swarm optimization and genetic algorithm for multi-UAV formation reconfiguration. IEEE Comput. Intell. Mag. 2013, 8, 16–27.

- Epitropakis, M.G.; Plagianakos, V.P.; Vrahatis, M.N. Evolving cognitive and social experience in particle swarm optimization through differential evolution: A hybrid approach. Inf. Sci. 2012, 216, 50–92.

- Blackwell, T.; Branke, J. Multiswarms, exclusion, and anti-convergence in dynamic environments. IEEE Trans. Evol. Comput. 2006, 10, 459–472.

- Parrott, D.; Li, X. Locating and tracking multiple dynamic optima by a particle swarm model using speciation. IEEE Trans. Evol. Comput. 2006, 10, 440–458.

- Li, C.; Yang, S. A clustering particle swarm optimizer for dynamic optimization. In Proceedings of the 2009 Congress on Evolutionary Computation, Trondheim, Norway, 18–21 May 2009; pp. 439–446.

- Kamosi, M.; Hashemi, A.B.; Meybodi, M.R. A new particle swarm optimization algorithm for dynamic environments. In Proceedings of the 2010 Congress on Swarm, Evolutionary, and Memetic Computing, Chennai, India, 16–18 December 2010; pp. 129–138.

- Du, W.; Li, B. Multi-strategy ensemble particle swarm optimization for dynamic optimization. Inf. Sci. 2008, 178, 3096–3109.

- Dong, D.M.; Jie, J.; Zeng, J.C.; Wang, M. Chaos-mutation-based particle swarm optimizer for dynamic environment. In Proceedings of the 2008 Conference on Intelligent System and Knowledge Engineering, Xiamen, China, 17–19 November 2008; pp. 1032–1037.

- Cui, X.; Potok, T.E. Distributed adaptive particle swarm optimizer in dynamic environment. In Proceedings of the 2007 Conference on Parallel and Distributed Processing Symposium, Rome, Italy, 26–30 March 2007; pp. 1–7.

- De Meyer, K.; Nasuto, S.J.; Bishop, M. Stochastic diffusion search: Partial function evaluation in swarm intelligence dynamic optimization. In Stigmergic Optimization; Springer: Berlin/Heidelberg, Germany, 2006; pp. 185–207.

- Janson, S.; Middendorf, M. A hierarchical particle swarm optimizer for noisy and dynamic environments. Genet. Program. Evol. Mach. 2006, 7, 329–354.

- Zheng, X.; Liu, H. A different topology multi-swarm PSO in dynamic environment. In Proceedings of the 2009 Conference on Medicine & Education, Jinan, China, 14–16 August 2009; pp. 790–795.

- Shi, Y.H.; Eberhart, R.C. Parameter selection in particle swarm optimization. In Proceedings of the 7th Annual International Conference on Evolutionary Programming, San Diego, CA, USA, 25–27 March 1998; pp. 591–601.

- Eberhart, R.C.; Shi, Y.H. Tracking and optimizing dynamic systems with particle swarms. In Proceedings of the 2001 Congress on Evolutionary Computation, Seoul, Korea, 27–30 May 2001; pp. 81–86.

- Yang, C.; Gao, W.; Liu, N.; Song, C. Low-discrepancy sequence initialized particle swarm optimization algorithm with high-order nonlinear time-varying inertia weight. Appl. Soft Comput. 2015, 29, 386–394.

- Shi, Y.H.; Eberhart, R.C. Empirical study of particle swarm optimization. In Proceedings of the 1999 Congress on Evolutionary Computation, Washington, DC, USA, 6–9 July 1999; pp. 1945–1950.

- Eberhart, R.C.; Shi, Y.H. Comparing inertia weights and constriction factors in particle swarm optimization. In Proceedings of the IEEE Congress on Evolutionary Computation, La Jolla, CA, USA, 16–19 July 2000; pp. 84–88.

- Chatterjee, A.; Siarry, P. Nonlinear inertia weight variation for dynamic adaptation in particle swarm optimization. Comput. Oper. Res. 2006, 33, 859–871.

- Feng, Y.; Teng, G.F.; Wang, A.X.; Yao, Y.M. Chaotic inertia weight in particle swarm optimization. In Proceedings of the 2nd International Conference on Innovative Computing, Information and Control, Kumamoto, Japan, 5–7 September 2007; p. 475.

- Fan, S.K.S.; Chiu, Y.Y. A decreasing inertia weight particle swarm optimizer. Eng. Optimiz. 2007, 39, 203–228.

- Jiao, B.; Lian, Z.; Gu, X. A dynamic inertia weight particle swarm optimization algorithm. Chaos Solitons Fractals 2008, 37, 698–705.

- Lei, K.; Qiu, Y.; He, Y. A new adaptive well-chosen inertia weight strategy to automatically harmonize global and local search ability in particle swarm optimization. In Proceedings of the 1st International Symposium on Systems and Control in Aerospace and Astronautics, Harbin, China, 19–21 January 2006; pp. 977–980.

- Yang, X.; Yuan, J.; Mao, H. A modified particle swarm optimizer with dynamic adaptation. Appl. Math. Comput. 2007, 189, 1205–1213.

- Panigrahi, B.K.; Pandi, V.R.; Das, S. Adaptive particle swarm optimization approach for static and dynamic economic load dispatch. Energy Convers. Manag. 2008, 49, 1407–1415.

- Suresh, K.; Ghosh, S.; Kundu, D.; Sen, A.; Das, S.; Abraham, A. Inertia-adaptive particle swarm optimizer for improved global search. In Proceedings of the Eighth International Conference on Intelligent Systems Design and Applications, Kaohsiung, Taiwan, 26–28 November 2008; pp. 253–258.

- Tanweer, M.R.; Suresh, S.; Sundararajan, N. Self-regulating particle swarm optimization algorithm. Inf. Sci. 2015, 294, 182–202.

- Nakagawa, N.; Ishigame, A.; Yasuda, K. Particle swarm optimization using velocity control. IEEJ Trans. Electr. Inf. Syst. 2009, 129, 1331–1338.

- Clerc, M.; Kennedy, J. The particle swarm: Explosion, stability, and convergence in a multidimensional complex space. IEEE Trans. Evol. Comput. 2002, 6, 58–73.

- Iwasaki, N.; Yasuda, K.; Ueno, G. Dynamic parameter tuning of particle swarm optimization. IEEJ Trans. Electr. Electron. Eng. 2006, 1, 353–363.

- Leong, W.F.; Yen, G.G. PSO-based multiobjective optimization with dynamic population size and adaptive local archives. IEEE Trans. Syst. Man Cybern. Part B Cybern. 2008, 38, 1270–1293.

- Rada-Vilela, J.; Johnston, M.; Zhang, M. Population statistics for particle swarm optimization: Single-evaluation methods in noisy optimization problems. Soft Comput. 2014, 19, 1–26.

- Hsieh, S.T.; Sun, T.Y.; Liu, C.C.; Tsai, S.J. Efficient population utilization strategy for particle swarm optimizer. IEEE Trans. Syst. Man Cybern. Part B Cybern. 2009, 39, 444–456.

- Ruan, Z.H.; Yuan, Y.; Chen, Q.X.; Zhang, C.X.; Shuai, Y.; Tan, H.P. A new multi-function global particle swarm optimization. Appl. Soft Comput. 2016, 49, 279–291.

- Serani, A.; Leotardi, C.; Iemma, U.; Campana, E.F.; Fasano, G.; Diez, M. Parameter selection in synchronous and asynchronous deterministic particle swarm optimization for ship hydrodynamics problems. Appl. Soft Comput. 2016, 49, 313–334.

- Sandgren, E. Nonlinear integer and discrete programming in mechanical design optimization. J. Mech. Des. ASME 1990, 112, 223–229.

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).