1. Introduction

Driven by the intelligent manufacturing strategy, the high-end equipment manufacturing industry is undergoing a significant transition from automated production lines to digitalized and intelligent factories. According to a recent systematic review published in Frontiers in Robotics and AI (2025), the core vision of next-generation smart factories has shifted from mere capacity expansion to Zero-Defect Manufacturing [1,2]. Recent research in ITEGAM-JETIA (2025) indicates that for critical fundamental components, such as carbonitrided 820CrMnTi gears subjected to high-cycle fatigue loads, micrometer-level dimensional deviations or surface micro-cracks can induce severe NVH (Noise, Vibration, and Harshness) issues, potentially leading to catastrophic mechanical failures [3].

For a long time, precision dimensional measurement has relied heavily on contact-based Coordinate Measuring Machines (CMMs) or specialized pneumatic gauges. Although CMMs are recognized as the accuracy benchmark in metrology, their point-by-point scanning principle results in low throughput, typically requiring several minutes to complete a full evaluation of a single part. This creates a large time-scale mismatch with modern assembly lines that operate on cycle times of seconds. According to an industry analysis report released by Metrology News (2025), the inspection speed of CMMs on high-throughput production lines is far below the production rate, leaving over 90% of products effectively in a “quality blind zone” [4]. Furthermore, contact probes are prone to systematic error drift caused by mechanical wear and can easily scratch the mirror-finished surfaces of precision-ground workpieces. Therefore, developing an online machine vision inspection system that is non-contact, high-throughput, and capable of micrometer-level precision has become an urgent requirement for overcoming the quality and efficiency bottlenecks of high-end manufacturing.

Machine vision has performed exceptionally well in controlled environments; however, transplanting it to industrial scenarios requires bridging a significant gap between idealized laboratory models and complex on-site physical conditions. This adaptability problem stems fundamentally from two sources: the complex surface physics of materials such as 820CrMnTi after specific heat treatments (e.g., carbonitriding), and unstructured environmental noise. As pointed out by IntelLogic (2025) in research on automotive precision component inspection, the carbonitriding process creates anisotropic micro-textures that make these highly reflective metal surfaces prone to specular artifacts under strong industrial lighting. The reflectance of these micro-textures fluctuates drastically with the observation angle, so that dynamic “highlight overflow” and “deep dark dead zones” appear simultaneously in the image [5]. This extreme dynamic range far exceeds the linear response range of conventional industrial cameras. Recent research from the University of Hong Kong further indicates that, under millisecond-level production cycles, traditional automatic exposure algorithms based on the global average grayscale cannot adapt to such drastic local changes in light intensity, often causing key edge features to be washed out by specular highlights or lost in dark regions [6]. This directly violates the assumption, made by traditional visual algorithms, that the grayscale gradient across edges is uniformly distributed, rendering gradient-based geometric measurements ineffective.

On the other hand, actual machining sites are filled with uncontrollable, unstructured noise. Unlike dust-free laboratories, production line environments are affected by cutting fluid evaporation, oil splatter, and metal dust deposition. Zhao et al. quantitatively analyzed the nonlinear scattering effect of water fog or haze on optical path transmission by constructing an image restoration model for industrial scenarios [7]. The study pointed out that random spots formed by oil stains and droplets on metal surfaces produce high-frequency, defect-like texture interference in images, causing a sharp drop in the local Signal-to-Noise Ratio. Even more challenging are the micrometer-level metal burrs remaining on part edges. Mjahad et al. used multi-scale statistical feature extraction methods to conduct deep clustering analysis on the microscopic topography of edge regions [8]. The results showed that these non-functional defects are highly aliased with real workpiece edges in terms of geometric morphology and grayscale features. Statistically, they no longer behave as Gaussian white noise but manifest as unpredictable outliers drawn from a heavy-tailed distribution. This feature aliasing makes it difficult for traditional algorithms based on frequency-domain filtering or gradient thresholding (such as the Sobel [9] or Canny [10] operators) to strip away interference while preserving real edges, producing a strong noise background that is extremely difficult to separate.

For such industrial scenarios, sub-pixel edge localization algorithms are commonly adopted. These are mainly based on spatial moments (such as Zernike moments [11]), polynomial interpolation, or Gaussian fitting. Tao et al. proposed the CIS algorithm based on stable edge regions. Although it significantly improves the positioning accuracy of ideal edges through local integral mapping, such methods generally rely on the prior assumption that the edge grayscale profile follows a Gaussian distribution or an ideal step signal [12]. Traditional Least Squares Estimation (LSE) is extremely sensitive to outliers when processing such data. As pointed out by Huber et al. in robust estimation research, LSE uses the squared error as its loss function, so the penalty grows quadratically with the residual and anomalies far from the distribution center receive enormous weight [13]. This pulls the fitted edge contour toward burrs, producing false out-of-tolerance results [14]. This statistical mismatch between mathematical models built on Gaussian assumptions and non-Gaussian industrial noise is the fundamental reason for the instability of high-precision measurements.
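The contrast between the two loss functions can be made concrete with a short sketch. It is illustrative only: it assumes residuals that have already been normalized by a robust scale estimate and uses the textbook Huber threshold δ = 1.345, which is an assumption here rather than the value used in RGFCN. The influence (ψ) function of LSE grows without bound, whereas the Huber influence is clipped at ±δ.

```python
# Illustrative comparison of least-squares and Huber losses (sketch).
# Assumption: residuals r are already scaled by a robust scale estimate,
# and DELTA = 1.345 is the conventional Huber tuning constant, not a value
# taken from RGFCN.
DELTA = 1.345


def squared_loss(r: float) -> float:
    """Least-squares loss: grows quadratically with the residual."""
    return 0.5 * r * r


def huber_loss(r: float, delta: float = DELTA) -> float:
    """Huber loss: quadratic for |r| <= delta, linear beyond it."""
    a = abs(r)
    if a <= delta:
        return 0.5 * r * r
    return delta * (a - 0.5 * delta)


def psi_squared(r: float) -> float:
    """Influence of a residual under LSE: unbounded."""
    return r


def psi_huber(r: float, delta: float = DELTA) -> float:
    """Influence of a residual under Huber: clipped to [-delta, delta]."""
    return max(-delta, min(delta, r))


for r in (0.5, 2.0, 10.0, 50.0):
    print(f"r={r:5.1f}  LSE psi={psi_squared(r):6.2f}  Huber psi={psi_huber(r):5.3f}")
```

A single burr pixel with a residual of 50 therefore pulls on an LSE fit with a weight roughly 37 times larger than under the Huber loss.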

In recent years, deep learning technologies represented by Convolutional Neural Networks (CNNs) and Transformers have achieved notable advances in industrial defect detection [15]. According to a 2025 review in Frontiers in Robotics and AI, the anomaly recognition rate of data-driven end-to-end models on complex textured backgrounds has far exceeded that of traditional algorithms [1]. However, in dimensional metrology, deep learning still faces severe deployment challenges. The first is the lack of metrological traceability. A 2025 study published by MDPI emphasizes that the core of precision measurement lies in the clear evaluation of measurement uncertainty [16]. The “black box” nature of deep neural networks makes it difficult for engineers to determine whether a dimensional deviation output by the network is caused by actual physical deformation of the workpiece or by network weights overfitted to specific lighting conditions. Second, achieving sub-pixel segmentation within micrometer tolerances usually requires computationally heavy deep networks (such as DeepLabV3+ [17] or U-Net variants [18]). This often leads to inference delays that cannot meet the millisecond-level production cycles of modern production lines [19].

In the actual inspection of 820CrMnTi parts, minute burrs left by cutting and random oil stains constitute typical heavy-tailed noise. For complex 820CrMnTi components, which combine high reflectivity, strong texture interference, and micrometer-level tolerance requirements, existing mainstream measurement methods still exhibit a significant disconnect between physical robustness and metrological credibility.

In summary, there is an urgent need for a hybrid architecture that acts as a physics-informed optical characterization method, combining the interpretability and traceability of physical models with the nonlinear error compensation capability of data-driven models. To address these industrial challenges, a Robust Geometric Feature Coupling Network (RGFCN) is proposed. The main contributions of this study are summarized as follows:

- (1) A Programmatic Adaptive Exposure Control (PAEC) mechanism is proposed to dynamically suppress highlight overflow and dark dead zones at the physical imaging layer using real-time grayscale histogram feedback.
- (2) An Adaptive Principal Axis Aligned Scanning (PAAS) strategy is designed to construct a dynamic follow-up coordinate system, effectively eliminating projection cosine errors induced by random workpiece poses.
- (3) A Huber M-estimation robust fitting mechanism is integrated with Gaussian gradient energy fields to achieve statistical immunity to non-functional defects such as minute burrs and oil stains.
- (4) A Gradient Boosting Decision Tree (GBDT)-based implicit error compensation approach is developed to map and neutralize the non-linear errors introduced by lens distortion and mechanical assembly tilt.
- (5) Comprehensive metrological performance validation is performed on over 1000 mass-produced 820CrMnTi parts, demonstrating a reduction in false reject rates of over 90% and 6-Sigma level process stability in complex industrial environments.

3. Dual-Drive Heterogeneous Coupling Architecture

To address two common challenges in industrial settings, namely unstable edge extraction caused by complex light field interference and the difficulty of accurately characterizing non-linear distortion fields with a single geometric pinhole model, this paper proposes an RGFCN that fuses a physical geometric stream with a data-driven stream.

As shown in Figure 3, RGFCN abandons the end-to-end “black box” mode of traditional deep learning methods and constructs a serial coupling mechanism comprising explicit geometric deconstruction and implicit error compensation. Mathematically, this architecture can be formalized as the linear superposition of the physical measurement model $\mathcal{F}_{\mathrm{phy}}$ and the data correction model $\mathcal{F}_{\mathrm{data}}$:

$$M = \mathcal{F}_{\mathrm{phy}}(I;\boldsymbol{\Theta}) + \lambda\,\mathcal{F}_{\mathrm{data}}(\mathbf{x}) \tag{18}$$

where $M$ is the final measurement value; $I$ is the input image; $\boldsymbol{\Theta}$ is the set of geometric parameters; $\mathbf{x}$ is the high-dimensional feature vector; and $\lambda$ is the coupling weight. Because the GBDT directly predicts the residual error in physical metric units (millimeters), $\lambda$ acts as a deterministic scaling coefficient set to $\lambda = 1$ under standard calibrated conditions, representing direct additive compensation. Crucially, in uncalibrated extreme edge cases (e.g., input features deviating drastically from the training distribution), $\lambda$ functions as a confidence gate ($\lambda = 0$), gracefully degrading the system to the pure physical geometric model to ensure fail-safe measurement. The architecture specifically includes two streams:

(1) Physical geometric stream. This branch serves as the “skeleton” of the system, guaranteeing the interpretability and physical robustness of the measurement. First, the PAEC mechanism is introduced to dynamically suppress highlight overflow at the physical imaging layer. Unlike traditional histogram equalization, which remaps grayscale values after acquisition, this preserves the linearity and Signal-to-Noise Ratio of the raw signal. Second, the PAAS strategy is used to construct a pose-following coordinate system, eliminating projection errors introduced by random workpiece poses. Finally, by combining the Gaussian gradient energy field with Huber M-estimation, robust fitting is performed on noisy edges at the sub-pixel scale to output initial measurement values that are statistically immune to outliers.

(2) Data-driven stream. This branch acts as the “muscle” of the system, aiming to break through the accuracy limits of physical models and resolve the non-linear residual problem. First, a high-dimensional feature space is constructed by extracting a multi-dimensional feature vector that includes geometric coordinates, local gradient magnitude, and the radial distance from the lens optical center. Second, residual manifold learning is performed: leveraging the strong non-linear fitting capability of an ensemble GBDT, the system implicitly learns the complex error manifold formed by lens distortion, installation tilt, and sensor plane unevenness. Finally, implicit compensation is applied: the GBDT outputs the predicted systematic error, which is used to apply a micrometer-level inverse correction to the output of the geometric stream.
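The residual-learning idea can be sketched with a minimal gradient-boosting regressor built from depth-1 regression trees (stumps) under squared loss. This is a generic GBDT illustration, not the authors' implementation: the three-feature layout (normalized image coordinates plus radial distance, rather than the 5D vector used in RGFCN), the synthetic bowl-shaped error, and the hyperparameters are all hypothetical. In practice the regression target would be the difference between the CMM reference and the geometric-stream measurement.

```python
import math
from typing import List, Sequence, Tuple

# A stump is (feature index, threshold, value if x[j] <= threshold, value otherwise).
Stump = Tuple[int, float, float, float]


def fit_stump(X: Sequence[Sequence[float]], r: Sequence[float]) -> Stump:
    """Choose the single split that minimizes the squared error of residuals r."""
    n, d = len(X), len(X[0])
    total = sum(r)
    best = None
    for j in range(d):
        order = sorted(range(n), key=lambda i: X[i][j])
        left_sum = 0.0
        for k in range(1, n):
            left_sum += r[order[k - 1]]
            x_left, x_right = X[order[k - 1]][j], X[order[k]][j]
            if x_left == x_right:
                continue  # cannot split between identical feature values
            right_sum = total - left_sum
            # Minimizing SSE is equivalent to maximizing S_L^2/n_L + S_R^2/n_R.
            score = left_sum ** 2 / k + right_sum ** 2 / (n - k)
            if best is None or score > best[0]:
                best = (score, j, 0.5 * (x_left + x_right), left_sum / k, right_sum / (n - k))
    if best is None:  # every feature is constant: fall back to the mean
        mean = total / n
        return (0, math.inf, mean, mean)
    _, j, thr, left_val, right_val = best
    return (j, thr, left_val, right_val)


def predict_stump(stump: Stump, x: Sequence[float]) -> float:
    j, thr, left_val, right_val = stump
    return left_val if x[j] <= thr else right_val


class StumpGBDT:
    """Gradient boosting with squared loss; each round fits a stump to the residuals."""

    def __init__(self, n_estimators: int = 200, learning_rate: float = 0.1) -> None:
        self.n_estimators = n_estimators
        self.learning_rate = learning_rate
        self.base = 0.0
        self.stumps: List[Stump] = []

    def fit(self, X, y):
        self.base = sum(y) / len(y)
        pred = [self.base] * len(y)
        for _ in range(self.n_estimators):
            # For squared loss, the negative gradient equals the current residual.
            resid = [yi - pi for yi, pi in zip(y, pred)]
            stump = fit_stump(X, resid)
            self.stumps.append(stump)
            pred = [p + self.learning_rate * predict_stump(stump, x) for p, x in zip(pred, X)]
        return self

    def predict(self, x: Sequence[float]) -> float:
        return self.base + self.learning_rate * sum(predict_stump(s, x) for s in self.stumps)


# Hypothetical bowl-shaped residual (mm) over a normalized 11 x 11 field of view.
X, y = [], []
for i in range(11):
    for k in range(11):
        u, v = -1 + 0.2 * i, -1 + 0.2 * k
        rho = math.hypot(u, v)
        X.append([u, v, rho])
        y.append(0.01 * rho ** 2)
model = StumpGBDT(n_estimators=200, learning_rate=0.3).fit(X, y)
mse = sum((model.predict(x) - t) ** 2 for x, t in zip(X, y)) / len(y)
print(f"training MSE after boosting: {mse:.2e} mm^2")
```

Because the hypothetical radial-distance feature makes the bowl monotonic along one axis, even depth-1 trees capture it quickly; deeper trees (as in typical GBDT libraries) would be needed for interacting error sources such as tilt combined with distortion.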

Through this heterogeneous coupling of physical guarantees and data refinement, the RGFCN architecture achieves both rotational invariance for random poses and global compensation of systematic non-linear errors. The algorithmic workflow of RGFCN is executed in five sequential steps: (1) image acquisition with PAEC intervention to ensure raw signal linearity; (2) pose estimation and PAAS execution to establish the orthogonal scanning coordinate system; (3) sub-pixel robust edge extraction via Huber M-estimation and Gaussian gradient energy fields to obtain the initial geometric measurement $\mathcal{F}_{\mathrm{phy}}(I;\boldsymbol{\Theta})$; (4) extraction of the 5D feature vector $\mathbf{x}$ based on this initial measurement, followed by inference through the pre-trained GBDT to predict the systematic residual $\mathcal{F}_{\mathrm{data}}(\mathbf{x})$; and (5) final dimension synthesis by coupling the two streams via Equation (18).
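The coupling rule of Equation (18), including the fail-safe behavior of the confidence gate, can be sketched as follows. The gating criterion used here, a per-feature range check against the training data, is a hypothetical stand-in, since the section does not specify how out-of-distribution inputs are detected. `residual_model` stands for any trained predictor of the systematic residual in millimeters; the names `FeatureBounds` and `coupled_measurement` are illustrative.

```python
from dataclasses import dataclass
from typing import Callable, Sequence, Tuple


@dataclass
class FeatureBounds:
    """Per-feature [lower, upper] range seen in training (hypothetical gate)."""
    lower: Sequence[float]
    upper: Sequence[float]

    def contains(self, x: Sequence[float]) -> bool:
        if len(x) != len(self.lower) or len(x) != len(self.upper):
            raise ValueError("feature vector length does not match the bounds")
        return all(lo <= v <= hi for v, lo, hi in zip(x, self.lower, self.upper))


def coupled_measurement(
    m_phy: float,
    x: Sequence[float],
    residual_model: Callable[[Sequence[float]], float],
    bounds: FeatureBounds,
) -> Tuple[float, float]:
    """Return (final measurement in mm, coupling weight lambda).

    lambda = 1: in-distribution input, additive GBDT correction.
    lambda = 0: out-of-distribution input, fall back to the geometric stream.
    """
    lam = 1.0 if bounds.contains(x) else 0.0
    correction = residual_model(x) if lam else 0.0
    return m_phy + lam * correction, lam


# Usage with a dummy residual model that always predicts -0.004 mm.
bounds = FeatureBounds(lower=[0.0, 0.0], upper=[1.0, 1.0])
inside = coupled_measurement(40.512, [0.3, 0.7], lambda x: -0.004, bounds)
outside = coupled_measurement(40.512, [1.8, 0.7], lambda x: -0.004, bounds)
print(inside, outside)
```

Skipping residual inference when the gate is closed also avoids evaluating the data-driven model on inputs it was never trained on.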

4. Results and Discussion

This section systematically validates the effectiveness of the RGFCN model in the online inspection of complex-shaped 820CrMnTi components through a series of staged experiments, following a progressive sequence: imaging quality optimization, geometric pose adaptation, microscopic feature extraction, system error compensation, and comprehensive metrological performance verification. All experiments were conducted on the industrial vision platform described in Section 4.1.

4.1. Experimental Setup

To achieve micron-level inspection in unstructured industrial environments, a machine vision inspection platform was constructed for this study. The system design adheres to the principle of prioritizing optical correction supplemented by algorithmic compensation, aiming to minimize imaging errors at the source.

A Hikvision 12-megapixel global shutter CMOS camera (pixel size 3.45 µm × 3.45 µm) was selected and paired with a high-resolution bi-telecentric lens. To address the non-Lambertian reflection and anisotropic textures of the carbonitrided surface, a dedicated lighting scheme was designed: a square-frame light source whose annular diffuse emission illuminates the inspection surface uniformly, effectively suppressing specular reflection hotspots on the carbonitrided surface. Inspection was performed dynamically with a conveyor belt speed of 65 mm/s. All algorithms were deployed on a standard industrial personal computer (CPU: Intel Core i5-1035NG7, Intel Corporation, Santa Clara, CA, USA) and implemented with parallel acceleration using the CPU version of the PyTorch (2.9.1) framework. The physical configuration of the experimental platform is shown in Figure 4.

To ensure the ecological validity of the algorithm, data were continuously acquired across 5 independent production batches over a 24 h cycle, capturing day–night lighting fluctuations and varying degrees of oil adhesion. To contextualize the metrological performance and the subsequent Process Capability ($C_{pk}$) evaluation, the standard dimensional tolerance zone for the measured 820CrMnTi component is defined as $40.5 \pm 0.3$ mm, which places the Lower Specification Limit (LSL) at 40.2 mm and the Upper Specification Limit (USL) at 40.8 mm.
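For reference, the process capability index is conventionally computed as $C_{pk} = \min(\mathrm{USL}-\mu,\ \mu-\mathrm{LSL})/(3\sigma)$. The sketch below applies this definition to the tolerance zone above, using the sample mean and sample standard deviation; the measurement values are invented for illustration and are not data from this study.

```python
import statistics

LSL, USL = 40.2, 40.8  # mm, from the tolerance zone 40.5 +/- 0.3 mm


def cpk(values, lsl=LSL, usl=USL):
    """Process capability index from the sample mean and sample standard deviation."""
    mu = statistics.mean(values)
    sigma = statistics.stdev(values)
    return min(usl - mu, mu - lsl) / (3.0 * sigma)


# Illustrative (invented) measurements in mm.
sample = [40.49, 40.50, 40.51, 40.50, 40.52, 40.48]
print(f"Cpk = {cpk(sample):.2f}")
```

A $C_{pk}$ of at least 2.0 means the nearer specification limit lies at least six standard deviations from the mean, which is the usual basis for the 6-Sigma statements in the following sections.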

The dataset comprises 1200 samples. Of these, 200 samples were selected via stratified random sampling to cover the full spectrum of lighting and contamination conditions and were measured with a high-precision contact CMM (Carl Zeiss AG, Oberkochen, Germany) to serve as the ground truth. These 200 CMM-measured samples were strictly split into 160 for training the GBDT model and 40 for testing, and the specific defect morphologies and environmental conditions of the 40 test parts were kept unseen by the model during training.

4.2. Evaluation of Adaptive Imaging

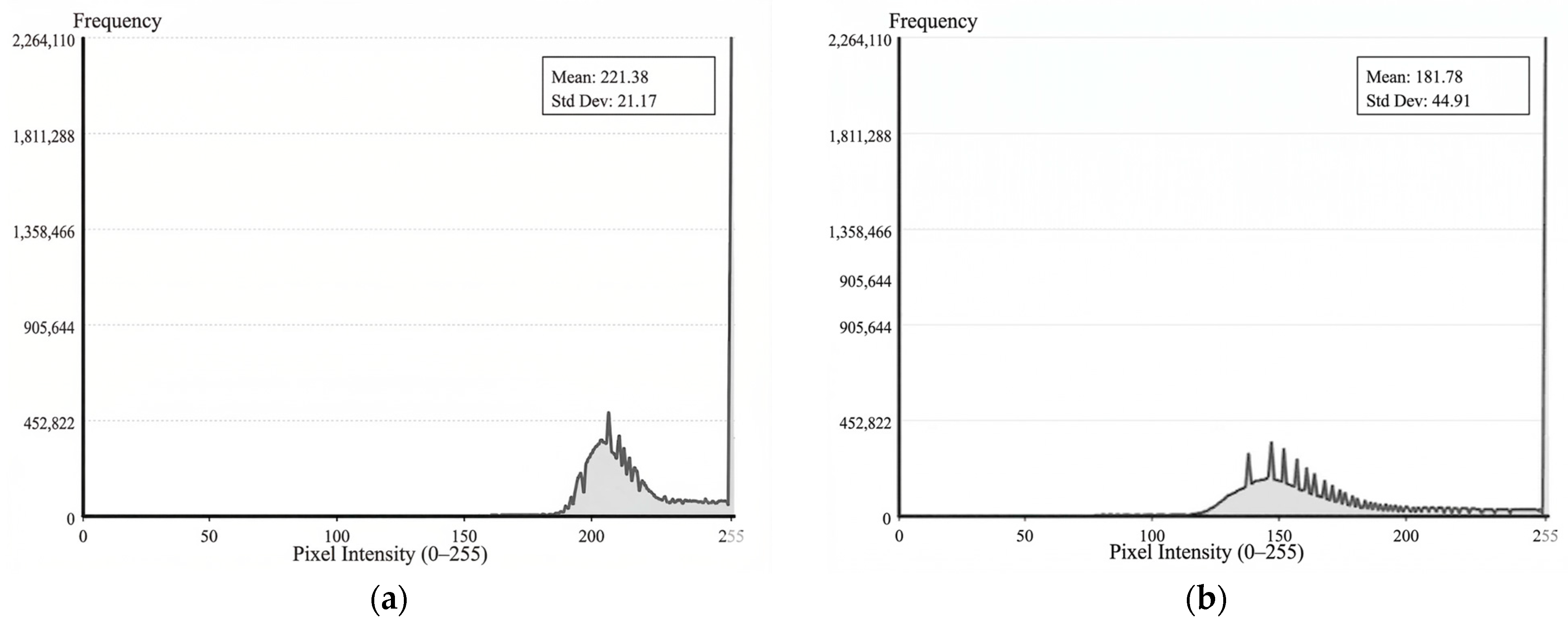

Figure 5a presents the grayscale statistical distribution before the PAEC mechanism is activated, while Figure 5b shows the distribution after activation. The results demonstrate that PAEC significantly improves the grayscale histogram of the image. Without PAEC, specular reflection caused highlight overflow, with a substantial accumulation of pixels in the saturated region at gray level 255. The average grayscale value reached 221.38 with a standard deviation of only 21.17, indicating severe compression of the dynamic range. With PAEC, the non-linear feedback control based on the saturated-pixel ratio constrained highlight pixels to within a 2% threshold. Consequently, the average grayscale value decreased to 181.78, preventing the sensor from entering its non-linear saturation region.
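A minimal sketch of saturated-pixel-ratio feedback in the spirit of PAEC is given below. The sensor model (linear response clipped at 255), the update law, and all parameter values except the 2% threshold are assumptions made for illustration; the function names are hypothetical and the actual PAEC control law is not reproduced here.

```python
import random
from typing import List, Sequence, Tuple


def saturation_ratio(pixels: Sequence[int], level: int = 255) -> float:
    """Fraction of pixels at or above the saturation level."""
    return sum(1 for p in pixels if p >= level) / len(pixels)


def simulate_capture(radiance: Sequence[float], exposure: float) -> List[int]:
    """Hypothetical linear 8-bit sensor: value = radiance * exposure, clipped at 255."""
    return [min(255, int(r * exposure)) for r in radiance]


def paec_adjust(radiance, exposure, max_sat=0.02, gain=0.1, max_iters=100) -> Tuple[float, float]:
    """Reduce exposure until the saturated-pixel ratio is within max_sat."""
    for _ in range(max_iters):
        sat = saturation_ratio(simulate_capture(radiance, exposure))
        if sat <= max_sat:
            return exposure, sat
        # Non-linear step: a large excess gives a large correction; at least 2 % per step.
        exposure *= min(0.98, (max_sat / sat) ** gain)
    return exposure, saturation_ratio(simulate_capture(radiance, exposure))


rng = random.Random(0)
radiance = [rng.uniform(20.0, 300.0) for _ in range(10_000)]  # synthetic scene
exposure, sat = paec_adjust(radiance, exposure=1.5)
print(f"exposure={exposure:.3f}, saturated ratio={sat:.3%}")
```

Only the down-regulation branch is shown; a complete controller would also raise the exposure when the image becomes too dark, which is where the dark dead zones would be handled.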

The optimization of the grayscale distribution directly determines the resolvability of edge gradients. Figure 6 illustrates the specific impact of this improvement on measurement accuracy. Under overexposed conditions (Figure 6a), the grayscale difference across the edge approaches zero, so the edge gradient effectively vanishes. This leads to a significant angular deviation between the measurement line and the actual contour. Conversely, after PAEC optimization (Figure 6b), the edge gradient is clearly restored, ensuring that subsequent algorithms can capture sub-pixel contours precisely.

4.3. Verification of Rotation Invariance

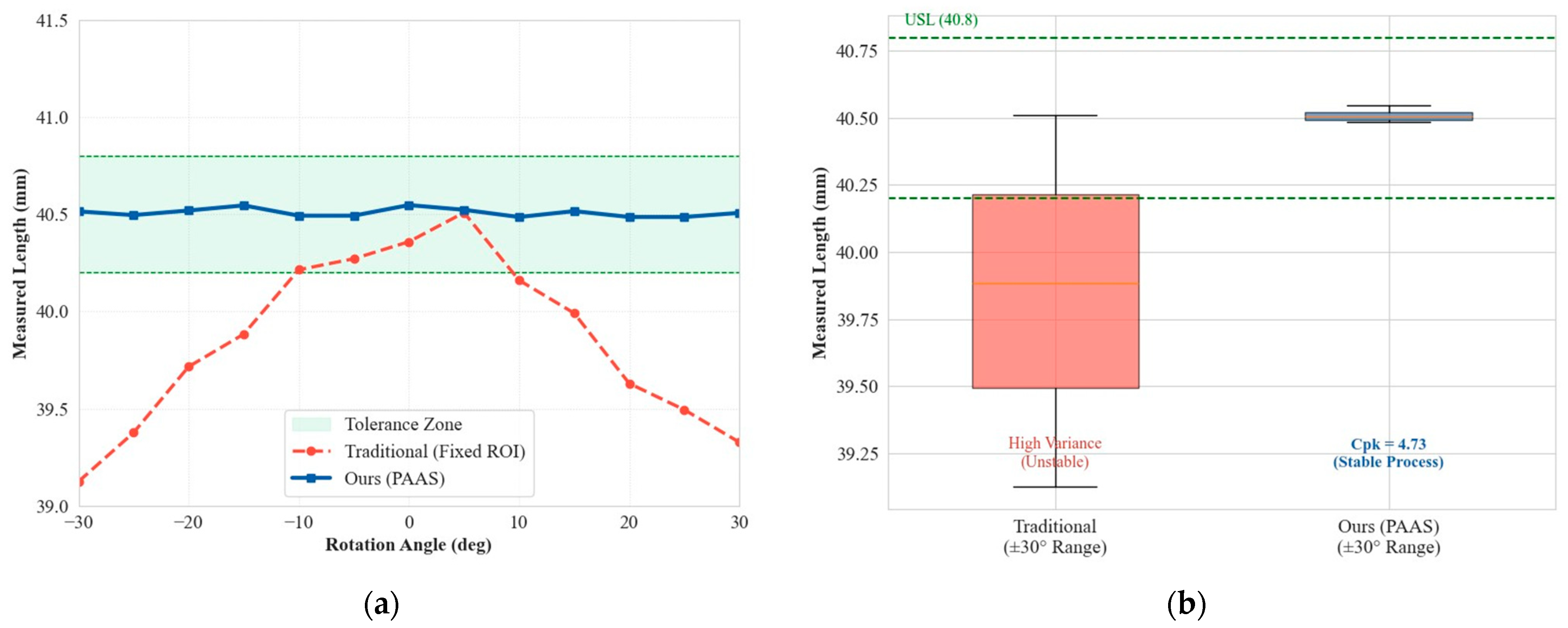

To address the issue of random part poses caused by industrial conveyor belt vibration, this section compares the traditional fixed ROI method with the Adaptive PAAS strategy proposed in this paper. The experiment involved rotating standard parts within the field of view from −30° to +30°.

Figure 7a–d illustrates the traditional method, which relies on a preset rectangular box. When the part rotates, the ROI fails to track the workpiece’s principal axis, resulting in severe misalignment of the detection box and even introducing background noise.

Figure 7e–h demonstrates the PAAS strategy, which constructs a pose-following coordinate system and dynamically adjusts the scanning direction so that the scanning lines remain orthogonal to the part edge at any angle, thereby achieving rotational invariance in the visual measurement.
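The principal-axis idea can be sketched with second-order central moments, which give the orientation of a foreground point set. This generic moment-based formulation is assumed for illustration; the exact PAAS pose estimator is not specified in this section. The hypothetical `extent` helper shows why a fixed scan direction introduces a cosine-type projection error while a scan aligned with the estimated axis does not.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]


def principal_axis(points: Sequence[Point]) -> Tuple[Point, float]:
    """Centroid and major-axis angle (radians) from second-order central moments."""
    n = len(points)
    cx = sum(p[0] for p in points) / n
    cy = sum(p[1] for p in points) / n
    mu20 = sum((x - cx) ** 2 for x, _ in points) / n
    mu02 = sum((y - cy) ** 2 for _, y in points) / n
    mu11 = sum((x - cx) * (y - cy) for x, y in points) / n
    theta = 0.5 * math.atan2(2.0 * mu11, mu20 - mu02)
    return (cx, cy), theta


def extent(points: Sequence[Point], direction: Point) -> float:
    """Length of the point set projected onto a unit direction vector."""
    dx, dy = direction
    proj = [x * dx + y * dy for x, y in points]
    return max(proj) - min(proj)


# Synthetic 40 x 10 rectangular part sampled every 0.5 units, rotated by 20 degrees.
angle = math.radians(20.0)
c, s = math.cos(angle), math.sin(angle)
part = []
for i in range(-40, 41):
    for j in range(-10, 11):
        x, y = 0.5 * i, 0.5 * j
        part.append((100 + x * c - y * s, 50 + x * s + y * c))

center, theta = principal_axis(part)
axis = (math.cos(theta), math.sin(theta))  # scan frame that follows the part
print(f"estimated angle = {math.degrees(theta):.3f} deg")
print(f"length along fixed x-axis   = {extent(part, (1.0, 0.0)):.3f}")
print(f"length along principal axis = {extent(part, axis):.3f}")
```

Along the fixed axis the rotated part spans $40\cos\theta + 10\sin\theta$, whereas along the estimated principal axis it spans exactly 40, independent of the pose.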

Figure 8 presents the quantitative results of dimensional inspection at different angles. The measurement error of the traditional method varies significantly with the rotation angle $\theta$, and at larger rotation angles the measured value drops below the lower tolerance limit. In contrast, the measurement trajectory of the PAAS method is approximately a horizontal straight line, consistently adhering to the target value of 40.5 mm. The statistical box plot shows that PAAS tightly controls the measurement standard deviation, with a Process Capability Index ($C_{pk}$) reaching 4.73. Since this far exceeds the value of 2.0 associated with 6-Sigma capability, the system demonstrates 6-Sigma level process stability.

4.4. Sub-Pixel Positioning Precision

To overcome the limitation imposed by physical pixel resolution, the traditional pixel-level algorithm (Canny operator) was compared with the proposed sub-pixel positioning model based on gradient energy fields. Figure 9 illustrates the edge fitting performance at the microscopic scale.

Constrained by the physical resolution, edges detected by the conventional method exhibit distinct “staircase-like” aliasing (Figure 9a), which fundamentally restricts measurement precision. Conversely, the proposed gradient energy field model achieves smooth, continuous sub-pixel fitting (Figure 9b) by computing the first-order moment (centroid) of the gradient energy within the energy dispersion region, effectively suppressing high-frequency noise even where the edges are rough.
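The centroid principle can be illustrated on a single 1D scan profile. The sketch below smooths the profile with a sampled Gaussian, takes central differences, squares them as a stand-in for the gradient energy, and returns the first-order moment of that energy in a window around its peak. The kernel width, window size, and use of squared gradients are assumptions for illustration rather than the exact formulation of the proposed model.

```python
import math
from typing import List, Sequence


def gaussian_kernel(sigma: float) -> List[float]:
    radius = max(1, math.ceil(3 * sigma))
    k = [math.exp(-0.5 * (i / sigma) ** 2) for i in range(-radius, radius + 1)]
    total = sum(k)
    return [v / total for v in k]


def smooth(profile: Sequence[float], kernel: Sequence[float]) -> List[float]:
    """Convolve with a symmetric kernel, replicating the border samples."""
    r, n = len(kernel) // 2, len(profile)
    return [sum(w * profile[min(max(i + k - r, 0), n - 1)] for k, w in enumerate(kernel))
            for i in range(n)]


def subpixel_edge(profile: Sequence[float], sigma: float = 1.0, half_window: int = 4) -> float:
    """Sub-pixel edge position as the centroid of the squared smoothed gradient."""
    s = smooth(profile, gaussian_kernel(sigma))
    grad = [0.0] + [(s[i + 1] - s[i - 1]) / 2 for i in range(1, len(s) - 1)] + [0.0]
    energy = [g * g for g in grad]
    peak = max(range(len(energy)), key=energy.__getitem__)
    lo, hi = max(0, peak - half_window), min(len(energy) - 1, peak + half_window)
    window = range(lo, hi + 1)
    return sum(i * energy[i] for i in window) / sum(energy[i] for i in window)


# Synthetic blurred step edge at x = 20.3 px (dark 50 -> bright 200), blur sigma 1.2 px.
true_edge = 20.3
profile = [50 + 150 * 0.5 * (1 + math.erf((i - true_edge) / (math.sqrt(2) * 1.2)))
           for i in range(41)]
pixel_level = max(range(1, 40), key=lambda i: abs(profile[i + 1] - profile[i - 1]))
print(f"pixel-level edge: {pixel_level}, sub-pixel edge: {subpixel_edge(profile):.3f}")
```

The pixel-level maximum can only land on an integer position, so it is off by 0.3 px here, whereas the energy centroid interpolates between samples.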

The quantitative validation results of sub-pixel positioning precision are presented in Figure 10. The standard deviation of the linearity error for the traditional method reaches 7.53 px, with drastic fluctuations exceeding 20 pixels at noise points. In contrast, the proposed method reduces the linearity error to 2.05 px, an approximately 3.7-fold improvement in precision.

To quantitatively evaluate the sub-pixel positioning performance, Figure 11 compares the linearity error metrics of the traditional Canny operator and the proposed Gaussian gradient energy field method. The proposed method reduces the standard deviation of the linearity error from 7.53 pixels to 2.05 pixels. Taking the object-space pixel size as the camera’s physical pixel size of 3.45 μm, this corresponds to an improvement from approximately 25.98 μm to 7.07 μm. Furthermore, the maximum observed error is suppressed from 21.4 pixels to only 4.8 pixels, demonstrating stable boundary tracking even under high-frequency noise interference.

4.5. Robustness Mechanism Against Defects

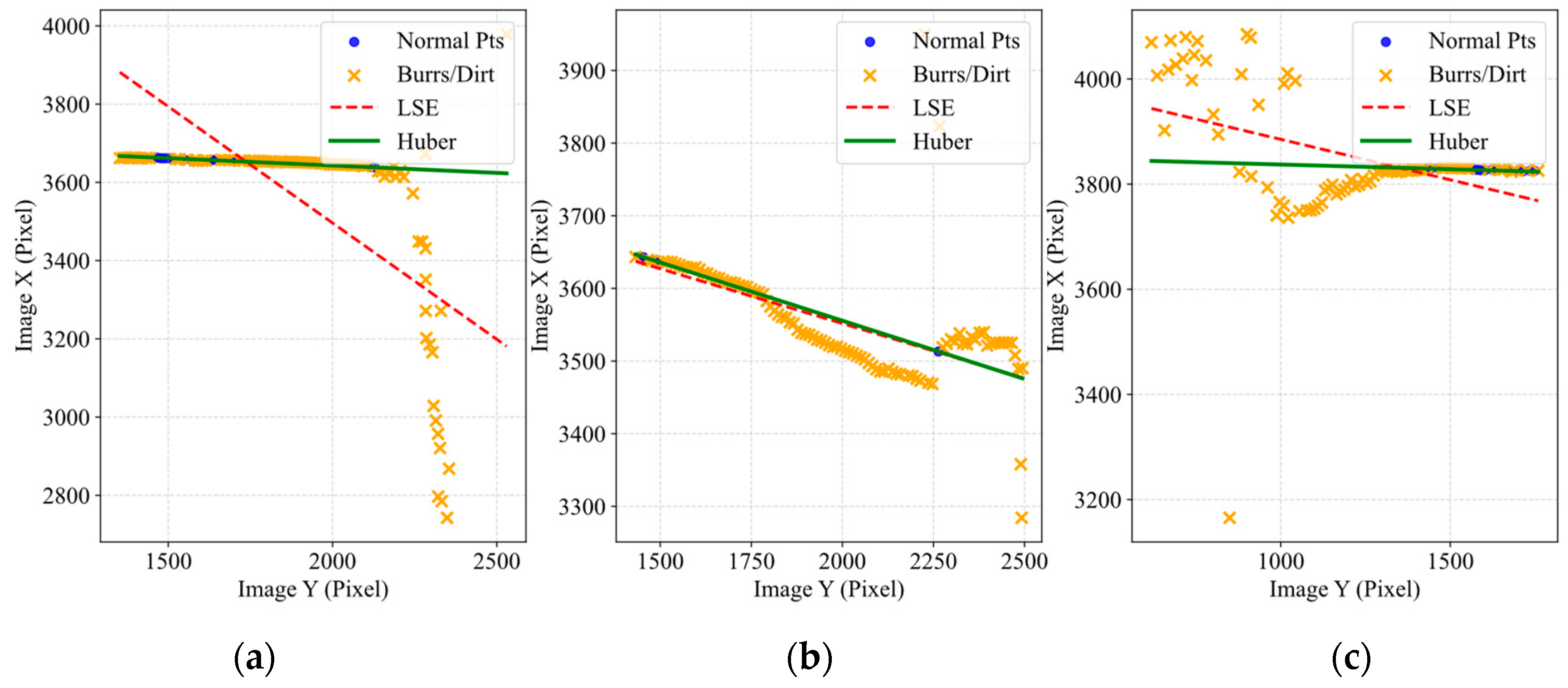

Targeting the common interference caused by burrs and oil stains on the edges of 820CrMnTi components, this section validates the defect immunity capability of the Huber M-estimation mechanism.

Figure 12 visually compares the trajectories of Huber estimation and traditional LSE on defective edges: the red dashed line (LSE) is deflected by burrs, whereas the green solid line (Huber) recovers the true edge. Because LSE is extremely sensitive to outliers, its fitted line is strongly pulled toward the burrs, and the resulting deflection produces a false measurement error of approximately 0.5 mm. Conversely, the Huber algorithm automatically down-weights outliers, passing through the interference zone to restore the true geometric topology of the component.
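The down-weighting behavior can be reproduced with iteratively reweighted least squares (IRLS), a standard way of computing a Huber M-estimate. In the sketch below, the edge model is a straight line, the residual scale is re-estimated at each iteration from the median absolute deviation (MAD), and δ = 1.345; these choices, the function names, and the synthetic edge points with a burr cluster are assumptions for illustration and do not correspond to the experiments above.

```python
import statistics
from typing import Sequence, Tuple


def weighted_line_fit(x: Sequence[float], y: Sequence[float], w: Sequence[float]) -> Tuple[float, float]:
    """Weighted least squares for y = a + b * x; returns (a, b)."""
    sw = sum(w)
    xm = sum(wi * xi for wi, xi in zip(w, x)) / sw
    ym = sum(wi * yi for wi, yi in zip(w, y)) / sw
    sxx = sum(wi * (xi - xm) ** 2 for wi, xi in zip(w, x))
    sxy = sum(wi * (xi - xm) * (yi - ym) for wi, xi, yi in zip(w, x, y))
    b = sxy / sxx
    return ym - b * xm, b


def huber_line_fit(x, y, delta: float = 1.345, iters: int = 50) -> Tuple[float, float]:
    """Huber M-estimate of a line via IRLS, starting from the LSE solution."""
    a, b = weighted_line_fit(x, y, [1.0] * len(x))
    for _ in range(iters):
        r = [yi - (a + b * xi) for xi, yi in zip(x, y)]
        med = statistics.median(r)
        scale = statistics.median(abs(ri - med) for ri in r) / 0.6745  # MAD -> sigma
        if scale == 0.0:
            break
        # Huber weights: 1 inside the threshold, delta/|u| outside it.
        w = [1.0 if abs(ri / scale) <= delta else delta / abs(ri / scale) for ri in r]
        a, b = weighted_line_fit(x, y, w)
    return a, b


# Synthetic edge y = 100 + 0.01 x with small noise; points 60..69 carry a +8 burr.
xs = list(range(100))
ys = [100 + 0.01 * xi + 0.04 * ((xi * 37) % 11 - 5) for xi in xs]
for xi in range(60, 70):
    ys[xi] += 8.0
a_lse, b_lse = weighted_line_fit(xs, ys, [1.0] * len(xs))
a_hub, b_hub = huber_line_fit(xs, ys)
true_at_65 = 100 + 0.01 * 65
print(f"LSE error at x=65:   {a_lse + b_lse * 65 - true_at_65:+.3f}")
print(f"Huber error at x=65: {a_hub + b_hub * 65 - true_at_65:+.3f}")
```

Each burr point still contributes to the Huber fit, but its pull is capped at about δ times the noise scale, so ten burr points shift the line by only a few hundredths of a unit instead of about one unit under LSE.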

In a further microscopic analysis, Figure 13 illustrates the fitting of microscopic point clouds under three typical operating conditions: extreme local abrupt changes, long-segment continuous interference, and discrete noise. In all scenarios, the Huber algorithm robustly locks onto the dominant inlier point set, thereby avoiding false out-of-tolerance errors caused by local defects.

The defect immunity of the Huber M-estimation mechanism is quantitatively reflected in a significant reduction in misclassification rates.

Figure 14 presents the statistical robustness validation on a test batch containing severe non-functional defects, such as edge burrs and oil stains. Due to the leverage effect of heavy-tailed outliers, the traditional LSE method is easily deflected and exhibits a high false reject rate (out-of-tolerance) of 14.5% (0.145). In contrast, by adaptively down-weighting outlier residuals, the proposed Huber M-estimation mechanism reduces the false reject rate to 1.1% (0.011) and slightly lowers the false accept rate from 1.2% to 0.8%. This verifies the method’s statistical immunity to non-Gaussian defect noise in actual production environments.
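Because false reject and false accept rates can be normalized in different ways, the sketch below makes one common convention explicit: the false reject rate (FRR) is the share of parts that the CMM reference deems conforming but the vision system rejects, and the false accept rate (FAR) is the share of nonconforming parts that the vision system accepts. Whether Figure 14 uses exactly this normalization is an assumption, and the example values are invented.

```python
from typing import Sequence, Tuple

LSL, USL = 40.2, 40.8  # mm, from the tolerance zone 40.5 +/- 0.3 mm


def in_tolerance(value: float, lsl: float = LSL, usl: float = USL) -> bool:
    return lsl <= value <= usl


def reject_accept_rates(measured: Sequence[float], reference: Sequence[float]) -> Tuple[float, float]:
    """Return (FRR, FAR) of a gauge against reference (e.g., CMM) values."""
    pairs = list(zip(measured, reference))
    good = [m for m, ref in pairs if in_tolerance(ref)]
    bad = [m for m, ref in pairs if not in_tolerance(ref)]
    frr = sum(not in_tolerance(m) for m in good) / len(good) if good else 0.0
    far = sum(in_tolerance(m) for m in bad) / len(bad) if bad else 0.0
    return frr, far


measured = [40.50, 40.85, 40.10, 40.30]
reference = [40.50, 40.60, 40.10, 40.15]
print(reject_accept_rates(measured, reference))
```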

4.6. Implicit Error Compensation via GBDT

GBDTs are employed to address the non-linear residuals introduced by lens distortion and installation tilt. Figure 15 displays the reconstruction of the full-field error manifold. Under linear calibration alone, the error field exhibits significant bowl-shaped distortion and an anisotropic distribution. After GBDT compensation, this structured non-linear error is effectively removed, and the residual field becomes spatially uniform random noise, demonstrating that the model effectively decouples the complex error manifold.

4.7. Overall Metrological Performance Verification

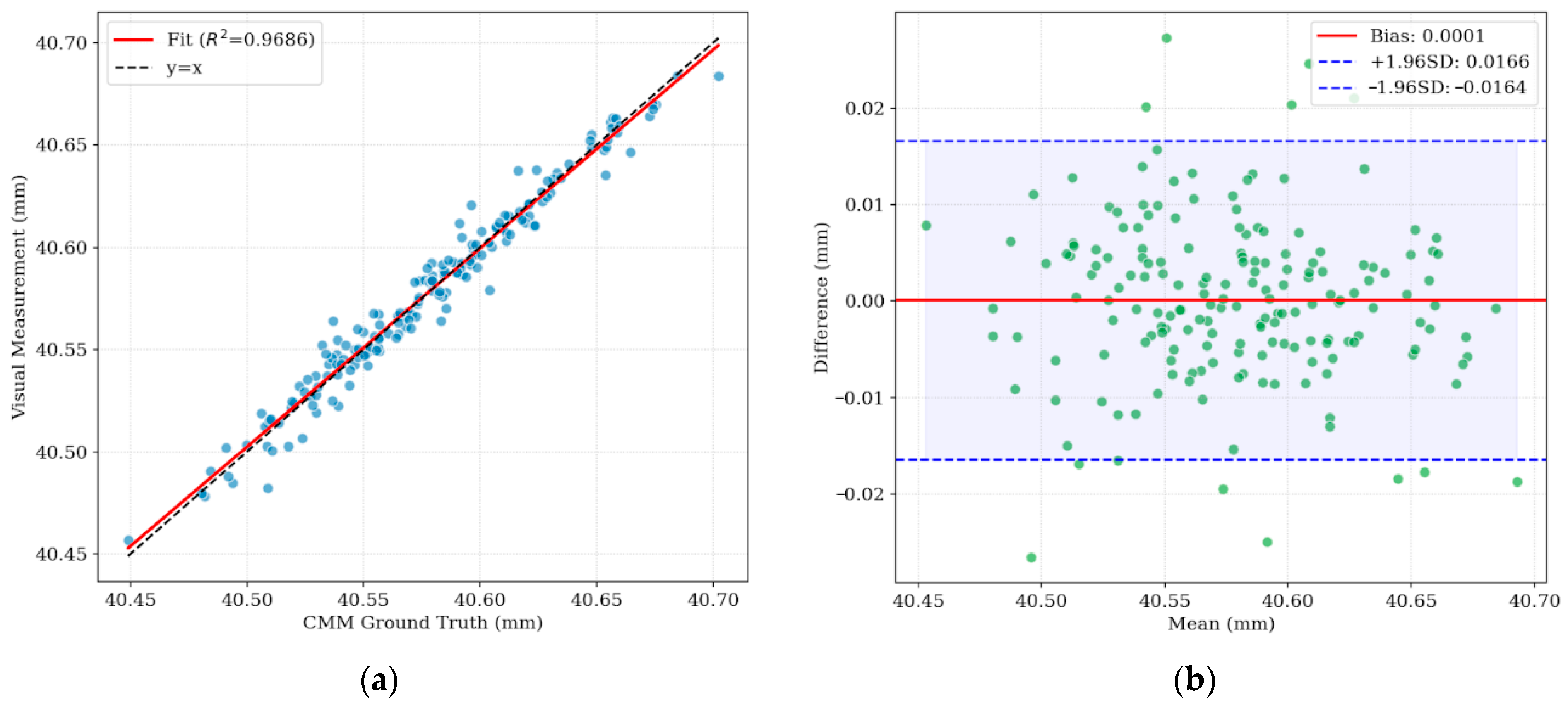

To comprehensively verify the metrological traceability and reliability of the RGFCN system in actual industrial scenarios, the system measurements were compared with the ground truth values over the full measurement range. The overall metrological performance evaluation results are shown in Figure 16.

Figure 16a illustrates the linear regression relationship between the visual measurement values and the ground truth values. The blue scatter points converge tightly around the ideal diagonal line $y = x$ (black dashed line), without obvious dispersion or non-linear deviation. Statistical analysis yields a coefficient of determination ($R^2$) of 0.9686. This high correlation demonstrates that the RGFCN model maintains excellent linear responsivity throughout the entire measurement range, effectively eliminating the gain drift common in traditional visual measurement, and indicates its potential to replace contact measurement methods.

Figure 16b further analyzes the distribution of measurement residuals using a Bland–Altman plot. The blue dashed lines represent the 95% limits of agreement, ranging from approximately −0.0164 mm to 0.0166 mm. The vast majority of measurement differences fall within these limits, with a mean bias (red solid line) of only 0.0001 mm. The residual points are uniformly distributed above and below the mean line and show no funnel-shaped trend with size, confirming the absence of significant systematic bias in the system.
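Both statistics in Figure 16 can be computed directly from paired measurements. The sketch below computes $R^2$ as the squared Pearson correlation, which equals the coefficient of determination of an ordinary least-squares line with intercept, and the Bland–Altman bias and 95% limits of agreement as the mean difference ± 1.96 standard deviations of the differences. The data are invented for illustration.

```python
import statistics
from typing import Sequence, Tuple


def r_squared(measured: Sequence[float], reference: Sequence[float]) -> float:
    """Squared Pearson correlation (R^2 of a simple linear regression)."""
    mx, my = statistics.mean(reference), statistics.mean(measured)
    sxy = sum((x - mx) * (y - my) for x, y in zip(reference, measured))
    sxx = sum((x - mx) ** 2 for x in reference)
    syy = sum((y - my) ** 2 for y in measured)
    return sxy * sxy / (sxx * syy)


def bland_altman(measured: Sequence[float], reference: Sequence[float]) -> Tuple[float, float, float]:
    """Return (bias, lower LoA, upper LoA) for the differences measured - reference."""
    diffs = [m - r for m, r in zip(measured, reference)]
    bias = statistics.mean(diffs)
    sd = statistics.stdev(diffs)
    return bias, bias - 1.96 * sd, bias + 1.96 * sd


measured = [40.50, 40.42, 40.61, 40.55, 40.38]
reference = [40.49, 40.43, 40.60, 40.56, 40.39]
print(f"R^2 = {r_squared(measured, reference):.4f}")
print("bias, lower LoA, upper LoA =", bland_altman(measured, reference))
```

The two views are complementary: $R^2$ measures how well a linear relationship holds across the range, while the limits of agreement express the expected spread of individual differences in millimeters.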

5. Discussion and Limitations

The end-to-end computation time per frame of the proposed pipeline, covering PAEC dynamic imaging, PAAS coordinate following, Huber sub-pixel fitting, and GBDT correction, is approximately 20 ms. This comfortably satisfies the practical 500 ms cycle requirement of modern high-throughput production lines and represents a substantial throughput advantage over traditional CMMs.

While the system demonstrates excellent robustness for 820CrMnTi components, its generalizability is subject to certain limitations. The methodology relies heavily on a well-calibrated bi-telecentric optical setup and necessitates initial CMM-measured datasets to adequately train the GBDT error manifold. Furthermore, in scenarios of extreme contamination where heavy oil stains completely occlude the physical edges of the part, the geometric features cannot be recovered physically, potentially compromising the measurement accuracy. Future work will explore cross-material generalization and unsupervised error compensation to further enhance the system’s industrial adaptability.