Abstract

In recent years, Machine Learning (ML) has become a buzzword in the petroleum industry, with numerous applications which guide engineers in better decision making. The most powerful tool that most production development decisions rely on is reservoir simulation with applications in multiple modeling procedures, such as individual simulation runs, history matching and production forecast and optimization. However, all of these applications lead to considerable computational time and computer resource-associated costs, rendering reservoir simulators as not fast and robust enough, and thus introducing the need for more time-efficient and intelligent tools, such as ML models which are able to adapt and provide fast and competent results that mimic the simulator’s performance within an acceptable error margin. In a recent paper, the developed ML applications in a subsurface reservoir simulation were reviewed, focusing on improving the speed and accuracy of individual reservoir simulation runs and history matching. This paper consists of the second part of that study, offering a detailed review of ML-based Production Forecast Optimization (PFO). This review can assist engineers as a complete source for applied ML techniques in reservoir simulation since, with the generation of large-scale data in everyday activities, ML is becoming a necessity for future and more efficient applications.

1. Introduction

The primary objective of the petroleum industry and its applications is to discover and exploit oil and gas reserves to ensure affordable energy access, meet global energy demands, and maximize profits. Reservoir engineers rely on subsurface reservoir simulation as a vital tool to accomplish these goals, which plays a crucial role in gaining a comprehensive understanding of reservoir behavior, facilitating detailed analysis, and optimizing recovery processes. Simulation is used in various planning stages, reservoir development, and management to enhance the efficiency of extracting hydrocarbon reserves from underground reservoirs.

As discussed in detail in Part I [1] of the present review, reservoir simulation integrates principles from physics, mathematics, reservoir engineering, geoscience, and computer programming to model the hydrocarbon reservoir performance under various operating conditions. The reservoir simulator’s output, typically comprised of the spatial and temporal distribution of the pressure and phase saturation, is introduced to the simulation models of the following physical components in the hydrocarbon production chain, including those that produce fluids at the surface (wellbore) and process the reservoir fluids (surface facilities), thus allowing for the complete modeling system down to the sales point [2,3]. Reservoir simulators are the mathematical tools built to accurately predict all the physical fluid flow phenomena inside the reservoir with a reasonable error margin, thus acting as a “digital twin” of the physical system.

Simulators estimate the reservoir’s performance by solving the differential and algebraic equations derived from the integration of mass, momentum, and energy conservation together with thermodynamic equilibrium, which describe the multiphase fluid flow in porous media. By using numerical methods, typically finite volumes, these equations can be solved throughout the entire reservoir model for variables with space- and time-dependent characteristics, such as pressure, temperature, fluid saturation, etc., which are representative of the performance of a reservoir [3].

For this task, a static and a dynamic reservoir model must be first set up. A static reservoir model is a three-dimensional representation of a reservoir’s geological properties, such as the porosity, permeability, and rock type. It is created using geological, well, and seismic data together with a thorough interpretation, providing an approximate “snapshot” of the real reservoir at a specific time [4,5,6]. On the other hand, a dynamic reservoir model is a time-dependent simulation of fluid flow in the reservoir. It builds upon the static model by incorporating production history, fluid properties, and reservoir management strategies. The dynamic model is employed to predict reservoir behavior, optimize production, and assess various development scenarios, such as enhanced oil recovery techniques and the impact of drilling new wells.

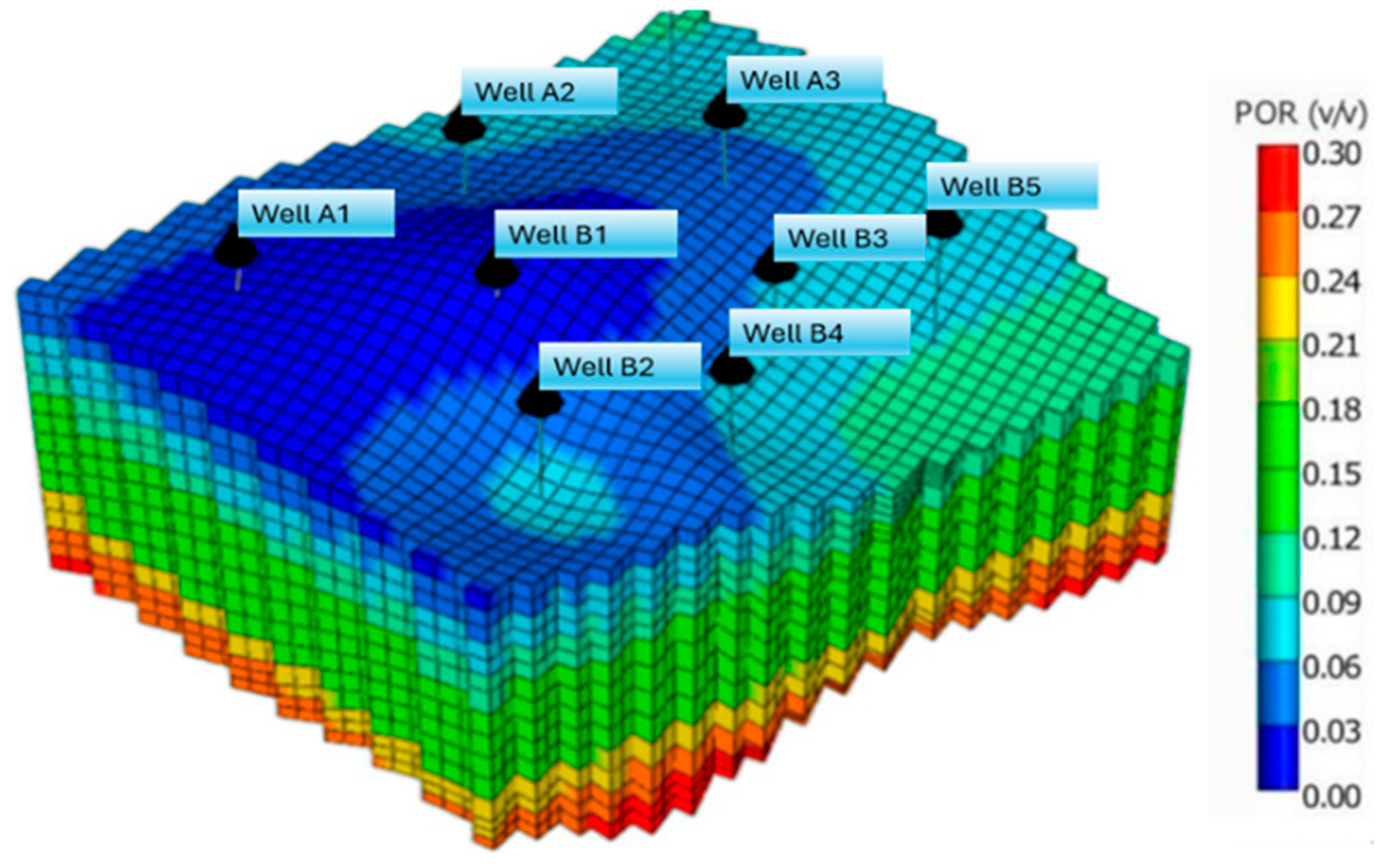

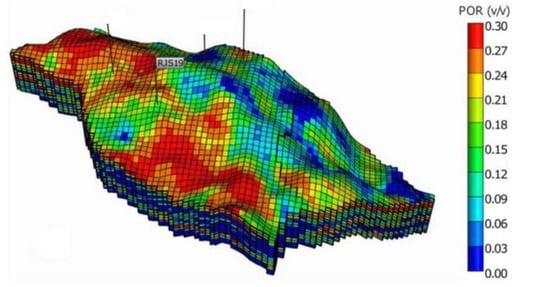

To integrate the static and dynamic reservoir models, the simulator divides the reservoir into many cells (grid blocks), or otherwise into a large number of space and time sections, where each cell is modeled individually (Figure 1). The simulation method assumes that each reservoir cell behaves like a tank with uniform pressure, temperature and composition of the individual phases for each specific time. During the fluid flow, each cell communicates with all neighboring cells to exchange mass and energy. Subsurface reservoir models can be highly complex, exhibiting high inhomogeneity, a vast variance of the petrophysical properties, such as porosity and permeability, and peculiar shapes capturing the structure and stratigraphy of the real reservoir.

Figure 1.

Reservoir model with millions of grid blocks [7].

Typically, reservoir simulations employ black oil or compositional fluid models to simulate the thermodynamic behavior of fluids. Black oil models are commonly used for simple phase behavior phenomena, providing a straightforward and reasonably accurate approach [8,9]; however, in cases of production forecasting and optimization applications, especially for complex phenomena, such as CO2 injection for Enhanced Oil Recovery (EOR), fully compositional simulations are necessary to monitor detailed changes in fluid composition [10]. Compositional reservoir simulations involve stability and flash calculations using an Equation of State (EoS) model to determine the number and composition of fluid phases in each grid block. These calculations are computationally demanding, require high-performance systems to be executed successfully and, therefore, consume a significant portion of the simulation’s CPU time, as both problems need to be solved repeatedly for each discretization block, at each iteration of the non-linear solver and for each time step [11,12].

Once the reservoir model has been set up, the most computationally expensive applications of simulation, History Matching (HM) and Production Forecast and Optimization (PFO) of future reservoir performance, can be performed. HM is the most important step preceding the reservoir performance optimization and is widely covered in Part I of the present review. When it comes to predicting and optimizing reservoir performance under various production scenarios, reservoir simulation plays a vital role. It is an essential tool for production management and techno-economic planning, as these activities heavily rely on accurate reservoir performance predictions. Forecasting entails making predictions about future production rates and the distribution of reservoir pressure and saturation, which rely on historical data, reservoir characteristics, and other pertinent factors. It helps operators make informed decisions regarding resource allocation, production planning, and investment strategies. Optimization, on the other hand, focuses on maximizing production efficiency and profitability while minimizing costs, downtime, and environmental impact. It involves analyzing production processes, well performance, and reservoir behavior to identify areas for improvement. PFO techniques may include wellbore management, enhanced oil recovery methods, production scheduling, and asset management strategies [13].

While the aforementioned PFO applications are central to reservoir engineering, they pose challenges in terms of their heavy computational cost. The iterative nature of the calculations involved makes the problem under investigation computationally demanding, becoming particularly cumbersome for extensive and detailed reservoir models, where the increasing number of grid blocks, the diverse reservoir parameter distribution, and the complex well operation schedules significantly prolong the calculations performed by traditional non-linear solvers [14]. Thus, to achieve accurate forecasts and effective optimization, oil and gas operators must devise efficient ways to deal with these types of problems, such as Machine Learning (ML) techniques, to make decisions, optimize production operations, and adapt to changing market conditions, ultimately maximizing the value of oil and gas assets.

The increasing volume of data has prompted extensive research across engineering disciplines to extract meaningful patterns and insights, since human cognition alone is often insufficient to process and comprehend this vast amount of information [15]. In recent years, data-driven ML techniques have gained significant traction and proven successful in supporting field development plans. These techniques enable the development of models that capture the essence of physical problems without the need to explicitly express fundamental laws mathematically. Typically, these models take the form of functions or differential equations that provide approximate and partially imprecise results, offering fast, robust, and cost-effective solutions [16,17,18].

ML offers an automated methodology for constructing numerical models that can learn and identify patterns from observed or synthetically derived data, reducing the need for extensive human intervention and facilitating the decision-making process. ML can help in analyzing vast and complex datasets, ranging from seismic data to production records. By uncovering complex patterns and relationships, it significantly refines reservoir characterization, providing a more comprehensive and accurate representation of subsurface conditions. This improved understanding minimizes uncertainties associated with reservoir properties, boundaries, and heterogeneity, thus elevating the quality and reliability of the model. Furthermore, ML’s predictive capabilities can be used to optimize production strategies. By analyzing real-time production data and considering the complex interplay of variables, algorithms are able to devise optimal operational plans. This results in maximizing recovery rates, minimizing operational costs, and optimizing production.

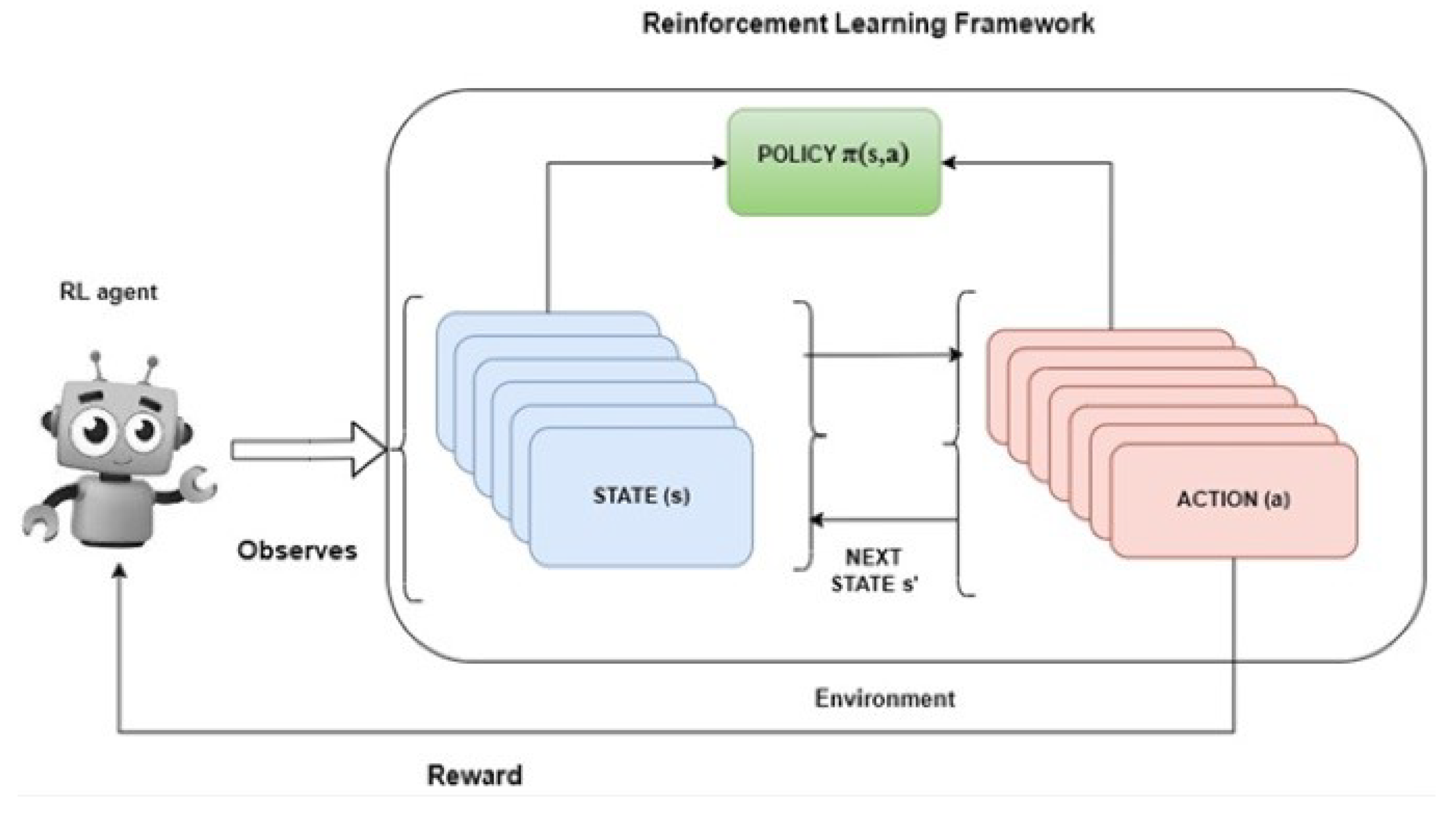

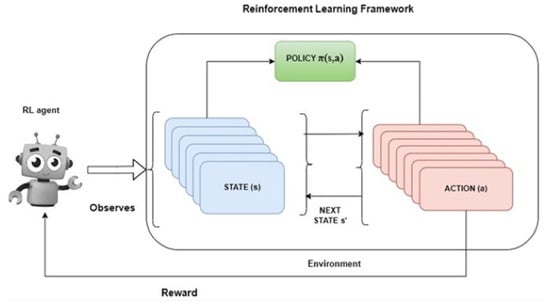

As presented in Part I of the present review, the most common types of ML are Supervised Learning (SL), Unsupervised Learning (UL), and Reinforcement Learning (RL). Furthermore, the development of an ML model consists of three primary steps. First, data is collected into a large dataset, as its quantity impacts the model’s accuracy, which is used for the model’s training process. Second, data preparation, including dimensionality reduction and handling outliers or missing data, is crucial for ensuring prediction precision. Finally, the model is trained using input variables and the desired output for supervised learning, which are assessed on the model’s ability to effectively predict and classify previously unseen data.

In the context of subsurface reservoir simulation, traditional simulators generate large data ensembles offline, for various conditions, to train ML models. Unlike most ML applications, the derived data comes from computational processes rather than experiments, resulting in noiseless information. Once trained, the ML model serves as a ‘’digital twin’’ of the reservoir, offering fast and accurate predictions about past, present, and future performance. This allows the model to address various problems and support decision making more efficiently [19,20].

This review discusses the approaches of ML-based reservoir simulations to provide a wide perspective on the state-of-the-art methods currently in use for PFO applications, as HM and individual reservoir simulation runs were extensively covered in Part I of the present review. More specifically, the modeling of subsurface reservoirs is covered extensively in Part I by incorporating a review of proxy models (SRMs) and ML models that focus on CPU time-intense sub-problems while maintaining the rigorous differential equation-solving method. The most pronounced application in this category is the handling of the phase equilibrium problem in its black oil or compositional form which needs to be handled numerous times during the reservoir simulation run.

In contrast to HM methods that follow a rather straightforward approach [1], ML-based techniques for PFO offer a broad spectrum of applications. These applications include optimizing the oil and gas cumulative or individual well production, predicting the water breakthrough time in water-flooding scenarios, forecasting CO2 injection-related sweeping and recovery, and identifying optimal locations for new wells to enhance the future production.

In this part of the present review paper (Part II), the ML methods for the subsurface reservoir simulation reviewed are categorized based on the context of the problem under investigation and the ultimate purpose of each reviewed method. Section 2 reviews ML methods that serve PFO applications and Section 3 concludes the present review.

2. Machine Learning Strategies for Production Forecast and Optimization Applications

A precise prediction of oil recoveries and determination of the optimal parameters that can maximize production or Net Present Value (NPV) is crucial to establishing techno-economic assessments for various production operations. However, obtaining precise predictions using standard reservoir simulation packages can be very cumbersome, since most recovery operations are governed by the high non-linearity of the flow equations and the complex spatial distribution of the reservoir parameters (e.g., porosity, permeability, saturation, etc.). Furthermore, even in cases where the underlying relationship between oil rate and reservoir parameters can be easily established, the limited availability of data, which is usually the case, especially for newly discovered reservoirs, imposes an increased uncertainty on the simulations. On the other hand, when the number of reservoir parameters is huge and much data are available, it is usually hard and impractical to reach an optimal solution, since the models can be of very high dimensionality. Consequently, building robust and precise PFO models using the available data is of major importance to reservoir engineers since, that way, they can gain better insights about the reservoir performance and thus, plan optimally for any reservoir management and field development strategies.

As Bao et al. [21] quote, “Many methods have been developed to estimate the oil production rate in an accurate and fast manner, such as decline curve (DCA) and well test analysis, numerical /analytical reservoir stimulation for both conventional and unconventional reservoirs, but the challenge lies in the noise and missing data and anomalies that could happen in the field”. While simple reservoir simulations, material balance, and a nodal analysis can be sufficient for small production systems, large complicated systems need a more advanced approach based on multiple simulation runs that, as already mentioned, can be extremely time-consuming [22]. All the above constraints have inspired engineers to consider new and novel alternatives that can mitigate the CPU time intensity of reservoir simulators to a great extent.

The ever-growing data volume in the petroleum industry has led to the widespread use of ML techniques, especially in PFO applications. Different ML methods are widely used to obtain insights into various operational and design processes (i.e., CO2 injection into the reservoir for EOR purposes or injection well spacing optimization to improve recovery, respectively) using data obtained from the field (gauges, flow meters, etc.), sampling, logging, or experimental procedures. These methods can be adapted to obtain hydrocarbon predictions and optimize many reservoir- and production-related parameters, thus leading to an effective production optimization in a fraction of the time that would otherwise be needed using traditional reservoir simulators.

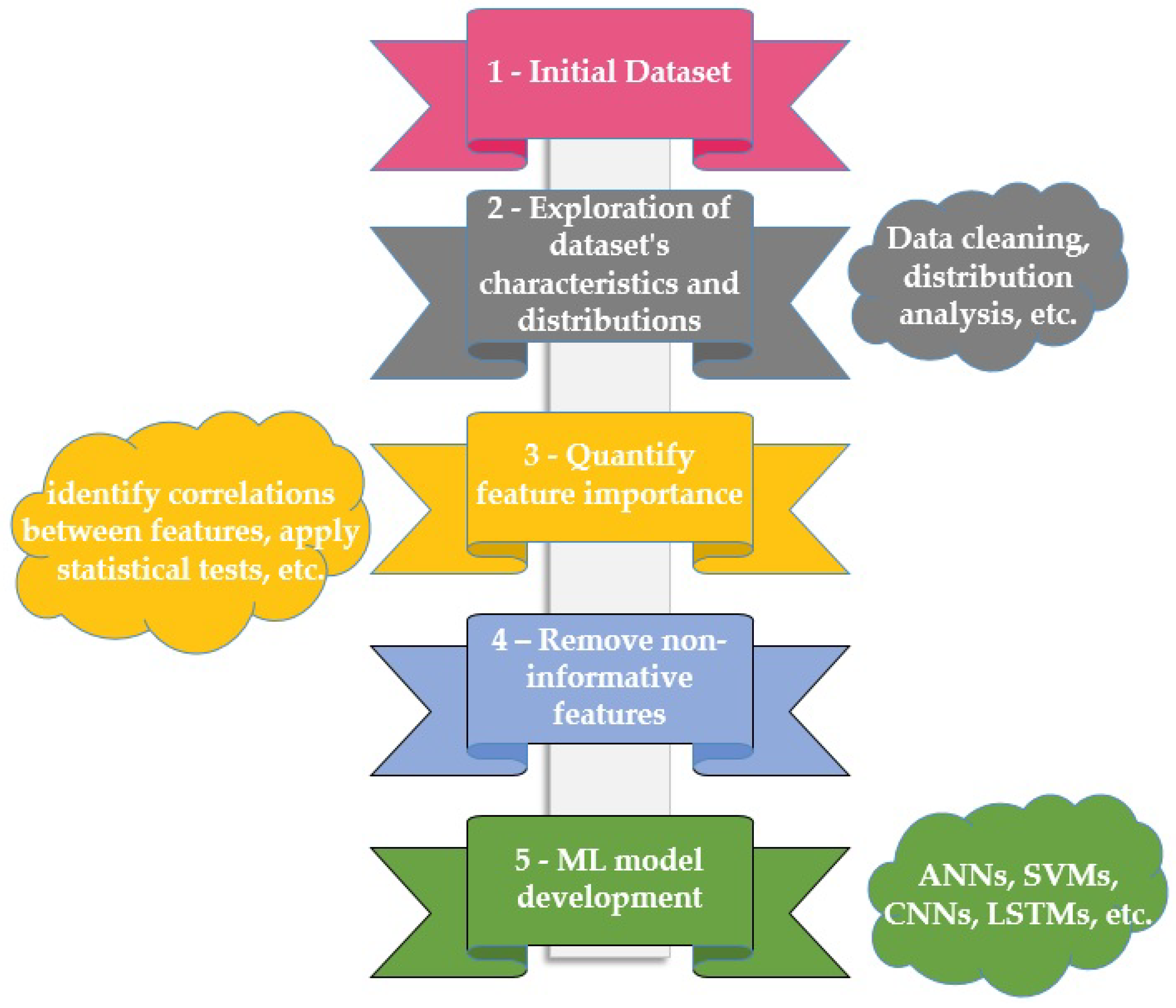

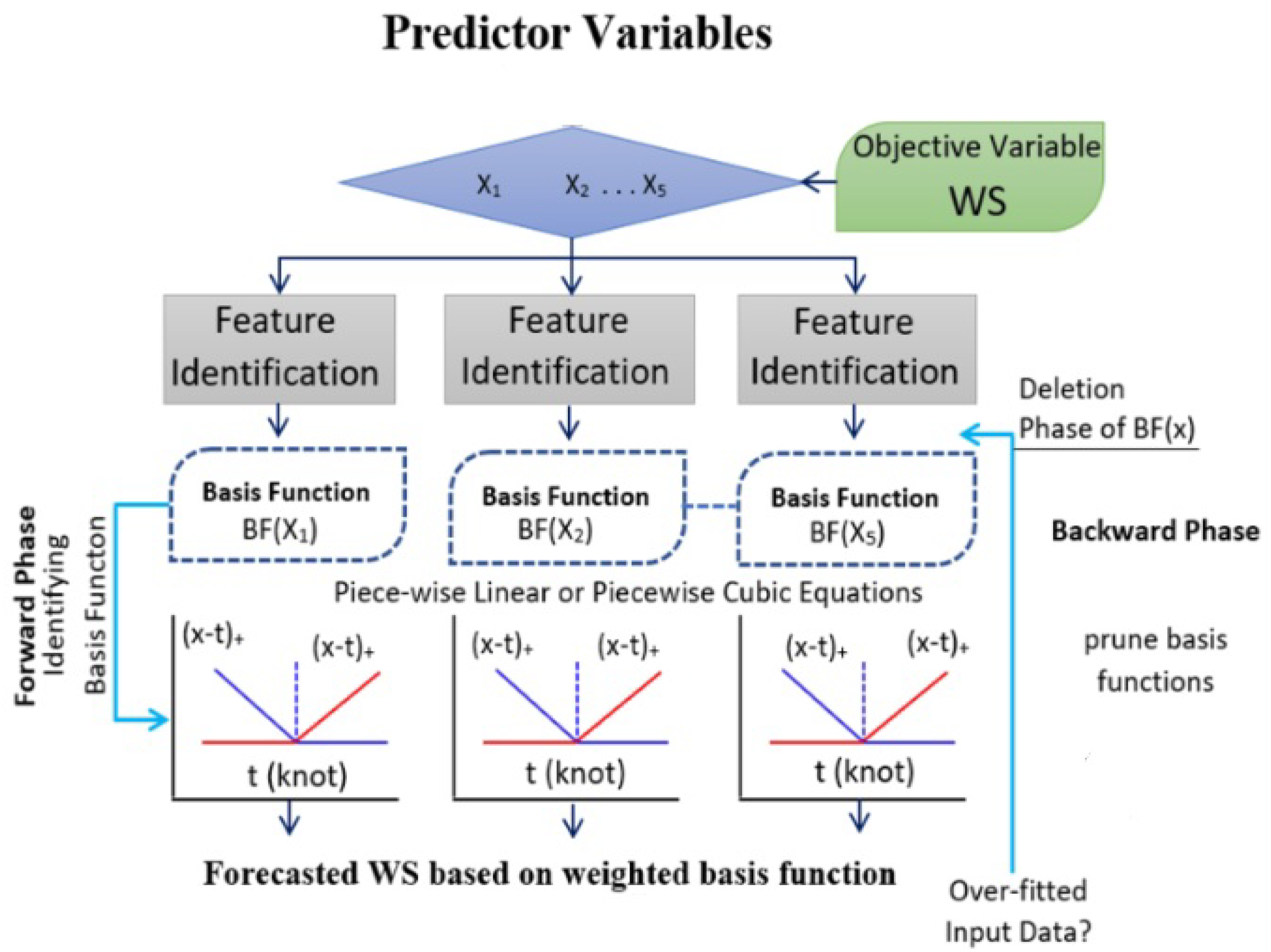

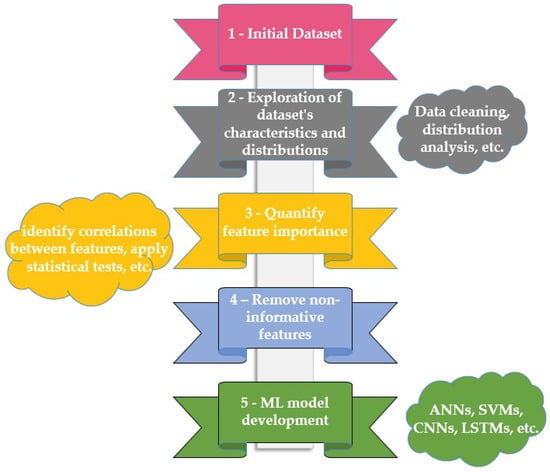

However, since data volumes have grown significantly and engineers need to deal with large amounts of data flows, some of them containing non-useful information, screening out those non-informative features is a crucial process in the construction of a reservoir model using ML, aimed at enhancing the model’s predictive accuracy and preventing the introduction of noise or overfitting (Figure 2). In the context of reservoir modeling, where accurate predictions are paramount for efficient resource management, this process involves a comprehensive evaluation of each feature’s contribution to the model’s performance. Starting with an exploration of the dataset’s characteristics and distributions, techniques such as feature importance analysis, correlation assessments, and domain knowledge are employed. By leveraging algorithms that quantify feature importance based on their impact on predictive accuracy, identifying correlations between features and the target variable, and applying statistical tests, the model developer can determine the relevance of each feature. Moreover, considering the inter-feature relationships, redundant or highly correlated attributes are identified and streamlined. Furthermore, the collaboration with domain experts can significantly aid in discerning irrelevant features that may not carry meaningful geological or geophysical significance. Through this systematic approach, non-informative features are identified, and dimensionality reduction techniques, such as regularization and dimensionality reduction algorithms, help prioritize informative attributes. This iterative process ensures that the reservoir model is built upon a foundation of relevant features, leading to enhanced precision, improved generalization, and a robust representation of the reservoir dynamics.

Figure 2.

Screening out non-informative dataset features.

It should further be noted that a great number of the methods discussed in this review are based on an existing and already history-matched reservoir model rather than directly on field data. In such cases, the PFO operator is already aware that that uncertainty has been compromised through history matching, and the reservoir simulator is supposed to act as the digital twin of the real reservoir. Therefore, the incorporation of irrelevant information to the ML models is automatically skipped as the data introduced to the machines is taken from the physics-driven reservoir simulator.

Usually, for the application of ML models for PFO, two kinds of problems are considered. The first class is the so-called forward problem, in which ML models use reservoir and design parameters (e.g., fixed Bottom Hole Pressures—BHPs or production rates, injection rates and/or gas stream composition for various EOR operations, well spacing, etc.) as inputs to predict the response of the system (e.g., future production rates or NPV). Those models act as direct prediction proxies for fluid production and pressure response forecasts. The second class is the inverse design problem, where ML models predict the necessary design parameters to obtain a desired system response (i.e., production rate) [23].

The ML methodologies reviewed in this section are divided into seven distinct categories, namely production optimization and production forecast concerning conventional reservoirs, production forecast for unconventional reservoirs, EOR/Sequestration projects, heavy oil reservoirs, gas condensate reservoirs, and a few applications on flow assurance problems. Each production forecast category is split into static/dynamic models, since each type is developed using a different ML technique and presents a different output (time-static and time-dependent values, respectively).

2.1. Production Optimization of Conventional Oil Reservoirs

2.1.1. Production Optimization Based on ANNs

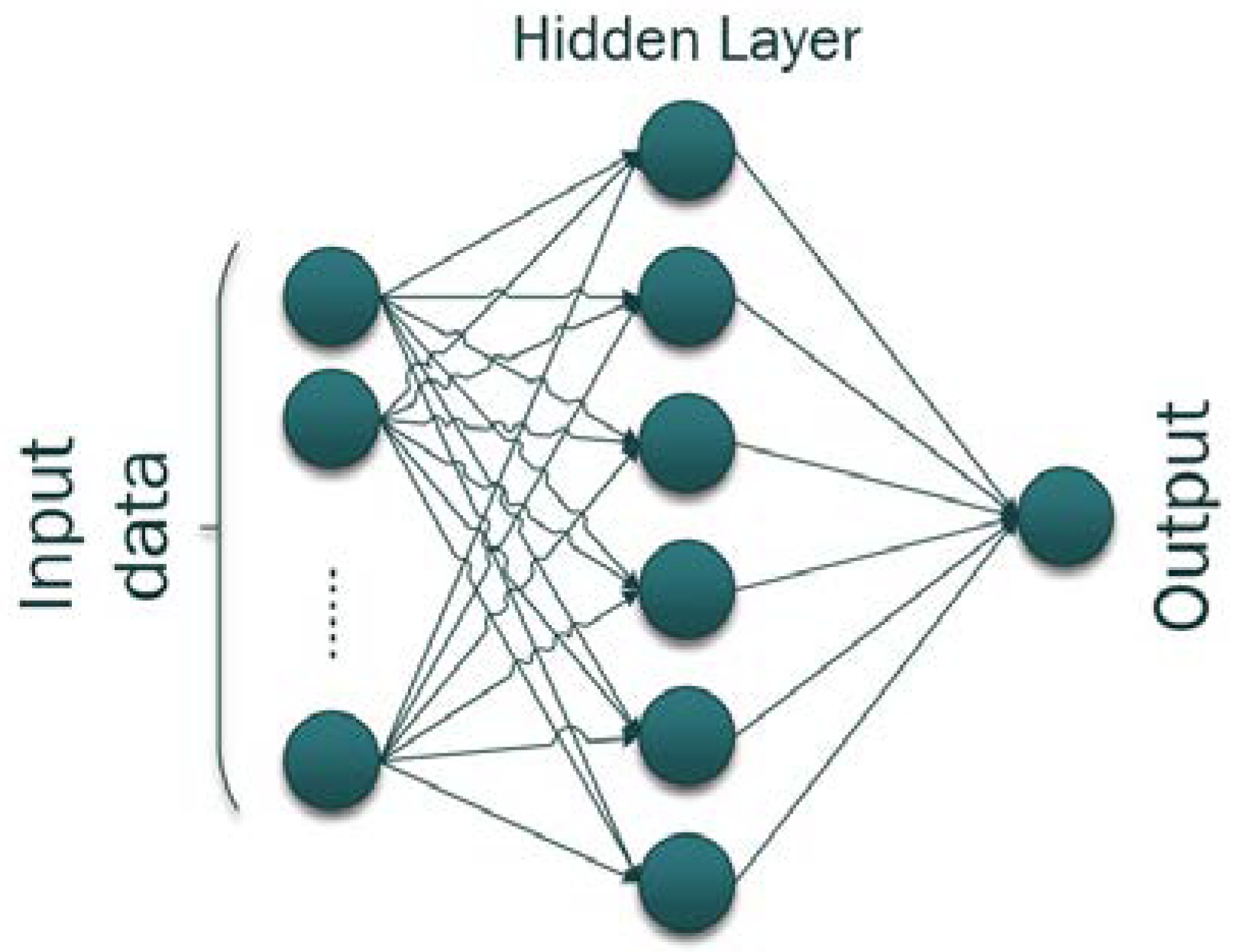

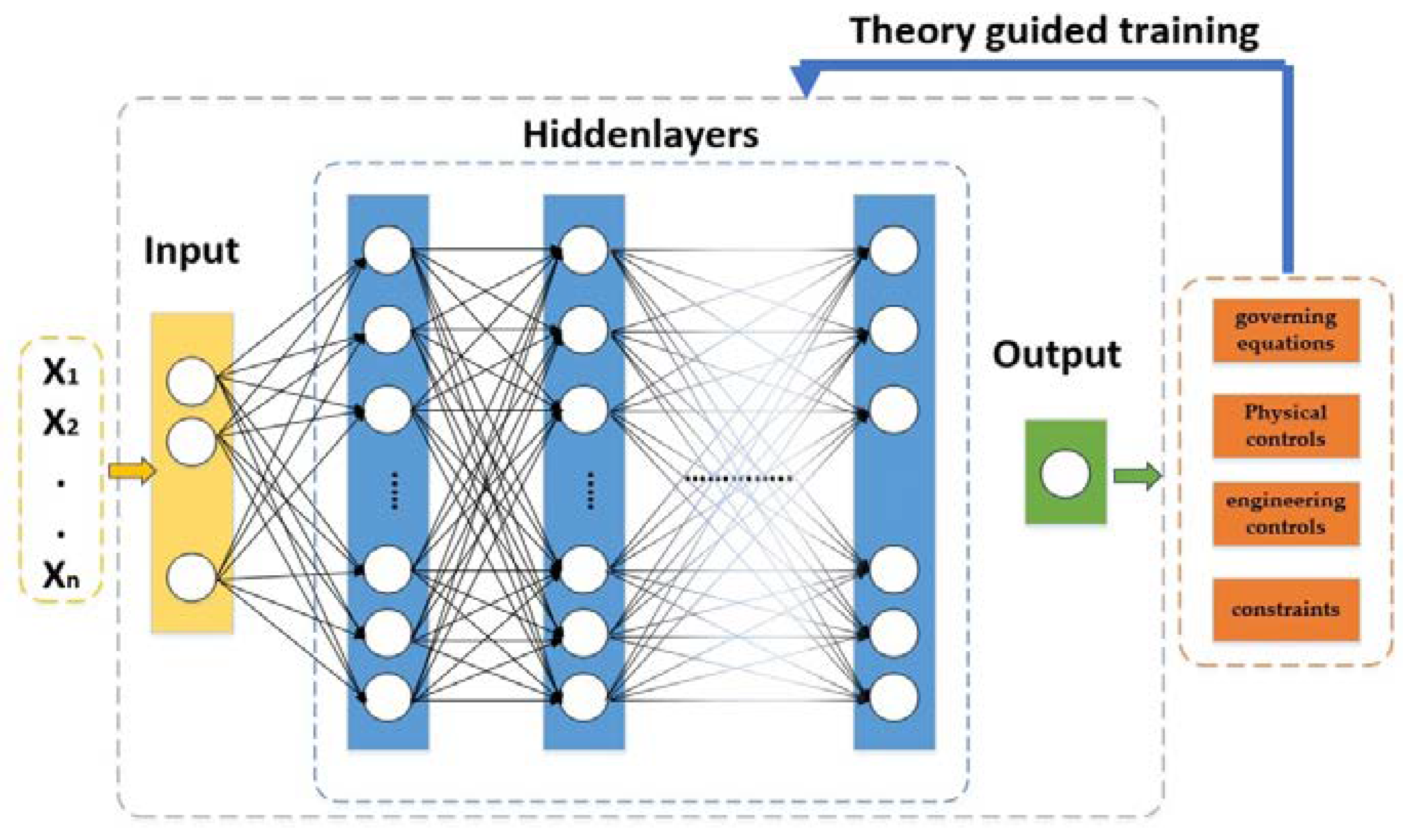

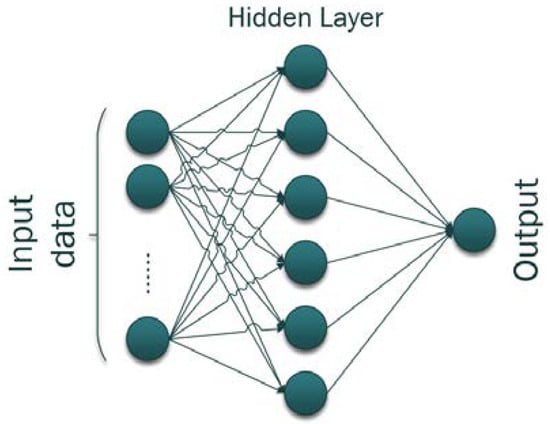

Starting with ML production optimization applications for conventional primary and secondary stage oil fields (i.e., non-complex reservoirs without an EOR project implementation), Koray et al. [24] developed simple Artificial Neural Network (ANN) models for the oil recovery optimization by exploiting different field development scenarios, namely normal depletion and water-flooding. ANNs are considered ideal candidates for such problems, as their architecture enables the quick capture of complex relationships within data. As depicted in Figure 3, they consist of interconnected nodes, or neurons, organized into layers that process input data and produce output predictions. This architecture excels in recognizing patterns, especially in cases where the relationships between input variables and desired outputs are nonlinear. For simple optimization tasks, ANNs can efficiently learn from historical production data and identify optimal combinations of operational parameters to achieve specific production goals. The authors used well locations, injection rates, production/injection BHPs, and water cuts of each development scenario as input to forecast the cumulative oil production for 15 years. By applying various test scenarios to the trained ANN, it was concluded that the water-flooding development strategy was the one that maximized oil production.

Figure 3.

Simple one-layered Artificial Neural Network.

Zangl et al. [25] and Andersen [26] developed a similar approach by coupling a Genetic Algorithm (GA) with ANNs to achieve production optimization while reducing the computational time required by only running a limited amount of simulations. More specifically, Zangl et al. [25] trained the ANN using information about the wellhead pressure and temperature, choke size, and BHP as input and oil production as output, as obtained from simulation realizations, whereas Andersen [26] used production and injection well rates as input and total oil production as output. That way, as flow rates are regulated, different cumulative production values can be gained. Once the models are trained and validated, their output is used as a very close approximation to the simulator’s output and, that way, the GA is then employed to perform the optimization.

Although there exist many mathematical algorithms and optimization tools that are usually employed to determine the optimal number and placement of production wells [27,28], Centilmen et al. [29] moved into the ML field and built an ANN, focusing on the well placement that achieves the optimum production. The authors used two input options, one stationary (well locations) and one input related to the problem under investigation (the distance between two wells, production time, etc.) to determine each well’s production (output). The model, after being trained and validated, was used to optimize the location for the new wells that must be drilled. Doraisamy et al. [30] further improved Centilmen et al.’s work by coupling soft and hard computing methodologies to efficiently determine the optimum well placement while exploiting the processing power of back-propagation and recurrent networks and the precise description of the related physics, respectively. To achieve that, the authors trained the ANN using available real data from the oil field and data generated numerically by a simulator (coordinates of the existing, training and new wells, distances between the new and the existing wells, etc.) to determine the oil rate of each well configuration. Min et al. [31] developed an ANN embodying the productivity potential to determine the optimum new well placement that maximizes the total production. The authors introduced the productivity potential to decrease the input dataset size, since it indirectly contains many reservoir parameters (e.g., porosity, permeability) that otherwise should have been introduced individually. Thus, the model’s input consisted of time-related parameters, the wells’ distances, and the productivity potential to produce the output that is the cumulative production.

Among all secondary production schemes, water-flooding is the most commonly used one, since it is a relatively easy-to-implement process with better economics than gas injection and can effectively assist oil production. Teixeira et al. [32] developed two ANN models of varying configurations to uncover the relation between total oil production (output) and control parameters (input) for the optimization of the production strategy of a reservoir under water-flooding. The authors used water injection rates, oil production rates, and BHPs as input to predict the optimum future oil production. The proposed models were shown to perform satisfactorily; however, the results exhibited a noisy behavior in both models that could disturb the optimization process.

All of the above ANN-based techniques exhibit high efficiency in optimizing production since the quality of the predicted solutions is comparable to the performance of the original reservoir simulator when utilized to exhaustively optimize the production. Furthermore, the use of those models leads to a great decrease in the total CPU time, proving that the developed approaches can help solve optimization problems and increase the economic benefits of an oil field.

2.1.2. Production Optimization Based on Other Methods Other Than ANNs

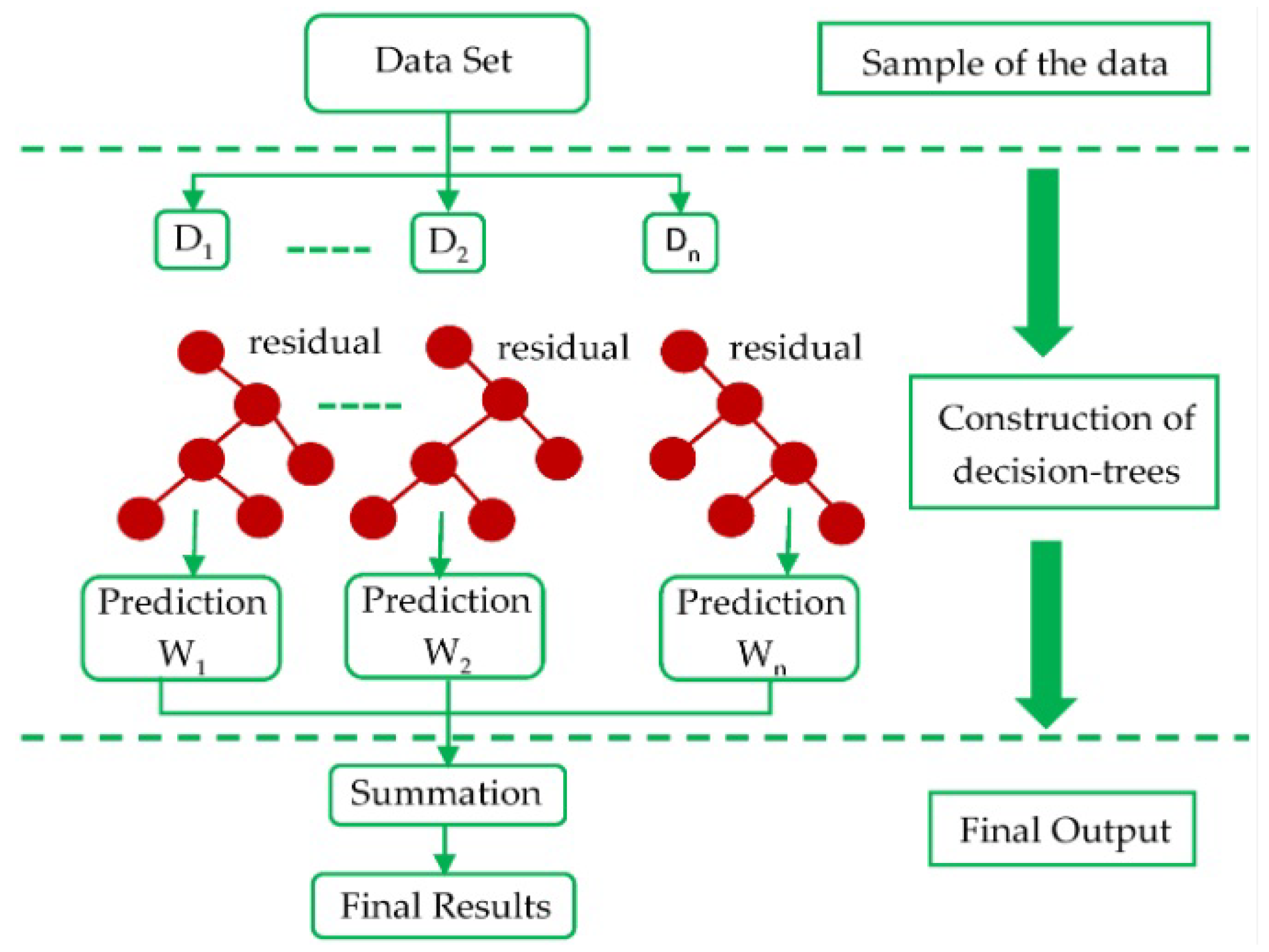

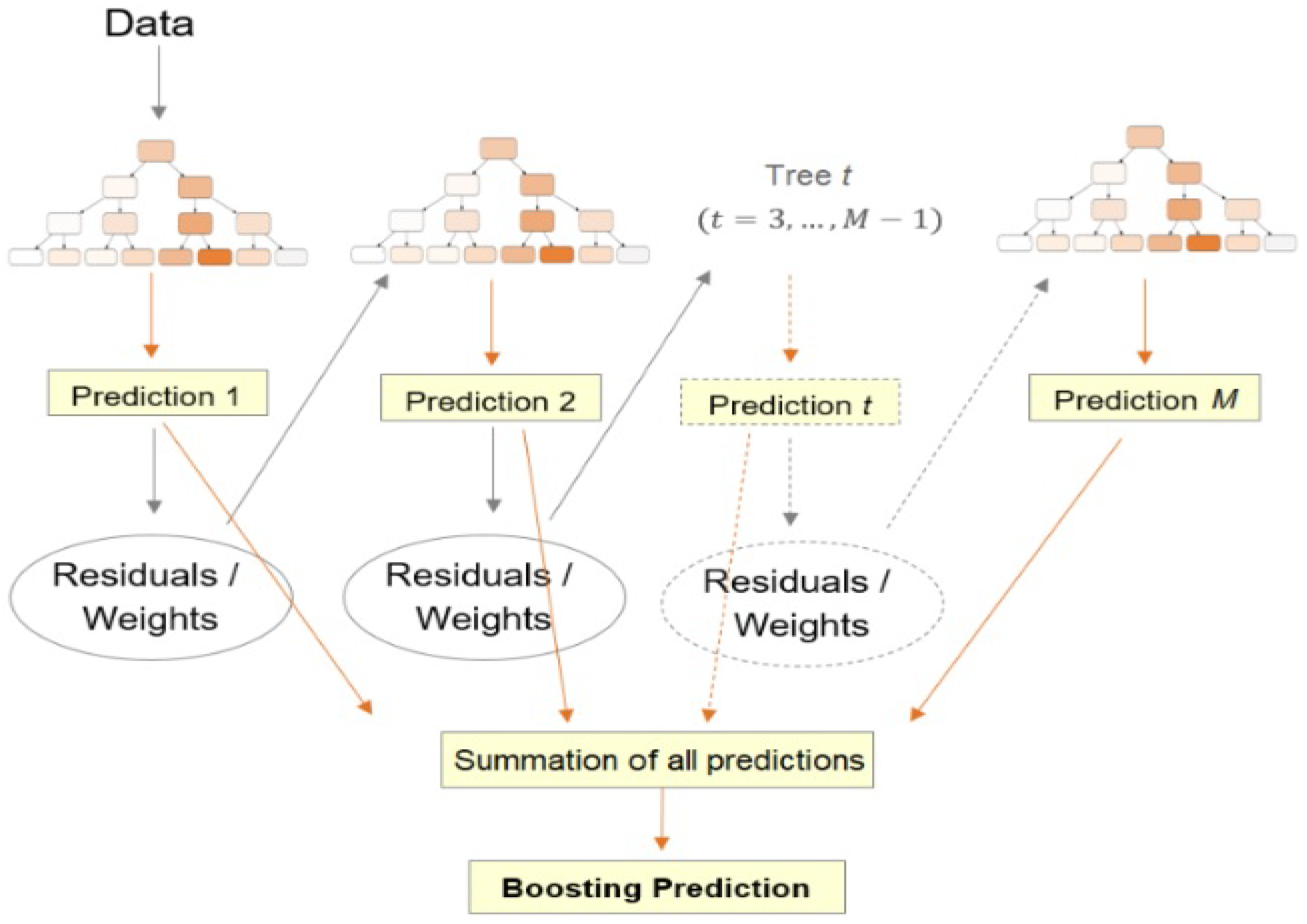

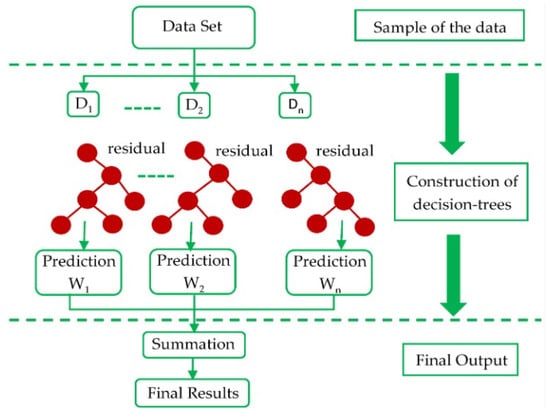

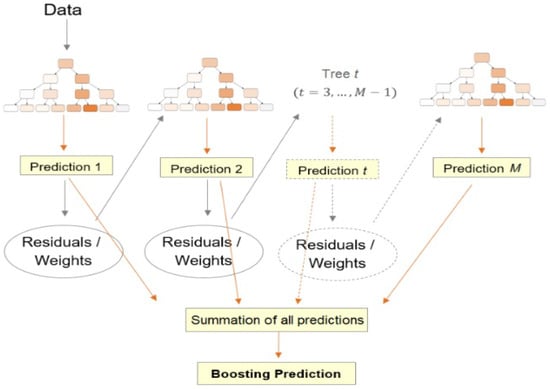

Besides ANNs, many alternative ML methods have also been developed to effectively assist production optimization, such as Extreme Gradient Boosting (XGB), which is an ensemble learning algorithm (designed for both classification and regression tasks) that consists of a collection of Decision Trees (DTs) and falls under the category of gradient boosting methods. XGB operates through an iterative procedure (Figure 4) that transforms a training dataset into a predictive model. It starts by initializing the prediction with a constant value, typically the mean of the target variable. Then, it constructs a DT using the training dataset, aiming to minimize the gradient of the loss function. This tree captures patterns in the data and predicts residuals—differences between the actual and predicted values. The predictions from this tree are then multiplied by a small learning rate, representing the step size towards the optimal prediction. The residuals are updated using the multiplied predictions. This process iterates, with each new tree learning from the residuals left by previous trees. Finally, the predictions from all the trees are combined by summing them up, resulting in the final prediction. This sequential aggregation of predictions and residuals forms the basis of XGB’s ensemble algorithm, which efficiently captures complex relationships in data while ensuring high predictive accuracy.

Figure 4.

Structure of XGB algorithm [33].

Chai et al. [34] developed such an ML model, along with an ANN as well as a simpler MultiVariate Regression (MVR) model for comparison reasons, to optimize injection and production rates (output) and, therefore, optimize the development strategy of the field. First, a conventional simulator was utilized to run several production schemes and to obtain a training dataset consisting of heterogeneous porosity and permeability fields and BHPs as input and injection and production rates as output, to train the selected ML models. The Karhunen–Lo’eve expansion method was utilized to reduce the parameter space and, thus, reduce the complexity of the model. The results showed that both the XGB and ANN presented quite high and invariable accuracy when compared to the MVR method, even for the case of relatively small datasets, although the ANN presented a superior performance when larger datasets were considered. After the models were trained and tested, the well controls (BHPs for both injection and production wells) were optimized using three search-based algorithms, Particle Swarm Optimization (PSO) [35], GA, and a combination of these two, called Genetical Swarm Optimization (GSO) [36]. The main idea was that these methods provide a quick and agile solution to the optimization problem and the model can provide fast results based on the given input parameters. The results showed that GA and GSO generated better results when compared to PSO.

Guo et al. [37] developed one of the first Least Square Support Vector Regression (LSSVR) models to identify the optimum well controls (i.e., well operating conditions) that maximize the NPV and, thus, optimize the field’s production. LSSVR is an extension of the Support Vector Machine (SVM) algorithm, tailored to regression scenarios, and uses support vectors that seek to find a regression function that can accurately predict target values based on input features. To handle nonlinear relationships, LSSVR employs kernel functions to implicitly transform data into a higher-dimensional space, allowing the algorithm to capture intricate patterns in the data. Guo et al. [37] run conventional reservoir simulations, the number of which is selected with the help of the Latin Hypercube (LH) sampling method in order to build the training dataset for the LSSVR model, which consists of various well operating conditions (water injection rate for injectors; BHPs for producers) as input and NPV values as output. After the dataset is generated, the forward LSSVR model is used for optimization in conjunction with a steepest-ascent algorithm. The results were compared with the ones of the Stochastic Simplex Approximate Gradient (StoSAG) optimization algorithm [38] and conventional simulations, showing that the total computational cost is substantially reduced and, most importantly, the model’s performance presents very similar results to the CPU time-expensive StoSAG algorithm and the simulator.

Al-Lawati et al. [39] developed an unsupervised ML method to optimize gas production by determining the most favorable production parameter setup. The unsupervised model was developed to identify well clusters with comparable production profiles, which are then utilized to determine the actions that must be taken (drilling, choke size, etc.) to obtain optimal production. The model was trained using the daily gas production, drilling and completion data, and other well control data (e.g., choke size). One of the advantages of the proposed procedure is that the model can be adapted accordingly to be used as an optimization approach for many applications, such as budget handling or emissions control. Shirangi [40] developed unsupervised fast NPV models using ANNs or Support Vector Regression (SVR) and trained with well controls as input to predict NPV values, which are then optimized using a pattern search algorithm. Before the models’ development, the authors performed a kernel clustering technique to select the minimum set of conventional simulation runs required to best represent the uncertainty of the whole set. Since the quality of an ML model depends highly on the quality of the training dataset, once the models were trained, the authors added new training data points close to the optimum value to train a new model. The procedure (getting improved optimum and enhancing the training population) is repeated until the desired error margin is reached. Both models provided significant computational time reduction and accurate results.

Finally, Gu et al. [41] developed an XGB model to predict the water cut (output) of producing water-flooded wells, trained with dynamic production data (e.g., water injection, delivery capacity, liquid production, etc.) as input. The input was preprocessed using the Spearman rank correlation analysis [42], which determines the correlation between two parameters, and, in the present case, the correlation between water cut and dynamic attributes. After the model is trained and tested, a differential evolution algorithm is utilized for the optimization of the injection-production strategy by minimizing the obtained water cut. The results showed a significantly improved water-flooding process.

2.2. Production Forecasting of Conventional Reservoirs

Forecasting oil production and production economic-related parameters (e.g., NPV) equips oil and gas operators with a rigorous plan to reduce risks that might alternatively affect operational and financial decisions. The task of conducting trustworthy production predictions can be burdensome and extremely time-consuming [43], especially when numerical simulators demand a thorough reservoir static and dynamic description. Reservoir simulation demands certainty related to input data, validated through HM, which is a time-consuming approach that does not always guarantee uncertainty reduction. Furthermore, all readily available empirical correlations that calculate oil production volumes are developed using field data and, thus, they cannot be adapted and used to every reservoir around the world due to their varying complexity and heterogeneity [44].

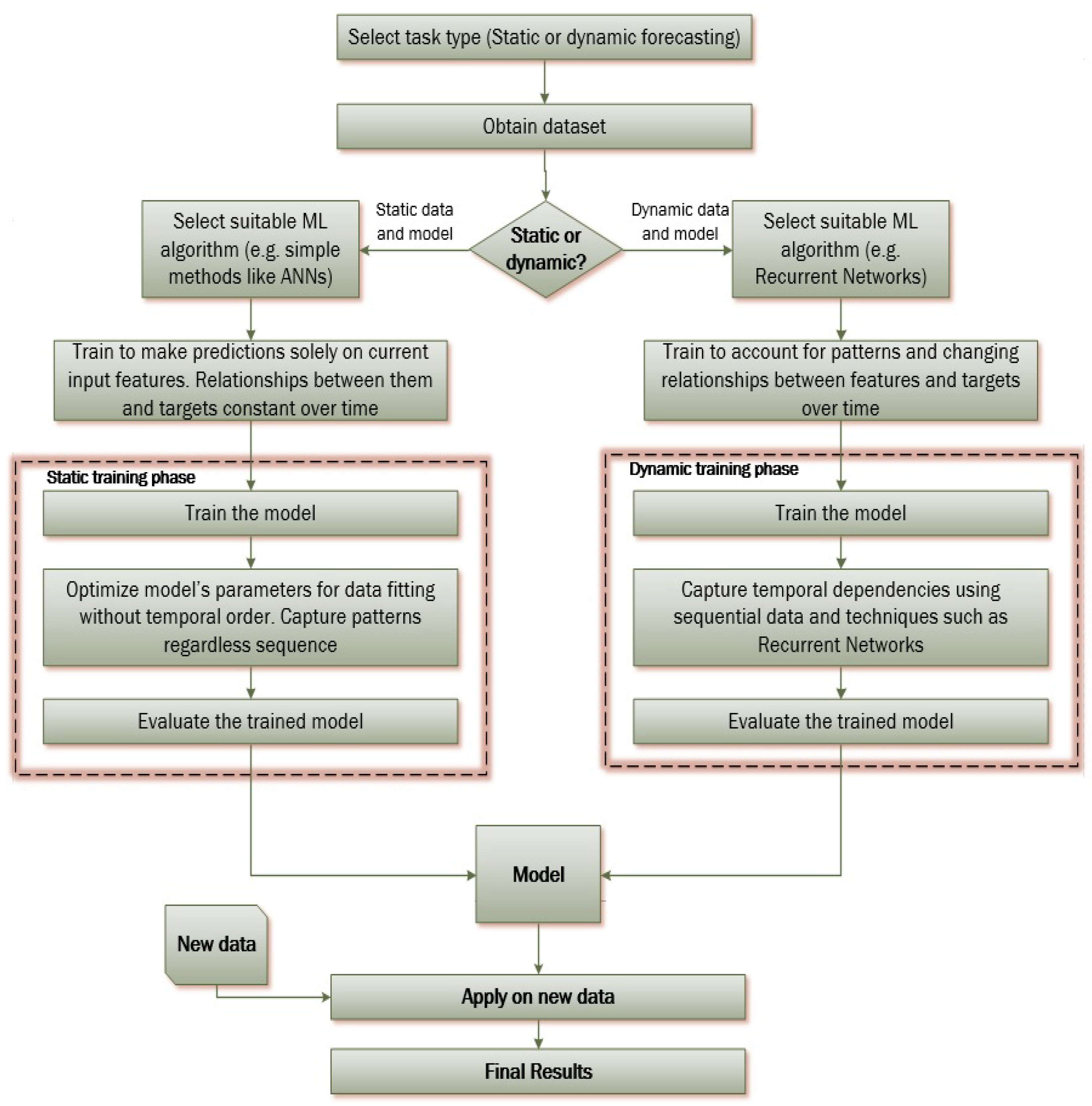

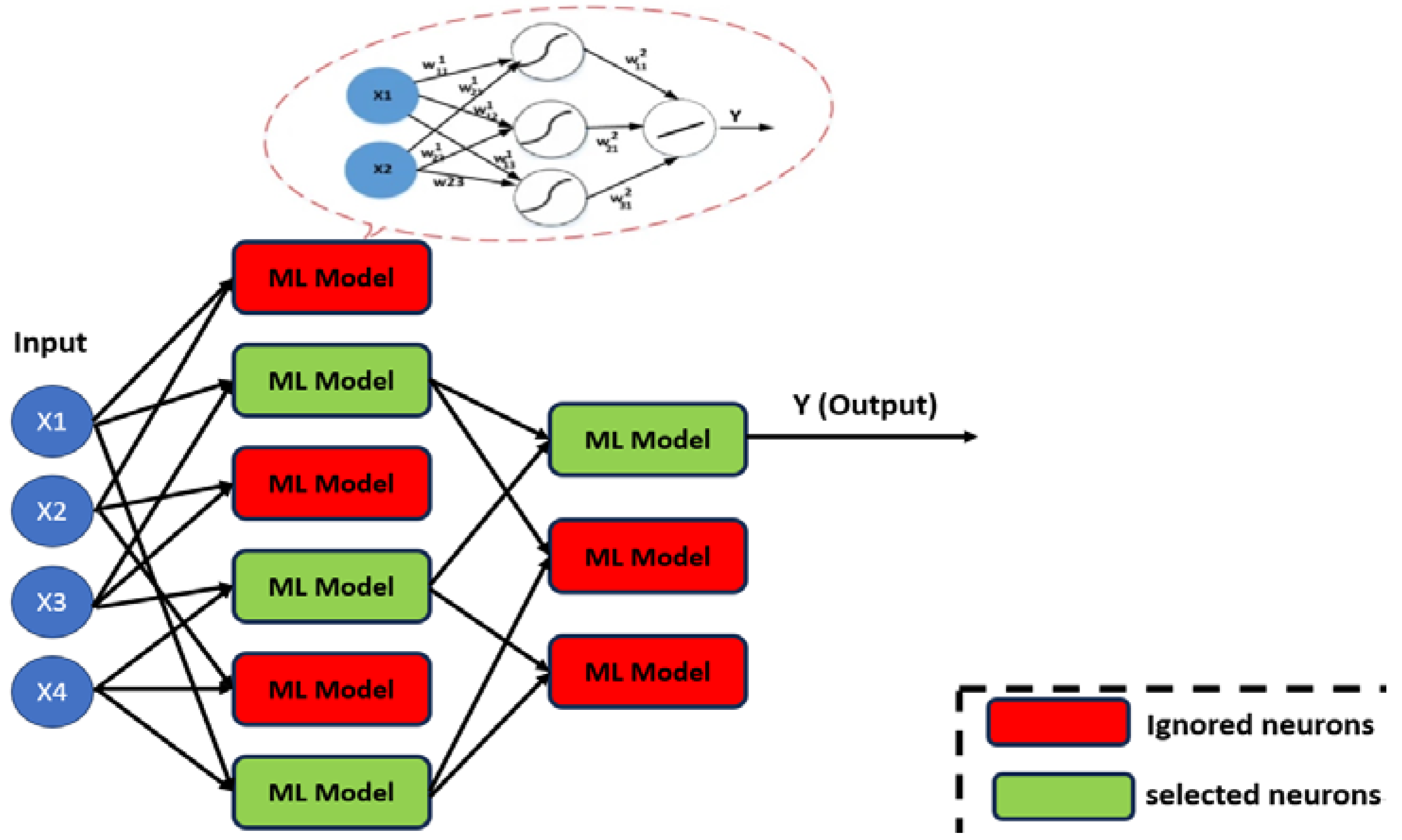

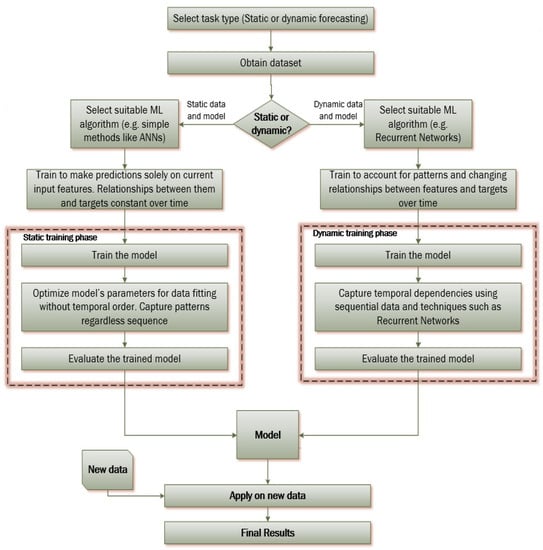

ML methods, mostly ANNs, are widely utilized for production prediction purposes since they can provide fast and robust models that tend to overcome the drawbacks of conventional numerical simulators. Production prediction ML-based techniques can be roughly divided into two groups (Figure 5). The first is based on building ML models that predict reservoir properties and behavior based on the current state of the reservoir without considering temporal changes. It provides a snapshot of the reservoir at a specific moment, which is useful for analyzing static scenarios or short-term predictions. The training procedure for a static model involves using a dataset that captures various reservoir parameters at a particular time. The model learns the relationships between these parameters and the target variable, such as production rates or pressure, without accounting for historical data or temporal trends. The trained model can then predict reservoir outcomes based solely on the current input parameters.

Figure 5.

Static and dynamic model training procedure.

The second group, on the other hand, accounts for the temporal evolution of reservoir behavior. It considers how reservoir properties change over time and how different stages influence each other. Dynamic models use time series data to capture historical patterns, making them suitable for long-term predictions and understanding reservoir dynamics. The training procedure for a dynamic model involves creating a dataset that incorporates time sequences of various reservoir parameters and corresponding outcomes. Techniques such as Recurrent Neural Networks (RNNs) or Time Series models are commonly used to handle the temporal dependencies. These models learn to predict future reservoir behavior by considering historical data points and their sequential relationships and are very efficient in predicting future time series values governed by nonlinear dynamics without the need to include the effects of any reservoir physical process, hence requiring significantly fewer input data [45].

Although both groups aim at predicting time-related data, the terms “static” and “dynamic models” are used respectively to distinguish between those predicting distinct characteristic values or time series.

2.2.1. Static Machine Learning Models

For the first category, Gharbi et al. [46] were among the first to develop an ANN to forecast the oil recovery at breakthrough for an immiscible water displacement process. The authors trained a simple ANN model using five dimensionless scaling parameters as input (mobility ratio, capillary to viscous forces ratio, gravitational to viscous forces ratio, length–thickness aspect ratio, and dip angle number) and oil recovery as output. Their work can be thought of as a generalization of the Buckley–Leverett theory using ML. Weiss et al. [47] developed an ANN model to forecast oil production for a water-flooding operation, with the help of the Fuzzy ranking method [48] that is utilized to determine the ANN input parameters (unitized area, permeability, water–oil ratio, porosity, initial BHP, and Gas to Oil Ratio—GOR). Cao et al. [49] also developed an ANN model using production-related data, to forecast the future production of existing wells, as well as of new wells by taking advantage of the production history of neighboring wells sharing similar characteristics, geological maps, pressure data, and operational constraints. In Fan et al. [50], ANN models were successfully built to forecast production using static reservoir parameters (porosity and thickness), water saturation, and well drainage areas. Simple ANN models were also developed by Elmabrouk et al. [51] and Sun et al. [52] to provide oil production forecasts, using real historical production data. The results of the above simple ANN-based models showed that they could accurately predict future oil production since they presented comparable results to conventional simulators in a fraction of the time that it would otherwise be required.

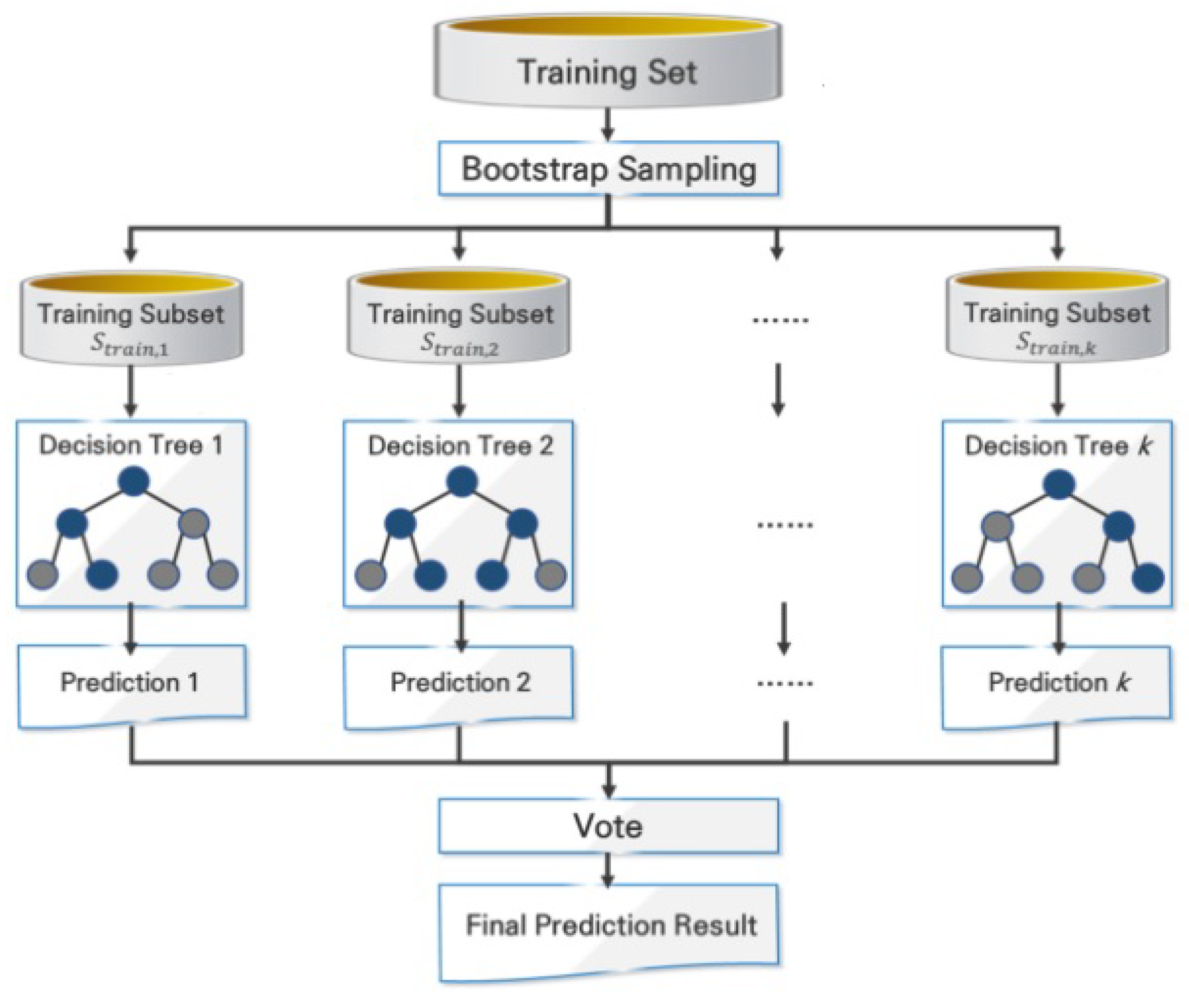

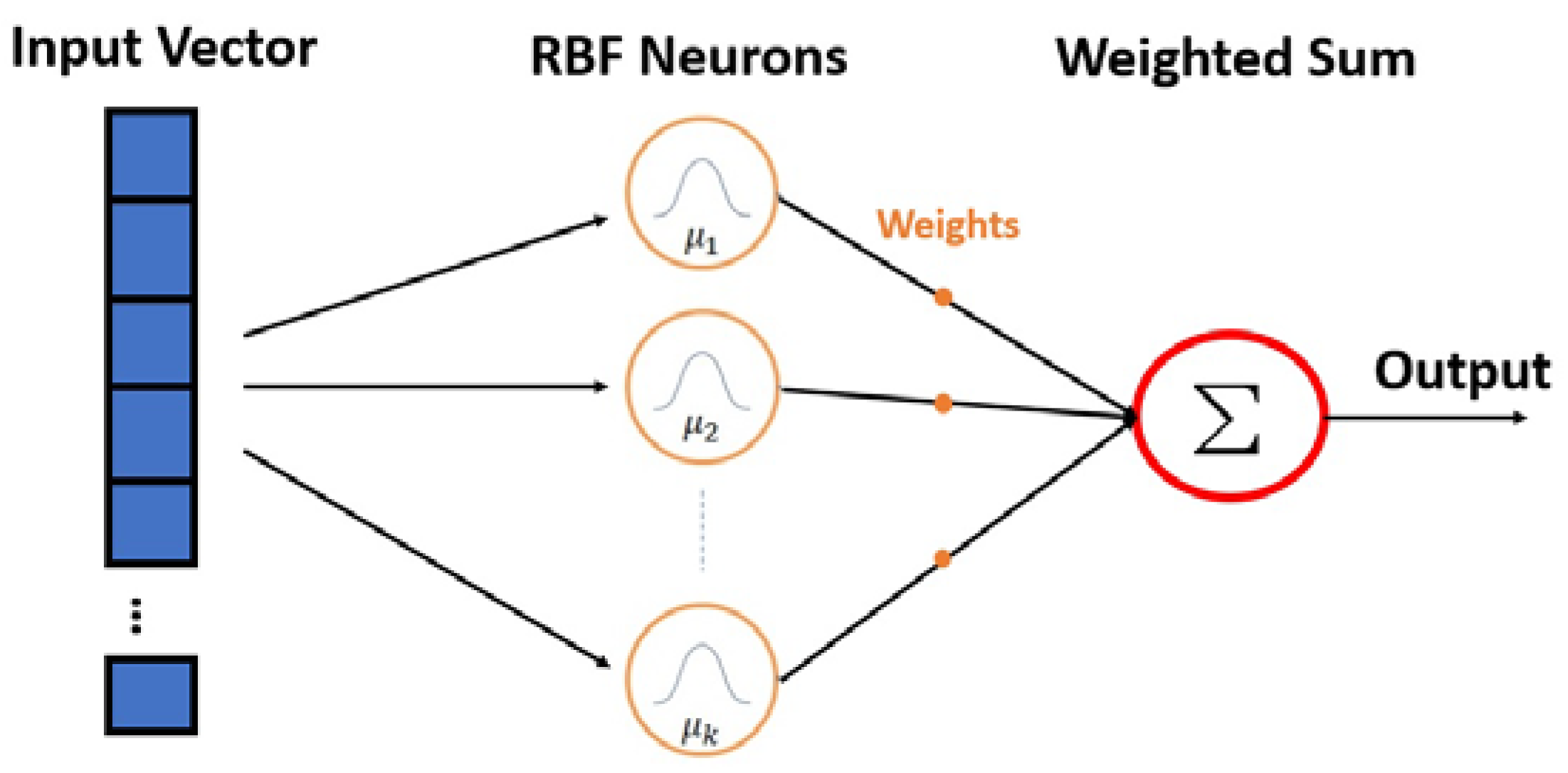

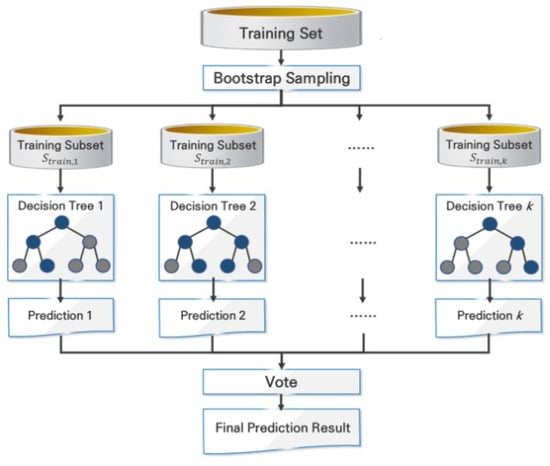

Apart from ANNs, many other methods can be used for production prediction purposes, such as Random Forests (RFs) and Gradient Boosting Regressors (GBRs). RF is a highly effective ensemble ML method used for both classification and regression tasks. It functions by combining the predictions of multiple individual DTs to achieve higher accuracy and generalization, as can be observed in Figure 6. Each tree is trained on a different subset of the data, introducing variability and reducing the risk of overfitting. During training, subsets of the data are randomly selected with replacement through a process called bootstrapping. Additionally, a random subset of features is chosen for each tree to enhance diversity among them. Each DT is trained to predict the target variable based on its associated input features. During prediction, each tree generates its output, and the final prediction is determined through a majority vote (for classification) or an average (for regression).

Figure 6.

Random Forest architecture [53].

RFs and GBRs are both ensemble ML algorithms used for regression tasks, sharing the goal of improving predictive accuracy by combining multiple learners. However, they differ in their approach and characteristics. RFs aggregate predictions from individual decision trees in parallel, using bootstrapped subsets of data and random feature sampling to enhance diversity. GBRs, on the other hand, build an ensemble sequentially by focusing on minimizing residuals of previous learners, allowing each new learner to correct the weaknesses of the previous ones. This sequential nature may lead to slower training but often results in higher accuracy [54]. Ultimately, the choice between them depends on the problem’s complexity, available resources, and the trade-off between training time and predictive performance.

Chahar et al. [44] developed such models (RFs and GBRs), as well as an ANN model, to forecast oil production using production data, with the ANN and RF models presenting the best performance. Furthermore, the authors claim that those models can be trained with any dataset to assist production predictions. Martyushev et al. [55] tried to assess the RF method and evaluate whether it can effectively predict reservoir pressures for an oil field development when compared with statistical models, using the dynamics of indicators describing the wells’ operation and values calculated using hydrodynamic studies. Their results showed that the RF method presents a severely improved performance in comparison with a linear regression method, as far as the prediction accuracy is concerned. Han et al. [56] developed an SVM model coupled with a PSO algorithm to make predictions about the oil recovery factor using static and dynamic data, as well as fluid properties and well spacing density data, for a reservoir with low permeability. The coupled SVM-PSO model exhibited improved accuracy, compared to a classic back-propagation ANN-PSO model.

Focusing on the parameters’ dimensionality reduction for more complex reservoir models, several methods have been proposed, such as that by Zhu et al. [57], who were the first to develop an encoder–decoder Convolutional Neural Network (CNN); the methods are extensively described in Part I of the present review, and include an image-to-image regression problem to predict single-phase flow velocity and pressure fields using permeability maps (images) as input. Thus, the encoder–decoder model is built in a way so as to efficiently apprehend the complicated high-dimensional input field without employing any other dimensionality reduction techniques. This way, the encoder extracts multi-dimensional features from the input, which are then utilized by the decoder to reconstruct the output fields. Furthermore, the authors proposed a Bayesian approach to the encoder–decoder CNN model, since Bayesian networks can determine uncertainty estimates for the predictions, especially when small training datasets are used. Subsequently, Stein’s variational gradient descent algorithm [58] is used to approximate the Bayesian inference on many uncertain variables. This is a nonparametric variational inference algorithm that combines the benefits of variational inference, Monte Carlo, quasi-Monte Carlo, and gradient-based optimization methods, accomplishing great accuracy performance and uncertainty quantification, as compared with other Bayesian methods, even for small training sets. The proposed model’s efficiency was shown to be very good, implying that Bayesian models can be robust proxies for modeling and uncertainty quantification in high-dimensional problems, as well as in problems with small training datasets.

Cornelio et al. [59] suggested using encoder–decoder CNN networks to pass on common features in a dataset related to a mature unconventional field to allow a production forecast in a new unconventional one that lacks important data that are necessary for production predictions or any other field development calculations. The authors used simulation data from two fields, the Bakken (mature with many available data) and the Eagle Ford Shale (new with fewer available historical data). The former known field was used to produce the training dataset (formation and completion parameter range as input and corresponding production as output) for the model to allow the learning transfer to occur for the production forecast of the latter unknown field when new data are inserted. The primary goal of the study was to utilize the model’s learning mechanism, developed from the known field, to find, extrapolate, and share all important features to create production predictive models for the unknown one. The results showed that when the model was trained with a relatively large dataset from the known field, it could make trustworthy forecasts for wells found in that field; however, the same model could not generate good results for a well in the unknown field. Nevertheless, when the extrapolated features of the trained model were combined with a trained model of the unknown field, the results showed that the prediction efficiency presented a considerable improvement.

Illarionov E. et al. [60] presented a unified approach to reservoir simulation and HM by using a single encoder–decoder ANN with commonly used gradient-based optimization methods for both processes. The authors used the geological parameters of a 3D reservoir model as input to the model and production rate predictions as output. The encoder is utilized to transform the reservoir dynamics into a latent vector space (compressed representation of only significant features), and the decoder is used to reconstruct them into a reservoir grid. Zhang et al. [61] developed a similar study, without the HM process, with a dense encoder–decoder network using permeability and relative permeability data as inputs to make predictions about field pressures, well rates, and fluid saturation during a water-flooding process. Tang et al. [62] developed a Deep Learning (DL) Recurrent Neural Network (RNN) CNN model for prediction and HM purposes, respectively, in channelized geological models. The prediction model’s training was performed using pressure and saturation maps as input and production predictions as output, obtained by the simulation results of fluid flow in a 2D channelized system. After training and testing, the RNN’s results were utilized to match the complex channelized reservoir system using a CNN with the Principal Component Analysis (PCA) parameterization method.

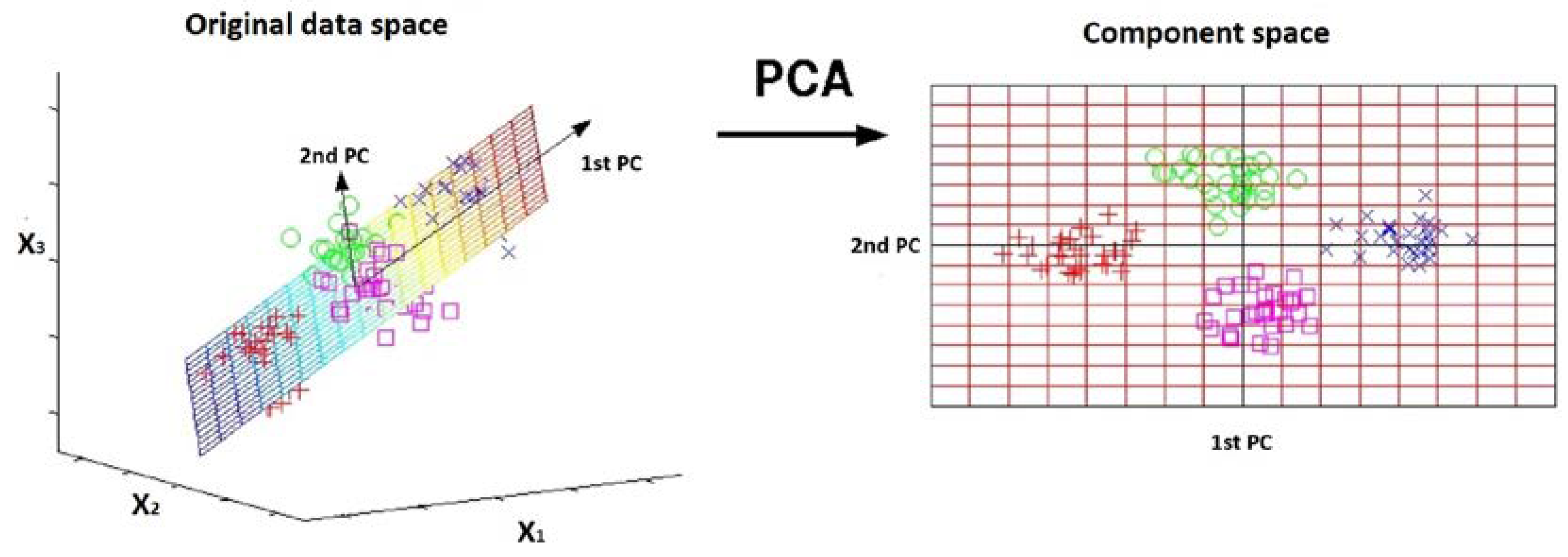

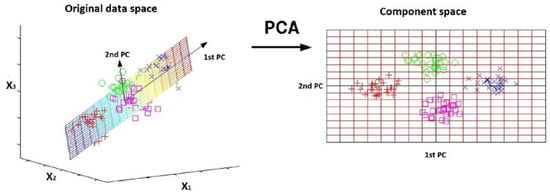

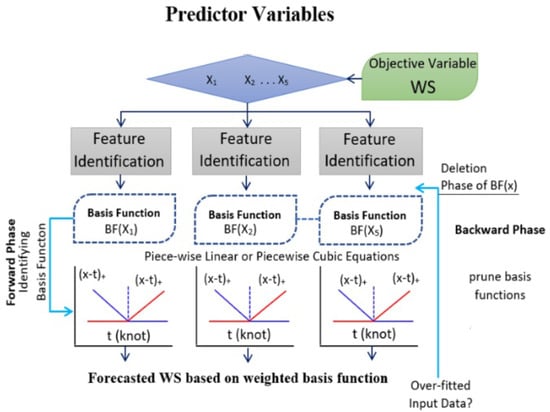

PCA can be a valuable parameterization method in the context of ML for subsurface reservoir simulations, where datasets can be high-dimensional and complex. One of its benefits is its ability to reduce the dimensionality of the input data while retaining much of its important variability. By transforming the original features into a new set of uncorrelated variables (Figure 7), or principal components, PCA can significantly simplify the dataset. This dimensionality reduction not only helps alleviate dimensionality-related issues leading to improved computational efficiency, but it also aids in visualizing and interpreting the data. Furthermore, PCA’s capability to identify the most informative features allows for more focused modeling and potentially enhanced generalization performance.

Figure 7.

Principal component analysis.

All of the above ML models coupled with dimensionality reduction techniques present very accurate prediction results, while also maintaining a smaller computational cost when compared to the conventional simulators, or even to simple ML models that take into account a fully dimensional database. It must be noted that the biggest contribution of these techniques is towards complex reservoir systems where the number of parameters can be extremely high, while also presenting large distribution variations from one field location to the other (e.g., porosity and permeability). In those cases, the dimensionality reduction can significantly reduce the time that would otherwise be required since the prediction calculations are executed much faster.

Considering a more sophisticated approach, Zhong et al. [63] developed an ML model using a conditional convolutional Generative Adversarial Network (GAN), which is also described extensively in Part I of the present review that predicts the water saturation of a water-flooded heterogeneous reservoir to obtain the reservoir fluid production rate from material balance calculations. The authors used the permeability distribution information as input and the water saturation as output. The main advantage of the proposed methodology is that it can be used to estimate water and oil saturation distributions concurrently, enabling fast calculations of water and oil production rates efficiently.

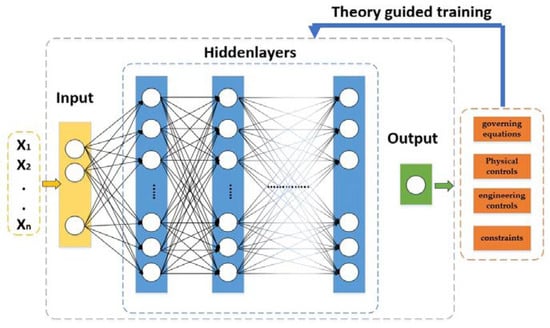

Wang et al. [64] developed a not-so-used DL network, the Theory-guided Neural Network (TgNN) to forecast future reservoir responses. This is a supervised ML method since the network is trained using simulation data and, at the same time, it is directed by theory (e.g., governing equations, physical or engineering controls and constraints, sensors, etc.) related to the problem under investigation (Figure 8). Engineering controls and constraints are included since they are vital for a more precise prediction of the system’s response, which may not be sufficiently described only by physical laws corresponding to the problem under investigation. The main benefit of TgNNs is that they can make predictions with higher precision, compared to the classic DL ANNs, since they can generate more physically reasonable predictions and can generalize to problems beyond the ones covered by the existing training dataset. The authors tested the trained model with more complex scenarios (e.g., using data with noise or with outliers, using more sparse data, various engineering controls, etc.). One interesting scenario was one where monitors or sensors were set out of order, a case where unreasonable data may emerge. They performed this scenario by considering those data as outliers in three different cases, 5%, 7%, and 10%, for two different prediction steps, 30 and 50, respectively. As outliers increased, the model’s precision became worse, particularly in the smaller prediction step. Nonetheless, as the simulation proceeded, the impact of outliers was decreased by the integrated sensor knowledge in the model. Overall, the results demonstrated that the proposed model has superior predictability, as it can forecast future responses more easily, and is more generalized than DL models since it contains the theory aspects.

Figure 8.

Theory-guided Neural Network architecture.

2.2.2. Dynamic Machine Learning Models

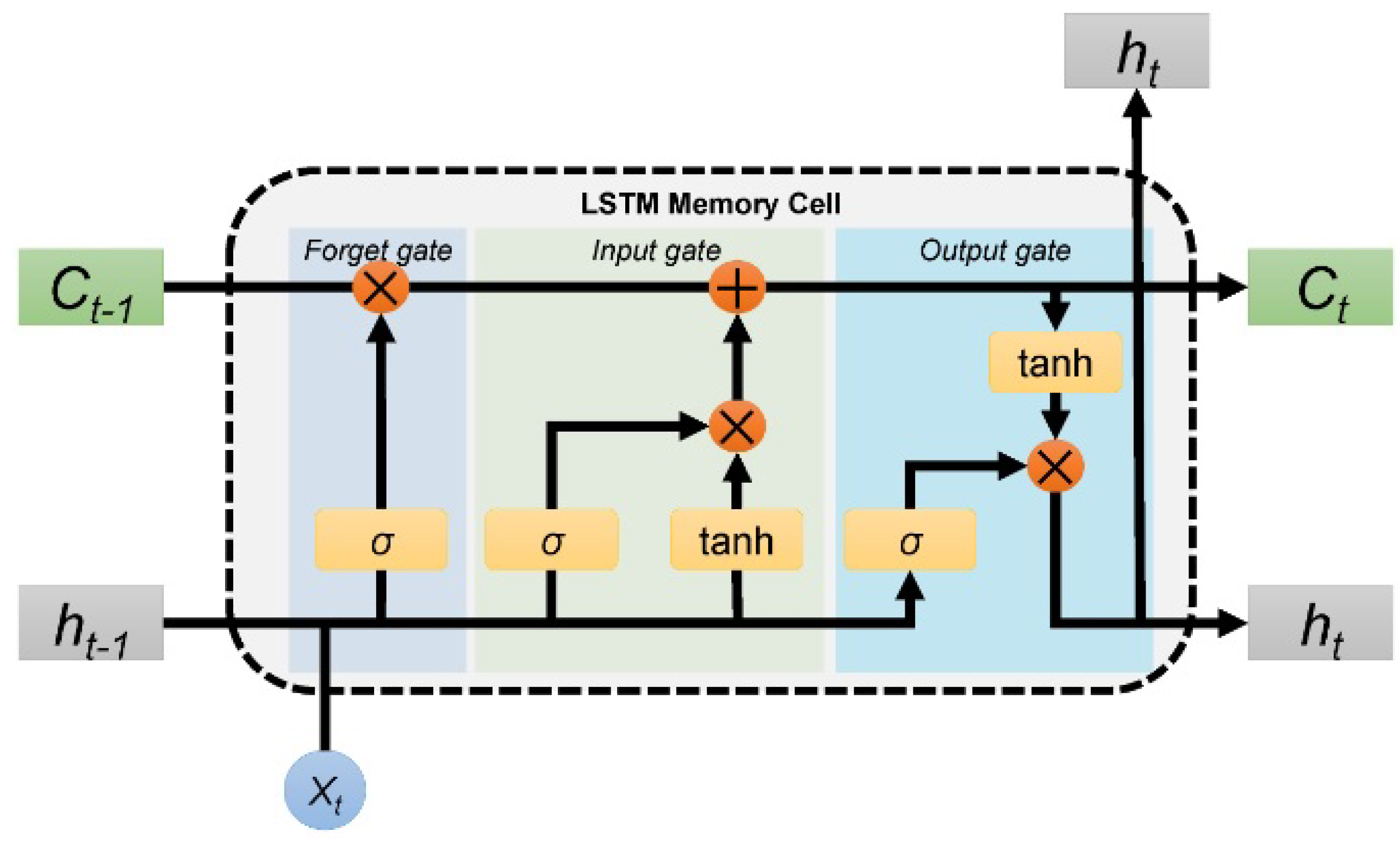

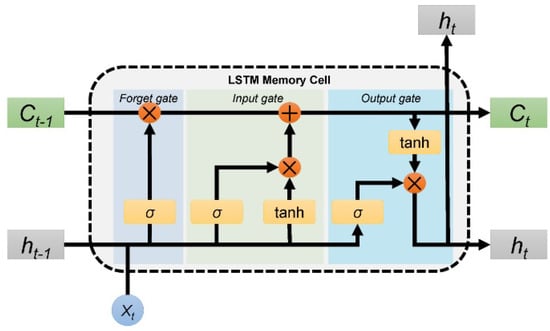

Classic ANNs and their variations are broadly adopted for production prediction purposes; however, they are not suitable for predicting production in a time-series way, since they cannot save information about past time. For that reason, various types of RNNs, usually Long Short-Term Memory (LSTM) ones as they can capture nonlinear features from an input dataset, are widely used to make such predictions, since each neural contains a loop that saves previous information to be used in a later stage. That way, information can be passed back and forth freely, enabling production predictions to consider time as an influential factor [65].

The architecture of an LSTM includes recurrent connections with specialized gating mechanisms that allow them to store and retrieve information over long sequences. This overcomes the vanishing gradient problem, a limitation of traditional recurrent networks, by preserving information for extended periods. Each LSTM cell contains three gates—input gate, forget gate, and output gate—that regulate the flow of information, as can be observed in Figure 9. The input gate decides which new information is important and should be added to the cell’s memory. The forget gate helps the cell determine what old information to keep and what to forget; lastly, the output gate combines the cell’s current memory with the input data to produce the output. These gates work together to manage the flow of information, allowing LSTMs to capture patterns in data sequences and remember important details over long periods, which is especially useful for tasks involving time series data such as language processing or predicting reservoir behavior.

Figure 9.

Architecture of an LSTM cell [66].

Li et al. [67] developed a CNN model coupled with an LSTM model for time-series production prediction purposes, optimized by a PSO algorithm. That way, the important temporal production-related parameters are extracted with the CNN and are inserted into the LSTM model to obtain the corresponding features in a time-series manner. The key hyperparameters in the CNN-LSTM model are optimized with the PSO algorithm to optimally construct the model for efficient production predictions. In a similar study involving data dimensionality reductions, Liu et al. [45] used an ensemble Empirical-Mode Decomposition (EMD) technique [68] to build an LSTM model, and, later in another study [69], a Mean Decrease Impurity (MDI) technique [70] to develop an LSTM model that provided a time-series production forecast. In the first study, the EMD technique is incorporated to break down non-stationary and nonlinear production time series into orthogonal components of simple time series. First, the initial dataset, consisting of the past oil production series, is divided into a training and a testing one. Then, the test data are slowly incorporated into the training data which are decomposed using the EMD technique to generate numerous intrinsic mode functions, the stability is assessed by the means, and the curve similarity is computed using the dynamic time warping method [71]. After that, the stable functions are chosen as inputs for the LSTM model. Furthermore, the model’s hyperparameters are optimized using a GA. In the second study, the MDI is used to determine each parameter’s average impurity reduction, which is used as an indicator for the parameter selection. The more independent the production is from a parameter, the smaller is the impurity reduction value. That way, the parameters that do not significantly affect the production are eliminated, keeping only the important ones, which, in this case, are a total of 14 parameters (e.g., recoverable reserves, wellhead pressure, water cut, water injection rate, production time, etc.). Then, those parameters were introduced to the model to predict future production.

In addition, Wang et al. [55] developed a simple LSTM model to forecast oil production in a time series way from past oil production data, including the oil production index over time. Furthermore, Song et al. [72] and Huang et al. [73] also developed LSTM models, identifying the relationship between past oil rate sequential data with future production rates. Fan et al. [74] proposed an integrated approach using an Autoregressive Integrated Moving Average (ARIMA) [75] and an LSTM model together with Daily Production time series (ARIMA-LSTM-DP) to develop robust models for predicting future production. This specific approach is chosen since ARIMA is an effective statistical analysis model for stationary time series forecasting and the LSTM model is considered a reliable approach since it can capture nonlinear fluctuations in the training dataset, which can be created because of the wells’ shut-in operations. Although many engineers tend to ignore shut-in data and perform the forecasting without them, the shut-in duration is usually crucial since it can pinpoint the extent of the formation pressure recovery that has a great effect on future production. Therefore, since the shut-in operations can generate nonlinear fluctuations on the production curves, the daily production time series should be taken into consideration for the LSTM model to make accurate production forecasts. The procedure is as follows: an original training dataset from real field production data is generated and the ARIMA statistical model is employed to extract the linear production time series and return the residuals (nonlinear), which, together with the daily production time series, consist of the final input dataset for the LSTM model. Then, the LSTM model is used to make non-linear predictions. The results of the proposed approach showed that the ARIMA model alone presents a good accuracy only for the linear production decline curves, while the LSTM model demonstrates more accurate results for the nonlinear. Finally, the integrated ARIMA-LSTM-DP model presents the best predictions. Finally, Sagheer et al. [76] built a deep LSTM model, together with a GA that optimized its architecture, to make predictions about the production performance from past raw production data. Before the model’s training, the authors performed a data pre-processing procedure to mitigate the effect of noise.

Bao et al. [21] developed an RNN model for the water-flooded reservoir characterization and production prediction purposes. The model was created to identify the underlying relationship between several control parameters, such as the water injection rate and BHPs (input), and the desired production, such as production rate, water cut, and breakthrough time (output). The breakthrough time is represented in a range between 0 and 1, with the latter indicating the breakthrough. The authors examined two types of RNN models, a cascaded LSTM (an updated version of LSTMs), and an Ensemble Kalman Filter (EnKF) enhanced LSTM. The former is built based on two sequential models, one model that predicts the breakthrough time, whose output is fed to the second model that, together with the other parameters, predicts the oil production. LSTM networks usually have a large number of hyperparameters that must be tuned to obtain the optimum results. For that reason, a Bayesian optimization method is used for that purpose, since it performs faster and more accurately. The results have a good accuracy; however, they present an abrupt jump close to the breakthrough time of one well, indicating that it might have a bigger effect on the other wells, something that can potentially be fixed with more training data. Then, the EnKF is used to the previous LSTM model to perform a history matching with real-time production data, enabling the model to be constantly updated based on new observations. The results showed that the EnKF improved the results; however, the EnKF presented overfitting problems.

The results showed that all aforementioned LSTM models, with or without data dimensionality reduction techniques, and employed to further improve the production prediction accuracy, present very good results, even for more complex input data, while also retaining a fast prediction performance.

He et al. [77] developed ANN models to forecast oil well performance that were trained using the production history of each well, as well as their spacing and other time-series data. After the model is trained, it is capable of forecasting future oil production, without the need for reservoir data. The results showed that the model is very efficient in predicting future performance. Ahmadi et al. [78] used Fuzzy logic, simple ANNs, and ANNs coupled with the Imperialist Competitive Algorithm (ICA) [79], and Berneti et al. [80] used only a simple ANN with the ICA to make future oil rate predictions based on data collected using flow meters (i.e., temperature and pressure values) as input and oil flow rates as output. The ICA-ANN model was shown to present the best performance. In addition, Zhang et al. [81] developed Multivariate Time Series (MTS) analysis [82] and Vector Auto-Regressive (VAR) [83] models to predict oil production for water-flooded reservoirs. MTS is used to determine the interactions for a group of time series parameters. When historical production data are considered as the input to a model, it usually presents dependencies with other parameters as well, such as the water injection in one or multiple injectors. More specifically, for water-flooded reservoirs, it is usually troublesome to identify data relationships among injectors and producers, thus MTS should be used for a better forecasting capability. VAR, on the other hand, is a forecasting algorithm utilized mostly when the relationship between the different time series is bi-directional, which is usually the case when many injectors and producers operate at the same time. Thus, the authors first implemented an MTS analysis to optimize the injection and production time series data; i.e., medium or higher correlations between the flow rate of a producer and at least one injector are identified. That way, the VAR model can efficiently mine the flow rate relationship among an injector/producer pair. The results show that the proposed models are very accurate and can efficiently determine each injection well’s effect on production, providing a useful guide to engineers for a water-flooding development plan.

Another more complex type of ANN is Higher Order Neural Networks (HONNs) [84], which can handle nonlinear relationships and higher-level interactions between input variables. They do this by incorporating polynomial activation functions and extending the number of layers, which allows the network to model more complex data patterns. In a traditional ANN, the relationship between input and output is typically defined by a weighted sum of the inputs that are passed through an activation function. However, in a HONN, the weighted sum is replaced by a weighted sum of products of inputs. This allows HONNs to model interactions between inputs explicitly and to learn more complex decision boundaries. For example, a second-order neural network can model interactions between pairs of input features, a third-order network can model interactions between triples of features, and so forth. The higher the order of the network, the more complex are the interactions it can model.

As such, Chakra et al. [85] applied a complex network to forecast production for an oil field with limited data, along with a low-pass filter data-processing procedure to reduce the noise and Auto-Correlation and Cross-Correlation Functions (ACF and CCF) to determine the optimal input parameters. These statistical functions can determine the relationships among the input parameters. The authors examined two cases, one with only oil production data where the ACF function was used and one with water, oil, and gas production data where the CCF function was used, since it can find correlation among one or more time series data more efficiently. Although there was limited availability of input parameters, both models performed efficiently, showing that HONN models can be trained even with fewer input data. Prasetyo et al. [86] used the same concept and developed an EMD back-propagation HONN for fields with less available data, successfully making predictions on production using historical time series production data.

Furthermore, the application of pattern recognition methods to production forecasts, such as the studies by López-Yáñez et al. [87,88], has gained more popularity. More specifically, the authors used ANN and Gamma regression [89] models (pattern recognition), trained with static and dynamic parameters (water, oil, and gas production; BHPs) to forecast future oil production in a time-series manner. Gamma regression algorithms are SL algorithms designed to predict continuous numerical values and are particularly useful when the errors in prediction have varying impacts on the outcome, and a flexible approach to modeling these errors is required. The architecture involves incorporating a gamma distribution to model the heteroscedasticity of the errors, meaning that the variability of the errors changes across the range of predicted values. This distribution captures the varying uncertainty in different regions of the data. During training, the model estimates the parameters of the gamma distribution by optimizing a likelihood function that captures the relationship between the predicted values and the actual outcomes. The result is a regression model that can more accurately represent the changing uncertainties in the predictions, making it suitable for situations where accurate quantification of prediction errors is essential or any scenario where the variability of prediction errors is not constant across the data space.

The results of López-Yáñez et al. [87,88] showed that the proposed Gamma classifier model exhibits an efficient performance. These models present very good forecasting results for short-term periods (maximum one year); however, they exhibited difficulties when longer forecasting periods were examined. To account for that, Aizenberg et al. [90] extended the above studies and developed a Multilayer Neural Network with Multi-Valued Neurons (MLMVN) [91], which is a derivative-free backpropagation complex ANN, to examine long-term oil forecasting faster using real data that describe the dynamic behavior of the field (e.g., past monthly oil production from several wells), without taking into consideration the reservoir characteristics. MLMVNs employ neurons that can take on multiple discrete values, offering a more nuanced representation of information. This ability to have multi-valued activations allows MLMVNs to capture complex relationships in data more effectively, especially in tasks where finer distinctions are necessary. Therefore, as the authors note, the main benefit of this approach is that it enables local variation predictions instead of smooth curve ones as conventional methods, since the latter can discard usable data points resulting in incorrect predictions. The proposed models presented good results for a long-term oil production forecast, since they can perform long-term predictions for up to 10–15 years.

2.3. Machine Learning Methods for PFO Applications in Unconventional Reservoirs

2.3.1. Static Machine Learning Models

In recent years, researchers have agreed that it is not always feasible to determine estimates about hydrocarbons-in-place or future production performance for unconventional reservoirs (tight or fractured ones) by using traditional methods, since simple mathematical models cannot adequately describe the complexity of such systems. Therefore, ML approaches have been widely used in forecasting oil recovery and NPVs for hydraulic fracture schemes or tight reservoirs since they can assist in effectively making better and faster management decisions [92].

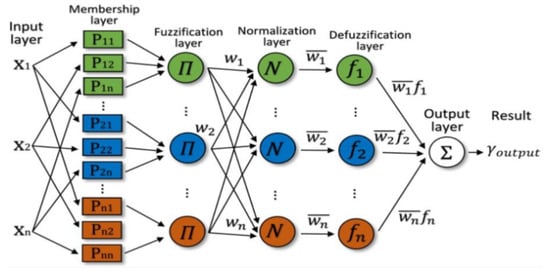

ML methods, more specifically, ANNs, have been used in many hydraulic fracturing applications, such as the selection of the candidate hydraulic-fractured wells that maximize gas production by Yu et al. [93] who used ANNs, GA-based Fuzzy Neural Networks (FNNs), and SVMs. They produced several realizations using traditional simulators to obtain the training sets comprising of parameters that affect the post-fracture system response (acoustic travel time, gas saturation, proppant concertation, etc.) as input and the system’s response, i.e., gas production as the output. The FNN-GA model was shown to be the most accurate. In addition, Oberwinkler et al. [94] tried to optimize the production in hydraulic-fractured reservoirs using an ANN model coupled with a GA to optimize the production after a fracture treatment. The ANN is trained using the proppant type, volume, and mass, as well as net pay thickness as inputs, and was shown to efficiently identify the underlying relationship between them and the corresponding cumulative production after one year of fracture treatment. Clar et al. [95] coupled an ANN model with a bootstrap re-sampling method to evaluate the production performance for fractured wells. They created a training data ensemble comprising of reservoir, hydraulic fracture, and production parameter values to train the model. Before the model’s training, the authors applied a cross-validation method to determine the most optimal model. After training, the bootstrap algorithm was used to examine the model’s uncertainty. The parameters that were observed to have an important impact on production are lateral length, true vertical depth, porosity, and fracture fluid intensity. Therefore, the coupled model provided a clear picture of which outputs were statistically more important, facilitating a better interpretation of the results. In addition, Carpenter C. [96] also developed a DL ANN to determine the underlying relationship between geology and the average estimated ultimate recovery for hydraulically fractured horizontal wells in tight reservoirs. The model was trained and evaluated using geological (thickness, porosity, water saturation, etc.) and production data. The results showed that the ultimate recovery predictions are imperfect; however, they are still about twice as precise as traditional methods. One of the reasons for that is the lack of fracture-related information, since that can significantly affect recovery.

Ockree et al. [97] developed three RF models to predict water, oil, and gas production, respectively, for a hydraulic-fractured reservoir. Since the production of the three reservoir fluids (water, oil, and gas) may be affected by different parameters, all fluids were modeled separately. The authors included a data pre-processing procedure using the Robust Mahalanobis statistical multivariate approach [98] to remove any outliers and incorrect data and ensure better predictions. Moreover, they removed several highly correlated parameters using a heat map of parameter correlations (known as the attribute correlation matrix). The final training input dataset, consisting of geology-related data (e.g., reservoir pressure, net pay, etc.), well spacing data (e.g., drainage per stage) and completion data (e.g., proppant per fluid ratio, stage spacing, etc.), was used for the application of the bootstrapping method, which created a thousand randomly selected replicate datasets to train a corresponding amount of DTs. When the training and testing are completed, the best model is selected by comparing the predicted production with real production values.

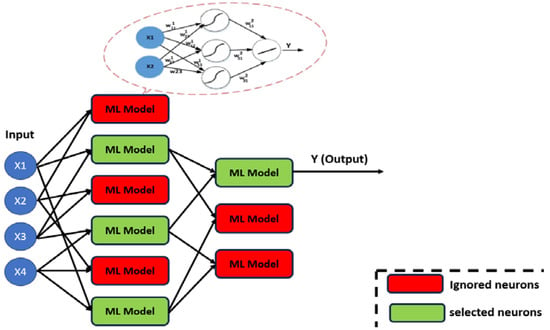

In addition to the ensemble methods mentioned in the precious sections, there is also the Adaptive Boosting (AdaBoost) method, which is a popular ensemble ML method. For regression cases, AdaBoost Regressor aims to build a robust predictive model by combining multiple weak learners into a strong ensemble (Figure 10). Its architecture involves iteratively fitting weak learners to the data, with each subsequent learner focusing on the instances that the previous ones struggled to predict accurately. The training process assigns varying weights to training instances, giving more importance to those with higher prediction errors. In each iteration, the algorithm adjusts the weights based on the errors made by the previous weak learners. The final prediction is a weighted sum of the individual learners’ predictions, where the weights are determined by their performance. AdaBoost adapts to the complexity of the data by giving more weight to instances that are challenging to predict, allowing it to excel in situations where the relationship between features and target values is non-linear or intricate.

Figure 10.

Adaptive Boosting architecture [99].

Xue et al. [100] developed tree-based ensemble regression models (Polynomial regression, DTs, RFs, AdaBoost, and XGB) to optimize a completion development with the ultimate goal of improving the cumulative oil recovery for hydraulic-fractured reservoirs. First, the authors used the LH sampling method to randomly generate parameter samples. Then, they performed simulation runs using the results of an experimental design, obtaining the training dataset that consisted of well spacing, injection rate, stage and perforation spacing, pump rate, and fracture treatment quantity as input variables. After training and testing the models, they can be utilized to make predictions. The proposed approach provided high-speed predictions. The main advantage of this methodology is that it can be utilized to examine economic aspects for future completion scenarios and to improve the wells’ productivity. One of the drawbacks of this research is that the authors used a limited number of training samples for the proxies to decrease the number of simulations required, endangering the credibility of the models.

In another study, Wang et al. [101] created four ML models using ANNs, RFs, AdaBoost, and SVMs, for comparison reasons, to predict a well’s cumulative production for the first year. The training dataset consisted of well information (e.g., well locations, true vertical depth, well lateral length, wellbore direction) and stimulation information (e.g., fracture stages, total volumes of fluid and proppant, fracturing fluid type). Furthermore, a recursive feature elimination method was first utilized to examine which parameters present the greatest effect on the prediction models. The authors claim that the proposed models can help engineers design hydraulic fracture treatments in tight reservoirs. The results showed that the RF model has the best performance in terms of prediction accuracy; however, it presented overfitting issues. Park et al. [102] used different ML methods, namely polynomial regression, XGB, ANNs, and RFs, to optimize a field development strategy. The authors used conventional simulators for different injection-production scenarios to create the training dataset which was inserted into the ML models so that they learn the influence of reservoir and design (completion, well spacing, and timing) parameters on the cumulative oil production of existing and potentially new wells. Then, when the model is trained, it is applied at the well-scale and is used together with a GA that performs an optimization procedure to obtain a group of history-matched models to make production and NPV full-scale predictions. By testing the models with a blind dataset, the authors were able to show that their approach can successfully forecast the well performance even in areas with a limited availability of data (i.e., potentially newly drilled areas with fewer data), taking advantage of the history-matched parameters of nearby wells. The importance of this approach lies in the fact that the underlying simulator’s physics is embedded in the models, allowing any design parameter to be connected to well performance. The results showed that polynomial regression and XGB methods can assist an unconventional field planning based on the predicted production and economic metrics for many completion designs. However, physically impossible production values are obtained (negative oil production), showing that considering training sets without priorly obtained field knowledge can produce unrealistic values. For that reason, Lizhe et al. [92] developed a new more generalized ANN by inserting prior knowledge into the model apart from the original training data (treatment pressure and rate, cluster spacing), to predict the NPV and improve the efficiency of a hydraulic fracturing scheme. Thus, the resulting training dataset is a combination of the original one together with the fracture geometry raw (width and length of each fracture) and hand-crafted (average area, width and length, and variation of width and length) parameters, which constitute the prior knowledge. Four other models (RFs, AdaBoost, SVMs, and regular ANNs) are created for comparison reasons. The results showed that the accuracy of the models is very good and that the proposed approach surpasses the other four models in terms of efficiency.

Panja et al. [103] developed three ML models, namely Least Square Support Vector Machines (LSSVMs), ANNs, and a response surface model using second-order polynomial equations to predict the oil RF and produced GOR in fractured wells. The LSSVM method [104] is an advancement of the SVM one, in the sense that the solution can be more easily found using a set of linear equations instead of convex quadratic programming problems associated with the classic SVMs. The models were trained using static and dynamic parameters (e.g., permeability, rock compressibility, initial reservoir pressure, BHPs, etc.). The results show that the LSSVM presents the most accurate oil recovery predictions while also maintaining a good efficiency for a more complex GOR nature. Ahmadi et al. [105] developed several ML techniques, namely LSSVMs, ANNs, and Hybrid Fuzzy Kalman filter [106] with a GA (HFK-GA), to determine the water coning breakthrough time in fracture reservoirs using flow rate, cone height, viscosity of reservoir fluid, and fracture number as input data. Coning predictions are very important, since as the production rate increases the coning also increases, driving engineers to determine the cone breakthrough to set production at an allowable rate. The results showed that the LSSVM technique presented the most accurate results, as compared with the other two methods.

2.3.2. Dynamic Machine Learning Models

There are many cases, especially for hydraulic fracture applications in complex fields, where the employed ML methods are considered a challenging task. Those cases can be related to having few training samples due to unavailable field data, not taking into consideration the fracture geometry, etc. [107]. Pal M. [108] was the first to develop a DL-RNN-LSTM model to predict future oil and water production rates in a time series way for a tight carbonate reservoir under water-flooding with long horizontal wells, using a limited amount of real injection and production data as inputs. Due to data collection issues, such as challenges in injection/production measurements, in the collection of high-frequency injection/production data and in variations of injection/production data, data quality issues emerged; thus, simple manual data pre-processing procedures (e.g., checks for data formatting errors, missing values, repeated rows, spelling inconsistencies, etc.) were performed to obtain better prediction results. Furthermore, the author calculated data correlations to observe how water injection affects oil, gas, and water production. The results showed that the proposed ML model was able to predict oil production for a 5-year period with relatively good accuracy. However, when the model was used to predict the production only for the first year, the accuracy achieved was considerably higher. Furthermore, it was shown that the accuracy of the prediction results was significantly better for wells with much more available production data, suggesting that the accuracy increases as the availability of data increases. Srinivasan et al. [108] developed a novel approach to perform real-time HM and make long-term production predictions for fractured shale gas reservoirs with limited filed information utilizing Reduced Order Models (ROMs) and ANNs. The author’s aim was to generate synthetic data from both conventional reservoir simulations and ROMs (known as low-fidelity models). That way, the ANN can be trained with data obtained from the fast ROM, which is then updated with a small amount of data from the slow traditional approach (transfer learning) to improve accuracy. This process enables an efficient approach to problems related to sparsely available field or simulation data, something that is very common for unconventional reservoirs, thus providing more accurate results on production predictions. It was shown that training the ANN only with data from ROMs does not provide very accurate prediction results; however, introducing a small dataset of simulation results can boost the model’s performance. The proposed physics-informed approach was shown to be capable of performing real-time HM and production predictions for tight and/or fractured reservoirs.

Pan et al. [109] applied a cascaded LSTM model, (tree-structured LSTM network) coupled with a Savitzky–Golay filter [110] for hydraulic fracture production prediction and monitoring purposes. The filter is a polynomial algorithm used to smooth time-series data. The authors examined two cases, one with pressure and production rate time series data and one with only production time series data. In the former case, the coupled model will be used for data processing, missing values identification and, finally, production predictions. On the other hand, in the latter case, a denoising LSTM was used to reduce the noise in the training production dataset, as well as identify the missing values; however, the production predictions are executed using the conventional DCA method. The denoising model with fewer data was capable of smoothing the time series data; however, it could only assign missing values for a short period. By adding more data (i.e., pressure histories), the missing values are effectively reconstructed to obtain better predictions.

2.4. Machine Learning Methods for EOR/Sequestration Projects

2.4.1. Machine Learning Models for the MMP Calculation