Machine Learning Schemes for Anomaly Detection in Solar Power Plants

Abstract

1. Introduction

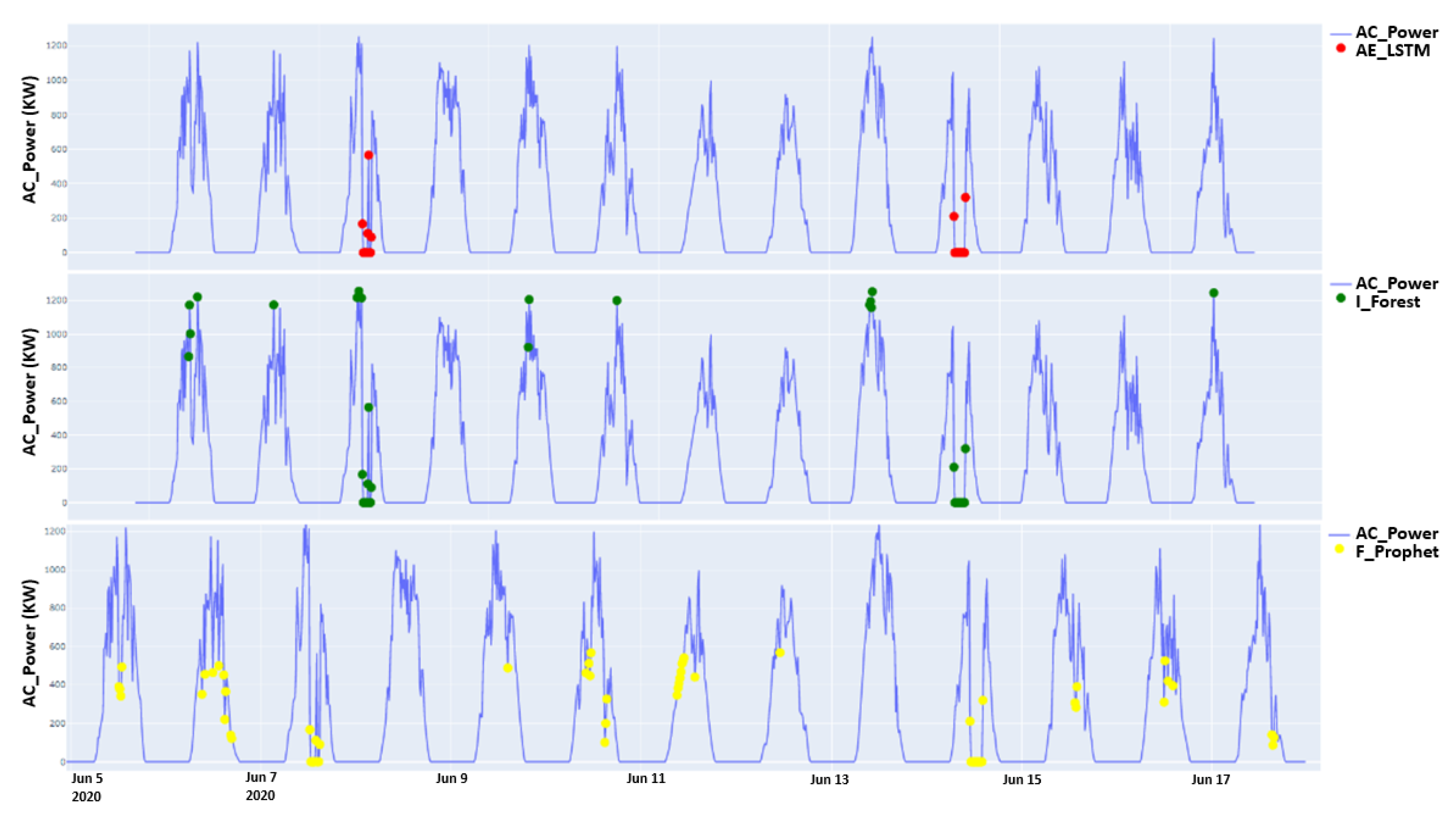

- An investigation of three well-known anomaly detection models: AutoEncoder Long Short-Term Memory (AE-LSTM), Facebook-Prophet, and Isolation Forest. Comparison tests examine the accuracy and performance of these models with their optimized hyperparameters.

- Defining and classifying the internal and external factors that induce anomalies in the PV power plant, investigating their effects on the model’s accuracy, and studying the correlation effect and its impact on detecting anomalies.
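The correlation study mentioned in the last contribution can be illustrated with Spearman's rank correlation, which the notation list defines through d, the difference between the two ranks of each observation. The following is a minimal sketch, assuming no tied values; the helper names and the sample irradiance and power readings are hypothetical and are not taken from the paper's dataset.

```python
def ranks(values):
    """Return 1-based ranks of the values (assumes no ties)."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0] * len(values)
    for rank, index in enumerate(order, start=1):
        result[index] = rank
    return result


def spearman_rho(x, y):
    """Spearman's rank correlation: 1 - 6 * sum(d^2) / (n * (n^2 - 1))."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    n = len(x)
    # d is the difference between the two ranks of each observation.
    sum_d_squared = sum((rx - ry) ** 2 for rx, ry in zip(ranks(x), ranks(y)))
    return 1 - 6 * sum_d_squared / (n * (n * n - 1))


# Hypothetical readings, for illustration only.
irradiance = [0.10, 0.35, 0.50, 0.80, 0.95]
dc_power = [40.0, 30.0, 160.0, 150.0, 300.0]
rho = spearman_rho(irradiance, dc_power)
print(round(rho, 3))
```

A value close to 1 or -1 suggests a strong monotonic relationship between two features; with tied readings, a tie-aware implementation would be needed instead of this shortcut formula.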

2. Related Work

3. Materials and Methods: ML Algorithms

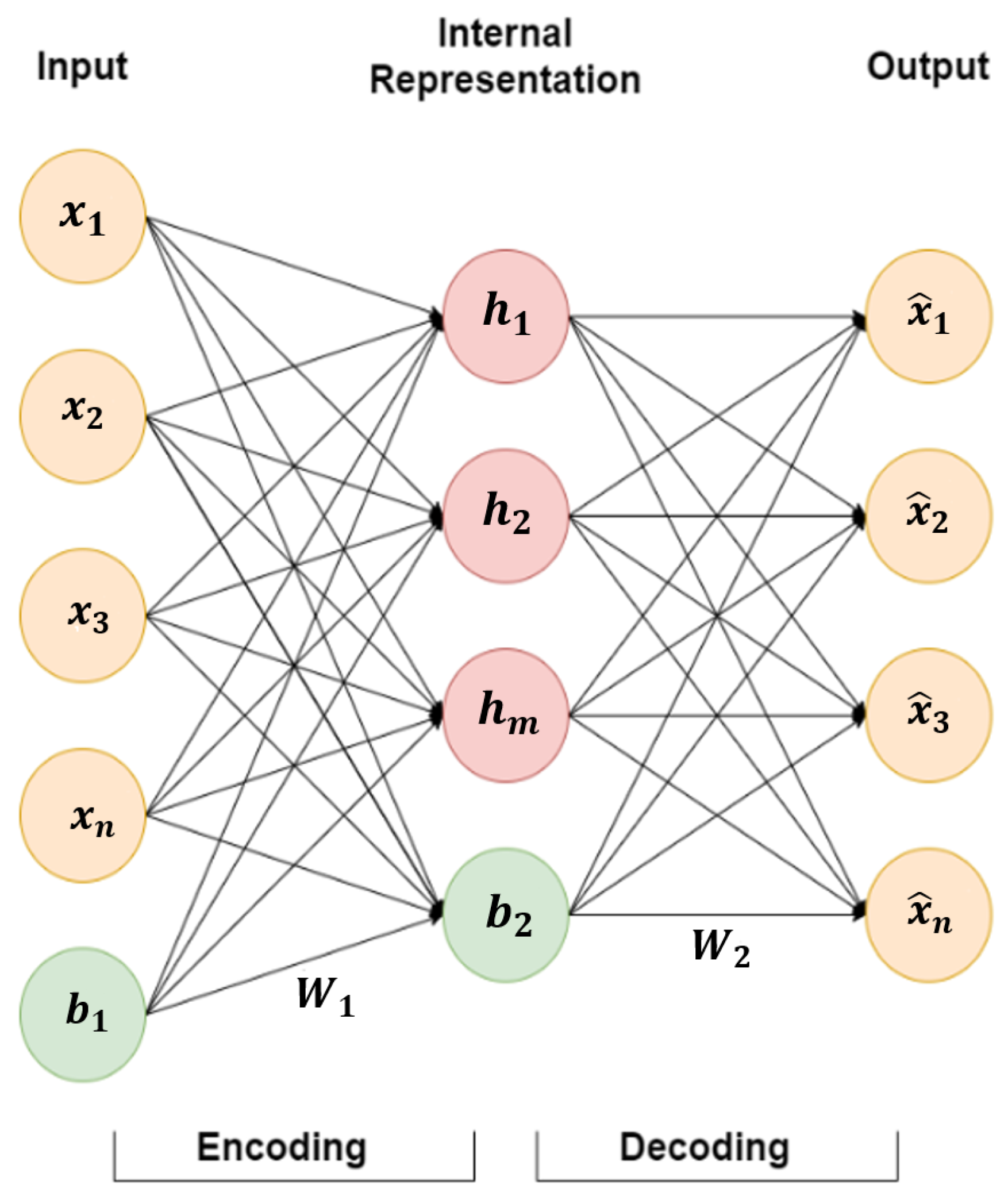

3.1. AutoEncoder Long Short-Term Memory (AE-LSTM)

3.2. Facebook-Prophet

3.3. Isolation Forest

4. Collected Data

5. Results and Discussion

5.1. Facebook-Prophet Optimized Parameters

- n_changepoints = 200;

- changepoint_prior_scale = 0.9;

- seasonality_mode = multiplicative.
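As a rough illustration, the three settings above could be passed to the `prophet` package as constructor arguments. The sketch assumes the values map onto the search grid as n_changepoints = 200 and changepoint_prior_scale = 0.9, since n_changepoints is an integer count of candidate change points. The names `PROPHET_PARAMS`, `build_prophet`, and `flag_anomalies` are hypothetical, and flagging points that fall outside the forecast interval is a common convention rather than a rule confirmed by the paper.

```python
try:
    from prophet import Prophet  # optional dependency
except ImportError:
    Prophet = None

# Assumed mapping of the optimized values onto Prophet's keyword arguments.
PROPHET_PARAMS = {
    "n_changepoints": 200,
    "changepoint_prior_scale": 0.9,
    "seasonality_mode": "multiplicative",
}


def build_prophet(params=PROPHET_PARAMS):
    """Return a configured Prophet model, or None if prophet is not installed."""
    if Prophet is None:
        return None
    return Prophet(**params)


def flag_anomalies(actual, lower, upper):
    """Mark each point that lies outside its [lower, upper] forecast interval."""
    return [not (lo <= y <= hi) for y, lo, hi in zip(actual, lower, upper)]


# Hypothetical values: the second reading exceeds its upper bound.
print(flag_anomalies([1.0, 5.0, 3.0], [0.0, 0.0, 0.0], [2.0, 2.0, 4.0]))
```

With a fitted model, the bounds would typically come from the `yhat_lower` and `yhat_upper` columns of the forecast returned by `predict`.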

5.2. AE-LSTM Optimized Parameters

5.3. Isolation Forest Optimized Parameters

5.4. Anomaly Detection Performance

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Acronyms | |
|---|---|
| PV | Photovoltaic |
| AE-LSTM | AutoEncoder Long Short-Term Memory |
| ANN | Artificial neural network |
| RNN | Recurrent neural network |
| R-squared | |
| ReLU | Rectified linear unit activation function |
| 1-SVM | One-class support vector machine |
| Facebook-Prophet | |
| Isolation Forest | |

| Notations | |
|---|---|
| X | Input vector of AutoEncoder |
| | Predicted output vector of AutoEncoder |
| W | Weights |
| b | Bias |
| f | Activation function |
| H | Intermediate representation of the primary data |
| h | The present final output |
| c | Current cell state |
| x | Present input |
| f | Forget gate |
| i | Input gate |
| u | The input to the cell c that is gated by the input gate |
| o | The output control signal |
| ⊙ | Element-wise multiplication |
| | Trend function |
| | The holidays function |
| C | The carrying capacity |
| k | The growth rate |
| m | An offset specification |
| s | Change points |
| | Vector of rate adjustments |
| | The cumulative growth until change points s |
| p | The threshold value |
| q | A sample of selected features |
| | The length of path |
| | The harmonic |
| | Spearman’s rank |
| d | The difference between the two ranks of each observation |
| y | The actual data |
| | The predicted data |


Facebook-Prophet hyperparameter grid:

| Parameter | Grid |
|---|---|
| n_changepoints | [10, 25, 50, 75, 100, 150, 200, 300, 400, 500] |
| changepoint_prior_scale | [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9] |
| seasonality_mode | ['multiplicative', 'additive'] |
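A grid such as the one above can be enumerated exhaustively, one candidate configuration per combination. The sketch below uses only the standard library; `iter_grid` is a hypothetical helper, and scikit-learn's `ParameterGrid` provides the same kind of enumeration. How each candidate is scored is not shown here.

```python
from itertools import product

# Values copied from the Facebook-Prophet grid in the table above.
prophet_grid = {
    "n_changepoints": [10, 25, 50, 75, 100, 150, 200, 300, 400, 500],
    "changepoint_prior_scale": [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9],
    "seasonality_mode": ["multiplicative", "additive"],
}


def iter_grid(grid):
    """Yield one dict per combination of the grid's parameter values."""
    keys = list(grid)
    for values in product(*(grid[key] for key in keys)):
        yield dict(zip(keys, values))


candidates = list(iter_grid(prophet_grid))
print(len(candidates))  # 10 * 9 * 2 = 180 combinations
print(candidates[0])
```

The number of candidates grows multiplicatively with each added parameter, which is worth keeping in mind for the larger grids.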

AE-LSTM hyperparameter grid:

| Parameter | Grid |
|---|---|
| Number_hidden_neurons L1 | [5, 10, 15, 20, 25, 30] |
| Number_hidden_neurons L2 | [5, 10, 15, 20, 25, 30] |
| Number_hidden_neurons L3 | [5, 10, 15, 20, 25, 30] |
| Number_hidden_neurons L4 | [5, 10, 15, 20, 25, 30] |
| batch | [5, 10, 15, 20, 25, 30] |
| epochs | [200, 250, 300, 350, 400, 450, 500] |

Isolation Forest hyperparameter grid:

| Parameter | Grid |
|---|---|
| bootstrap | [False, True] |
| n_estimators | [50, 100, 200, 300, 400, 500, 600, 700, 800, 900, 1000, 1500, 2000] |
| contamination | [0, 0.01, 0.03, 0.06, 0.09, 0.12, 0.15, 0.2, 0.25, 0.3, 0.4, 0.45, 0.5] |

| Actual / Predicted | Healthy | Anomaly |
|---|---|---|
| Healthy | True Positives (TP) = 216 | False Negatives (FN) = 13 |
| Anomaly | False Positives (FP) = 0 | True Negatives (TN) = 12 |
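The usual summary metrics follow directly from the four counts in the confusion matrix above. The sketch below treats "Healthy" as the positive class, as the table's TP/FN labelling implies; the variable names are illustrative, and the table does not state which model produced these counts.

```python
# Counts taken from the confusion matrix above (positive class = Healthy).
tp, fn, fp, tn = 216, 13, 0, 12

total = tp + fn + fp + tn
accuracy = (tp + tn) / total
precision = tp / (tp + fp)
recall = tp / (tp + fn)
f1 = 2 * precision * recall / (precision + recall)

print(f"samples   = {total}")
print(f"accuracy  = {accuracy:.4f}")
print(f"precision = {precision:.4f}")
print(f"recall    = {recall:.4f}")
print(f"F1 score  = {f1:.4f}")
```

Because FP = 0, precision is exactly 1; the 13 healthy samples flagged as anomalous lower recall and therefore accuracy.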

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Ibrahim, M.; Alsheikh, A.; Awaysheh, F.M.; Alshehri, M.D. Machine Learning Schemes for Anomaly Detection in Solar Power Plants. Energies 2022, 15, 1082. https://doi.org/10.3390/en15031082
