In this section, we investigate RF variants with different data preprocessing methods and training modes. We compare RF performance with that of other models based on classical statistical methods and ML methods.

5.1. Results for Different Preprocessing Methods and Training Modes

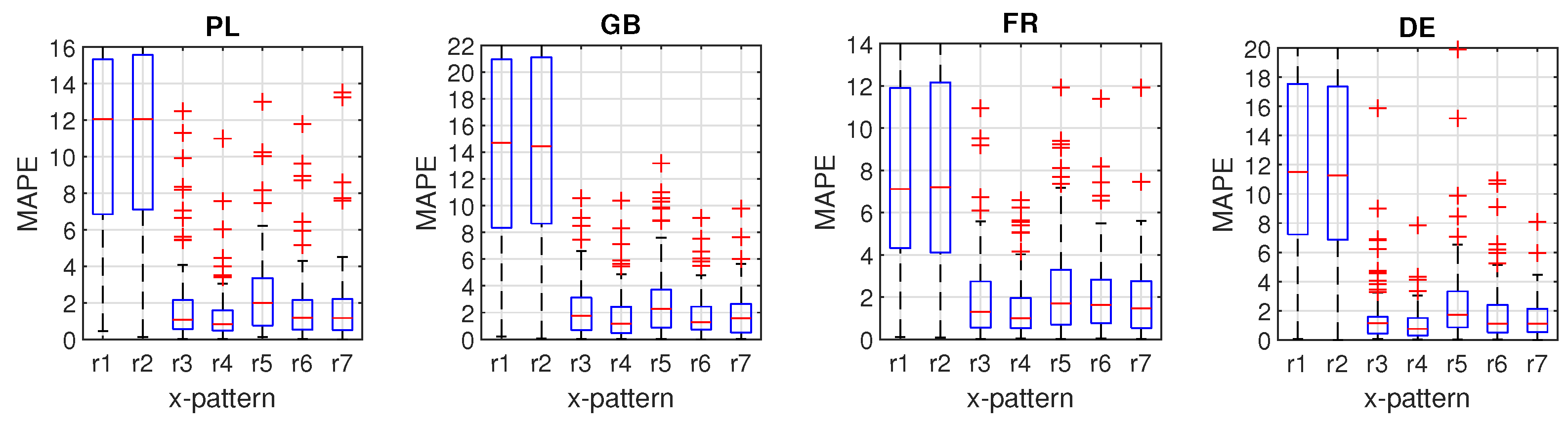

Table 1 and Table 2 show the mean absolute percentage error (MAPE) and root mean square error (RMSE), respectively, for input patterns r1–r7 and different training modes. Figure 4, Figure 5 and Figure 6 show the boxplots of MAPE. The results can be summarised as follows:

It is evident from these tables and figures that the global extended mode yields the lowest errors, when combined with patterns r4 (for PL, FR and DE) or r6 (for GB).

The local training mode gives lower errors than the global one when patterns r1, r2, r6 and r7 are used, i.e., patterns composed of daily curves. The local mode is usually better than the global extended mode when patterns r1 and r2 are used, but it is worse when cross-patterns, which also reflect weekly seasonality, are used.

The highest errors for the global mode were observed when patterns r1 and r2 were used. In these cases, the errors were up to nine times greater than in the alternative training modes. Pattern r4 is recommended for the global mode, as it provided the lowest errors for all countries. Extending the input patterns of the global mode with calendar variables always reduced the errors.

Figure 4. Local training mode: Boxplots of validation MAPE for different input patterns r1–r7.

Figure 5. Global training mode: Boxplots of validation MAPE for different input patterns r1–r7.

Figure 6. Global extended training mode: Boxplots of validation MAPE for different input patterns r1–r7.

Table 1. Validation MAPE for different input patterns r1–r7 and training modes (lowest errors in bold, second lowest errors in italics).

| Data | Training Mode | r1 | r2 | r3 | r4 | r5 | r6 | r7 |
|---|---|---|---|---|---|---|---|---|
| PL | Local | 1.54 | 1.55 | 1.89 | 2.17 | 3.66 | 1.45 | 1.63 |
| PL | Global | 11.55 | 11.61 | 2.11 | 1.30 | 2.94 | 1.82 | 1.82 |
| PL | Global ext. | 1.92 | 1.79 | 1.40 | 1.24 | 1.96 | 1.50 | 1.55 |
| GB | Local | 1.92 | 1.91 | 2.29 | 2.21 | 3.68 | 1.76 | 1.75 |
| GB | Global | 14.88 | 15.01 | 2.21 | 1.75 | 2.92 | 1.84 | 1.83 |
| GB | Global ext. | 2.22 | 2.09 | 1.79 | 1.59 | 2.12 | 1.47 | 1.52 |
| FR | Local | 1.88 | 1.77 | 2.12 | 2.43 | 5.10 | 1.74 | 1.86 |
| FR | Global | 8.50 | 8.54 | 2.09 | 1.53 | 2.90 | 2.11 | 1.92 |
| FR | Global ext. | 1.98 | 1.71 | 1.59 | 1.37 | 1.71 | 1.63 | 1.64 |
| DE | Local | 1.34 | 1.31 | 1.67 | 1.93 | 3.47 | 1.21 | 1.45 |
| DE | Global | 12.33 | 12.34 | 1.62 | 1.07 | 2.61 | 1.79 | 1.54 |
| DE | Global ext. | 1.55 | 1.57 | 1.17 | 0.99 | 1.65 | 1.17 | 1.19 |

Table 2. Validation RMSE for different input patterns r1–r7 and training modes (lowest errors in bold, second lowest errors in italics).

| Data | Training Mode | r1 | r2 | r3 | r4 | r5 | r6 | r7 |
|---|---|---|---|---|---|---|---|---|
| PL | Local | 436 | 522 | 489 | 540 | 1014 | 412 | 473 |
| PL | Global | 2420 | 2427 | 577 | 347 | 741 | 454 | 458 |
| PL | Global ext. | 468 | 448 | 364 | 337 | 509 | 406 | 410 |
| GB | Local | 918 | 825 | 1100 | 1118 | 1816 | 811 | 841 |
| GB | Global | 6309 | 6358 | 1035 | 860 | 1445 | 811 | 824 |
| GB | Global ext. | 925 | 937 | 784 | 757 | 985 | 669 | 690 |
| FR | Local | 1630 | 1576 | 1851 | 1939 | 3893 | 1406 | 1626 |
| FR | Global | 5602 | 5635 | 1714 | 1144 | 2697 | 1538 | 1437 |
| FR | Global ext. | 1414 | 1280 | 1287 | 1048 | 1450 | 1232 | 1272 |
| DE | Local | 1590 | 1190 | 1748 | 2099 | 3046 | 1168 | 1641 |
| DE | Global | 8927 | 8926 | 1410 | 878 | 2094 | 1411 | 1131 |
| DE | Global ext. | 1224 | 1229 | 833 | 713 | 1261 | 915 | 883 |

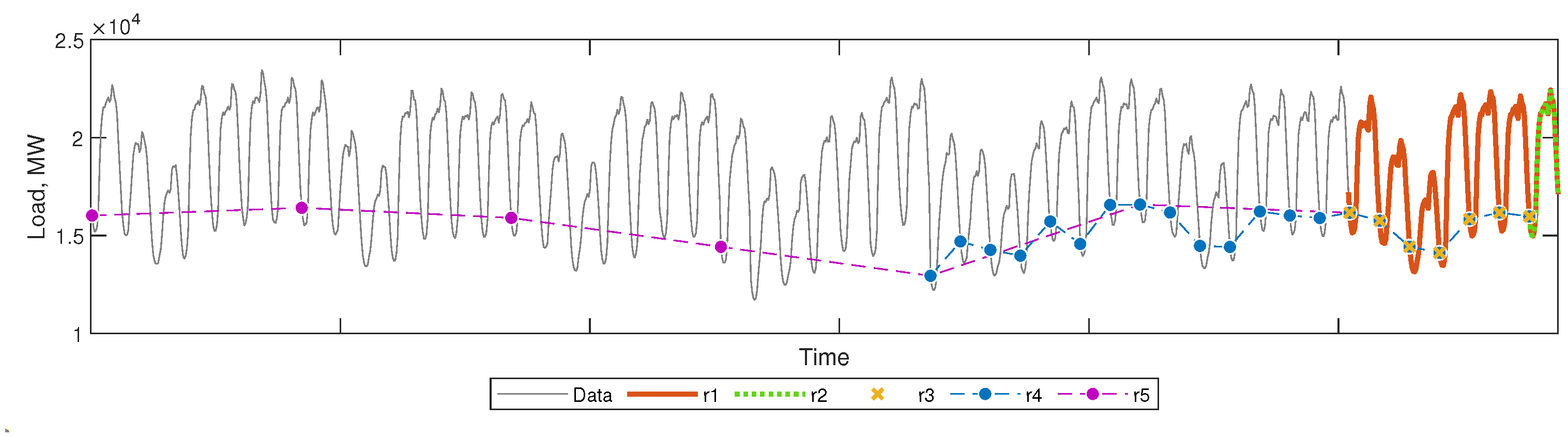

Based on the results, the recommended training mode is global extended with r4 patterns for PL, FR and DE, and r6 patterns for GB. These variants of RF were used in the experiments described in the next sections.

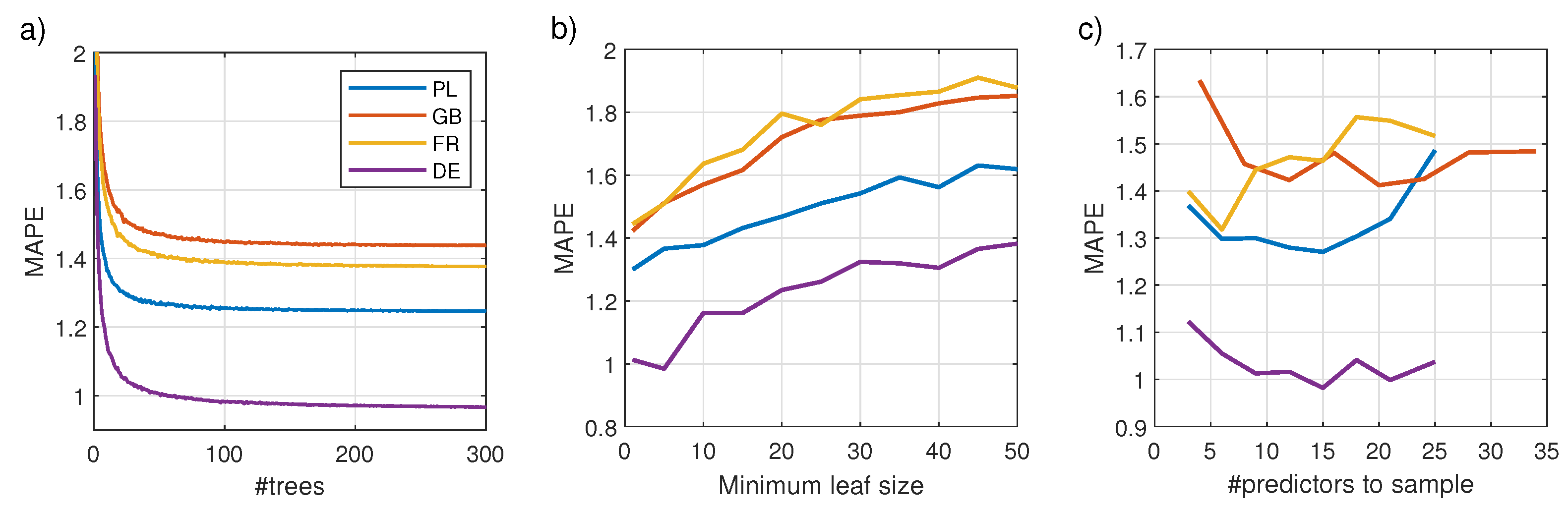

5.2. Tuning Hyperparameters

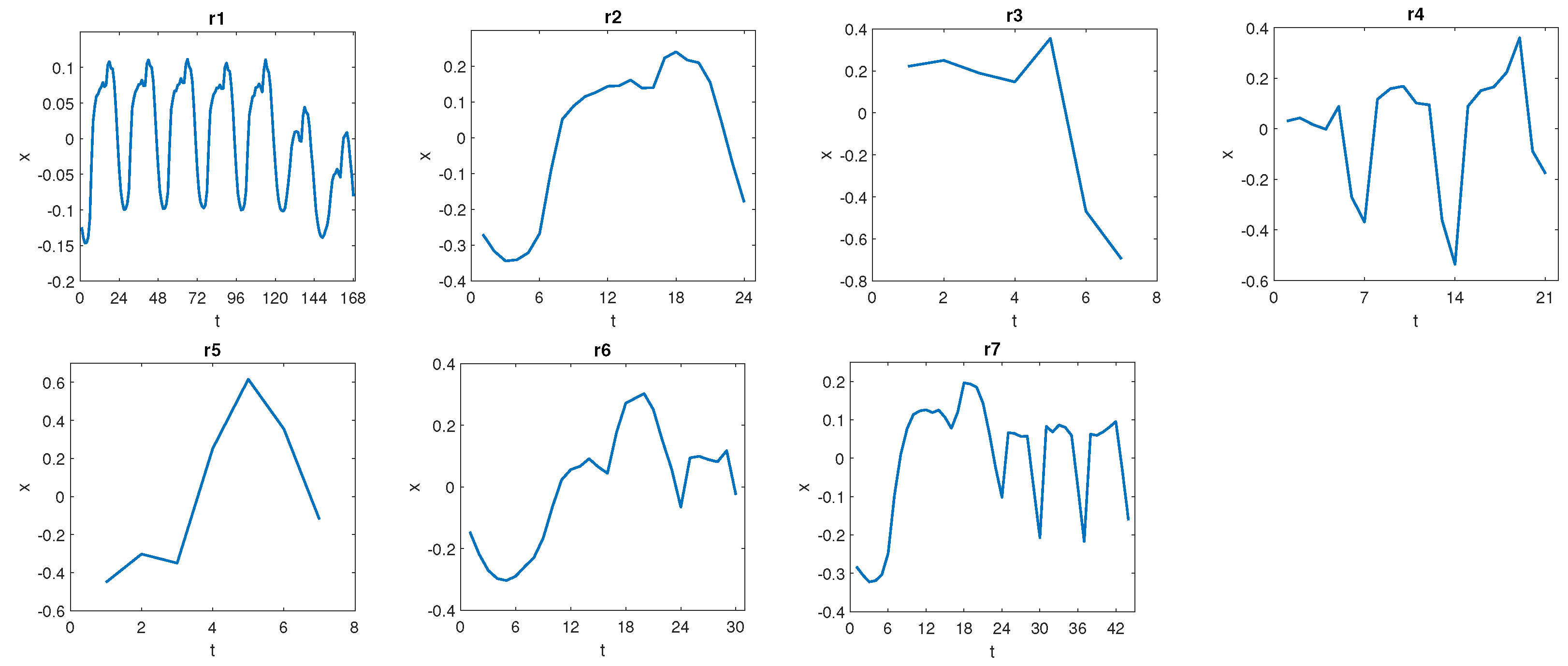

In this experiment, we vary the selected hyperparameter over the range shown in Figure 7, keeping the remaining hyperparameters at constant values: the number of trees in the forest, K = 100, the minimum number of leaf node observations, m, and the number of predictors to select at random for each decision split.

Figure 7 shows the impact of the hyperparameters on the forecasting error (MAPE). As expected, the error decreases with the number of trees in the forest. When the RF size changes from 1 to 300 trees, the reduction in MAPE ranges from 38.7% for PL to 50.0% for DE. At the same time, a significant reduction in the forecast variance was also observed, from 63.8% for PL to 82.5% for DE. It can be seen from Figure 7b that an increase in the minimum leaf size leads to a deterioration in the results. Small values of m, close to 1, are preferred. This means that trees grown as deep as possible are the most beneficial in RF. The optimal number of predictors selected in the nodes to perform a split varies from country to country (see Figure 7c). For PL and DE it is 15, for GB it is 20, and for FR it is 6. These values differ from the recommended default values, which are 8 for PL, FR and DE, and 11 for GB.
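The one-at-a-time procedure described above (vary a single hyperparameter, hold the others fixed) can be sketched as follows. This is a minimal illustration, not the code used in the study: the dictionary keys, the grids, the default values other than K = 100, and the `toy_validation_mape` function (a stand-in for "train RF and return validation MAPE") are all hypothetical.

```python
from typing import Callable, Dict, List, Sequence, Tuple


def one_at_a_time_sweep(
    evaluate: Callable[[Dict[str, int]], float],
    defaults: Dict[str, int],
    grids: Dict[str, Sequence[int]],
) -> Dict[str, List[Tuple[int, float]]]:
    """Vary one hyperparameter at a time, holding the others at their defaults."""
    results = {}
    for name, values in grids.items():
        curve = []
        for value in values:
            params = dict(defaults)  # copy so the defaults stay untouched
            params[name] = value
            curve.append((value, evaluate(params)))
        results[name] = curve
    return results


def toy_validation_mape(params: Dict[str, int]) -> float:
    # Hypothetical error surface; a real run would train RF and validate it.
    return (
        1.0
        + 2.0 / params["n_trees"]
        + 0.01 * params["min_leaf_size"]
        + 0.002 * (params["n_split_predictors"] - 15) ** 2
    )


# Only n_trees = 100 comes from the text; the other defaults are placeholders.
defaults = {"n_trees": 100, "min_leaf_size": 1, "n_split_predictors": 8}
grids = {
    "n_trees": [1, 10, 100, 300],
    "min_leaf_size": [1, 5, 10],
    "n_split_predictors": [5, 8, 15, 20],
}

curves = one_at_a_time_sweep(toy_validation_mape, defaults, grids)
best = {name: min(curve, key=lambda p: p[1])[0] for name, curve in curves.items()}
print(best)
```

Each entry of `curves` corresponds to one panel of a plot such as Figure 7. A sweep of this kind does not capture interactions between hyperparameters, which a full grid search would.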

Using the optimal values of the hyperparameters for each country, we investigate the methods of split predictor selection s1–s3. Table 3 shows the results in terms of validation MAPE and RMSE. Both accuracy measures show similar results for all methods of split predictor selection. Therefore, s1 is recommended as a simple, standard method that does not cause any additional computational burden.

Figure 8 shows the “importance” or “predictive strength” of the predictors estimated on the out-of-bag data (this is discussed further in Section 5.4). As can be seen from this figure, when the r4 extended pattern is used (PL, FR and DE), the most important predictor is the last component of the r-pattern, i.e., the predictor expressing electricity demand at forecasted hour t of the day preceding the forecasted day. The importance of this predictor reaches 3.5 for FR and over 5 for PL and DE, while the importance of the other demand predictors is usually below 2. Among the calendar predictors, the most important for the r4 extended pattern are those coding the season of the year, especially for DE. For cross-pattern r6 (GB), the most important predictors are the calendar ones: day of the week and season of the year. The next positions are occupied by the predictors coding the demand for the last four hours of the day before the forecasted day (one of which is clearly the most important) and the predictor coding demand at forecasted hour t a week ago. Note the low importance of the other predictors representing demand at hour t of the preceding days.

Table 4 shows the forecasting results for the test set when using RF with the optimal values of hyperparameters. As performance metrics we use MAPE, MdAPE (median of absolute percentage error), IqrAPE (interquartile range of APE), RMSE, MPE (mean percentage error), and StdPE (standard deviation of PE). MdAPE measures the typical error without the influence of outliers, while RMSE, as a squared error, is especially sensitive to outliers.

The MAPE and MdAPE values in Table 4 indicate that the most accurate forecasts were obtained for PL and DE, while the least accurate were for GB. MPE allows us to assess the forecast bias. Positive values of MPE indicate underprediction, while negative values indicate overprediction. Note that for PL and DE the forecast bias was significantly smaller than for GB and FR. The same can be said about the forecast dispersion measured by IqrAPE and StdPE.
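The six metrics can be computed as in the sketch below. It assumes the percentage error is defined as PE = 100 (actual − forecast) / actual, which matches the sign convention stated above (positive MPE means underprediction). The quartile method and the use of the sample standard deviation are assumptions of this sketch, and the function name and example data are illustrative only.

```python
import math
import statistics


def forecast_error_metrics(actual, forecast):
    """Return MAPE, MdAPE, IqrAPE, RMSE, MPE and StdPE for paired series."""
    # Percentage error: positive when the forecast is below the actual value.
    pe = [100.0 * (a - f) / a for a, f in zip(actual, forecast)]
    ape = [abs(e) for e in pe]
    squared = [(a - f) ** 2 for a, f in zip(actual, forecast)]
    q1, _, q3 = statistics.quantiles(ape, n=4)  # quartiles of APE
    return {
        "MAPE": statistics.fmean(ape),
        "MdAPE": statistics.median(ape),
        "IqrAPE": q3 - q1,
        "RMSE": math.sqrt(statistics.fmean(squared)),
        "MPE": statistics.fmean(pe),
        "StdPE": statistics.stdev(pe),  # sample standard deviation
    }


# Illustrative values only, not data from the study.
actual = [100.0, 200.0, 400.0, 500.0]
forecast = [90.0, 210.0, 400.0, 550.0]
metrics = forecast_error_metrics(actual, forecast)
print(metrics)
```

In this toy example the single large miss (50 units on the last point) dominates RMSE, whereas MdAPE is unaffected by its magnitude, which illustrates the different sensitivity to outliers noted above.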

5.4. Discussion

In our previous work [19], we used a local training mode with input patterns r2, which express daily profiles. Our current research revealed that input patterns incorporating weekly seasonality (r4) or both daily and weekly seasonality (r6), combined with global training with extended inputs, improve the results (note that in Table 1 and Table 2, patterns r4 and r6 provide lower errors than r2 for all countries). Calendar data used as additional input in the global extended training helps the trees to properly partition the input space and thus approximate the target function with greater accuracy. This does not come without cost: the complexity of the model increases because it learns on all data, not just on the selected data as in local training.

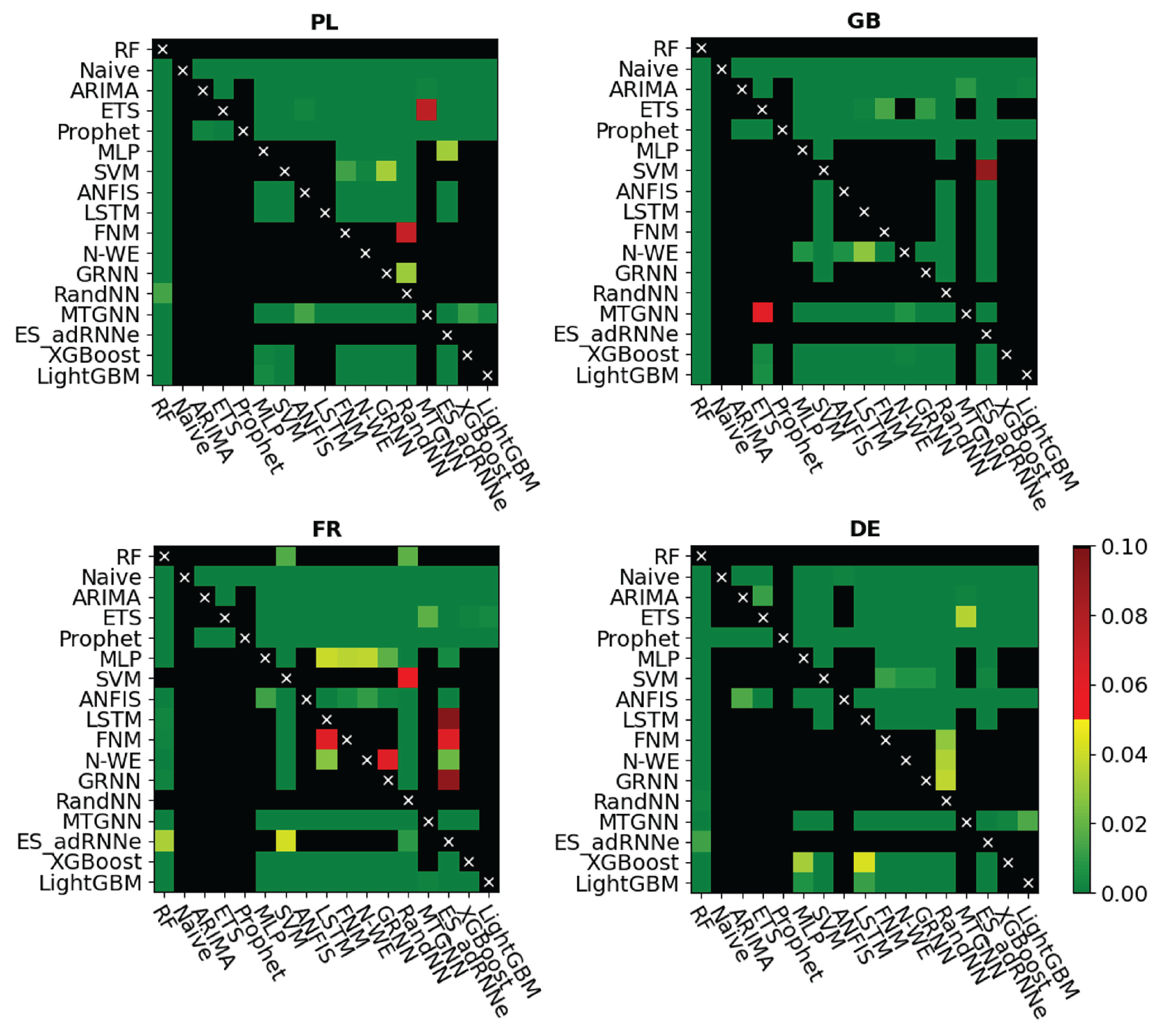

Table 5 and Figure 9 show that the proposed RF outperforms classical statistical models (ARIMA and ETS), a modern statistical model (Prophet), classical ML models (MLP, SVM, ANFIS, GRNN), modern ML models (LSTM, RandNN), similarity-based models (FNM, N-WE), as well as state-of-the-art ML models (MTGNN, ES-adRNNe) and boosted regression trees (XGBoost, LightGBM). The last two models, like the proposed RF model, also used calendar variables, and in larger numbers. However, in contrast to these models, our model uses specific time series preprocessing, which may be a decisive advantage. Our model also outperforms ES-adRNNe, which is a very sophisticated and complex model developed especially for STLF [39]. To increase its predictive power, it is equipped with a new type of RNN cell with delayed connections and inherent attention, it processes time series adaptively, learning their representation, and it learns in the cross-learning mode (i.e., it learns from many time series at the same time). It reveals its strength with a large amount of data, i.e., numerous and long time series. In our case, this condition was not met: only four relatively short series were available for training the models. In this case, the proposed RF model, which learns from individual series, generated more accurate forecasts than ES-adRNNe.

It is worth noting that RF has few hyperparameters to tune, which makes it easy to optimize (compare with DNNs, which have many hyperparameters). The results of our experiments confirmed that the number of trees in the forest should be as large as possible and the minimum leaf size can be set to one. Therefore, the key hyperparameter remains the number of predictors to sample in the nodes. Its optimal values differed significantly from the recommended default values. Our attempt to increase the performance of the RF model through alternative methods of selecting split predictors failed. Neither the curvature test nor the interaction test, which take into account the relationship between predictors and response when splitting data in nodes, improves the results significantly over the default CART method.

In our study, we used both continuous and categorical predictors. Such a mix causes many difficulties for other models such as ARIMA, ETS, NNs, SVM, LSTM and others. Categorical variables cannot be processed by these models directly. Such predictors must be converted into numerical data, so as to maintain the relationship between their values. The method of this conversion can be treated as an additional hyperparameter. RF has no problems with categorical variables, which is its big advantage. Moreover, RF can deal easily with any number of additional exogenous predictors and does not need to unify predictor ranges, which is often necessary for other models. RF can even deal with raw data because the predictors are not processed by the tree in any way, just selected in nodes, to construct a specific decision model (flowchart-like structure).
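To illustrate the conversion step that models without native categorical support require, the sketch below shows two commonly used encodings for a day-of-week variable: one-hot encoding and a cyclical sine/cosine encoding. These are generic examples chosen for illustration, not the encodings used for the baseline models in this study; the names `DAYS`, `one_hot` and `cyclical` are hypothetical.

```python
import math

DAYS = ["Mon", "Tue", "Wed", "Thu", "Fri", "Sat", "Sun"]


def one_hot(day):
    """Encode a day as a 7-element indicator vector (no ordering implied)."""
    return [1.0 if day == d else 0.0 for d in DAYS]


def cyclical(day):
    """Encode a day as a point on the unit circle, so Sunday is next to Monday."""
    angle = 2.0 * math.pi * DAYS.index(day) / len(DAYS)
    return [math.sin(angle), math.cos(angle)]


print(one_hot("Wed"))
print(cyclical("Sun"), cyclical("Mon"))
```

The choice between such encodings changes what "closeness" between categories means for the downstream model, which is why it acts as an extra hyperparameter. A tree-based model can instead split on the category directly.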

A regression tree provides fast one-pass training, which does not need to refer to the data repeatedly. In contrast, NNs, which use a variant of the gradient descent optimization algorithm with multiple passes over the dataset, are more time-consuming to train. Additionally, due to the number of hyperparameters, they are also much more expensive to optimize in terms of time than RF. The training of a tree does not provide an optimal result because decisions about data splits are made in nodes using a local rather than a global criterion, i.e., a given split may not be optimal from the point of view of the final result. However, the NN learning process also does not lead to optimal results due to its sensitivity to the starting point and its tendency to get trapped in local minima. Note that the non-optimality of the trees is mitigated by their aggregation in the forest. Aggregation also smooths out the functions modeled by individual trees and reduces their variance. The learning process of RF can be easily parallelized because the individual trees learn independently.
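The variance reduction obtained by aggregation can be made concrete with a standard identity, given here as an illustration rather than as a result of this study. Assume the K trees produce identically distributed predictions $T_k(x)$ with variance $\sigma^2$ and pairwise correlation $\rho$ (the symbols $T_k$, $\sigma^2$ and $\rho$ are introduced only for this sketch). Then the variance of the forest average is

$$
\operatorname{Var}\!\left(\frac{1}{K}\sum_{k=1}^{K} T_k(x)\right) = \rho\,\sigma^2 + \frac{1-\rho}{K}\,\sigma^2 .
$$

The second term vanishes as K grows, which is consistent with the observation that larger forests reduce the forecast variance. The first term does not depend on K, so it can only be lowered by making the trees less correlated, for instance through bootstrap sampling and random selection of predictors in the nodes.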

One useful feature of RF is that it enables the generalization error to be estimated using out-of-bag (OOB) patterns, i.e., training patterns not selected for the bootstrap sample (approximately one third of the training patterns are left out in each bootstrap sample). Therefore, the time-consuming cross-validation that is widely used in other models for estimating the generalization error is not needed. Using OOB patterns, the generalization error can be estimated during a single training session. However, for forecasting problems, where training patterns should precede validation patterns to prevent data leakage, both the OOB approach and standard cross-validation may be questionable. For this reason, we did not use the OOB approach in this study. Instead, we applied a different strategy. We chose a set of 100 validation patterns from 2014, and for each of them we trained RF on the training patterns preceding the validation pattern.
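The validation strategy described above, in which each validation pattern is forecast by a model trained only on earlier patterns, can be sketched as follows. This is a minimal illustration under stated assumptions: `fit_predict` is a hypothetical callable standing in for "train RF on the given training indices and forecast the validation pattern", and the short series and the `last_three_mean` stand-in model are invented for the example.

```python
from statistics import fmean


def time_ordered_validation(targets, validation_indices, fit_predict):
    """Return validation MAPE, training only on patterns preceding each one."""
    ape = []
    for v in validation_indices:
        train_idx = range(v)  # strictly earlier patterns, so no leakage
        forecast = fit_predict(train_idx, v)
        ape.append(100.0 * abs(targets[v] - forecast) / targets[v])
    return fmean(ape)


# Illustrative series only, not data from the study.
series = [100.0, 102.0, 98.0, 101.0, 99.0, 103.0, 100.0, 97.0]


def last_three_mean(train_idx, v):
    # Hypothetical stand-in model: average of the three most recent targets.
    recent = [series[i] for i in list(train_idx)[-3:]]
    return fmean(recent)


mape = time_ordered_validation(series, [5, 7], last_three_mean)
print(round(mape, 2))
```

Unlike OOB estimation or shuffled cross-validation, this scheme never lets a model see patterns from the future of the point being forecast, at the price of retraining once per validation pattern.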

A valuable feature of RF is its built-in mechanism for predictor selection. In each node, the predictor which improves the split criterion the most is selected. The splitting criterion favors informative predictors over noisy ones, and can completely disregard irrelevant ones. Thus, in RFs an additional feature selection procedure is unnecessary. Based on the internal mechanism for selecting predictors, the predictor importance or strength can be estimated. The importance measure attributed to the splitting predictor is the accumulated improvement this predictor gives to the split criterion at each split in each tree. RF also offers another method of estimating the predictor importance based on the OOB patterns [27]. When the tree is grown, the OOB patterns are passed down the tree, and the prediction error is recorded. Then the values for the given predictor are randomly permuted across the OOB samples, and the error is computed again. The importance measure is defined as the increase in error. This measure is computed for every tree, then averaged over the entire forest and divided by the standard deviation over the entire forest. Such a measure is presented in Figure 8. Note that information about the predictor importance is a key factor, which helps to improve the interpretability of the model and can be used for feature selection for other models.
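The OOB permutation importance described above can be sketched in a few lines. The sketch makes several assumptions that are not taken from the study: trees are represented as plain Python callables, the error is the mean squared error, the population standard deviation is used for normalization, and the unnormalized mean is returned when the spread over trees is zero. All names and the toy data are hypothetical.

```python
import random
from statistics import fmean, pstdev


def mse(tree, X, y):
    """Mean squared error of one tree on the given patterns."""
    return fmean([(tree(x) - t) ** 2 for x, t in zip(X, y)])


def oob_permutation_importance(trees, oob_sets, X, y, predictor, seed=0):
    """Mean OOB error increase after permuting one predictor, over its spread."""
    rng = random.Random(seed)
    increases = []
    for tree, oob in zip(trees, oob_sets):
        X_oob = [list(X[i]) for i in oob]  # copies, so X is not modified
        y_oob = [y[i] for i in oob]
        base_error = mse(tree, X_oob, y_oob)
        column = [row[predictor] for row in X_oob]
        rng.shuffle(column)  # permute the predictor across OOB patterns
        for row, value in zip(X_oob, column):
            row[predictor] = value
        increases.append(mse(tree, X_oob, y_oob) - base_error)
    mean_increase = fmean(increases)
    spread = pstdev(increases)
    return mean_increase / spread if spread > 0 else mean_increase


# Toy setup: the target depends on predictor 0 only; "trees" are plain functions.
X = [[float(i), float((7 * i) % 5)] for i in range(10)]
y = [2.0 * row[0] for row in X]
trees = [lambda x: 2.0 * x[0], lambda x: 2.0 * x[0] + 0.5]
oob_sets = [[0, 2, 4, 5, 7, 9], [1, 3, 4, 6, 8, 9]]

importance_x0 = oob_permutation_importance(trees, oob_sets, X, y, predictor=0)
importance_x1 = oob_permutation_importance(trees, oob_sets, X, y, predictor=1)
print(importance_x0, importance_x1)
```

In this toy setup, permuting the unused predictor leaves every tree's error unchanged, so its importance is zero, while permuting the predictor the trees rely on increases the OOB error.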

Model interpretability is an emerging area in ML that aims to make the model more transparent and strengthen confidence in its results. This topic is also explored in the electricity demand forecasting literature [44]. In [45], it was shown that the predictor importance is related to the model sensitivity to inputs and also to the method of importance estimation. An LSTM-based model, which is proposed in [45], is equipped with a built-in mechanism based on a mixture attention technique for temporal importance estimation of predictors. In the experimental study, this model demonstrated higher sensitivity to inputs than tree-based models (RF and XGBoost), which showed very low sensitivity to all predictors except one, which strongly dominated (the authors used built-in functions of scikit-learn to calculate the predictor importance for tree-based models). In our study, the predictor importance is more diverse (see Figure 8), which may result from the fact that our trees are very deep and thus involve a great number of predictors. Note that tree-based models enhance interpretability not only through built-in mechanisms of predictor importance estimation, which show the predictive power of individual predictors, but also through their flowchart-like tree structure. They can be interpreted simply by plotting a tree and observing how the splits are made and how the leaves are arranged. It should be noted, however, that while following the path that a single tree takes to make a decision is trivial and self-explanatory, following the paths of hundreds of trees in the ensemble is much more difficult. To facilitate this, in [46], model compression methods were proposed that transform a tree ensemble into a single tree that approximates the same decision function.

In this study, we use a standard RF formulation, which is a MISO model producing point forecasts. Thus, to predict the 24 values of the daily curve of electricity demand, we need to train 24 RF models. In [32], we proposed a multivariate regression tree for STLF, which produces a vector as an output, representing the 24 predicted values. Using such MIMO trees as ensemble members simplifies and speeds up the forecasting process. A promising extension of RF in the direction of probabilistic forecasting can be achieved using a quantile regression forest, which can infer the full conditional distribution of the response variable for high-dimensional predictor variables [47].