Linear Power Flow Method Improved With Numerical Analysis Techniques Applied to a Very Large Network

Abstract

1. Introduction

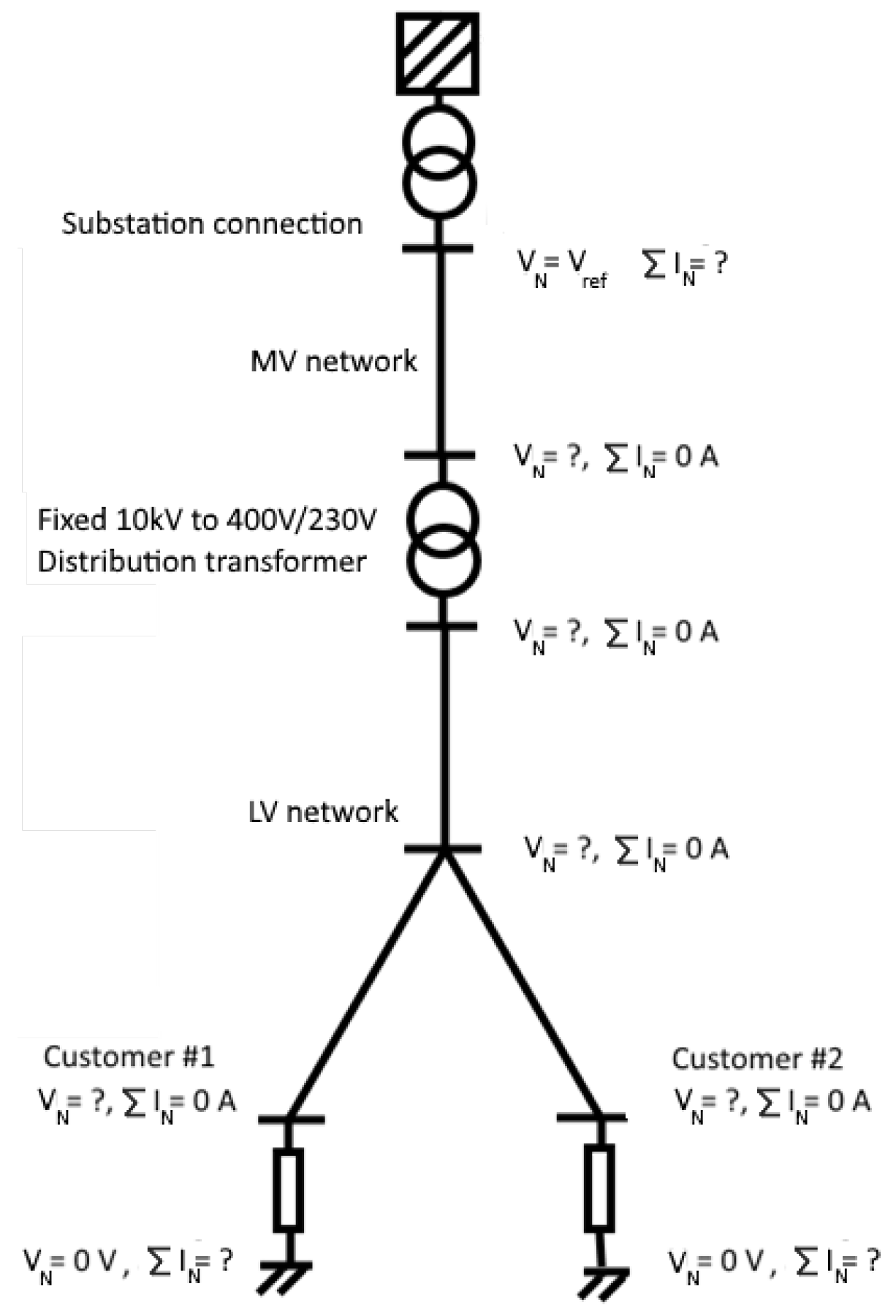

2. Linear Power Flow Problem

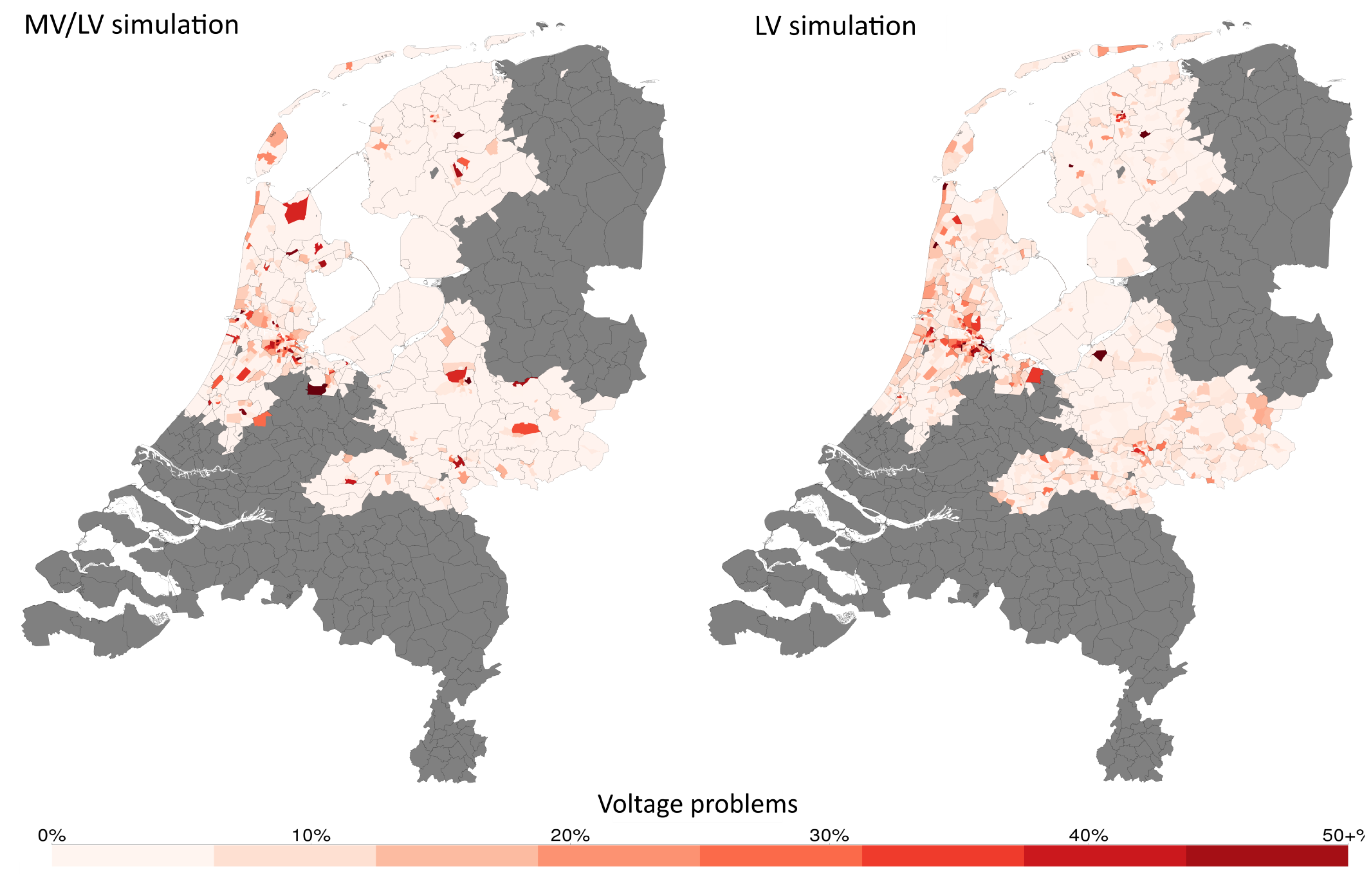

2.1. Solving in Terms of Only Real Numbers

2.1.1. Neglecting Imaginary Parts

2.1.2. Reformulating Equations with Complex Numbers

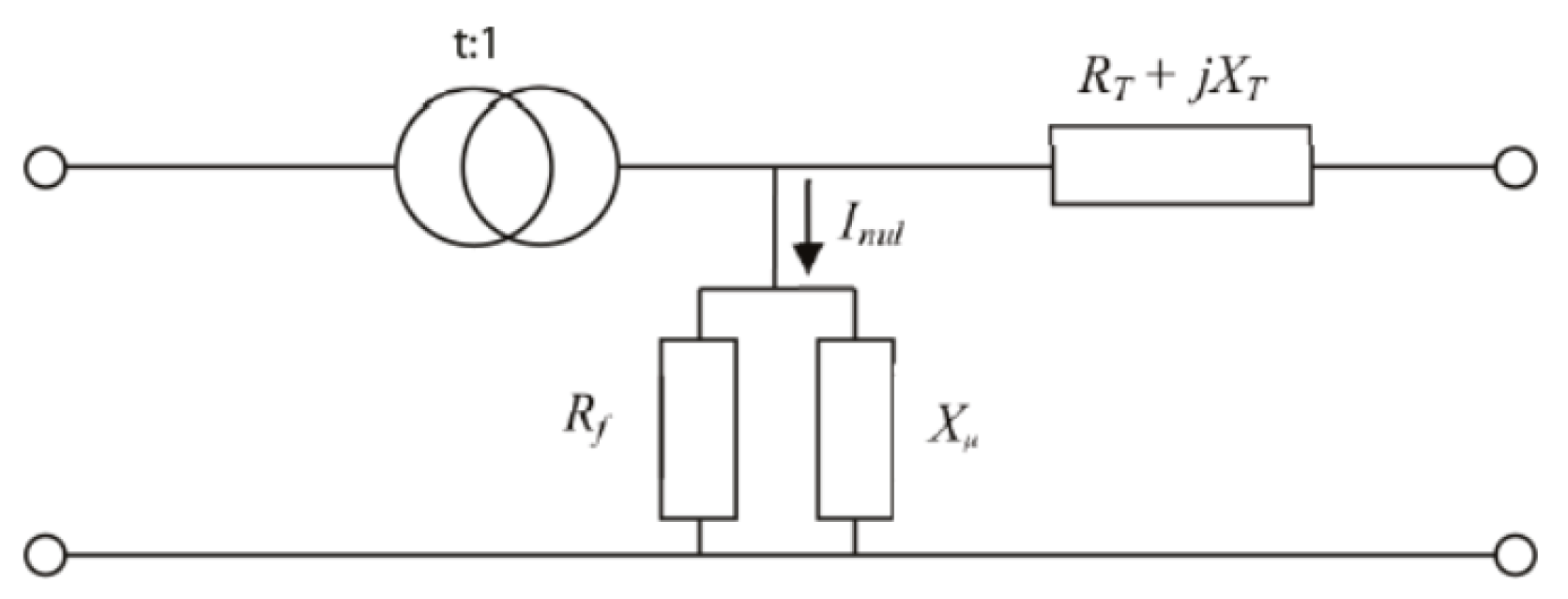

2.2. Modelling MV/LV Transformers

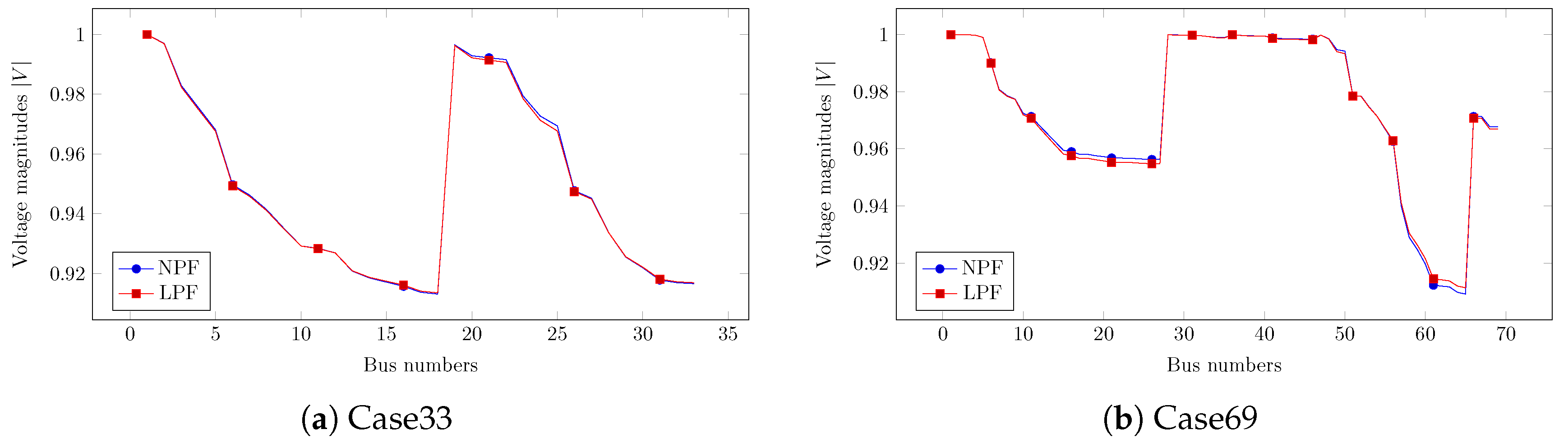

3. Comparison between Linear and Nonlinear Power Flow Problems

3.1. Comparison to the Newton Power Flow Method

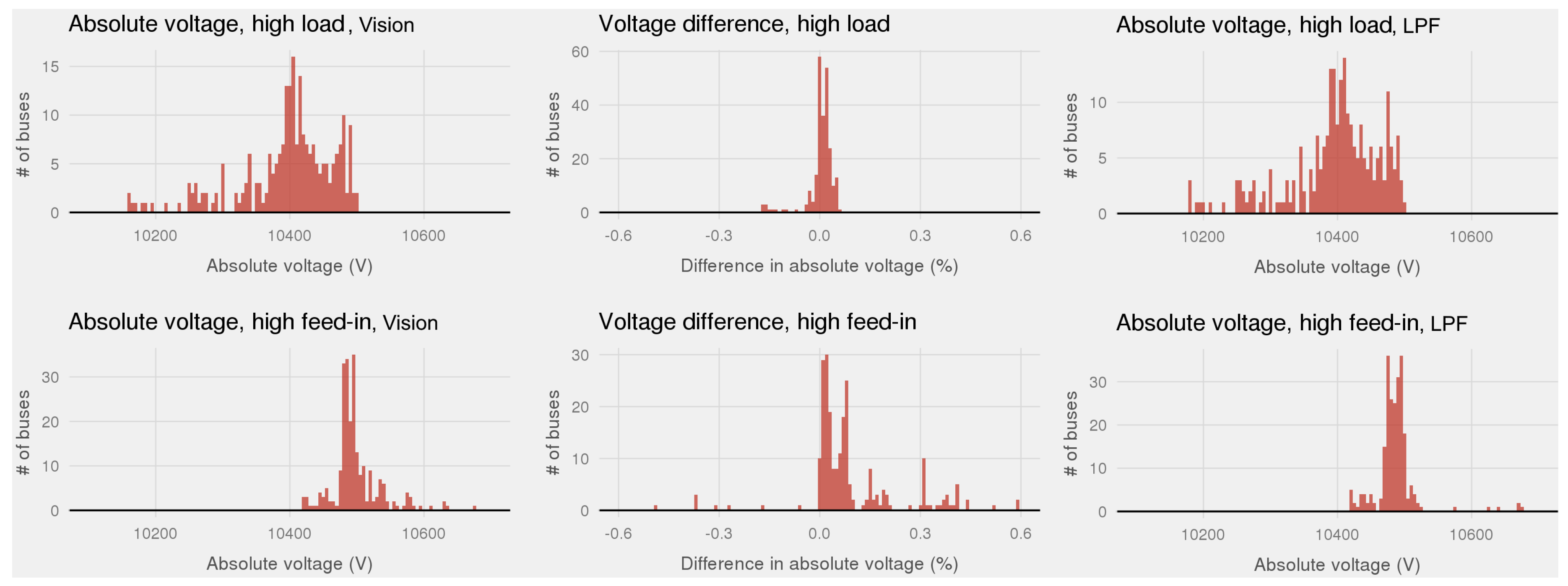

3.2. Comparison to a Commercial Power Flow Software Vision

4. Case Study of Large Dutch Power Grid (LLPF)

4.1. Data and Assumptions

4.2. Simulation Results

5. Application of Numerical Analysis Techniques on the LLPF Problem

5.1. LLPF Problem With Real Components

5.2. LLPF Problem With Complex Components

6. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Meaning |
|---|---|
| AMD | Approximate Minimum Degree |
| BFS | Backward-Forward Sweep |
| BiCGSTAB | Bi-Conjugate Gradient Stabilized |
| CG | Conjugate Gradient |
| DC | Direct Current |
| DNO | Distribution Network Operator |
| FDLF | Fast Decoupled Load Flow |
| GMRES | Generalized Minimal RESidual |
| G-S | Gauss-Seidel |
| IC | Incomplete Cholesky |
| ILU | Incomplete LU decomposition |
| LLPF | Large Linear Power Flow |
| LPF | Linear Power Flow |
| LU | Lower and Upper triangular matrix decomposition |
| LV | Low Voltage |
| MV | Medium Voltage |
| NA | Numerical Analysis |
| NNZ | Number of Non-Zeros |
| NPF | Nonlinear Power Flow |
| N-R | Newton power flow |
| PCG | Preconditioned Conjugate Gradient |
| RCM | Reverse Cuthill-McKee |
| RES | Renewable Energy Sources |
| SPD | Symmetric and Positive Definite |
| ZI | Combination of constant impedance Z and constant current I load models |
| ZIP | Combination of ZI and constant power P load models |


| Test Cases | CPU Time (s): LPF | CPU Time (s): NPF & Iterations | | |
|---|---|---|---|---|
| Case33 | 0.0005 | 0.0039 & 3 it | 8.3946 | |
| Case69 | 0.0006 | 0.0047 & 4 it | 8.3585 | 0.0011 |
| Case991 | 0.0016 | 0.0117 & 3 it | 7.2025 | |


| Algorithms | Time & Iter | NNZ | |
|---|---|---|---|
| | 14.32 s | | |
| | 7.12 s | 0 | |
| + RCM | 6.94 s | | |
| Cholesky | 152.2 s | | |
| + RCM | 5.01 s | | |
| PCG(IC(0)) + RCM | NA | NA | |
| PCG(Cholesky) + RCM | 6.24 s & 1 it | | |
| PCG(IC()) + RCM | 6.65 s & 4 it | | |
| PCG(IC()) + RCM | 4.96 s & 1 it | | |
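The PCG rows in the table above refer to the Preconditioned Conjugate Gradient method applied to an SPD system. As a minimal sketch of the iteration only, the Python below solves a small hypothetical 3x3 SPD system. Two assumptions differ from the reported setup: a Jacobi (diagonal) preconditioner stands in for the incomplete Cholesky factor, and no RCM reordering is applied. The matrix, right-hand side, and function names are illustrative and are not taken from the paper.

```python
import math


def matvec(A, x):
    """Dense matrix-vector product for a list-of-lists matrix."""
    return [sum(a * v for a, v in zip(row, x)) for row in A]


def dot(u, v):
    """Euclidean inner product of two equal-length lists."""
    return sum(a * b for a, b in zip(u, v))


def pcg(A, b, rel_tol=1e-10, max_iter=100):
    """Preconditioned CG for an SPD matrix A.

    Assumption: a Jacobi (diagonal) preconditioner is used here as a
    simple stand-in for incomplete Cholesky. Returns (x, iterations).
    """
    n = len(b)
    inv_diag = [1.0 / A[i][i] for i in range(n)]
    x = [0.0] * n
    r = list(b)  # residual b - A x for the zero initial guess
    b_norm = math.sqrt(dot(b, b))
    if b_norm == 0.0:
        return x, 0
    z = [d * ri for d, ri in zip(inv_diag, r)]  # preconditioned residual
    p = list(z)
    rz = dot(r, z)
    for k in range(1, max_iter + 1):
        Ap = matvec(A, p)
        alpha = rz / dot(p, Ap)
        x = [xi + alpha * pi for xi, pi in zip(x, p)]
        r = [ri - alpha * api for ri, api in zip(r, Ap)]
        # Stop on the relative residual norm, as a relative tolerance would.
        if math.sqrt(dot(r, r)) <= rel_tol * b_norm:
            return x, k
        z = [d * ri for d, ri in zip(inv_diag, r)]
        rz_new = dot(r, z)
        beta = rz_new / rz
        p = [zi + beta * pi for zi, pi in zip(z, p)]
        rz = rz_new
    return x, max_iter


# Hypothetical SPD test system whose exact solution is [1, 2, 3].
A = [[4.0, -1.0, 0.0],
     [-1.0, 4.0, -1.0],
     [0.0, -1.0, 4.0]]
b = [2.0, 4.0, 10.0]
x, iters = pcg(A, b)
```

A stronger preconditioner generally lowers the iteration count at the cost of a more expensive setup, which is the trade-off the Time & Iter column reflects.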

| Time & Iter | Relative Tolerance for PCG | | |
|---|---|---|---|
| & | | | |
| Drop tolerance for IC | 4.96 s & 1 it | 4.96 s & 1 it | |
| & | & | | |
| 4.96 s & 1 it | 4.96 s & 1 it | | |
| & | & | | |

| Algorithms | Time & Iter | NNZ | |
|---|---|---|---|
| Equation (15) | 42.6 s | 0 | |
| Equation (9): | 17.23 s | | |
| + RCM | 15.58 s | | |
| LU + RCM | 7.41 s | | |
| GMRES(ilu(0)) + RCM | 177.86 s & 20 it | | |
| BiCGSTAB(ilu(0)) + RCM | 56.21 s & 20 it | | |
| GMRES(ilu()) + RCM | 18.75 s & 2 it | | |
| GMRES(ilu()) + RCM | 13.78 s & 1 it | | |
| GMRES(ilu()) + RCM | 14.27 s & 1 it | | |
| BiCGSTAB(ilu()) + RCM | 10.57 s & 0.5 it | | |
| BiCGSTAB(ilu()) + RCM | 10.77 s & 0.5 it | | |
| BiCGSTAB(ilu()) + RCM | 10.92 s & 0.5 it | | |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Sereeter, B.; van Westering, W.; Vuik, C.; Witteveen, C. Linear Power Flow Method Improved With Numerical Analysis Techniques Applied to a Very Large Network. Energies 2019, 12, 4078. https://doi.org/10.3390/en12214078
