Forecasting Energy Use in Buildings Using Artificial Neural Networks: A Review

Abstract

1. Introduction

1.1. Rationale

1.2. Objectives

- What are the overall trends of the deployment of ANN models for building energy forecasting? What are the forecasting model types applied? What varieties of ANN models have been deployed? How have their architectures (hyperparameters) been selected?

- What information can be obtained from the case studies they have been applied to? What are their target variables and application levels? What type of data have they been trained on? What are the performance ranges for each forecast horizon?

- What new trends are emerging in ANNs, and how are they being applied to building energy forecasting? Will these trends help the deployment of ANNs for energy forecasting in other fields?

2. A Brief Explanation of Forecasting and Artificial Neural Networks

2.1. Forecasting Defined

- Prediction is the estimation of the value of a dependent (target) variable at the present time (t) when the values of all model inputs (regressors) are known, from measurements or calculations, at the present time (t) and/or past times (t − 1, t − 2, …). In other words, prediction is the estimation of current values based on current and past conditions.

- Forecast is the estimation of the value of a dependent (target) variable at future times, e.g., (t + 1, t + 2, …), when the values of all model inputs (regressors) are known, from measurements or calculations, at the present time (t) and/or past times (t − 1, t − 2, …). The forecasted values of a regressor at future times, e.g., (t + 1, t + 2, …), can also be used as inputs. In other words, forecasting is the estimation of future values based on current and past conditions.

- Estimation is, according to the Oxford definition [22], the action “to roughly calculate the value, number, quantity or extent of.”
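The distinction between prediction and forecasting can be illustrated by how the training pairs are assembled from a time series. The following is a minimal sketch, not a method taken from the reviewed studies: the function `make_pairs`, its parameters `n_lags` and `horizon`, and the two synthetic series are hypothetical names and data introduced only for illustration. With `horizon = 0` the target is the value at the present time t (prediction); with `horizon > 0` the target lies at a future time t + horizon (forecast), while the inputs remain the current and past regressor values.

```python
import numpy as np


def make_pairs(x, y, n_lags, horizon):
    """Build (inputs, target) pairs from aligned series x (regressor) and y (target).

    horizon = 0 -> prediction: estimate y[t] from x[t], x[t-1], ..., x[t-n_lags]
    horizon > 0 -> forecast:   estimate y[t + horizon] from the same inputs
    """
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    inputs, targets = [], []
    # t must leave room for n_lags past values and `horizon` future steps
    for t in range(n_lags, len(x) - horizon):
        # Reverse the window so the row reads x[t], x[t-1], ..., x[t-n_lags]
        inputs.append(x[t - n_lags : t + 1][::-1])
        targets.append(y[t + horizon])
    return np.array(inputs), np.array(targets)


# Hypothetical data: x could be outdoor temperature, y could be electric demand
x = np.arange(10.0)
y = 100.0 + np.arange(10.0)

X_pred, y_pred = make_pairs(x, y, n_lags=2, horizon=0)  # prediction pairs
X_fore, y_fore = make_pairs(x, y, n_lags=2, horizon=1)  # one-step-ahead forecast pairs
```

Either set of pairs could then be passed to any regression model, including an ANN; only the alignment of the target changes between the two tasks.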

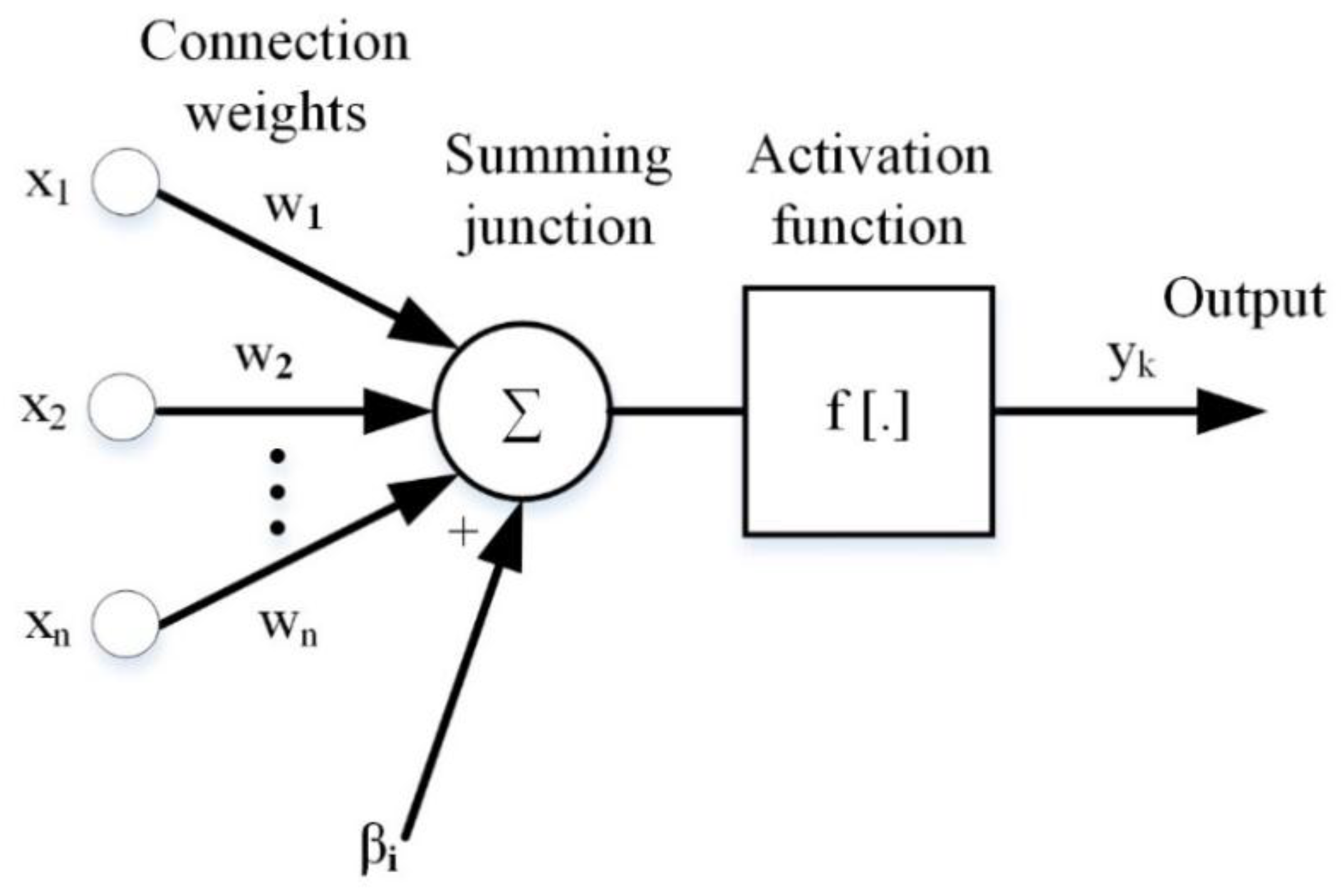

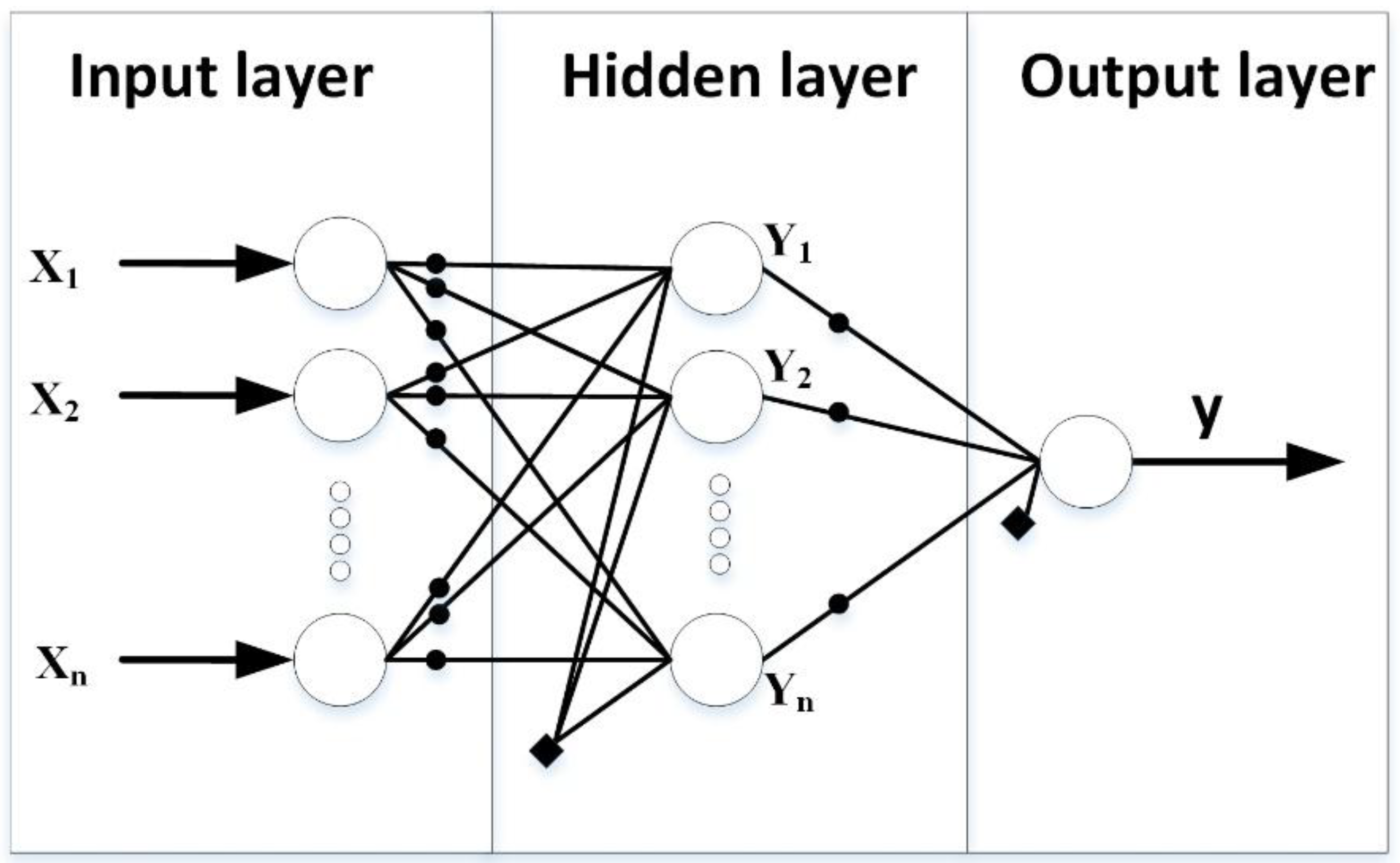

2.2. Overview of Artificial Neural Networks

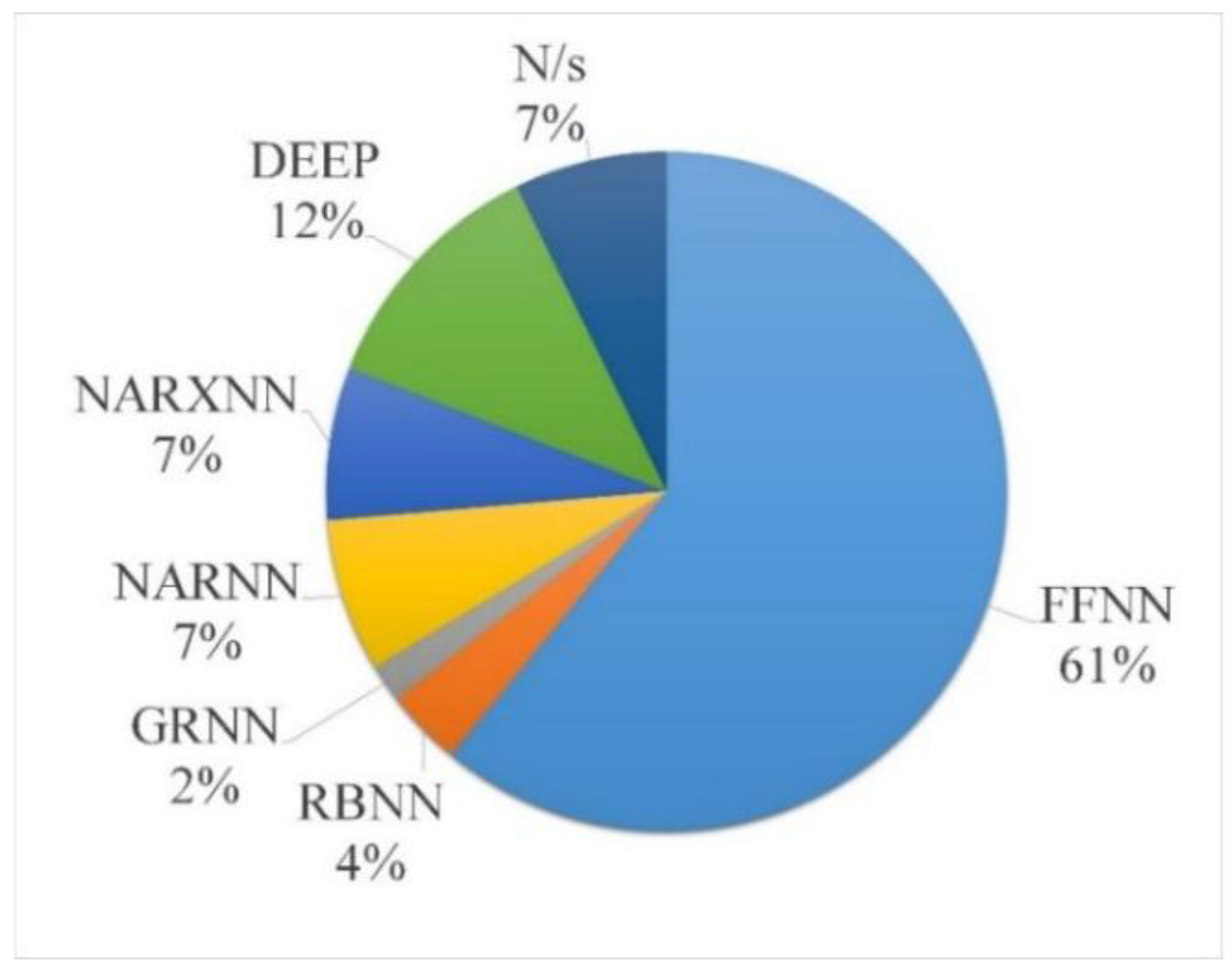

2.3. Varieties of Artificial Neural Networks

2.4. Neural Network Architecture Selection

2.4.1. Heuristics

2.4.2. Cascade-Correlation

2.4.3. Evolutionary Algorithms (EA)

2.4.4. Automated Architectural Search

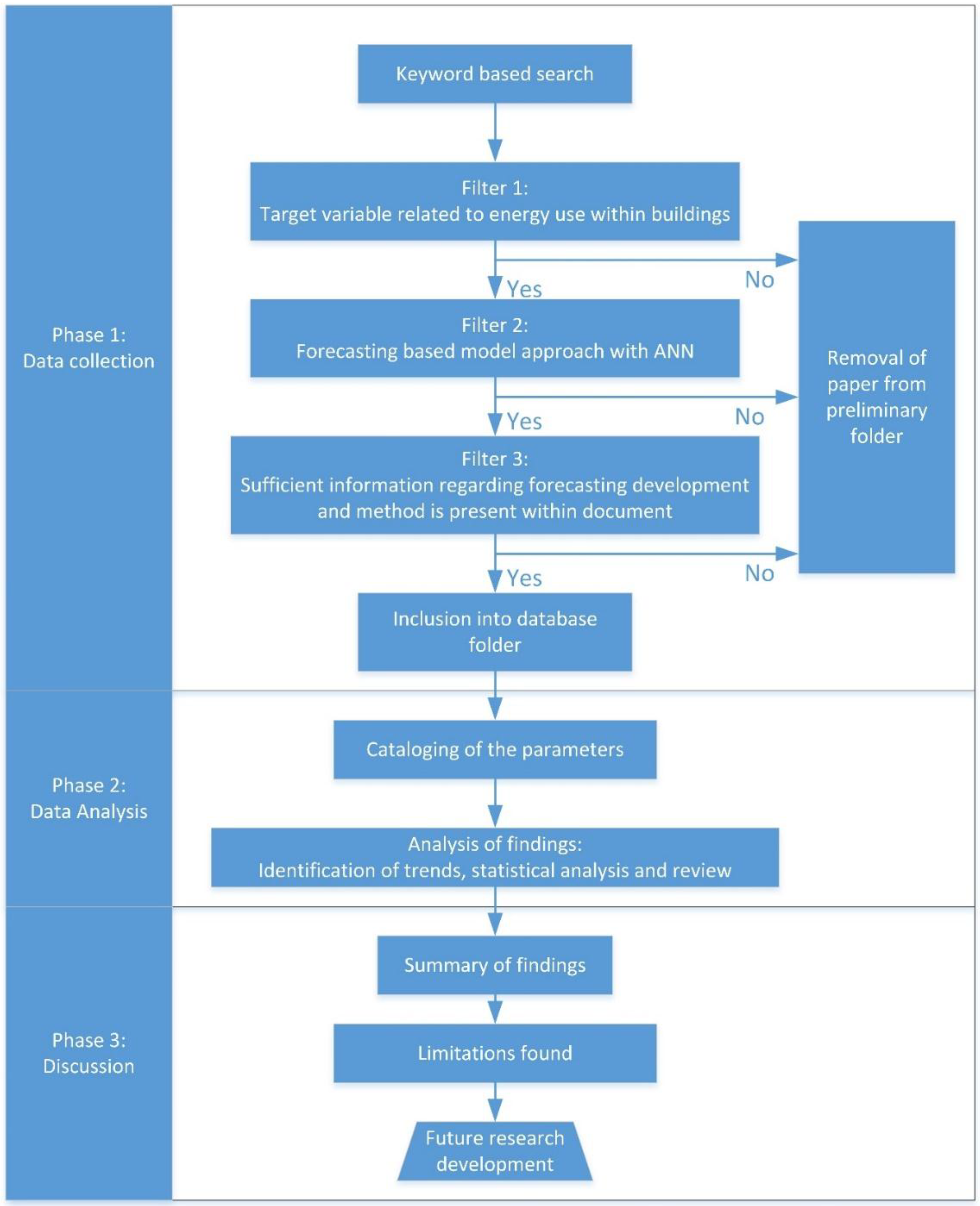

3. Methodology of the Literature Review

4. Data Analysis

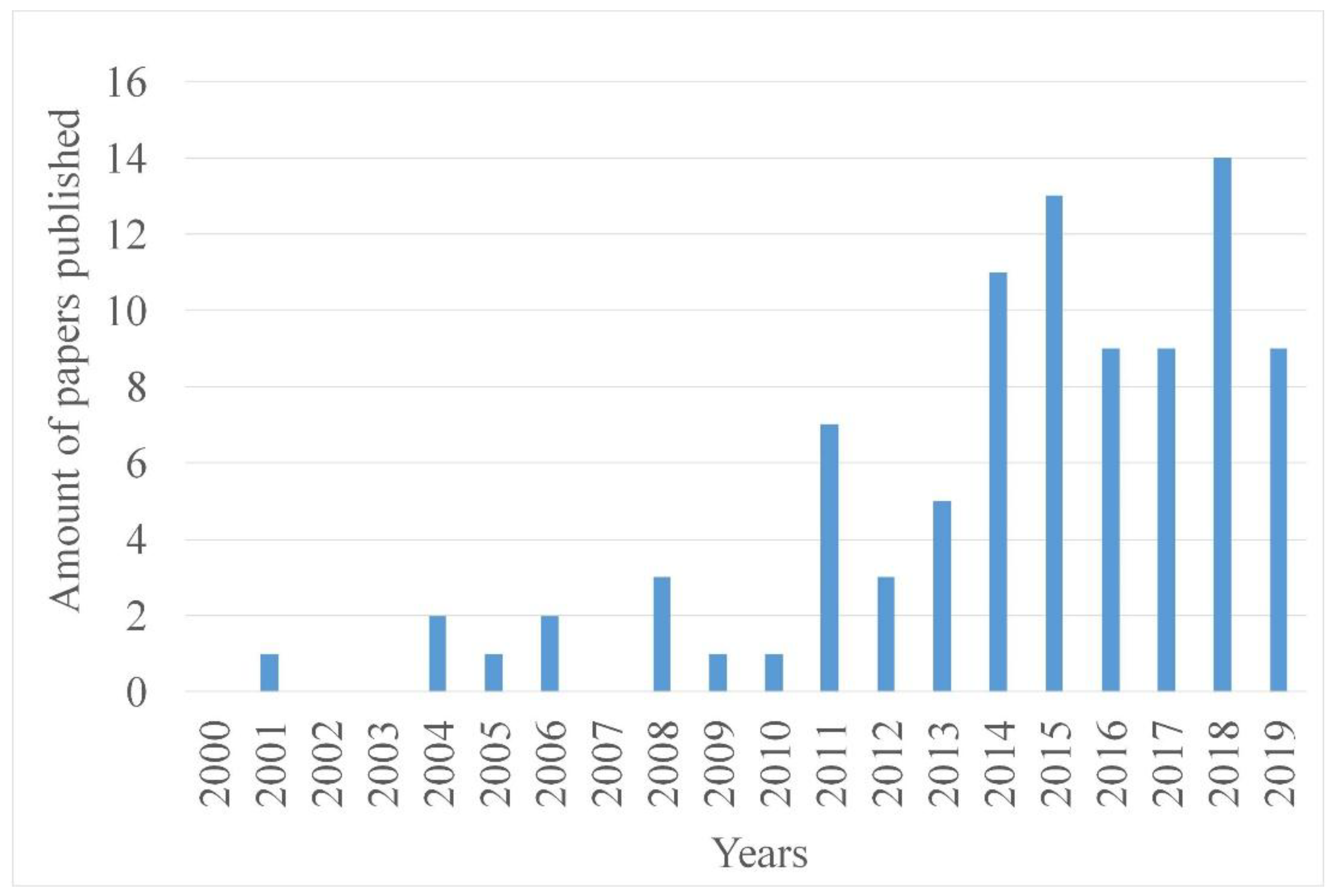

4.1. Year of Publication

4.2. Purpose

4.3. Case Study Locations

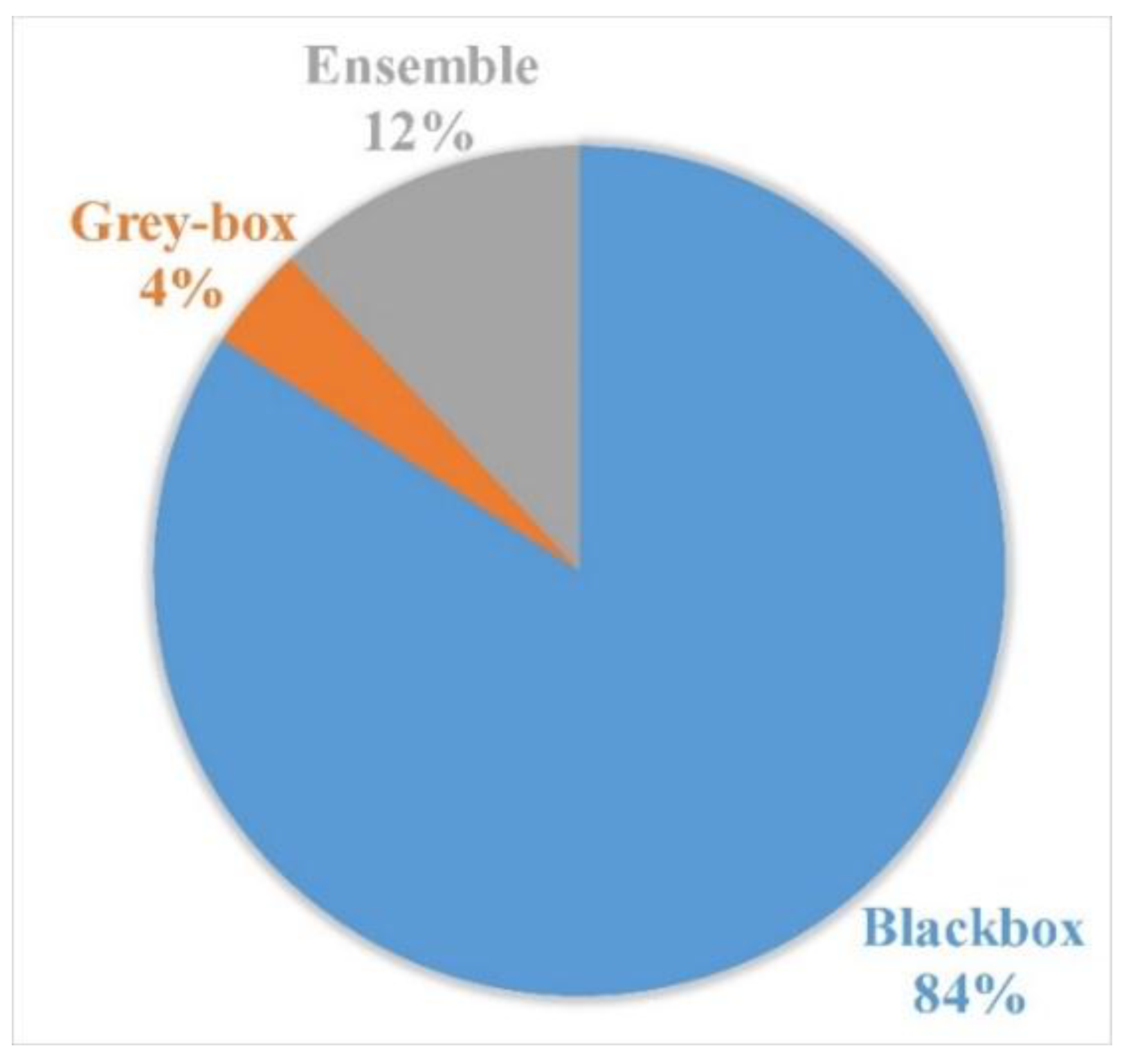

4.4. Forecasting Model Type

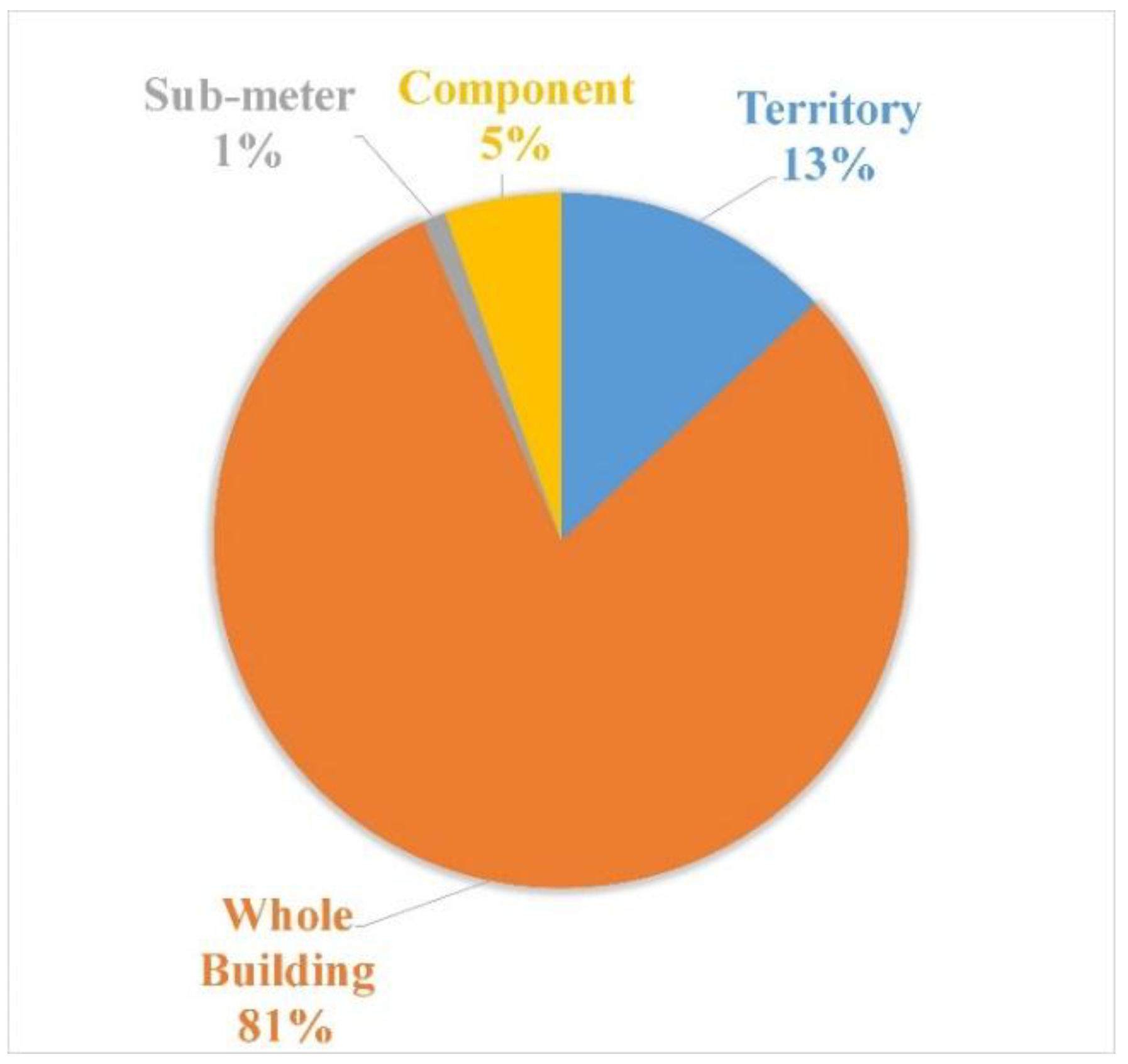

4.5. Application Level

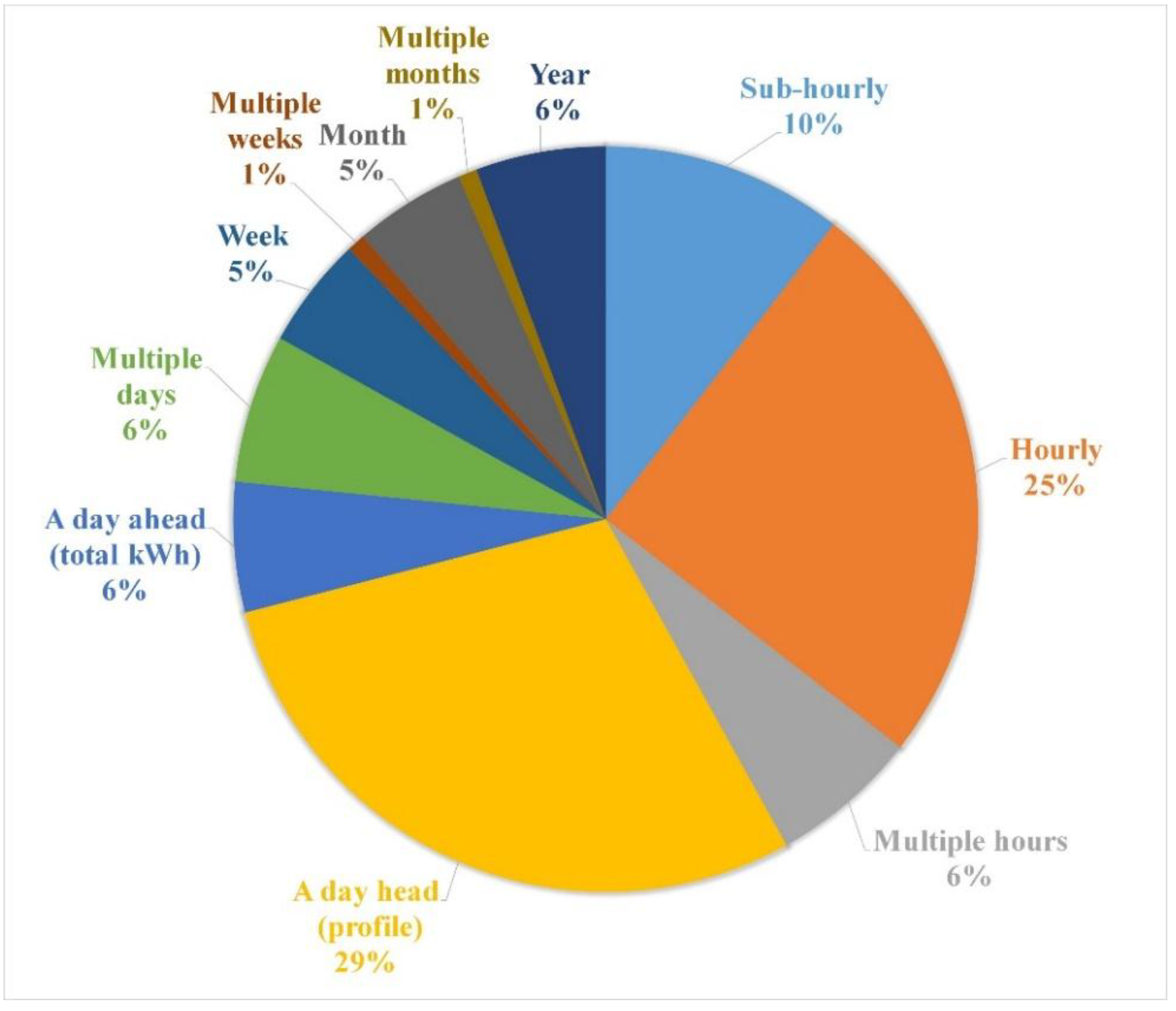

4.6. Forecast Horizon

4.7. Target Variables

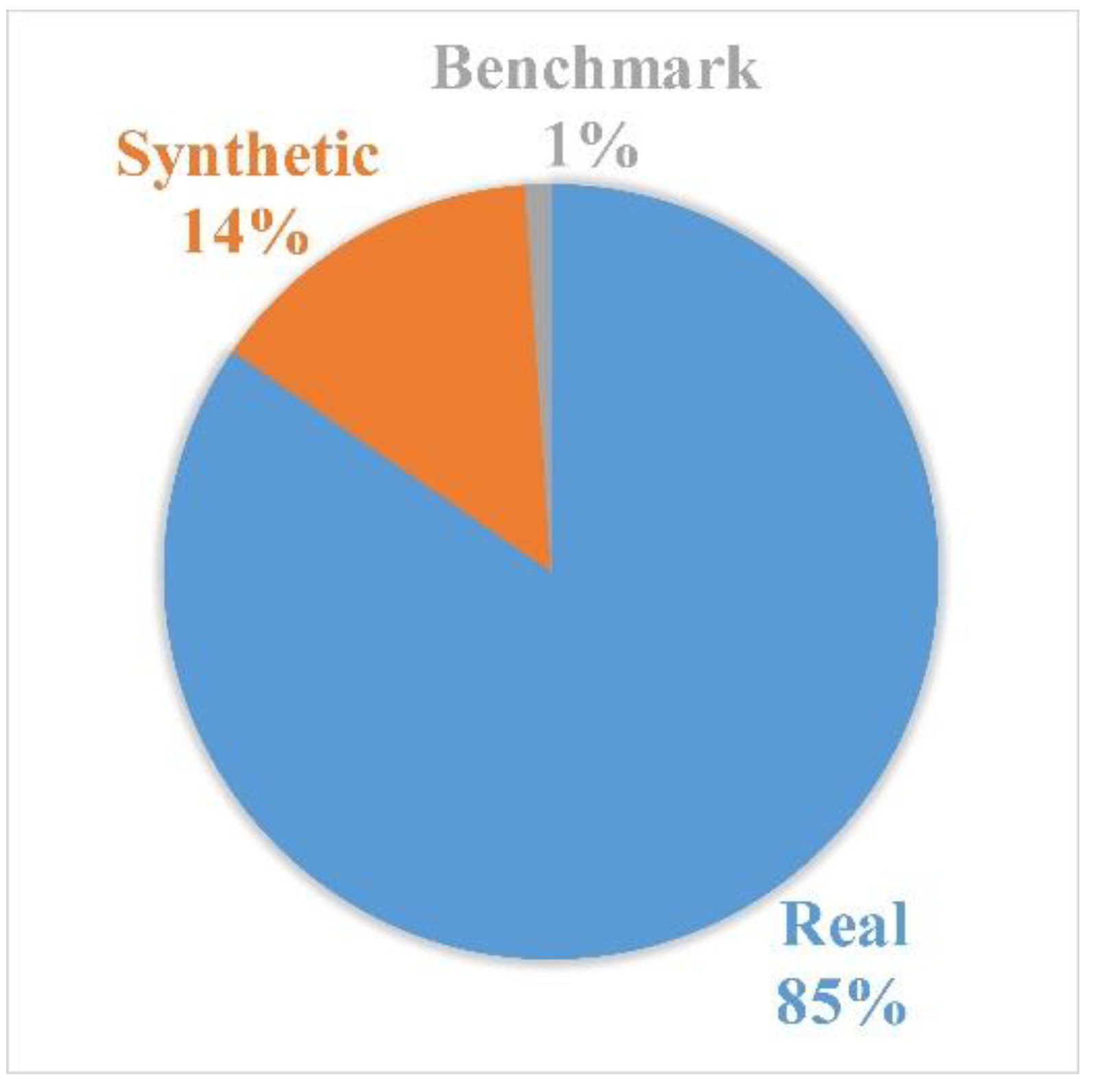

4.8. Data Sources

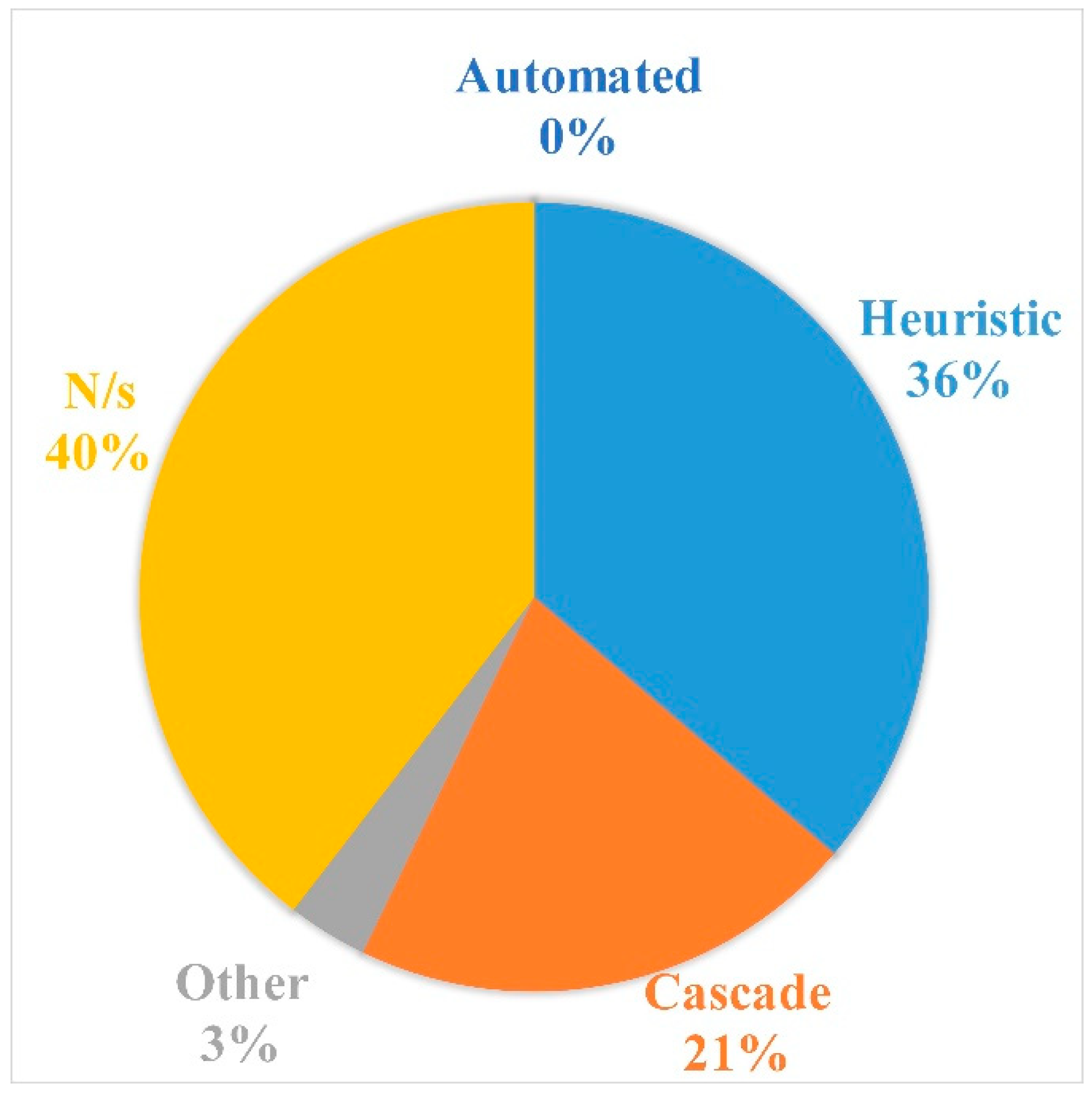

4.9. ANN Architecture Selection

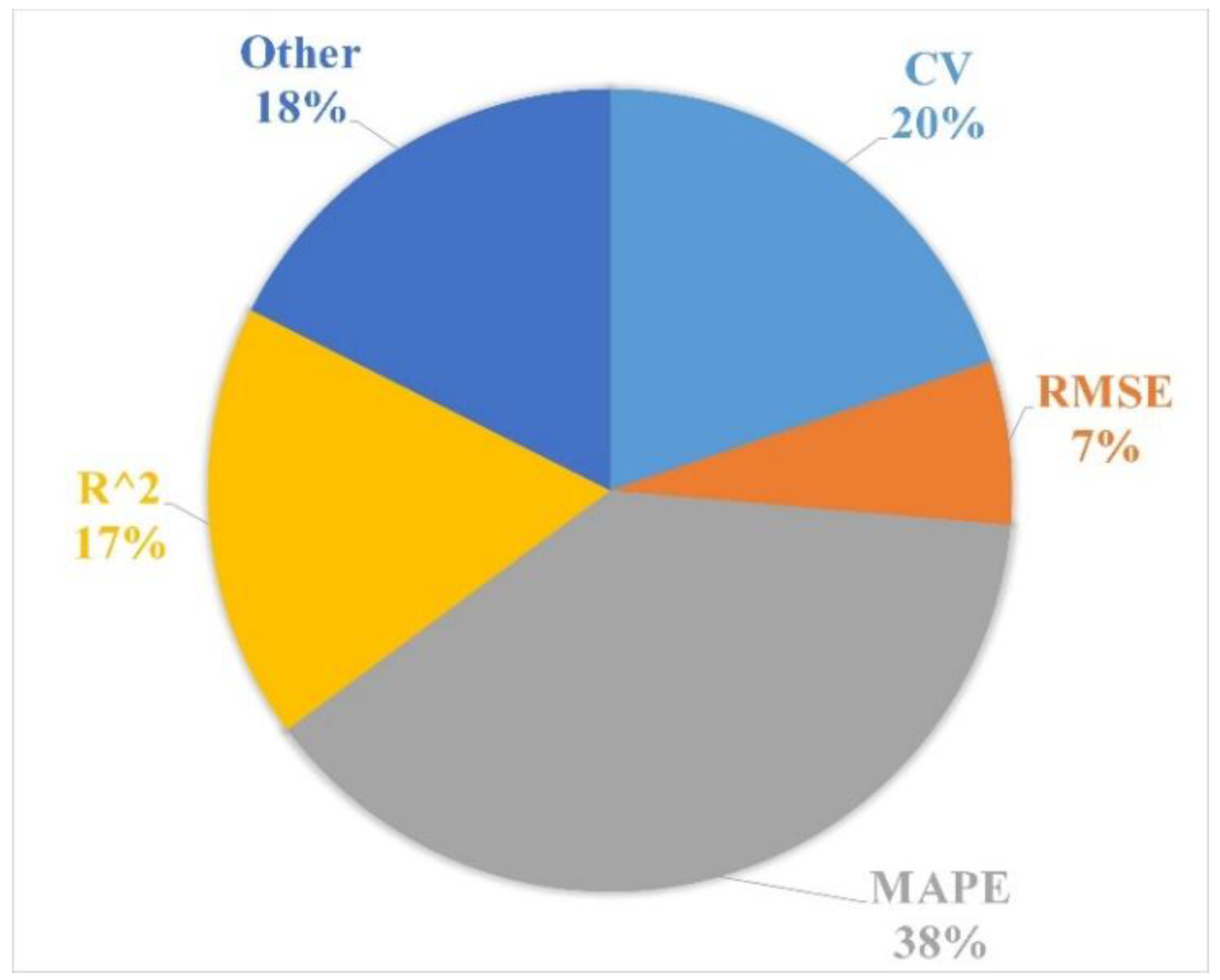

4.10. Performance Metrics

5. Discussion

5.1. Summary of Findings

5.2. Summary of ANN Forecasting Performance

5.3. Limitations of Using the ANN Forecasting Models

5.4. Future Areas of Research

- The development and application of ensemble forecasting models. The ensemble models deployed so far have shown good performance and may help improve forecasting stability. Further work is therefore needed to explore such models for extended forecast horizons, occupant-driven loads, components, etc.;

- Many studies deployed ANNs as black-box models. However, one of the major drawbacks of such models is the lack of insight into the system's governing equations. Further research should focus on the application of grey-box models, because of their flexibility and because they help in understanding the relationship between the regressors and the target variable;

- Additionally, research could explore the use of different neural network varieties, with an emphasis on recurrent neural networks and deep learning algorithms. Applications in other fields have shown promising results, so these varieties may help improve energy-based modeling within buildings. However, as this is a new area of research, many gaps remain;

- Furthermore, the development and application of automated architecture selection methods may help improve the performance of energy forecasting. Such methods would reduce the time needed to develop forecasting models and remove the need for expert knowledge of ANN development. This may also help with the reproducibility of results. Additionally, developments here would be transferable to other fields that apply ANNs;

- Finally, the development of more occupancy forecasting models may help improve energy efficiency strategies. Occupancy and occupant-driven loads have received little attention, despite being a primary factor in many internal loads and in buildings dominated by occupant-driven loads. Further research could help with a variety of energy-based strategies and tasks, including thermal comfort, lighting, sub-metering, and appliance-based strategies.
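As an illustration of the ensemble idea raised in the first item above, the sketch below combines the forecasts of several members by simple or weighted averaging. This is only one possible combination scheme and is not claimed to be the one used in the reviewed studies; the function name `ensemble_forecast` and the member forecasts are hypothetical.

```python
import numpy as np


def ensemble_forecast(member_forecasts, weights=None):
    """Combine member forecasts (n_members x n_steps) by (weighted) averaging."""
    members = np.asarray(member_forecasts, dtype=float)
    # With weights=None, np.average reduces to the plain mean over members
    return np.average(members, axis=0, weights=weights)


# Hypothetical three-step-ahead forecasts from three separately trained models
members = [
    [10.0, 12.0, 14.0],
    [11.0, 11.0, 15.0],
    [9.0, 13.0, 13.0],
]

combined = ensemble_forecast(members)
weighted = ensemble_forecast(members, weights=[0.5, 0.25, 0.25])
```

Averaging tends to cancel errors that differ between members, which is one reason an ensemble may give more stable forecasts than any single member.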

6. Conclusions

Supplementary Materials

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

- NRCan. Energy Use Data Handbook. Available online: http://www.statcan.gc.ca/access_acces/alternative_alternatif.action?teng=57-003-x2016001-eng.pdf&tfra=57-003-x2016001-fra.pdf&l=eng&loc=57-003-x2016001-eng.pdf (accessed on 20 September 2013).

- American Society of Heating, Refrigerating and Air-Conditioning Engineers. ASHRAE handbook: Fundamentals. In American Society of Heating, Refrigerating and Air-Conditioning Engineers; ASHRAE: Atlanta, GA, USA, 2009.

- Robinson, C.; Dilkina, B.; Hubbs, J.; Zhang, W.; Guhathakurta, S.; Brown, M.A.; Pendyala, R.M. Machine learning approaches for estimating commercial building energy consumption. Appl. Energy 2017, 208, 889–904.

- Wang, L.; Kubichek, R.; Zhou, X. Adaptive learning based data-driven models for predicting hourly building energy use. Energy Build. 2018, 159, 454–461.

- Kontokosta, C.E.; Tull, C. A data-driven predictive model of city-scale energy use in buildings. Appl. Energy 2017, 197, 303–317.

- Breiman, L. Statistical Modeling: The Two Cultures. Stat. Sci. 2001, 16, 199–231.

- Yu, Z.; Haghighat, F.; Fung, B.C.M.; Yoshino, H. A decision tree method for building energy demand modeling. Energy Build. 2010, 42, 1637–1646.

- Wang, Z.; Wang, Y.; Zeng, R.; Srinivasan, R.S.; Ahrentzen, S. Random Forest based hourly building energy prediction. Energy Build. 2018, 171, 11–25.

- Li, C.; Tao, Y.; Ao, W.; Yang, S.; Bai, Y. Improving forecasting accuracy of daily enterprise electricity consumption using a random forest based on ensemble empirical mode decomposition. Energy 2018, 165, 1220–1227.

- Touzani, S.; Granderson, J.; Fernandes, S. Gradient boosting machine for modeling the energy consumption of commercial buildings. Energy Build. 2018, 158, 1533–1543.

- Wahid, F.; Kim, D. A Prediction Approach for Demand Analysis of Energy Consumption Using K-Nearest Neighbor in Residential Buildings. Int. J. Smart Home 2016, 10, 97–108.

- Monfet, D.; Corsi, M.; Choinière, D.; Arkhipova, E. Development of an energy prediction tool for commercial buildings using case-based reasoning. Energy Build. 2014, 81, 152–160.

- Le Cam, M.; Zmeureanu, R.; Daoud, A. Cascade-based short-term forecasting method of the electric demand of HVAC system. Energy 2016, 119, 1098–1107.

- Zhao, H.-X.; Magoulès, F. A review on the prediction of building energy consumption. Renew. Sustain. Energy Rev. 2012, 16, 3586–3592.

- Daut, M.A.M.; Hassan, M.Y.; Abdullah, H.; Rahman, H.A.; Abdullah, M.P.; Hussin, F. Building electrical energy consumption forecasting analysis using conventional and artificial intelligence methods: A Review. Renew. Sustain. Energy Rev. 2017, 70, 1108–1118.

- Wang, Z.; Srinivasan, R.S. A review of artificial intelligence based building energy use prediction: Contrasting the capabilities of single and ensemble prediction models. Renew. Sustain. Energy Rev. 2017, 75, 796–808.

- Amasyali, K.; El-Gohary, N.M. A review of data-driven building energy consumption prediction studies. Renew. Sustain. Energy Rev. 2018, 81, 1192–1205.

- Wei, Y.; Zhang, X.; Shi, Y.; Xia, L.; Pan, S.; Wu, J.; Han, M.; Zhao, X. A review of data-driven approaches for prediction and classification of building energy consumption. Renew. Sustain. Energy Rev. 2018, 82, 1027–1047.

- Merriam-Webster. Definition of Forecast. Available online: https://www.merriam-webster.com/dictionary/forecast (accessed on 30 January 2018).

- Merriam-Webster. Definition of Predict. Available online: https://www.merriam-webster.com/dictionary/predicting (accessed on 10 August 2018).

- Hyndman, R.J.; Athanasopoulos, G. Forecasting: Principles and Practice, 2nd ed.; OTexts: Melbourne, Australia, 2018.

- Oxford Dictionary. Estimate. Available online: https://en.oxforddictionaries.com/definition/estimate (accessed on 30 August 2018).

- McCulloch, W.S.; Pitts, W. A logical calculus of the ideas immanent in nervous activity. Bull. Math. Biophys. 1943, 5, 115–133.

- Rosenblatt, F. The perceptron: A probabilistic model for information storage and organization in the brain. Psychol. Rev. 1958, 65, 386–408.

- Specht, D. A General Regression Neural Network. IEEE Trans. Neural Netw. 1991, 2, 568–576.

- LeCun, Y.; Bengio, Y.; Hinton, G. Deep learning. Nature 2015, 521, 436–444.

- Goodfellow, I.; Bengio, Y.; Courville, A. Deep Learning; MIT Press: Cambridge, MA, USA, 2016.

- Yao, X. Evolving artificial neural networks. Proc. IEEE 1999, 87, 1423–1447.

- Hecht-Nielsen, R. Theory of the backpropagation neural network. In Proceedings of the 2013 International Joint Conference on Neural Networks (IJCNN), Dallas, TX, USA, 4–9 August 2013; pp. 593–605.

- Dong, Q.; Xing, K.; Zhang, H. Artificial Neural Network for Assessment of Energy Consumption and Cost for Cross Laminated Timber Office Building in Severe Cold Regions. Sustainability 2017, 10, 84.

- Yang, J.; Rivard, H.; Zmeureanu, R. Building energy prediction with adaptive artificial neural networks. In Proceedings of the Ninth International IBPSA Conference, Montreal, QC, Canada, 15–18 August 2005.

- Benedetti, M.; Cesarotti, V.; Introna, V.; Serranti, J. Energy consumption control automation using Artificial Neural Networks and adaptive algorithms: Proposal of a new methodology and case study. Appl. Energy 2016, 165, 60–71.

- Li, K.; Hu, C.; Liu, G.; Xue, W. Building’s electricity consumption prediction using optimized artificial neural networks and principal component analysis. Energy Build. 2015, 108, 106–113.

- Schalkoff, R. Artificial Intelligence: An Engineering Approach; McGraw-Hill: New York, NY, USA, 1990.

- Rahman, A.; Smith, A.D. Predicting fuel consumption for commercial buildings with machine learning algorithms. Energy Build. 2017, 152, 341–358.

- Barron, A.R. Approximation and estimation bounds for artificial neural networks. Mach. Learn. 1994, 14, 115–133.

- Wang, L.; Lee, E.W.; Yuen, R.K. Novel dynamic forecasting model for building cooling loads combining an artificial neural network and an ensemble approach. Appl. Energy 2018, 228, 1740–1753.

- WSG Inc. NeuroShell 2 Manual; Ward Systems Group Inc.: Frederick, MD, USA, 1995.

- Karatzas, K.; Katsifarakis, N. Modelling of household electricity consumption with the aid of computational intelligence methods. Adv. Build. Energy Res. 2018, 12, 84–96.

- Hutter, F.; Kotthoff, L.; Vanschoren, J. Automated Machine Learning; Springer: New York, NY, USA, 2019.

- Zoph, B.; Le, Q. Neural Architecture Search with Reinforcement Learning. In Proceedings of the International Conference on Learning Representations, Toulon, France, 24–26 April 2017.

- Liu, H.; Simonyan, K.; Yang, Y. DARTS: Differentiable Architecture Search. In Proceedings of the International Conference on Learning Representations, New Orleans, LA, USA, 30 April 2019.

- Chae, Y.T.; Horesh, R.; Hwang, Y.; Lee, Y.M. Artificial neural network model for forecasting sub-hourly electricity usage in commercial buildings. Energy Build. 2016, 111, 184–194.

- Powell, K.M.; Sriprasad, A.; Cole, W.J.; Edgar, T.F. Heating, cooling, and electrical load forecasting for a large-scale district energy system. Energy 2014, 74, 877–885.

- Deb, C.; Eang, L.S.; Yang, J.; Santamouris, M. Forecasting diurnal cooling energy load for institutional buildings using Artificial Neural Networks. Energy Build. 2016, 121, 284–297.

- Penya, Y.K.; Borges, C.E.; Fernández, I.; Hernández, C.E.B. Short-term Load Forecasting in Non-Residential Buildings; IEEE: Piscataway, NJ, USA, 2011.

- Mena, R.; Rodriguez, F.; Castilla, M.; Arahal, M. A prediction model based on neural networks for the energy consumption of a bioclimatic building. Energy Build. 2014, 82, 142–155.

- Sakawa, M.; Kato, K.; Ushiro, S. Cooling load prediction in a district heating and cooling system through simplified robust filter and multilayered neural network. Appl. Artif. Intell. 2010, 15, 633–643.

- Ben-Nakhi, A.E.; Mahmoud, M.A. Cooling load prediction for buildings using general regression neural networks. Energy Convers. Manag. 2004, 45, 2127–2141.

- Karatasou, S.; Santamouris, M.; Geros, V. Modeling and predicting building’s energy use with artificial neural networks: Methods and results. Energy Build. 2006, 38, 949–958.

- González, P.A.; Zamarreño, J.M. Prediction of hourly energy consumption in buildings based on a feedback artificial neural network. Energy Build. 2005, 37, 595–601.

- Fernández, I.; Hernández, C.E.B.; Penya, Y.K. Efficient building load forecasting. In ETFA2011; IEEE: Piscataway, NJ, USA, 2011; pp. 1–8.

- Escrivá-Escrivá, G.; Álvarez-Bel, C.; Roldán-Blay, C.; Alcázar-Ortega, M. New artificial neural network prediction method for electrical consumption forecasting based on building end-uses. Energy Build. 2011, 43, 3112–3119.

- Kamaev, V.A.; Shcherbakov, M.V.; Panchenko, D.P.; Shcherbakova, N.L.; Brebels, A. Using connectionist systems for electric energy consumption forecasting in shopping centers. Autom. Remote. Control 2012, 73, 1075–1084.

- Bagnasco, A.; Fresi, F.; Saviozzi, M.; Silvestro, F.; Vinci, A. Electrical consumption forecasting in hospital facilities: An application case. Energy Build. 2015, 103, 261–270.

- Fan, C.; Xiao, F.; Wang, S. Development of prediction models for next-day building energy consumption and peak power demand using data mining techniques. Appl. Energy 2014, 127, 1–10.

- Guo, Y.; Wang, J.; Chen, H.; Li, G.; Liu, J.; Xu, C.; Huang, R.; Huang, Y. Machine learning-based thermal response time ahead energy demand prediction for building heating systems. Appl. Energy 2018, 221, 16–27.

- Farzana, S.; Liu, M.; Baldwin, A.; Hossain, M.U. Multi-model prediction and simulation of residential building energy in urban areas of Chongqing, South West China. Energy Build. 2014, 81, 161–169.

- Yun, K.; Luck, R.; Mago, P.J.; Cho, H. Building hourly thermal load prediction using an indexed ARX model. Energy Build. 2012, 54, 225–233.

- Roldán-Blay, C.; Escrivá-Escrivá, G.; Álvarez-Bel, C.; Roldán-Porta, C.; Rodriguez-Garcia, J. Upgrade of an artificial neural network prediction method for electrical consumption forecasting using an hourly temperature curve model. Energy Build. 2013, 60, 38–46.

- Jetcheva, J.G.; Majidpour, M.; Chen, W.-P. Neural network model ensembles for building-level electricity load forecasts. Energy Build. 2014, 84, 214–223.

- Penya, Y.K.; Borges, C.E.; Agote, D.; Fernández, I.; Hernández, C.E.B. Short-term load forecasting in air-conditioned non-residential Buildings. In Proceedings of the 2011 IEEE International Symposium on Industrial Electronics, Gdansk, Poland, 27–30 June 2011; pp. 1359–1364.

- Paudel, S.; Elmtiri, M.; Kling, W.L.; Le Corre, O.; Lacarrière, B. Pseudo dynamic transitional modeling of building heating energy demand using artificial neural network. Energy Build. 2014, 70, 81–93.

- Le Cam, M.; Daoud, A.; Zmeureanu, R. Forecasting electric demand of supply fan using data mining techniques. Energy 2016, 101, 541–557.

- Jurado, S.; Nebot, A.; Mugica, F.; Avellana, N.; Gomez, S.J.; Mugica, F.J. Hybrid methodologies for electricity load forecasting: Entropy-based feature selection with machine learning and soft computing techniques. Energy 2015, 86, 276–291.

- Ruiz, L.; Rueda, R.; Cuéllar, M.; Pegalajar, M. Energy consumption forecasting based on Elman neural networks with evolutive optimization. Expert Syst. Appl. 2018, 92, 380–389.

- Ruiz, L.G.B.; Cuéllar, M.P.; Calvo-Flores, M.D.; Jiménez, M.D.C.P. An Application of Non-Linear Autoregressive Neural Networks to Predict Energy Consumption in Public Buildings. Energies 2016, 9, 684.

- Tascikaraoglu, A.; Sanandaji, B.M. Short-term residential electric load forecasting: A compressive spatio-temporal approach. Energy Build. 2016, 111, 380–392.

- Garnier, A.; Eynard, J.; Caussanel, M.; Grieu, S. Predictive control of multizone heating, ventilation and air-conditioning systems in non-residential buildings. Appl. Soft Comput. 2015, 37, 847–862.

- Liu, Y.; Wang, W.; Ghadimi, N. Electricity load forecasting by an improved forecast engine for building level consumers. Energy 2017, 139, 18–30.

- Dong, B.; Li, Z.; Rahman, S.M.; Vega, R. A hybrid model approach for forecasting future residential electricity consumption. Energy Build. 2016, 117, 341–351.

- Kusiak, A.; Xu, G. Modeling and optimization of HVAC systems using a dynamic neural network. Energy 2012, 42, 241–250.

- Srinivasan, D. Energy demand prediction using GMDH networks. Neurocomputing 2008, 72, 625–629.

- Yuce, B.; Mourshed, M.; Rezgui, Y. A Smart Forecasting Approach to District Energy Management. Energies 2017, 10, 1073.

- Azadeh, A.; Ghaderi, S.; Sohrabkhani, S. Annual electricity consumption forecasting by neural network in high energy consuming industrial sectors. Energy Convers. Manag. 2008, 49, 2272–2278.

- Le Cam, M.; Zmeureanu, R.; Daoud, A. Comparison of inverse models used for the forecast of the electric demand of chillers. In Proceedings of the Conference of International Building Performance Simulation Association, Chambéry, France, 25–28 August 2013.

- Li, X.; Wen, J.; Bai, E.-W. Developing a whole building cooling energy forecasting model for on-line operation optimization using proactive system identification. Appl. Energy 2016, 164, 69–88.

- Yildiz, B.; Bilbao, J.; Sproul, A. A review and analysis of regression and machine learning models on commercial building electricity load forecasting. Renew. Sustain. Energy Rev. 2017, 73, 1104–1122.

- Yokoyama, R.; Wakui, T.; Satake, R. Prediction of energy demands using neural network with model identification by global optimization. Energy Convers. Manag. 2009, 50, 319–327.

- Deb, C.; Eang, L.S.; Yang, J.; Santamouris, M. Forecasting Energy Consumption of Institutional Buildings in Singapore. Procedia Eng. 2015, 121, 1734–1740.

- Son, H.; Kim, C. Forecasting Short-term Electricity Demand in Residential Sector Based on Support Vector Regression and Fuzzy-rough Feature Selection with Particle Swarm Optimization. Procedia Eng. 2015, 118, 1162–1168.

- Hou, Z.; Lian, Z.; Yao, Y.; Yuan, X. Cooling-load prediction by the combination of rough set theory and an artificial neural-network based on data-fusion technique. Appl. Energy 2006, 83, 1033–1046.

- Gunay, B.; Shen, W.; Newsham, G. Inverse blackbox modeling of the heating and cooling load in office buildings. Energy Build. 2017, 142, 200–210.

- Platon, R.; Dehkordi, V.R.M.J. Hourly prediction of a building’s electricity consumption using case-based reasoning, artificial neural networks and principal component analysis. Energy Build. 2015, 92, 10–18.

- Geysen, D.; De Somer, O.; Johansson, C.; Brage, J.; Vanhoudt, D. Operational thermal load forecasting in district heating networks using machine learning and expert advice. Energy Build. 2018, 162, 144–153.

- Arabzadeh, V.; Alimohammadisagvand, B.; Jokisalo, J.; Siren, K. A novel cost-optimizing demand response control for a heat pump heated residential building. In Build Simul; Tsinghua University Press: Beijing, China, 2018; Volume 11, pp. 533–547.

- Ferlito, S.; Atrigna, M.; Graditi, G.; de Vito, S.; Salvato, M.; Buonanno, A.; di Francia, G. Predictive models for building’s energy consumption: An Artificial Neural Network (ANN) approach. In Proceedings of the XVIII AISEM Annual Conference, Trento, Italy, 3–5 February 2015.

- Ahmad, T.; Chen, H. Short and medium-term forecasting of cooling and heating load demand in building environment with data-mining based approaches. Energy Build. 2018, 166, 460–476.

- Liao, G.-C. Hybrid Improved Differential Evolution and Wavelet Neural Network with load forecasting problem of air conditioning. Int. J. Electr. Power Energy Syst. 2014, 61, 673–682.

- Kato, K.; Sakawa, M.; Ishimaru, K.; Ushiro, S.; Shibano, T. Heat load prediction through recurrent neural network in district heating and cooling systems. In Proceedings of the 2008 IEEE International Conference on Systems, Man and Cybernetics, Singapore, 12–15 October 2008.

- Chitsaz, H.; Shaker, H.; Zareipour, H.; Wood, D.; Amjady, N. Short-term electricity load forecasting of buildings in microgrids. Energy Build. 2015, 99, 50–60.

- Thokala, N.K.; Bapna, A.; Chandra, M.G. A deployable electrical load forecasting solution for commercial buildings. In Proceedings of the 2018 IEEE International Conference on Industrial Technology (ICIT), Lyon, France, 20–22 February 2018; pp. 1101–1106.

- Schachter, J.A.; Mancarella, P. A short-term load forecasting model for demand response applications. In Proceedings of the 11th International Conference on the European Energy Market, Krakow, Poland, 28–30 May 2014.

- Chakraborty, D.; Elzarka, H. Advanced machine learning techniques for building performance simulation: A comparative analysis. J. Build. Perform. Simul. 2018, 12, 193–207.

- Kialashaki, A.; Reisel, J.R. Modeling of the energy demand of the residential sector in the United States using regression models and artificial neural networks. Appl. Energy 2013, 108, 271–280.

- Amarasinghe, K.; Marino, D.L.; Manic, M. Deep neural networks for energy load forecasting. In Proceedings of the 2017 IEEE 26th International Symposium on Industrial Electronics (ISIE), Edinburgh, UK, 19–21 June 2017.

- Fan, C.; Xiao, F.; Zhao, Y. A short-term building cooling load prediction method using deep learning algorithms. Appl. Energy 2017, 195, 222–233.

- Fu, G. Deep belief network based ensemble approach for cooling load forecasting of air-conditioning system. Energy 2018, 148, 269–282.

- Mocanu, E.; Nguyen, P.H.; Gibescu, M.; Kling, W.L. Deep learning for estimating building energy consumption. Sustain. Energy Grids Netw. 2016, 6, 91–99.

- Rahman, A.; Srikumar, V.; Smith, A. Predicting electricity consumption for commercial and residential buildings using deep recurrent neural networks. Appl. Energy 2017, 212, 372–385.

- Kusiak, A.; Xu, G.; Tang, F. Optimization of an HVAC system with a strength multi-objective particle-swarm algorithm. Energy 2011, 36, 5935–5943.

- Li, Z.; Dong, B.; Vega, R. A hybrid model for electrical load forecasting—A new approach integrating data-mining with physics-based models. ASHRAE Trans. 2015, 121, 1–9.

- Rahman, M.; Dong, B.; Vega, R. Machine Learning Approach Applied in Electricity Load Forecasting: Within Residential Houses Context. ASHRAE Trans. 2015, 121, 1V.

- Yao, Y.; Lian, Z.; Liu, S.; Hou, Z. Hourly cooling load prediction by a combined forecasting model based on Analytic Hierarchy Process. Int. J. Therm. Sci. 2004, 43, 1107–1118.

- Kusiak, A.; Xu, G.; Zhang, Z. Minimization of energy consumption in HVAC systems with data-driven models and an interior-point method. Energy Convers. Manag. 2014, 85, 146–153.

- Kusiak, A.; Zeng, Y.; Xu, G. Minimizing energy consumption of an air handling unit with a computational intelligence approach. Energy Build. 2013, 60, 355–363.

- Zeng, Y.; Zhang, Z.; Kusiak, A.; Tang, F.; Wei, X. Optimizing wastewater pumping system with data-driven models and a greedy electromagnetism like algorithm. Stoch. Environ. Res. Risk Assess. 2016, 30, 1263–1275.

- He, X.; Zhang, Z.; Kusiak, A. Performance optimization of HVAC systems with computational intelligence algorithms. Energy Build. 2014, 81, 371–380.

- Zeng, Y.; Zhang, Z.; Kusiak, A. Predictive modeling and optimization of a multi-zone HVAC system with data mining and firefly algorithms. Energy 2015, 86, 393–402.

- Kusiak, A.; Li, M. Reheat optimization of the variable-air-volume box. Energy 2010, 35, 1997–2005.

- Yan, B.; Malkawi, A.; Yi, Y.K. Case study of applying different energy use modeling methods to an existing building. In Proceedings of the Conference of International Building Performance Simulation Association, Sydney, Australia, 14–16 November 2011.

- Li, C.; Ding, Z.; Zhao, D.; Yi, J.; Zhang, G. Building Energy Consumption Prediction: An Extreme Deep Learning Approach. Energies 2017, 10, 1525.

- Macas, M.; Moretti, F.; Fonti, A.; Giantomassi, A.; Comodi, G.; Annunziato, M.; Pizzuti, S.; Capra, A. The role of data sample size and dimensionality in neural network based forecasting of building heating related variables. Energy Build. 2016, 111, 299–310.

- Kapetanakis, D.-S.; Christantoni, D.; Mangina, E.; Finn, D. Evaluation of machine learning algorithms for demand response potential forecasting. In Proceedings of the International Conference on Building Simulation, San Francisco, CA, USA, 7–9 August 2017.

- Khalil, E.; Medhat, A.; Morkos, S.; Salem, M. Neural networks approach for energy consumption in air-conditioned administrative building. ASHRAE Trans. 2012, 118, 257–264.

- Koschwitz, D.; Frisch, J.; Van Treeck, C. Data-driven heating and cooling load predictions for non-residential buildings based on support vector machine regression and NARX Recurrent Neural Network: A comparative study on district scale. Energy 2018, 165, 134–142.

- Rahman, A.; Smith, A.D. Predicting heating demand and sizing a stratified thermal storage tank using deep learning algorithms. Appl. Energy 2018, 228, 108–121.

- Hribar, R.; Potocnik, P.; Silc, J.; Papa, G. A comparison of models for forecasting the residential natural gas. Energy 2019, 167, 511–522.

- Reynolds, J.; Ahmad, M.W.; Rezgui, Y.; Hippolyte, J.-L. Operational supply and demand optimisation of a multi-vector district energy system using artificial neural networks and a genetic algorithm. Appl. Energy 2019, 235, 699–713.

- Ahmad, T.; Chen, H.; Shair, J.; Xu, C. Deployment of data-mining short and medium-term horizon cooling load forecasting models for building energy optimization and management. Int. J. Refrig. 2019, 98, 399–409.

- Cai, M.; Pipattanasomporn, M.; Rahman, S. Day-ahead building-level load forecasts using deep learning vs. traditional time-series techniques. Appl. Energy 2019, 236, 1078–1088.

- Xu, L.; Wang, S.; Tang, R. Probabilistic load forecasting for buildings considering weather forecasting uncertainty and uncertain peak load. Appl. Energy 2019, 237, 180–195.

- Fan, C.; Sun, Y.; Zhao, Y.; Song, M.; Wang, J. Deep learning-based feature engineering methods for improved building energy prediction. Appl. Energy 2019, 240, 35–45.

- Chou, J.-S.; Tran, D.-S. Forecasting energy consumption time series using machine learning techniques based on usage patterns of residential householders. Energy 2018, 165, 709–726.

- Tian, C.; Li, C.; Zhang, G.; Lv, Y. Data driven parallel prediction of building energy consumption using generative adversarial nets. Energy Build. 2019, 186, 230–243.

- Katsatos, M.; Mourtis, K. Application of Artificial Neuron Networks as energy consumption forecasting tool in the building of Regulatory Authority of Energy, Athens, Greece. Energy Procedia 2019, 157, 851–861.

- Xuan, Z.; Xuehui, Z.; Liequan, L.; Zubing, F.; Junwei, Y.; Dongmei, P. Forecasting performance comparison of two hybrid machine learning models for cooling load of a large-scale commercial building. J. Build. Eng. 2019, 21, 64–73.

- De Felice, M.; Yao, X. Neural networks ensembles for short-term load forecasting. In Proceedings of the 2011 IEEE Symposium on Computational Intelligence Applications In Smart Grid (CIASG), Paris, France, 11–15 April 2011; pp. 1–8.

- Liu, D.; Chen, Q. Prediction of building lighting energy consumption based on support vector regression. In Proceedings of the 2013 9th Asian Control Conference (ASCC), Istanbul, Turkey, 23–26 June 2013; pp. 1–5.

- Gulin, M.; Vašak, M.; Banjac, G.; Tomisa, T. Load forecast of a university building for application in microgrid power flow optimization. In Proceedings of the 2014 IEEE International Energy Conference (ENERGYCON), Cavtat, Croatia, 13–16 May 2014; pp. 1223–1227.

- Ebrahim, A.F.; Mohammed, O. Household Load Forecasting Based on a Pre-Processing Non-Intrusive Load Monitoring Techniques. In Proceedings of the 2018 IEEE Green Technologies Conference (GreenTech), Austin, TX, USA, 4–6 April 2018.

- ASHRAE. Guideline 14-2014, Measurement of Energy, Demand, and Water Savings; ASHRAE: Atlanta, GA, USA, 2014.

- U.S. Energy Information Administration. Commercial Building Energy Consumption Survey. Available online: https://www.eia.gov/consumption/commercial/reports/2012/energyusage/ (accessed on 1 December 2018).

- MathWorks. Neural Network Toolbox. Available online: https://www.mathworks.com/help/nnet/index.html (accessed on 25 April 2017).

- Zhang, Y.; Yang, Q. A Survey on Multi-Task Learning; Cornell University: New York, NY, USA, 2018.

- Singaravel, S.; Suykens, J.; Geyer, P. Deep-learning neural-network architectures and methods: Using component-based models in building-design energy prediction. Adv. Eng. Inform. 2018, 38, 81–90.

| Equation | Reference |
|---|---|
| | [29,30,31] |
| | [32] |
| | [33,34] |
| | [35,36] |
| | [37,38] |
| | [39] |

| Paper ID # | Time Step | Forecast Horizon | Error |
|---|---|---|---|
| Sub-Hourly | | | |
| [106] | 15 min | 15 min | 0.001–0.059% (MAPE) |
| [125] | 30 min | 30 min | 0.939–8.34% (MAPE) |
| Hourly | | | |
| [125] | 1 h | 1 h | 0.59–19.1% (MAPE) |
| [101] | 1 h | 1 h | 36.5% (MAPE) |
| Multiple hours | | | |
| [113] | 12 h | 12 h | 5.03–7.4% (MAPE) |
| Daily (load) | | | |
| [56] | Daily | Day ahead | 4.75–6.46% (MAPE) |
| [52] | Daily | Day ahead | 6.63–17.64% (MAPE) |

| Paper ID # | Time Step | Forecast Horizon | Error |
|---|---|---|---|
| Sub-Hourly | | | |
| [57] | 5 min | 40 min | 13.2–14.4% (MAPE) |
| Hourly | | | |
| [98] | 15 min | 1 h | 4.5–5.4% (MAPE) |
| [102] | 5 min | 1 h | 8.59–23.86% (MAPE) |
| Multiple hours | | | |
| [84] | 1 h | 1–6 h | 7.30–8.48% (CV-RMSE) |
| [64] | 15 min | 1–6 h | 30% (CV-RMSE) |
| Daily (profile) | | | |
| [78] | 1 h | 24 h | 1.04–4.64% (MAPE) |
| [85] | 1 h | 24 h | 11.56–11.92% (MAPE) |
| [53] | 15 min | 24 h | 2.59–22.56% (MAPE) |
| [124] | 15 min | 24 h | 36.86–42.31% (MAPE) |
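The errors in the tables above are reported as MAPE and CV-RMSE. For readers unfamiliar with these metrics, the sketch below computes both using their commonly used definitions. It is an assumption that the reviewed studies follow exactly these forms; in particular, some guidelines divide by the number of samples minus the number of model parameters rather than by the number of samples when computing the RMSE. The function names and the sample values are hypothetical.

```python
import numpy as np


def mape(actual, forecast):
    """Mean absolute percentage error, in percent (undefined if any actual value is 0)."""
    actual = np.asarray(actual, dtype=float)
    forecast = np.asarray(forecast, dtype=float)
    return 100.0 * np.mean(np.abs((actual - forecast) / actual))


def cv_rmse(actual, forecast):
    """Coefficient of variation of the RMSE, in percent: RMSE divided by the mean of the actual values."""
    actual = np.asarray(actual, dtype=float)
    forecast = np.asarray(forecast, dtype=float)
    # Assumption: plain mean over n samples, with no degrees-of-freedom correction
    rmse = np.sqrt(np.mean((actual - forecast) ** 2))
    return 100.0 * rmse / np.mean(actual)


# Hypothetical measured and forecasted loads, e.g. in kWh
actual = [100.0, 200.0, 400.0]
forecast = [110.0, 180.0, 400.0]

mape_value = mape(actual, forecast)
cv_rmse_value = cv_rmse(actual, forecast)
```

Both metrics are scale-free, which is why they allow a rough comparison across buildings of different sizes, although values obtained over different time steps and horizons should not be compared directly.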

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Runge, J.; Zmeureanu, R. Forecasting Energy Use in Buildings Using Artificial Neural Networks: A Review. Energies 2019, 12, 3254. https://doi.org/10.3390/en12173254
