Battery Energy Management in a Microgrid Using Batch Reinforcement Learning †

Abstract

1. Introduction

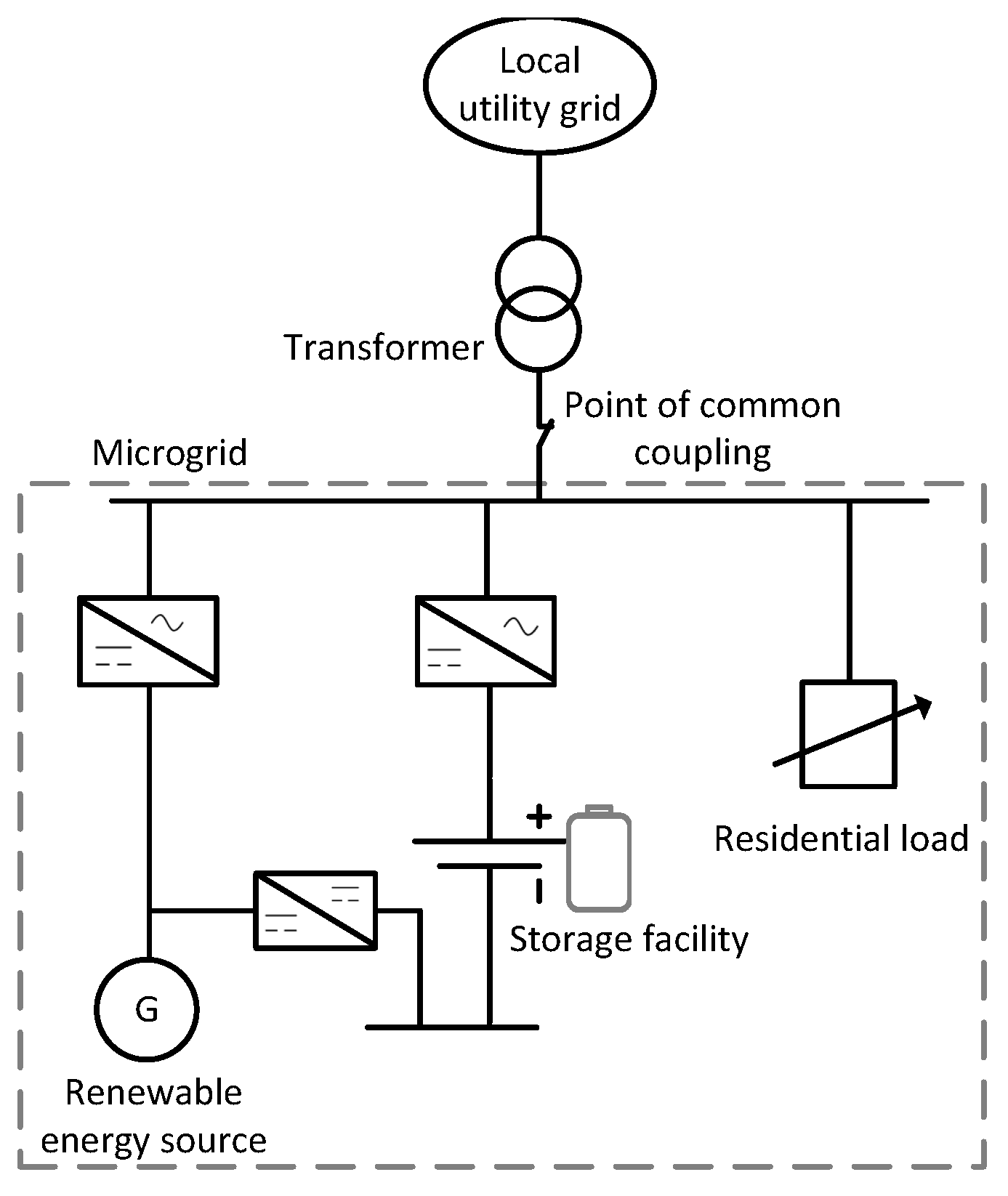

2. Microgrid Model

2.1. Battery Model

- Capacity constraint: The battery cannot be charged above the battery capacity or discharged below the minimum battery energy level.

- Charge/discharge constraint: The battery cannot be charged and discharged simultaneously. Let S1 and S2 represent the charge and discharge actions, respectively, where the actions are binary (zero or one). The charge/discharge constraint, Equation (3), rules out the combination in which both actions equal one.
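The two battery constraints above can be illustrated with a short Python sketch. It is only a minimal illustration under stated assumptions: the class `Battery`, the functions `is_valid_action` and `step`, the flag names `s1` and `s2`, and all numerical values are hypothetical, and conversion losses are ignored.

```python
from dataclasses import dataclass


@dataclass
class Battery:
    """Toy battery; field names and units are illustrative assumptions."""
    e_max: float   # battery capacity (kWh)
    e_min: float   # minimum battery energy level (kWh)
    energy: float  # energy currently stored (kWh)


def is_valid_action(s1: int, s2: int) -> bool:
    """Binary charge (s1) and discharge (s2) flags must not both be one."""
    return s1 in (0, 1) and s2 in (0, 1) and s1 + s2 <= 1


def step(battery: Battery, s1: int, s2: int, energy_kwh: float) -> float:
    """Apply one charge or discharge request and return the energy moved.

    The request is clipped so the stored energy stays in [e_min, e_max].
    """
    if not is_valid_action(s1, s2):
        raise ValueError("simultaneous charge and discharge is not allowed")
    if energy_kwh < 0:
        raise ValueError("energy_kwh must be non-negative")
    if s1 == 1:
        # Charging: never exceed the capacity.
        moved = min(energy_kwh, battery.e_max - battery.energy)
        battery.energy += moved
    elif s2 == 1:
        # Discharging: never drop below the minimum energy level.
        moved = min(energy_kwh, battery.energy - battery.e_min)
        battery.energy -= moved
    else:
        moved = 0.0
    return moved
```

For example, a battery holding 9 kWh with a capacity of 10 kWh accepts only 1 kWh of a 3 kWh charge request, and a request with both flags set to one is rejected.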

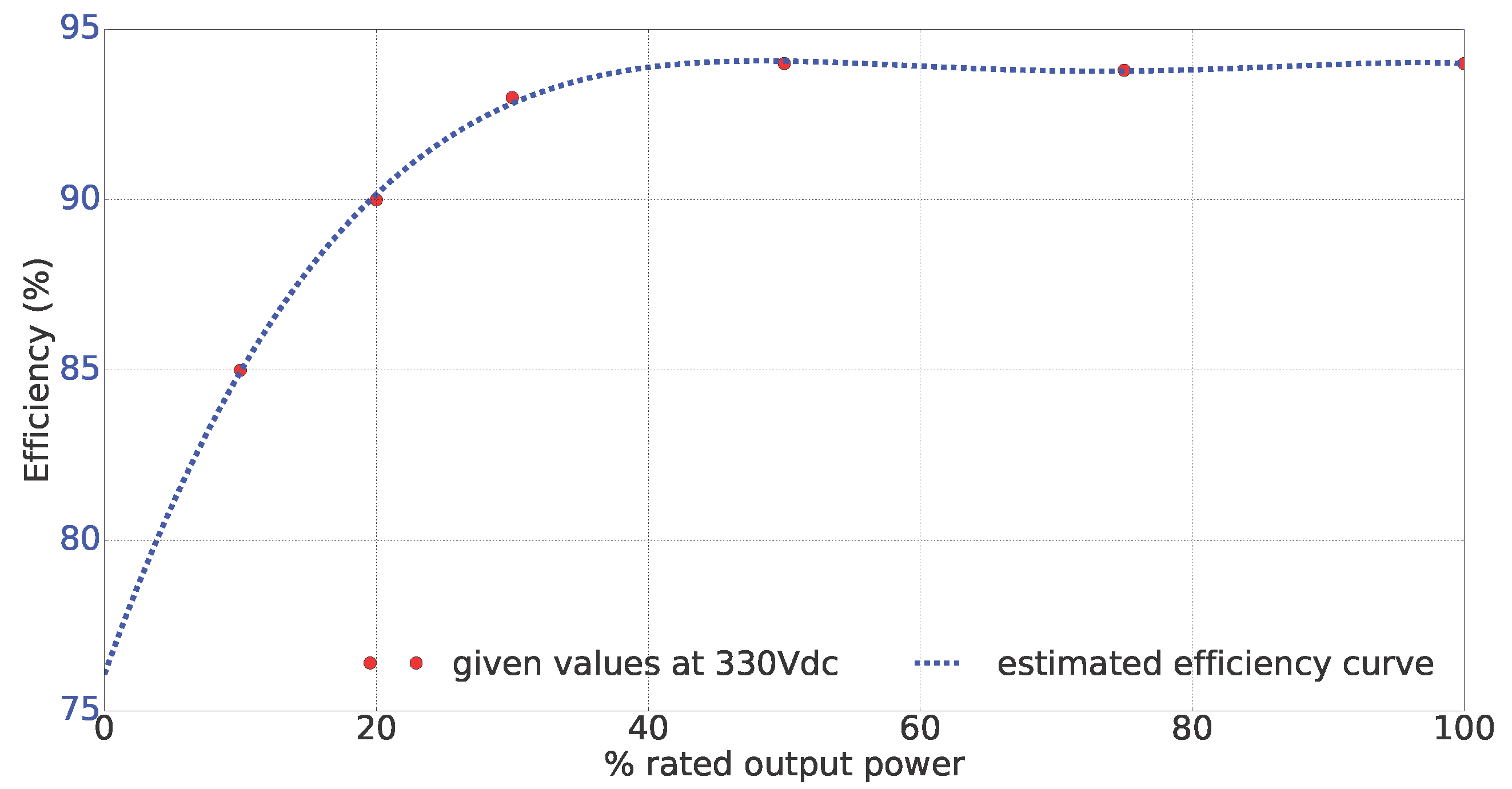

2.2. Inverter Model

3. Problem Formulation

3.1. State Space

- (i) Timing feature: The timing component is date- and time-dependent and contains the microgrid’s state information related to the time period. This information lets the learning agent capture the part of the microgrid dynamics that is relevant to the learning process. The timing feature consists of the quarter-hour of the day and the day of the week. It allows the learning agent to acquire information such as the consumption pattern of residential consumers and the PV production profile, since most residential consumers and PV systems tend to follow a repetitive daily consumption and production pattern, respectively.

- (ii) Controllable feature: The controllable component contains state information related to system quantities that can be measured locally and that are influenced by the control actions. In this case, the battery SoC is the controllable component. In this paper, the SoC is discretized into 25 bins of equal width over the interval [0,1]. The SoC is the ratio of the energy stored in the battery to the battery capacity.

- (iii) Exogenous feature: The exogenous feature contains the observable exogenous information that has an impact on the system dynamics and the cost function but cannot be influenced by the control actions. This feature is time- and weather-dependent and consists of the residential load and the PV generation. This work assumes that a deterministic forecast of the exogenous state information is available.
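As an illustration of how the three features could be assembled into one state, the following Python sketch builds a state tuple from a timestamp, an SoC value, and load and PV forecasts. The names `State`, `soc_to_bin`, and `build_state` are hypothetical, and the indexing conventions (quarter-hours numbered 1 to 96, Monday as day 1, SoC bins numbered from 0) are assumptions rather than conventions taken from the paper.

```python
from datetime import datetime
from typing import NamedTuple

N_SOC_BINS = 25  # the text discretizes the SoC into 25 equal-width bins on [0, 1]


class State(NamedTuple):
    quarter_hour: int  # timing feature, 1..96 (assumed indexing)
    day_of_week: int   # timing feature, 1..7 with Monday = 1 (assumed)
    soc_bin: int       # controllable feature, 0..24 (assumed indexing)
    load_kw: float     # exogenous feature: residential load forecast
    pv_kw: float       # exogenous feature: PV generation forecast


def soc_to_bin(soc: float, n_bins: int = N_SOC_BINS) -> int:
    """Map an SoC in [0, 1] to the index of its equal-width bin."""
    if not 0.0 <= soc <= 1.0:
        raise ValueError("SoC must lie in [0, 1]")
    # soc == 1.0 would give index n_bins, so it is folded into the last bin.
    return min(int(soc * n_bins), n_bins - 1)


def build_state(ts: datetime, soc: float,
                load_forecast_kw: float, pv_forecast_kw: float) -> State:
    """Combine timing, controllable, and exogenous features into one state."""
    quarter_hour = ts.hour * 4 + ts.minute // 15 + 1
    return State(quarter_hour, ts.isoweekday(), soc_to_bin(soc),
                 load_forecast_kw, pv_forecast_kw)
```

Calling `build_state(datetime(2017, 8, 23, 12, 30), 0.5, 1.2, 3.4)` yields quarter-hour 51 on day 3 (a Wednesday) with SoC bin 12.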

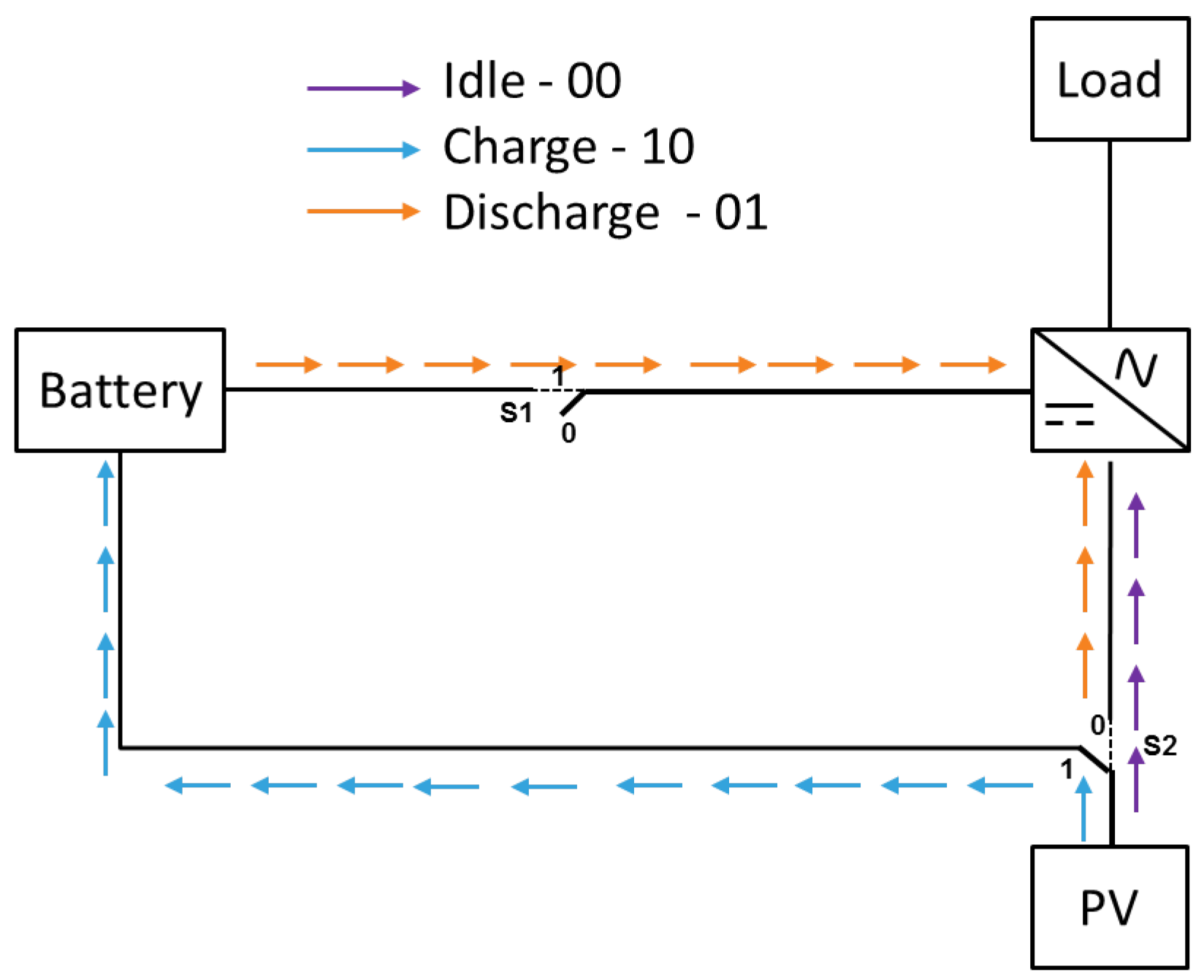

3.2. Action Space

- Idle: the battery is idle, i.e., all the electricity demand is covered by energy produced by the PV system and/or purchased from the grid.

- Charge: the battery is charged using all the power generated by the PV system, while all the energy demanded by the consumer is purchased from the local utility grid.

- Discharge: part or all of the energy demand is covered by discharging the battery; electricity is bought from the grid if the PV generation and the energy discharged from the battery are not sufficient.
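The effect of each action on the grid purchase can be sketched in Python as follows. This is a simplified reading of the three action descriptions, not the paper's model: the enum `Action`, the function `grid_import_kw`, and the parameter `max_discharge_kw` are hypothetical names, the binary values follow the (S1, S2) encoding of the action table, and surplus PV power and conversion losses are ignored.

```python
from enum import Enum


class Action(Enum):
    """Binary (S1, S2) encoding: S1 = charge flag, S2 = discharge flag."""
    IDLE = (0, 0)
    CHARGE = (1, 0)
    DISCHARGE = (0, 1)


def grid_import_kw(action: Action, load_kw: float, pv_kw: float,
                   max_discharge_kw: float) -> float:
    """Power bought from the grid (kW) under each action."""
    if action is Action.IDLE:
        # PV serves the load first; the grid covers any shortfall.
        return max(load_kw - pv_kw, 0.0)
    if action is Action.CHARGE:
        # All PV power goes to the battery, so the grid covers the whole load.
        return load_kw
    # DISCHARGE: PV and battery together serve the load; the grid covers the rest.
    return max(load_kw - pv_kw - max_discharge_kw, 0.0)
```

With a 2 kW load, 0.5 kW of PV generation, and 1 kW of available discharge power, the sketch buys 1.5 kW when idle, 2 kW when charging, and 0.5 kW when discharging.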

3.3. Backup Controller

3.4. Cost Function

3.5. Reinforcement Learning

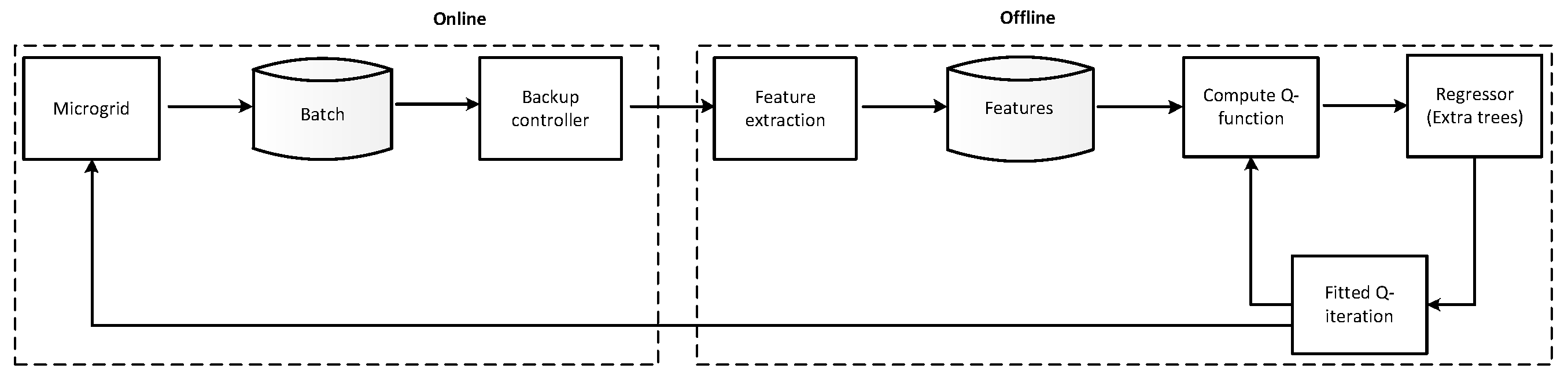

4. Implementation

4.1. Batch Reinforcement Learning

4.2. Fitted Q-Iteration

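| Algorithm 1 Fitted Q-iteration with function approximation and forecast of exogenous information [21]. |
|---|
| Input: discount factor $\gamma$, control period $T$ |
| 1: Generate samples $\mathcal{F} = \{(x_l, u_l, x'_l, c_l)\}_{l=1}^{\#\mathcal{F}}$ |
| $x'_l \leftarrow (x'_{\mathrm{t},l},\, x'_{\mathrm{c},l},\, \hat{x}'_{\mathrm{ex},l})$ (observed exogenous component of the state is replaced by its forecast) |
| 2: Initialize $Q_0$ to zero for all state-action pairs, $Q_0(x, u) = 0$ |
| 3: For $N = 1, \ldots, T$ do |
| 4: For $l = 1, \ldots, \#\mathcal{F}$ do |
| 5: $Q_{N,l} \leftarrow c_l + \gamma \min_{u} Q_{N-1}(x'_l, u)$ |
| 6: end for |
| 7: use a regression algorithm to build $Q_N$ from $\{((x_l, u_l), Q_{N,l})\}_{l=1}^{\#\mathcal{F}}$ |
| 8: end for |
| Output: $Q^{*} = Q_T$ |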
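The loop structure of Algorithm 1 can be sketched in a few lines of Python. This is a minimal sketch under several assumptions, not the authors' implementation: the regression step is replaced by a plain tabular average (`fit_table`) so that the example runs with the standard library only, the samples are assumed to already contain next states whose exogenous component has been replaced by its forecast, and all function and variable names are hypothetical.

```python
from collections import defaultdict
from typing import Callable, Hashable, List, Sequence, Tuple

# One sample: (state, action, next_state, cost)
Sample = Tuple[Hashable, Hashable, Hashable, float]
QFunction = Callable[[Hashable, Hashable], float]


def fit_table(inputs: Sequence[Tuple[Hashable, Hashable]],
              targets: Sequence[float]) -> QFunction:
    """Stand-in regressor: average the targets of identical (state, action) pairs."""
    sums, counts = defaultdict(float), defaultdict(int)
    for key, target in zip(inputs, targets):
        sums[key] += target
        counts[key] += 1
    table = {key: sums[key] / counts[key] for key in sums}
    # Unseen pairs default to zero, matching the initial Q-function.
    return lambda state, action: table.get((state, action), 0.0)


def fitted_q_iteration(samples: List[Sample], actions: Sequence[Hashable],
                       gamma: float, horizon: int,
                       fit: Callable[..., QFunction] = fit_table) -> QFunction:
    """Run `horizon` sweeps of the cost-minimizing fitted Q-iteration update."""
    q: QFunction = lambda state, action: 0.0  # Q_0 is zero everywhere
    inputs = [(x, u) for x, u, _, _ in samples]
    for _ in range(horizon):
        # Target for each sample: immediate cost plus discounted best next value.
        targets = [c + gamma * min(q(x_next, u) for u in actions)
                   for _, _, x_next, c in samples]
        q = fit(inputs, targets)  # regression step builds the next Q-function
    return q


def greedy_action(q: QFunction, state: Hashable,
                  actions: Sequence[Hashable]) -> Hashable:
    """Pick the action with the lowest estimated cost-to-go."""
    return min(actions, key=lambda u: q(state, u))
```

Any regressor that exposes the same fit-then-predict behaviour could be passed as `fit`; the tabular average is used here only to keep the sketch dependency-free and is not suitable for large or continuous state spaces.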

4.3. Regressor

4.4. Microgrid Case Study

5. Simulation Results and Discussion

5.1. Scenario 1: Fixed Electricity Prices

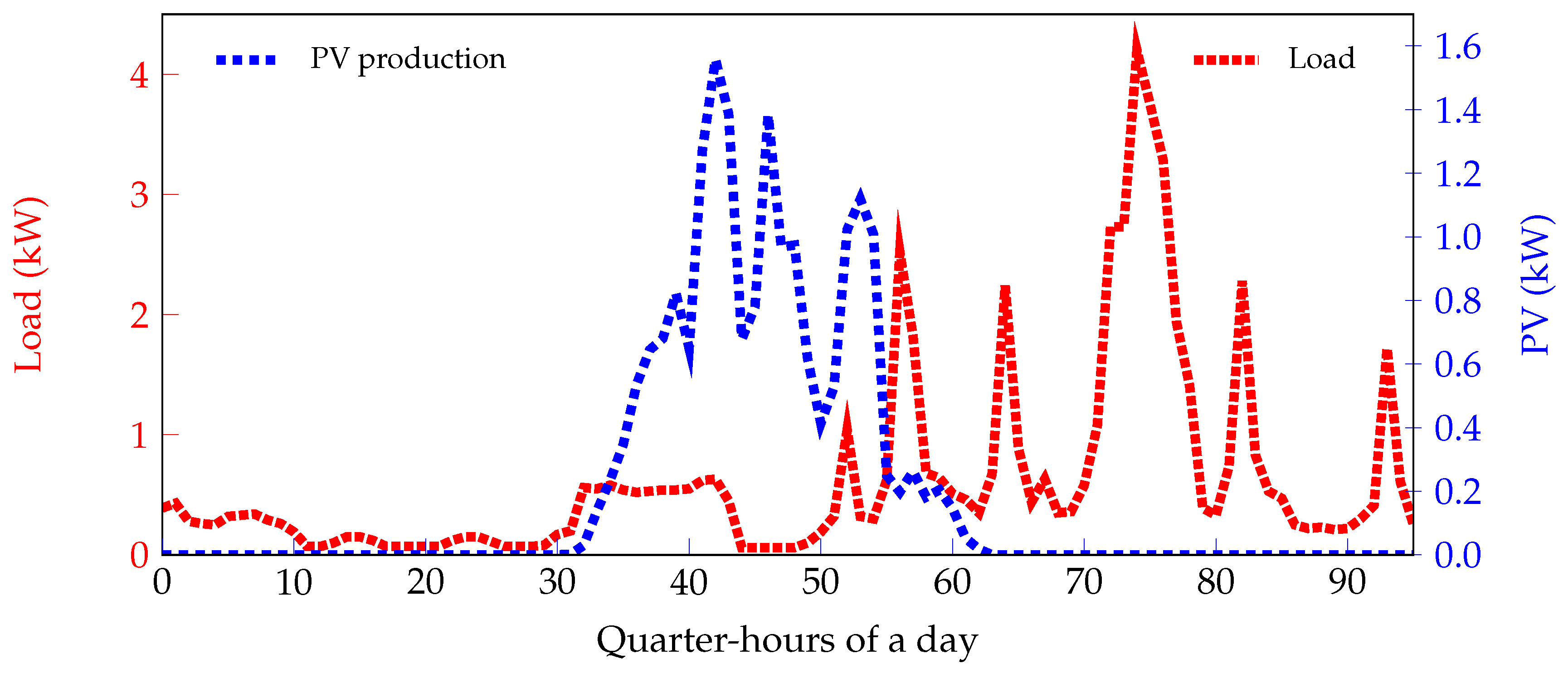

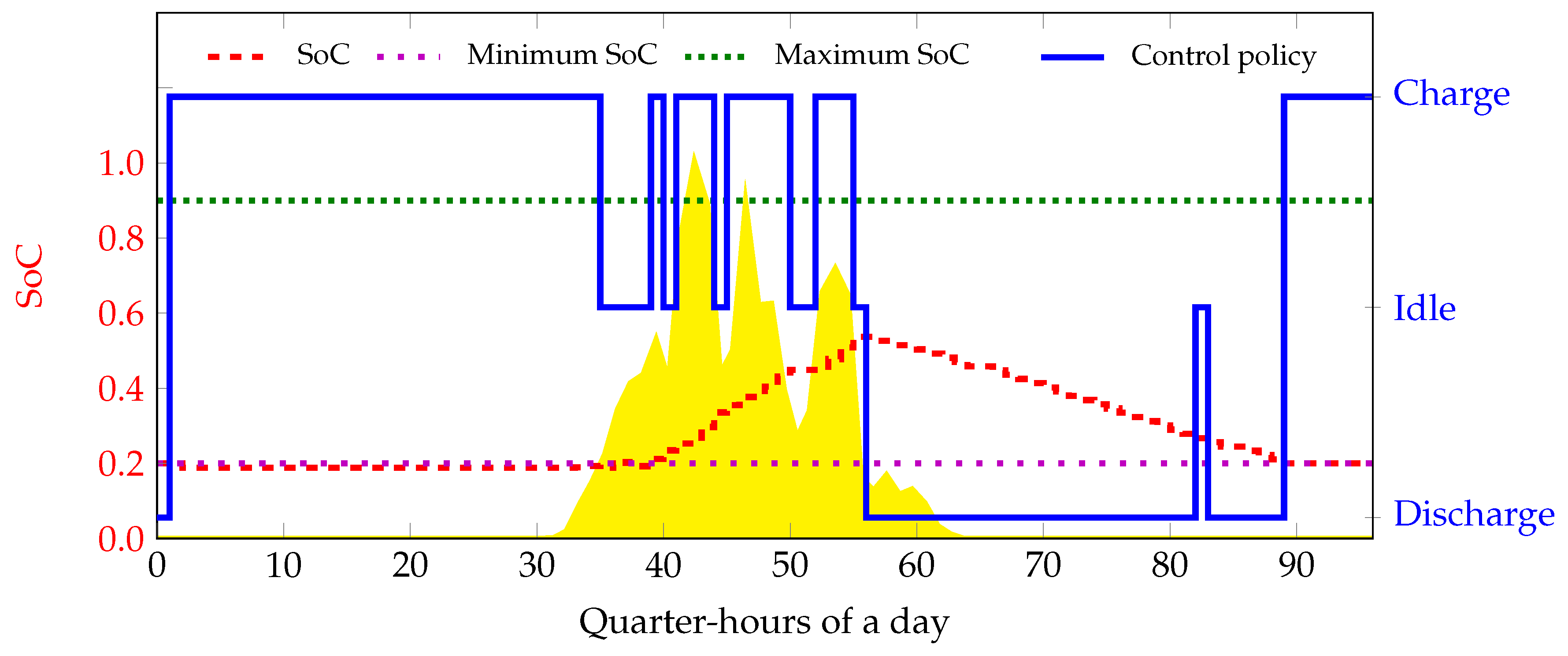

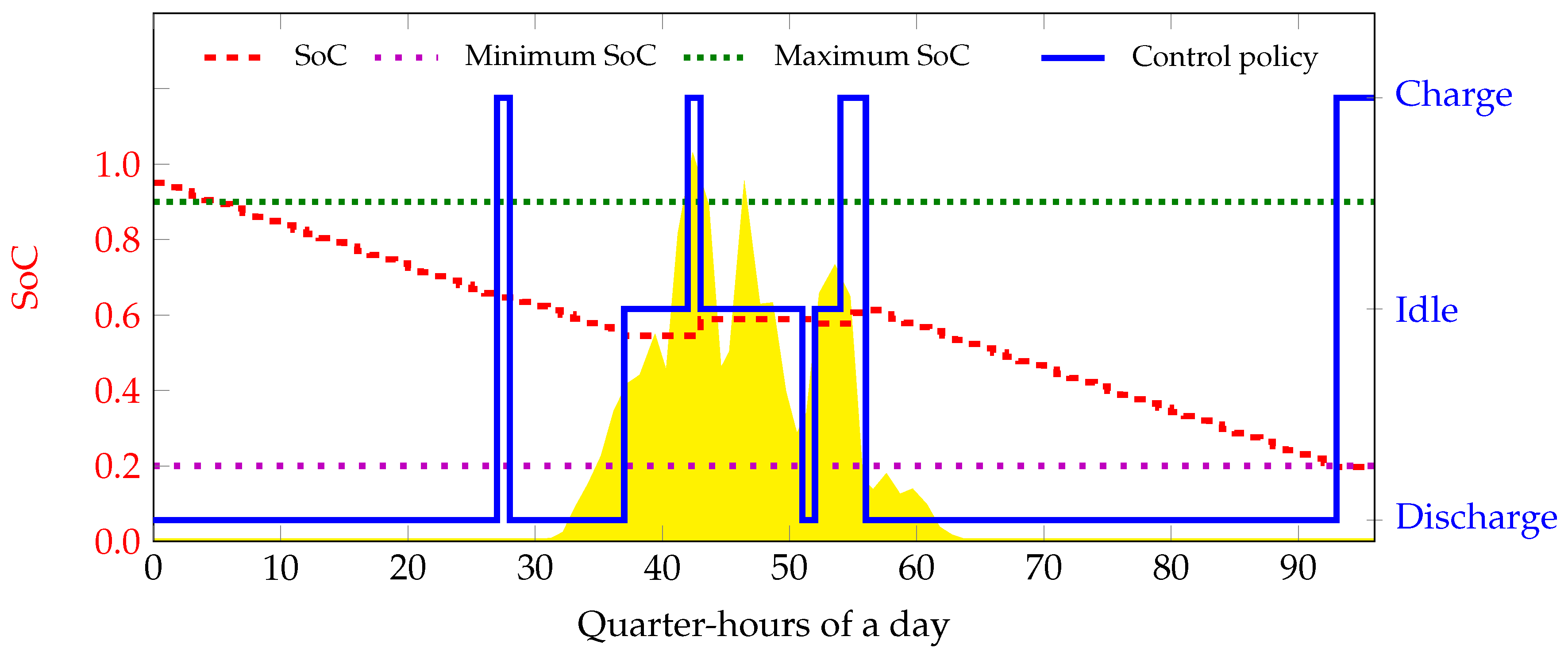

- Experiment 1: This experiment shows the policy obtained when the elements of the exogenous component of the state space are not considered. A perfect forecast of the load and PV generation is provided. Simulation results are presented in Figure 6.

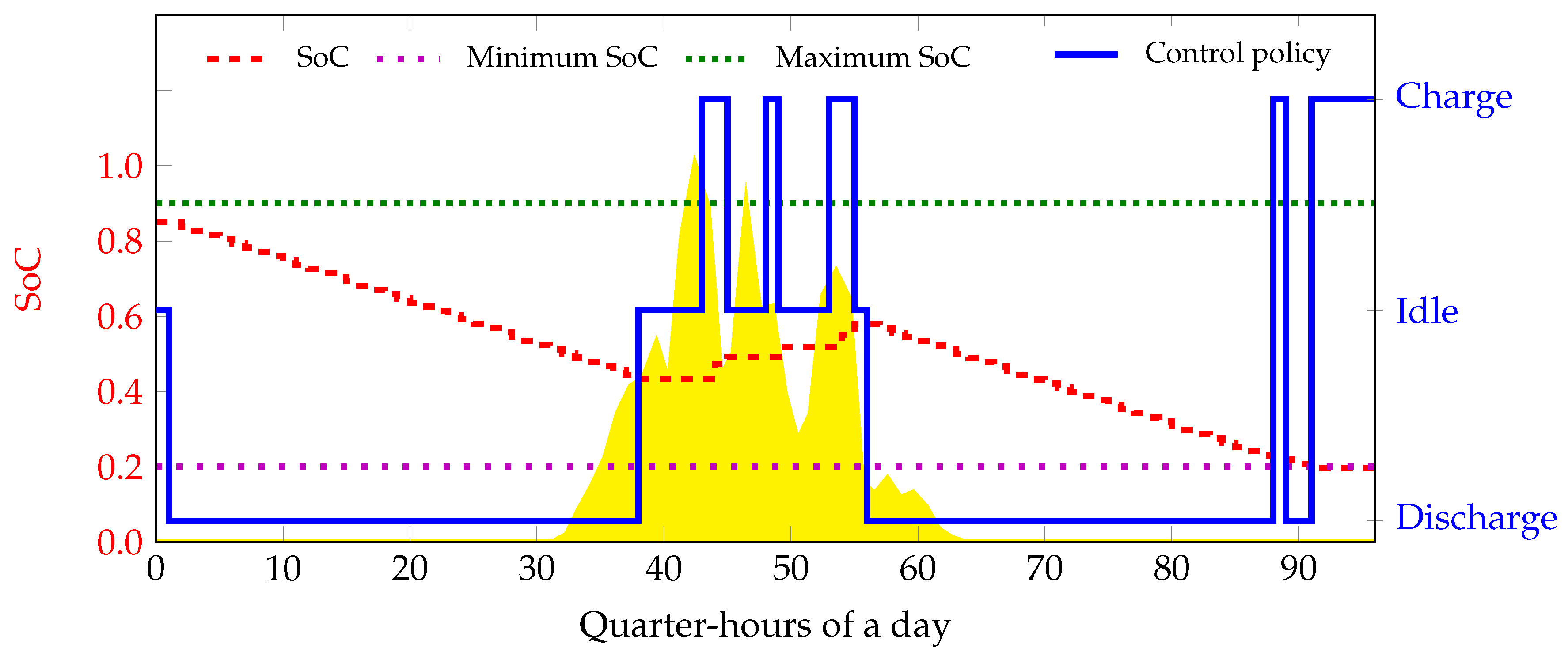

- Experiment 2: In this experiment, all the elements of the exogenous component of the state space are considered. The load and the PV generation are each discretized to 50 values over their respective ranges, which for the PV generation is 0 kW to 8 kW. Figure 7 shows the control policy learned by the agent and the resulting SoC evolution.

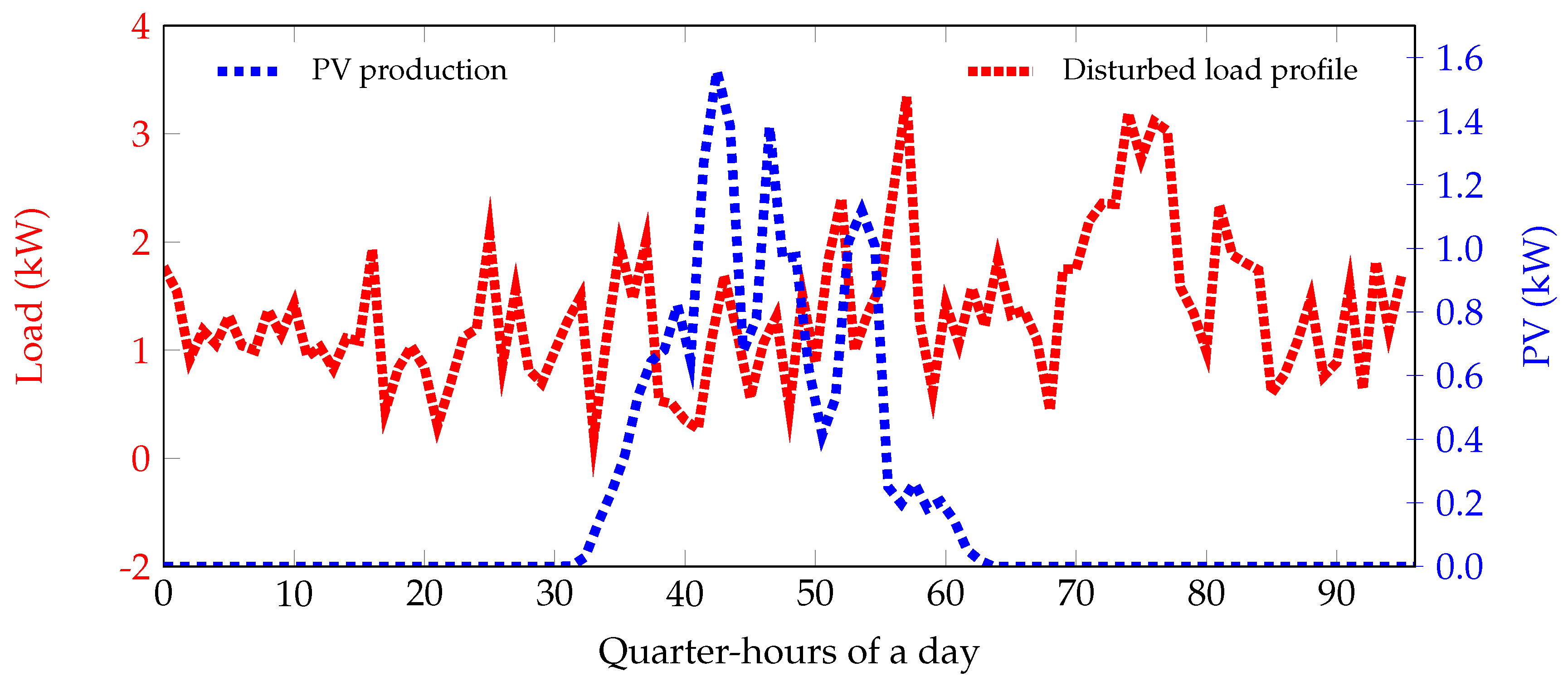

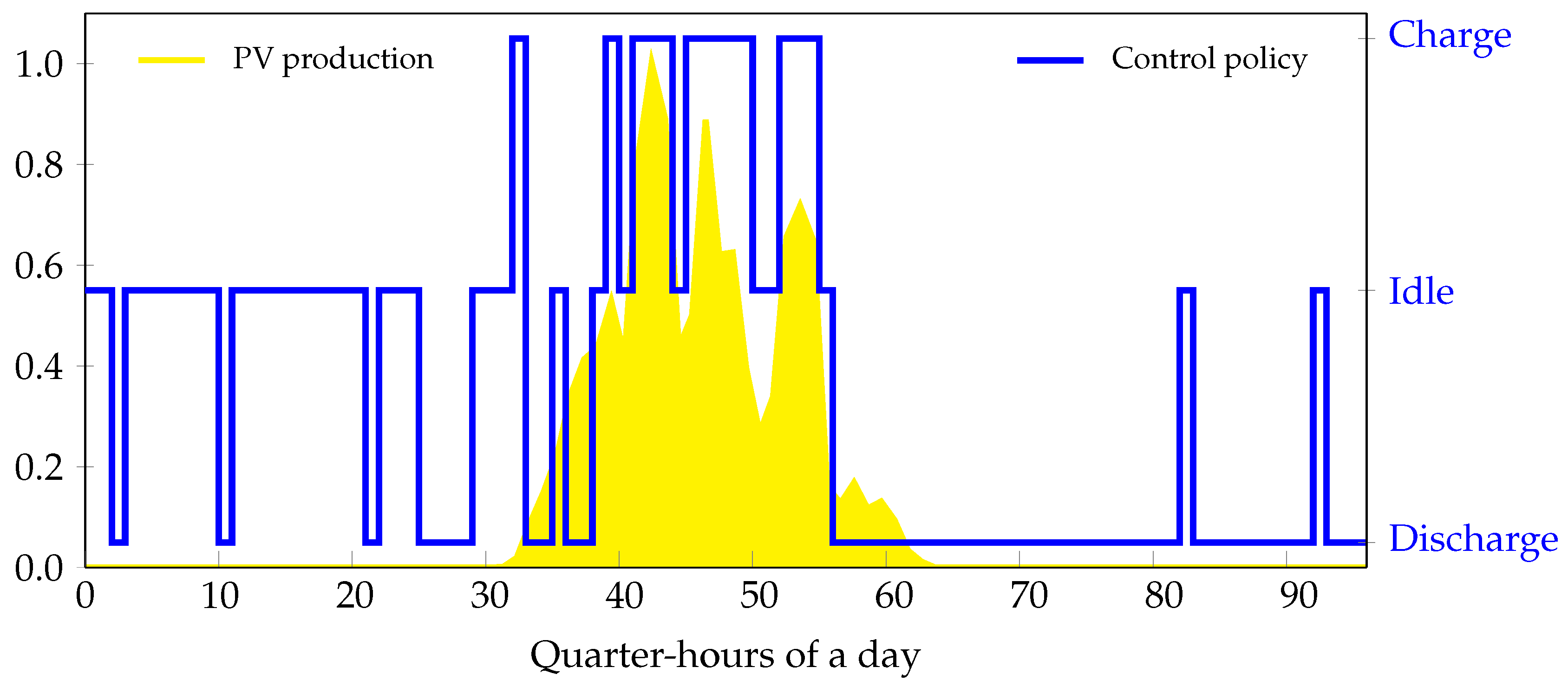

- Experiment 3: In the final experiment in this scenario, a disturbance is added to the perfect load forecast to introduce uncertainty, as illustrated in Figure 8. This disturbance is white noise drawn from a standard normal distribution, i.e., a normal distribution with mean μ = 0 and standard deviation σ = 1. The disturbance is applied to the load because uncertainty in the energy usage patterns of residential consumers is common in practice. The load is uniformly sampled to 50 values over its range. A perfect forecast of the PV generation is provided, and by learning the time component of the feature space, the RL agent can learn the PV production profile. Simulation results can be seen in Figure 9.

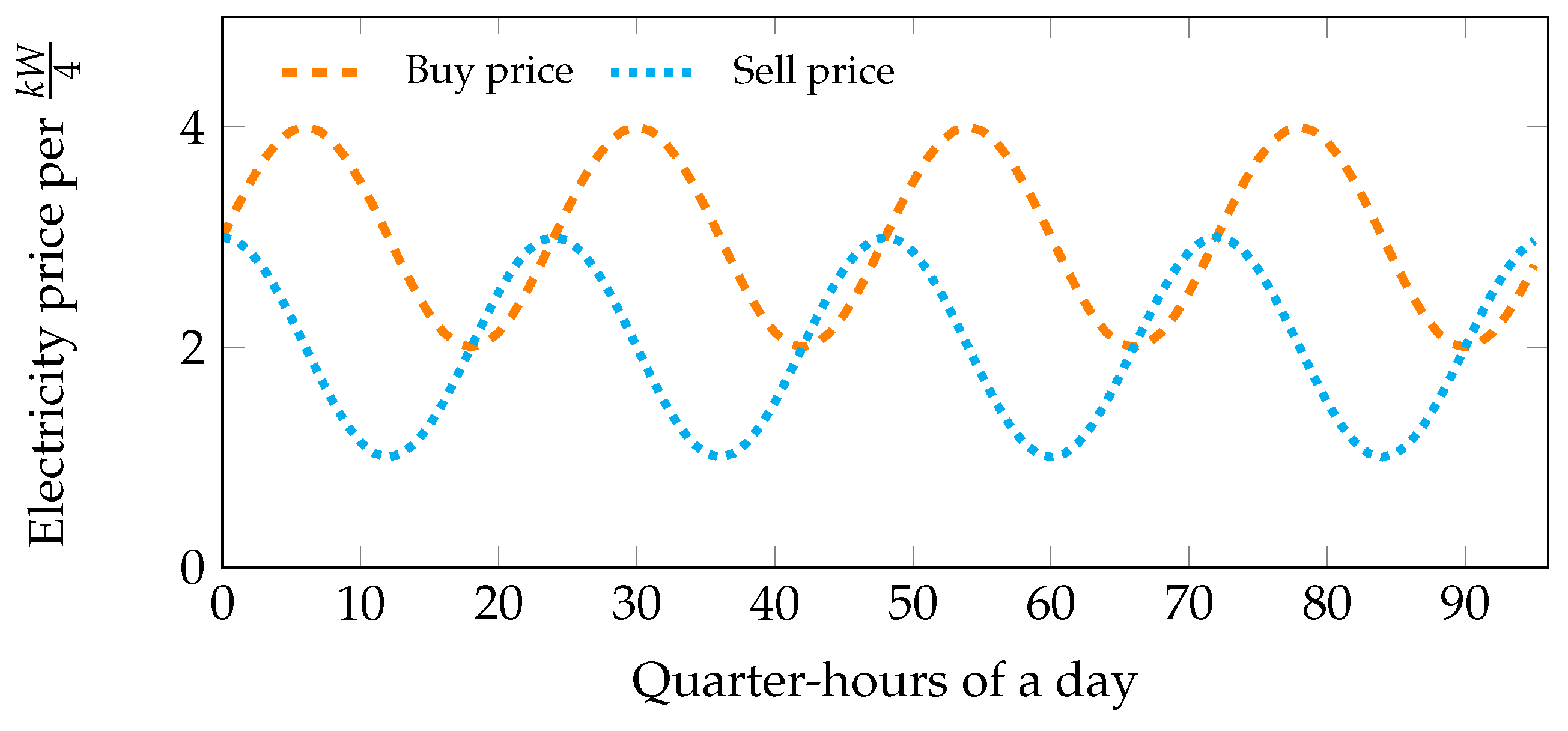

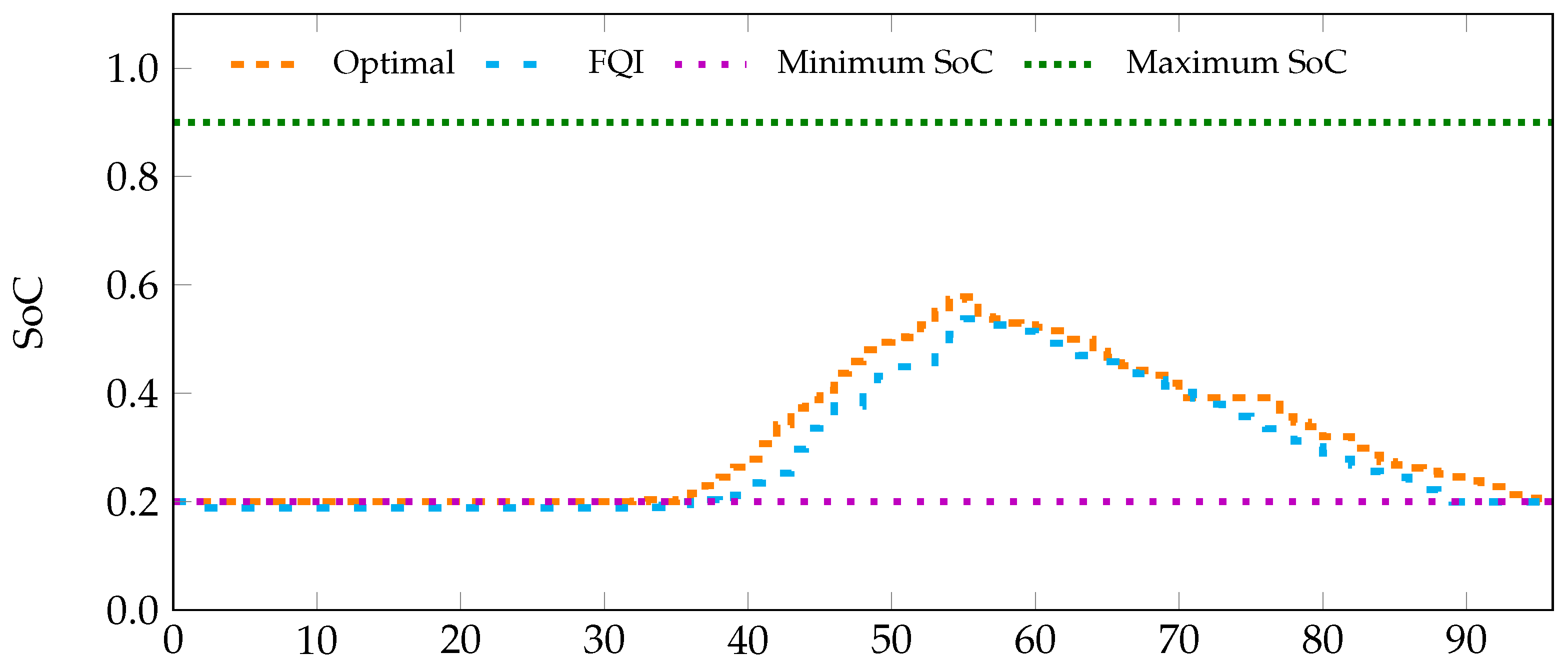
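The load disturbance used in Experiment 3 and the sampling of the load to a fixed number of values can be sketched in Python as follows. The function names `disturb_load_forecast` and `snap_to_grid`, the `seed` argument, and the range passed to `snap_to_grid` are assumptions for illustration; the load range used in the paper is not reproduced here, and the sketch does not clip negative loads.

```python
import random
from typing import List, Optional, Sequence


def disturb_load_forecast(load_kw: Sequence[float], seed: Optional[int] = None,
                          mean: float = 0.0, std: float = 1.0) -> List[float]:
    """Add independent Gaussian noise to each value of a load forecast.

    The defaults (mean 0, standard deviation 1) give standard normal noise.
    """
    rng = random.Random(seed)  # seeded generator for reproducible experiments
    return [p + rng.gauss(mean, std) for p in load_kw]


def snap_to_grid(value: float, low: float, high: float,
                 n_values: int = 50) -> float:
    """Map a value to the nearest of n_values evenly spaced points in [low, high]."""
    if n_values < 2 or high <= low:
        raise ValueError("need n_values >= 2 and high > low")
    step = (high - low) / (n_values - 1)
    clipped = min(max(value, low), high)  # values outside the range go to the edge
    return low + round((clipped - low) / step) * step
```

A disturbed forecast could then be discretized with, for example, `[snap_to_grid(p, low, high) for p in disturb_load_forecast(forecast, seed=0)]`, where `low` and `high` are the bounds of the load range.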

5.2. Scenario 2: Dynamic Electricity Pricing

5.3. Performance Indicators

- (a) Battery utilization rate, B: the ratio of the cumulative power from the PV used to charge the battery.

- (b) Inverter power utilization rate, P.

- (c) Net electricity cost.

5.4. Theoretical Benchmark in CPLEX

6. Conclusions and Future Work

Acknowledgments

Author Contributions

Conflicts of Interest

Abbreviations

| AC | Alternating Current |

| DC | Direct Current |

| FQI | Fitted-Q Iteration |

| MDP | Markov Decision Process |

| PV | Photovoltaic |

| RES | Renewable Energy Sources |

| RL | Reinforcement Learning |

| SoC | State of Charge |

References

1. Voropai, N.I.; Efimov, D.N. Operation and control problems of power systems with distributed generation. In Proceedings of the 2009 IEEE Power Energy Society General Meeting, Calgary, AB, Canada, 26–30 July 2009; pp. 1–5.

2. European Commission: Energy, Moving towards a Low Carbon Economy. Available online: https://ec.europa.eu/energy/en/topics/renewable-energy (accessed on 23 August 2017).

3. Leo, R.; Milton, R.S.; Sibi, S. Reinforcement learning for optimal energy management of a solar microgrid. In Proceedings of the Global Humanitarian Technology Conference - South Asia Satellite (GHTC-SAS), Trivandrum, India, 26–27 September 2014; pp. 183–188.

4. Dimeas, A.L.; Hatziargyriou, N.D. Agent based Control for Microgrids. In Proceedings of the Power Engineering Society General Meeting, Tampa, FL, USA, 24–28 June 2007; pp. 1–5.

5. Parhizi, S.; Lotfi, H.; Khodaei, A.; Bahramirad, S. State of the Art in Research on Microgrids: A Review. IEEE Access 2015, 3, 890–925.

6. François-Lavet, V.; Gemine, Q.; Ernst, D.; Fonteneau, R. Towards the minimization of the levelized energy costs of microgrids using both long-term and short-term storage devices. In Smart Grid: Networking, Data Management, and Business Models; CRC Press: Boca Raton, FL, USA, 2016; pp. 295–319.

7. Zhao, B.; Xue, M.; Zhang, X.; Wang, C.; Zhao, J. An MAS based energy management system for a stand-alone microgrid at high altitude. Appl. Energy 2015, 143, 251–261.

8. Bacha, S.; Picault, D.; Burger, B.; Etxeberria-Otadui, I.; Martins, J. Photovoltaics in Microgrids: An Overview of Grid Integration and Energy Management Aspects. IEEE Ind. Electron. Mag. 2015, 9, 33–46.

9. Tesla Gigafactory. Available online: https://www.tesla.com/gigafactory (accessed on 4 July 2017).

10. Van Moffaert, K.; De Hauwere, Y.M.; Vrancx, P.; Nowé, A. Reinforcement Learning for Energy-Reducing Start-Up Schemes. In Proceedings of the 24th Benelux Conference on Artificial Intelligence, Maastricht, The Netherlands, 25–26 October 2012.

11. Camacho, E.F.; Alba, C.B. Model Predictive Control; Springer Science & Business Media: Berlin/Heidelberg, Germany, 2013.

12. Ernst, D.; Glavic, M.; Capitanescu, F.; Wehenkel, L. Reinforcement learning versus model predictive control: A comparison on a power system problem. IEEE Trans. Syst. Man Cybern. Syst. 2009, 39, 517–529.

13. Bifaretti, S.; Cordiner, S.; Mulone, V.; Rocco, V.; Rossi, J.; Spagnolo, F. Grid-connected Microgrids to Support Renewable Energy Sources Penetration. Energy Procedia 2017, 105, 2910–2915.

14. Prodan, I.; Zio, E. A model predictive control framework for reliable microgrid energy management. Int. J. Electr. Power Energy Syst. 2014, 61, 399–409.

15. Bertsekas, D. Dynamic Programming and Optimal Control; Athena Scientific: Belmont, MA, USA, 1995.

16. Costa, L.M.; Kariniotakis, G. A Stochastic Dynamic Programming Model for Optimal Use of Local Energy Resources in a Market Environment. In Proceedings of the 2007 IEEE Lausanne Power Tech, Lausanne, Switzerland, 1–5 July 2007; pp. 449–454.

17. Sutton, R.S.; Barto, A.G. Reinforcement Learning: An Introduction; MIT Press: Cambridge, MA, USA, 1998.

18. Kuznetsova, E.; Li, Y.F.; Ruiz, C.; Zio, E.; Ault, G.; Bell, K. Reinforcement learning for microgrid energy management. Energy 2013, 59, 133–146.

19. Dimeas, A.; Hatziargyriou, N. Multi-agent reinforcement learning for microgrids. In Proceedings of the Power and Energy Society General Meeting, Providence, RI, USA, 25–29 July 2010; pp. 1–8.

20. Lee, D.; Powell, W. An Intelligent Battery Controller Using Bias-Corrected Q-learning. In Proceedings of the Twenty-Sixth AAAI Conference on Artificial Intelligence, Toronto, ON, Canada, 22–26 July 2012.

21. Ruelens, F.; Claessens, B.; Vandael, S.; De Schutter, B.; Babuska, R.; Belmans, R. Residential demand response applications using batch reinforcement learning. arXiv, 2015; arXiv:1504.02125.

22. Ernst, D.; Geurts, P.; Wehenkel, L. Tree-based batch mode reinforcement learning. J. Mach. Learn. Res. 2005, 6, 503–556.

23. Lange, S.; Gabel, T.; Riedmiller, M. Reinforcement learning: State-of-the-Art. Springer 2012, 12, 45–73.

24. Ruelens, F.; Iacovella, S.; Claessens, B.J.; Belmans, R. Learning agent for a heat-pump thermostat with a set-back strategy using model-free reinforcement learning. Energies 2015, 8, 8300–8318.

25. Claessens, B.J.; Vandael, S.; Ruelens, F.; De Craemer, K.; Beusen, B. Peak shaving of a heterogeneous cluster of residential flexibility carriers using reinforcement learning. In Proceedings of the 2013 4th IEEE/PES Innovative Smart Grid Technologies Europe (ISGT EUROPE), Lyngby, Denmark, 6–9 October 2013; pp. 1–5.

26. Ruelens, F.; Claessens, B.J.; Vandael, S.; Iacovella, S.; Vingerhoets, P.; Belmans, R. Demand response of a heterogeneous cluster of electric water heaters using batch reinforcement learning. In Proceedings of the Power Systems Computation Conference (PSCC), Wroclaw, Poland, 18–22 August 2014; pp. 1–7.

27. Vandael, S.; Claessens, B.; Ernst, D.; Holvoet, T.; Deconinck, G. Reinforcement learning of heuristic EV fleet charging in a day-ahead electricity market. IEEE Trans. Smart Grid 2015, 6, 1795–1805.

28. De Somer, O.; Soares, A.; Kuijpers, T.; Vossen, K.; Vanthournout, K.; Spiessens, F. Using Reinforcement Learning for Demand Response of Domestic Hot Water Buffers: A Real-Life Demonstration. arXiv, 2017; arXiv:1703.05486.

29. François-Lavet, V.; Taralla, D.; Ernst, D.; Fonteneau, R. Deep reinforcement learning solutions for energy microgrids management. In Proceedings of the European Workshop on Reinforcement Learning (EWRL 2016), Barcelona, Spain, 3–4 December 2016.

30. Busoniu, L.; Babuška, R.; De Schutter, B.; Ernst, D. Reinforcement Learning and Dynamic Programming Using Function Approximators; CRC Press: Boca Raton, FL, USA, 2010.

31. Ernst, D.; Glavic, M.; Geurts, P.; Wehenkel, L. Approximate Value Iteration in the Reinforcement Learning Context. Application to Electrical Power System Control. Int. J. Emerg. Electr. Power Syst. 2005, 3.

32. Olivares, D.E.; Mehrizi-Sani, A.; Etemadi, A.H.; Cañizares, C.A.; Iravani, R.; Kazerani, M.; Hajimiragha, A.H.; Gomis-Bellmunt, O.; Saeedifard, M.; Palma-Behnke, R.; et al. Trends in Microgrid Control. IEEE Trans. Smart Grid 2014, 5, 1905–1919.

33. Driesse, A.; Jain, P.; Harrison, S. Beyond the curves: Modeling the electrical efficiency of photovoltaic inverters. In Proceedings of the 33rd IEEE Photovoltaic Specialists Conference, San Diego, CA, USA, 11–16 May 2008; pp. 1–6.

34. Riedmiller, M. Neural fitted Q-iteration - First experiences with a data efficient neural reinforcement learning method. In Proceedings of the 16th European Conference on Machine Learning (ECML), Porto, Portugal, 3–7 October 2005; Springer: New York, NY, USA, 2005; Volume 3720, p. 317.

35. Farahmand, A.M.; Ghavamzadeh, M.; Szepesvari, C.; Mannor, S. Regularized Fitted Q-Iteration for planning in continuous-space Markovian decision problems. In Proceedings of the 2009 American Control Conference, St. Louis, MO, USA, 10–12 June 2009; pp. 725–730.

36. Geurts, P.; Ernst, D.; Wehenkel, L. Extremely randomized trees. Mach. Learn. 2006, 63, 3–42.

37. Linear Project. Available online: http://www.linear-smartgrid.be/en/research-smart-grids (accessed on 1 August 2017).

38. ILOG, Inc. ILOG CPLEX: High-Performance Software for Mathematical Programming and Optimization, 2006. Available online: http://www.ilog.com/products/cplex/ (accessed on 14 September 2017).

| Binary Representation | Action |
|---|---|
| 00: S1 = 0, S2 = 0 | Idle |
| 10: S1 = 1, S2 = 0 | Charge |
| 01: S1 = 0, S2 = 1 | Discharge |
| 11: S1 = 1, S2 = 1 | Not possible due to charge/discharge constraint, Equation (3) |

| Indicator | Experiment 1 | Experiment 2 | Experiment 3 |
|---|---|---|---|
| Battery utilization rate, B | 27 | 47 | 32 |
| Inverter power utilization rate, P | 17 | 21 | 17 |
| Net electricity cost (euros) | 155 | 149 | 156 |

| Indicator | Experiment 1 | Experiment 2 | Experiment 3 |
|---|---|---|---|
| Battery utilization rate, B | 30 | 49 | 34 |
| Inverter power utilization rate, P | 18 | 21 | 17 |
| Net electricity cost (euros) | 75 | 71 | 77 |

© 2017 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Mbuwir, B.V.; Ruelens, F.; Spiessens, F.; Deconinck, G. Battery Energy Management in a Microgrid Using Batch Reinforcement Learning. Energies 2017, 10, 1846. https://doi.org/10.3390/en10111846
