Recognizing Your Hand and That of Your Romantic Partner

Abstract

1. Introduction

2. Materials and Methods

2.1. Participants

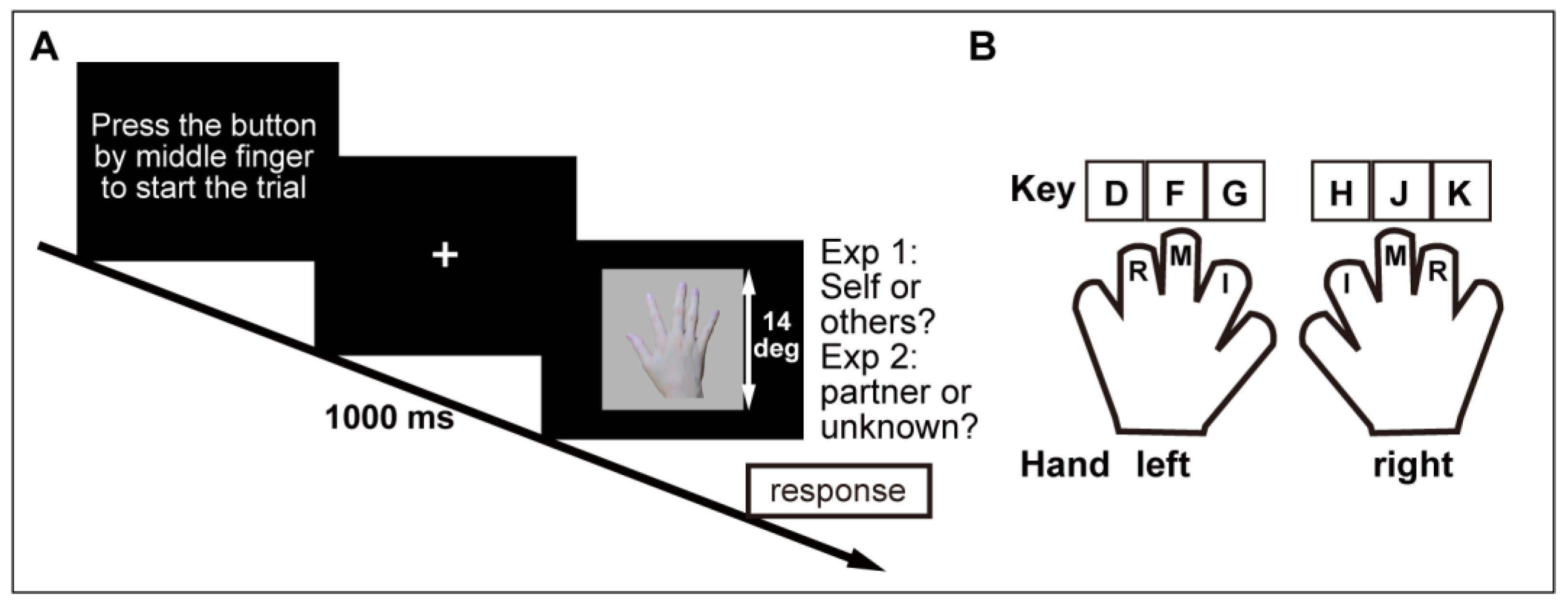

2.2. Stimuli and Procedure

2.2.1. Photographing Participants’ Hands

Task 1: Self–Other Discrimination Task

Task 2: Partner/Unknown Opposite-Sex Person Discrimination Task

2.2.2. Hand Discrimination Task

Task 1: Self–Other Discrimination Task

Task 2: Partner/Unknown Opposite-Sex Person Discrimination Task

2.3. Data Processing and Analysis

3. Results

3.1. Accuracy

3.1.1. Task 1: Self–Other Discrimination Task

3.1.2. Task 2: Partner/Unknown Opposite-Sex Person Discrimination Task

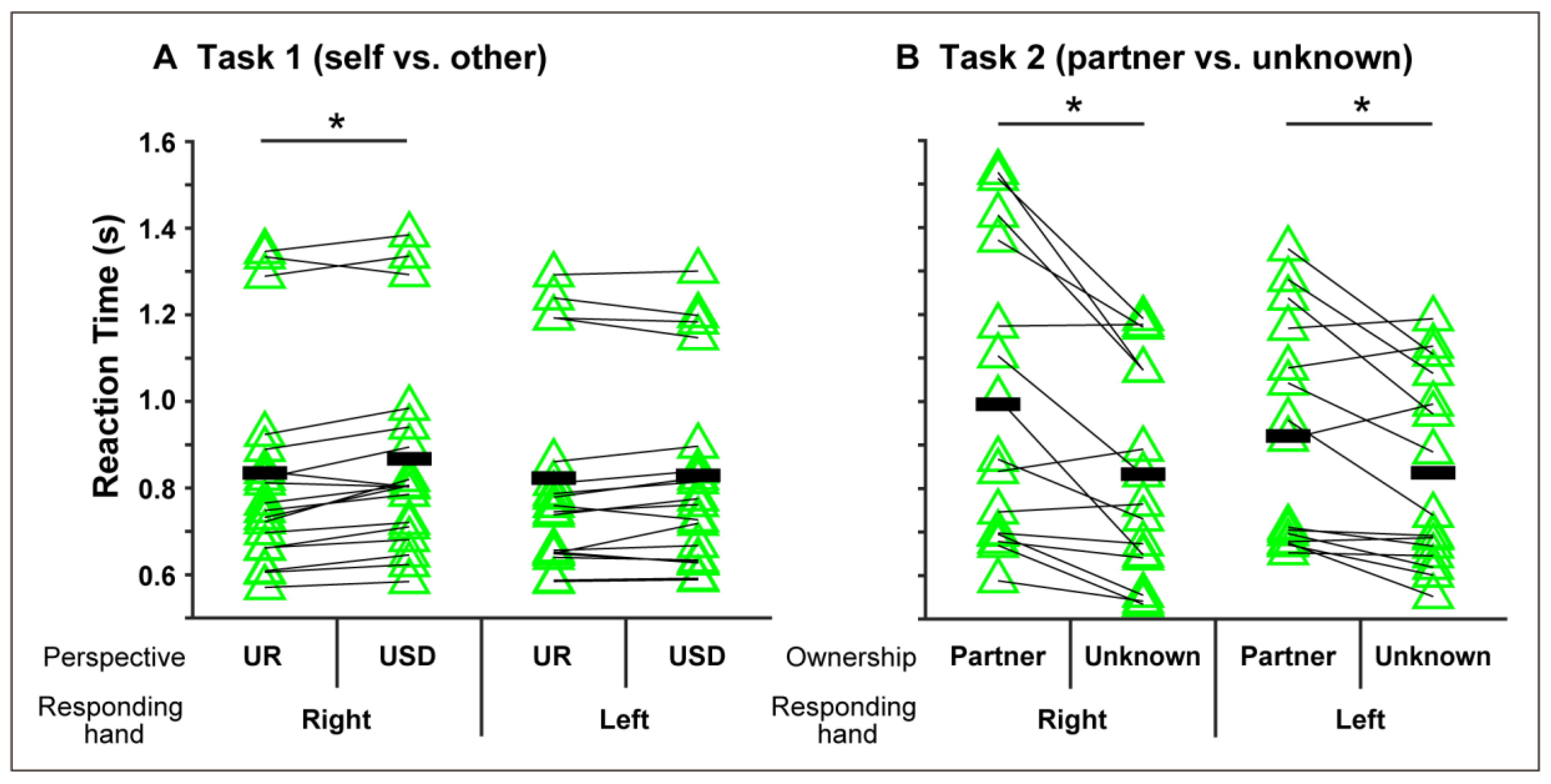

3.2. RTs

3.2.1. Task 1: Self–Other Discrimination Task

3.2.2. Task 2: Partner/Unknown Opposite-Sex Person Discrimination Task

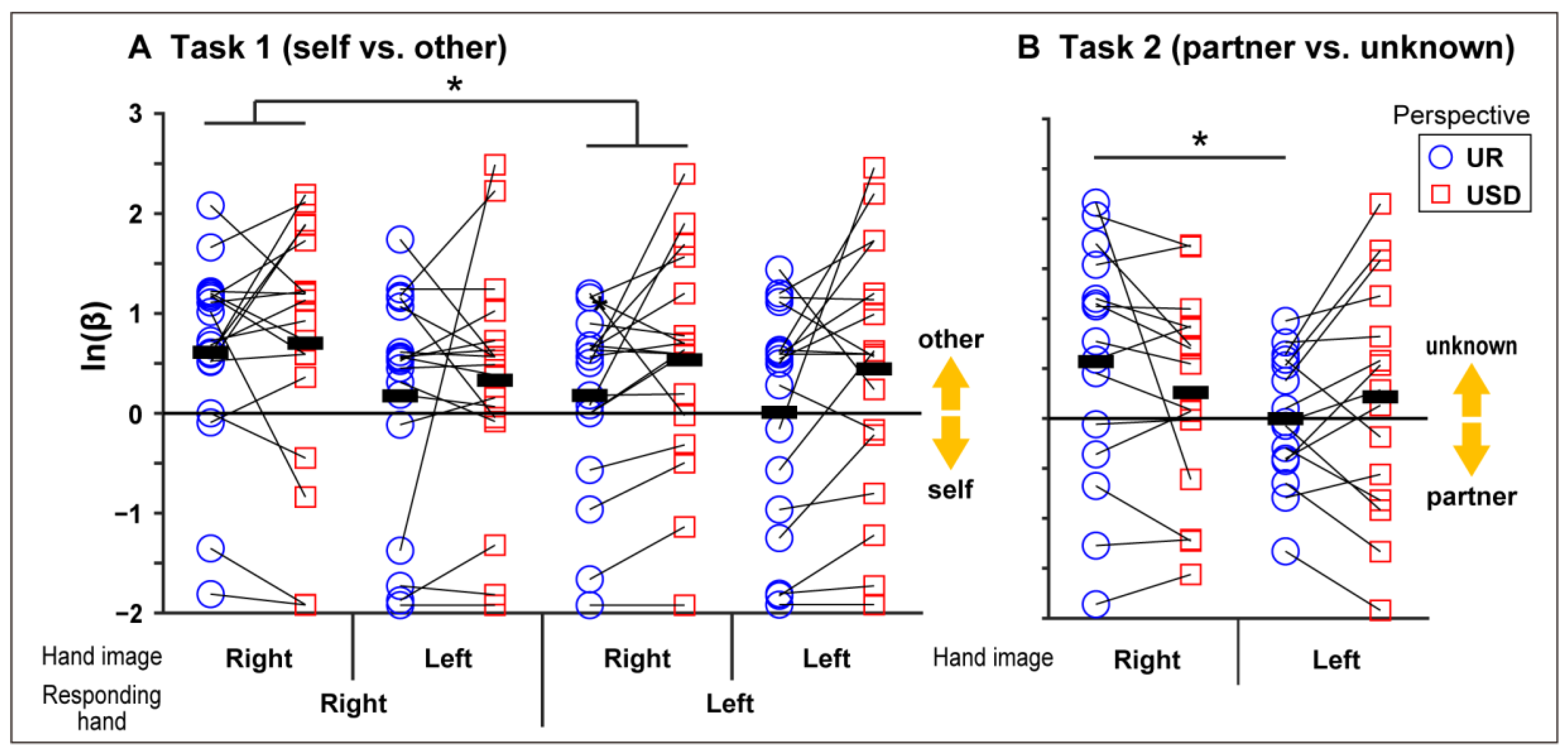

3.3. Dprime and ln(β)
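The two measures named in this heading can be illustrated with the standard equal-variance signal detection definitions, d′ = z(H) − z(F) and ln(β) = (z(F)² − z(H)²) / 2, where H is the hit rate and F is the false-alarm rate. The following is a minimal sketch only: the function name `sdt_measures`, the choice of which response counts as a "hit", and the log-linear correction for rates of 0 or 1 are assumptions for illustration, not details taken from this article.

```python
from statistics import NormalDist


def sdt_measures(hits, misses, false_alarms, correct_rejections):
    """Return (d_prime, ln_beta) for one participant in one condition.

    Hypothetical helper: the counts are assumed to come from a two-choice
    discrimination task in which one response category is treated as "signal".
    """
    # Assumed log-linear correction: add 0.5 to each count so that rates of
    # exactly 0 or 1 do not produce infinite z-scores.
    hit_rate = (hits + 0.5) / (hits + misses + 1)
    fa_rate = (false_alarms + 0.5) / (false_alarms + correct_rejections + 1)

    # z() is the inverse of the standard normal cumulative distribution.
    z = NormalDist().inv_cdf
    z_hit, z_fa = z(hit_rate), z(fa_rate)

    d_prime = z_hit - z_fa                # sensitivity
    ln_beta = (z_fa ** 2 - z_hit ** 2) / 2  # response bias (0 = unbiased)
    return d_prime, ln_beta


# Example with made-up counts (not data from the study).
d_prime, ln_beta = sdt_measures(hits=45, misses=5,
                                false_alarms=5, correct_rejections=45)
print(d_prime, ln_beta)
```

With this convention, a larger d′ indicates better discrimination, and ln(β) away from zero indicates a bias toward one of the two responses.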

3.3.1. Task 1: Self–Other Discrimination Task

3.3.2. Task 2: Partner/Unknown Opposite-Sex Person Discrimination Task

4. Discussion

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest


| Response with | Upright, Self, Right | Upright, Self, Left | Upright, Other, Right | Upright, Other, Left | Upside-Down, Self, Right | Upside-Down, Self, Left | Upside-Down, Other, Right | Upside-Down, Other, Left |
|---|---|---|---|---|---|---|---|---|
| right hand | 0.913 (0.029) | 0.929 (0.026) | 0.926 (0.035) | 0.874 (0.044) | 0.884 (0.037) | 0.892 (0.035) | 0.921 (0.036) | 0.882 (0.040) |
| left hand | 0.923 (0.040) | 0.956 (0.013) | 0.908 (0.034) | 0.877 (0.039) | 0.891 (0.032) | 0.890 (0.037) | 0.909 (0.035) | 0.886 (0.039) |

| Response with | Upright, Self, Right | Upright, Self, Left | Upright, Other, Right | Upright, Other, Left | Upside-Down, Self, Right | Upside-Down, Self, Left | Upside-Down, Other, Right | Upside-Down, Other, Left |
|---|---|---|---|---|---|---|---|---|
| right hand | 0.819 (0.040) | 0.850 (0.036) | 0.902 (0.039) | 0.869 (0.040) | 0.839 (0.042) | 0.823 (0.042) | 0.853 (0.054) | 0.862 (0.041) |
| left hand | 0.872 (0.055) | 0.929 (0.044) | 0.903 (0.031) | 0.861 (0.042) | 0.848 (0.058) | 0.917 (0.036) | 0.88 (0.030) | 0.834 (0.057) |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Fukui, T.; Murayama, A.; Miura, A. Recognizing Your Hand and That of Your Romantic Partner. Int. J. Environ. Res. Public Health 2020, 17, 8256. https://doi.org/10.3390/ijerph17218256