Predicting the Output of Solar Photovoltaic Panels in the Absence of Weather Data Using Only the Power Output of the Neighbouring Sites

Abstract

1. Introduction

1.1. Time Series Forecasting

1.2. Forecasting of Solar PV Power

- A study of the feasibility of forecasting solar PV outputs in the absence of meteorological data.

- Applying popular deep learning models to forecast solar PV output, with the aim of optimizing performance and minimizing the maintenance costs of PV sites.

- Identifying an appropriate method for the forecast of solar PV output at various forecasting lengths.

- Suggesting a suitable workflow for forecasting solar PV output when meteorological data are unavailable, under three settings: multivariate, univariate, and multi-in uni-out.

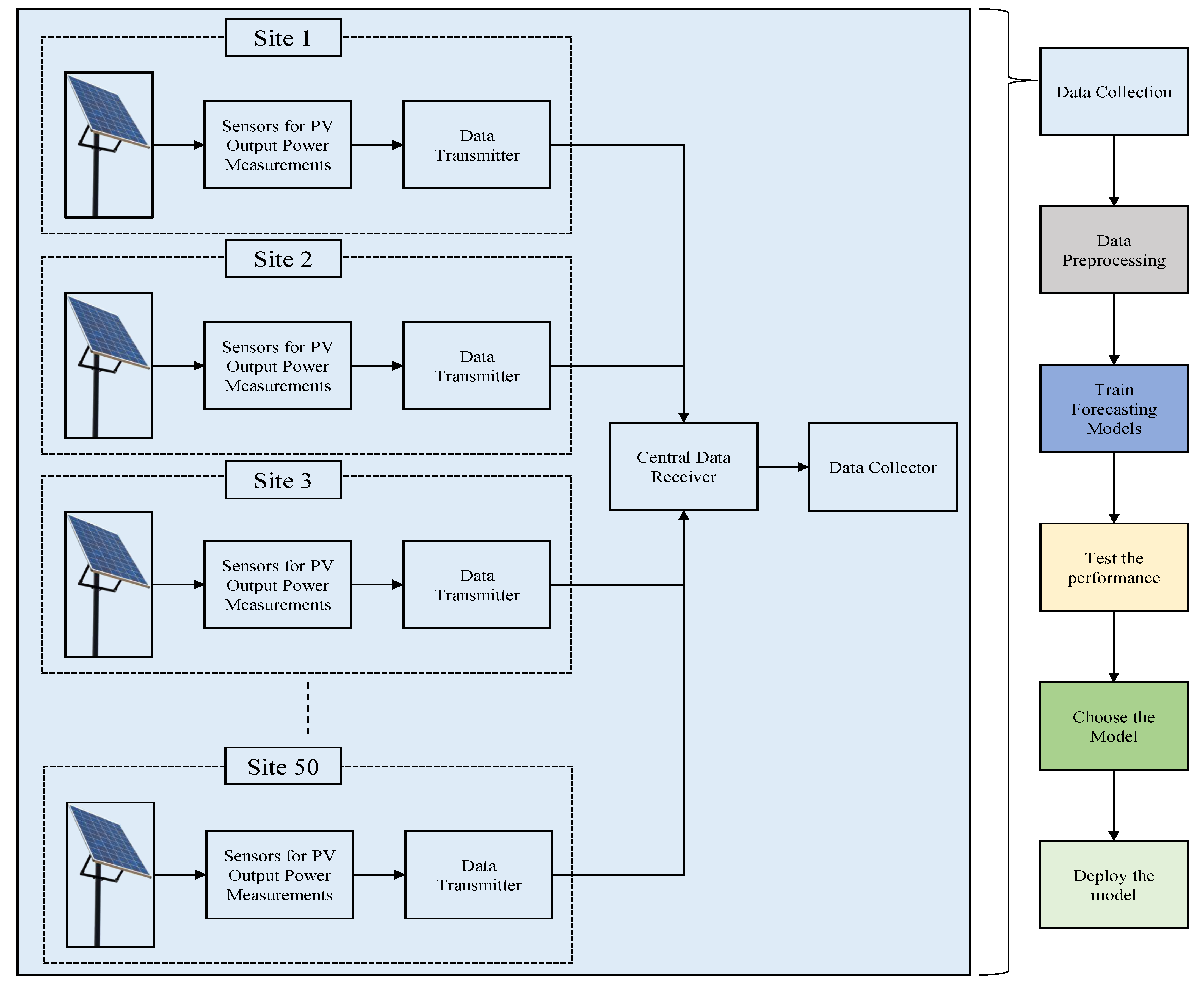

2. Methods

2.1. Data Description

2.2. Used Forecasting Models

2.2.1. Recurrent Neural Network (RNN) [38]
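As a point of reference, the recurrence of a simple (Elman-style) RNN is commonly written as below. This is the textbook form, not necessarily the exact variant used in this work; the symbols $x_t$ (input at step $t$), $h_t$ (hidden state), $\hat{y}_t$ (output), and the weight matrices $W_{xh}$, $W_{hh}$, $W_{hy}$ with biases $b_h$, $b_y$ are notation assumed here.

$$
h_t = \tanh\left(W_{xh}\, x_t + W_{hh}\, h_{t-1} + b_h\right), \qquad \hat{y}_t = W_{hy}\, h_t + b_y
$$

The same weights are reused at every time step, which is why training unrolls the network over the lookback window (back propagation through time, BPTT).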

2.2.2. Gated Recurrent Unit (GRU) [39]
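For orientation, a commonly used formulation of the GRU cell is sketched below. The notation is assumed here ($\sigma$ is the logistic sigmoid, $\odot$ the element-wise product, $z_t$ the update gate, $r_t$ the reset gate), and sign conventions for $z_t$ differ between references, so the variant in [39] or in a given library may swap the roles of $z_t$ and $1 - z_t$.

$$
\begin{aligned}
z_t &= \sigma\left(W_z x_t + U_z h_{t-1} + b_z\right) \\
r_t &= \sigma\left(W_r x_t + U_r h_{t-1} + b_r\right) \\
\tilde{h}_t &= \tanh\left(W_h x_t + U_h \left(r_t \odot h_{t-1}\right) + b_h\right) \\
h_t &= \left(1 - z_t\right) \odot h_{t-1} + z_t \odot \tilde{h}_t
\end{aligned}
$$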

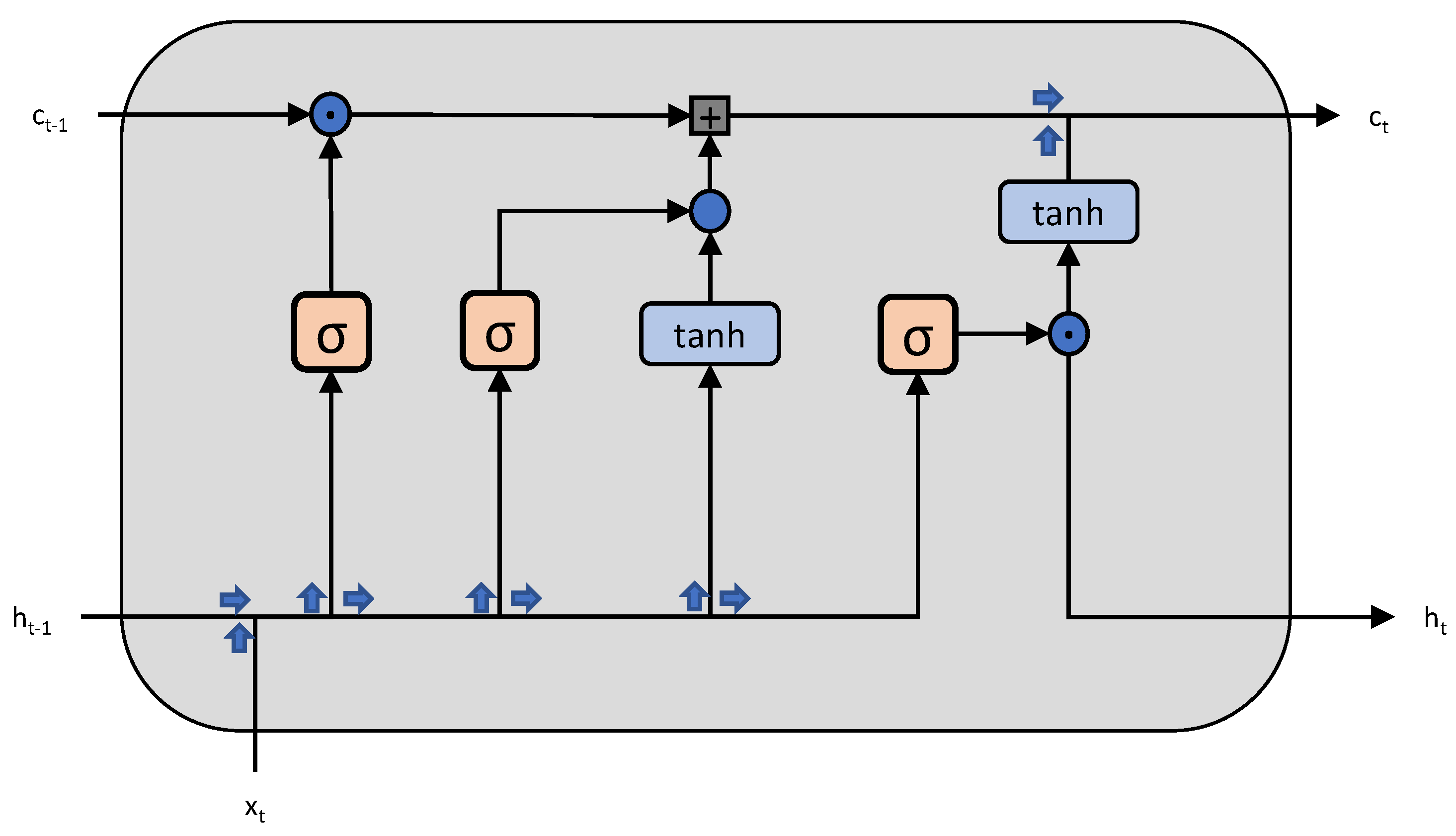

2.2.3. Long Short-Term Memory (LSTM) [37]
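The widely used LSTM formulation with a forget gate can be sketched as follows. The notation is assumed here ($f_t$, $i_t$, $o_t$ are the forget, input, and output gates, $c_t$ the cell state, $\sigma$ the logistic sigmoid, $\odot$ the element-wise product); it is the common modern form and may differ in detail from the original description in [37].

$$
\begin{aligned}
f_t &= \sigma\left(W_f x_t + U_f h_{t-1} + b_f\right) \\
i_t &= \sigma\left(W_i x_t + U_i h_{t-1} + b_i\right) \\
o_t &= \sigma\left(W_o x_t + U_o h_{t-1} + b_o\right) \\
\tilde{c}_t &= \tanh\left(W_c x_t + U_c h_{t-1} + b_c\right) \\
c_t &= f_t \odot c_{t-1} + i_t \odot \tilde{c}_t \\
h_t &= o_t \odot \tanh\left(c_t\right)
\end{aligned}
$$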

2.2.4. Transformer [24]
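The core operation of the Transformer in [24] is scaled dot-product attention, shown below for reference. Here $Q$, $K$, and $V$ are the query, key, and value matrices and $d_k$ is the key dimension.

$$
\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\left(\frac{Q K^{\top}}{\sqrt{d_k}}\right) V
$$

In multi-head attention this operation is applied in parallel on several learned projections of the inputs, and the per-head outputs are concatenated and projected back to the model dimension.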

2.3. Forecast Settings
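The three settings named in this paper (multi-in multi-out, multi-in uni-out, uni-in uni-out) can be illustrated with a sliding-window split over a table that has one row per timestamp and one column per site. The following is a minimal sketch under stated assumptions, not the author's pipeline: the function `make_windows`, its parameters, and the toy data are hypothetical, and the lookback and horizon values are chosen only to keep the example small.

```python
from typing import List, Sequence, Tuple

Row = Sequence[float]          # one timestamp: power output of every site
Window = Tuple[list, list]     # (lookback inputs, forecast-horizon targets)


def make_windows(series: Sequence[Row], lookback: int, horizon: int,
                 in_sites: Sequence[int], out_sites: Sequence[int]) -> List[Window]:
    """Slide a window over `series` and split it into inputs and targets.

    Inputs keep the columns in `in_sites` for `lookback` steps; targets keep
    the columns in `out_sites` for the following `horizon` steps.
    """
    windows = []
    last_start = len(series) - lookback - horizon
    for start in range(last_start + 1):
        past = series[start:start + lookback]
        future = series[start + lookback:start + lookback + horizon]
        x = [[row[i] for i in in_sites] for row in past]
        y = [[row[j] for j in out_sites] for row in future]
        windows.append((x, y))
    return windows


# Toy data: 6 timestamps x 3 sites (values are made up for illustration).
toy = [[t * 10 + s for s in range(3)] for t in range(6)]
all_sites, target = [0, 1, 2], [0]

multi_in_multi_out = make_windows(toy, 3, 2, all_sites, all_sites)
multi_in_uni_out = make_windows(toy, 3, 2, all_sites, target)
uni_in_uni_out = make_windows(toy, 3, 2, target, target)
```

Only the column selection changes between settings: the multi-in uni-out case feeds every site's history to the model but asks it to predict a single target site, which is the situation where neighbouring sites stand in for missing weather data.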

3. Experiment and Results

3.1. Implementation Details

3.2. Details of Hyper-Parameters Used

3.3. Evaluation Metrics

3.3.1. Mean Square Error (MSE)
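The standard definition of the MSE is given below for reference. The symbols are assumed here: $n$ is the number of evaluated points, $y_i$ the observed value, and $\hat{y}_i$ the forecast.

$$
\mathrm{MSE} = \frac{1}{n} \sum_{i=1}^{n} \left(y_i - \hat{y}_i\right)^2
$$

Because the errors are squared, large deviations are penalized more heavily than small ones.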

3.3.2. Mean Absolute Error (MAE)
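The standard definition of the MAE is given below for reference. The symbols are assumed here: $n$ is the number of evaluated points, $y_i$ the observed value, and $\hat{y}_i$ the forecast.

$$
\mathrm{MAE} = \frac{1}{n} \sum_{i=1}^{n} \left|y_i - \hat{y}_i\right|
$$

The MAE weights all errors linearly, so it is less sensitive to occasional large deviations than a squared-error metric.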

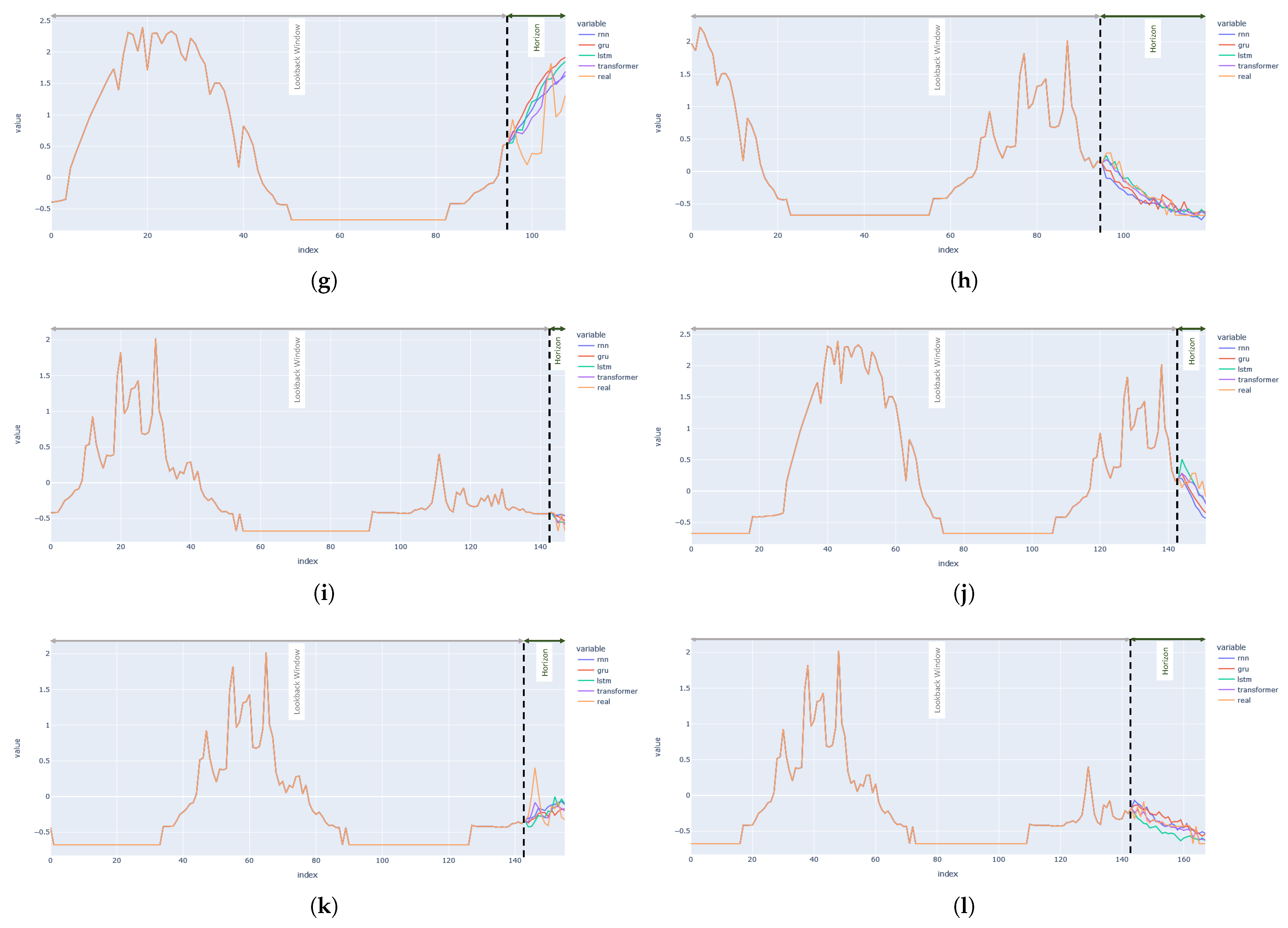

3.4. Results

3.4.1. Results of Multi-In Multi-Out Setting

3.4.2. Results of Multi-In Uni-Out Setting

3.4.3. Results of Uni-In Uni-Out Setting

3.4.4. Comparative Analysis of Results Obtained

4. Conclusions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| TSF | Time Series Forecasting |
| PV | Photovoltaic |
| RNN | Recurrent Neural Network |
| GRU | Gated Recurrent Unit |
| LSTM | Long Short-Term Memory |
| LB | Lookback |
| FH | Forecast Horizon |
| TCN | Temporal Convolutional Networks |
| GNN | Graph Neural Networks |
| SVM | Support Vector Machines |
| GHI | Global Horizontal Irradiance |
| DNI | Direct Normal Irradiance |
| GA | Genetic Algorithm |
| ARIMA | Autoregressive Integrated Moving Average |
| KNN | K-Nearest Neighbours |
| RF | Random Forest |
| GAM | Generalized Additive Model |
| GBRT | Gradient Boosted Regression Trees |
| BPTT | Back Propagation Through Time |
| MSE | Mean Square Error |
| MAE | Mean Absolute Error |

References

1. Brahma, B.; Wadhvani, R. Solar Irradiance Forecasting Based on Deep Learning Methodologies and Multi-Site Data. Symmetry 2020, 12, 1830.

2. Heng, J.; Wang, J.; Xiao, L.; Lu, H. Research and application of a combined model based on frequent pattern growth algorithm and multi-objective optimization for solar radiation forecasting. Appl. Energy 2017, 208, 845–866.

3. Amrouche, B.; Le Pivert, X. Artificial neural network based daily local forecasting for global solar radiation. Appl. Energy 2014, 130, 333–341.

4. Liang, L.; Su, T.; Gao, Y.; Qin, F.; Pan, M. FCDT-IWBOA-LSSVR: An innovative hybrid machine learning approach for efficient prediction of short-to-mid-term photovoltaic generation. J. Clean. Prod. 2023, 385, 135716.

5. Persson, C.; Bacher, P.; Shiga, T.; Madsen, H. Multi-site solar power forecasting using gradient boosted regression trees. Sol. Energy 2017, 150, 423–436.

6. Jamal, T.; Shafiullah, G.; Carter, C.; Ferdous, S.; Rahman, M. Benefits of Short-term PV Forecasting in a Remote Area Standalone Off-grid Power Supply System. In Proceedings of the 2018 IEEE Power & Energy Society General Meeting (PESGM), Portland, OR, USA, 5–10 August 2018; pp. 1–5.

7. Ramhari, P.; Pavel, L.; Ranjan, P. Techno-economic feasibility analysis of a 3-kW PV system installation in Nepal. Renew. Wind. Water Sol. 2021, 8, 5.

8. Tuncel, K.S.; Baydogan, M.G. Autoregressive forests for multivariate time series modeling. Pattern Recognit. 2018, 73, 202–215.

9. De Bézenac, E.; Rangapuram, S.S.; Benidis, K.; Bohlke-Schneider, M.; Kurle, R.; Stella, L.; Hasson, H.; Gallinari, P.; Januschowski, T. Normalizing Kalman filters for multivariate time series analysis. Adv. Neural Inf. Process. Syst. 2020, 33, 2995–3007.

10. Box, G.E.; Jenkins, G.M.; MacGregor, J.F. Some recent advances in forecasting and control. J. R. Stat. Soc. Ser. C (Appl. Stat.) 1974, 23, 158–179.

11. Holt, C.C. Forecasting seasonals and trends by exponentially weighted moving averages. Int. J. Forecast. 2004, 20, 5–10.

12. Winters, P.R. Forecasting sales by exponentially weighted moving averages. Manag. Sci. 1960, 6, 324–342.

13. Lütkepohl, H. New Introduction to Multiple Time Series Analysis; Springer Science & Business Media: Berlin/Heidelberg, Germany, 2005.

14. Wen, R.; Torkkola, K.; Narayanaswamy, B.; Madeka, D. A multi-horizon quantile recurrent forecaster. arXiv 2017, arXiv:1711.11053.

15. Qin, Y.; Song, D.; Chen, H.; Cheng, W.; Jiang, G.; Cottrell, G. A dual-stage attention-based recurrent neural network for time series prediction. arXiv 2017, arXiv:1704.02971.

16. Maddix, D.C.; Wang, Y.; Smola, A. Deep factors with Gaussian processes for forecasting. arXiv 2018, arXiv:1812.00098.

17. Salinas, D.; Flunkert, V.; Gasthaus, J.; Januschowski, T. DeepAR: Probabilistic forecasting with autoregressive recurrent networks. Int. J. Forecast. 2020, 36, 1181–1191.

18. Van Den Oord, A.; Dieleman, S.; Zen, H.; Simonyan, K.; Vinyals, O.; Graves, A.; Kalchbrenner, N.; Senior, A.W.; Kavukcuoglu, K. WaveNet: A generative model for raw audio. SSW 2016, 125, 2.

19. Borovykh, A.; Bohte, S.; Oosterlee, C.W. Conditional time series forecasting with convolutional neural networks. arXiv 2017, arXiv:1703.04691.

20. Sen, R.; Yu, H.F.; Dhillon, I.S. Think globally, act locally: A deep neural network approach to high-dimensional time series forecasting. Adv. Neural Inf. Process. Syst. 2019, 32.

21. Li, Y.; Yu, R.; Shahabi, C.; Liu, Y. Diffusion convolutional recurrent neural network: Data-driven traffic forecasting. arXiv 2017, arXiv:1707.01926.

22. Yu, B.; Yin, H.; Zhu, Z. Spatio-temporal graph convolutional networks: A deep learning framework for traffic forecasting. arXiv 2017, arXiv:1709.04875.

23. Song, C.; Lin, Y.; Guo, S.; Wan, H. Spatial-temporal synchronous graph convolutional networks: A new framework for spatial-temporal network data forecasting. In Proceedings of the AAAI Conference on Artificial Intelligence, New York, NY, USA, 7–12 February 2020.

24. Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention is All you Need. In Proceedings of the Advances in Neural Information Processing Systems; Guyon, I., Luxburg, U.V., Bengio, S., Wallach, H., Fergus, R., Vishwanathan, S., Garnett, R., Eds.; Curran Associates, Inc.: Red Hook, NY, USA, 2017; Volume 30.

25. Zhou, H.; Zhang, S.; Peng, J.; Zhang, S.; Li, J.; Xiong, H.; Zhang, W. Informer: Beyond efficient transformer for long sequence time-series forecasting. In Proceedings of the AAAI Conference on Artificial Intelligence, Virtual, 2–9 February 2021.

26. Li, S.; Jin, X.; Xuan, Y.; Zhou, X.; Chen, W.; Wang, Y.X.; Yan, X. Enhancing the locality and breaking the memory bottleneck of transformer on time series forecasting. Adv. Neural Inf. Process. Syst. 2019, 32.

27. Wu, H.; Xu, J.; Wang, J.; Long, M. Autoformer: Decomposition transformers with auto-correlation for long-term series forecasting. Adv. Neural Inf. Process. Syst. 2021, 34.

28. Liu, M.; Zeng, A.; Xu, Z.; Lai, Q.; Xu, Q. Time Series is a Special Sequence: Forecasting with Sample Convolution and Interaction. arXiv 2021, arXiv:2106.09305.

29. Sharma, N.; Sharma, P.; Irwin, D.; Shenoy, P. Predicting solar generation from weather forecasts using machine learning. In Proceedings of the 2011 IEEE International Conference on Smart Grid Communications (SmartGridComm), Brussels, Belgium, 17–20 October 2011; pp. 528–533.

30. Ragnacci, A.; Pastorelli, M.; Valigi, P.; Ricci, E. Exploiting dimensionality reduction techniques for photovoltaic power forecasting. In Proceedings of the 2012 IEEE International Energy Conference and Exhibition (ENERGYCON), Florence, Italy, 9–12 September 2012; pp. 867–872.

31. Marquez, R.; Coimbra, C.F. Forecasting of global and direct solar irradiance using stochastic learning methods, ground experiments and the NWS database. Sol. Energy 2011, 85, 746–756.

32. Pedro, H.T.; Coimbra, C.F. Assessment of forecasting techniques for solar power production with no exogenous inputs. Sol. Energy 2012, 86, 2017–2028.

33. Zamo, M.; Mestre, O.; Arbogast, P.; Pannekoucke, O. A benchmark of statistical regression methods for short-term forecasting of photovoltaic electricity production, part I: Deterministic forecast of hourly production. Sol. Energy 2014, 105, 792–803.

34. Zamo, M.; Mestre, O.; Arbogast, P.; Pannekoucke, O. A benchmark of statistical regression methods for short-term forecasting of photovoltaic electricity production. Part II: Probabilistic forecast of daily production. Sol. Energy 2014, 105, 804–816.

35. Bessa, R.; Trindade, A.; Silva, C.S.; Miranda, V. Probabilistic solar power forecasting in smart grids using distributed information. Int. J. Electr. Power Energy Syst. 2015, 72, 16–23.

36. Almeida, M.P.; Perpiñán, O.; Narvarte, L. PV power forecast using a nonparametric PV model. Sol. Energy 2015, 115, 354–368.

37. Hochreiter, S.; Schmidhuber, J. Long short-term memory. Neural Comput. 1997, 9, 1735–1780.

38. Rumelhart, D.E.; Hinton, G.E.; Williams, R.J. Learning Internal Representations by Error Propagation; Technical Report; California University San Diego La Jolla Institute for Cognitive Science: La Jolla, CA, USA, 1985.

39. Cho, K.; Van Merriënboer, B.; Bahdanau, D.; Bengio, Y. On the properties of neural machine translation: Encoder-decoder approaches. arXiv 2014, arXiv:1409.1259.

40. Wu, N.; Green, B.; Ben, X.; O’Banion, S. Deep transformer models for time series forecasting: The influenza prevalence case. arXiv 2020, arXiv:2001.08317.

41. Kingma, D.P.; Ba, J. Adam: A method for stochastic optimization. arXiv 2014, arXiv:1412.6980.

| Time | Site 1 | Site 2 | Site 3 | Site 4 | Site 5 | ... | Site 47 | Site 48 | Site 49 | Site 50 |
|---|---|---|---|---|---|---|---|---|---|---|
| 2020-01-01 06:45 | 0 | 0 | 0 | 0 | 0 | ... | 0 | 0 | 0 | 0 |
| 2020-01-01 07:00 | 0 | 0 | 0 | 0 | 0 | ... | 0 | 0 | 0 | 0 |
| 2020-01-01 07:15 | 0 | 0 | 0 | 0 | 0 | ... | 0 | 0 | 0 | 0 |
| 2020-01-01 07:30 | 0 | 207 | 216 | 0 | 225 | ... | 0 | 186 | 217 | 215 |
| 2020-01-01 07:45 | 212 | 205 | 217 | 217 | 218 | ... | 212 | 192 | 265 | 215 |
| 2020-01-01 08:00 | 211 | 250 | 212 | 271 | 211 | ... | 212 | 494 | 465 | 215 |
| 2020-01-01 08:15 | 225 | 377 | 209 | 363 | 585 | ... | 214 | 745 | 708 | 235 |
| 2020-01-01 08:30 | 240 | 424 | 865 | 648 | 798 | ... | 239 | 953 | 934 | 250 |
| 2020-01-01 08:45 | 260 | 541 | 1087 | 948 | 1017 | ... | 321 | 1138 | 1147 | 306 |
| 2020-01-01 09:00 | 506 | 861 | 1278 | 1147 | 1251 | ... | 428 | 1315 | 1322 | 505 |
| ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... |
| 2020-06-22 14:15 | 794 | 1176 | 1738 | 1177 | 715 | ... | 1447 | 1349 | 1370 | 1417 |
| 2020-06-22 14:30 | 839 | 885 | 970 | 866 | 972 | ... | 792 | 892 | 897 | 780 |
| 2020-06-22 14:45 | 911 | 1043 | 1021 | 1093 | 458 | ... | 1489 | 1211 | 1241 | 1419 |
| 2020-06-22 15:00 | 1681 | 643 | 1130 | 659 | 591 | ... | 567 | 693 | 703 | 567 |
| 2020-06-22 15:15 | 1474 | 1032 | 1123 | 823 | 275 | ... | 1086 | 1057 | 947 | 1007 |
| ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... |

| Hyper-Parameter (RNN, LSTM, GRU) | Value | Hyper-Parameter (Transformer) | Value |
|---|---|---|---|
| Hidden state size | 64 | Number of heads | 4 |
| Number of recurrent layers | 2 | Number of encoder layers | 3 |
| | | Number of decoder layers | 3 |
| | | Number of expected features in the encoder/decoder inputs | 128 |
| | | Feedforward network dimension | 256 |
| Number of epochs | 100 | Number of epochs | 50 |
| Dropout | 0.3 | Dropout | 0.3 |
| Weight decay | | Weight decay | |
| Learning rate | | Learning rate | |
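The hyper-parameters in the table above can be collected into plain configuration dictionaries, as in the sketch below. The key names mirror the keyword arguments of PyTorch's recurrent and Transformer modules, which is an assumption made for illustration; the framework actually used is not stated in the table. Weight decay and learning rate are left as `None` because their values are not present in the table, and the helper `missing_values` is hypothetical.

```python
# Values copied from the hyper-parameter table; None marks entries whose
# values are absent from the table (weight decay, learning rate).
RECURRENT_CONFIG = {  # shared by RNN, GRU and LSTM
    "hidden_size": 64,
    "num_layers": 2,
    "epochs": 100,
    "dropout": 0.3,
    "weight_decay": None,
    "learning_rate": None,
}

TRANSFORMER_CONFIG = {
    "nhead": 4,
    "num_encoder_layers": 3,
    "num_decoder_layers": 3,
    "d_model": 128,          # expected features in encoder/decoder inputs
    "dim_feedforward": 256,
    "epochs": 50,
    "dropout": 0.3,
    "weight_decay": None,
    "learning_rate": None,
}


def missing_values(config: dict) -> list:
    """Return the sorted names of settings that still need a value."""
    return sorted(name for name, value in config.items() if value is None)


# Multi-head attention splits the model dimension evenly across heads,
# so d_model must be divisible by nhead.
head_dim, remainder = divmod(TRANSFORMER_CONFIG["d_model"],
                             TRANSFORMER_CONFIG["nhead"])
```

Keeping the unknown entries explicit, rather than filling in defaults, makes it obvious which settings must be supplied before the configuration can be used to reproduce the experiments.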

| LBL | FHL | RNN MSE | RNN MAE | GRU MSE | GRU MAE | LSTM MSE | LSTM MAE | Transformer MSE | Transformer MAE |
|---|---|---|---|---|---|---|---|---|---|
| 48 | 4 | 0.1337 | 0.2111 | 0.1280 | 0.2049 | 0.1334 | 0.2084 | 0.1070 | 0.1786 |
| 48 | 8 | 0.2351 | 0.3103 | 0.1643 | 0.2479 | 0.1927 | 0.252 | 0.1429 | 0.2245 |
| 48 | 12 | 0.2059 | 0.2796 | 0.2414 | 0.3027 | 0.2197 | 0.2739 | 0.1856 | 0.2618 |
| 48 | 24 | 0.2514 | 0.3168 | 0.237 | 0.2946 | 0.2998 | 0.3199 | 0.2352 | 0.2768 |
| 96 | 4 | 0.1356 | 0.2109 | 0.1345 | 0.2081 | 0.1377 | 0.2052 | 0.0971 | 0.1722 |
| 96 | 8 | 0.1657 | 0.2409 | 0.1574 | 0.2387 | 0.1612 | 0.2286 | 0.1137 | 0.2113 |
| 96 | 12 | 0.2386 | 0.2927 | 0.2165 | 0.2828 | 0.2646 | 0.2881 | 0.1603 | 0.2404 |
| 96 | 24 | 0.276 | 0.3416 | 0.2709 | 0.3113 | 0.4467 | 0.4007 | 0.2069 | 0.2451 |
| 144 | 4 | 0.1472 | 0.2203 | 0.1427 | 0.2174 | 0.1385 | 0.2088 | 0.115 | 0.1823 |
| 144 | 8 | 0.2331 | 0.2985 | 0.158 | 0.2336 | 0.2523 | 0.1887 | 0.1246 | 0.2345 |
| 144 | 12 | 0.3148 | 0.3478 | 0.2233 | 0.2851 | 0.2465 | 0.1773 | 0.1687 | 0.2312 |
| 144 | 24 | 0.3475 | 0.3800 | 0.4703 | 0.3966 | 0.2359 | 0.2997 | 0.184 | 0.2211 |

| LBL | FHL | RNN MSE | RNN MAE | GRU MSE | GRU MAE | LSTM MSE | LSTM MAE | Transformer MSE | Transformer MAE |
|---|---|---|---|---|---|---|---|---|---|
| 48 | 4 | 0.1366 | 0.2249 | 0.1435 | 0.2264 | 0.1112 | 0.1995 | 0.1120 | 0.1762 |
| 48 | 8 | 0.1382 | 0.2265 | 0.1382 | 0.228 | 0.1157 | 0.2083 | 0.1184 | 0.1834 |
| 48 | 12 | 0.1454 | 0.2488 | 0.1369 | 0.2264 | 0.1104 | 0.2049 | 0.1212 | 0.1893 |
| 48 | 24 | 0.2217 | 0.3054 | 0.1717 | 0.2594 | 0.1517 | 0.2405 | 0.1300 | 0.2020 |
| 96 | 4 | 0.1335 | 0.2249 | 0.1446 | 0.2259 | 0.1030 | 0.1947 | 0.1171 | 0.1763 |
| 96 | 8 | 0.1474 | 0.2331 | 0.133 | 0.2199 | 0.1154 | 0.1980 | 0.1245 | 0.1923 |
| 96 | 12 | 0.1410 | 0.2417 | 0.1301 | 0.2161 | 0.1087 | 0.1975 | 0.1217 | 0.1838 |
| 96 | 24 | 0.2178 | 0.2913 | 0.2174 | 0.2901 | 0.1919 | 0.2707 | 0.1747 | 0.2216 |
| 144 | 4 | 0.1396 | 0.2281 | 0.1494 | 0.2304 | 0.1106 | 0.1978 | 0.0993 | 0.1624 |
| 144 | 8 | 0.147 | 0.2278 | 0.1399 | 0.2254 | 0.1237 | 0.2076 | 0.1153 | 0.1669 |
| 144 | 12 | 0.1472 | 0.244 | 0.1347 | 0.2256 | 0.1054 | 0.1923 | 0.1192 | 0.1823 |
| 144 | 24 | 0.2457 | 0.3220 | 0.2096 | 0.2812 | 0.1801 | 0.2550 | 0.1562 | 0.2112 |

| LBL | FHL | RNN MSE | RNN MAE | GRU MSE | GRU MAE | LSTM MSE | LSTM MAE | Transformer MSE | Transformer MAE |
|---|---|---|---|---|---|---|---|---|---|
| 48 | 4 | 0.0887 | 0.1560 | 0.0917 | 0.1624 | 0.0923 | 0.1621 | 0.094 | 0.1518 |
| 48 | 8 | 0.1140 | 0.2099 | 0.1066 | 0.1890 | 0.1054 | 0.1815 | 0.1128 | 0.1914 |
| 48 | 12 | 0.1248 | 0.2241 | 0.1204 | 0.2033 | 0.1238 | 0.2094 | 0.1247 | 0.1924 |
| 48 | 24 | 0.201 | 0.2811 | 0.1947 | 0.2671 | 0.2167 | 0.2788 | 0.1832 | 0.2531 |
| 96 | 4 | 0.0892 | 0.1616 | 0.0900 | 0.1543 | 0.0879 | 0.1577 | 0.0912 | 0.1423 |
| 96 | 8 | 0.1057 | 0.1963 | 0.1031 | 0.1765 | 0.1004 | 0.1755 | 0.0996 | 0.1724 |
| 96 | 12 | 0.1272 | 0.2197 | 0.1276 | 0.1989 | 0.1202 | 0.2056 | 0.1065 | 0.1818 |
| 96 | 24 | 0.1968 | 0.2737 | 0.2324 | 0.2865 | 0.2055 | 0.2723 | 0.1624 | 0.2395 |
| 144 | 4 | 0.0949 | 0.1628 | 0.0927 | 0.1577 | 0.0931 | 0.161 | 0.0832 | 0.1463 |
| 144 | 8 | 0.1087 | 0.1925 | 0.1096 | 0.1837 | 0.1049 | 0.1828 | 0.0999 | 0.1582 |
| 144 | 12 | 0.132 | 0.2281 | 0.1266 | 0.2027 | 0.1216 | 0.2037 | 0.1123 | 0.1812 |
| 144 | 24 | 0.2341 | 0.2973 | 0.2414 | 0.2927 | 0.221 | 0.2848 | 0.2120 | 0.2541 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Jeong, H. Predicting the Output of Solar Photovoltaic Panels in the Absence of Weather Data Using Only the Power Output of the Neighbouring Sites. Sensors 2023, 23, 3399. https://doi.org/10.3390/s23073399
