GRU-SVM Based Threat Detection in Cognitive Radio Network

Abstract

1. Introduction

2. Related Work

3. Key Terminologies

3.1. Cognitive Radio Network

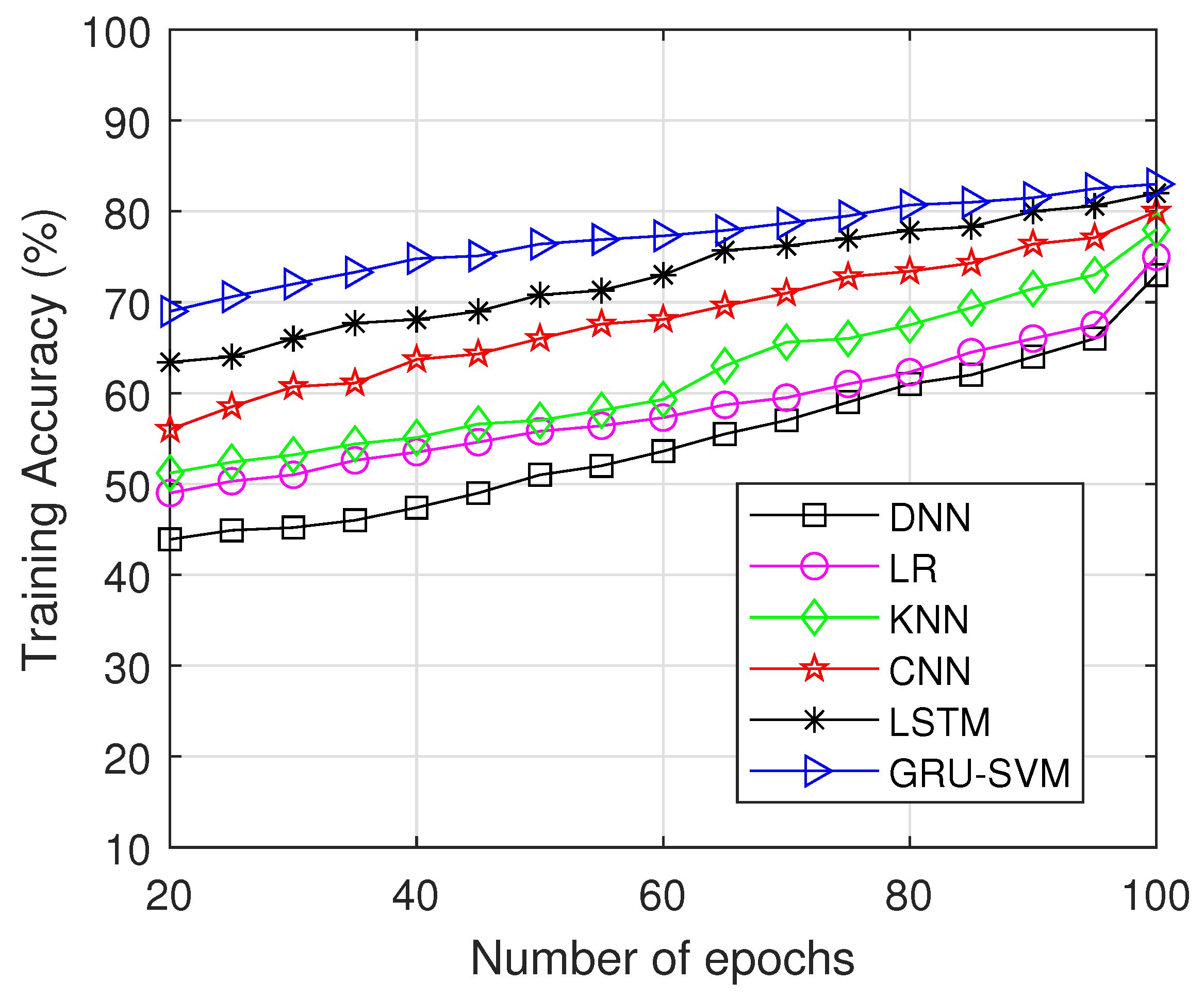

3.2. Spectrum Sensing

3.3. Energy Detection

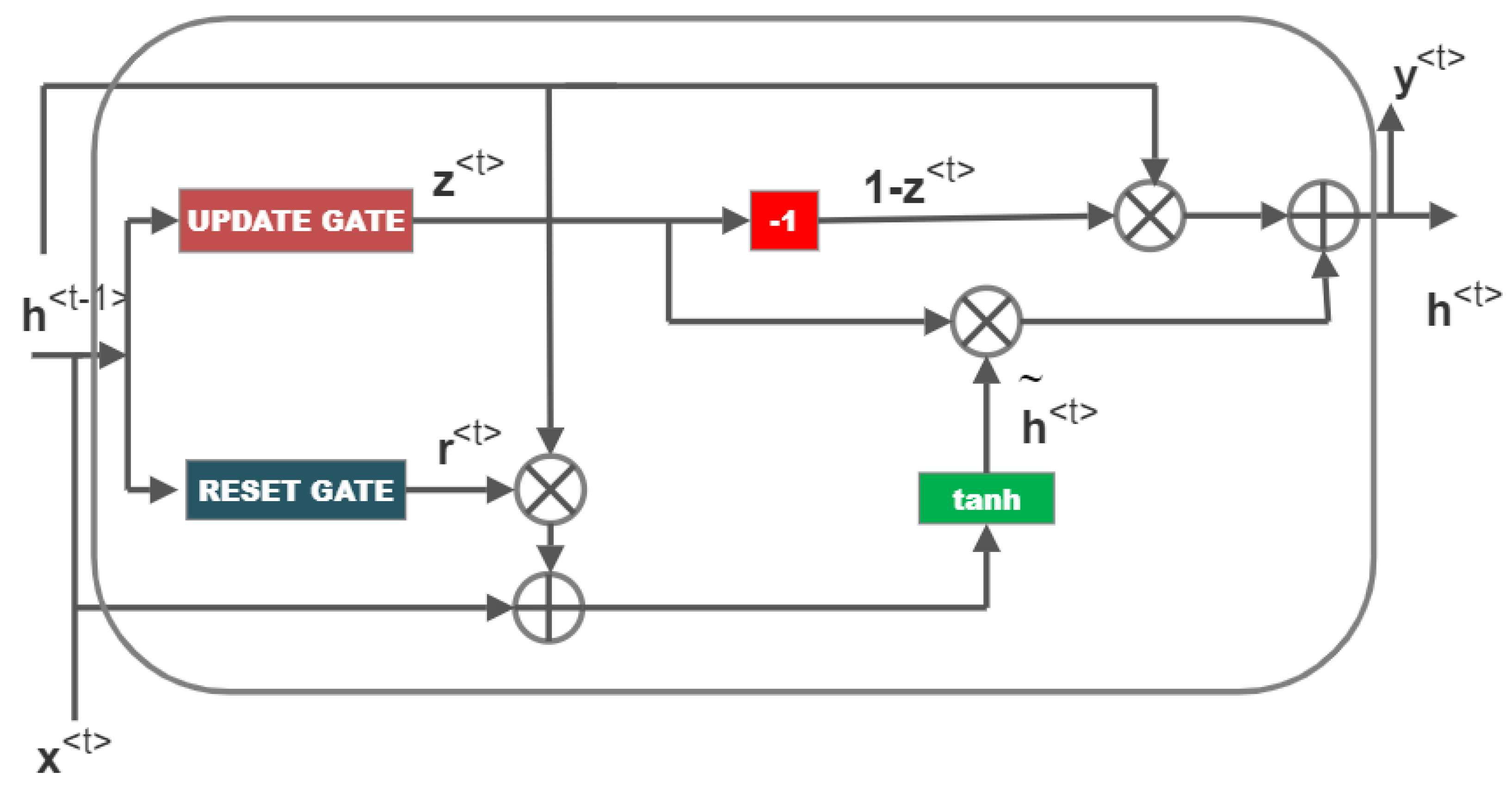

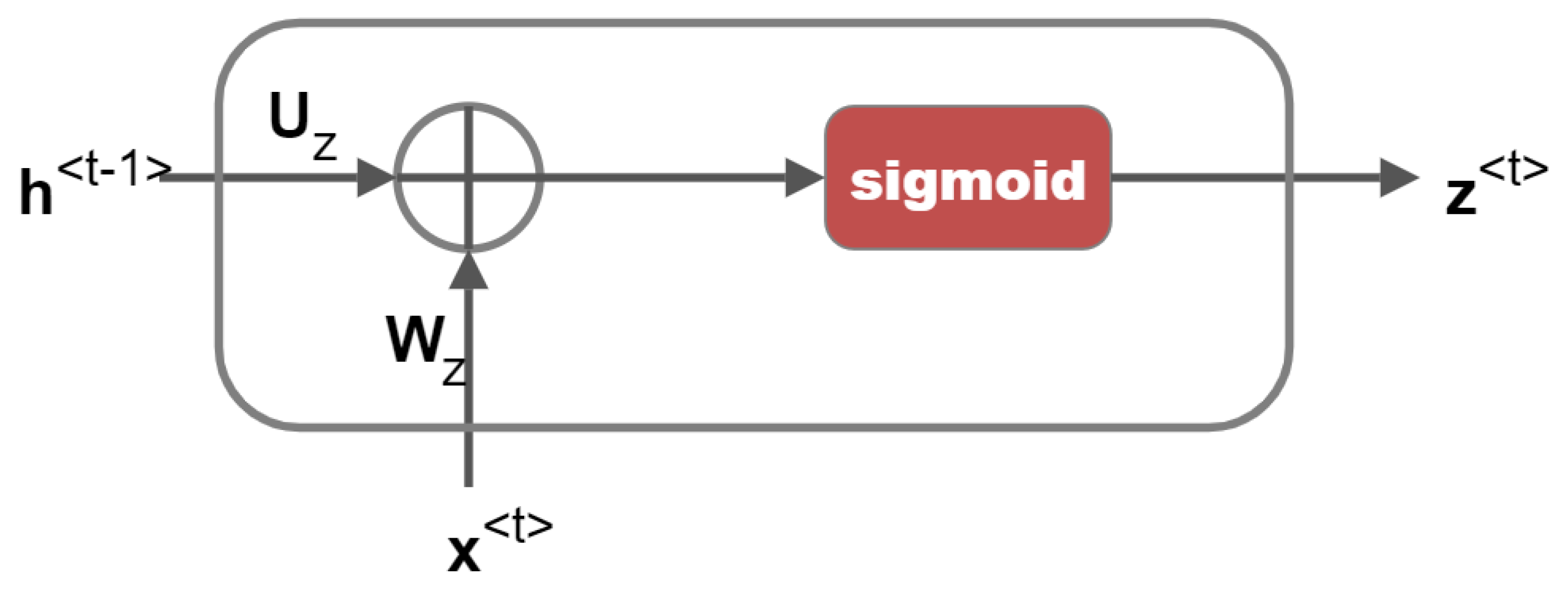

3.4. Gated Recurrent Unit

3.4.1. Update Gate

3.4.2. Reset Gate

3.5. Support Vector Machine

4. Proposed Work

4.1. System Model

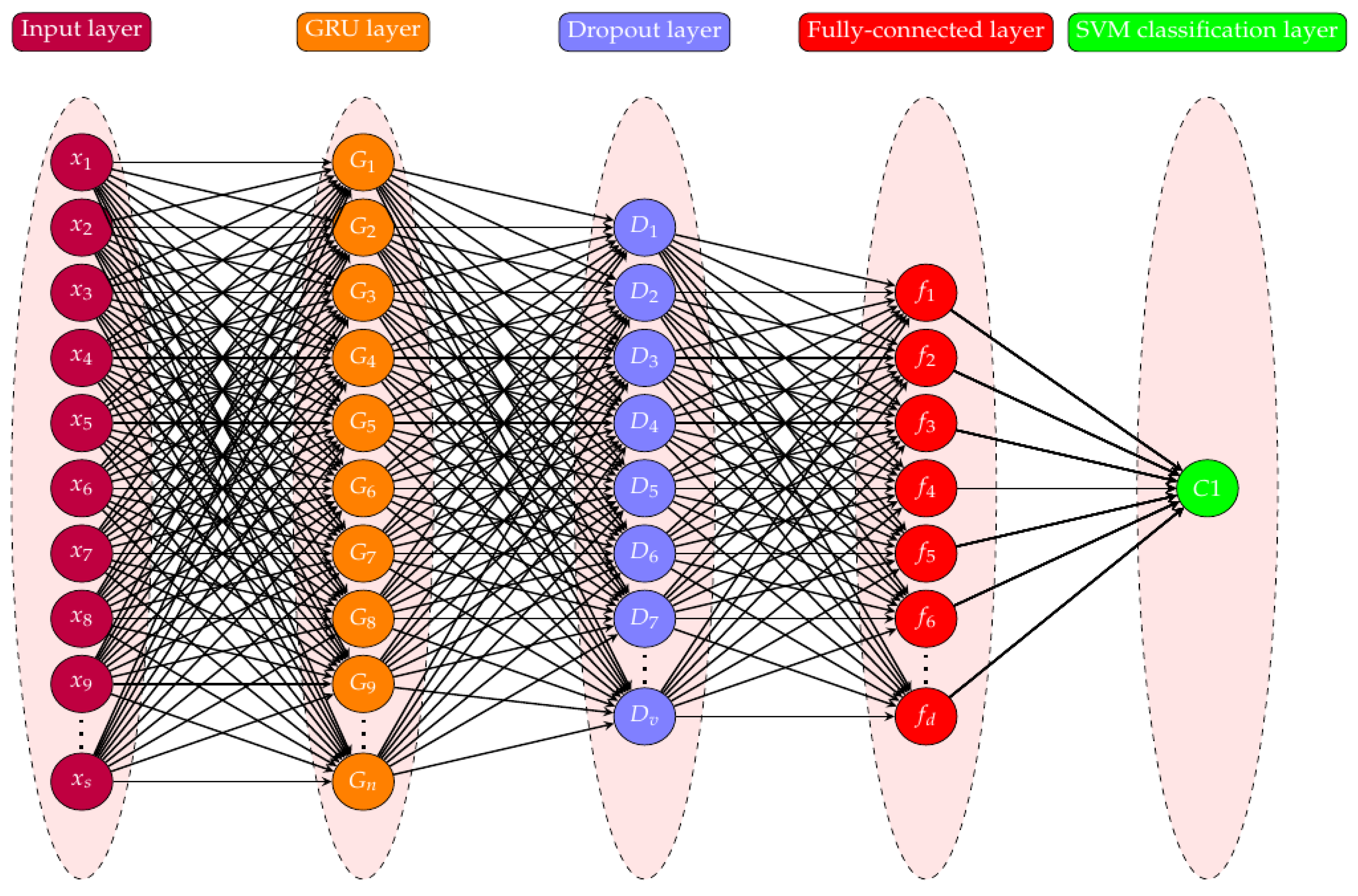

4.2. GRU-SVM Based Threat Detection

4.3. Dataset Preprocessing

5. Analysis of Computational Complexity

6. Results and Discussion

- Precision: Precision is the percentage of true positives among all predicted positives.

- Sensitivity (Recall): The model’s sensitivity indicates how well it identifies positive cases. It is the percentage of actual positive cases that are correctly predicted as positive.

- Specificity: The model’s specificity indicates how well it identifies negative cases. It is the percentage of actual negative cases that are correctly predicted as negative.

- F1-score: The F1-score is the harmonic mean of sensitivity and precision. It is suitable for imbalanced datasets because it accounts for both false positives and false negatives; true negatives do not enter the score.

- Accuracy: The model’s accuracy is the percentage of all cases, positive and negative, that are classified correctly.
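
The five metrics listed above can be expressed in terms of the confusion-matrix counts of a binary classifier. The sketch below is illustrative only: the names `ConfusionCounts` and `classification_metrics` and the example counts are assumptions made for this example and are not taken from the experiments reported here. It also assumes that every denominator is non-zero.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """Binary confusion-matrix counts (hypothetical container for this sketch)."""
    tp: int  # true positives
    fp: int  # false positives
    tn: int  # true negatives
    fn: int  # false negatives


def classification_metrics(c: ConfusionCounts) -> dict[str, float]:
    """Return the five metrics as fractions in [0, 1].

    Assumes all denominators are non-zero; a production version
    would need to guard against empty classes.
    """
    precision = c.tp / (c.tp + c.fp)
    recall = c.tp / (c.tp + c.fn)        # sensitivity
    specificity = c.tn / (c.tn + c.fp)
    f1 = 2 * precision * recall / (precision + recall)  # harmonic mean
    accuracy = (c.tp + c.tn) / (c.tp + c.fp + c.tn + c.fn)
    return {
        "precision": precision,
        "recall": recall,
        "specificity": specificity,
        "f1": f1,
        "accuracy": accuracy,
    }


# Hypothetical counts chosen only to illustrate the arithmetic.
counts = ConfusionCounts(tp=80, fp=10, tn=90, fn=20)
metrics = classification_metrics(counts)
for name, value in metrics.items():
    print(f"{name}: {100 * value:.2f}%")
```

Note that `tn` appears only in the specificity and accuracy expressions, which is why the F1-score is insensitive to the number of true negatives.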

7. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| GRU | Gated Recurrent Unit |
| LSTM | Long Short-Term Memory |
| RNN | Recurrent Neural Network |
| DNN | Deep Neural Network |
| LR | Logistic Regression |
| CNN | Convolutional Neural Network |
| SVM | Support Vector Machine |
| KNN | K-Nearest Neighbor |

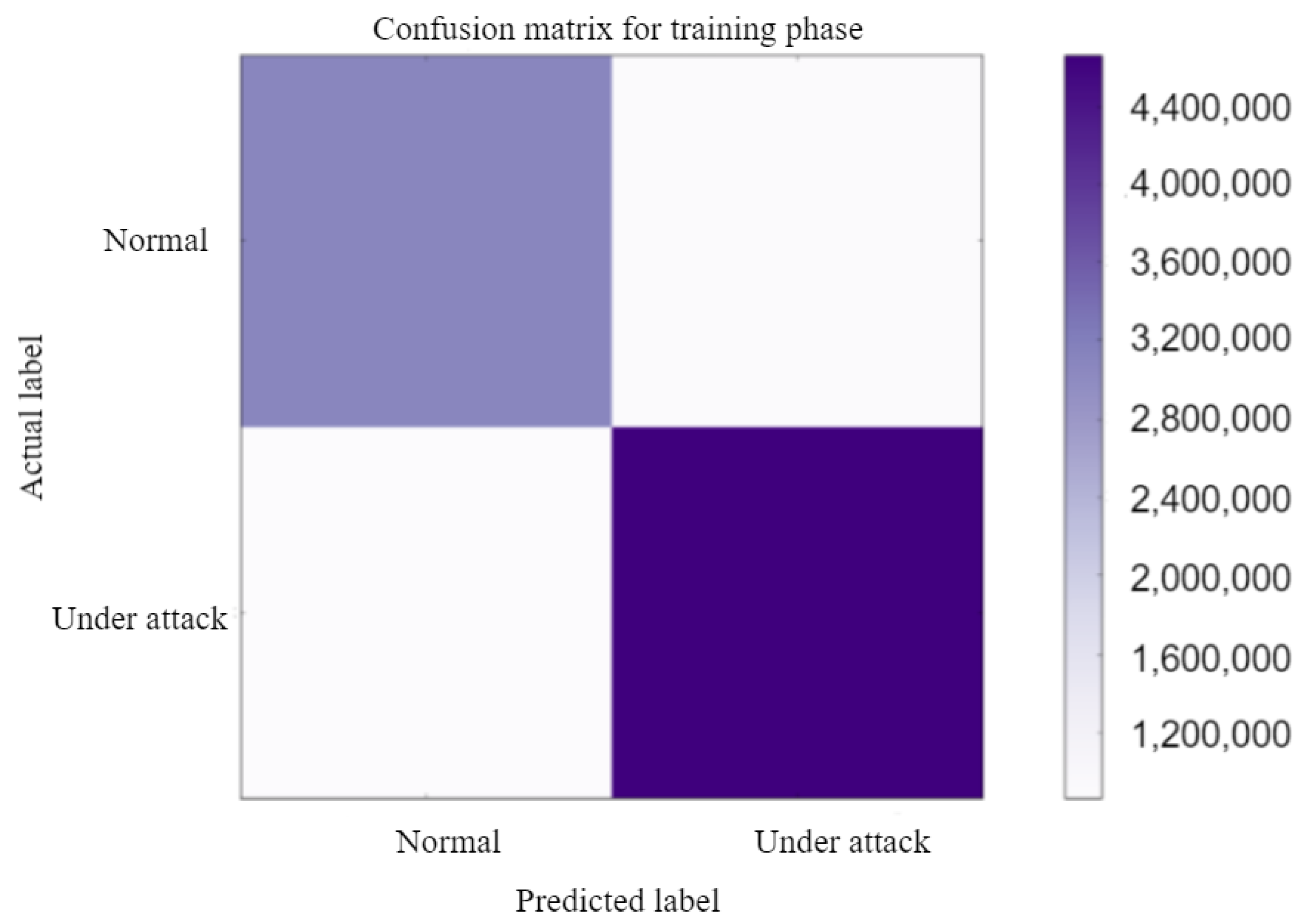

| Evaluation Metric | Training (%) | Testing (%) |
|---|---|---|
| Accuracy | 80.9929 | 82.4515 |
| Precision | 79.0306 | 89.3005 |
| Sensitivity (Recall) | 84.3726 | 78.5418 |
| F1 Score | 81.6142 | 83.5763 |
| Specificity | 77.6132 | 87.6022 |
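
As a consistency check on the table above, the F1 score can be recomputed from the reported precision and recall, since it is their harmonic mean. The short sketch below does this for both columns. The helper name `f1_from` and the `reported` dictionary are assumptions introduced for this example; the numbers themselves are copied from the table. Because the inputs are rounded to four decimal places, agreement is expected only to within a small tolerance.

```python
def f1_from(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (same units as the inputs)."""
    return 2 * precision * recall / (precision + recall)


# Values in percent, copied from the results table.
reported = {
    "Training": {"precision": 79.0306, "recall": 84.3726, "f1": 81.6142},
    "Testing": {"precision": 89.3005, "recall": 78.5418, "f1": 83.5763},
}

# Absolute difference between the reported and recomputed F1 per column.
differences = {}
for split, row in reported.items():
    recomputed = f1_from(row["precision"], row["recall"])
    differences[split] = abs(recomputed - row["f1"])
    print(f"{split}: reported {row['f1']:.4f}, recomputed {recomputed:.4f}")
```

In both columns the recomputed value matches the reported F1 score to within 0.001 percentage points, which is consistent with rounding of the inputs.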

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

I, E.E.; Clement, J.C. GRU-SVM Based Threat Detection in Cognitive Radio Network. Sensors 2023, 23, 1326. https://doi.org/10.3390/s23031326
