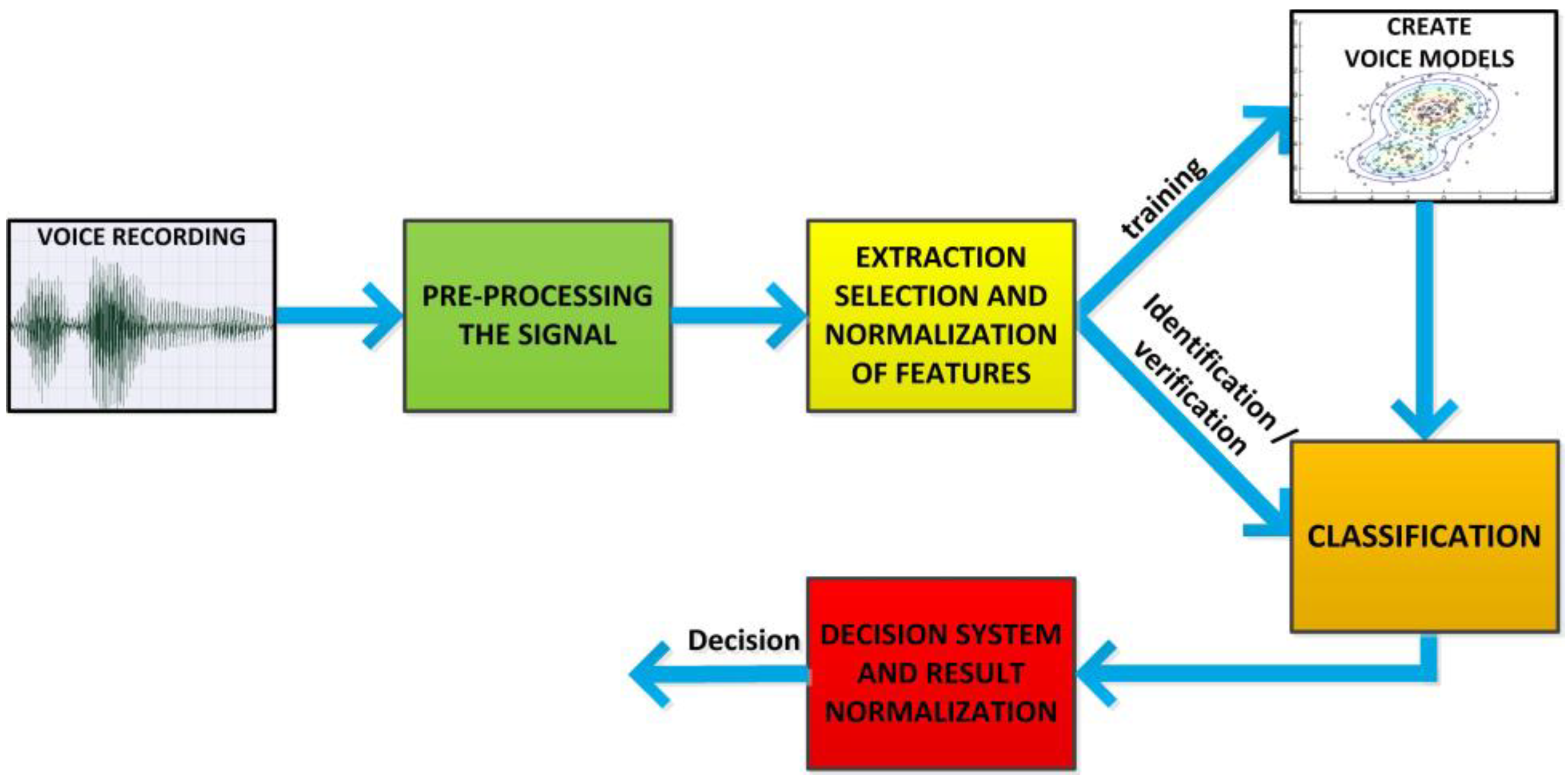

3.2. Signal Pre-Processing

The first element of signal pre-processing is its normalization, which involves two actions, namely, the removal of average value and scaling.

The average value is removed from the digital speech signal because the acquisition process is imperfect. In physical terms, a speech signal represents changes of acoustic pressure, which is why one can assume that its average value is zero. In a practical realization of digital speech processing, the value is almost always non-zero because finite-length fragments of speech are processed.

Scaling is the second operation applied to the signal. It compensates for a mismatch between the signal and the range of the converter, and it amplifies those speech fragments that were recorded too quietly. In a system dedicated to speaker identification/verification that does not depend on the content of the utterance, it is not necessary to preserve the energy relations among the particular fragments of an audio recording. This is why the authors scale against the maximal signal value, which avoids overdriving while making full use of the available numeric range.

During audio recording analysis, it is important to eliminate silence, which is a natural element of almost every utterance. This operation decreases the number of signal frames to be analyzed, which improves both the speed of voice recognition and the effectiveness of identification/verification, as only those frames that are relevant to speaker recognition are analyzed. In the system presented, silence is eliminated by determining the signal energy of the i-th frame of length N, which is then normalized against the frame with the greatest energy Emax in a given speaker’s utterance, according to the following equation:

Then, the normalized signal energy Ei of the frame is compared with the experimentally designated threshold pr (Equation (2)), which additionally depends on the average value Ē of the energies of the particular frames; this enables the appropriate elimination of silence in diverse acoustic environments:

Parameters related to frame length, shift, and threshold value were optimized with the use of a genetic algorithm [17].
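The normalization and energy-based silence elimination described above can be sketched as follows. This is a minimal illustration, not the authors' implementation: the function name `drop_silent_frames` and its parameters are hypothetical, and the threshold test (normalized energy greater than pr times the mean normalized energy) is only an assumed form of Equation (2).

```python
import numpy as np

def drop_silent_frames(signal, frame_len, shift, pr):
    """Return the frames judged to contain speech and the boolean keep-mask."""
    signal = np.asarray(signal, dtype=float)
    signal = signal - signal.mean()               # remove the average value
    peak = np.max(np.abs(signal))
    if peak > 0:
        signal = signal / peak                    # scale against the maximal value
    starts = range(0, len(signal) - frame_len + 1, shift)
    frames = np.array([signal[s:s + frame_len] for s in starts])
    energy = np.sum(frames ** 2, axis=1)          # energy of each N-sample frame
    e_norm = energy / energy.max()                # normalize against E_max
    # Assumed form of the threshold test: pr scaled by the mean frame energy.
    keep = e_norm > pr * e_norm.mean()
    return frames[keep], keep
```

The sketch assumes the recording is at least one frame long and not entirely silent; a production version would guard both cases.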

Another element of signal pre-processing is the attenuation of the lowest-frequency sound components (not heard by humans). For this purpose, a high-pass filter was used. The filter parameters were matched in the optimization process [17].

The subsequent stage of signal pre-processing is signal segmentation, which divides the signal into short (quasi-stationary) fragments called frames. This makes it possible to analyze each signal frame, from which separate vectors of distinctive features will be created at a further stage of the system operation. During signal segmentation, the frame length as well as the frame shift were subject to optimization.

The segmentation process is related to windowing, i.e., multiplication of the signal by a time window w(n), the width of which determines the frame length N. It seems natural to apply a rectangular window in the time domain, as it does not distort the time signal. However, due to its redundancy, the time domain is not directly used for voice signal analysis; frequency analysis is used for this purpose. Multiplication in the time domain corresponds to convolution in the frequency domain. The spectrum of the rectangular window has a high level of sidelobes, which creates strong artifacts in the analyzed signal, a phenomenon known as spectral leakage. In order to minimize this adverse phenomenon, the Hamming window has been applied in the ASR System, which features a broader main lobe and lower levels of sidelobes:

In the speech recognition system, in which the semantic context of an utterance is the key element, it is justified to use windows of variable lengths, whereas in the case of speaker recognition systems which do not depend on speech content, there is no need for phonetic features-based segmentation, and this is why uniform segmentation is used.
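Uniform segmentation with Hamming windowing can be illustrated with the short sketch below. It assumes the commonly used window coefficients 0.54 and 0.46; the exact coefficients used in the ASR System may differ, and the function names are hypothetical.

```python
import numpy as np

def hamming(N):
    """Common Hamming window: w(n) = 0.54 - 0.46 cos(2*pi*n / (N - 1))."""
    n = np.arange(N)
    return 0.54 - 0.46 * np.cos(2 * np.pi * n / (N - 1))

def windowed_frames(signal, frame_len, shift):
    """Cut the signal into uniformly spaced frames and apply the window to each."""
    signal = np.asarray(signal, dtype=float)
    starts = range(0, len(signal) - frame_len + 1, shift)
    w = hamming(frame_len)
    return np.array([signal[s:s + frame_len] * w for s in starts])
```

With a frame length of 4 and a shift of 2, a 10-sample signal yields four overlapping frames.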

The last step in the process of signal pre-processing involves the selection of its frames, which is important from the point of view of the ASR System. The above-discussed function of silence elimination is the first ‘rough’ stage of eliminating longer silent-speech fragments. The presented solution features three additional mechanisms of frame selection, which take place following the signal segmentation.

The first mechanism results from the desire to analyze laryngeal tone, which occurs only in voiced speech, which is why only ‘voiced frames’ are selected for further analysis. In voiced fragments, the maxima in the frequency domain occur regularly (every period of the laryngeal tone), which cannot be said about voiceless speech fragments, which are similar to noise signals. The classification of voiced and voiceless speech fragments has been carried out with the help of an autocorrelation function [16]. The autocorrelation of signal frames is calculated according to the following equation:

where s(n) is a speech signal frame with a length of N samples. The occurrence of periodic maxima in the autocorrelation function makes it possible to determine whether the analyzed signal frame is voiced. The autocorrelation function achieves its highest value for a zero shift; however, this peak is related to signal energy, which is why, when looking for voiced frames, one has to examine the second maximum rmax of the autocorrelation function, which must be compared with the empirically calculated voicing threshold:

The voicing threshold is another parameter that underwent optimization in the process of the development of the ASR System. The calculated autocorrelation function also serves to determine the speaker’s base frequency F0, which is a vital descriptor of the speech signal.
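The voiced/voiceless decision and the F0 estimate can be sketched as follows. This is only an illustration under stated assumptions: the autocorrelation is normalized by its zero-lag value, the second maximum is searched within a hypothetical pitch range of 60 to 400 Hz, and the names `autocorr` and `voicing_and_f0` are not taken from the original system.

```python
import numpy as np

def autocorr(frame):
    """r(k) = sum_n s(n) s(n + k) for k = 0 .. N-1."""
    frame = np.asarray(frame, dtype=float)
    full = np.correlate(frame, frame, mode="full")
    return full[len(frame) - 1:]                  # keep non-negative lags only

def voicing_and_f0(frame, fs, threshold, f0_min=60.0, f0_max=400.0):
    """Return (is_voiced, F0 in Hz or None) for one frame."""
    r = autocorr(frame)
    if r[0] <= 0:
        return False, None                        # empty or all-zero frame
    r = r / r[0]                                  # zero-lag peak carries the energy
    lo = int(fs / f0_max)                         # shortest admissible pitch period
    hi = min(int(fs / f0_min), len(r) - 1)        # longest admissible pitch period
    lag = lo + int(np.argmax(r[lo:hi + 1]))       # position of the second maximum
    voiced = bool(r[lag] > threshold)
    return voiced, (fs / lag if voiced else None)
```

For a 200 Hz sinusoid sampled at 8 kHz, the second maximum falls at a lag of 40 samples, which gives F0 = 200 Hz; white noise shows no comparable peak and is classified as voiceless.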

The re-detection of the speaker’s activity is another criterion applied when selecting representative signal frames, but this time, it involves the elimination of shorter silence fragments, using the frame lengths and shifts that are used for the further extraction of distinctive features. The threshold value of this criterion was also subject to optimization. Moreover, all of the system parameters mentioned so far were subject to multi-criteria optimization that took their interrelations into account [17]. The signal frame is rejected if the following inequality is not fulfilled:

where Pr is the power of the currently examined frame; Ps is a statistical value of the average power of the frame; and pp is an empirically calculated threshold.

The last stage of frame selection takes place based on their noise level. This is possible thanks to a comparison of the value of the base frequency calculated by means of two independent methods: the autocorrelation method (F0ac) and the cepstral method (F0c). Those two methods of calculating F0 have different resistances to signal noise. Making correct use of those characteristics enables us to identify the signal frames that do not fulfil a defined quality criterion (Equation (7)) [18]. According to the literature [18], a calculation of the base frequency by means of the autocorrelation method is more exact than a calculation by means of the cepstral method, but the former is less resistant to signal noise. Thus, the smaller the difference between the base frequencies determined by means of the two methods, the less noise in the signal frame concerned:

Here, pf represents the optimized threshold value.
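The comparison of the two F0 estimates can be illustrated as below. This is a hedged sketch: the criterion is assumed to have the form |F0ac − F0c| < pf, the pitch search range of 60 to 400 Hz is hypothetical, and the function names are introduced only for this example.

```python
import numpy as np

def f0_autocorr(frame, fs, f0_min=60.0, f0_max=400.0):
    """F0 from the highest autocorrelation peak inside the pitch range."""
    r = np.correlate(frame, frame, mode="full")[len(frame) - 1:]
    lo, hi = int(fs / f0_max), int(fs / f0_min)
    return fs / (lo + int(np.argmax(r[lo:hi + 1])))

def f0_cepstrum(frame, fs, f0_min=60.0, f0_max=400.0):
    """F0 from the highest real-cepstrum peak inside the pitch range."""
    spectrum = np.abs(np.fft.fft(frame * np.hamming(len(frame))))
    cep = np.fft.ifft(np.log(spectrum + 1e-12)).real
    lo, hi = int(fs / f0_max), int(fs / f0_min)
    return fs / (lo + int(np.argmax(cep[lo:hi + 1])))

def frame_is_clean(frame, fs, pf):
    """Assumed quality criterion: the two F0 estimates differ by less than pf."""
    return abs(f0_autocorr(frame, fs) - f0_cepstrum(frame, fs)) < pf
```

The frame must be long enough to contain the longest admissible pitch period, i.e., at least fs / f0_min samples.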

3.3. Generation of Distinctive Features

The generation of distinctive features, i.e., numerical descriptors representing the voices of particular speakers, is the key module of the biometric system. This stage is particularly important because any errors and shortcomings occurring at that point lower the capability for discriminating the parametrized voices of speakers, which cannot be made up for in the later stages of voice processing within the ASR System. The main goal of this stage is to transform the input waveform into the smallest possible number of descriptors that carry the most important information characterizing a given speaker’s voice, while minimizing their sensitivity to signal changes that are irrelevant from the point of view of ASR, in other words, minimizing the dependence on the semantic content and on the parameters of the acoustic tract used during the acquisition of voice recordings.

Parametrization in the time domain is not effective in the case of speech signals, because despite its completeness, the signal, when considered in the time domain, features a very high redundancy. Further analysis in the frequency domain is far more effective from the point of view of this system. One of the reasons for such an approach is that it is inspired by how the human sense of hearing operates, which developed in the course of evolution in order to correctly interpret the amplitude and frequency envelope of a speech signal by isolating the components featuring particular frequencies using the specialized structures of the inner ear [19].

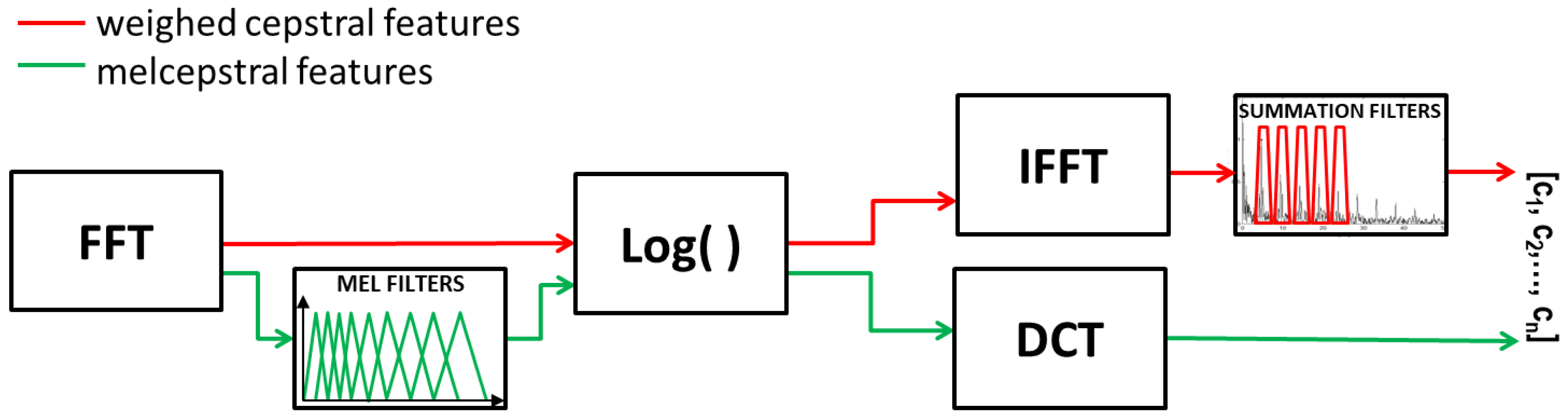

The frequency form of a speech signal is only a starting point for further parametrization. In the ASR System concerned, two types of descriptors were applied, which require further mathematical transformations of the amplitude spectrum, namely, weighted cepstral features and melcepstral features. The generation process of these features is presented in Figure 3.

The particular mathematical transformations necessary to extract the considered distinctive features of the vocal signal are described below.

Following acquisition and initial processing, a speech signal assumes the form of a digital signal s(n), for n = 0, 1, …, N − 1, where N is the length of the signal frame considered. In order to transform the signal into the frequency domain, it is windowed with the window function w(n) (in the case of the ASR System concerned, the Hamming window) and subjected to the Discrete Fourier Transform (DFT):

where the complex exponential is the kernel of the Fourier Transform. The input vector s(n) of N elements in the time domain is transformed into an output vector S(k), k = 0, 1, …, N − 1, which is its representation in the frequency domain. In practical realizations, the number of samples is chosen to be a power of 2, which allows the Fast Fourier Transform (FFT) algorithm to be used.

A frequency analysis of the speech signal is more effective than a time domain analysis, and a direct analysis of the frequency spectrum enables the easy discrimination of semantic contents; however, extracting the ontogenetic character based on the spectrum envelope is still a difficult task. This is why, in further analysis, the signal will be subject to transformation to so-called cepstral time.

The cepstrum makes use of a logarithm operation, which, in the case of complex numbers, is complicated by the need to ensure phase continuity. Since the phase of sound is used by the sense of hearing only to locate the source of the voice and does not carry any principal ontogenetic information, the so-called real cepstrum is used in speaker recognition; it operates on the modulus of the spectrum and, for discrete signals, reduces to the following form:

and finally,

Due to the cyclicality of the Fourier transform kernel and the properties of the amplitude spectrum, the above logarithm of the amplitude spectrum modulus C(k) is cyclical and is described by the equation:

This is an even function, i.e., symmetric about the Y-axis, which is why its expansion contains exclusively cosine (even) functions, and it does not matter whether a forward or an inverse Fourier Transform is used in the reverse operation. Due to this fact, a real cepstrum can clearly be interpreted as the spectrum of the logarithmic amplitude spectrum [20]. The domain of such a transformation is called pseudo-time, and the X-axis is expressed in seconds.
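The real cepstrum of a single frame can be computed as in the sketch below. It is a minimal illustration that assumes a Hamming window and adds a small constant (1e-12) to avoid taking the logarithm of zero; the function name is hypothetical.

```python
import numpy as np

def real_cepstrum(frame):
    """Real cepstrum: inverse DFT of the log magnitude spectrum of a frame."""
    frame = np.asarray(frame, dtype=float)
    spectrum = np.fft.fft(frame * np.hamming(len(frame)))
    log_mag = np.log(np.abs(spectrum) + 1e-12)    # log of the spectrum modulus
    return np.fft.ifft(log_mag).real
```

Because the log magnitude spectrum of a real frame is real and even, the forward transform gives the same result up to the 1/N scaling, and the resulting cepstrum is itself even.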

When analyzing a speech signal spectrum, one can observe a fast-changing factor resulting from the excitation, and a slowly changing factor that modulates the amplitudes of the particular impulses created by the excitation. Taking the logarithm of the amplitude spectrum changes the relationship of the two components from multiplicative to additive. As a result of calculating the spectrum of such a signal (forward or inverse Fourier Transform), the slowly varying waveforms related to the vocal tract, which carry the semantic content, are located near zero on the pseudo-time axis, while impulses related to the laryngeal sound occur near its period and repeat every period. An unequivocal determination of the starting point of the relevant information related to the laryngeal sound is impossible, because in reality the sound is not a single tone. This element was subject to optimization [17]; filtration in the domain of cepstral time is called liftering.
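Liftering that removes the low pseudo-time region can be sketched as follows. The `cutoff` parameter is a hypothetical stand-in for the optimized starting point mentioned above, and the sketch assumes a full-length (two-sided) cepstrum whose upper half mirrors the lower half.

```python
import numpy as np

def high_time_lifter(cepstrum, cutoff):
    """Zero the cepstral samples below `cutoff` and their mirrored counterparts."""
    c = np.array(cepstrum, dtype=float)
    c[:cutoff] = 0.0                  # region dominated by the vocal-tract envelope
    if cutoff > 1:
        c[-(cutoff - 1):] = 0.0       # mirrored (negative pseudo-time) samples
    return c
```

For a 10-sample cepstrum and a cutoff of 3, samples 0 to 2 and their mirror images 8 and 9 are set to zero.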

Cepstral analysis greatly facilitates the classification of speakers based on the obtained laryngeal sound peaks; however, in order to obtain the first type of distinctive features, called weighted cepstral features, the authors of this article additionally applied summation filters in sub-bands [21]. This algorithm does not detect maxima within the cepstrum at the places predicted from the base frequency; instead, it sums all the points from each such area with a certain weight. Sums around particular peaks, starting from the second maximum, are normalized to the sum of the samples surrounding the first maximum, which corresponds to the base frequency. An idea for an algorithm applying a trapezoidal weighting function is presented in Figure 4. The type of filter applied and the scope of summing were subject to optimization [17].

Another parametrization method used by the authors, which is the most popular one in the case of speech signals, is the MFCC method [22]. This method involves the sub-band filtration of the speech signal with the support of band-pass filters distributed evenly on a mel-frequency scale.

The mel scale is inspired by natural mechanisms occurring in the human hearing organ, and reflects its nonlinear frequency resolution. Although it is able to discriminate sounds within the range from 20 Hz to 16 kHz, the hearing organ is the most sensitive to frequencies from 1 to 5 kHz. Thanks to the application of nonlinear frequency processing during speech signal analysis, it is possible to increase effective differentiation among particular frequencies. To a rough approximation, the mel scale maps low frequencies linearly and high frequencies logarithmically. Moreover, the scale allows for significant data reduction. Converting the amplitude spectrum to the mel scale is performed according to the following equation [23]:
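A widely used form of the Hz-to-mel conversion is shown below as an illustration. It is an assumption that the ASR System uses exactly these constants (2595 and 700); the equation from [23] may differ in detail.

```python
import math

def hz_to_mel(f_hz):
    """Common mel mapping: mel = 2595 * log10(1 + f / 700)."""
    return 2595.0 * math.log10(1.0 + f_hz / 700.0)

def mel_to_hz(mel):
    """Inverse of hz_to_mel."""
    return 700.0 * (10.0 ** (mel / 2595.0) - 1.0)
```

With these constants, 1000 Hz maps to approximately 1000 mel, and the mapping is close to linear well below that point.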

Frequency conversion takes place with the use of a bank of filters distributed in accordance with the mel scale described, using the above-mentioned equation. Particular filters possess triangular frequency characteristics. According to the literature, the filter shape has no significant importance if the filters overlap [24], which occurs in this case.

In order to conduct a linear mapping of the signal spectrum with the application of M filters, one has to carry out the following mapping:

where Fi(k) is the filter function in the i-th sub-band. This operation maps an N-point linear DFT spectrum to the M-point filtered spectrum in the mel scale. From 20 to 30 filters are typically used; numerous studies on speaker recognition systems have shown that using too few filters has a negative impact on speaker recognition effectiveness.

Another stage during MFCC generation is to convert the spectrum amplitude to a logarithm scale, which facilitates the deconvolution of the voice-tract impulse response from laryngeal stimulation:

The last mapping of the features considered involves subjecting them to Discrete Cosine Transform (DCT), for the purposes of decorrelation, according to the following equation:

where M is the number of mel filters; J is the number of MFCC coefficients; and MFCCj is the j-th melcepstral coefficient.
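The three MFCC steps (mel filtering, logarithm, DCT) can be sketched for a single frame as follows. This is an illustrative sketch under several assumptions: the common 2595/700 mel mapping, triangular filters whose edges are equally spaced in mel, a DCT-II cosine basis without normalization, and hypothetical defaults M = 24 and J = 12.

```python
import numpy as np

def hz_to_mel(f):
    return 2595.0 * np.log10(1.0 + f / 700.0)

def mel_to_hz(m):
    return 700.0 * (10.0 ** (m / 2595.0) - 1.0)

def mel_filterbank(M, n_fft, fs):
    """M overlapping triangular filters, evenly spaced on the mel scale."""
    edges = mel_to_hz(np.linspace(hz_to_mel(0.0), hz_to_mel(fs / 2), M + 2))
    freqs = np.arange(n_fft // 2 + 1) * fs / n_fft
    bank = np.zeros((M, len(freqs)))
    for i in range(M):
        lo, mid, hi = edges[i], edges[i + 1], edges[i + 2]
        rising = (freqs - lo) / (mid - lo)
        falling = (hi - freqs) / (hi - mid)
        bank[i] = np.clip(np.minimum(rising, falling), 0.0, None)
    return bank

def mfcc(frame, fs, M=24, J=12):
    """Return J melcepstral coefficients (j = 1 .. J) of one frame."""
    N = len(frame)
    mag = np.abs(np.fft.rfft(frame * np.hamming(N)))      # amplitude spectrum
    mel_spec = mel_filterbank(M, N, fs) @ mag             # M-point mel spectrum
    log_spec = np.log(mel_spec + 1e-12)                   # logarithmic scale
    i = np.arange(M)
    j = np.arange(1, J + 1)[:, None]
    dct_basis = np.cos(np.pi * j * (i + 0.5) / M)         # DCT-II cosine basis
    return dct_basis @ log_spec
```

The zeroth coefficient is omitted here; which of the initial coefficients are actually discarded is a design choice of the system and is not fixed by this sketch.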

The initial melcepstral coefficients, which are closely related to the content of the utterance, are rejected in further considerations, analogously to zeroing the portion of the signal located near zero on the cepstral time scale.

According to researchers in the field, the best realization of MFCC parametrization for speaker recognition is the form proposed by Young [25], which gives a higher speaker recognition efficiency than the original Davis and Mermelstein method [22]; this is why it has been used for the purposes of our System. According to that idea, the definition of particular band ranges starts from calculating a range of frequencies ∆f according to the following equation:

where fg is the upper frequency of the voice signal; fd is the lower frequency of the voice signal; and M is the number of filters.

That range is a fixed step that defines the filters’ middle frequencies, which are defined as follows:

The values of the above-mentioned frequencies are given in mels, which enable the even distribution of the filters on a perceptual scale, while preserving a nonlinear distribution within the frequency scale. The filter bands overlap so that each subsequent filter starts at the middle frequency of the previous filter.
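The placement of the filters' middle frequencies can be illustrated as below. The sketch assumes the HTK-style convention in which the step is the mel-scale bandwidth divided by M + 1, and it reuses the common 2595/700 mel mapping; both are assumptions rather than the confirmed form of the equations used in the System.

```python
import math

def hz_to_mel(f_hz):
    return 2595.0 * math.log10(1.0 + f_hz / 700.0)

def filter_centers_mel(f_d, f_g, M):
    """Return the M middle frequencies (in mels) and the fixed step between them."""
    mel_d, mel_g = hz_to_mel(f_d), hz_to_mel(f_g)
    delta = (mel_g - mel_d) / (M + 1)             # assumed form of the step
    centers = [mel_d + i * delta for i in range(1, M + 1)]
    return centers, delta
```

Under this convention, filter i spans from center i − 1 to center i + 1, so each filter starts at the middle frequency of the previous one, and the last filter ends at the upper frequency fg.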

3.4. Selection of Distinctive Features

At the feature generation stage, a maximally large set of distinctive features was extracted, which may be used for the purposes of the ASR System. According to published research, the use of a maximally large set of features does not always ensure that the best results are obtained [26,27,28]. Feature selection often yields the same or greater classification accuracy for a reduced feature vector, which in turn translates into a significantly shorter calculation time and a simpler classifier. Some features that are subject to evaluation may take the form of measurement noise, which adversely affects the results of speaker recognition. There are also features that are strongly correlated with each other, which makes them dominate over the remaining part of the set and adversely affects the quality of classification.

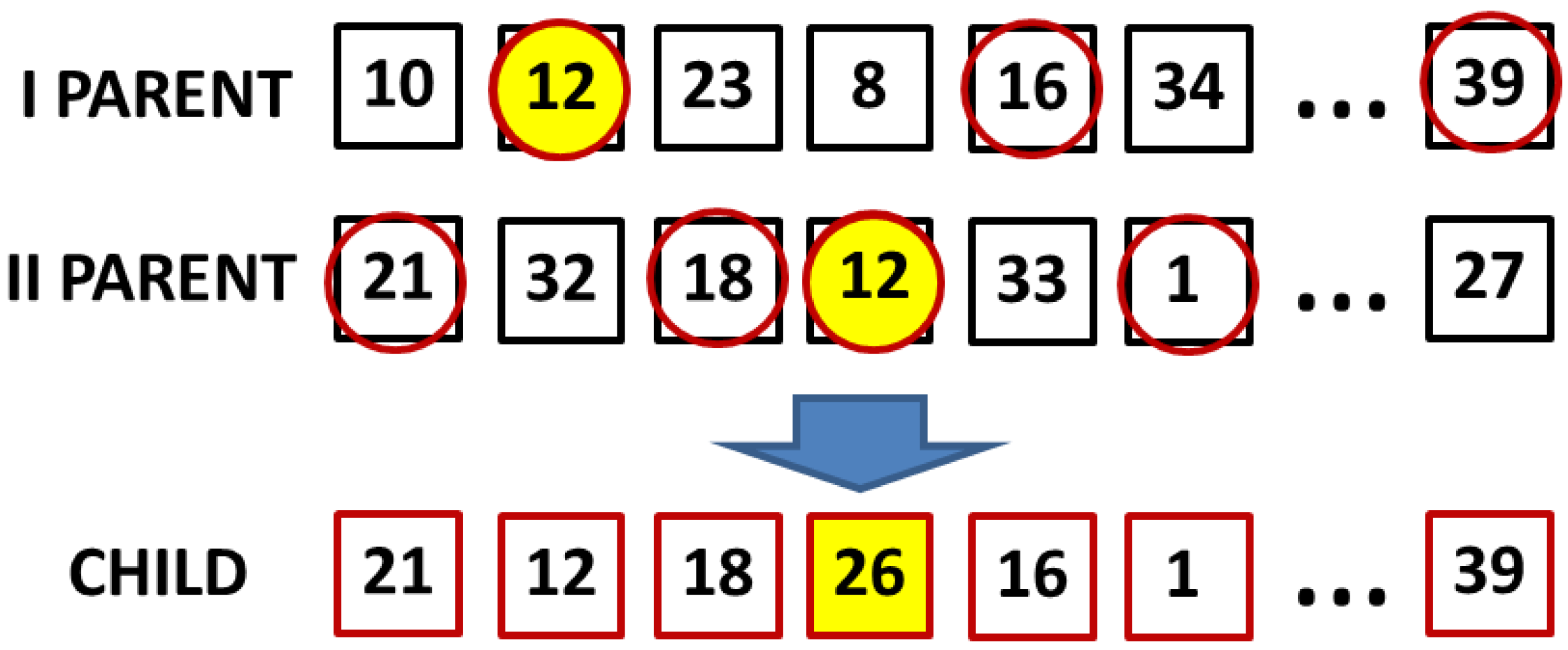

The method of feature selection attempted by the authors uses a genetic algorithm (GA) [27]. This method enables an optimum set of features to be obtained, with account being taken of their synergy; however, this requires long-term calculations. In the initiation phase of the algorithm operation, the Shannon entropy of particular variables is calculated. In such a case, the variables are understood as being particular distinctive features and vectors of class membership. Entropy should be understood as a measure of disorder, which, for the case of one variable (18) constitutes a measure of its redundancy. If calculating the joint entropy (19), one takes account of the joint redundancy of both variables, and for totally independent variables, it assumes the value of the sum of entropies of particular variables:

The entropy values of particular variables and their joint entropies enable a level of mutual information to be defined according to the following equation [29]:

Mutual information allows for the use of a variable X (one of the distinctive features) for forecasting the value of a variable Y (another distinctive feature or one of the class membership vectors considered). In other words, it enables a definition of how much the knowledge of one variable may lower the uncertainty of another variable. The first two terms of Equation (20) attest to the “stability” of the features considered; the lower the redundancy of the features, the more certain the joint information and the higher its value. By subtracting the joint entropy of variables considered from the first two terms, one can easily obtain information about the level of the variables’ correlation. For totally dependent variables, the joint information equals 1, and for independent variables, it assumes the value of 0.
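The relation between the entropies and the mutual information can be illustrated with a short sketch for discrete variables. It assumes that continuous features have already been quantized into bins and that entropy is measured in bits; the function names are hypothetical.

```python
import math
from collections import Counter

def entropy(values):
    """Shannon entropy (in bits) of a sequence of discrete symbols."""
    n = len(values)
    return -sum((c / n) * math.log2(c / n) for c in Counter(values).values())

def joint_entropy(x, y):
    """Entropy of the paired variable (x, y)."""
    return entropy(list(zip(x, y)))

def mutual_information(x, y):
    """I(X; Y) = H(X) + H(Y) - H(X, Y)."""
    return entropy(x) + entropy(y) - joint_entropy(x, y)
```

For two identical balanced binary variables this gives 1 bit, and for two independent balanced binary variables it gives 0, since their joint entropy equals the sum of the individual entropies.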

As a result, a vector of mutual information I(yhi; y) is created, which occurs among distinctive features yhi subject to selection and the class membership vector y, as well as a matrix of joint information among the features I(yhi; yhj). Due to the time-consuming nature of calculating the mutual information among the variables considered, in the case of the genetic algorithm presented, one calculates the initial data, which initiate the further operation of the algorithm. These data consist of mutual information calculated in a full set of features subject to selection. The initial data obtained are then used for each evaluation of the fitting of particular individuals (sets of individual features) within a population.

An initial population is created pseudo-randomly by generating chromosomes (vectors including the feature numbers drawn). Then, the chromosomes are assessed based on the fitting function (23), which includes the averaged mutual information between the features and the class membership vector (21), and between the mutually distinctive features (22) [29]:

The authors discontinue further calculations when the difference between the maximum value of the fitting function and its average value within a given population does not exceed a set threshold. Calculations are also discontinued if a certain maximum number of generations has been reached.

If the requirements for the discontinuation of calculations have not been satisfied, a further step of the algorithm involves the selection of the best fitted individuals. Prior to selection, the chromosomes are sorted according to the fitting functions, starting from the best fitted individuals. The selection is performed pseudo-randomly; however, it is with a preference for better fitted individuals according to the following equation [29]:

where a is a constant value; rk is a pseudo-random value from the range [0; 1], drawn for the k-th individual; and gk is the index of the individual drawn, who was deemed well fitted and classified for undergoing further genetic operation (crossover).

In crossover, for the purpose of establishing a set of features (a chromosome) of a new individual, one needs to crossbreed two parental chromosomes, so that in order to create N individuals of a new population, one needs to crossbreed 2N parental individuals. This is why for each k-th individual from the new population, two parental individuals are drawn, i.e., the selection function is realized twice (24). Crossover occurs in a multi-point way; namely, each feature of a new chromosome is matched pseudo-randomly from among the numbers of features occurring in a given index in a feature number vector of one of the two parental chromosomes (Figure 5). The drawing procedure is repeated until a new chromosome is obtained, the length of which is the same as that of the parental vectors.

The mutation operation of the genetic algorithm is used when features are duplicated during crossover. It is depicted in Figure 5 in the form of features marked with yellow circles. As a result of the genetic operations, a new population of individuals is created, which are subject to evaluation via the fitting function. The above-described operations are repeated until the termination condition is reached. As a result of feature selection by means of the genetic algorithm, we obtain a vector of features of the best fitted individual from the last generation.
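Multi-point crossover with mutation on duplication can be sketched as follows. This is a simplified illustration: the replacement feature for a duplicate is assumed to be drawn uniformly from the features not yet present in the child, which may differ from the authors' mutation rule, and the function name and parameters are hypothetical.

```python
import random

def crossover(parent_a, parent_b, n_features, rng):
    """Build a child chromosome gene by gene from two parents of equal length."""
    child = []
    for gene_a, gene_b in zip(parent_a, parent_b):
        gene = rng.choice((gene_a, gene_b))       # take this index from one parent
        if gene in child:
            # Duplicate feature number: mutate to a feature not yet in the child.
            unused = [f for f in range(n_features) if f not in child]
            gene = rng.choice(unused)
        child.append(gene)
    return child

# Example: two parents selecting 4 out of 10 available features.
rng = random.Random(0)
child = crossover([0, 1, 2, 3], [3, 2, 1, 0], n_features=10, rng=rng)
```

The sketch requires that the total number of features is at least as large as the chromosome length, so that an unused feature always exists.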

Feature selection is related to an extra challenge, namely, an appropriate merger of the selected features, and the possibility of selecting either prior to the merger or on the entire vector of descriptors. The authors have tested different variants of selection, as well as a merger of distinctive features, in order to choose a variant that enables the highest accuracy of speaker classification to be obtained [21].

3.5. Normalization of Distinctive Features

The normalization process is a further stage of distinctive feature processing. To this end, the authors have tested the following methods: cepstral mean subtraction (CMS); cepstral mean and variance normalization (CMVN); cepstral mean and variance normalization over a sliding window (WCMVN); and the so-called feature warping [30]. The last of the methods listed enabled the achievement of the highest effectiveness of correct identifications/verifications of speakers while minimizing the impact of additive noise and components related with the acoustic tone on the matrix of the feature vectors. This is why only that method will be described further.

The aim of warping is to map the observed distribution of distinctive features in a certain window of N observations, so that the final distribution of features is approximate to the assumed distribution h(z). Thanks to this approach, the resultant distributions of features may be more coherent in different environments of voice acquisition. Due to a multimodal character of speech, an ideal target distribution should also be multimodal and convergent with actual distribution of features of a given speaker; however, in this case, the authors have assumed a normal distribution:

Such an approach may cause a non-optimal efficiency of the normalization process due to the simplification applied, which is an inspiration for further research in this respect.

It is worth pointing out that normalization of each feature takes place regardless of the other features, and that feature vectors from particular observations are already appropriately selected from among those that have not been created by a speech signal.

After selecting an appropriate target distribution, the normalization process may be started. In the respective window of N feature vectors, each of the features is sorted independently, in descending order. In order to define a single element of a warped feature for the feature located in the middle of a moving window, we calculate the rank of that feature in the sorted list. This is done in such a way that the most positive feature assumes the value of 1, and the most negative feature assumes the value of N. This ranking is then put into a lookup table. The lookup table can be used to map the rank of the sorted cepstral features into a warped feature using the warping normal distribution. Considering the analysis window of N vectors and rank R of the middle distinctive feature in a current moving window, a lookup table, or warped feature elements, can be defined by means of finding m:

where m is the warped feature component. The value of m may be estimated by initially assuming the rank R = N, calculating m by numerical integration, and repeating the process for each decreased R value.
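Feature warping of a single feature dimension can be sketched as follows. The sketch assumes the formulation commonly used for feature warping, in which m satisfies (N + 1/2 − R)/N = Φ(m) for the standard normal distribution function Φ; it replaces the numerical integration and lookup table with a direct call to the inverse normal distribution function, and it simply skips the edge frames that lack a full window.

```python
from statistics import NormalDist

def warp_feature_stream(values, window):
    """Warp one feature dimension over a sliding window of `window` frames (odd)."""
    half = window // 2
    std_normal = NormalDist()                     # assumed target distribution h(z)
    warped = []
    for t in range(half, len(values) - half):
        block = values[t - half:t + half + 1]
        # Rank 1 = most positive value in the window, rank N = most negative.
        rank = 1 + sum(v > values[t] for v in block)
        warped.append(std_normal.inv_cdf((window + 0.5 - rank) / window))
    return warped
```

A feature that is the median of its window is mapped to 0, while the largest value in a window is mapped to a positive warped value.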

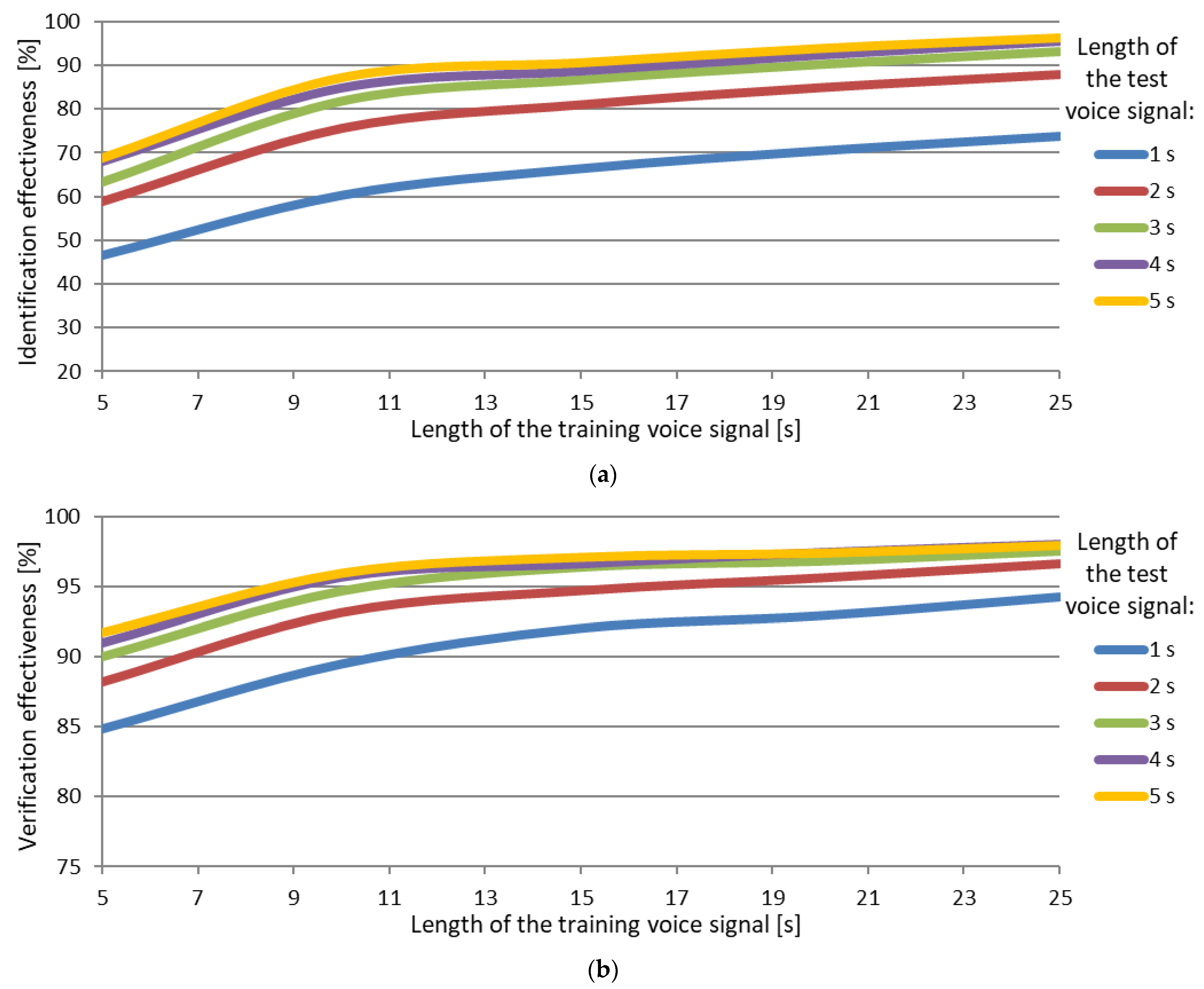

3.6. Modeling the Speaker’s Voice

Thanks to the appropriate use of distinctive features that constitute a training dataset for the classifier, it is possible to create voice models that are economical in terms of memory and rich in terms of ontogenetic information at the same time. The GMMs used in the classification process are parametric probability density functions represented by weighted sums of C Gaussian distributions [31]. The models are built from a training dataset X = {x1, x2, …, xT}, where T is the number of d-dimensional vectors of distinctive features.

The initial values of the distribution parameters λ = {wi, µi, Σi} for i = 1, ..., C may be chosen in a pseudo-random or deterministic way. Those parameters are the expected values (µi), the covariance matrices (Σi), and the distribution weights (wi), where the weights of all the distributions sum to 1. Next, we calculate the probability density function of the occurrence of d-dimensional vectors of distinctive features originating from the training dataset of a given speaker in the created model of his/her voice. This function may be approximated by means of a weighted sum of C Gaussian distributions, and for a single observation t, it assumes the form of [32]:

The function N that describes a d-dimensional Gaussian distribution is defined by the equation [32]:
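As an illustration, the weighted sum of Gaussian densities for a single observation can be evaluated as follows. This is a minimal sketch that assumes the standard multivariate normal density with full covariance matrices; the function names are hypothetical.

```python
import numpy as np

def gaussian_pdf(x, mean, cov):
    """Density of a d-dimensional Gaussian with the given mean and covariance."""
    d = len(mean)
    diff = np.asarray(x, dtype=float) - mean
    norm = np.sqrt((2 * np.pi) ** d * np.linalg.det(cov))
    return np.exp(-0.5 * diff @ np.linalg.solve(cov, diff)) / norm

def gmm_pdf(x, weights, means, covs):
    """p(x | lambda) as the weighted sum of C Gaussian densities."""
    return sum(w * gaussian_pdf(x, m, c) for w, m, c in zip(weights, means, covs))
```

For a single standard two-dimensional component evaluated at its mean, the density equals 1/(2π).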

During GMM training, the parameters are estimated so that they match the training dataset X. This most often takes place via maximum likelihood (ML) estimation, where the likelihood is the product of the probability density function values for the considered observation vectors [32].

The assumption behind the method is that p(X|λ̄) ≥ p(X|λ), where model λ constitutes the initial data for a new model λ̄. Because numerous feature vectors are used during model training, and these differ from one another even for the same speaker, the likelihood function (Equation (29)) is nonlinear and has a number of maxima, which prevents its direct maximization. That is why the likelihood function is maximized iteratively, and the model’s parameters are estimated in accordance with the expectation maximization (EM) algorithm [33]. In practical realization, an auxiliary function is computed [32]:

where p(i|xt, λ) is the a posteriori probability of the occurrence of the i-th distribution in model λ when feature vector xt is observed. According to the assumption of the EM algorithm, if the inequality Q(λ, λ̄) ≥ Q(λ, λ) holds, then also p(X|λ̄) ≥ p(X|λ).

The operation of the EM algorithm involves an iterative repetition of two steps. The first one is the estimation of the probability value p(i|xt, λ) (Equation (31)) [32], and the second one is maximization, which yields the parameters of the new model λ̄ (Equations (32)–(34)) [32] that maximize the function described by Equation (30). Each subsequent step makes use of quantities arrived at in the previous step. Model training is terminated when the likelihood function no longer increases sufficiently, or when a maximum number of iterations has been reached:
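One iteration of the two EM steps can be sketched as follows. The sketch assumes the standard maximum-likelihood re-estimation formulas for the weights, means, and full covariance matrices; it omits safeguards such as variance flooring, and the function names are hypothetical.

```python
import numpy as np

def component_pdf(X, mean, cov):
    """Gaussian density of every row of X (shape T x d) for one component."""
    d = X.shape[1]
    diff = X - mean
    mahal = np.einsum("td,td->t", diff @ np.linalg.inv(cov), diff)
    return np.exp(-0.5 * mahal) / np.sqrt((2 * np.pi) ** d * np.linalg.det(cov))

def em_step(X, weights, means, covs):
    """Return the re-estimated model and the log-likelihood of the input model."""
    T = X.shape[0]
    # Estimation step: a posteriori probability p(i | x_t, lambda).
    dens = np.stack([w * component_pdf(X, m, c)
                     for w, m, c in zip(weights, means, covs)], axis=1)
    log_lik = np.sum(np.log(dens.sum(axis=1)))
    post = dens / dens.sum(axis=1, keepdims=True)
    # Maximization step: new weights, means, and covariance matrices.
    n_i = post.sum(axis=0)
    new_weights = n_i / T
    new_means = (post.T @ X) / n_i[:, None]
    new_covs = []
    for i in range(len(weights)):
        diff = X - new_means[i]
        new_covs.append((post[:, i, None] * diff).T @ diff / n_i[i])
    return new_weights, new_means, np.array(new_covs), log_lik
```

Calling `em_step` repeatedly and stopping once the returned log-likelihood increases by less than a chosen tolerance, or after a fixed number of iterations, corresponds to the termination rule described above.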

In order to depict a result achieved in the GMM training process, the distinctive feature vector has been limited to two dimensions, and the models have been presented in a three-dimensional drawing (Figure 6). Each black point represents a single feature vector (in this case, a two-dimensional one) from one observation.

During speaker identification, a decision is made as to which speaker, represented by the voice models λk (for k = 1, …, N, where N is the number of voices in a given dataset), the recognized fragment of voice represented by the set X of distinctive feature vectors most probably belongs. To this end, the conditional probability that a specific model λk represents the specific vectors of distinctive features X is first calculated for each model through a discrimination function gk(X) (Equation (37)) [32]:

Using Bayes’ theorem [32],

where p(X|λ_k) is the likelihood function of the speaker model (Equation (29)) and expresses the probability that the recognized set X of vectors is represented by the voice model λ_k. Moreover, p(λ_k) represents the statistical frequency of the voice in the dataset; as each voice is equally probable, p(λ_k) = 1/N. Finally, p(X) is the probability of occurrence of the given set of feature vectors X in a speech signal, and it is the same for each of the voice models. It serves only for normalization, so when only the ranking is used (according to criterion (40)) to find the most probable model, it can be ignored. The discrimination function then takes the form [32]:

The most probable voice model is selected by ranking, in accordance with the criterion [32]:

Finally, in practice, the log-likelihood is computed, which turns the multiplicative relationship between subsequent observations into an additive one, and the criterion takes the form [32]:

where the probability p(x_t|λ_k) is determined according to Equation (27).
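The log-likelihood ranking described above can be sketched as follows. The sketch assumes that each speaker model is a tuple of weights, means, and diagonal variances, and that all speakers are equally probable, so the prior and p(X) drop out of the ranking. The function names and the model layout are hypothetical.

```python
import math

def log_gauss_diag(x, mean, var):
    """Log density of vector x under a Gaussian with diagonal covariance (assumed)."""
    return -0.5 * sum(
        math.log(2.0 * math.pi * v) + (xi - m) ** 2 / v
        for xi, m, v in zip(x, mean, var)
    )

def gmm_log_density(x, model):
    """log p(x_t | lambda_k) for a model given as (weights, means, variances)."""
    weights, means, variances = model
    terms = [
        math.log(w) + log_gauss_diag(x, m, v)
        for w, m, v in zip(weights, means, variances)
    ]
    top = max(terms)  # log-sum-exp for numerical stability
    return top + math.log(sum(math.exp(t - top) for t in terms))

def utterance_log_likelihood(X, model):
    """Sum of per-frame log densities: the product over frames becomes a sum."""
    return sum(gmm_log_density(x, model) for x in X)

def identify(X, models):
    """Return the index of the model with the highest log-likelihood, plus all scores."""
    scores = [utterance_log_likelihood(X, model) for model in models]
    best = max(range(len(models)), key=scores.__getitem__)
    return best, scores
```

Working in the log domain also avoids numerical underflow, since the product of many small per-frame probabilities quickly falls below floating-point range.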

Classification with GMMs is also related to the universal background model (UBM), which is created from training data coming from various classes [34,35]. In the case of speaker recognition, we will call it a universal voice model; the training data used to create its weighted mixture of Gaussian distributions comprises audio recordings of various speakers. The model has two main applications. The first is to use it as initial data when creating models of particular speakers. With this approach, the model can be trained in fewer iterations, as training does not start from strongly divergent initial data. Moreover, as shown in the literature and in experiments conducted by the authors [35], the use of a UBM in the ASR System improves the effectiveness of correct speaker identification/verification. The deterministic method of setting the initial values of a speaker’s voice model presented in this article is based on the GMM-UBM algorithm [36]. The authors used this algorithm to create universal voice models in numerous variants that limit the cohort of voices included in the UBM training dataset. One approach to limiting the cohort set involved creating a sex-independent and a sex-dependent UBM.

According to the experiments reported in [36], additional information about the probable sex of the recognized speaker increases the chance of correct identification/verification. This may be of vital importance for criminology, where the UBM can be profiled by training on a limited dataset of speakers or on recordings originating from a given type of device.

A universal voice model may also be used in the decision-making system to normalize the obtained probability results, or more precisely the logarithm of probability (43), in order to verify that the test signal comes from a given speaker [36].

3.7. Decision-Making System

The decision-making system of the automated speaker-recognition system considers two hypotheses about whether the distinctive features of the analyzed utterance actually occur in a given speaker’s statistical model. These hypotheses may be formulated as follows:

H0 (null hypothesis): voice signal X comes from speaker k,

H1 (alternative hypothesis): voice signal X comes from another speaker ~k from the population.

The decision as to whether voice signal X comes from speaker k or from another speaker ~k depends on the ratio of the likelihoods of the two hypotheses, which is compared with a detection threshold θ. If the null hypothesis is represented by model λ_hyp and the alternative hypothesis by a separate alternative model, the relation is as follows:

The above relation is known as the Neyman–Pearson lemma or the likelihood ratio test (LRT) [37]. The likelihood ratio (40) is often given in logarithmic (additive) form:

The hypothesis can be taken to be the result of Equation (39), in which the GMM classifier created by the authors searches for the maximum of the sum of log probability densities, which indicates the presence of the feature vectors x_t in speaker model λ_k. Accordingly, this relationship may be written as follows:

where N is the number of all voices in the dataset and T is the number of distinctive feature vectors extracted from the recognized speech signal. The final form of the Neyman–Pearson lemma is as follows:

In the presented System, the log-likelihood of the alternative hypothesis is calculated directly from the log-likelihood obtained with the created UBM.
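A minimal sketch of the resulting log-likelihood ratio decision is given below. It assumes that per-frame log densities have already been computed for the hypothesized speaker model and for the UBM. Dividing by the number of frames T is an assumption made here to keep the score comparable across utterance lengths, and the function names and the default threshold are hypothetical.

```python
def log_likelihood_ratio(frame_ll_speaker, frame_ll_ubm, normalize=True):
    """log p(X | speaker model) - log p(X | UBM), optionally averaged over frames.

    Both arguments are lists of per-frame log densities for the same T frames.
    """
    if not frame_ll_speaker or len(frame_ll_speaker) != len(frame_ll_ubm):
        raise ValueError("both score lists must be non-empty and of equal length")
    llr = sum(frame_ll_speaker) - sum(frame_ll_ubm)
    return llr / len(frame_ll_speaker) if normalize else llr

def accept_speaker(frame_ll_speaker, frame_ll_ubm, log_threshold=0.0):
    """Accept H0 when the log-likelihood ratio reaches the log of the threshold."""
    return log_likelihood_ratio(frame_ll_speaker, frame_ll_ubm) >= log_threshold
```

For example, `accept_speaker([-1.0, -2.0], [-2.0, -3.0])` accepts the claimed speaker, because the speaker model explains both frames better than the UBM. In practice the threshold would be tuned on held-out data.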