5G Infrastructure Network Slicing: E2E Mean Delay Model and Effectiveness Assessment to Reduce Downtimes in Industry 4.0

Abstract

1. Introduction

- (i) We propose an analytical model for estimating the E2E mean response time of the infrastructure network slices.

- (ii) Based on the developed model, we provide a delay evaluation study that shows how effectively infrastructure slicing isolates the PLs from one another and thereby minimizes the cost of production downtimes.

- (iii)

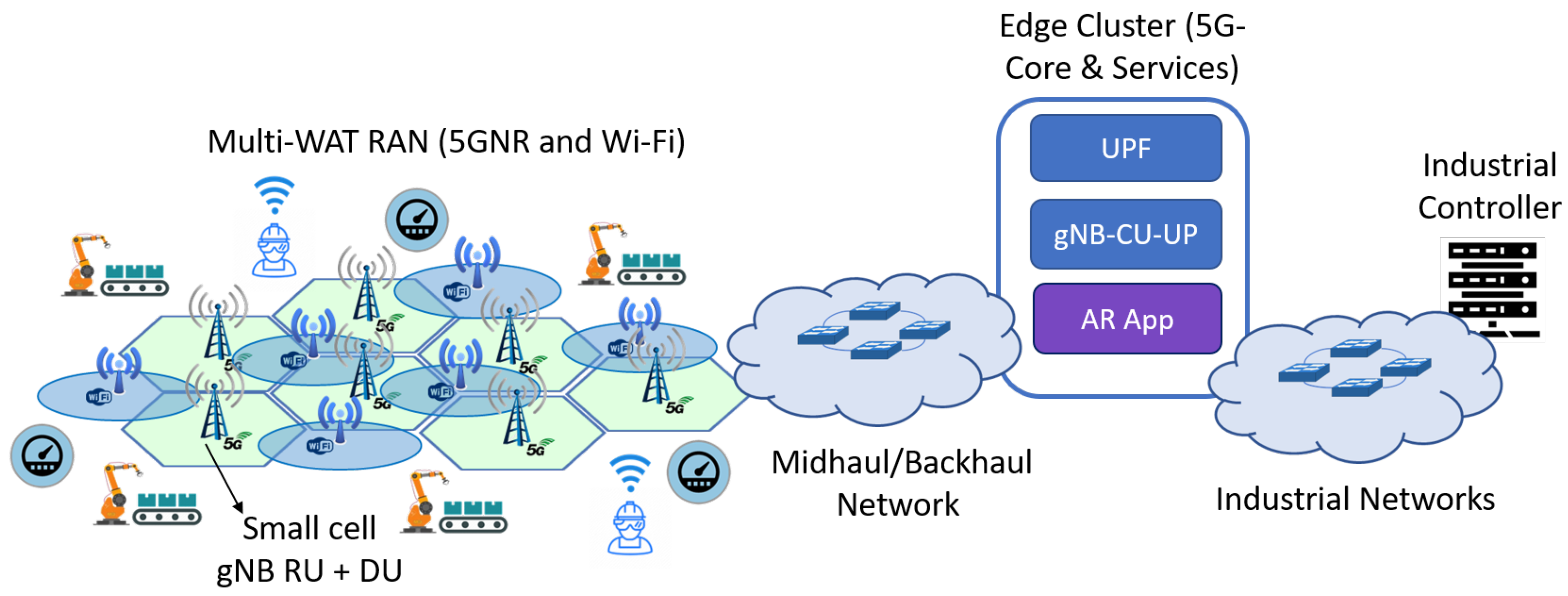

- Last, but not least, we consider a realistic configuration for an industrial scenario that consists of a factory floor with several PLs. More precisely, we derive the configuration of many parameters from experimental data extracted from the literature (accordingly specified in the corresponding sections). Other parameters have been measured through realistic simulations.

2. Background and Related Works

2.1. Network Slicing and Isolation

- Ring-fencing of a slice's resources, so that the slice does not degrade the performance of the remaining slices.

- Communication capabilities between slices, i.e., inter-slice communication is not supported when full isolation is required.

- Security capabilities, i.e., protection against deliberate attacks between slices.

- Wireless quota: it refers to the spectrum allocated to each slice in each radio access node. 3GPP 5G standards include functionality to abstract the complexity of non-3GPP wireless access points (e.g., Wi-Fi and Li-Fi), making each of them appear as a single gNB towards the User Plane Function (UPF). Integrating non-3GPP technologies through this 5G feature is appealing because it can enhance throughput and reliability. Note that the specification of the wireless quota depends on the Wireless Access Technology (WAT) (e.g., 5G New Radio (NR), Wi-Fi, and Li-Fi).

- Compute quota: it stands for the computational resources dedicated to each slice in each compute node. It includes physical Central Processing Unit (CPU) cores, RAM, disk, and networking resources.

- Transport quota: it is the set of resources allocated to each slice in the TN. The TN provides connectivity among the 5G components. Typically, these resources might include transmission capacity at a given set of links and buffer space at the corresponding transport nodes’ output ports. A Virtual Local Area Network (VLAN) identifier (tag) can be assigned to each slice in order to differentiate traffic from different slices at layer 2 (L2).
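The three quota types above can be pictured as a small data structure. The following is only a minimal sketch: every class and field name (`WirelessQuota`, `bandwidth_mhz`, `buffer_bytes`, and so on) is a hypothetical choice made for illustration and does not come from the paper or from any 3GPP data model.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class WirelessQuota:
    """Spectrum share of one slice at one radio access node (hypothetical fields)."""
    access_node: str       # e.g., "gNB-1" or "wifi-ap-3"
    technology: str        # WAT: "5G NR", "Wi-Fi", or "Li-Fi"
    bandwidth_mhz: float   # spectrum reserved for the slice


@dataclass
class ComputeQuota:
    """Computational resources of one slice at one compute node."""
    compute_node: str
    cpu_cores: int         # dedicated physical CPU cores
    ram_gb: float
    disk_gb: float


@dataclass
class TransportQuota:
    """TN resources of one slice at one link / output port."""
    link: str
    capacity_mbps: float   # transmission capacity reserved on the link
    buffer_bytes: int      # buffer space at the corresponding output port


@dataclass
class InfrastructureSlice:
    name: str
    vlan_id: int           # L2 tag used to tell the slice's traffic apart
    wireless: List[WirelessQuota] = field(default_factory=list)
    compute: List[ComputeQuota] = field(default_factory=list)
    transport: List[TransportQuota] = field(default_factory=list)

    def __post_init__(self) -> None:
        # IEEE 802.1Q VLAN IDs 0 and 4095 are reserved; 1..4094 are usable.
        if not 1 <= self.vlan_id <= 4094:
            raise ValueError(f"invalid VLAN ID: {self.vlan_id}")

    def total_cpu_cores(self) -> int:
        """Physical CPU cores dedicated to the slice across all compute nodes."""
        return sum(q.cpu_cores for q in self.compute)


# Illustrative values only (not taken from the paper).
pl1_slice = InfrastructureSlice(
    name="PL1-URLLC",
    vlan_id=101,
    compute=[ComputeQuota("edge-1", cpu_cores=2, ram_gb=4.0, disk_gb=20.0)],
)
print(pl1_slice.total_cpu_cores())
```

Keeping one quota object per node or link mirrors the wording of the list, where each quota is defined "in each" radio access node, compute node, or set of TN links.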

- (i) Support of industrial URLLC critical services: URLLC critical services of Industry 4.0 impose the most demanding requirements in industrial networks. Restricting and ring-fencing resources so that they are dedicated to URLLC services is crucial to guarantee the stringent requirements of these kinds of services and applications.

- (ii) Network performance isolation of the Operational Technology (OT) domain components: The division/segmentation of an industrial network into well-isolated parts that support the operation of disjoint OT components becomes essential to limit the scope of a malfunction, thus reducing production downtimes and the associated expenditures.

- (iii) Multi-tenancy support: Part of the success of private 5G networks will be their ability to provide communication services for different customers (tenants) with an isolation level that guarantees the agreed performance and management capabilities. Several use cases requiring multi-tenancy support have been proposed in the literature [15].

2.2. Analytical Performance Models for Network Slicing

2.3. Network Slicing Isolation Assessment

3. System Model

3.1. Computing Domain

- (i) The processing of packets from each 5G stream, for instance, in gNB-CU and UPF instances, is largely independent of the other streams. Hence, there is no need to divide the processing into smaller pieces and spread it across cores.

- (ii) RTC mode minimizes the number of context switches and maximizes the cache hit rate, which results in a lower packet processing delay.

3.2. Radio Access Network Domain

3.3. Transport Network Domain

4. E2E Mean Delay Model

4.1. Network Slice End-to-End Mean Response Time

- Constant delay: it stands for the constant delays in the system, i.e., those delay components that do not depend on the traffic load (e.g., propagation delays) or whose dependency on the traffic load is negligible (e.g., the switching fabric processing time of the physical L2 bridges or RAM accesses in VNFs when they execute CPU-intensive tasks).

- Mean sojourn time of queue k: as previously mentioned, a queue k is associated with a given data plane component or functionality (e.g., UPF, gNB-CU, gNB-DU, gNB-RU, and TN bridge), an instance of the respective component, and a resource within that instance. There is no pre-established rule for mapping queues to numerical indexes, but the mapping must remain the same across all the computations.

- Visit ratio of queue k (a specific resource): it denotes the average number of times a packet, or the respective processing task, visits queue k from the moment it enters the network slice until it leaves it. For instance, the packet processing of a VNF could be modeled as three queues related to the CPU, RAM, and disk resources, each accessed a given number of times on average to run the packet processing task.
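The three quantities above combine in the usual way for an open queuing network: the mean response time is the constant delay plus the sum, over all K queues, of each queue's mean sojourn time weighted by its visit ratio. The sketch below assumes exactly that form; the names `constant_delay`, `visit_ratios`, and `sojourn_times` are placeholders, since the original symbols are not reproduced in this text.

```python
from typing import Sequence


def e2e_mean_response_time(constant_delay: float,
                           visit_ratios: Sequence[float],
                           sojourn_times: Sequence[float]) -> float:
    """Return T = d + sum_k V_k * T_k, with all times in the same unit.

    Assumed form: d is the load-independent delay, V_k the visit ratio of
    queue k, and T_k the mean sojourn time of queue k.
    """
    if len(visit_ratios) != len(sojourn_times):
        raise ValueError("need one visit ratio and one sojourn time per queue")
    return constant_delay + sum(v * t for v, t in zip(visit_ratios, sojourn_times))


# Illustrative numbers only (not taken from the paper): three queues,
# times in microseconds, the third queue visited twice per packet.
T = e2e_mean_response_time(50.0, [1.0, 1.0, 2.0], [20.0, 35.0, 10.0])
print(T)  # 125.0
```

The index order of the two sequences is arbitrary, but it has to be the same in both, which is the consistency requirement stated for the queue-to-index mapping.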

4.2. Mean Sojourn Time per Queue Computation

4.3. First and Second Order Moments Computation of the Internal Arrival Processes

4.4. Estimation of the Service Processes Related Input Parameters

4.4.1. Packet Transmission at the Transport Network Bridges’ Output Ports

4.4.2. Packet Processing Times Characterization at the User Plane Function

4.4.3. Packet Processing Times Characterization at the Central Unit

4.4.4. Packet Processing Times at the Distributed Unit

4.4.5. Packet Processing Times Characterization at the Radio Unit

4.4.6. Packet Transmission Times Characterization at the Radio Interface (NR-Uu)

5. Experimental Setup

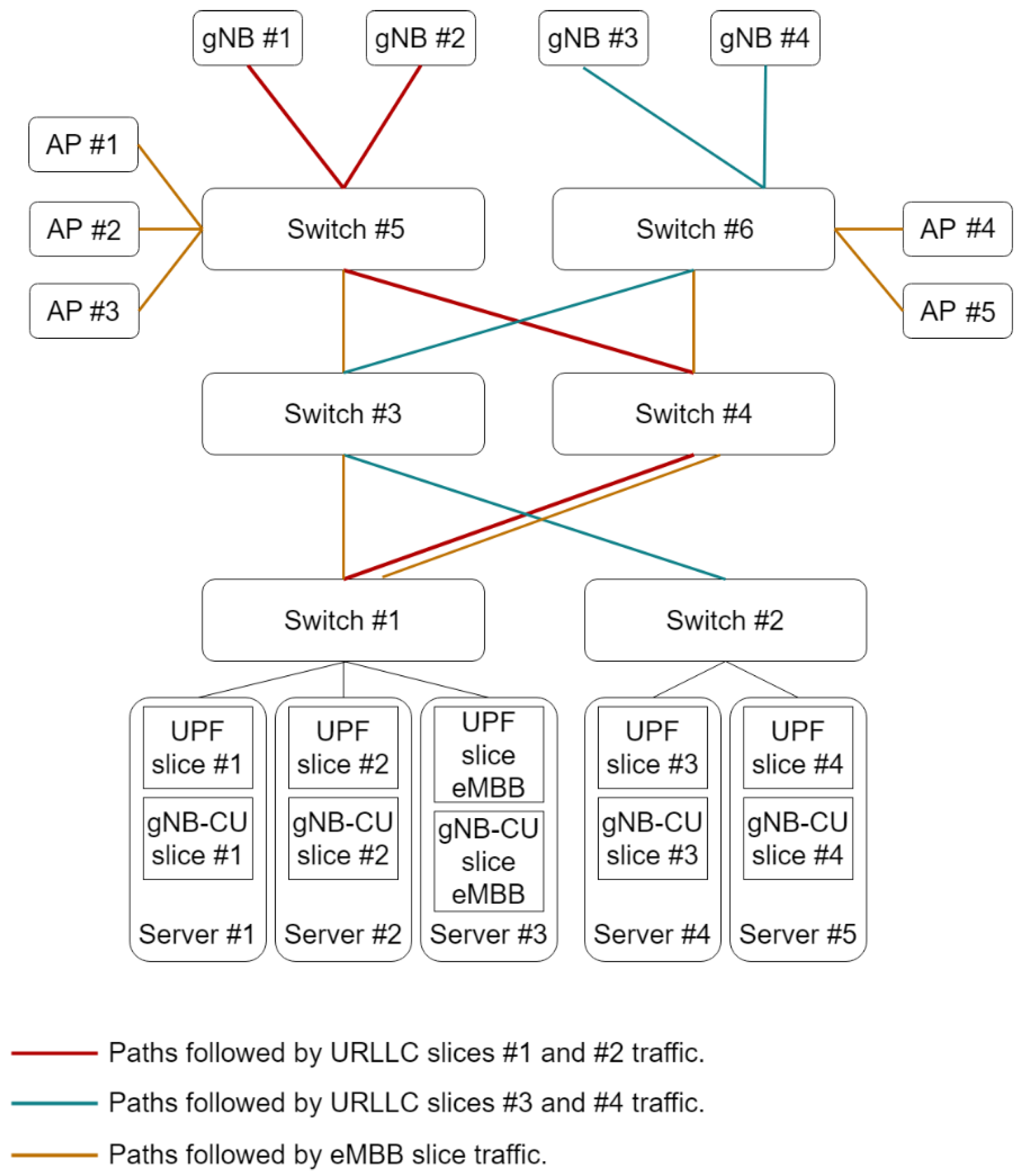

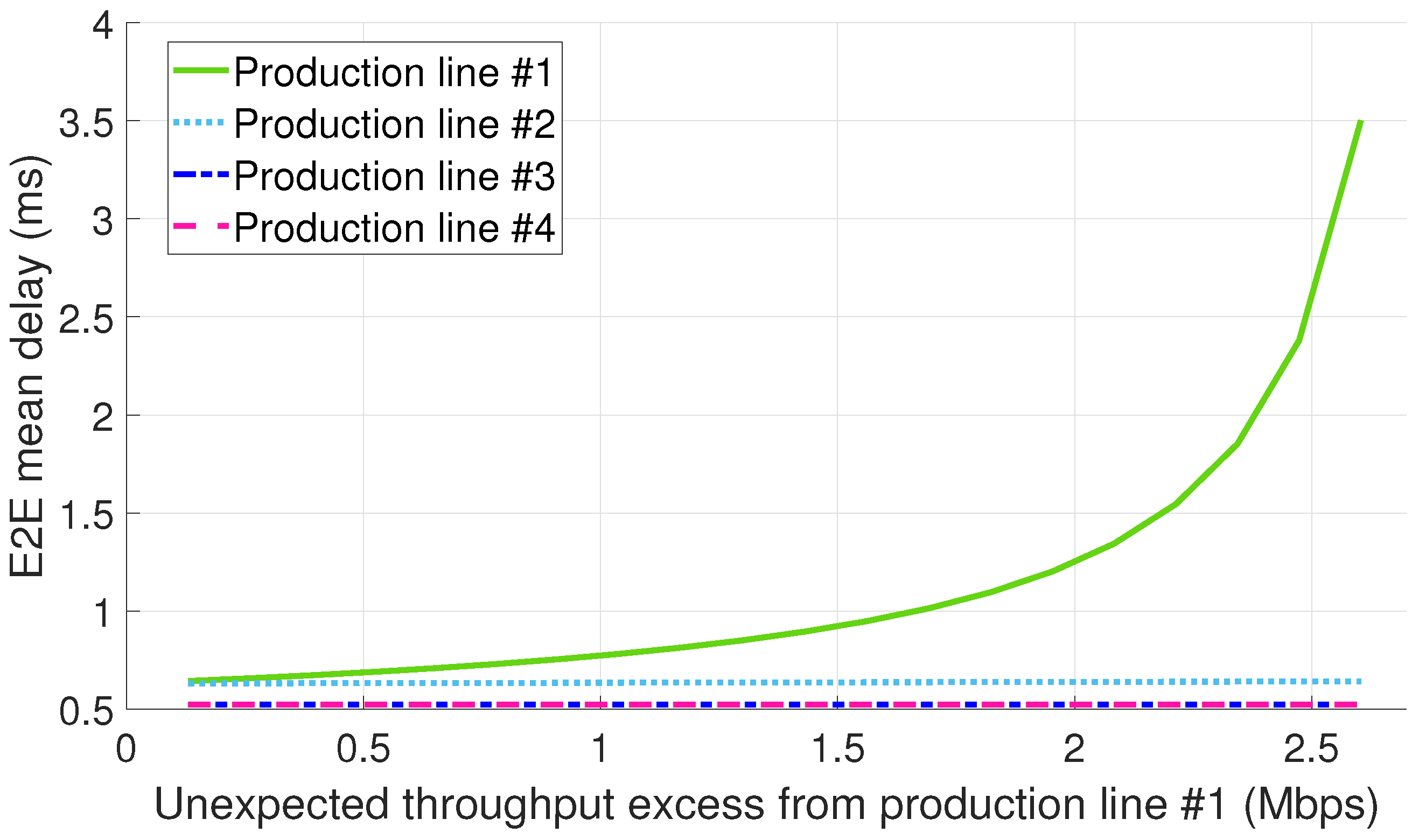

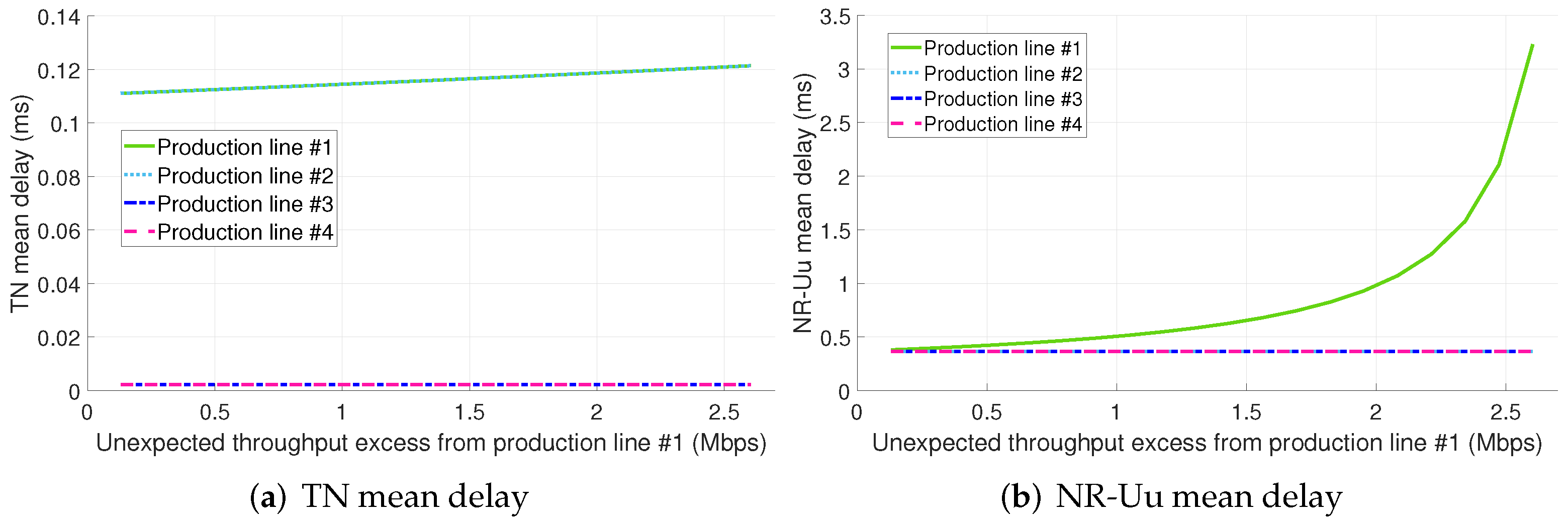

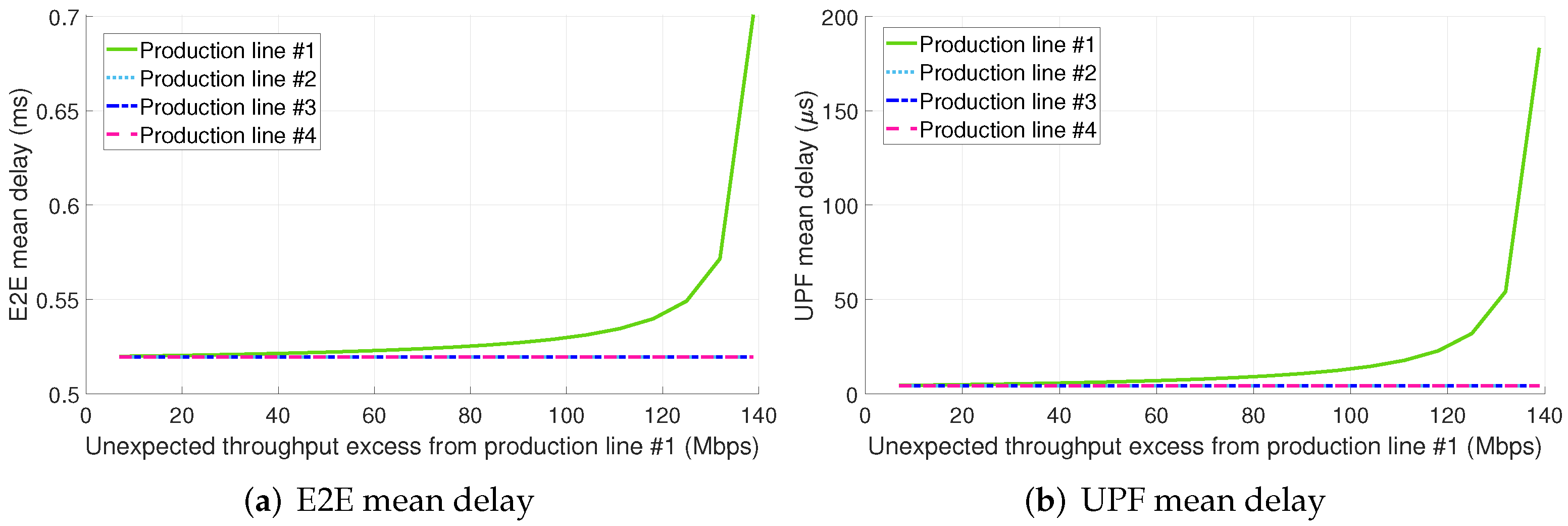

- Configuration 1: The URLLC traffic generated by each of the four PLs on the factory floor is served by a separate slice, which isolates the PLs from one another. Owing to a failure in its operation, PL #1 generates non-conformant aggregated traffic, i.e., traffic that exceeds its aggregated committed data rate.

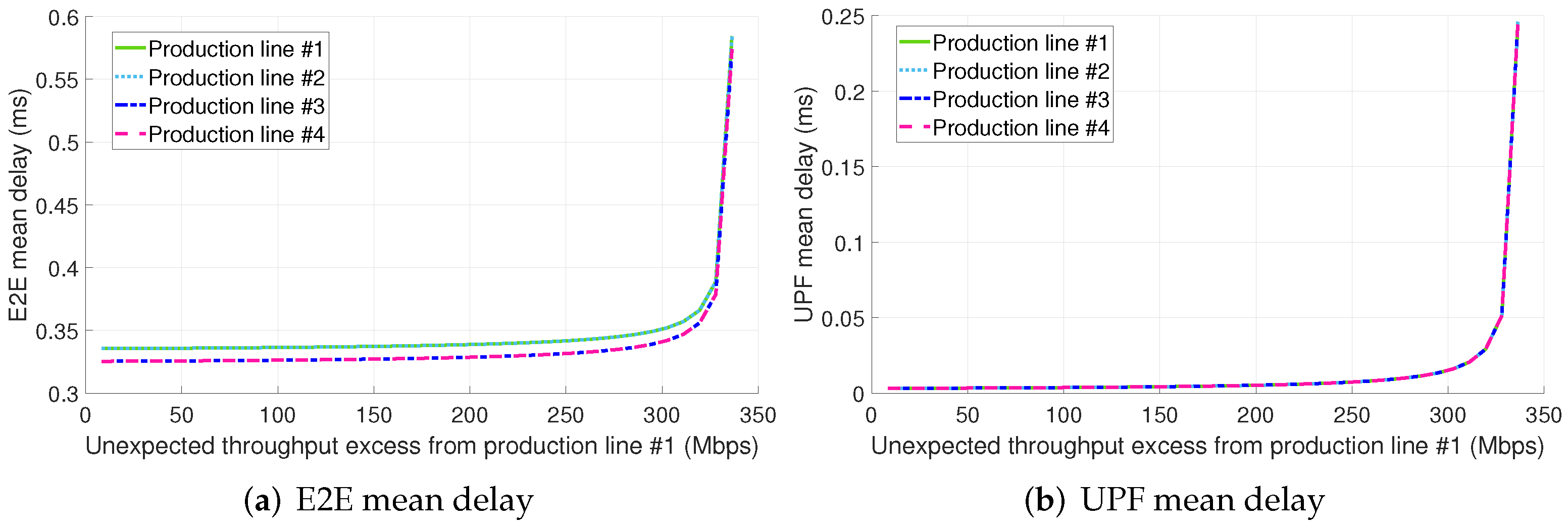

- Configuration 2: The URLLC traffic generated by all four PLs on the factory floor is served by a single slice. PL #1 generates non-conformant traffic due to a failure in its operation.

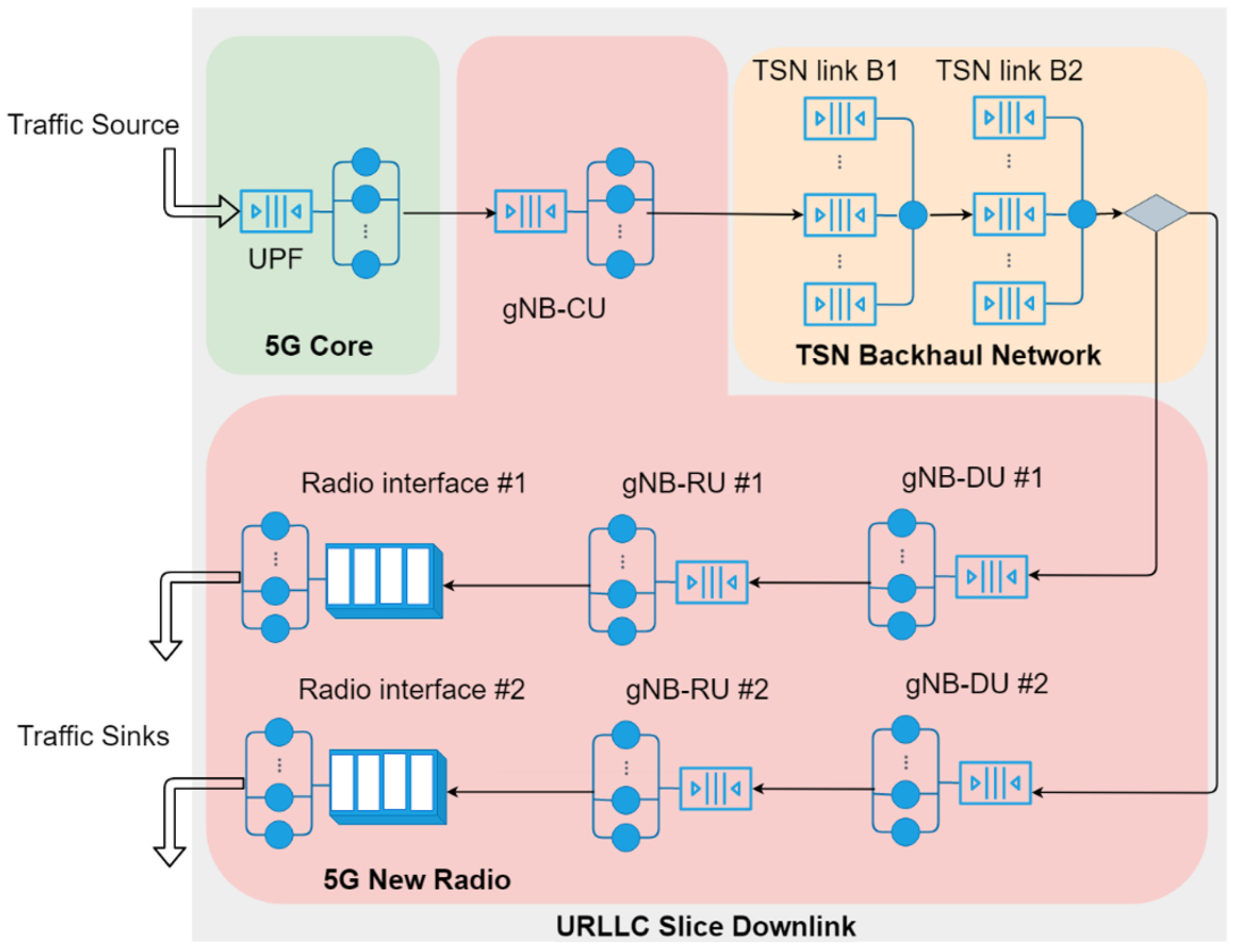

- Variant A: The midhaul network in Figure 4 is realized as a standard IEEE 802.1Q Ethernet network without traffic prioritization.

- Variant B: The midhaul network in Figure 4 is implemented as an asynchronous TSN network, whose building block is the Asynchronous Traffic Shaper (ATS). There is an ATS instance at every TSN bridge egress port. The ATS combines per-flow traffic regulation, realized through interleaved shaping, with traffic prioritization.
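To make the per-flow regulation of Variant B more concrete, the sketch below applies a plain token bucket, defined by a committed rate and a burst size, to a sequence of packets and returns the time at which each packet becomes eligible for transmission. It is a simplification and not the IEEE 802.1Qcr state machine: it omits, among other things, the maximum residence time check and the shared shaper-group state. The function name and its parameters are hypothetical; the rate, burstiness, and packet length in the example are the Motion Control values from the experimental setup.

```python
from typing import List, Sequence


def eligibility_times(arrivals_s: Sequence[float],
                      lengths_bits: Sequence[int],
                      rate_bps: float,
                      burst_bits: float) -> List[float]:
    """Token-bucket regulation of one flow (arrival times sorted, in seconds)."""
    tokens = float(burst_bits)   # the bucket starts full
    last = 0.0                   # time of the last bucket update
    result = []
    for t_arr, length in zip(arrivals_s, lengths_bits):
        if length > burst_bits:
            raise ValueError("packet larger than the burst size never conforms")
        # FIFO order: a packet cannot become eligible before its predecessor.
        t = max(t_arr, last)
        # Refill the bucket for the elapsed time, capped at the burst size.
        tokens = min(burst_bits, tokens + (t - last) * rate_bps)
        if tokens < length:
            # Hold the packet until enough tokens have accumulated.
            t += (length - tokens) / rate_bps
            tokens = float(length)
        tokens -= length
        last = t
        result.append(t)
    return result


# A faulty source emits five 80-byte packets back to back at t = 0.
times = eligibility_times([0.0] * 5, [640] * 5, rate_bps=1.55e6, burst_bits=2592)
print(times)
```

With a burstiness of 2592 bits, four 640-bit packets pass immediately and the fifth is held for 608 / 1.55e6 s, roughly 0.39 ms, which illustrates how shaping keeps the excess traffic of one flow from reaching downstream queues all at once.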

6. Results

- (i) Unlike in configurations 1.A and 2.A, the bottleneck in this configuration is the UPF, whose initial utilization is much lower than that of the radio resources in our setup.

- (ii) Through statistical multiplexing, the excess throughput in this configuration uses the surplus of UPF computational resources that configuration 1.B allocates to PLs #2, #3, and #4.

7. Conclusions and Future Work

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| Acronym | Acronym expansion |
|---|---|
| 3GPP | 3rd Generation Partnership Project |
| 4G | Fourth Generation |
| 5G | Fifth Generation |
| 5GS | 5G System |
| AGV | Automated Guided Vehicle |
| AP | Access Point |
| AR | Augmented Reality |
| ATS | Asynchronous Traffic Shaper |
| BBU | Baseband Unit |
| BE | best-effort |
| CBS | Credit-Based Shaper |
| CDF | Cumulative Distribution Function |
| CN | Core Network |
| CPU | Central Processing Unit |
| CU | Central Unit |
| DCI | Downlink Control Information |
| DNC | Deterministic Network Calculus |
| DU | Distributed Unit |
| DRP | Dynamic Resource Provisioning |
| E2E | end-to-end |
| eMBB | enhanced Mobile Broadband |
| EPC | Evolved Packet Core |
| FCFS | First Come, First Served |
| gNB | Next Generation NodeB |
| GOP | Giga Operations |
| GOPS | Giga Operations Per Second |
| HARQ | Hybrid Automatic Repeat Request |
| IFFT | Inverse Fast Fourier Transform |
| IT | Information Technology |
| KVM | Kernel-based Virtual Machine |
| L2 | layer 2 |
| LXC | Linux Container |
| LTE | Long-Term Evolution |
| MAC | Medium Access Control |
| MCS | Modulation and Coding Scheme |
| MIMO | Multiple-Input Multiple-Output |
| ML | Machine Learning |
| MTBF | Mean Time Between Failures |
| NF | Network Function |
| NFV | Network Functions Virtualisation |
| NP | nondeterministic polynomial time |
| NR | New Radio |
| NS | Network Softwarization |
| OT | Operational Technology |
| PCP | Priority Code Point |
| PDCP | Packet Data Convergence Protocol |
| PL | production line |
| PLR | Packet Loss Ratio |
| PMF | Probability Mass Function |
| PM | Physical Machine |
| PRADC | Primary Resource Access Delay Contribution |
| PRB | Physical Resource Block |
| QoS | Quality of Service |
| QN | Queuing Network |
| QNA | Queuing Network Analyzer |
| QT | Queuing Theory |
| RAM | Random Access Memory |
| RAN | Radio Access Network |
| RF | Radio Frequency |
| RLC | Radio Link Control |
| RRC | Radio Resource Control |
| RTC | run-to-completion |
| RU | Radio Unit |
| SCV | squared coefficient of variation |
| SDN | Software-Defined Networking |
| SDAP | Service Data Adaptation Protocol |
| SINR | Signal-to-Interference-plus-Noise Ratio |
| SNC | Stochastic Network Calculus |
| SNS | Softwarized Network Service |
| TAS | Time-Aware Shaper |
| TN | Transport Network |
| TR | Technical Report |
| TSN | Time-Sensitive Networking |
| UE | User Equipment |
| URLLC | Ultra-Reliable and Low Latency Communication |
| UP | User Plane |
| UPF | User Plane Function |
| VLAN | Virtual Local Area Network |
| VNF | Virtual Network Function |
| VNFC | Virtual Network Function Component |
| VR | Virtual Reality |
| WAT | Wireless Access Technology |

References

- [1] Prados-Garzon, J.; Ameigeiras, P.; Ordonez-Lucena, J.; Muñoz, P.; Adamuz-Hinojosa, O.; Camps-Mur, D. 5G Non-Public Networks: Standardization, Architectures and Challenges. IEEE Access 2021, 9, 153893–153908.

- [2] 5G for Business: A 2030 Market Compass. Setting a Direction for 5G-Powered B2B Opportunities; White Paper; Ericsson: Stockholm, Sweden, 2019.

- [3] Casado, M.; McKeown, N.; Shenker, S. From Ethane to SDN and Beyond. SIGCOMM Comput. Commun. Rev. 2019, 49, 92–95.

- [4] Feamster, N.; Rexford, J.; Zegura, E. The Road to SDN: An Intellectual History of Programmable Networks. SIGCOMM Comput. Commun. Rev. 2014, 44, 87–98.

- [5] Mijumbi, R.; Serrat, J.; Gorricho, J.L.; Bouten, N.; De Turck, F.; Boutaba, R. Network Function Virtualization: State-of-the-Art and Research Challenges. IEEE Commun. Surv. Tutor. 2016, 18, 236–262.

- [6] Hawilo, H.; Shami, A.; Mirahmadi, M.; Asal, R. NFV: State of the art, challenges, and implementation in next generation mobile networks (vEPC). IEEE Netw. 2014, 28, 18–26.

- [7] Ordonez-Lucena, J.; Ameigeiras, P.; Lopez, D.; Ramos-Munoz, J.J.; Lorca, J.; Folgueira, J. Network Slicing for 5G with SDN/NFV: Concepts, Architectures, and Challenges. IEEE Commun. Mag. 2017, 55, 80–87.

- [8] Samdanis, K.; Costa-Perez, X.; Sciancalepore, V. From network sharing to multi-tenancy: The 5G network slice broker. IEEE Commun. Mag. 2016, 54, 32–39.

- [9] Oladejo, S.O.; Falowo, O.E. 5G network slicing: A multi-tenancy scenario. In Proceedings of the 2017 Global Wireless Summit (GWS), Cape Town, South Africa, 15–18 October 2017; pp. 88–92.

- [10] NGMN Alliance. 5G Security Recommendations Package #2: Network Slicing; NGMN: Frankfurt, Germany, 2016; pp. 1–12.

- [11] Kotulski, Z.; Nowak, T.W.; Sepczuk, M.; Tunia, M.; Artych, R.; Bocianiak, K.; Osko, T.; Wary, J.P. Towards constructive approach to end-to-end slice isolation in 5G networks. EURASIP J. Inf. Secur. 2018, 2, 2.

- [12] 3GPP TS28.541 V17.4.0; 5G Management and Orchestration; (5G) Network Resource Model (NRM); Stage 2 and Stage 3 (Release 17). 3GPP: Valbonne, France, 2021.

- [13] Camps-Mur, D. 5G-CLARITY Deliverable D4.1: Initial Design of the SDN/NFV Platform and Identification of Target 5G-CLARITY ML Algorithms; Technical Report, 5G-PPP; 2020; Available online: https://www.5gclarity.com/wp-content/uploads/2021/02/5G-CLARITY_D51.pdf (accessed on 1 November 2021).

- [14] Tzanakaki, A.; Ordonez-Lucena, J.; Camps-Mur, D.; Manolopoulos, A.; Georgiades, P.; Alevizaki, V.M.; Maglaris, S.; Anastasopoulos, M.; Garcia, A.; Chackravaram, K.; et al. 5G-CLARITY Deliverable D2.2 Primary System Architecture; Technical Report, 5G-PPP; 2020; Available online: https://www.5gclarity.com/wp-content/uploads/2021/10/5G-CLARITY_D23.pdf (accessed on 1 November 2021).

- [15] Taleb, T.; Afolabi, I.; Bagaa, M. Orchestrating 5G Network Slices to Support Industrial Internet and to Shape Next-Generation Smart Factories. IEEE Netw. 2019, 33, 146–154.

- [16] Schulz, P.; Ong, L.; Littlewood, P.; Abdullah, B.; Simsek, M.; Fettweis, G. End-to-End Latency Analysis in Wireless Networks with Queuing Models for General Prioritized Traffic. In Proceedings of the 2019 IEEE International Conference on Communications Workshops (ICC Workshops), Shanghai, China, 20–24 May 2019; pp. 1–6.

- [17] Xu, Q.; Wang, J.; Wu, K. Learning-Based Dynamic Resource Provisioning for Network Slicing with Ensured End-to-End Performance Bound. IEEE Trans. Netw. Sci. Eng. 2020, 7, 28–41.

- [18] Xu, Q.; Wang, J.; Wu, K. Resource Capacity Analysis in Network Slicing with Ensured End-to-End Performance Bound. In Proceedings of the 2018 IEEE International Conference on Communications (ICC), Kansas City, MO, USA, 20–24 May 2018; pp. 1–6.

- [19] Yu, B.; Chi, X.; Liu, X. Martingale-based Bandwidth Abstraction and Slice Instantiation under the End-to-end Latency-bounded Reliability Constraint. IEEE Commun. Lett. 2021, 1.

- [20] Sweidan, Z.; Islambouli, R.; Sharafeddine, S. Optimized flow assignment for applications with strict reliability and latency constraints using path diversity. J. Comput. Sci. 2020, 44, 101163.

- [21] Fantacci, R.; Picano, B. End-to-End Delay Bound for Wireless uVR Services Over 6G Terahertz Communications. IEEE Internet Things J. 2021, 8, 17090–17099.

- [22] Picano, B. End-to-End Delay Bound for VR Services in 6G Terahertz Networks with Heterogeneous Traffic and Different Scheduling Policies. Mathematics 2021, 9, 1638.

- [23] Liu, J.; Zhang, L.; Yang, K. Modeling Guaranteed Delay of Virtualized Wireless Networks Using Network Calculus. In Mobile and Ubiquitous Systems: Computing, Networking, and Services; Stojmenovic, I., Cheng, Z., Guo, S., Eds.; Springer: Cham, Switzerland, 2013.

- [24] Chien, H.T.; Lin, Y.D.; Lai, C.L.; Wang, C.T. End-to-End Slicing With Optimized Communication and Computing Resource Allocation in Multi-Tenant 5G Systems. IEEE Trans. Veh. Technol. 2020, 69, 2079–2091.

- [25] Ye, Q.; Zhuang, W.; Li, X.; Rao, J. End-to-End Delay Modeling for Embedded VNF Chains in 5G Core Networks. IEEE Internet Things J. 2019, 6, 692–704.

- [26] Prados-Garzon, J.; Ameigeiras, P.; Ramos-Munoz, J.J.; Navarro-Ortiz, J.; Andres-Maldonado, P.; Lopez-Soler, J.M. Performance Modeling of Softwarized Network Services Based on Queuing Theory With Experimental Validation. IEEE Trans. Mob. Comput. 2021, 20, 1558–1573.

- [27] Kalør, A.E.; Guillaume, R.; Nielsen, J.J.; Mueller, A.; Popovski, P. Network Slicing in Industry 4.0 Applications: Abstraction Methods and End-to-End Analysis. IEEE Trans. Ind. Inform. 2018, 14, 5419–5427.

- [28] Yarkina, N.; Gaidamaka, Y.; Correia, L.M.; Samouylov, K. An Analytical Model for 5G Network Resource Sharing with Flexible SLA-Oriented Slice Isolation. Mathematics 2020, 8, 1177.

- [29] Rodriguez, V.Q.; Guillemin, F. Cloud-RAN modeling based on parallel processing. IEEE J. Sel. Areas Commun. 2018, 36, 457–468.

- [30] Prados-Garzon, J.; Taleb, T.; Bagaa, M. Optimization of Flow Allocation in Asynchronous Deterministic 5G Transport Networks by Leveraging Data Analytics. IEEE Trans. Mob. Comput. 2021, 1.

- [31] Prados-Garzon, J.; Laghrissi, A.; Bagaa, M.; Taleb, T.; Lopez-Soler, J.M. A Complete LTE Mathematical Framework for the Network Slice Planning of the EPC. IEEE Trans. Mob. Comput. 2020, 19, 1–14.

- [32] Karamyshev, A.; Khorov, E.; Krasilov, A.; Akyildiz, I. Fast and accurate analytical tools to estimate network capacity for URLLC in 5G systems. Comput. Netw. 2020, 178, 107331.

- [33] Lee, D.; Park, J.; Hiremath, C.; Mangan, J.; Lynch, M. Towards Achieving High Performance in 5G Mobile Packet Core’s User Plane Function; Intel Corporation: Mountain View, CA, USA, 2018.

- [34] Prados-Garzon, J.; Laghrissi, A.; Bagaa, M.; Taleb, T. A Queuing Based Dynamic Auto Scaling Algorithm for the LTE EPC Control Plane. In Proceedings of the 2018 IEEE Global Communications Conference (GLOBECOM), Abu Dhabi, United Arab Emirates, 9–13 December 2018; pp. 1–7.

- [35] Afolabi, I.; Prados-Garzon, J.; Bagaa, M.; Taleb, T.; Ameigeiras, P. Dynamic Resource Provisioning of a Scalable E2E Network Slicing Orchestration System. IEEE Trans. Mob. Comput. 2020, 19, 2594–2608.

- [36] Ali, J.; Roh, B.H. An Effective Hierarchical Control Plane for Software-Defined Networks Leveraging TOPSIS for End-to-End QoS Class-Mapping. IEEE Access 2020, 8, 88990–89006.

- [37] Montero, R.; Agraz, F.; Pagès, A.; Spadaro, S. End-to-End Network Slicing in Support of Latency-Sensitive 5G Services. In Optical Network Design and Modeling; Tzanakaki, A., Varvarigos, M., Muñoz, R., Nejabati, R., Yoshikane, N., Anastasopoulos, M., Marquez-Barja, J., Eds.; Springer International Publishing: Cham, Switzerland, 2020; pp. 51–61.

- [38] Bose, S.K. An Introduction to Queueing Systems; Springer Science & Business Media: New York, NY, USA, 2013.

- [39] Le Boudec, J.Y.; Thiran, P. Network Calculus: A Theory of Deterministic Queuing Systems for the Internet; Springer Science & Business Media: New York, NY, USA, 2001; Volume 2050.

- [40] Jiang, Y.; Liu, Y. Stochastic Network Calculus; Springer: Berlin/Heidelberg, Germany, 2008; Volume 1.

- [41] Fidler, M.; Rizk, A. A Guide to the Stochastic Network Calculus. IEEE Commun. Surv. Tutor. 2015, 17, 92–105.

- [42] Whitt, W. The queueing network analyzer. Bell Syst. Tech. J. 1983, 62, 2779–2815.

- [43] Prados-Garzon, J.; Ameigeiras, P.; Ramos-Munoz, J.J.; Andres-Maldonado, P.; Lopez-Soler, J.M. Analytical modeling for Virtualized Network Functions. In Proceedings of the 2017 IEEE International Conference on Communications Workshops (ICC Workshops), Paris, France, 21–25 May 2017; pp. 979–985.

- [44] Prados-Garzon, J.; Taleb, T.; El Marai, O.; Bagaa, M. Closed-Form Expression for the Resources Dimensioning of Softwarized Network Services. In Proceedings of the 2019 IEEE Global Communications Conference (GLOBECOM), Waikoloa, HI, USA, 9–13 December 2019; pp. 1–6.

- [45] 3GPP TR38.802 V14.2.0; Technical Specification Group Radio Access Network. Study on New Radio Access Technology Physical Layer Aspects (Release 14). 3GPP: Valbonne, France, 2017.

- [46] Nojima, D.; Katsumata, Y.; Shimojo, T.; Morihiro, Y.; Asai, T.; Yamada, A.; Iwashina, S. Resource Isolation in RAN Part While Utilizing Ordinary Scheduling Algorithm for Network Slicing. In Proceedings of the 2018 IEEE 87th Vehicular Technology Conference (VTC Spring), Porto, Portugal, 3–6 June 2018; pp. 1–5.

- [47] Yang, X.; Liu, Y.; Wong, I.C.; Wang, Y.; Cuthbert, L. Effective isolation in dynamic network slicing. In Proceedings of the 2019 IEEE Wireless Communications and Networking Conference (WCNC), Marrakesh, Morocco, 15–18 April 2019; pp. 1–6.

- [48] Bhattacharjee, S.; Katsalis, K.; Arouk, O.; Schmidt, R.; Wang, T.; An, X.; Bauschert, T.; Nikaein, N. Network Slicing for TSN-Based Transport Networks. IEEE Access 2021, 9, 62788–62809.

- [49] Kurtz, F.; Bektas, C.; Dorsch, N.; Wietfeld, C. Network Slicing for Critical Communications in Shared 5G Infrastructures—An Empirical Evaluation. In Proceedings of the 2018 4th IEEE Conference on Network Softwarization and Workshops (NetSoft), Montreal, QC, Canada, 25–29 June 2018; pp. 393–399.

- [50] Bektas, C.; Monhof, S.; Kurtz, F.; Wietfeld, C. Towards 5G: An Empirical Evaluation of Software-Defined End-to-End Network Slicing. In Proceedings of the 2018 IEEE Globecom Workshops (GC Wkshps), Abu Dhabi, United Arab Emirates, 9–13 December 2018; pp. 1–6.

- [51] Kasgari, A.T.Z.; Saad, W. Stochastic optimization and control framework for 5G network slicing with effective isolation. In Proceedings of the 2018 52nd Annual Conference on Information Sciences and Systems (CISS), Princeton, NJ, USA, 21–23 March 2018; pp. 1–6.

- [52] Sattar, D.; Matrawy, A. Optimal Slice Allocation in 5G Core Networks. IEEE Netw. Lett. 2019, 1, 48–51.

- [53] Key 5G Use Cases and Requirements: From the Viewpoint of Operational Technology Providers; White Paper; 5G-ACIA: Frankfurt am Main, Germany, 2020.

- [54] Yu, H.; Musumeci, F.; Zhang, J.; Xiao, Y.; Tornatore, M.; Ji, Y. DU/CU Placement for C-RAN over Optical Metro-Aggregation Networks. In Optical Network Design and Modeling; Tzanakaki, A., Varvarigos, M., Muñoz, R., Nejabati, R., Yoshikane, N., Anastasopoulos, M., Marquez-Barja, J., Eds.; Springer International Publishing: Cham, Switzerland, 2020; pp. 82–93.

- [55] Debaillie, B.; Desset, C.; Louagie, F. A Flexible and Future-Proof Power Model for Cellular Base Stations. In Proceedings of the 2015 IEEE 81st Vehicular Technology Conference (VTC Spring), Glasgow, UK, 11–14 May 2015; pp. 1–7.

- [56] Nikaein, N. Processing Radio Access Network Functions in the Cloud: Critical Issues and Modeling. In Proceedings of the 6th International Workshop on Mobile Cloud Computing and Services, MCS ’15, Paris, France, 11 September 2015; Association for Computing Machinery: New York, NY, USA, 2015; pp. 36–43.

- [57] Li, C.P.; Jiang, J.; Chen, W.; Ji, T.; Smee, J. 5G ultra-reliable and low-latency systems design. In Proceedings of the 2017 European Conference on Networks and Communications (EuCNC), Oulu, Finland, 12–15 June 2017; pp. 1–5.

- [58] Polyanskiy, Y.; Poor, H.V.; Verdu, S. Channel Coding Rate in the Finite Blocklength Regime. IEEE Trans. Inf. Theory 2010, 56, 2307–2359.

- [59] Tang, J.; Shim, B.; Quek, T.Q.S. Service Multiplexing and Revenue Maximization in Sliced C-RAN Incorporated With URLLC and Multicast eMBB. IEEE J. Sel. Areas Commun. 2019, 37, 881–895.

- [60] Schiessl, S.; Gross, J.; Al-Zubaidy, H. Delay analysis for wireless fading channels with finite blocklength channel coding. In Proceedings of the 18th ACM International Conference on Modeling, Analysis and Simulation of Wireless and Mobile Systems, Cancun, Mexico, 2–6 November 2015; pp. 13–22.

- [61] Bouillard, A.; Jouhet, L.; Thierry, E. Tight Performance Bounds in the Worst-Case Analysis of Feed-Forward Networks. In Proceedings of the 2010 IEEE INFOCOM, San Diego, CA, USA, 14–19 March 2010; pp. 1–9.

- [62] Prados-Garzon, J.; Taleb, T.; Bagaa, M. LEARNET: Reinforcement Learning Based Flow Scheduling for Asynchronous Deterministic Networks. In Proceedings of the ICC 2020–2020 IEEE International Conference on Communications (ICC), Dublin, Ireland, 7–11 June 2020; pp. 1–6.

- [63] Prados-Garzon, J.; Chinchilla-Romero, L.; Ameigeiras, P.; Muñoz, P.; Lopez-Soler, J.M. Asynchronous Time-Sensitive Networking for Industrial Networks. In Proceedings of the 2021 Joint European Conference on Networks and Communications 6G Summit (EuCNC/6G Summit), Porto, Portugal, 8–11 June 2021; pp. 130–135.

- [64] Nasrallah, A.; Thyagaturu, A.S.; Alharbi, Z.; Wang, C.; Shao, X.; Reisslein, M.; Elbakoury, H. Performance Comparison of IEEE 802.1 TSN Time Aware Shaper (TAS) and Asynchronous Traffic Shaper (ATS). IEEE Access 2019, 7, 44165–44181.

- [65] Prados-Garzon, J.; Taleb, T. Asynchronous Time-Sensitive Networking for 5G Backhauling. IEEE Netw. 2021, 35, 144–151.

- [66] Specht, J.; Samii, S. Urgency-Based Scheduler for Time-Sensitive Switched Ethernet Networks. In Proceedings of the 2016 28th Euromicro Conference on Real-Time Systems (ECRTS), Toulouse, France, 5–8 July 2016; pp. 75–85.

- [67] Specht, J.; Samii, S. Synthesis of Queue and Priority Assignment for Asynchronous Traffic Shaping in Switched Ethernet. In Proceedings of the 2017 IEEE Real-Time Systems Symposium (RTSS), Paris, France, 5–8 December 2017; pp. 178–187.

- [68] IEEE P802.1Qcr D; IEEE Draft Standard for Local and Metropolitan Area Networks—Media Access Control (MAC) Bridges and Virtual Bridged Local Area Networks Amendment: Asynchronous Traffic Shaping. IEEE Standards Association: Piscataway, NJ, USA, 2017; pp. 1–151.

- [69] Mhedhbi, M.; Morcos, M.; Galindo-Serrano, A.; Elayoubi, S.E. Performance Evaluation of 5G Radio Configurations for Industry 4.0. In Proceedings of the 2019 International Conference on Wireless and Mobile Computing, Networking and Communications (WiMob), Barcelona, Spain, 21–23 October 2019; pp. 1–6.

- [70] A 5G Traffic Model for Industrial Use Cases; White Paper; 5G-ACIA: Frankfurt am Main, Germany, 2019.

- [71] 3GPP TS22.104 V17.4.0; Service Requirements for Cyber-Physical Control Applications in Vertical Domains. 3GPP: Valbonne, France, 2020.

| References | Framework: QT | Framework: DNC | Framework: SNC | Description |
|---|---|---|---|---|
| Schulz et al. [16] | ✗ | | | This work aims to provide an E2E delay model for a mobile network. To that end, it proposes a model to estimate the sojourn time distribution of the GI/GI/1 queue and assumes Kleinrock’s independence approximation. The model is tested and validated for a single M/D/1 queue with different scheduling policies. |
| Ye et al. [25] | ✗ | | | This article models the E2E delay of packets traversing a VNF chain. The primary assumption is that the system bottlenecks are the CPU processing and the link transmission, both served under a generalized processor sharing discipline. The proposed model consists of an independent tandem of M/D/1 queues for each flow. |
| Xu et al. [17,18] | | | ✗ | These papers derive statistical E2E delay bounds for network slicing considering Gaussian traffic and deterministic service. The model is leveraged to perform dynamic resource provisioning, i.e., to adjust the resources allocated to a slice according to the traffic fluctuations. Specifically, resource dimensioning is carried out using the derived performance bounds. |
| Yu et al. [19] | | | ✗ | This article provides stochastic performance bounds for network slices using martingale-based approaches. The resulting bounds are employed to translate delay requirements into bandwidth requirements and to estimate the power allocation at the RAN considering an ALOHA-like medium access technique for URLLC traffic. |
| Sweidan et al. [20] | ✗ | | | This work studies the joint problem of composing E2E network slices, mapping URLLC applications to slices, and assigning multiple disjoint paths to slices. It models the E2E mean delay of a network slice as an open network of M/M/1 queues. |
| Fantacci et al. [21] | | | ✗ | This article relies on martingale theory to derive statistical bounds of the E2E packet delay of slices. The model is applied to virtual network embedding. It focuses on ultimate VR services operated in 6G Terahertz networks. The authors validate the bounds through simulation and compare their accuracy with an equivalent Markov tandem queue model. |
| Liu et al. [23] | | ✗ | | This paper presents a worst-case delay model for virtual wireless networks, including physical and virtual nodes. It considers that different slices might receive differentiated treatment through the use of virtual queues. |
| Picano [22] | | | ✗ | This work aims to evaluate the performance of the Sixth Generation (6G) pervasive edge computing network for handling virtual reality traffic under two scheduling policies, namely, First Come, First Served (FCFS) and earliest deadline first. To that end, it relies on a martingale-based model similar to the one proposed in [21]. |
| Chien et al. [24] | ✗ | | | This article proposes a solution for slice capacity allocation and traffic offloading from the central office to the edge cloud. The solution relies on an E2E mean delay model consisting of a feedforward network of M/M/1 queues, each standing for either a node or a link. The solution is validated experimentally. |
| Kalør et al. [27] | | ✗ | | This paper focuses on modeling the E2E delay of URLLC network slices using DNC for deterministic and switched networks. It presents an industrial medicine manufacturing system as a case study to illustrate the usefulness of DNC for analyzing the worst-case E2E delay of network slices. |

| Notation | Description |

|---|---|

| Variables related to the E2E mean response time computation | |

| K | Number of queues in the queuing network. |

| Constant delays in the system. | |

| T | Mean response time of a network slice. |

| Mean sojourn time at queue k. | |

| Visit ratio of queue k. | |

| Mean external arrival rate at queue k. | |

| SCV of the external arrival process at queue k. | |

| Number of servers at queue k. | |

| SCV of the inter-arrival packet times at queue k. | |

| Average service rate at queue k. | |

| Average service rate at queue k for priority class p. | |

| SCV of the service time at queue k. | |

| SCV of the service time at queue k for priority class p. | |

| Probability that a packet leaves node i to node k. | |

| Multiplicative factor for the flow leaving queue i. | |

| Link delay between queues i and k. | |

| The Erlang’s C formula. | |

| , | System of equations coefficients for computing the mean and squared coefficient of variation (SCV) of the inter-arrival packet times to every queue. |

| , , | Auxiliary variables for and computation. |

| Proportion of arrivals to node k from its external arrival process. | |

| Proportion of arrivals from node i to node k. | |

| Link utilization at queue k. | |

| Link utilization for queue k for priority class p. | |

| Mean delay of a non-preemptive multi-priority queue for priority class p. | |

| Mean delay estimation of a G/G/m queue. | |

| Aggregated arrival rate at queue k. | |

| Aggregated mean packet arrival rate of queue k for priority class p. | |

| Variables of service processes related input parameters | |

| L | Average packet size. |

| C | Nominal link capacity. |

| UPF packet processing rate per physical CPU core. | |

| Number of instructions to be executed to process a single packet. | |

| CPU power. | |

| gNB-CU serving rate. | |

| CPU power. | |

| Number of Giga OPerations (GOPs) required to process a single packet in a given gNB-CU instance. | |

| gNB-DU average packet rate. | |

| Dynamic processing component. | |

| Remainder user processing component. | |

| RU packet processing rate. | |

| Base offset for the cell processing. | |

| Base offset for the platform processing. | |

| Number of servers in the radio interface. | |

| Number of PRBs available at the radio interface. | |

| Average number of PRBs required to serve a single packet. | |

| Service rate at the radio interface. | |

| Time slot duration. | |
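
The notation above combines per-queue quantities (visit ratio, number of servers, service rate, SCVs, Erlang's C formula) into a single mean response time. A minimal sketch of that composition follows, assuming the E2E mean response time is the constant delay plus the visit-ratio-weighted sum of per-queue sojourn times, and assuming the Allen-Cunneen approximation for the G/G/m sojourn time. The approximation is swapped in for illustration and may differ from the estimator used in the paper; the names `Queue`, `erlang_c`, `ggm_sojourn`, and `e2e_mean_response` are hypothetical.

```python
from dataclasses import dataclass
from math import factorial


@dataclass
class Queue:
    """One queue k of the network (all field names are illustrative)."""
    visit_ratio: float   # visit ratio of queue k
    arrival_rate: float  # aggregated arrival rate at queue k (packets/s)
    service_rate: float  # average service rate per server (packets/s)
    servers: int         # number of servers at queue k
    scv_arrival: float   # SCV of the inter-arrival times at queue k
    scv_service: float   # SCV of the service time at queue k


def erlang_c(servers: int, rho: float) -> float:
    """Erlang's C formula: probability that an arrival must wait in M/M/m."""
    if not 0.0 <= rho < 1.0:
        raise ValueError("per-server utilization must be in [0, 1)")
    load = servers * rho  # offered load in Erlangs
    tail = load**servers / (factorial(servers) * (1.0 - rho))
    head = sum(load**n / factorial(n) for n in range(servers))
    return tail / (head + tail)


def ggm_sojourn(q: Queue) -> float:
    """Mean sojourn time of a G/G/m queue (Allen-Cunneen approximation, assumed)."""
    rho = q.arrival_rate / (q.servers * q.service_rate)
    # Mean waiting time of the equivalent M/M/m queue.
    wait_mmm = erlang_c(q.servers, rho) / (q.servers * q.service_rate - q.arrival_rate)
    # Scale by the variability of arrivals and service, then add the service time.
    return 0.5 * (q.scv_arrival + q.scv_service) * wait_mmm + 1.0 / q.service_rate


def e2e_mean_response(queues: list[Queue], constant_delay: float = 0.0) -> float:
    """Constant delays plus the visit-ratio-weighted sum of sojourn times."""
    return constant_delay + sum(q.visit_ratio * ggm_sojourn(q) for q in queues)


# Sanity check: with one server and both SCVs equal to 1 the estimate
# reduces to the exact M/M/1 result 1 / (mu - lambda).
mm1 = Queue(visit_ratio=1.0, arrival_rate=1.0, service_rate=2.0,
            servers=1, scv_arrival=1.0, scv_service=1.0)
half_visited = Queue(visit_ratio=0.5, arrival_rate=1.0, service_rate=2.0,
                     servers=1, scv_arrival=1.0, scv_service=1.0)
total = e2e_mean_response([mm1, half_visited], constant_delay=0.25)
```

The sketch omits link delays between queues and priority classes, which the notation table also lists. For very large server counts the power and factorial terms may overflow floating point, so a recursive evaluation of Erlang's C formula would be preferable there.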

| Parameters | Value |

|---|---|

| Number of production lines | 4 |

| Number of URLLC flows per production line | 56 |

| URLLC service | Motion Control (MC) [70,71] |

| Packet delay budget MC | 1 ms [70,71] |

| Packet length MC | 80 bytes |

| Sustainable rate per MC flow | 1.55 Mbps [70,71] |

| Burstiness per MC flow | 2592 bits |

| eMBB traffic generated from server #3 to each Wi-Fi AP | AP#1: 330 Mbps, AP#2: 330 Mbps, AP#3: 330 Mbps, AP#4: 800 Mbps, AP#5: 800 Mbps |

| eMBB packet size | 1500 bytes |

| UPF service rate per processing unit (CPU core) | 357,140 packets per second (from data included in [33]) |

| SCV of the UPF service time | 0.65 (from experimental measurements in [26]) |

| gNB-CU service rate per processing unit (CPU core) | 601,340 packets per second (from data included in [55]) |

| SCV of the gNB-CU service time | 0.65 (from experimental measurements in [26]) |

| CPU core power (Intel Xeon Platinum 8180) | 25.657 GOPS |

| gNB-DU service rate per processing unit (CPU core) | Substitute , , and in (17) (fittings derived from experimental data in [56]) |

| SCV of the gNB-DU service time | 1 |

| gNB-RU service rate per processing unit | Substitute , and in (18) (fittings derived from experimental data in [56]) |

| SCV of the gNB-RU service time | 1 |

| Processing units allocated to each network component. The number of processing units was chosen so that the utilization of the computing resources of every component stays below 75%. | Configuration 1: UPF: 1 CPU core (Intel Xeon 8081), gNB-CU: 1 CPU core (Intel Xeon 8081), gNB-DU: 24 CPU cores (Intel SandyBridge i7-3930K @ 3.20 GHz), gNB-RU: 3 CPU cores (Intel SandyBridge i7-3930K @ 3.20 GHz). Configuration 2: UPF: 3 CPU cores (Intel Xeon 8081), gNB-CU: 2 CPU cores (Intel Xeon 8081), gNB-DU: 96 CPU cores (Intel SandyBridge i7-3930K @ 3.20 GHz), gNB-RU: 10 CPU cores (Intel SandyBridge i7-3930K @ 3.20 GHz) |

| Visit ratios of the UPF and gNB-CU | 1 |

| Visit ratios of the gNB-DU, gNB-RU and radio interface | 0.5 |

| TSN links capacities | All links have a capacity of 1 Gbps |

| MC traffic-to-priority level assignment at every TSN bridge output port | 1 (1 is the highest priority level and 8 is the lowest) |

| eMBB traffic-to-priority level assignment at every TSN bridge output port | 8 |

| PRB bandwidth | 180 kHz |

| Radio interface time slot duration | 142.8 µs |

| Number of PRBs dedicated for each URLLC slice per gNB | Configuration 1: Slice#1: gNB#1: 166, gNB#2: 166, gNB#3: 0, gNB#4: 0 Slice#2: gNB#1: 166, gNB#2: 166, gNB#3: 0, gNB#4: 0 Slice#3: gNB#1: 0, gNB#2: 0, gNB#3: 166, gNB#4: 166 Slice#4: gNB#1: 0, gNB#2: 0, gNB#3: 166, gNB#4: 166 Configuration 2: Slice#1: gNB#1: 333, gNB#2: 333, gNB#3: 333, gNB#4: 333 |

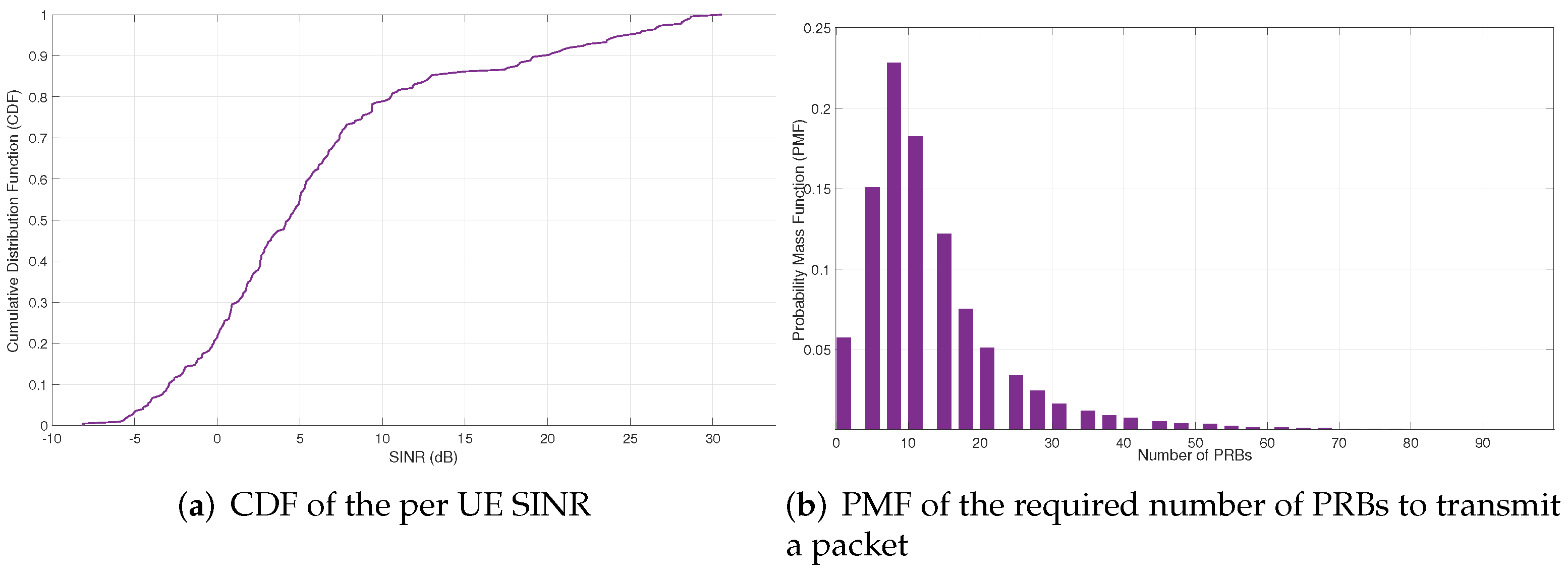

| Mean number of PRBs required to transmit a URLLC packet at the radio interface | 15.8 |

| Average spectral efficiency per user | 2.8173 bps/Hz (MCS index=22) |

| Average SINR per user | 3.5368 dB |

| External arrival process (to the UPF) | Poissonian |
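
As a rough consistency check on the parameters above, the offered Motion Control load and the resulting UPF utilization can be computed directly. This is a sketch under stated assumptions, not the authors' dimensioning procedure: every flow is assumed to send at its sustainable rate, only MC traffic is counted, Configuration 1 is assumed to run one UPF instance per production line, and Configuration 2 is assumed to share a single UPF across all four lines. The function names are hypothetical.

```python
FLOWS_PER_LINE = 56               # URLLC flows per production line
RATE_PER_FLOW_BPS = 1.55e6        # sustainable rate per MC flow
PACKET_BITS = 80 * 8              # MC packet length in bits
PRODUCTION_LINES = 4
UPF_RATE_PER_CORE_PPS = 357_140   # UPF service rate per CPU core


def packet_rate_per_line() -> float:
    """Mean MC packet arrival rate generated by one production line (packets/s)."""
    return FLOWS_PER_LINE * RATE_PER_FLOW_BPS / PACKET_BITS


def utilization(arrival_pps: float, cores: int, rate_per_core_pps: float) -> float:
    """Utilization of a component with the given number of cores."""
    return arrival_pps / (cores * rate_per_core_pps)


per_line_pps = packet_rate_per_line()

# Configuration 1 (assumed): one single-core UPF per production line.
util_cfg1 = utilization(per_line_pps, 1, UPF_RATE_PER_CORE_PPS)

# Configuration 2 (assumed): one three-core UPF shared by all lines.
util_cfg2 = utilization(PRODUCTION_LINES * per_line_pps, 3, UPF_RATE_PER_CORE_PPS)

print(f"per-line MC load: {per_line_pps:.0f} packets/s")
print(f"UPF utilization, configuration 1: {util_cfg1:.3f}")
print(f"UPF utilization, configuration 2: {util_cfg2:.3f}")
```

Under these assumptions both utilizations fall below the 75% design threshold stated in the table.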

| Component | Configuration 1.A | Configuration 1.B | Configuration 2.A | Configuration 2.B |

|---|---|---|---|---|

| TN | PL#1-2: 111.10-121.40 PL#3-4: 2.25 | PL#1-2: 40.16-41.87 PL#3-4: 2.07 | PL#1-2: 40.16-41.87 PL#3-4: 2.07 | PL#1-2: 12.18 PL#3-4: 2.07 |

| UPF | PL#1: 4.22-4.28 PL#2-4: 4.21 | PL#1: 4.41-183.20 PL#2-4: 4.21 | PL#1-4: 3.18-3.23 | PL#1-4: 3.21 |

| CU | PL#1: 2.09-2.10 PL#2-4: 2.09 | PL#1: 2.13-4.36 PL#2-4: 2.09 | PL#1-4: 2.04-2.08 | PL#1-4: 2.06-7.94 |

| DU | PL#1: 13.25 PL#2-4: 13.25 | PL#1-4: 132.50 | PL#1-4: 132.50 | PL#1-4: 132.50 |

| RU | PL#1: 13.13-13.16 PL#2-4: 13.13 | PL#1-4: 13.13 | PL#1-2: 12.88-12.93 PL#3-4: 12.88 | PL#1-4: 12.87 |

| NR-Uu | PL#1: 379.80-3229.00 PL#2-4: 367.60 | PL#1-4: 367.60 | PL#1-2: 174.20-1114.00 PL#3-4: 174.20 | PL#1-4: 172.60 |


© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Chinchilla-Romero, L.; Prados-Garzon, J.; Ameigeiras, P.; Muñoz, P.; Lopez-Soler, J.M. 5G Infrastructure Network Slicing: E2E Mean Delay Model and Effectiveness Assessment to Reduce Downtimes in Industry 4.0. Sensors 2022, 22, 229. https://doi.org/10.3390/s22010229
