Force Myography-Based Human Robot Interactions via Deep Domain Adaptation and Generalization

Abstract

1. Introduction

- Investigating, for the first time, the feasibility of a deep transfer learning technique in repetitive FMG-based pHRI applications using inter-session FMG data;

- Proposing a unified transfer learner for both supervised domain adaptation and domain generalization;

- Leveraging periodical calibration as needed with less data than normally required; and

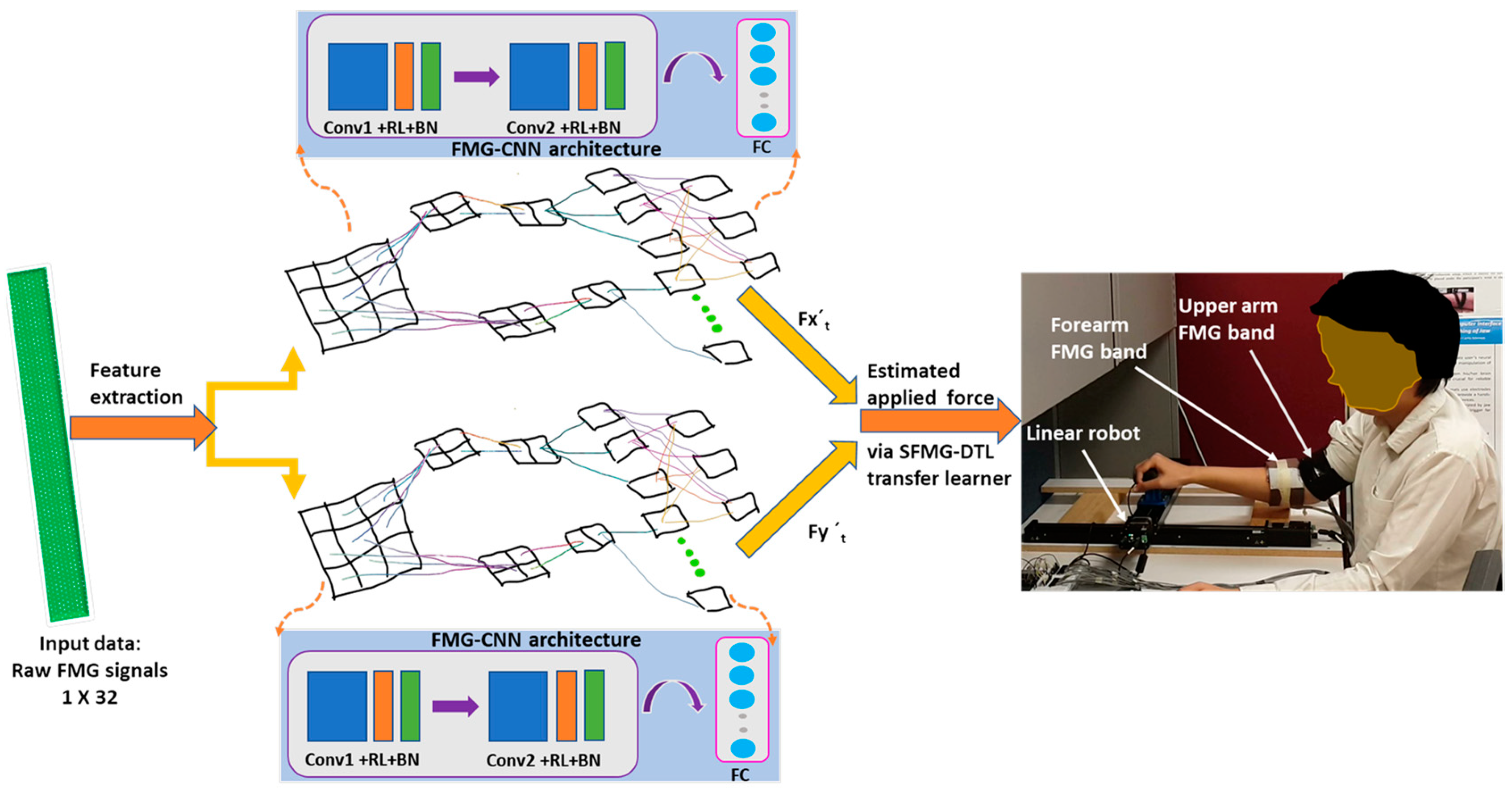

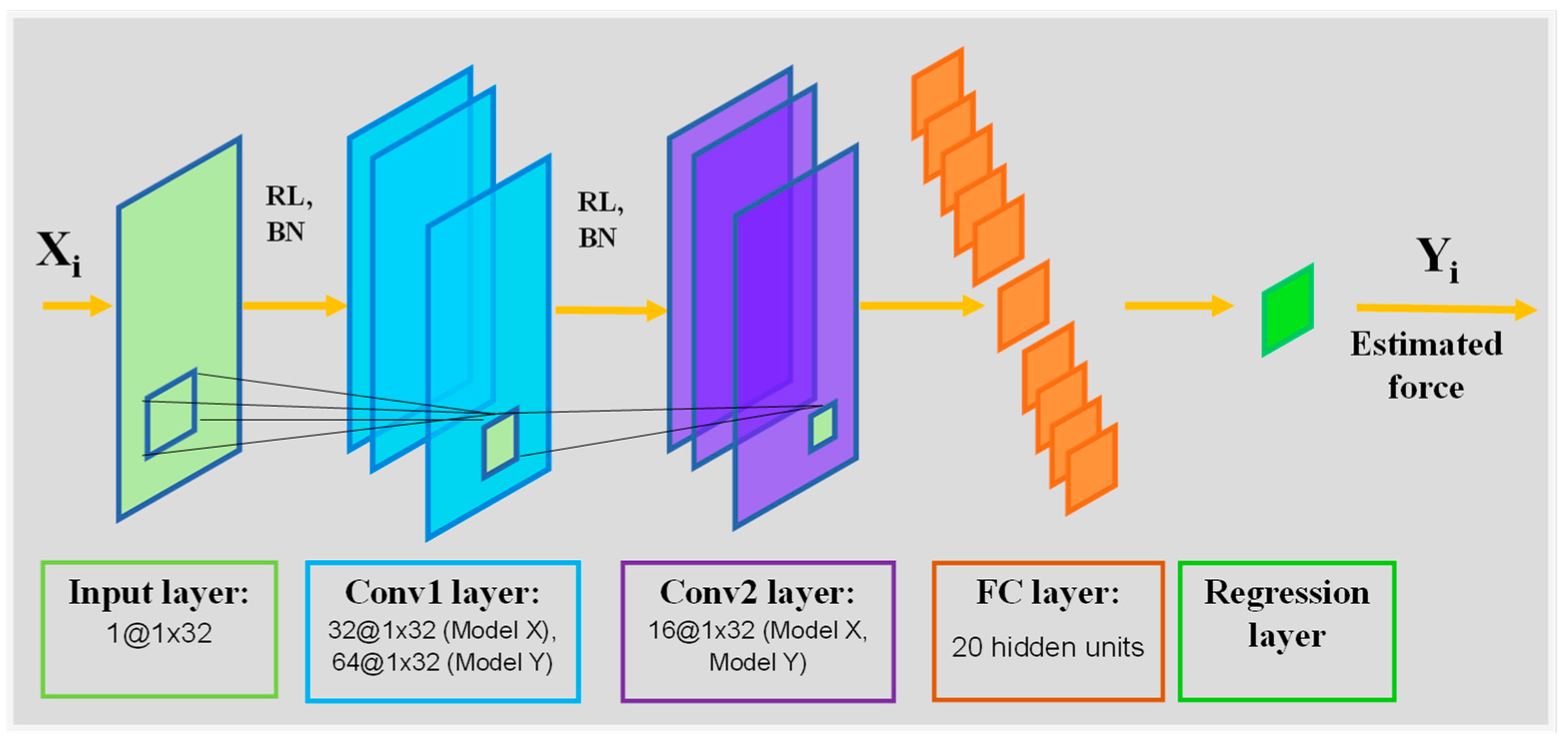

- Proposing a nonlinear FMG-CNN regression architecture for mapping applied force from FMG signals without requiring biomechanical modelling of the human arm.

2. Materials and Methods

2.1. Problem Statement

2.1.1. Source and Target Domain

2.1.2. Applied Interaction Force Estimation

2.2. Experimental Setup

2.3. Proposed FMG-CNN Architecture

2.4. Protocol

2.4.1. Training Phase

Multiple-Source Data Collection

Pretraining Deep Learning Model

2.4.2. Evaluation Phase

Case i: Evaluating Intra-Subject/Inter-Session Target Domain (Dt-SDA, Tt-SDA) via Domain Adaptation (Ds ≠ Dt, Ts ≈ Tt)

Case ii: Evaluating Cross-Subject/Inter-Participant Target Domain (Dt-SDG, Tt-SDG) via Domain Generalization (Ds ≠ Dt, Ts ≠ Tt)
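The conditions in the two case headings can be written compactly. The block below is a sketch that assumes the usual transfer-learning definitions of a domain (a feature space with a marginal distribution) and a task (a label space with a predictive function); those definitions are not spelled out in this outline, so the first line should be read as an assumption rather than as the authors' notation.

$$
\begin{aligned}
\mathcal{D} &= \{\mathcal{X},\, P(X)\}, \qquad \mathcal{T} = \{\mathcal{Y},\, f(\cdot)\} \\
\text{Case i (SDA, inter-session):}\quad & \mathcal{D}_s \neq \mathcal{D}_{t\text{-SDA}}, \qquad \mathcal{T}_s \approx \mathcal{T}_{t\text{-SDA}} \\
\text{Case ii (SDG, inter-participant):}\quad & \mathcal{D}_s \neq \mathcal{D}_{t\text{-SDG}}, \qquad \mathcal{T}_s \neq \mathcal{T}_{t\text{-SDG}}
\end{aligned}
$$

Read this way, case i changes only the data distribution between sessions while the force-estimation task stays approximately the same, whereas case ii changes both the participants and the task.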

2.5. Performance Metrics

2.5.1. Statistical Tools and Tests

2.5.2. ML and DL Algorithms

3. Results

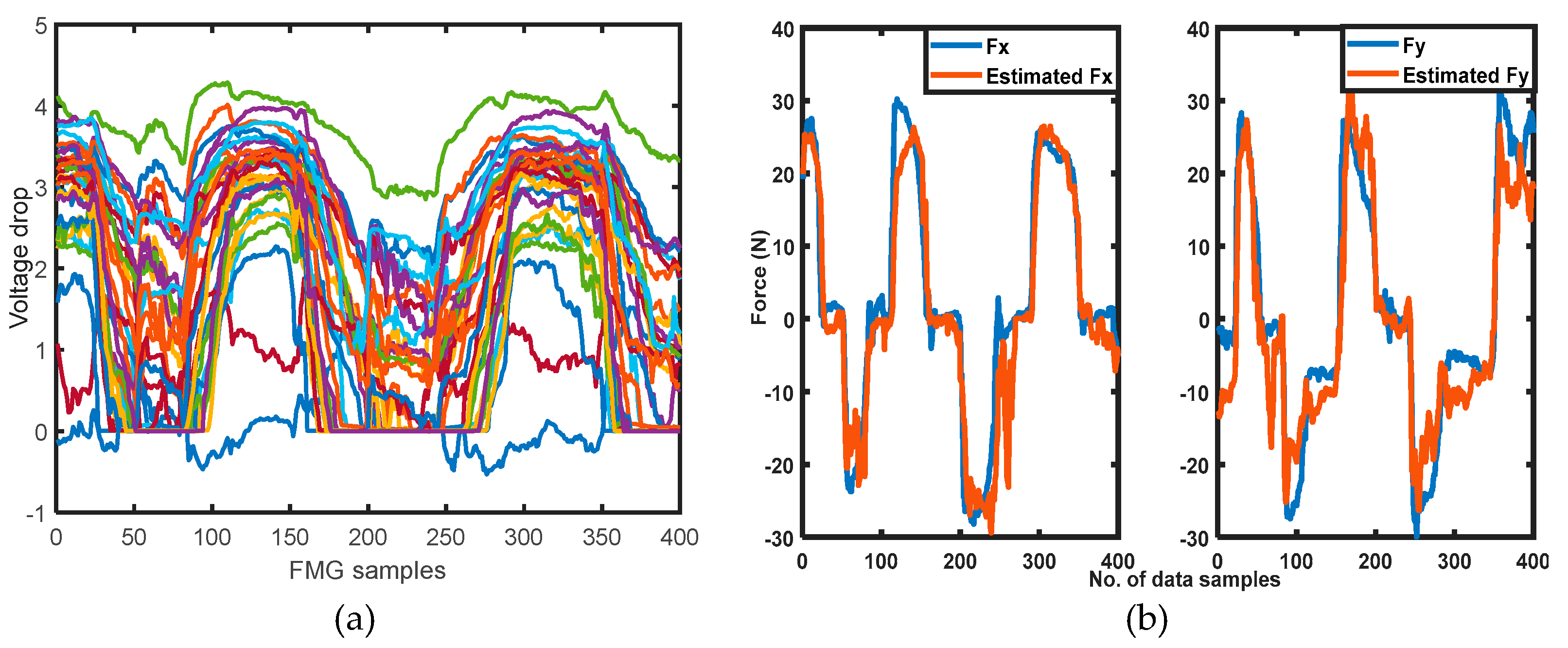

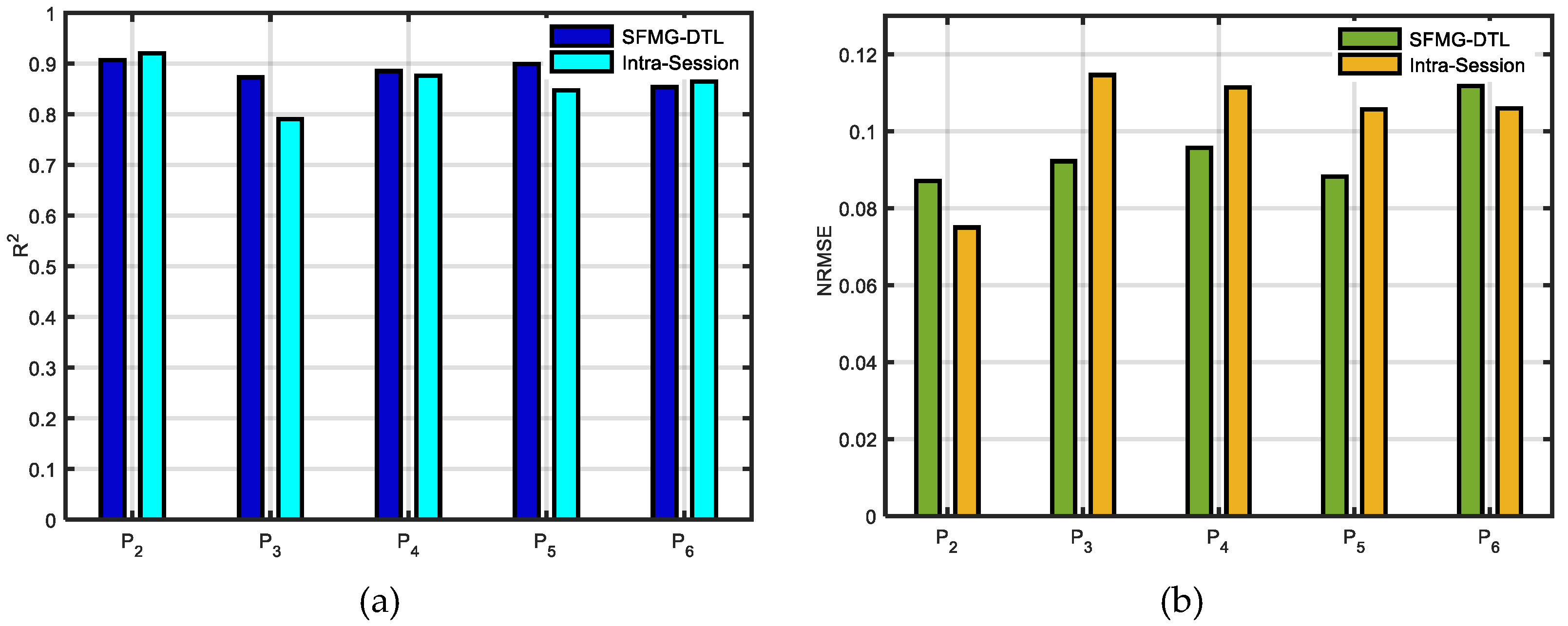

3.1. Supervised Domain Adaptation

3.2. Supervised Domain Generalization

4. Discussions

4.1. Viability of Calibration

4.2. Viability of SDG

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest


| Acronym | Meaning | Acronym | Meaning |
|---|---|---|---|
| SDA | Supervised domain adaptation | SQ-1 | Interaction force in square motion with variable sizes in domain adaptation |
| SDG | Supervised domain generalization | SQ-2 | Interaction force in square motion in domain generalization |
| Ds | Source domain | Dt-SDA, Tt-SDA | Target domain and target task in inter-session SDA |
| Dt | Target domain | Dt-SDG, Tt-SDG | Target domain and target task in inter-participant SDG |
| Ts | Source task | Dsi | Multiple source domains |
| Tt | Target task | Fxt’ | Estimated applied forces in X dimension |
| Cd | Calibration data | Fyt’ | Estimated applied forces in Y dimension |

| Pretraining: Source Domain | Pretraining: Hyperparameters | Evaluation: Target Domain | Evaluation: Hyperparameters | Evaluation: Fine Tuning | Evaluation: Target Test Data |
|---|---|---|---|---|---|
| Ds = {Xs, Ys} {P1}, with 8400 × 32 samples; TSDA: applied force in SQ-1 motion | SGD; epochs: 40; LR: 1E-4 | Case i. Dt-SDA = {Xs, Ys} {P1}, where TSDA: applied force in SQ-1 motion. Case ii. Dt-SDG = {Xs, Ys} {P2, …, P6}, where TSDG: applied force in SQ-2 motion | SGD; epochs: 60; LR: 1E-5 | Cd = {Xc, Yc}, 1200 × 32 samples | Dt = {Xt, Yt}, 400 × 32 samples |
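The two-phase schedule in the table above (pretraining with SGD for 40 epochs at LR 1E-4, then fine-tuning on calibration data for 60 epochs at LR 1E-5) can be illustrated with a minimal, standard-library sketch. Several things here are assumptions and not part of the source: a linear regressor with a single force output stands in for the FMG-CNN, the data are synthetic (the hypothetical `make_domain` helper), the "× 32" in the table is taken to be the feature width of one sample, and the sample counts are the table's 8400 / 1200 / 400 divided by 100 so the example runs quickly.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class PhaseConfig:
    epochs: int
    lr: float


# Epochs and learning rates taken from the table above.
PRETRAIN = PhaseConfig(epochs=40, lr=1e-4)
FINE_TUNE = PhaseConfig(epochs=60, lr=1e-5)

# Assumption: the "x 32" in the table is the feature width of one sample.
N_FEATURES = 32
# Assumption: table sample counts (8400 / 1200 / 400) divided by 100 for speed.
N_SOURCE, N_CALIB, N_TEST = 84, 12, 4


def predict(w, x):
    """Linear stand-in for the network: weighted sum of the features."""
    return sum(wi * xi for wi, xi in zip(w, x))


def mse(w, data):
    """Mean squared error of the linear model over (x, y) pairs."""
    return sum((predict(w, x) - y) ** 2 for x, y in data) / len(data)


def sgd(w, data, cfg):
    """Per-sample SGD on squared error; returns an updated copy of w."""
    w = list(w)
    for _ in range(cfg.epochs):
        for x, y in data:
            err = predict(w, x) - y
            for i, xi in enumerate(x):
                w[i] -= cfg.lr * 2.0 * err * xi
    return w


def make_domain(rng, n, gain):
    """Hypothetical synthetic domain: force = gain * mean feature value."""
    data = []
    for _ in range(n):
        x = [rng.random() for _ in range(N_FEATURES)]
        data.append((x, gain * sum(x) / N_FEATURES))
    return data


def run_demo(seed=0):
    rng = random.Random(seed)
    source = make_domain(rng, N_SOURCE, gain=1.0)  # stands in for Ds
    calib = make_domain(rng, N_CALIB, gain=1.3)    # stands in for Cd
    test = make_domain(rng, N_TEST, gain=1.3)      # stands in for target test data

    w_init = [0.0] * N_FEATURES
    w_pre = sgd(w_init, source, PRETRAIN)   # pretraining phase
    w_ft = sgd(w_pre, calib, FINE_TUNE)     # fine-tuning on calibration data
    return {
        "source_mse_init": mse(w_init, source),
        "source_mse_pretrained": mse(w_pre, source),
        "test_mse_pretrained": mse(w_pre, test),
        "test_mse_fine_tuned": mse(w_ft, test),
    }


if __name__ == "__main__":
    for name, value in run_demo().items():
        print(f"{name}: {value:.6f}")
```

The changed `gain` between the source and target sets is only a toy proxy for the session or participant shift. The point of the sketch is the ordering of the phases and the smaller learning rate during fine-tuning, which adjusts the pretrained weights with a small calibration set instead of retraining from scratch.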


© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Zakia, U.; Menon, C. Force Myography-Based Human Robot Interactions via Deep Domain Adaptation and Generalization. Sensors 2022, 22, 211. https://doi.org/10.3390/s22010211