A Systematic Review of Urban Navigation Systems for Visually Impaired People

Abstract

1. Introduction

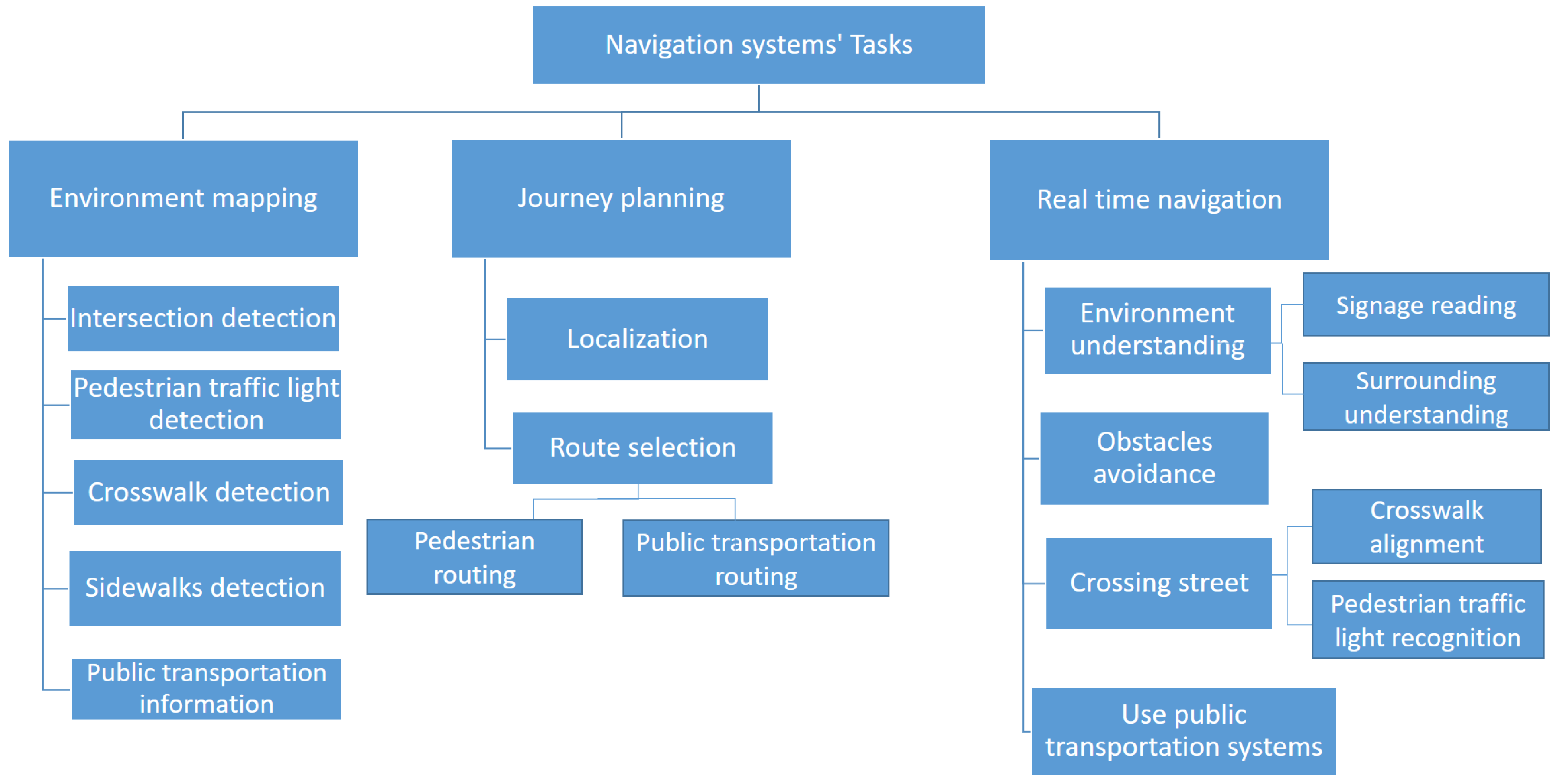

- We present a hierarchical taxonomy of the phases of pedestrian urban navigation, and the task breakdown of each phase, as they relate to safe navigation for blind and visually impaired people (BVIP).

- For each task, we provide a detailed review of research work and developments, limitations of approaches taken, and potential future directions.

- Research on navigation systems for BVIP overlaps with other research fields, including smart cities, automated journey planning, autonomous vehicles, and robot navigation. We highlight these overlaps throughout to provide a useful and far-reaching review of this domain and its relationship to other areas.

- We highlight and clarify the range of terminology used in the domain.

- We review the range of available applications and purpose-built/modified devices to support BVIP.

2. Related Work

2.1. Specific Sub-Domain Surveys

2.2. Terminology

3. A Taxonomy of Outdoor Navigation Systems for BVIP

- Intersection detection: detects the location of road intersections. An intersection is defined as a point where two or more roads meet, and represents a critical safety point of interest to BVIP.

- Pedestrian traffic light detection: detects the location and orientation of pedestrian traffic lights. These are traffic lights that have stop/go signals designed for pedestrians, as opposed to solely vehicle drivers.

- Crosswalk detection: detects an optimal marked location where visually impaired users can cross a road, such as a zebra crosswalk.

- Sidewalk detection: detects the existence and location of the pedestrian sidewalk (pavement) where BVIP can walk safely.

- Public transportation information: provides the locations of public transportation stops and stations, together with information about how accessible each one is.

- Localization: determines the starting point of the user's journey.

- Route selection: finds the best route to reach a specified destination.

- Environment understanding: helps BVIP to understand their surroundings, including reading signage and understanding the physical surroundings.

- Avoiding obstacles: detects the obstacles on a road and helps BVIP to avoid them.

- Crossing street: helps BVIP cross a road at a junction. This task helps the individual align with the location of a crosswalk, and recognizes the status of the pedestrian traffic light to determine when it is safe to cross.

- Using public transportation systems: assists BVIP in using public transportation systems such as buses or trains.
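As an illustration only, the tasks listed above can be written down as a small data structure. The sketch below assumes that the tasks group into three phases following the section structure of this review (Environment Mapping, Journey Planning, Real-Time Navigation); the names `Phase`, `TAXONOMY`, and `phase_of` are hypothetical and are not taken from any reviewed system.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Phase:
    """One phase of the taxonomy and the tasks grouped under it."""
    name: str
    tasks: Tuple[str, ...]


# Assumed grouping: task names come from the list above, phase names
# from the section headings of this review.
TAXONOMY: Tuple[Phase, ...] = (
    Phase("Environment mapping", (
        "Intersection detection",
        "Pedestrian traffic light detection",
        "Crosswalk detection",
        "Sidewalk detection",
        "Public transportation information",
    )),
    Phase("Journey planning", (
        "Localization",
        "Route selection",
    )),
    Phase("Real-time navigation", (
        "Environment understanding",
        "Avoiding obstacles",
        "Crossing street",
        "Using public transportation systems",
    )),
)


def phase_of(task: str) -> str:
    """Return the name of the phase that contains the given task."""
    for phase in TAXONOMY:
        if task in phase.tasks:
            return phase.name
    raise KeyError(f"unknown task: {task}")
```

A lookup such as `phase_of("Route selection")` returns the phase name, which could be used to tag a reviewed system with the phases it supports.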

4. Overview of Navigation Systems by Device

- Sensors-based: this category collects data through various sensors such as ultrasonic sensors, liquid sensors, and infrared (IR) sensors.

- Electromagnetic/radar-based: radar is used to receive information about the environment, particularly objects in the environment.

- Camera-based: cameras capture a scene to produce more detailed information about the environment, such as an object’s colour and shape.

- Smartphone-based: in this case, the BVIP has their own device with a downloaded application. Some applications utilise just the phone camera, with others using the phone camera and other phone sensors such as GPS, compass, etc.

- Combination: two data-gathering methods are used together to combine the benefits of both, such as sensor and smartphone, sensor and camera, or camera and smartphone.
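The device categories above can be read as labels derived from the data-gathering methods a system uses. The following is a minimal sketch under that assumption; the `Modality` enum, the `device_category` function, and the exact label strings are hypothetical and serve only to illustrate the categorisation.

```python
from enum import Enum
from typing import Iterable


class Modality(Enum):
    """Data-gathering methods named in the categories above."""
    SENSOR = "Sensor"
    RADAR = "Electromagnetic/radar"
    CAMERA = "Camera"
    SMARTPHONE = "Smartphone"


def device_category(modalities: Iterable[Modality]) -> str:
    """Label a system by the one or two modalities it relies on."""
    unique = set(modalities)
    if not 1 <= len(unique) <= 2:
        raise ValueError("expected one or two data-gathering methods")
    # Sort by declaration order so the label is deterministic.
    order = list(Modality)
    names = [m.value for m in sorted(unique, key=order.index)]
    if len(names) == 1:
        return f"{names[0]}-based"
    return f"Combination: {names[0].lower()} and {names[1].lower()}"
```

For example, a system that uses ultrasonic sensors together with a phone application would be labelled by `device_category([Modality.SENSOR, Modality.SMARTPHONE])` as a combination of sensor and smartphone.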

| Devices | Journey Planning: Localization | Journey Planning: Route Selection | Real-Time Navigation: Signage Reading | Real-Time Navigation: Surrounding Understanding | Real-Time Navigation: Obstacle Avoidance | Real-Time Navigation: Pedestrian Traffic Lights Recognition | Real-Time Navigation: Crosswalk Alignment | Real-Time Navigation: Using Public Transportation |
|---|---|---|---|---|---|---|---|---|
| Sensors-based | [22,23,24] | | | | [22,23,25,26,27,28,29,30,31] | | | [32] |
| Electromagnetic/radar-based | | | | | [33,34,35] | | | |
| Camera-based | [36,37,38,39] | | [40,41] | [42,43] | [39,44,45,46,47,48,49,50] | [51,52,53] | [51] | |
| Smartphone-based | [54,55,56,57,58,59] | [54,55,57] | | [57,58] | [57,60,61] | [57,62,63,64] | [62,65] | [66] |
| Sensor and camera based | | | | | [67,68,69,70,71] | | | |
| Electromagnetic/radar-based and camera based | | | | | [72] | | | |
| Sensor and smartphone based | [73,74,75] | [74] | | | [73,75,76] | [77] | [77] | [78,79] |
| Camera and smartphone based | [80] | [80] | | | [80] | | | |

5. Environment Mapping

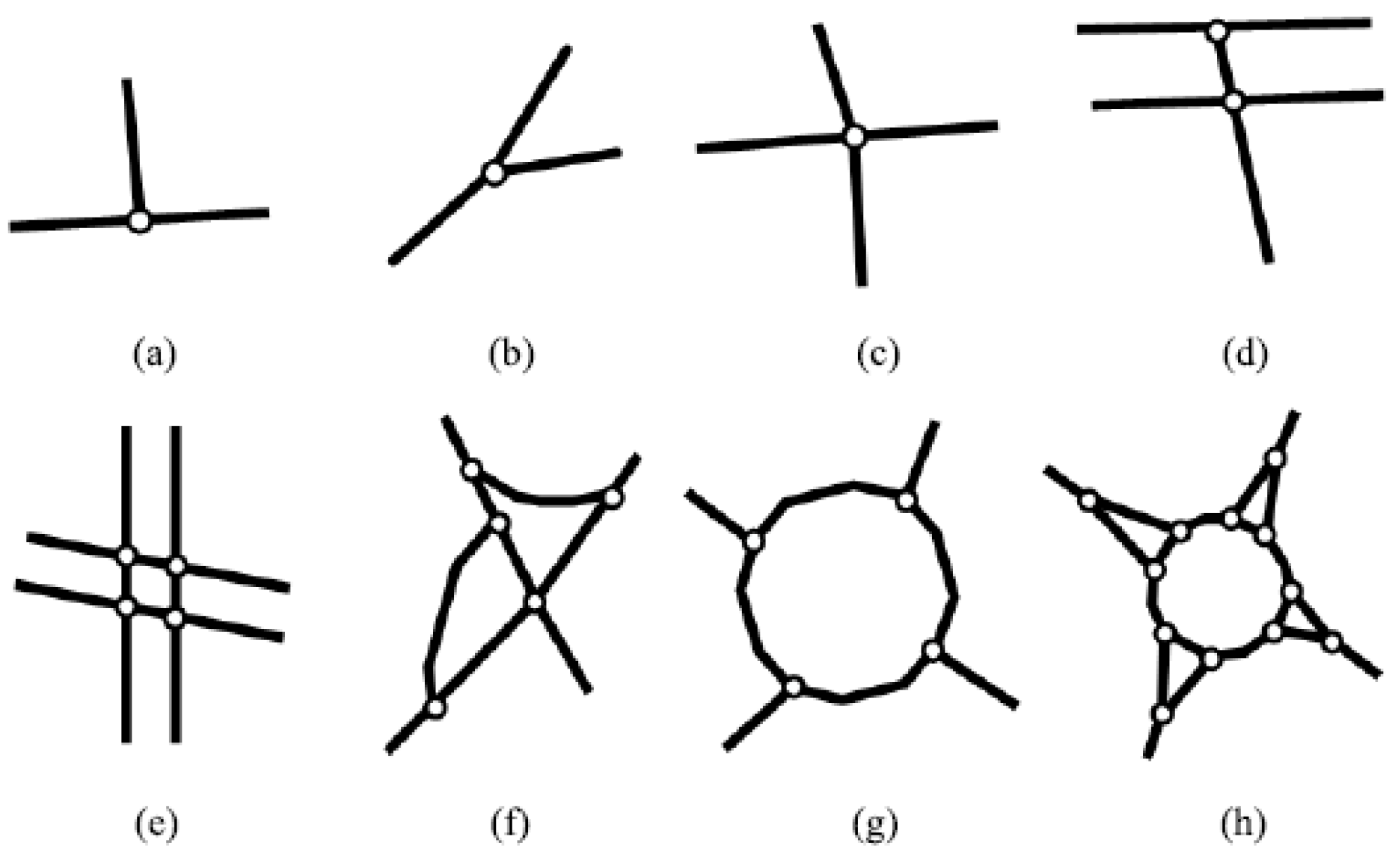

5.1. Intersection Detection

5.2. Pedestrian Traffic Lights Detection

5.3. Crosswalk Detection

- Crosswalks differ in shape and style across countries.

- Crosswalk paint may be partially or completely worn away, especially in countries with poor road maintenance practices.

- Vehicles, pedestrians, and other objects may occlude the crosswalk.

- Strong shadows may darken the appearance of the crosswalk.

- Changes in weather and in the time of day at which an image is captured affect the illumination of the image.

5.4. Sidewalk Detection

5.5. Public Transportation Information

5.6. Discussion of Environment Mapping Research

5.7. Future Work for Environment Mapping

6. Journey Planning

6.1. Localization

6.2. Route Selection

6.3. Discussion of Journey Planning Research

6.4. Future Work for Journey Planning

7. Real-Time Navigation

7.1. Environment Understanding

7.1.1. Signage Reading

7.1.2. Surroundings Understanding

7.2. Obstacle Avoidance

7.3. Crossing the Street

7.3.1. Crosswalk Alignment

7.3.2. Pedestrian Traffic Light Recognition

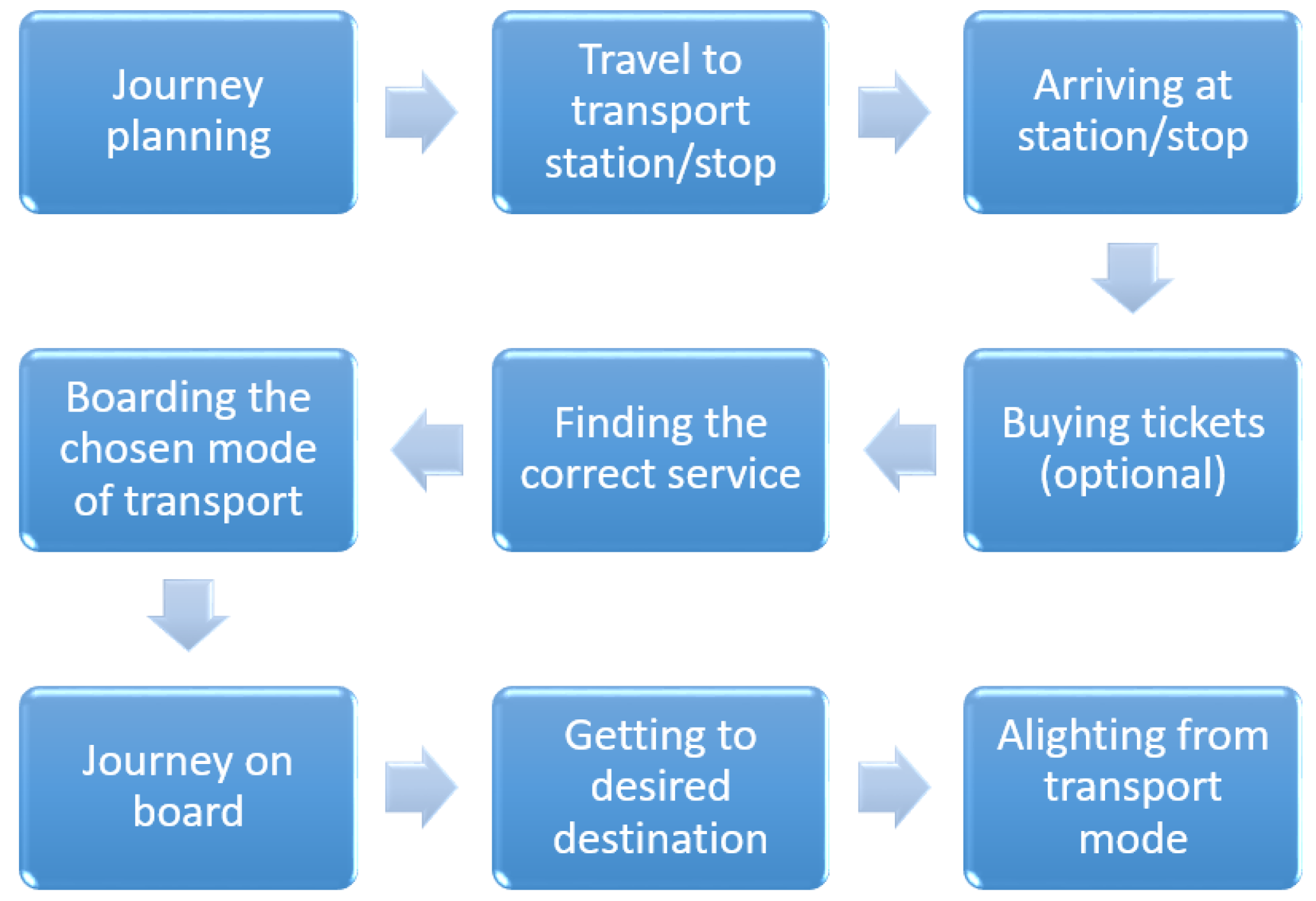

7.4. Using Public Transportation Systems

7.5. Discussion of Real-Time Navigation

7.6. Future Work for the Real-Time Navigation Phase

8. Feedback and Wearability of Navigation Systems Devices

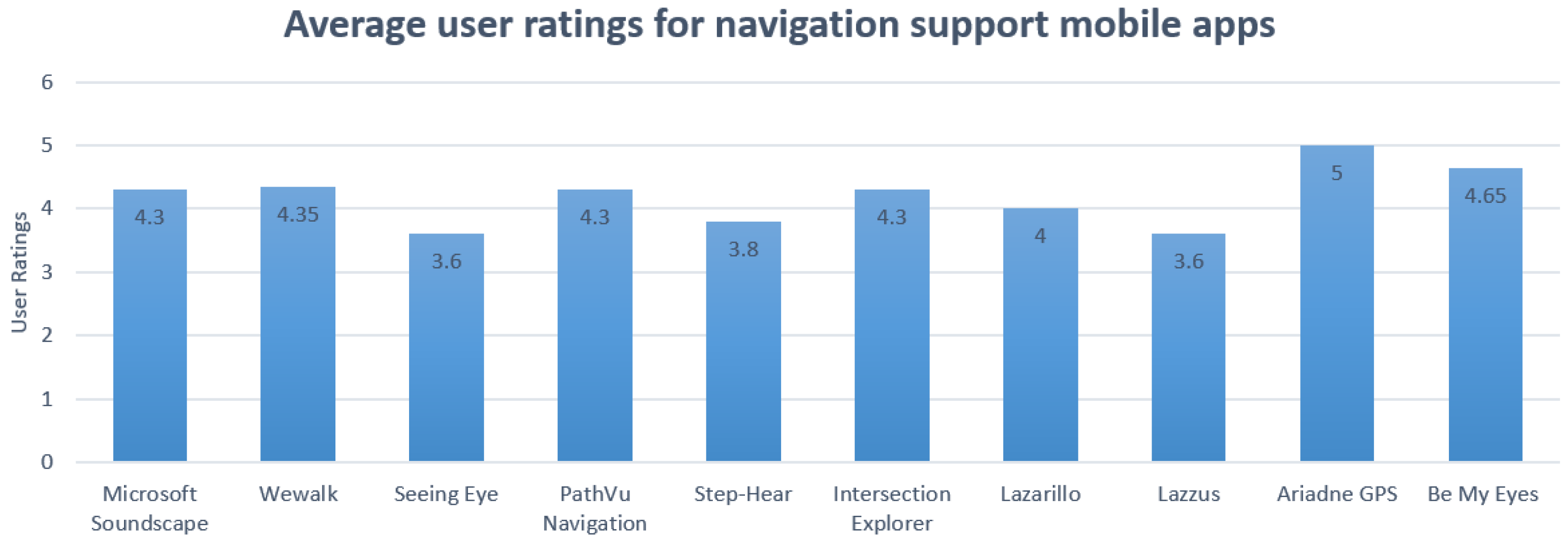

9. Applications and Devices

9.1. Handheld

9.2. Wearable

9.3. Discussion

10. Main Findings

10.1. Discussion

10.2. General Comparison

11. Conclusions and Future Work

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- WHO. Visual Impairment and Blindness. Available online: https://www.who.int/en/news-room/fact-sheets/detail/blindness-and-visual-impairment (accessed on 25 November 2020).

- Mocanu, B.C.; Tapu, R.; Zaharia, T. DEEP-SEE FACE: A Mobile Face Recognition System Dedicated to Visually Impaired People. IEEE Access 2018, 6, 51975–51985. [Google Scholar] [CrossRef]

- Dunai Dunai, L.; Chillarón Pérez, M.; Peris-Fajarnés, G.; Lengua Lengua, I. Euro banknote recognition system for blind people. Sensors 2017, 17, 184. [Google Scholar] [CrossRef] [PubMed]

- Park, C.; Cho, S.W.; Baek, N.R.; Choi, J.; Park, K.R. Deep Feature-Based Three-Stage Detection of Banknotes and Coins for Assisting Visually Impaired People. IEEE Access 2020, 8, 184598–184613. [Google Scholar] [CrossRef]

- Tateno, K.; Takagi, N.; Sawai, K.; Masuta, H.; Motoyoshi, T. Method for Generating Captions for Clothing Images to Support Visually Impaired People. In Proceedings of the 2020 Joint 11th International Conference on Soft Computing and Intelligent Systems and 21st International Symposium on Advanced Intelligent Systems (SCIS-ISIS), Hachijo Island, Japan, 5–8 December 2020; pp. 1–5. [Google Scholar]

- Aladren, A.; López-Nicolás, G.; Puig, L.; Guerrero, J.J. Navigation Assistance for the Visually Impaired Using RGB-D Sensor with Range Expansion. IEEE Syst. J. 2016, 10, 922–932. [Google Scholar] [CrossRef]

- Alwi, S.R.A.W.; Ahmad, M.N. Survey on outdoor navigation system needs for blind people. In Proceedings of the 2013 IEEE Student Conference on Research and Developement, Putrajaya, Malaysia, 16–17 December 2013; pp. 144–148. [Google Scholar]

- Islam, M.M.; Sadi, M.S.; Zamli, K.Z.; Ahmed, M.M. Developing walking assistants for visually impaired people: A review. IEEE Sens. J. 2019, 19, 2814–2828. [Google Scholar] [CrossRef]

- Real, S.; Araujo, A. Navigation systems for the blind and visually impaired: Past work, challenges, and open problems. Sensors 2019, 19, 3404. [Google Scholar] [CrossRef]

- Fernandes, H.; Costa, P.; Filipe, V.; Paredes, H.; Barroso, J. A review of assistive spatial orientation and navigation technologies for the visually impaired. Univers. Access Inf. Soc. 2019, 18, 155–168. [Google Scholar] [CrossRef]

- Paiva, S.; Gupta, N. Technologies and Systems to Improve Mobility of Visually Impaired People: A State of the Art. In Technological Trends in Improved Mobility of the Visually Impaired; Paiva, S., Ed.; Springer: Berlin/Heidelberg, Germany, 2020; pp. 105–123. [Google Scholar]

- Mohamed, A.M.A.; Hussein, M.A. Survey on obstacle detection and tracking system for the visual impaired. Int. J. Recent Trends Eng. Res. 2016, 2, 230–234. [Google Scholar]

- Lakde, C.K.; Prasad, P.S. Review paper on navigation system for visually impaired people. Int. J. Adv. Res. Comput. Commun. Eng. 2015, 4, 166–168. [Google Scholar] [CrossRef]

- Duarte, K.; Cecílio, J.; Furtado, P. Overview of assistive technologies for the blind: Navigation and shopping. In Proceedings of the International Conference on Control Automation Robotics & Vision (ICARCV), Singapore, 10–12 December 2014; pp. 1929–1934. [Google Scholar]

- Manjari, K.; Verma, M.; Singal, G. A Survey on Assistive Technology for Visually Impaired. Internet Things 2020, 11, 100188. [Google Scholar] [CrossRef]

- Tapu, R.; Mocanu, B.; Tapu, E. A survey on wearable devices used to assist the visual impaired user navigation in outdoor environments. In Proceedings of the International Symposium on Electronics and Telecommunications (ISETC), Timisoara, Romania, 14–15 November 2014; pp. 1–4. [Google Scholar]

- Fei, Z.; Yang, E.; Hu, H.; Zhou, H. Review of machine vision-based electronic travel aids. In Proceedings of the 23rd International Conference on Automation and Computing (ICAC), Huddersfield, UK, 7–8 September 2017; pp. 1–7. [Google Scholar]

- Budrionis, A.; Plikynas, D.; Daniušis, P.; Indrulionis, A. Smartphone-based computer vision travelling aids for blind and visually impaired individuals: A systematic review. Assist. Technol. 2020, 1–17. [Google Scholar] [CrossRef]

- Kuriakose, B.; Shrestha, R.; Sandnes, F.E. Smartphone Navigation Support for Blind and Visually Impaired People-A Comprehensive Analysis of Potentials and Opportunities. In International Conference on Human-Computer Interaction; Springer: Berlin/Heidelberg, Germany, 2020; pp. 568–583. [Google Scholar]

- Petrie, H.; Johnson, V.; Strothotte, T.; Raab, A.; Fritz, S.; Michel, R. MoBIC: Designing a travel aid for blind and elderly people. J. Navig. 1996, 49, 45–52. [Google Scholar] [CrossRef]

- Dakopoulos, D.; Bourbakis, N.G. Wearable obstacle avoidance electronic travel aids for blind: A survey. IEEE Trans. Syst. Man Cybern. Part C Appl. Rev. 2009, 40, 25–35. [Google Scholar] [CrossRef]

- Kaushalya, V.; Premarathne, K.; Shadir, H.; Krithika, P.; Fernando, S. ‘AKSHI’: Automated help aid for visually impaired people using obstacle detection and GPS technology. Int. J. Sci. Res. Publ. 2016, 6, 110. [Google Scholar]

- Meshram, V.V.; Patil, K.; Meshram, V.A.; Shu, F.C. An astute assistive device for mobility and object recognition for visually impaired people. IEEE Trans. Hum. Mach. Syst. 2019, 49, 449–460. [Google Scholar] [CrossRef]

- Alghamdi, S.; van Schyndel, R.; Khalil, I. Accurate positioning using long range active RFID technology to assist visually impaired people. J. Netw. Comput. Appl. 2014, 41, 135–147. [Google Scholar] [CrossRef]

- Jeong, G.Y.; Yu, K.H. Multi-section sensing and vibrotactile perception for walking guide of visually impaired person. Sensors 2016, 16, 1070. [Google Scholar] [CrossRef] [PubMed]

- Chun, A.C.B.; Al Mahmud, A.; Theng, L.B.; Yen, A.C.W. Wearable Ground Plane Hazards Detection and Recognition System for the Visually Impaired. In Proceedings of the 2019 International Conference on E-Society, E-Education and E-Technology, Taipei, Taiwan, 15–17 August 2019; pp. 84–89. [Google Scholar]

- Rahman, M.A.; Sadi, M.S.; Islam, M.M.; Saha, P. Design and Development of Navigation Guide for Visually Impaired People. In Proceedings of the IEEE International Conference on Biomedical Engineering, Computer and Information Technology for Health (BECITHCON), Dhaka, Bangladesh, 28–30 November 2019; pp. 89–92. [Google Scholar]

- Chang, W.J.; Chen, L.B.; Chen, M.C.; Su, J.P.; Sie, C.Y.; Yang, C.H. Design and Implementation of an Intelligent Assistive System for Visually Impaired People for Aerial Obstacle Avoidance and Fall Detection. IEEE Sen. J. 2020, 20, 10199–10210. [Google Scholar] [CrossRef]

- Kwiatkowski, P.; Jaeschke, T.; Starke, D.; Piotrowsky, L.; Deis, H.; Pohl, N. A concept study for a radar-based navigation device with sector scan antenna for visually impaired people. In Proceedings of the 2017 First IEEE MTT-S International Microwave Bio Conference (IMBIOC), Gothenburg, Sweden, 15–17 May 2017; pp. 1–4. [Google Scholar]

- Sohl-Dickstein, J.; Teng, S.; Gaub, B.M.; Rodgers, C.C.; Li, C.; DeWeese, M.R.; Harper, N.S. A device for human ultrasonic echolocation. IEEE Trans. Biomed. Eng. 2015, 62, 1526–1534. [Google Scholar] [CrossRef] [PubMed]

- Patil, K.; Jawadwala, Q.; Shu, F.C. Design and construction of electronic aid for visually impaired people. IEEE Trans. Hum. Mach. Syst. 2018, 48, 172–182. [Google Scholar] [CrossRef]

- Sáez, Y.; Muñoz, J.; Canto, F.; García, A.; Montes, H. Assisting Visually Impaired People in the Public Transport System through RF-Communication and Embedded Systems. Sensors 2019, 19, 1282. [Google Scholar] [CrossRef]

- Cardillo, E.; Di Mattia, V.; Manfredi, G.; Russo, P.; De Leo, A.; Caddemi, A.; Cerri, G. An electromagnetic sensor prototype to assist visually impaired and blind people in autonomous walking. IEEE Sens. J. 2018, 18, 2568–2576. [Google Scholar] [CrossRef]

- Pisa, S.; Pittella, E.; Piuzzi, E. Serial patch array antenna for an FMCW radar housed in a white cane. Int. J. Antennas Propag. 2016, 2016. [Google Scholar] [CrossRef]

- Kiuru, T.; Metso, M.; Utriainen, M.; Metsävainio, K.; Jauhonen, H.M.; Rajala, R.; Savenius, R.; Ström, M.; Jylhä, T.N.; Juntunen, R.; et al. Assistive device for orientation and mobility of the visually impaired based on millimeter wave radar technology—Clinical investigation results. Cogent Eng. 2018, 5, 1450322. [Google Scholar] [CrossRef]

- Cheng, R.; Hu, W.; Chen, H.; Fang, Y.; Wang, K.; Xu, Z.; Bai, J. Hierarchical visual localization for visually impaired people using multimodal images. Expert Syst. Appl. 2021, 165, 113743. [Google Scholar] [CrossRef]

- Lin, S.; Cheng, R.; Wang, K.; Yang, K. Visual localizer: Outdoor localization based on convnet descriptor and global optimization for visually impaired pedestrians. Sensors 2018, 18, 2476. [Google Scholar] [CrossRef]

- Fang, Y.; Yang, K.; Cheng, R.; Sun, L.; Wang, K. A Panoramic Localizer Based on Coarse-to-Fine Descriptors for Navigation Assistance. Sensors 2020, 20, 4177. [Google Scholar] [CrossRef]

- Duh, P.J.; Sung, Y.C.; Chiang, L.Y.F.; Chang, Y.J.; Chen, K.W. V-Eye: A Vision-based Navigation System for the Visually Impaired. IEEE Trans. Multimed. 2020. [Google Scholar] [CrossRef]

- Hairuman, I.F.B.; Foong, O.M. OCR signage recognition with skew & slant correction for visually impaired people. In Proceedings of the International Conference on Hybrid Intelligent Systems (HIS), Malacca, Malaysia, 5–8 December 2011; pp. 306–310. [Google Scholar]

- Devi, P.; Saranya, B.; Abinayaa, B.; Kiruthikamani, G.; Geethapriya, N. Wearable Aid for Assisting the Blind. Methods 2016, 3. [Google Scholar] [CrossRef]

- Bazi, Y.; Alhichri, H.; Alajlan, N.; Melgani, F. Scene Description for Visually Impaired People with Multi-Label Convolutional SVM Networks. Appl. Sci. 2019, 9, 5062. [Google Scholar] [CrossRef]

- Mishra, A.A.; Madhurima, C.; Gautham, S.M.; James, J.; Annapurna, D. Environment Descriptor for the Visually Impaired. In Proceedings of the International Conference on Advances in Computing, Communications and Informatics (ICACCI), Bangalore, India, 19–22 September 2018; pp. 1720–1724. [Google Scholar]

- Lin, Y.; Wang, K.; Yi, W.; Lian, S. Deep learning based wearable assistive system for visually impaired people. In Proceedings of the IEEE International Conference on Computer Vision Workshops, Seoul, Korea, 27 October–2 November 2019. [Google Scholar]

- Younis, O.; Al-Nuaimy, W.; Rowe, F.; Alomari, M.H. A smart context-aware hazard attention system to help people with peripheral vision loss. Sensors 2019, 19, 1630. [Google Scholar] [CrossRef]

- Elmannai, W.; Elleithy, K.M. A novel obstacle avoidance system for guiding the visually impaired through the use of fuzzy control logic. In Proceedings of the IEEE Annual Consumer Communications & Networking Conference (CCNC), Las Vegas, NV, USA, 12–15 January 2018; pp. 1–9. [Google Scholar]

- Yang, K.; Wang, K.; Bergasa, L.M.; Romera, E.; Hu, W.; Sun, D.; Sun, J.; Cheng, R.; Chen, T.; López, E. Unifying terrain awareness for the visually impaired through real-time semantic segmentation. Sensors 2018, 18, 1506. [Google Scholar] [CrossRef]

- Kang, M.C.; Chae, S.H.; Sun, J.Y.; Lee, S.H.; Ko, S.J. An enhanced obstacle avoidance method for the visually impaired using deformable grid. IEEE Trans. Consum. Electron. 2017, 63, 169–177. [Google Scholar] [CrossRef]

- Kang, M.C.; Chae, S.H.; Sun, J.Y.; Yoo, J.W.; Ko, S.J. A novel obstacle detection method based on deformable grid for the visually impaired. IEEE Trans. Consum. Electron. 2015, 61, 376–383. [Google Scholar] [CrossRef]

- Poggi, M.; Mattoccia, S. A wearable mobility aid for the visually impaired based on embedded 3d vision and deep learning. In Proceedings of the IEEE Symposium on Computers and Communication (ISCC), Messina, Italy, 27–30 June 2016; pp. 208–213. [Google Scholar]

- Cheng, R.; Wang, K.; Lin, S. Intersection Navigation for People with Visual Impairment. In International Conference on Computers Helping People with Special Needs; Springer: Berlin/Heidelberg, Germany, 2018; pp. 78–85. [Google Scholar]

- Cheng, R.; Wang, K.; Yang, K.; Long, N.; Bai, J.; Liu, D. Real-time pedestrian crossing lights detection algorithm for the visually impaired. Multimed. Tools Appl. 2018, 77, 20651–20671. [Google Scholar] [CrossRef]

- Li, X.; Cui, H.; Rizzo, J.R.; Wong, E.; Fang, Y. Cross-Safe: A computer vision-based approach to make all intersection-related pedestrian signals accessible for the visually impaired. In Science and Information Conference; Springer: Berlin/Heidelberg, Germany, 2019; pp. 132–146. [Google Scholar]

- Chen, Q.; Wu, L.; Chen, Z.; Lin, P.; Cheng, S.; Wu, Z. Smartphone Based Outdoor Navigation and Obstacle Avoidance System for the Visually Impaired. In International Conference on Multi-disciplinary Trends in Artificial Intelligence; Springer: Berlin/Heidelberg, Germany, 2019; pp. 26–37. [Google Scholar]

- Velazquez, R.; Pissaloux, E.; Rodrigo, P.; Carrasco, M.; Giannoccaro, N.I.; Lay-Ekuakille, A. An outdoor navigation system for blind pedestrians using GPS and tactile-foot feedback. Appl. Sci. 2018, 8, 578. [Google Scholar] [CrossRef]

- Spiers, A.J.; Dollar, A.M. Outdoor pedestrian navigation assistance with a shape-changing haptic interface and comparison with a vibrotactile device. In Proceedings of the 2016 IEEE Haptics Symposium (HAPTICS), Philadelphia, PA, USA, 8–11 April 2016; pp. 34–40. [Google Scholar]

- Bai, J.; Liu, D.; Su, G.; Fu, Z. A cloud and vision-based navigation system used for blind people. In Proceedings of the 2017 International Conference on Artificial Intelligence, Automation and Control Technologies, Wuhan, China, 7–9 April 2017; pp. 1–6. [Google Scholar]

- Cheng, R.; Wang, K.; Bai, J.; Xu, Z. Unifying Visual Localization and Scene Recognition for People With Visual Impairment. IEEE Access 2020, 8, 64284–64296. [Google Scholar] [CrossRef]

- Gintner, V.; Balata, J.; Boksansky, J.; Mikovec, Z. Improving reverse geocoding: Localization of blind pedestrians using conversational ui. In Proceedings of the 2017 8th IEEE International Conference on Cognitive Infocommunications (CogInfoCom), Debrecen, Hungary, 11–14 September 2017; pp. 000145–000150. [Google Scholar]

- Shadi, S.; Hadi, S.; Nazari, M.A.; Hardt, W. Outdoor navigation for visually impaired based on deep learning. Proc. CEUR Workshop Proc. 2019, 2514, 97–406. [Google Scholar]

- Lin, B.S.; Lee, C.C.; Chiang, P.Y. Simple smartphone-based guiding system for visually impaired people. Sensors 2017, 17, 1371. [Google Scholar] [CrossRef] [PubMed]

- Yu, S.; Lee, H.; Kim, J. Street Crossing Aid Using Light-Weight CNNs for the Visually Impaired. In Proceedings of the IEEE/CVF International Conference on Computer Vision Workshop (ICCVW), Seoul, Korea, 27–28 October 2019; pp. 2593–2601. [Google Scholar]

- Ghilardi, M.C.; Simoes, G.S.; Wehrmann, J.; Manssour, I.H.; Barros, R.C. Real-Time Detection of Pedestrian Traffic Lights for Visually-Impaired People. In Proceedings of the 2018 International Joint Conference on Neural Networks (IJCNN), Rio de Janeiro, Brazil, 8–13 July 2018; pp. 1–8. [Google Scholar]

- Ash, R.; Ofri, D.; Brokman, J.; Friedman, I.; Moshe, Y. Real-time pedestrian traffic light detection. In Proceedings of the IEEE International Conference on the Science of Electrical Engineering in Israel (ICSEE), Eilat, Israel, 12–14 December 2018; pp. 1–5. [Google Scholar]

- Ghilardi, M.C.; Junior, J.J.; Manssour, I.H. Crosswalk Localization from Low Resolution Satellite Images to Assist Visually Impaired People. IEEE Comput. Graph. Appl. 2018, 38, 30–46. [Google Scholar] [CrossRef] [PubMed]

- Yu, C.; Li, Y.; Huang, T.Y.; Hsieh, W.A.; Lee, S.Y.; Yeh, I.H.; Lin, G.K.; Yu, N.H.; Tang, H.H.; Chang, Y.J. BusMyFriend: Designing a bus reservation service for people with visual impairments in Taipei. In Proceedings of Companion Publication of the 2020 ACM Designing Interactive Systems Conference; ACM: New York, NY, USA, 2020; pp. 91–96. [Google Scholar]

- Ni, D.; Song, A.; Tian, L.; Xu, X.; Chen, D. A walking assistant robotic system for the visually impaired based on computer vision and tactile perception. Int. J. Soc. Robot. 2015, 7, 617–628. [Google Scholar] [CrossRef]

- Joshi, R.C.; Yadav, S.; Dutta, M.K.; Travieso-Gonzalez, C.M. Efficient Multi-Object Detection and Smart Navigation Using Artificial Intelligence for Visually Impaired People. Entropy 2020, 22, 941. [Google Scholar] [CrossRef]

- Vera, D.; Marcillo, D.; Pereira, A. Blind guide: Anytime, anywhere solution for guiding blind people. In World Conference on Information Systems and Technologies; Springer: Berlin/Heidelberg, Germany, 2017; pp. 353–363. [Google Scholar]

- Islam, M.M.; Sadi, M.S.; Bräunl, T. Automated walking guide to enhance the mobility of visually impaired people. IEEE Trans. Med. Robot. Bionics 2020, 2, 485–496. [Google Scholar] [CrossRef]

- Martinez, M.; Roitberg, A.; Koester, D.; Stiefelhagen, R.; Schauerte, B. Using technology developed for autonomous cars to help navigate blind people. In Proceedings of the IEEE International Conference on Computer Vision Workshops, Venice, Italy, 22–29 October 2017; pp. 1424–1432. [Google Scholar]

- Long, N.; Wang, K.; Cheng, R.; Hu, W.; Yang, K. Unifying obstacle detection, recognition, and fusion based on millimeter wave radar and RGB-depth sensors for the visually impaired. Rev. Sci. Instrum. 2019, 90, 044102. [Google Scholar] [CrossRef]

- Meliones, A.; Filios, C. Blindhelper: A pedestrian navigation system for blinds and visually impaired. In Proceedings of the ACM International Conference on PErvasive Technologies Related to Assistive Environments, Corfu Island, Greece, 29 June–1 July 2016; pp. 1–4. [Google Scholar]

- Ahmetovic, D.; Gleason, C.; Ruan, C.; Kitani, K.; Takagi, H.; Asakawa, C. NavCog: A navigational cognitive assistant for the blind. In Proceedings of the 18th International Conference on Human-Computer Interaction with Mobile Devices and Services, Florence, Italy, 6–9 September 2016; pp. 90–99. [Google Scholar]

- Elmannai, W.; Elleithy, K.M. A Highly Accurate and Reliable Data Fusion Framework for Guiding the Visually Impaired. IEEE Access 2018, 6, 33029–33054. [Google Scholar] [CrossRef]

- Mocanu, B.C.; Tapu, R.; Zaharia, T.B. When Ultrasonic Sensors and Computer Vision Join Forces for Efficient Obstacle Detection and Recognition. Sensors 2016, 16, 1807. [Google Scholar] [CrossRef]

- Shangguan, L.; Yang, Z.; Zhou, Z.; Zheng, X.; Wu, C.; Liu, Y. Crossnavi: Enabling real-time crossroad navigation for the blind with commodity phones. In Proceedings of ACM International Joint Conference on Pervasive and Ubiquitous Computing; ACM: New York, NY, USA, 2014; pp. 787–798. [Google Scholar]

- Flores, G.H.; Manduchi, R. A public transit assistant for blind bus passengers. IEEE Pervasive Comput. 2018, 17, 49–59. [Google Scholar] [CrossRef]

- Shingte, S.; Patil, R. A Passenger Bus Alert and Accident System for Blind Person Navigational. Int. J. Sci. Res. Sci. Technol. 2018, 4, 282–288. [Google Scholar]

- Bai, J.; Liu, Z.; Lin, Y.; Li, Y.; Lian, S.; Liu, D. Wearable travel aid for environment perception and navigation of visually impaired people. Electronics 2019, 8, 697. [Google Scholar] [CrossRef]

- Guth, D.A.; Barlow, J.M.; Ponchillia, P.E.; Rodegerdts, L.A.; Kim, D.S.; Lee, K.H. An intersection database facilitates access to complex signalized intersections for pedestrians with vision disabilities. Transp. Res. Rec. 2019, 2673, 698–709. [Google Scholar] [CrossRef]

- Zhou, Q.; Li, Z. Experimental analysis of various types of road intersections for interchange detection. Trans. GIS 2015, 19, 19–41. [Google Scholar] [CrossRef]

- Dai, J.; Wang, Y.; Li, W.; Zuo, Y. Automatic Method for Extraction of Complex Road Intersection Points from High-resolution Remote Sensing Images Based on Fuzzy Inference. IEEE Access 2020, 8, 39212–39224. [Google Scholar] [CrossRef]

- Bhatt, D.; Sodhi, D.; Pal, A.; Balasubramanian, V.; Krishna, M. Have i reached the intersection: A deep learning-based approach for intersection detection from monocular cameras. In Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Vancouver, BC, Canada, 24–28 September 2017; pp. 4495–4500. [Google Scholar]

- Baumann, U.; Huang, Y.Y.; Gläser, C.; Herman, M.; Banzhaf, H.; Zöllner, J.M. Classifying road intersections using transfer-learning on a deep neural network. In Proceedings of the International Conference on Intelligent Transportation Systems (ITSC), Maui, HI, USA, 4–7 November 2018; pp. 683–690. [Google Scholar]

- Saeedimoghaddam, M.; Stepinski, T.F. Automatic extraction of road intersection points from USGS historical map series using deep convolutional neural networks. Int. J. Geogr. Inf. Sci. 2019, 34, 947–968. [Google Scholar] [CrossRef]

- Tümen, V.; Ergen, B. Intersections and crosswalk detection using deep learning and image processing techniques. Phys. A Stat. Mech. Appl. 2020, 543, 123510. [Google Scholar] [CrossRef]

- Kumar, A.; Gupta, G.; Sharma, A.; Krishna, K.M. Towards view-invariant intersection recognition from videos using deep network ensembles. In Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Madrid, Spain, 1–5 October 2018; pp. 1053–1060. [Google Scholar]

- Bock, J.; Krajewski, R.; Moers, T.; Runde, S.; Vater, L.; Eckstein, L. The ind dataset: A drone dataset of naturalistic road user trajectories at german intersections. In Proceedings of the IEEE Intelligent Vehicles Symposium (IV), Las Vegas, NV, USA, 19 October–13 November 2020; pp. 1929–1934. [Google Scholar]

- Wang, J.; Wang, C.; Song, X.; Raghavan, V. Automatic intersection and traffic rule detection by mining motor-vehicle GPS trajectories. Comput. Environ. Urban Syst. 2017, 64, 19–29. [Google Scholar] [CrossRef]

- Rebai, K.; Achour, N.; Azouaoui, O. Road intersection detection and classification using hierarchical SVM classifier. Adv. Robot. 2014, 28, 929–941. [Google Scholar] [CrossRef]

- Oeljeklaus, M.; Hoffmann, F.; Bertram, T. A combined recognition and segmentation model for urban traffic scene understanding. In Proceedings of the International Conference on Intelligent Transportation Systems (ITSC), Yokohama, Japan, 16–19 October 2017; pp. 1–6. [Google Scholar]

- Koji, T.; Kanji, T. Deep Intersection Classification Using First and Third Person Views. In Proceedings of the IEEE Intelligent Vehicles Symposium (IV), Paris, France, 9–12 June 2019; pp. 454–459. [Google Scholar]

- Maddern, W.; Pascoe, G.; Linegar, C.; Newman, P. 1 Year, 1000 km: The Oxford RobotCar Dataset. Int. J. Robot. Res. 2017, 36, 3–15. [Google Scholar] [CrossRef]

- Lara Dataset. Available online: http://www.lara.prd.fr/benchmarks/trafficlightsrecognition (accessed on 27 November 2020).

- Cordts, M.; Omran, M.; Ramos, S.; Rehfeld, T.; Enzweiler, M.; Benenson, R.; Franke, U.; Roth, S.; Schiele, B. The cityscapes dataset for semantic urban scene understanding. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 3213–3223. [Google Scholar]

- GrandTheftAutoV. Available online: https://en.wikipedia.org/wiki/Development_of_Grand_Theft_Auto_V (accessed on 25 November 2020).

- Mapillary. Available online: https://www.mapillary.com/app (accessed on 25 November 2020).

- Geiger, A.; Lenz, P.; Urtasun, R. Are we ready for autonomous driving? In The kitti vision benchmark suite. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Providence, RI, USA, 16–21 June 2012; pp. 3354–3361. [Google Scholar]

- Krylov, V.A.; Kenny, E.; Dahyot, R. Automatic discovery and geotagging of objects from street view imagery. Remote Sens. 2018, 10, 661. [Google Scholar] [CrossRef]

- Krylov, V.A.; Dahyot, R. Object geolocation from crowdsourced street level imagery. In Joint European Conference on Machine Learning and Knowledge Discovery in Databases; Springer: Berlin/Heidelberg, Germany, 2018; pp. 79–83. [Google Scholar]

- Kurath, S.; Gupta, R.D.; Keller, S. OSMDeepOD-Object Detection on Orthophotos with and for VGI. GI Forum. 2017, 2, 173–188. [Google Scholar] [CrossRef]

- Riveiro, B.; González-Jorge, H.; Martínez-Sánchez, J.; Díaz-Vilariño, L.; Arias, P. Automatic detection of zebra crossings from mobile LiDAR data. Opt. Laser Technol. 2015, 70, 63–70. [Google Scholar] [CrossRef]

- Intersection Perception Through Real-Time Semantic Segmentation to Assist Navigation of Visually Impaired Pedestrians. In Proceedings of the 2018 IEEE International Conference on Robotics and Biomimetics (ROBIO), Kuala Lumpur, Malaysia, 12–15 December 2018; pp. 1034–1039.

- Berriel, R.F.; Lopes, A.T.; de Souza, A.F.; Oliveira-Santos, T. Deep Learning-Based Large-Scale Automatic Satellite Crosswalk Classification. IEEE Geosci. Remote Sens. Lett. 2017, 14, 1513–1517.

- Wu, X.H.; Hu, R.; Bao, Y.Q. Block-Based Hough Transform for Recognition of Zebra Crossing in Natural Scene Images. IEEE Access 2019, 7, 59895–59902.

- Ahmetovic, D.; Manduchi, R.; Coughlan, J.M.; Mascetti, S. Mind Your Crossings: Mining GIS Imagery for Crosswalk Localization. ACM Trans. Access. Comput. 2017, 9, 1–25.

- Berriel, R.F.; Rossi, F.S.; de Souza, A.F.; Oliveira-Santos, T. Automatic large-scale data acquisition via crowdsourcing for crosswalk classification: A deep learning approach. Comput. Graph. 2017, 68, 32–42.

- Malbog, M.A. Mask R-CNN for Pedestrian Crosswalk Detection and Instance Segmentation. In Proceedings of the IEEE International Conference on Engineering Technologies and Applied Sciences (ICETAS), Kuala Lumpur, Malaysia, 20–21 December 2019; pp. 1–5.

- Neuhold, G.; Ollmann, T.; Rota Bulo, S.; Kontschieder, P. The Mapillary Vistas dataset for semantic understanding of street scenes. In Proceedings of the IEEE International Conference on Computer Vision, Venice, Italy, 22–29 October 2017; pp. 5000–5009.

- Cheng, R.; Wang, K.; Yang, K.; Long, N.; Hu, W.; Chen, H.; Bai, J.; Liu, D. Crosswalk navigation for people with visual impairments on a wearable device. J. Electron. Imaging 2017, 26, 053025.

- Pedestrian-Traffic-Lane (PTL) Dataset. Available online: https://github.com/samuelyu2002/ImVisible (accessed on 27 November 2020).

- Zimmermann-Janschitz, S. The Application of Geographic Information Systems to Support Wayfinding for People with Visual Impairments or Blindness. In Visual Impairment and Blindness: What We Know and What We Have to Know; IntechOpen: London, UK, 2019.

- Hara, K.; Azenkot, S.; Campbell, M.; Bennett, C.L.; Le, V.; Pannella, S.; Moore, R.; Minckler, K.; Ng, R.H.; Froehlich, J.E. Improving public transit accessibility for blind riders by crowdsourcing bus stop landmark locations with Google Street View: An extended analysis. ACM Trans. Access. Comput. 2015, 6, 1–23.

- Cáceres, P.; Sierra-Alonso, A.; Vela, B.; Cavero, J.M.; Ángel Garrido, M.; Cuesta, C.E. Adding Semantics to Enrich Public Transport and Accessibility Data from the Web. Open J. Web Technol. 2020, 7, 1–18.

- Mirri, S.; Prandi, C.; Salomoni, P.; Callegati, F.; Campi, A. On Combining Crowdsourcing, Sensing and Open Data for an Accessible Smart City. In Proceedings of the Eighth International Conference on Next Generation Mobile Apps, Services and Technologies, Oxford, UK, 10–12 September 2014; pp. 294–299.

- Low, W.Y.; Cao, M.; De Vos, J.; Hickman, R. The journey experience of visually impaired people on public transport in London. Transp. Policy 2020, 97, 137–148.

- Arroyo, R.; Alcantarilla, P.F.; Bergasa, L.M.; Romera, E. Are you able to perform a life-long visual topological localization? Auton. Robot. 2018, 42, 665–685.

- Tang, X.; Chen, Y.; Zhu, Z.; Lu, X. A visual aid system for the blind based on RFID and fast symbol recognition. In Proceedings of the International Conference on Pervasive Computing and Applications, Port Elizabeth, South Africa, 26–28 October 2011; pp. 184–188.

- Kim, J.E.; Bessho, M.; Kobayashi, S.; Koshizuka, N.; Sakamura, K. Navigating visually impaired travelers in a large train station using smartphone and Bluetooth Low Energy. In Proceedings of the Annual ACM Symposium on Applied Computing; ACM: New York, NY, USA, 2016; pp. 604–611.

- Cohen, A.; Dalyot, S. Route planning for blind pedestrians using OpenStreetMap. Environ. Plan. B Urban Anal. City Sci. 2020.

- Bravo, A.P.; Giret, A. Recommender System of Walking or Public Transportation Routes for Disabled Users. In International Conference on Practical Applications of Agents and Multi-Agent Systems; Springer: Berlin/Heidelberg, Germany, 2018; pp. 392–403.

- Hendawi, A.M.; Rustum, A.; Ahmadain, A.A.; Hazel, D.; Teredesai, A.; Oliver, D.; Ali, M.; Stankovic, J.A. Smart personalized routing for smart cities. In Proceedings of the International Conference on Data Engineering (ICDE), San Diego, CA, USA, 19–22 April 2017; pp. 1295–1306.

- Yusof, T.; Toha, S.F.; Yusof, H.M. Path planning for visually impaired people in an unfamiliar environment using particle swarm optimization. Procedia Comput. Sci. 2015, 76, 80–86.

- Fogli, D.; Arenghi, A.; Gentilin, F. A universal design approach to wayfinding and navigation. Multimed. Tools Appl. 2020, 79, 33577–33601.

- Wheeler, B.; Syzdykbayev, M.; Karimi, H.A.; Gurewitsch, R.; Wang, Y. Personalized accessible wayfinding for people with disabilities through standards and open geospatial platforms in smart cities. Open Geospat. Data Softw. Stand. 2020, 5, 1–15.

- Gupta, M.; Abdolrahmani, A.; Edwards, E.; Cortez, M.; Tumang, A.; Majali, Y.; Lazaga, M.; Tarra, S.; Patil, P.; Kuber, R.; et al. Towards More Universal Wayfinding Technologies: Navigation Preferences Across Disabilities. In Proceedings of the CHI Conference on Human Factors in Computing Systems; ACM: New York, NY, USA, 2020; pp. 1–13.

- Jung, J.; Park, S.; Kim, Y.; Park, S. Route Recommendation with Dynamic User Preference on Road Networks. In Proceedings of the International Conference on Big Data and Smart Computing (BigComp), Kyoto, Japan, 27 February–2 March 2019; pp. 1–7.

- Hossain, M.Z.; Sohel, F.; Shiratuddin, M.F.; Laga, H. A comprehensive survey of deep learning for image captioning. ACM Comput. Surv. 2019, 51, 1–36.

- Matsuzaki, S.; Yamazaki, K.; Hara, Y.; Tsubouchi, T. Traversable Region Estimation for Mobile Robots in an Outdoor Image. J. Intell. Robot. Syst. 2018, 92, 453–463.

- Yang, K.; Wang, K.; Cheng, R.; Hu, W.; Huang, X.; Bai, J. Detecting traversable area and water hazards for the visually impaired with a pRGB-D sensor. Sensors 2017, 17, 1890.

- Yang, K.; Wang, K.; Hu, W.; Bai, J. Expanding the detection of traversable area with RealSense for the visually impaired. Sensors 2016, 16, 1954.

- Chang, N.H.; Chien, Y.H.; Chiang, H.H.; Wang, W.Y.; Hsu, C.C. A Robot Obstacle Avoidance Method Using Merged CNN Framework. In Proceedings of the International Conference on Machine Learning and Cybernetics (ICMLC), Kobe, Japan, 7–10 July 2019; pp. 1–5.

- Mancini, M.; Costante, G.; Valigi, P.; Ciarfuglia, T.A. Fast robust monocular depth estimation for Obstacle Detection with fully convolutional networks. In Proceedings of the 2016 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Daejeon, Korea, 9–14 October 2016; pp. 4296–4303.

- Dai, A.; Chang, A.X.; Savva, M.; Halber, M.; Funkhouser, T.; Nießner, M. ScanNet: Richly-annotated 3D reconstructions of indoor scenes. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017.

- The PASCAL Visual Object Classes Challenge. 2007. Available online: http://host.robots.ox.ac.uk/pascal/VOC/voc2007/ (accessed on 27 November 2020).

- Lin, T.Y.; Maire, M.; Belongie, S.; Hays, J.; Perona, P.; Ramanan, D.; Dollár, P.; Zitnick, C.L. Microsoft COCO: Common objects in context. In Proceedings of the European Conference on Computer Vision, Zurich, Switzerland, 6–12 September 2014.

- Jensen, M.B.; Nasrollahi, K.; Moeslund, T.B. Evaluating state-of-the-art object detector on challenging traffic light data. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition Workshops, Honolulu, HI, USA, 21–26 July 2017; pp. 9–15.

- Rothaus, K.; Roters, J.; Jiang, X. Localization of pedestrian lights on mobile devices. In Proceedings of the Asia-Pacific Signal and Information Processing Association, 2009 Annual Summit and Conference, Lanzhou, China, 18–21 November 2009; pp. 398–405.

- Fernández, C.; Guindel, C.; Salscheider, N.O.; Stiller, C. A deep analysis of the existing datasets for traffic light state recognition. In Proceedings of the International Conference on Intelligent Transportation Systems (ITSC), Maui, HI, USA, 4–7 November 2018; pp. 248–254.

- Wang, X.; Jiang, T.; Xie, Y. A Method of Traffic Light Status Recognition Based on Deep Learning. In Proceedings of the 2018 International Conference on Robotics, Control and Automation Engineering, Beijing, China, 26–28 December 2018; pp. 166–170.

- Kulkarni, R.; Dhavalikar, S.; Bangar, S. Traffic Light Detection and Recognition for Self Driving Cars Using Deep Learning. In Proceedings of the Fourth International Conference on Computing Communication Control and Automation (ICCUBEA), Pune, India, 16–18 August 2018; pp. 1–4.

- Zuo, Z.; Yu, K.; Zhou, Q.; Wang, X.; Li, T. Traffic signs detection based on Faster R-CNN. In Proceedings of the International Conference on Distributed Computing Systems Workshops (ICDCSW), Atlanta, GA, USA, 5–8 June 2017; pp. 286–288.

- Lee, E.; Kim, D. Accurate traffic light detection using deep neural network with focal regression loss. Image Vis. Comput. 2019, 87, 24–36.

- Müller, J.; Dietmayer, K. Detecting traffic lights by single shot detection. In Proceedings of the International Conference on Intelligent Transportation Systems (ITSC), Maui, HI, USA, 4–7 November 2018; pp. 266–273.

- Hassan, N.; Ming, K.W.; Wah, C.K. A Comparative Study on HSV-based and Deep Learning-based Object Detection Algorithms for Pedestrian Traffic Light Signal Recognition. In Proceedings of the 2020 3rd International Conference on Intelligent Autonomous Systems (ICoIAS), Singapore, 26–29 February 2020; pp. 71–76.

- Ouyang, Z.; Niu, J.; Liu, Y.; Guizani, M. Deep CNN-based Real-time Traffic Light Detector for Self-driving Vehicles. IEEE Trans. Mob. Comput. 2019, 19, 300–313.

- Gupta, A.; Choudhary, A. A Framework for Traffic Light Detection and Recognition using Deep Learning and Grassmann Manifolds. In Proceedings of the 2019 IEEE Intelligent Vehicles Symposium (IV), Paris, France, 9–12 June 2019; pp. 600–605.

- Lu, Y.; Lu, J.; Zhang, S.; Hall, P. Traffic signal detection and classification in street views using an attention model. Comput. Vis. Media 2018, 4, 253–266.

- Ozcelik, Z.; Tastimur, C.; Karakose, M.; Akin, E. A vision based traffic light detection and recognition approach for intelligent vehicles. In Proceedings of the International Conference on Computer Science and Engineering (UBMK), Antalya, Turkey, 5–8 October 2017; pp. 424–429.

- Moosaei, M.; Zhang, Y.; Micks, A.; Smith, S.; Goh, M.J.; Murali, V.N. Region Proposal Technique for Traffic Light Detection Supplemented by Deep Learning and Virtual Data; Technical Report; SAE: Warrendale, PA, USA, 2017.

- Saini, S.; Nikhil, S.; Konda, K.R.; Bharadwaj, H.S.; Ganeshan, N. An efficient vision-based traffic light detection and state recognition for autonomous vehicles. In Proceedings of the IEEE Intelligent Vehicles Symposium (IV), Los Angeles, CA, USA, 11–14 June 2017; pp. 606–611.

- John, V.; Yoneda, K.; Qi, B.; Liu, Z.; Mita, S. Traffic light recognition in varying illumination using deep learning and saliency map. In Proceedings of the International IEEE Conference on Intelligent Transportation Systems (ITSC), Qingdao, China, 8–11 October 2014; pp. 2286–2291.

- John, V.; Yoneda, K.; Liu, Z.; Mita, S. Saliency map generation by the convolutional neural network for real-time traffic light detection using template matching. IEEE Trans. Comput. Imaging 2015, 1, 159–173.

- Possatti, L.C.; Guidolini, R.; Cardoso, V.B.; Berriel, R.F.; Paixão, T.M.; Badue, C.; De Souza, A.F.; Oliveira-Santos, T. Traffic light recognition using deep learning and prior maps for autonomous cars. In Proceedings of the International Joint Conference on Neural Networks (IJCNN), Budapest, Hungary, 14–19 July 2019; pp. 1–8.

- Pedestrian Traffic Light Dataset (PTLD). Available online: https://drive.google.com/drive/folders/0B2MY7T7S8OmJVVlCTW1jYWxqUVE (accessed on 27 November 2020).

- Lafratta, A.; Barker, P.; Gilbert, K.; Oxley, P.; Stephens, D.; Thomas, C.; Wood, C. Assessment of Accessibility Standards for Disabled People in Land Based Public Transport Vehicle; Department for Transport: London, UK, 2008.

- Soltani, S.H.K.; Sham, M.; Awang, M.; Yaman, R. Accessibility for disabled in public transportation terminal. Procedia Soc. Behav. Sci. 2012, 35, 89–96.

- Guerreiro, J.; Ahmetovic, D.; Sato, D.; Kitani, K.; Asakawa, C. Airport accessibility and navigation assistance for people with visual impairments. In Proceedings of the CHI Conference on Human Factors in Computing Systems, Glasgow, UK, 4–9 May 2019; pp. 1–14.

- Panambur, V.R.; Sushma, V. Study of challenges faced by visually impaired in accessing Bangalore metro services. In Proceedings of the Indian Conference on Human-Computer Interaction, Hyderabad, India, 1–3 November 2019; pp. 1–10.

- Busta, M.; Neumann, L.; Matas, J. Deep TextSpotter: An end-to-end trainable scene text localization and recognition framework. In Proceedings of the IEEE International Conference on Computer Vision, Venice, Italy, 22–29 October 2017; pp. 2204–2212.

- Xing, L.; Tian, Z.; Huang, W.; Scott, M.R. Convolutional character networks. In Proceedings of the IEEE International Conference on Computer Vision, Seoul, Korea, 27–28 October 2019; pp. 9126–9136.

- Long, S.; He, X.; Yao, C. Scene text detection and recognition: The deep learning era. Int. J. Comput. Vis. 2021, 129, 161–184.

- Zhangaskanov, D.; Zhumatay, N.; Ali, M.H. Audio-based smart white cane for visually impaired people. In Proceedings of the International Conference on Control, Automation and Robotics (ICCAR), Beijing, China, 19–22 April 2019; pp. 889–893.

- Khan, N.S.; Kundu, S.; Al Ahsan, S.; Sarker, M.; Islam, M.N. An assistive system of walking for visually impaired. In Proceedings of the International Conference on Computer, Communication, Chemical, Material and Electronic Engineering (IC4ME2), Rajshahi, Bangladesh, 8–9 February 2018; pp. 1–4.

- Katzschmann, R.K.; Araki, B.; Rus, D. Safe Local Navigation for Visually Impaired Users With a Time-of-Flight and Haptic Feedback Device. IEEE Trans. Neural Syst. Rehabil. Eng. 2018, 26, 583–593.

- Wang, H.C.; Katzschmann, R.K.; Teng, S.; Araki, B.; Giarré, L.; Rus, D. Enabling independent navigation for visually impaired people through a wearable vision-based feedback system. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), Singapore, 29 May–3 June 2017; pp. 6533–6540.

- Xu, S.; Yang, C.; Ge, W.; Yu, C.; Shi, Y. Virtual Paving: Rendering a Smooth Path for People with Visual Impairment through Vibrotactile and Audio Feedback. Proc. ACM Interact. Mob. Wearable Ubiquitous Technol. 2020, 4, 1–25.

- Aftershokz. Available online: https://aftershokz.co.uk/blogs/news/how-does-bone-conduction-headphones-work (accessed on 27 November 2020).

- Sivan, S.; Darsan, G. Computer Vision based Assistive Technology for Blind and Visually Impaired People. In Proceedings of the International Conference on Computing Communication and Networking Technologies, Dallas, TX, USA, 6–8 July 2016.

- Maptic. Available online: https://www.core77.com/projects/68198/Maptic-Tactile-Navigation-for-the-Visually-Impaired (accessed on 27 November 2020).

- Microsoft Soundscape. Available online: https://www.microsoft.com/en-us/research/product/soundscape/ (accessed on 27 November 2020).

- Smart Cane. Available online: http://edition.cnn.com/2014/06/20/tech/innovation/sonar-sticks-use-ultrasound-blind/index.html (accessed on 27 November 2020).

- WeWalk Cane. Available online: https://wewalk.io/en/product (accessed on 27 November 2020).

- Horus. Available online: https://www.prnewswire.com/news-releases/horus-technology-launches-early-access-program-for-ai-powered-wearable-for-the-blind-rebrands-company-as-eyra-300351430.html (accessed on 27 November 2020).

- Ray Electronic Mobility Aid. Available online: https://www.maxiaids.com/ray-electronic-mobility-aid-for-the-blind (accessed on 27 November 2020).

- Ultra Cane. Available online: https://www.ultracane.com/ (accessed on 27 November 2020).

- Blind Square. Available online: https://www.blindsquare.com/ (accessed on 27 November 2020).

- Envision Glasses. Available online: https://www.letsenvision.com/blog/envision-announces-ai-powered-smart-glasses-for-the-blind-and-visually-impaired (accessed on 27 November 2020).

- Eye See. Available online: https://mashable.com/2017/09/14/smart-helmet-visually-impaired/?europe=true (accessed on 27 November 2020).

- Nearby Explorer. Available online: https://tech.aph.org/neo_info.htm (accessed on 27 November 2020).

- Seeing Eye GPS. Available online: http://www.senderogroup.com/products/seeingeyegps/index.html (accessed on 27 November 2020).

- pathVu Navigation. Available online: pathvu.com (accessed on 27 November 2020).

- Step Hear. Available online: https://www.step-hear.com/ (accessed on 27 November 2020).

- Intersection Explorer. Available online: https://play.google.com/store/apps/details?id=com.google.android.marvin.intersectionexplorer&hl=en_IE&gl=US (accessed on 27 November 2020).

- LAZARILLO APP. Available online: https://lazarillo.app/ (accessed on 27 November 2020).

- Lazzus. Available online: http://www.lazzus.com/ (accessed on 27 November 2020).

- Sunu Band. Available online: https://www.sunu.com/en/index (accessed on 27 November 2020).

- Ariadne GPS. Available online: https://apps.apple.com/us/app/id441063072 (accessed on 13 April 2021).

- Aira. Available online: https://aira.io/how-it-works (accessed on 15 April 2021).

- Be My Eyes. Available online: https://www.bemyeyes.com/ (accessed on 15 April 2021).

- BrainPort. Available online: https://www.wicab.com/brainport-vision-pro (accessed on 15 April 2021).

- Cardillo, E.; Caddemi, A. Insight on electronic travel aids for visually impaired people: A review on the electromagnetic technology. Electronics 2019, 8, 1281.

| Dataset Name | Capture Perspective | Number of Images | Coverage Area | Available Online | Paper | Year |
|---|---|---|---|---|---|---|
| Tümen and Ergen dataset [87] | Google Street View (GSV) | 296 images | N/A | No | [87] | 2020 |
| Saeedimoghaddam and Stepinski dataset [86] | Map tiles | 4000 tiles | 27 cities in 15 U.S. states, with maps from different years | No | [86] | 2019 |
| Part of Oxford RobotCar dataset [94] | Vehicle | 310 sequences | Central Oxford | No | [84] | 2017 |
| Part of Lara [95] | Vehicle | 62 sequences | Paris, France | No | [84] | 2017 |
| Part of Cityscapes dataset [96] | Vehicle | 1599 images | Nine cities | Yes | [92] | 2017 |
| Kumar et al. dataset [88] | Grand Theft Auto V (GTA) [97] gaming platform | 2000 videos from GTA and Mapillary [98] | - | No | [88] | 2018 |
| Videos constructed from Mapillary [98] | Vehicle | 2000 videos from GTA and Mapillary [98] | 6 continents | No | [88] | 2018 |
| Dataset constructed from KITTI [99] | Vehicle | 410 images + 70 sequences | City of Karlsruhe, Germany | No | [93] | 2019 |

| Dataset Name | Perspective | Number of Images | Type | Conditions (Day/Night, etc.) | Coverage Area | Available Online | Paper |
|---|---|---|---|---|---|---|---|
| GSV dataset | GSV | 657,691 | Zebra | Crosswalk lines may disappear, crosswalks are partially covered, shadows affect the illumination of the road, different styles of zebra crosswalks | 20 states of Brazil | No | [108] |
| IARA | Vehicle | 12,441 | Zebra | Captured during the day | The capital of Espírito Santo, Vitória | Yes | [108] |
| GOPRO | Vehicle | 11,070 | Zebra | N/A | Vitória, Vila Velha and Guarapari, Espírito Santo, Brazil | Yes | [108] |
| Berriel et al. dataset [105] | Aerial | 245,768 | Zebra | Different crosswalk designs and different conditions (crosswalk lines may disappear, crosswalks are partially covered, and so on) | 3 continents, 9 countries, and at least 20 cities | No | [105] |
| Kurath et al. dataset [102] | Aerial | 44,705 | Zebra | N/A | Switzerland | No | [102] |
| Tümen and Ergen dataset [87] | GSV | 296 | Zebra | N/A | N/A | No | [87] |
| Part of Mapillary Vistas dataset [110] | Street level | 20,000 | Zebra | Images captured with different cameras in different weather, seasons, points of view and times of day | 6 continents | Yes | [104] |
| Cheng et al. dataset [111] | Pedestrian | 191 | Zebra | N/A | N/A | Yes | [104] |
| Pedestrian Traffic Lane [112] | Pedestrian | 5059 | Zebra | N/A | N/A | Yes | [62] |
| Malbog dataset [109] | Vehicle | 500 | Zebra | Images captured in the morning and afternoon periods | N/A | No | [109] |

| Dataset Name | Number of Images | Number of Obstacles | Approach | Paper | Year |
|---|---|---|---|---|---|
| Shadi et al. dataset [60] | 2760 images | 15 objects for BVIP’s usage | Semantic Segmentation | [60] | 2019 |
| Cityscapes dataset [96] | 5k fine frames | 30 objects | Semantic Segmentation | [39] | 2020 |
| Part of ScanNet dataset [135] | 25k frames | 40 objects | Semantic Segmentation | [44] | 2019 |
| Cityscapes dataset [96] | 5k fine frames | 30 objects | Semantic Segmentation | [44] | 2019 |
| RGB dataset | 14k frames | 6k objects for BVIP’s usage | Semantic Segmentation | [44] | 2019 |
| RGB-D dataset | 21k frames | 6k objects for BVIP’s usage | Semantic Segmentation | [44] | 2019 |
| PASCAL dataset [136] | 11,540 images | 20 objects | Object Detection | [61] | 2017 |
| Lin et al. dataset [61] | 1710 images | 7 objects | Object Detection | [61] | 2017 |
| Part of PASCAL dataset [136] | 10k image patches | 20 objects | Patch Classification | [76] | 2016 |
| Common Objects in Context (COCO) dataset [137] | 328k images | 80 objects | Object Recognition | [45] | 2019 |
| PASCAL dataset [136] | 11,540 images | 20 objects | Object Recognition | [45] | 2019 |
| Yang et al. dataset [47] | 37,075 images | 22 objects | Semantic Segmentation | [47] | 2018 |
| Joshi et al. dataset [68] | 650 images per class | 25 objects | Object Detection | [68] | 2020 |
| COCO dataset [137] | 328k images | 80 objects | Object Detection | [80] | 2019 |

| Dataset Name | Number of Images | Conditions (Day/Night, etc.) | Country | Available Online | Paper | Year |
|---|---|---|---|---|---|---|
| Li et al. dataset [53] | 3693 images | N/A | New York City | No | [53] | 2019 |
| Ash et al. dataset [64] | 950 color images, 121 short videos | Taken during daytime | Israel | No | [64] | 2018 |
| Hassan and Ming dataset [146] | 400 images (HSV threshold selection) + 5000 images (train) + 400 images (test) | Variation in lighting (HSV threshold selection); different distances from PTLs (test) | Singapore | No | [146] | 2020 |
| Pedestrian Traffic Lane [112] | 5059 images | Variation in weather, position, orientation, and size and type of intersections | N/A | Yes | [62] | 2019 |
| Pedestrian Traffic Light [156] | 4399 images | N/A | Brazilian cities | Yes | [63] | 2018 |
| Part of Mapillary Vistas dataset [110] | 20,000 images | Images captured with different cameras in different weather, seasons, points of view and times of day | 6 continents | Yes | [104] | 2018 |
| Cheng et al. dataset [52] | 17,774 videos | N/A | China, Italy, and Germany | Yes | [104] | 2018 |

| Paper | Year | Traffic Light Type | Different Shapes | Tracking | Detect Active Colour | Low Resolutions | Different Size | Stability | Illumination |
|---|---|---|---|---|---|---|---|---|---|
| [53] | 2020 | Pedestrian | | | | | | | |
| [64] | 2018 | Pedestrian | ✔ | | | | | | |
| [146] | 2020 | Pedestrian | ✔ | | | | | | |
| [62] | 2019 | Pedestrian | ✔ | ✔ | | | | | |
| [63] | 2018 | Pedestrian | | | | | | | |
| [104] | 2018 | Pedestrian | ✔ | | | | | | |
| [147] | 2019 | Vehicle | | | | | | | |
| [148] | 2019 | Vehicle | | | | | | | |
| [144] | 2019 | Vehicle | ✔ | | | | | | |
| [149] | 2018 | Vehicle | ✔ | ✔ | | | | | |
| [145] | 2018 | Vehicle | ✔ | | | | | | |
| [151] | 2017 | Vehicle | ✔ | | | | | | |
| [152] | 2017 | Vehicle | ✔ | | | | | | |
| [155] | 2019 | Vehicle | ✔ | ✔ | | | | | |
| [153] | 2014 | Vehicle | ✔ | ✔ | | | | | |
| [150] | 2017 | Vehicle | ✔ | ✔ | | | | | |

| Name | Components | Features | Feedback/Wearability/Cost | Weak Points |
|---|---|---|---|---|
| Maptic [171] | Sensor, several feedback units, phone | (1) Upper body obstacle detection (2) Navigation guidance | Haptic/Wearable/Unknown | Ground obstacle detection not supported |
| Microsoft Soundscape [172] | Phone, beacons | (1) Navigation guidance (2) Points of interest information | Audio/Handheld/Free | Obstacle detection not supported |
| SmartCane [173] | Sensor, cane, vibration unit | Obstacle detection | Haptic/Handheld/Commercial | Navigation guidance not supported |
| WeWalk [174] | Sensor, cane, phone | (1) Obstacle detection (2) Navigation guidance (3) Using public transportation (4) Points of interest information | Audio and haptic/Handheld (252 g/0.55 pounds, excluding the weight of the white cane)/Commercial ($599) | Obstacle recognition and scene description not supported |
| Horus [175] | Bone-conduction headset, two cameras, battery and GPU | (1) Obstacle detection (2) Read text (3) Face recognition (4) Scene description | Audio/Wearable/Commercial (around US $2000) | Navigation guidance not supported |
| Ray Electronic Mobility Aid [176] | Ultrasonic sensor | Obstacle detection | Audio and haptic/Handheld (60 g)/Commercial ($395.00) | Navigation guidance not supported |
| UltraCane [177] | Dual-range, narrow-beam ultrasound system, cane | Obstacle detection | Haptic/Handheld/Commercial (£590.00) | Navigation guidance not supported |
| BlindSquare [178] | Phone | (1) Navigation guidance (2) Using public transportation (3) Points of interest information | Audio/Handheld/Commercial ($39.99) | Obstacle detection not supported |
| Envision Glasses [179] | Glasses with camera | (1) Read text (2) Scene description (3) Help in finding belongings, detect colours, scan bar-codes (4) Recognize faces, make calls, ask for help and share context | Audio/Wearable (46 g)/Commercial ($2099) | Obstacle detection and navigation guidance not supported |
| Eye See [180] | Helmet, camera, laser | (1) Obstacle detection (2) Read text (3) People descriptions | Audio/Wearable/Unknown | Navigation guidance not supported |
| Nearby Explorer [181] | Phone | (1) Navigation guidance (2) Points of interest information (3) User tracking (4) Object information | Audio and haptic/Handheld/Free | Obstacle detection not supported |
| Seeing Eye GPS [182] | Phone | (1) Navigation guidance (2) Points of interest and intersections information | Audio/Handheld/Commercial | Obstacle detection not supported |
| PathVu Navigation [183] | Phone | Alerts about sidewalk problems | Audio/Handheld/Free | Obstacle detection and navigation guidance not supported |
| Step-hear [184] | Phone | (1) Navigation guidance (2) Using public transportation | Audio/Handheld/Free | Obstacle detection not supported |
| Intersection Explorer [185] | Phone | Information about streets and intersections | Audio/Handheld/Free | Obstacle detection and navigation guidance not supported |
| LAZARILLO APP [186] | Phone | (1) Navigation guidance (2) Using public transportation (3) Points of interest information | Audio/Handheld/Free | Obstacle detection not supported |
| Lazzus APP [187] | Phone | (1) Navigation guidance (2) Points of interest, crossings and intersections information | Audio/Handheld/Commercial (one-year license $29.99) | Obstacle detection not supported |
| Sunu Band [188] | Sensors | Upper body obstacle detection | Haptic/Wearable/Commercial ($299.00) | Ground obstacle detection not supported |
| Ariadne GPS [189] | Phone | (1) Navigation guidance (2) Explore the map | Audio/Handheld/Commercial ($4.99) | Obstacle detection not supported |
| Aira [190] | Phone | Support by a sighted person | Audio/Handheld/Commercial ($99 for 120 min) | Very expensive; does not preserve privacy |
| Be My Eyes [191] | Phone | Support by a sighted person | Audio/Handheld/Free | Does not preserve privacy |
| BrainPort [192] | Video camera, hand-held controller, tongue array | Object detection | Haptic/Handheld and wearable/Commercial | Navigation guidance not supported |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

El-taher, F.E.-z.; Taha, A.; Courtney, J.; Mckeever, S. A Systematic Review of Urban Navigation Systems for Visually Impaired People. Sensors 2021, 21, 3103. https://doi.org/10.3390/s21093103
