A Novel Seam Tracking Technique with a Four-Step Method and Experimental Investigation of Robotic Welding Oriented to Complex Welding Seam

Abstract

1. Introduction

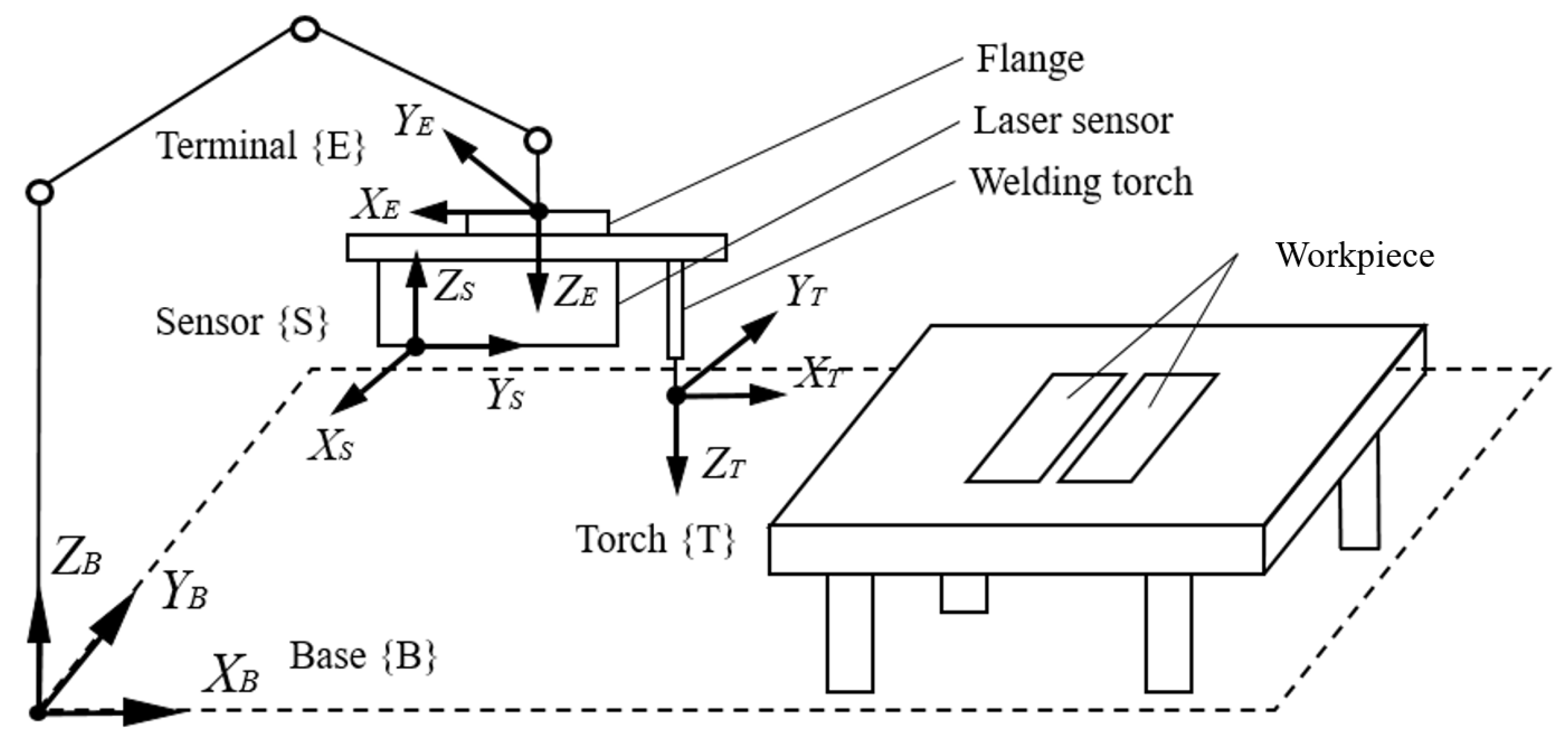

2. Seam Tracking System Composition

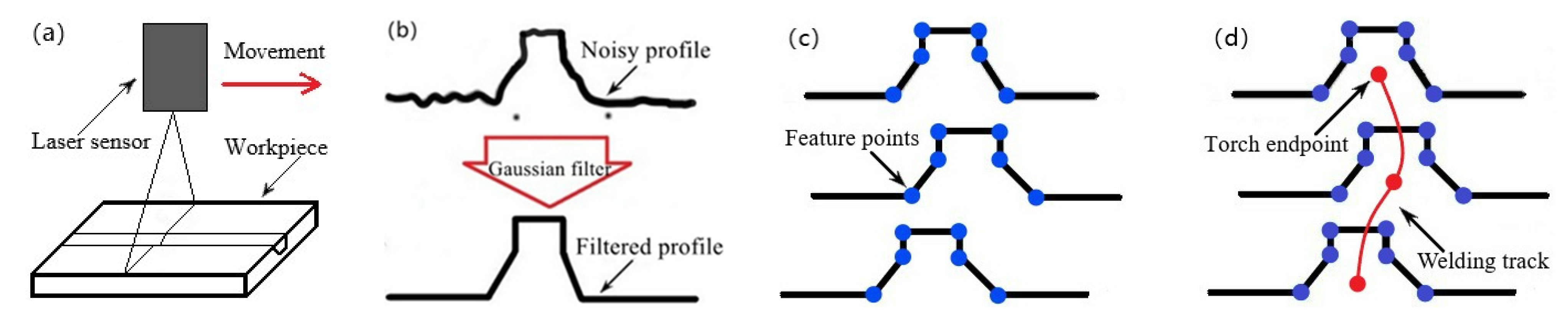

3. Seam Tracking Methodology with Four Steps

3.1. Scanning and Filtering

3.2. Feature Point Extracting

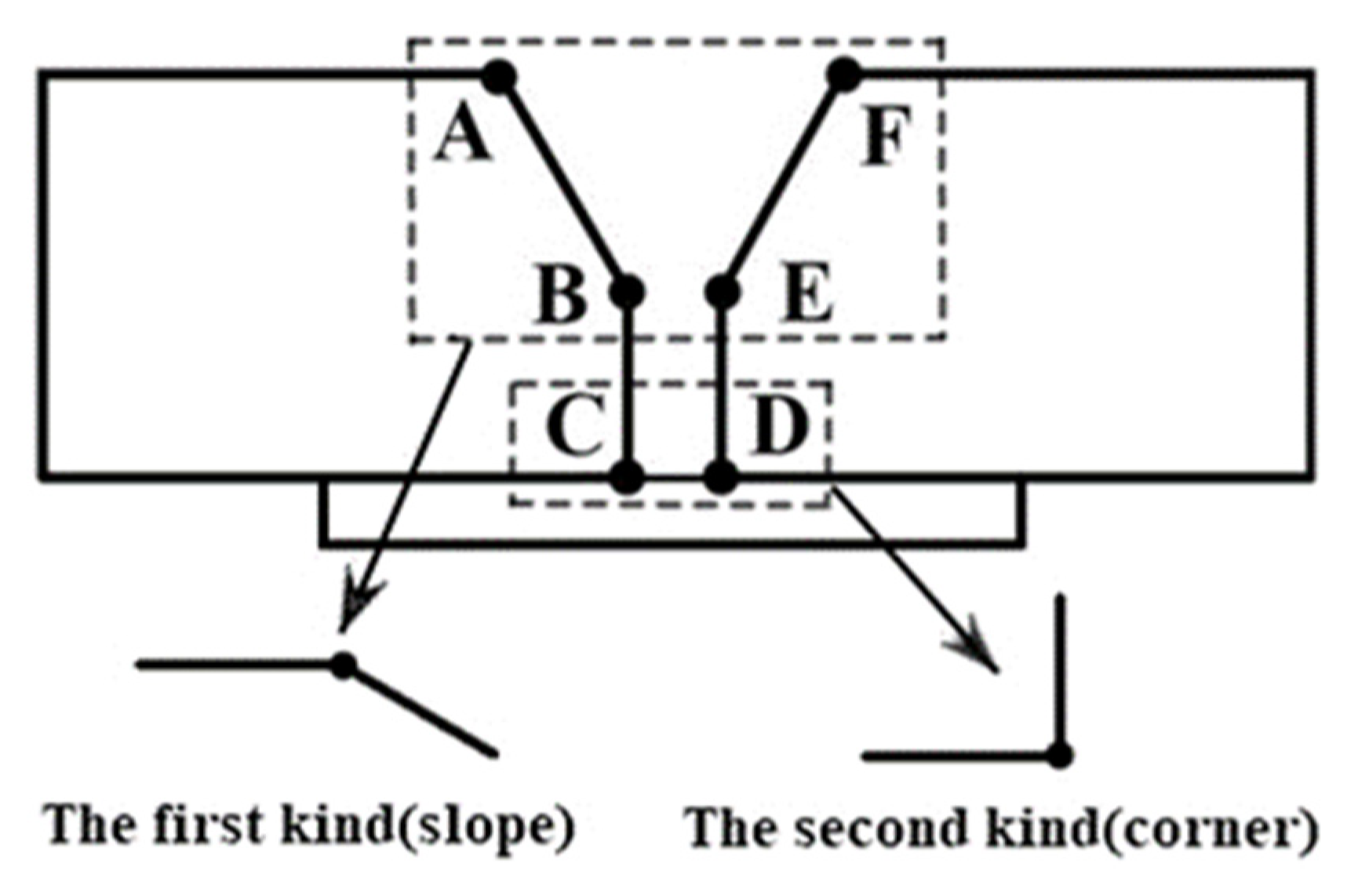

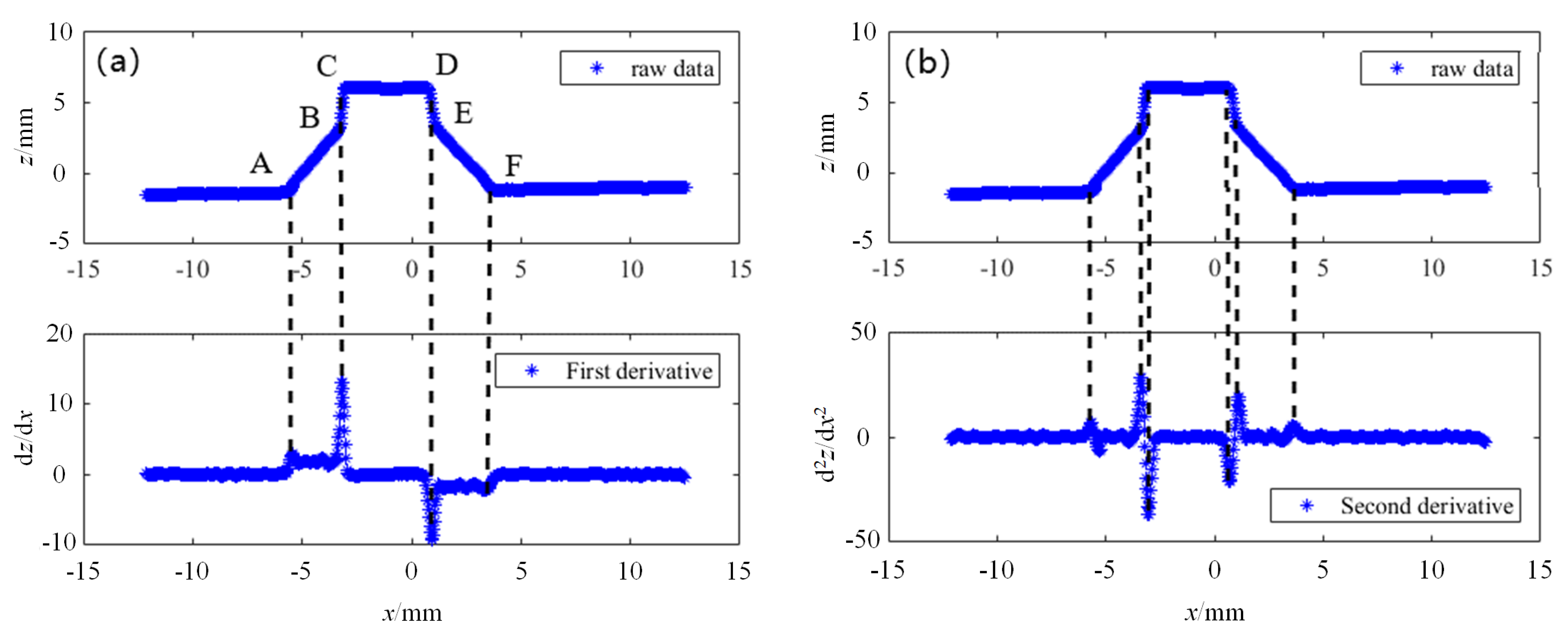

3.2.1. Initial Positioning of Feature Points

3.2.2. Precise Positioning of Feature Points

3.3. Path Planning

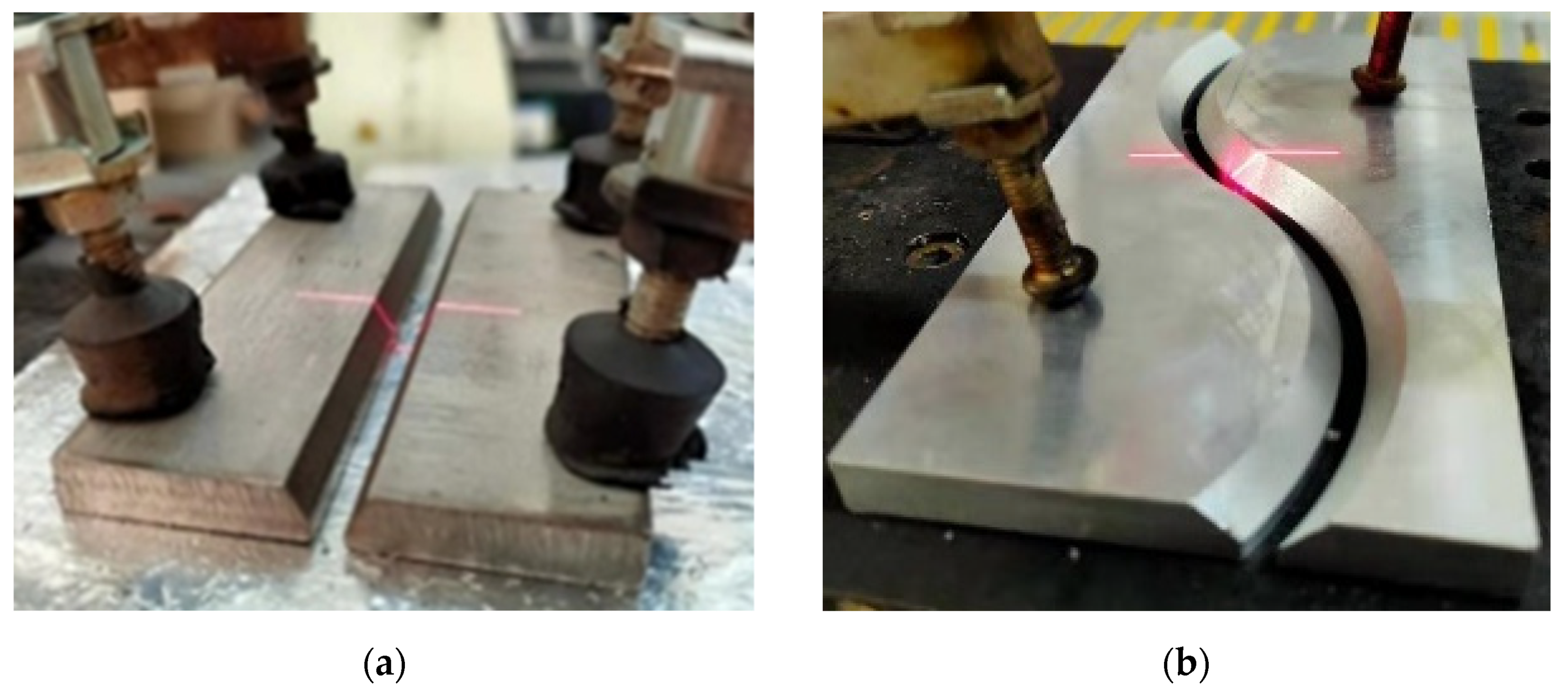

- Select a point P on the weldment, move the end of the welding torch to this point, and record the position of P in the {B} coordinate system, $^{B}P = (x_B, y_B, z_B, 1)^T$, as shown in Figure 8a.

- Move the robot so that the laser line of the sensor passes through this point, and record the position of P in the {S} coordinate system, $^{S}P = (x_S, 0, z_S, 1)^T$, as shown in Figure 8b.

- Switch the current tool coordinate system of the robot to {E} and record the pose data of the robot at this time. From the Euler rotation equation, the pose can be expressed as in [41], where α, β, γ are the rotation angles about the X, Y, and Z axes of the tool coordinate system {E}, respectively.
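The three steps above relate one physical point P across the base frame {B}, the tool frame {E}, and the sensor frame {S}. The following is a minimal sketch of that chain of homogeneous transforms, not the authors' implementation. It assumes a Z-Y-X composition order, R = Rz(γ)·Ry(β)·Rx(α), because the Euler equation itself is not reproduced in this text; the function names and the tool-to-sensor matrix `t_tool_sensor` are hypothetical placeholders for the calibration result.

```python
import numpy as np


def euler_to_rotation(alpha, beta, gamma):
    """Rotation matrix from angles about X, Y, Z (radians).

    ASSUMPTION: composition order R = Rz(gamma) @ Ry(beta) @ Rx(alpha).
    The convention used by a given robot controller may differ.
    """
    ca, sa = np.cos(alpha), np.sin(alpha)
    cb, sb = np.cos(beta), np.sin(beta)
    cg, sg = np.cos(gamma), np.sin(gamma)
    rx = np.array([[1.0, 0.0, 0.0], [0.0, ca, -sa], [0.0, sa, ca]])
    ry = np.array([[cb, 0.0, sb], [0.0, 1.0, 0.0], [-sb, 0.0, cb]])
    rz = np.array([[cg, -sg, 0.0], [sg, cg, 0.0], [0.0, 0.0, 1.0]])
    return rz @ ry @ rx


def pose_to_transform(position, alpha, beta, gamma):
    """4x4 homogeneous transform of {E} in {B} from a recorded robot pose."""
    t = np.eye(4)
    t[:3, :3] = euler_to_rotation(alpha, beta, gamma)
    t[:3, 3] = position
    return t


def sensor_to_base(t_base_tool, t_tool_sensor, p_sensor):
    """Map a homogeneous point from {S} to {B}: B_P = T_BE @ T_ES @ S_P."""
    return t_base_tool @ t_tool_sensor @ np.asarray(p_sensor, dtype=float)


# Illustrative values only (not measured data).
t_base_tool = pose_to_transform([100.0, 50.0, 200.0], 0.0, 0.0, np.pi / 2)
t_tool_sensor = np.eye(4)
t_tool_sensor[:3, 3] = [0.0, 0.0, 10.0]  # hypothetical sensor offset on the tool
p_sensor = [1.0, 0.0, 2.0, 1.0]          # (x_S, 0, z_S, 1): point on the laser plane
p_base = sensor_to_base(t_base_tool, t_tool_sensor, p_sensor)
```

In a real calibration, `t_tool_sensor` is the unknown: pairs of $^{B}P$ and $^{S}P$ recorded as described above would be used to solve for it, and the sketch only shows how the solved matrix is applied afterwards.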

4. Experimental Procedures

5. Conclusions

- A seam tracking system based on laser sensing and visual information extraction is designed, and a four-step method comprising scanning, filtering, feature point extraction, and path planning is proposed to realize high-precision seam tracking;

- The groove information is collected by the laser sensor and the data are filtered; the three-dimensional coordinates in the sensor coordinate system are then calculated from the two-dimensional coordinates of the image feature points;

- The problem of inaccurate feature point positioning when the weldment surface has defects is solved. Experimental results show that, after precise positioning, the average deviations of the welding feature points for both the straight-line and the curved seams are less than 0.5 mm;

- The experimental errors are mainly caused by the calibration error of the sensor coordinate system and the calculation error of the feature point extraction algorithm. In addition, increasing the resolution of the sensor could further improve the measurement accuracy.

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Amruta Rout, B.B.V.L.; Deepak, B.B. Advances in weld seam tracking techniques for robotic welding: A review. Robot. Comput. Integr. Manuf. 2019, 56, 12–37.

- Pires, A.J.N.; Loureiro, T.; Godinho, P.; Ferreira, B.; Fernando, J.M. Welding robots. IEEE Robot. Autom. Mag. 2003, 10, 45–55.

- Shao, W.J.; Huang, Y.; Zhang, Y. A novel weld seam detection method for space weld seam of narrow butt joint in laser welding. Opt. Laser Technol. 2018, 99, 39–51.

- Pires, A.J.N.; Loureiro, G.B. Welding Robots: Technology, System Issues and Application; Springer Science & Business Media: Berlin/Heidelberg, Germany, 2006.

- Lei, T.; Rong, Y.M.; Wang, H.; Huang, Y.; Li, M. A review of vision-aided robotic welding. Comput. Ind. 2020, 123, 103326–103355.

- Hong, L.; Xiaoqi, C. Laser visual sensing for seam tracking in robotic arc welding of titanium alloys. Int. J. Adv. Manuf. Technol. 2005, 26, 1012–1017.

- Peiquan, X.; Guoxiang, X.; Xinhua, T.; Shun, Y. A visual seam tracking system for robotic arc welding. Int. J. Adv. Manuf. Technol. 2008, 37, 70–75.

- Shi, F.; Tao, L.; Chen, S. Efficient weld seam detection for robotic welding based on local image processing. Ind. Robot. Int. J. 2009, 56, 277–283.

- Mikael, F.; Gunnar, B. Design and validation of a universal 6d seam tracking system in robotic welding based on laser scanning. Ind. Robot. Int. J. 2003, 30, 437–448.

- Wu, Q.-Q.; Lee, J.-P.; Park, M.-H.; Park, C.-K.; Kim, I.-S. A study on development of optimal noise filter algorithm for laser vision system in GMA welding. Procedia Eng. 2014, 97, 819–827.

- Jeong, S.-K.; Lee, G.-Y.; Lee, W.-K.; Kim, S.-B. Development of high speed rotating arc sensor and seam tracking controller for welding robots. In Proceedings of the 2001 IEEE International Symposium on Industrial Electronics, (Cat. No.01TH8570), Pusan, Korea, 12–16 June 2001; pp. 845–850.

- Ushio, M.; Mao, W. Modelling of an arc sensor for dc mig/mag welding in open arc mode: Study of improvement of sensitivity and reliability of arc sensors in GMA welding. Weld. Int. 1996, 10, 622–631.

- You, B.-H.; Kim, J.-W. A study on an automatic seam tracking system by using an electromagnetic sensor for sheet metal arc welding of butt joints. Proc. Inst. Mech. Eng. Part B J. Eng. Manuf. 2002, 216, 911–920.

- Kang-Yul, B.; Jin-Hyun, P. A study on development of inductive sensor for automatic weld seam tracking. J. Mater. Process. Technol. 2006, 176, 111–116.

- Freire, B.T.; Miguel, M.J.; Leopoldo, C.; Ramdn, C. Weld seams detection and recognition for robotic arc-welding through ultrasonic sensors. In Proceedings of the 1994 IEEE International Symposium on Industrial Electronics (ISIE’94), Santiago, Chile, 25–27 May 1994; pp. 310–315.

- Maqueira, B.; Umeagukwu, C.I.; Jarzynski, J. Application of ultrasonic sensors to robotic seam tracking. IEEE Trans. Robot. Autom. 1989, 5, 337–344.

- He, Y.; Chen, Y.; Xu, Y.; Huang, Y.; Chen, S. Autonomous detection of weld seam profiles via a model of saliency-based visual attention for robotic arc welding. J. Intell. Robot. Syst. 2016, 81, 395–402.

- Guo, J.C.; Zhu, Z.; Yu, Y.; Sun, B. Research and Application of Visual Sensing Technology Based on Laser Structured Light in Welding Industry. Chin. J. Lasers 2017, 44, 7–16.

- Hou, Z.; Xu, Y.L.; Xiao, R.Q.; Chen, S.B. A teaching-free welding method based on laser visual sensing system in robotic GMAW. Int. J. Adv. Manuf. Technol. 2020, 109, 1755–1774.

- Zou, Y.B.; Wang, Y.B.; Zhou, W.L. Research on Line Laser Seam Tracking Method based on Guassian Kernelized Correlation Filters. Appl. Laser 2016, 36, 578–584.

- Chen, X.H.; Dharmawan, A.G.; Foong, S.H.; Soh, G.S. Seam tracking of large pipe structures for an agile robotic welding system mounted on scaffold structures. Robot. Comput. Integr. Manuf. 2018, 50, 242–255.

- Chang, D.Y.; Son, D.H.; Lee, J.W.; Kim, T.W.; Lee, K.Y.; Kim, J.W. A new seam-tracking algorithm through characteristic-point detection for a portable welding robot. Robot. Comput. Integr. Manuf. 2012, 28, 1–13.

- Wang, N.F.; Zhong, K.F.; Shi, X.D.; Zhang, X.M. A robust weld seam recognition method under heavy noise based on structured-light vision. Robot. Comput. Integr. Manuf. 2020, 61, 1–9.

- Matsui, S.; Goktug, G. Slit laser sensor guided real-time seam tracking arc welding robot system for non-uniform joint gaps, Industrial Technology. Proc. IEEE Int. Conf. Ind. Technol. 2002, 1, 159–162.

- Olaf, C.; Jakub, J.; Suszynski, M. Programming of Industrial Robots Using the Recognition of Geometric Signs in Flexible Welding Process. Symmetry 2020, 12, 1429.

- Shah, H.N.M.; Sulaiman, M.; Shukor, A.Z.; Kamis, Z.; Rahman, A.A. Butt welding joints recognition and location identification by using local thresholding. Robot. Comput. Integr. Manuf. 2018, 51, 181–188.

- Xinde, L.; Pei, L.; Omar, K.M.; Xiangheng, H.; Sam, G.S. A welding seam identification method based on cross-modal perception. Ind. Robot. Int. J. Robot. Res. Appl. 2019, 46, 453–459.

- Jakub, W.; Marcin, S. Optical scanner assisted robotic assembly. Assem. Autom. 2017, 37, 434–441.

- Marcin, S.; Jakub, W.; Jan, Z. No Clamp Robotic Assembly with Use of Point Cloud Data from Low-Cost Triangulation Scanner. Teh. Vjesn. Tech. Gaz. 2018, 25, 904–909.

- Zhou, G.H.; Xu, G.C.; Gu, X.P.; Liu, J.; Tian, Y.K.; Zhou, L. Simulation and experimental study on the quality evaluation of laser welds based on ultrasonic test. Int. J. Adv. Manuf. Technol. 2017, 93, 3897–3906.

- Yang, G.W.; Yan, S.M.; Wang, Y.Z. V-Shaped Seam Tracking Based on Particle Filter with Histogram of Oriented Gradient. Chin. J. Lasers 2020, 47, 330–338.

- He, Y.S.; Yu, Z.H.; Li, J.; Yu, L.S.; Ma, G.H. Discerning Weld Seam Profiles from Strong Arc Background for the Robotic Automated Welding Process via Visual Attention Features. Chin. J. Mech. Eng. 2020, 33, 799–816.

- Zou, Y.B.; Wang, Y.B.; Zhou, W.L.; Chen, X.Z. Real-time seam tracking control system based on line laser visions. Opt. Laser Technol. 2018, 103, 182–192.

- Shao, W.J.; Liu, X.F.; Wu, Z.J. A robust weld seam detection method based on particle filter for laser welding by using a passive vision sensor. Int. J. Adv. Manuf. Technol. 2019, 104, 2971–2980.

- Chen, W.J. Research on Seam Track Measuring System Based on Stripe Type Laser Sensor [Dissertation]; South China University of Technology: Guangzhou, China, 2018.

- Wang, X.Y.; Zhu, Z.M.; Zhou, F.Q.; Zhang, F.M. Complete calibration of a structured light stripe vision sensor through a single cylindrical target. Opt. Lasers Eng. 2020, 131, 106096.

- Li, L.; Lin, B.Q.; Zou, Y.B. Study on Seam Tracking System Based on Stripe Type Laser Sensor and Welding Robot. Chin. J. Lasers 2015, 42, 34–41.

- Kidong, L.; Insung, H.; Young-Min, K.; Huijun, L.; Munjin, K.; Jiyoung, Y. Real-Time Weld Quality Prediction Using a Laser Vision Sensor in a Lap Fillet Joint during Gas Metal Arc Welding. Sensors 2020, 20, 1625–1641.

- Jawad, M.; Halis, A.; Essam, A. Welding seam profiling techniques based on active vision sensing for intelligent robotic welding. Int. J. Adv. Manuf. Technol. 2017, 88, 127–145.

- Qiao, G.F.; Sun, D.L.; Song, G.M. A Rapid Coordinate Transformation Method for Serial Robot Calibration System. J. Mech. Eng. 2020, 56, 1–8.

- Lei, T.; Huang, Y.; Wang, H.; Rong, Y.M. Automatic weld seam tracking of tube-to-tube sheet TIG welding robot with multiple sensors. J. Manuf. Process. 2020, 3, 47–52.

- Kovacevic, R.; Zhang, S.B.; Zhang, M.Y. Noncontact Ultrasonic Sensing for Seam Tracking in Arc Welding Processes. J. Manuf. Sci. Eng. 1998, 120, 600–608.

| Discontinuity Type | Amplitude | First Derivative | Second Derivative |
|---|---|---|---|
| The first | Continuous | Step change | Extremum |
| The second | Continuous | Non-existent | / |
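The first row of the table above suggests a simple detector: where the profile height stays continuous but its slope jumps, the second derivative shows a local extremum. The sketch below illustrates that idea on a sampled laser profile using finite differences. It assumes uniformly spaced samples and a hand-picked `threshold`; both, along with the function name, are illustrative choices rather than details taken from the paper.

```python
import numpy as np


def find_corner_points(z, threshold):
    """Return sample indices where |second difference| has a local peak.

    z         : 1-D sequence of profile heights on a uniform grid (assumed)
    threshold : minimum |second difference| to accept (hypothetical tuning value)
    """
    z = np.asarray(z, dtype=float)
    # d2[i] = z[i+2] - 2*z[i+1] + z[i], i.e. centred on sample i + 1.
    mag = np.abs(np.diff(z, n=2))
    corners = []
    for i in range(len(mag)):
        left = mag[i - 1] if i > 0 else -np.inf
        right = mag[i + 1] if i < len(mag) - 1 else -np.inf
        # Local maximum above the threshold; ties resolve to the last sample.
        if mag[i] > threshold and mag[i] >= left and mag[i] > right:
            corners.append(i + 1)
    return corners


# Synthetic V-groove: flat plate, descending wall, ascending wall, flat plate.
profile = [0, 0, 0, 0, 0, -1, -2, -3, -2, -1, 0, 0, 0, 0]
corner_indices = find_corner_points(profile, threshold=0.5)
```

On real sensor data the second difference amplifies noise, so a detector like this would normally run after the filtering step and would serve only as an initial estimate.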

| Feature Points | A | B | C | D | E | F |
|---|---|---|---|---|---|---|
| X/mm | −5.67 | −3.37 | −3.02 | 0.72 | 1.11 | 3.59 |
| Z/mm | −1.35 | 2.89 | 6.03 | 6.01 | 3.15 | −1.02 |

| Fitting Straight Line | 1 | 2 | 3 | 4 | 5 | 6 | 7 |
|---|---|---|---|---|---|---|---|
| SSE | 0.08 | 0.44 | 0.39 | 0.15 | 0.50 | 0.15 | 0.21 |
| R-squared | 0.85 | 0.99 | 0.95 | 0.87 | 0.97 | 0.99 | 0.81 |
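For readers unfamiliar with the two fit statistics reported above, the sketch below shows how SSE and R-squared can be computed for a least-squares line through one segment of the profile, and how two adjacent fitted lines can be intersected. Treating the refined feature points as intersections of neighbouring fitted lines is an assumption made here for illustration, since the surviving text reports only the fit statistics; the function names are likewise hypothetical.

```python
import numpy as np


def fit_line(x, z):
    """Least-squares fit z = k*x + b; returns (k, b, sse, r_squared)."""
    x = np.asarray(x, dtype=float)
    z = np.asarray(z, dtype=float)
    k, b = np.polyfit(x, z, 1)
    residuals = z - (k * x + b)
    sse = float(np.sum(residuals ** 2))        # sum of squared errors
    sst = float(np.sum((z - z.mean()) ** 2))   # total sum of squares
    r_squared = 1.0 - sse / sst if sst > 0 else float("nan")
    return float(k), float(b), sse, r_squared


def intersect(k1, b1, k2, b2):
    """Intersection (x, z) of z = k1*x + b1 and z = k2*x + b2."""
    if np.isclose(k1, k2):
        raise ValueError("lines are parallel; no unique intersection")
    x = (b2 - b1) / (k1 - k2)
    return x, k1 * x + b1


# Illustrative, noise-free data (not taken from the paper).
k, b, sse, r2 = fit_line([0, 1, 2, 3], [1, 3, 5, 7])
corner = intersect(2.0, 1.0, -1.0, 4.0)
```

A slope-intercept model becomes ill-conditioned for nearly vertical groove walls; fitting x as a function of z for those segments, or using a total-least-squares fit, would be a reasonable variation.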

| Feature Points | A | B | C | D | E | F |
|---|---|---|---|---|---|---|
| X/mm | −5.73 | −3.31 | −3.04 | 0.78 | 1.10 | 3.76 |
| Z/mm | −1.39 | 3.07 | 5.98 | 5.99 | 3.22 | −1.18 |

| Welding Type | Dimension/mm | Thickness/mm | Slope Angle/° | Blunt Edge/mm |
|---|---|---|---|---|
| Straight line | 100 × 60 | 8 | 45 | 2.5 |
| Curve | 130 × 70 | 10 | 60 | 3 |

| Welding Type | Initial Positioning dx/mm | Initial Positioning dz/mm | Initial Positioning px/% | Initial Positioning pz/% | Precise Positioning dx/mm | Precise Positioning dz/mm | Precise Positioning px/% | Precise Positioning pz/% |
|---|---|---|---|---|---|---|---|---|
| Straight line | 0.628 | 0.214 | 6.688 | 2.665 | 0.387 | 0.230 | 4.121 | 2.864 |
| Curve | 0.736 | 0.185 | 7.838 | 2.304 | 0.429 | 0.251 | 4.569 | 3.126 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Zhang, G.; Zhang, Y.; Tuo, S.; Hou, Z.; Yang, W.; Xu, Z.; Wu, Y.; Yuan, H.; Shin, K. A Novel Seam Tracking Technique with a Four-Step Method and Experimental Investigation of Robotic Welding Oriented to Complex Welding Seam. Sensors 2021, 21, 3067. https://doi.org/10.3390/s21093067