On the Use of Movement-Based Interaction with Smart Textiles for Emotion Regulation

Abstract

1. Introduction

- Body movements and emotions. The experiment aims to find out which body movements have a positive impact on users’ emotional state(s);

- Feedback mechanisms. Because the type of augmented sensory feedback may also have an impact on users’ emotional state(s), the experiment aims to explore users’ preferences for feedback mechanisms when eliciting specific movements;

- Movement and feedback mechanisms. We also want to determine if there is an interaction effect between movement(s) and feedback mechanism(s);

- Emotion assessment. Finally, we want to determine whether participants’ emotional self-assessment is consistent with their emotional expressions when evaluating the prototype.
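The interaction question in the list above can be illustrated with a difference-of-differences contrast on cell means. The following is only a minimal sketch under stated assumptions: it uses a simplified 2×2 design, the condition labels (`expand`, `contract`, `audio`, `vibration`) are hypothetical, and the ratings are made-up values chosen for illustration, not data from this study. It is not the statistical analysis used in the paper.

```python
from statistics import mean


def cell_means(ratings):
    """Average the ratings in each (movement, feedback) cell."""
    return {cell: mean(values) for cell, values in ratings.items()}


def interaction_contrast(means, movements, feedbacks):
    """Difference of differences for a 2x2 design.

    A value near zero suggests that the effect of the feedback
    mechanism is similar for both movements; a value far from zero
    hints at an interaction that a formal test would need to confirm.
    """
    (m1, m2), (f1, f2) = movements, feedbacks
    effect_in_m1 = means[(m1, f1)] - means[(m1, f2)]
    effect_in_m2 = means[(m2, f1)] - means[(m2, f2)]
    return effect_in_m1 - effect_in_m2


# Made-up illustrative ratings (NOT data from the study).
ratings = {
    ("expand", "audio"): [7, 8, 6],
    ("expand", "vibration"): [5, 6, 4],
    ("contract", "audio"): [4, 5, 3],
    ("contract", "vibration"): [4, 4, 4],
}

means = cell_means(ratings)
contrast = interaction_contrast(
    means, ("expand", "contract"), ("audio", "vibration")
)
print(contrast)
```

A contrast like this is only descriptive; deciding whether an interaction is statistically reliable would require an appropriate inferential test on the full design.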

2. Related Work

2.1. Emotion Regulation and Body Movements

2.2. Feedback Mechanisms and Emotions

2.3. Smart Textiles and Emotions

3. E-motionWear

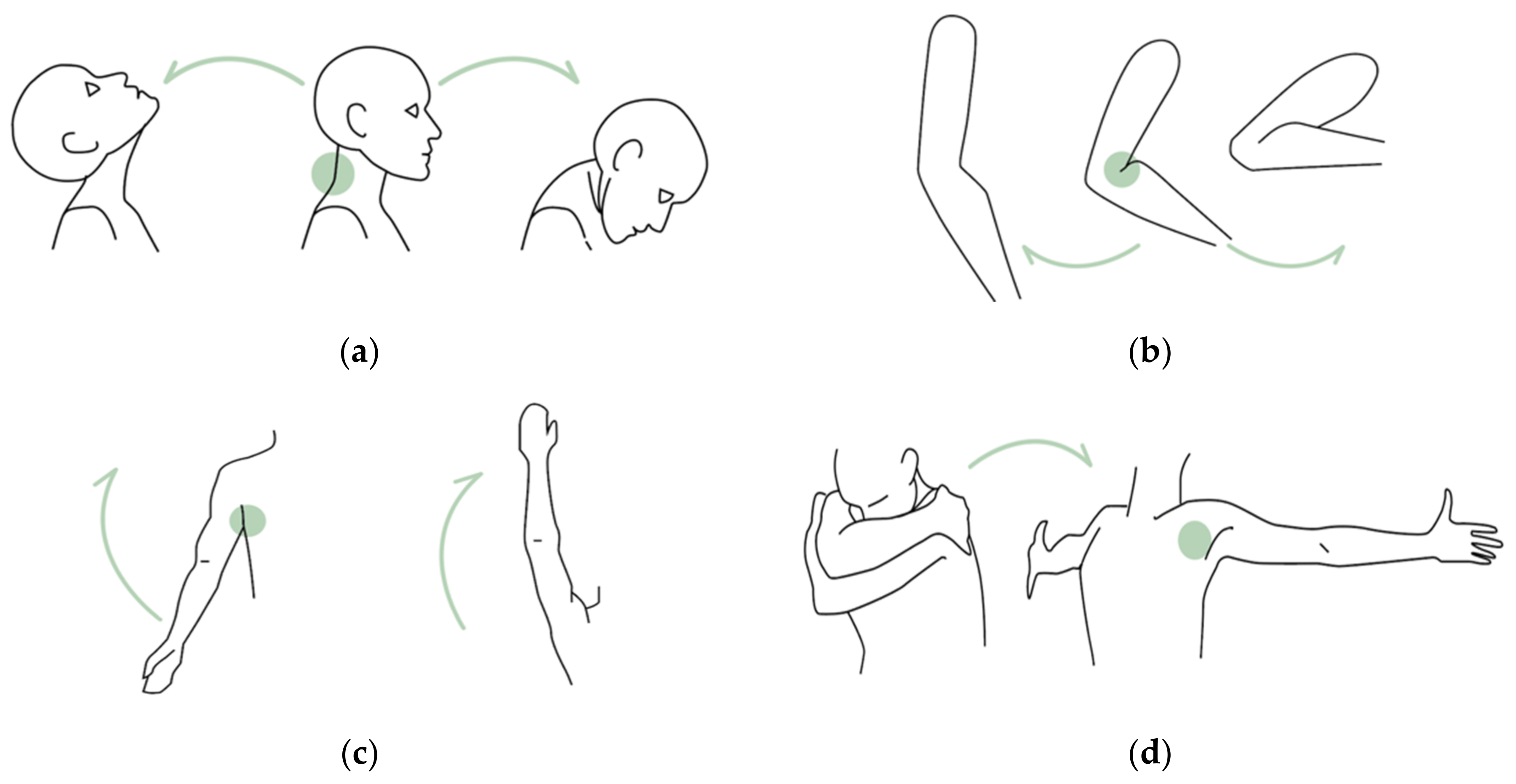

3.1. Movements

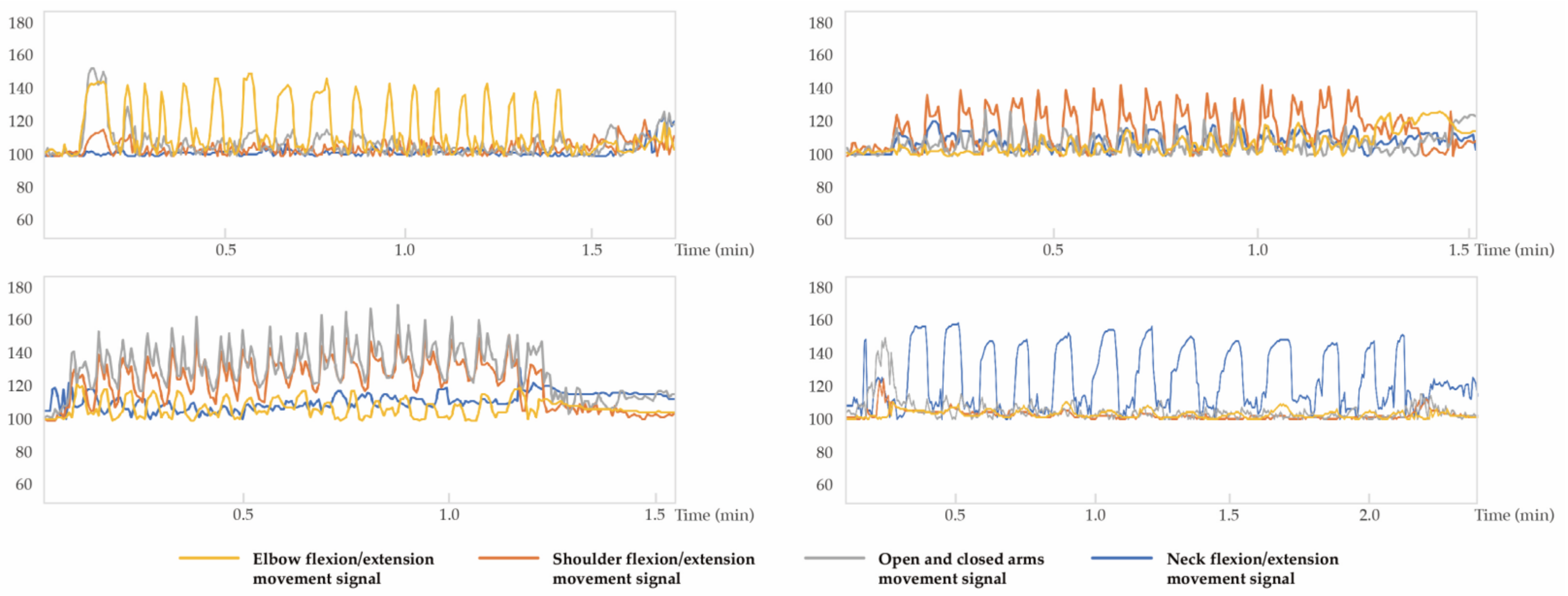

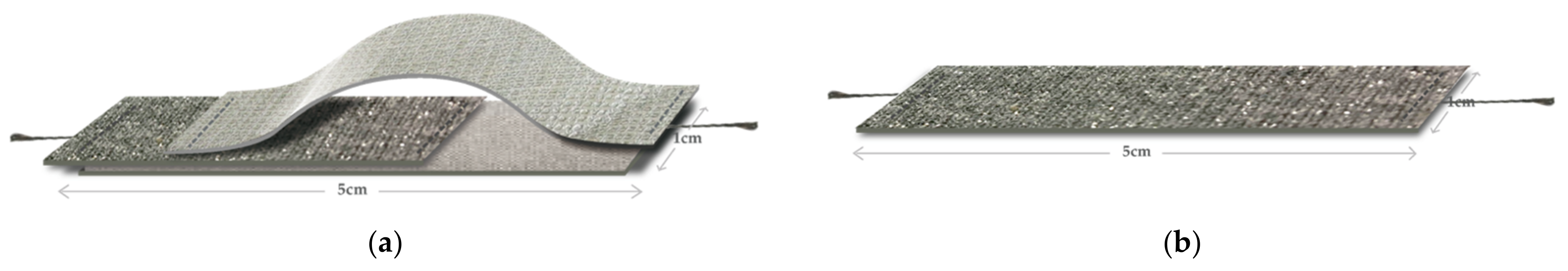

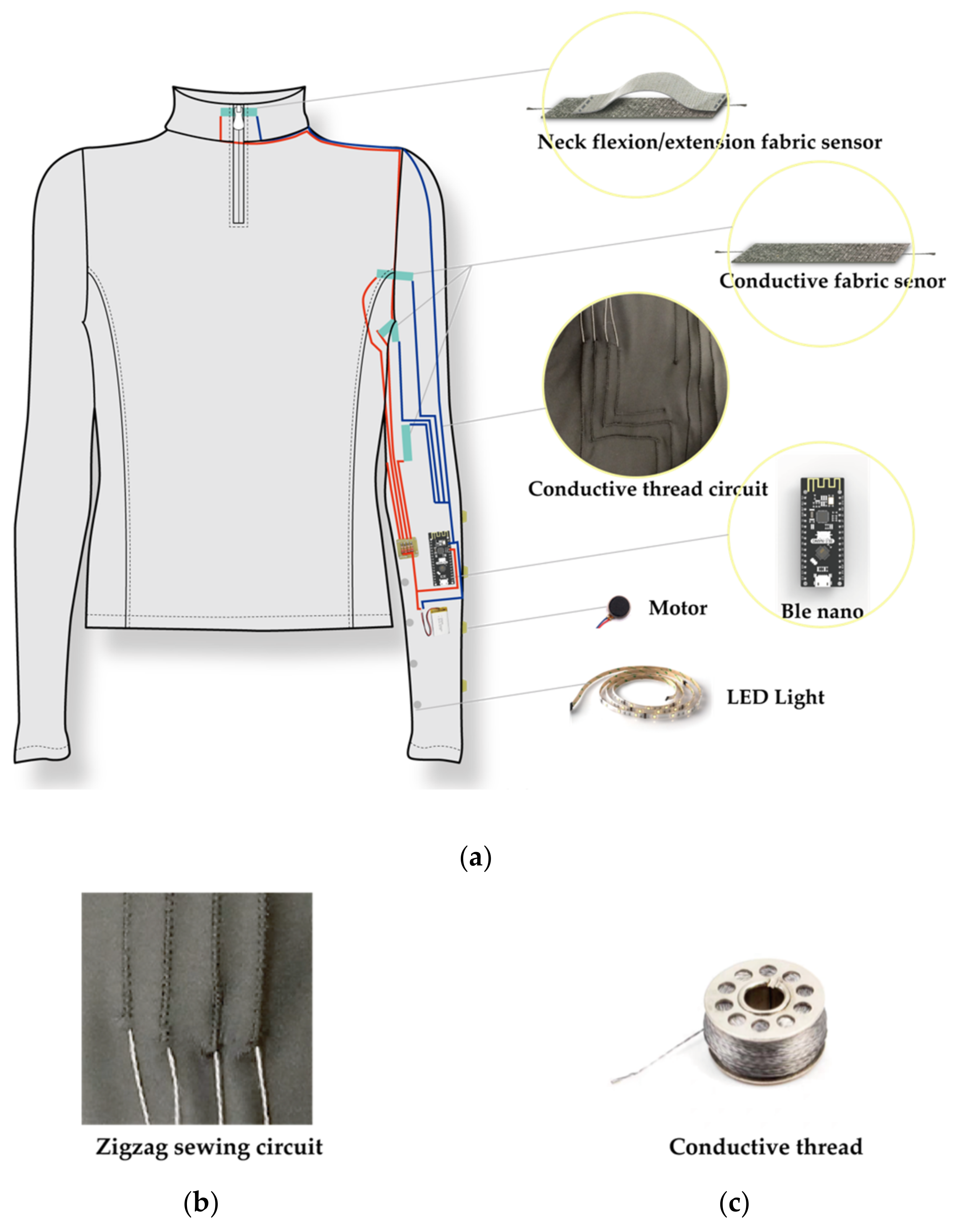

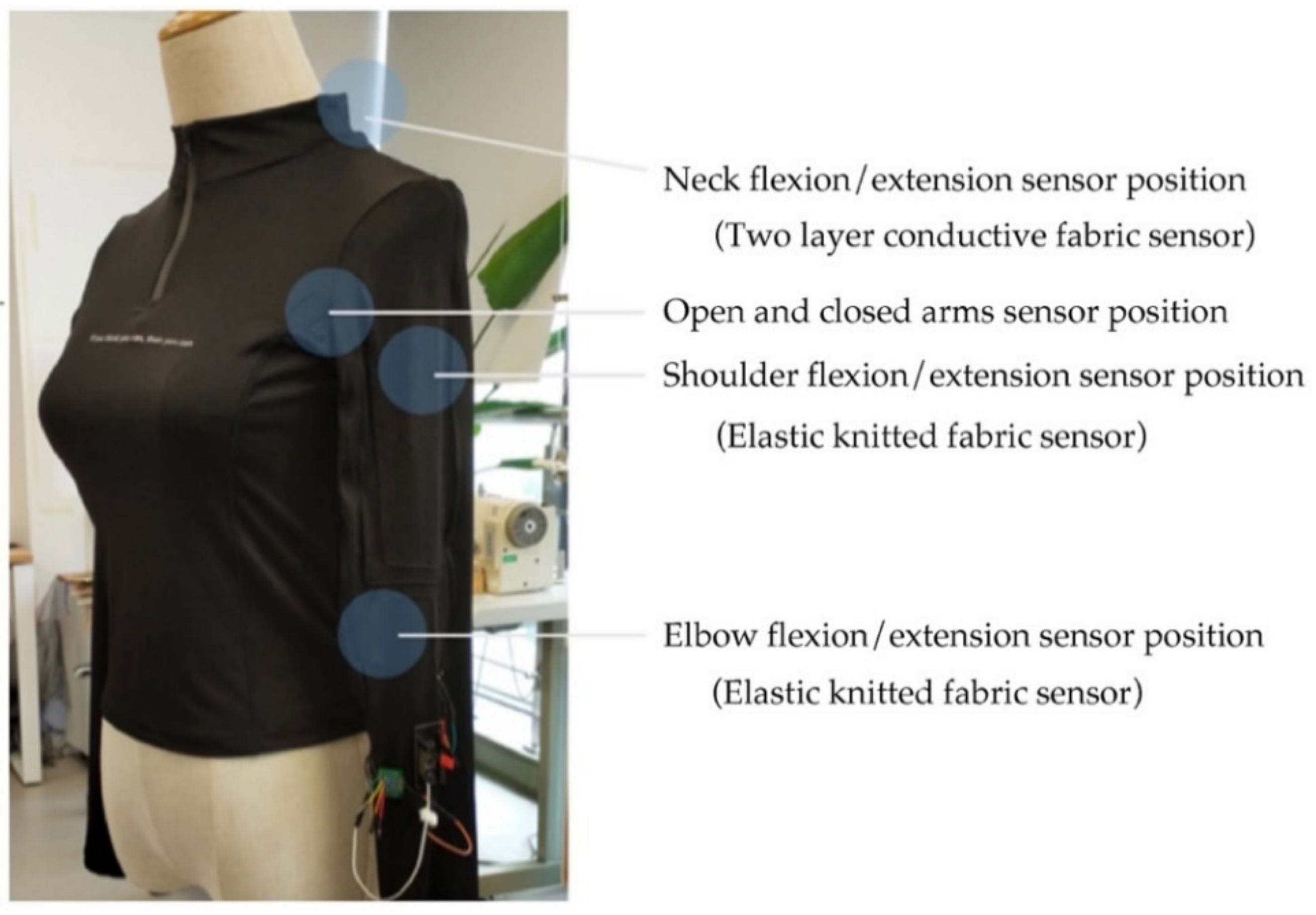

3.2. E-MotionWear Sensing

3.3. Feedback Mechanisms

3.4. E-MotionWear Implementation

4. User Evaluation

4.1. Participants

4.2. Measures

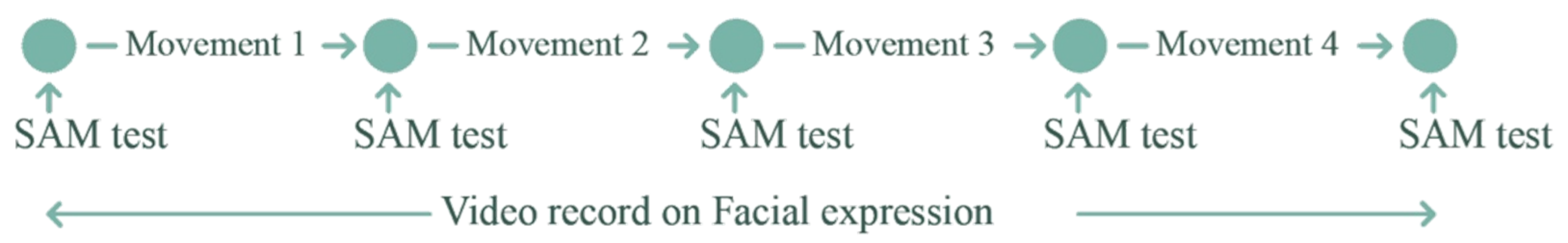

- Participants’ subjective emotional feelings were collected using the Self-Assessment Manikin (SAM) [51] questionnaire;

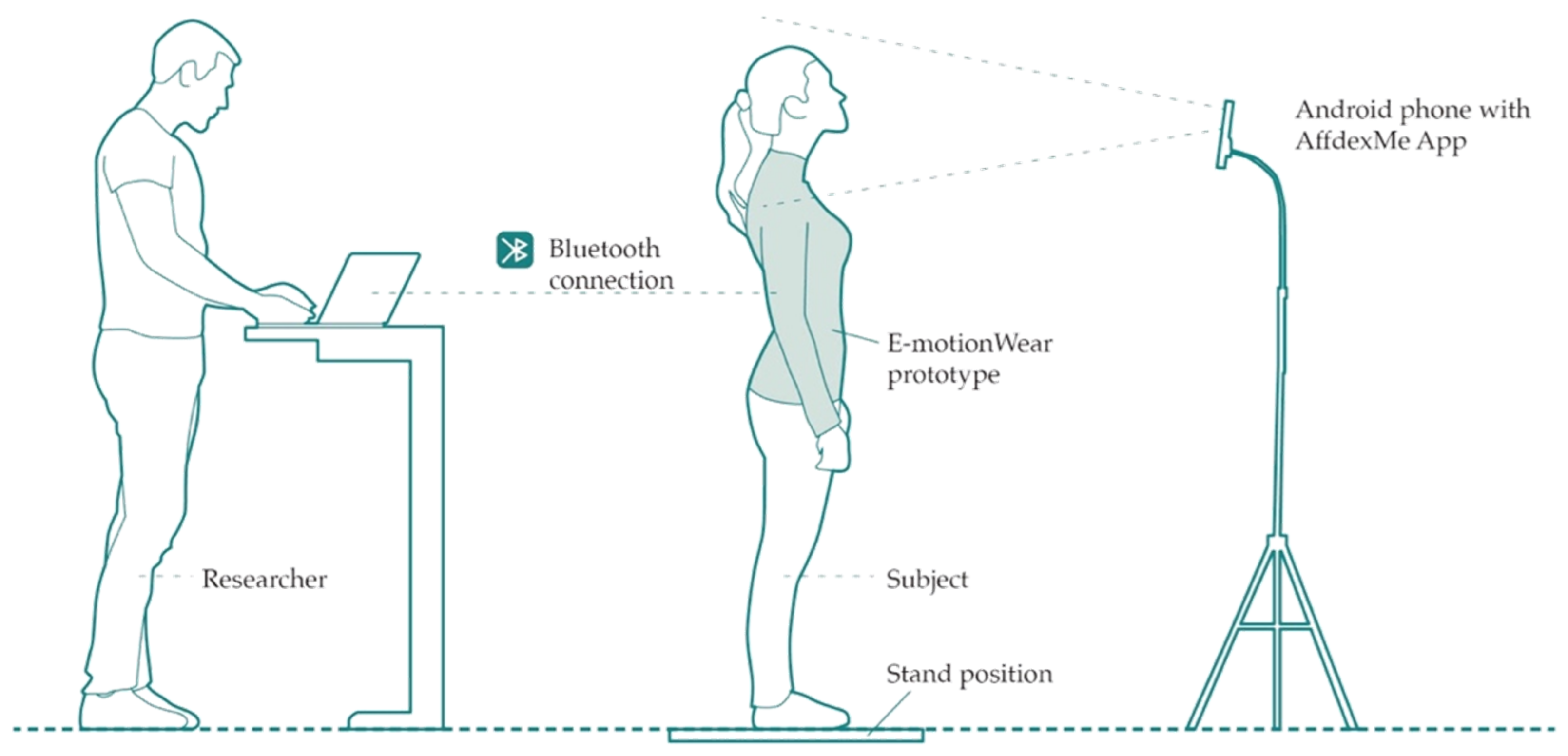

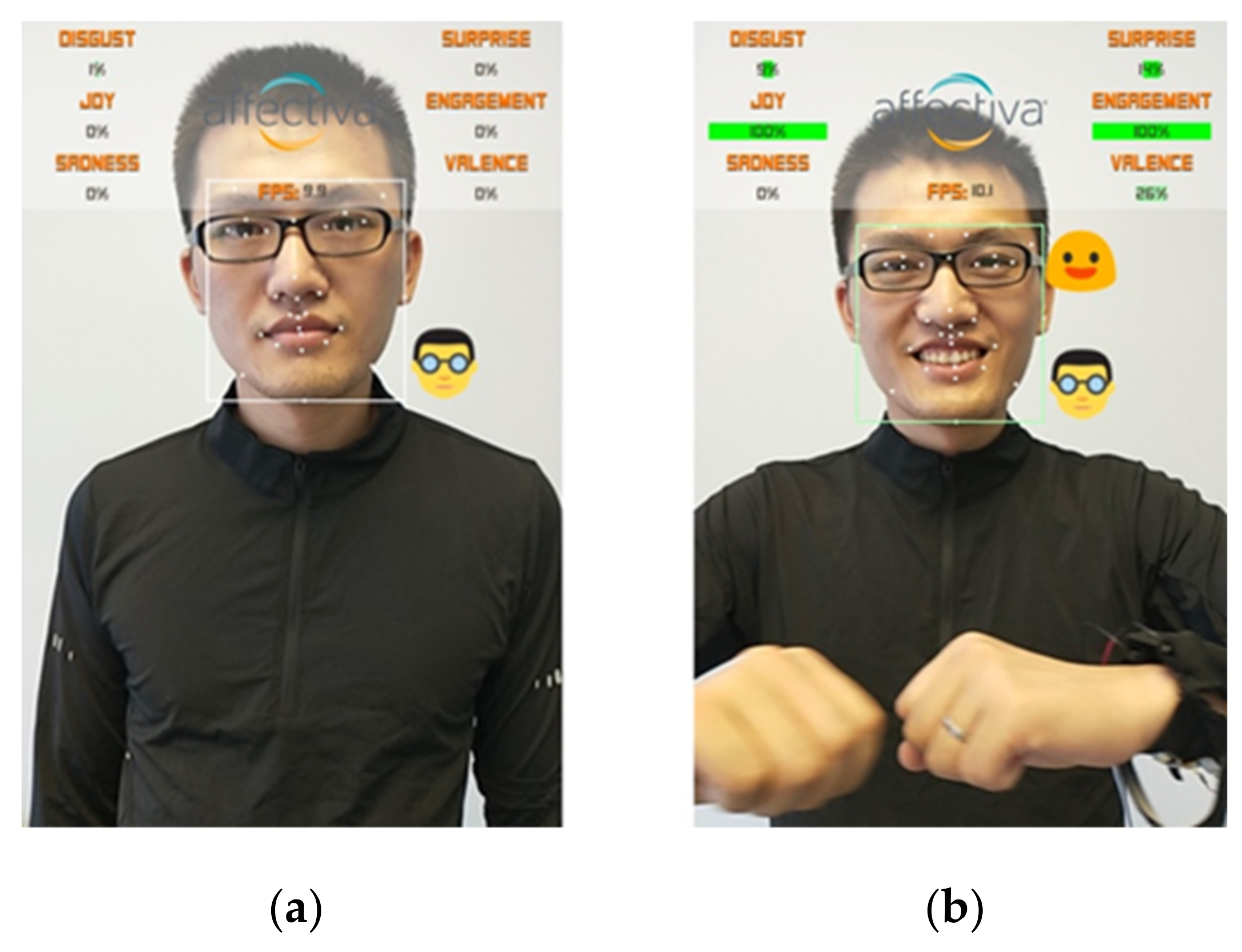

- The AffdexMe App [52] was used to capture facial emotions. This study considered the following AffdexMe facial emotions: Disgust, Joy, Sadness, Surprise, Engagement, and Valence;

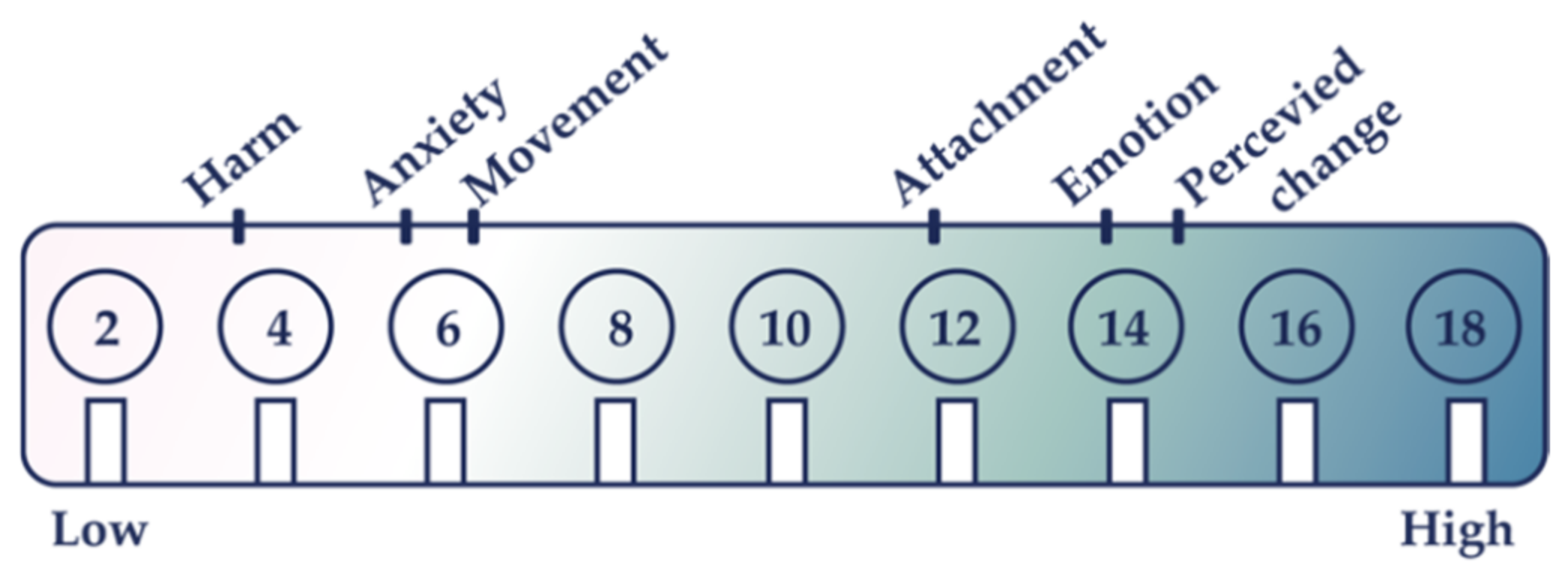

- Our prototype’s wearable comfort was evaluated using the Comfort Rating Scale (CRS) [53], rated on a scale from 0 to 20.
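One possible way to check whether self-reported and facial measures move together is a Pearson correlation between the two series. The sketch below is an assumption-laden illustration: the function name `pearson`, the variable names, and the sample values are hypothetical, the numeric ranges of the SAM and AffdexMe valence scores are not taken from the source, and this is not presented as the analysis performed in the study.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length >= 2")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    # Sum of products of deviations and the two deviation norms.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    norm_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    norm_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    if norm_x == 0 or norm_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / (norm_x * norm_y)


# Made-up illustrative values (NOT data from the study); the scales
# used here are assumptions for the sake of the example.
sam_valence = [3, 5, 7, 8]
facial_valence = [-10.0, 0.0, 20.0, 25.0]

r = pearson(sam_valence, facial_valence)
print(round(r, 3))
```

A coefficient close to 1 would indicate that higher self-reported valence tends to coincide with higher facial valence; with ordinal ratings, a rank-based coefficient may be a more suitable choice.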

4.3. Apparatus

4.4. Procedure

5. Results

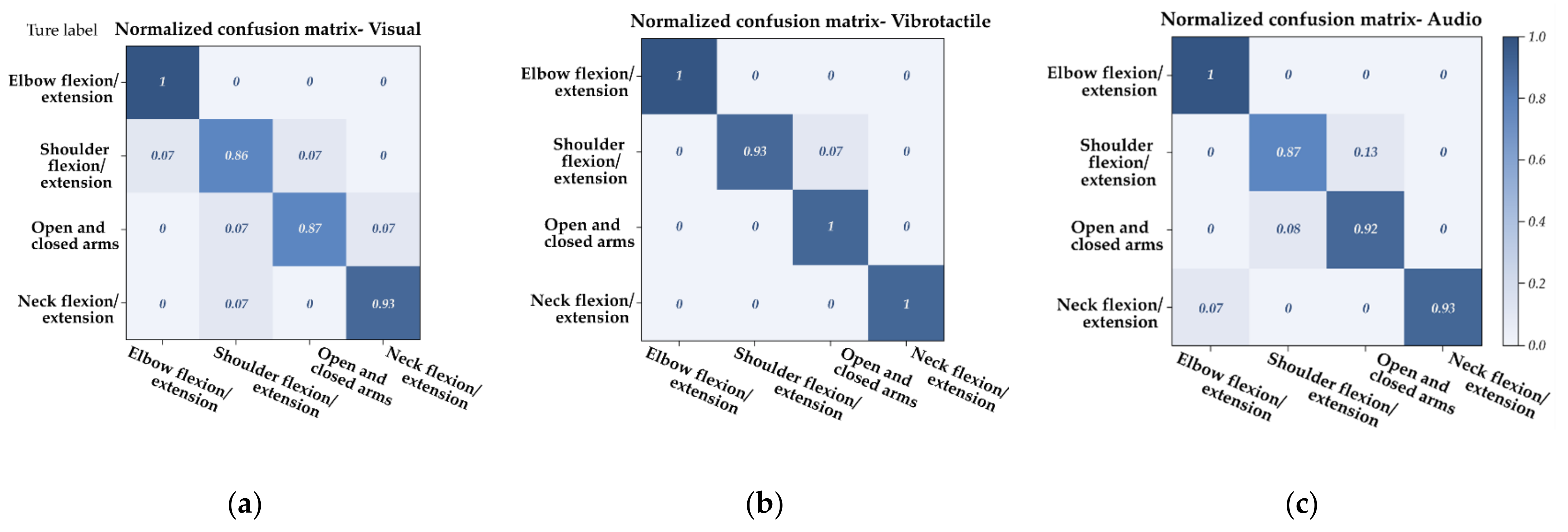

5.1. E-MotionWear Performance Assessment with Confusion Matrix

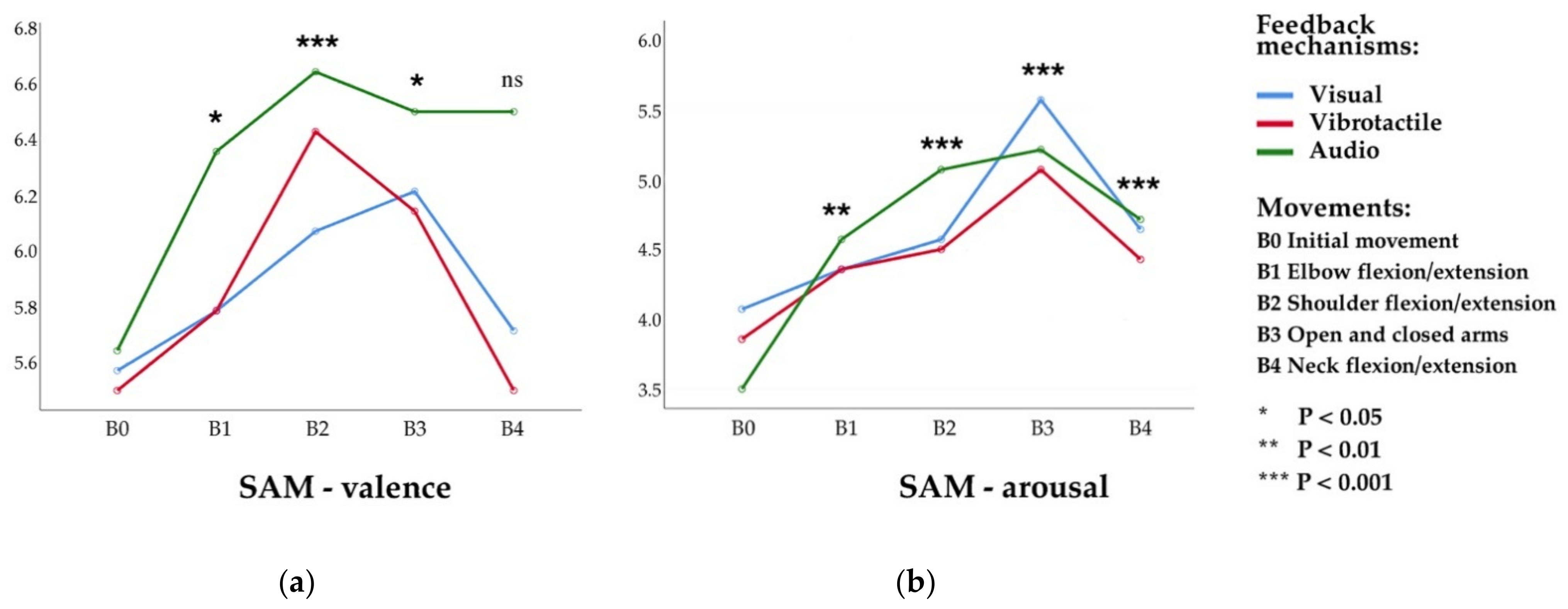

5.2. Self-Assessment Manikin (SAM) Analysis

5.2.1. SAM-Valence

5.2.2. SAM-Arousal

5.2.3. SAM-Dominance

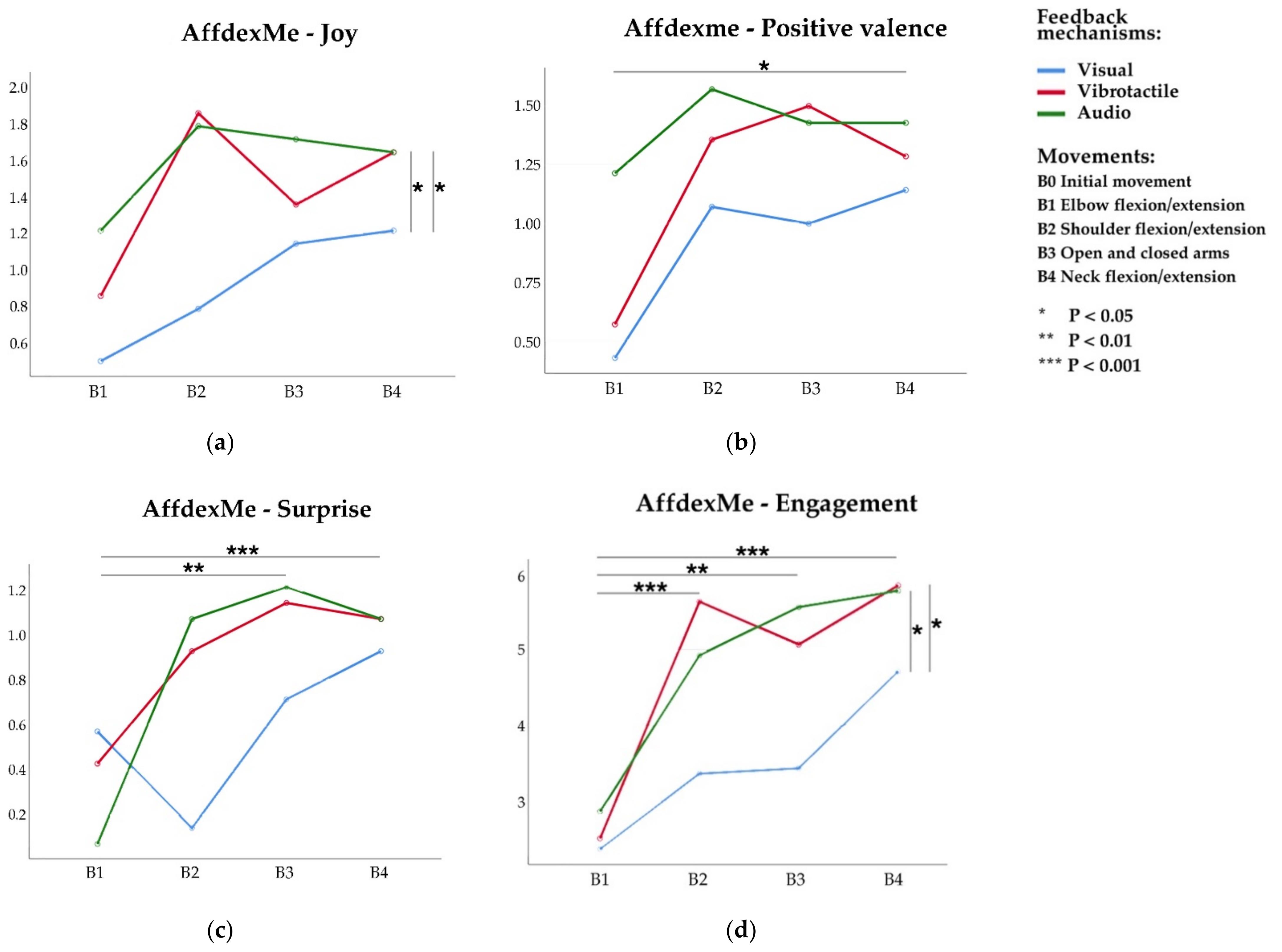

5.3. Facial Expression Analysis

5.3.1. Joy

5.3.2. Surprise

5.3.3. Positive Valence

5.3.4. Engagement

5.4. Wearable Comfort of E-MotionWear

5.5. Participants’ Feedback

6. Discussion

6.1. Experimental Evidence

6.2. Design Recommendations

- Easily perceivable and pleasant feedback to motivate movements. The feedback mechanism should provide a pleasant user experience and present information clearly without confusing users. Given that the wearer will be in different environments and doing different activities on any given day, it is better to let users choose their preferred feedback mechanisms. As stated in [36], there is a need for the personalization of movement-based interaction. However, when using movement-based interactive textiles, visual feedback is not easy to perceive, and more consideration could be given to tactile and auditory feedback. In addition, as mentioned in [55], vibrotactile feedback can be noticed only on selected body areas, where it provides an intuitive correspondence to the user's movement. The amplitude, frequency, or melody of the feedback will also affect the user and should be adjusted according to the actual use scenario.

- Upper-body movements to promote positive emotions. It is important to note that not all movements lead to positive emotions. Among the four movements in the experiment, the body-expanding and upwards movements proved more effective in promoting positive emotions. Using the upper body to execute these movements is more comfortable, both sitting and standing. Effective movements mainly focus on arm activities. However, the movements should not be too complicated and should be easy for users to remember. As individuals have their own movement preferences, a wearable system should offer users multiple choices instead of a single movement interaction. Movements should be designed according to their use scenario and users’ physical conditions. Excessive or improper exercise may cause physical fatigue and injury, so more attention should be paid to protecting the body, for example during neck movements.

- Favour fabric sensors. As [56] concludes, video, optical, and accelerometer-based body motion analysis systems are limited in their applicability. In this case, fabric-based sensors could provide a low-cost solution, especially for long-term movement monitoring. By varying fiber materials and structures, multiple fabric movement sensors could be developed to fulfil different requirements.

- Flexible smart t-shirt for movement detection. We had several considerations when designing the E-motionWear prototype. The smart textile prototype should be flexible and comfortable for the wearer, fit different body movements and shapes, and be able to detect any required movement and its amplitude. Elastic jersey or knitted fabrics are widely used in smart textiles for monitoring body movements and have proved effective, but some users may find them uncomfortable if they are too tight.

- Aesthetics. Electronic components should be hidden using e-textile technologies, such as conductive thread and fabric sensors, to avoid unnecessary concern and anxiety among users. This can be achieved by incorporating traditional clothing manufacturing techniques into the design of interactive textiles. The intention to use new technologies tends to decline with age [30]. However, interactive textile interfaces could reduce the obtrusiveness of wearing electronic devices and thereby increase user acceptance from both appearance and psychological aesthetics perspectives.

6.3. Limitations and Future Work

7. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Appendix A

References

- Barrett, L.F.; Bliss-Moreau, E. Chapter 4 Affect as a Psychological Primitive. Adv. Exp. Soc. Psychol. 2009, 41, 167–218. [Google Scholar] [CrossRef]

- Mauss, I.B.; Levenson, R.W.; McCarter, L.; Wilhelm, F.H.; Gross, J.J. The tie that binds? Coherence among emotion experience, behavior, and physiology. Emotion 2005, 5, 175. [Google Scholar] [CrossRef] [PubMed]

- Sapolsky, R.M. Stress, Stress-Related Disease, and Emotional Regulation. Handbook of Emotion Regulation; The Guilford Press: New York, NY, USA, 2007; pp. 606–615. [Google Scholar]

- Baghaei, N.; Naslund, J.; Hach, S.; Liang, H.N. Designing Technologies for Youth Mental Health: Preliminary Studies of User Preferences, Intervention Acceptability, and Prototype Testing. Front. Public Health 2020, 8, 45. [Google Scholar] [CrossRef] [PubMed]

- DeSteno, D.; Gross, J.J.; Kubzansky, L. Affective science and health: The importance of emotion and emotion regulation. Health Psychol. 2013, 32, 474–486. [Google Scholar] [CrossRef] [PubMed]

- Koole, S.L. The psychology of emotion regulation: An integrative review. Cogn. Emot. 2009, 23, 4–41. [Google Scholar] [CrossRef]

- De Rooij, A. Toward emotion regulation via physical interaction. In Proceedings of the Companion Publication of the 19th International Conference on Intelligent User Interfaces 2014, Haifa, Israel, 24–27 February 2014; pp. 57–60. [Google Scholar]

- Shafir, T. Using movement to regulate emotion: Neurophysiological findings and their application in psychotherapy. Front. Psychol. 2016, 7, 1451. [Google Scholar] [CrossRef]

- Shafir, T.; Tsachor, R.P.; Welch, K.B. Emotion Regulation through Movement: Unique Sets of Movement Characteristics are Associated with and Enhance Basic Emotions. Front. Psychol. 2016, 6, 2030. [Google Scholar] [CrossRef]

- Cherenack, K.; van Pieterson, L. Smart textiles: Challenges and opportunities. J. Appl. Phys. 2012, 112, 091301. [Google Scholar] [CrossRef]

- Van der Lugt, B.; Feijs, L. Stress reduction in everyday wearables: Balanced. In Proceedings of the 2019 ACM International Joint Conference on Pervasive and Ubiquitous Computing and Proceedings of the 2019 ACM International Symposium on Wearable Computers 2019, London, UK, 9–13 September 2019; pp. 1050–1053. [Google Scholar]

- Segura, E.M.; Vidal, L.T.; Rostami, A. Bodystorming for movement-based interaction design. Hum. Technol. 2016, 12, 193–251. [Google Scholar] [CrossRef]

- Ho, A.G.; Siu, K.W.M. Emotion Design, Emotional Design, Emotionalize Design: A Review on Their Relationships from a New Perspective. Des. J. 2012, 15, 9–32. [Google Scholar] [CrossRef]

- Wang, K.J.; Zheng, C.Y. Wearable robot for mental health intervention: A pilot study on EEG brain activities in response to human and robot affective touch. In Proceedings of the 2019 ACM International Joint Conference on Pervasive and Ubiquitous Computing and Proceedings of the 2019 ACM International Symposium on Wearable Computers 2019, London, UK, 9–13 September 2019; pp. 949–953. [Google Scholar]

- Ashford, R. Responsive and Emotive Wearable Technology: Physiological Data, Devices and Communication. Ph.D. Thesis, Goldsmiths, University of London, London, UK, 2018. [Google Scholar]

- Uğur, S. Wearing Embodied Emotions: A Practice Based Design Research on Wearable Technology; Springer: Milan, Italy, 2013. [Google Scholar]

- El Ali, A.; Yang, X.; Ananthanarayan, S.; Röggla, T.; Jansen, J.; Hartcher-O’Brien, J.; Cesar, P. ThermalWear: Exploring Wearable On-chest Thermal Displays to Augment Voice Messages with Affect. In Proceedings of the 2020 CHI Conference on Human Factors in Computing Systems 2020, Honolulu, HI, USA, 25–30 April 2020; pp. 1–14. [Google Scholar]

- Shafir, T. Movement-based strategies for emotion regulation. In Handbook on Emotion Regulation: Processes, Cognitive Effects and Social Consequences; Nova Science Publishers, Inc.: New York, NY, USA, 2015; pp. 231–249. [Google Scholar]

- Rossberg-Gempton, I.; Poole, G.D. The effect of open and closed postures on pleasant and unpleasant emotions. Arts Psychother. 1993, 20, 75–82. [Google Scholar] [CrossRef]

- De Rooij, A.; Jones, S. (E) motion and creativity: Hacking the function of motor expressions in emotion regulation to augment creativity. In Proceedings of the Ninth International Conference on Tangible, Embedded, and Embodied Interaction 2015, Stanford, CA, USA, 15–19 January 2015; pp. 145–152. [Google Scholar]

- De Rooij, A.; Corr, P.J.; Jones, S. Creativity and emotion: Enhancing creative thinking by the manipulation of computational feedback to determine emotional intensity. In Proceedings of the 2017 ACM SIGCHI Conference on Creativity and Cognition, Singapore, 27–30 June 2017; pp. 148–157. [Google Scholar]

- Hao, N.; Xue, H.; Yuan, H.; Wang, Q.; Runco, M.A. Enhancing creativity: Proper body posture meets proper emotion. Acta Psychol. 2017, 173, 32–40. [Google Scholar] [CrossRef] [PubMed]

- Casasanto, D.; Dijkstra, K. Motor action and emotional memory. Cognition 2010, 115, 179–185. [Google Scholar] [CrossRef] [PubMed]

- Cacioppo, J.T.; Priester, J.R.; Berntson, G.G. Rudimentary determinants of attitudes: II. Arm flexion and extension have differential effects on attitudes. J. Personal. Soc. Psychol. 1993, 65, 5. [Google Scholar] [CrossRef]

- Darwin, C.; Prodger, P. The Expression of the Emotions in Man and Animals; Oxford University Press: Oxford, UK, 1998. [Google Scholar]

- Lang, P.J.; Greenwald, M.K.; Bradley, M.M.; Hamm, A.O. Looking at pictures: Affective, facial, visceral, and behavioral reactions. Psychophysiology 1993, 30, 261–273. [Google Scholar] [CrossRef] [PubMed]

- Damasio, A.R. The Feeling of What Happens: Body and Emotion in the Making of Consciousness, 1st ed; Harcourt Brace: New York, NY, USA, 1999; p. 12. [Google Scholar]

- Francis, A.L.; Beemer, R.C. How does yoga reduce stress? Embodied cognition and emotion highlight the influence of the musculoskeletal system. Complement. Ther. Med. 2019, 43, 170–175. [Google Scholar] [CrossRef] [PubMed]

- Punkanen, M.; Saarikallio, S.; Luck, G. Emotions in motion: Short-term group form Dance/Movement Therapy in the treatment of depression: A pilot study. Arts Psychother. 2014, 41, 493–497. [Google Scholar] [CrossRef]

- Peek, S.T.M.; Wouters, E.J.M.; Van Hoof, J.; Luijkx, K.; Boeije, H.R.; Vrijhoef, H.J.M. Factors influencing acceptance of technology for aging in place: A systematic review. Int. J. Med. Inf. 2014, 83, 235–248. [Google Scholar] [CrossRef]

- Berthouze, N.; Isbister, K. Emotion and Body-Based Games: Overview and Opportunities. In Principles of Noology; Springer Nature: Berlin, Germany, 2016; pp. 235–255. [Google Scholar]

- Zangouei, F.; Gashti, M.A.B.; Höök, K.; Tijs, T.; de Vries, G.J.; Westerink, J. How to stay in the emotional rollercoaster: Lessons learnt from designing EmRoll. In Proceedings of the 6th Nordic Conference on Human-Computer Interaction: Extending Boundaries 2010, Reykjavik, Iceland, 16–20 October 2010; pp. 571–580. [Google Scholar]

- Melzer, A.; Derks, I.; Heydekorn, J.; Steffgen, G. Click or Strike: Realistic versus Standard Game Controls in Violent Video Games and Their Effects on Aggression; Springer: Berlin/Heidelberg, Germany, 2010; pp. 171–182. [Google Scholar]

- Isbister, K. How to stop being a buzzkill: Designing yamove!, a mobile tech mash-up to truly augment social play. In Proceedings of the 14th International Conference on Human-Computer Interaction with Mobile Devices and Services Companion 2012, San Francisco, CA, USA, 21–24 September 2012; pp. 1–4. [Google Scholar]

- Sigrist, R.; Rauter, G.; Riener, R.; Wolf, P. Augmented visual, auditory, haptic, and multimodal feedback in motor learning: A review. Psychon. Bull. Rev. 2013, 20, 21–53. [Google Scholar] [CrossRef]

- Bhömer, M.T.; Du, H. Designing Personalized Movement-based Representations to Support Yoga. In Proceedings of the 2018 ACM Conference Companion Publication on Designing Interactive Systems, Hong Kong, China, 9–13 June 2018; pp. 283–287. [Google Scholar]

- Alagarai Sampath, H.; Indurkhya, B.; Lee, E.; Bae, Y. Towards multimodal affective feedback: Interaction between visual and haptic modalities. In Proceedings of the 33rd Annual ACM Conference on Human Factors in Computing Systems 2015, Seoul, Korea, 18–23 April 2015; pp. 2043–2052. [Google Scholar]

- Wilson, G.A.; Freeman, E.; Brewster, S.A. Multimodal affective feedback: Combining thermal, vibrotactile, audio and visual signals. In Proceedings of the 18th ACM International Conference on Multimodal Interaction 2016, Tokyo, Japan, 12–16 November 2016; pp. 400–401.

- Salminen, K.; Surakka, V.; Lylykangas, J.; Raisamo, J.; Saarinen, R.; Raisamo, R.; Rantala, J.; Evreinov, G. Emotional and behavioral responses to haptic stimulation. In Proceedings of the Twenty-Sixth Annual CHI Conference on Human Factors in Computing Systems—CHI ’08, ACM, Florence, Italy, 5–10 April 2008; pp. 1555–1562. [Google Scholar]

- Yoshida, S.; Tanikawa, T.; Sakurai, S.; Hirose, M.; Narumi, T. Manipulation of an emotional experience by real-time deformed facial feedback. In Proceedings of the 4th Augmented Human International Conference on—AH ’13, Stuttgart, Germany, 7–8 March 2013; pp. 35–42. [Google Scholar]

- Macdonald, S.A.; Brewster, S.; Pollick, F. Eliciting Emotion with Vibrotactile Stimuli Evocative of Real-World Sensations. In Proceedings of the 2020 International Conference on Multimodal Interaction, ACM, Utrecht, The Netherlands, 11–15 October 2020; pp. 125–133. [Google Scholar]

- Graham-Knight, J.B.; Corbett, J.; Lasserre, P.; Liang, H.N.; Hasan, K. Exploring Haptic Feedback for Common Message Notification Between Intimate Couples with Smartwatches. In Proceedings of the 32nd Australian Conference on Human-Computer Interaction (OzCHI’ 20), ACM, Sydney, Australia, 1–4 December 2020; pp. 1–12. [Google Scholar]

- Bresin, R.; de Witt, A.; Papetti, S.; Civolani, M.; Fontana, F. Expressive sonification of footstep sounds. In Proceedings of the ISon 2010, Stockholm, Sweden, 7 April 2010; pp. 51–54. [Google Scholar]

- Tajadura-Jiménez, A.; Basia, M.; Deroy, O.; Fairhurst, M.; Marquardt, N.; Bianchi-Berthouze, N. As light as your footsteps: Altering walking sounds to change perceived body weight, emotional state and gait. In Proceedings of the 33rd Annual ACM Conference on Human Factors in Computing Systems 2015, Seoul, Korea, 18–23 April 2015; pp. 2943–2952. [Google Scholar]

- Valenza, G.; Lanatà, A.; Scilingo, E.P.; De Rossi, D. Towards a smart glove: Arousal recognition based on textile Electrodermal Response. In Proceedings of the 2010 Annual International Conference of the IEEE Engineering in Medicine and Biology, Buenos Aires, Argentina, 31 August–4 September 2010; Volume 2010, pp. 3598–3601. [Google Scholar]

- Wu, W.; Zhang, H.; Pirbhulal, S.; Mukhopadhyay, S.C.; Zhang, Y.T. Assessment of biofeedback training for emotion management through wearable textile physiological monitoring system. IEEE Sens. J. 2015, 15, 7087–7095. [Google Scholar] [CrossRef]

- Zhou, B.; Ghose, T.; Lukowicz, P. Expressure: Detect Expressions Related to Emotional and Cognitive Activities Using Forehead Textile Pressure Mechanomyography. Sensors 2020, 20, 730. [Google Scholar] [CrossRef] [PubMed]

- Gravina, R.; Li, Q. Emotion-relevant activity recognition based on smart cushion using multi-sensor fusion. Inf. Fusion 2019, 48, 1–10. [Google Scholar] [CrossRef]

- Wang, W.; Nagai, Y.; Fang, Y.; Maekawa, M. Interactive technology embedded in fashion emotional design. Int. J. Cloth. Sci. Technol. 2018, 30, 302–319. [Google Scholar] [CrossRef]

- Jiang, M.; Bhömer, M.T.; Liang, H.-N. Exploring the Design of Interactive Smart Textiles for Emotion Regulation. In Proceedings of the Mining Data for Financial Applications; Springer Nature: Berlin, Germany, 2020; pp. 298–315. [Google Scholar]

- Bradley, M.M.; Lang, P.J. Measuring emotion: The self-assessment manikin and the semantic differential. J. Behav. Ther. Exp. Psychiatry 1994, 25, 49–59. [Google Scholar] [CrossRef]

- Affectiva. Available online: https://www.affectiva.com/ (accessed on 24 December 2020).

- Knight, J.; Baber, C.; Schwirtz, A.; Bristow, H. The comfort assessment of wearable computers. In Proceedings of the IEEE Sixth International Symposium on Wearable Computers, White Plains, NY, USA, 21–23 October 2003; Volume 2, pp. 65–74. [Google Scholar]

- Kulke, L.; Feyerabend, D.; Schacht, A. A Comparison of the Affectiva iMotions Facial Expression Analysis Software with EMG for Identifying Facial Expressions of Emotion. Front. Psychol. 2020, 11, 329. [Google Scholar] [CrossRef]

- Markopoulos, P.P.; Wang, Q.; Tomico, O.; Da Rocha, B.G.; Bhömer, M.T.; Giacolini, L.; Palaima, M.; Virtala, N. Actuating wearables for motor skill learning: A constructive design research perspective. Des. Health 2020, 4, 231–251. [Google Scholar] [CrossRef]

- Gibbs, P.T.; Asada, H.H. Wearable Conductive Fiber Sensors for Multi-Axis Human Joint Angle Measurements. J. Neuroeng. Rehabilitation 2005, 2, 7. [Google Scholar] [CrossRef]

- Wilson, G.A.; Romeo, P.; Brewster, S.A. Mapping Abstract Visual Feedback to a Dimensional Model of Emotion. In Proceedings of the 2016 CHI Conference Extended Abstracts on Human Factors in Computing Systems, San Jose, CA, USA, 7–12 May 2016; pp. 1779–1787. [Google Scholar]

- Yoo, Y.; Yoo, T.; Kong, J.; Choi, S. Emotional responses of tactile icons: Effects of amplitude, frequency, duration, and envelope. In Proceedings of the 2015 IEEE World Haptics Conference (WHC), Evanston, IL, USA, 22–26 June 2015; pp. 235–240. [Google Scholar]

- Stöckli, S.; Schulte-Mecklenbeck, M.; Borer, S.; Samson, A.C. Facial expression analysis with AFFDEX and FACET: A validation study. Behav. Res. Methods 2018, 50, 1446–1460. [Google Scholar] [CrossRef]

- Kim, K.H.; Bang, S.W.; Kim, S.R. Emotion recognition system using short-term monitoring of physiological signals. Med. Biol. Eng. Comput. 2004, 42, 419–427. [Google Scholar] [CrossRef]

- Xiefeng, C.; Wang, Y.; Dai, S.; Zhao, P.; Liu, Q. Heart sound signals can be used for emotion recognition. Sci. Rep. 2019, 9, 1–11. [Google Scholar] [CrossRef]

- Bos, D.O. EEG-based emotion recognition. The Influence of Visual and Auditory Stimuli. Psychology 2006, 56, 1–17. [Google Scholar]

- Melzer, A.; Shafir, T.; Tsachor, R.P. How Do We Recognize Emotion from Movement? Specific Motor Components Contribute to the Recognition of Each Emotion. Front. Psychol. 2019, 10, 1389. [Google Scholar] [CrossRef] [PubMed]

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Jiang, M.; Nanjappan, V.; ten Bhömer, M.; Liang, H.-N. On the Use of Movement-Based Interaction with Smart Textiles for Emotion Regulation. Sensors 2021, 21, 990. https://doi.org/10.3390/s21030990