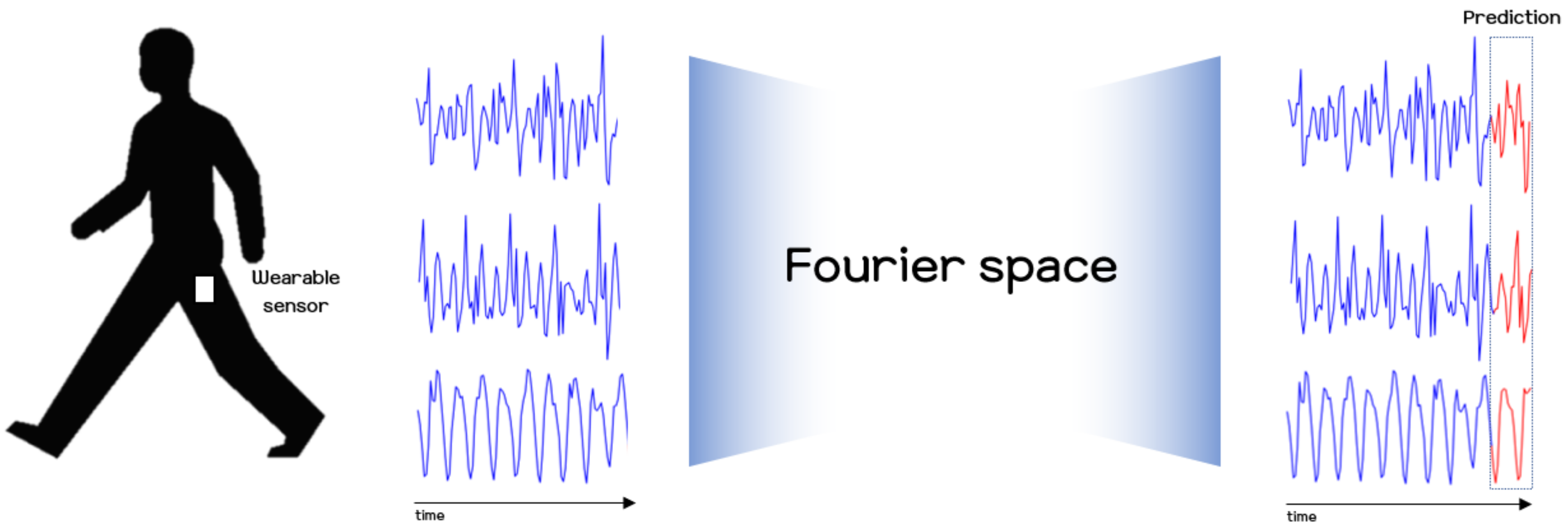

Predicting Human Motion Signals Using Modern Deep Learning Techniques and Smartphone Sensors

Abstract

1. Introduction

2. Methods

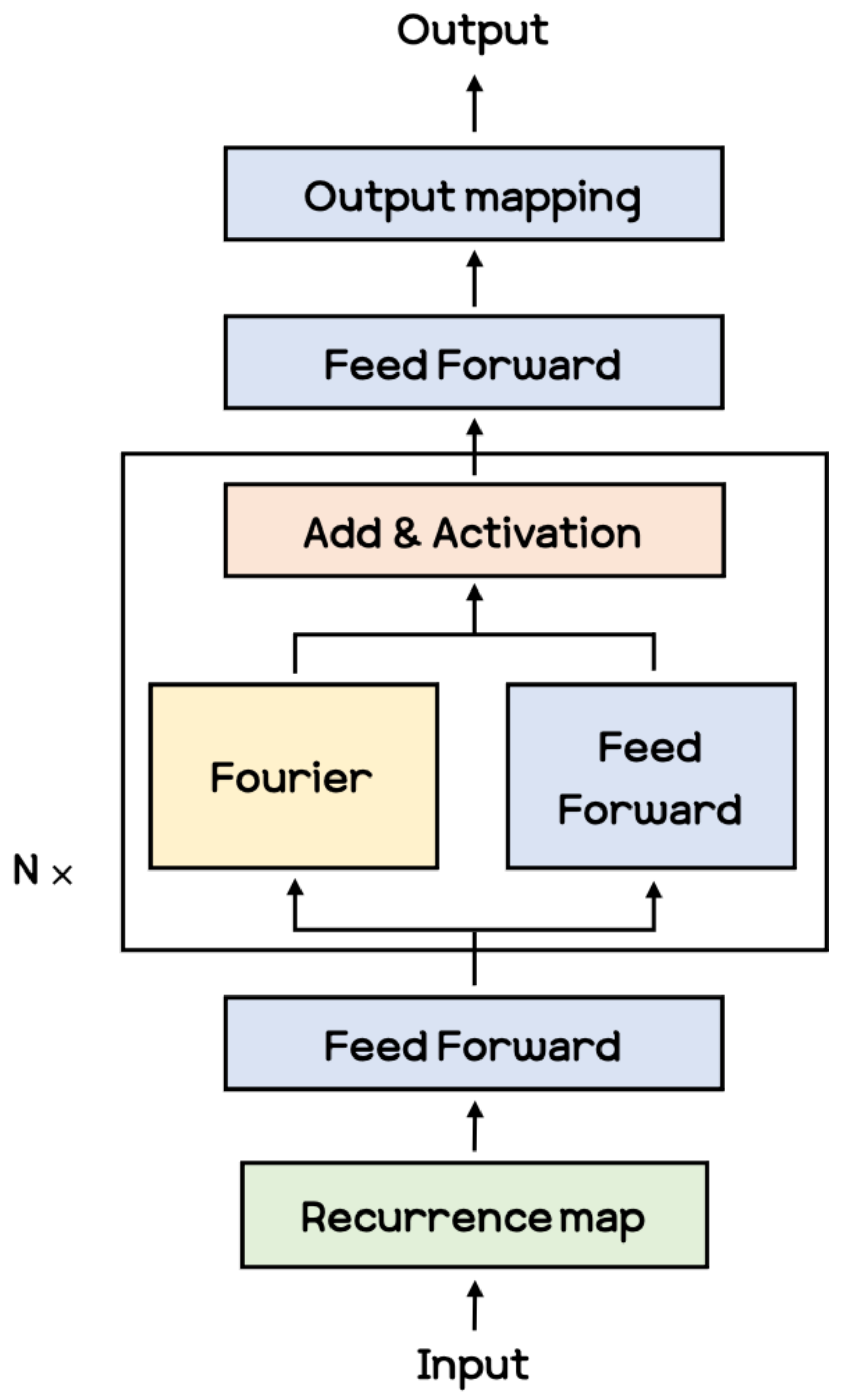

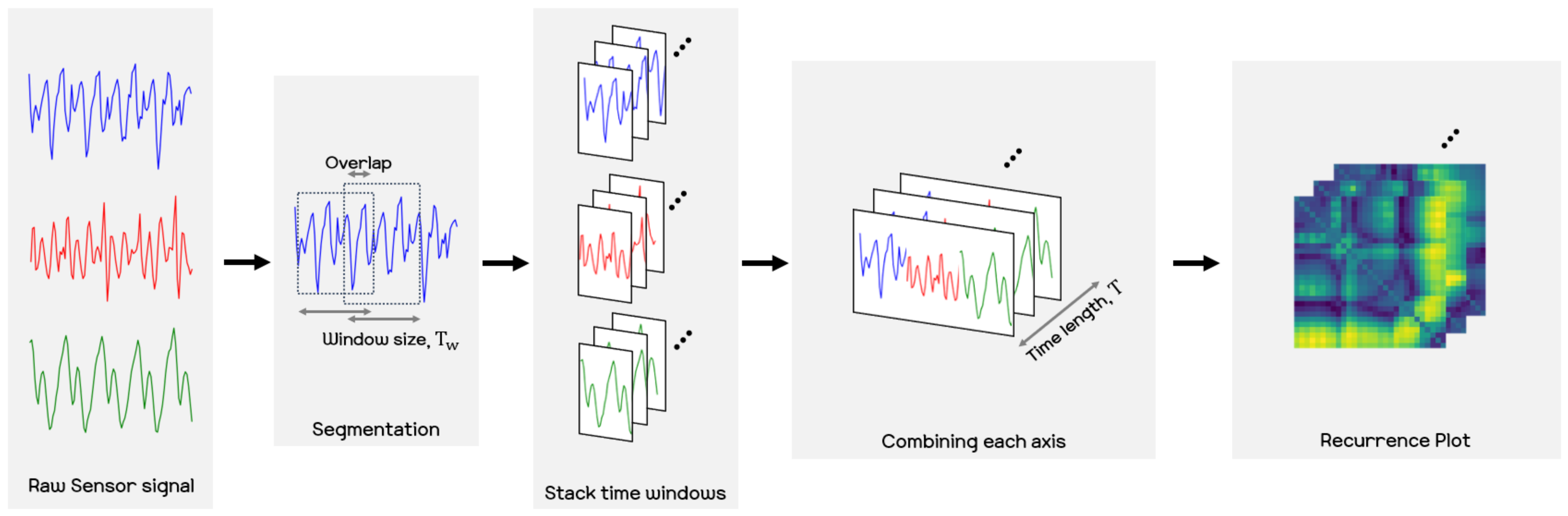

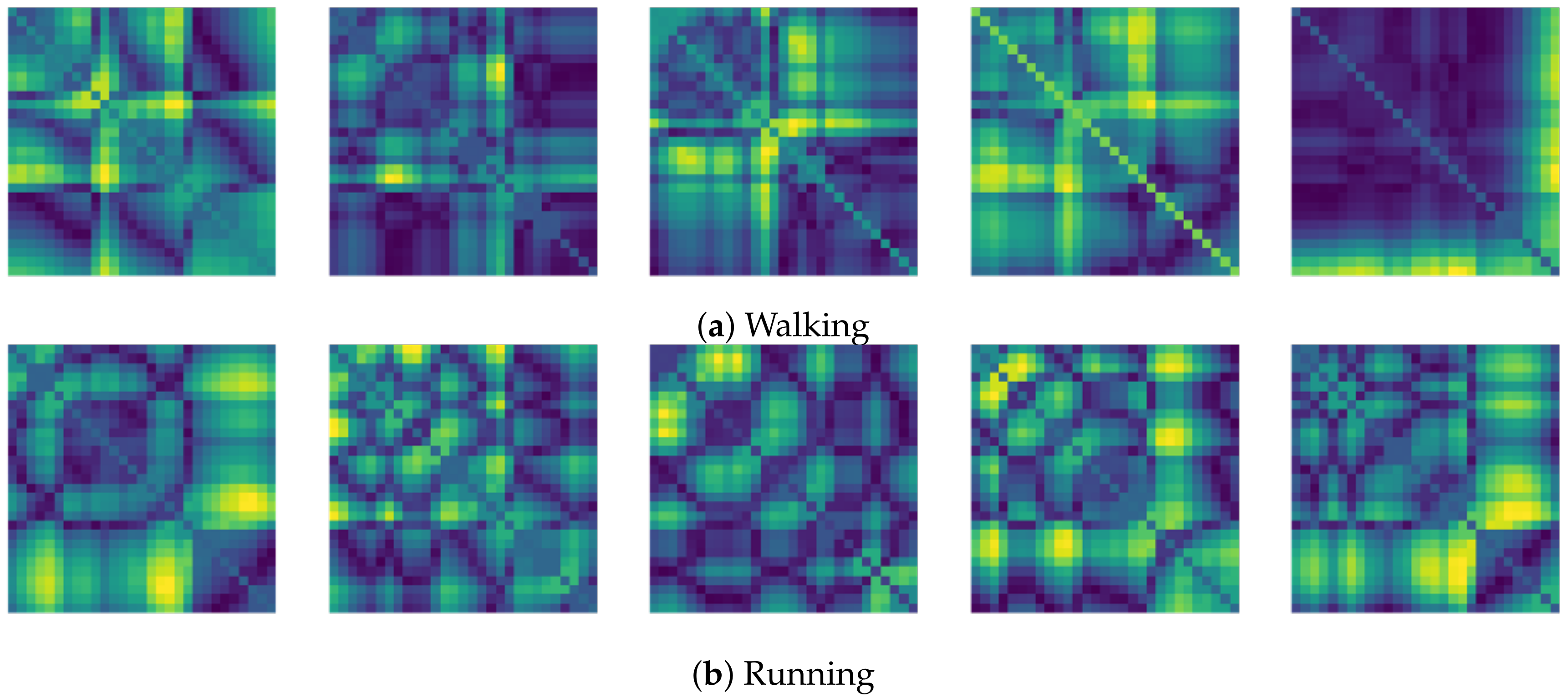

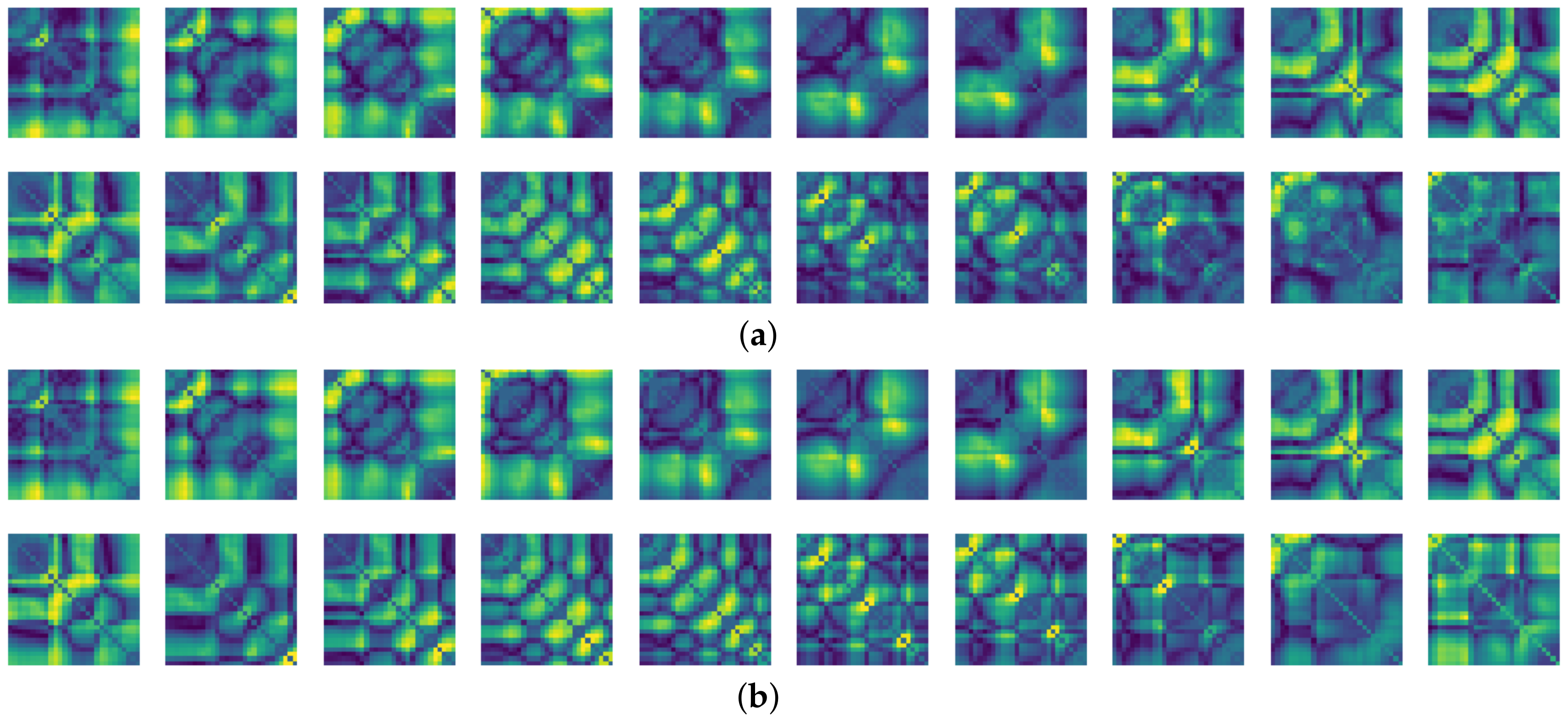

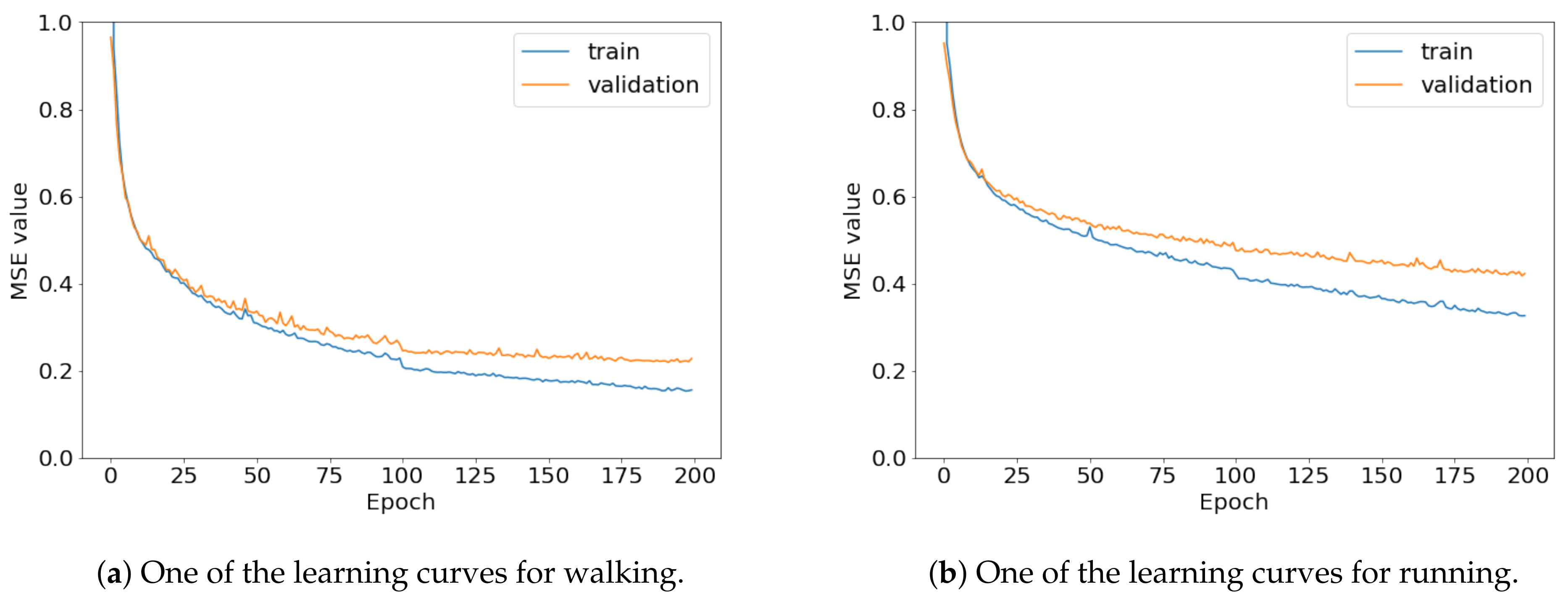

2.1. Recurrence Plot

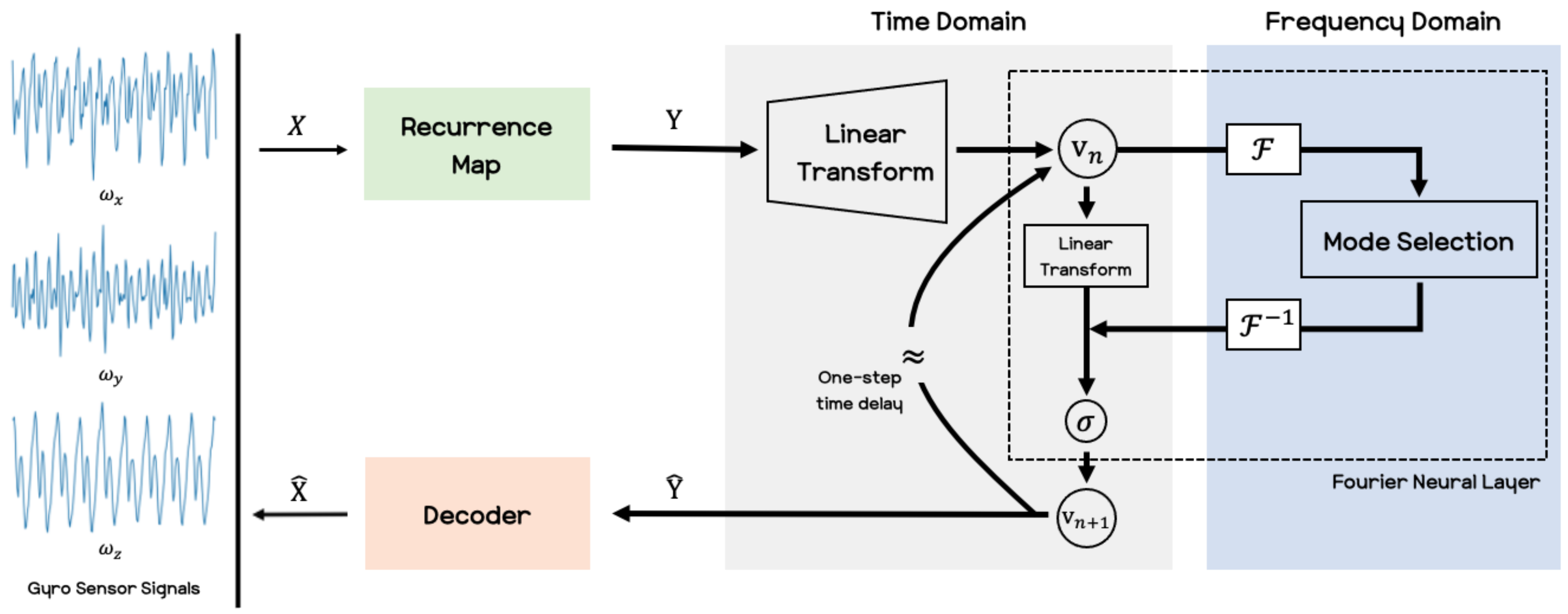

2.2. Fourier Neural Operator

Procedure 1: Procedure for Performing Fourier Neural Operator

2.3. Decoder

3. Experiments

3.1. Data Collection

- (a) Set the predetermined route for the motions to be collected (e.g., walking, running).

- (b) Place the smartphone on the thigh and tie it in place to prevent shaking.

- (c) Launch the MATLAB Mobile application on the smartphone.

- (d) Set the sampling rate to 30 Hz, and configure the application to upload its sensor log to cloud storage.

- (e) Select the angular velocity sensor (from among the acceleration, magnetic field, orientation, angular velocity, and position sensors).

- (f) Press the start button in the MATLAB application to begin acquiring sensor data.

- (g) The participant begins the predefined motion 3 s after pressing the start button, to exclude any effects that occur before the motion starts.

- (h) The participant performs the motion for about 60 s, which yields about 1800 samples at 30 Hz.

- (i) The participant ceases the motion and presses the stop button 3 s afterward, again to prevent noise-related problems.

- (j) After the data set is identified and named, the data are uploaded to the cloud server.

- (k) Download the data acquired from the gyro sensor to a desktop computer.

- (l) Repeat steps (d) to (k) for the other motions.
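The timing in the steps above can be checked with a short sketch. It assumes that the two 3 s idle intervals are discarded before modeling, which the procedure implies but does not state; the function name `trim_guard_intervals`, the constants, and the synthetic all-zero recording are hypothetical stand-ins for a real sensor log.

```python
import numpy as np

SAMPLING_RATE_HZ = 30   # sampling rate from step (d)
GUARD_SECONDS = 3       # idle interval from steps (g) and (i)
MOTION_SECONDS = 60     # approximate motion duration from step (h)


def trim_guard_intervals(signal, rate_hz=SAMPLING_RATE_HZ, guard_s=GUARD_SECONDS):
    """Drop the idle samples recorded before and after the motion (assumed preprocessing)."""
    signal = np.asarray(signal)
    guard = rate_hz * guard_s
    if signal.shape[0] <= 2 * guard:
        raise ValueError("recording is shorter than the two guard intervals")
    return signal[guard:signal.shape[0] - guard]


# Synthetic stand-in for one recording: 3 s idle + 60 s motion + 3 s idle,
# with three angular-velocity axes per sample.
total_samples = SAMPLING_RATE_HZ * (MOTION_SECONDS + 2 * GUARD_SECONDS)
recording = np.zeros((total_samples, 3))

motion = trim_guard_intervals(recording)
print(motion.shape)  # (1800, 3)
```

With a 30 Hz rate, each 3 s guard interval is 90 samples, so a 66 s recording of 1980 samples reduces to the 1800 samples mentioned in step (h).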

Procedure 2: Procedure for Obtaining Recurrence Plot
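For orientation, a recurrence plot in its common textbook form marks the pairs of time indices at which the states of a signal lie close to each other. The following is a minimal sketch of that general definition and is not a reconstruction of the authors' Procedure 2; the function name `recurrence_plot`, the threshold `eps`, and the synthetic 1 Hz sine signal are all assumptions made for illustration.

```python
import numpy as np


def recurrence_plot(states, eps=None):
    """Pairwise-distance recurrence matrix for a sequence of state vectors.

    With eps=None the raw Euclidean distance matrix is returned (an
    unthresholded plot); otherwise an entry is 1 where the two states
    are within eps of each other and 0 elsewhere.
    """
    states = np.asarray(states, dtype=float)
    if states.ndim == 1:
        states = states[:, None]          # treat a 1-D signal as scalar states
    diff = states[:, None, :] - states[None, :, :]
    dist = np.linalg.norm(diff, axis=-1)  # shape (T, T)
    if eps is None:
        return dist
    return (dist <= eps).astype(np.uint8)


# Hypothetical periodic signal: 2 s of a 1 Hz sine sampled at 30 Hz.
t = np.arange(60) / 30.0
signal = np.sin(2 * np.pi * 1.0 * t)

rp = recurrence_plot(signal, eps=0.1)
print(rp.shape)  # (60, 60)
```

For a periodic signal such as this one, the matrix shows diagonal stripes spaced one period apart, which is the kind of structure an image-based model can pick up.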

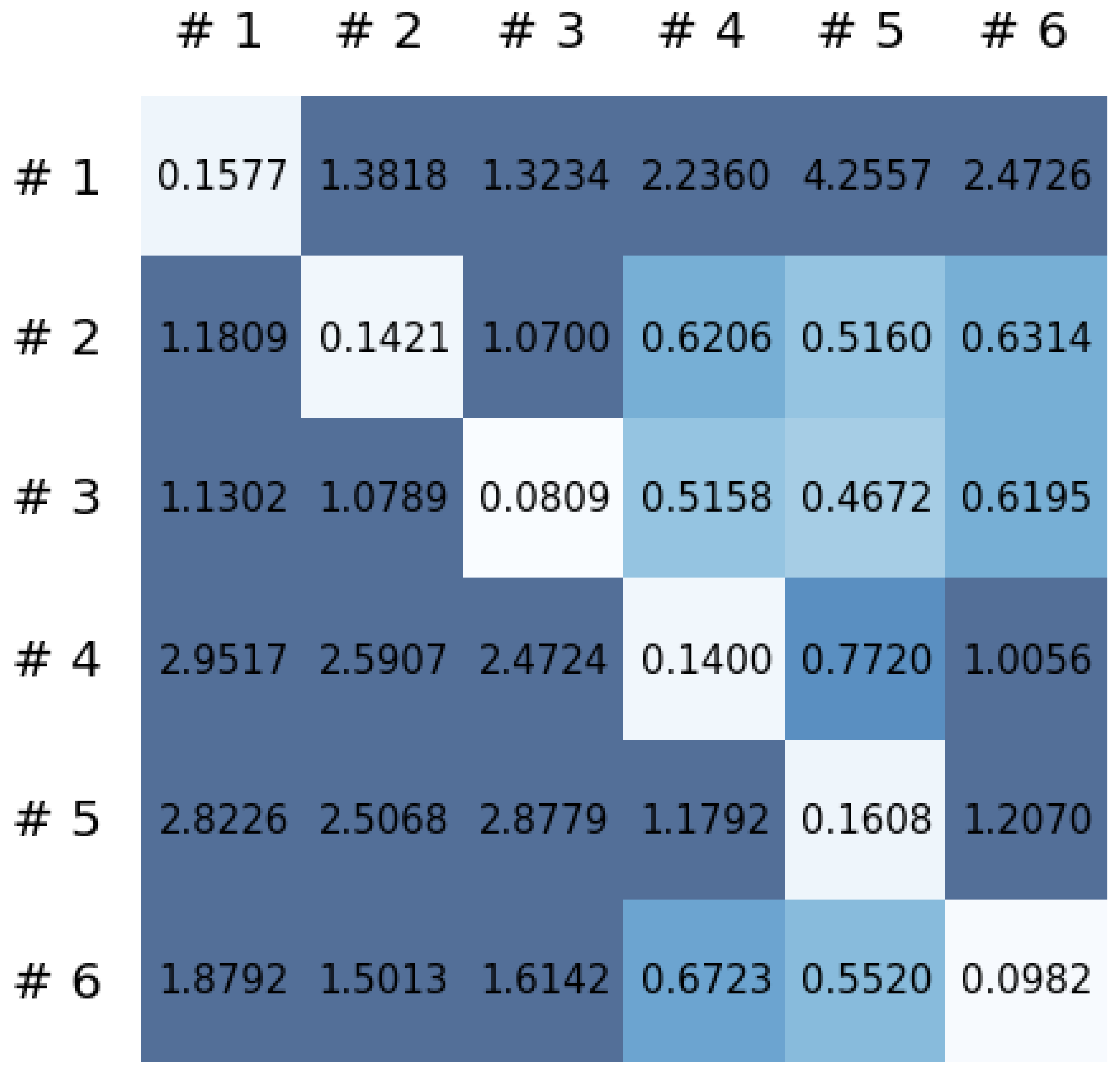

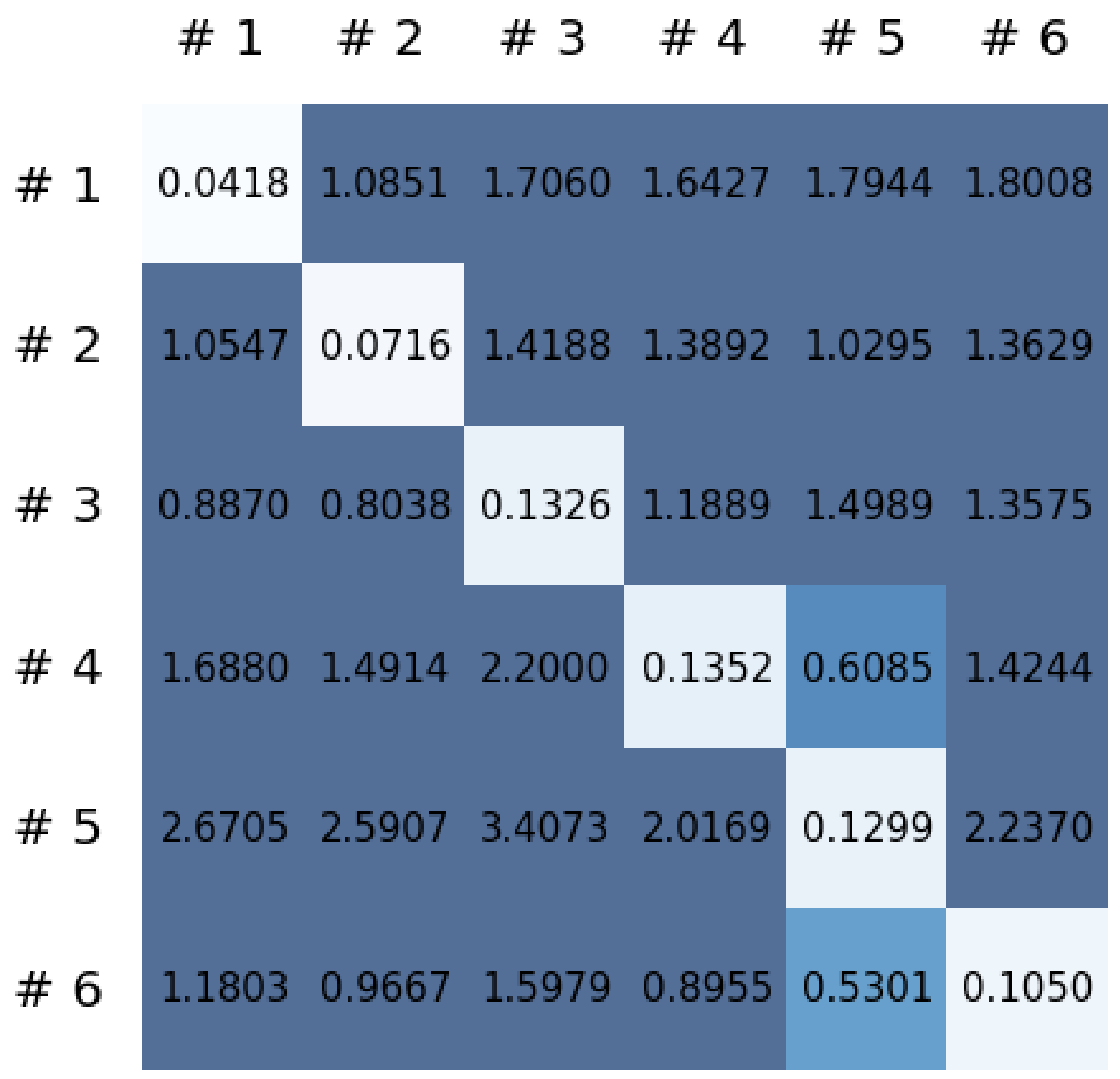

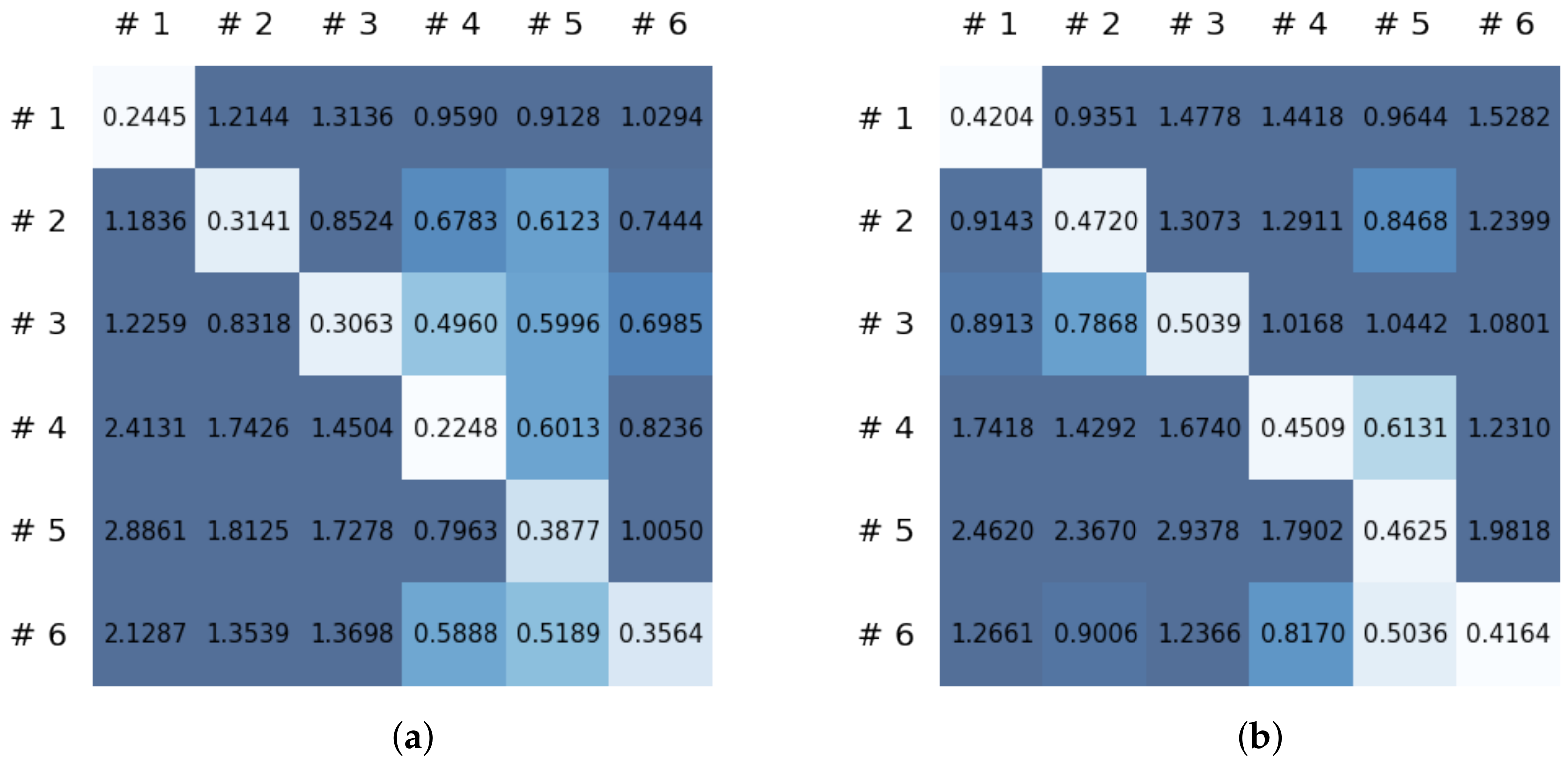

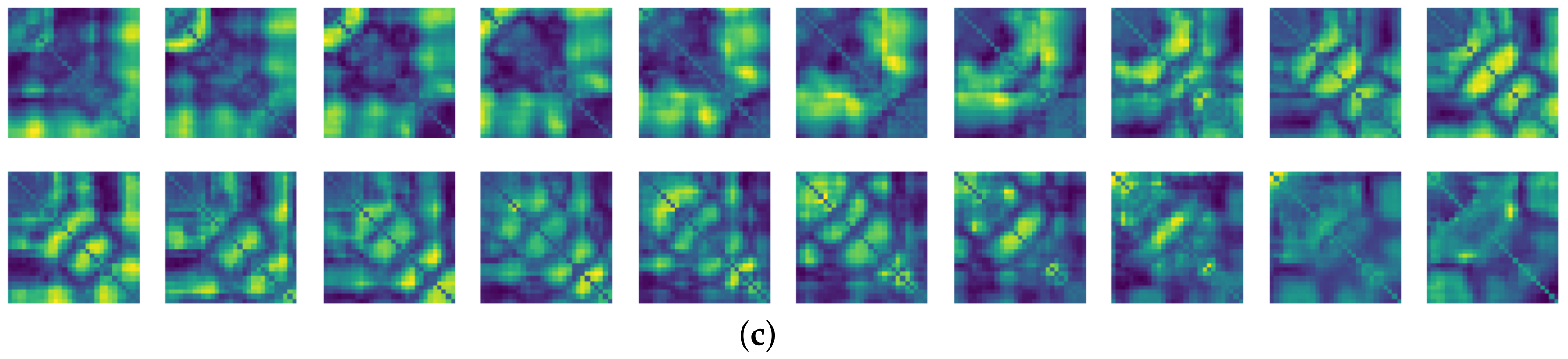

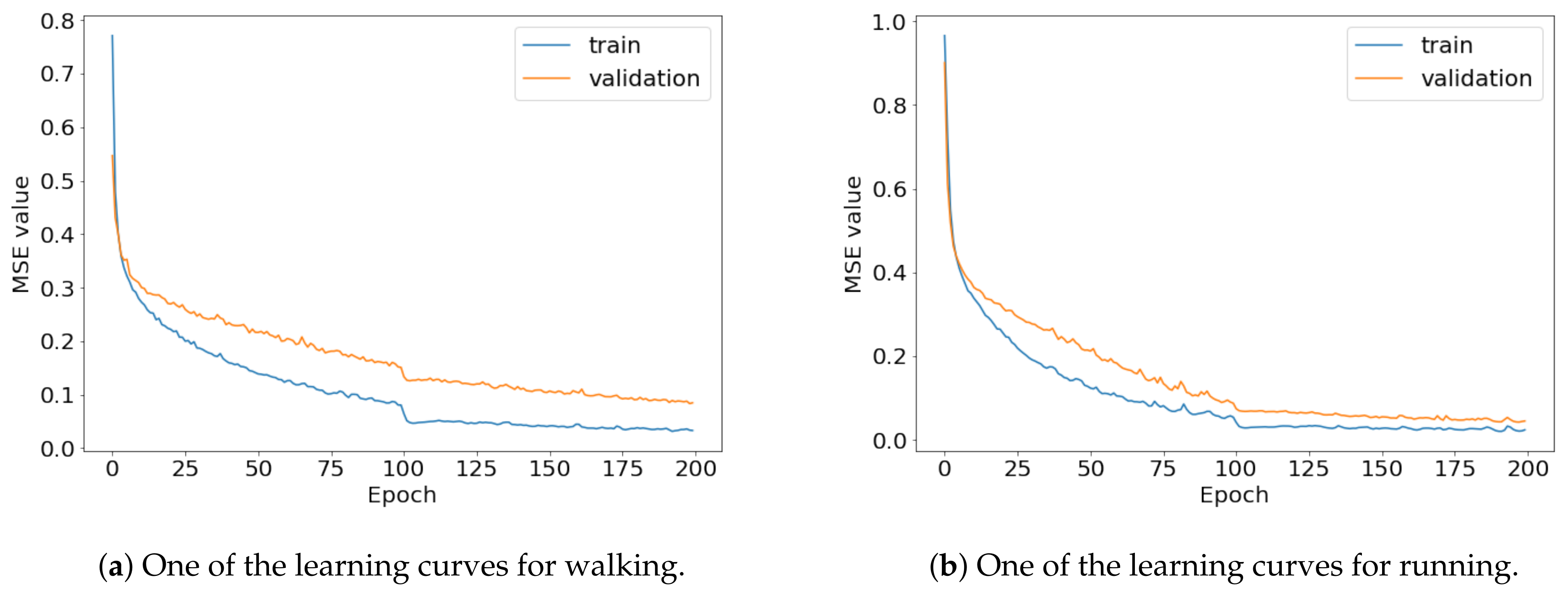

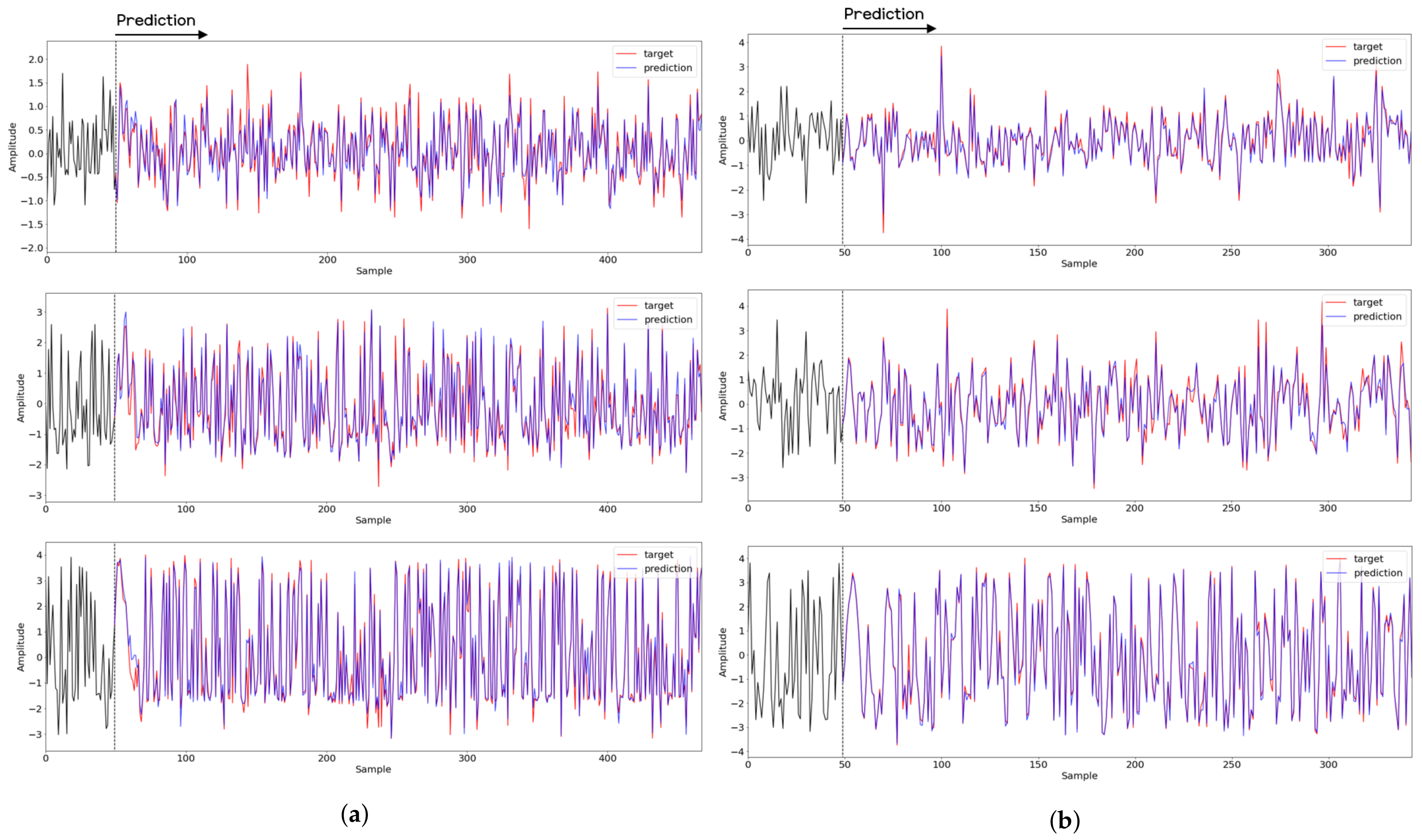

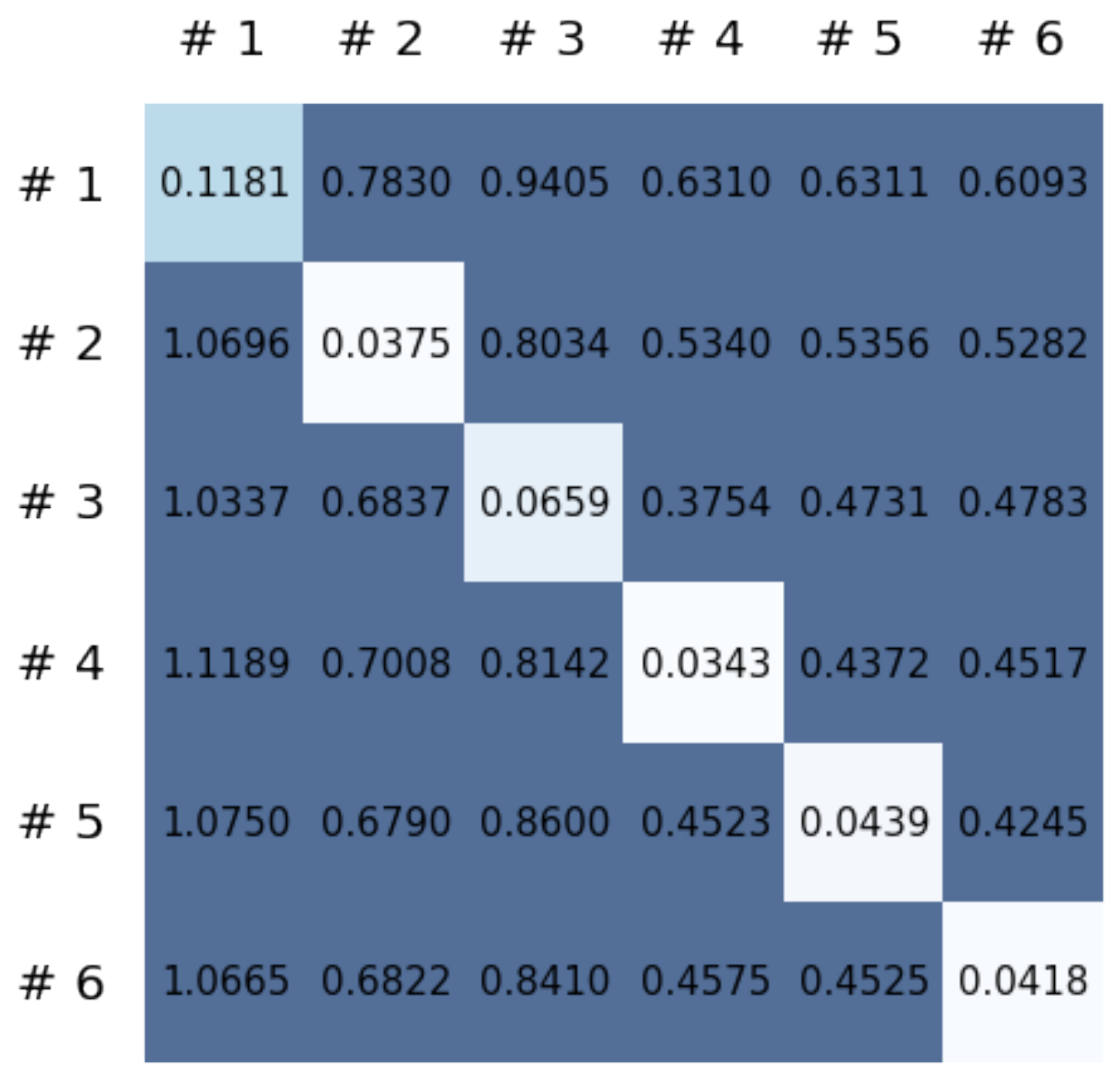

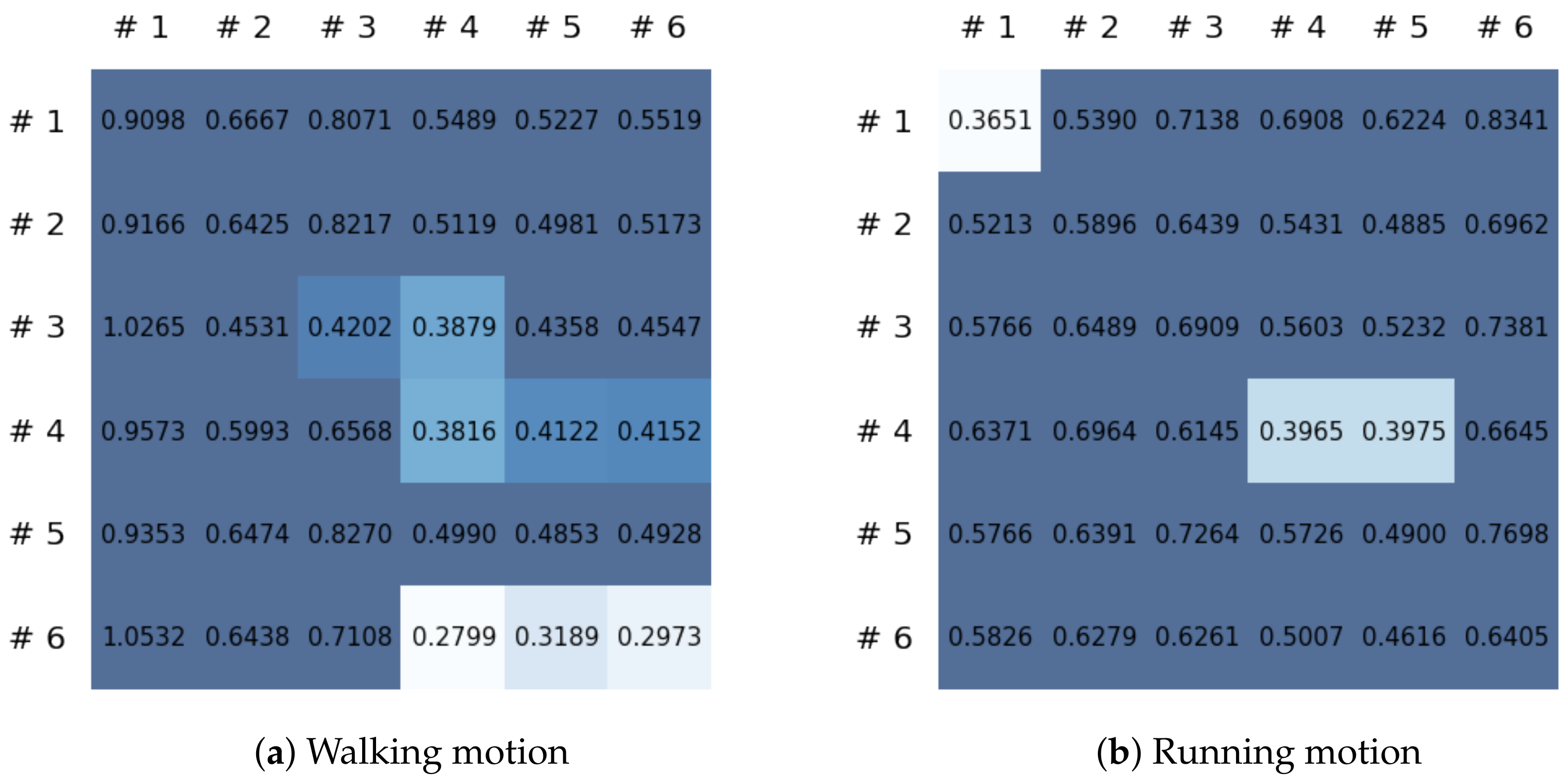

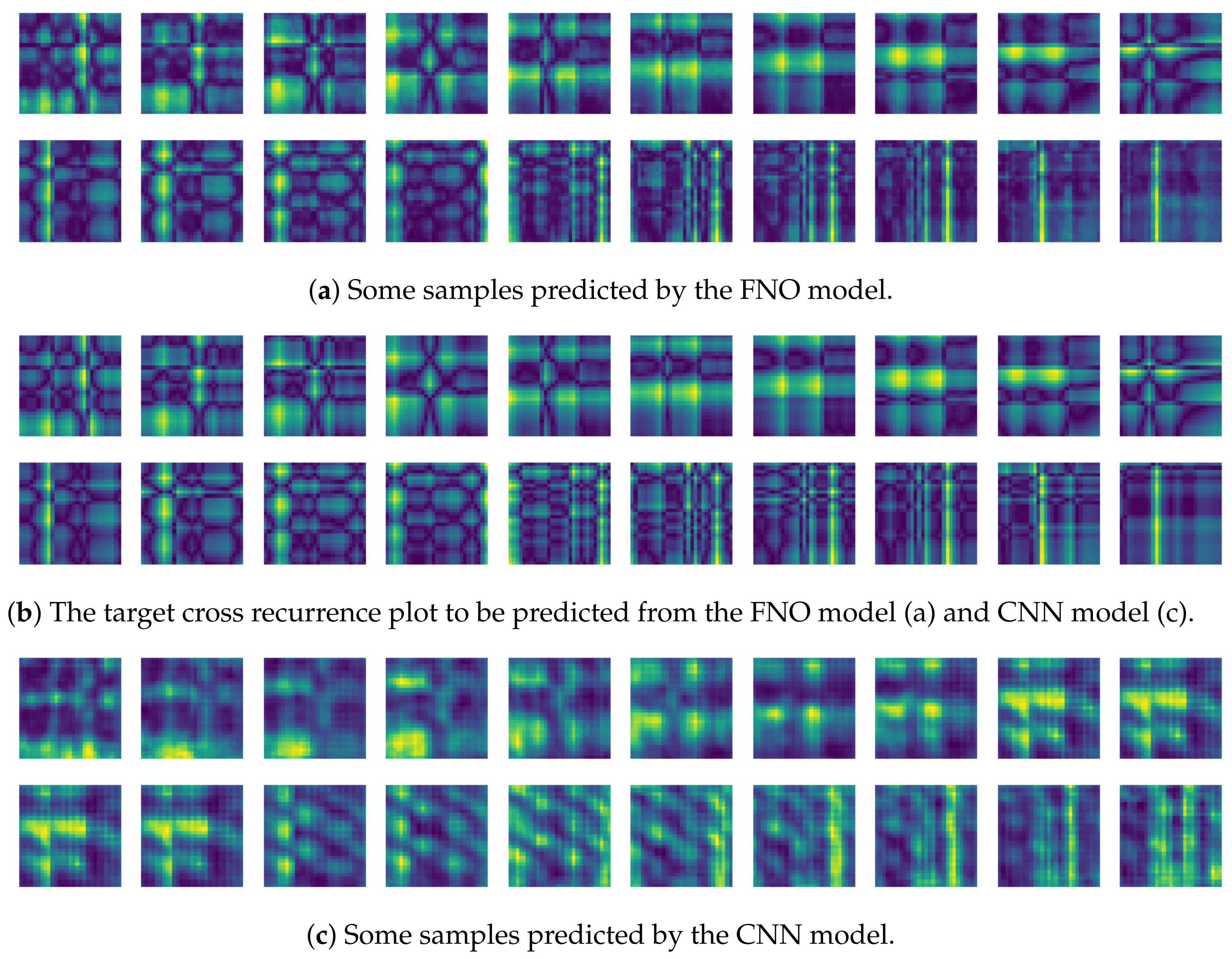

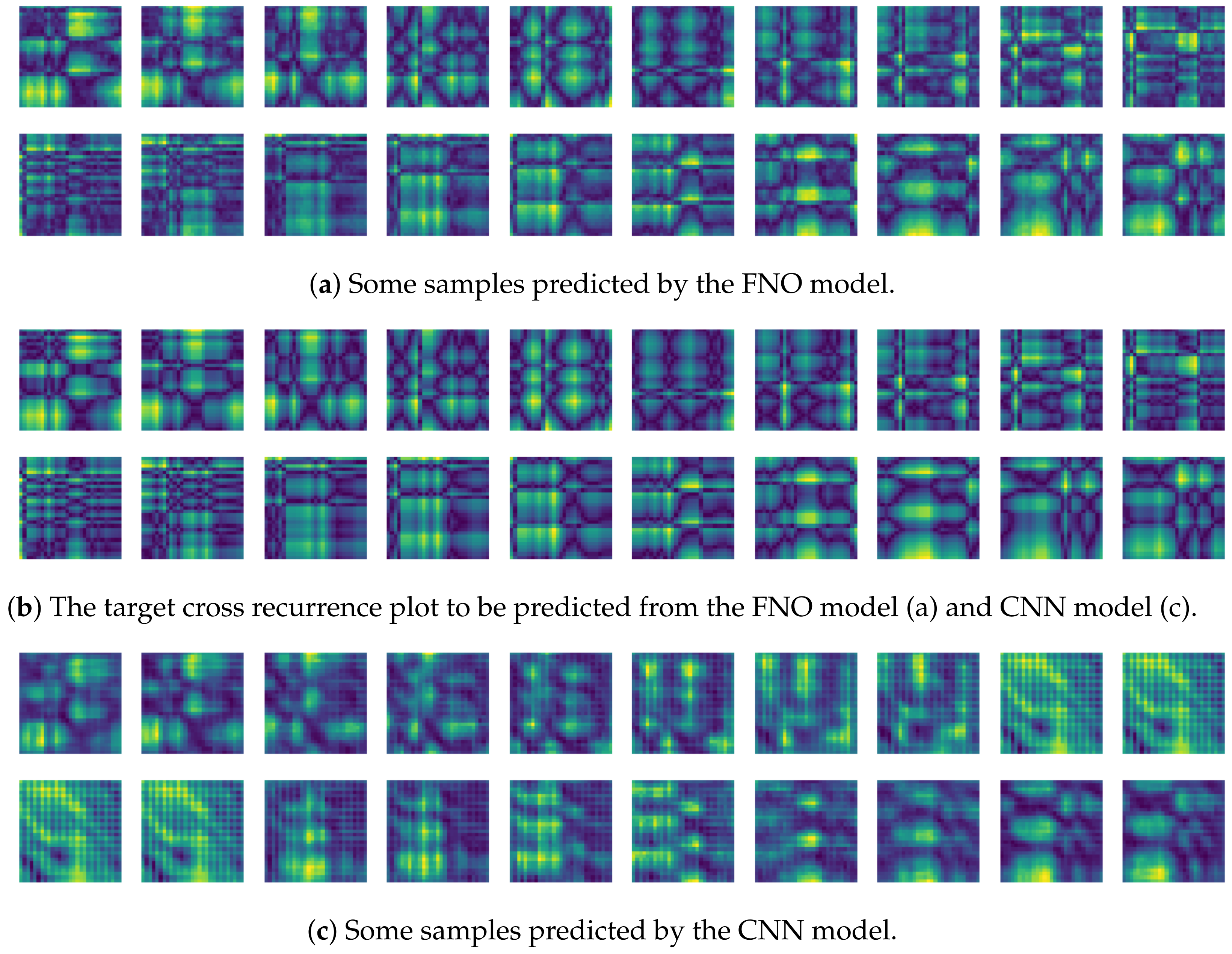

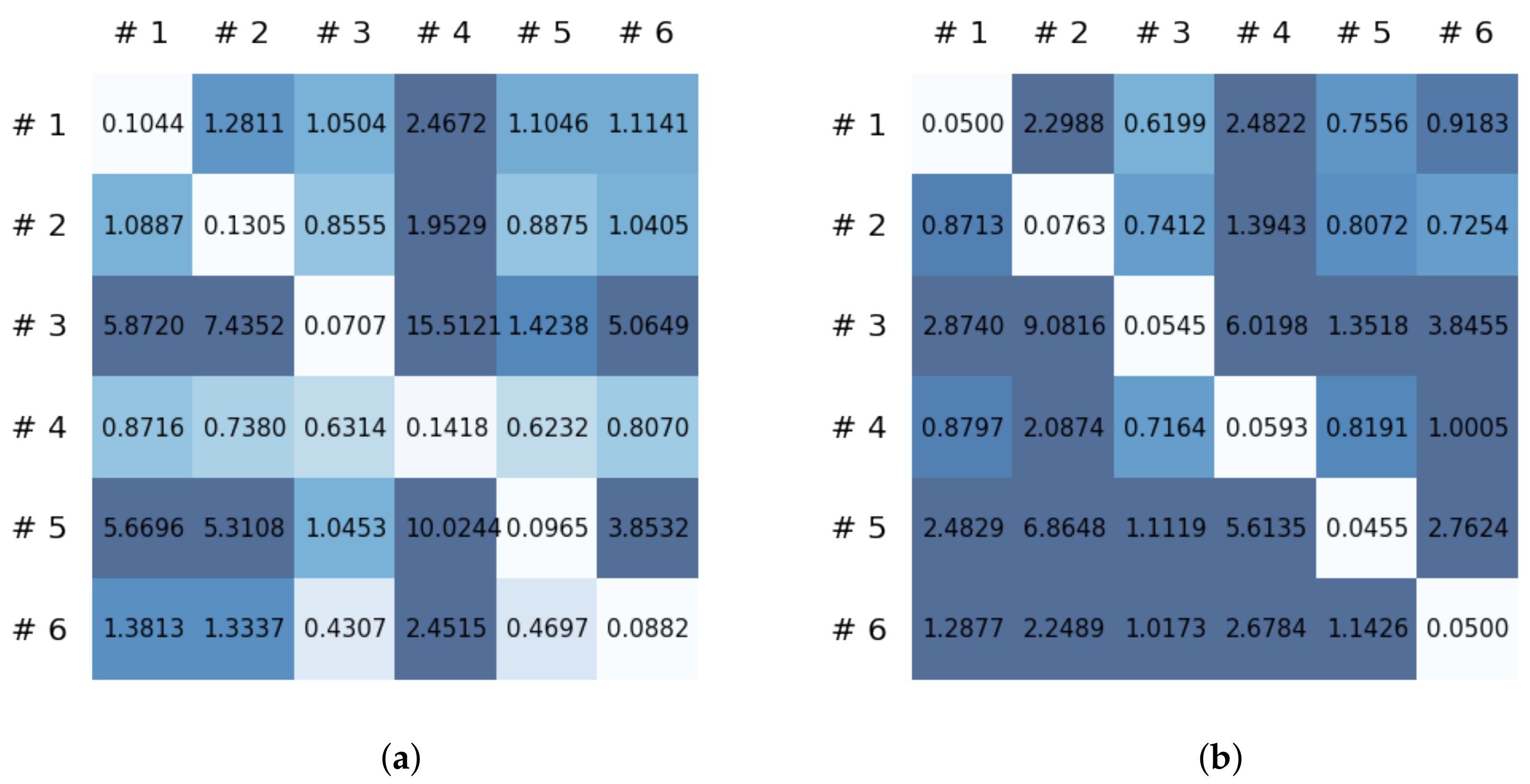

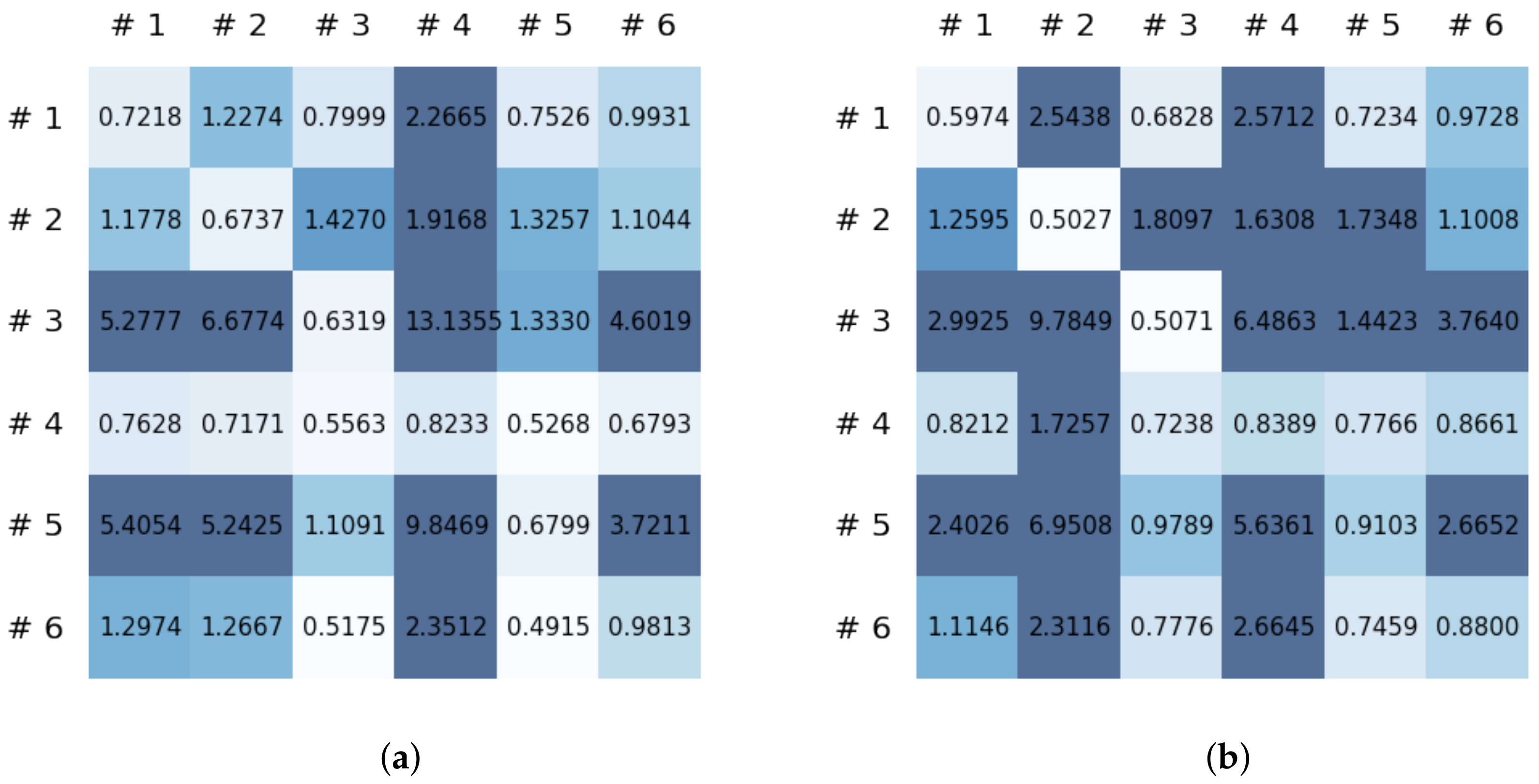

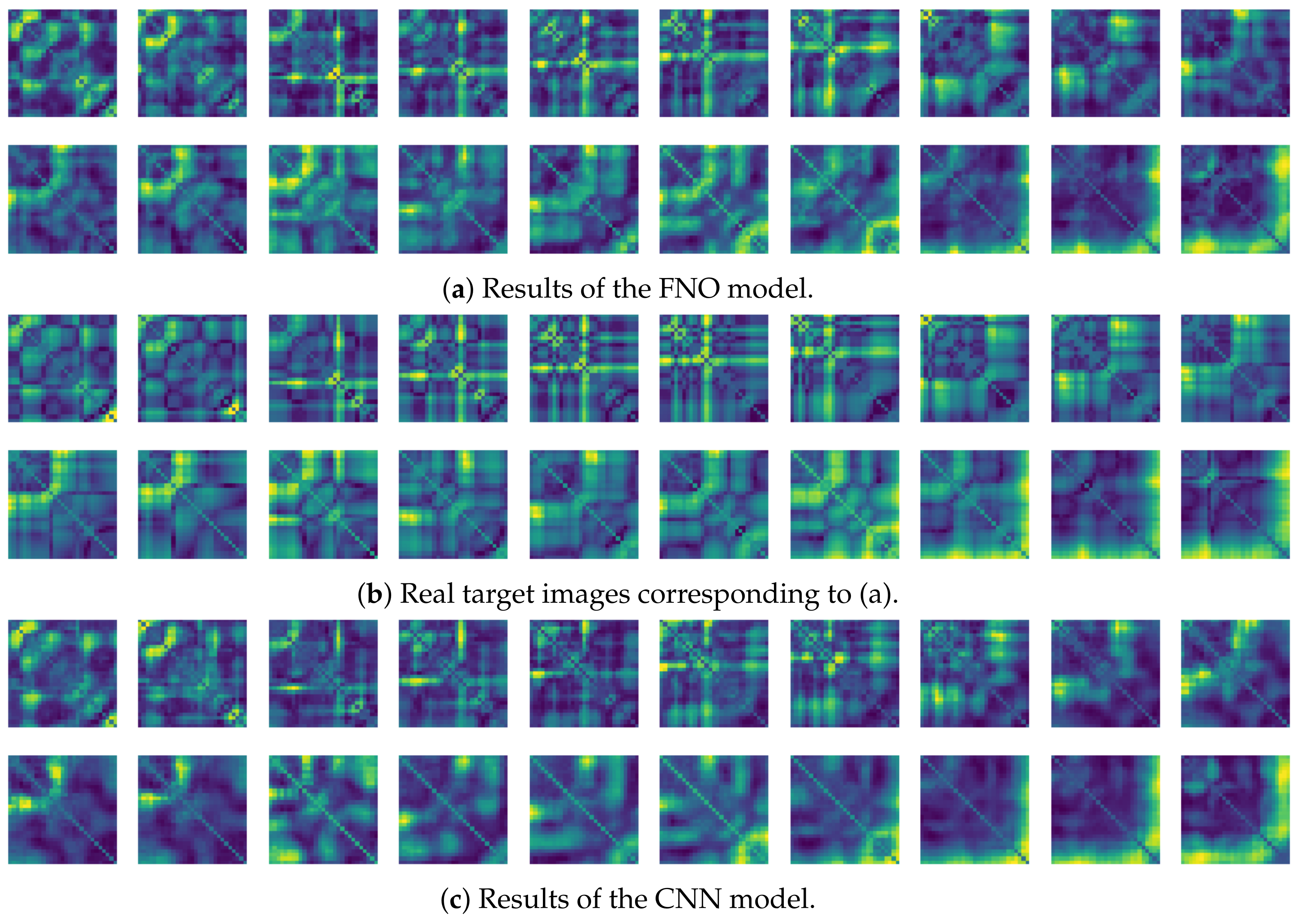

3.2. Experimental Results

4. Discussion and Conclusions

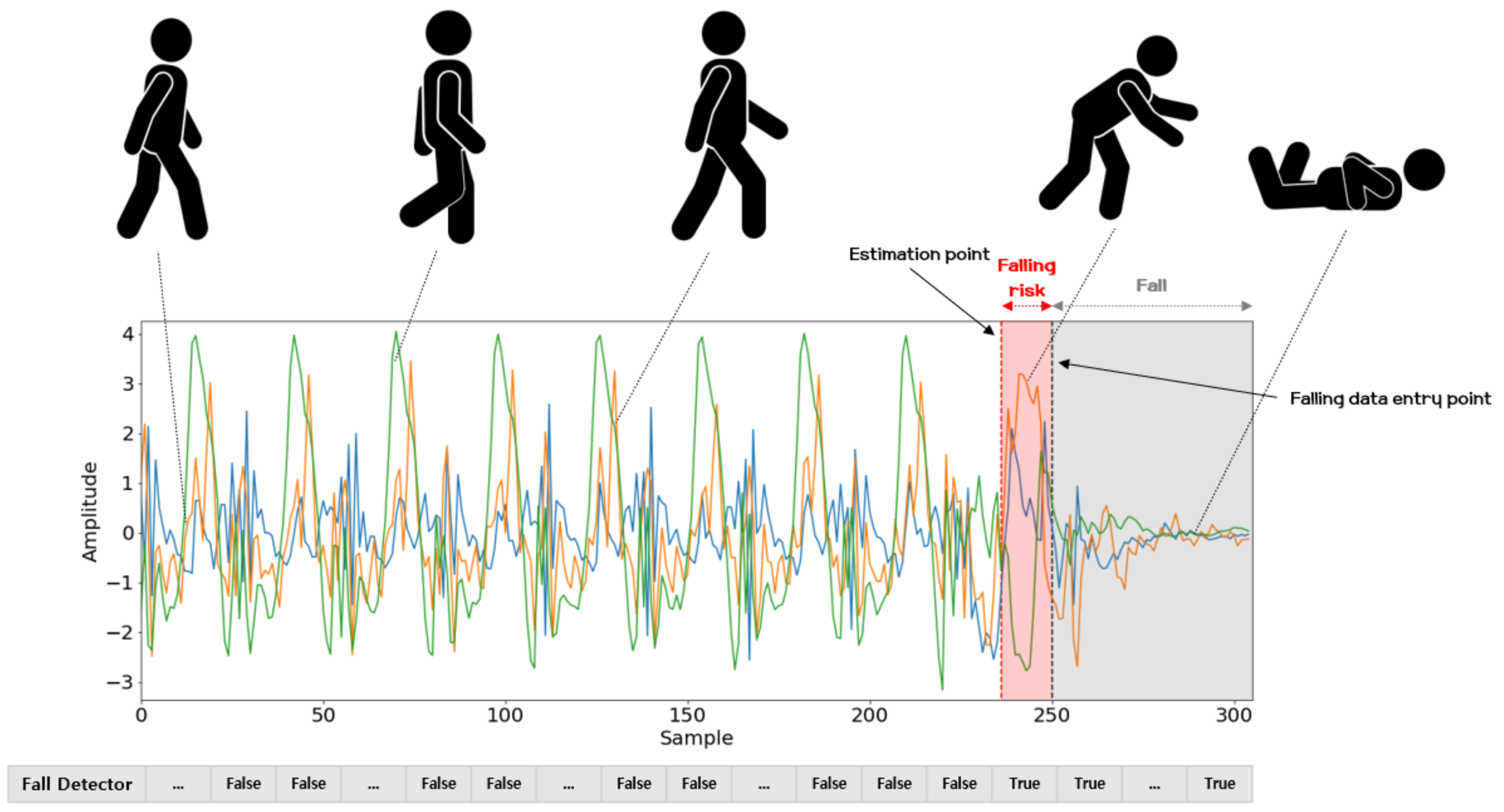

4.1. Discussion

4.2. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest


| Models | FNO | CNN |
|---|---|---|
| MSE value | 0.134 | 0.332 |
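The table compares the two models by mean squared error (MSE), where a lower value indicates a closer match between predicted and measured signals. As a reminder of how the metric is computed, here is a minimal sketch; the table does not state over which samples or axes the error is averaged, so averaging over all elements is an assumption, and the arrays are toy values rather than data from the experiments.

```python
import numpy as np


def mean_squared_error(predicted, target):
    """Average of squared element-wise differences over all elements."""
    predicted = np.asarray(predicted, dtype=float)
    target = np.asarray(target, dtype=float)
    if predicted.shape != target.shape:
        raise ValueError("predicted and target must have the same shape")
    return float(np.mean((predicted - target) ** 2))


# Toy values only: one of four predictions is off by 2, so MSE = 4 / 4.
target = np.array([0.0, 1.0, 2.0, 3.0])
predicted = np.array([0.0, 1.0, 2.0, 5.0])
print(mean_squared_error(predicted, target))  # 1.0
```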

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Kim, T.; Park, J.; Lee, J.; Park, J. Predicting Human Motion Signals Using Modern Deep Learning Techniques and Smartphone Sensors. Sensors 2021, 21, 8270. https://doi.org/10.3390/s21248270
