An Autonomous Robot-Aided Auditing Scheme for Floor Cleaning

Abstract

1. Introduction

2. Objective

- Develop an audit sensor capable of analysing the extent of cleanliness at a given point on the floor.

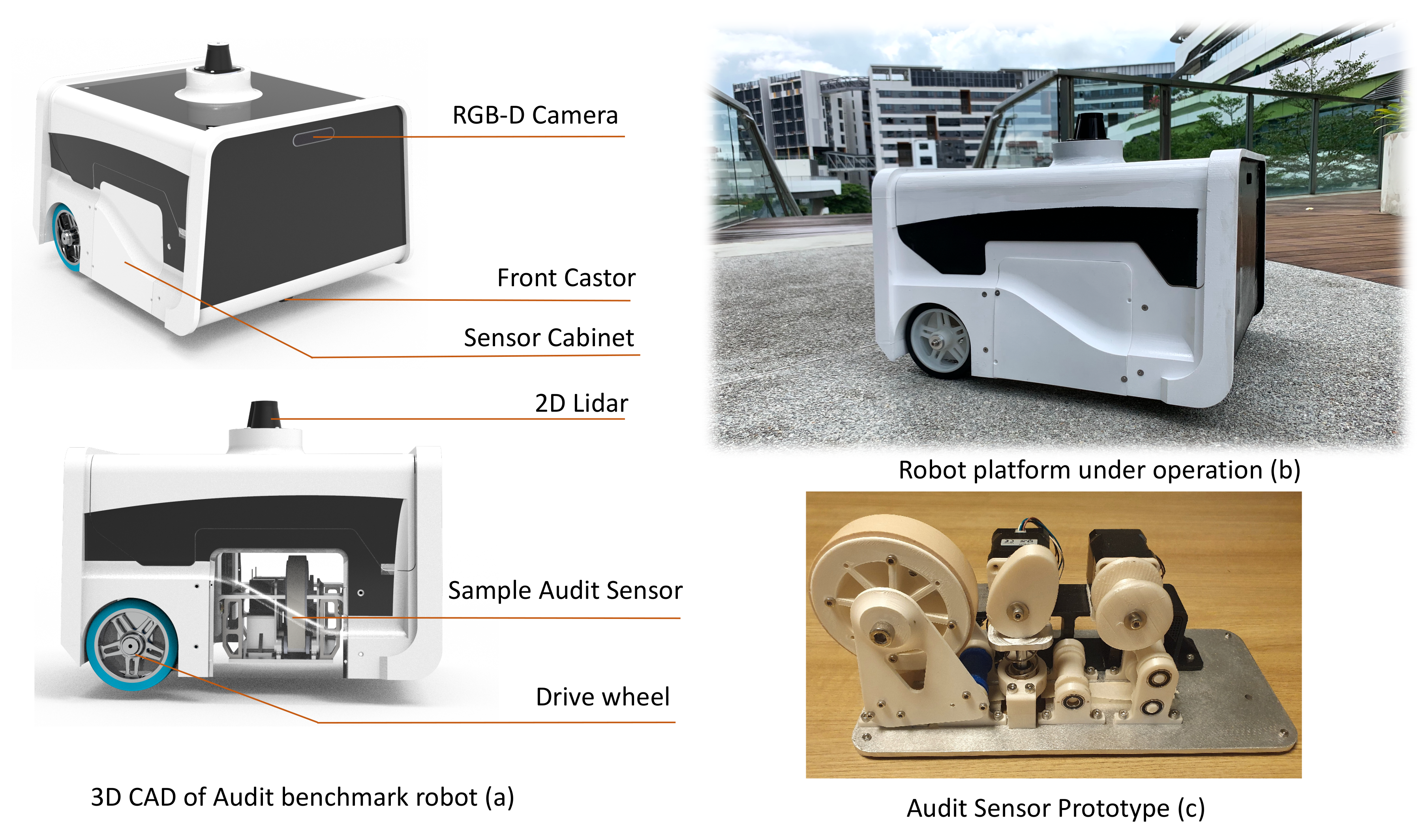

- Integrate the audit sensor in an in-house developed mobile robot, and formulate an exploration strategy for auditing an area.

- Experimentally determine the cleanliness benchmark score of the audit area using the in-house developed mobile robot.

3. Cleaning Audit Framework Outline

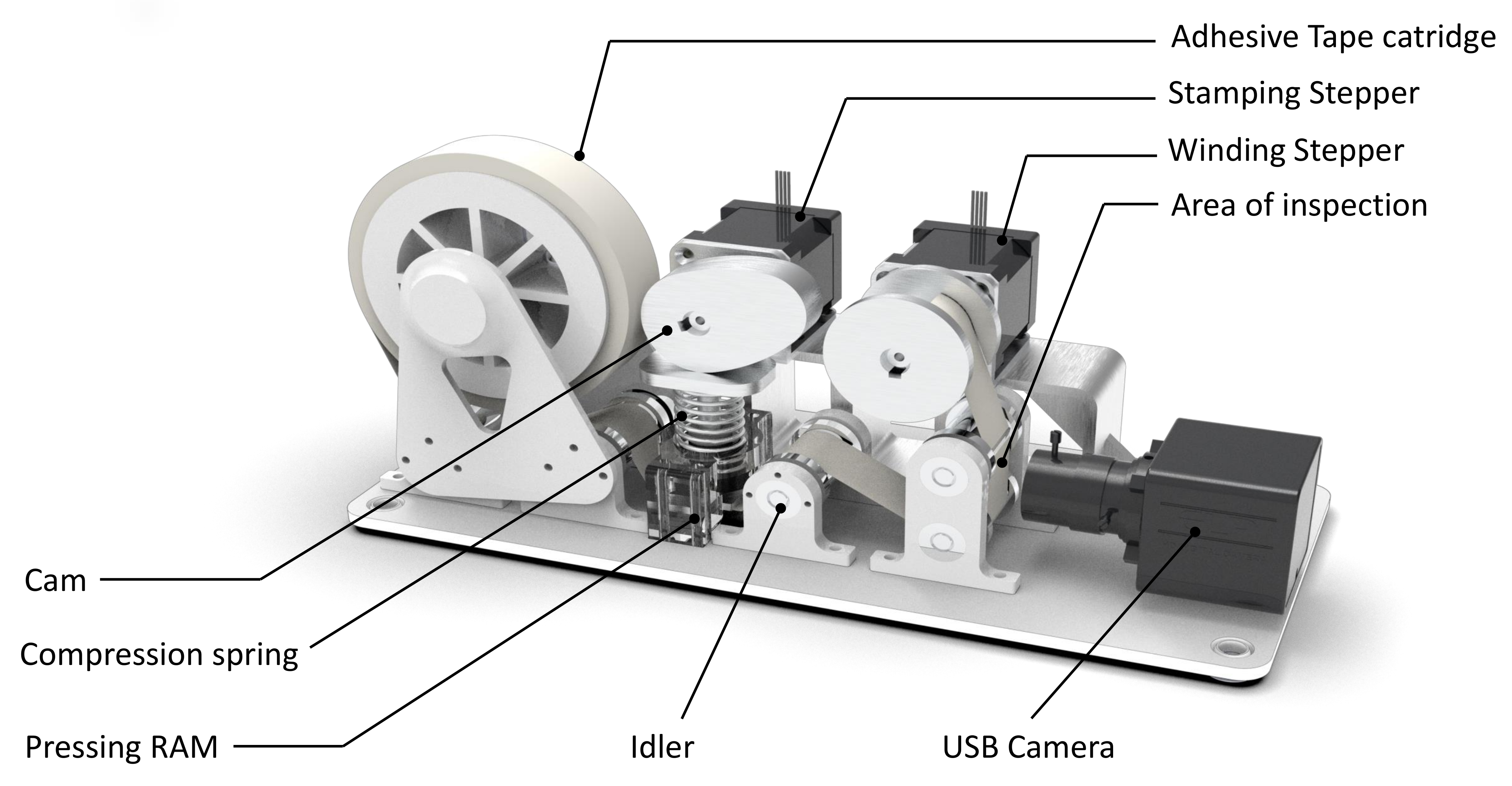

4. Audit Sensor

Working Principle

5. Audit Robot and Framework Integration

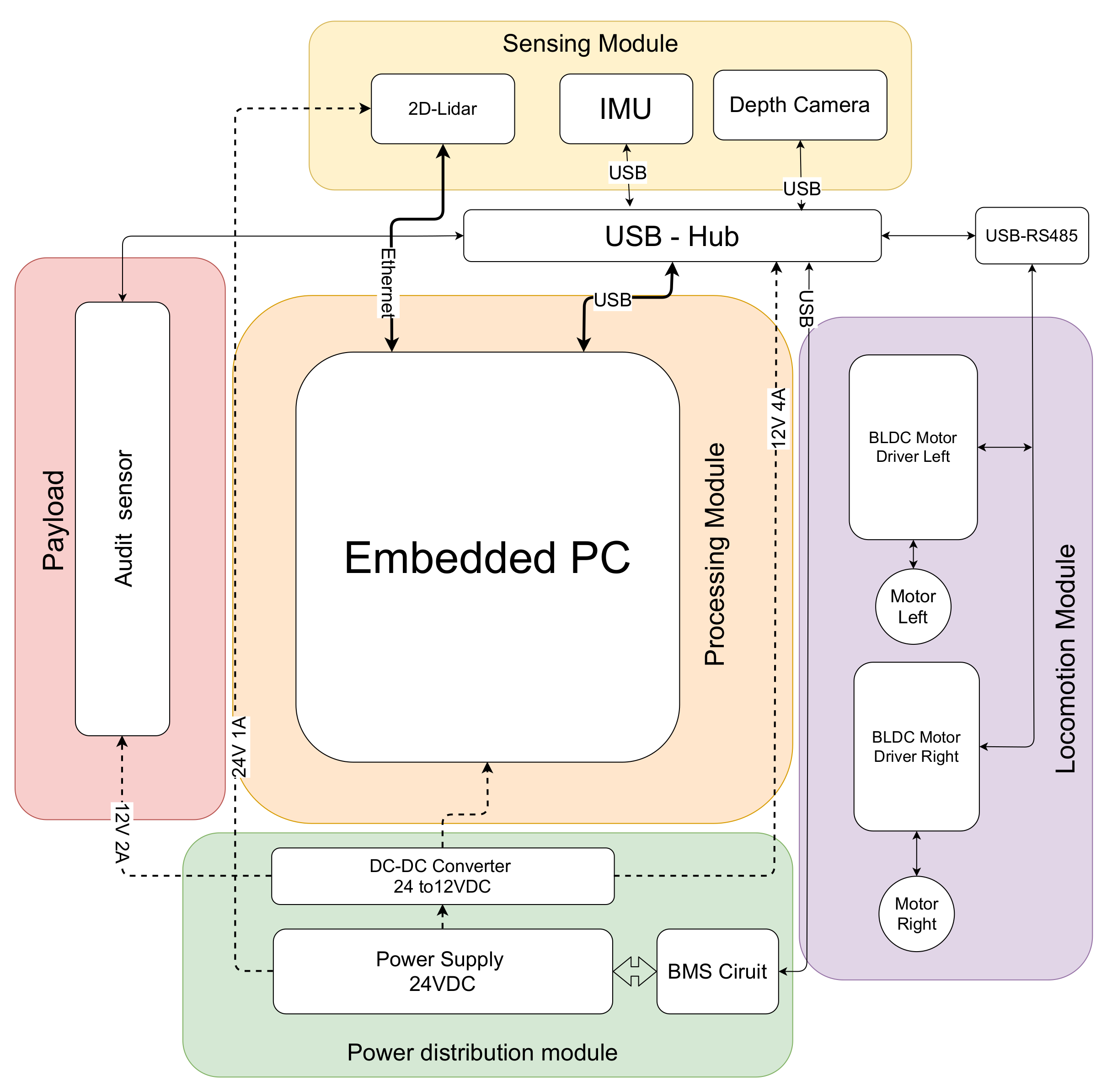

Audit-Robot Architecture

6. Exploration Strategy

Algorithm 1. Pseudo-code of the exploration strategy with the modified frontier exploration algorithm

- Capture the RGB frame and aligned depth map of the environment;

- Perform semantic segmentation and extract the floor region;

- Extract the dirt region using a periodic pattern filter applied to the floor region;

- Using the depth map, transform the centroid of the dirt region from pixel coordinates to robot coordinates;
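The last step above, mapping a dirt centroid from pixel coordinates to robot coordinates, can be illustrated with a minimal sketch. It assumes a standard pinhole camera model and a known camera-to-robot rigid transform. The function name `pixel_to_robot`, the intrinsics (`fx`, `fy`, `cx`, `cy`), and the example mounting transform are hypothetical values chosen for illustration and are not taken from the paper.

```python
import numpy as np

def pixel_to_robot(u, v, depth_m, fx, fy, cx, cy, T_robot_camera):
    """Back-project pixel (u, v) with depth (metres) into the robot frame.

    Assumes a pinhole model; T_robot_camera is a 4x4 homogeneous transform
    from the camera optical frame to the robot base frame (hypothetical).
    """
    # Pinhole back-projection into the camera optical frame
    x_cam = (u - cx) * depth_m / fx
    y_cam = (v - cy) * depth_m / fy
    p_cam = np.array([x_cam, y_cam, depth_m, 1.0])
    # Rigid transform into the robot frame; drop the homogeneous coordinate
    return (T_robot_camera @ p_cam)[:3]

# Hypothetical mounting: optical frame (z forward, x right, y down) mapped to
# a robot frame (x forward, y left, z up), camera 0.2 m ahead and 0.5 m up.
T_example = np.array([
    [0.0,  0.0, 1.0, 0.2],
    [-1.0, 0.0, 0.0, 0.0],
    [0.0, -1.0, 0.0, 0.5],
    [0.0,  0.0, 0.0, 1.0],
])

centroid_robot = pixel_to_robot(620, 240, 2.0, fx=600.0, fy=600.0,
                                cx=320.0, cy=240.0, T_robot_camera=T_example)
print(centroid_robot)
```

In practice the intrinsics would come from the depth camera calibration and the transform from the robot's mounting geometry; both are placeholders here.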

6.1. Semantic Segmentation

6.2. Periodic Pattern Filter

- Step 1: Transform the image to the frequency domain using a 2D Fast Fourier Transform (FFT)

- Step 2: The periodic pattern filter H is determined by applying an FFT shift operation in the input image

- Step 3: Choose the maximum log components from

- Step 4: Suppress the frequency components less than
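The steps above can be illustrated with a rough sketch of FFT-based periodic background suppression. The exact threshold used in Steps 3 and 4 is not recoverable from the text, so this sketch assumes one: components whose log-magnitude is at least `keep_ratio` times the maximum are kept as the periodic floor pattern, and everything weaker is suppressed. The final step (subtracting the reconstructed pattern from the image to expose non-periodic content) is also an assumption, as are the function name and the synthetic test image.

```python
import numpy as np

def periodic_pattern_residual(gray, keep_ratio=0.6):
    """Return |image - periodic background| for a 2D grayscale array.

    keep_ratio is a hypothetical threshold, expressed as a fraction of the
    maximum log-magnitude in the spectrum.
    """
    gray = np.asarray(gray, dtype=np.float64)
    # Steps 1-2: 2D FFT, then shift the zero frequency to the centre
    spectrum = np.fft.fftshift(np.fft.fft2(gray))
    # Step 3: log-magnitude spectrum and its maximum
    log_mag = np.log1p(np.abs(spectrum))
    threshold = keep_ratio * log_mag.max()
    # Step 4: filter H keeps dominant components, suppresses the rest
    H = log_mag >= threshold
    background = np.fft.ifft2(np.fft.ifftshift(spectrum * H)).real
    # Assumed post-processing: residual highlights non-periodic regions
    return np.abs(gray - background)

# Synthetic floor: striped pattern (period 8 px) plus a small "dirt" patch
yy, xx = np.mgrid[0:64, 0:64]
floor = 100.0 + 50.0 * np.sin(2 * np.pi * xx / 8.0)
dirty = floor.copy()
dirty[30:33, 30:33] += 80.0

residual = periodic_pattern_residual(dirty)
peak = np.unravel_index(np.argmax(residual), residual.shape)
print(peak)
```

On this synthetic image the striped pattern is removed and the strongest residual falls inside the added patch; real floor textures would need a tuned threshold.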

- Dirt density map: A grid map in which the audit scores are labeled at their corresponding locations. The dirt density map visualizes the sample-level details of dirt accumulation in a region.

- Total audit score (K): Determined by the algebraic sum of the sample audit scores. The total audit score is a non-negative number that represents the degree of untidiness of a given location. In the ideal scenario, a perfectly clean surface has an audit score of zero. Using Equation (6) for the sample audit score, the total audit score K is given by:

  $$K = \sum_{i=1}^{N} k_i$$

  where $k_i$ is the audit score of the i-th sample and N is the total number of samples collected. The maximum value of the total audit score is given by Equation (13):

  $$K_{max} = \sum_{i=1}^{N} k_{max} = N \, k_{max} \qquad (13)$$

  Since the maximum audit score ($k_{max}$) given by a sample is unity, the maximum possible total audit score is N.

- Cleaning benchmark score: The measure of cleanliness of a surface out of 100. The cleaning benchmark score is determined from the total audit score K and N, and is given by:

  $$\text{Cleaning benchmark score} = \left(1 - \frac{K}{K_{max}}\right) \times 100$$

  where N is the total number of samples collected and $K_{max}$ is the maximum possible total audit score. If the maximum sample audit score (Equation (6)) is 1, $K_{max}$ can be taken as N.
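The two scores defined above can be sketched in a few lines. The benchmark formula used here, 100 × (1 − K / K_max) with K_max = N when the maximum sample score is 1, is inferred from the definitions above; it reproduces the values in the results table (for example, K = 37.032 with N = 100 gives 62.968 for Trial 1). The function names are hypothetical.

```python
def total_audit_score(sample_scores):
    """Total audit score K: sum of the per-sample audit scores."""
    return sum(sample_scores)

def cleaning_benchmark_score(total_score, num_samples, max_sample_score=1.0):
    """Cleanliness out of 100, given K, N and the maximum per-sample score."""
    if num_samples <= 0:
        raise ValueError("num_samples must be positive")
    k_max = num_samples * max_sample_score  # maximum possible total score
    return 100.0 * (1.0 - total_score / k_max)

# Values taken from the results table (total audit score, samples audited)
trials = {"Trial-1": (37.032, 100), "Trial-3": (21.850, 48)}
for name, (k, n) in trials.items():
    print(name, round(cleaning_benchmark_score(k, n), 3))
```

A perfectly clean surface (K = 0) scores 100, and a surface where every sample returns the maximum score gives 0.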

7. Results and Discussion

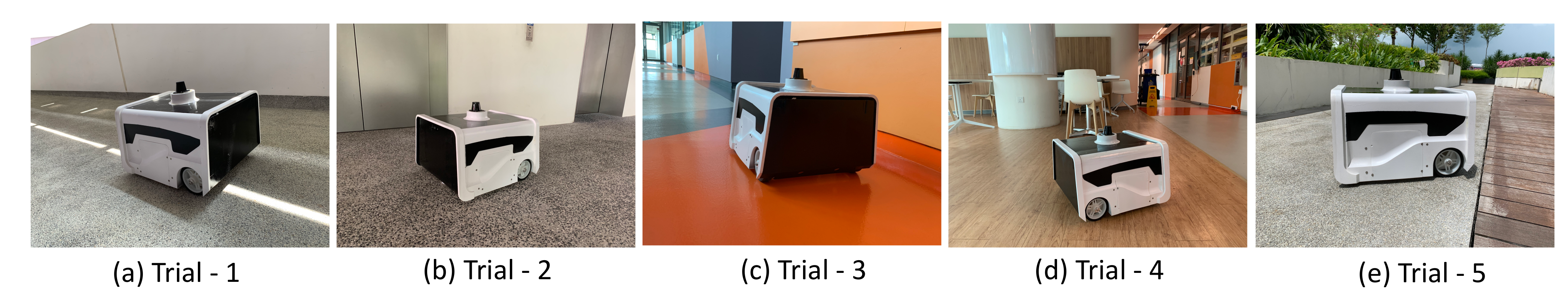

7.1. Experiment Trials

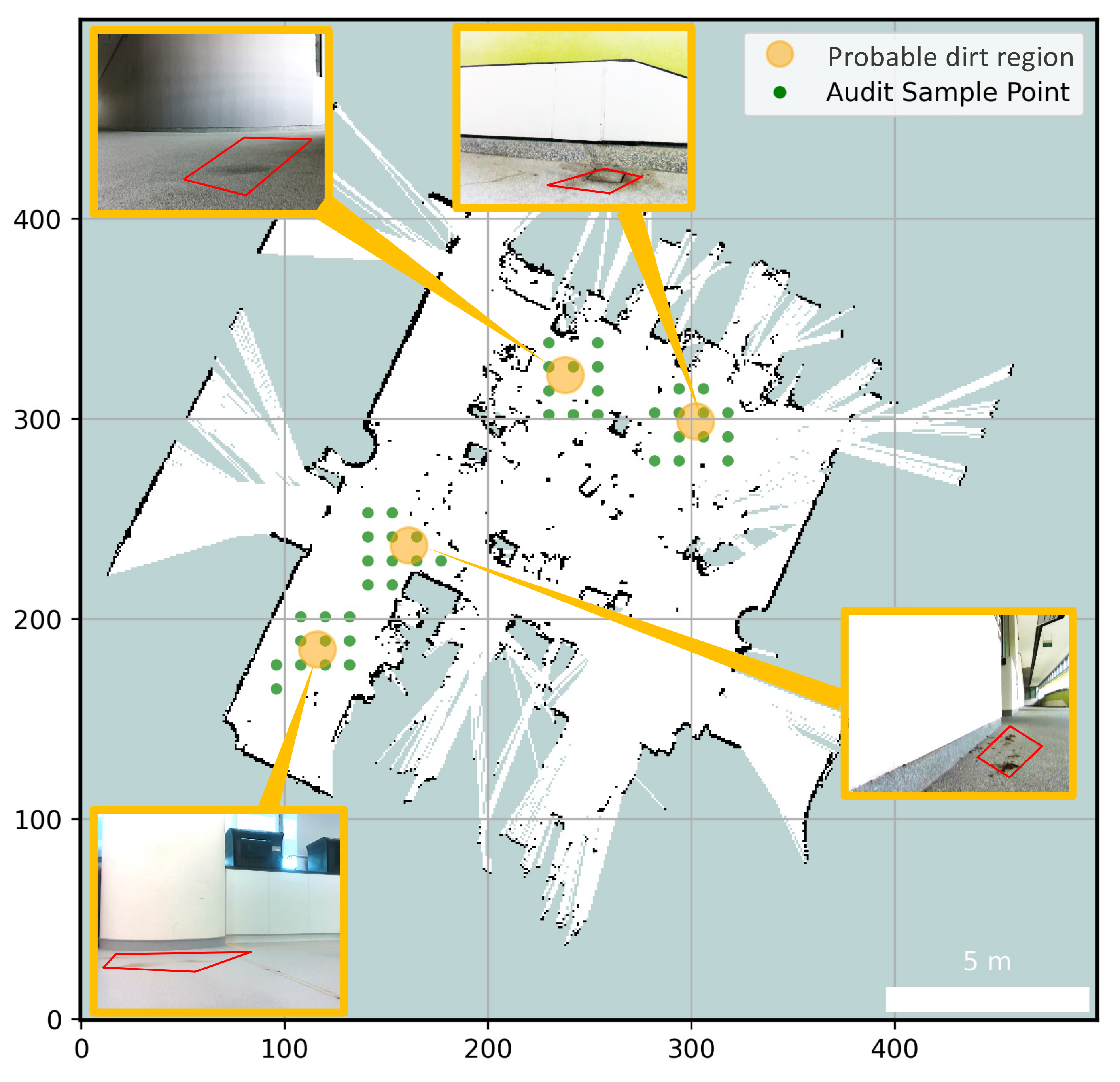

- Trial 1: The trial was carried out in an indoor space between the corridor and the connecting bridge in the university. The dirt particles were sprinkled in four different locations. The robot identified eight unique probable dirt locations corresponding to the identified dirt regions. The 2D map of the explored region, the locations of the sample points, the audit scores corresponding to the sample points, and the dirt distribution obtained are given in Figure 8.

- Trial 2: The trial was carried out in a lift lobby. The operating environment was semi-outdoor with a coarse cement floor. Similar to the previous trial, the dirt particles were sprinkled in four different locations. In Trial 2, the robot identified six unique probable dirt locations, for which 76 samples were collected. Even though the number of probable dirt locations is the same as in Trial 1, the identified dirt locations were close to the map boundaries; hence, fewer sample points were accessible than in Trial 1. The 2D map of the explored region, the locations of the sample points, the audit scores corresponding to the sample points, and the dirt distribution obtained are given in Figure 9.

- Trial 3: The trial was carried out in a narrow, long indoor corridor. This environment is comparatively cleaner than the other trial locations, since it is an indoor space that undergoes regular maintenance. The floor type was polished vinyl. The dirt particles were sprinkled in four different locations. The robot identified four unique probable dirt locations corresponding to the identified dirt regions and took a total of 48 samples at them. Because the corridor is narrow, only 48 sample points accessible to the robot were generated. The 2D map of the explored region, the locations of the sample points, the audit scores corresponding to the sample points, and the dirt distribution obtained are given in Figure 10.

- Trial 4: The trial was carried out inside a cafeteria with a polished vinyl floor. The dirt particles were sprinkled in four different locations. The robot identified eight unique probable dirt locations corresponding to the identified dirt regions and took a total of 127 samples at them. The trial region was spacious compared with the other locations, which allowed more samples to be taken for inspection. The 2D map of the explored region, the locations of the sample points, the audit scores corresponding to the sample points, and the dirt distribution obtained are given in Figure 11.

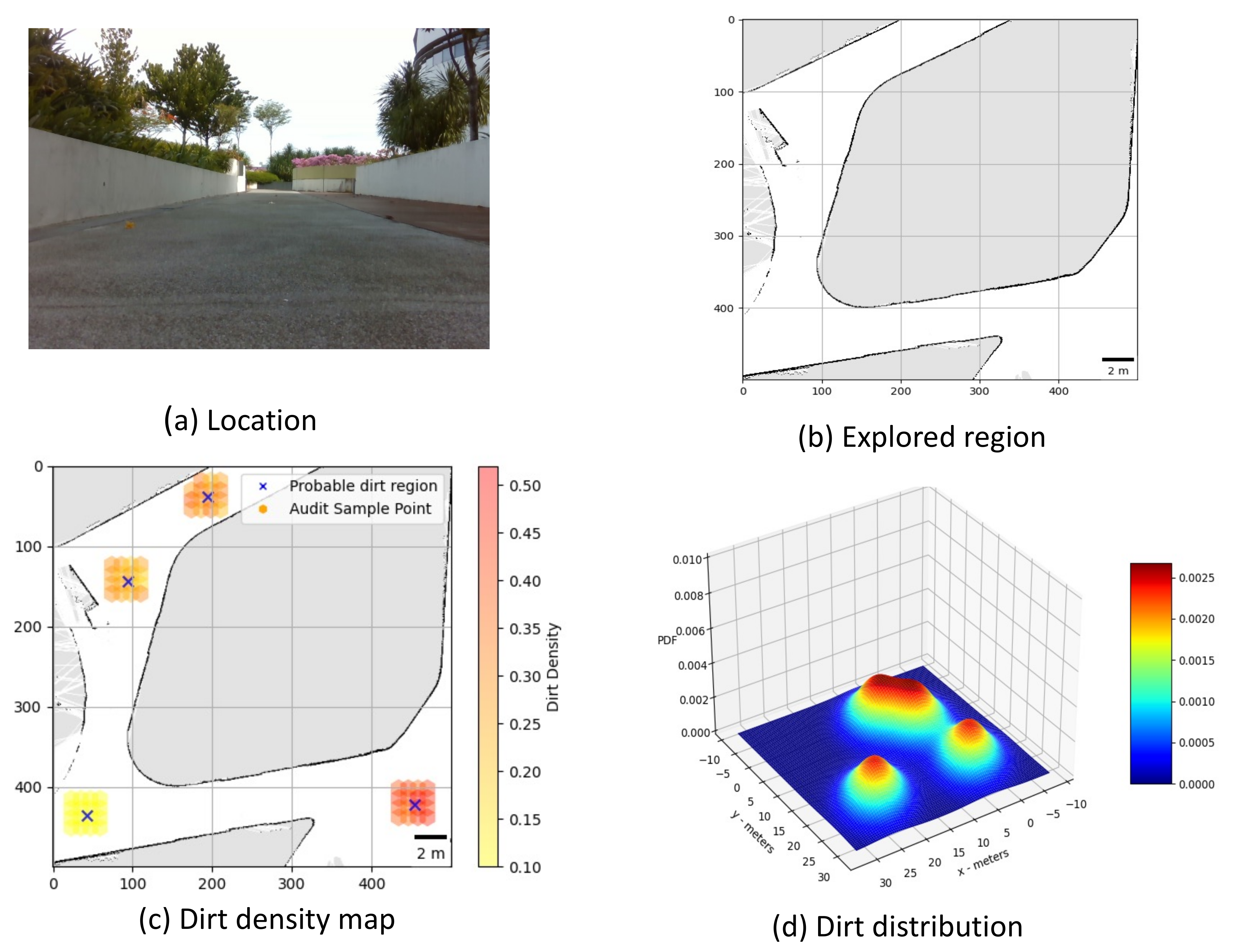

- Trial 5: The trial was carried out in a semi-outdoor park with coarse cemented and wooden floor types. The dirt particles were sprinkled in four different locations. The robot detected four probable dirt regions. A total of 63 sample points were collected. The 2D map of the explored region, the locations of the sample points, the audit scores corresponding to the sample points, and the dirt distribution obtained are given in Figure 12.

7.2. Observation and Inference

- Floor texture: A floor with a coarse texture makes dust particles less susceptible to adhesive dust lifting. However, this is acceptable to an extent, as long as the cleanliness benchmarking is done on similar floor types.

- Color of the dust particles: Some of the dust particles remain undetected during sample auditing, especially dust particles that are more reflective (white paper bits and stapler pins).

- Transition between floor types: The periodic pattern suppression algorithm produces false-positive detections of probable dirt regions when the robot encounters a transition from one floor type to another.

8. Conclusions and Future Works

- Exploration of the electrostatic dirt-lifting principle for the audit sensor.

- Usage of machine learning based data-driven approach for sample auditing.

- Improving the sample auditing procedure with odour based sensing.

- Integration of cleaning audit result to improve the cleaning efficiency of cleaning robots.

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| | Trial-1 | Trial-2 | Trial-3 | Trial-4 | Trial-5 |
|---|---|---|---|---|---|
| Environment | Indoor | Semi-outdoor | Indoor | Indoor | Semi-outdoor |
| Floor type | Polished concrete | Coarse cemented | Vinyl | Vinyl | Cemented and wooden |
| Area explored (m²) | 246.70 | 187.53 | 118.39 | 217.11 | 248.17 |
| Samples audited | 100 | 76 | 48 | 127 | 63 |
| No. of dirt locations | 6 | 6 | 4 | 8 | 4 |
| Average sample audit score | 0.354 | 0.361 | 0.376 | 0.384 | 0.392 |
| Peak sample audit score | 0.437 | 0.442 | 0.495 | 0.486 | 0.491 |
| Peak audit score locations (m, m) | (13.00, 2.25), (11.15, 4.50), (13.50, 4.35) | (7.55, 8.05), (19.05, 12.45), (18.25, 13.00) | (13.15, 11.00), (15.45, 12.05), (14.25, 11.15) | (17.45, 10.25), (21.00, 13.15), (19.35, 9.75) | (20.15, 21.00), (22.35, 22.50) |
| Peak dirt distribution location (m, m) | (3, 0) | (12, 9) | (16, 8) | (16, 10) | (0, 3) |
| Exploration time (s) | 1580.0 | 1551.0 | 1175.0 | 1810.0 | 1247.0 |
| Total audit score | 37.032 | 31.152 | 21.850 | 48.531 | 25.739 |
| Cleaning benchmark score (out of 100) | 62.968 | 59.011 | 54.479 | 61.787 | 59.144 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Pathmakumar, T.; Kalimuthu, M.; Elara, M.R.; Ramalingam, B. An Autonomous Robot-Aided Auditing Scheme for Floor Cleaning. Sensors 2021, 21, 4332. https://doi.org/10.3390/s21134332
