ECPC-ICP: A 6D Vehicle Pose Estimation Method by Fusing the Roadside Lidar Point Cloud and Road Feature

Abstract

1. Introduction

- We propose ECPC, a novel method for initial pose estimation from sparse point clouds. ECPC integrates road-normal information into the global features of the sparse point cloud, which yields a robust solution for the initial pose.

- We propose a description of point cloud sparseness, based on the measurement characteristics of roadside Lidar, for quantitative experimental verification. Experiments on point clouds of different sparseness demonstrate the effectiveness of the proposed ECPC-ICP algorithm.

2. Related Works

2.1. Model-Based Methods

2.2. Model-Free Methods

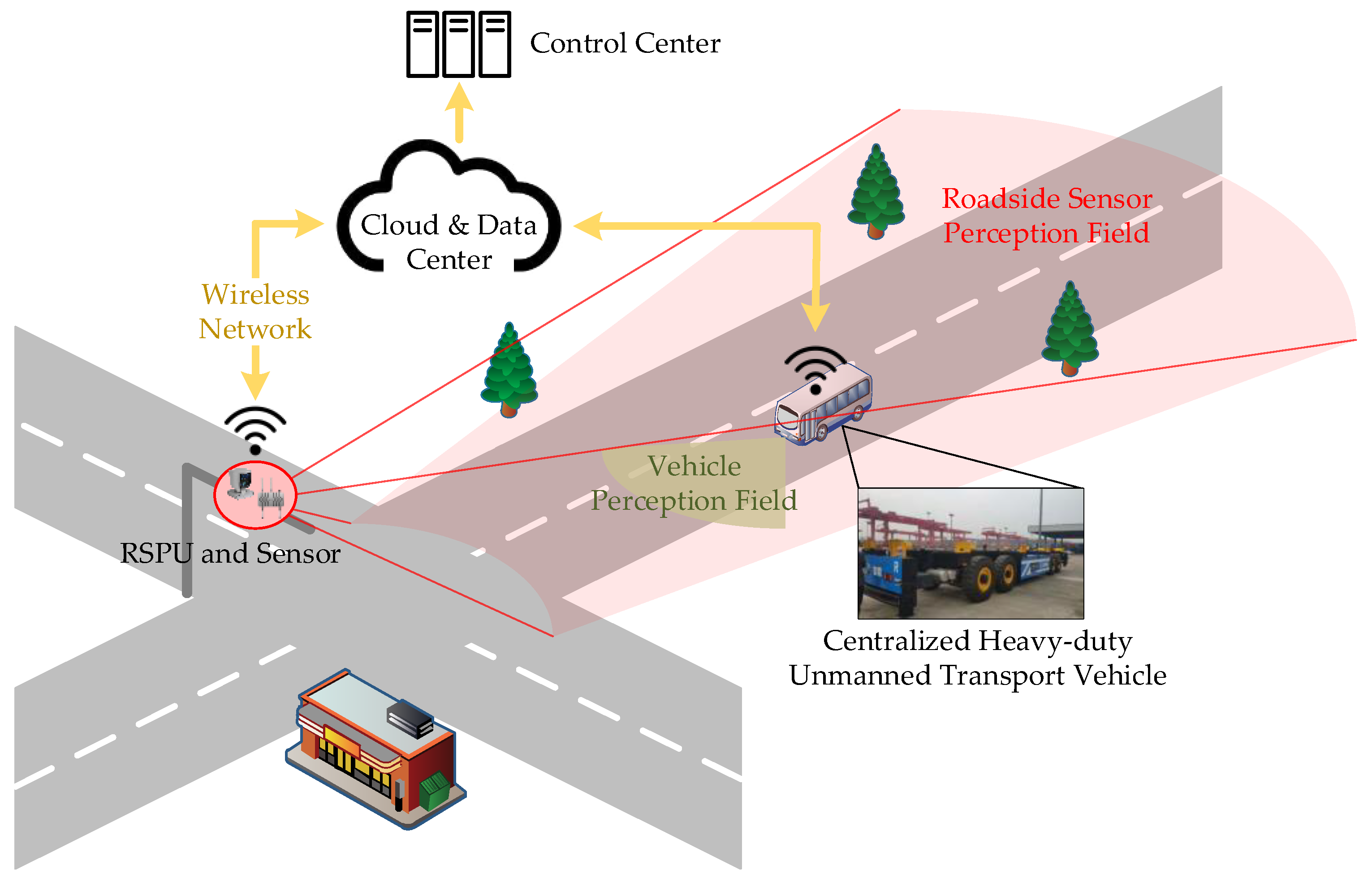

3. Pose Estimation Considering Road Constraint

3.1. 6D Pose Estimation Modeling

3.2. Point Cloud Preprocessing and Segmentation

3.3. ECPC-ICP Pose Estimation Method

3.3.1. Target Template Preparing

3.3.2. ECPC Initial Pose Estimation

| Algorithm 1 ECPC Initial Pose Estimation. |
|---|
| Input: environment constraint vector, clustered point cloud |
| Output: coarse rigid transform matrix |
| Steps 1–10: [step contents missing in source]; step 10 is the return statement |
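The inputs and output named in Algorithm 1 (an environment constraint vector, a clustered point cloud, and a coarse rigid transform) can be illustrated with a minimal sketch. It is not the authors' ECPC procedure: it assumes the constraint vector is the road normal, fixes the vehicle's local Z-axis to that normal, and takes the heading from a PCA of the points projected onto the road plane. The function name `coarse_pose_from_road_normal` and the axis conventions are hypothetical.

```python
import numpy as np

def coarse_pose_from_road_normal(points, road_normal):
    """Hypothetical coarse pose: Z-axis from the road normal, heading from in-plane PCA.

    Returns a 4x4 homogeneous transform whose rotation columns are the
    estimated local X, Y, Z axes and whose translation is the cluster centroid.
    """
    n = np.asarray(road_normal, dtype=float)
    n = n / np.linalg.norm(n)
    pts = np.asarray(points, dtype=float)
    centroid = pts.mean(axis=0)
    centered = pts - centroid

    # Remove the component along the road normal so only in-plane spread remains.
    in_plane = centered - np.outer(centered @ n, n)

    # Dominant in-plane direction: eigenvector of the largest eigenvalue
    # (np.linalg.eigh returns eigenvalues in ascending order).
    cov = in_plane.T @ in_plane / len(pts)
    _, eigvecs = np.linalg.eigh(cov)
    x_axis = eigvecs[:, -1]
    x_axis = x_axis - (x_axis @ n) * n   # guard against numerical drift
    x_axis = x_axis / np.linalg.norm(x_axis)
    y_axis = np.cross(n, x_axis)         # completes a right-handed frame

    T = np.eye(4)
    T[:3, 0], T[:3, 1], T[:3, 2] = x_axis, y_axis, n
    T[:3, 3] = centroid
    return T

# Usage: a flat, elongated cluster lying on a horizontal road.
xs, ys = np.meshgrid(np.linspace(-2.0, 2.0, 21), np.linspace(-0.8, 0.8, 9))
cluster = np.column_stack([xs.ravel(), ys.ravel(), np.full(xs.size, 0.5)])
T_coarse = coarse_pose_from_road_normal(cluster, road_normal=[0.0, 0.0, 1.0])
```

Because the Z-axis is taken directly from the road normal, pitch and roll are constrained by the road rather than estimated from the sparse points. The PCA heading is ambiguous by 180°, so a real pipeline would need an extra cue to resolve the direction of travel.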

3.3.3. Precise Pose Calculation
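
The method name suggests that the precise stage refines the coarse ECPC transform with ICP. The sketch below assumes a standard point-to-point ICP with an SVD-based rigid alignment step and brute-force nearest-neighbour search. The function names `best_rigid_transform` and `icp` and all parameter values are illustrative assumptions, not the authors' implementation.

```python
import numpy as np

def best_rigid_transform(src, dst):
    """Least-squares R, t such that R @ src_i + t ~ dst_i (SVD-based alignment)."""
    src_c, dst_c = src.mean(axis=0), dst.mean(axis=0)
    H = (src - src_c).T @ (dst - dst_c)
    U, _, Vt = np.linalg.svd(H)
    # Flip the last axis if needed so that det(R) = +1 (no reflection).
    D = np.diag([1.0, 1.0, np.sign(np.linalg.det(Vt.T @ U.T))])
    R = Vt.T @ D @ U.T
    t = dst_c - R @ src_c
    return R, t

def icp(template, scan, T_init=None, max_iter=30, tol=1e-8):
    """Point-to-point ICP aligning `template` onto `scan`; returns a 4x4 transform."""
    T = np.eye(4) if T_init is None else np.array(T_init, dtype=float)
    prev_err = np.inf
    for _ in range(max_iter):
        moved = template @ T[:3, :3].T + T[:3, 3]
        # Brute-force nearest neighbours; acceptable for small clouds only.
        d2 = ((moved[:, None, :] - scan[None, :, :]) ** 2).sum(axis=2)
        nn = d2.argmin(axis=1)
        err = np.sqrt(d2[np.arange(len(moved)), nn]).mean()
        # Re-estimate the full transform from the current correspondences.
        R, t = best_rigid_transform(template, scan[nn])
        T = np.eye(4)
        T[:3, :3], T[:3, 3] = R, t
        if abs(prev_err - err) < tol:
            break
        prev_err = err
    return T

# Usage: recover a small known motion of a grid-shaped template.
gx, gy, gz = np.meshgrid(np.arange(5) * 0.5, np.arange(4) * 0.5, np.arange(3) * 0.5)
template = np.column_stack([gx.ravel(), gy.ravel(), gz.ravel()])
yaw = np.deg2rad(1.0)
R_true = np.array([[np.cos(yaw), -np.sin(yaw), 0.0],
                   [np.sin(yaw),  np.cos(yaw), 0.0],
                   [0.0,          0.0,         1.0]])
t_true = np.array([0.02, -0.01, 0.01])
scan = template @ R_true.T + t_true
T_refined = icp(template, scan)
```

ICP converges only to a local optimum, so its result depends on how close the initial transform is to the true pose. Passing a coarse estimate as `T_init` is how the two stages would be chained in this sketch.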

4. Experiment

4.1. Simulated Test Environment

4.1.1. Template Point Cloud Acquisition

4.1.2. Experimental Design

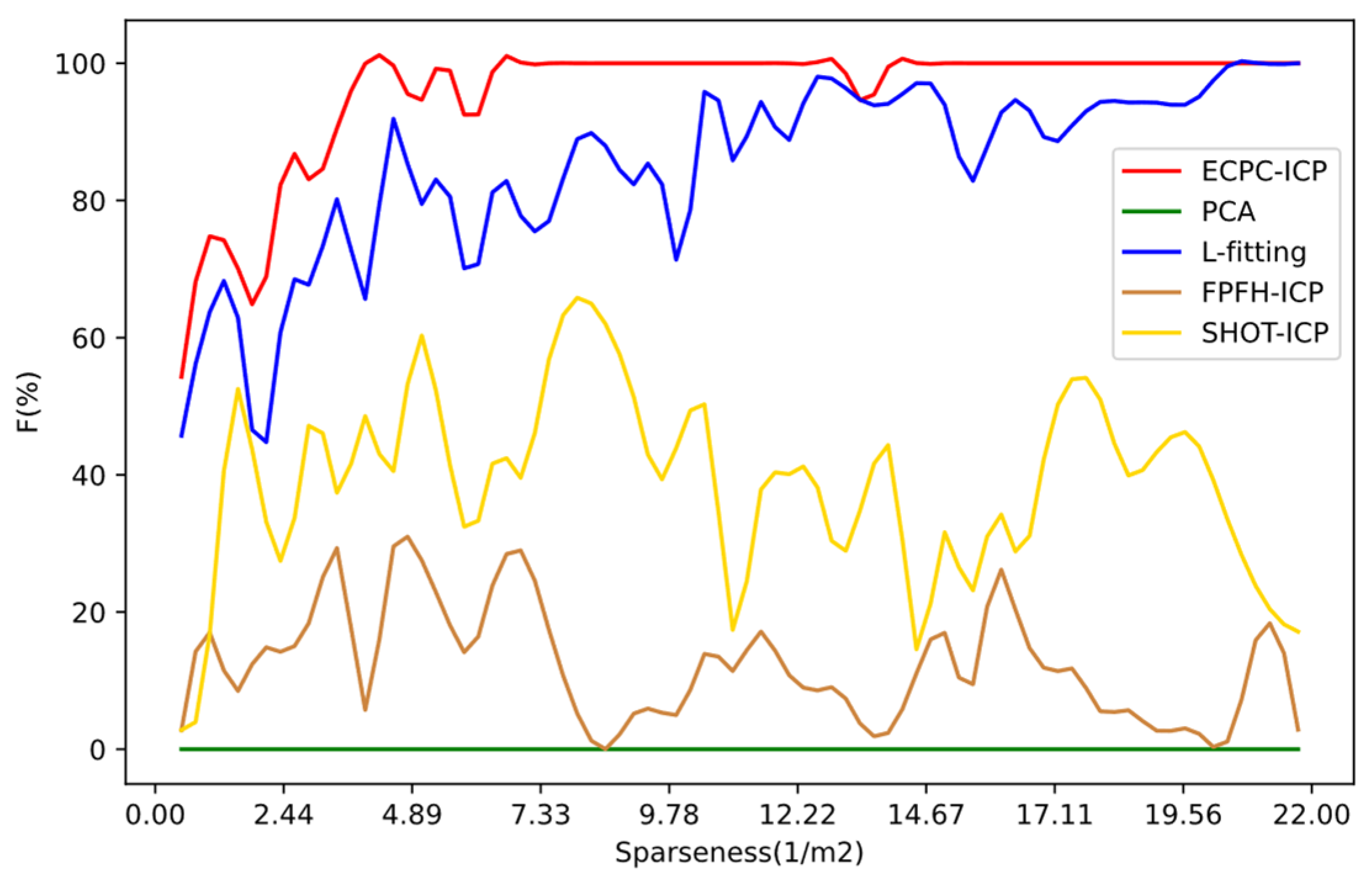

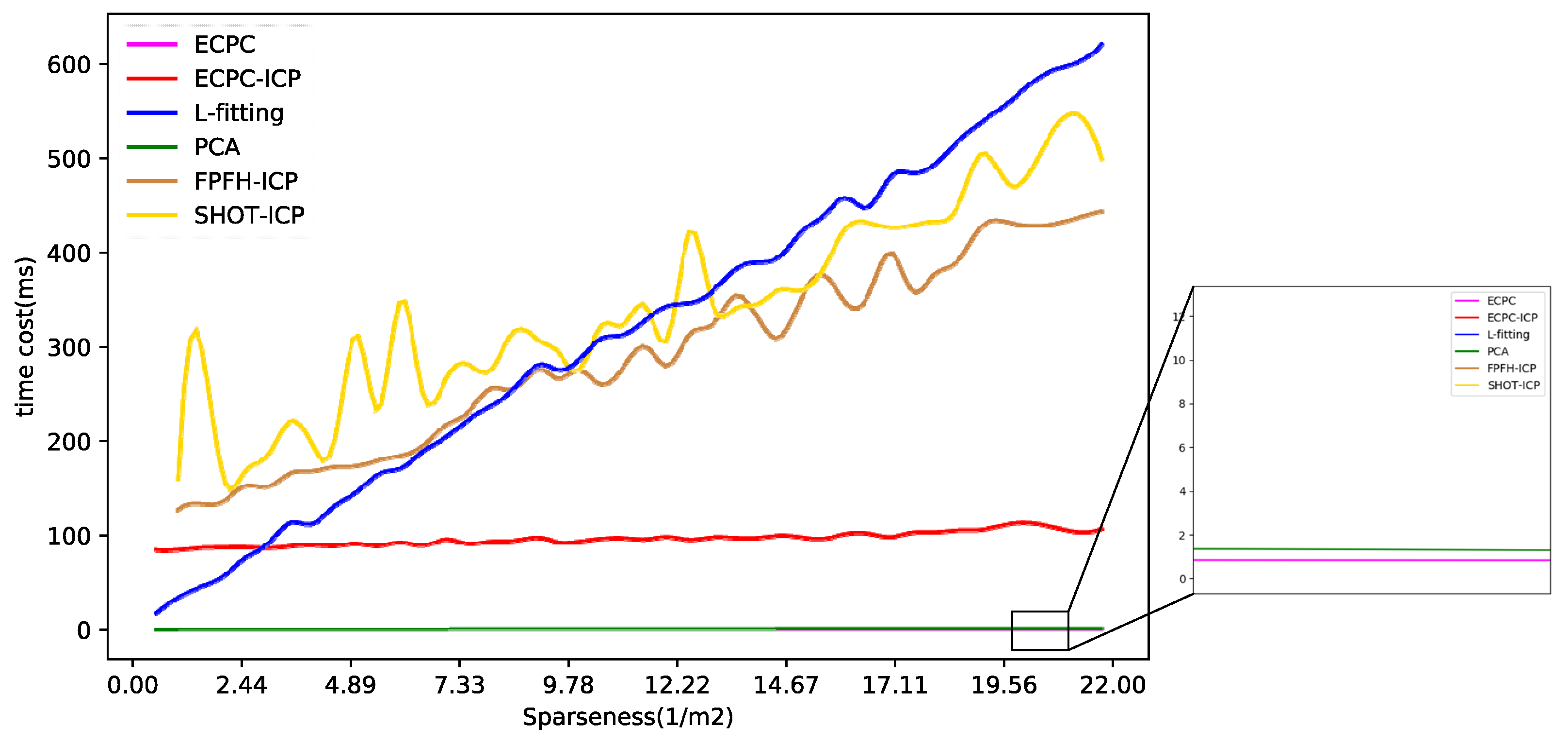

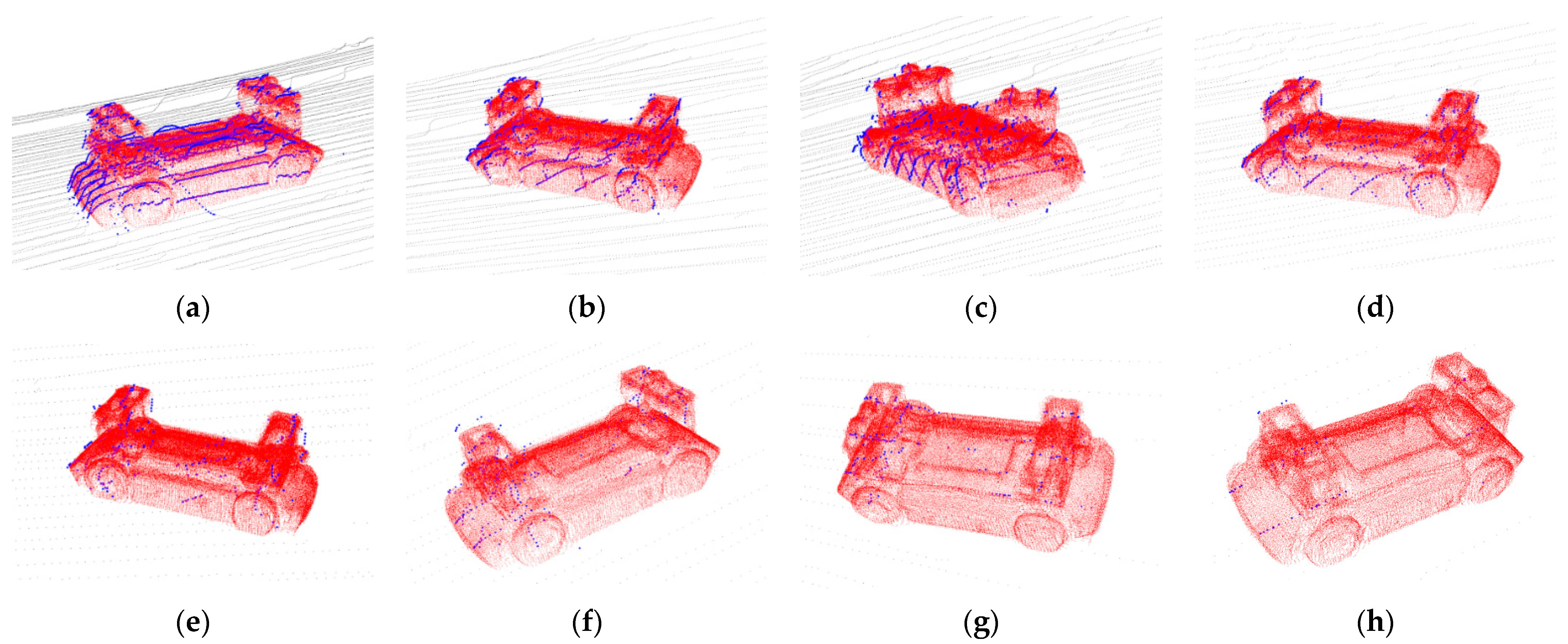

4.1.3. Results and Discussion

4.2. Real Test Environment

4.2.1. Template Point Cloud Acquisition

4.2.2. Experimental Design

4.2.3. Results and Discussion

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Method | Local X-Axis MAE (m) | Local Y-Axis MAE (m) | Local Z-Axis MAE (m) | Yaw MAE (deg) | Pitch MAE (deg) | Roll MAE (deg) |
|---|---|---|---|---|---|---|
| PCA | 1.23932 | 0.13477 | 0.79728 | 4.07111 | 3.89515 | 30.49411 |
| L-fitting | 0.31533 | 0.17401 | / | 1.58945 | / | / |
| ECPC (Ours) | 0.46180 | 0.09200 | 0.39429 | 1.96267 | 0.00042 | 0.00044 |
| ECPC-ICP (Ours) | 0.06334 | 0.02157 | 0.01066 | 0.16794 | 0.27018 | 0.34759 |
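
The per-axis errors in the table above are reported as MAE (mean absolute error) in metres and degrees. A minimal sketch of such a computation follows. It assumes each pose is stored as (x, y, z, yaw, pitch, roll) with angles in degrees, and that angular differences are wrapped before averaging. The column layout and the wrapping step are assumptions, since the source does not state how the errors were computed.

```python
import numpy as np

def mae_per_axis(estimated, reference, angle_columns=(3, 4, 5)):
    """Mean absolute error per pose component over N samples.

    `estimated` and `reference` are (N, 6) arrays; columns listed in
    `angle_columns` are treated as angles in degrees.
    """
    est = np.asarray(estimated, dtype=float)
    ref = np.asarray(reference, dtype=float)
    diff = est - ref
    cols = list(angle_columns)
    # Wrap angular differences into [-180, 180) so 179 vs -179 counts as 2 degrees.
    diff[:, cols] = (diff[:, cols] + 180.0) % 360.0 - 180.0
    return np.abs(diff).mean(axis=0)

# Usage with two made-up pose pairs (values are illustrative, not from the paper).
est = [[1.0, 2.0, 3.0, 179.0, 0.0, 0.0],
       [1.2, 2.0, 3.0, 10.0, 5.0, -5.0]]
ref = [[1.1, 2.0, 3.0, -179.0, 0.0, 0.0],
       [1.0, 2.0, 3.0, 12.0, 5.0, 5.0]]
errors = mae_per_axis(est, ref)
```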

| Method | Success Ratio F |
|---|---|
| PCA | 2.7777% |
| L-fitting | 84.7222% |
| FPFH-ICP | 14.7487% |
| SHOT-ICP | 40.4762% |
| ECPC-ICP (Ours) | 95.5026% |

| Method | Mean Calculation Time (ms) |
|---|---|
| PCA | 0.8135 |
| L-fitting | 308.24 |
| FPFH-ICP | 283.11 |
| SHOT-ICP | 335.77 |
| ECPC (Ours) | 0.4633 |
| ECPC-ICP (Ours) | 96.13 |

| Index | ECPC-ICP Pose | GNSS/RTK Pose | 6D Error (m, °) | (1/m²) | (m) | (m) |
|---|---|---|---|---|---|---|
| 1 | (4.718, −6.426, 5.609, 0.922, −0.003, 0.386) | (4.659, −6.456, 5.739, 0.917, −0.008, 0.397) | (0.002, 0.026, −0.143, −0.65, 0.27, 1.78) | 17.1 | 0.0198 | 0.0112 |
| 2 | (9.310, −6.418, 5.141, 0.999, −0.009, −0.018) | (9.322, −6.457, 5.161, 0.999, −0.002, −0.032) | (−0.011, 0.038, −0.021, 0.78, −0.36, 1.84) | 10.4 | 0.0176 | 0.0137 |
| 3 | (10.967, −6.401, 5.727, 0.839, 0.013, 0.543) | (10.990, −6.413, 5.650, 0.855, −0.013, 0.518) | (0.020, 0.014, 0.077, 1.67, 1.55, −1.41) | 8.4 | 0.0171 | 0.0120 |
| 4 | (14.572, −6.421, 4.086, −0.041, −0.015, −0.999) | (14.548, −6.377, 4.086, −0.046, 0.0003, −0.998) | (−0.0005, −0.043, 0.024, 0.34, −0.88, 0.78) | 6.05 | 0.0226 | 0.0170 |
| 5 | (9.248, −6.407, 5.567, 0.828, 0.001, 0.559) | (9.210, −6.387, 5.564, 0.831, −0.013, 0.556) | (0.033, −0.019, −0.018, 0.26, 0.87, −0.069) | 10.35 | 0.0134 | 0.0097 |
| 6 | (17.988, −6.357, 5.991, 0.996, 0.077, 0.005) | (18.117, −6.406, 5.80, 0.997, −0.0003, −0.077) | (−0.143, 0.056, 0.178, 4.59, 4.67, −10.81) | 4.09 | 0.0698 | 0.0703 |
| 7 | (8.371, −6.411, 4.195, 0.998, 0.004, −0.058) | (8.336, −6.404, 4.240, 0.997, −0.002, −0.077) | (0.037, −0.007, −0.042, 1.05, 0.39, 0.50) | 12.6 | 0.0119 | 0.0089 |
| 8 | (10.418, −6.401, 5.180, 0.588, −0.0008, 0.808) | (10.401, −6.375, 5.213, 0.587, −0.019, 0.808) | (−0.016, −0.027, −0.032, −0.07, 1.06, −1.62) | 9.3 | 0.0154 | 0.0097 |
| 9 | (8.484, −6.407, 4.404, 0.098, −0.0037, −0.995) | (8.465, −6.409, 4.481, 0.084, −0.0019, −0.996) | (0.078, 0.0016, 0.012, 0.81, −0.08, 4.62) | 12.3 | 0.0163 | 0.0113 |
| 10 | (18.851, −6.375, 4.479, 0.999, 0.036, −0.0025) | (18.880, −6.398, 4.503, 0.999, −0.003, −0.028) | (−0.028, 0.023, −0.026, 1.38, 2.26, 0.75) | 3.9 | 0.0119 | 0.0104 |

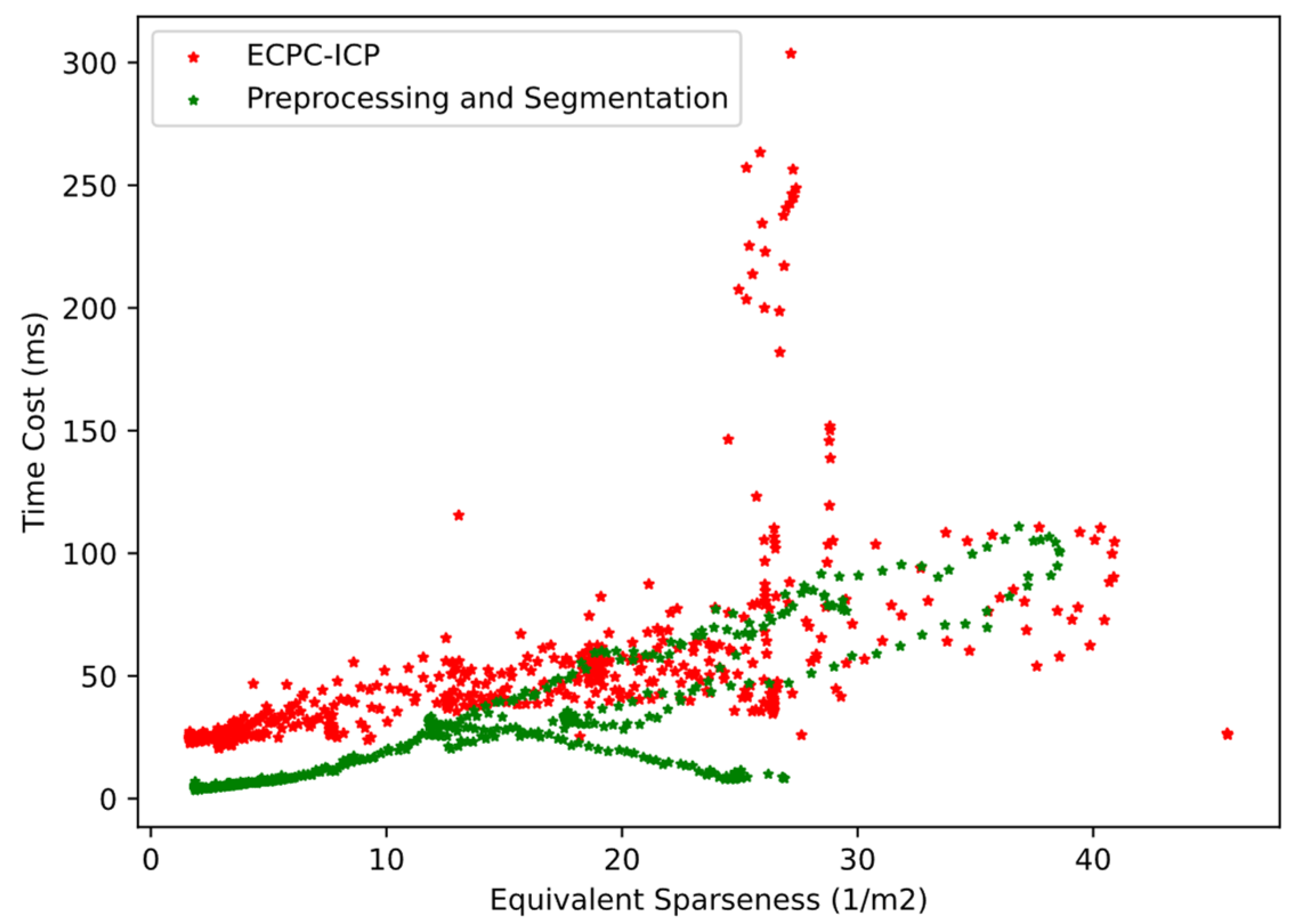

| Method | Mean Time Cost (ms) | Mean Time Cost (ms) |
|---|---|---|
| ECPC-ICP | 53.1928 | 40.3334 |
| Preprocessing and Segmentation | 27.8227 | / |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Gu, B.; Liu, J.; Xiong, H.; Li, T.; Pan, Y. ECPC-ICP: A 6D Vehicle Pose Estimation Method by Fusing the Roadside Lidar Point Cloud and Road Feature. Sensors 2021, 21, 3489. https://doi.org/10.3390/s21103489