1. Introduction

When the spectral sensitivities of the RGB sensors found in a camera and the XYZ color matching functions (CMFs) [

1] are exactly a linear transform apart, the camera is said to be colorimetric [

2]. When this colorimetric condition is met, the RGBs measured by the camera—for all and any spectral stimuli—are the same linear transform from the corresponding XYZ coordinates. If a camera’s spectral sensitivities are a linear transform from the XYZ CMFs, it satisfies the so-called

Luther condition [

3,

4].

Despite being suitable for color measurement, in general, cameras are rarely made to be colorimetric due to manufacturing and engineering considerations, including the need for low cost manufacturing and low-noise in the captured images [

5]. Moreover, for cross-illuminant color measurement—where we wish to measure XYZs under different lights and then map the recorded tristimuli to a fixed reference illuminant, the best sensors are not linearly related to XYZ CMFs [

6,

7,

8].

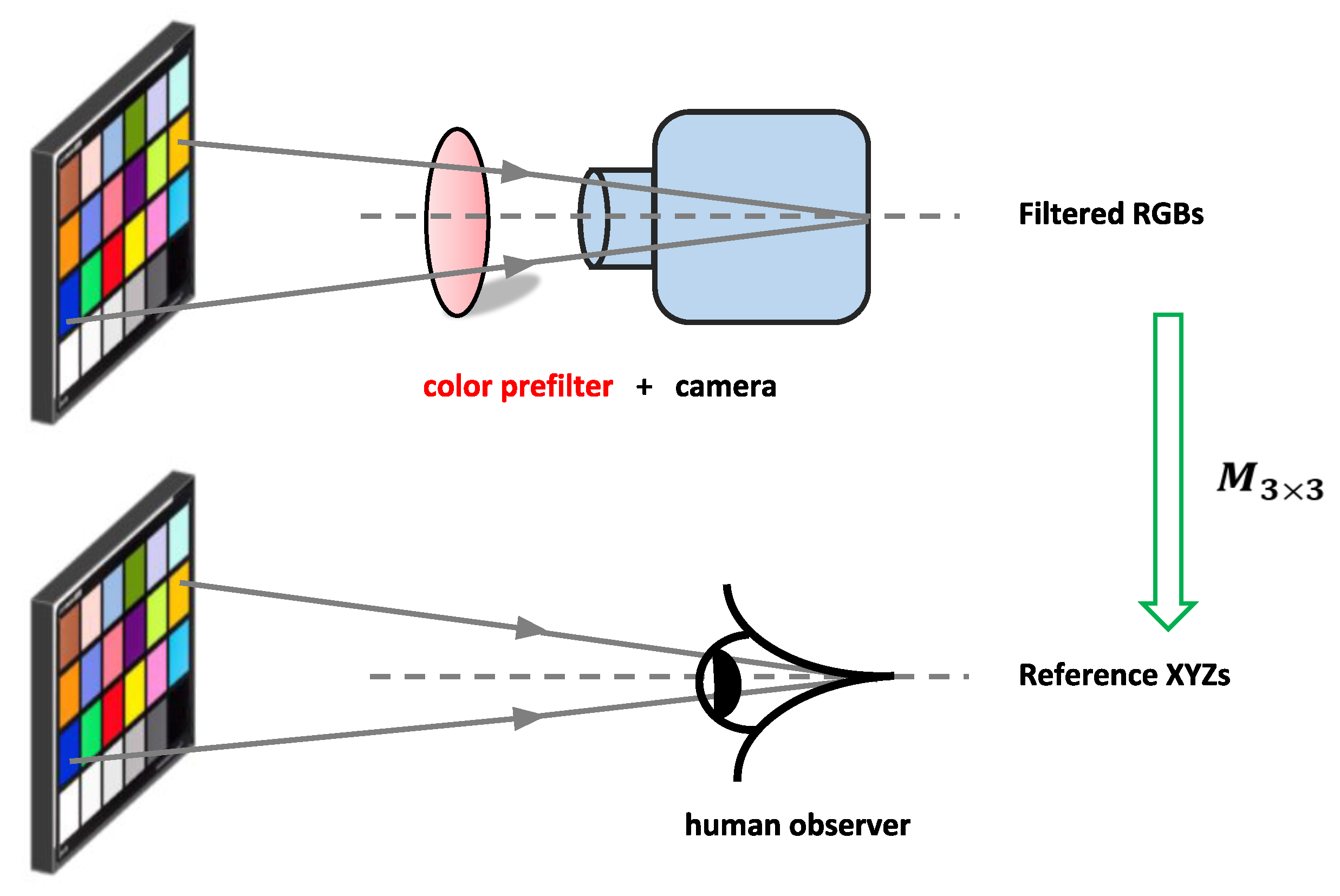

It was recently proposed [

9,

10] that a color filter could be designed which, when placed in front of the camera, would make it more colorimetric, see

Figure 1 (including for the cross-illuminant measurement case [

10]). In Finlayson et al. [

9], the filter is found that makes the camera best satisfy the Luther condition, that is, the filtered spectral sensitivities are as close as possible to being a linear

matrix correction from the XYZ CMFs. Curiously, the Luther-condition optimization is dependent on the target CMFs. If, for example, the best filter is found for the sRGB [

11] sensor basis (a linear combination from the XYZ CMFs such as the cone mechanisms [

12]), then a different optimal filter is found. That there are multiple optimal answers, perhaps, points to a weakness in the optimization statement?

In color science, the Vora-Value is a single number in the interval of [0, 1] which reports how close a camera’s spectral sensitivities are to the human visual subspace (HVS) [

13]. When the Vora-Value is 1, this means the camera is colorimetric; when it is 0, it means the camera is completely unsuited for the color measurement task. The Vora-Value formula is, by construction, independent of the bases that describe the camera and the HVS [

14]. In effect, the Vora-Value measures how close the vector spaces—respectively spanned by linear combinations of the camera spectral sensitivities and XYZ CMFs—are to one another. Finlayson and Zhu [

15] employed the Vora-Value as an optimization criterion to solved for the optimal filter for a given camera.

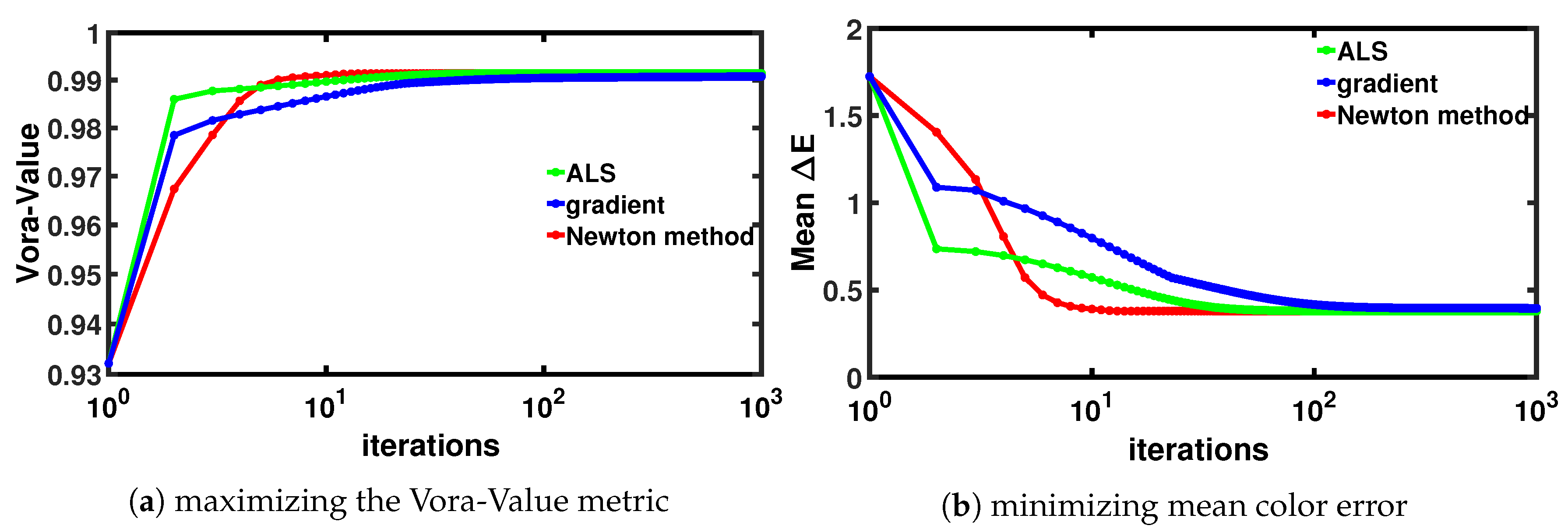

From an algorithmic viewpoint, the Luther and Vora-Value optimizations are quite different from one another. The Luther condition optimization [

9] is solved using a simple alternating least-squares procedure which converges quickly. The Vora-Value optimization [

15], however, has a more complex derivation and is optimized by a gradient ascent approach. The Vora-Value optimization converges less quickly, though arguably to a better overall answer (because of the basis independence property of the Vora-Value).

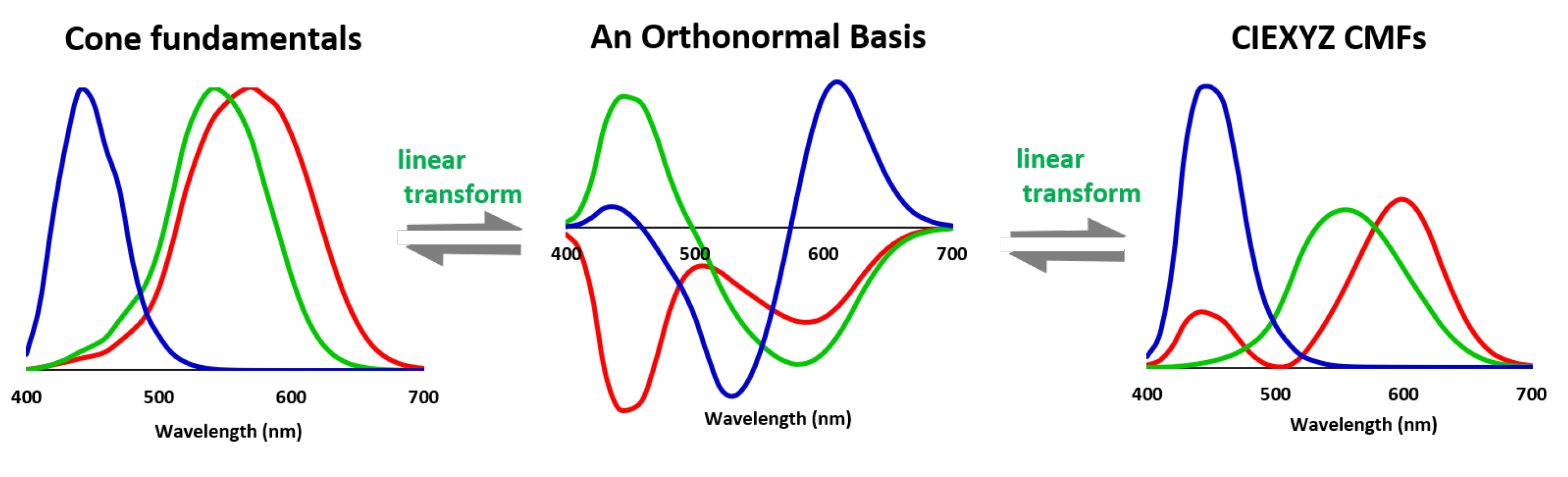

The key result, which we present in this paper, is to prove that if we choose to optimize the Luther condition using not the XYZ CMFs but a linear combination which is orthonormal, then we are optimizing the Vora-Value. This means we can use the quicker-to-converge Alternating Least-Squares (ALS) optimization to find the best Vora-Value filter. Now, we recast the prior-art gradient-ascent Vora-Value approach so that we can use Newton’s method [

16] (which includes 2nd derivatives) to speed up the optimization. Even compared to the faster Newton’s method, the simple ALS method has competitive performance, though it does converge a little more slowly.

However, an additional advantage of the ALS method is that it is easier to add constraints into the problem formulation than to do so for the Vora-Value approach. Indeed, prior work [

17] has shown how we can bound both the smoothness of the derived filter and its transmission properties (e.g., that the filter has to transmit at least 40% of the light at every wavelength across the visible spectrum).

Experiments demonstrate that the Vora-Value optimized color filter can dramatically increase the colorimetric accuracy across a large set of commercial cameras.

The rest of the paper is structured as follows. In

Section 2, we review the definition of the Luther condition and the Vora-Value. There, we introduce the concept of orthonormal basis which will underpin our optimization methods. In

Section 3, we present the equivalence theorem relating the Luther- and Vora-Value optimizations. We also show how Newton’s method can be used to find the filter that maximizes the Vora-Value. Experimental results are reported in

Section 4. The paper concludes in

Section 5.

3. Methods and Algorithms

In previous work [

9], it was shown how to find a filter that best satisfies the Luther condition under the assumption that we wish to find filtered camera sensors that best approximate XYZ CMFs. Further, the optimization was formulated using the Alternating Least-Squares paradigm. Below we reformulate the problem and make three important modifications. First we make an orthonormal basis set (linear combination) of the CMFs as the target. This step will help elucidate the similarity between optimizing a filter to best meet the Luther condition and to maximize the Vora-Value. Second, we recast the optimization as a gradient ascent optimization (and study the Newton formulations). Third, to ensure the well foundedness of the formulation—and to bound the shape of the filter—we add an additional regularization term that constrains the filter.

3.1. The Modified-Luther Condition Optimization

Physically, the effect of placing a transmissive filter, (a vector), in front of a camera can be modeled as where is a matrix operation that turns a vector into a diagonal matrix. To ease notation, we define and write . When we wish to extract from , we write (‘e’ signifies to ‘extract’ the diagonal).

In Equation (

9) we mathematically formulate the filter-modified Luther-condition optimization:

where the subscript

denotes the Frobenius norm. Note that here we do not constrain the filter to be positive although a physical filter should have positive transmittance values. We remove this constraint because we found that, empirically, our method always returned an all-positive filter solution. Here we use the symbol

to denote the objective function with the regularization term. Clearly,

is the core optimization: we wish to find

(the filter) such that the filtered sensitivities are approximately a

linear transform

from the orthonormalized CMFs, here

. The second term in the optimization is the squared norm of the filter where

is a penalty term defined by the user. As

becomes large, the optimization must effectively choose a filter whose norm is smaller. Small norm filters tend to be smoother and less jaggy. We say that the scalar

regularizes the optimization.

The regularization term in the optimization makes it tilt towards a filter with lower transmittance values over those with higher transmittances. Indeed, a filter that has a value of 1% at every wavelength has a lower norm than one which transmits 100% of the light at every wavelength. However, we argue that this is not a problem. Remember that in the core optimization, and are recovered up to a scaling factor, that is, and is an equally good solution. Viewed through the lens of this scaling indeterminacy, the filter that reflects 1% of light is equally efficacious as the one that transmits 100%. Pragmatically, because we know this scaling indeterminacy exists, we always scale the optimized filter so that its maximum transmittance value is 100%.

Importantly, the regularization term usefully pushes the optimization towards recovering smoother filters. A very jaggy filter with an average transmittance of (say) 50% has a much higher norm than one that has a uniform 50% transmittance across the spectrum. Jaggy filters are harder to manufacture so penalizing their discovery in the optimization makes sense.

In

Section 3.5, we show how we can add more explicit constraints on the shape and transmittance properties of the filters that we design.

3.2. The Equivalence of the Modified-Luther and Vora-Value Optimizations

We begin by considering the special case of

in Equation (

9):

Suppose we are given the filter matrix

, then, employing the closed-form Moore-Penrose inverse solution to the least-squares [

35], the best

is obtained

Here, we see that the best linear mapping

in the Luther-condition optimization of Equation (

9) is essentially a function of the unknown variable matrix

. By multiplying this newly solved

with

, we have

That is, we pre-multiply the orthonormal basis for the XYZs,

, by the projector for

(see Equation (

5) for the definition of projector matrix).

Substituting into Equation (

10), the optimization can be rewritten as

where

is the identity matrix. Equation (

13) has the advantage that the optimization depends only on the filter

.

Theorem 1. By minimizing , we maximize .

Proof of Theorem 1. We will use the following axioms:

, idempotency of projectors

, the projector is symmetric.

.

Using axiom 1, we rewrite the formula in Equation (

13) as

Now, we carry out some algebraic manipulation of the argument of Equation (

14). Using axiom 2 for moving

to the other side and known from Equation (

7),

, we rewrite

Manipulating the above expression using the properties of projector matrix (axioms 3 through 5), we obtain

Using axiom 6, clearly in Equation (

16),

is a positive constant given the known matrix

. Therefore, we minimize the derived expression by maximizing

. Thus, we can write:

From the definition of Vora-Value, we know

. We conclude:

☐

In summary, we have shown that a least-squares procedure that finds a filter that—in combination with a linear least-squares mapping (see Equation (

10)–(

13))—best fits an orthogonal basis of the color matching functions (i.e., minimizes the fitting error) must simultaneously maximize the Vora-Value.

3.3. Gradient Ascent for Solving the Optimization

So far we have concerned ourselves with formulating the filter design problem, that is, writing down what it is we wish to solve for. Now, we wish to present the mechanics of how we optimize for the filter. Given we have shown the equivalence for maximizing the Vora-Value and minimizing the modified Luther condition error, there is only one optimization at hand. The ALS method—for optimizing the Luther condition—is well described in Reference [

10]. Henceforth we will concern ourselves with maximizing the Vora-Value (safe in the knowledge that the filter that is found also optimizes the modified Luther condition problem) in this section. Moreover, presenting the gradient method is a necessary step for us to present a much faster algorithm: the Newton’s method [

16].

Since we are trying to maximize the Vora-Value, we will adopt a gradient ascent procedure. For a given filter, we can calculate its Vora-Value. The set of all Vora-Values can be thought of as a hilly landscape. By calculating the derivative (how the Vora-Value changes) when the filter is modified in certain directions, we can choose to take a small step in a direction that increases the Vora-Value.

By choosing to modify the filter step-wise—at each step seeking to go further up the hill—we modify the filter until we are on the hill top. Of course there is nothing in the gradient ascent method that guarantees that we will find an optimal solution to the filter design problem. At minimum, we will certainly, arrive at a filter that is better than the initial guess (e.g., the 100% do-nothing filter when the filter is fully transmissive). And, as we present later, we find a very good filter to our problem.

Remember that the Vora-Value is defined to be the trace of the projector of the filtered sensitivities pre-multiplying the projector for the XYZ CMFs. So we wish to maximize:

We maximize

in the usual calculus way by first finding the derivative of the Vora-Value function and then updating

by moving in the direction of the gradient (and in so doing we increase

). It can be shown—details given in Reference [

36]—that the derivative of

calculated with respect to

is calculated as:

Equivalently, the gradient of the objective function in terms of the underlying filter vector—remember

(

)—can be written as

where the gradient with respect to the filter vector,

, is a

vector. It is evident that the gradient function has a very interesting structure: it is the diagonal of the product of three projection matrices multiplied by the inverse of the filter (at hand).

The simple Gradient Ascent algorithm for finding the filter solution of the optimization is shown below (see Algorithm 1). Note that we use the term ‘gradient ascent’ (but not the more familiar term ‘gradient descent’) because we are maximizing our objective function. The algorithm works by updating the current filter at step

k to a new filter at step

. At step

k we calculate the gradient and then update according to:

. The term

is the step size (a small positive value) which controls how far the filter should move towards the direction of the gradient. The simplest approach would be to set

to a small fixed number. But, in this case, the optimization will take a long time to converge. To make convergence quicker, we adaptively choose the stepsize. In our work we find the best step

per iteration using the line search method. We refer the reader to Reference [

37] for more details.

As we will see in the next section, even when we choose the step size judiciously, convergence is still relatively slow. A common technique to find the filter more quickly is the Newton’s method (which involves calculating 2nd derivatives). A naive implementation of the Newton’s method quickly runs into numerical stability problems and one way to side step these is to adopt the regularized formulation of the Vora-Value formulation. Remembering our theorem linking the Luther-condition method to the Vora-Value, we can rewrite the maximization where we re-include the penalty term on the norm of the filter as a minimization:

It follows that the gradient function of the regularized optimization (denoted by

in Equation (

9)) as

Clearly,

. In the next section we present the Newton’s method for finding the regularized filter and use the corresponding gradient from Equation (

23).

| Algorithm 1 Gradient Ascent for solving the optimization. |

- 1:

- 2:

repeat - 3:

Compute the gradient: - 4:

Choose a step size: - 5:

Update ) - 6:

- 7:

until - 8:

return.

|

3.4. Newton’s Method for Solving the Regularized Optimization

Often simple gradient ascent is slow to converge. A better way to tackle the optimization is to adopt the Newton’s method when the second derivative (i.e., the Hessian matrix) of the objective function can be computed. Newton’s method uses the curvature information (second derivative) of the objective function and generally is faster to converge, typically it has a quadratic convergence speed compared to linearly for gradient ascent/descent. When a neighborhood of the optimal solution is reached, often in just a few iterations a solution with adequately high accuracy can be achieved. Describing the details of how the Newton’s method works is beyond the scope of this paper—but see Reference [

37] for a review—we will rather describe how it is used and report the Hessian we calculate for the filter design problem.

The Hessian for the filter design problem is a

matrix (assuming 31 sample wavelengths) and is denoted

. The term

denotes the partial derivative

. Again with the derivation given in Reference [

36], the Hessian for our problem is calculated as:

where ∘ denotes the Hadamard product (or elementwise product) of two matrices. In the equation, the last term relates to the regularization term. Because we are adding a non-zero multiple,

, to the identity matrix, we prove in Reference [

36] that this ensures the Hessian to be positive definite. Because the inverse of Hessian drives the Newton’s method, it is essential the Hessian has full rank.

Newton’s method for the problem of filter design is encapsulated in Algorithm 2. Here, given an initial guess

, we choose a stepsize after calculating the gradient and Hessian. Again step-size is tackled using the backtracking line search procedure [

16]. Later we update the filter solution by using its gradient and Hessian:

. The algorithm will keep refine the filter until a stopping criterion is met. In this paper, we simply use an empirically determined maximum iteration number (e.g., 1000) when the algorithm is well converged.

| Algorithm 2 Newton’s method for solving the optimization. |

- 1:

- 2:

repeat - 3:

Compute the gradient and Hessian: and - 4:

Choose a stepsize: - 5:

Update - 6:

- 7:

until stopping criterion is satisfied - 8:

return

|

As we will see in the next section the Newton’s method converges much more quickly than gradient ascent. However, the cost of implementation is significant. Here the gradient and Hessian must be calculated at each step in the iteration. More onerously the inverse of the Hessian must also be calculated (a computationally non-trivial operation).

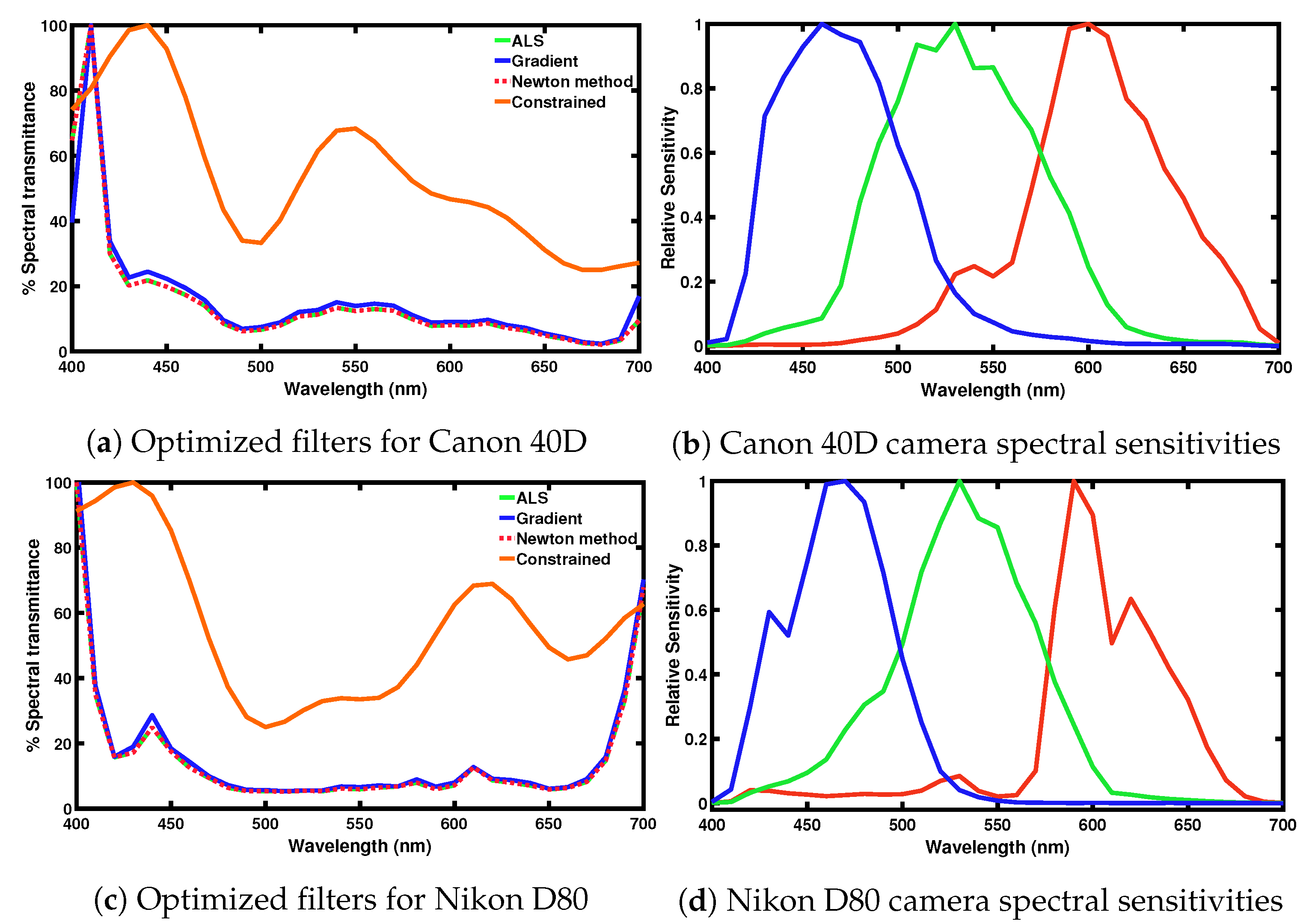

3.5. Adding Constraints to the Filter Optimisation

By default the filter found by using the algorithms proposed in the previous subsections can be arbitrarily non-smooth (even with the regularization term) and it might also be very non-transmissive (see

Figure 3). Non-smoothness can limit the feasibility of filter fabrication. Moreover, it is perhaps unsettling that we do not (and cannot) predict how filter smoothness relates to the regularization penalty term. As well as being smooth, we may also wish a color filter to transmit at least a lower bound percentage of the light that passes though. Indeed, a filter with too low transmittance values would perforce, have limited practical utility.

In this paper, we have shown how we can maximize the Vora-Value either by employing a gradient ascent algorithm or, equivalently, by adopting a simple Alternating Least-Squares (ALS) algorithm where we seek to find the filter such that the filtered sensitivities are close to being linearly transformable to an orthonormal basis of the human visual system sensitivity (e.g., a linear combination of the XYZ CMFs which are orthonormal). In previous work [

17], we have shown how the ALS approach can be modified—using Quadratic Programming (QP) [

38]—to incorporate smoothness and transmittance constraints.

While the QP solution is not the focus of this paper, we will include, for interest, an example of the constrained optimization in the next section. We refer the reader to Reference [

17] where details of the constrained ALS formulation are presented.

5. Conclusions

In this paper, we presented a unifying filter design method based on the underlying relation between the Luther condition and the Vora-Value. Mathematically, we prove that for the filter design problem, these two approaches can be well unified by using the orthonormal basis (a special basis set linearly transformed from the CMFs). That is, the optimal filter targeting at these two objectives can be simultaneously achieved. Hence, we can find an optimized Luther-filter that in the least-squares sense, also optimizes the Vora-Value. We have used three algorithms to solve for the best filter solution, that is, alternating least-squares (ALS), gradient ascent and Newton’s method; the latter of which has a faster convergence speed. We also find that the ALS approach has competitive performance compared to Newton’s method but has a much simpler formulation.

Experiments validate our method. For all cameras tested we find the Vora-Value is significantly increased compared to the cameras without filtering. In the color measurement test, we demonstrate that by using our optimized filters, we find the filtered sensitivities of digital cameras improve significantly in color accuracy compared to the prior arts.