Physiological Sensors Based Emotion Recognition While Experiencing Tactile Enhanced Multimedia

Abstract

1. Introduction

- We present a method for emotion recognition in response to tactile enhanced multimedia (TEM) that uses multimodal physiological signals (EEG, GSR, and PPG) acquired with wearable sensors.

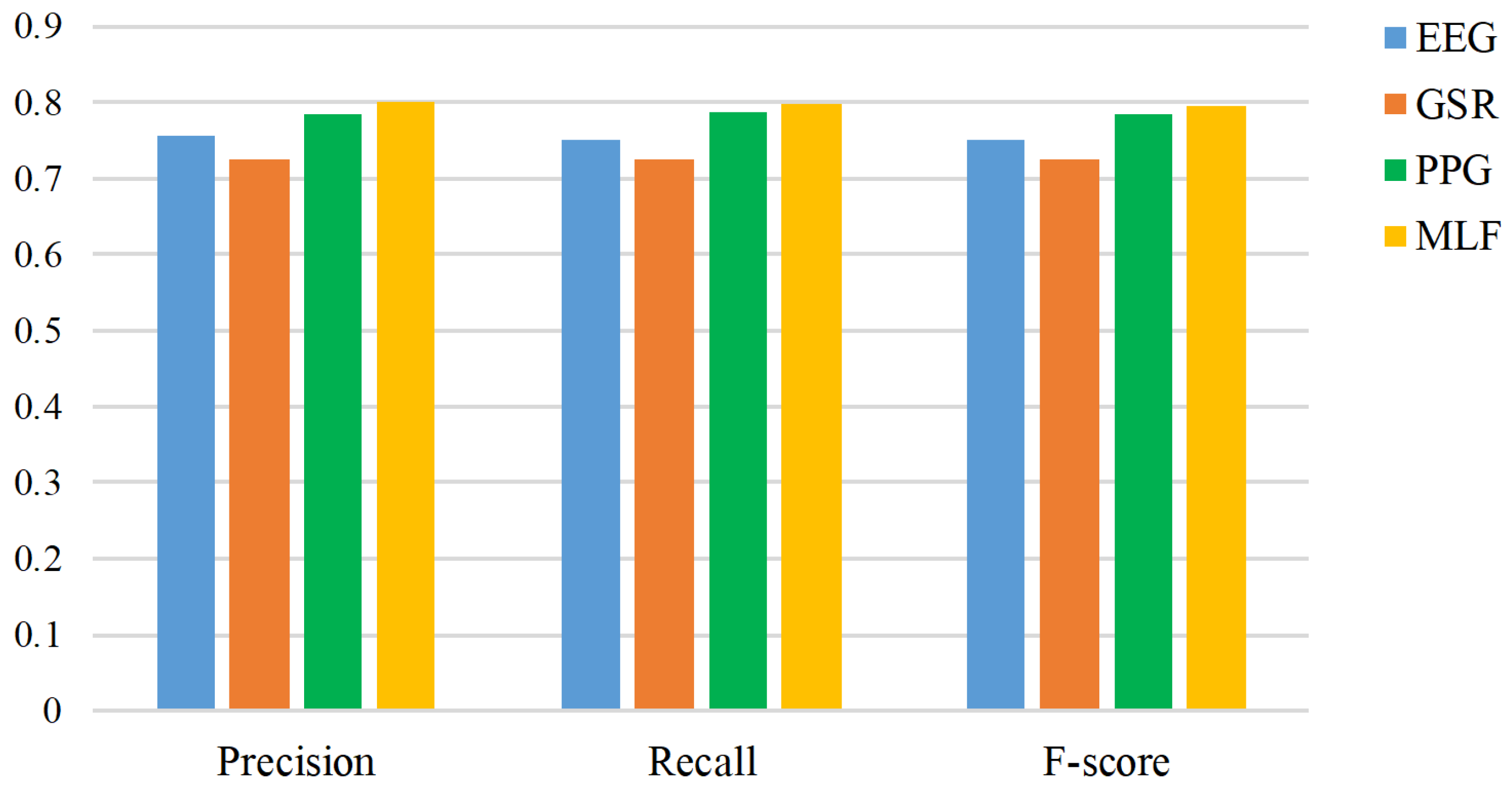

- Our results show that a multimodal fusion strategy for emotion recognition in response to TEM outperforms EEG, GSR, or PPG used individually (79.76% accuracy for the fused modalities versus 75.00%, 72.61%, and 78.57%, respectively).

2. Related Work

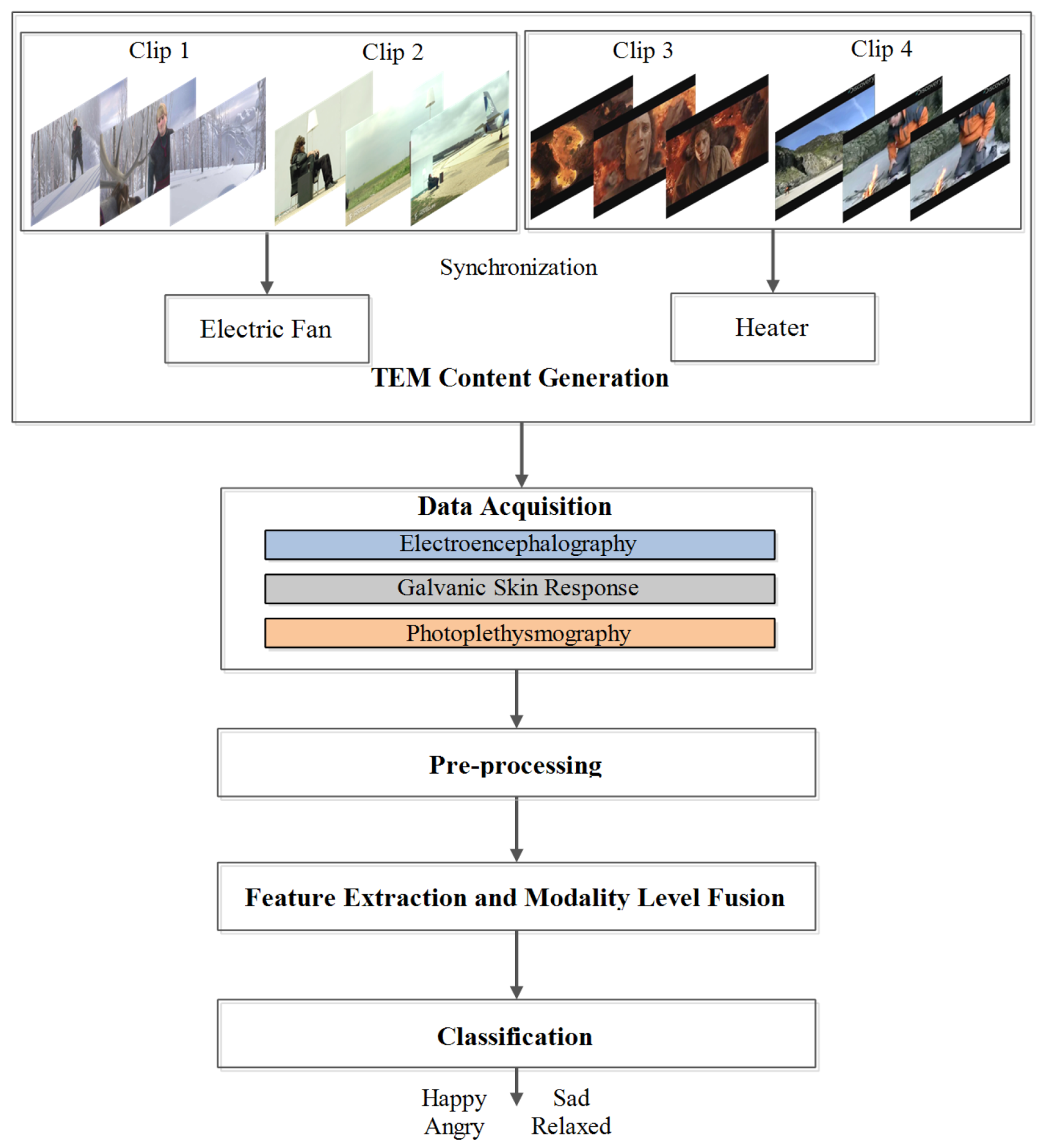

3. Proposed Methodology

3.1. TEM Content Generation

3.2. Data Acquisition

3.2.1. Participants

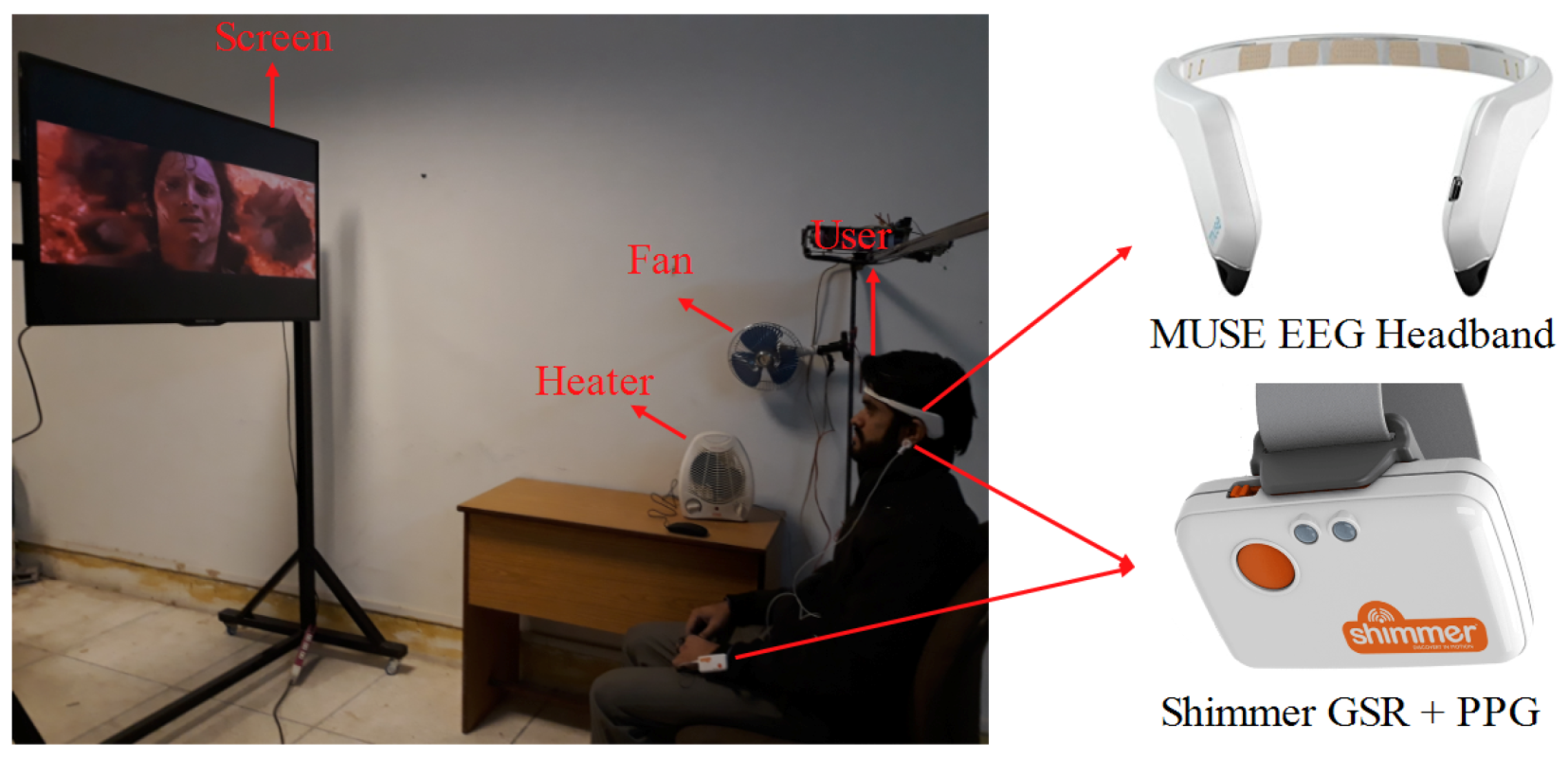

3.2.2. Apparatus

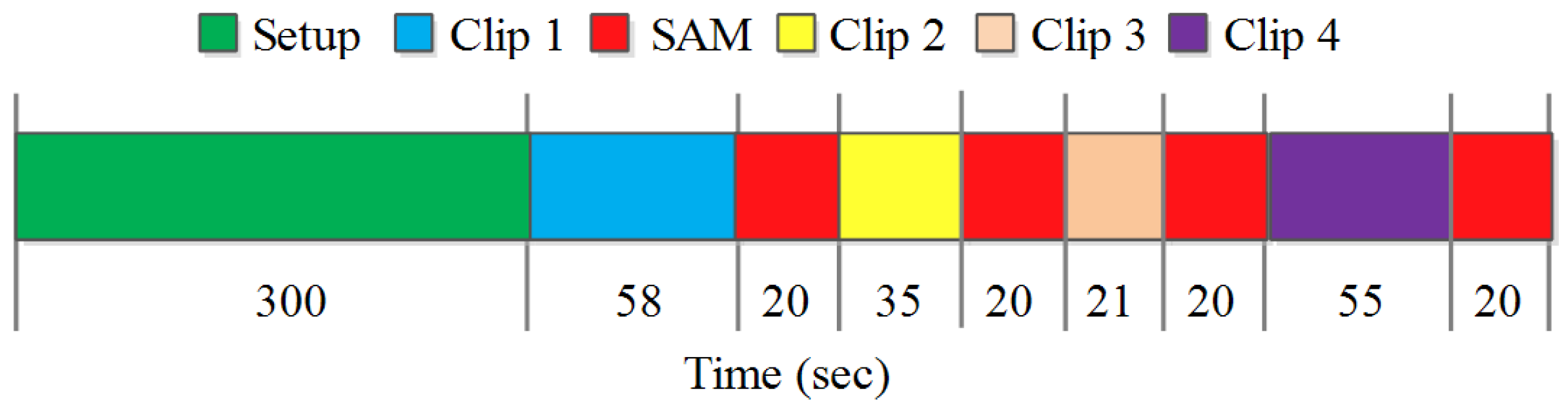

3.2.3. Experimental Procedure

3.3. Pre-Processing

3.4. Feature Extraction and Modality Level Fusion

3.5. Classification

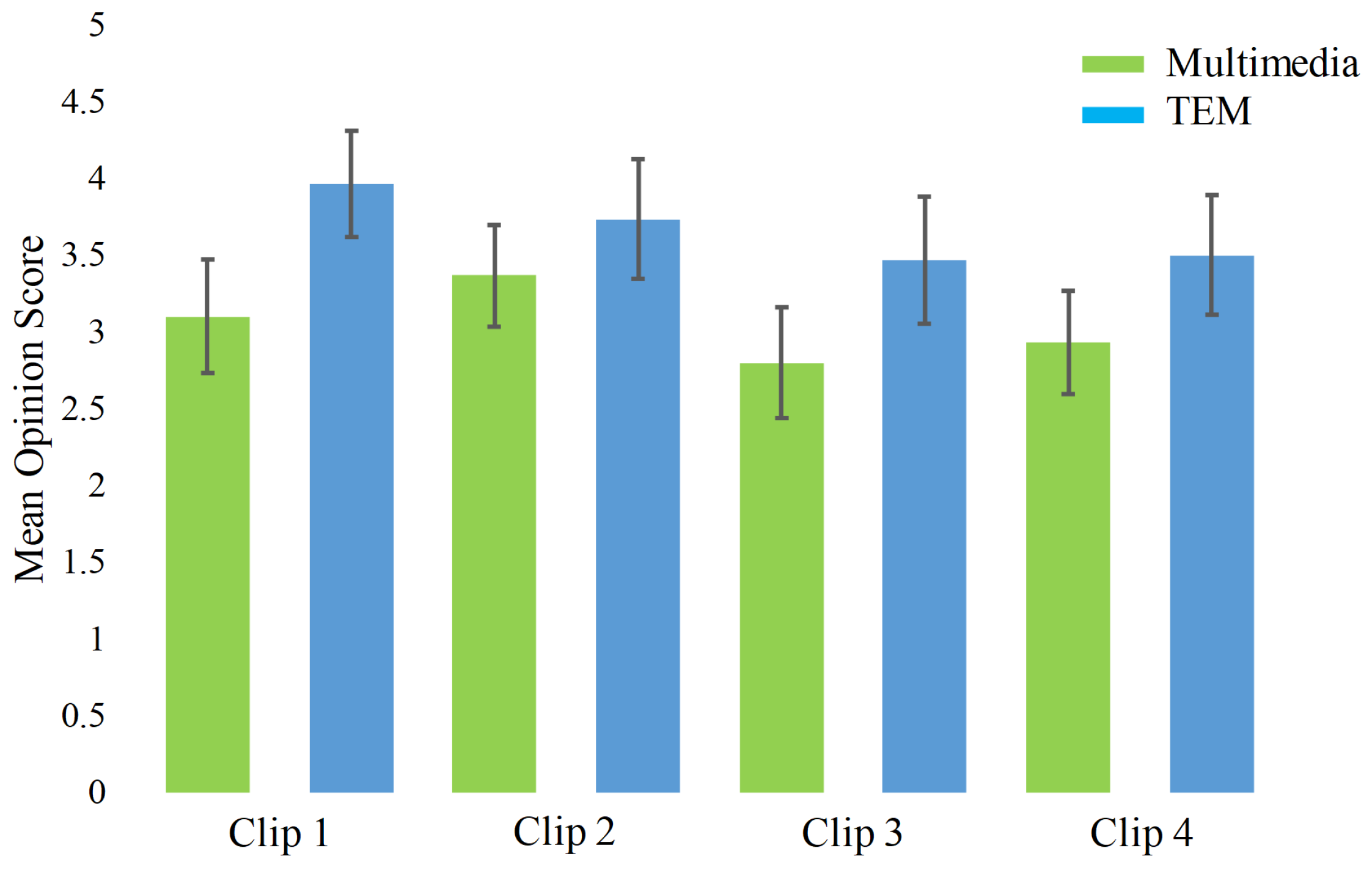

4. Experimental Results and Discussion

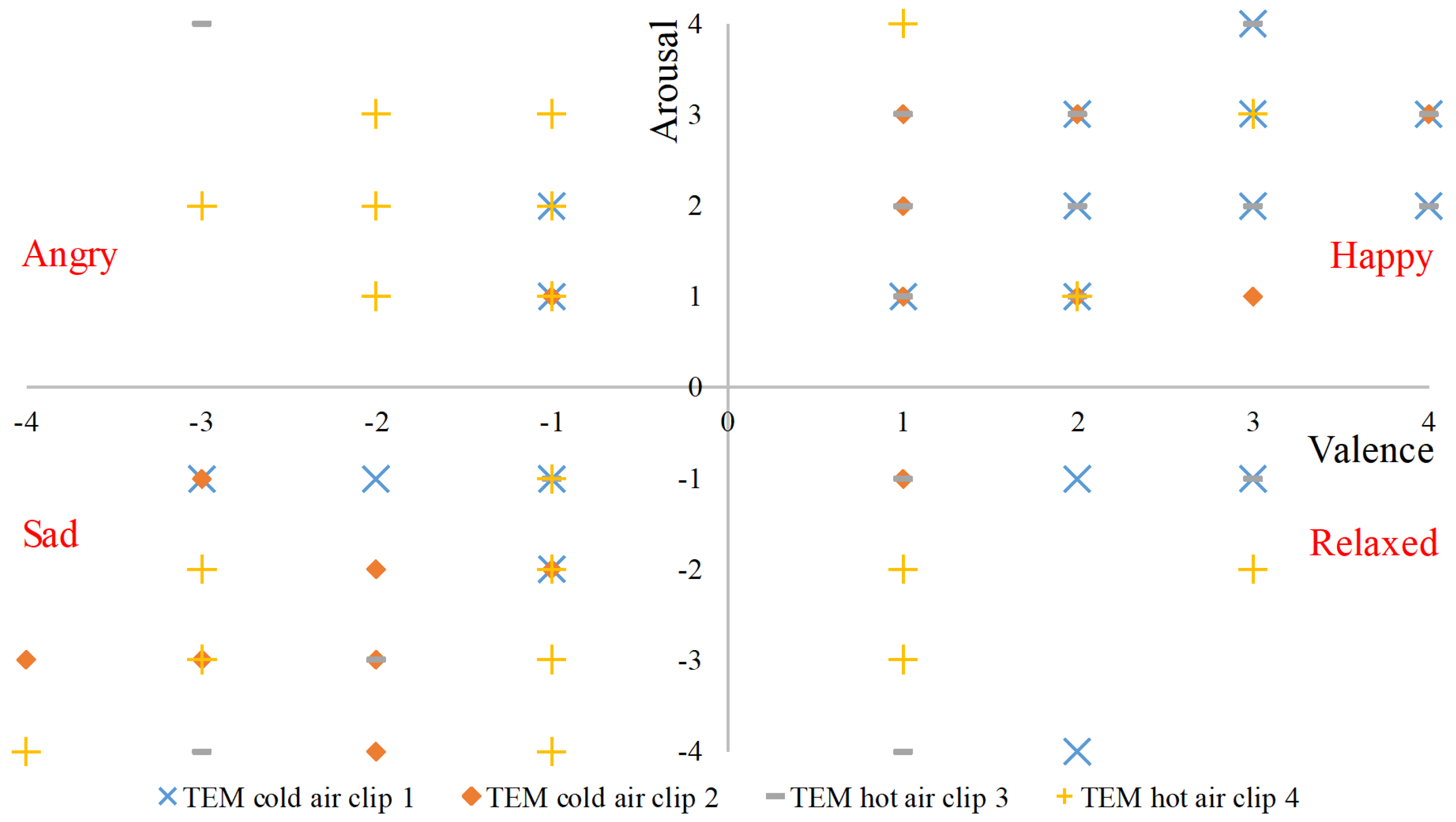

4.1. Data Labeling

4.2. Performance Evaluation

4.3. Discussion

5. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

- Ghinea, G.; Timmerer, C.; Lin, W.; Gulliver, S.R. Mulsemedia: State of the art, perspectives, and challenges. ACM Trans. Multimed. Comput. Commun. Appl. (TOMM) 2014, 11, 17.

- Covaci, A.; Zou, L.; Tal, I.; Muntean, G.M.; Ghinea, G. Is multimedia multisensorial? - A review of mulsemedia systems. ACM Comput. Surv. (CSUR) 2019, 51, 91.

- Saleme, E.B.; Covaci, A.; Mesfin, G.; Santos, C.A.; Ghinea, G. Mulsemedia DIY: A survey of devices and a tutorial for building your own mulsemedia environment. ACM Comput. Surv. (CSUR) 2019, 52, 1–29.

- Saleme, E.B.; Santos, C.A.; Ghinea, G. A mulsemedia framework for delivering sensory effects to heterogeneous systems. Multimed. Syst. 2019, 25, 421–447.

- Picard, R.W. Affective Computing; MIT Press: Cambridge, MA, USA, 1997; Volume 252.

- Ekman, P.; Friesen, W.V. Unmasking the Face: A Guide to Recognizing Emotions from Facial Clues; ISHK: Los Altos, CA, USA, 2003.

- Russell, J.A. A circumplex model of affect. J. Personal. Soc. Psychol. 1980, 39, 1161.

- Gunes, H.; Schuller, B.; Pantic, M.; Cowie, R. Emotion representation, analysis and synthesis in continuous space: A survey. In Proceedings of the Face and Gesture 2011, Santa Barbara, CA, USA, 21–25 March 2011; pp. 827–834.

- Bethel, C.L.; Salomon, K.; Murphy, R.R.; Burke, J.L. Survey of psychophysiology measurements applied to human-robot interaction. In Proceedings of the RO-MAN 2007 - The 16th IEEE International Symposium on Robot and Human Interactive Communication, Jeju, Korea, 26–29 August 2007; pp. 732–737.

- Dzedzickis, A.; Kaklauskas, A.; Bucinskas, V. Human Emotion Recognition: Review of Sensors and Methods. Sensors 2020, 20, 592.

- Dellaert, F.; Polzin, T.; Waibel, A. Recognizing emotion in speech. In Proceedings of the Fourth International Conference on Spoken Language Processing, ICSLP’96, Philadelphia, PA, USA, 3–6 October 1996; Volume 3, pp. 1970–1973.

- Mustaqeem; Kwon, S. A CNN-Assisted Enhanced Audio Signal Processing for Speech Emotion Recognition. Sensors 2020, 20, 183.

- Zhao, J.; Mao, X.; Chen, L. Speech emotion recognition using deep 1D & 2D CNN LSTM networks. Biomed. Signal Process. Control 2019, 47, 312–323.

- Kalsum, T.; Anwar, S.M.; Majid, M.; Khan, B.; Ali, S.M. Emotion recognition from facial expressions using hybrid feature descriptors. IET Image Process. 2018, 12, 1004–1012.

- Qayyum, H.; Majid, M.; Anwar, S.M.; Khan, B. Facial Expression Recognition Using Stationary Wavelet Transform Features. Math. Probl. Eng. 2017, 2017.

- Zhou, B.; Ghose, T.; Lukowicz, P. Expressure: Detect Expressions Related to Emotional and Cognitive Activities Using Forehead Textile Pressure Mechanomyography. Sensors 2020, 20, 730.

- Busso, C.; Deng, Z.; Yildirim, S.; Bulut, M.; Lee, C.M.; Kazemzadeh, A.; Lee, S.; Neumann, U.; Narayanan, S. Analysis of emotion recognition using facial expressions, speech and multimodal information. In Proceedings of the 6th International Conference on Multimodal Interfaces, State College, PA, USA, 13–15 October 2004; pp. 205–211.

- Ranganathan, H.; Chakraborty, S.; Panchanathan, S. Multimodal emotion recognition using deep learning architectures. In Proceedings of the 2016 IEEE Winter Conference on Applications of Computer Vision (WACV), Lake Placid, NY, USA, 7–10 March 2016; pp. 1–9.

- Raheel, A.; Majid, M.; Anwar, S.M. Facial Expression Recognition based on Electroencephalography. In Proceedings of the 2019 2nd International Conference on Computing, Mathematics and Engineering Technologies (iCoMET), Sukkur, Pakistan, 30–31 January 2019; pp. 1–5.

- Qayyum, H.; Majid, M.; ul Haq, E.; Anwar, S.M. Generation of personalized video summaries by detecting viewer’s emotion using electroencephalography. J. Vis. Commun. Image Represent. 2019, 65, 102672.

- McCraty, R. Heart-brain neurodynamics: The making of emotions. In Media Models to Foster Collective Human Coherence in the PSYCHecology; IGI Global: Hershey, PA, USA, 2019; pp. 191–219.

- Shu, L.; Xie, J.; Yang, M.; Li, Z.; Li, Z.; Liao, D.; Xu, X.; Yang, X. A review of emotion recognition using physiological signals. Sensors 2018, 18, 2074.

- Chen, X.; Pan, Z.; Wang, P.; Yang, X.; Liu, P.; You, X.; Yuan, J. The integration of facial and vocal cues during emotional change perception: EEG markers. Soc. Cogn. Affect. Neurosci. 2016, 11, 1152–1161.

- Shi, Y.; Ruiz, N.; Taib, R.; Choi, E.; Chen, F. Galvanic skin response (GSR) as an index of cognitive load. In Proceedings of the CHI’07 Extended Abstracts on Human Factors in Computing Systems, San Jose, CA, USA, 28 April–3 May 2007; pp. 2651–2656.

- Lee, C.; Yoo, S.; Park, Y.; Kim, N.; Jeong, K.; Lee, B. Using neural network to recognize human emotions from heart rate variability and skin resistance. In Proceedings of the IEEE-EMBS 2005, 27th Annual International Conference of the Engineering in Medicine and Biology Society, Shanghai, China, 17–18 January 2006; pp. 5523–5525.

- Mather, M.; Thayer, J.F. How heart rate variability affects emotion regulation brain networks. Curr. Opin. Behav. Sci. 2018, 19, 98–104.

- Yamuza, M.T.V.; Bolea, J.; Orini, M.; Laguna, P.; Orrite, C.; Vallverdu, M.; Bailon, R. Human emotion characterization by heart rate variability analysis guided by respiration. IEEE J. Biomed. Health Inform. 2019, 23, 2446–2454.

- Murray, N.; Lee, B.; Qiao, Y.; Miro-Muntean, G. The influence of human factors on olfaction based mulsemedia quality of experience. In Proceedings of the 2016 Eighth International Conference on Quality of Multimedia Experience (QoMEX), Lisbon, Portugal, 6–8 June 2016; pp. 1–6.

- Yuan, Z.; Bi, T.; Muntean, G.M.; Ghinea, G. Perceived synchronization of mulsemedia services. IEEE Trans. Multimed. 2015, 17, 957–966.

- Covaci, A.; Trestian, R.; Saleme, E.B.; Comsa, I.S.; Assres, G.; Santos, C.A.S.; Ghinea, G. 360 Mulsemedia: A Way to Improve Subjective QoE in 360 Videos. In Proceedings of the 27th ACM International Conference on Multimedia, Association for Computing Machinery, Nice, France, 21–25 October 2019; pp. 2378–2386.

- Keighrey, C.; Flynn, R.; Murray, S.; Murray, N. A QoE evaluation of immersive augmented and virtual reality speech & language assessment applications. In Proceedings of the 2017 Ninth International Conference on Quality of Multimedia Experience (QoMEX), Erfurt, Germany, 31 May–2 June 2017; pp. 1–6.

- Egan, D.; Brennan, S.; Barrett, J.; Qiao, Y.; Timmerer, C.; Murray, N. An evaluation of Heart Rate and ElectroDermal Activity as an objective QoE evaluation method for immersive virtual reality environments. In Proceedings of the 2016 Eighth International Conference on Quality of Multimedia Experience (QoMEX), Lisbon, Portugal, 6–8 June 2016; pp. 1–6.

- Mesfin, G.; Hussain, N.; Kani-Zabihi, E.; Covaci, A.; Saleme, E.B.; Ghinea, G. QoE of cross-modally mapped Mulsemedia: An assessment using eye gaze and heart rate. Multimed. Tools Appl. 2020, 79, 7987–8009.

- Covaci, A.; Saleme, E.B.; Mesfin, G.A.; Hussain, N.; Kani-Zabihi, E.; Ghinea, G. How do we experience crossmodal correspondent mulsemedia content? IEEE Trans. Multimed. 2020, 22, 1249–1258.

- Raheel, A.; Anwar, S.M.; Majid, M. Emotion recognition in response to traditional and tactile enhanced multimedia using electroencephalography. Multimed. Tools Appl. 2019, 78, 13971–13985.

- Raheel, A.; Majid, M.; Anwar, S.M.; Bagci, U. Emotion Classification in Response to Tactile Enhanced Multimedia using Frequency Domain Features of Brain Signals. In Proceedings of the 2019 IEEE 41st Annual International Conference of the Engineering in Medicine and Biology Society (EMBC), Berlin, Germany, 23–27 July 2019; pp. 1–4.

- Bhatti, A.M.; Majid, M.; Anwar, S.M.; Khan, B. Human emotion recognition and analysis in response to audio music using brain signals. Comput. Hum. Behav. 2016, 65, 267–275.

- Kim, M.; Cheon, S.; Kang, Y. Use of Electroencephalography (EEG) for the Analysis of Emotional Perception and Fear to Nightscapes. Sustainability 2019, 11, 233.

- Becerra, M.; Londoño-Delgado, E.; Pelaez-Becerra, S.; Serna-Guarín, L.; Castro-Ospina, A.; Marin-Castrillón, D.; Peluffo-Ordóñez, D. Odor Pleasantness Classification from Electroencephalographic Signals and Emotional States. In Proceedings of the Colombian Conference on Computing, Cartagena, Colombia, 26–28 September 2018; Springer: Berlin/Heidelberg, Germany, 2018; pp. 128–138.

- Singh, H.; Bauer, M.; Chowanski, W.; Sui, Y.; Atkinson, D.; Baurley, S.; Fry, M.; Evans, J.; Bianchi-Berthouze, N. The brain’s response to pleasant touch: An EEG investigation of tactile caressing. Front. Hum. Neurosci. 2014, 8, 893.

- Udovičić, G.; Ðerek, J.; Russo, M.; Sikora, M. Wearable emotion recognition system based on GSR and PPG signals. In Proceedings of the 2nd International Workshop on Multimedia for Personal Health and Health Care, Mountain View, CA, USA, 23–27 October 2017; pp. 53–59.

- Koelstra, S.; Muhl, C.; Soleymani, M.; Lee, J.S.; Yazdani, A.; Ebrahimi, T.; Pun, T.; Nijholt, A.; Patras, I. DEAP: A database for emotion analysis using physiological signals. IEEE Trans. Affect. Comput. 2011, 3, 18–31.

- Soleymani, M.; Pantic, M.; Pun, T. Multimodal emotion recognition in response to videos. IEEE Trans. Affect. Comput. 2012, 3, 211–223.

- Wen, W.; Liu, G.; Cheng, N.; Wei, J.; Shangguan, P.; Huang, W. Emotion recognition based on multi-variant correlation of physiological signals. IEEE Trans. Affect. Comput. 2014, 5, 126–140.

- Mohammadi, Z.; Frounchi, J.; Amiri, M. Wavelet-based emotion recognition system using EEG signal. Neural Comput. Appl. 2017, 28, 1985–1990.

- Liu, Y.J.; Yu, M.; Zhao, G.; Song, J.; Ge, Y.; Shi, Y. Real-time movie-induced discrete emotion recognition from EEG signals. IEEE Trans. Affect. Comput. 2018, 9, 550–562.

- Albraikan, A.; Tobón, D.P.; El Saddik, A. Toward user-independent emotion recognition using physiological signals. IEEE Sens. J. 2018, 19, 8402–8412.

- Huang, X.; Kortelainen, J.; Zhao, G.; Li, X.; Moilanen, A.; Seppänen, T.; Pietikäinen, M. Multi-modal emotion analysis from facial expressions and electroencephalogram. Comput. Vis. Image Underst. 2016, 147, 114–124.

- Chai, X.; Wang, Q.; Zhao, Y.; Li, Y.; Liu, D.; Liu, X.; Bai, O. A fast, efficient domain adaptation technique for cross-domain electroencephalography (EEG)-based emotion recognition. Sensors 2017, 17, 1014.

- Liu, Y.H.; Wu, C.T.; Cheng, W.T.; Hsiao, Y.T.; Chen, P.M.; Teng, J.T. Emotion recognition from single-trial EEG based on kernel Fisher’s emotion pattern and imbalanced quasiconformal kernel support vector machine. Sensors 2014, 14, 13361–13388.

- Petrantonakis, P.C.; Hadjileontiadis, L.J. A novel emotion elicitation index using frontal brain asymmetry for enhanced EEG-based emotion recognition. IEEE Trans. Inf. Technol. Biomed. 2011, 15, 737–746.

- Zhang, J.; Yin, Z.; Chen, P.; Nichele, S. Emotion recognition using multi-modal data and machine learning techniques: A tutorial and review. Inf. Fusion 2020, 59, 103–126.

- Zhang, X.; Xu, C.; Xue, W.; Hu, J.; He, Y.; Gao, M. Emotion recognition based on multichannel physiological signals with comprehensive nonlinear processing. Sensors 2018, 18, 3886.

- Alonso-Martin, F.; Malfaz, M.; Sequeira, J.; Gorostiza, J.F.; Salichs, M.A. A multimodal emotion detection system during human–robot interaction. Sensors 2013, 13, 15549–15581.

- Kim, K.H.; Bang, S.W.; Kim, S.R. Emotion recognition system using short-term monitoring of physiological signals. Med. Biol. Eng. Comput. 2004, 42, 419–427.

- Koelstra, S.; Yazdani, A.; Soleymani, M.; Mühl, C.; Lee, J.S.; Nijholt, A.; Pun, T.; Ebrahimi, T.; Patras, I. Single trial classification of EEG and peripheral physiological signals for recognition of emotions induced by music videos. In Proceedings of the International Conference on Brain Informatics, Toronto, ON, Canada, 28–30 August 2010; Springer: Berlin/Heidelberg, Germany, 2010; pp. 89–100.

- Kanjo, E.; Younis, E.M.; Ang, C.S. Deep learning analysis of mobile physiological, environmental and location sensor data for emotion detection. Inf. Fusion 2019, 49, 46–56.

- Kim, J.; André, E. Emotion recognition based on physiological changes in music listening. IEEE Trans. Pattern Anal. Mach. Intell. 2008, 30, 2067–2083.

- Ayata, D.; Yaslan, Y.; Kamasak, M.E. Emotion based music recommendation system using wearable physiological sensors. IEEE Trans. Consum. Electron. 2018, 64, 196–203.

- Chang, C.Y.; Tsai, J.S.; Wang, C.J.; Chung, P.C. Emotion recognition with consideration of facial expression and physiological signals. In Proceedings of the 2009 IEEE Symposium on Computational Intelligence in Bioinformatics and Computational Biology, Nashville, TN, USA, 30 March–2 April 2009; pp. 278–283.

- Khalili, Z.; Moradi, M. Emotion detection using brain and peripheral signals. In Proceedings of the 2008 Cairo International Biomedical Engineering Conference, Cairo, Egypt, 18–20 December 2008; pp. 1–4.

- Soleymani, M.; Lichtenauer, J.; Pun, T.; Pantic, M. A multimodal database for affect recognition and implicit tagging. IEEE Trans. Affect. Comput. 2011, 3, 42–55.

- Abadi, M.K.; Subramanian, R.; Kia, S.M.; Avesani, P.; Patras, I.; Sebe, N. DECAF: MEG-based multimodal database for decoding affective physiological responses. IEEE Trans. Affect. Comput. 2015, 6, 209–222.

- Subramanian, R.; Wache, J.; Abadi, M.K.; Vieriu, R.L.; Winkler, S.; Sebe, N. ASCERTAIN: Emotion and personality recognition using commercial sensors. IEEE Trans. Affect. Comput. 2016, 9, 147–160.

- Correa, J.A.M.; Abadi, M.K.; Sebe, N.; Patras, I. Amigos: A dataset for affect, personality and mood research on individuals and groups. IEEE Trans. Affect. Comput. 2018.

- Song, T.; Zheng, W.; Lu, C.; Zong, Y.; Zhang, X.; Cui, Z. MPED: A multi-modal physiological emotion database for discrete emotion recognition. IEEE Access 2019, 7, 12177–12191.

- Santamaria-Granados, L.; Munoz-Organero, M.; Ramirez-Gonzalez, G.; Abdulhay, E.; Arunkumar, N. Using deep convolutional neural network for emotion detection on a physiological signals dataset (AMIGOS). IEEE Access 2018, 7, 57–67.

- Martínez-Rodrigo, A.; Zangróniz, R.; Pastor, J.M.; Latorre, J.M.; Fernández-Caballero, A. Emotion detection in ageing adults from physiological sensors. In Ambient Intelligence-Software and Applications; Springer: Berlin/Heidelberg, Germany, 2015; pp. 253–261.

- Zhuang, N.; Zeng, Y.; Yang, K.; Zhang, C.; Tong, L.; Yan, B. Investigating patterns for self-induced emotion recognition from EEG signals. Sensors 2018, 18, 841.

- Dissanayake, T.; Rajapaksha, Y.; Ragel, R.; Nawinne, I. An Ensemble Learning Approach for Electrocardiogram Sensor Based Human Emotion Recognition. Sensors 2019, 19, 4495.

- Athavipach, C.; Pan-ngum, S.; Israsena, P. A Wearable In-Ear EEG Device for Emotion Monitoring. Sensors 2019, 19, 4014.

- Alghowinem, S.; Goecke, R.; Wagner, M.; Alwabil, A. Evaluating and Validating Emotion Elicitation Using English and Arabic Movie Clips on a Saudi Sample. Sensors 2019, 19, 2218.

- Chen, D.W.; Miao, R.; Yang, W.Q.; Liang, Y.; Chen, H.H.; Huang, L.; Deng, C.J.; Han, N. A feature extraction method based on differential entropy and linear discriminant analysis for emotion recognition. Sensors 2019, 19, 1631.

- Alazrai, R.; Homoud, R.; Alwanni, H.; Daoud, M.I. EEG-based emotion recognition using quadratic time-frequency distribution. Sensors 2018, 18, 2739.

- Lee, K.W.; Yoon, H.S.; Song, J.M.; Park, K.R. Convolutional neural network-based classification of driver’s emotion during aggressive and smooth driving using multi-modal camera sensors. Sensors 2018, 18, 957.

- Goshvarpour, A.; Goshvarpour, A. The potential of photoplethysmogram and galvanic skin response in emotion recognition using nonlinear features. Phys. Eng. Sci. Med. 2020, 43, 119–134.

- Seo, J.; Laine, T.H.; Sohn, K.A. An Exploration of Machine Learning Methods for Robust Boredom Classification Using EEG and GSR Data. Sensors 2019, 19, 4561.

- Lee, J.; Yoo, S.K. Design of user-customized negative emotion classifier based on feature selection using physiological signal sensors. Sensors 2018, 18, 4253.

- Zhang, J.; Chen, M.; Zhao, S.; Hu, S.; Shi, Z.; Cao, Y. ReliefF-based EEG sensor selection methods for emotion recognition. Sensors 2016, 16, 1558.

- Shu, L.; Yu, Y.; Chen, W.; Hua, H.; Li, Q.; Jin, J.; Xu, X. Wearable Emotion Recognition Using Heart Rate Data from a Smart Bracelet. Sensors 2020, 20, 718.

- Kwon, Y.H.; Shin, S.B.; Kim, S.D. Electroencephalography based fusion two-dimensional (2D)-convolution neural networks (CNN) model for emotion recognition system. Sensors 2018, 18, 1383.

- Zheng, W.L.; Lu, B.L. Investigating critical frequency bands and channels for EEG-based emotion recognition with deep neural networks. IEEE Trans. Auton. Ment. Dev. 2015, 7, 162–175.

- Zhang, T.; Zheng, W.; Cui, Z.; Zong, Y.; Li, Y. Spatial–temporal recurrent neural network for emotion recognition. IEEE Trans. Cybern. 2019, 49, 839–847.

- Al Machot, F.; Elmachot, A.; Ali, M.; Al Machot, E.; Kyamakya, K. A deep-learning model for subject-independent human emotion recognition using electrodermal activity sensors. Sensors 2019, 19, 1659.

- Oh, S.; Lee, J.Y.; Kim, D.K. The Design of CNN Architectures for Optimal Six Basic Emotion Classification Using Multiple Physiological Signals. Sensors 2020, 20, 866.

- Ali, M.; Al Machot, F.; Haj Mosa, A.; Jdeed, M.; Al Machot, E.; Kyamakya, K. A globally generalized emotion recognition system involving different physiological signals. Sensors 2018, 18, 1905.

- Yang, H.; Han, J.; Min, K. A Multi-Column CNN Model for Emotion Recognition from EEG Signals. Sensors 2019, 19, 4736.

- Chao, H.; Dong, L.; Liu, Y.; Lu, B. Emotion recognition from multiband EEG signals using CapsNet. Sensors 2019, 19, 2212.

- Poria, S.; Majumder, N.; Hazarika, D.; Cambria, E.; Gelbukh, A.; Hussain, A. Multimodal sentiment analysis: Addressing key issues and setting up the baselines. IEEE Intell. Syst. 2018, 33, 17–25.

- Raheel, A.; Majid, M.; Anwar, S.M. A study on the effects of traditional and olfaction enhanced multimedia on pleasantness classification based on brain activity analysis. Comput. Biol. Med. 2019, 103469.

- Mesfin, G.; Hussain, N.; Covaci, A.; Ghinea, G. Using Eye Tracking and Heart-Rate Activity to Examine Crossmodal Correspondences QoE in Mulsemedia. ACM Trans. Multimed. Comput. Commun. Appl. (TOMM) 2019, 15, 34.

- Bălan, O.; Moise, G.; Moldoveanu, A.; Leordeanu, M.; Moldoveanu, F. Fear level classification based on emotional dimensions and machine learning techniques. Sensors 2019, 19, 1738.

- Bradley, M.M.; Lang, P.J. Measuring emotion: The self-assessment manikin and the semantic differential. J. Behav. Ther. Exp. Psychiatry 1994, 25, 49–59.

- Kaur, B.; Singh, D.; Roy, P.P. A novel framework of EEG-based user identification by analyzing music-listening behavior. Multimed. Tools Appl. 2017, 76, 25581–25602.

- Davidson, R.J. Affective neuroscience and psychophysiology: Toward a synthesis. Psychophysiology 2003, 40, 655–665.

- Sutton, S.K.; Davidson, R.J. Prefrontal brain asymmetry: A biological substrate of the behavioral approach and inhibition systems. Psychol. Sci. 1997, 8, 204–210.

- Alarcao, S.M.; Fonseca, M.J. Emotions recognition using EEG signals: A survey. IEEE Trans. Affect. Comput. 2017, 10, 374–393.

- Hassan, M.M.; Alam, M.G.R.; Uddin, M.Z.; Huda, S.; Almogren, A.; Fortino, G. Human emotion recognition using deep belief network architecture. Inf. Fusion 2019, 51, 10–18.

| Reference | Stimuli | Senses Engaged | Sensors Used | Emotions Classified | Accuracy |
|---|---|---|---|---|---|
| [37] | Music | Auditory | EEG | Happy, sad, love, anger | |
| [58] | Music | Auditory | EMG, ECG, GSR, respiration | Low/High valence-arousal | |
| [60] | Images | Vision | GSR, ECG, temperature | Love, joy, surprise, fear | |
| [38] | Images | Vision | EEG | Fear | – |
| [61] | Images | Vision | EEG, peripheral signals | Positively excited, negatively excited, calm | |
| [41] | Images | Vision | GSR, PPG | Low/High valence-arousal | |
| [51] | Images | Vision | EEG | Low/High valence-arousal | |
| [71] | Images | Vision | EEG | Low/High valence-arousal | |
| [39] | Odors | Olfaction | EEG | Pleasant, unpleasant | |
| [40] | Textile Fabrics | Tactile | EEG | Pleasant, unpleasant | |
| [42] | Videos | Vision, Auditory | EEG, GSR, EMG, EOG, BVP | Low/High valence-arousal | |
| [63] | Videos | Vision, Auditory | EEG, ECG, EOG, MEG | Low/High valence-arousal | |
| [64] | Videos | Vision, Auditory | EEG, ECG, GSR | Low/High valence-arousal | |
| [66] | Videos | Vision, Auditory | EEG, ECG, GSR, respiration | Joy, funny, anger, fear, disgust, neutrality | |
| [43] | Videos | Vision, Auditory | EEG, Eye Tracking | Pleasant, unpleasant, neutral, calm, medium, activated | |
| [85] | Videos | Vision, Auditory | HRV, respiration | Happiness, fear, surprise, anger, sadness, disgust | |
| [77] | Videos | Vision, Auditory | EEG, GSR | Boredom | |
| [78] | Videos | Vision, Auditory | ECG, skin temperature, EDA | Negative emotion | |
| [53] | Videos | Vision, Auditory | ECG, EMG, GSR, PPG | Pleasure, fear, sadness, anger | |
| [86] | Videos | Vision, Auditory | ECG, skin temperature, EDA | Happiness, surprise, anger, disgust, sadness, fear | |
| [70] | Videos | Vision, Auditory | ECG | Joy, sadness, pleasure, anger, fear, neutral | |
| [72] | Videos | Vision, Auditory | EEG | Amusement, sadness, anger, fear, surprise, disgust | |
| [92] | Videos | Vision, Auditory | EEG | Low, medium, high fear | |
| [73] | Videos | Vision, Auditory | EEG | Positive, neutral, negative | |
| [74] | Videos | Vision, Auditory | EEG | Low/High valence-arousal | |
| [81] | Videos | Vision, Auditory | EEG, GSR | Low/High valence-arousal | |
| [69] | Videos | Vision, Auditory | EEG | Joy, neutrality, sadness, disgust, anger, fear | |
| [35] | Tactile enhanced multimedia | Vision, Auditory, Tactile | EEG | Happy, angry, sad, relaxed | |

| TEM Clip | Sensorial Effect | Clip Duration | Synchronization Timestamp | Duration of Sensorial Effect |
|---|---|---|---|---|
| Clip 1 | Cold air | 58 s | 00:19–00:59 | 40 s |
| Clip 2 | Cold air | 35 s | 00:03–00:30 | 27 s |
| Clip 3 | Hot air | 21 s | 00:12–00:28 | 16 s |
| Clip 4 | Hot air | 55 s | 00:30–00:55 | 25 s |
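The duration of each sensorial effect follows directly from its synchronization timestamps. The short sketch below reproduces that arithmetic for the four clips; the helper names `to_seconds` and `effect_duration` are hypothetical and are not taken from the paper.

```python
def to_seconds(stamp: str) -> int:
    """Convert an mm:ss timestamp such as '00:19' into seconds."""
    minutes, seconds = stamp.split(":")
    return int(minutes) * 60 + int(seconds)


def effect_duration(start: str, end: str) -> int:
    """Length in seconds of the window during which the effect is active."""
    return to_seconds(end) - to_seconds(start)


# (start, end) synchronization timestamps copied from the table above.
windows = {
    "Clip 1": ("00:19", "00:59"),
    "Clip 2": ("00:03", "00:30"),
    "Clip 3": ("00:12", "00:28"),
    "Clip 4": ("00:30", "00:55"),
}

durations = {clip: effect_duration(*window) for clip, window in windows.items()}
for clip, seconds in durations.items():
    print(f"{clip}: {seconds} s")
```

The computed values agree with the last column of the table (40 s, 27 s, 16 s, and 25 s).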

| Sensor | Feature Description |
|---|---|
| EEG | […], where […] and […] represent the power on the right and left hemisphere, respectively, and b represents the EEG band. |
| EEG | […], where […] and […] are the means of […] and […], respectively. |
| GSR | […], where X is the row vector consisting of GSR data and […] is the mean value. |
| GSR | […], where […] is the probability. |
| GSR | […], where […] is the fourth moment of the GSR data. |
| GSR | […], where […] is the third moment of the GSR data. |
| PPG | HR = number of beats in a minute. |
| PPG | HRV = time interval between heart beats. |
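The formulas in the feature table are not recoverable from this text, so the following is only a sketch under stated assumptions. It uses textbook definitions for the GSR statistics that the descriptions point to (a variance around the mean, a Shannon entropy over estimated probabilities, and kurtosis and skewness from the fourth and third central moments), derives HR and an inter-beat interval from PPG beat times, and fuses the modalities by simple concatenation of feature vectors. The function names, the histogram bin count, the use of the mean inter-beat interval as the HRV value, and the placeholder EEG values are all assumptions, not the authors' implementation.

```python
import numpy as np


def gsr_features(x, bins=16):
    """Variance, Shannon entropy, kurtosis and skewness of a GSR segment.

    Textbook definitions are assumed; `bins` is a hypothetical choice for
    estimating the probabilities that enter the entropy.
    """
    x = np.asarray(x, dtype=float)
    centered = x - x.mean()
    variance = np.mean(centered ** 2)
    counts, _ = np.histogram(x, bins=bins)
    p = counts[counts > 0] / counts.sum()
    entropy = -np.sum(p * np.log2(p))
    kurtosis = np.mean(centered ** 4) / variance ** 2    # fourth moment
    skewness = np.mean(centered ** 3) / variance ** 1.5  # third moment
    return np.array([variance, entropy, kurtosis, skewness])


def ppg_features(beat_times_s):
    """Heart rate in beats per minute and mean inter-beat interval in seconds."""
    ibi = np.diff(np.asarray(beat_times_s, dtype=float))
    return np.array([60.0 / ibi.mean(), ibi.mean()])


def fuse_features(*modality_vectors):
    """Feature-level fusion assumed here: concatenate per-modality vectors."""
    return np.concatenate(modality_vectors)


# Toy inputs for illustration only; none of these values come from the study.
gsr = gsr_features([1, 2, 3, 4, 5], bins=5)
ppg = ppg_features(np.arange(11) * 0.8)  # one beat every 0.8 s
eeg = np.array([0.1, 0.2])               # placeholder EEG feature values
fused = fuse_features(eeg, gsr, ppg)
print(fused.shape)  # (8,)
```

Concatenation keeps each modality's features intact, so single-modality and pairwise configurations can be evaluated by passing fewer vectors to the same function.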

| Modality | Accuracy | MAE | RMSE | RAE | RRSE | Kappa |
|---|---|---|---|---|---|---|
| EEG | 75.00% | 0.13 | 0.35 | 40.13 | 84.73 | 0.62 |
| GSR | 72.61% | 0.14 | 0.35 | 42.68 | 86.15 | 0.59 |
| PPG | 78.57% | 0.11 | 0.31 | 33.13 | 75.18 | 0.68 |
| EEG+GSR | 69.04% | 0.13 | 0.28 | 39.30 | 69.54 | 0.57 |
| EEG+PPG | 72.61% | 0.13 | 0.32 | 37.97 | 79.25 | 0.60 |
| GSR+PPG | 75.00% | 0.12 | 0.30 | 35.83 | 72.93 | 0.63 |
| EEG+GSR+PPG | 79.76% | 0.11 | 0.31 | 33.23 | 76.06 | 0.69 |

(a) EEG

| a | b | c | d | Classified as | Sensitivity | Specificity |
|---|---|---|---|---|---|---|
| 6 | 1 | 2 | 0 | a = Relaxed | 66.7% | 94.0% |
| 0 | 15 | 6 | 1 | b = Sad | 68.2% | 91.9% |
| 4 | 2 | 33 | 1 | c = Happy | 82.5% | 80.0% |
| 1 | 2 | 1 | 9 | d = Angry | 69.2% | 97.2% |

(b) GSR

| a | b | c | d | Classified as | Sensitivity | Specificity |
|---|---|---|---|---|---|---|
| 7 | 1 | 2 | 1 | a = Relaxed | 77.8% | 94.7% |
| 0 | 18 | 5 | 0 | b = Sad | 81.8% | 91.9% |
| 2 | 3 | 29 | 5 | c = Happy | 72.5% | 77.3% |
| 0 | 0 | 4 | 7 | d = Angry | 53.8% | 94.4% |

(c) PPG

| a | b | c | d | Classified as | Sensitivity | Specificity |
|---|---|---|---|---|---|---|
| 7 | 0 | 4 | 0 | a = Relaxed | 77.8% | 94.7% |
| 0 | 17 | 1 | 4 | b = Sad | 77.3% | 91.9% |
| 2 | 2 | 35 | 2 | c = Happy | 87.5% | 86.4% |
| 0 | 3 | 0 | 7 | d = Angry | 53.8% | 95.8% |

(d) EEG+GSR+PPG

| a | b | c | d | Classified as | Sensitivity | Specificity |
|---|---|---|---|---|---|---|
| 7 | 0 | 1 | 0 | a = Relaxed | 77.8% | 98.7% |
| 1 | 16 | 3 | 2 | b = Sad | 72.7% | 90.3% |
| 1 | 6 | 35 | 2 | c = Happy | 87.5% | 79.5% |
| 0 | 0 | 1 | 9 | d = Angry | 69.2% | 98.6% |
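The accuracy, kappa, sensitivity, and specificity values can be derived from a confusion matrix. The sketch below does so for the EEG matrix in panel (a), using the standard definitions of these measures; it reads rows as the actual class and columns as the predicted class, which is an assumption chosen because it reproduces the sensitivities listed for EEG. The function names are hypothetical.

```python
import numpy as np

labels = ["Relaxed", "Sad", "Happy", "Angry"]

# EEG confusion matrix from panel (a). Assumption: rows = actual class,
# columns = predicted class.
cm_eeg = np.array([
    [6, 1, 2, 0],
    [0, 15, 6, 1],
    [4, 2, 33, 1],
    [1, 2, 1, 9],
])


def accuracy_and_kappa(cm):
    """Overall accuracy and Cohen's kappa from a square confusion matrix."""
    cm = np.asarray(cm, dtype=float)
    total = cm.sum()
    observed = np.trace(cm) / total
    # Chance agreement from the row and column marginals.
    expected = np.sum(cm.sum(axis=0) * cm.sum(axis=1)) / total ** 2
    return observed, (observed - expected) / (1.0 - expected)


def sensitivity_specificity(cm):
    """One-vs-rest sensitivity and specificity for every class."""
    cm = np.asarray(cm, dtype=float)
    tp = np.diag(cm)
    fn = cm.sum(axis=1) - tp
    fp = cm.sum(axis=0) - tp
    tn = cm.sum() - tp - fn - fp
    return tp / (tp + fn), tn / (tn + fp)


accuracy, kappa = accuracy_and_kappa(cm_eeg)
sensitivity, specificity = sensitivity_specificity(cm_eeg)
print(f"accuracy = {accuracy:.2%}, kappa = {kappa:.2f}")
for name, sens, spec in zip(labels, sensitivity, specificity):
    print(f"{name}: sensitivity = {sens:.1%}, specificity = {spec:.1%}")
```

Under this reading, the sketch gives 75.00% accuracy and a kappa of 0.62 for EEG, in line with the reported values, and it reproduces the listed sensitivities. The specificities it computes are close to, but not always identical with, the listed ones (for example, about 93.3% for Relaxed).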

| Method | Modality | Emotions | No. of TEM/Video Clips | No. of Users (F/M) | Accuracy |
|---|---|---|---|---|---|
| [91] | Eye gaze, Heart rate wrist band | Enjoyment, Perception | 6 (TEM) | 24 (9/15) | - |
| [35] | EEG | Happy, Angry, Sad, Relaxed | 2 (TEM) | 21 (10/11) | 63.41% |
| [36] | EEG | Happy, Angry, Sad, Relaxed | 2 (TEM) | 21 (10/11) | 76.19% |
| [85] | RSP and HRV | Happy, Angry, Sad, Fear, Surprise, Disgust | 6 (video) | 49 (19/30) | 94.02% |
| [98] | PPG, EMG, EDA | Happy, Sad, Disgust, Relaxed, Neutral | 40 (video) | 32 (16/16) | 89.53% |
| Proposed | EEG, GSR, PPG | Happy, Angry, Sad, Relaxed | 4 (video) | 21 (10/11) | 70.01% |
| Proposed | EEG | Happy, Angry, Sad, Relaxed | 4 (TEM) | 21 (10/11) | 75.00% |
| Proposed | EEG, GSR, PPG | Happy, Angry, Sad, Relaxed | 4 (TEM) | 21 (10/11) | 79.76% |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Raheel, A.; Majid, M.; Alnowami, M.; Anwar, S.M. Physiological Sensors Based Emotion Recognition While Experiencing Tactile Enhanced Multimedia. Sensors 2020, 20, 4037. https://doi.org/10.3390/s20144037