An Adaptive Exposure Fusion Method Using Fuzzy Logic and Multivariate Normal Conditional Random Fields

Abstract

1. Introduction

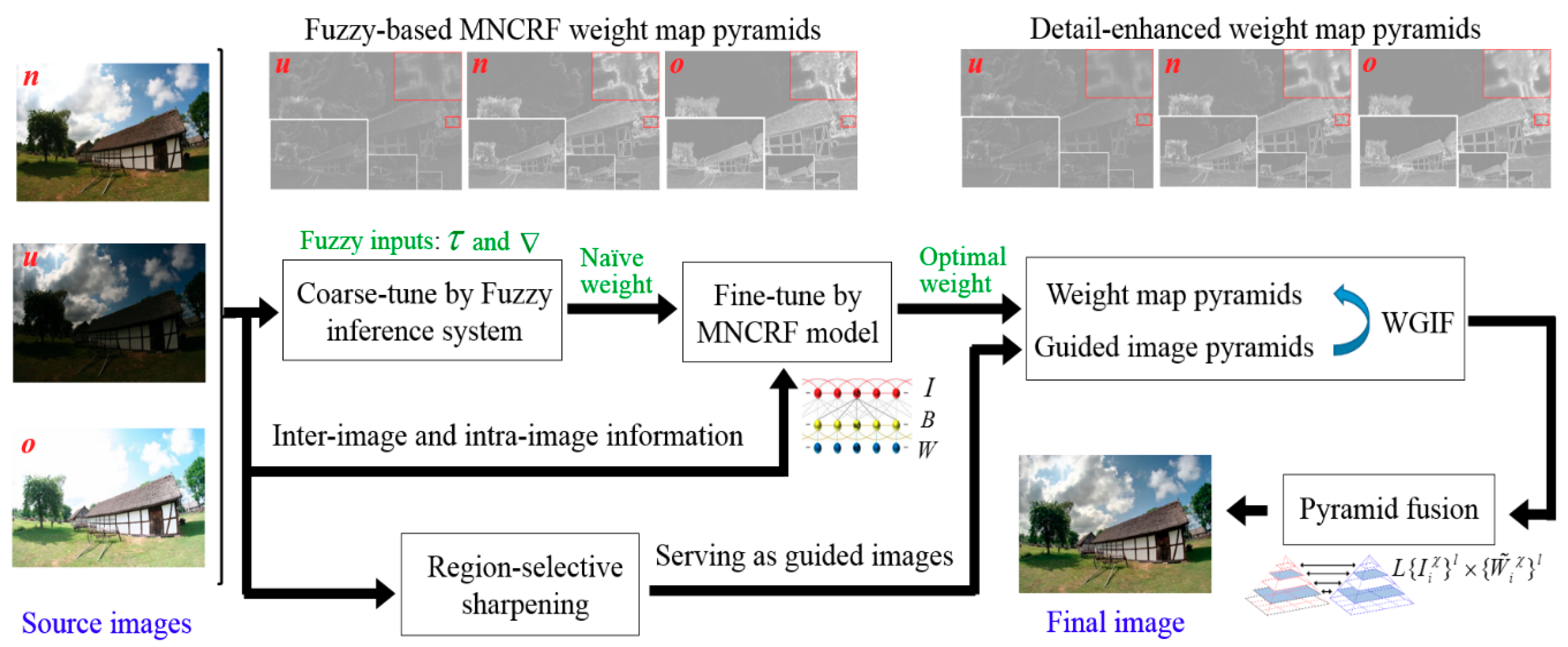

2. Motivation for Integrating Fuzzy Logic with the MNCRF Model

3. Proposed Approach

3.1. Fuzzy-Based Pixel Weights Initialization

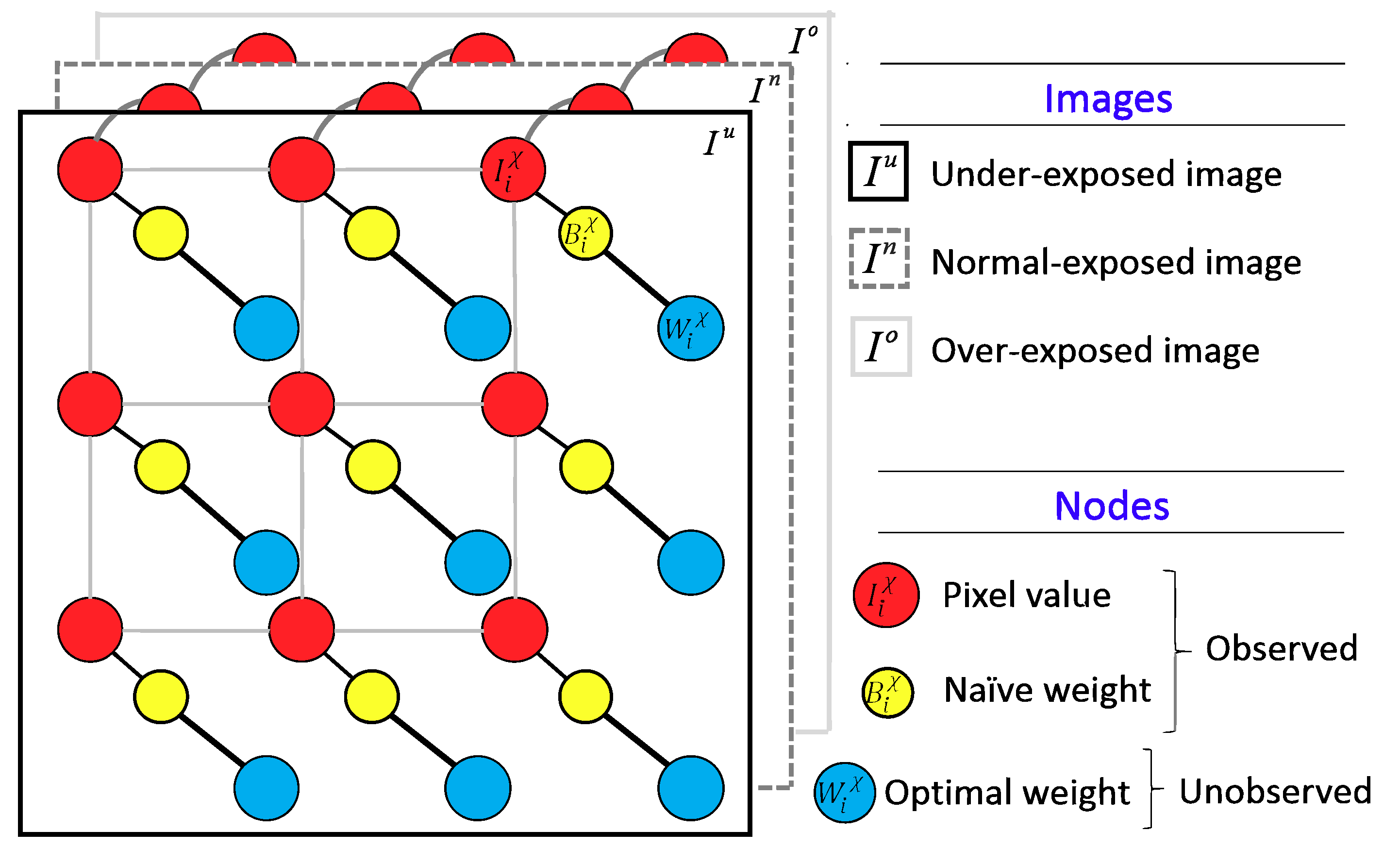

3.2. Weight Fine-Tuning Using the MNCRF Model

3.2.1. Inter-Image Relationships

3.2.2. Intra-Image Relationships

3.3. Enhanced Multiscale Fusion with Region-Selective WGIF-Based Sharpening

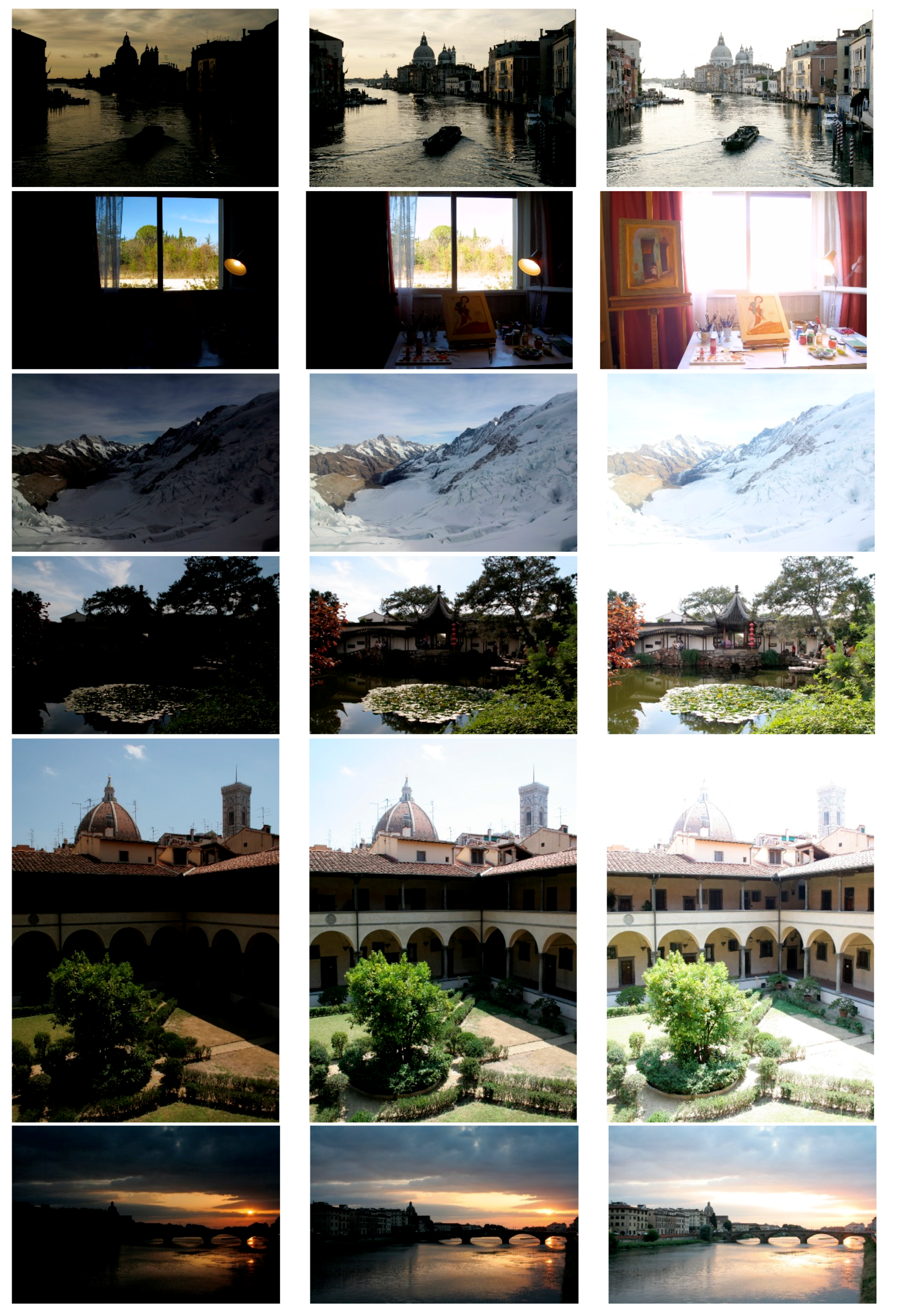

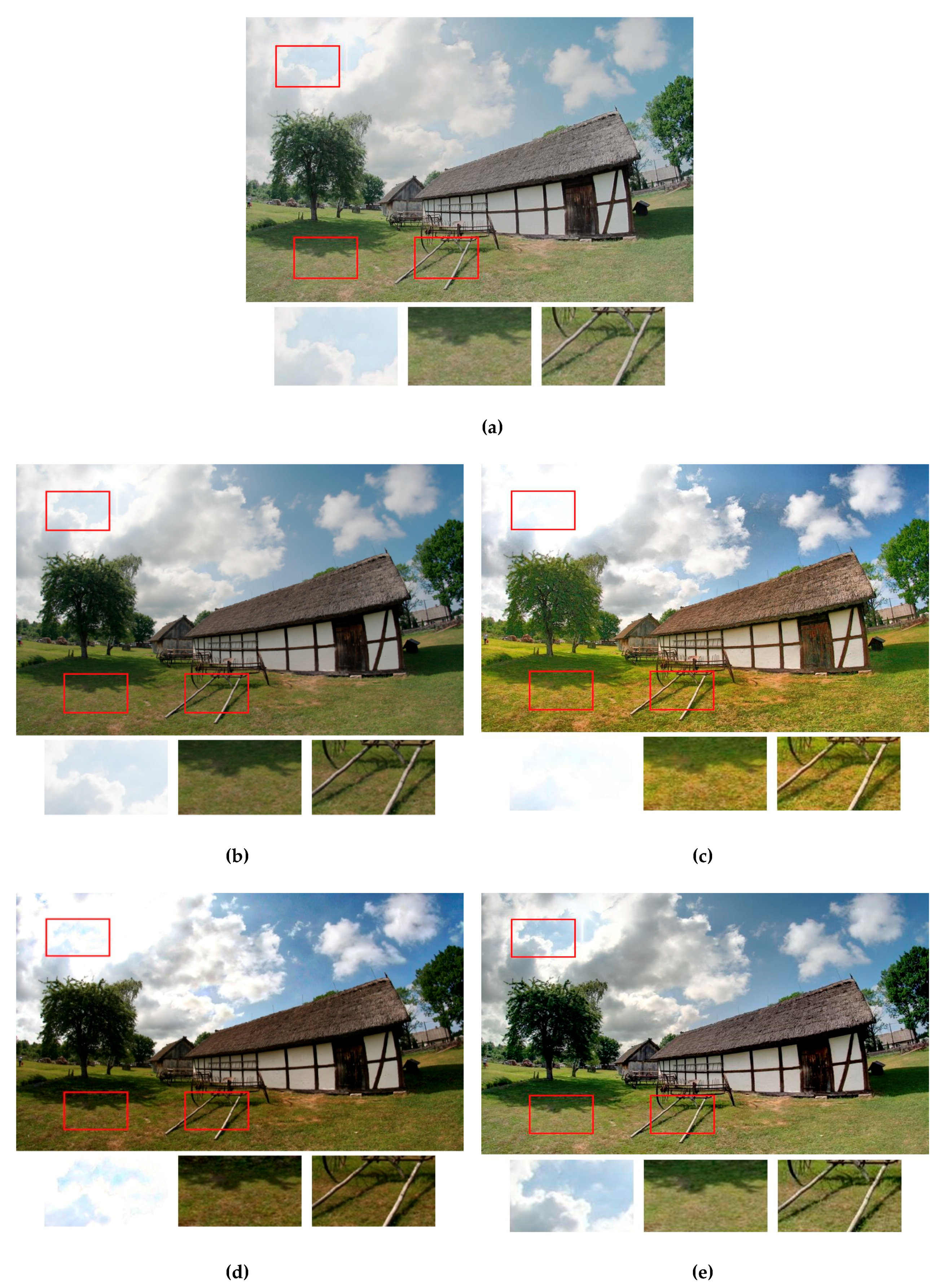

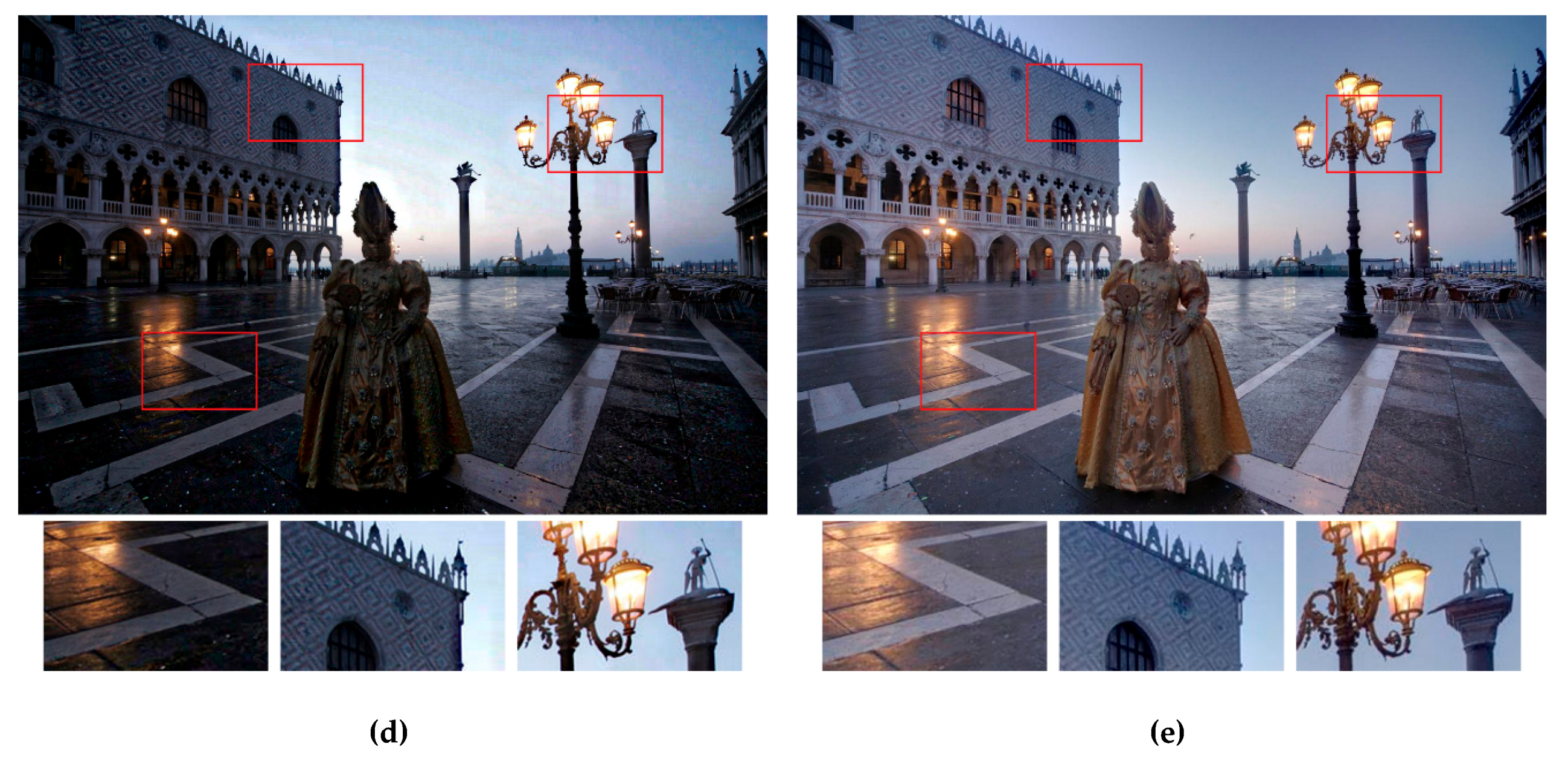

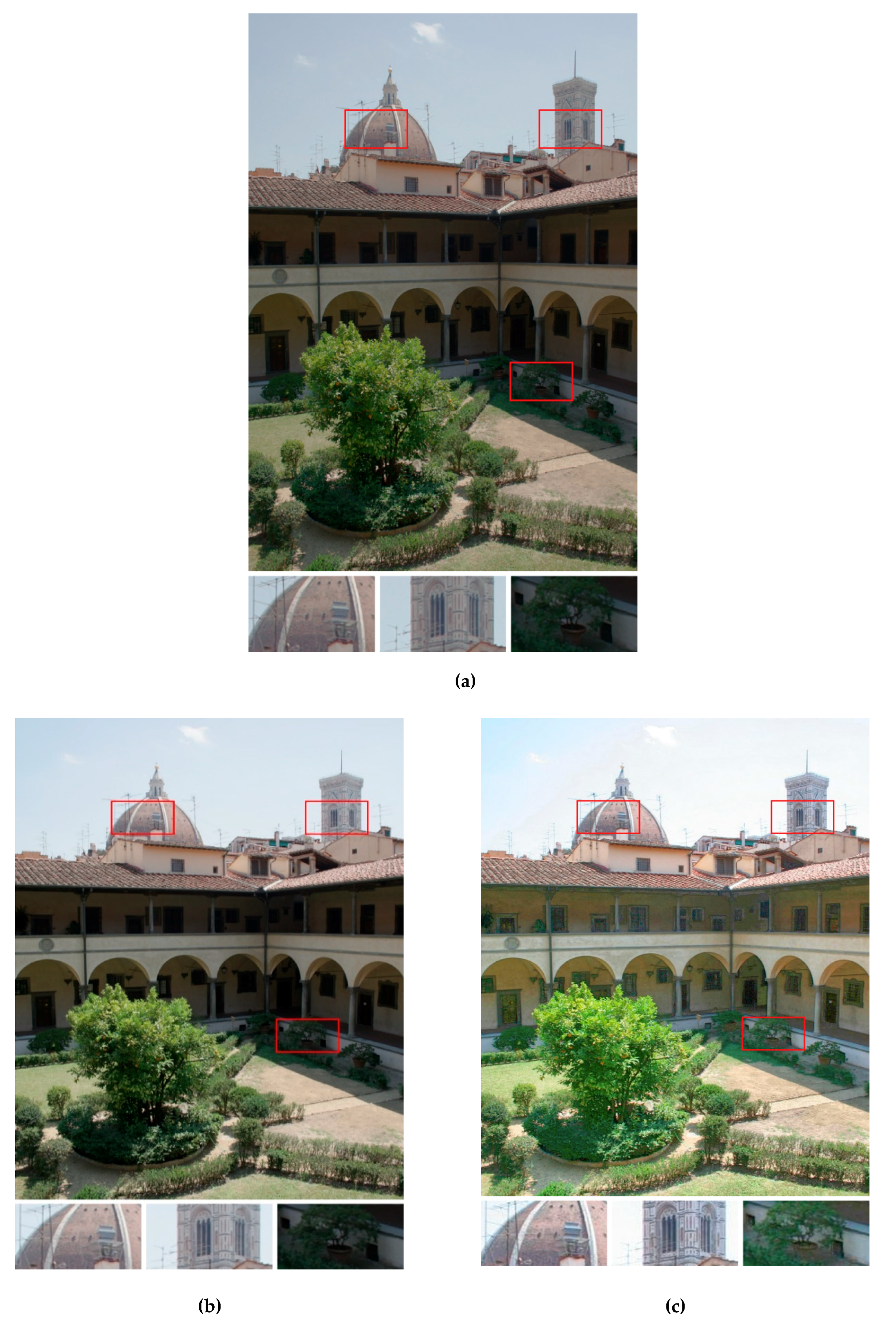

4. Experimental Results and Discussions

4.1. Comparison of the Objective Quality Measures

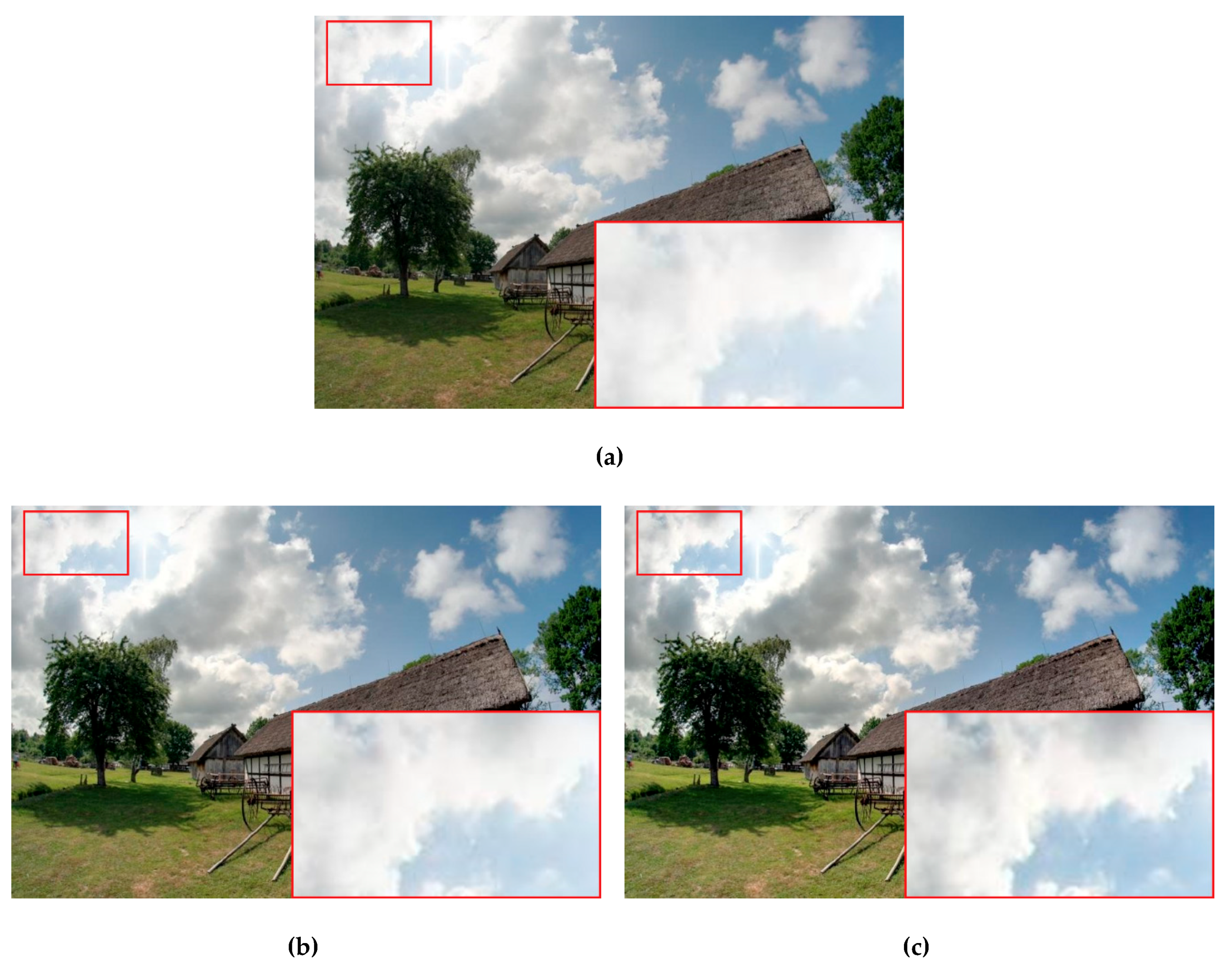

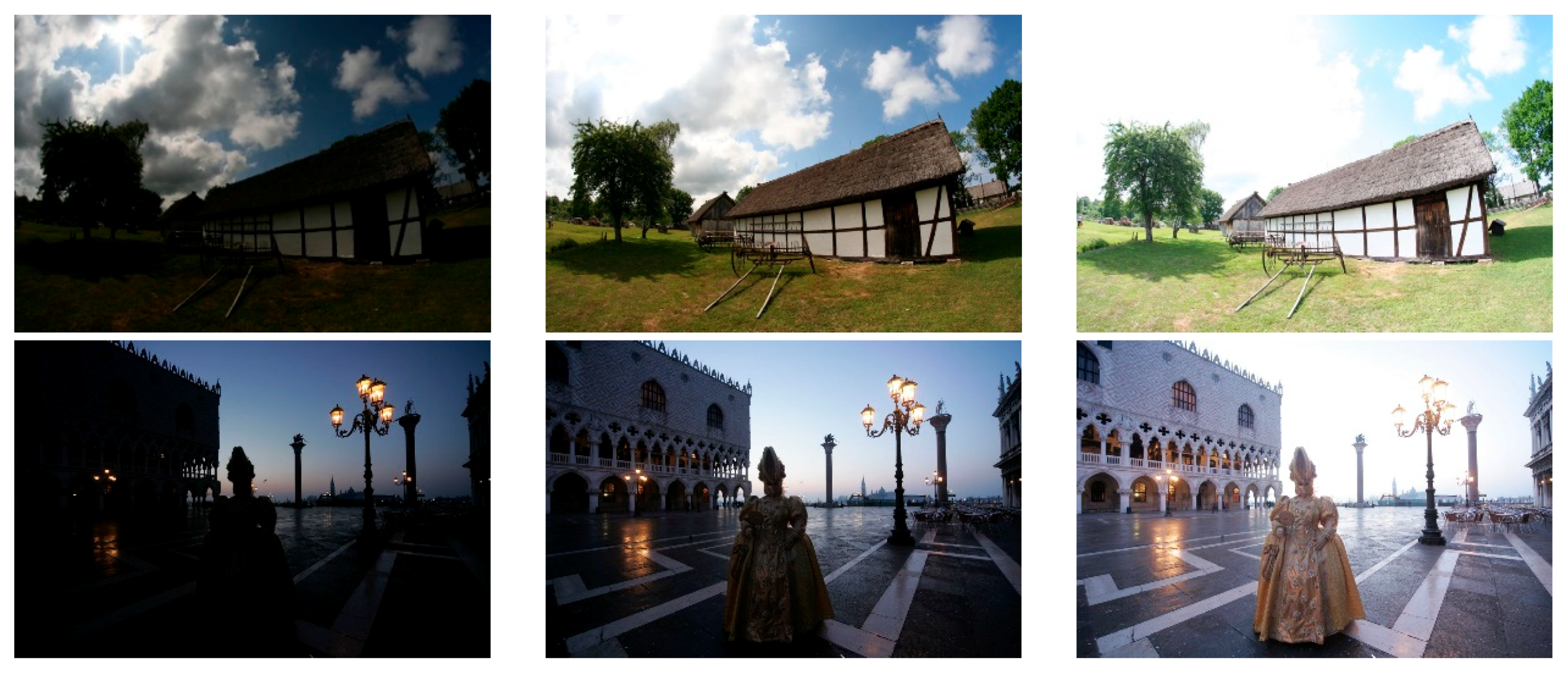

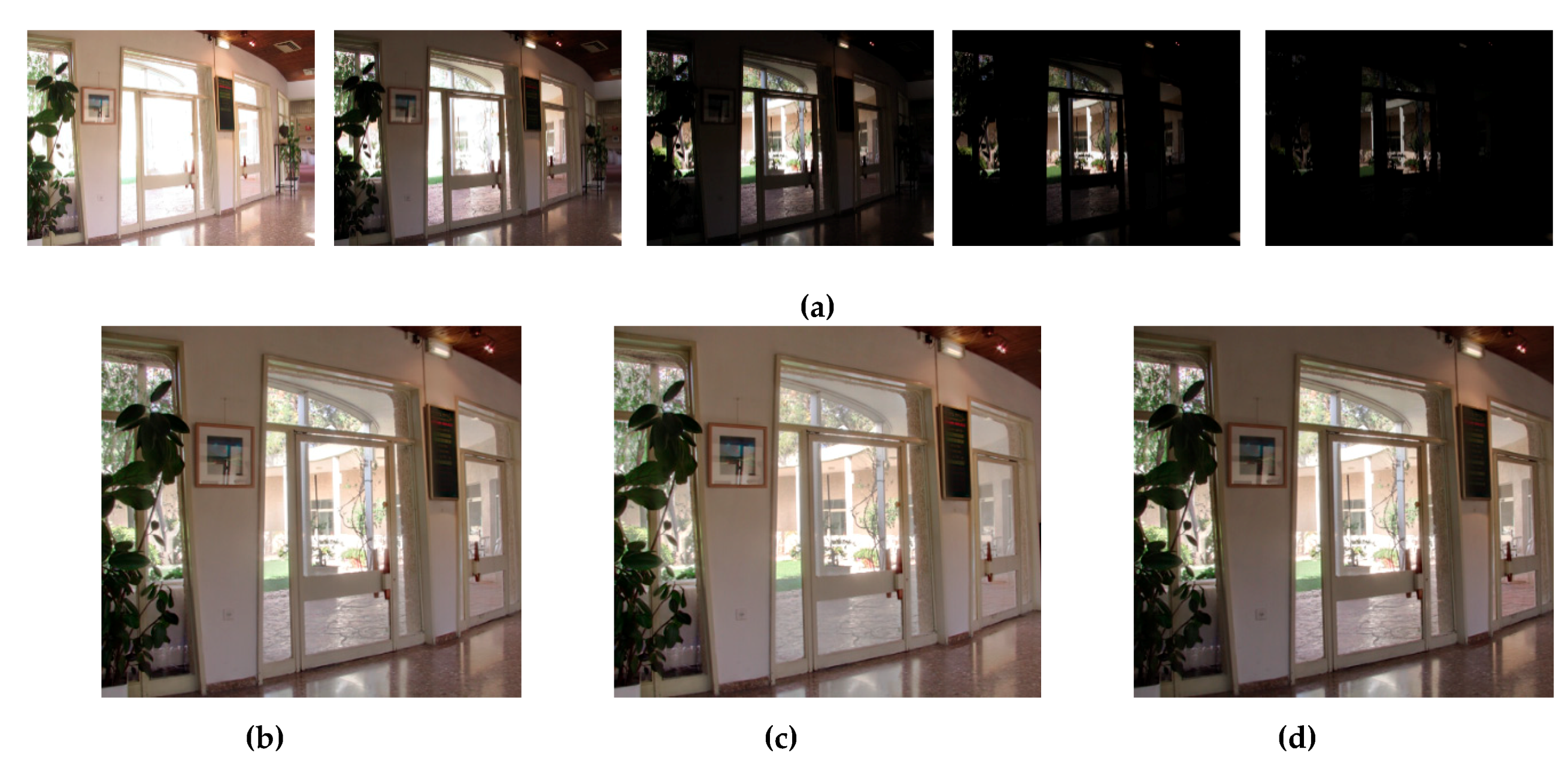

4.2. Visual Comparison and User Study Analysis

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

- Li, S.; Handa, A.; Zhang, Y.; Calway, A. HDR Fusion: HDR SLAM using a low-cost auto-exposure RGB-D sensor. In Proceedings of the 2016 Fourth International Conference on 3D Vision, Stanford, CA, USA, 25–28 October 2016; pp. 314–322.

- Wei, Z.; Wen, C.Y.; Li, Z.G. Local inverse tone mapping for scalable high dynamic range image coding. IEEE Trans. Circuits Syst. Video Technol. 2018, 28, 550–555.

- Ozcinar, C.; Lauga, P.; Valenzise, G.; Dufaux, F. Spatio-temporal constrained tone mapping operator for HDR video compression. J. Vis. Commun. Image Represent. 2018, 55, 166–178.

- Mertens, T.; Kautz, J.; Reeth, F.V. Exposure fusion: A simple and practical alternative to high dynamic range photography. Comput. Graph. Forum 2009, 28, 161–171.

- Jung, J.; Ho, Y. Low-bit depth-high-dynamic range image generation by blending differently exposed images. IET Image Process. 2013, 7, 606–615.

- Ancuti, C.O.; Ancuti, C.; Vleeschouwer, C.; Bovik, A.C. Single-scale fusion: An effective approach to merging images. IEEE Trans. Image Process. 2017, 26, 65–78.

- Kinoshita, Y.; Shiota, S.; Kiya, H. Automatic exposure compensation for multi-exposure image fusion. In Proceedings of the IEEE International Conference Image Processing, Athens, Greece, 7–10 October 2018; pp. 883–887.

- Liu, S.; Zhang, Y. Detail-preserving underexposed image enhancement via optimal weighted multi-exposure fusion. IEEE Trans. Consum. Electron. 2019, 65, 303–311.

- Hayat, N.; Imran, M. Ghost-free multi exposure image fusion technique using dense SIFT descriptor and guided filter. J. Vis. Commun. Image Represent. 2019, 62, 295–308.

- Kinoshita, Y.; Kiya, H. Scene segmentation-based luminance adjustment for multi-exposure image fusion. IEEE Trans. Image Process. 2019, 28, 4101–4116.

- Ma, K.; Li, H.; Yong, H.; Wang, Z.; Meng, D.; Zhang, L. Robust multi-exposure image fusion: A structural patch decomposition approach. IEEE Trans. Image Process. 2017, 26, 2519–2532.

- Ma, K.; Duanmu, Z.; Yeganeh, H.; Wang, Z. Multi-exposure image fusion by optimizing a structural similarity index. IEEE Trans. Comput. Imaging 2018, 4, 60–72.

- Li, Z.; Zheng, J.; Zhu, Z.; Yao, W.; Wu, S. Weighted guided image filtering. IEEE Trans. Image Process. 2015, 24, 120–129.

- Li, Z.; Zheng, J. Single image de-hazing using globally guided image filtering. IEEE Trans. Image Process. 2018, 27, 442–450.

- Liu, Y.; Zheng, C.; Zheng, Q.; Yuan, H. Removing Monte Carlo noise using a Sobel operator and a guided image filter. Vis. Comput. 2018, 34, 589–601.

- Belyaev, A.; Fayolle, P.A. Adaptive curvature-guided image filtering for structure + texture image decomposition. IEEE Trans. Image Process. 2018, 27, 5192–5203.

- Lu, Z.; Long, B.; Li, K.; Lu, F. Effective guide image filtering for contrast enhancement. IEEE Signal Process. Lett. 2018, 25, 1585–1589.

- Du, J.; Li, W.; Xiao, B. Anatomical-functional image fusion by information of interest in local laplacian filtering domain. IEEE Trans. Image Process. 2017, 26, 5855–5866.

- Zhang, H.; Patel, V.M. Densely connected pyramid dehazing network. In Proceedings of the 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–23 June 2018; pp. 3194–3203.

- Ancuti, C.; Ancuti, C.O. Laplacian-guided image decolorization. In Proceedings of the 2016 IEEE International Conference on Image Processing, Phoenix, AZ, USA, 25–28 September 2016; pp. 4107–4111.

- Li, Z.; Wei, Z.; Wen, C.; Zheng, J. Detail-enhanced multi-scale exposure fusion. IEEE Trans. Image Process. 2017, 26, 1243–1252.

- Kou, F.; Li, Z.; Wen, C.; Chen, W. Edge-preserving smoothing pyramid based multi-scale exposure fusion. J. Vis. Commun. Image Represent. 2018, 53, 235–244.

- Kou, F.; Chen, W.; Wen, C.; Li, Z. Gradient domain guided image filtering. IEEE Trans. Image Process. 2015, 24, 4528–4539.

- Wang, Q.; Chen, W.; Wu, X.; Li, Z. Detail-enhanced multi-scale exposure fusion in YUV color space. IEEE Trans. Circuits Syst. Video Technol. 2019, early access.

- Singh, V.; Dev, R.; Dhar, N.K.; Agrawal, P.; Verma, N.K. Adaptive type-2 fuzzy approach for filtering salt and pepper noise in grayscale images. IEEE Trans. Fuzzy Syst. 2018, 26, 3170–3176.

- Pham, T.X.; Siarry, P.; Oulhadj, H. Integrating fuzzy entropy clustering with an improved PSO for MRI brain image segmentation. Appl. Soft Comput. 2018, 65, 230–242.

- Liu, M.; Zhou, Z.; Shang, P.; Xu, D. Fuzzified image enhancement for deep learning in iris recognition. IEEE Trans. Fuzzy Syst. 2019, 1–8.

- Celebi, A.T.; Duvar, R.; Urhan, O. Fuzzy fusion based high dynamic range imaging using adaptive histogram separation. IEEE Trans. Consum. Electron. 2015, 61, 119–127.

- Rahman, M.A.; Liu, S.; Wong, C.Y.; Lin, S.C.F.; Liu, S.C.; Kwok, N.M. Multi-focal image fusion using degree of focus and fuzzy logic. Digit. Signal Process. 2017, 60, 1–19.

- Chen, Y.; Hsia, C.; Lu, C. Multiple exposure fusion based on sharpness-controllable fuzzy feedback. J. Intell. Fuzzy Syst. 2019, 36, 1121–1132.

- Lafferty, J.; McCallum, A.; Pereira, F. Conditional Random Fields: Probabilistic Models for Segmenting and Labeling Sequence Data. In Proceedings of the 18th International Conference on Machine Learning, San Francisco, CA, USA, 28 June–1 July 2001; pp. 282–289.

- Thakare, B.S.; Deshmkuh, H.R. An Adaptive Approach for Image Denoising Using Pixel Classification and Gaussian Conditional Random Field Technique. In Proceedings of the 2017 International Conference on Computing, Communication, Control and Automation, Pune, India, 17–18 August 2017; pp. 1–8.

- Li, F.Y.; Shafiee, M.J.; Chung, A.G.; Chwyl, B.; Kazemzadeh, F.; Wong, A.; Zelek, J. High dynamic range map estimation via fully connected random fields with stochastic cliques. In Proceedings of the 2015 IEEE International Conference on Image Processing, Quebec City, QC, Canada, 27–30 September 2015; pp. 2159–2163.

- Fu, K.; Gu, I.Y.; Yang, J. Saliency detection by fully learning a continuous conditional random field. IEEE Trans. Multimed. 2017, 19, 1531–1544.

- Sultani, W.; Mokhtari, S.; Yun, H.B. Automatic pavement object detection using superpixel segmentation combined with conditional random field. IEEE Trans. Intell. Transp. Syst. 2018, 19, 2076–2085.

- Wang, H.C.; Lai, Y.C.; Cheng, W.H.; Cheng, C.Y.; Hua, K.L. Background extraction based on joint Gaussian conditional random fields. IEEE Trans. Circuits Syst. Video Technol. 2017, 28, 3127–3140.

- Photomatix Database. Available online: https://www.hdrsoft.com/index.html (accessed on 10 September 2019).

- HDR Photography Gallery. Available online: https://www.easyhdr.com/examples/ (accessed on 10 September 2019).

- Trivedi, M.; Jaiswal, A.; Bhateja, V. A no-reference image quality index for contrast and Sharpness measurement. In Proceedings of the 3rd IEEE International Advance Computing Conference (IACC), Ghaziabad, India, 22–23 February 2013; pp. 1234–1239.

- Ma, K.; Zeng, K.; Wang, Z. Perceptual quality assessment for multi-exposure image fusion. IEEE Trans. Image Process. 2015, 24, 3345–3356.

- Zhang, L.; Zhang, L.; Bovik, A. A feature-enriched completely blind image quality evaluator. IEEE Trans. Image Process. 2015, 24, 2579–2591.

- Gu, K.; Lin, W.; Zhai, G.; Yang, X.; Zhang, W.; Chen, C. No-reference quality metric of contrast-distorted images based on information maximization. IEEE Trans. Cybern. 2017, 47, 4559–4565.

- Yang, Y.; Cao, W.; Wu, S.; Li, Z. Multi-scale fusion of two large-exposure-ratio images. IEEE Signal Process. Lett. 2018, 25, 1885–1889.

| Pixel-visibility (rows) / Exposedness (columns) | Low | Medium | High |
|---|---|---|---|
| Low | L | M–L | M |
| Medium | M–L | M | M–H |
| High | M | M–H | H |
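
The rule table above can be read as a lookup from a pair of linguistic labels (pixel-visibility, exposedness) to an output level, presumably the initial pixel weight level of Section 3.1. The following is a minimal sketch under that reading, not the authors' implementation: it works on crisp labels only, since the membership functions and the defuzzification step are not given in this text. The names `RULES`, `LEVELS`, and `weight_level` are hypothetical, and the en dashes of the table are written as ASCII hyphens.

```python
# Illustrative lookup for the fuzzy rule table above (assumption: crisp labels only;
# membership functions and defuzzification are not specified here and not modeled).

LEVELS = ("Low", "Medium", "High")

# (pixel_visibility, exposedness) -> output level, transcribed from the table.
RULES = {
    ("Low", "Low"): "L",
    ("Low", "Medium"): "M-L",
    ("Low", "High"): "M",
    ("Medium", "Low"): "M-L",
    ("Medium", "Medium"): "M",
    ("Medium", "High"): "M-H",
    ("High", "Low"): "M",
    ("High", "Medium"): "M-H",
    ("High", "High"): "H",
}


def weight_level(pixel_visibility: str, exposedness: str) -> str:
    """Return the output level that the rule table assigns to a pair of labels."""
    for label in (pixel_visibility, exposedness):
        if label not in LEVELS:
            raise ValueError(f"unknown label: {label!r}")
    return RULES[(pixel_visibility, exposedness)]


if __name__ == "__main__":
    print(weight_level("Medium", "High"))
```

Read this way, the table is symmetric in its two inputs, and the output level rises one step for each step up in either input.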

| Image | Method of [5] | Method of [6] | Method of [28] | Method of [23] | Proposed |
|---|---|---|---|---|---|
| Cottage | 7.1133 | 9.3121 | 9.4548 | 9.2748 | 9.4681 |
| Masked Lady | 3.7015 | 7.2641 | 7.0846 | 7.3124 | 7.3579 |
| Grand Canal | 7.1152 | 10.3240 | 10.7570 | 10.8148 | 10.8990 |
| Studio | 2.9509 | 5.2579 | 4.9159 | 5.1922 | 5.2857 |
| Mountains | 3.9031 | 4.6651 | 4.2465 | 4.5157 | 4.6059 |
| Chinese Garden | 9.1185 | 14.9413 | 14.2991 | 15.2331 | 16.3545 |
| Laurentian Library | 6.6231 | 10.9506 | 10.3437 | 10.9220 | 11.0333 |
| Arno River | 2.6075 | 4.0337 | 4.7827 | 4.7998 | 4.4832 |
| Average | 5.3916 | 8.3436 | 8.2355 | 8.5081 | 8.6860 |

| Image | Method of Reference [5] | Method of Reference [6] | Method of Reference [28] | Method of Reference [23] | Proposed Method |
|---|---|---|---|---|---|
| Cottage | 7.7012 | 7.7878 | 7.7661 | 7.6545 | 7.9077 |
| Masked Lady | 7.2523 | 7.4959 | 7.6040 | 7.5180 | 7.5058 |
| Grand Canal | 7.4973 | 7.5888 | 7.8199 | 7.7353 | 7.7493 |
| Studio | 7.5419 | 7.5544 | 7.4239 | 6.6548 | 7.5542 |
| Mountains | 6.5399 | 6.7724 | 6.5173 | 7.3431 | 6.9725 |
| Chinese Garden | 7.6596 | 7.8087 | 7.5363 | 7.3059 | 7.8311 |
| Laurentian Library | 7.6578 | 7.8620 | 7.5551 | 7.5777 | 7.8934 |
| Arno River | 7.3875 | 7.4426 | 7.1607 | 7.5230 | 7.4567 |
| Average | 7.4047 | 7.5391 | 7.4229 | 7.4140 | 7.6088 |

| Image | Method of Reference [5] | Method of Reference [6] | Method of Reference [28] | Method of Reference [23] | Proposed Method |
|---|---|---|---|---|---|
| Cottage | 0.8617 | 0.8875 | 0.8672 | 0.8967 | 0.9456 |
| Masked Lady | 0.7878 | 0.8628 | 0.8467 | 0.9245 | 0.9345 |
| Grand Canal | 0.8483 | 0.8695 | 0.8314 | 0.8247 | 0.9424 |
| Studio | 0.7095 | 0.7659 | 0.6926 | 0.8762 | 0.8454 |
| Mountains | 0.9187 | 0.9721 | 0.9292 | 0.8621 | 0.9824 |
| Chinese Garden | 0.8146 | 0.9521 | 0.8693 | 0.9081 | 0.9640 |
| Laurentian Library | 0.8523 | 0.9104 | 0.8820 | 0.9354 | 0.9625 |
| Arno River | 0.8823 | 0.9110 | 0.8813 | 0.8358 | 0.9548 |
| Average | 0.8344 | 0.8914 | 0.8500 | 0.8829 | 0.9415 |

| Image | Method of Reference [5] | Method of Reference [6] | Method of Reference [28] | Method of Reference [23] | Proposed Method |
|---|---|---|---|---|---|
| Cottage | 15.7939 | 15.8029 | 16.9300 | 16.0214 | 17.2690 |
| Masked Lady | 20.1251 | 19.4580 | 18.4810 | 18.9070 | 17.8545 |
| Grand Canal | 17.7827 | 19.0877 | 17.8064 | 19.3340 | 16.2722 |
| Studio | 24.6146 | 23.2614 | 21.3269 | 26.2480 | 20.3581 |
| Mountains | 19.6483 | 19.2559 | 19.0458 | 17.0945 | 18.1613 |
| Chinese Garden | 13.7786 | 13.9000 | 14.4244 | 15.4630 | 14.2424 |
| Laurentian Library | 17.8698 | 17.2073 | 16.7130 | 19.0272 | 17.3954 |
| Arno River | 25.5544 | 21.9432 | 22.9693 | 24.8623 | 20.9425 |
| Average | 19.3959 | 18.7395 | 18.4621 | 19.6196 | 17.8119 |

| Image | Method of Reference [5] | Method of Reference [6] | Method of Reference [28] | Method of Reference [23] | Proposed Method |
|---|---|---|---|---|---|
| Cottage | 5.3764 | 5.7228 | 5.4693 | 5.7325 | 5.7211 |
| Masked Lady | 4.6466 | 5.0959 | 5.0698 | 5.2302 | 5.3977 |
| Grand Canal | 5.374 | 5.4604 | 5.4632 | 5.7162 | 5.3884 |
| Studio | 5.0849 | 5.4033 | 5.1765 | 4.9845 | 5.6936 |
| Mountains | 4.3825 | 4.3244 | 3.9839 | 4.9553 | 4.6781 |
| Chinese Garden | 4.6375 | 5.7034 | 5.0167 | 5.0835 | 5.5842 |
| Laurentian Library | 4.9774 | 5.3432 | 5.2776 | 5.5504 | 5.7724 |
| Arno River | 4.8022 | 5.2405 | 5.0546 | 5.4673 | 5.4493 |
| Average | 4.9102 | 5.2867 | 5.0640 | 5.3400 | 5.4606 |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Lin, Y.-H.; Hua, K.-L.; Lu, H.-H.; Sun, W.-L.; Chen, Y.-Y. An Adaptive Exposure Fusion Method Using Fuzzy Logic and Multivariate Normal Conditional Random Fields. Sensors 2019, 19, 4743. https://doi.org/10.3390/s19214743
