Assistive Grasping Based on Laser-point Detection with Application to Wheelchair-mounted Robotic Arms

Abstract

1. Introduction

2. Laser-Point Detection

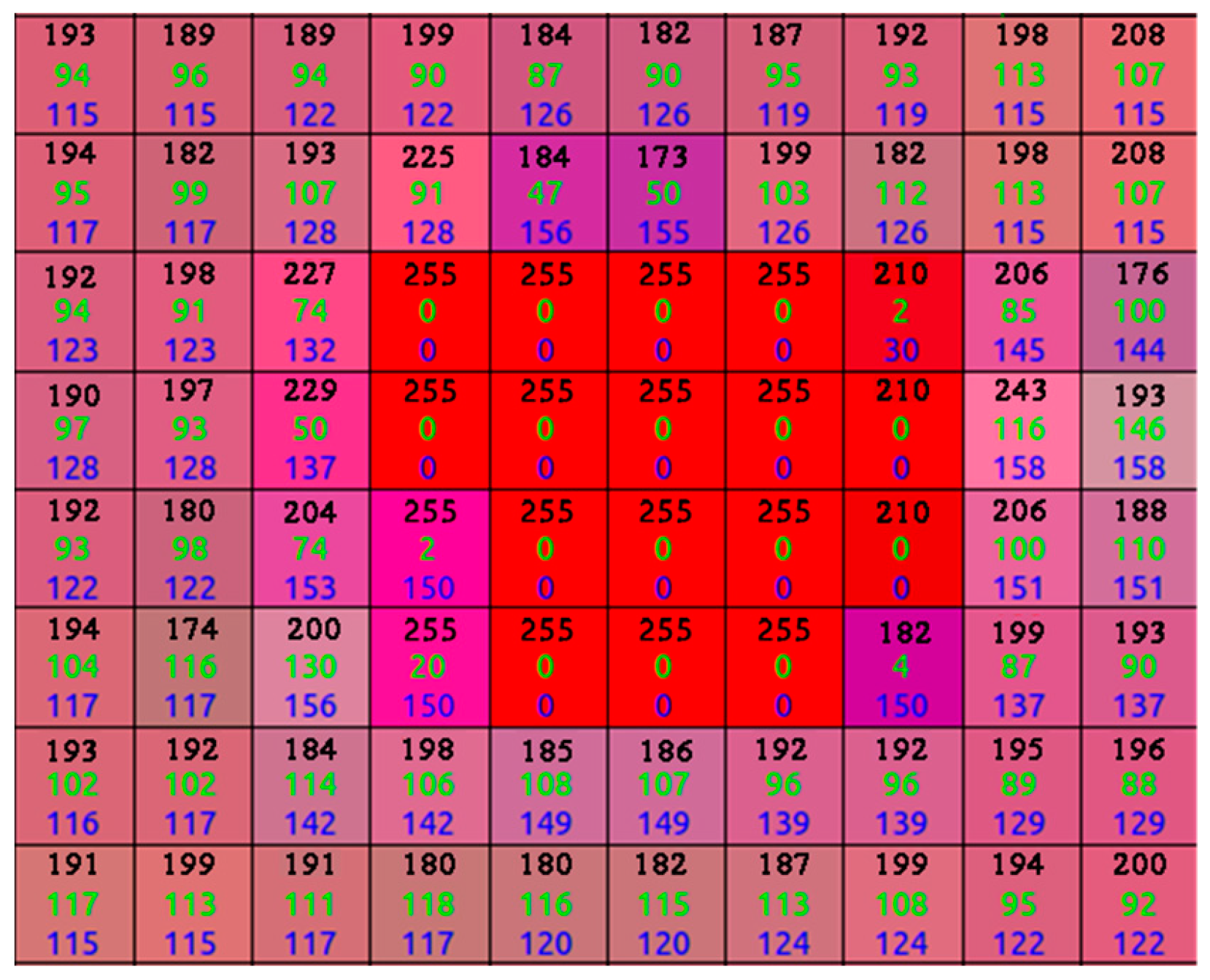

2.1. Image Pre-Processing

2.2. Laser-Point Detection

3. Object Grasping

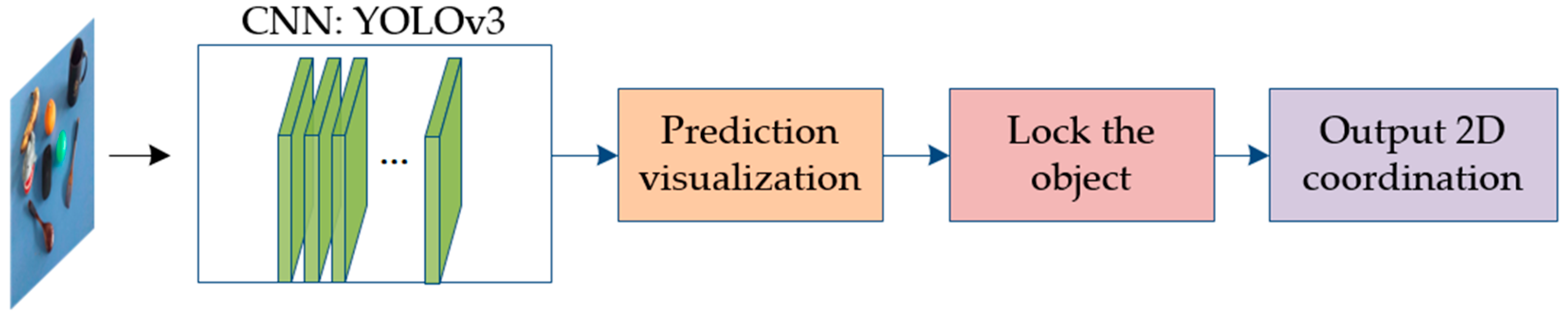

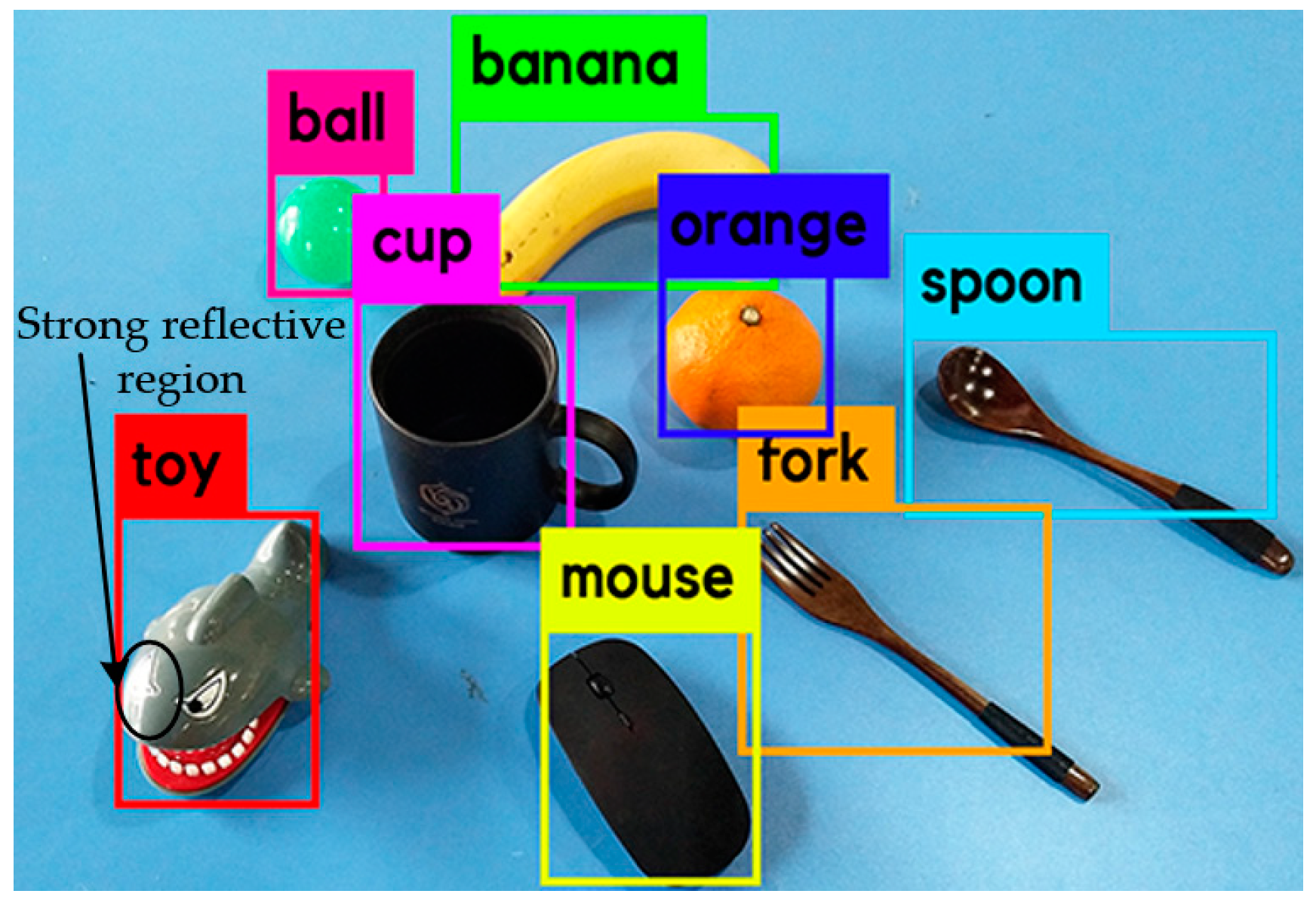

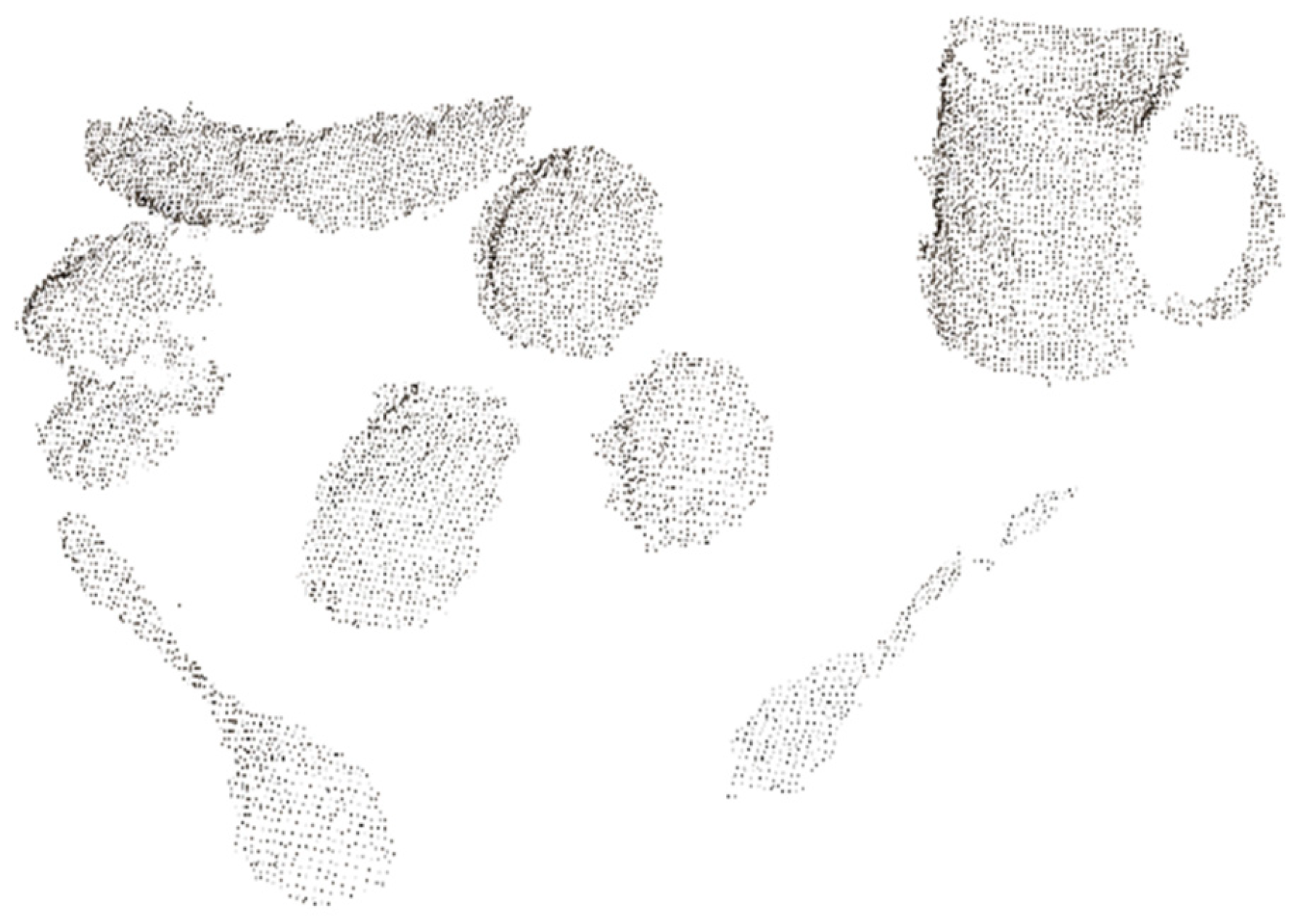

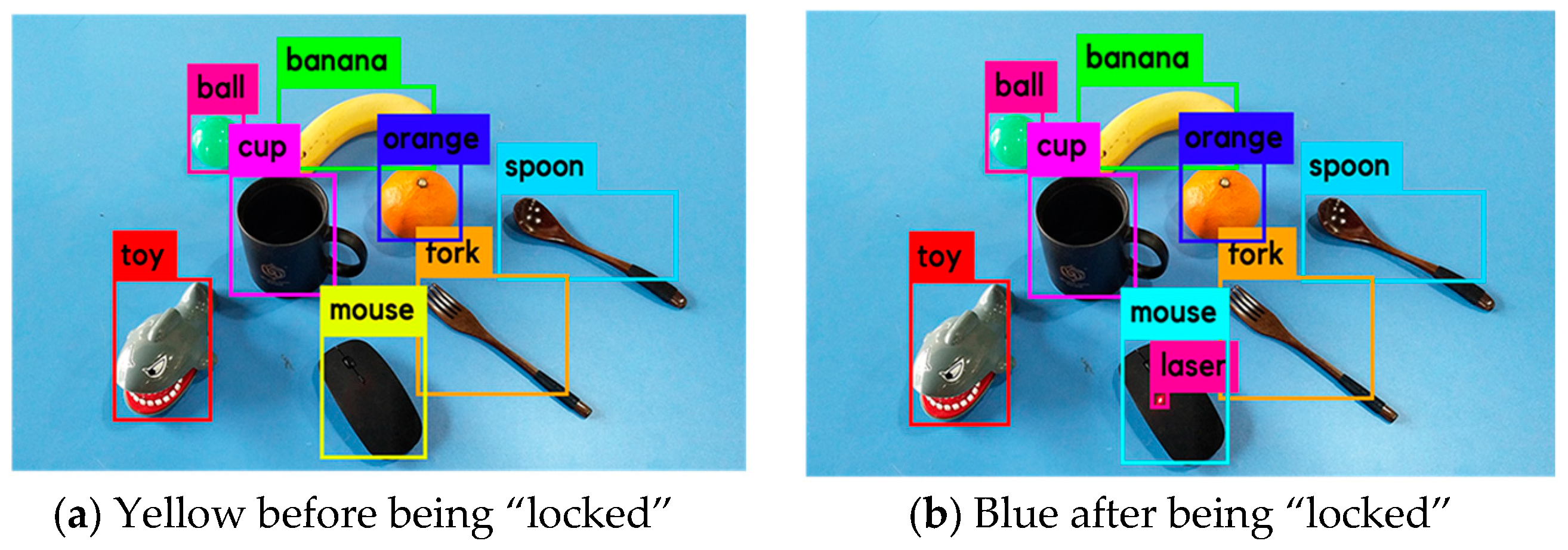

3.1. Object Determination

- = objects’ 3D X coordinate component;

- = “locked” object’s 2D Y coordinate component;

- = objects’ 3D Y coordinate component;

- = objects’ 3D Z coordinate component; and

- T = the threshold.

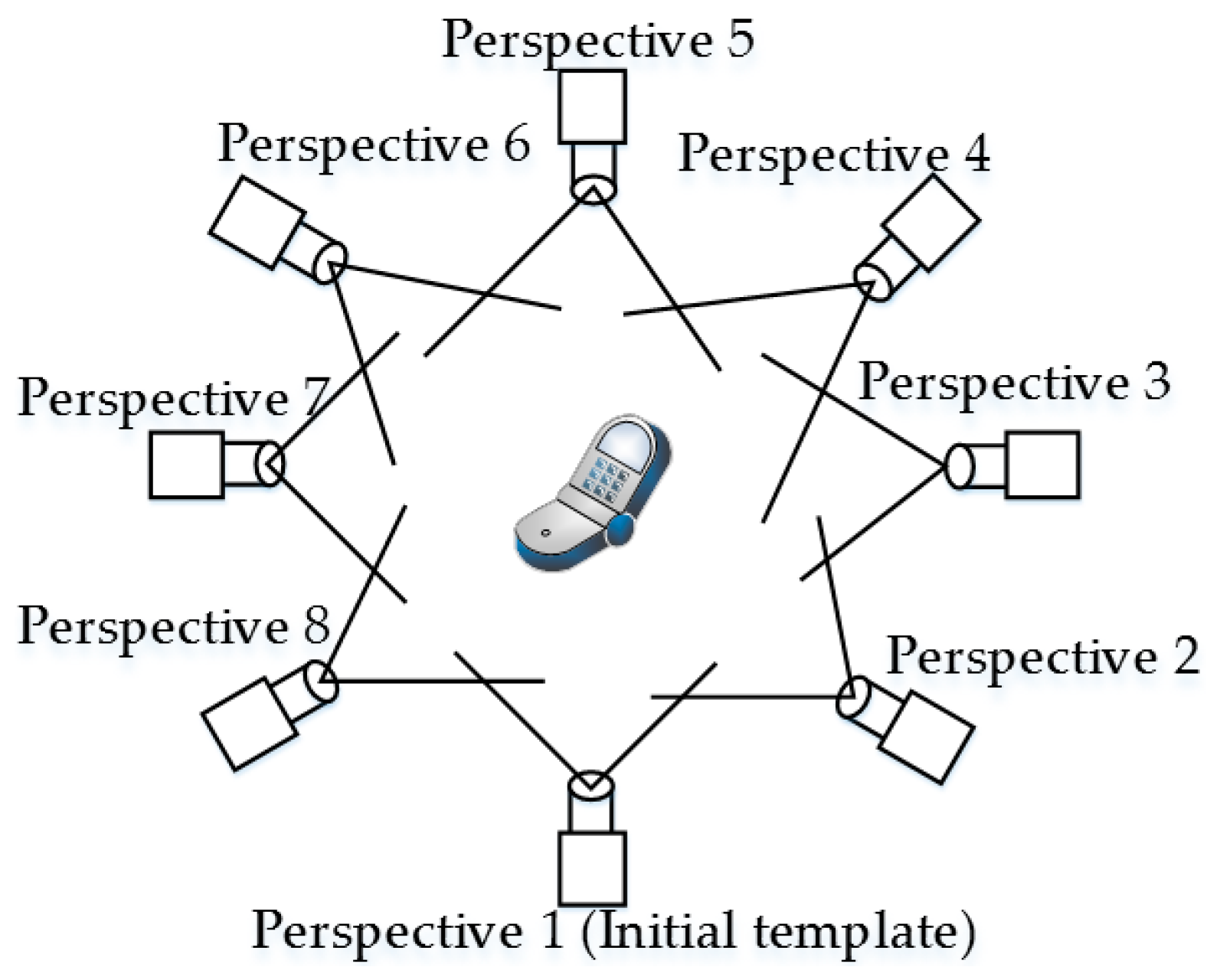

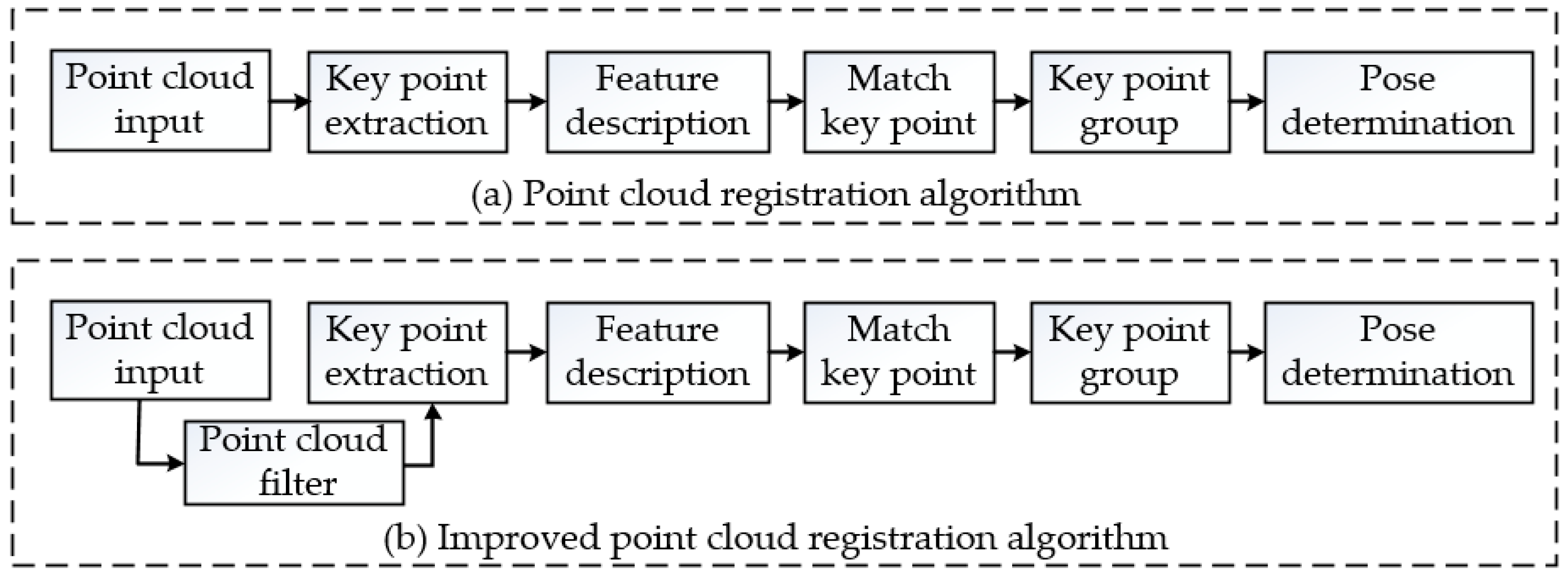

3.2. Grasping Pose Determination

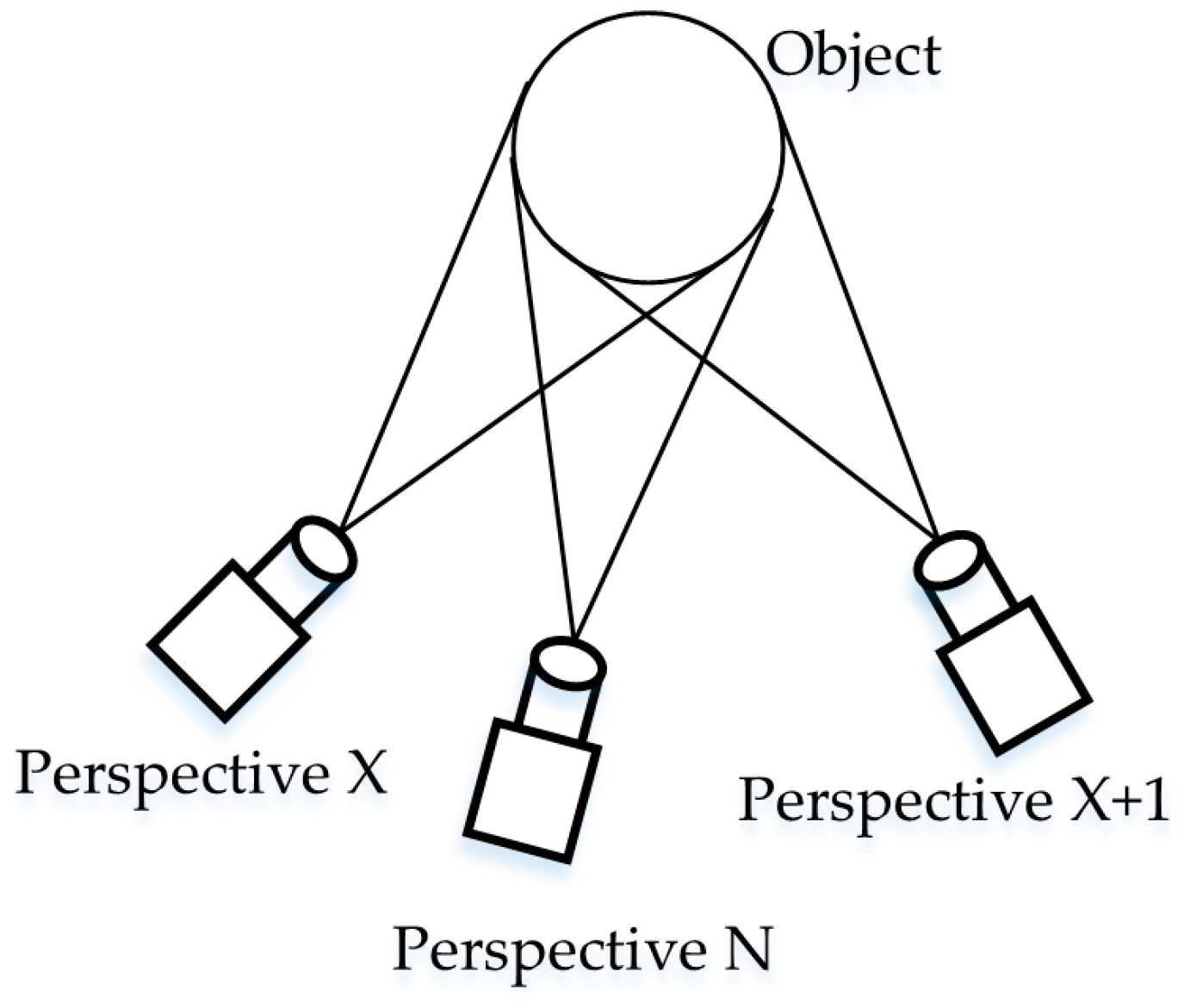

3.3. Coarse-Matching of Target to Template Objects

3.4. Precise-Matching of Target to Template Object

- (1) Extract the key points from Nc and Xc using the SIFT3D algorithm to obtain the key point sets Nf and Xf [33];

- (2) Calculate the local features of Nf and Xf using fast point feature histograms (FPFH);

- (3) Group the key points in Nf and Xf, respectively;

- (4) Eliminate incorrect groups using the Hough voting algorithm [34];

- (5) Use the sample consensus initial alignment (SAC-IA) algorithm to register Nf and Xf and obtain the transformation matrix.
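
The registration step ends with a rigid transformation matrix. As a minimal sketch of what such a matrix encodes, the following builds a 4×4 homogeneous transform from a rotation about the z axis plus a translation, applies it to a point, and recovers the rotation angle. The function names and the restriction to a z-only rotation are assumptions made for illustration and are not taken from the paper; the sample values (45° about z, translation of (10, 10, 0) mm) match the true pose listed in the result tables.

```python
import math


def rigid_transform(rz_deg, tx, ty, tz):
    """Build a 4x4 homogeneous matrix: rotate about z by rz_deg, then translate."""
    c = math.cos(math.radians(rz_deg))
    s = math.sin(math.radians(rz_deg))
    return [
        [c, -s, 0.0, tx],
        [s, c, 0.0, ty],
        [0.0, 0.0, 1.0, tz],
        [0.0, 0.0, 0.0, 1.0],
    ]


def apply_transform(T, point):
    """Apply the homogeneous transform T to a 3D point (x, y, z)."""
    p = (point[0], point[1], point[2], 1.0)
    return tuple(sum(T[r][k] * p[k] for k in range(4)) for r in range(3))


def z_rotation_deg(T):
    """Recover the rotation about z (deg) from the upper-left 2x2 block."""
    return math.degrees(math.atan2(T[1][0], T[0][0]))


# Illustrative values only: 45 deg about z, translation (10, 10, 0) mm.
T = rigid_transform(45.0, 10.0, 10.0, 0.0)
moved = apply_transform(T, (1.0, 0.0, 0.0))
recovered = z_rotation_deg(T)
print(moved, recovered)
```

Reading the pose parameters back out of the matrix, as `z_rotation_deg` does here, is one way an estimated transformation could be compared against a known pose.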

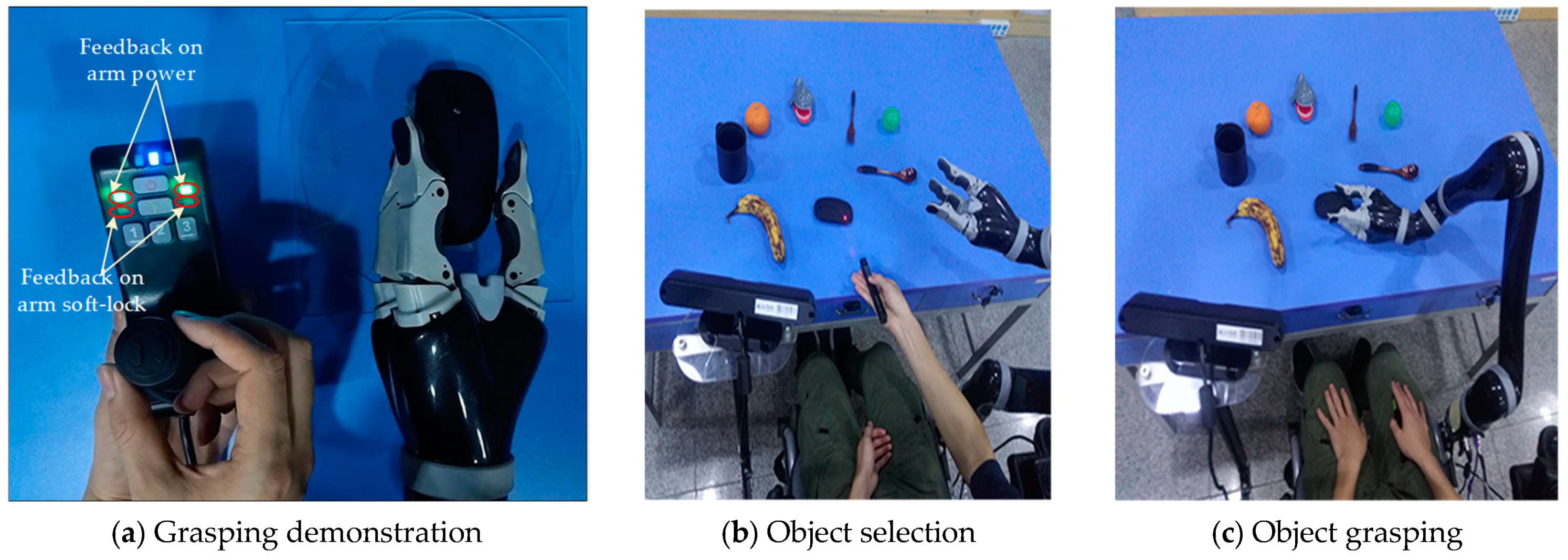

4. Experimental Verification

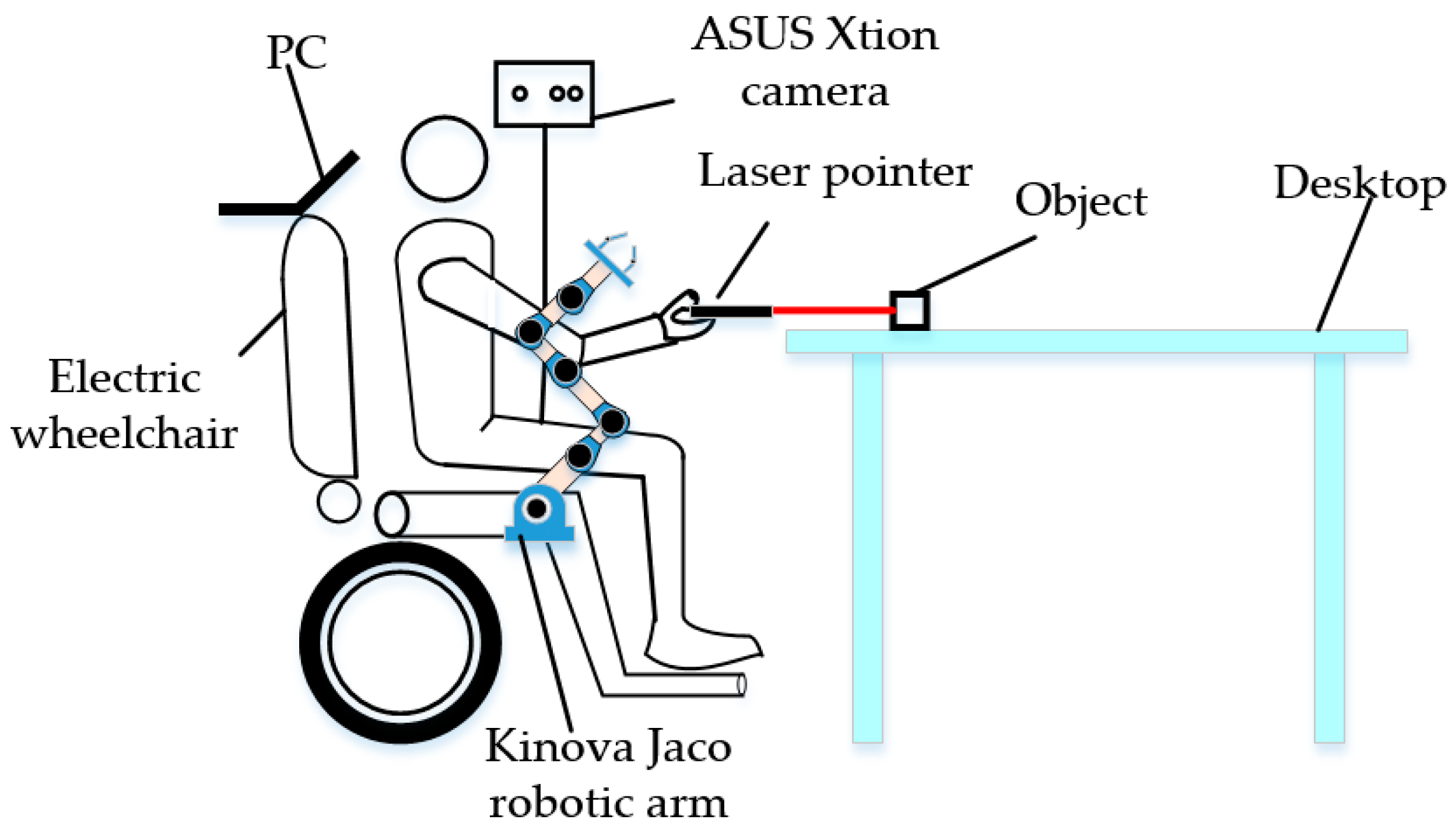

4.1. Experimental Setup

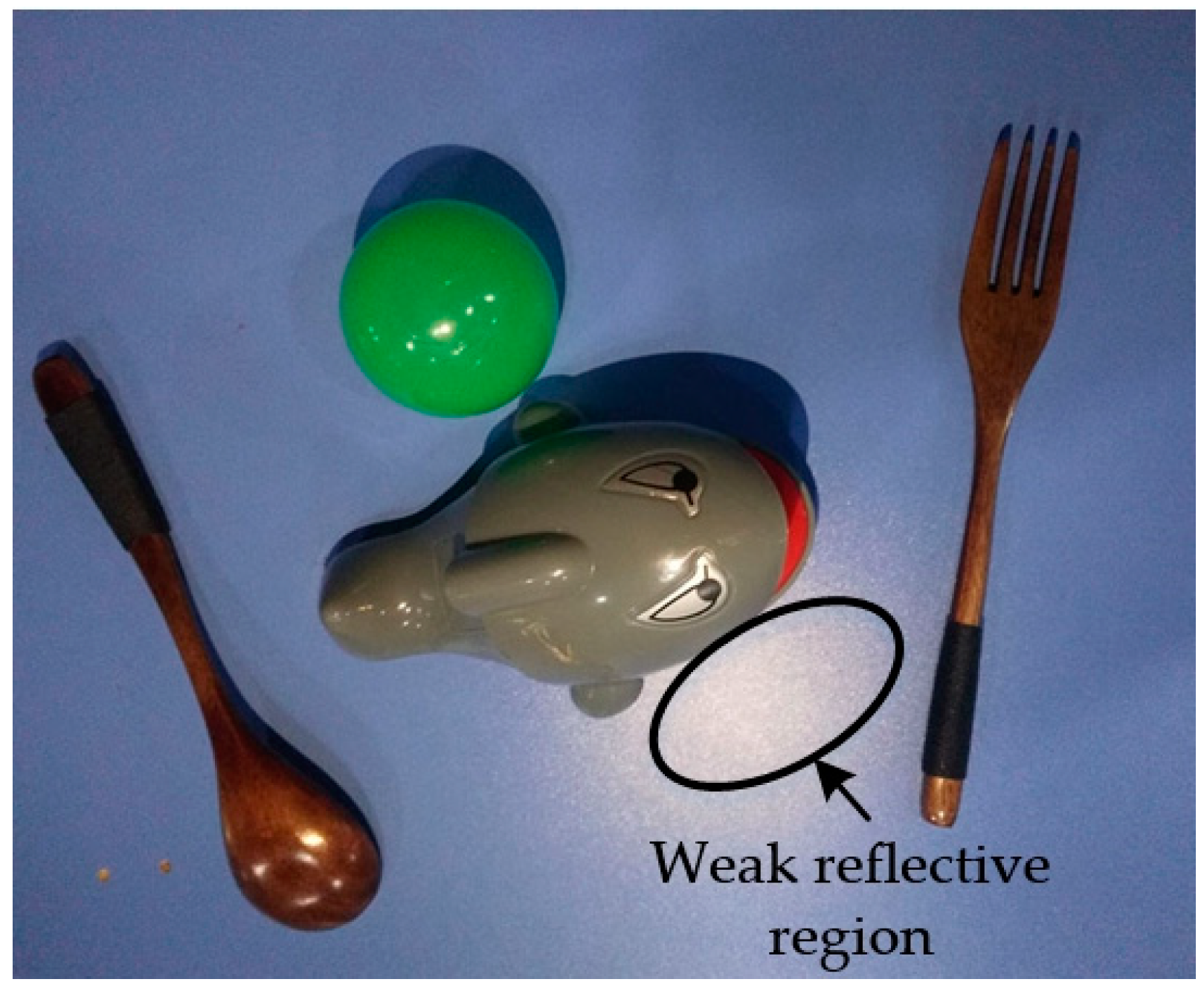

4.2. Experiment Results

5. Conclusions and Future Work

Author Contributions

Funding

Conflicts of Interest

References

- Tang, Y.; Dong, F.; Yamazaki, Y.; Shibata, T.; Hirota, K. Deep Level Situation Understanding for Casual Communication in Humans-Robots Interaction. Int. J. Fuzzy Log. Intell. Syst. 2015, 15, 1–11.

- Wu, Q.; Wu, H. Development, Dynamic Modeling, and Multi-Modal Control of a Therapeutic Exoskeleton for Upper Limb Rehabilitation Training. Sensors 2018, 18, 3611.

- Lee, H.K.; Kim, J.H. An HMM-based threshold model approach for gesture recognition. IEEE Trans. Pattern Anal. Mach. Intell. 1999, 21, 961–973.

- Tanaka, H.; Sumi, Y.; Matsumoto, Y. Assistive robotic arm autonomously bringing a cup to the mouth by face recognition. In Proceedings of the 2010 IEEE Advanced Robotics and ITS Social Impacts, Seoul, Korea, 26–28 October 2010; pp. 34–39.

- Kazi, Z.; Foulds, R. Knowledge driven planning and multimodal control of a telerobot. Robotica 1998, 16, 509–516.

- Rouanet, P.; Oudeyer, P.Y.; Danieau, F.; Filliat, D. The impact of human–robot interfaces on the learning of visual objects. IEEE Trans. Robot. 2013, 29, 525–541.

- Choi, K.; Min, B.K. Future directions for brain-machine interfacing technology. In Recent Progress in Brain and Cognitive Engineering; Springer: Dordrecht, The Netherlands, 2015.

- Imtiaz, N.; Mustafa, M.M.; Hussain, A.; Scavino, E. Laser pointer detection based on intensity profile analysis for application in teleconsultation. J. Eng. Sci. Technol. 2017, 12, 2238–2253.

- Kang, S.H.; Yang, C.K. Laser-pointer human computer interaction system. In Proceedings of the IEEE International Conference on Multimedia & Expo Workshops, Turin, Italy, 29 June–3 July 2015; pp. 1–6.

- Karvelis, P.; Roijezon, U.; Faleij, R.; Georgoulas, G.; Mansouri, S.S.; Nikolakopoulos, G. A laser dot tracking method for the assessment of sensorimotor function of the hand. In Proceedings of the Mediterranean Conference on Control and Automation, Valletta, Malta, 3–6 July 2017; pp. 217–222.

- Fukuda, Y.; Kurihara, Y.; Kobayashi, K.; Watanabe, K. Development of electric wheelchair interface based on laser pointer. In Proceedings of the ICCAS-SICE, Fukuoka, Japan, 18–21 August 2009; pp. 1148–1151.

- Gualtieri, M.; Kuczynski, J.; Shultz, A.M.; Pas, A.T.; Platt, R.; Yanco, H. Open world assistive grasping using laser selection. In Proceedings of the IEEE International Conference on Robotics and Automation, Singapore, 29 May–3 June 2017; pp. 4052–4057.

- Kemp, C.C.; Anderson, C.D.; Hai, N.; Trevor, A.J.; Xu, Z. A point-and-click interface for the real world: Laser designation of objects for mobile manipulation. In Proceedings of the 2008 3rd ACM/IEEE International Conference on Human-Robot Interaction, Amsterdam, The Netherlands, 12–15 March 2008.

- Hai, N.; Anderson, C.; Trevor, A.; Jain, A.; Xu, Z.; Kemp, C.C. EL-E: An assistive robot that fetches objects from flat surfaces. In Proceedings of the Robotic Helpers Workshop at HRI’08, Amsterdam, The Netherlands, 12 March 2008.

- Jain, A.; Kemp, C.C. EL-E: An assistive mobile manipulator that autonomously fetches objects from flat surfaces. Auton. Robot. 2010, 28, 45.

- Lapointe, J.F.; Godin, G. On-screen laser spot detection for large display interaction. In Proceedings of the IEEE International Workshop on Haptic Audio Visual Environments & Their Applications, Ottawa, ON, Canada, 1 October 2005.

- Nguyen, H.; Jain, A.; Anderson, C.; Kemp, C.C. A clickable world: behavior selection through pointing and context for mobile manipulation. In Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems, Nice, France, 22–26 September 2008; pp. 787–793.

- Zhou, P.; Wang, X.; Huang, Q.; Ma, C. Laser spot center detection based on improved circle fitting algorithm. In Proceedings of the 2018 2nd IEEE Advanced Information Management, Communicates, Electronic and Automation Control Conference (IMCEC), Xi’an, China, 25–27 May 2016.

- Stauffer, C.; Grimson, W.E.L. Adaptive background mixture models for real-time tracking. In Proceedings of the 1999 IEEE Computer Society Conference on Computer Vision and Pattern Recognition (Cat. No PR00149), Fort Collins, CO, USA, 23–25 June 1999; Volume 2, p. 2246.

- Geng, L.; Xiao, Z. Real time foreground-background segmentation using two-layer codebook model. In Proceedings of the 2011 International Conference on Control, Automation and Systems Engineering, Singapore, 30–31 July 2011; pp. 1–5.

- Zhang, B.; Gu, J.; Chen, C.; Han, J.; Su, X.; Cao, X.; Liu, J. One-two-one networks for compression artifacts reduction in remote sensing. ISPRS J. Photogramm. Remote Sens. 2018, 145, 184–196.

- Zhang, B.; Li, Z.; Cao, X.; Ye, Q.; Chen, C.; Shen, L.; Perina, A.; Jill, R. Output Constraint Transfer for Kernelized Correlation Filter in Tracking. IEEE Trans. Syst. Man Cybern. Syst. 2017, 47, 693–703.

- Jeon, W.-S.; Rhee, S.-Y. Plant Leaf Recognition Using a Convolution Neural Network. Int. J. Fuzzy Log. Intell. Syst. 2017, 17, 26–34.

- Shin, M.; Lee, J.-H. CNN Based Lithography Hotspot Detection. Int. J. Fuzzy Log. Intell. Syst. 2016, 16, 208–215.

- Chu, J.; Guo, Z.; Leng, L. Object Detection Based on Multi-Layer Convolution Feature Fusion and Online Hard Example Mining. IEEE Access 2018, 6, 19959–19967.

- Jiang, S.; Yao, W.; Hong, Z.; Li, L.; Su, C.; Kuc, T.-Y. A Classification-Lock Tracking Strategy Allowing a Person-Following Robot to Operate in a Complicated Indoor Environment. Sensors 2018, 18, 3903.

- Choi, H. CNN Output Optimization for More Balanced Classification. Int. J. Fuzzy Log. Intell. Syst. 2017, 17, 98–106.

- Redmon, J.; Farhadi, A. YOLOv3: An Incremental Improvement. arXiv, 2018; arXiv:1804.02767.

- Luan, S.; Zhang, B.; Zhou, S.; Chen, C.; Han, J.; Yang, W.; Liu, J. Gabor Convolutional Networks. IEEE Trans. Image Process. 2018, 27, 4357–4366.

- Zhang, B.; Perina, A.; Li, Z.; Murino, V.; Liu, J.; Ji, R. Bounding Multiple Gaussians Uncertainty with Application to Object Tracking. Int. J. Comput. Vis. 2016, 118, 364–379.

- Rusu, R.B.; Bradski, G.; Thibaux, R.; Hsu, J. Fast 3D recognition and pose using the Viewpoint Feature Histogram. In Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems, Taipei, Taiwan, 18–22 October 2010; pp. 2155–2162.

- Aldoma, A.; Vincze, M.; Blodow, N.; Gossow, D.; Gedikli, S.; Rusu, R.B.; Bradski, G. CAD-model recognition and 6DOF pose estimation using 3D cues. In Proceedings of the IEEE International Conference on Computer Vision Workshops, Barcelona, Spain, 6–13 November 2011; pp. 585–592.

- Filipe, S.; Alexandre, L.A. A comparative evaluation of 3D keypoint detectors in a RGB-D object dataset. In Proceedings of the International Conference on Computer Vision Theory and Applications, Lisbon, Portugal, 5–8 January 2014; pp. 476–483.

- Tombari, F.; Stefano, L.D. Object recognition in 3D scenes with occlusions and clutter by Hough Voting. In Proceedings of the 2010 Fourth Pacific-Rim Symposium on Image and Video Technology, Singapore, 14–17 November 2010; pp. 349–355.

| Parameter | True Pose Transformation | Only VFH | VFH + Key Point Registration | VFH + Improved Key Point Registration |
|---|---|---|---|---|
| x (deg) | 0 | 0 | 2.1356 ± 0.2640 | 1.6453 ± 0.2135 |
| y (deg) | 0 | 0 | 1.3486 ± 0.1666 | −0.8749 ± 0.1135 |
| z (deg) | 45 | 60 | 46.4620 ± 5.7425 | 47.0037 ± 6.0999 |
| Px (mm) | 10 | – | 9.6123 ± 0.5518 | 10.6575 ± 0.6423 |
| Py (mm) | 10 | – | 11.0065 ± 0.6318 | 9.5700 ± 0.5768 |
| Pz (mm) | 0 | – | 0.0034 ± 0.0002 | 0.3364 ± 0.0203 |
| t (s) | – | 2.44 | 5.36 | 4.43 |
| Er | – | 0.2131 | 0.0414 | 0.0391 |
| Pr | – | – | 1.0786 | 0.8659 |

| Parameter | True Pose Transformation | Only VFH | VFH + Key Point Registration | VFH + Improved Key Point Registration |
|---|---|---|---|---|
| x (deg) | 0 | 0 | 1.3841 ± 0.1625 | −0.3986 ± 0.0491 |
| y (deg) | 0 | 0 | −0.6849 ± 0.0804 | −0.7935 ± 0.0978 |
| z (deg) | 45 | 40 | 45.0064 ± 5.2845 | 46.5762 ± 5.7422 |
| Px (mm) | 10 | – | 10.3794 ± 0.5660 | 10.8067 ± 0.6188 |
| Py (mm) | 10 | – | 9.3428 ± 0.5095 | 10.3957 ± 0.5952 |
| Pz (mm) | 0 | – | −0.6437 ± 0.0351 | −0.5791 ± 0.0332 |
| t (s) | – | 1.79 | 4.66 | 3.68 |
| Er | – | 0.0712 | 0.0220 | 0.0257 |
| Pr | – | – | 0.9951 | 1.069 |
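
As a small worked example, the z-rotation rows of the two tables above can be compared against the true value of 45°. The sketch below computes the absolute deviation of each method's mean estimate. The dictionary layout and names are hypothetical, and only the mean values are used (the ± spreads are ignored), so this is a rough reading of the tables rather than the paper's own error metric.

```python
TRUE_Z_DEG = 45.0

# Mean z-rotation estimates (deg) copied from the z (deg) rows of the two tables.
z_estimates = {
    "first table": {
        "Only VFH": 60.0,
        "VFH + Key Point Registration": 46.4620,
        "VFH + Improved Key Point Registration": 47.0037,
    },
    "second table": {
        "Only VFH": 40.0,
        "VFH + Key Point Registration": 45.0064,
        "VFH + Improved Key Point Registration": 46.5762,
    },
}


def abs_errors(estimates, truth):
    """Absolute deviation of each method's estimate from the true angle."""
    return {method: abs(value - truth) for method, value in estimates.items()}


z_errors = {
    table: abs_errors(estimates, TRUE_Z_DEG)
    for table, estimates in z_estimates.items()
}

for table, errors in z_errors.items():
    for method, err in errors.items():
        print(f"{table}: {method}: {err:.4f} deg")
```

With VFH alone the mean z estimate is off by 15° and 5° in the two tables, whereas both registration variants land within roughly 2° of the true value.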

| Grasped Object | Grasping Attempts | Successful Grasps | Laser-Point Detections |
|---|---|---|---|
| Banana | 30 | 22 | 30 |
| Orange | 30 | 30 | 30 |
| Ball | 30 | 30 | 30 |
| Toy | 30 | 22 | 30 |
| Mouse | 30 | 24 | 30 |
| Cup | 30 | 29 | 30 |
| Fork | 30 | 13 | 30 |
| Spoon | 30 | 15 | 30 |
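
The grasping table above reports raw counts only. A short sketch of the corresponding success rates follows. The variable and function names are hypothetical; the counts are copied from the table, with 30 grasp attempts per object.

```python
# (attempts, successes) per object, copied from the grasping results table.
results = {
    "Banana": (30, 22),
    "Orange": (30, 30),
    "Ball": (30, 30),
    "Toy": (30, 22),
    "Mouse": (30, 24),
    "Cup": (30, 29),
    "Fork": (30, 13),
    "Spoon": (30, 15),
}


def success_rate(attempts, successes):
    """Fraction of grasp attempts that succeeded."""
    return successes / attempts


rates = {name: success_rate(a, s) for name, (a, s) in results.items()}
total_attempts = sum(a for a, _ in results.values())
total_successes = sum(s for _, s in results.values())
overall_rate = total_successes / total_attempts

for name, rate in rates.items():
    print(f"{name:<7} {rate:6.1%}")
print(f"Overall {overall_rate:6.1%} ({total_successes}/{total_attempts})")
```

Across all eight objects this gives 185 successful grasps out of 240 attempts, about 77.1%. The fork (43.3%) and spoon (50.0%) have the lowest rates, while the laser point was detected in all 30 trials for every object.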

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Zhong, M.; Zhang, Y.; Yang, X.; Yao, Y.; Guo, J.; Wang, Y.; Liu, Y. Assistive Grasping Based on Laser-point Detection with Application to Wheelchair-mounted Robotic Arms. Sensors 2019, 19, 303. https://doi.org/10.3390/s19020303
