Feature Point Matching Based on Distinct Wavelength Phase Congruency and Log-Gabor Filters in Infrared and Visible Images

Abstract

1. Introduction

2. Related Work

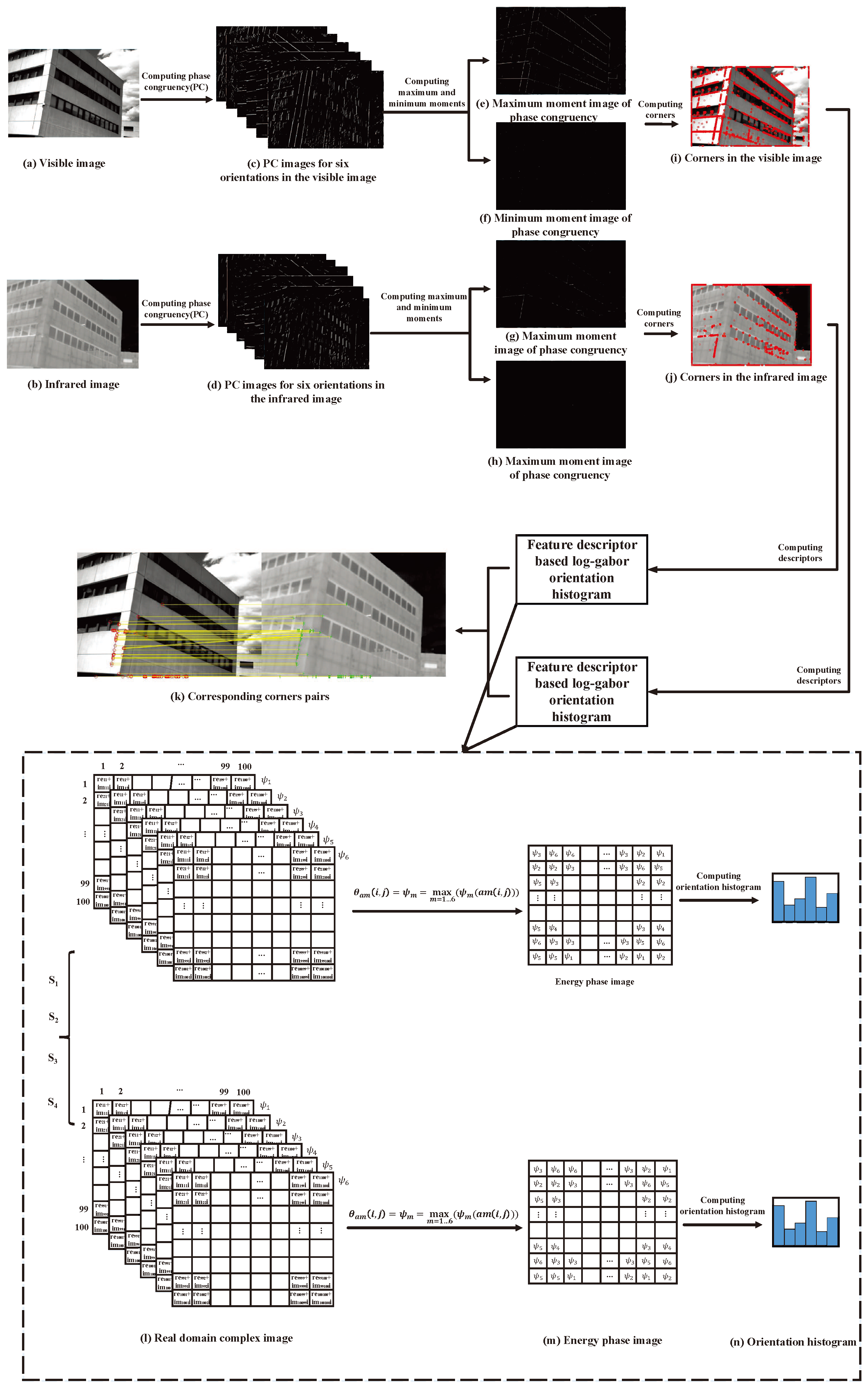

3. Feature Point Matching Based on Distinct Wavelength Phase Congruency and Log-Gabor Filters for Infrared and Visible Images

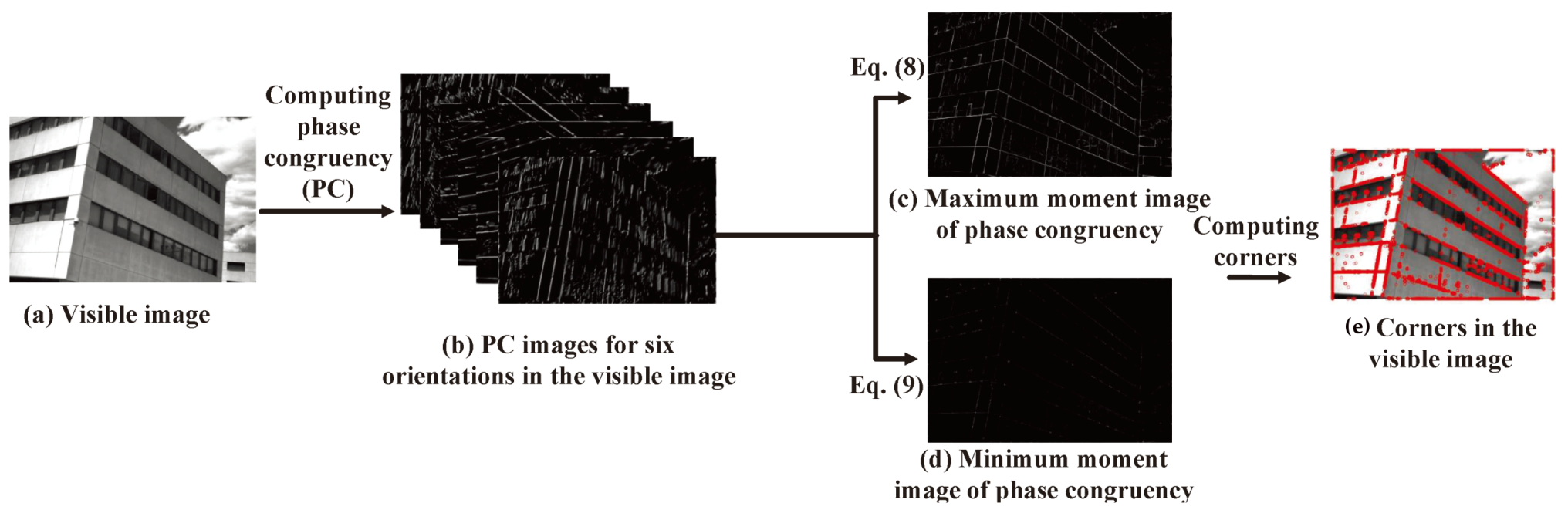

3.1. Corner Detection Based on Distinct Wavelength Phase Congruency

3.2. Corner Detection Combining Distinct Wavelength Phase Congruency of Original Images and Gaussian Smoothing Images

3.3. Feature Descriptor Based on Log-Gabor Filters and the Corresponding Keypoint Detection Using the RANSAC Algorithm

3.4. Similarity Computation of the Point Sets from the Visible and Infrared Images by Bidimensional Regression Modeling

- Vectors $A$ and $B$ are the coordinates of the points extracted from the visible image, which is related to the corresponding infrared image of the same scene. $A$ and $B$ are the first and the second dimension, respectively.

- Vectors $X$ and $Y$ are the coordinates of the points extracted from the infrared image, which is related to the corresponding visible image of the same scene. $X$ and $Y$ are the first and the second dimension, respectively.

- $r^2$ is the squared regression coefficient.

- $F$ is the F statistic of the overall regression model, with the associated degrees of freedom ($df_1$, $df_2$).

- The $p$ value is the corresponding significance level.
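The quantities listed above can be illustrated with a minimal Python sketch. It assumes the Euclidean (four-parameter) variant of bidimensional regression, in which the visible coordinates are modeled as a translated, rotated, and uniformly scaled copy of the infrared coordinates; the text does not state which variant is used, so this choice, the function name `bidim_regression_euclidean`, and the returned keys are assumptions made for illustration. Because $df_1 = 2$ under this model, the $p$ value has a closed form and no statistics library is needed.

```python
import math


def bidim_regression_euclidean(X, Y, A, B):
    """Least-squares fit of A ~ a1 + b1*X - b2*Y and B ~ a2 + b2*X + b1*Y.

    X, Y: coordinates from the infrared image (independent configuration).
    A, B: coordinates from the visible image (dependent configuration).
    Assumption: Euclidean model with 4 parameters (a1, a2, b1, b2).
    """
    n = len(X)
    if not (len(Y) == len(A) == len(B) == n) or n < 3:
        raise ValueError("need four equally long vectors with at least 3 points")

    # Centre both point sets so the translation drops out of the fit.
    mx, my = sum(X) / n, sum(Y) / n
    ma, mb = sum(A) / n, sum(B) / n
    x = [v - mx for v in X]
    y = [v - my for v in Y]
    a = [v - ma for v in A]
    b = [v - mb for v in B]

    sxx = sum(xi * xi + yi * yi for xi, yi in zip(x, y))
    ss_tot = sum(ai * ai + bi * bi for ai, bi in zip(a, b))
    if sxx == 0 or ss_tot == 0:
        raise ValueError("degenerate point configuration")

    # Closed-form least-squares solution for the rotation/scale terms.
    b1 = sum(xi * ai + yi * bi for xi, yi, ai, bi in zip(x, y, a, b)) / sxx
    b2 = sum(xi * bi - yi * ai for xi, yi, ai, bi in zip(x, y, a, b)) / sxx
    a1 = ma - b1 * mx + b2 * my
    a2 = mb - b2 * mx - b1 * my

    # Residual sum of squares over both dimensions.
    ss_res = sum(
        (ai - (b1 * xi - b2 * yi)) ** 2 + (bi - (b2 * xi + b1 * yi)) ** 2
        for xi, yi, ai, bi in zip(x, y, a, b)
    )
    r2 = 1.0 - ss_res / ss_tot

    df1, df2 = 2, 2 * n - 4
    if r2 >= 1.0:
        f_stat, p_value = math.inf, 0.0
    else:
        f_stat = (df2 / df1) * r2 / (1.0 - r2)
        # Survival function of the F distribution, valid because df1 == 2.
        p_value = (1.0 + df1 * f_stat / df2) ** (-df2 / 2.0)

    return {"a1": a1, "a2": a2, "b1": b1, "b2": b2,
            "r2": r2, "F": f_stat, "df1": df1, "df2": df2, "p": p_value}


# Hypothetical matched points: a pure shift plus a small disturbance.
result = bidim_regression_euclidean(
    X=[0, 1, 0, 1, 2], Y=[0, 0, 1, 1, 2],
    A=[2, 3, 2, 3.5, 4], B=[3, 3, 4, 4, 5.2],
)
print(result["r2"], result["F"], result["p"])
```

A value of $r^2$ close to 1 with a small $p$ value would indicate that the two point sets are related by a similarity transform, which is the sense in which the regression measures the agreement of the matched points.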

4. Experiments

4.1. Experiment Data

4.2. Evaluation Measures

- Repeatable rate (RPR): as shown in Figure 8, the red points are repeatable keypoints and the green points are non-matching keypoints. Repeatability is the percentage of keypoints that are detected in both images (the infrared image in Figure 8a and the visible image in Figure 8b). Thus, the repeatable rate (RPR) is defined as $\mathrm{RPR} = N_{tc} / N_{tk}$, where $N_{tc}$ and $N_{tk}$ are the numbers of true corresponding keypoints and total keypoints, respectively. A higher value denotes that the keypoint detection approach performs better.

- Recall rate (RR): as shown in Figure 9, the red keypoints are the true matched corresponding keypoints and the yellow ones are non-matched keypoints. A better keypoint detection algorithm should accurately detect more of the corresponding keypoints in the repeatable keypoint set. Thus, the recall rate (RR) is defined as $\mathrm{RR} = N_{dt} / (N_{dt} + N_{ut})$, where $N_{dt}$ and $N_{ut}$ are the number of detected true matched points and the number of undetected true matched points, respectively. The higher the RR, the better the performance of the matching approach.

- Accuracy rate (AR): as shown in Figure 10, the detected corresponding keypoints should be accurate; the red lines show accurately detected corresponding keypoints and the green lines show inaccurate ones. More red lines denote better image matching performance. Thus, the accuracy rate (AR) is defined as $\mathrm{AR} = N_{dt} / N_{dc}$, where $N_{dt}$ and $N_{dc}$ are the numbers of detected true matched points and detected corresponding points, respectively. The higher the AR, the better the performance of the matching approach.

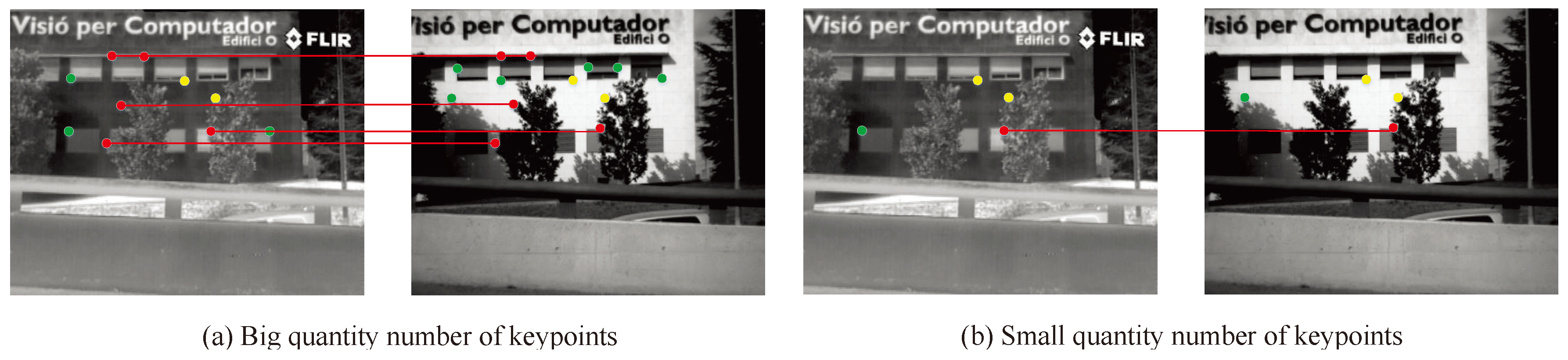

- Quantity rate (QR): Figure 11a demonstrates that a larger number of keypoints generates more corresponding keypoints, which favors image matching. Figure 11b, in contrast, shows that a smaller number of keypoints can cause image matching to fail. Thus, the number of detected keypoints should be sufficiently large; for example, a reasonable number of keypoints should be detected even on small objects. Nevertheless, the optimal number of features depends on the application. Thus, we define the QR as $\mathrm{QR} = N_{k} / N_{p}$, where $N_{k}$ and $N_{p}$ are the numbers of detected keypoints and image pixels, respectively. A higher QR leads to better image matching performance but also slows the matching down. Thus, the QR should be adapted to the specific application.

- Efficiency (EF): feature detection in an image is often part of a time-critical application. Thus, we define the EF as $\mathrm{EF} = T_{cd} + T_{ck} + T_{or}$, where $T_{cd}$, $T_{ck}$, and $T_{or}$ are the times of candidate keypoint detection, corresponding keypoint detection, and outlier removal, respectively. To satisfy time-critical applications, the EF must be sufficiently small.
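The five measures above reduce to four ratios and one sum. The following Python sketch follows the textual definitions and assumes the counts and timings have already been obtained from a matching run; the function names, argument names, and example numbers are hypothetical and are not taken from the authors' implementation.

```python
def _ratio(numerator, denominator):
    """Return numerator / denominator, or 0.0 when the denominator is zero."""
    return numerator / denominator if denominator else 0.0


def repeatable_rate(n_true_corresponding, n_total_keypoints):
    """RPR: true corresponding keypoints over total keypoints."""
    return _ratio(n_true_corresponding, n_total_keypoints)


def recall_rate(n_detected_true, n_undetected_true):
    """RR: detected true matches over all true matches (detected + undetected)."""
    return _ratio(n_detected_true, n_detected_true + n_undetected_true)


def accuracy_rate(n_detected_true, n_detected_corresponding):
    """AR: detected true matches over all detected correspondences."""
    return _ratio(n_detected_true, n_detected_corresponding)


def quantity_rate(n_keypoints, n_pixels):
    """QR: detected keypoints over image pixels."""
    return _ratio(n_keypoints, n_pixels)


def efficiency(t_candidate_detection, t_correspondence_detection, t_outlier_removal):
    """EF: total time of the three matching stages (same unit as the inputs)."""
    return t_candidate_detection + t_correspondence_detection + t_outlier_removal


# Hypothetical counts for one infrared/visible image pair.
print("RPR", repeatable_rate(30, 120))
print("RR ", recall_rate(10, 30))
print("AR ", accuracy_rate(10, 16))
print("QR ", quantity_rate(120, 320 * 240))
print("EF ", efficiency(1.0, 2.0, 0.5))
```

Keeping the measures as separate functions makes the trade-off noted in the text easy to inspect: raising the number of detected keypoints increases QR but typically also increases the three timings that add up to EF.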

4.3. Experiment Results Comparison and Discussion

- Edge-oriented histogram descriptor (EHD): This algorithm first detects the contours in the image and then computes the edge histogram descriptor defined by the MPEG-7 standard [69].

- Phase congruency edge-oriented histogram descriptor (PCEHD): This algorithm uses phase congruency to detect corners and edges in the image. At the corners, the EHD algorithm is used to obtain the feature descriptors.

- Log-Gabor feature descriptor (LGHD): This algorithm uses a fast algorithm to detect corners and log-Gabor filters to generate feature descriptors.

- Phase congruency log-Gabor image matching (PCLGM): This algorithm uses phase congruency to detect corners and edges in the image. At these corners, log-Gabor histograms computed over overlapping subregions are used as the feature descriptors.

- Modified phase congruency log-Gabor image matching (MPCLGM): This algorithm modifies the phase congruency corner detection by combining the original images with Gaussian-smoothed images. At these corners, log-Gabor feature descriptors are used.

- Distinct modified phase congruency log-Gabor image matching (DMPCLGM): This algorithm employs distinct wavelengths for the visible and infrared images.
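The last method in the list differs from the others by its use of distinct wavelengths for the two modalities. As a rough illustration of what the wavelength controls, the sketch below evaluates the standard radial log-Gabor transfer function, $G(f) = \exp\left(-\ln^2(f/f_0) / (2\ln^2(\sigma/f_0))\right)$ with centre frequency $f_0 = 1/\lambda$, for two different minimum wavelengths. The wavelengths (3 and 6 pixels), the bandwidth ratio `sigma_on_f`, the scale multiplier, and the function names are illustrative assumptions, not values from the paper.

```python
import math


def log_gabor_radial(freq, wavelength, sigma_on_f=0.55):
    """Radial log-Gabor transfer function at spatial frequency `freq`.

    wavelength: wavelength of the filter in pixels (centre frequency = 1/wavelength).
    sigma_on_f: ratio sigma/f0 that sets the bandwidth (assumed value).
    """
    if freq <= 0:
        return 0.0  # the log-Gabor function has no DC component
    f0 = 1.0 / wavelength
    return math.exp(-(math.log(freq / f0) ** 2) / (2.0 * math.log(sigma_on_f) ** 2))


def scale_wavelengths(min_wavelength, n_scales=4, mult=2.0):
    """Wavelengths of a filter bank whose scales grow geometrically."""
    return [min_wavelength * mult ** s for s in range(n_scales)]


# Hypothetical setting: a shorter minimum wavelength for the visible image
# and a longer one for the infrared image.
for name, min_wl in (("visible", 3.0), ("infrared", 6.0)):
    bank = scale_wavelengths(min_wl)
    response = [round(log_gabor_radial(0.1, wl), 3) for wl in bank]
    print(name, bank, response)
```

A longer minimum wavelength shifts the whole filter bank toward lower spatial frequencies, so the same image structure excites different scales in the two banks.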

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

- Zheng, H.; Li, S.Y.; Shao, Y.Y.; Yang, S. Typical Building of Multi-Sensor Image Feature Extraction and Recognition. Artif. Intell. Sci. Technol. 2017, 259–272.

- Son, J.; Kim, S.; Sohn, K. A Multi-vision Sensor-based Fast Localization System with Image Matching for Challenging Outdoor Environments. Expert Syst. Appl. 2015, 42, 8830–8839.

- Xu, Y.; Zhou, J.; Zhuang, L. Binary auto encoding feature for multi-sensor image matching. In Proceedings of the 2016 Fourth International Conference on Ubiquitous Positioning, Indoor Navigation and Location Based Services (UPINLBS), Shanghai, China, 2–4 November 2016; pp. 278–282.

- Conte, G.; Doherty, P. An Integrated UAV Navigation System Based on Aerial Image Matching. In Proceedings of the 2008 IEEE Aerospace Conference, Big Sky, MT, USA, 1–8 March 2008; pp. 1–10.

- Zhang, Y.F.; Hui, Y. Study on Guidance Algorithm of Scene Matching based on Different Source Images for Cruise Missile. In Proceedings of the 2018 IEEE International Conference on Mechatronics and Automation (ICMA), Changchun, China, 5–8 August 2018; pp. 912–917.

- Wang, L.; Han, J.; Zhang, Y.; Bai, L. Image fusion via feature residual and statistical matching. IET Comput. Vis. 2016, 10, 551–558.

- Yang, W.; Zhong, L.; Chen, Y.; Lin, L.; Lu, Z.; Liu, S.; Wu, Y.; Feng, Q.; Chen, W. Predicting CT Image From MRI Data Through Feature Matching With Learned Nonlinear Local Descriptors. IEEE Trans. Med. Imaging 2018, 37, 977–987.

- Pan, P.; Liu, J.; Zeng, Q.; Wang, Y.; Liu, S. Image matching for target location in airborne optoelectronic pod. In Proceedings of the 2016 IEEE Chinese Guidance, Navigation and Control Conference (CGNCC), Nanjing, China, 12–14 August 2016; pp. 1330–1332.

- Mouats, T.; Aouf, N.; Sappa, A.D.; Aguilera, C.; Toledo, R. Multispectral Stereo Odometry. IEEE Trans. Intell. Transp. Syst. 2015, 16, 1210–1224.

- Huang, Q.; Yang, J.; Wang, C.; Chen, J.; Meng, Y. Improved registration method for infrared and visible remote sensing image using NSCT and SIFT. In Proceedings of the 2012 IEEE International Geoscience and Remote Sensing Symposium, Munich, Germany, 22–27 July 2012; pp. 2360–2363.

- Hariharan, H.; Koschan, A.; Abidi, B.; Gribok, A.; Abidi, M. Fusion of Visible and Infrared Images using Empirical Mode Decomposition to Improve Face Recognition. In Proceedings of the 2006 International Conference on Image Processing, Atlanta, GA, USA, 8–11 October 2006; pp. 2049–2052.

- Cheng, K.; Lin, H. Automatic target recognition by infrared and visible image matching. In Proceedings of the 2015 14th IAPR International Conference on Machine Vision Applications (MVA), Tokyo, Japan, 18–22 May 2015; pp. 312–315.

- Aguilera, C.; Barrera, F.; Lumbreras, F.; Sappa, A.D.; Toledo, R. Multispectral Image Feature Points. Sensors 2012, 12, 12661–12672.

- Pan, J.S.; Kong, L.; Sung, T.W.; Tsai, P.W.; Snasel, V. α-Fraction First Strategy for Hierarchical Model in Wireless Sensor Networks. J. Internet Technol. 2018, 19, 1717–1726.

- Pan, J.S.; Kong, L.; Sung, T.W.; Tsai, P.W.; Snášel, V. A Clustering Scheme for Wireless Sensor Networks Based on Genetic Algorithm and Dominating Set. J. Internet Technol. 2018, 19, 1111–1118.

- Pan, J.S.; Lee, C.Y.; Sghaier, A.; Zeghid, M.; Xie, J. Novel Systolization of Subquadratic Space Complexity Multipliers Based on Toeplitz Matrix–Vector Product Approach. IEEE Trans. Large Scale Integr. Syst. 2019, 27, 1614–1622.

- Wang, J.; Gao, Y.; Liu, W.; Sangaiah, A.K.; Kim, H.J. An Improved Routing Schema with Special Clustering using PSO Algorithm for Heterogeneous Wireless Sensor Network. Sensors 2019, 19, 671.

- Wang, J.; Cao, J.; Sherratt, R.S.; Park, J.H. An improved ant colony optimization-based approach with mobile sink for wireless sensor networks. J. Supercomput. 2018, 74, 6633–6645.

- Wang, J.; Gao, Y.; Liu, W.; Sangaiah, A.K.; Kim, H.J. Energy Efficient Routing Algorithm with Mobile Sink Support for Wireless Sensor Networks. Sensors 2019, 19, 1494.

- Kovesi, P. Image Features From Phase Congruency. Videre J. Comput. Vision Res. 1999, 1, 1–26.

- Field, D.J. Relations between the statistics of natural images and the response properties of cortical cells. J. Opt. Soc. Am. A 1987, 4, 2379–2394.

- Daugman, J. Uncertainty relation for resolution in space, spatial frequency, and orientation optimized by two-dimensional visual cortical filters. J. Opt. Soc. Am. Opt. Image Sci. 1985, 2, 1160–1169.

- Daugman, J. Statistical Richness of Visual Phase Information: Update on Recognizing Persons by Iris Patterns. Int. J. Comput. Vis. 2001, 45, 25–38.

- Kovesi, P.D. Phase congruency detects corners and edges. In Proceedings of the Australian Pattern Recognition Society Conference, Sydney, Australia, 10–12 December 2003; pp. 309–318.

- Avants, B.B.; Epstein, C.; Grossman, M.; Gee, J. Symmetric Diffeomorphic Image Registration with Cross-Correlation: Evaluating Automated Labeling of Elderly and Neurodegenerative Brain. Med. Image Anal. 2008, 12, 26–41.

- Yi, X.; Wang, B.; Fang, Y.; Liu, S. Registration of infrared and visible images based on the correlation of the edges. In Proceedings of the 2013 6th International Congress on Image and Signal Processing (CISP), Hangzhou, China, 16–18 December 2013; pp. 990–994.

- Zhuang, Y.; Gao, K.; Miu, X.; Han, L.; Gong, X. Infrared and Visual Image Registration Based on Mutual Information with a Combined Particle Swarm Optimization-Powell Search Algorithm. Opt. Int. J. Light Electron. Opt. 2015, 127, 188–191.

- Sinisa, T.; Narendra, A. Region-based hierarchical image matching. Int. J. Comput. Vis. 2008, 78, 47–66.

- Bhat, K.K.S.; Heikkilä, J. Line Matching and Pose Estimation for Unconstrained Model-to-Image Alignment. In Proceedings of the 2nd International Conference on 3D Vision, Tokyo, Japan, 8–11 December 2014; pp. 155–162.

- Senthilnath, J.; Kalro, N.P. Accurate point matching based on multi-objective Genetic Algorithm for multi-sensor satellite imagery. Appl. Math. Comput. 2014, 236, 546–564.

- Saleem, S.; Bais, A.; Sablatnig, R.; Ahmad, A.; Naseer, N. Feature points for multisensor images. Comput. Electr. Eng. 2017, 62, 511–523.

- Lowe, D.G. Distinctive Image Features from Scale-Invariant Keypoints. Int. J. Comput. Vis. 2004, 60, 91–110.

- Bay, H.; Ess, A.; Tuytelaars, T.; Gool, L.V. SURF: Speeded-Up Robust Features. Comput. Vis. Image Underst. 2007, 110, 346–359.

- Leutenegger, S.; Chli, M.; Siegwart, R.Y. BRISK: Binary Robust invariant scalable keypoints. In Proceedings of the 2011 International Conference on Computer Vision, Barcelona, Spain, 6–13 November 2011; pp. 2548–2555.

- Rublee, E.; Rabaud, V.; Konolige, K.; Bradski, G. ORB: An efficient alternative to SIFT or SURF. In Proceedings of the 2011 International Conference on Computer Vision, ICCV2012, Barcelona, Spain, 6–13 November 2011; pp. 1–8.

- Chen, J.; Shan, S.; He, C.; Zhao, G.; Pietikainen, M.; Chen, X.; Gao, W. WLD: A Robust Local Image Descriptor. IEEE Trans. Pattern Anal. Mach. Intell. 2010, 32, 1705–1720.

- Tang, F.L.T. A Webber local binary pattern descriptor for pancreas endoscopic ultrasound image classification. In Proceedings of the 2014 IEEE Workshop on Electronics, Computer and Applications, Ottawa, ON, Canada, 8–9 May 2014; pp. 836–839.

- Li, Q.; Wang, G.; Liu, J.; Chen, S. Robust Scale-Invariant Feature Matching for Remote Sensing Image Registration. IEEE Geosci. Remote Sens. Lett. 2009, 6, 287–291.

- Dana, J.; Anandan, P. Registration of visible and infrared images. Archit. Hardware Forw. Look. Infrared Issues Autom. Object Recognit. 1993, 1957, 2–13.

- Firmenichy, D.; Brown, M.; Süsstrunk, S. Multispectral interest points for RGB-NIR image registration. In Proceedings of the 2011 18th IEEE International Conference on Image Processing, Brussels, Belgium, 11–14 September 2011; pp. 181–184.

- Yi, Z.; Zhiguo, C.; Yang, X. Multi-spectral remote image registration based on SIFT. Electron. Lett. 2008, 44, 107–108.

- Saleem, S.; Bais, A.; Sablatnig, R. A Performance Evaluation of SIFT and SURF for Multispectral Image Matching. In Image Analysis and Recognition; Campilho, A., Kamel, M., Eds.; Springer: Berlin/Heidelberg, Germany, 2012; pp. 166–173.

- Sonn, S.; Bilodeau, G.; Galinier, P. Fast and Accurate Registration of Visible and Infrared Videos. In Proceedings of the 2013 IEEE Conference on Computer Vision and Pattern Recognition Workshops, Portland, OR, USA, 23–28 June 2013; pp. 308–313.

- Kim, S.; Ryu, S.; Ham, B.; Kim, J.; Sohn, K. Local self-similarity frequency descriptor for multispectral feature matching. In Proceedings of the 2014 IEEE International Conference on Image Processing (ICIP), Paris, France, 27–30 October 2014; pp. 5746–5750.

- Torabi, A.; Bilodeau, G. Local self-similarity-based registration of human ROIs in pairs of stereo thermal-visible videos. Pattern Recognit. 2013, 46, 578–589.

- Cristhian, A.; Angel, S.; Ricardo, T. LGHD: A feature descriptor for matching across non-linear intensity variations. In Proceedings of the 2015 IEEE International Conference on Image Processing (ICIP), Quebec City, QC, Canada, 27–30 September 2015.

- Li, Y.; Zou, J.; Jing, J.; Jin, H.; Yu, H. Establish keypoint matches on multispectral images utilizing descriptor and global information over entire image. Infrared Phys. Technol. 2016, 76, 1–10.

- Qin, Y.; Cao, Z.; Zhuo, W.; Yu, Z. Robust key point descriptor for multi-spectral image matching. J. Syst. Eng. Electron. 2014, 25, 681–687.

- Li, Y.; Jin, H.; Qiao, W. Robustly building keypoint mappings with global information on multispectral images. EURASIP J. Adv. Signal Process 2015, 2015, 53.

- Fu, Z.; Qin, Q.; Luo, B.; Wu, C.; Sun, H. A Local Feature Descriptor Based on Combination of Structure and Texture Information for Multispectral Image Matching. IEEE Geosci. Remote Sens. Lett. 2019, 16, 100–104.

- Daugman, J.G. Two-dimensional spectral analysis of cortical receptive field profiles. Vis. Res. 1980, 20, 847–856.

- Nunes, C.F.G.; Pádua, F.L.C. A Local Feature Descriptor Based on Log-Gabor Filters for Keypoint Matching in Multispectral Images. IEEE Geosci. Remote Sens. Lett. 2017, 14, 1850–1854.

- Rosten, E.; Drummond, T. Fusing points and lines for high performance tracking. In Proceedings of the Tenth IEEE International Conference on Computer Vision, Beijing, China, 17–21 October 2005; pp. 1508–1515.

- Gao, W.; Kwong, S.; Zhou, Y.; Jia, Y.; Zhang, J.; Wu, W. Multiscale phase congruency analysis for image edge visual saliency detection. In Proceedings of the 2016 International Conference on Machine Learning and Cybernetics (ICMLC), Jeju, Korea, 10–13 July 2016; pp. 75–80.

- Fan, J.; Wu, Y.; Li, M.; Liang, W.; Cao, Y. SAR and Optical Image Registration Using Nonlinear Diffusion and Phase Congruency Structural Descriptor. IEEE Trans. Geosci. Remote Sens. 2018, 56, 5368–5379.

- Ma, W.; Wu, Y.; Liu, S.; Su, Q.; Zhong, Y. Remote Sensing Image Registration Based on Phase Congruency Feature Detection and Spatial Constraint Matching. IEEE Access 2018, 6, 77554–77567.

- Zhu, Z.; Zheng, M.; Qi, G.; Wang, D.; Xiang, Y. A Phase Congruency and Local Laplacian Energy Based Multi-Modality Medical Image Fusion Method in NSCT Domain. IEEE Access 2019, 7, 20811–20824.

- Morrone, M.; Owens, R. Feature detection from local energy. Pattern Recognit. Lett. 1987, 6, 303–313.

- Mokhtarian, F.; Suomela, R. Robust image corner detection through curvature scale space. IEEE Trans. Pattern Anal. Mach. Intell. 1998, 20, 1376–1381.

- Wang, X.; Shi, B.E. GPU implemention of fast Gabor filters. In Proceedings of the 2010 IEEE International Symposium on Circuits and Systems, Paris, France, 30 May–2 June 2010; pp. 373–376.

- Nava, R.; Escalante, B.; Cristobal, G. Texture image retrieval based on log-Gabor features. In Progress in Pattern Recognition, Image Analysis, Computer Vision, and Applications; Springer: Berlin/Heidelberg, Germany, 2012; pp. 414–421.

- Carbon, C.C. BiDimRegression: Bidimensional Regression Modeling Using R. J. Stat. Softw. 2013, 52, 1–11.

- Barrera, F.; Lumbreras, F.; Sappa, A.D. Multispectral piecewise planar stereo using Manhattan-world assumption. Pattern Recognit. Lett. 2013, 34, 52–61.

- Mikolajczyk, K.; Schmid, C. A performance evaluation of local descriptors. In Proceedings of the 2003 IEEE Computer Society Conference on Computer Vision and Pattern Recognition, Madison, WI, USA, 18–20 June 2003.

- Zhu, Z.; Davari, K. Comparison of Local Visual Feature Detectors and Descriptors for the Registration of 3D Building Scenes. J. Comput. Civ. Eng. 2014, 29, 04014071.

- Mikolajczyk, K.; Tuytelaars, T.; Schmid, C.; Zisserman, A.; Matas, J.; Schaffalitzky, F.; Kadir, T.; Gool, L.V. A Comparison of Affine Region Detectors. Int. J. Comput. Vis. 2005, 65, 43–72.

- Tuytelaars, T.; Mikolajczyk, K. Local Invariant Feature Detectors: A Survey. Found. Trends Comput. Graph. Vis. 2008, 3, 177–280.

- Manjunath, B.S.; Ohm, J.; Vasudevan, V.V.; Yamada, A. Color and texture descriptors. IEEE Trans. Circuits Syst. Video Technol. 2001, 11, 703–715.

- Sikora, T. The MPEG-7 visual standard for content description-an overview. IEEE Trans. Circuits Syst. Video Technol. 2001, 11, 696–702.

| Descriptor | RPR | RR | AR | QR | EF |
|---|---|---|---|---|---|
| EHD | 0.02 | 0.04 | 0.14 | 0.005 | 0.28 |
| PCEHD | 0.02 | 0.02 | 0.01 | 0.005 | 1.05 |
| LGHD | 0.02 | 0.02 | 0.01 | 0.005 | 1.55 |
| PCLGM | 0.19 | 0.014 | 0.59 | 0.27 | 40.3 |
| MPCLGM | 0.198 | 0.013 | 0.62 | 0.31 | 65.54 |
| DMPCLGM | 0.27 | 0.01 | 0.64 | 0.46 | 82 |

| Descriptor | RPR | RR | AR | QR | EF |
|---|---|---|---|---|---|
| EHD | 0.025 | 0.054 | 0.1 | 0.0018 | 0.4 |
| PCEHD | 0.034 | 0.037 | 0.123 | 0.0025 | 1.87 |
| LGHD | 0.04 | 0.08 | 0.25 | 0.003 | 11.56 |
| PCLGM | 0.24 | 0.003 | 0.21 | 0.43 | 67.68 |
| MPCLGM | 0.24 | 0.013 | 0.26 | 0.43 | 71.62 |
| DMPCLGM | 0.24 | 0.0075 | 0.32 | 0.43 | 78.01 |

| Descriptor | RPR | RR | AR | QR | EF |
|---|---|---|---|---|---|
| EHD | 0.087 | 0.3 | 0.91 | 0.11 | 8.65 |
| PCEHD | 0.087 | 0.3 | 0.9 | 0.11 | 11.41 |
| LGHD | 0.087 | 0.4 | 0.93 | 0.11 | 30.3 |
| PCLGM | 0.2976 | 0.0282 | 0.8991 | 0.2279 | 30.51 |
| MPCLGM | 0.2976 | 0.0278 | 0.8919 | 0.22341 | 30.40 |
| DMPCLGM | - | - | - | - | - |

| Descriptor | RPR | RR | AR | QR | EF |
|---|---|---|---|---|---|
| EHD | 0.22 | 0.27 | 0.99 | 0.06 | 4.48 |
| PCEHD | 0.22 | 0.27 | 0.99 | 0.06 | 5.73 |
| LGHD | 0.22 | 0.28 | 1 | 0.06 | 15.16 |
| PCLGM | 0.22639 | 0.0528 | 0.7234 | 0.3134 | 69.05 |
| MPCLGM | 0.22639 | 0.0604 | 0.7261 | 0.4170 | 96.1237 |
| DMPCLGM | - | - | - | - | - |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Liu, X.; Li, J.-B.; Pan, J.-S. Feature Point Matching Based on Distinct Wavelength Phase Congruency and Log-Gabor Filters in Infrared and Visible Images. Sensors 2019, 19, 4244. https://doi.org/10.3390/s19194244
