Locally Oriented Scene Complexity Analysis Real-Time Ocean Ship Detection from Optical Remote Sensing Images

Abstract

1. Introduction

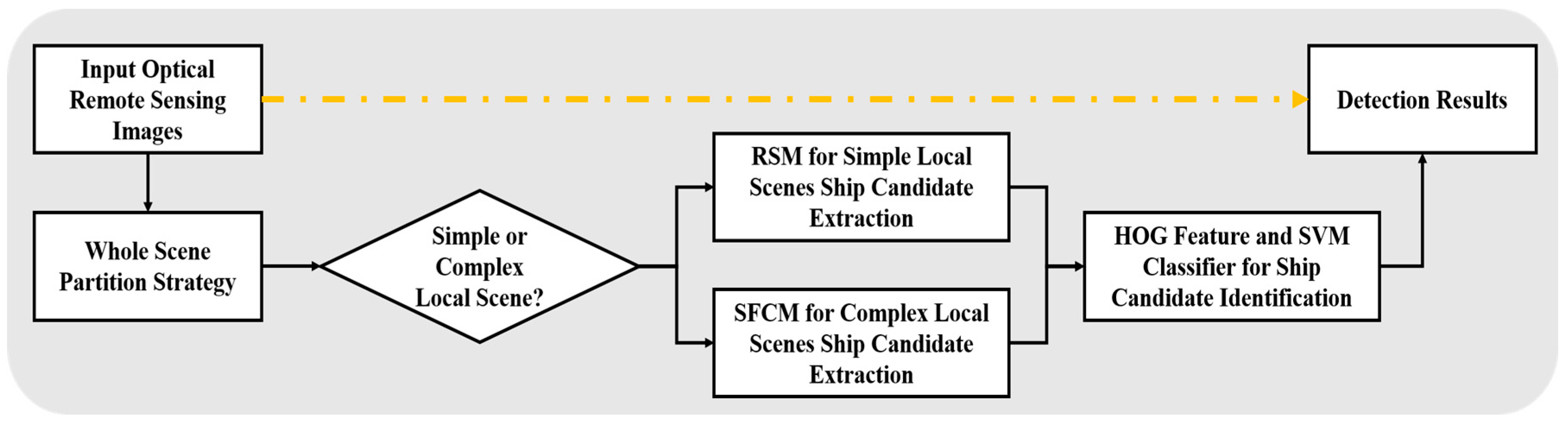

2. Optical Remote Sensing Ocean Ship Detection

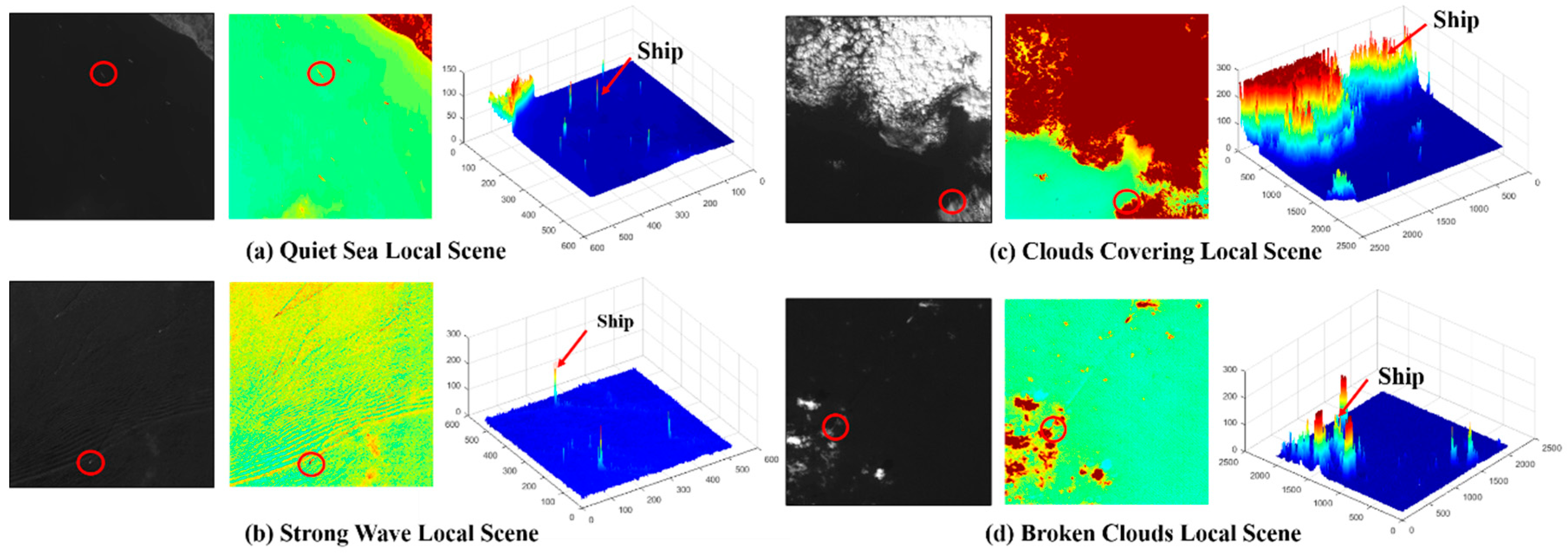

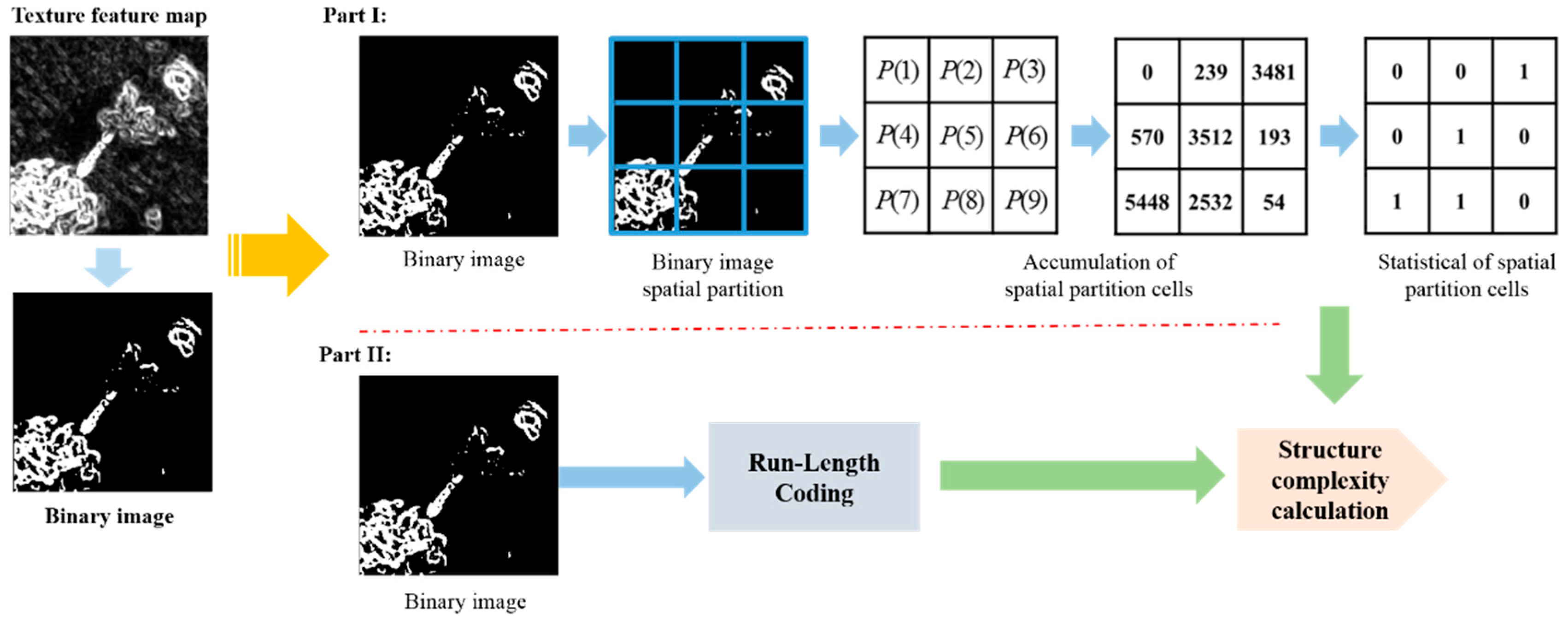

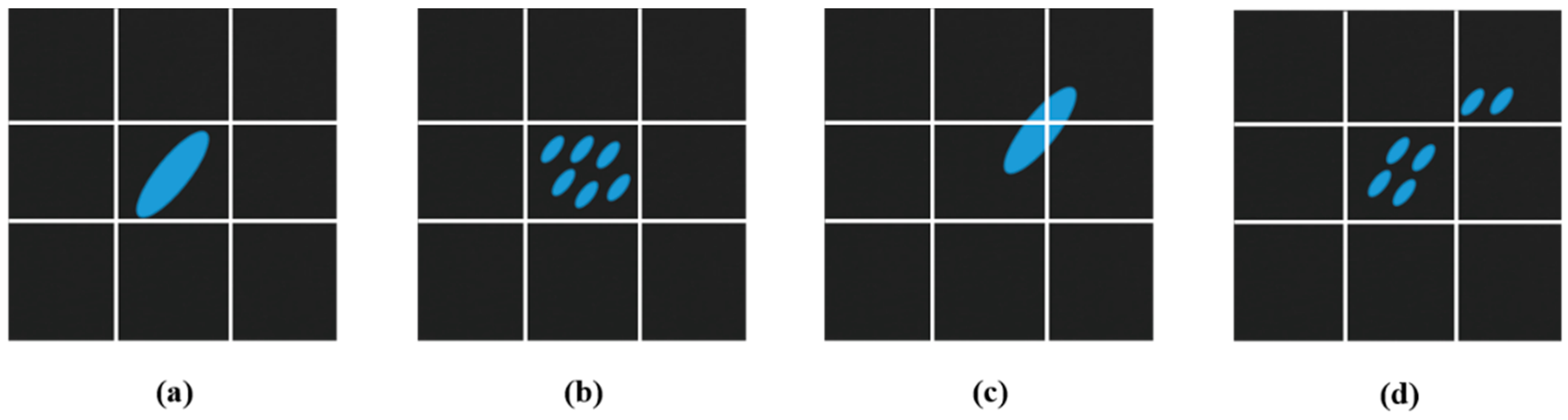

2.1. Locally Oriented Scene Complexity Analysis

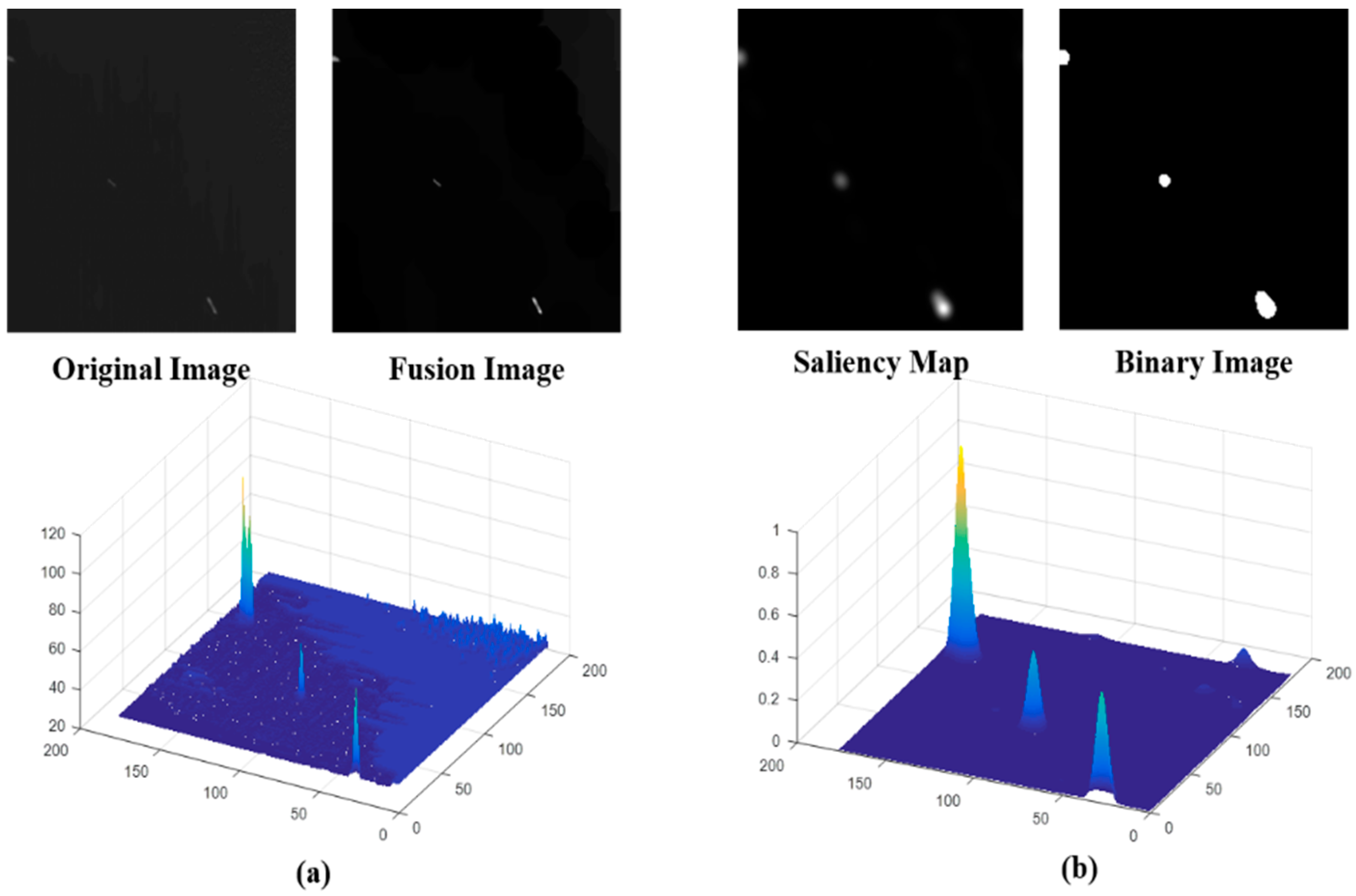

2.2. RSM for Simple Local Scene Ship Candidate Extraction

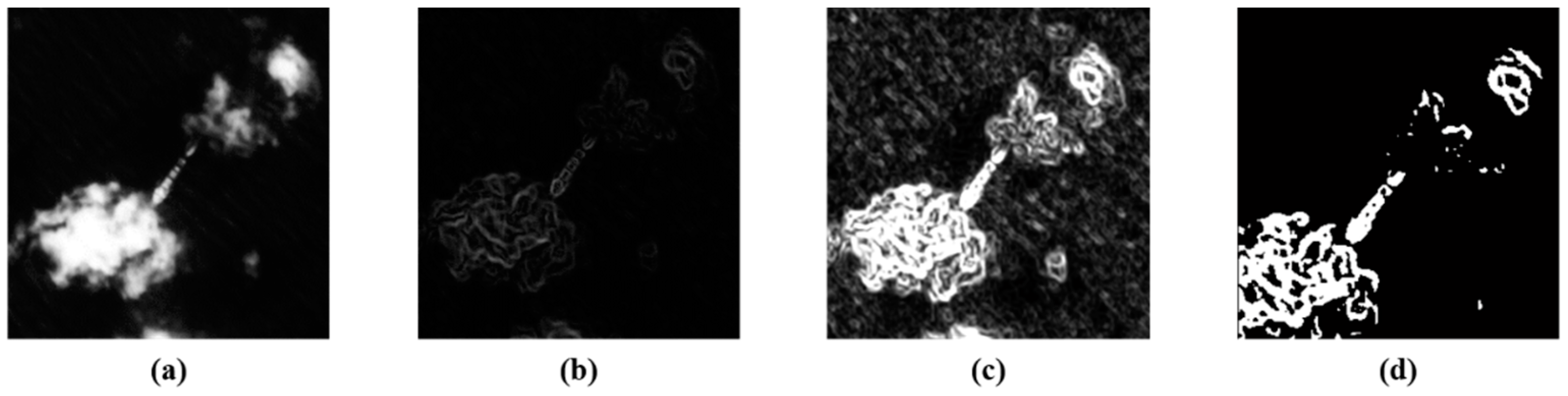

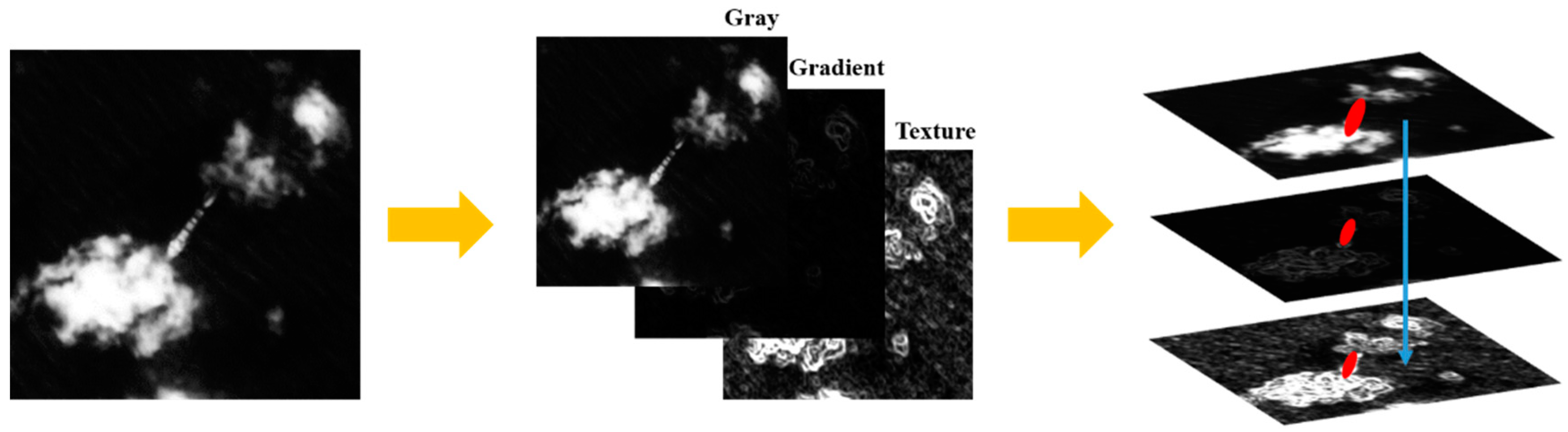

2.3. SFCM for Complex Local Scene Ship Candidate Extraction

2.4. HOG and SVM Classifier for Ship Candidate Confirmation

3. Experiments and Results Discussion

3.1. Real-Time Processing Factor Discussion of Locally Oriented Scenes Character Analysis

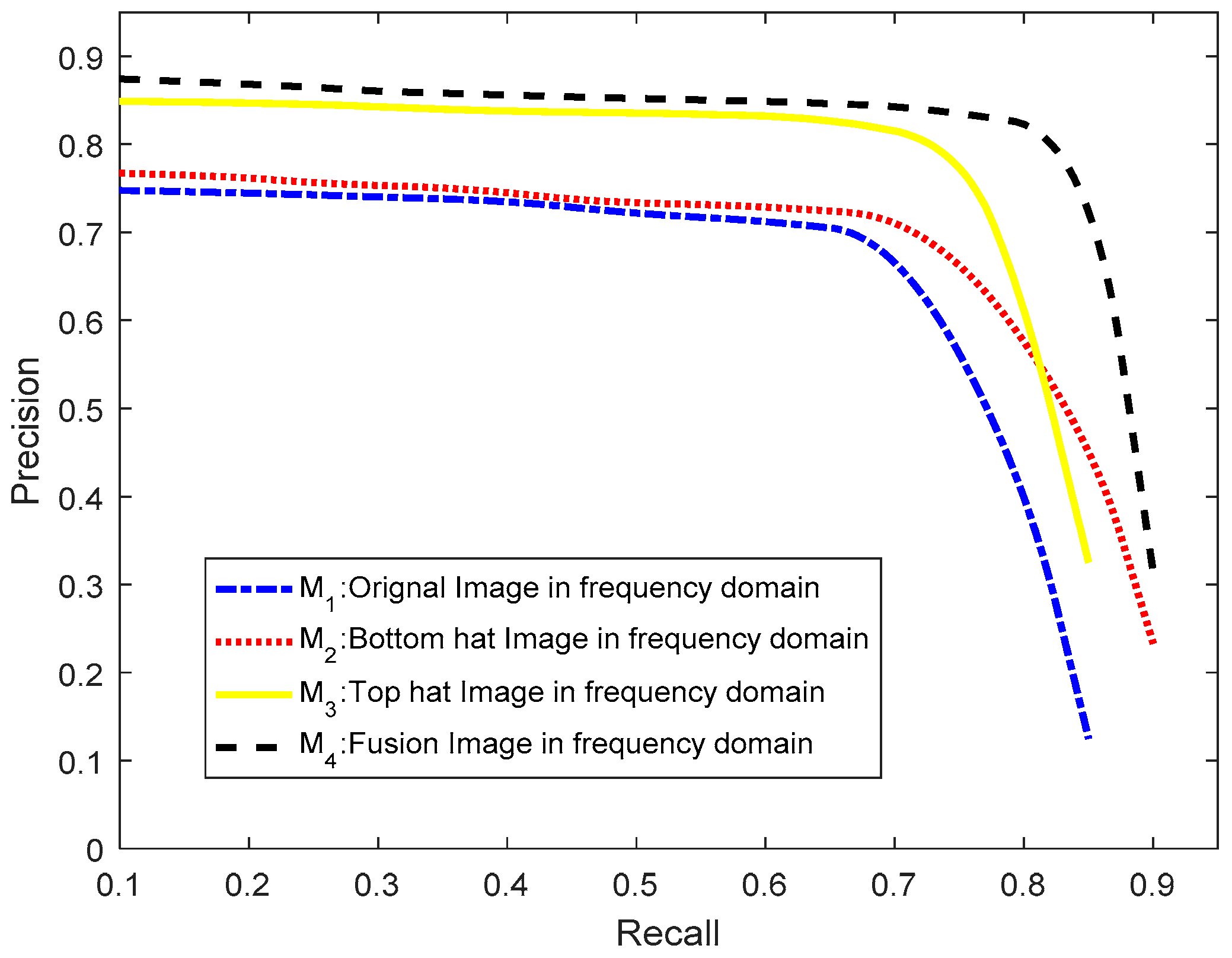

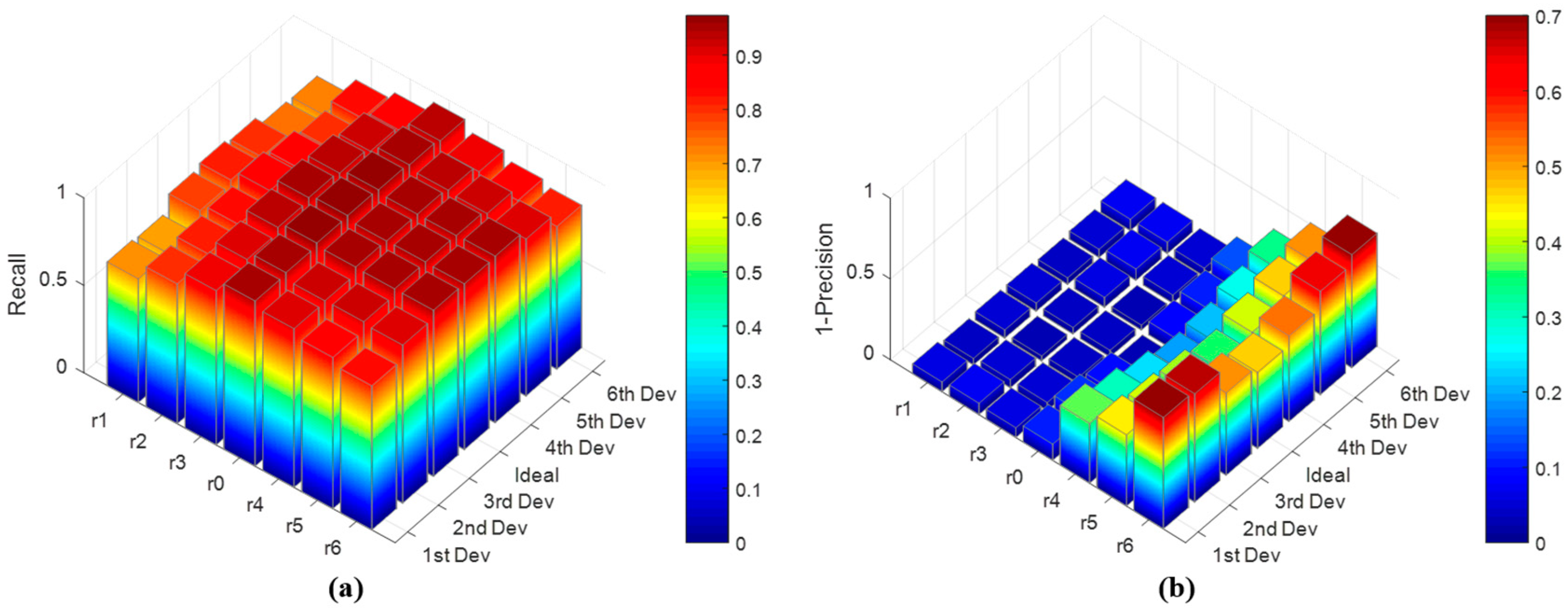

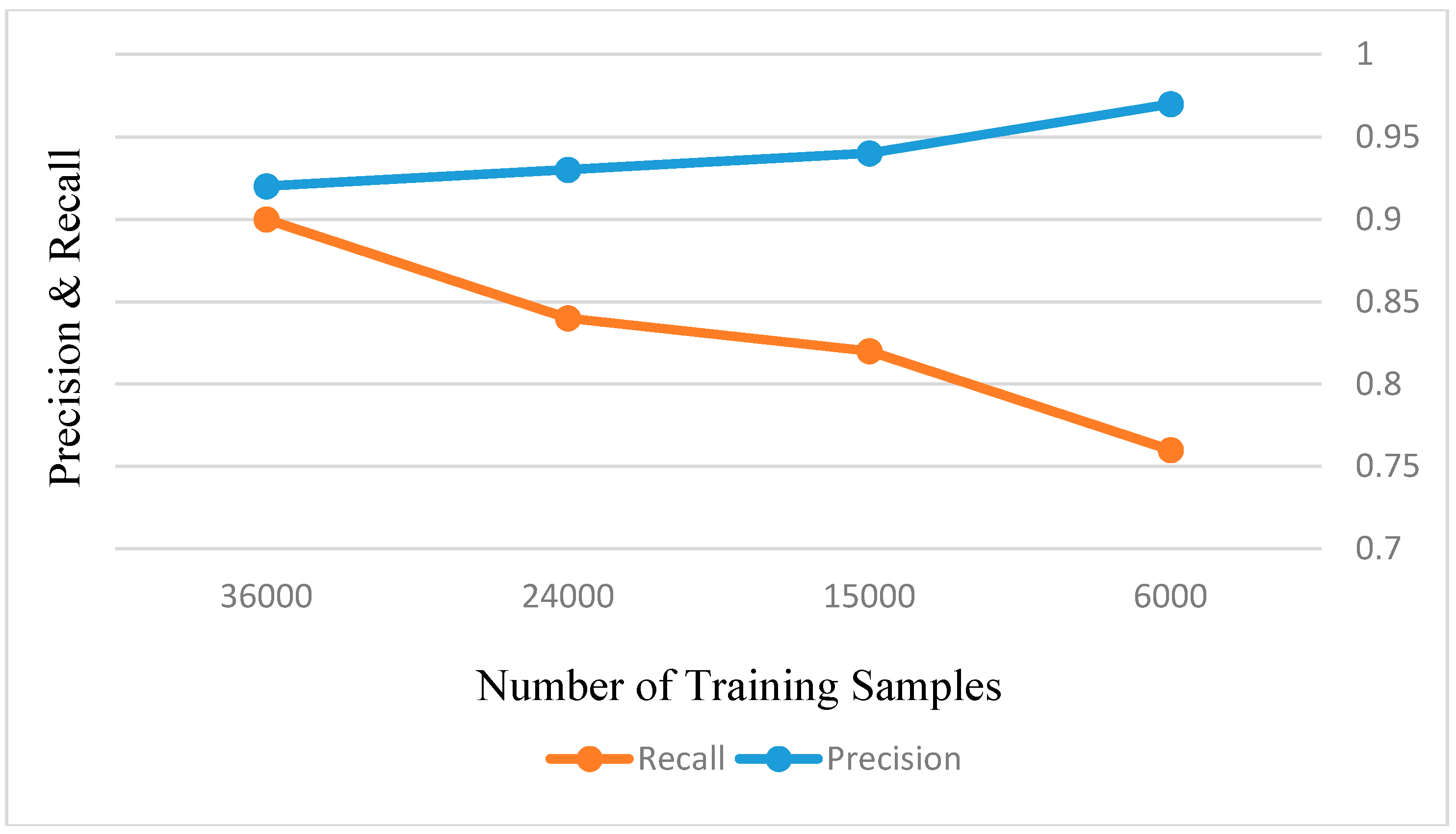

3.2. Performance Discussion of RSM

3.3. Performance Discussion of SFCM

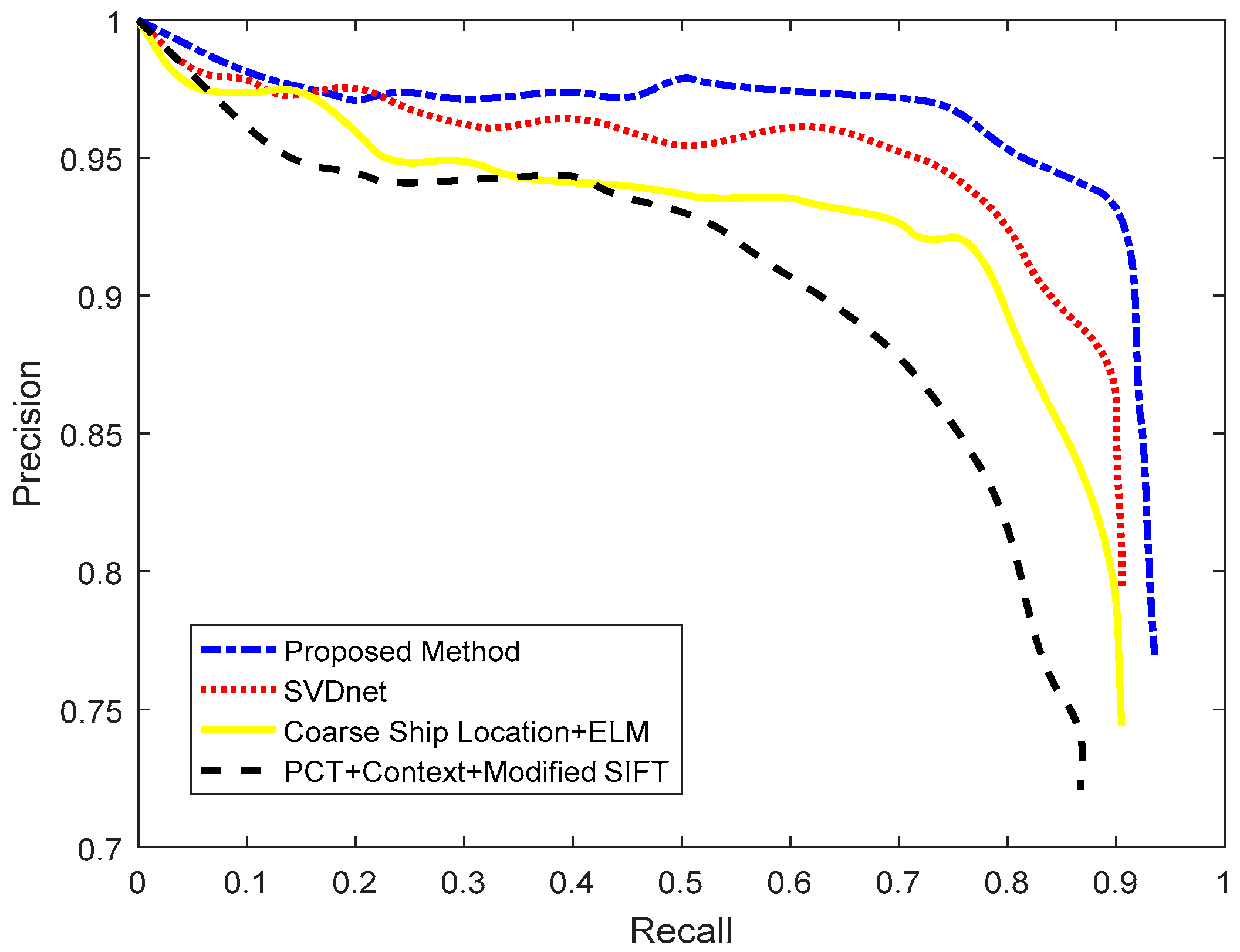

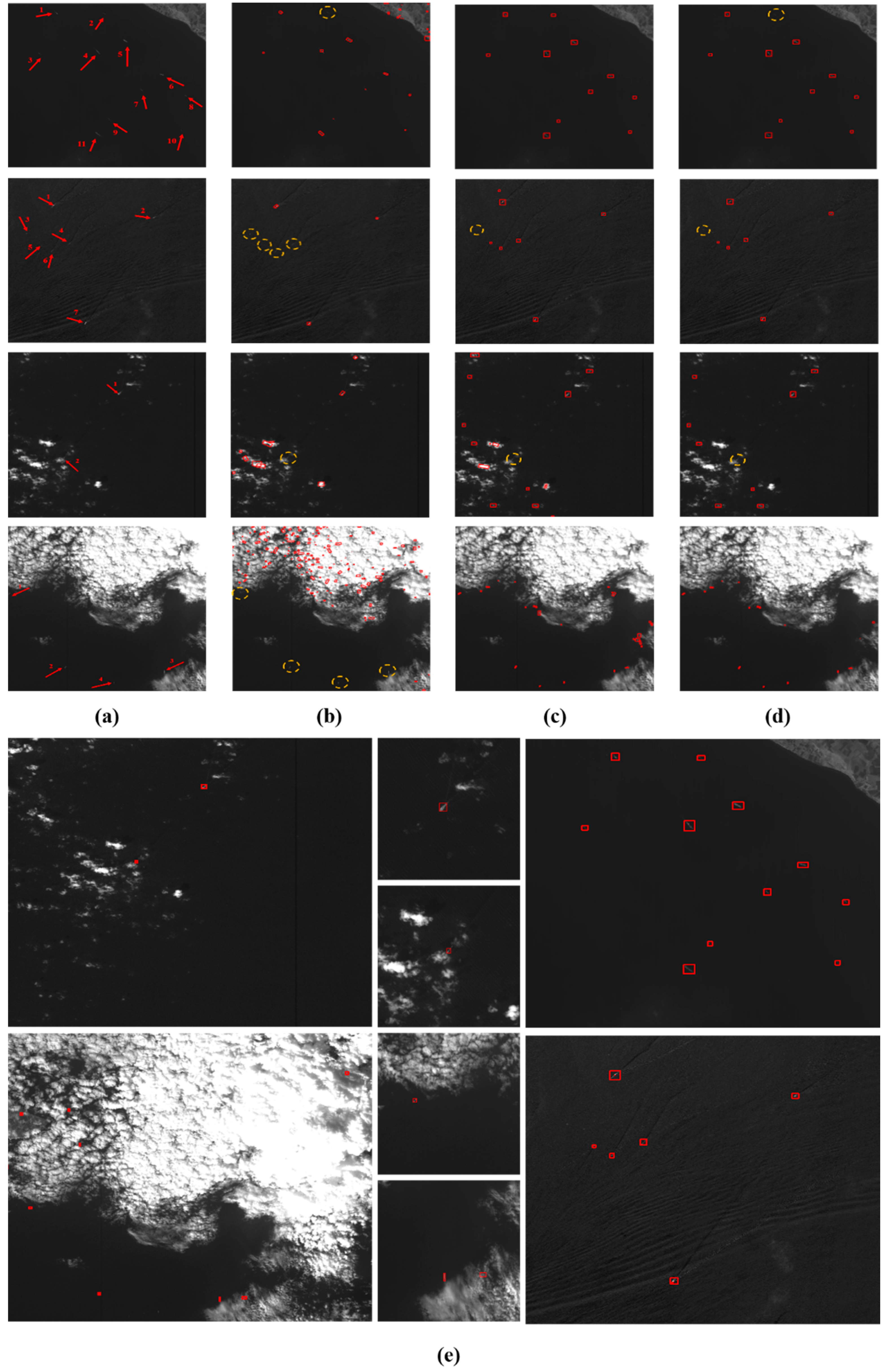

3.4. Comparison of Ocean Ship Detection Results

4. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

1. Leng, X.; Ji, K.; Yang, K. A Bilateral CFAR Algorithm for Ship Detection in SAR Images. IEEE Geosci. Remote Sens. Lett. 2015, 12, 1536–1540.

2. Hou, B.; Chen, X.; Jiao, L. Multilayer CFAR Detection of Ship Targets in Very High Resolution SAR Images. IEEE Geosci. Remote Sens. Lett. 2014, 12, 811–815.

3. Wang, C.; Bi, F.; Zhang, W. An Intensity-Space Domain CFAR Method for Ship Detection in HR SAR Images. IEEE Geosci. Remote Sens. Lett. 2017, 14, 529–533.

4. Wang, S.; Wang, M.; Yang, S. New Hierarchical Saliency Filtering for Fast Ship Detection in High-Resolution SAR Images. IEEE Trans. Geosci. Remote Sens. 2016, 55, 351–362.

5. Greidanus, H.; Kourti, N. Findings of the DECLIMS project—Detection and Classification of Marine Traffic from Space. In Proceedings of the SEASAR 2006, Frascati, Italy, 23–26 January 2006.

6. Greidanus, H.; Kourti, N. A Detailed Comparison between Radar and Optical Vessel Signatures. In Proceedings of the 2006 IEEE International Conference on Geoscience and Remote Sensing Symposium, Denver, CO, USA, 31 July–4 August 2006; pp. 3267–3270.

7. Corbane, C.; Najman, L.; Pecoul, E. A complete processing chain for ship detection using optical satellite imagery. Int. J. Remote Sens. 2010, 31, 5837–5854.

8. Zhu, C.; Zhou, H.; Wang, R. A Novel Hierarchical Method of Ship Detection from Spaceborne Optical Image Based on Shape and Texture Features. IEEE Trans. Geosci. Remote Sens. 2010, 48, 3446–3456.

9. Bi, F.; Zhu, B.; Gao, L. A Visual Search Inspired Computational Model for Ship Detection in Optical Satellite Images. IEEE Geosci. Remote Sens. Lett. 2012, 9, 749–753.

10. Xia, Y.; Wan, S.; Jin, P. A Novel Sea-Land Segmentation Algorithm Based on Local Binary Patterns for Ship Detection. Int. J. Signal Process. Image Process. Pattern Recognit. 2014, 7, 237–246.

11. Qi, S.; Ma, J.; Lin, J. Unsupervised Ship Detection Based on Saliency and S-HOG Descriptor from Optical Satellite Images. IEEE Geosci. Remote Sens. Lett. 2015, 12, 1451–1455.

12. Yang, G.; Li, B.; Ji, S. Ship Detection from Optical Satellite Images Based on Sea Surface Analysis. IEEE Geosci. Remote Sens. Lett. 2014, 11, 641–645.

13. Tang, J.; Deng, C.; Huang, G.B. Compressed-Domain Ship Detection on Spaceborne Optical Image Using Deep Neural Network and Extreme Learning Machine. IEEE Trans. Geosci. Remote Sens. 2014, 53, 1174–1185.

14. Shi, Z.; Yu, X.; Jiang, Z. Ship Detection in High-Resolution Optical Imagery Based on Anomaly Detector and Local Shape Feature. IEEE Trans. Geosci. Remote Sens. 2014, 52, 4511–4523.

15. Zou, Z.; Shi, Z. Ship Detection in Spaceborne Optical Image with SVD Networks. IEEE Trans. Geosci. Remote Sens. 2016, 54, 5832–5845.

16. Xu, F.; Liu, J.; Sun, M. A Hierarchical Maritime Target Detection Method for Optical Remote Sensing Imagery. Remote Sens. 2017, 9, 280.

17. Mattyus, G. Near real-time automatic vessel detection on optical satellite images. In Proceedings of the ISPRS Hannover Workshop 2013, Hannover, Germany, 21–24 May 2013.

18. Dong, C.; Liu, J.; Xu, F. Ship Detection in Optical Remote Sensing Images Based on Saliency and a Rotation-Invariant Descriptor. Remote Sens. 2018, 10, 400.

19. Xu, F.; Liu, J.; Dong, C.; Wang, X. Ship Detection in Optical Remote Sensing Images Based on Wavelet Transform and Multi-Level False Alarm Identification. Remote Sens. 2017, 9, 985.

20. Sui, H.; Song, Z. A Novel Ship Detection Method for Large-Scale Optical Satellite Images Based on Visual LBP Feature and Visual Attention Model. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2016, 41, 917–921.

21. Otsu, N. A Threshold Selection Method from Gray-Level Histograms. IEEE Trans. Syst. Man Cybern. 1979, 9, 62–66.

22. Hensley, J.; Scheuermann, T.; Coombe, G. Fast Summed-Area Table Generation and its Applications. Comput. Graph. Forum 2005, 24, 547–555.

23. Dang, Q.; Yan, S.; Wu, R. A fast integral image generation algorithm on GPUs. In Proceedings of the IEEE International Conference on Parallel and Distributed Systems, Hsinchu, Taiwan, 16–19 December 2015; pp. 624–631.

24. Yang, E.H.; Wang, L. Joint optimization of run-length coding, Huffman coding, and quantization table with complete baseline JPEG decoder compatibility. IEEE Trans. Image Process. 2009, 18, 63–74.

25. Yuan, H.; Hou, G.; Li, Y. Pulse Coupled Neural Network Algorithm for Object Detection in Infrared Image. In Proceedings of the 2009 International Symposium on Computer Network and Multimedia Technology, Wuhan, China, 18–20 January 2009; pp. 1–4.

26. Achanta, R.; Hemami, S.; Estrada, F. Frequency-tuned salient region detection. In Proceedings of the 2009 IEEE Conference on Computer Vision and Pattern Recognition, Miami, FL, USA, 20–25 June 2009; pp. 1597–1604.

27. Hou, X.; Zhang, L. Saliency Detection: A Spectral Residual Approach. In Proceedings of the 2007 IEEE Conference on Computer Vision and Pattern Recognition, Minneapolis, MN, USA, 17–22 June 2007; pp. 1–8.

28. Harvey, N.R.; Porter, R.; Theiler, J. Ship detection in satellite imagery using rank-order grayscale hit-or-miss transforms. In Proceedings of the SPIE Defense, Security, and Sensing, Orlando, FL, USA, 5–9 April 2010.

29. Zhang, H.; Wang, J.; Bai, X. Object detection via foreground contour feature selection and part-based shape model. In Proceedings of the International Conference on Pattern Recognition, Tsukuba, Japan, 11–15 November 2012; pp. 2524–2527.

30. Tang, J.; Wang, H.; Yan, Y. Learning Hough regression models via bridge partial least squares for object detection. Neurocomputing 2015, 152, 236–249.

31. Tuzel, O.; Porikli, F.; Meer, P. Region Covariance: A Fast Descriptor for Detection and Classification. In Proceedings of the 9th European Conference on Computer Vision, Graz, Austria, 7–13 May 2006; pp. 589–600.

32. Ke, X.; Du, M. Detection of maize seeds based on multi-scale feature fusion and extreme learning machine. J. Image Graph. January 2016, 41–48.

33. Mukherjee, S.; Majumder, B.P.; Piplai, A. Kernelized Weighted SUSAN based Fuzzy C-Means Clustering for Noisy Image Segmentation. arXiv 2016, arXiv:1603.08564.

34. Ruan, Z.; Wang, G.; Xue, J.H. Subcategory Clustering with Latent Feature Alignment and Filtering for Object Detection. IEEE Signal Process. Lett. 2015, 22, 244–248.

35. Qin, Y.; Lu, H.; Xu, Y. Saliency detection via Cellular Automata. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Boston, MA, USA, 7–12 June 2015; pp. 110–119.

36. Cheng, G.; Han, J. A Survey on Object Detection in Optical Remote Sensing Images. ISPRS J. Photogramm. Remote Sens. 2016, 117, 11–28.

37. Moreno-Torres, J.G.; Saez, J.A.; Herrera, F. Study on the impact of partition-induced dataset shift on k-fold cross-validation. IEEE Trans. Neural Netw. Learn. Syst. 2012, 23, 1304–1312.

38. Rodriguez, J.D.; Perez, A.; Lozano, J.A. Sensitivity Analysis of k-Fold Cross Validation in Prediction Error Estimation. IEEE Trans. Pattern Anal. Mach. Intell. 2010, 32, 569–575.

| Local Scene Size | Size of Cells | Recall | Precision | Time (s)/Scene |
|---|---|---|---|---|
| 18 × 18 | 3 × 3 | 96.42% | 100% | 2.000 |
| 18 × 18 | 5 × 5 | 95.97% | 100% | 2.450 |
| 18 × 18 | 7 × 7 | 94.32% | 100% | 2.770 |
| 54 × 54 | 3 × 3 | 91.77% | 100% | 1.170 |
| 54 × 54 | 5 × 5 | 92.56% | 100% | 1.370 |
| 54 × 54 | 7 × 7 | 91.05% | 100% | 1.690 |
| 162 × 162 | 3 × 3 | 87.84% | 100% | 0.150 |
| 162 × 162 | 5 × 5 | 86.95% | 100% | 0.660 |
| 162 × 162 | 7 × 7 | 84.73% | 100% | 0.790 |
| 486 × 486 | 3 × 3 | 70.79% | 100% | 0.050 |
| 486 × 486 | 5 × 5 | 71.44% | 100% | 0.057 |
| 486 × 486 | 7 × 7 | 69.94% | 100% | 0.077 |
| 1458 × 1458 | 3 × 3 | 42.16% | 100% | 0.003 |
| 1458 × 1458 | 5 × 5 | 41.95% | 100% | 0.009 |
| 1458 × 1458 | 7 × 7 | 41.04% | 100% | 0.014 |

| R | 5 | 10 | 15 | 20 | 25 | 30 | 35 | 40 |
|---|---|---|---|---|---|---|---|---|
| Precision | 100% | 100% | 100% | 88.17% | 65.19% | 53.57% | 36.14% | 25.26% |
| Recall | 23.32% | 44.72% | 87.21% | 100% | 100% | 100% | 100% | 100% |
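The table above shows the usual trade-off as the parameter R grows: precision falls while recall rises. One common way to collapse such a trade-off into a single number is the F1 score, the harmonic mean of precision and recall. F1 is not reported in the paper and is used here only as an illustrative criterion; the sketch below computes it for each R from the tabulated values (the names `f1` and `scores` are hypothetical).

```python
# Precision and recall for each value of R, copied from the table (in %).
R_VALUES = [5, 10, 15, 20, 25, 30, 35, 40]
PRECISION = [100.0, 100.0, 100.0, 88.17, 65.19, 53.57, 36.14, 25.26]
RECALL = [23.32, 44.72, 87.21, 100.0, 100.0, 100.0, 100.0, 100.0]


def f1(prec: float, rec: float) -> float:
    """Harmonic mean of precision and recall (both in %); 0 if both are 0."""
    return 0.0 if prec + rec == 0 else 2 * prec * rec / (prec + rec)


# Illustrative criterion only: F1 weights precision and recall equally.
scores = {r: f1(p, q) for r, p, q in zip(R_VALUES, PRECISION, RECALL)}
for r, s in scores.items():
    print(f"R = {r:2d}: F1 = {s:.2f}%")

best_R = max(scores, key=scores.get)
print("R with the highest F1:", best_R)
```

Under equal weighting the F1 peak falls at R = 20, narrowly ahead of R = 15; a score that weights precision and recall differently (for example an F-beta score) would move the peak.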

| Local Scene Character | Quiet Sea Surface | Fleet of Ships | Broken Clouds | Heavy Clouds | Strong Waves |
|---|---|---|---|---|---|
| Precision | 92.5% | 84.4% | 75.3% | 80.4% | 66.8% |
| Recall | 90.0% | 77.5% | 64.9% | 67.7% | 65.7% |
| Time (s)/Local Scene | 0.025 | 0.043 | 0.032 | 0.061 | 0.042 |

| Local Scene Character | Quiet Sea Surface | Fleet of Ships | Broken Clouds | Heavy Clouds | Strong Waves |
|---|---|---|---|---|---|
| Precision | 94.3% | 92.6% | 89.7% | 90.0% | 90.8% |
| Recall | 95.0% | 93.5% | 90.5% | 91.2% | 89.7% |
| Time (s)/Local Scene | 0.64 | 0.74 | 0.82 | 0.58 | 0.70 |

| Method | R | Precision | Recall | Time (s)/Scene |
|---|---|---|---|---|
| RSM | / | 79.3% | 72.4% | 1.25 |
| SFCM | / | 89.5% | 93.0% | 4.72 |
| (RSM + SFCM)1 | 5 | 90.7% | 91.3% | 3.49 |
| (RSM + SFCM)2 | 15 | 89.4% | 90.2% | 2.46 |
| (RSM + SFCM + HOG)1 | 5 | 94.5% | 93.5% | 3.83 |
| (RSM + SFCM + HOG)2 | 15 | 92.9% | 91.7% | 2.96 |
| PCT + Context + Modified SIFT [9] | / | 79.8% | 78.3% | 3.29 |
| Coarse Ship Location + ELM [13] | / | 87.4% | 82.8% | 7.48 |
| SVDnet [15] | / | 89.8% | 86.1% | 9.89 |

| Local Scene | Target Size | Total Number of Real Ships | Total Number of Ships Detected | Precision | Number of Falsely Detected Ships | Recall |
|---|---|---|---|---|---|---|
| Proposed method | | | | | | |
| Quiet sea | Large | 45 | 43 | 96.50% | 5 | 93.88% |
| Quiet sea | Small | 102 | 100 | | | |
| Texture sea | Large | 37 | 35 | 92.93% | 7 | 92.00% |
| Texture sea | Small | 63 | 64 | | | |
| Cluster sea | Large | 32 | 35 | 89.47% | 8 | 89.47% |
| Cluster sea | Small | 44 | 41 | | | |
| PCT + Context + Modified SIFT Method [9] | | | | | | |
| Quiet sea | Large | 45 | 37 | 95.17% | 7 | 93.87% |
| Quiet sea | Small | 102 | 108 | | | |
| Texture sea | Large | 37 | 40 | 84.69% | 15 | 83.00% |
| Texture sea | Small | 63 | 58 | | | |
| Cluster sea | Large | 32 | 30 | 59.46% | 30 | 57.89% |
| Cluster sea | Small | 44 | 44 | | | |
| Coarse Ship Location + ELM Method [13] | | | | | | |
| Quiet sea | Large | 45 | 44 | 97.20% | 4 | 94.56% |
| Quiet sea | Small | 102 | 99 | | | |
| Texture sea | Large | 37 | 35 | 88.42% | 11 | 84.00% |
| Texture sea | Small | 63 | 60 | | | |
| Cluster sea | Large | 32 | 30 | 76.61% | 19 | 69.74% |
| Cluster sea | Small | 44 | 42 | | | |
| SVDnet Method [15] | | | | | | |
| Quiet sea | Large | 45 | 43 | 95.80% | 6 | 93.20% |
| Quiet sea | Small | 102 | 100 | | | |
| Texture sea | Large | 37 | 41 | 89.11% | 11 | 90.00% |
| Texture sea | Small | 63 | 60 | | | |
| Cluster sea | Large | 32 | 30 | 84.43% | 13 | 75.00% |
| Cluster sea | Small | 44 | 40 | | | |
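The percentage columns in this table are consistent with the count columns under a simple reading: with large and small targets pooled for each local scene, true detections = ships detected − falsely detected ships, precision = true detections / ships detected, and recall = true detections / real ships. For the proposed method on quiet sea, for instance, (143 − 5)/143 ≈ 96.50% and (143 − 5)/147 ≈ 93.88%. The sketch below encodes that reading; the formula is inferred from the numbers rather than stated with the table, and the names `SceneCounts` and `pooled` are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class SceneCounts:
    """Ship counts for one local-scene type, large and small targets pooled.

    Hypothetical helper: field names are illustrative, not from the paper.
    """
    real: int          # total number of real ships
    detected: int      # total number of ships detected
    false_alarms: int  # number of falsely detected ships

    @property
    def true_detections(self) -> int:
        # Assumption: every detection that is not a false alarm hits a real ship.
        return self.detected - self.false_alarms

    def precision(self) -> float:
        return self.true_detections / self.detected

    def recall(self) -> float:
        return self.true_detections / self.real


def pooled(rows: Iterable[SceneCounts]) -> SceneCounts:
    """Sum the counts of several scene types into one SceneCounts."""
    rows = list(rows)  # allow generators as well as lists or dict views
    return SceneCounts(
        real=sum(r.real for r in rows),
        detected=sum(r.detected for r in rows),
        false_alarms=sum(r.false_alarms for r in rows),
    )


# Proposed-method counts from the table (large + small targets added together).
proposed = {
    "Quiet sea": SceneCounts(real=45 + 102, detected=43 + 100, false_alarms=5),
    "Texture sea": SceneCounts(real=37 + 63, detected=35 + 64, false_alarms=7),
    "Cluster sea": SceneCounts(real=32 + 44, detected=35 + 41, false_alarms=8),
}

for scene, counts in proposed.items():
    print(f"{scene}: precision {counts.precision():.2%}, recall {counts.recall():.2%}")

total = pooled(proposed.values())
print(f"All scenes: precision {total.precision():.2%}, recall {total.recall():.2%}")
```

Filling `SceneCounts` with the rows of the comparison methods and comparing the results with the printed percentages is a quick way to check the table for transcription slips; `pooled` gives an all-scene figure that the table itself does not report.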

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Zhuang, Y.; Qi, B.; Chen, H.; Bi, F.; Li, L.; Xie, Y. Locally Oriented Scene Complexity Analysis Real-Time Ocean Ship Detection from Optical Remote Sensing Images. Sensors 2018, 18, 3799. https://doi.org/10.3390/s18113799
